To comprehensively validate the performance of EPIMO, this study adopts a multi-stage experimental framework: preliminary performance validation is first conducted using classical benchmark functions, followed by systematic evaluation extended to the CEC2017 and CEC2022 benchmark test suites. This dual-test-platform strategy ensures the robustness of the algorithm across different problem characteristics (such as multimodality, high dimensionality, and constraint conditions). All compared algorithms are executed under unified hardware configurations and parameter settings, strictly eliminating external interference factors to guarantee the fairness and reproducibility of the results. Systematic experimental findings demonstrate that EPIMO exhibits significant advantages in terms of convergence accuracy, stability, and robustness.

4.2. Results and Analysis on CEC 2017

In Table 1, Table 2 and Table 3, “Min” denotes the minimum value, “Avg” the average value, and “Std” the standard deviation. EPIMO shows broad competitive advantages on the CEC 2017 benchmark tests [31].
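As a minimal sketch of how the three table metrics can be derived from repeated runs, the snippet below summarizes a list of final fitness values. The function name `summarize_runs` and the sample values are hypothetical, and the use of the sample standard deviation (n − 1 denominator) is an assumption, since the text does not state which convention the tables follow.

```python
import statistics


def summarize_runs(final_fitness):
    """Summarize the best fitness found in each independent run."""
    return {
        "Min": min(final_fitness),
        "Avg": statistics.fmean(final_fitness),
        # Sample standard deviation (n - 1 denominator): an assumption,
        # the source does not say which convention its tables use.
        "Std": statistics.stdev(final_fitness),
    }


# Hypothetical final fitness values from five runs on one test function.
runs = [102.0, 100.0, 101.0, 100.0, 102.0]
summary = summarize_runs(runs)
print(summary)
```

A lower “Avg” indicates better typical accuracy, while a lower “Std” indicates that the runs agree more closely with one another.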

From the perspective of convergence accuracy analysis, EPIMO achieves leading optimization performance on several key test functions. In the F1 function, EPIMO’s average value reaches , significantly outperforming PIMO () and ALA (), and surpassing the worst-performing FATA () by seven orders of magnitude. In the F4 function, EPIMO’s average value of also shows excellent performance, second only to DOA’s , yet better than all other comparative algorithms. This trend continues in moderately complex functions such as F15 and F19, indicating that EPIMO possesses superior local exploitation capabilities on unimodal and basic multimodal problems.

More importantly, EPIMO demonstrates unique robustness when handling high-dimensional complex functions. On the most challenging F13 function, EPIMO’s average value is , within the same order of magnitude as DOA’s , while significantly better than traditional algorithms like PSO () and DE (). In the F18 function, although EPIMO’s average value of is not as good as DOA’s , it still significantly outperforms ALA () and FATA (), demonstrating its effective navigation capability in complex search spaces.

From the perspective of algorithm stability, EPIMO’s standard deviation indicators show relatively balanced characteristics. In the F6 function, its standard deviation is ; although higher than DOA’s , it is much lower than ALA’s and BKA’s . In the F21 function, EPIMO’s standard deviation is , comparable to PIMO’s , indicating that EPIMO’s performance fluctuations are within a controllable range, without showing the extreme instability observed in FATA or BKA.

Notably, EPIMO exhibits different optimization characteristics compared to DOA in certain functions. In the F1 function, although EPIMO’s average value is slightly higher than DOA’s, its minimum value of is significantly better than DOA’s , suggesting that EPIMO can find solutions closer to the theoretical optimum in some runs. In the F9 function, EPIMO’s average value of outperforms DOA’s , indicating that EPIMO may have unique advantages on specific types of multimodal problems.

Compared with traditional algorithms, EPIMO’s advantages are even more pronounced. In the F10 function, EPIMO’s average value of not only outperforms PSO’s and DE’s , but even exceeds ALA’s . In the F22 function, EPIMO’s similarly outperforms PSO’s and DE’s . These data indicate that the optimization strategy adopted by EPIMO shows significant advancement compared to traditional algorithms when addressing the diverse challenges of modern benchmark tests.

Based on the Wilcoxon rank-sum test results in Table 4, EPIMO demonstrates statistically significant superiority across the CEC 2017 benchmark. With EPIMO as the reference, p-values below 0.05 indicate significant performance differences. In 29 of the 30 test functions, EPIMO achieves p-values of against most competing algorithms, confirming its consistent advantage. The sole exception is function F26, where EPIMO and DOA show equivalent performance (p = ), suggesting DOA’s competitiveness on specific problems. Traditional algorithms such as PSO and DE exhibit p-values of in nearly all comparisons, highlighting their substantial lag behind modern approaches like EPIMO. Similarly, FATA and BKA show consistently inferior results across all functions, with p-values of . DOA displays notable performance on selected functions, with p-values of (F9), (F10), (F22), (F26), and (F27), indicating its capability to approach EPIMO’s effectiveness in certain complex multimodal scenarios. PIMO also shows close performance to EPIMO on functions F16, F17, F20, F27, and F29, with p-values ranging from 0.521 to 0.910, though it remains significantly inferior overall.
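As a rough illustration of the kind of test summarized in Table 4, the sketch below implements a two-sided Wilcoxon rank-sum test with the large-sample normal approximation. The function names and sample values are hypothetical, no tie or continuity correction is applied, and the paper does not state which implementation or settings were actually used.

```python
import math


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided rank-sum test; returns (z statistic, approximate p-value)."""
    n1, n2 = len(x), len(y)
    ranks = average_ranks(list(x) + list(y))
    rank_sum_x = sum(ranks[:n1])
    expected = n1 * (n1 + n2 + 1) / 2
    spread = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (rank_sum_x - expected) / spread
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical final errors from ten runs of a reference and a competitor.
reference = [0.8, 1.1, 0.9, 1.3, 1.0, 1.2, 0.7, 1.4, 0.95, 1.05]
competitor = [2.1, 1.9, 2.4, 2.0, 2.6, 1.8, 2.2, 2.5, 2.3, 1.7]
z, p_value = rank_sum_test(reference, competitor)
print(f"z = {z:.3f}, significant at 0.05: {p_value < 0.05}")
```

A negative z here means the reference sample tends to have smaller values; the 0.05 threshold is the one stated in the text.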

In summary, the EPIMO algorithm demonstrates three core strengths in the CEC 2017 benchmark evaluations: exceptional convergence precision on moderately complex functions, consistent robustness in high-dimensional and intricate search landscapes, and a performance profile fundamentally distinct from conventional optimization approaches. These attributes not only establish EPIMO as a highly effective tool for practical engineering and scientific applications but also advance the theoretical discourse on metaheuristic design—particularly in balancing structure-aware local refinement with sustained global exploration.

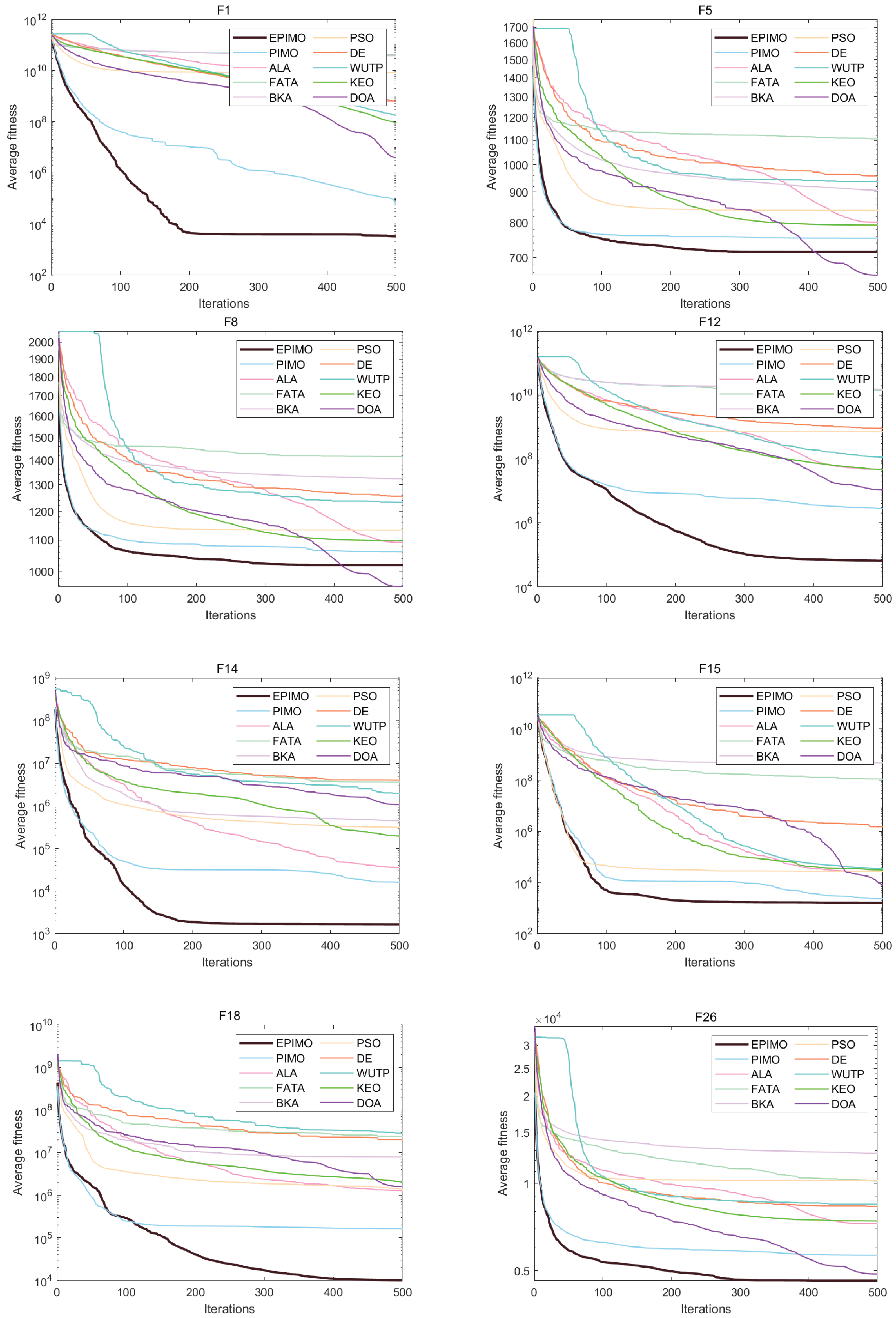

Figure 1 and Figure 2 constitute the core experimental results of the algorithm comparison. They show the convergence performance of the optimization algorithms on a series of standard test functions (F1, F5, F8, F12, F14, F15, F18, F26). These functions cover a wide range of problem characteristics, from simple unimodal to complex high-dimensional multimodal ones, so that robustness, convergence speed, and solution accuracy can all be evaluated. The horizontal axis of each figure represents the number of iterations, while the vertical axis shows the average fitness value, on either a linear or a logarithmic scale depending on the function’s properties, so that performance differences remain visible across problems of varying scales. Circles in Figure 2 typically denote outliers, indicating that an algorithm performed significantly worse in a few runs than in the majority, which is a key indicator of stability in optimization algorithm comparisons.
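To make the notion of an outlier concrete, the sketch below flags values that fall outside the whiskers under the common 1.5 × IQR box-plot rule. This rule is an assumption: it is a widespread plotting default, but the paper does not state how its outliers were determined, and the function name and data are hypothetical.

```python
import statistics


def boxplot_outliers(values, whisker=1.5):
    """Return values lying beyond whisker * IQR from the quartiles."""
    # method="inclusive" uses linear interpolation between data points.
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - whisker * iqr, q3 + whisker * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical final fitness values from nine runs; one run went badly.
runs = [1, 2, 3, 4, 5, 6, 7, 8, 100]
print(boxplot_outliers(runs))
```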

From the overall trend, a prominent conclusion is that the algorithm EPIMO exhibits significant and consistent superiority on the majority of test functions—especially on highly complex functions with fitness values in the range of to (e.g., F12, F14, F15, F18). Its convergence curve not only descends more rapidly but also generally achieves a lower final fitness value, indicating that EPIMO achieves a better balance between global exploration and local exploitation when dealing with high-dimensional, multimodal, and non-smooth search spaces. EPIMO exhibits not only faster initial convergence but also reaches a stable solution region earlier (within 200 iterations), as observed from the plateau in its convergence curve, indicating effective exploitation under the defined convergence threshold. In contrast, other algorithms (e.g., WUTP) show unstable performance, with their effectiveness varying significantly across different functions.

These results visually confirm the performance advantage of EPIMO over existing techniques, while also revealing the applicability and inherent limitations of each algorithm through their differential performance across various function categories. Overall, the experiment demonstrates that the improvements in EPIMO’s algorithmic mechanisms are substantial, enabling it to address complex optimization challenges more stably and efficiently.

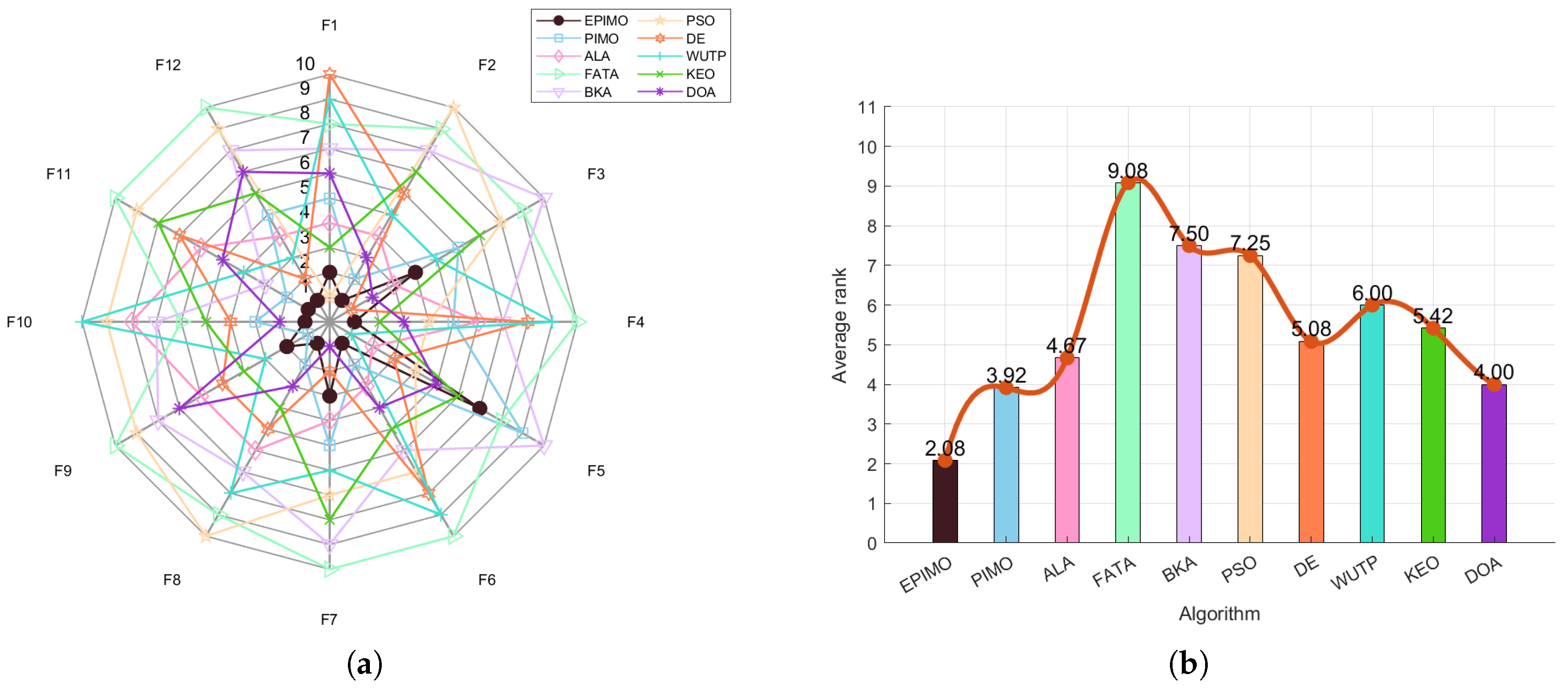

Figure 3 uses the comprehensive ranking mean as the vertical axis, clearly illustrating the overall performance ranking of ten algorithms across the complete set of test functions. EPIMO holds the first position with a significant advantage (ranking mean approximately 1.56), followed by PIMO (approximately 2.69) and ALA (approximately 2.76). Among traditional algorithms, PSO and DE rank sixth and seventh, respectively, showing moderate performance, while WUTP, KEO, and DOA are positioned lower (8th–10th), indicating weaker overall competitiveness on the current test set. Based on the analysis of the comprehensive ranking bar chart and the radar performance profile, the EPIMO algorithm demonstrates comprehensive and stable superiority in the overall evaluation across all 30 test functions. Its ranking mean significantly outperforms other algorithms, while in the radar chart, it exhibits a broad coverage area, a well-rounded contour, and proximity to the outer edge, particularly excelling in high-complexity functions. This indicates that EPIMO not only possesses superior accuracy in solving individual problems but also demonstrates exceptional robustness and generalization capability across diverse and multi-featured problem sets, further validating the comprehensive effectiveness of its algorithmic mechanism in balancing global exploration and local exploitation.
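The ranking mean used as the vertical axis can be illustrated with a short sketch: rank the algorithms on each function by average fitness (lower is better, ties sharing the mean rank) and then average each algorithm’s ranks over all functions. This is an assumed procedure, since the text does not spell out how the ranking mean was computed, and the algorithm labels and values below are placeholders rather than results from the paper.

```python
def mean_ranks(avg_fitness):
    """Map each algorithm to its mean rank over all functions.

    avg_fitness maps an algorithm name to a list of average fitness
    values, one per test function; lower values are better.
    """
    names = list(avg_fitness)
    n_funcs = len(avg_fitness[names[0]])
    totals = {name: 0.0 for name in names}
    for f in range(n_funcs):
        column = [avg_fitness[name][f] for name in names]
        for name, value in zip(names, column):
            better = sum(v < value for v in column)
            tied = sum(v == value for v in column)  # includes itself
            # Tied entries occupy positions better+1 .. better+tied.
            totals[name] += better + (tied + 1) / 2
    return {name: totals[name] / n_funcs for name in names}


# Placeholder data: three algorithms on three functions.
table = {
    "alg_a": [1.0, 2.0, 3.0],
    "alg_b": [2.0, 1.0, 4.0],
    "alg_c": [3.0, 3.0, 3.0],
}
ranking = mean_ranks(table)
print(sorted(ranking.items(), key=lambda item: item[1]))
```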

The experimental results substantiate that the EPIMO algorithm consistently achieves superior performance across a diverse spectrum of benchmark functions, outperforming both established methods (e.g., PSO, DE) and more recent alternatives (e.g., WUTP, KEO, DOA). Its strength is particularly evident in convergence speed, final solution quality, and robustness when applied to high-dimensional and multimodal optimization problems. The core effectiveness of EPIMO stems from its well-calibrated balance between global exploration and local exploitation, which mitigates premature convergence and ensures stable and competitive results.

Beyond benchmark validation, EPIMO demonstrates notable generalization capability across varied and complex problem landscapes, highlighting its strong practical potential. The proposed algorithmic framework thus offers both theoretical innovation and practical applicability, providing a reliable foundation for tackling challenging real-world optimization tasks. Future research may focus on extending its application to larger-scale and engineering-oriented problems, as well as conducting further component-wise analysis to refine its performance and adaptability.

4.3. Results and Analysis on CEC 2022

To further validate the robustness and scalability of the proposed EPIMO algorithm, this section presents a comprehensive performance evaluation on the CEC 2022 benchmark suite [32], which consists of diverse and challenging optimization functions. Table 5 and Table 6 present the performance of EPIMO on the CEC 2022 benchmark function set, with statistical tests reinforcing the credibility of the conclusions.

The tables compare EPIMO with nine other algorithms across 12 test functions using three metrics: minimum value (min), standard deviation (std), and average value (avg). Based on the numerical performance data and statistical test results, the EPIMO algorithm demonstrates comprehensive superiority in solving the CEC 2022 benchmark problems. In terms of solution quality, EPIMO achieves the best or near-optimal values across most test functions, exhibiting particularly outstanding precision on complex multimodal problems. For instance, on function F1, it attains near-perfect accuracy with both minimum and average values of and an exceptionally low standard deviation of , while on the challenging function F6, its average value of significantly outperforms competitors like FATA ( ). These results confirm EPIMO’s capability to converge to high-quality solutions while maintaining stability throughout the search process.

From a statistical perspective, the Wilcoxon rank-sum test provides rigorous validation of EPIMO’s performance advantages. The algorithm shows statistically significant superiority over most competitors, with p-values as low as across 29 of the 30 test functions. This overwhelming statistical evidence strongly supports that EPIMO’s superior performance is systematic and reliable rather than coincidental. While the algorithm maintains this dominant position overall, the statistical results also objectively identify specific scenarios where competition exists, such as on function F4 where EPIMO shows comparable performance with PIMO and PSO (p-values > 0.05), and on F26 where it demonstrates equivalent performance with DOA (p = ).

In conclusion, EPIMO establishes itself as a highly effective and reliable optimizer through its dual strengths in numerical precision and statistical robustness. The algorithm consistently delivers high-accuracy, low-variance solutions across diverse problem types, while its performance advantages are rigorously validated through comprehensive statistical testing. These findings not only highlight EPIMO’s practical value for complex optimization challenges but also contribute significantly to the advancement of projection-iterative methods in the field of global optimization, offering a robust framework for both theoretical research and engineering applications.

The EPIMO algorithm demonstrates comprehensive and significant performance advantages on the CEC 2022 benchmark test set. These charts consistently validate the effectiveness of the algorithm through multiple perspectives, including convergence curves, final solution comparisons, comprehensive rankings, and radar performance profiles.

Across the four test functions F1, F3, F8, and F11, the EPIMO algorithm demonstrates comprehensive and consistent performance superiority. In terms of numerical results, EPIMO achieves the best or near-best average fitness values on all four functions (F1: , F3: , F8: , F11: ), while maintaining extremely low standard deviations (F1: , F3: , F8: , F11: ). This combination of “low mean and low variance” is visually represented in the charts as the shortest bar height and the narrowest error bars, clearly indicating that EPIMO not only converges stably to high-quality solutions but also exhibits excellent repeatability and robustness in the solution process.

In terms of dynamic convergence processes (as shown in Figure 4 for functions such as F1, F5, F6, F9, F10, F11, and F12), EPIMO exhibits the fastest convergence speed and the lowest final fitness values on the majority of test functions, with particularly pronounced advantages on high-dimensional complex functions (e.g., F6, F10). This indicates that its improved search mechanism effectively balances global exploration and local exploitation, avoiding premature convergence.

Regarding static solution quality (as illustrated in the bar chart in Figure 5), EPIMO achieves the best or near-best final fitness values on almost all test functions, with high result stability, further confirming its solution accuracy and robustness.

The comprehensive ranking chart in Figure 6a shows that EPIMO ranks first with a significant advantage, with an average ranking far lower than other algorithms, indicating its superior overall performance across the entire test set. The radar chart in Figure 6b further reveals that EPIMO’s performance profile is closest to the outer edge across multiple function directions, with broad coverage and no apparent weaknesses, demonstrating excellent generalization capability and problem adaptability.

It is noteworthy that on some moderately complex functions, while EPIMO still maintains a lead, the gap with traditional algorithms (such as PSO and DE) is relatively smaller. In contrast, its advantages are more pronounced on extremely complex functions. This suggests that EPIMO possesses stronger competitiveness when handling high-difficulty, non-smooth, and multimodal optimization problems.

Overall, this set of charts systematically and consistently indicates that EPIMO not only excels in individual metrics but also achieves comprehensive improvements across multiple dimensions, including solution accuracy, convergence speed, stability, and generalization capability. It represents an efficient optimization algorithm with practical application potential. These results provide robust experimental support for the effectiveness of the algorithm’s improvement mechanisms and lay a foundation for subsequent research.


4.4. Application of Practical Engineering Optimization Problems

Building upon the foundational principles and common methodologies of optimization theory, this section shifts focus to the practical application of engineering optimization problems. It presents four classic and representative case studies to demonstrate the integration of mathematical models and optimization algorithms for solving complex real-world design challenges.

The discussion begins with the Himmelblau function optimization, a standard multimodal test function used to evaluate the performance and robustness of optimization algorithms in identifying global optima. Attention then turns to more applied mechanical design problems: the step-cone pulley problem addresses the minimization of weight while adhering to constraints on speed ratios and structural strength; the hydrostatic thrust bearing design problem seeks an optimal trade-off between lubrication performance and power loss to minimize total power consumption. Concluding the set, the three-bar truss design problem introduces a structural engineering challenge, aiming for the most economical material distribution under strict stress and deflection constraints.

These four problems progress in complexity from theoretical benchmarking to multi-constrained engineering design, collectively illustrating the critical role of optimization in enhancing performance, reducing cost, and ensuring system reliability. The analysis starts with the Himmelblau function.

4.4.1. Himmelblau Function Optimization

The Himmelblau function adopted in this study is a classical benchmark for nonlinear constrained optimization. Its structure simulates typical engineering scenarios characterized by multi-variable nonlinear coupling and narrow feasible regions, commonly encountered in chemical process systems and mechanical design [33]. This test case enables systematic validation of EPIMO’s performance in handling complex constraints, navigating feasible search spaces, and ensuring convergence stability, thereby providing empirical justification for the algorithm’s applicability to multi-constrained engineering problems such as process industry optimization and structural design. The problem is widely used for evaluating nonlinear constrained optimization algorithms. It involves five variables and six nonlinear constraints, and its detailed description is provided in [34].

Minimize

subject to

where

with the bounds
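For reference, the sketch below encodes the formulation of Himmelblau’s problem that is commonly used in the constrained-optimization literature: five variables and three two-sided inequalities, which together give six constraints. It is assumed, not confirmed, to match the model used in this study. The coefficients, the function names, and the reference point `x_star` are taken from that standard formulation rather than from this paper.

```python
def himmelblau_objective(x):
    """Objective of the standard Himmelblau constrained problem (assumed)."""
    x1, x2, x3, x4, x5 = x
    return 5.3578547 * x3**2 + 0.8356891 * x1 * x5 + 37.293239 * x1 - 40792.141


def himmelblau_constraints(x):
    """Return the three constraint expressions g1, g2, g3."""
    x1, x2, x3, x4, x5 = x
    g1 = 85.334407 + 0.0056858 * x2 * x5 + 0.0006262 * x1 * x4 - 0.0022053 * x3 * x5
    g2 = 80.51249 + 0.0071317 * x2 * x5 + 0.0029955 * x1 * x2 + 0.0021813 * x3**2
    g3 = 9.300961 + 0.0047026 * x3 * x5 + 0.0012547 * x1 * x3 + 0.0019085 * x3 * x4
    return g1, g2, g3


# Two-sided ranges for g1..g3 and box bounds for x1..x5 (literature values).
CONSTRAINT_RANGES = ((0.0, 92.0), (90.0, 110.0), (20.0, 25.0))
VARIABLE_BOUNDS = ((78.0, 102.0), (33.0, 45.0), (27.0, 45.0), (27.0, 45.0), (27.0, 45.0))


def total_violation(x):
    """Sum of all range and bound violations; zero means feasible."""
    violation = 0.0
    for value, (low, high) in zip(himmelblau_constraints(x), CONSTRAINT_RANGES):
        violation += max(0.0, low - value) + max(0.0, value - high)
    for value, (low, high) in zip(x, VARIABLE_BOUNDS):
        violation += max(0.0, low - value) + max(0.0, value - high)
    return violation


# Best-known solution reported in the literature for this formulation.
x_star = (78.0, 33.0, 29.995256025682, 45.0, 36.775812905788)
print(round(himmelblau_objective(x_star), 3), total_violation(x_star) < 1e-3)
```

A metaheuristic would typically minimize the objective plus a penalty proportional to `total_violation`; that penalty scheme is one common choice, not necessarily the constraint handling used here.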

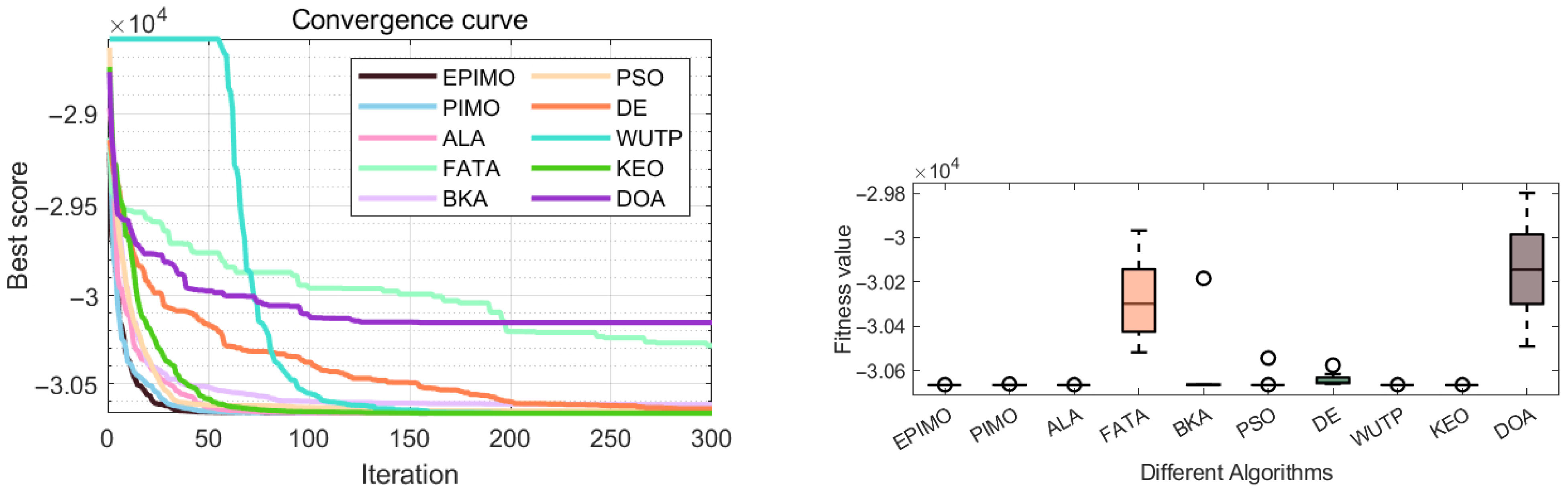

Based on the data in Table 7 and Table 8, the convergence curves, and the box plots (Figure 7), a comprehensive performance analysis of the optimization algorithms is presented below.

All algorithms achieve near-optimal solutions for the Himmelblau function, with cost values close to −30,665, indicating that the problem’s global optimum is relatively accessible. However, stability differs significantly across algorithms, which is critical in engineering practice. The performance statistics (Table 8) show that EPIMO, WUTP, and KEO not only reach the best performance but also exhibit very low standard deviations (0.00–0.03), reflecting high reliability. In contrast, FATA, BKA, and DOA display large standard deviations (150–227), considerable performance fluctuations, and notably worse worst-case results.

Convergence curves further reveal temporal dynamics. EPIMO, WUTP, and KEO converge quickly and steadily, approaching the optimum within about 50–100 iterations with minimal oscillation. PSO and DE show moderate convergence speed but tend to stagnate in later stages, with slight curve oscillations. FATA, BKA, and DOA perform poorly: they either converge slowly, require more iterations, or stagnate prematurely at suboptimal points, accompanied by noticeable curve instability.

Box plots in Figure 7 provide a clear visual comparison of robustness. EPIMO, WUTP, and KEO show extremely narrow boxes, almost lines, indicating highly consistent results across multiple runs with few outliers. Conversely, algorithms such as DOA exhibit wide boxes with lower-bound outliers, implying a higher risk of poor solutions in practice. Algorithmic robustness is thus a key determinant of reliability in real-world applications.

Integrating these analyses, EPIMO, WUTP, and KEO stand out as the top performers, excelling in convergence speed, solution quality, and run-to-run stability. ALA and PIMO may serve as viable alternatives, while FATA, BKA, and DOA are less suitable for reliability-sensitive tasks due to their instability.

In summary, a sound evaluation should combine convergence behavior (process insight), box plots (result distribution), and statistical metrics (quantitative assessment). This multi-angle framework supports informed algorithm selection for complex engineering optimization problems.

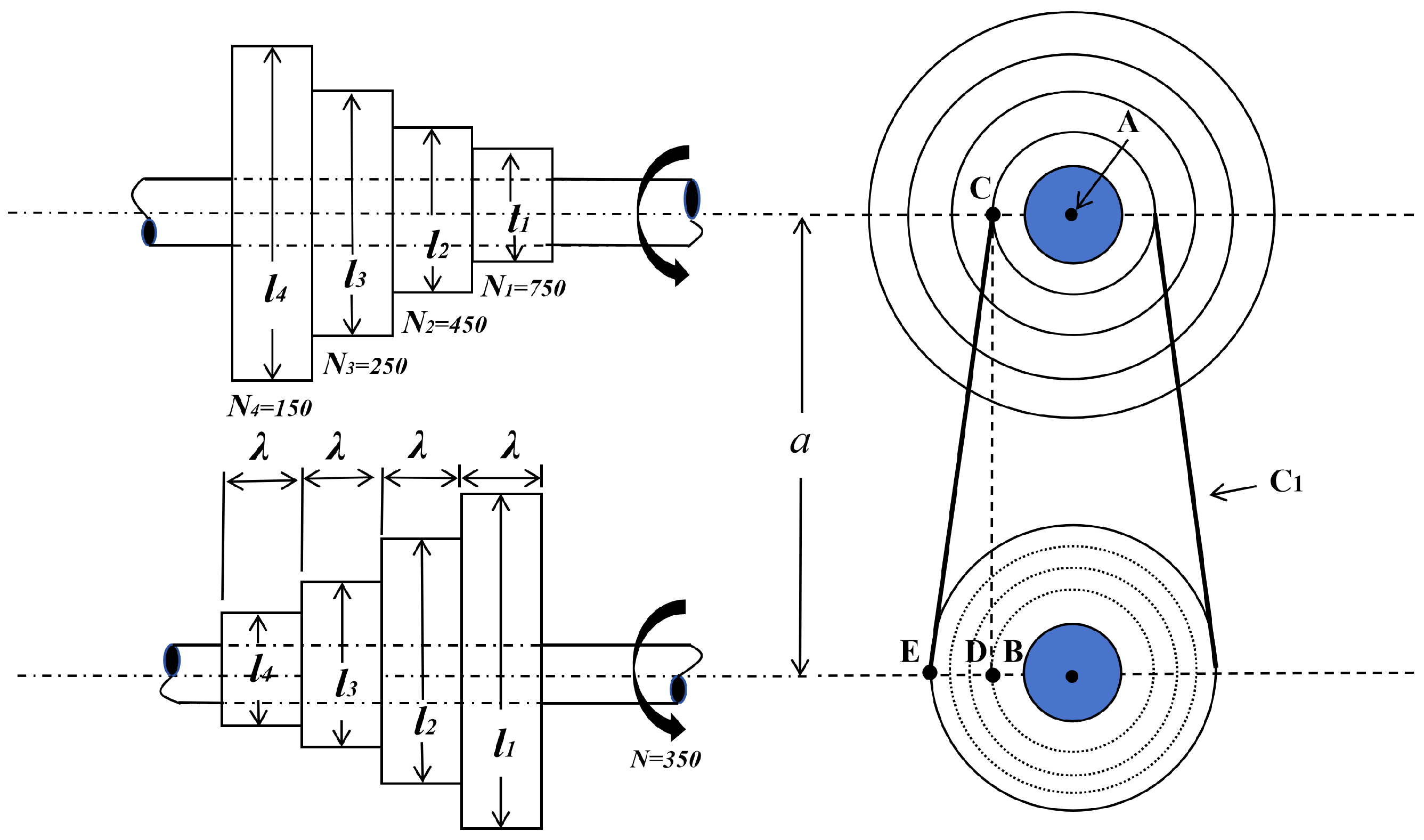

4.4.2. Step-Cone Pulley Problem

The step-cone pulley design represents a classical constrained optimization problem that aims to minimize the weight of a four-stage stepped pulley assembly. As illustrated in Figure 8, the system comprises multiple pulley stages with distinct diameters and a common width. The optimization is subject to 11 nonlinear constraints, which ensure the required power transmission of 0.75 horsepower per stage [35].

Given the strong nonlinear couplings among design variables and the presence of multiple constraints, this problem poses significant challenges in maintaining feasibility while converging to a globally optimal design. It therefore serves as a rigorous benchmark for evaluating the constraint-handling ability and convergence performance of modern metaheuristic optimizers such as EPIMO and related algorithms.

Minimize:

where

denotes the length associated with each stage,

the rotational speeds, and

the material density. Subject to:

where:

Analysis of the optimization results for the stepped-cone pulley problem reveals significant performance disparities among different algorithms when addressing this type of strongly nonlinear constrained engineering problem. Integrating the variable optimization outcomes (Table 9) with the performance statistics (Table 10), the EPIMO and KEO algorithms demonstrate the most outstanding performance. Their obtained optimal objective function values (weight) are as low as 16.16 kg and 16.10 kg, respectively, and the corresponding design variables (the pulley lengths and the width) exhibit a reasonable stepwise increasing distribution, consistent with physical expectations. More importantly, the standard deviations (std) of these two algorithms are extremely small (0.30 and 0.64, respectively), and their mean (avg) and median values are both close to the optimum. This confirms that they not only achieve high optimization accuracy but also possess exceptional stability and reliability, enabling them to consistently converge to high-quality feasible solutions across repeated runs.

The convergence curve and the fitness value distribution in Figure 9 show that EPIMO has significant advantages in both convergence speed and solution accuracy. The convergence plot illustrates that EPIMO rapidly descends to and stabilizes near the theoretical optimum, consistently maintaining the lowest curve position throughout the iterations. In contrast, algorithms such as FATA remain at substantially higher fitness levels, exhibiting markedly slower convergence. Correspondingly, the box plot of fitness values reveals that EPIMO achieves the lowest median fitness along with the most compact distribution, further confirming its superior solution quality and robustness. Collectively, these visual results indicate that EPIMO outperforms the comparative algorithms in terms of convergence performance and solution stability.

Thus, this case study demonstrates that when dealing with complex constrained engineering optimization problems, an algorithm’s constraint-handling capability and search robustness are at least as critical as its pure “optimization” capability. The comprehensive performance exhibited by algorithms such as EPIMO and KEO in this problem establishes them as reliable choices for solving similar mechanical design optimization tasks.

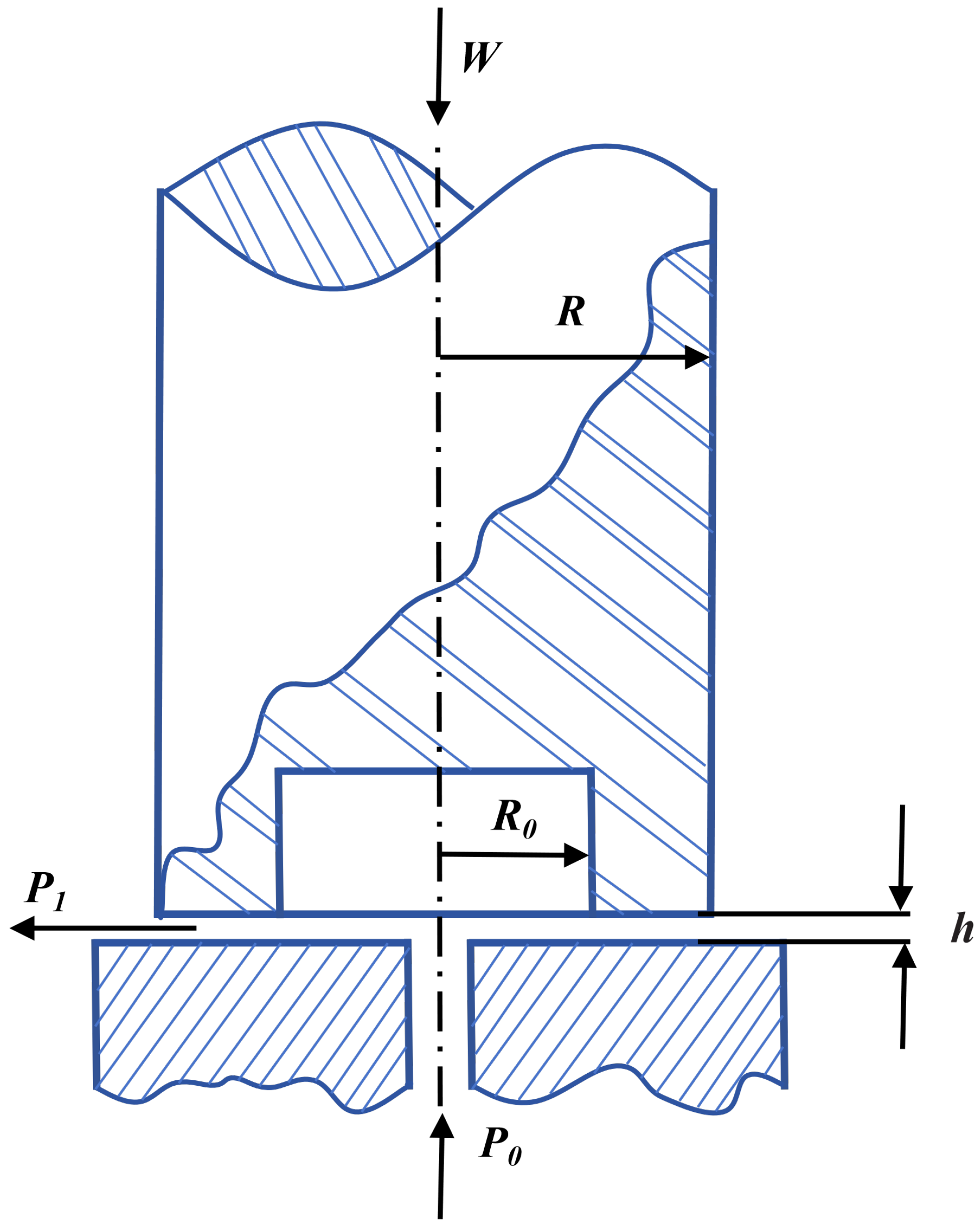

4.4.3. Hydrostatic Thrust Bearing Design Problem

The hydrostatic thrust bearing design optimization aims to minimize the total power loss while satisfying seven nonlinear constraints related to load capacity, pressure, temperature rise, and geometric feasibility. As shown in Figure 10, the design involves four key variables: recess radius, bearing step radius R, lubricant viscosity, and flow rate Q. The problem is recognized as a challenging benchmark due to the strong coupling among variables and the presence of multiple active constraints [36].

Objective Function: Minimize the total power loss:

Design variables:

With bounds:

Based on an integrated analysis of the convergence curves and fitness distribution plots in Figure 11, the performance statistics in Table 11, and the variable optimization results in Table 12, this paper provides a comprehensive evaluation of the performance of different algorithms on the hydrostatic thrust bearing design optimization problem. The algorithms exhibit significant differences in capability when solving this strongly constrained, nonlinear engineering problem, which are systematically revealed across multiple dimensions: the dynamic convergence process, quantitative statistical metrics, and the quality of the final solutions.

High-performing algorithms, such as EPIMO and PSO, demonstrate rapid and stable characteristics during the convergence process, with their fitness values descending swiftly on a logarithmic scale and stabilizing at low levels. This dynamic behavior aligns closely with their statistical profiles: EPIMO achieves an objective function value (19,455.12) closest to the globally optimal solution documented in the literature, while its exceptionally low standard deviation (125.67) reflects outstanding robustness and result reproducibility. PSO also shows excellent performance, albeit with slightly inferior stability. Observing the fitness distribution plot, the boxes for both algorithms are compact and positioned at low levels, visually confirming the concentration and superiority of their output. In terms of solution space distribution, the design variable values converged upon by these algorithms are highly concentrated within the parameter region widely recognized in the literature as optimal (, , , ). Moreover, they strictly satisfy all engineering constraints, thereby ensuring the feasibility and practical value of the results.

In contrast, certain algorithms such as PIMO, ALA, and FATA exhibit various degrees of limitation. Their convergence trajectories show considerable fluctuations, descend slowly only in later iterations, or even diverge completely in some runs. Statistical data indicate that the worst values for these algorithms can reach magnitudes of to , differing from their respective min values by several orders of magnitude, accompanied by large standard deviations. The long whiskers or discrete outliers corresponding to these algorithms in the fitness distribution plot provide an intuitive representation of this extreme volatility and instability. A deeper analysis of their variable optimization results reveals that the solutions generated often deviate from the optimal parameter space. For instance, the R and Q values for the DOA algorithm are significantly higher, leading to severe violations of key constraints such as inlet pressure or load capacity, rendering its objective function value practically meaningless from an engineering perspective.

In summary, the convergence behavior reveals the search efficiency and trajectory stability of an algorithm, the performance statistics quantify the reliability boundaries and anomaly risks of its results, and the variable optimization outcomes provide the final judgment based on solution quality and feasibility. The fitness distribution plot, serving as a visual extension of the statistical data, makes performance comparisons more intuitive. All evidence converges to a consistent conclusion: when addressing complex constrained optimization problems like the hydrostatic thrust bearing design, a successful algorithm must deeply integrate powerful global exploration capabilities, refined local exploitation strategies, and efficient constraint-handling mechanisms. The comprehensive superiority demonstrated by the EPIMO algorithm in this study exemplifies this balance and integrative capability, providing strong support for its application in the field of complex engineering optimization.

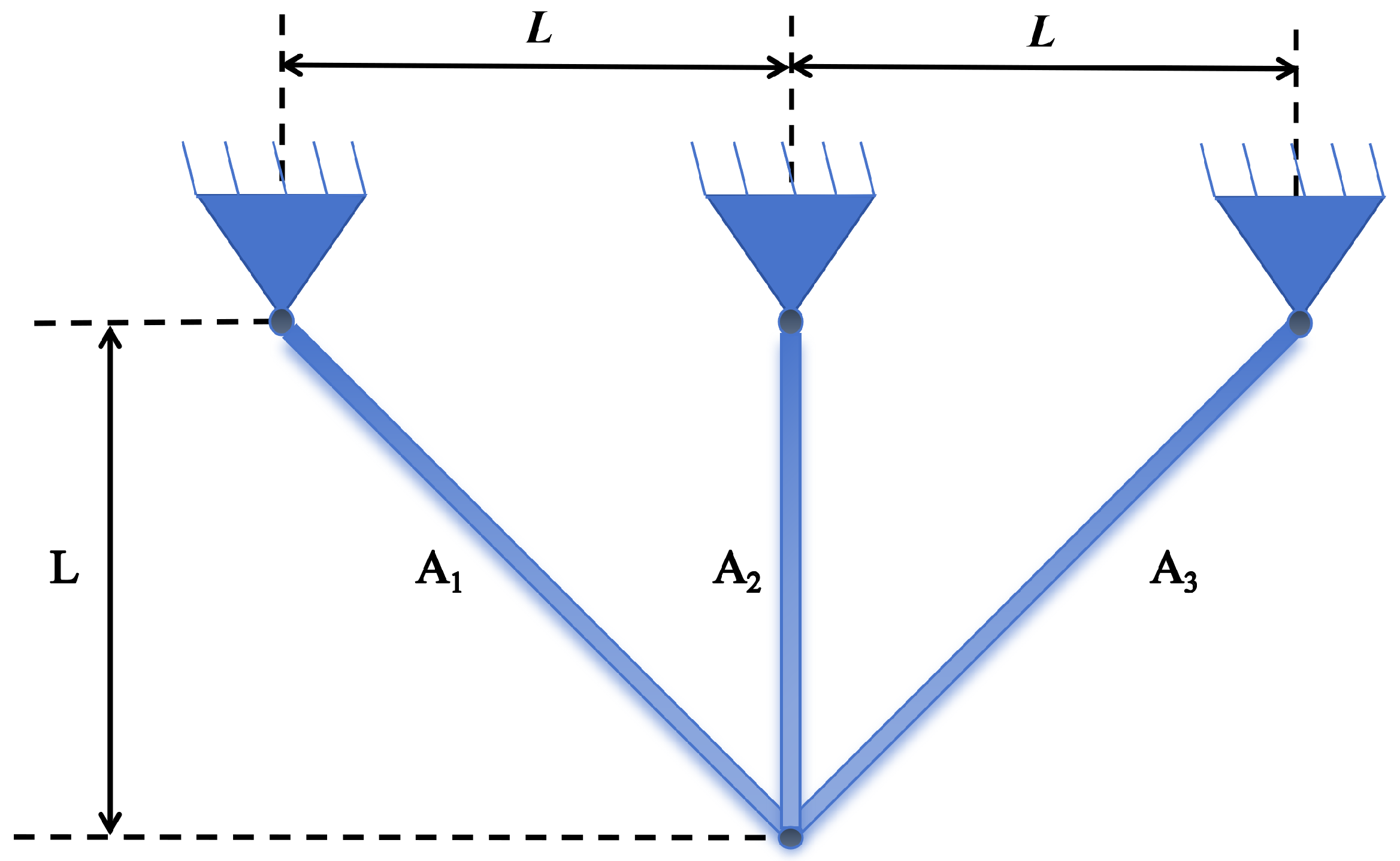

4.4.4. Three-Bar Truss Design Problem

The three-bar truss design problem is a classic benchmark in structural optimization, aiming to minimize the volume of a truss structure under specified loading conditions. A schematic of the three-bar truss design problem is shown in Figure 12.

The problem involves two design variables, namely the cross-sectional areas of the bars [37]. Its mathematical model is given as follows:

Objective Function (minimize volume):

Constraints (stress constraints):

where the constants are the bar length, the external load, and the allowable stress.

Due to its highly nonlinear constraints, narrow feasible region, and strong coupling among variables, this problem serves as a standard test case for evaluating the robustness, precision, and constraint-handling capability of optimization algorithms. It is widely used in lightweight structural design in civil and mechanical engineering.
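As a point of reference, the sketch below encodes the three-bar truss model in the form usually quoted in the literature, with two cross-sectional areas `x1` and `x2` bounded in [0, 1]. The constant values (bar length 100 cm, load and allowable stress both 2 kN/cm²), the symbol names, and the reference point are assumptions taken from that standard formulation, not values confirmed by this paper.

```python
import math

# Assumed literature constants for the standard three-bar truss problem.
BAR_LENGTH = 100.0       # cm
LOAD = 2.0               # kN/cm^2
ALLOWABLE_STRESS = 2.0   # kN/cm^2


def truss_volume(x1, x2):
    """Structural volume to be minimized."""
    return (2.0 * math.sqrt(2.0) * x1 + x2) * BAR_LENGTH


def truss_constraints(x1, x2):
    """Stress constraints; each value must be <= 0 for a feasible design."""
    denom = math.sqrt(2.0) * x1**2 + 2.0 * x1 * x2
    g1 = (math.sqrt(2.0) * x1 + x2) / denom * LOAD - ALLOWABLE_STRESS
    g2 = x2 / denom * LOAD - ALLOWABLE_STRESS
    g3 = 1.0 / (x1 + math.sqrt(2.0) * x2) * LOAD - ALLOWABLE_STRESS
    return g1, g2, g3


# Best-known design reported in the literature (rounded to six decimals).
x1_ref, x2_ref = 0.788675, 0.408248
print(round(truss_volume(x1_ref, x2_ref), 2))
print([round(g, 3) for g in truss_constraints(x1_ref, x2_ref)])
```

At the reference design the first stress constraint is essentially active (close to zero), which illustrates why the optimum lies on the boundary of the feasible region.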

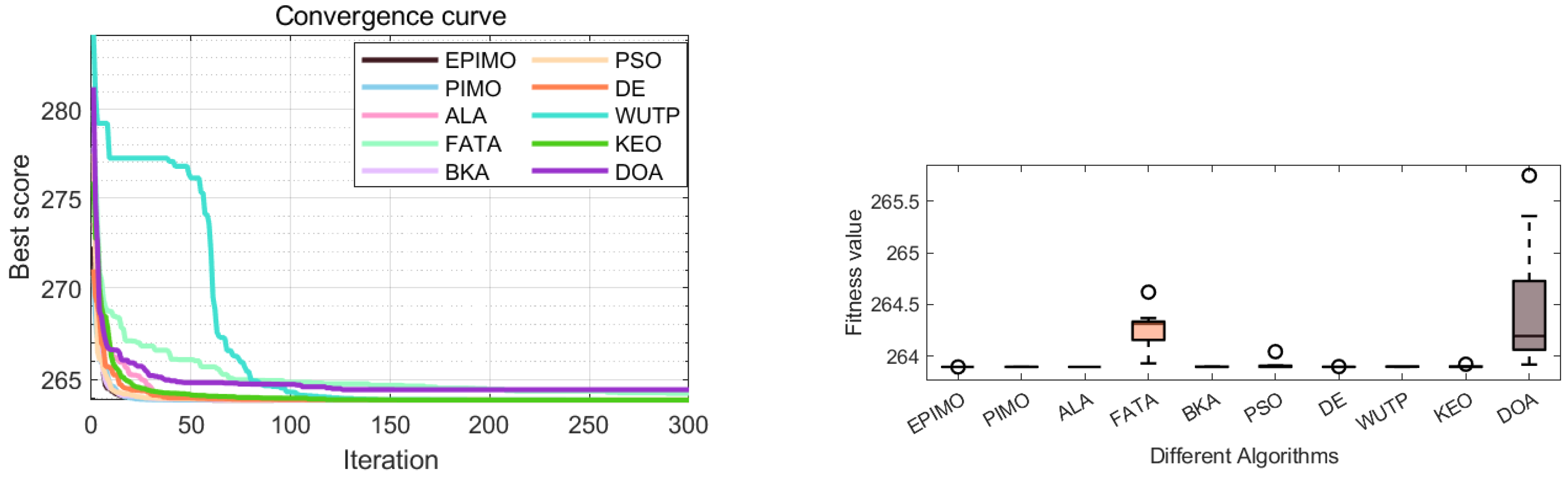

From the convergence curve in Figure 13, it is observed that most algorithms rapidly converge to a narrow region near the optimal value (approximately 263.9) within the early iterations (around 50 iterations) and maintain stability thereafter. This behavior strongly aligns with the extremely low standard deviation (std) values reported in the performance statistics table, particularly for algorithms such as EPIMO (6.80 × 10⁻⁸) and ALA (1.28 × 10⁻⁵). Their curves appear almost horizontal, visually demonstrating exceptional convergence stability and result reproducibility. In contrast, the convergence curves of algorithms like FATA and DOA exhibit slight fluctuations or slower convergence in later stages. This corresponds directly to their significantly higher standard deviations (1.90 × 10⁻¹ and 6.25 × 10⁻¹, respectively) and relatively larger worst-case values (264.62 and 265.75, respectively) in the statistical table, indicating that these algorithms fail to consistently lock onto the optimal solution in certain runs, reflecting a degree of search uncertainty.

The fitness value distribution plot in Figure 13 offers an intuitive statistical complement to the above analysis. The box plots of high-performing algorithms such as EPIMO, PIMO, and ALA are expected to show extremely compact shapes with the lowest box positions. This visually confirms the concentration and reliability of their outputs, consistent with the highly concentrated and near-optimal values of their minimum (min) and median statistics. In contrast, the box plots of algorithms like FATA and DOA likely display longer whiskers or noticeable outliers, corresponding to their larger standard deviations and worst-case values, reflecting dispersion and instability in result distribution.

In summary, the optimal solution space for the three-bar truss design problem is relatively well-defined and accessible, with most algorithms capable of locating high-quality solutions, as shown in Table 13 and Table 14. However, the key differences among algorithms lie in convergence stability and result robustness. The EPIMO algorithm demonstrates near-perfect performance in this problem: its convergence curve is smooth, its statistical indicators are highly consistent, and its distribution is concentrated, reflecting an excellent balance between exploration and exploitation as well as numerical stability. This conclusion reaffirms that even for relatively straightforward engineering optimization problems, an effective algorithm must ensure not only optimization accuracy but also reliability in the solution process and consistency in results, which are critical for practical engineering applications.