1. Introduction

Human physical activity refers to any body movement produced by skeletal muscles, or any change in limb position over time against gravity, that results in energy expenditure [1,2]. An activity recognition and monitoring system identifies and evaluates the actions carried out by a person on a daily basis in real conditions of the surrounding environment, and provides context-aware feedback for healthcare and elder care. Daily activity is a complex concept; it depends on many factors, including physiological, anatomical, psychological, and environmental effects. Human daily activity tracking was traditionally addressed with image processing and vision-based techniques [3,4]. However, these techniques may violate user privacy, mostly require infrastructure support such as video cameras installed in the monitored areas, and depend heavily on lighting conditions. Several works consider wearable sensors, either on their own or combined with ambient sensors, for activity recognition [5,6,7]. Many of the early efforts focused on detecting falls and daily-life activities, mainly using one or more wearable accelerometers. However, it may not be convenient for patients to carry out daily activities with sensors worn on the hands and/or limbs. The inertial sensors of smartphones can be a more convenient option for activity recognition, as most users almost always carry smartphones. Most smartphones are equipped with accelerometer, gyroscope, compass and proximity sensors. In addition, these devices have communication facilities such as Wi-Fi and Bluetooth by which sensor readings can be transferred to a server. Continuous raw data are collected from several sensors of the smartphone during monitoring. The data are processed to extract useful features, which are fed to a classification algorithm to train an appropriate activity model that can recognize a variety of activities.

Daily activities can be categorized in two ways: coarse-grained (simple) activities and fine-grained (detailed) activities. A coarse-grained activity (Sit, Stand, Walk, etc.) is a simplified, larger component of basic activity, whereas a fine-grained, or detailed, activity refers to smaller distinguishable subcomponents that can be composed together to form a coarse-grained activity. Examples of detailed activities are Sit on floor and Sit on chair. Identifying detailed activity can be beneficial for many medical applications such as elderly assistance at home, post-trauma rehabilitation after surgery, and detection of gestures, motions and fitness of diabetic patients. The population of elderly people who live alone, mostly suffering from chronic diseases, has increased significantly. Stroke patients need assistance and require regular monitoring during rehabilitation, where increased walking ability is the focus. Accurate information on daily activity has the potential to improve regular monitoring and treatment in several diseases, and can sometimes reduce the high burden of hospitalization costs.

Existing works [8,9,10] mostly focus on coarse-grained activity recognition. The few works on detailed activity recognition [11,12] use several inertial sensors that may not be present in many smartphone configurations, so the resulting systems are not ubiquitous. In the literature, most works [9,13,14] take a single-classifier approach to activity recognition with smartphones. In [15,16], the authors use an ensemble learning technique for activity recognition. In real life, the training and testing environments for activity recognition are not always the same. Generally, raw data from several devices are collected to monitor activities. Because of differences in hardware configuration and calibration, sensor readings vary from one device to another. The orientation of the smartphone, which depends on how each subject uses it, is a further factor affecting the classification accuracy of the system. Several sensors are used in [17] to make the recognition system device independent. A recent work [18] addresses different usage behaviors, such as smartphones kept in a coat pocket or a bag. However, no work could be found that enables detailed activity recognition when the training data are collected using one device at one position (say, right trouser pocket) and activity is recognized for test data collected from a different device kept at the same or a different position (say, shirt pocket). Consequently, our main contributions in this work are as follows:

- (a)

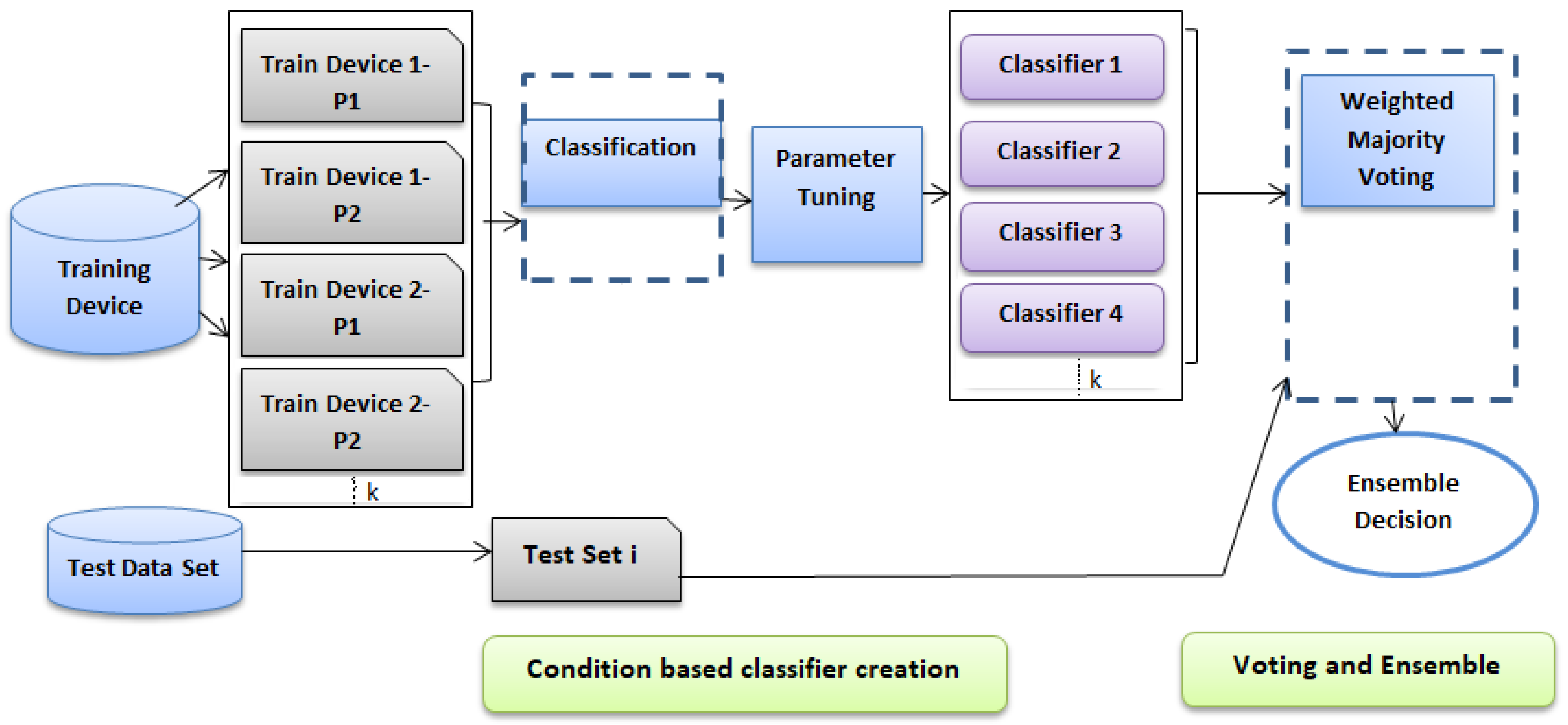

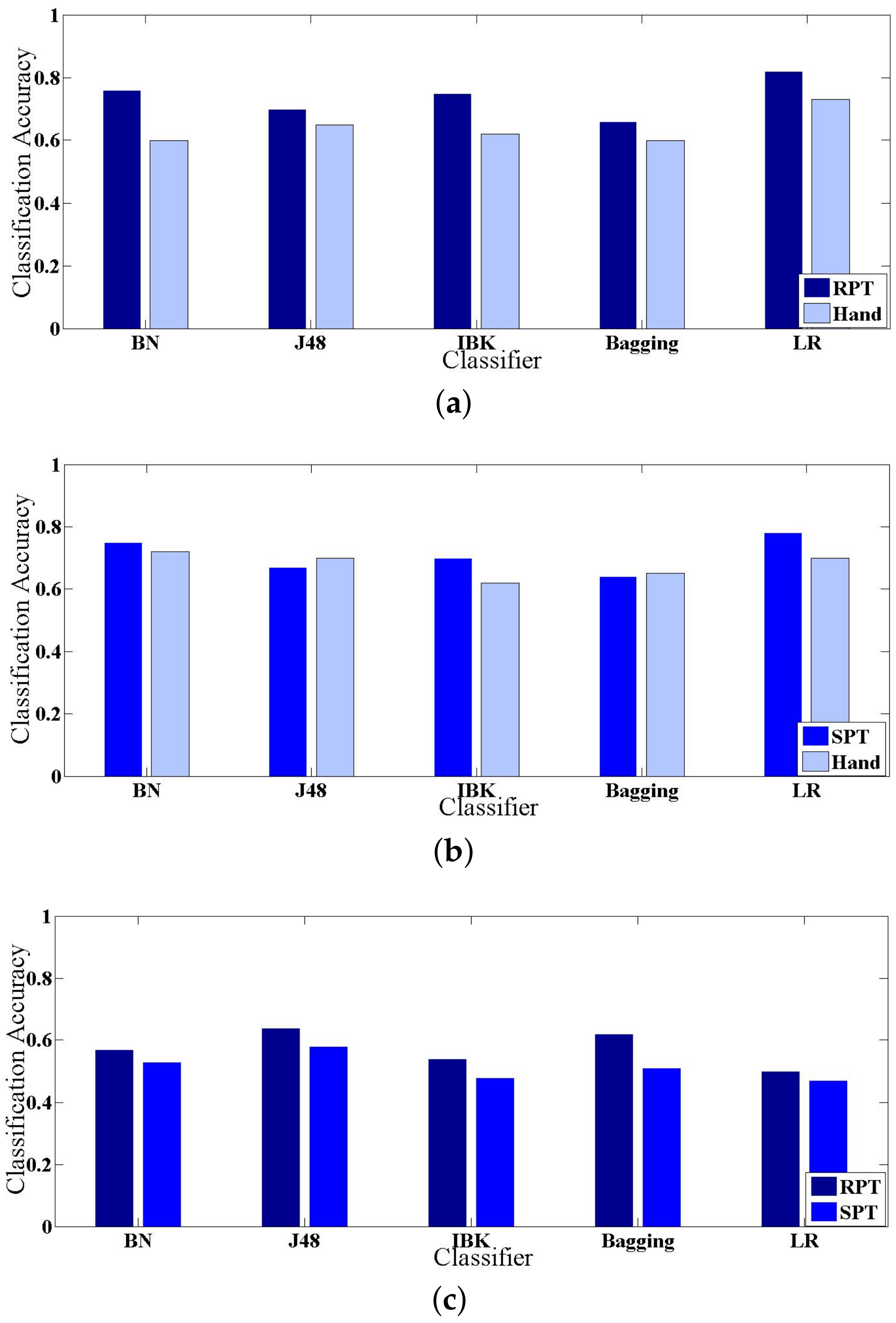

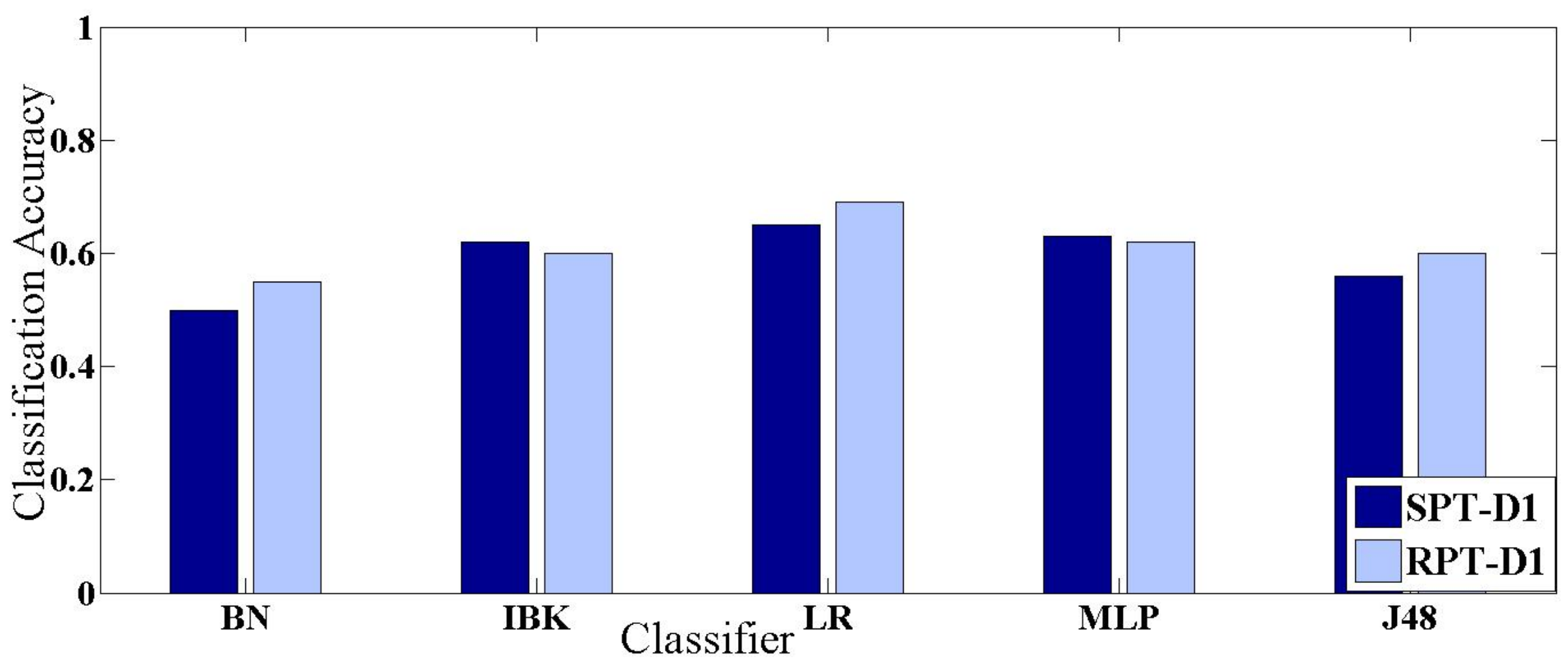

We propose an activity recognition framework using an ensemble of condition based classifiers to identify detailed static activities (Sit on chair, Sit on floor, Lying right, Lying left) as well as detailed dynamic activities (Slow walk, Brisk walk). The proposed technique works irrespective of device configuration and usage behavior.

- (b)

The process utilizes accelerometer and gyroscope sensor of smartphones that are available in almost all smartphones by most of the manufacturers, thus making the framework ubiquitous.

- (c)

The proposed technique can identify the effect of accelerometer and gyroscope for identifying individual detailed activity.

The rest of this paper is organized as follows: Section 2 describes state-of-the-art techniques for activity recognition through smartphones. Definition of the problem is discussed in Section 3. Design of the proposed system is detailed in Section 4. Section 5 describes the experimental setup and summarizes the results. Finally, we conclude in Section 6.

2. Related Work

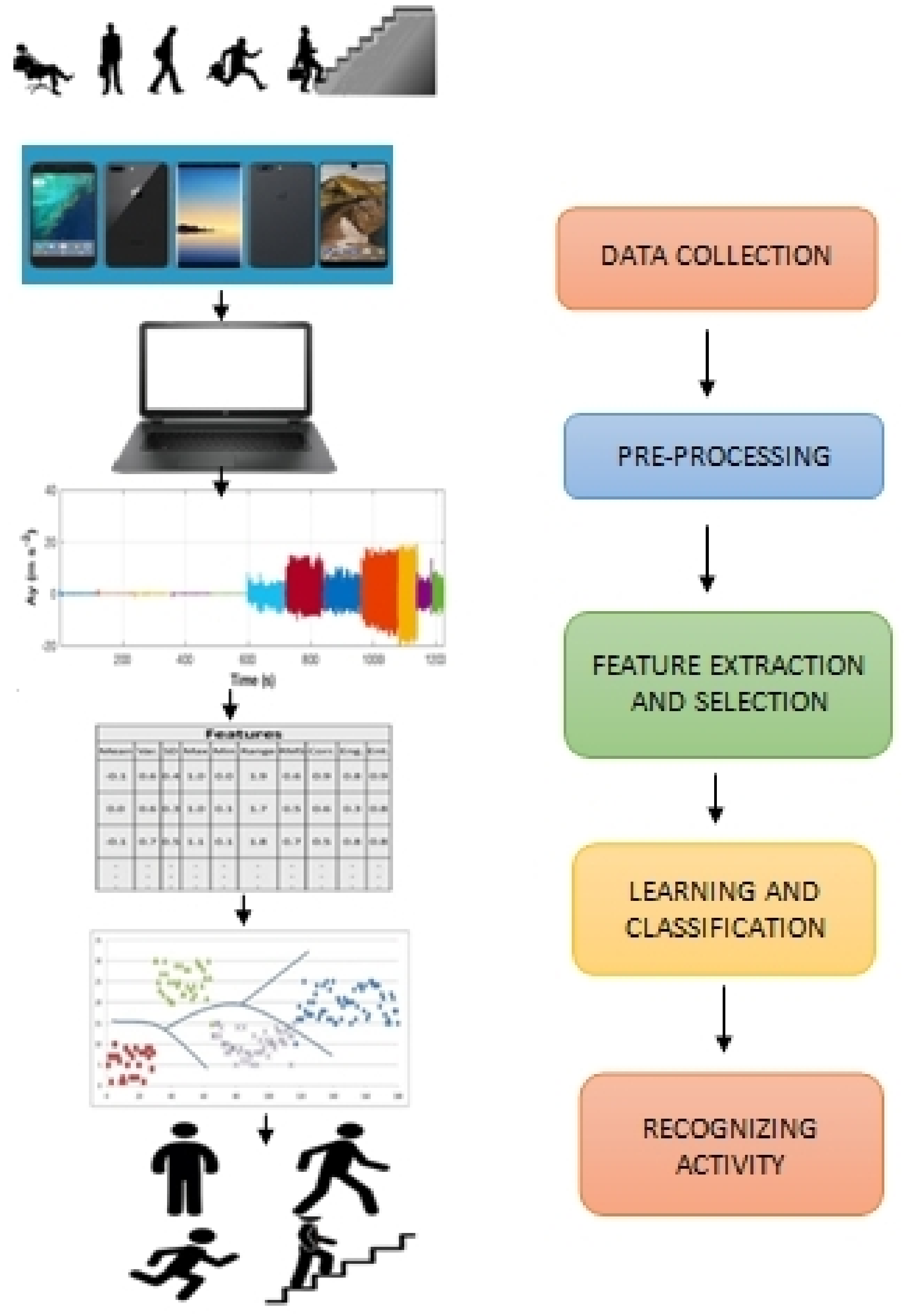

A typical activity identification framework mostly follows four phases: (1) data collection and preprocessing; (2) feature extraction; (3) feature selection; and (4) classification, as shown in Figure 1. Data are collected through several sensors with respect to human usage and behavior. Preprocessed data are sent to a server for further processing. Several time and frequency domain features are extracted and selected from the preprocessed data. The classification techniques are applied on the server side to recognize an activity.
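The feature extraction phase described above can be illustrated with a minimal sketch. It assumes raw accelerometer samples arrive as (x, y, z) tuples, segments them into fixed-size overlapping windows, and computes a few time domain statistics on the orientation-independent magnitude. The function names, window size, step and choice of statistics are illustrative assumptions rather than the configuration used in any of the cited works, and frequency domain features are omitted for brevity.

```python
import math
from statistics import mean, pstdev


def magnitude(sample):
    """Euclidean magnitude of one (x, y, z) reading; independent of axis orientation."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def sliding_windows(samples, size, step):
    """Yield fixed-size windows; a trailing partial window is dropped."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def time_domain_features(window):
    """Mean, population standard deviation, min and max of the magnitude signal."""
    mags = [magnitude(s) for s in window]
    return {
        "mean": mean(mags),
        "std": pstdev(mags),
        "min": min(mags),
        "max": max(mags),
    }


def extract_features(samples, size=4, step=2):
    """One feature dictionary per window (size and step are hypothetical defaults)."""
    return [time_domain_features(w) for w in sliding_windows(samples, size, step)]
```

Each resulting feature dictionary would correspond to one row of the training or test set passed on to feature selection and classification.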

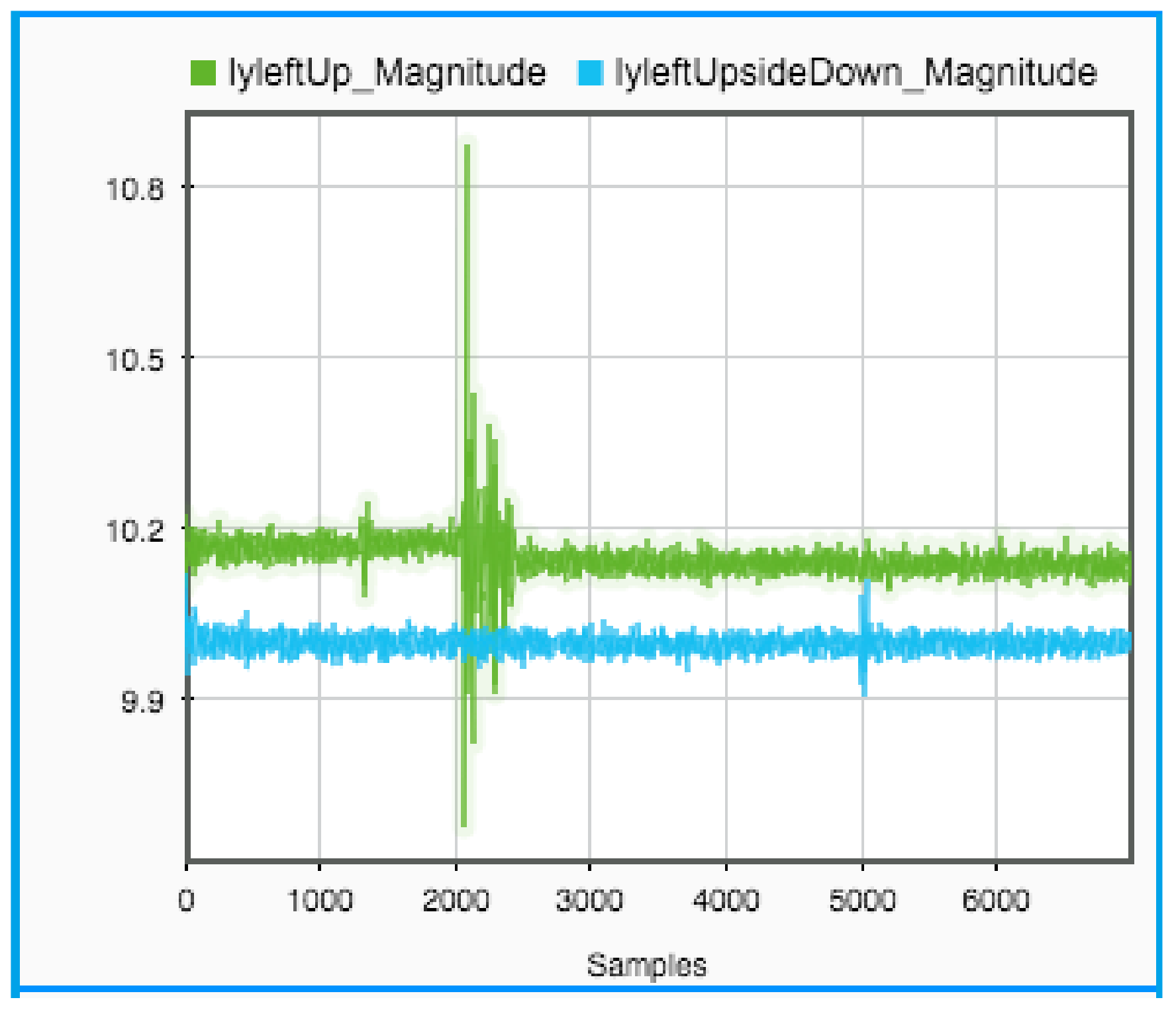

Data are collected from wearable sensors and smart handhelds. One of the most important issues in data collection is the selection of sensors and the attributes to be measured, which play an important role in the activity recognition system’s performance. Incorrect selection of sensors may adversely affect the recognition performance. Inertial sensors such as accelerometers and gyroscopes are used for activity recognition. The accelerometer measures non-gravitational acceleration of a smart device while the gyroscope senses the rate of change of orientation or angular velocity.

Human Activity Recognition can be broadly classified, on the basis of the medium of data collection, into two categories: “Using Wearable Sensor” and “Using Smartphone”. Some relevant state-of-the-art works are summarized in Table 1. Most of these works use wearable accelerometers or the accelerometer sensors of smartphones. It is evident that work has been done in different directions: not only on detecting detailed daily life activities and falls [7,19], but also on online activity recognition [20], publishing benchmark datasets [21], and analyzing different usage behaviour, as in [18,22].

A lot of research has been done in Human Activity Recognition (HAR) using wearable devices [25], mostly using acceleration data independent of orientation [26]. A combination of an accelerometer and a gyroscope placed on the neck of the user is used in [27] to identify activities. The authors evaluate the effect of adding gyroscope data to accelerometer data and maintain an individual threshold value for identifying each of several activities. The authors in [19] use gyroscopes and accelerometers placed on the thigh and chest of the user to identify several activities as well as falls, using the inclination angle and accelerometer value. An unintentional transition to a lying posture is regarded as a fall, where large changes in accelerometer and gyroscope readings can be observed. The authors in [19] differentiate intentional and unintentional transitions by applying thresholds to the peak values of acceleration and of angular velocity from the gyroscope. A certain change of angular velocity determines the fall of the subject.
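The threshold-based distinction between intentional and unintentional transitions described above can be sketched as follows. This is only an illustration of the general idea: the threshold values, the function names, and the rule that both peaks must exceed their thresholds are assumptions made for this example, not parameters reported in [19].

```python
import math

# Hypothetical thresholds, chosen only for illustration.
ACC_PEAK_THRESHOLD = 2.5 * 9.81  # peak acceleration magnitude, m/s^2
GYRO_PEAK_THRESHOLD = 3.0        # peak angular velocity magnitude, rad/s


def peak_magnitude(readings):
    """Largest Euclidean magnitude among (x, y, z) readings in a window."""
    return max(math.sqrt(x * x + y * y + z * z) for x, y, z in readings)


def is_unintentional_transition(acc_window, gyro_window,
                                acc_threshold=ACC_PEAK_THRESHOLD,
                                gyro_threshold=GYRO_PEAK_THRESHOLD):
    """Flag a transition as unintentional (a possible fall) when both the
    acceleration peak and the angular velocity peak exceed their thresholds.
    Requiring both is an assumption of this sketch."""
    return (peak_magnitude(acc_window) > acc_threshold
            and peak_magnitude(gyro_window) > gyro_threshold)
```

In practice such thresholds would need to be tuned per sensor placement, since readings at the thigh and at the chest differ for the same movement.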

A few works can also be found on wearable sensors using classifiers to recognize activities. In [28], the authors consider accelerometers kept at three positions on the human body (wrist, ankle, chest) to monitor daily activities and apply a Decision Table to the preprocessed data for activity classification. In [29], several activities are monitored using a customized device configured with an accelerometer attached to the wrist. However, the gyroscope alone has not gained a significant place in human activity recognition, although it can work in combination with other sensors. Using many wearable sensors for activity detection may itself hamper the movement of a person. In [30], “Vital Signs” are detected in addition to acceleration data, since vital signs vary with each activity; for example, when an individual begins running, their heart rate and breath amplitude are expected to increase. This then becomes the vital sign for that activity and provides better accuracy. However, the advent of smartphones and the exponential increase in their usage over the past decade have resulted in a growing interest in HAR using smartphones for data collection, as smartphones provide a somewhat more convenient wearable computing environment.

Several works extensively study the use of smartphone-based inertial sensors such as accelerometers and gyroscopes in activity recognition. The Activity Recognition API by Google [31] provides insights into what users are currently doing and is used by several Android applications to enhance their user experience. The API automatically detects activities by periodically reading short bursts of sensor data and processing them using machine learning models. However, the set of activities that it can recognize is limited to coarse-grained activities such as “sit”, “stand”, “walk”, “run”, “biking”, “device in vehicle”, etc. A few works focus on using minimal sensors, such as accelerometers only [10,22,24], to make the framework energy efficient and ubiquitous. The works in [22] and [24] both use state-of-the-art classifiers including MultiLayer Perceptron (MLP); however, the work in [22] aims at detecting detailed activities like slow walk and fast walk, while in [24] mainly coarse-grained activities are covered. In [22], the average accuracy of a combination of classifiers is used for recognizing activities, resulting in around 91% accuracy. A few works consider the gyroscope along with the accelerometer for gait analysis [32,33] and fall detection [34]. In [33], K-Nearest Neighbor (KNN) is used and achieves around 80% accuracy, while different supervised learning algorithms are explored in [34], with Support Vector Machine (SVM) giving the highest accuracy.

In [14], the authors consider all sensors available in smartphones, such as the accelerometer, gyroscope and magnetometer, for identifying human activity and explain the role of each sensor. The combination of accelerometer and gyroscope [14,35] is found to yield better results in some aspects. In [14], several machine learning algorithms are applied for activity recognition, while SVM is used to classify activities in [35]. The authors in [36] show the potential of using only the magnetometer and how it affects activity recognition. In [37], the authors use a combination of an accelerometer and a gyroscope, and the recognition accuracy for some of the activities increases by 3.1% to 13.4%. The authors in [15] consider Decision tree, Logistic regression and Multilayer neural network algorithms as base classifiers and design a majority voting based ensemble [38] to identify human activity. It is found to increase accuracy by up to 3.6% over a single-classifier approach. In [16], the authors combine multiple classifiers to improve the accuracy of activity recognition by up to 7%, reaching an overall accuracy of 93.5% under 5-fold cross validation. In this way, the outputs of different classifiers can be combined using several fusion techniques to improve classification accuracy and efficiency.
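Majority voting, the fusion rule mentioned above for [15], can be sketched in a few lines. The sketch assumes each base classifier has already produced one label per sample; the function names and the tie-breaking rule (the earliest-listed classifier among the tied labels wins) are assumptions made for illustration and are not taken from the cited works.

```python
from collections import Counter


def majority_vote(predictions):
    """Return the label predicted by the most base classifiers.
    Ties go to the tied label that appears first in the list (an assumption)."""
    if not predictions:
        raise ValueError("at least one prediction is required")
    counts = Counter(predictions)
    top = max(counts.values())
    for label in predictions:
        if counts[label] == top:
            return label


def ensemble_predict(per_classifier_labels):
    """Fuse label sequences from several classifiers, sample by sample.
    per_classifier_labels[i][j] is classifier i's label for sample j."""
    return [majority_vote(list(votes)) for votes in zip(*per_classifier_labels)]
```

For example, if three base classifiers label a sample as Sit, Sit and Stand, the ensemble output for that sample is Sit.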

In [39], the authors identify activity by applying KNN to a combination of data from accelerometers, magnetometers, gyroscopes, linear acceleration and gravity, which performs better than the accelerometer alone. However, most of the works above focus on coarse-grained activities [8,9]. Few works could be found on detailed activity recognition [7,11,12]. In [7], the authors classify a number of detailed daily life activities with the help of several wearable sensors. They even predict a possible classification of slow walk and brisk walk on the basis of speed. The authors in [12] use wearable sensors to monitor detailed activity along with fall detection using a Hidden Markov Model (HMM). In [40], HMM is applied to recognize activities from smartphone and ambient sensor data. User ambience is also used in [11], where the authors use accelerometers and gyroscopes for body locomotion, temperature and humidity sensors for sensing the ambient environment, and location (via communication with Bluetooth beacon location tags). Two-level supervised classification is performed to detect the final activity state, for which the authors design a modified conditional random field based supervised activity classifier. However, the use of several sensors makes the systems more expensive and inconvenient for users.

In reality, for smartphone-based ubiquitous activity recognition, we cannot impose constraints such as the same device being used for training and testing, or the smartphone being kept at a fixed position (the same as the one used for training the system). Thus, detailed activity recognition works should also consider the usage of different devices for training and testing (device independence) and usage behavior in terms of where the smartphone is kept for training and testing (position independence). The work in [17] considers device independence issues with multiple sensors, and a position-independent activity recognition framework is presented in [41]. In [17], the authors focus on several challenges such as different users, different smartphone models and orientation. They use several smartphone-based sensors, namely accelerometers, gyroscopes and magnetic field sensors, to remove gravity from the accelerometer signals and to convert the accelerometer data from the body coordinate system to the earth coordinate system. Frequency domain features are extracted and the KNN classification algorithm is used in the work. In [1], device-independent activity monitoring is achieved using a Logistic Regression (LR) based two-phase classifier, where the best training device is selected in the first phase while the second phase tunes the classifier for better recognition of activities. However, only coarse-grained activities are recognized by this technique. Consequently, in this work, a detailed activity recognition framework is proposed that attempts to recognize detailed activities irrespective of the hardware configuration of the smartphones and of how they are kept during the training and testing phases. We have not made use of Google’s API, as the classes of activities that we are trying to classify are finer distinctions within a coarse-grained class of activities; for “walk”, for example, the finer distinctions are “brisk walk” and “slow walk”. Similarly, finer distinctions exist for every coarse-grained activity, and we propose a system here that learns such finer classes of activities.
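The body-to-earth coordinate conversion and gravity removal mentioned above for [17] can be sketched under simplifying assumptions. The sketch assumes that a 3x3 rotation matrix from the device frame to the earth frame is already available (in practice it would be estimated from the gyroscope and magnetic field sensor, which is not shown), that the earth z-axis points up, and that a device at rest reads +9.81 m/s^2 along the upward axis. The names and conventions are hypothetical and do not reproduce the implementation in [17].

```python
GRAVITY = 9.81  # m/s^2, assumed constant


def rotate(rotation, vector):
    """Multiply a 3x3 rotation matrix (list of rows) by a 3-vector."""
    return tuple(sum(row[k] * vector[k] for k in range(3)) for row in rotation)


def to_earth_linear_acceleration(rotation, acc_body):
    """Express an accelerometer reading in the earth frame and subtract gravity.
    `rotation` maps device coordinates to earth coordinates (z up); the sign
    convention for gravity is an assumption of this sketch."""
    ax, ay, az = rotate(rotation, acc_body)
    return (ax, ay, az - GRAVITY)
```

After this conversion, the same physical movement produces similar signals regardless of how the phone is oriented, which is the motivation for the step.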