Abstract

This article is an overview of the SP theory of intelligence, which aims to simplify and integrate concepts across artificial intelligence, mainstream computing and human perception and cognition, with information compression as a unifying theme. It is conceived of as a brain-like system that receives “New” information and stores some or all of it in compressed form as “Old” information; and it is realised in the form of a computer model, a first version of the SP machine. The matching and unification of patterns and the concept of multiple alignment are central ideas. Using heuristic techniques, the system builds multiple alignments that are “good” in terms of information compression. For each multiple alignment, probabilities may be calculated for associated inferences. Unsupervised learning is done by deriving new structures from partial matches between patterns and via heuristic search for sets of structures that are “good” in terms of information compression. These are normally ones that people judge to be “natural”, in accordance with the “DONSVIC” principle—the discovery of natural structures via information compression. The SP theory provides an interpretation for concepts and phenomena in several other areas, including “computing”, aspects of mathematics and logic, the representation of knowledge, natural language processing, pattern recognition, several kinds of reasoning, information storage and retrieval, planning and problem solving, information compression, neuroscience and human perception and cognition. Examples include the parsing and production of language with discontinuous dependencies in syntax, pattern recognition at multiple levels of abstraction and its integration with part-whole relations, nonmonotonic reasoning and reasoning with default values, reasoning in Bayesian networks, including “explaining away”, causal diagnosis, and the solving of a geometric analogy problem.

1. Introduction

The SP theory of intelligence, which has been under development since about 1987 [1], aims to simplify and integrate concepts across artificial intelligence, mainstream computing and human perception and cognition, with information compression as a unifying theme.

The name “SP" is short for Simplicity and Power, because compression of any given body of information, I, may be seen as a process of reducing informational “redundancy" in I and thus increasing its “simplicity", whilst retaining as much as possible of its non-redundant expressive “power". Likewise with Occam’s Razor (Section 2.3, below).

Aspects of the theory, as it has developed, have been described in several peer-reviewed articles [2]. The most comprehensive description of the theory as it stands now, with many examples, is in [3]. But this book, with more than 450 pages, is too long to serve as an introduction to the theory. This article aims to meet that need, with a fairly full description of the theory and a selection of examples [4]. For the sake of brevity, the book will be referred to as “BK”.

2. Origins and Motivation

The following subsections outline the origins of the SP theory, how it relates to some other research and how it has developed.

2.1. Information Compression

Much of the inspiration for the SP theory is a body of research, pioneered by Fred Attneave [5], Horace Barlow [6,7], and others, showing that several aspects of the workings of brains and nervous systems may be understood in terms of information compression [8].

For example, when we view a scene with two eyes, the image on the retina of the left eye is almost exactly the same as the image on the retina of right eye, but our brains merge the two images into a single percept and thus compress the information [7,9].

More immediately, the theory has grown out of my own research, developing models of the unsupervised learning of a first language, where the importance of information compression became increasingly clear (e.g., [10]. See also [11]).

The theory also draws on principles of “minimum length encoding” pioneered by Ray Solomonoff [12,13] and others. And it has become apparent that several aspects of computing, mathematics and logic may be understood in terms of information compression (BK, Chapters 2 and 10).

At an abstract level, information compression can bring two main benefits:

- For any given body of information, I, information compression may reduce its size and thus facilitate the storage, processing and transmission of I.

- Perhaps more important is the close connection between information compression and concepts of prediction and probability (see, for example, [14]). In the SP system, it is the basis for all kinds of inference and for calculations of probabilities.

In animals, we would expect these things to have been favoured by natural selection, because of the competitive advantage they can bring. Notwithstanding the “QWERTY” phenomenon—the dominance of the QWERTY keyboard despite its known inefficiencies—there is reason to believe that information compression, properly applied, may yield comparable advantages in artificial systems.

2.2. The Matching and Unification of Patterns

In the SP theory, the matching and unification of patterns is seen as being closer to the bedrock of information compression than more mathematical techniques such as wavelets or arithmetic coding, and closer to the bedrock of information processing and intelligence than, say, concepts of probability. A working hypothesis in this programme of research is that, by staying close to relatively simple, “primitive”, concepts of matching patterns and unifying them, there is a better chance of cutting through unnecessary complexity and in gaining new insights and better solutions to problems. The mathematical basis of wavelets, arithmetic coding and probabilities may itself be founded on the matching and unification of patterns (BK, Chapter 10).

2.3. Simplification and Integration of Concepts

In accordance with Occam’s Razor, the SP system aims to combine conceptual simplicity with descriptive and explanatory power. Apart from this widely-accepted principle, the drive for simplification and integration of concepts in this research programme has been motivated in part by Allen Newell’s critique [15] of some kinds of research in cognitive science and, in part, by the apparent fragmentation of research in artificial intelligence and mainstream computing, with their myriad of concepts and many specialisms.

In attempting to simplify and integrate ideas, the SP theory belongs in the same tradition as unified theories of cognition, such as Soar [16] and ACT-R [17]—both of them inspired by Allen Newell [15]. Furthermore, it chimes with the resurgence of interest in understanding artificial intelligence as a whole (see, for example [18]) and with research on “natural computation” [19].

Although the SP programme shares some objectives with projects such as the Gödel Machine [20] and “universal artificial intelligence” [21], the approach is very different.

2.4. Transparency in the Representation of Knowledge

In this research, it is assumed that knowledge in the SP system should normally be transparent or comprehensible, much as in the “symbolic” tradition in artificial intelligence (see also, Section 5.2), and distinct from the kind of “sub-symbolic” representation of knowledge that is the rule in, for example, “neural networks” as they are generally conceived in computer science.

As we shall see in Section 7 and elsewhere in this article, SP patterns in the multiple alignment framework may serve to represent a variety of kinds of knowledge, in symbolic forms.

2.5. Development of the Theory

In developing the theory, it was apparent at an early stage that existing systems—such as my models of language learning [11] and systems like Prolog—would need radical re-thinking to meet the goal of simplifying and integrating ideas across a wide area.

The first published version of the SP theory [22] described “some unifying ideas in computing”. Early work on the SP computer model concentrated on developing an improved version of the “dynamic programming” technique for the alignment of two sequences (see BK, Appendix A) as a possible route to modelling human-like flexibility in pattern recognition, analysis of language, and the like.

About 1992, it became apparent that the explanatory range of the theory could be greatly expanded by forming alignments of two, three or more sequences, much as in the “multiple alignment” concept of bioinformatics. That idea was developed and adapted in new versions of the SP model and incorporated in new procedures for unsupervised learning.

Aspects of the theory, with many examples, have been developed in the book [3].

3. Introduction to the SP Theory

The main elements of the SP theory are:

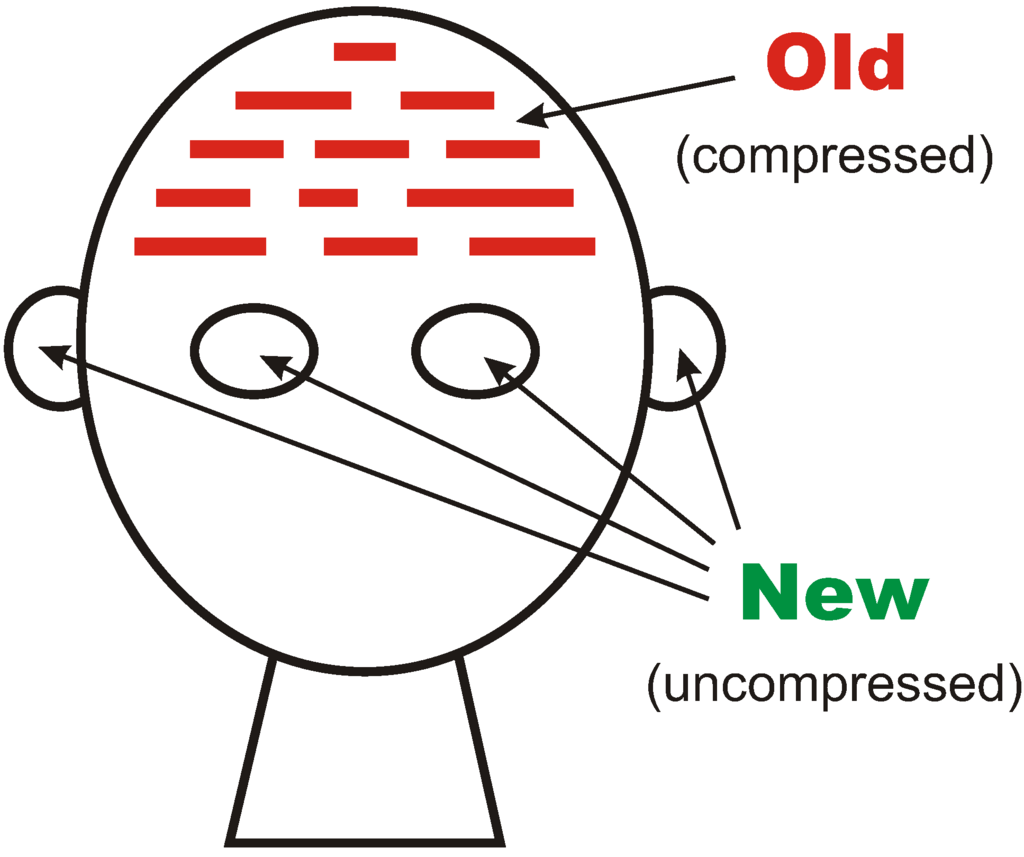

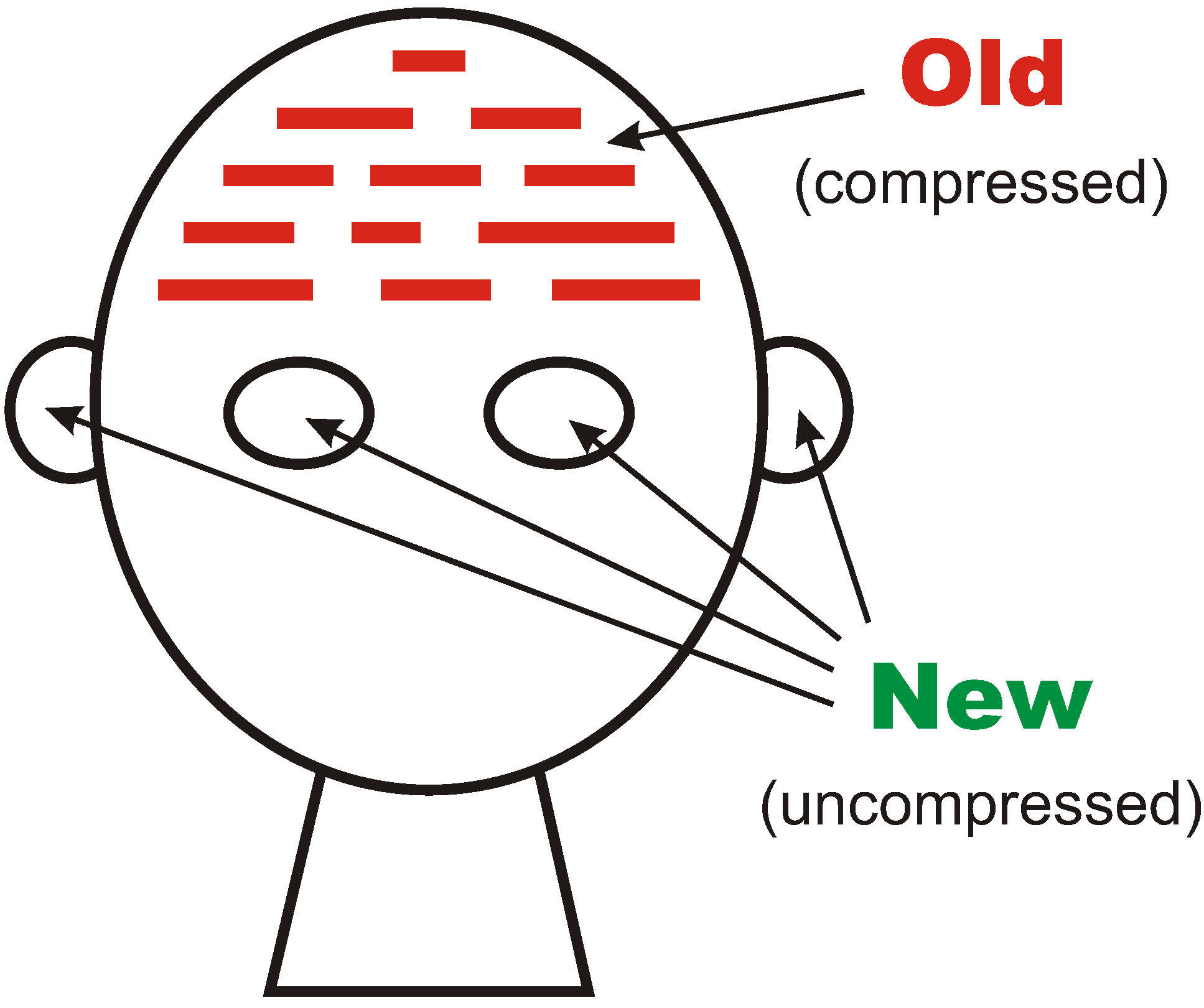

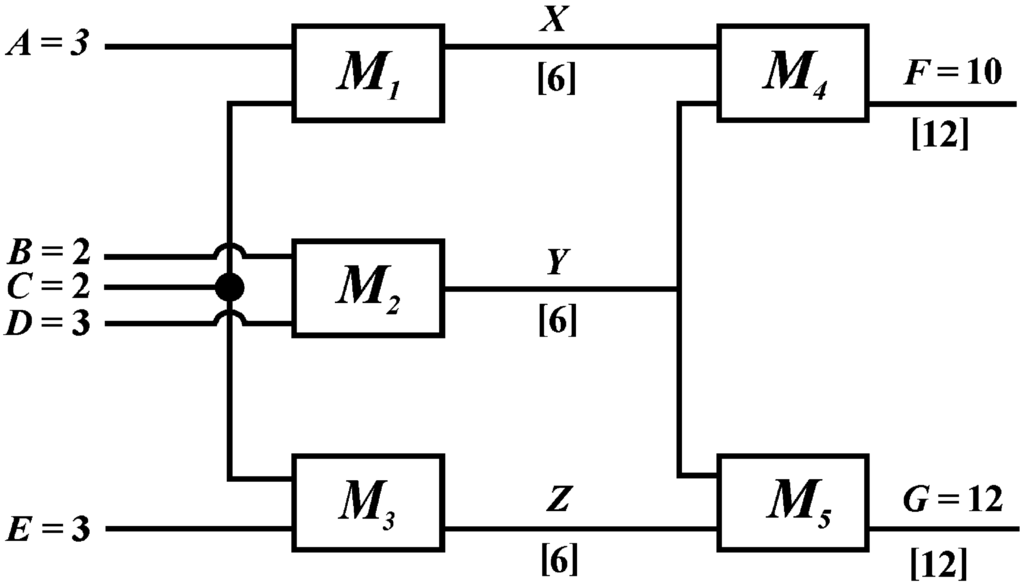

- The SP theory is conceived as an abstract brain-like system that, in an “input” perspective, may receive “New” information via its senses and store some or all of it in its memory as “Old” information, as illustrated schematically in Figure 1. There is also an “output” perspective, described in Section 4.5.

- The theory is realised in the form of a computer model, introduced in Section 3.1, below, and described more fully later.

- All New and Old information is expressed as arrays (patterns) of atomic symbols in one or two dimensions. An example of an SP pattern may be seen in each row in Figure 4. Each symbol can be matched in an all-or-nothing manner with any other symbol. Any meaning that is associated with an atomic symbol or group of symbols must be expressed in the form of other atomic symbols.

- Each pattern has an associated frequency of occurrence, which may be assigned by the user or derived via the processes for unsupervised learning. The default value for the frequency of any pattern is 1.

- The system is designed for the unsupervised learning of Old patterns by compression of New patterns [23].

- An important part of this process is, where possible, the economical (compressed) encoding of New patterns in terms of Old patterns. This may be seen to achieve such things as pattern recognition, parsing or understanding of natural language, or other kinds of interpretation of incoming information in terms of stored knowledge, including several kinds of reasoning.

- In keeping with the remarks in Section 2.2, compression of information is achieved via the matching and unification (merging) of patterns. In this, there are key roles for the frequency of occurrence of patterns, and their sizes.

- The concept of multiple alignment, described in Section 4, is a powerful central idea, similar to the concept of multiple alignment in bioinformatics, but with important differences.

- Owing to the intimate connection, previously mentioned, between information compression and concepts of prediction and probability, it is relatively straightforward for the SP system to calculate probabilities for inferences made by the system and probabilities for parsings, recognition of patterns, and so on (Section 4.4).

- In developing the theory, I have tried to take advantage of what is known about the psychological and neurophysiological aspects of human perception and cognition and to ensure that the theory is compatible with such knowledge (see Section 14).

Figure 1.

Schematic representation of the SP system from an “input” perspective.

Figure 1.

Schematic representation of the SP system from an “input” perspective.

3.1. The SP Computer Model

The SP theory is realised most fully in the SP70 computer model, with capabilities in the building of multiple alignments and in unsupervised learning. This will be referred to as the SP model, although in some cases examples are from a subset of the model or slightly earlier precursors of it.

The SP model and its precursors have played a key part in the development of the theory:

- As an antidote to vagueness. As with all computer programs, processes must be defined with sufficient detail to ensure that the program actually works.

- By providing a convenient means of encoding the simple but important mathematics that underpins the SP theory, and performing relevant calculations, including calculations of probability.

- By providing a means of seeing quickly the strengths and weaknesses of proposed mechanisms or processes. Many ideas that looked promising have been dropped as a result of this kind of testing.

- By providing a means of demonstrating what can be achieved with the theory.

3.2. The SP Machine

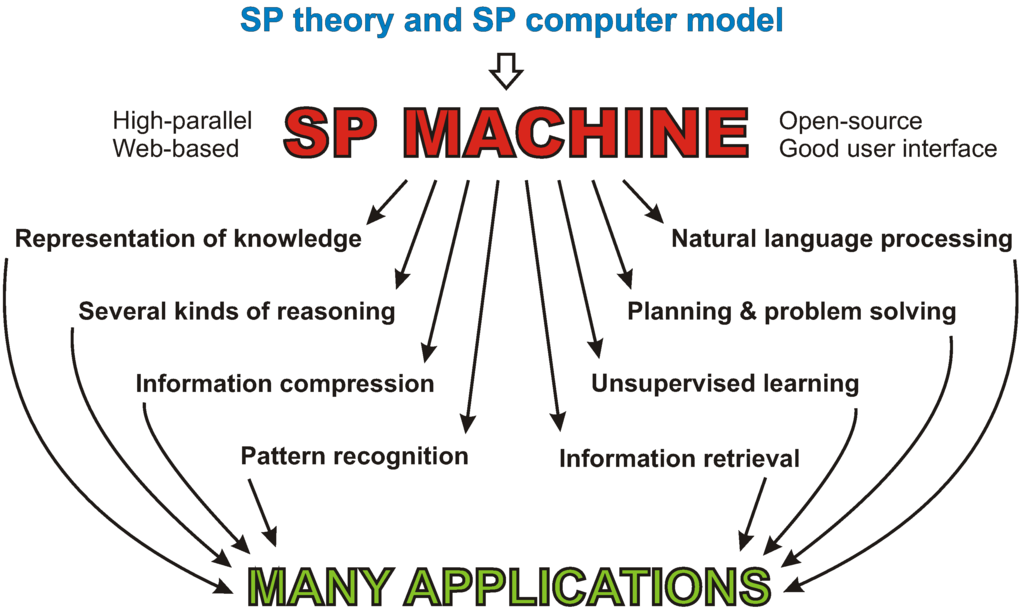

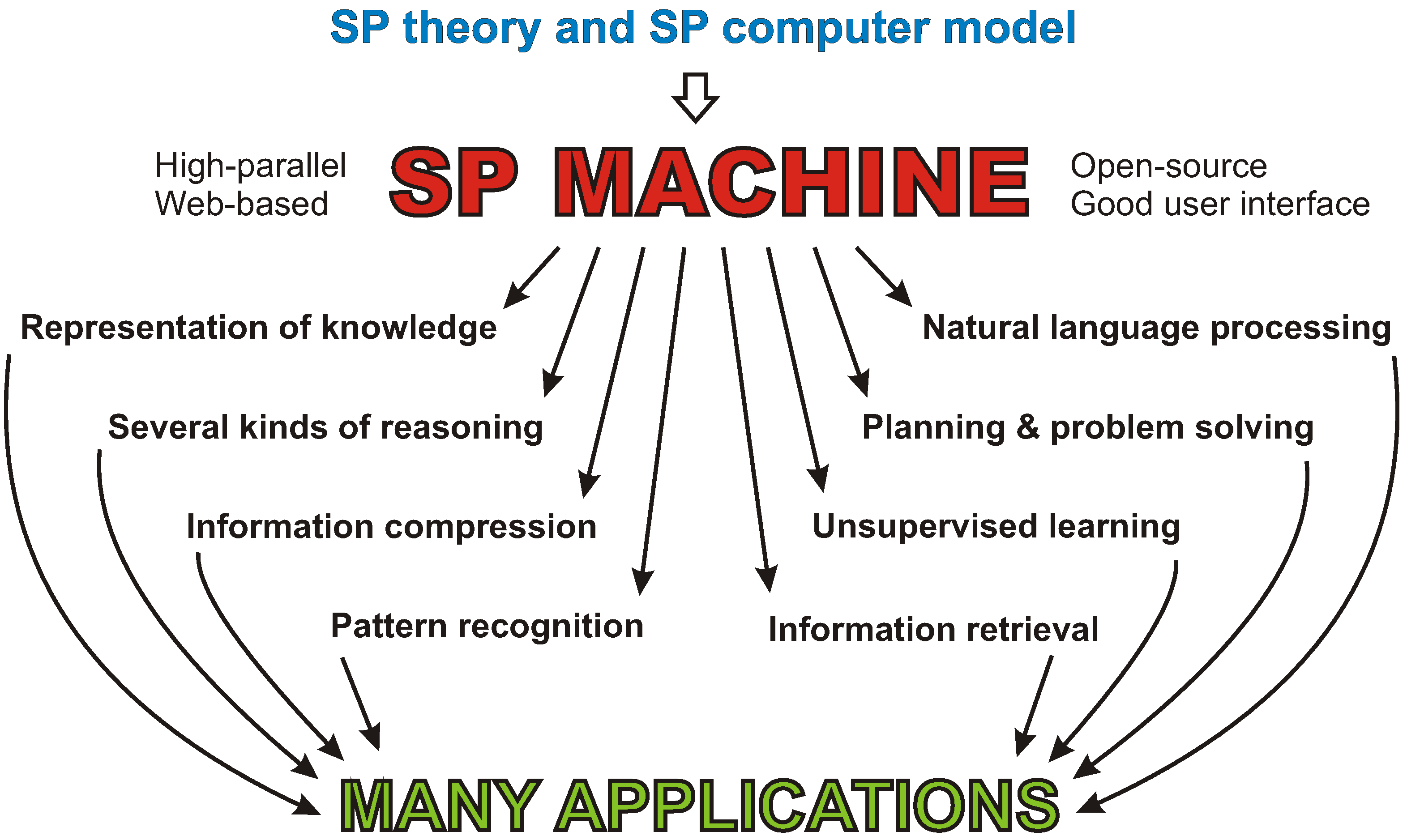

The SP computer model may be regarded as a first version of the SP machine, an expression of the SP theory and a means for it to be applied.

A useful step forward in the development of the SP theory would be the creation of a high-parallel, open-source version of the SP machine, accessible via the web, and with a good user interface [25]. This would provide a means for researchers to explore what can be done with the system and to improve it. How things may develop is shown schematically in Figure 2.

Figure 2.

Schematic representation of the development and application of the proposed SP machine.

Figure 2.

Schematic representation of the development and application of the proposed SP machine.

The high-parallel search mechanisms in any of the existing internet search engines would probably provide a good foundation for the proposed development.

Further ahead, there may be a case for the creation of new kinds of hardware, dedicated to the building of multiple alignments and other processes in the SP framework (Section 6.13 in [26]).

3.3. Unfinished Business

Like most theories, the SP theory has shortcomings, but it appears that they may be overcome. At present, the most immediate problems are:

- Processing of information in two or more dimensions. No attempt has yet been made to generalise the SP model to work with patterns in two dimensions, although that appears to be feasible to do, as outlined in BK (Section 13.2.1). As noted in BK (Section 13.2.2), it is possible that information with dimensions higher than two may be encoded in terms of patterns in one or two dimensions, somewhat in the manner of architects’ drawings. A 3D structure may be stitched together from several partially-overlapping 2D views, in much the same way that, in digital photography, a panoramic view may be created from partially-overlapping pictures (Sections 6.1 and 6.2 in [27]).

- Recognition of perceptual features in speech and visual images. For the SP system to be effective in the processing of speech or visual images, it seems likely that some kind of preliminary processing will be required to identify low-level perceptual features, such as, in the case of speech, phonemes, formant ratios or formant transitions, or, in the case of visual images, edges, angles, colours, luminances or textures. In vision, at least, it seems likely that the SP framework itself will prove relevant, since edges may be seen as zones of non-redundant information between uniform areas containing more redundancy and, likewise, angles may be seen to provide significant information where straight edges, with more redundancy, come together (Section 3 in [27]). As a stop-gap solution, the preliminary processing may be done using existing techniques for the identification of low-level perceptual features (Chapter 13 in [28]).

- Unsupervised learning. A limitation of the SP computer model as it is now is that it cannot learn intermediate levels of abstraction in grammars (e.g., phrases and clauses), and it cannot learn the kinds of discontinuous dependencies in natural language syntax that are described in Section 8.1 to Section 8.3. I believe these problems are soluble and that solving them will greatly enhance the capabilities of the system for the unsupervised learning of structure in data (Section 5).

- Processing of numbers. The SP model works with atomic symbols, such as ASCII characters or strings of characters with no intrinsic meaning. In itself, the SP system does not recognise the arithmetic meaning of numbers such as “37” or “652” and will not process them correctly. However, the system has the potential to handle mathematical concepts if it is supplied with patterns representing Peano’s axioms or similar information (BK, Chapter 10). As a stop-gap solution in the SP machine, existing technologies may provide whatever arithmetic processing may be required.

4. The Multiple Alignment Concept

The concept of multiple alignment in the SP theory has been adapted from a similar concept in bioinformatics, where it means a process of arranging, in rows or columns, two or more DNA sequences or amino-acid sequences, so that matching symbols—as many as possible—are aligned orthogonally in columns or rows.

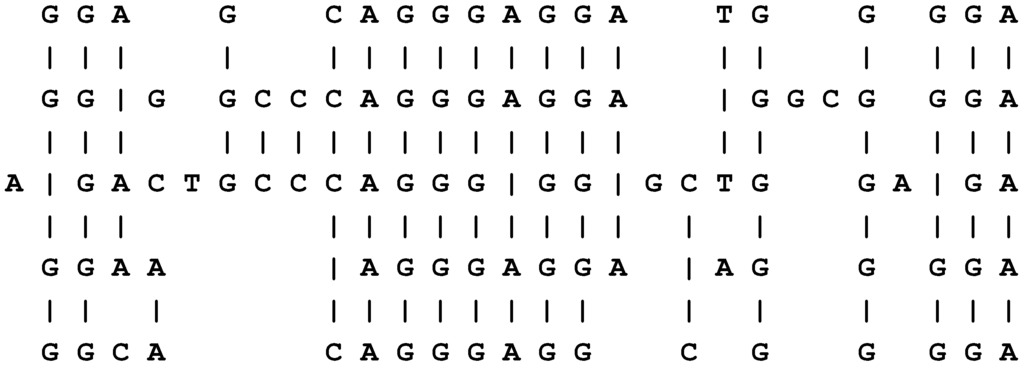

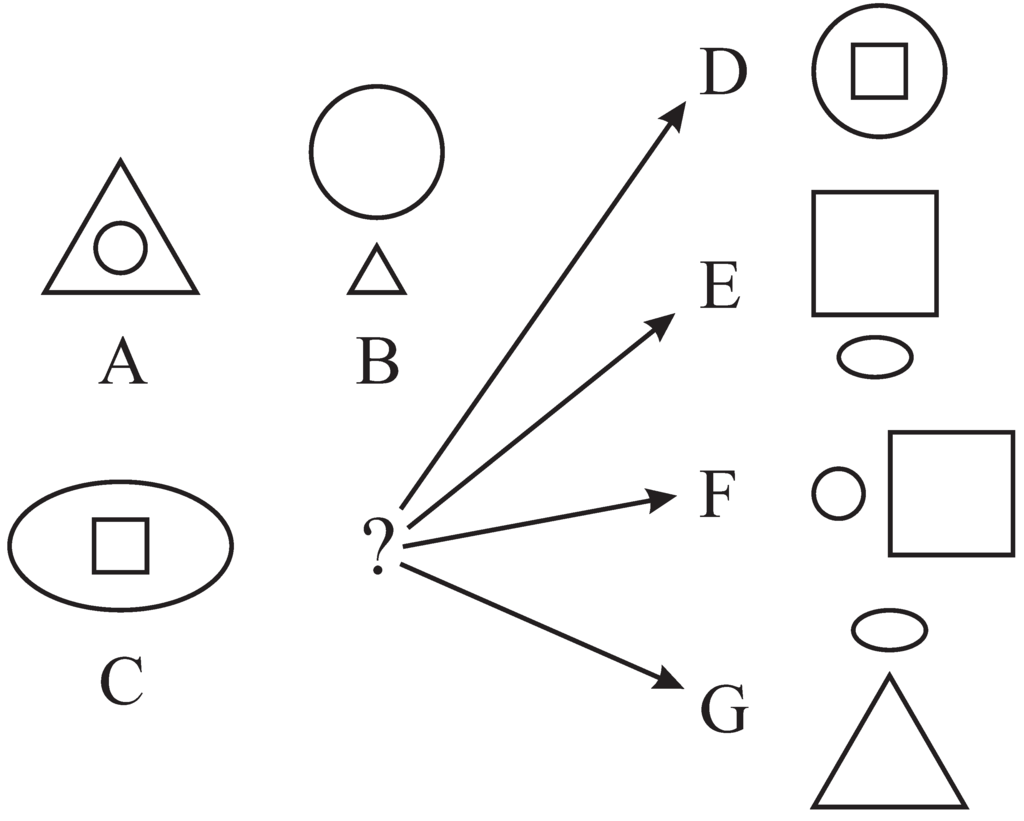

Multiple alignments like these are normally used in the computational analysis of (symbolic representations of) sequences of DNA bases or sequences of amino acid residues as part of the process of elucidating the structure, functions or evolution of the corresponding molecules. An example of this kind of multiple alignment is shown in Figure 3.

Figure 3.

A “good” alignment amongst five DNA sequences.

Figure 3.

A “good” alignment amongst five DNA sequences.

As in bioinformatics, a multiple alignment in the SP system is an arrangement of two or more patterns in rows (or columns), with one pattern in each row (or column) [29]. The main difference between the two concepts is that, in bioinformatics, all sequences have the same status, whereas in the SP theory, the system attempts to create a multiple alignment which enables one New pattern (sometimes more) to be encoded economically in terms of one or more Old patterns. Other differences are described in BK (Section 3.4.1).

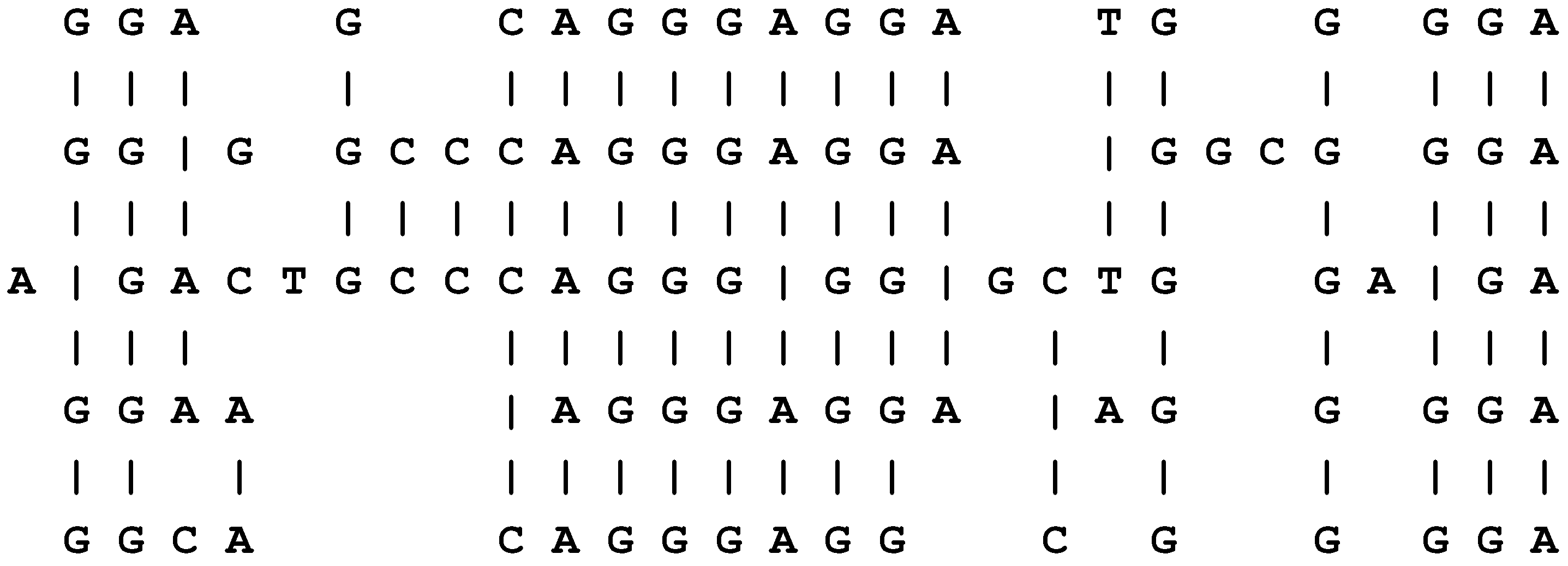

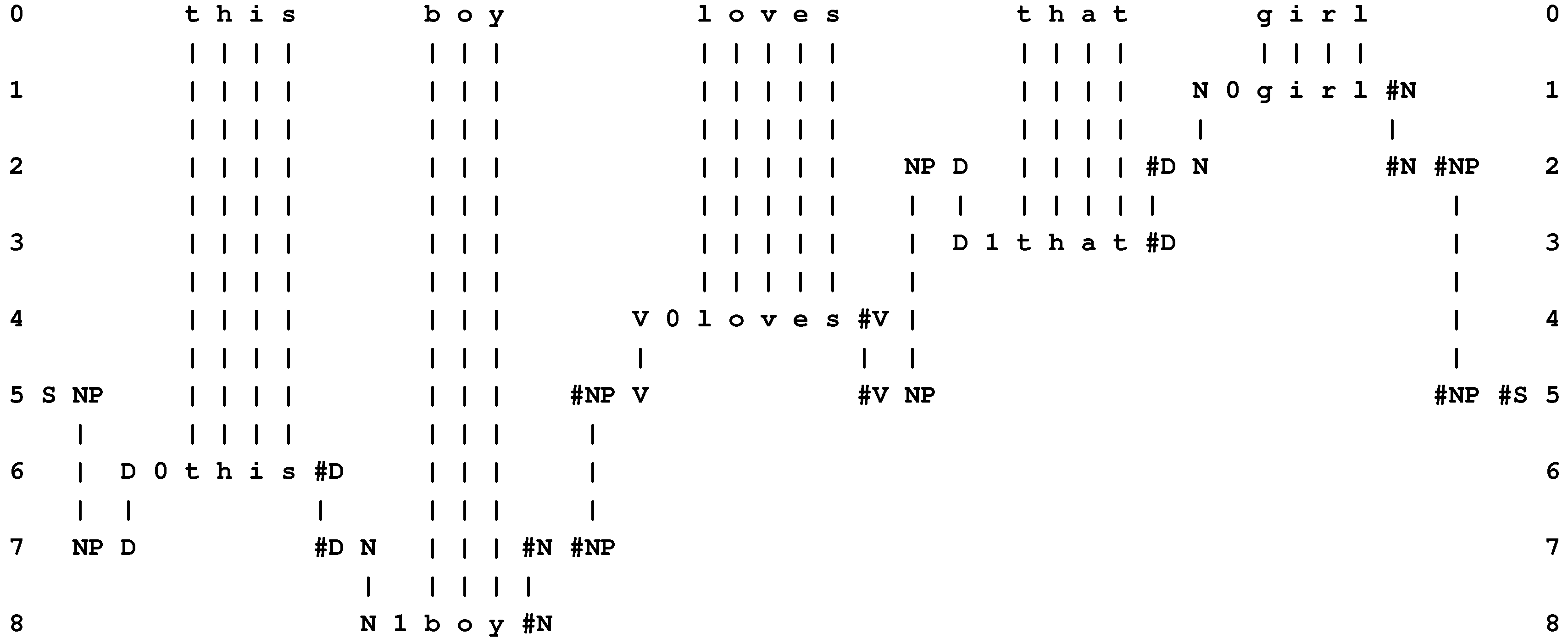

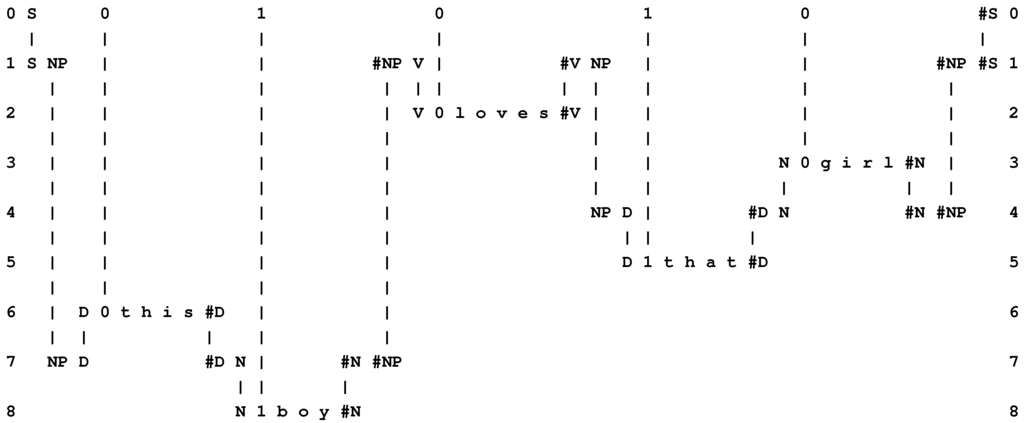

In Figure 4, row 0 contains a New pattern representing a sentence: “t h i s b o y l o v e s t h a t g i r l”, while each of rows 1 to 8 contains an Old pattern representing a grammatical rule or a word with grammatical markers. This multiple alignment, which achieves the effect of parsing the sentence in terms of grammatical structures, is the best of several built by the model when it is supplied with the New pattern and a set of Old patterns that includes those shown in the figure and several others as well.

Figure 4.

The best multiple alignment found by the SP model with the New pattern “t h i s b o y l o v e s t h a t g i r l” and a set of user-supplied Old patterns representing some of the grammatical forms of English, including words with their grammatical markers.

Figure 4.

The best multiple alignment found by the SP model with the New pattern “t h i s b o y l o v e s t h a t g i r l” and a set of user-supplied Old patterns representing some of the grammatical forms of English, including words with their grammatical markers.

In this example, and others in this article, “best” means that the multiple alignment in the figure is the one that enables the New pattern to be encoded most economically in terms of the Old patterns, as described in Section 4.1, below.

4.1. Coding and the Evaluation of an Alignment in Terms of Compression

This section describes in outline how multiple alignments are evaluated in the SP model. More detail may be found in BK (Section 3.5).

Each Old pattern in the SP system contains one or more “identification” symbols, or ID-symbols, which, as their name suggests, serve to identify the pattern. Examples of ID-symbols in Figure 4 are “D” and “0” at the beginning of “D 0 t h i s #D” (row 6) and “N” and “1” at the beginning of “N 1 b o y #N” (row 8).

Associated with each type of symbol (where a “type” of symbol is any one of a set of symbols that match each other exactly) is a notional code or bit pattern that serves to distinguish the given type from all the others. This is only notional, because the bit patterns are not actually constructed. All that is needed for the purpose of evaluating multiple alignments is the size of the notional bit pattern associated with each type. This is calculated via the Shannon-Fano-Elias coding scheme (described in [30]), using information about the frequency of occurrence of each Old pattern, so that the shortest codes represent the most frequent symbol types and vice versa [31]. Notice that these bit patterns and their sizes are totally independent of the names for symbols that are used in written accounts like this one: names that are chosen purely for their mnemonic value.

Given a multiple alignment like the one shown in Figure 4, one can derive a code pattern from the multiple alignment in the following way:

- (1)

- Scan the multiple alignment from left to right looking for columns that contain an ID-symbol by itself, not aligned with any other symbol.

- (2)

- Copy these symbols into a code pattern in the same order that they appear in the multiple alignment.

The code pattern derived in this way from the multiple alignment shown in Figure 4 is “S 0 1 0 1 0 #S”. This is, in effect, a compressed representation of those symbols in the New pattern that are aligned with Old symbols in the multiple alignment. In this case, the code pattern is a compressed representation of all the symbols in the New pattern, but it often happens that some of the symbols in the New pattern are not matched with any Old symbols and then the code pattern will represent only those New symbols that are aligned with Old symbols.

In the context of natural language processing, it is perhaps more plausible to suppose that the encoding of a sentence is some kind of representation of the meaning of the sentence, instead of a pattern like “S 0 1 0 1 0 #S”. How a meaning may be derived from a sentence via multiple alignment is described in BK (Section 5.7).

4.1.1. Compression Difference and Compression Ratio

Given a code pattern, like “S 0 1 0 1 0 #S”, we may calculate a “compression difference” as:

or a “compression ratio” as:

where is the total number of bits in those symbols in the New pattern that are aligned with Old symbols in the alignment and is the total number of bits in the symbols in the code pattern, and the number of bits for each symbol is calculated via the Shannon-Fano-Elias scheme, as mentioned above.

and are each an indication of how effectively the New pattern (or those parts of the New pattern that are aligned with symbols within Old patterns in the alignment) may be compressed in terms of the Old patterns that appear in the given multiple alignment. The of a multiple alignment—which has been found to be more useful than —may be referred to as the compression score of the multiple alignment.

In each of these equations, is calculated as:

where is the size of the code for the ith symbol in a sequence, , comprising those symbols within the New pattern that are aligned with Old symbols within the multiple alignment.

is calculated as:

where is the size of the code for the ith symbol in the sequence of s symbols in the code pattern derived from the multiple alignment.

4.2. The Building of Multiple Alignments

This section describes in outline how the SP model builds multiple alignments. More detail may be found in BK (Section 3.10).

Multiple alignments are built in stages, with pairwise matching and alignment of patterns. At each stage, any partially-constructed multiple alignment may be processed as if it was a basic pattern and carried forward to later stages. This is broadly similar to some programs for the creation of multiple alignments in bioinformatics [32]. At all stages, the aim is to encode New information economically in terms of Old information and to weed out multiple alignments that score poorly in that regard.

The model may create Old patterns for itself, as described in Section 5, but when the formation of multiple alignments is the focus of interest, Old patterns may be supplied by the user. In all cases, New patterns must be supplied by the user.

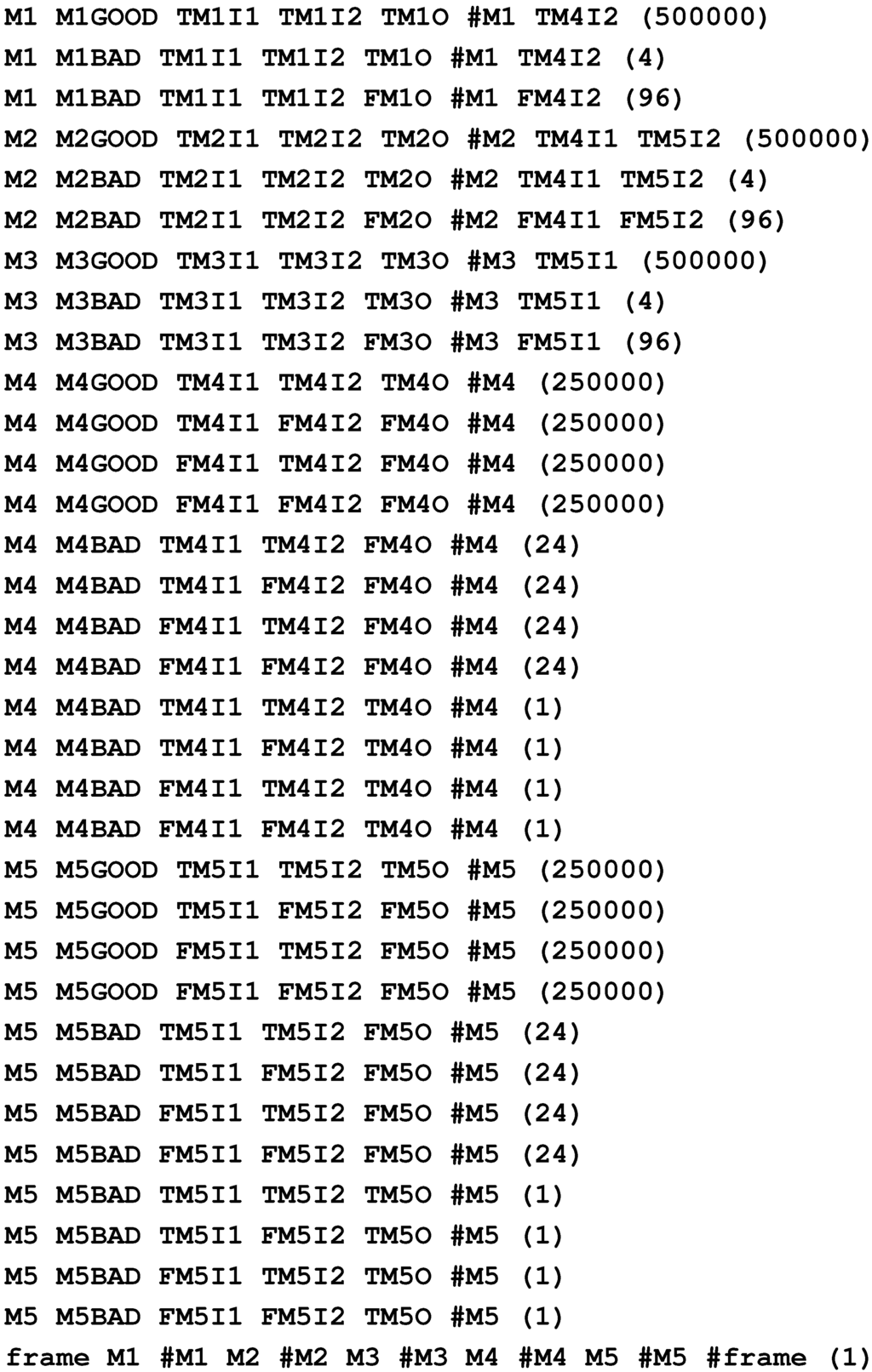

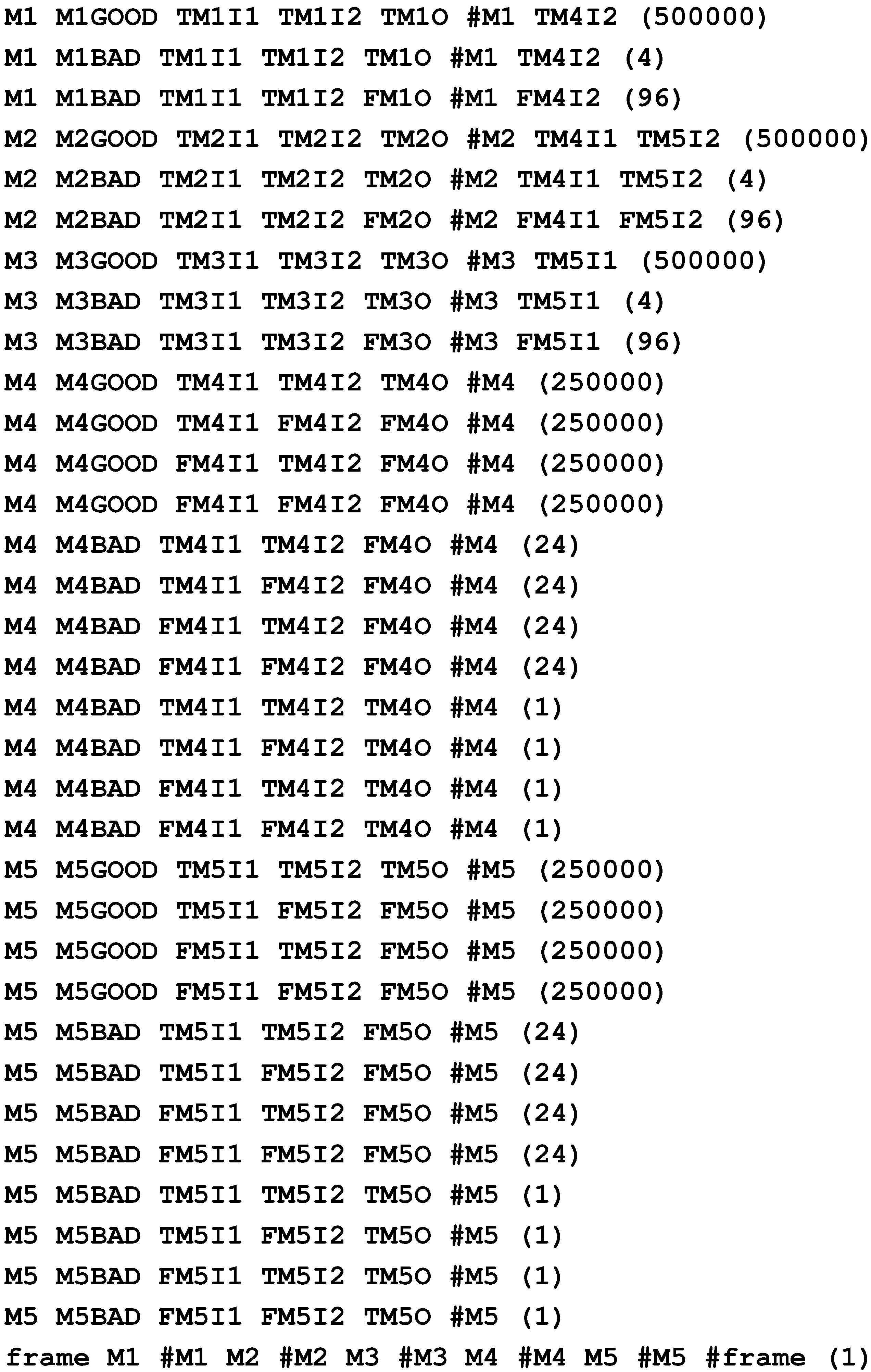

At each stage of building multiple alignments, the operations are as follows:

- (1)

- Identify a set of “driving” patterns and a set of “target” patterns. At the beginning, the New pattern is the sole driving pattern, and the Old patterns are the target patterns. In all subsequent stages, the best of the multiple alignments formed so far (in terms of their scores) are chosen to be driving patterns, and the target patterns are the Old patterns together with a selection of the best multiple alignments formed so far, including all of those that are driving patterns.

- (2)

- Compare each driving pattern with each of the target patterns to find full matches and good partial matches between patterns. This is done with a process that is essentially a form of “dynamic programming” [33], somewhat like the WinMerge utility for finding similarities and differences between files [34]. The process is described quite fully in BK (Appendix A) and outlined in Section 4.2.1, below. The main difference between the SP process and others is that the former can deliver several alternative matches between a pair of patterns, while WinMerge and standard methods for finding alignments deliver one “best” result.

- (3)

- From the best of the matches found in the current stage, create corresponding multiple alignments and add them to the repository of multiple alignments created by the program.

This process of matching driving patterns against target patterns and building multiple alignments is repeated until no more multiple alignments can be found. For the best of the multiple alignments created since the start of processing, probabilities are calculated, as described in Section 4.4.

4.2.1. Finding Good Matches between Patterns

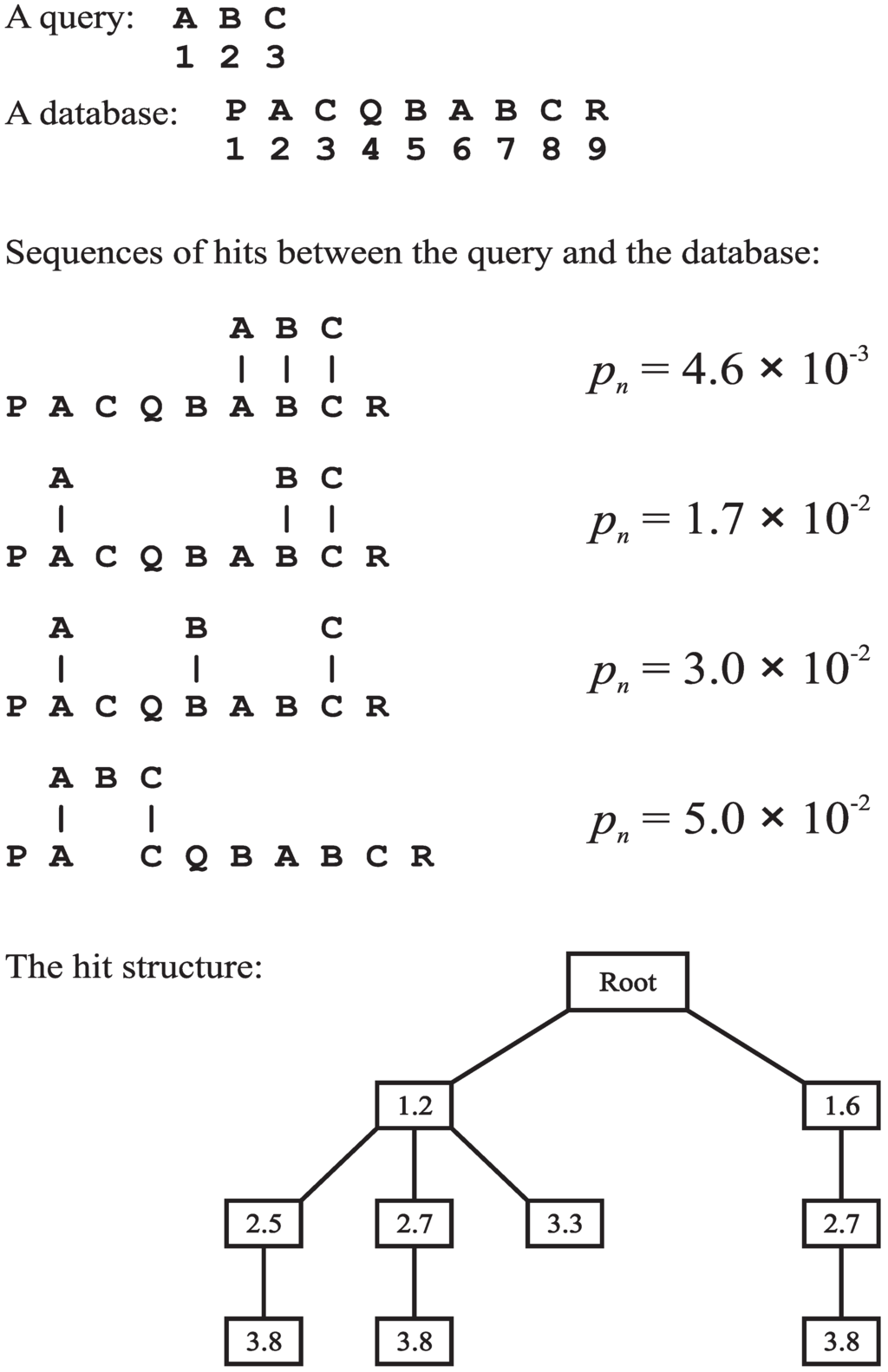

Figure 5 shows with a simple example how the SP model finds good full and partial matches between a “query” string of atomic symbols (alphabetic characters in this example) and a “database” string:

- (1)

- The query is processed left to right, one symbol at a time.

- (2)

- Each symbol in the query is, in effect, broadcast to every symbol in the database to make a yes/no match in each case.

- (3)

- Every positive match (hit) between a symbol from the query and a symbol in the database is recorded in a hit structure, illustrated in the figure.

- (4)

- If the memory space allocated to the hit structure is exhausted at any time, then the hit structure is purged: the leaf nodes of the tree are sorted in reverse order of their probability values, and each leaf node in the bottom half of the set is extracted from the hit structure, together with all nodes on its path which are not shared with any other path. After the hit structure has been purged, the recording of hits may continue using the space, which has been released.

4.2.2. Noisy Data

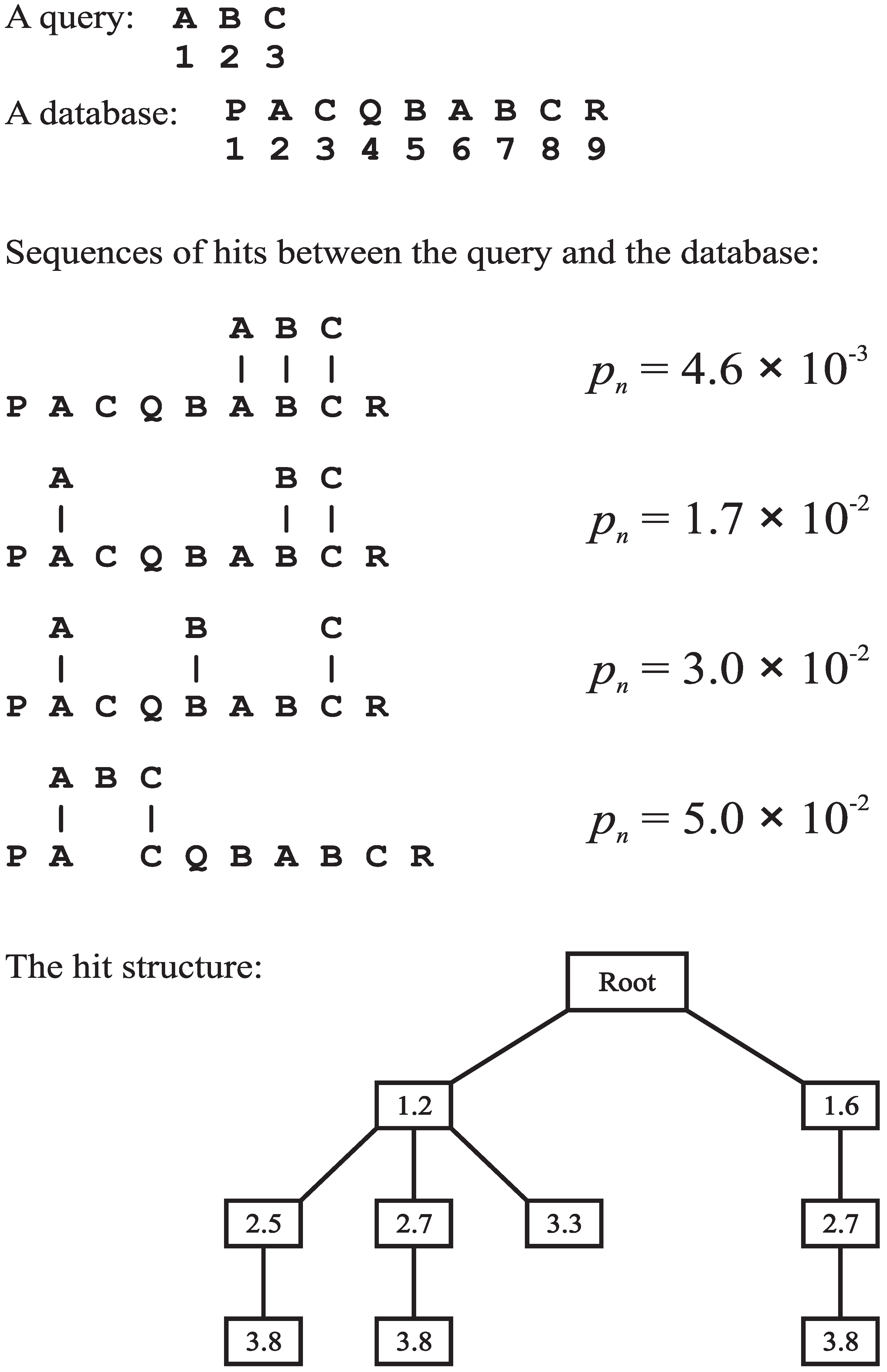

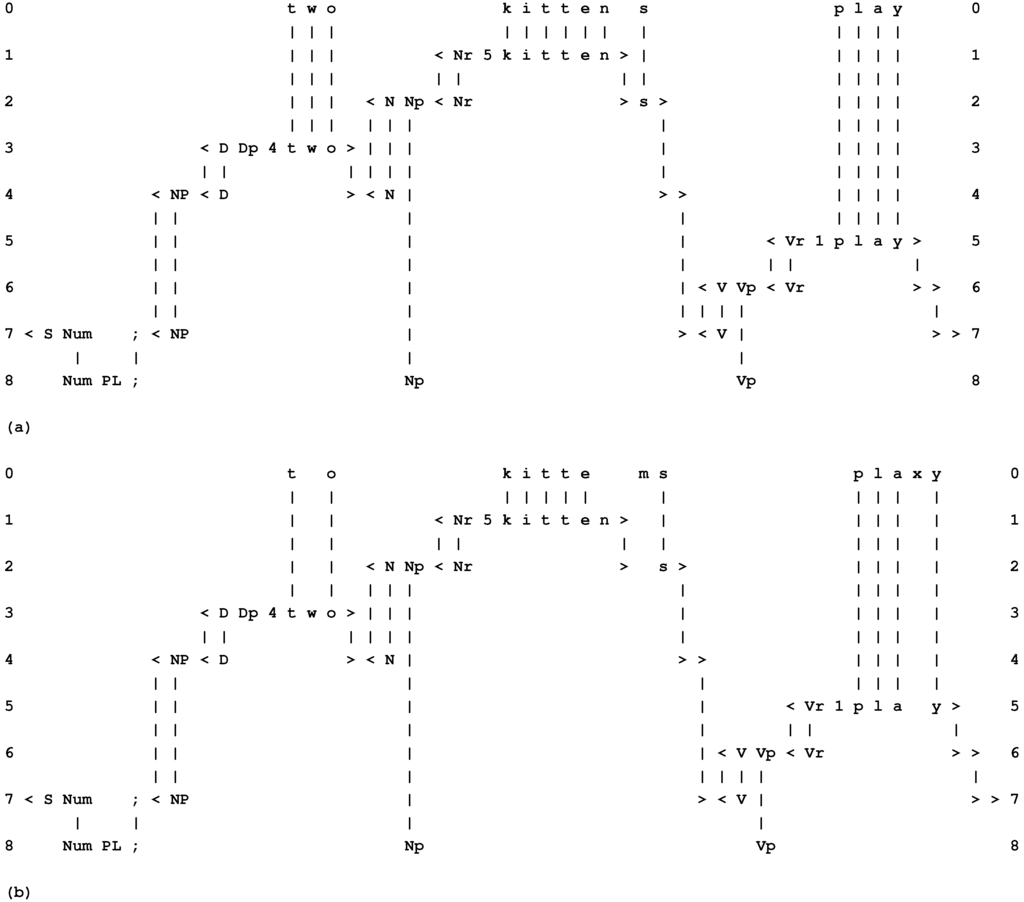

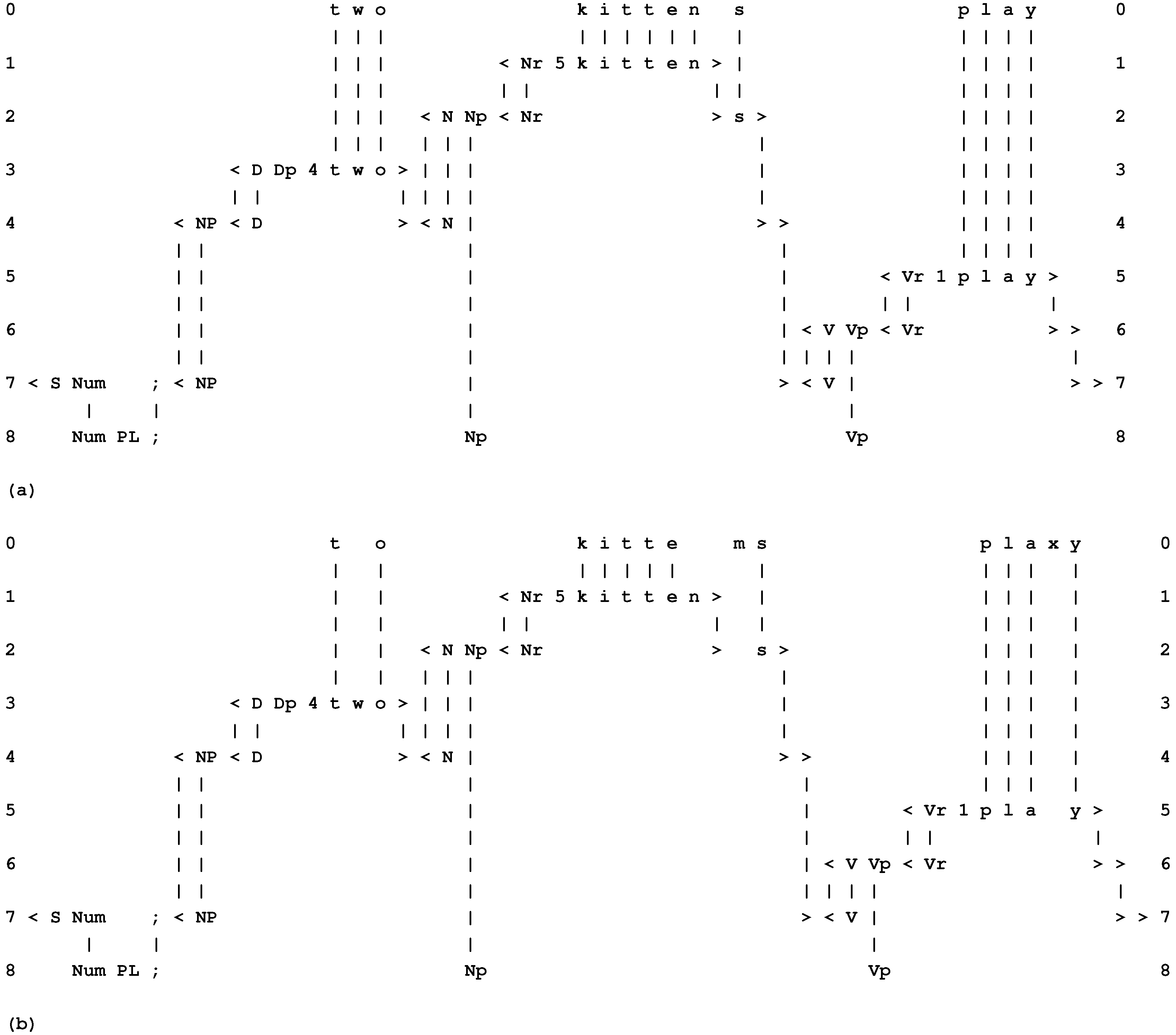

Because of the way each model searches for a global optimum in the building of multiple alignments, it does not depend on the presence or absence of any particular feature or combination of features. Up to a point, plausible results may be obtained in the face of errors of omission, commission and substitution in the data. This is illustrated in the two multiple alignments in Figure 6, where the New pattern in row 0 of (b) is the same sentence as in (a) (“t w o k i t t e n s p l a y”), but with the omission of the “w” in “t w o”, the substitution of “m” for “n” in “k i t t e n s” and the addition of “x” within the word “p l a y”. Despite these errors, the best multiple alignment created by the SP model is, as shown in (b), the one that we judge intuitively to be “correct”.

Figure 5.

An example to show how the SP model finds good full and partial matches between patterns. A “query” string and a “database” string are shown at the top with the ordinal positions of symbols marked. Sequences of hits between the query and the database are shown in the middle with corresponding values of (described in BK, Section A.2). Each node in the hit structure shows the ordinal position of a query symbol and the ordinal position of a matching database symbol. Each path from the root node to a leaf node represents a sequence of hits.

Figure 5.

An example to show how the SP model finds good full and partial matches between patterns. A “query” string and a “database” string are shown at the top with the ordinal positions of symbols marked. Sequences of hits between the query and the database are shown in the middle with corresponding values of (described in BK, Section A.2). Each node in the hit structure shows the ordinal position of a query symbol and the ordinal position of a matching database symbol. Each path from the root node to a leaf node represents a sequence of hits.

Figure 6.

(a) The best multiple alignment created by the SP model with a store of Old patterns, like those in rows 1 to 8 (representing grammatical structures, including words) and a New pattern (representing a sentence to be parsed) shown in row 0; (b) as in (a), but with errors of omission, commission and substitution, and with the same set of Old patterns as before. (a) and (b) are reproduced from Figures 1 and 2 in [35], with permission.

Figure 6.

(a) The best multiple alignment created by the SP model with a store of Old patterns, like those in rows 1 to 8 (representing grammatical structures, including words) and a New pattern (representing a sentence to be parsed) shown in row 0; (b) as in (a), but with errors of omission, commission and substitution, and with the same set of Old patterns as before. (a) and (b) are reproduced from Figures 1 and 2 in [35], with permission.

This kind of ability to cope gracefully with noisy data is very much in keeping with our ability to understand speech in noisy surroundings, to understand written language despite errors and to recognise people, trees, houses and the like, despite fog, snow, falling leaves, or other things that may obstruct our view. In a similar way, it is likely to prove useful in artificial systems for such applications as the processing of natural language and the recognition of patterns.

4.3. Computational Complexity

In considering the matching and unification of patterns, it not hard to see that, for any body of information I, except very small examples, there is a huge number of alternative ways in which patterns may be matched against each other, there will normally be many alternative ways in which patterns may be unified, and exhaustive search is not tractable (BK, Section 2.2.8.4).

However, with the kinds of heuristic techniques that are familiar in other artificial-intelligence applications—reducing the size of the search space by pruning the search tree at appropriate points, and being content with approximate solutions which are not necessarily perfect—this kind of matching becomes quite practical. Much the same can be said about the heuristic techniques used for the building of multiple alignments (Section 4.2) and for unsupervised learning (Section 5.1).

An example of how effective this rough-and-ready approach can be is the way colonies of ants can find reasonably good solutions to the travelling salesman problem via the simple technique of marking their routes with pheromones and choosing routes that are most strongly marked [36].

For the process of building multiple alignments in the SP model, the time complexity in a serial processing environment, with conservative assumptions, has been estimated to be O, where n is the size of the pattern from New (in bits) and m is the sum of the lengths of the patterns in Old (in bits). In a parallel processing environment, the time complexity may approach O, depending on how well the parallel processing is applied. In serial and parallel environments, the space complexity has been estimated to be O.

Although the data sets used with the current SP model have generally been small—because the main focus has been on the concepts being modelled and not the speed of processing—there is reason to be confident that the models can be scaled up to deal with large data sets because the kind of flexible matching of patterns, which is at the heart of the SP model, is done very fast and with huge volumes of data by all the leading internet search engines. As was suggested in Section 3.2, the relevant processes in any one of those search engines would probably provide a good basis for the creation of a high-parallel version of the SP machine.

4.4. Calculation of Probabilities Associated with Multiple Alignments

As described in BK (Chapter 7), the formation of multiple alignments in the SP framework supports several kinds of probabilistic reasoning. The core idea is that any Old symbol in a multiple alignment that is not aligned with a New symbol represents an inference that may be drawn from the multiple alignment. This section outlines how probabilities for such inferences may be calculated. There is more detail in BK (Section 3.7).

4.4.1. Absolute Probabilities

Any sequence of L symbols, drawn from an alphabet of alphabetic types, represents one point in a set of N points, where N is calculated as:

If we assume that the sequence is random or nearly so (see BK, Section 3.7.1.1)—which means that the N points are equi-probable or nearly so—the probability of any one point (which represents a sequence of length L) is close to:

This equation may be used to calculate the absolute probability of the code pattern that may be derived from any given multiple alignment (as described in Section 4.1). That number may also be regarded as the absolute probability of any inferences that may be drawn from the multiple alignment. In this calculation, L is the sum of all the bits in the symbols of the code pattern, and is 2.

As we shall see (Section 4.4.3), Equation 6 may, with advantage, be generalised by replacing L with a value, , calculated in a slightly different way.

4.4.2. Relative Probabilities

The absolute probabilities of multiple alignments, calculated as described in the last subsection, are normally very small and not very interesting in themselves. From the standpoint of practical applications, we are normally interested in the relative values of probabilities, calculated as follows.

- (1)

- For the multiple alignment which has the highest (which we shall call the reference multiple alignment), identify the reference set of symbols in New, meaning the symbols from New which are encoded by the multiple alignment.

- (2)

- Compile a reference set of multiple alignments, which includes the reference multiple alignment and all other multiple alignments (if any), which encode exactly the reference set of symbols from New, neither more nor less.

- (3)

- Calculate the sum of the values for in the reference set of multiple alignments:where R is the size of the reference set of multiple alignments and is the value of for the ith multiple alignment in the reference set.

- (4)

- For each multiple alignment in the reference set, calculate its relative probability as:

The values of , calculated as just described, provide an effective means of comparing the multiple alignments in the reference set.

4.4.3. A Generalisation of the Method for Calculating Absolute and Relative Probabilities

The value of L, calculated as described in Section 4.4.1, may be regarded as the informational “cost” of encoding the New symbol or symbols that appear in the multiple alignment, excluding those New symbols that have not appeared in the multiple alignment.

This is OK, but it is somewhat restrictive, because it means that if we want to calculate relative probabilities for two or more multiple alignments, they must all encode the same symbol or symbols from New. We cannot easily compare multiple alignments that encode different New symbols.

The generalisation proposed here is that, in the calculation of absolute probabilities, a new value, , would be used instead of L. This would be calculated as:

where L is the total number of bits in the symbols in the code patterns (as in Section 4.4.1) and is the total number of bits in the New symbols that have not appeared in the multiple alignment.

The rationale is that, to encode all the symbols in New, we can use the code pattern to encode those New symbols that do appear in the multiple alignment, and for each of the remaining New symbols, we can simply use its code. The advantage of this scheme is that we can compare any two or more multiple alignments, regardless of the number of New symbols that appear in the multiple alignment.

4.4.4. Relative Probabilities of Patterns and Symbols

It often happens that a given pattern from Old, or a given symbol type within patterns from Old, appears in more than one of the multiple alignments in the reference set. In cases like these, one would expect the relative probability of the pattern or symbol type to be higher than if it appeared in only one multiple alignment. To take account of this kind of situation, the SP model calculates relative probabilities for individual patterns and symbol types in the following way:

- (1)

- Compile a set of patterns from Old, each of which appears at least once in the reference set of multiple alignments. No single pattern from Old should appear more than once in the set.

- (2)

- For each pattern, calculate a value for its relative probability as the sum of the values for the multiple alignments in which it appears. If a pattern appears more than once in a multiple alignment, it is only counted once for that multiple alignment.

- (3)

- Compile a set of symbol types, which appear anywhere in the patterns identified in step 2.

- (4)

- For each alphabetic symbol type identified in step 3, calculate its relative probability as the sum of the relative probabilities of the patterns in which it appears. If it appears more than once in a given pattern, it is only counted once.

The foregoing applies only to symbol types, which do not appear in the New. Any symbol type that appears in the New necessarily has a probability of one—because it has been observed, not inferred.

4.5. One System for Both the Analysis and the Production of Information

A potentially useful feature of the SP system is that the processes which serve to analyse or parse a New pattern in terms of Old patterns and to create an economical encoding of the New pattern, may also work in reverse, to recreate the New pattern from its encoding. This is the “output” perspective, mentioned in Section 3.

If the New pattern is the code sequence “S 0 1 0 1 0 #S” (as described in Section 4), and if the Old patterns are the same as were used to create the multiple alignment shown in Figure 4, then the best multiple alignment found by the system is the one shown in Figure 7. This multiple alignment contains the same words as the original sentence (“t h i s b o y l o v e s t h a t g i r l”), in the same order as the original. Readers who are familiar with Prolog will recognise that this process of recreating the original sentence from its encoding is similar in some respects to the way in which an appropriately-constructed Prolog program may be run “backwards”, deriving “data” from “results”.

How is it possible to decompress the compressed code for the original sentence by using information compression? This apparent paradox—decompression by compression—may be resolved by ensuring that, when a code pattern like “S 0 1 0 1 0 #S” is used to recreate the original data, each symbol is treated, at least notionally, as if it contained a few more bits of information than is strictly necessary. That residual redundancy allows the system to recreate the original sentence by the same process of compression as was used to create the original parsing and encoding [37].

This process of creating a relatively large pattern from a relatively small encoding provides a model for the creation of sentences by a person or an artificial system. However, instead of the New pattern being a rather dry code, like “S 0 1 0 1 0 #S”, it would be more plausible if it were some kind of representation of the meaning of the sentence, like that mentioned in Section 4.1. How a sentence may be generated from a representation of meaning is outlined in BK (Section 5.7.1).

Figure 7.

The best multiple alignment found by the SP model with the New pattern, “S 0 1 0 1 0 #S”, and the same Old patterns as were used to create the multiple alignment shown in Figure 4.

Figure 7.

The best multiple alignment found by the SP model with the New pattern, “S 0 1 0 1 0 #S”, and the same Old patterns as were used to create the multiple alignment shown in Figure 4.

Similar principles may apply to other kinds of “output”, such as planning an outing, cooking a meal, and so on.

5. Unsupervised Learning

As was mentioned in Section 2.1, part of the inspiration for the SP theory has been a programme of research developing models of the unsupervised learning of language. But although the SNPR model [38] is quite successful in deriving plausible grammars from samples of English-like artificial language, it has proved to be quite unsuitable as a basis for the SP theory. In order to accommodate other aspects of intelligence, such as pattern recognition, reasoning, and problem solving, it has been necessary to develop an entirely New conceptual framework, with multiple alignment at centre stage.

Therefore, there is now the curious paradox that, while the SP theory is rooted in work on unsupervised learning and that kind of learning has a central role in the theory, the SP model does much the same things as the earlier model and with similar limitations (Section 3.3 and Section 5.1.4). However, I believe that the New conceptual framework has many advantages, that it provides a much sounder footing for further developments, and that with some reorganisation of the learning processes in the SP computer model, its current weaknesses may be overcome (Section 5.1.4).

5.1. Outline of Unsupervised Learning in the SP Model

The outline of the SP model in this section aims to provide sufficient detail for a good intuitive grasp of how it works. A lot more detail may be found in BK (Chapter 9).

In addition to the processes for building multiple alignments, the SP model has processes for deriving Old patterns from multiple alignments, evaluating sets of newly-created Old patterns in terms of their effectiveness for the economical encoding of the New information, and the weeding out low-scoring sets. The system does not merely record statistical information, it uses that information to learn new structures.

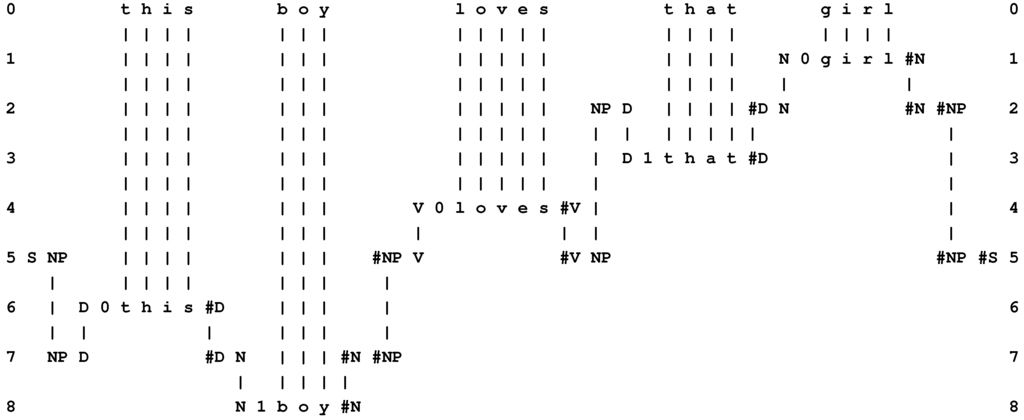

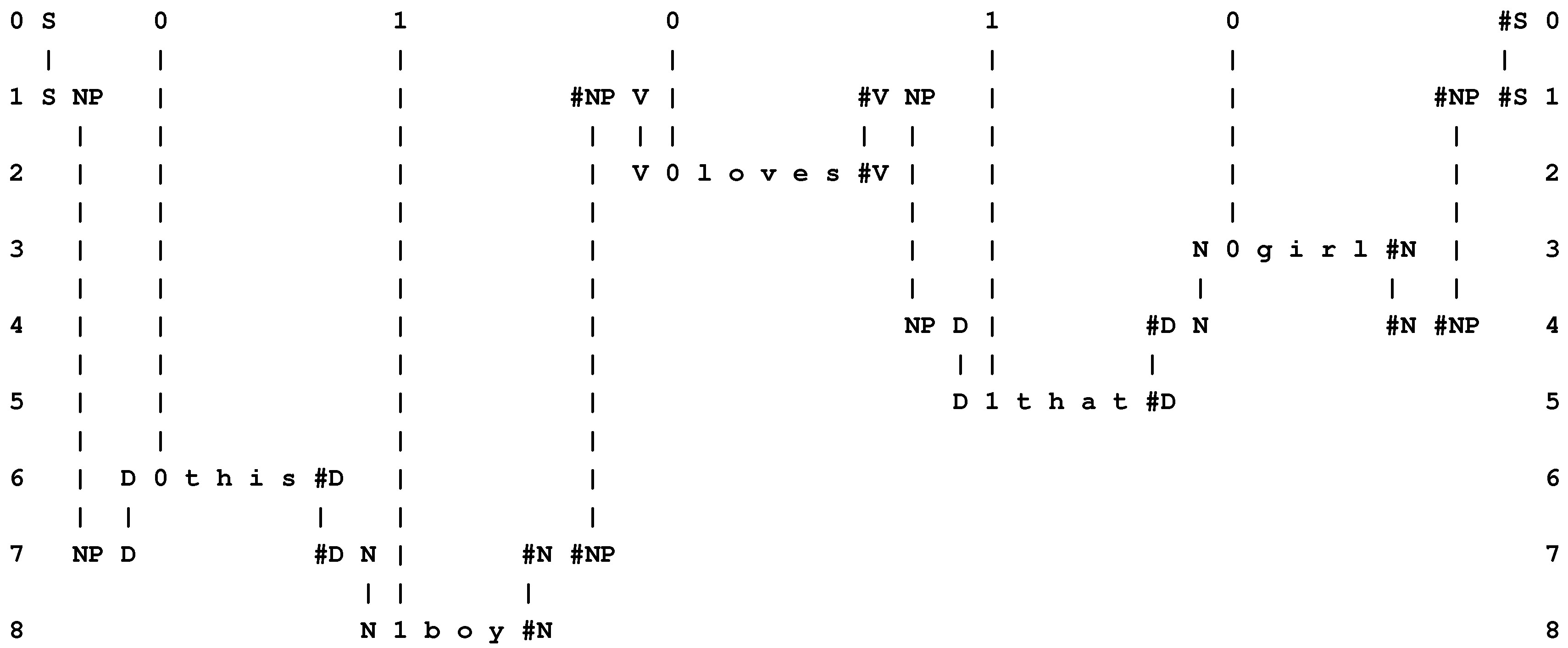

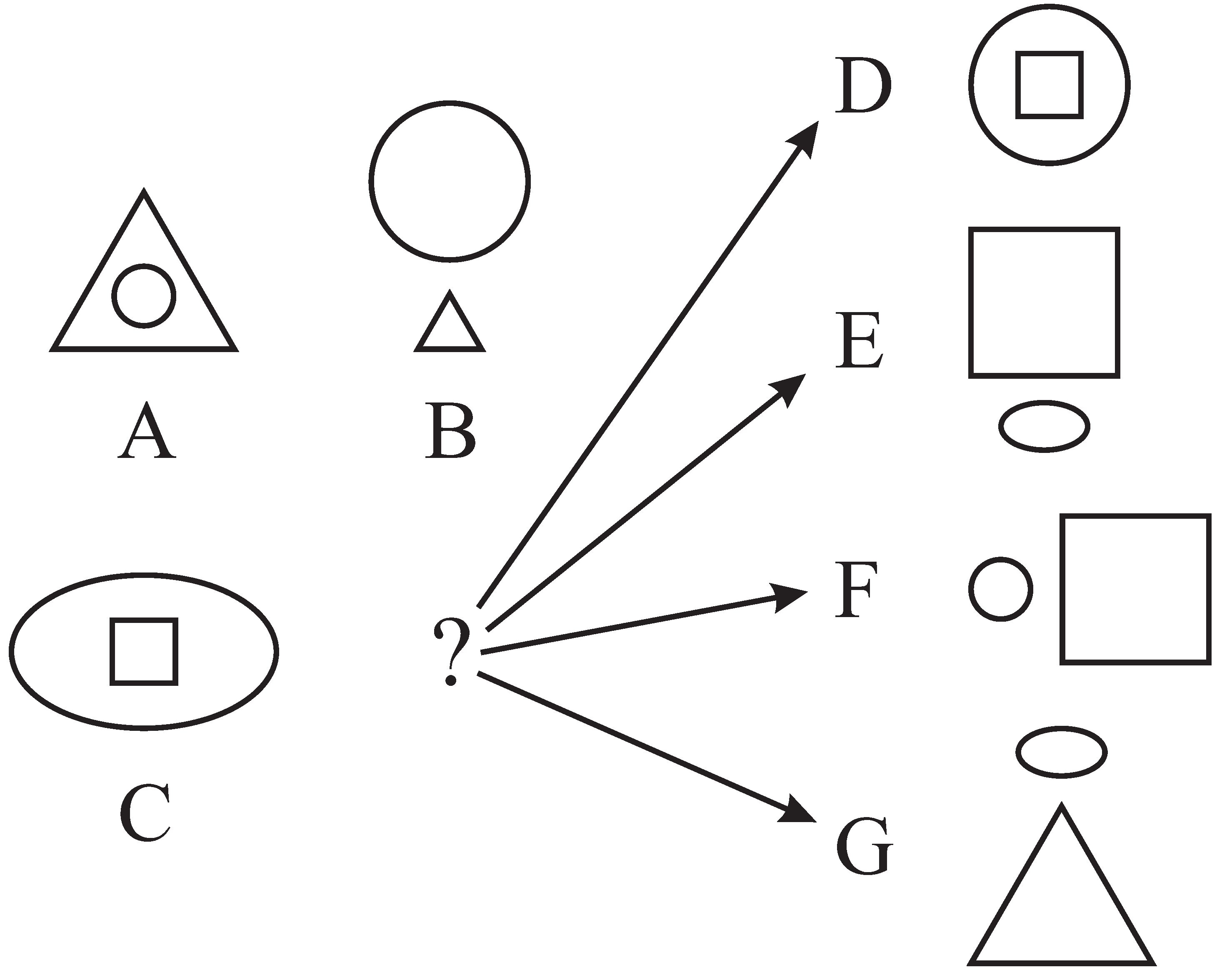

5.1.1. Deriving Old Patterns from Multiple Alignments

The process of deriving Old patterns from multiple alignments is illustrated schematically in Figure 8. As was mentioned in Section 3, the SP system is conceived as an abstract brain-like system that, in “input” mode, may receive “New” information via its senses and store some or all of it as “Old” information. Here, we may think of it as the brain of a baby who is listening to what people are saying. Let us imagine that he or she hears someone say “t h a t b o y r u n s” [39]. If the baby has never heard anything similar, then, if it is stored at all, that New information may be stored as a relatively straightforward copy, something like the Old pattern shown in row 1 of the multiple alignment in part (a) of the figure.

Figure 8.

(a) A simple multiple alignment from which, in the SP model, Old patterns may be derived; (b) Old patterns derived from the multiple alignment shown in (a).

Figure 8.

(a) A simple multiple alignment from which, in the SP model, Old patterns may be derived; (b) Old patterns derived from the multiple alignment shown in (a).

Now, let us imagine that the information has been stored and that, at some later stage, the baby hears someone say “t h a t g i r l r u n s”. Then, from that New information and the previously-stored Old pattern, a multiple alignment may be created like the one shown in part (a) of Figure 8. And, by picking out coherent sequences that are either fully matched or not matched at all, four putative words may be extracted: “t h a t”, “g i r l”, “b o y”, and “r u n s”, as shown in the first four patterns in part (b) of the figure. In each newly-created Old pattern, there are additional symbols, such as “B”, “2” and “#B” that are added by the system and which serve to identify the pattern, to mark its boundaries, and to mark its grammatical category or categories.

In addition to these four patterns, a fifth pattern is created, “E 6 B #B C #C D #D #E”, as shown in the figure, that records the sequence, “t h a t ... r u n s”, with the category “C #C” in the middle representing a choice between “b o y” and “g i r l”. Part (b) in the figure is the beginnings of a grammar to describe that kind of phrase.

5.1.2. Evaluating and Selecting Sets of Newly-Created Old Patterns

The example just described shows how Old patterns may be derived from a multiple alignment, but it gives a highly misleading impression of how the SP model actually works. In practice, the program forms many multiple alignments that are much less tidy than the one shown, and it creates many Old patterns that are clearly “wrong”. However, the program contains procedures for evaluating candidate sets of patterns (“grammars”) and weeding out those that score badly in terms of their effectiveness for encoding the New information economically. Out of all the muddle, it can normally abstract one or two “best” grammars, and these are normally ones that appear intuitively to be “correct”, or nearly so. In general, the program can abstract one or more plausible grammars from a sample of English-like artificial language, including words, grammatical categories of words, and sentence structure.

In accordance with the principles of minimum length encoding [12,13], the aim of these processes of sifting and sorting is to minimise , where G is the size (in bits) of the grammar that is under development and E is the size (in bits) of the New patterns when they have been encoded in terms of the grammar.

For a given grammar comprising patterns, , the value of G is calculated as:

where is the number of symbols in the ith pattern and is the encoding cost of the jth symbol in that pattern.

Given that each grammar is derived from a set of multiple alignments (one multiple alignment for each pattern from the New), the value of E for the grammar is calculated as:

where is the size, in bits, of the code string derived from the ith multiple alignment (Section 4.1).

For a given set of patterns from New, a tree of alternative grammars is created with branching occurring wherever there are two or more alternative multiple alignments for a given pattern from New. The tree is grown in stages and pruned periodically to keep it within reasonable bounds. At each stage, grammars with high values for (which will be referred to as T) are eliminated.

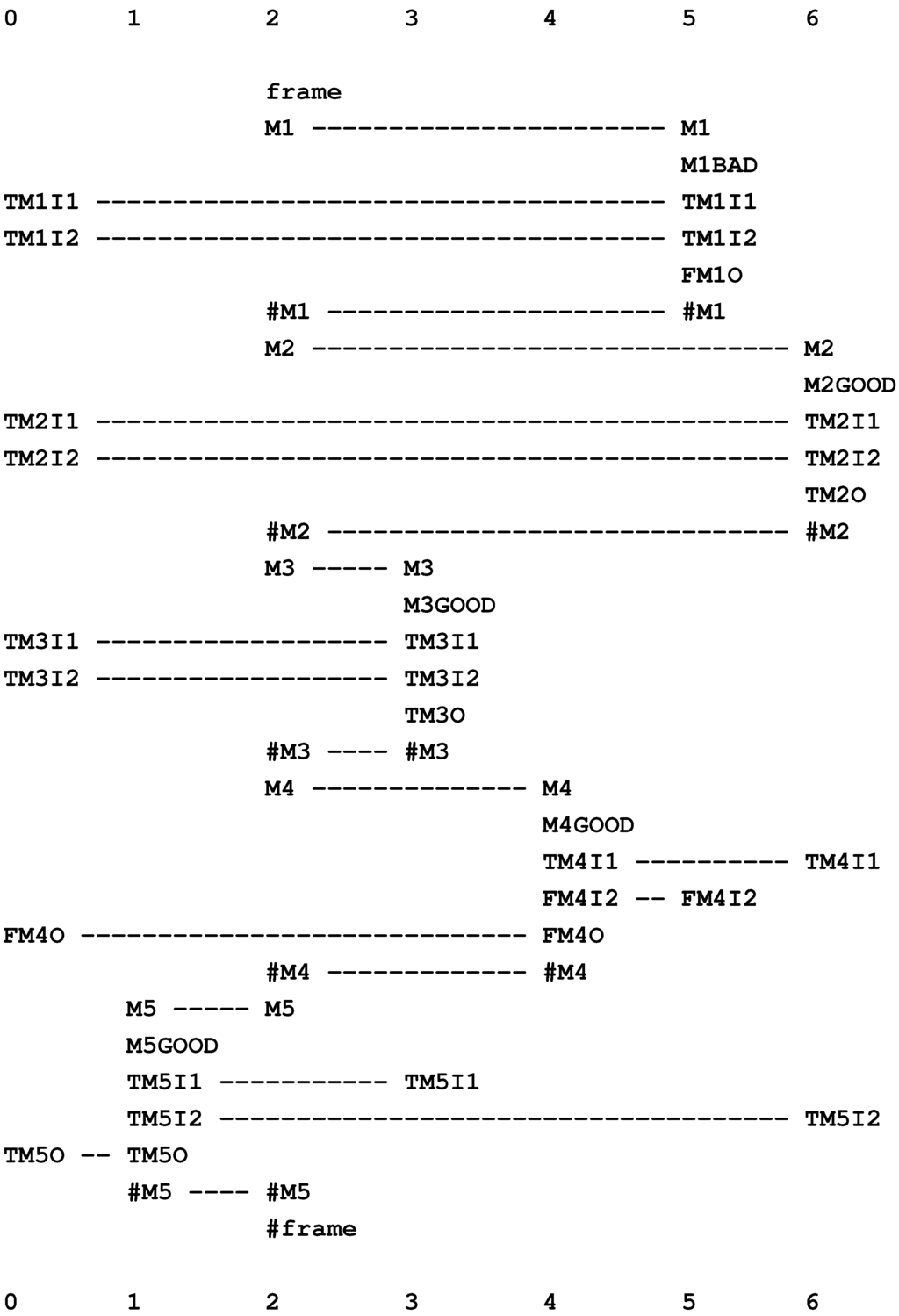

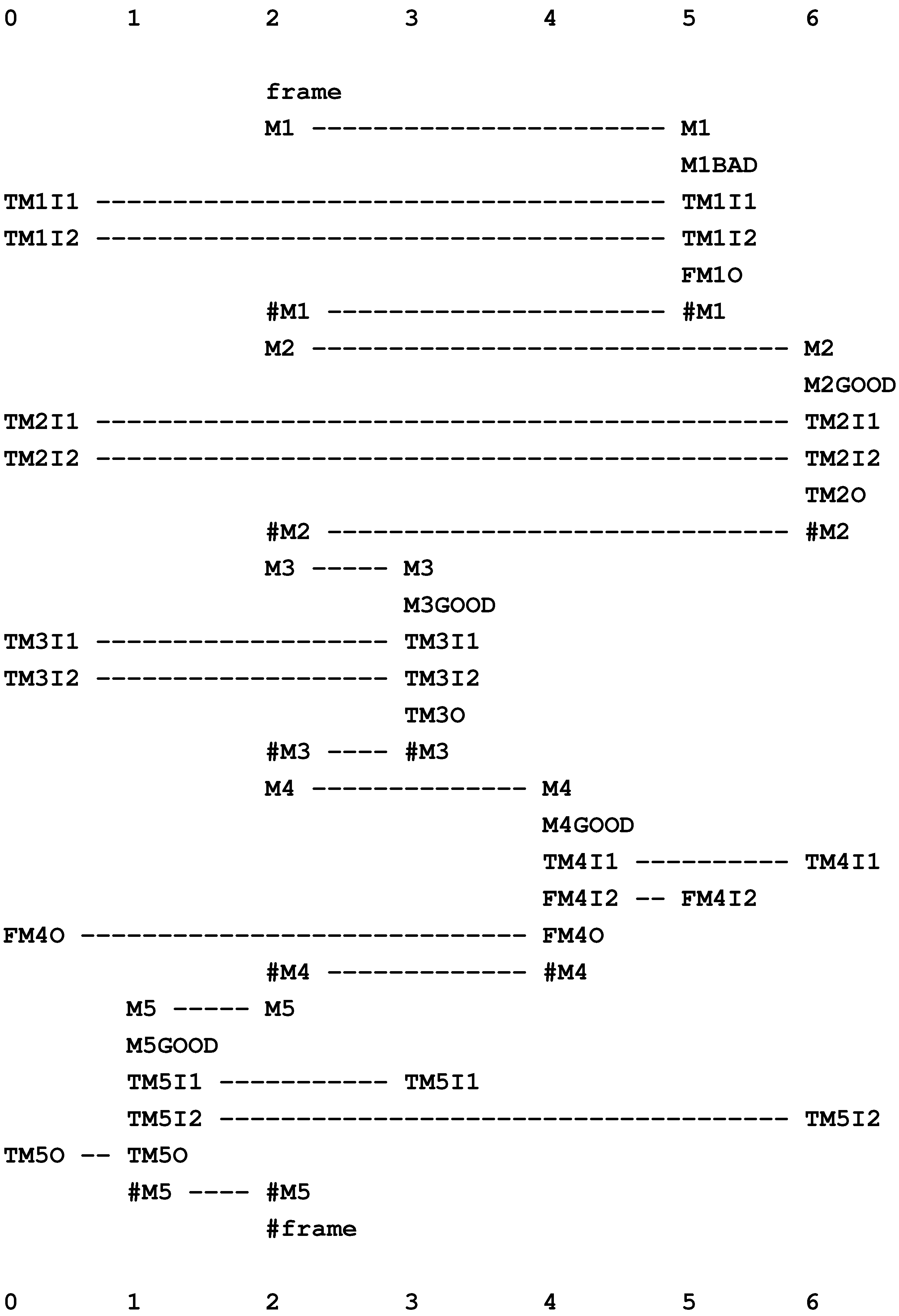

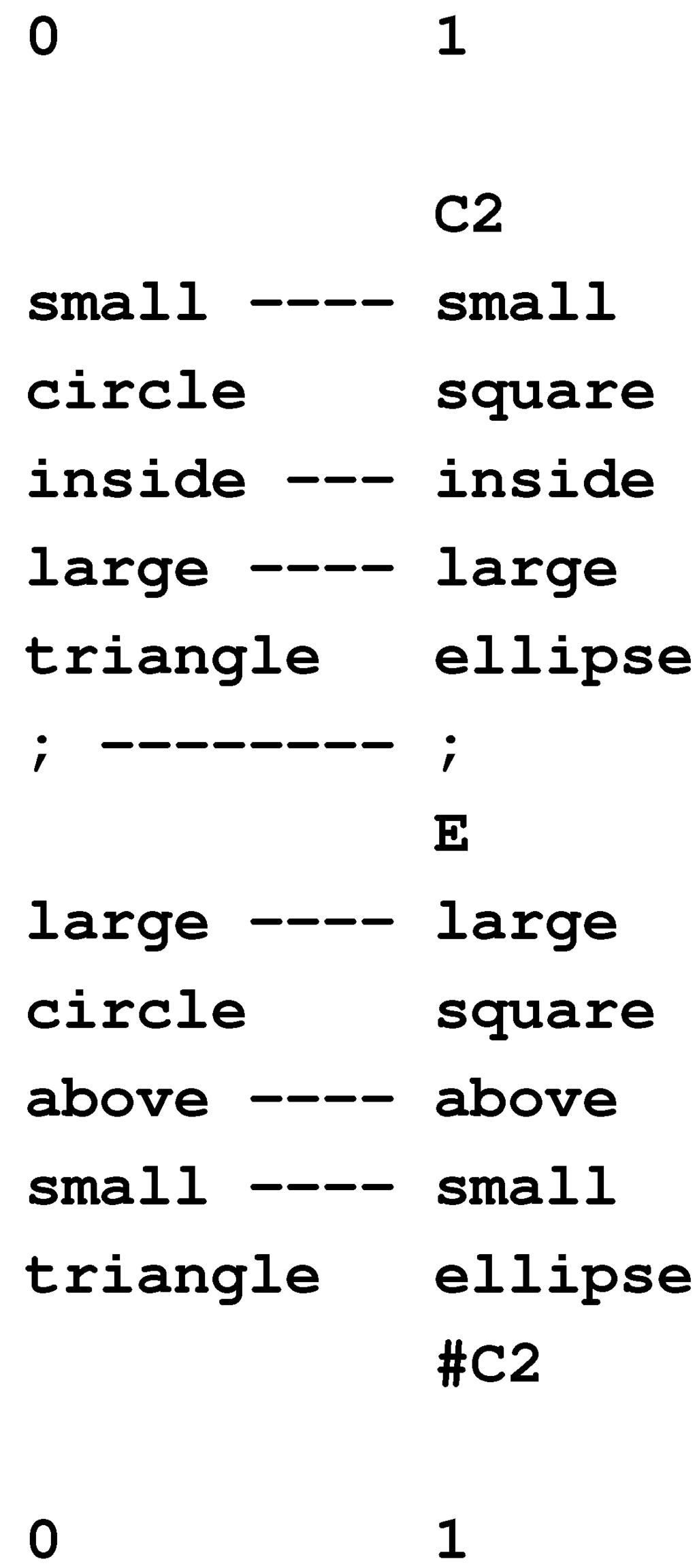

5.1.3. Plotting Values for G, E and T

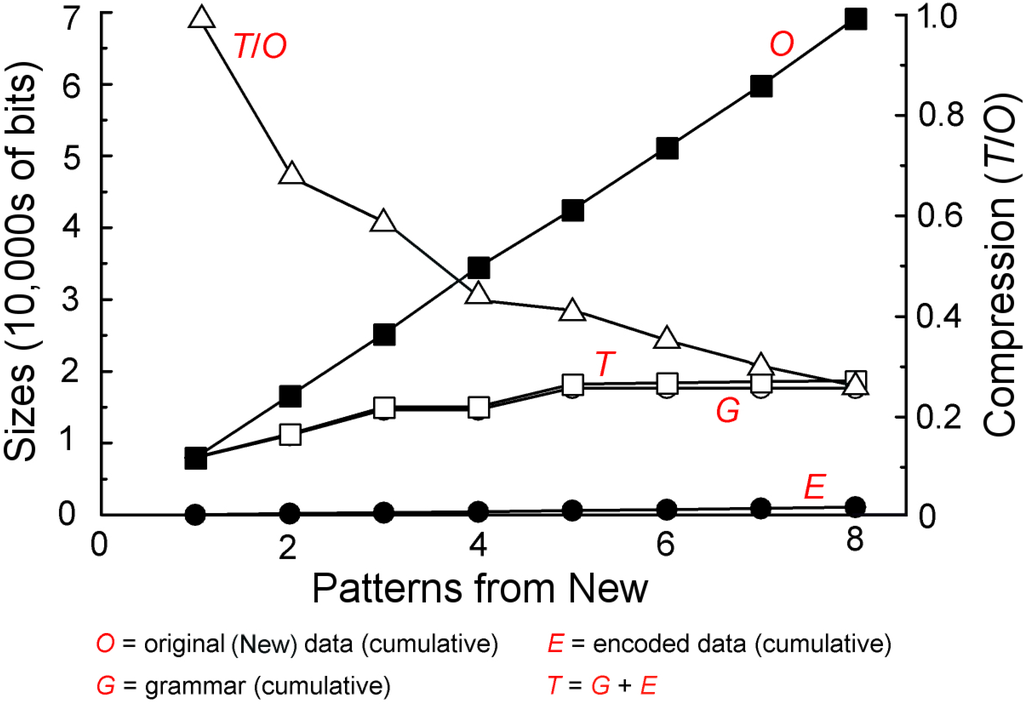

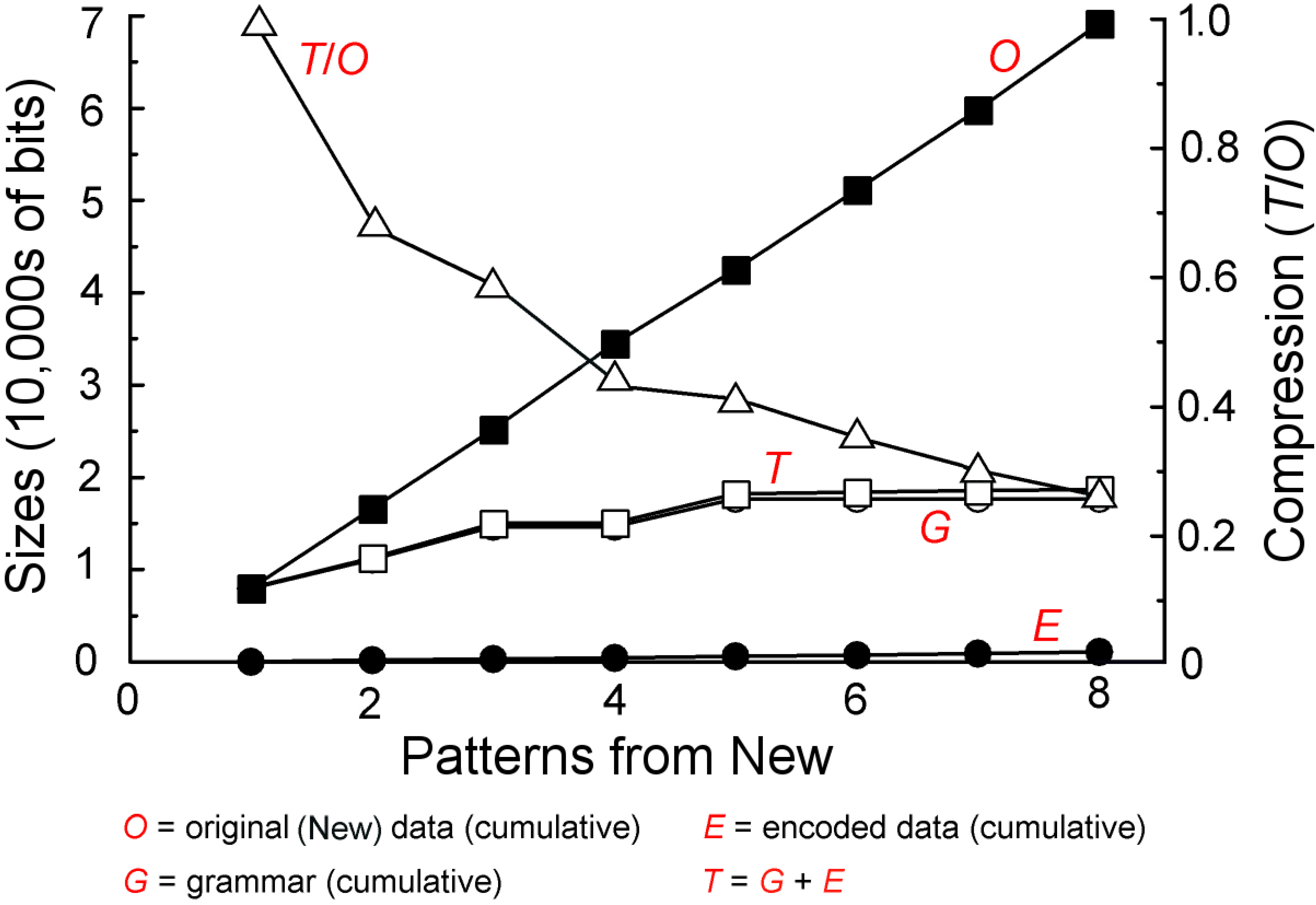

Figure 9 shows cumulative values for G, E and T as the SP model searches for good grammars for a succession of eight New patterns, each of which represents a sentence. Each point on each of the lower three graphs represents the relevant value (on the scale at the left) from the best grammar found after a given pattern from New has been processed. The graph labelled “O” shows cumulative values on the scale at the left for the succession of New patterns. The graph labelled “” shows the amount of compression achieved (on the scale to the right).

Figure 9.

Changing values for G, E and T and related variables as learning proceeds, as described in the text.

Figure 9.

Changing values for G, E and T and related variables as learning proceeds, as described in the text.

5.1.4. Limitations in the SP Model and How They May Be Overcome

As mentioned before (Section 3.3), there are two main weaknesses in the processes for unsupervised learning in the SP model as it is now: the model does not learn intermediate levels in a grammar (phrases or clauses) or discontinuous dependencies of the kind described in Section 8.1 to Section 8.3.

It appears that some reorganisation of the learning processes in the model would solve both problems. What seems to be needed is a tighter focus on the principle that, with appropriately-constructed Old patterns, multiple alignments may be created without the kind of mismatch between patterns that may be seen in Figure 8 (a) (“g i r l” and “b o y” do not match each other), and that any such multiple alignment may be treated as if it was a simple pattern. That reform should facilitate the discovery of structures at multiple levels and the discovery of structures that are discontinuous in the sense that they can bridge intervening structures.

5.1.5. Computational Complexity

As with the building of multiple alignments (Section 4.3), the computational complexity of learning in the SP model is kept under control by pruning the search tree at appropriate points, aiming to discover grammars that are reasonably good and not necessarily perfect.

In a serial processing environment, the time complexity of learning in the SP model has been estimated to be O, where N is the number of patterns in New. In a parallel processing environment, the time complexity may approach O, depending on how well the parallel processing is applied. In serial or parallel environments, the space complexity has been estimated to be O.

5.2. The Discovery of Natural Structures Via Information Compression (DONSVIC)

In our dealings with the world, certain kinds of structure appear to be more prominent and useful than others: in natural languages, there are words, phrase and sentences; we understand the visual and tactile worlds to be composed of discrete “objects”; and conceptually, we recognise classes of things, like “person”, “house”, “tree”, and so on.

It appears that these “natural” kinds of structure are significant in our thinking because they provide a means of compressing sensory information, and that compression of information provides the key to their learning or discovery. At first sight, this looks like nonsense, because popular programs for compression of information, such as those based on the Lempel-Ziv-Welch (LZW) algorithm, or programs for JPEG compression of images, seem not to recognise anything resembling words, objects or classes. However, those programs are designed to work fast on low-powered computers. With other programs that are designed to be relatively thorough in their compression of information, natural structures can be revealed:

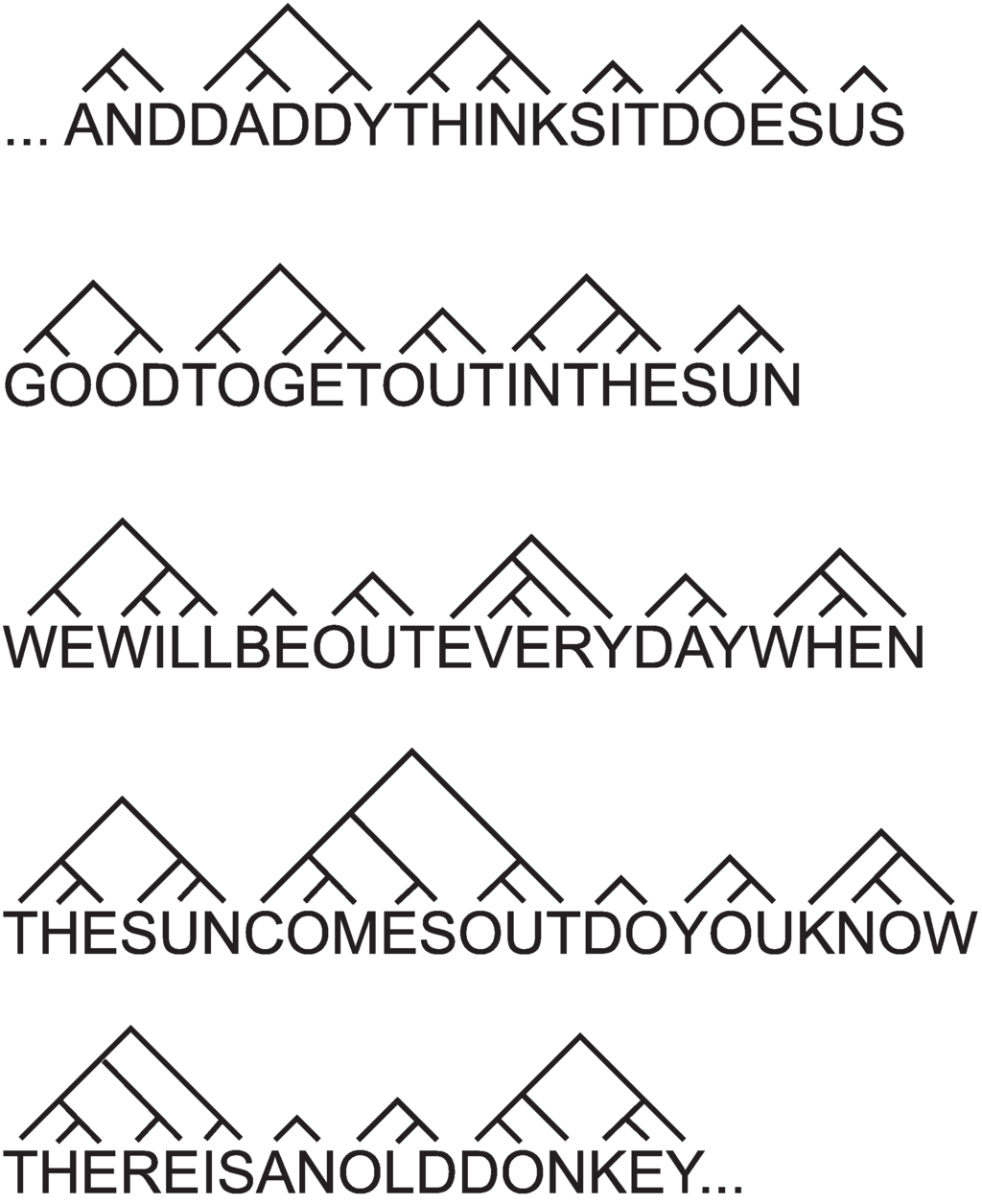

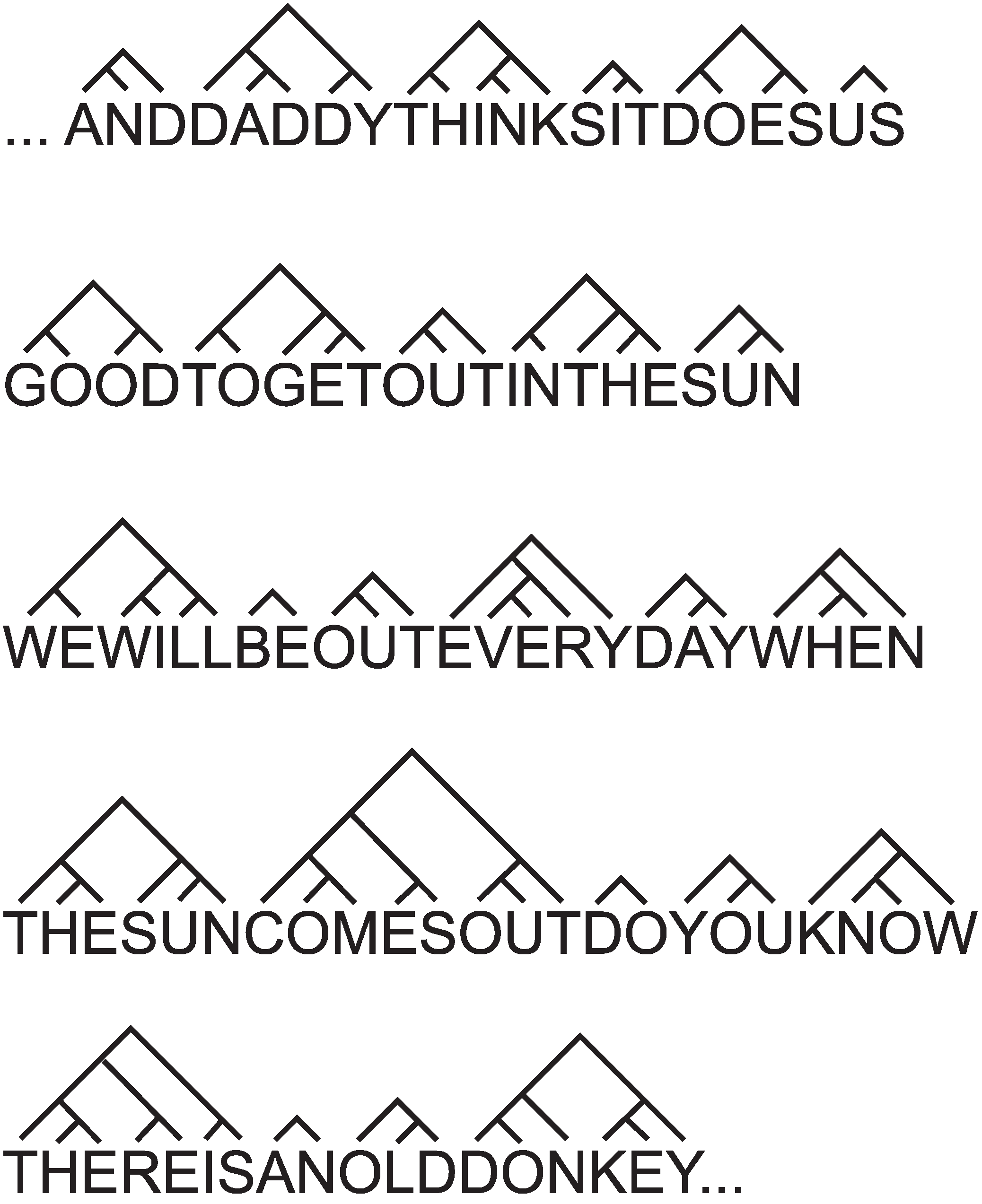

- Figure 10 shows part of a parsing of an unsegmented sample of natural language text created by the MK10 program [40] using only the information in the sample itself and without any prior dictionary or other knowledge about the structure of language. Although all spaces and punctuation had been removed from the sample, the program does reasonably well in revealing the word structure of the text. Statistical tests confirm that it performs much better than chance.

- The same program does quite well—significantly better than chance—in revealing phrase structures in natural language texts that have been prepared, as before, without spaces or punctuation—but with each word replaced by a symbol for its grammatical category [41]. Although that replacement was done by a person trained in linguistic analysis, the discovery of phrase structure in the sample is done by the program, without assistance.

- The SNPR program for grammar discovery [38] can, without supervision, derive a plausible grammar from an unsegmented sample of English-like artificial language, including the discovery of words, of grammatical categories of words, and the structure of sentences.

- In a similar way, with samples of English-like artificial languages, the SP model has demonstrated an ability to learn plausible structures, including words, grammatical categories of words and the structure of sentences.

It seems likely that the principles that have been outlined in this subsection may be applied not only to the discovery of words, phrases and grammars in language-like data, but also to such things as the discovery of objects in images [27] and classes of entity in all kinds of data. These principles may be characterised as the discovery of natural structures via information compression, or “DONSVIC” for short.

Figure 10.

Part of a parsing created by program MK10 [40] from a 10,000 letter sample of English (book 8A of the Ladybird Reading Series) with all spaces and punctuation removed. The program derived this parsing from the sample alone, without any prior dictionary or other knowledge of the structure of English. Reproduced from Figure 7.3 in [10], with permission.

Figure 10.

Part of a parsing created by program MK10 [40] from a 10,000 letter sample of English (book 8A of the Ladybird Reading Series) with all spaces and punctuation removed. The program derived this parsing from the sample alone, without any prior dictionary or other knowledge of the structure of English. Reproduced from Figure 7.3 in [10], with permission.

5.3. Generalisation, the Correction of Overgeneralisations and Learning from Noisy Data

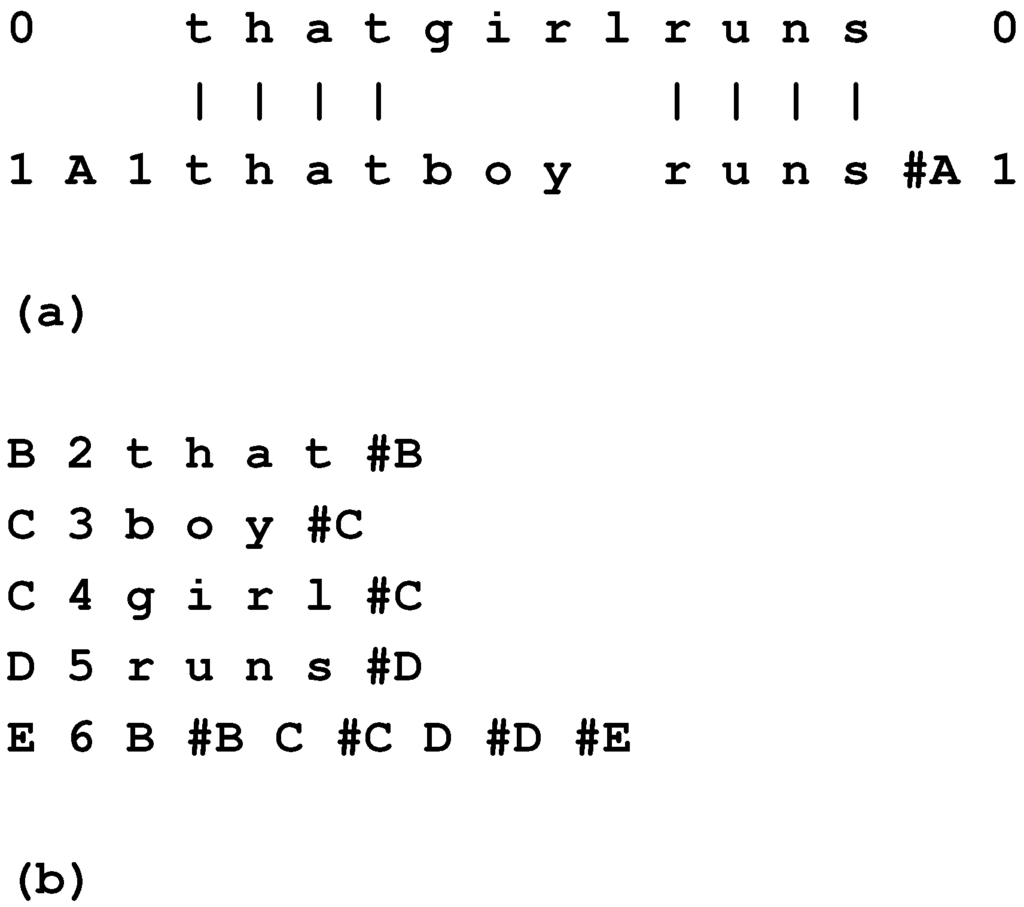

Issues that arise in the learning of a first language, and probably in other kinds of learning, are illustrated in Figure 11:

- Given that we learn from a finite sample [42], represented by the smallest envelope in the figure, how do we generalise from that finite sample to a knowledge of the language corresponding to the middle-sized envelope, without overgeneralising into the region between the middle envelope and the outer one?

- How do we learn a “correct” version of our native language despite what is marked in the figure as “dirty data” (sentences that are not complete, false starts, words that are mispronounced, and more)?

One possible answer is that mistakes are corrected by parents, teachers and others. However, the weight of evidence is that children can learn their first language without that kind of assistance [43].

Figure 11.

Categories of utterances involved in the learning of a first language, L. In ascending order size, they are: the finite sample of utterances from which a child learns; the (infinite) set of utterances in L; and the (infinite) set of all possible utterances. Adapted from Figure 7.1 in [10], with permission.

Figure 11.

Categories of utterances involved in the learning of a first language, L. In ascending order size, they are: the finite sample of utterances from which a child learns; the (infinite) set of utterances in L; and the (infinite) set of all possible utterances. Adapted from Figure 7.1 in [10], with permission.

A better answer is the principle of minimum length encoding (described in its essentials in Section 5.1.2):

- As a general rule, the greatest reductions in are achieved with grammars that represent moderate levels of generalisation, neither too little nor too much. In practice, the SNPR program, which is designed to minimise , has been shown to produce plausible generalisations, without over-generalising [38].

- Any particular error is, by its nature, rare, and so, in the search for useful patterns (which, other things being equal, are the more frequently-occurring ones), it is discarded from the grammar along with other “bad” structures [44]. In the case of lossless compression, errors in any given body of data, I, would be retained in the encoding of I. However, with learning, it is normally the grammar and not the encoding that is the focus of interest. In practice, the MK10 and SNPR programs have been found to be quite insensitive to errors (of omission, addition or substitution) in their data, much as in the building of multiple alignments (Section 4.2.2).

5.4. One-Trial Learning and Its Implications

In many theories of learning [45], the process is seen as gradual: behaviour is progressively shaped by rewards or punishments or other kinds of experience.

However, any theory of learning in which the process is necessarily gradual is out of step with our ordinary experience that we can and do learn things from a single experience, especially if that single experience is very significant for us (BK, Section 11.4.4.1).

In the SP theory, one-trial learning is accommodated in the way the system can store New information directly. And the gradual nature of, for example, language learning, may be explained by the complexity of the process of sifting and sorting the many alternative sets of candidate patterns to find one or more sets that are good in terms of information compression (BK, Section 11.4.4.2).

6. Computing, Mathematics and Logic

Drawing mainly on BK (Chapters 4 to 11), this and the following sections describe, with a selection of examples, how the SP theory relates to several areas in artificial intelligence, mainstream computing, and human perception and cognition.

In BK (Chapter 4), I have argued that the SP system is equivalent to a universal Turing machine [46,47], in the sense that anything that may be computed with a Turing machine may, in principle, also be computed with an SP machine. The “in principle” qualification is necessary, because the SP theory is still not fully mature, and there are still some weaknesses in the SP computer model. The gist of the argument is that the operation of a post canonical system [48] may be understood in terms of the SP theory, and since it is accepted that the post canonical system is equivalent to the Turing machine (as a computational system), the Turing machine may also be understood in terms of the SP theory.

The key differences between the SP theory and earlier theories of computing are that the SP theory has a lot more to say about the nature of intelligence than earlier theories, that the theory is founded on principles of information compression via the matching and unification of patterns (“computing as compression”), and that it includes mechanisms for building multiple alignments and for heuristic search that are not present in earlier models.

6.1. Conventional Computing Systems

In conventional computing systems, compression of information may be seen in the matching of patterns with at least implicit unification of patterns that match each other—processes that appear in a variety of guises (BK, Chapter 2). And three basic techniques for the compression of information—chunking-with-codes, schema-plus-correction and run-length coding—may be seen in various forms in the organisation of computer programs (ibid.).

6.2. Mathematics and Logic

In a similar way, several structures and processes in mathematics and logic may be interpreted in terms of information compression via the matching and unification of patterns and the compression techniques just mentioned (BK, Chapter 10). For example: multiplication (as repeated addition) and exponentiation (as repeated multiplication) may be seen as examples of run-length coding; a function with parameters may be seen as an example of schema-plus-correction; the chunking-with-codes technique may be seen in the organisation of number systems; and so on.

6.3. Computing and Probabilities

As we have seen, the SP system is fundamentally probabilistic. If it is indeed Turing-equivalent, as suggested above, and if the Turing machine is regarded as a definition of “computing”, then we may conclude that computing is fundamentally probabilistic. That may seem like a strange conclusion in view of the clockwork certainties that we associate with the operation of ordinary computers and the workings of mathematics and logic. There are at least three answers to that apparent contradiction:

- It appears that computing, mathematics and logic are more probabilistic than our ordinary experience of them might suggest. Gregory Chaitin has written: “I have recently been able to take a further step along the path laid out by Gödel and Turing. By translating a particular computer program into an algebraic equation of a type that was familiar even to the ancient Greeks, I have shown that there is randomness in the branch of pure mathematics known as number theory. My work indicates that—to borrow Einsteins metaphor—God sometimes plays dice with whole numbers.” (p. 80 in [49] ).

- The SP system may imitate the clockwork nature of ordinary computers by delivering probabilities of 0 and 1. This can happen with certain kinds of data, or tight constraints on the process of searching the abstract space of alternative matches, or both those things.

- It seems likely that the all-or-nothing character of conventional computers has its origins in the low computational power of early computers. In those days, it was necessary to apply tight constraints on the process of searching for matches between patterns. Otherwise, the computational demands would have been overwhelming. Similar things may be said about the origins of mathematics and logic, which have been developed for centuries without the benefit of any computational machine, except very simple and low-powered devices. Now that it is technically feasible to apply large amounts of computational power, constraints on searching may be relaxed.

7. Representation of Knowledge

Within the multiple alignment framework (Section 4), SP patterns may serve to represent several kinds of knowledge, including grammars for natural languages (Section 8; BK, Chapter 5), ontologies ([50]; BK, Section 13.4.3), class hierarchies with inheritance of attributes, including cross-classification or multiple inheritance (BK, Section 6.4), part-whole hierarchies and their integration with class-inclusion hierarchies (Section 9.1; BK, Section 6.4), decision networks and trees (BK, Section 7.5), relational tuples ([35]; BK, Section 13.4.6.1), if-then rules (BK, Section 7.6), associations of medical signs and symptoms [51], causal relations (BK, Section 7.9), and concepts in mathematics and logic, such as “function”, “variable”, “value”, “set” and “type definition” (BK, Chapter 10).

The use of one simple format for the representation of knowledge facilitates the seamless integration of different kinds of knowledge.

8. Natural Language Processing

One of the main strengths of the SP system is in natural language processing (BK, Chapter 5):

- Both the parsing and production of natural language may be modelled via the building of multiple alignments (Section 4.5; BK, Section 5.7).

- The system can accommodate syntactic ambiguities in language (BK, Section 5.2) and also recursive structures (BK, Section 5.3).

- The framework provides a simple, but effective means of representing discontinuous dependencies in syntax (Section 8.1 to Section 8.3, below; BK, Sections 5.4 to 5.6).

- The system may also model non-syntactic “semantic” structures, such as class-inclusion hierarchies and part-whole hierarchies (Section 9.1).

- Because there is one simple format for different kinds of knowledge, the system facilitates the seamless integration of syntax with semantics (BK, Section 5.7).

- The system is robust in the face of errors of omission, commission or substitution in data (Section 4.2.2 and Section 5.3).

- The importance of context in the processing of language [52] is accommodated in the way the system searches for a global best match for patterns: any pattern or partial pattern may be a context for any other.

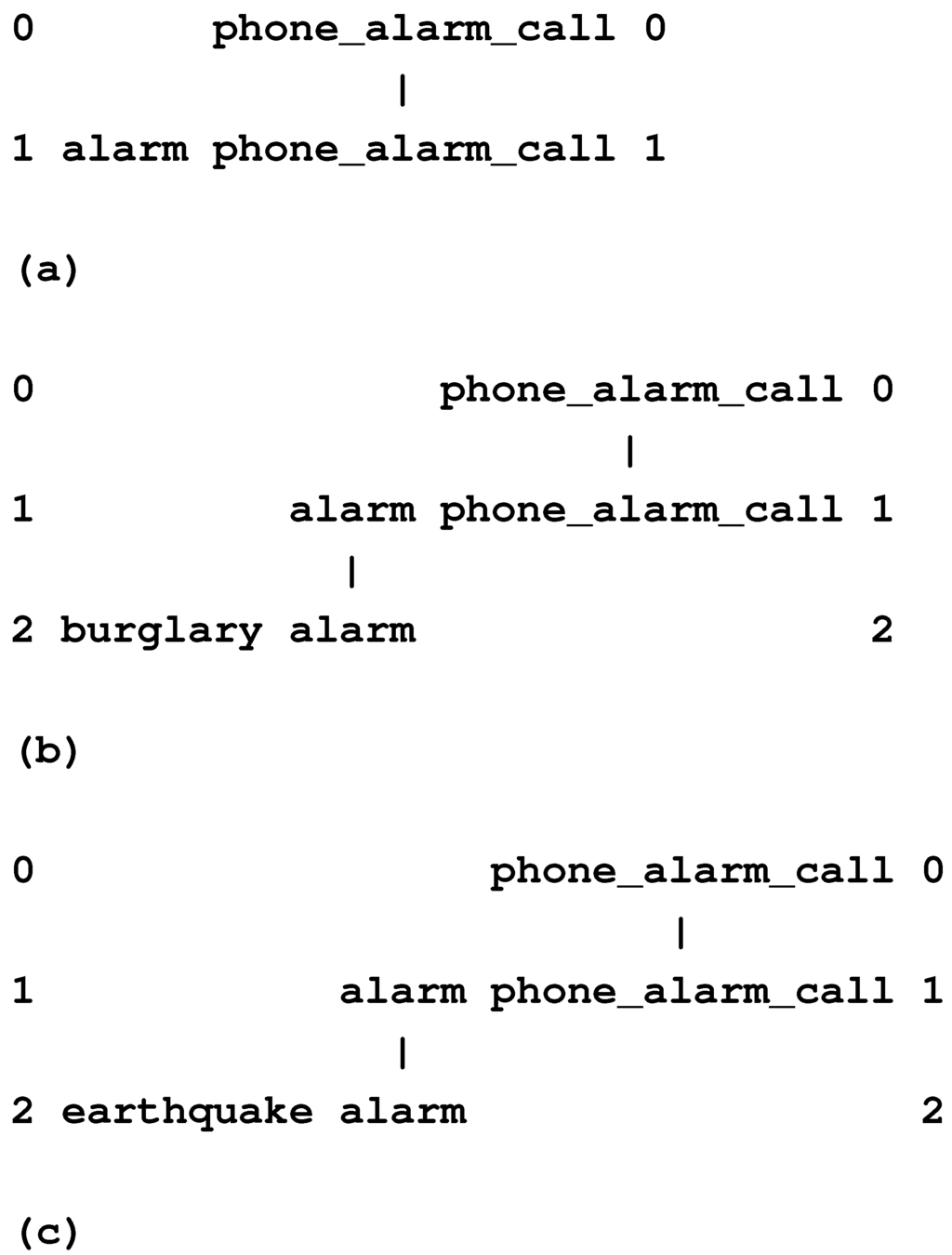

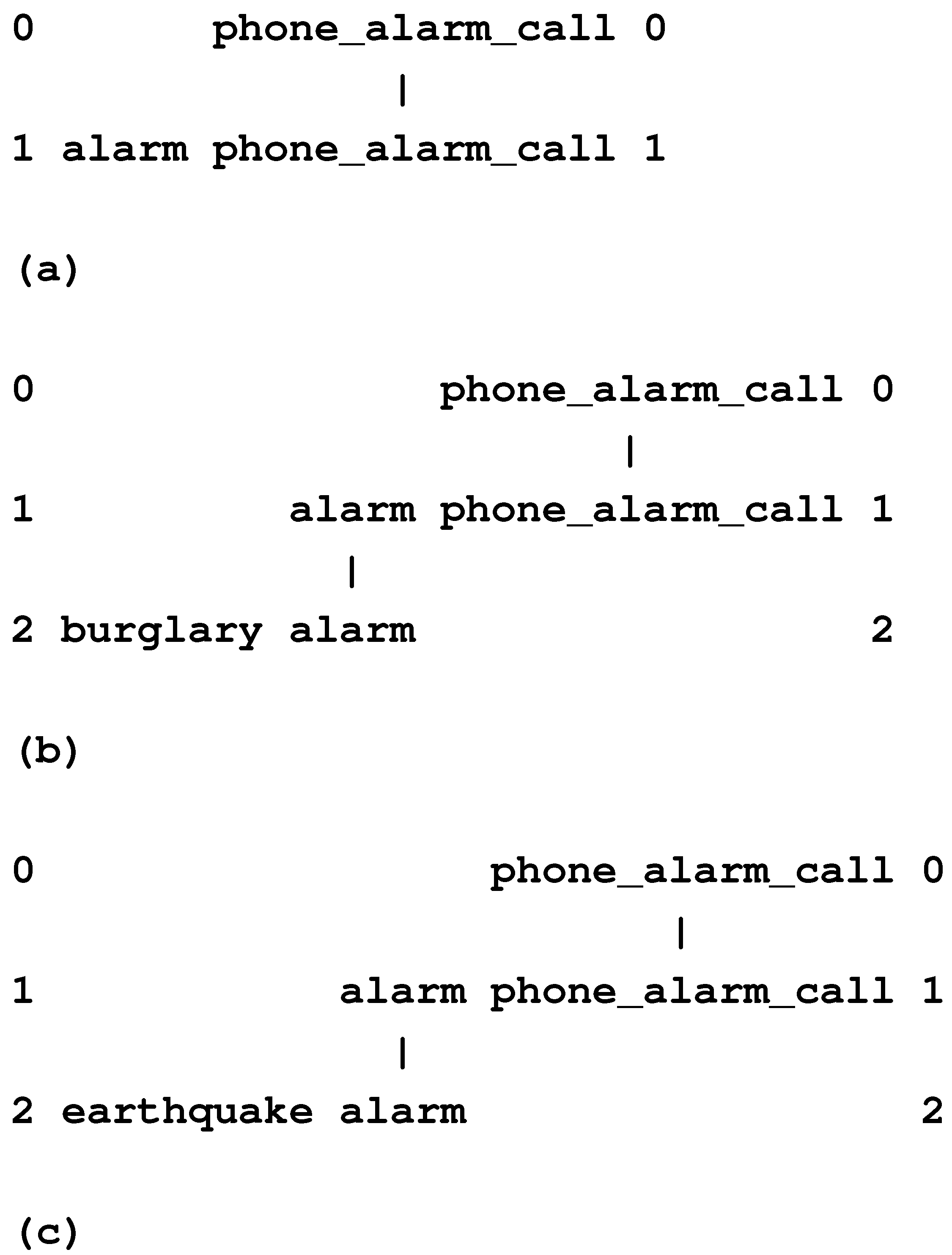

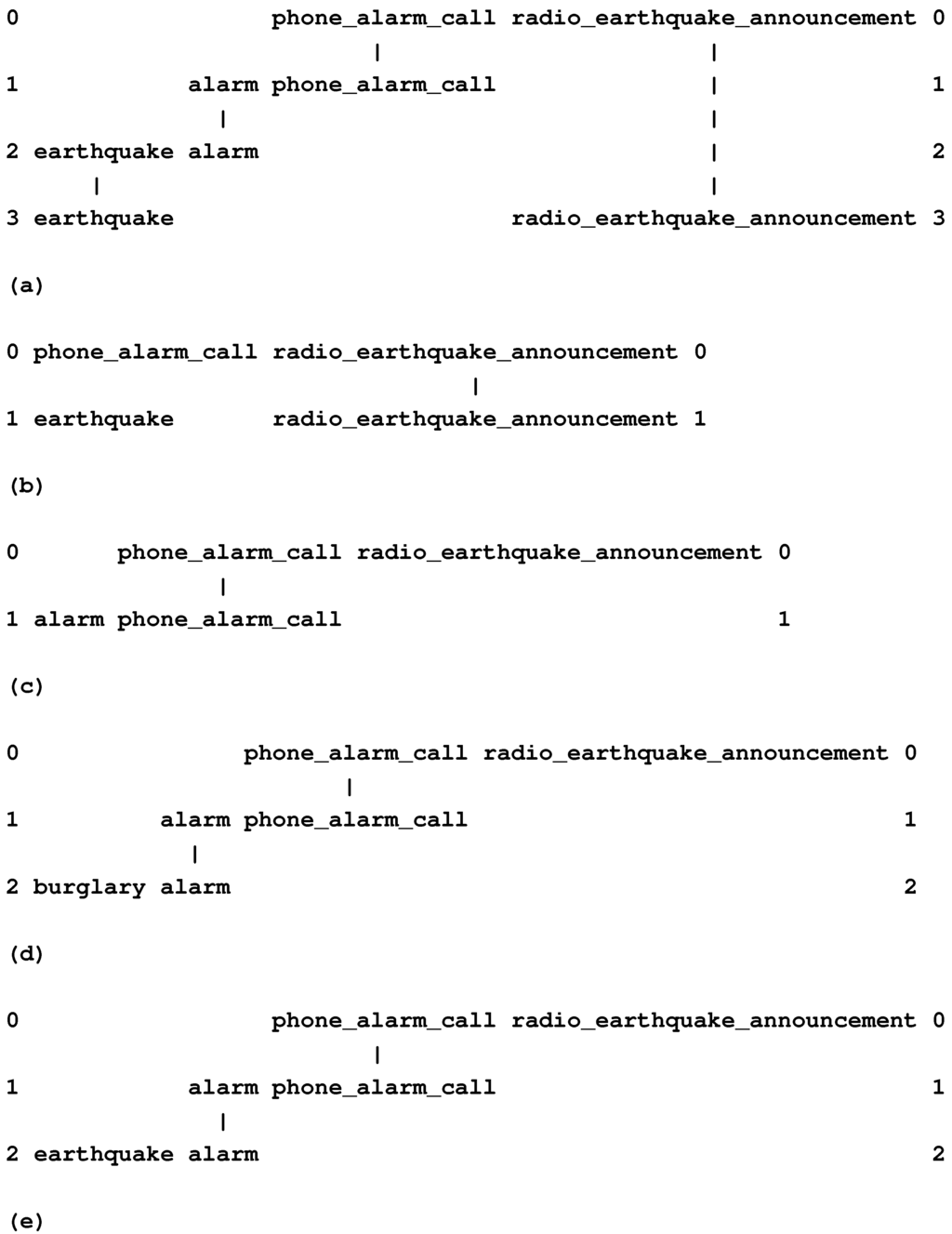

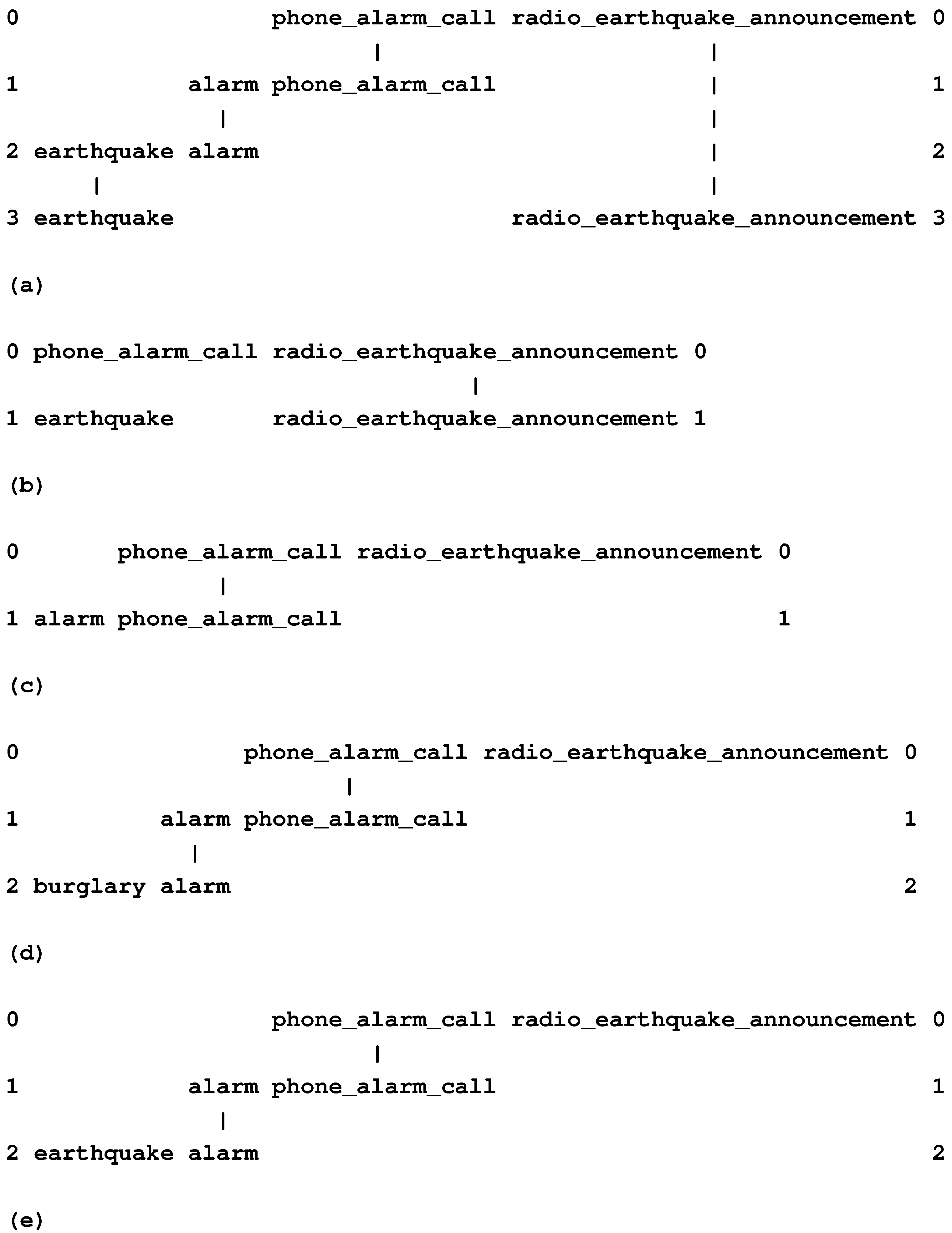

8.1. Discontinuous Dependencies in Syntax

The way in which the SP system can record discontinuous dependencies in syntax may be seen in both of the two parsings in Figure 6. The pattern in row 8 of each multiple alignment records the syntactic dependency between the plural noun phrase (“t w o k i t t e n s”), which is the subject of the sentence—marked with “Np”—and the plural verb phrase (“p l a y”)—marked with “Vp”—which belongs with it.

This kind of dependency is discontinuous, because it can bridge arbitrarily large amounts of intervening structure, such as, for example, “from the West” in a sentence, like “Winds from the West are strong”.

This method of marking discontinuous dependencies can accommodate overlapping dependencies, such as number dependencies and gender dependencies in languages like French (BK, Section 5.4). It also provides a means of encoding the interesting system of overlapping and interlocking dependencies in English auxiliary verbs, described by Noam Chomsky in Syntactic Structures [53].

In that book, the structure of English auxiliary verbs is part of Chomsky’s evidence in support of Transformational Grammar. Despite the elegance and persuasiveness of his arguments, it turns out that the structure of English auxiliary verbs may be described with non-transformational rules in, for example, Definite Clause Grammars [54], and also in the SP system, as outlined in the subsections that follow.

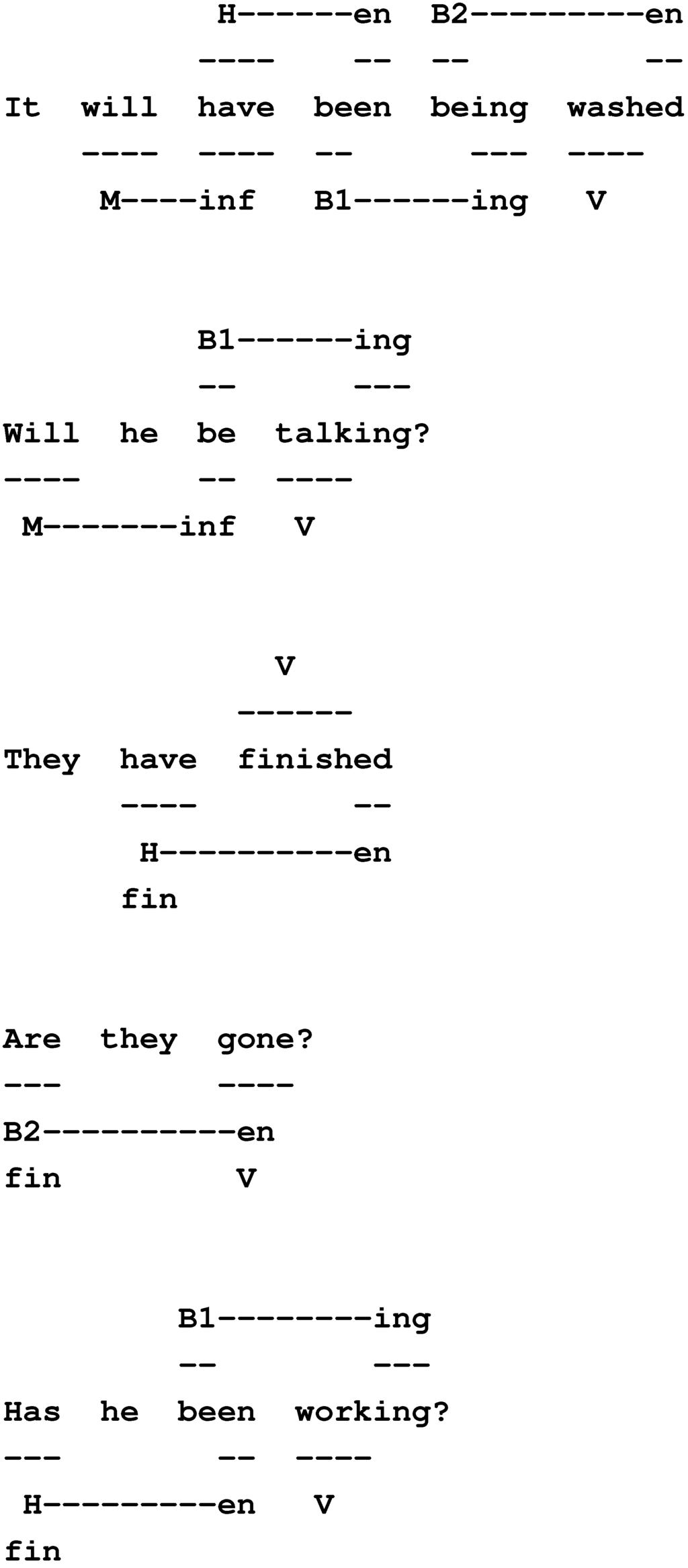

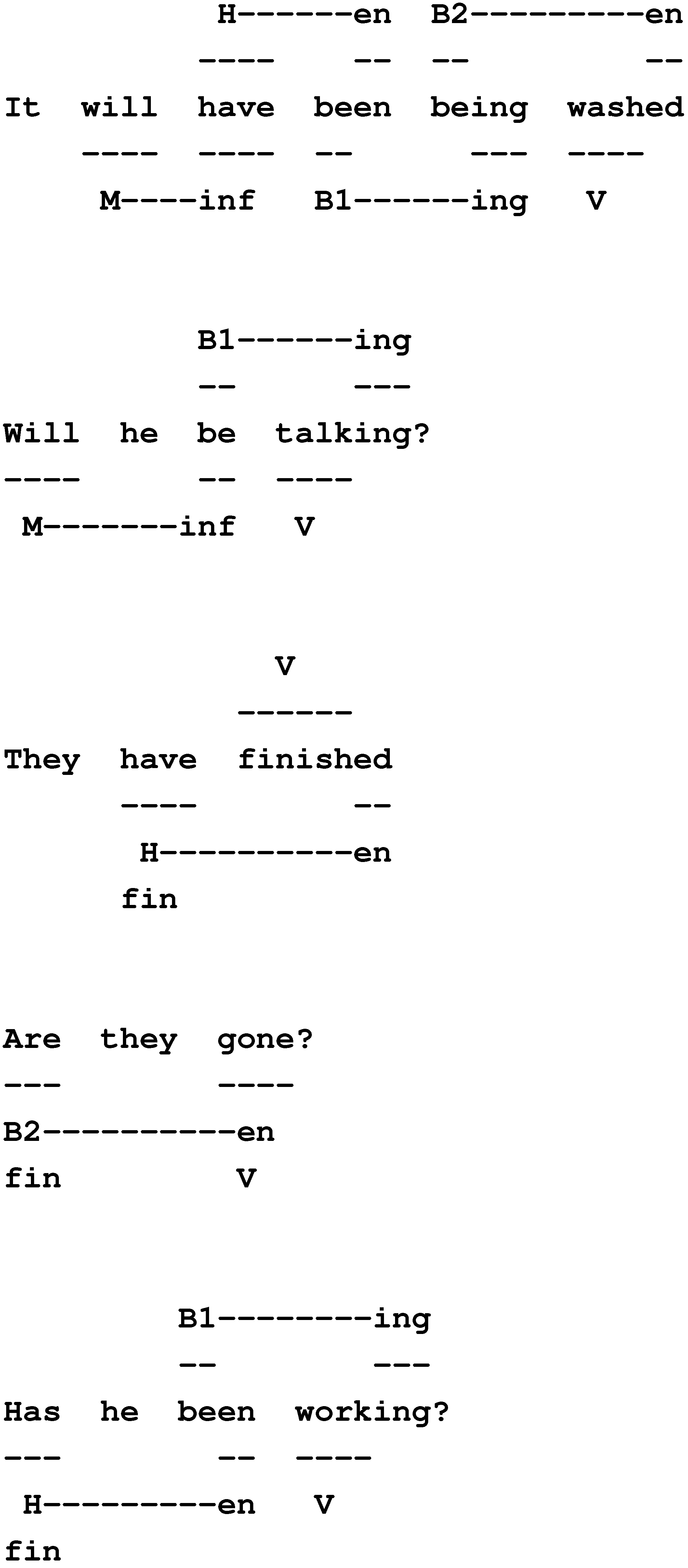

8.2. Two Quasi-Independent Patterns of Constraint in English Auxiliary Verbs

In English, the syntax for main verbs and the auxiliary verbs which may accompany them follows two quasi-independent patterns of constraint which interact in an interesting way.

The primary constraints may be expressed with this sequence of symbols:

which should be interpreted in the following way:

M H B B V,

- Each letter represents a category for a single word:

- –

- “M” stands for “modal” verbs, like “will”, “can”, “would”, etc.

- –

- “H” stands for one of the various forms of the verb, “to have”.

- –

- Each of the two instances of “B” stands for one of the various forms of the verb, “to be”.

- –

- “V” stands for the main verb, which can be any verb, except a modal verb (unless the modal verb is used by itself).

- The words occur in the order shown, but any of the words may be omitted.

- Questions of “standard” form follow exactly the same pattern as statements, except that the first verb, whatever it happens to be (“M”, “H”, the first “B”, the second “B” or “V”), precedes the subject noun phrase instead of following it.

Here are two examples of the primary pattern with all of the words included:

The secondary constraints are these:

It will have been being washed

M H B B V

Will it have been being washed?

M H B B V

M H B B V

Will it have been being washed?

M H B B V

- Apart from the modals, which always have the same form, the first verb in the sequence, whatever it happens to be (“H”, the first “B”, the second “B” or “V”), always has a “finite” form (the form it would take if it were used by itself with the subject).

- If an “M” auxiliary verb is chosen, then whatever follows it (“H”, first “B”, second “B” or “V”) must have an “infinitive” form (i.e., the “standard” form of the verb as it occurs in the context, “to ...”, but without the word “to”).

- If an “H” auxiliary verb is chosen, then whatever follows it (the first “B”, the second “B” or “V”) must have a past tense form, such as “been”, “seen”, “gone”, “slept”, “wanted”, etc. In Chomsky’s Syntactic Structures [53], these forms were characterised as en forms, and the same convention has been adopted here.

- If the first of the two “B” auxiliary verbs is chosen, then whatever follows it (the second “B” or “V”) must have an ing form, e.g., “singing”, “eating”, “having”, “being”, etc.

- If the second of the two “B” auxiliary verbs is chosen, then whatever follows it (only the main verb is possible now) must have a past tense form (marked with en, as above).

- The constraints apply to questions in exactly the same way as they do to statements.

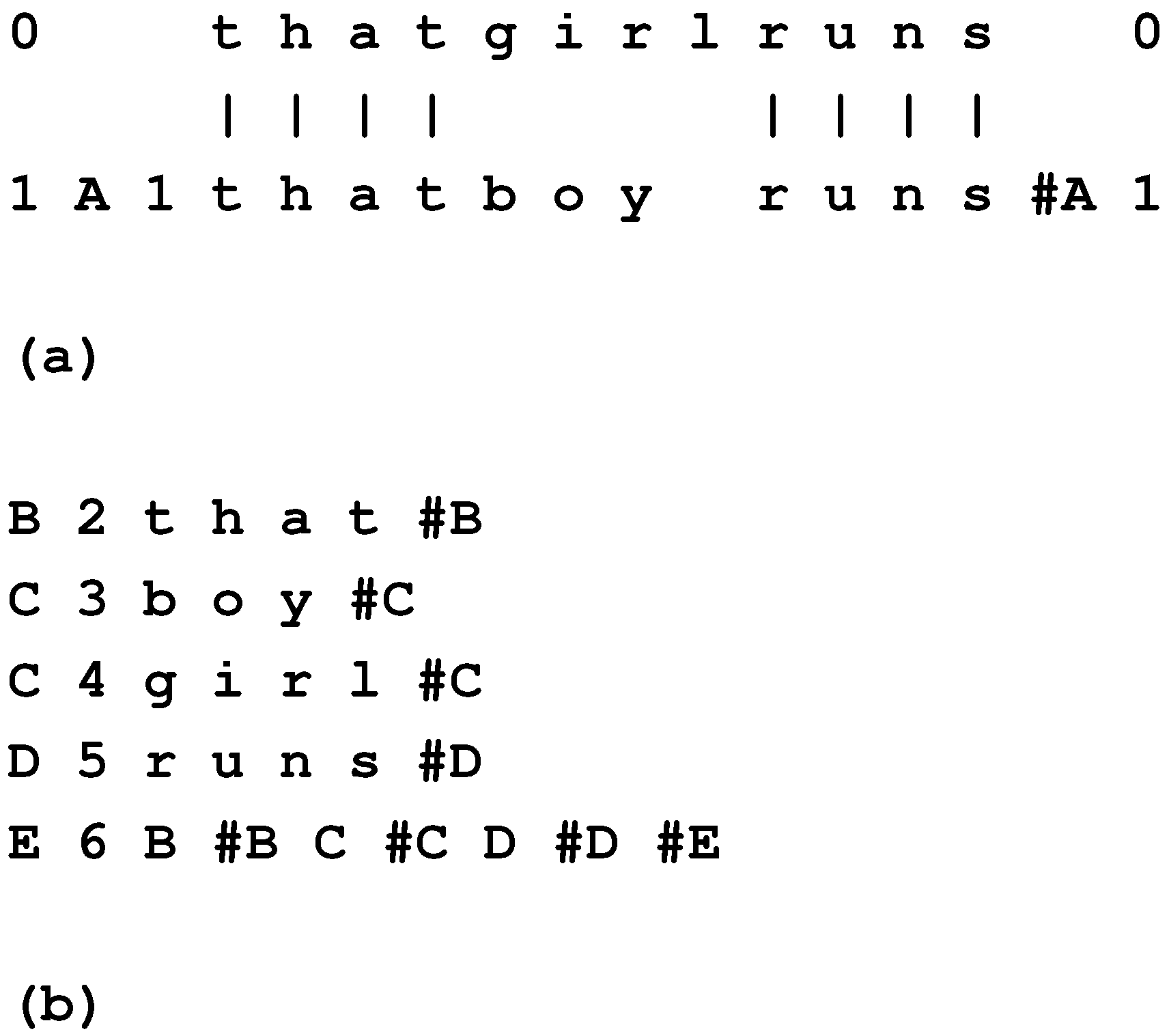

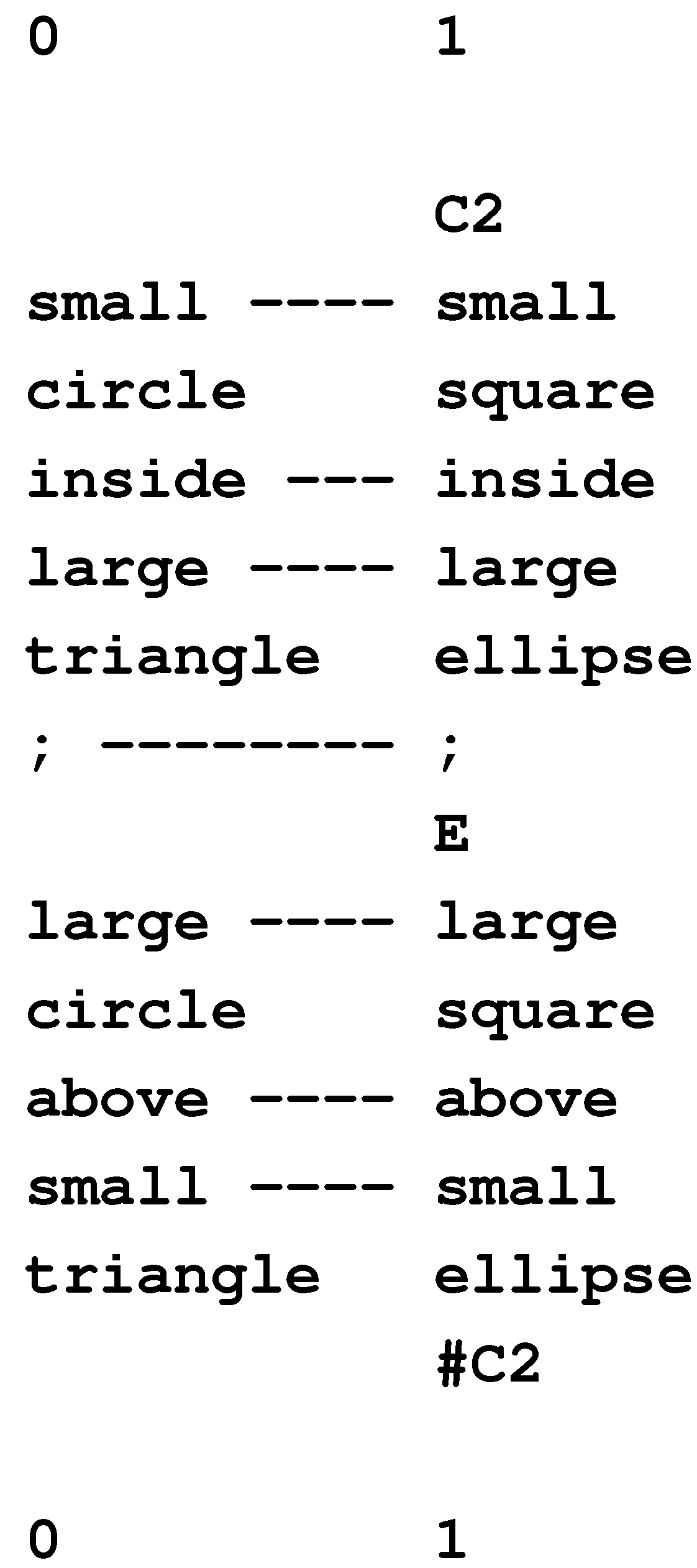

Figure 12 shows a selection of examples with the dependencies marked.

Figure 12.

A selection of example sentences in English with markings of dependencies between the verbs. Key: “M” = modal, “H” = forms of the verb “have”, “B1” = first instance of a form of the verb “be”, “B2” = second instance of a form of the verb “be”, “V” = main verb, “fin” = a finite form, “inf” = an infinitive form, “en” = a past tense form, “ing” = a verb ending in “ing”.

Figure 12.

A selection of example sentences in English with markings of dependencies between the verbs. Key: “M” = modal, “H” = forms of the verb “have”, “B1” = first instance of a form of the verb “be”, “B2” = second instance of a form of the verb “be”, “V” = main verb, “fin” = a finite form, “inf” = an infinitive form, “en” = a past tense form, “ing” = a verb ending in “ing”.

8.3. Multiple Alignments and English Auxiliary Verbs

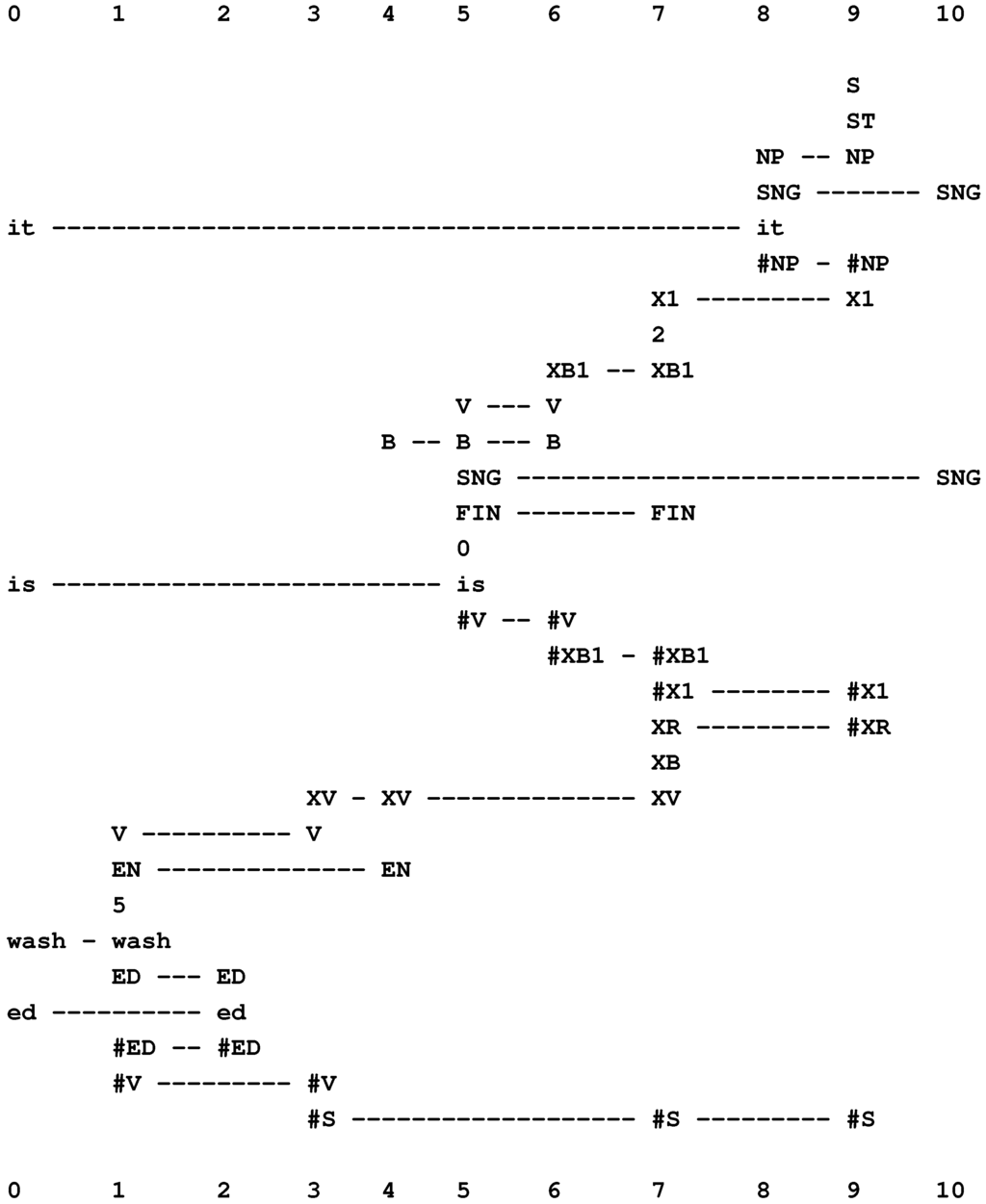

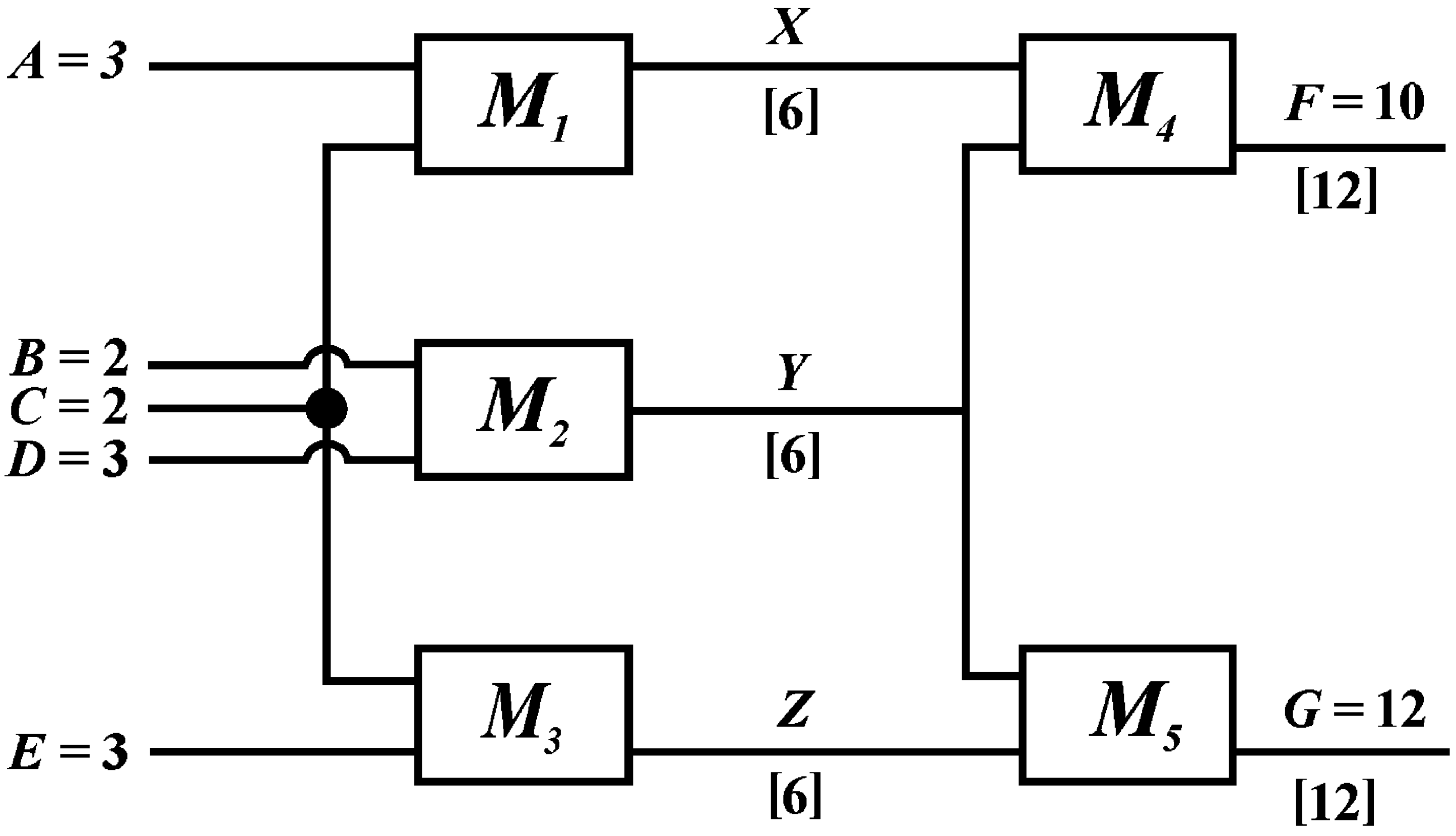

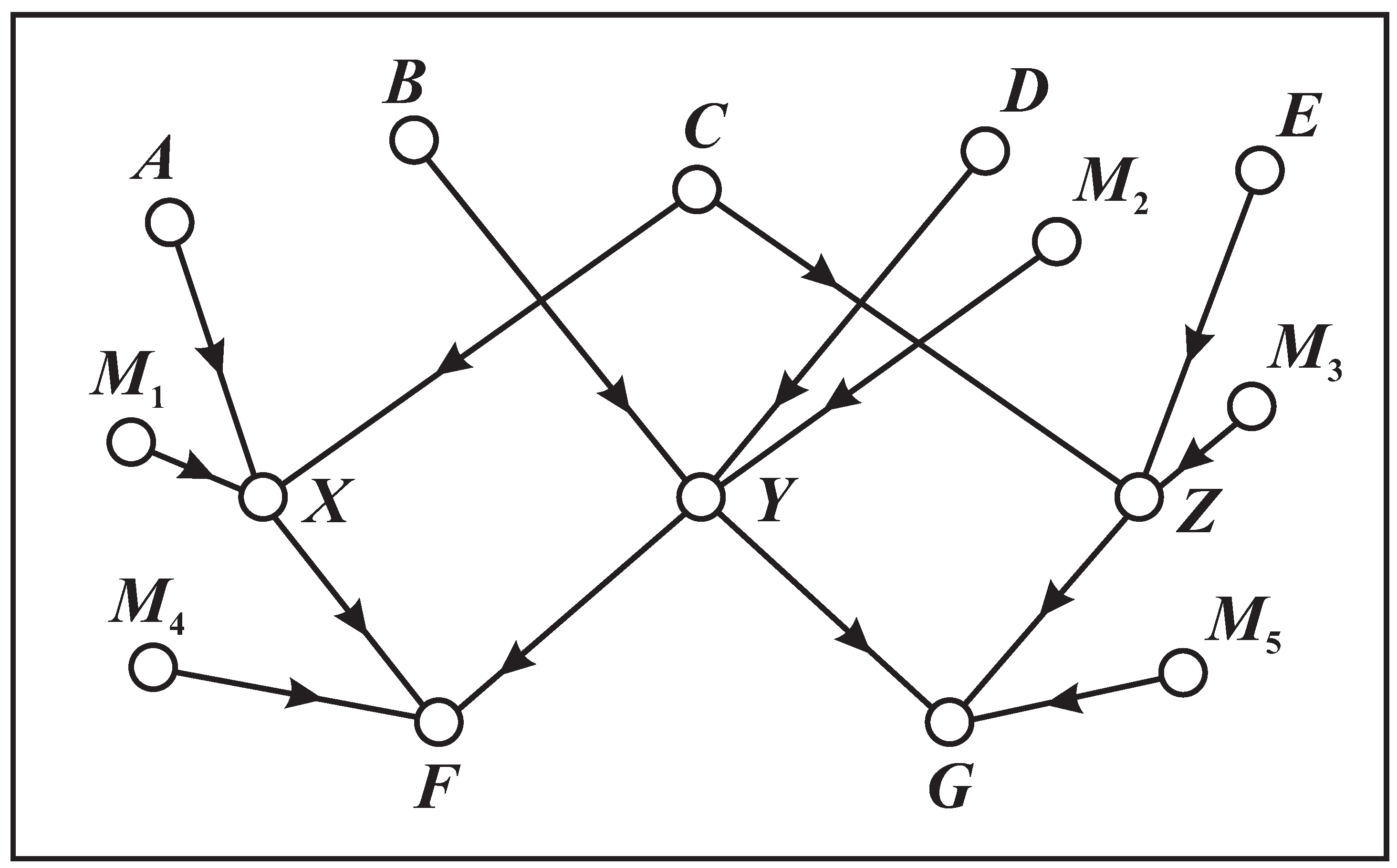

Without reproducing all the detail in BK (Section 5.5), we can see from Figure 13 and Figure 14 how the primary and secondary constraints may be applied in the multiple alignment framework.

Figure 13.

The best alignment found by the SP model with “it is wash ed” in New (column 0) and a user-supplied grammar in Old.

Figure 13.

The best alignment found by the SP model with “it is wash ed” in New (column 0) and a user-supplied grammar in Old.

In each figure, the sentence to be analysed is shown as a New pattern in column 0. The primary constraints are applied via the matching of symbols in Old patterns in the remaining columns, with a consequent interlocking of the patterns, so that they recognise sentences of the form, “M H B B V”, with options as described above.

In Figure 13 [55], the secondary constraints apply as follows:

- The first verb, “is”, is marked as having the finite form (with the symbol “FIN” in columns 5 and 7). The same word is also marked as being a form of the verb “to be” (with the symbol “B” in columns 4, 5 and 6). Because of its position in the parsing, we know that it is an instance of the second “B” in the sequence “M H B B V”.

- The second verb, “washed”, is marked as being in the en category (with the symbol “EN” in columns 1 and 4).

- That a verb corresponding to the second instance of “B” must be followed by an en kind of verb is expressed by the pattern, “B XV EN”, in column 4.

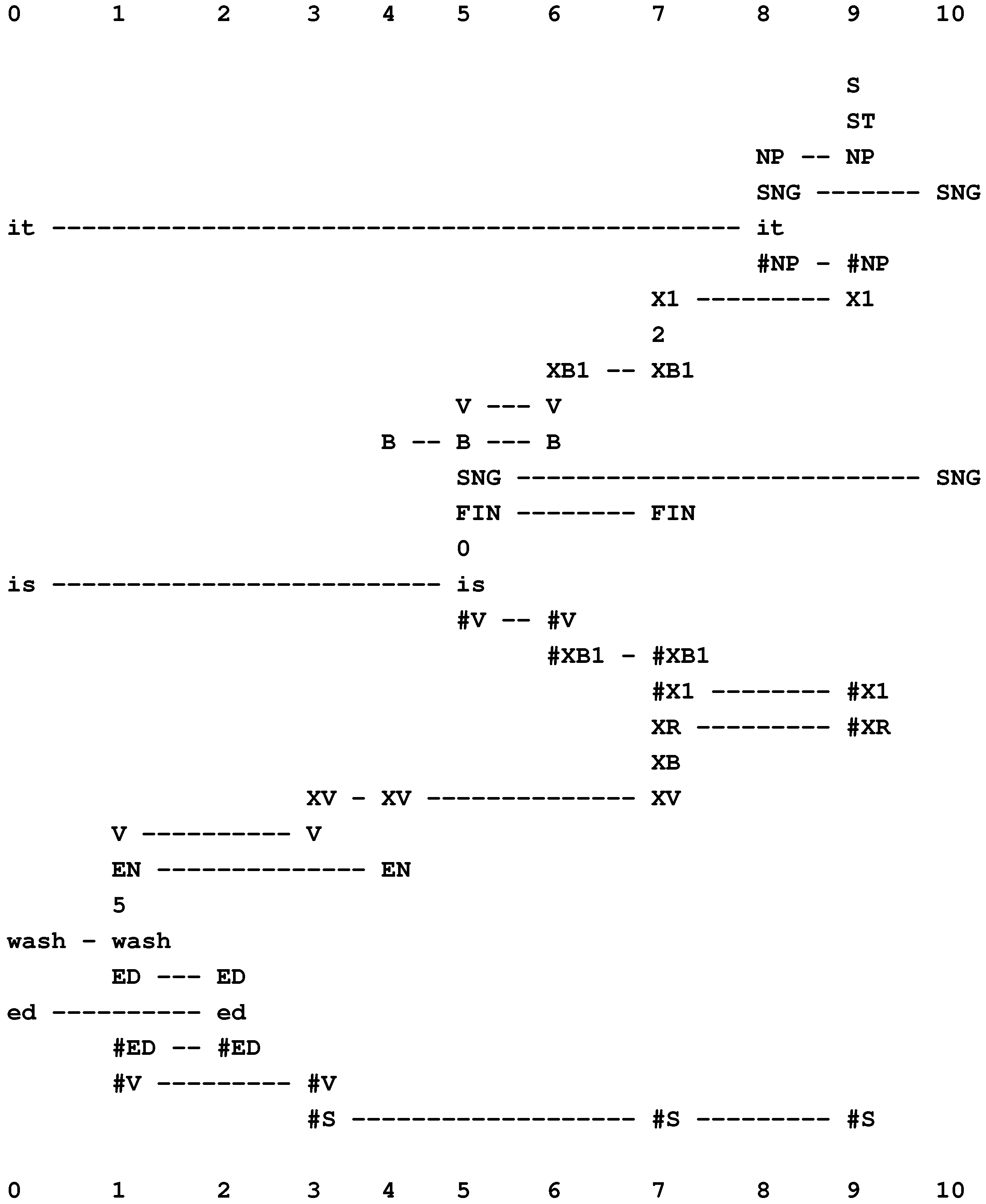

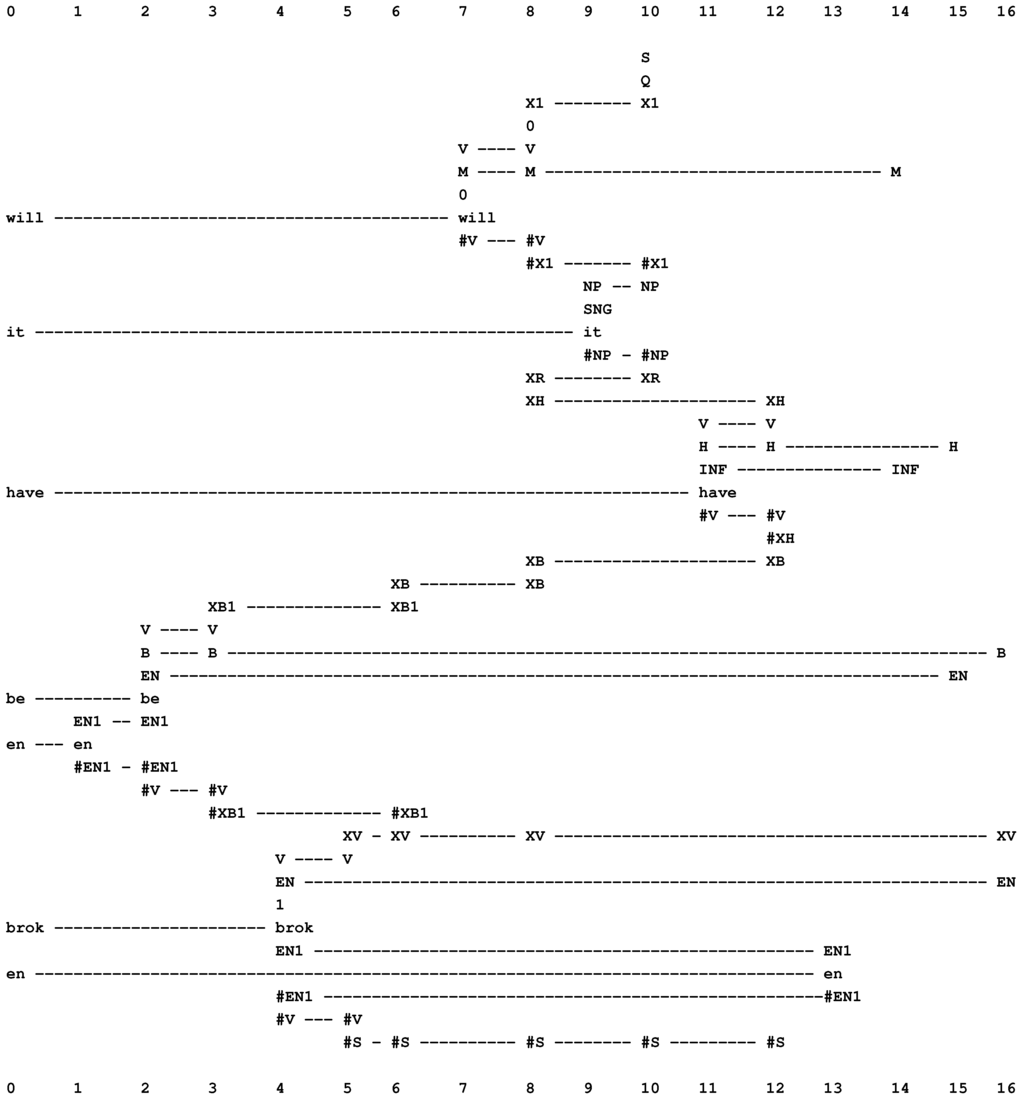

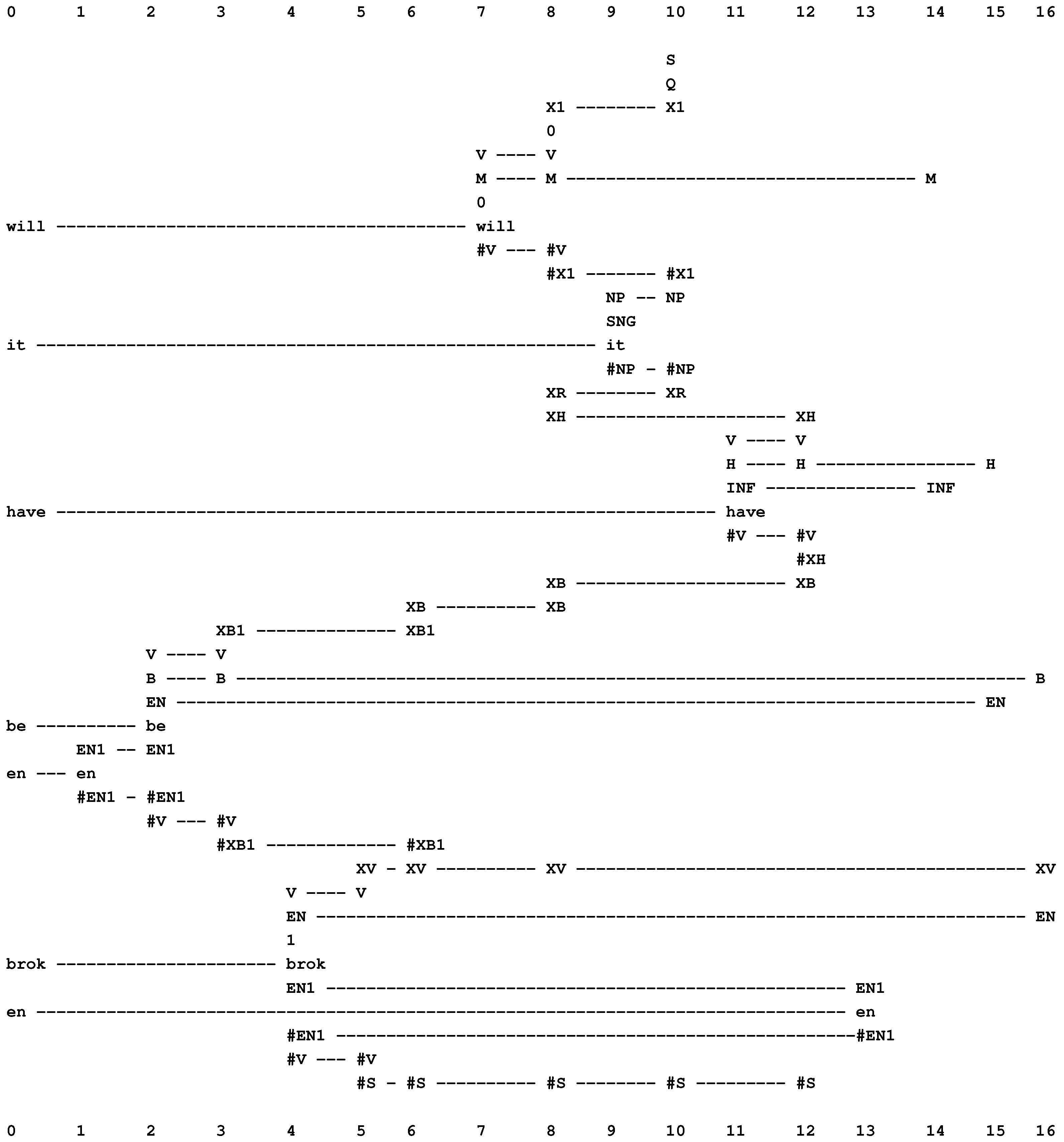

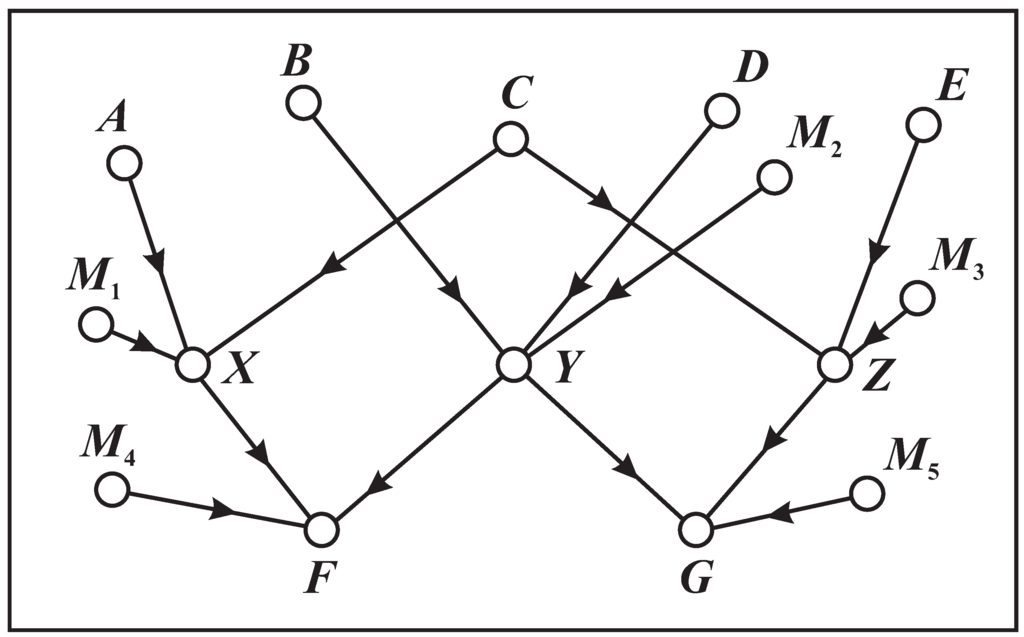

Figure 14.

The best alignment found by the SP model with “will it have be en brok en” in New (column 0) and the same grammar in Old as was used for the example in Figure 13.

Figure 14.

The best alignment found by the SP model with “will it have be en brok en” in New (column 0) and the same grammar in Old as was used for the example in Figure 13.

In Figure 14, the secondary constraints apply like this:

- The first verb, “will”, is marked as modal (with “M” in columns 7, 8 and 14).

- The second verb, “have”, is marked as having the infinitive form (with “INF” in columns 11 and 14), and it is also marked as a form of the verb, “to have” (with “H” in columns 11, 12, and 15).

- That a modal verb must be followed by a verb of infinitive form is marked with the pattern, “M INF”, in column 14.

- The third verb, “been”, is marked as being a form of the verb, “to be” (with “B” in columns 2, 3 and 16). Because of its position in the parsing, we know that it is an instance of the second “B” in the sequence, “M H B B V”. This verb is also marked as belonging to the en category (with “EN” in columns 2 and 15).

- That an “H” verb must be followed by an “EN” verb is marked with the pattern, “H EN”, in column 15.

- The fourth verb, “broken”, is marked as being in the en category (with “EN” in columns 4 and 16).

- That a “B” verb (second instance) must be followed by an “EN” verb is marked with the pattern, “B XV EN”, in column 16.

9. Pattern Recognition

The system also has some useful features as a framework for pattern recognition (BK, Chapter 6):

- It can model pattern recognition at multiple levels of abstraction, as described in BK (Section 6.4.1), and with the integration of class-inclusion relations with part-whole hierarchies (Section 9.1; BK, Section 6.4.1).

- The SP system can accommodate “family resemblance” or polythetic categories, meaning that recognition does not depend on the presence absence of any particular feature or combination of features. This is because there can be alternatives at any or all locations in a pattern and, also, because of the way the system can tolerate errors in data (next point).

- The system is robust in the face of errors of omission, commission or substitution in data (Section 4.2.2).

- The system facilitates the seamless integration of pattern recognition with other aspects of intelligence: reasoning, learning, problem solving, and so on.

- A probability may be calculated for any given identification, classification or associated inference (Section 4.4).

- As in the processing of natural language (Section 8), the importance of context in recognition [56] is accommodated in the way the system searches for a global best match for patterns. As before, any pattern or partial pattern may be a context for any other.

One area of application is medical diagnosis, viewed as pattern recognition [51]. There is also potential to assist in the understanding of natural vision and in the development of computer vision, as discussed in [27].

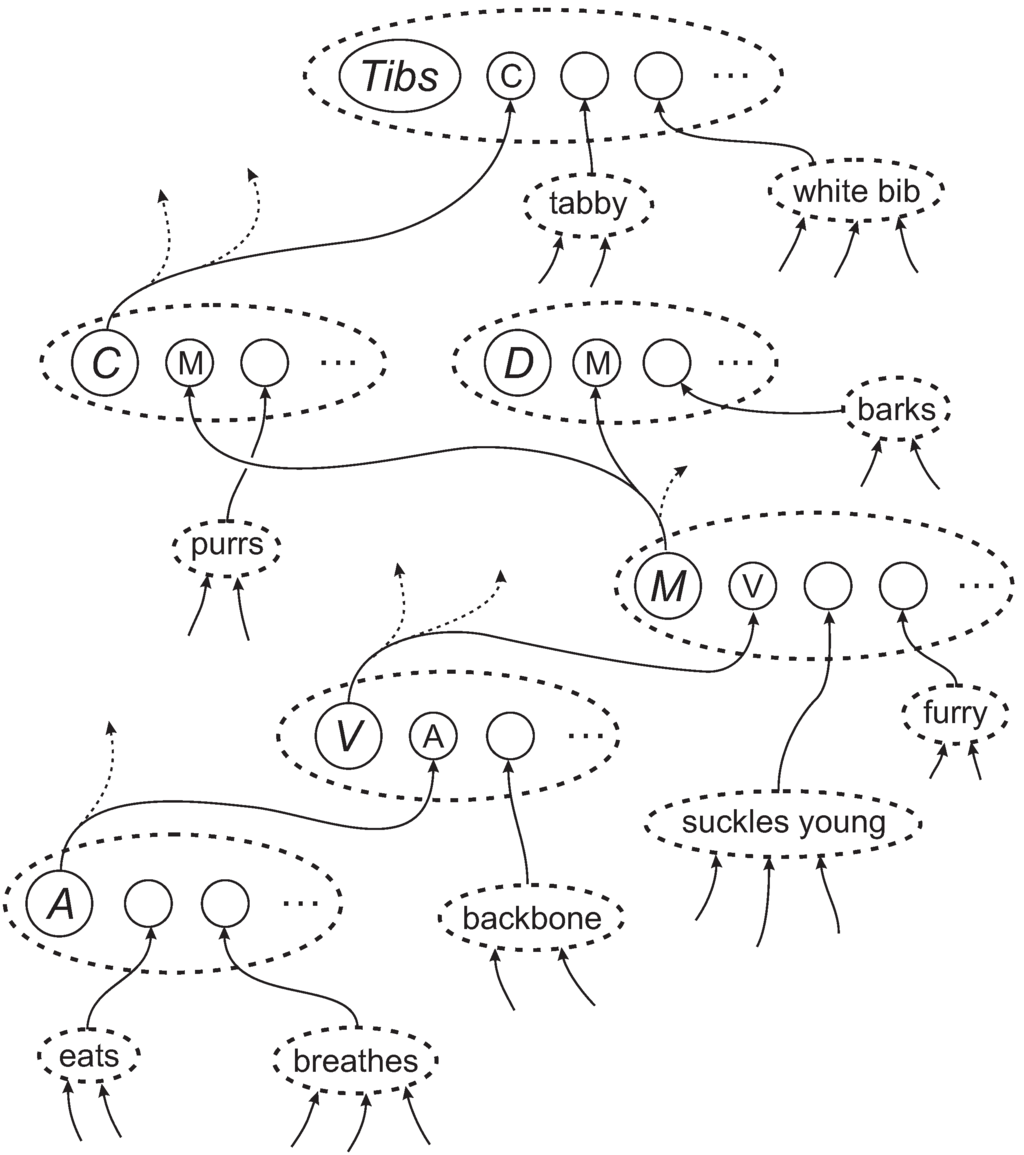

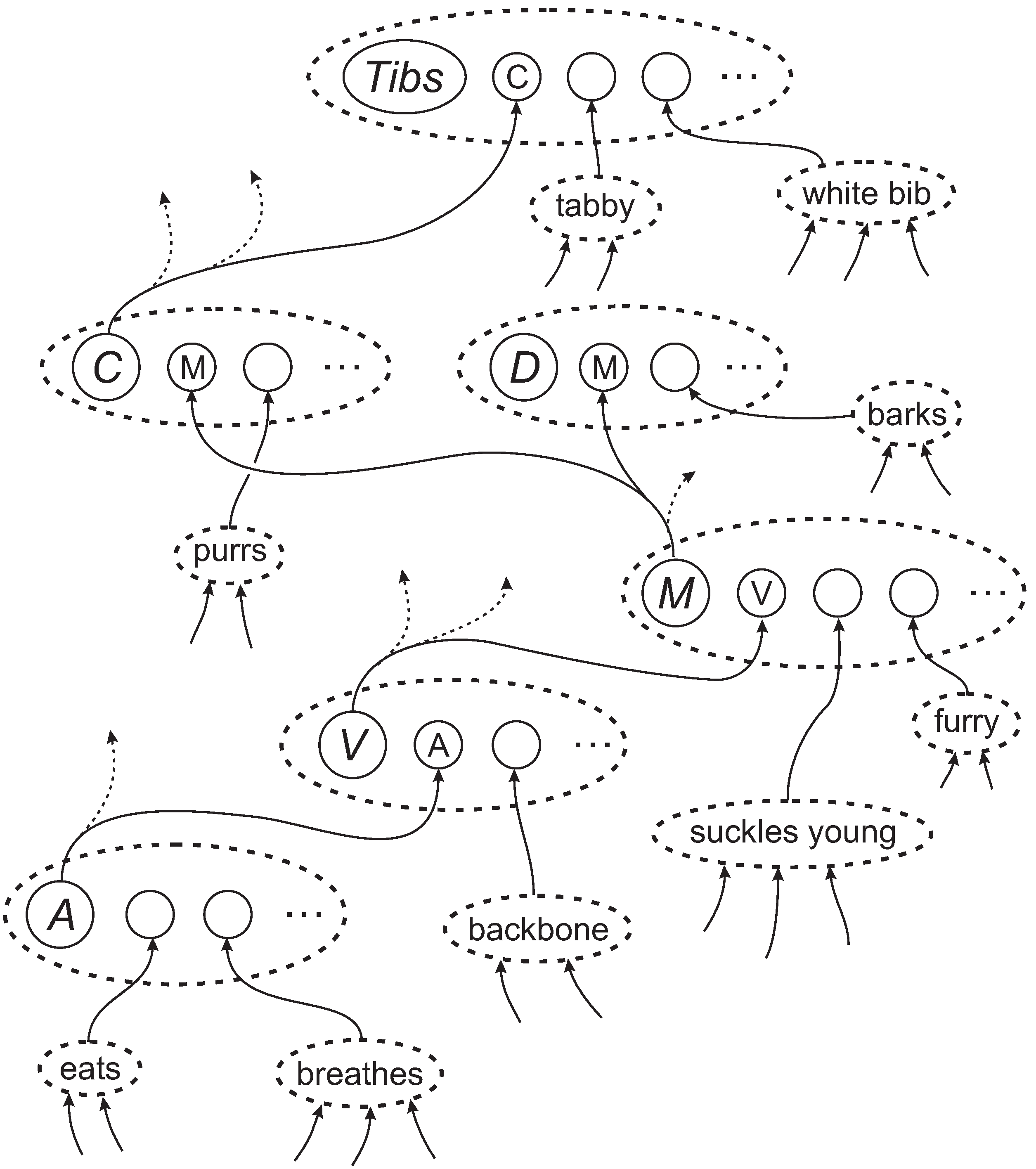

9.1. Class Hierarchies, Part-Whole Hierarchies and Their Integration

A strength of the multiple alignment concept is that it provides a simple but effective vehicle for the representation and processing of class-inclusion hierarchies, part-whole hierarchies and their integration.

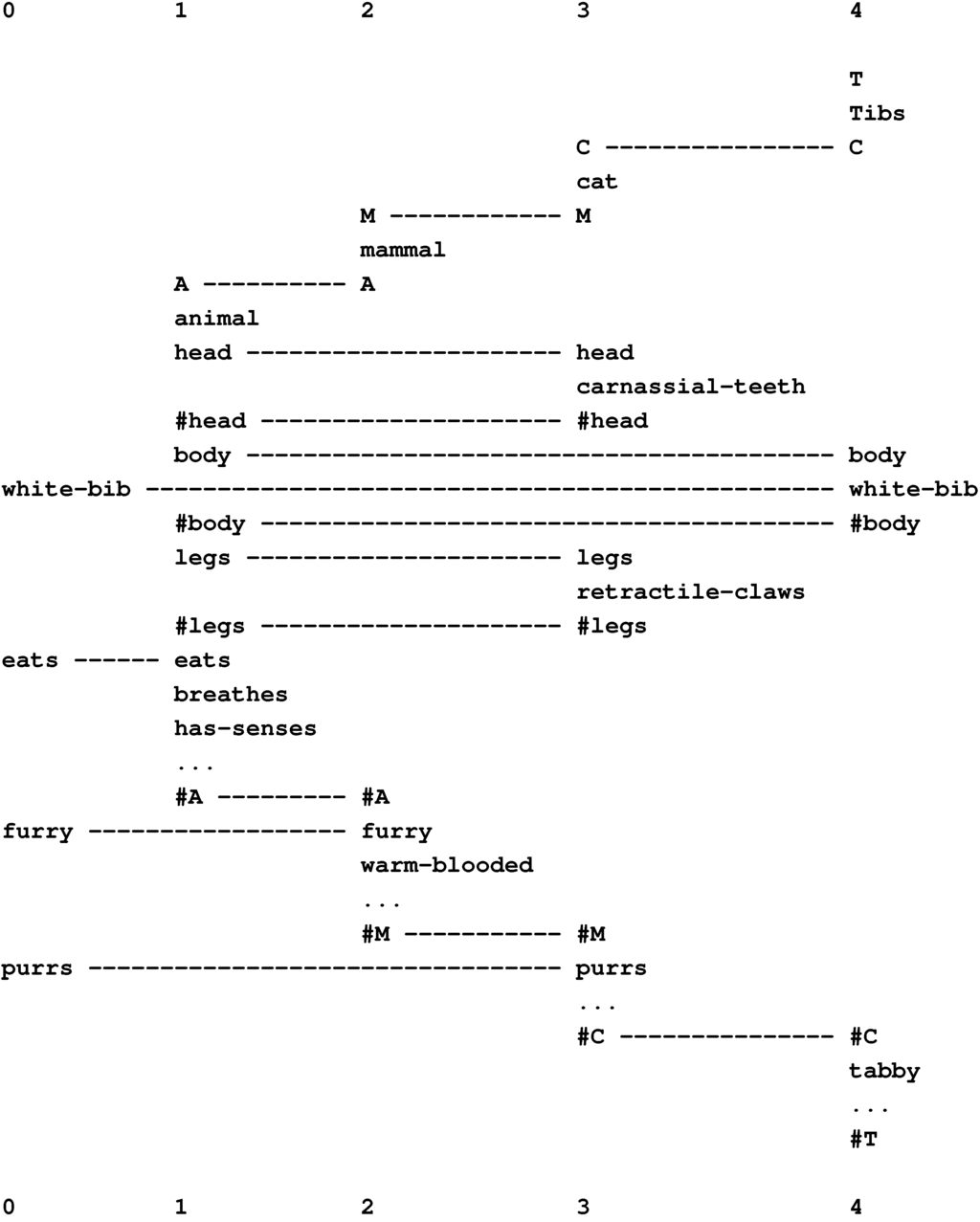

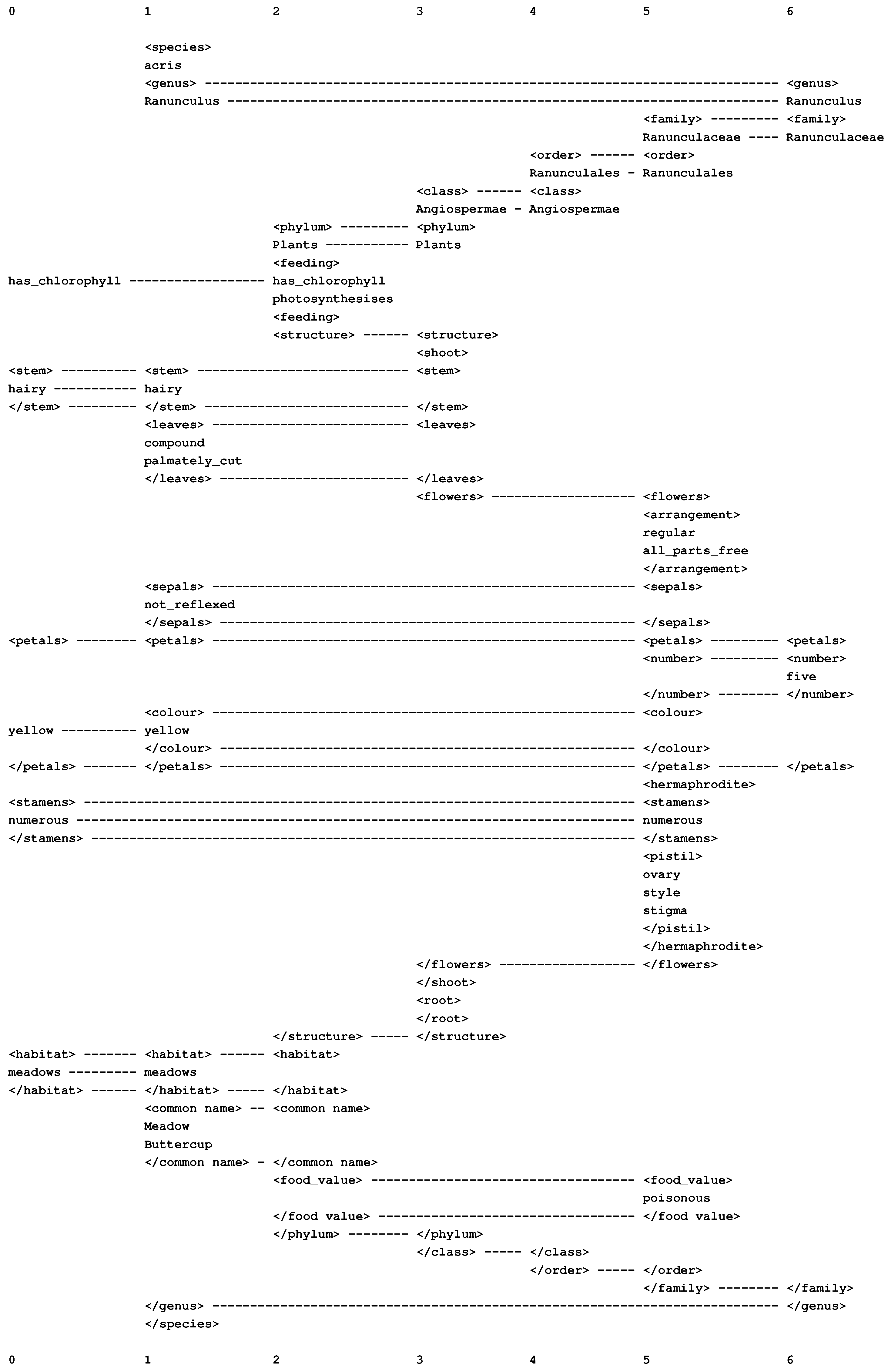

Figure 15 shows the best multiple alignment found by the SP model with the New pattern, “white-bib eats furry purrs” (column 0), representing some features of an unknown creature, and with a set of Old patterns representing different classes of animal, at varying levels of abstraction. From this multiple alignment, we may conclude that the unknown entity is an animal (column 1), a mammal (column 2), a cat (column 3), and the specific individual “Tibs” (column 4).

The framework also provides for the representation of heterarchies or cross classification: a given entity, such as “Jane” (or a class of entities), may belong in two or more higher-level classes that are not themselves hierarchically related, such as “woman” and “doctor” [57].

Figure 15.

The best multiple alignment found by the SP model, with the New pattern “white-bib eats furry purrs” and a set of Old patterns representing different categories of animal and their attributes.

Figure 15.

The best multiple alignment found by the SP model, with the New pattern “white-bib eats furry purrs” and a set of Old patterns representing different categories of animal and their attributes.

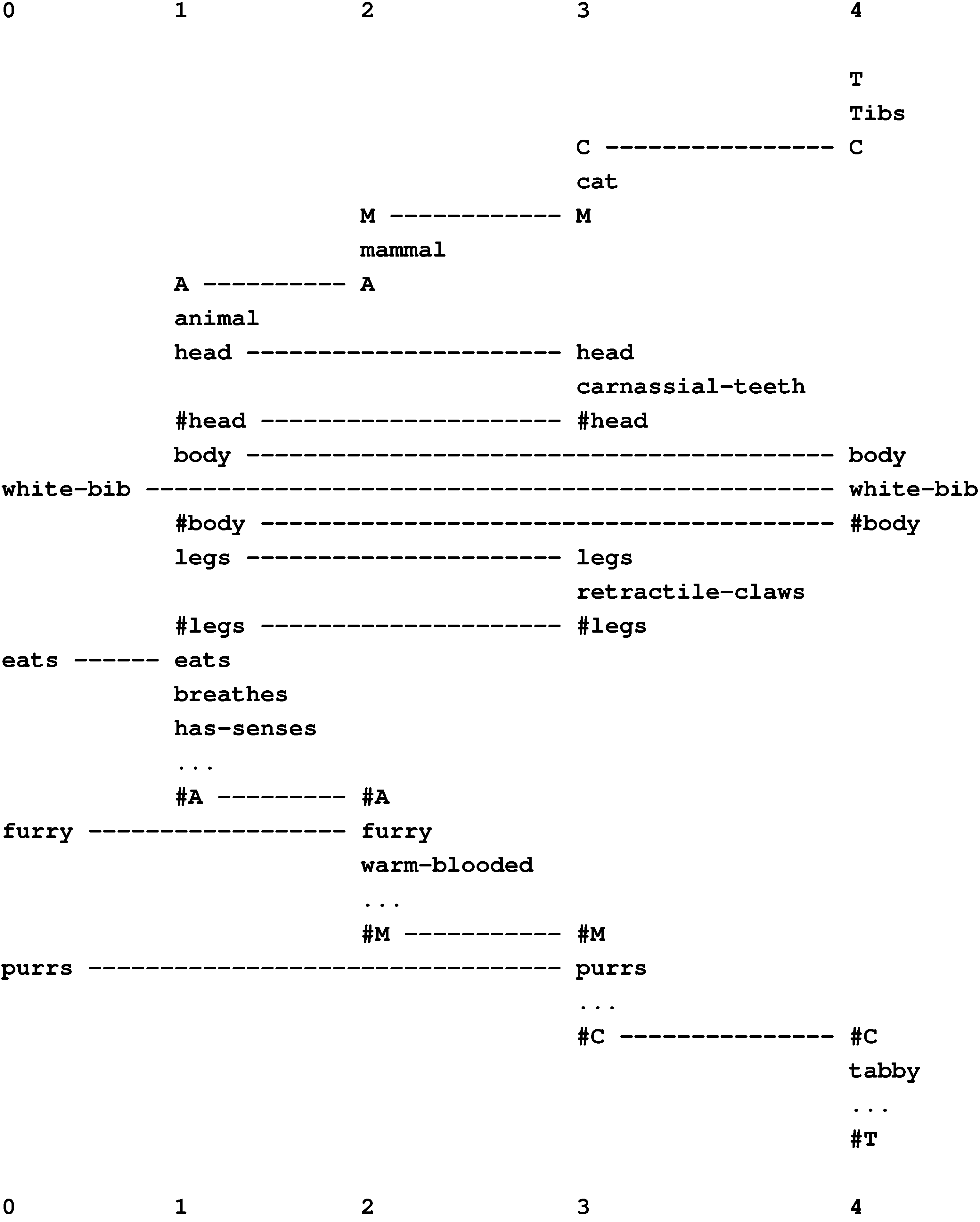

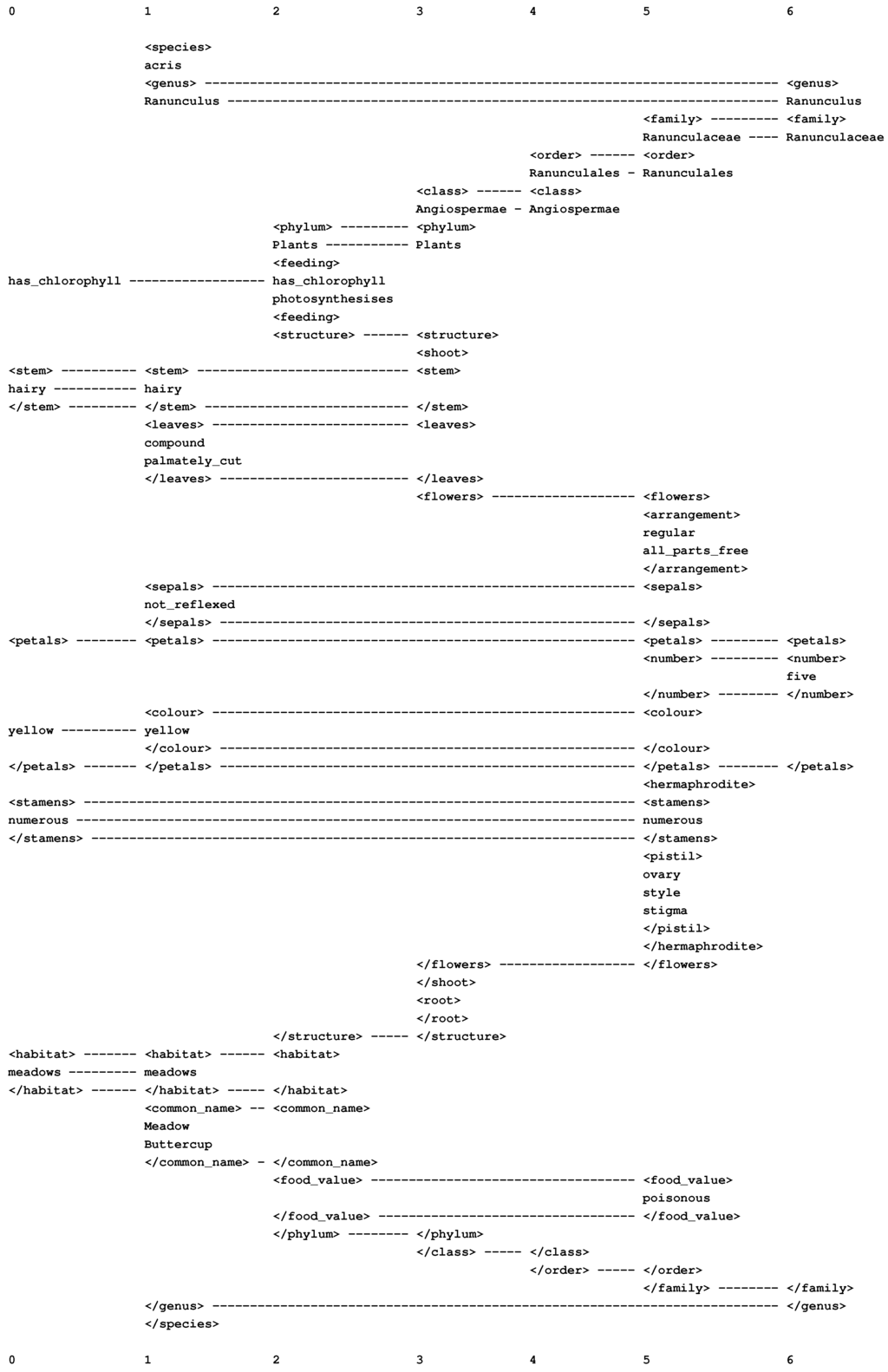

The way that class-inclusion relations and part-whole relations may be combined in one multiple alignment is illustrated in Figure 16. Here, some features of an unknown plant are expressed as a set of New patterns, shown in column 0: the plant has chlorophyll, the stem is hairy, it has yellow petals, and so on.

Figure 16.

The best multiple alignment created by the SP model, with a set of New patterns (in column 0) that describe some features of an unknown plant and a set of Old patterns, including those shown in columns 1 to 6, that describe different categories of plant, with their parts and sub-parts and other attributes.

Figure 16.

The best multiple alignment created by the SP model, with a set of New patterns (in column 0) that describe some features of an unknown plant and a set of Old patterns, including those shown in columns 1 to 6, that describe different categories of plant, with their parts and sub-parts and other attributes.

From this multiple alignment, we can see that the unknown plant is most likely to be the Meadow Buttercup, Ranunculus acris, as shown in column 1. As such, it belongs in the genus Ranunculus (column 6), the family Ranunculaceae (column 5), the order Ranunculales (column 4), the class Angiospermae (column 3), and the phylum Plants (column 2).

Each of these higher-level classifications contributes information about attributes of the plant and its division into parts and sub-parts. For example, as a member of the class Angiospermae (column 3), the plant has a shoot and roots, with the shoot divided into stem, leaves and flowers; as a member of the family Ranunculaceae (column 5), the plant has flowers that are “regular”, with all parts “free”; as a member of the phylum Plants (column 2), the buttercup has chlorophyll and creates its own food by photosynthesis; and so on.

9.2. Inference and Inheritance

In the example just described, we can infer from the multiple alignment, very directly, that the plant, which has been provisionally identified as the Meadow Buttercup, performs photosynthesis (column 2), has five petals (column 6), is poisonous (column 5), and so on. As in other object-oriented systems, the first of these attributes has been “inherited” from the class “Plants”, the second from the class Ranunculus and the third from the class Ranunculaceae. These kinds of inference illustrate the close connection, often noted, between pattern recognition and inferential reasoning (see also [58]).

10. Probabilistic Reasoning

The SP system can model several kinds of reasoning including inheritance of attributes (as just described), one-step “deductive” reasoning, abductive reasoning, reasoning with probabilistic decision networks and decision trees, reasoning with “rules”, nonmonotonic reasoning and reasoning with default values, reasoning in Bayesian networks (including “explaining away”), causal diagnosis, and reasoning that is not supported by evidence (BK, Chapter 7).

Since these several kinds of reasoning all flow from one computational framework (multiple alignment), they may be seen as aspects of one process, working individually or together without awkward boundaries.

Plausible lines of reasoning may be achieved, even when relevant information is incomplete.

Probabilities of inferences may be calculated, including extreme values (0 or 1) in the case of logic-like “deductions”.

A selection of examples is described in the following subsections.

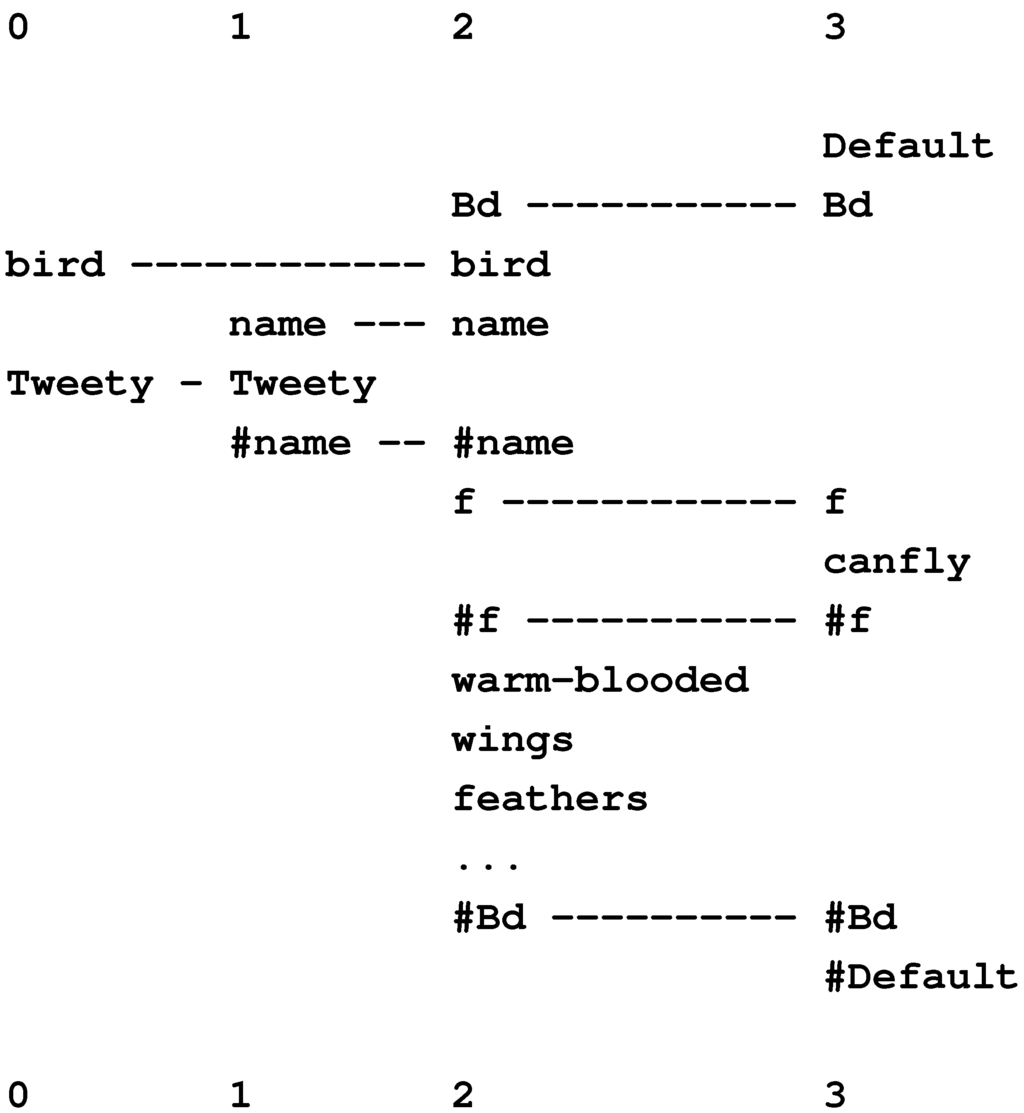

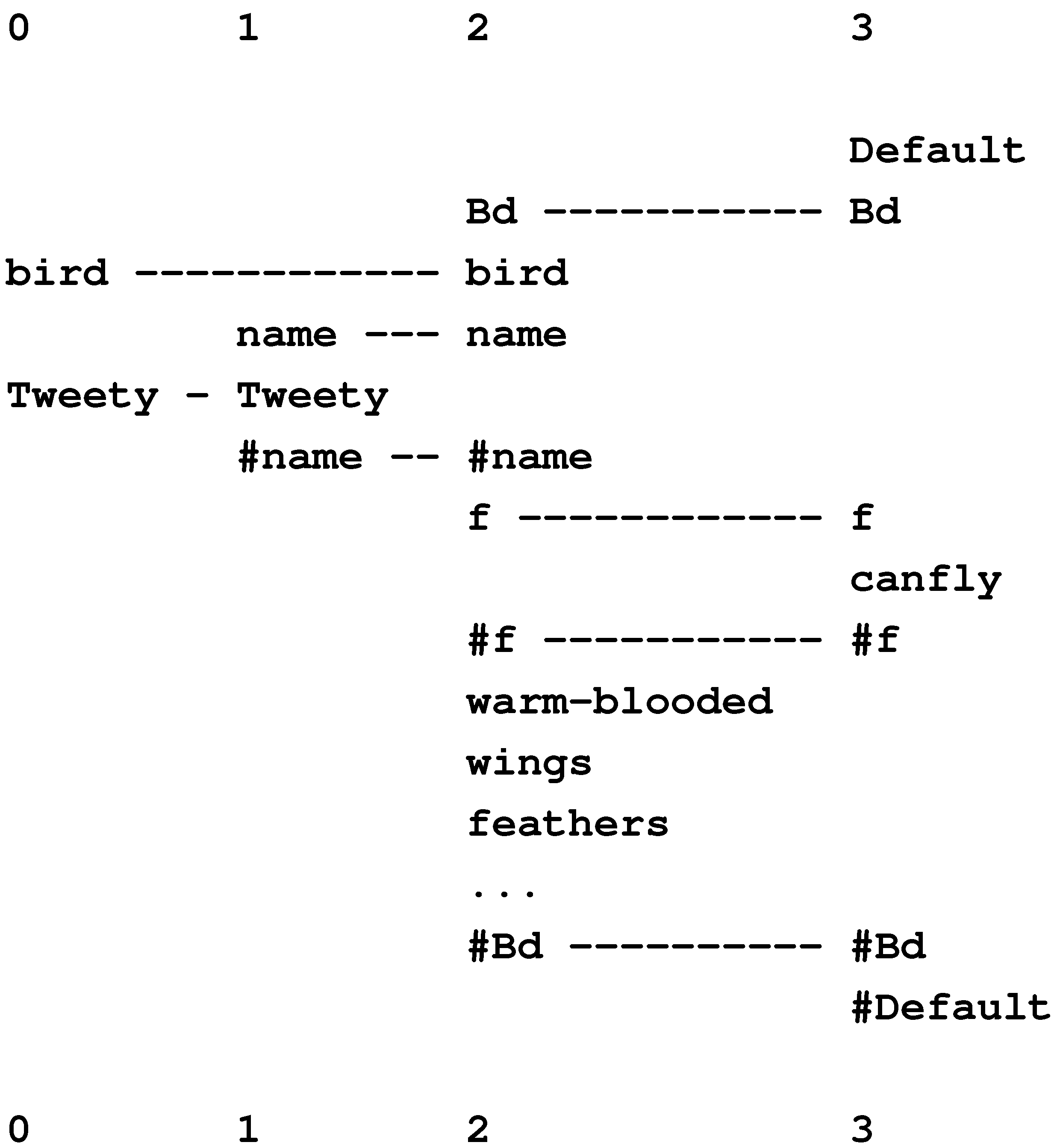

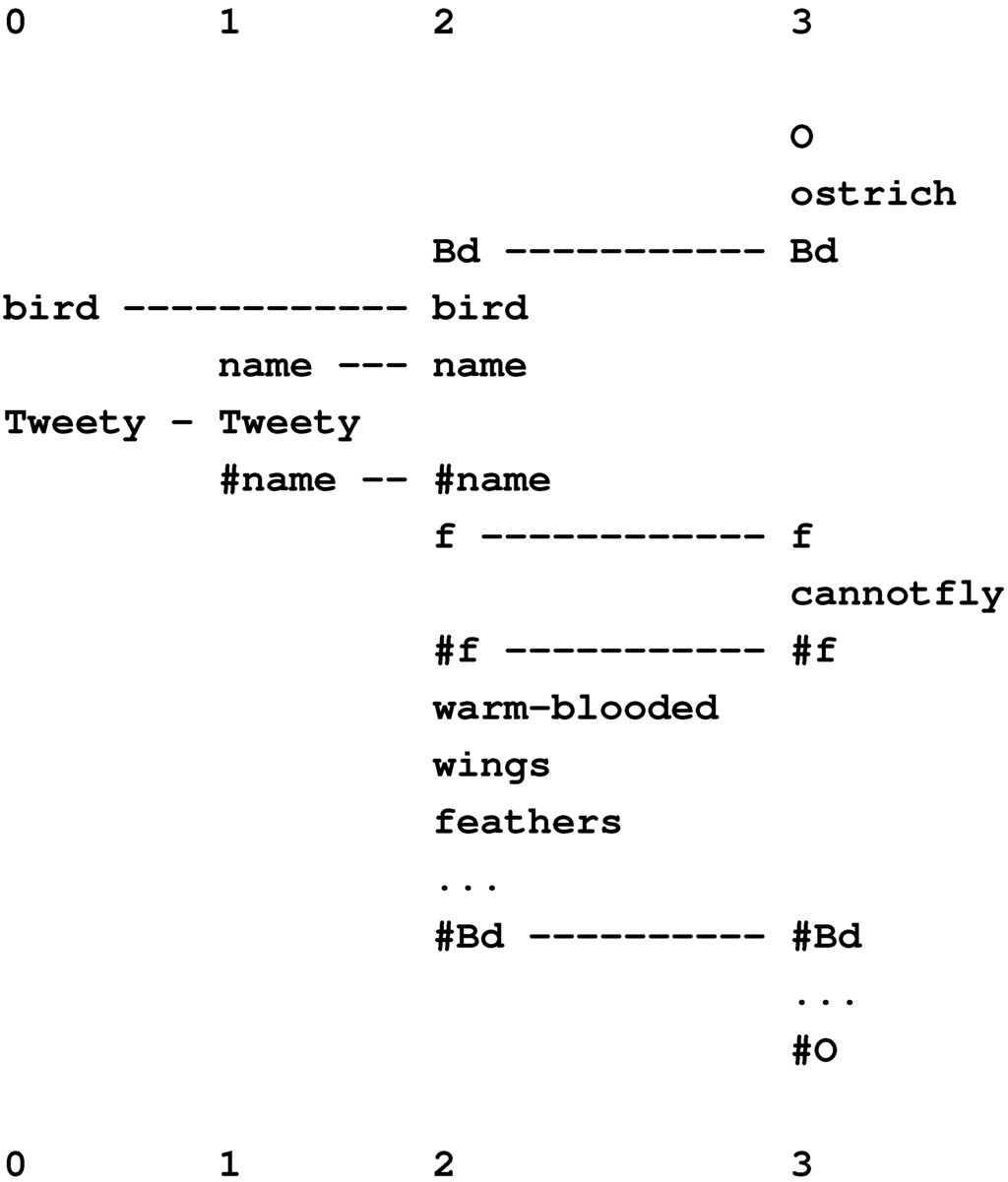

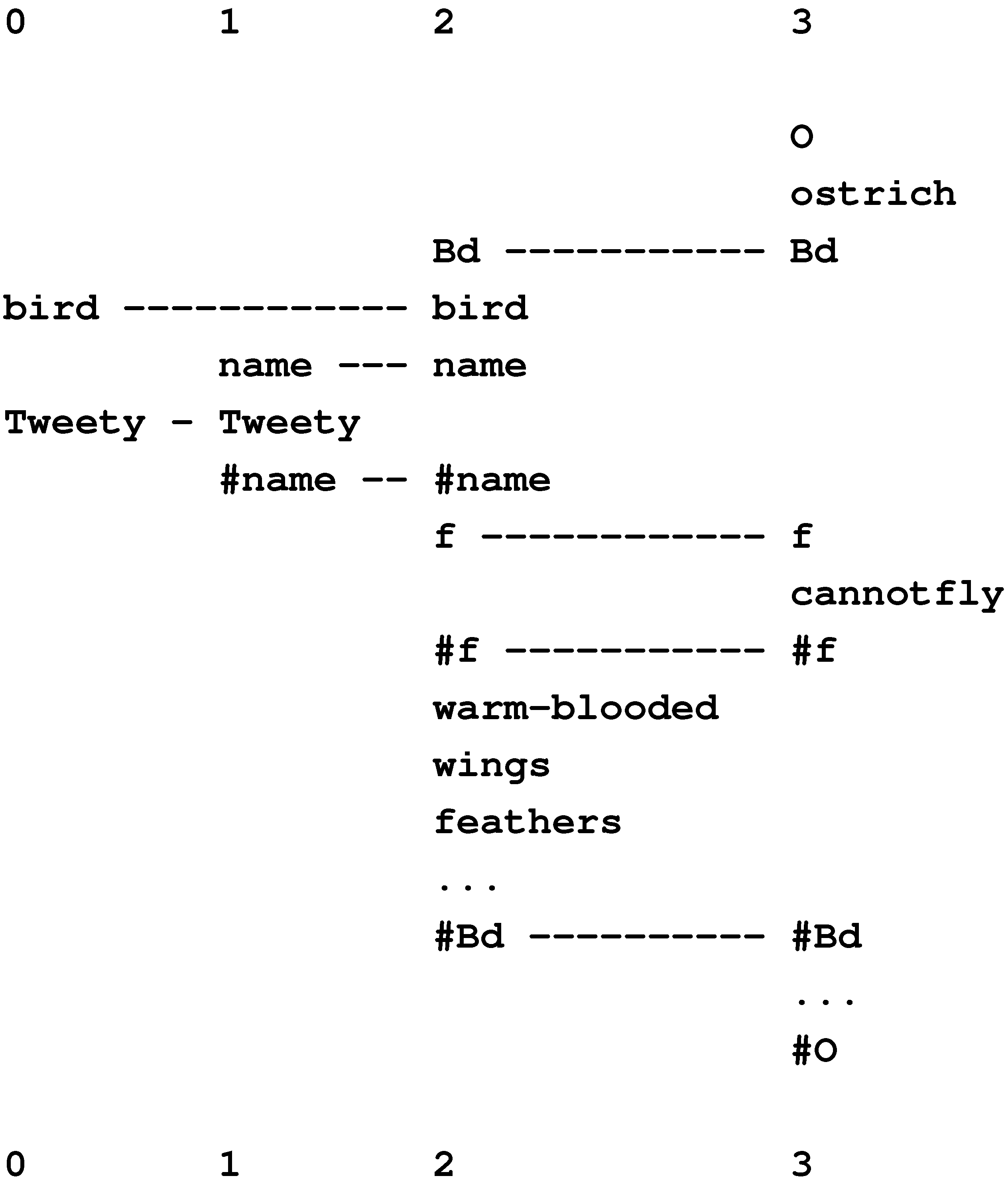

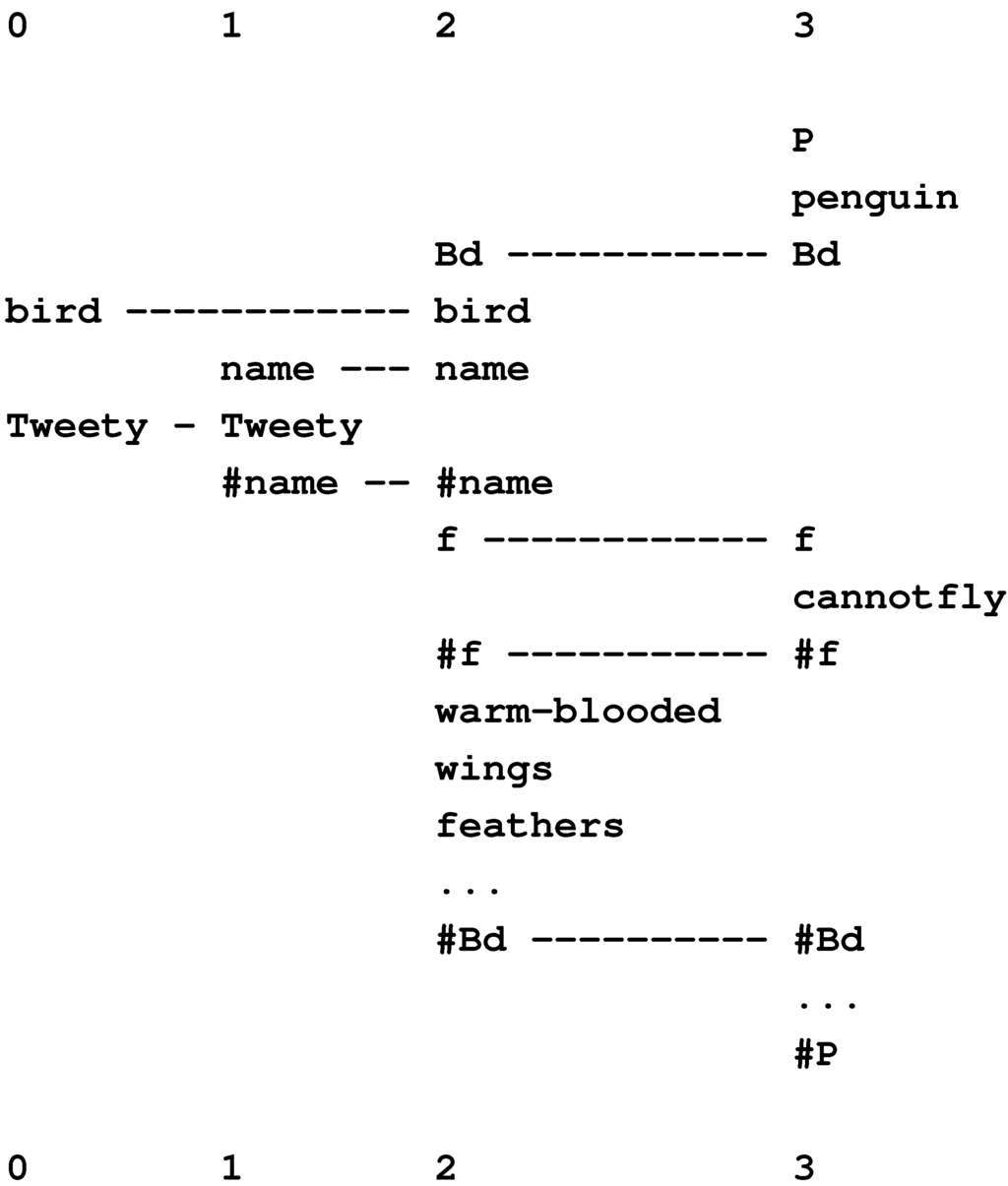

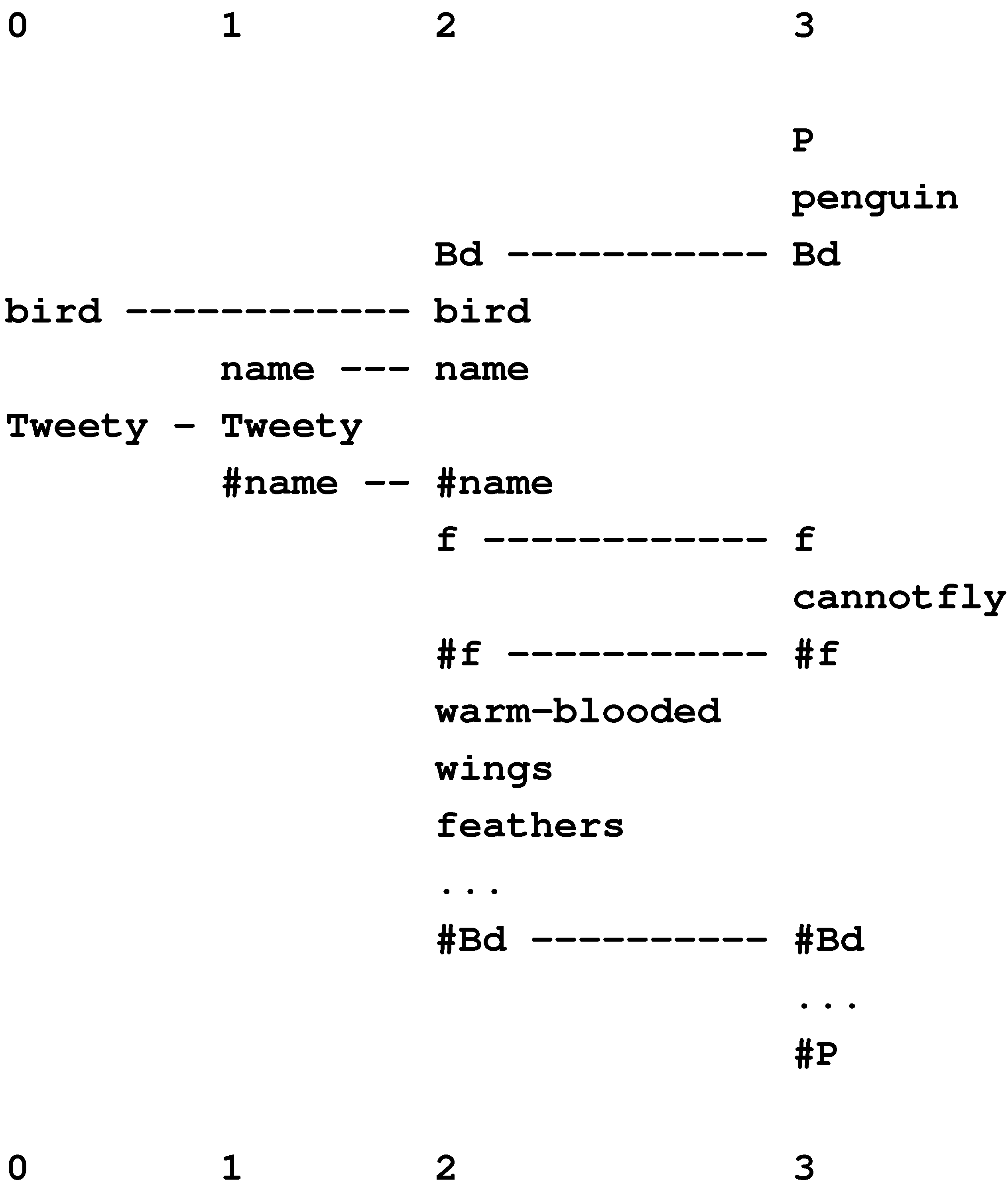

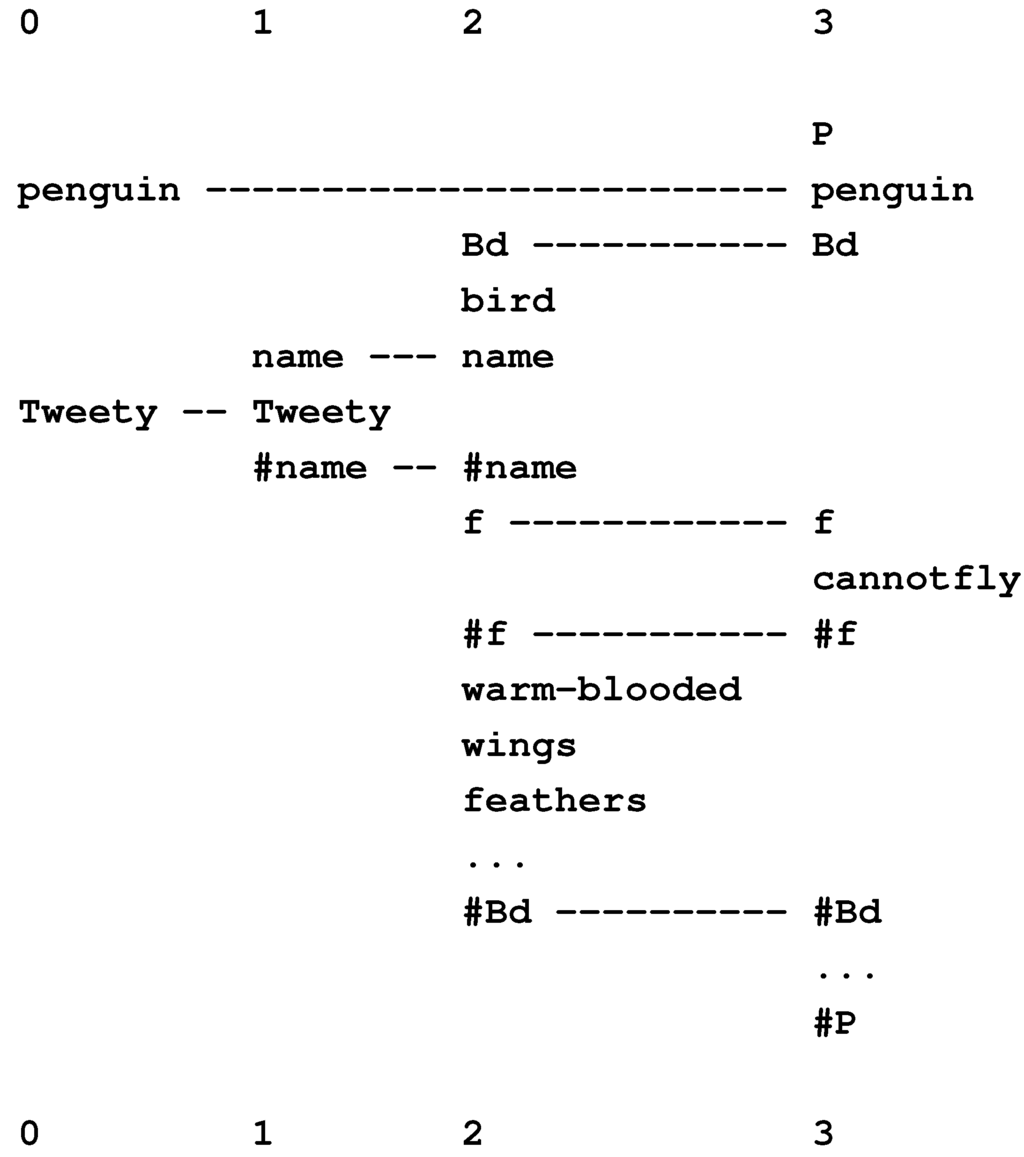

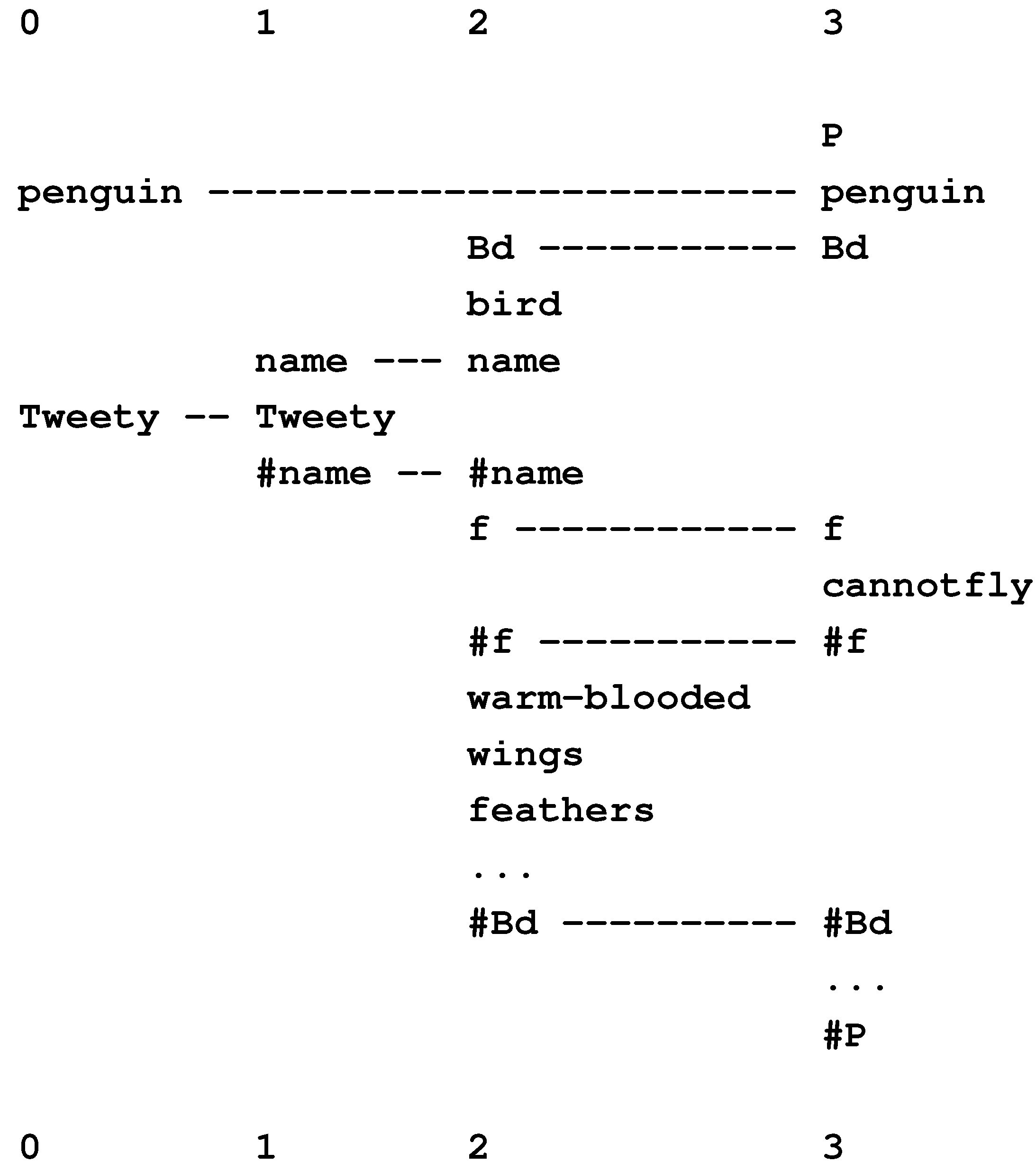

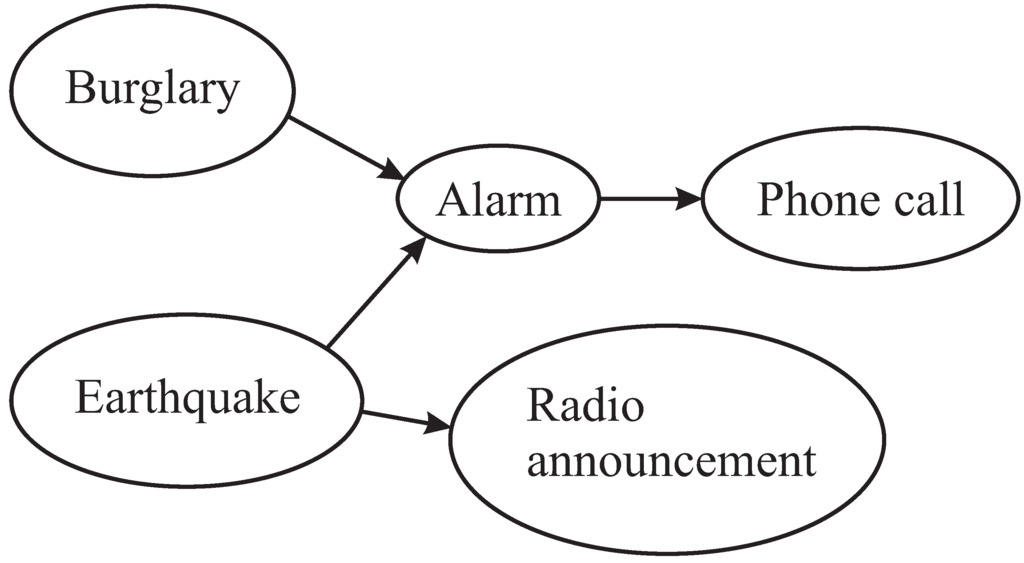

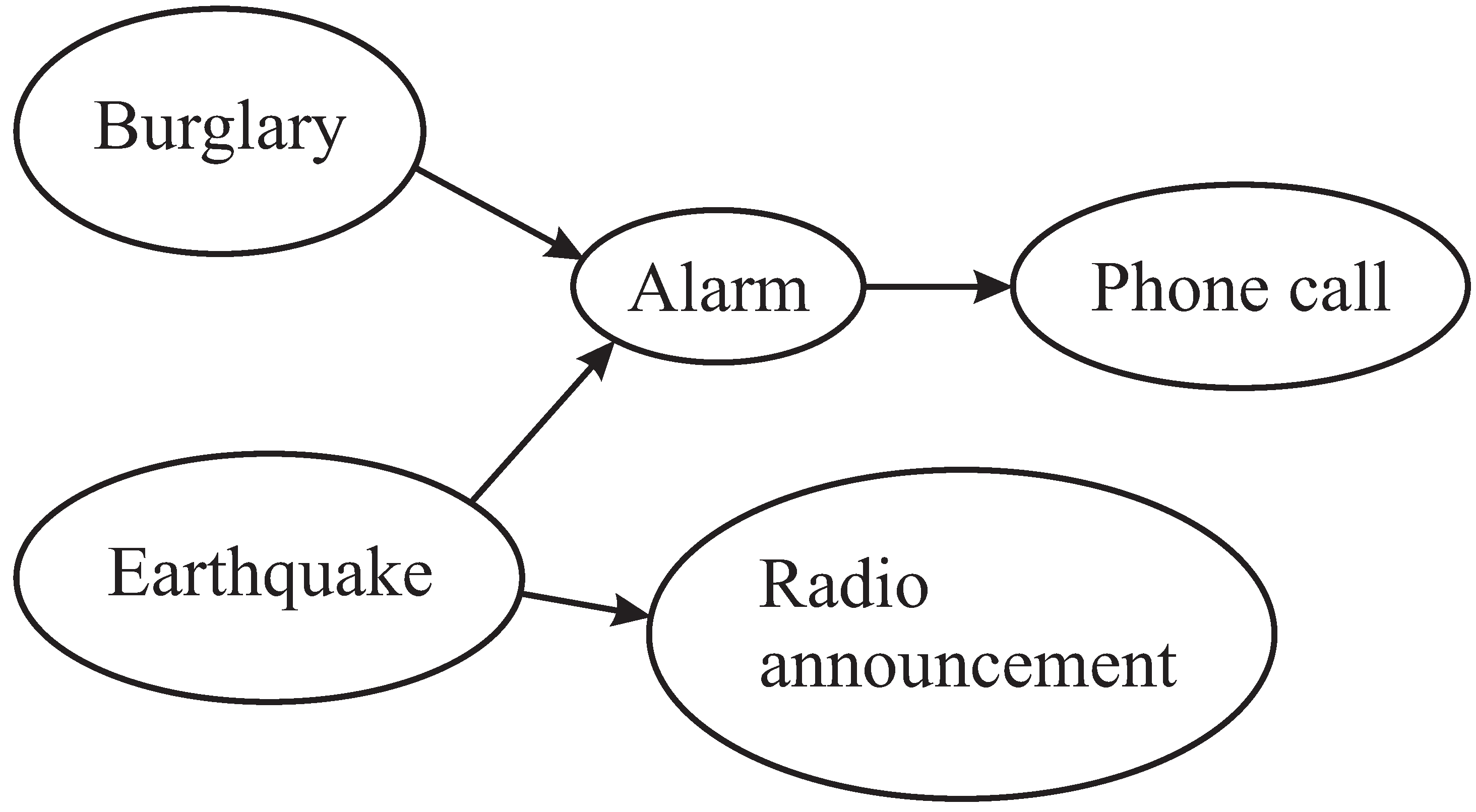

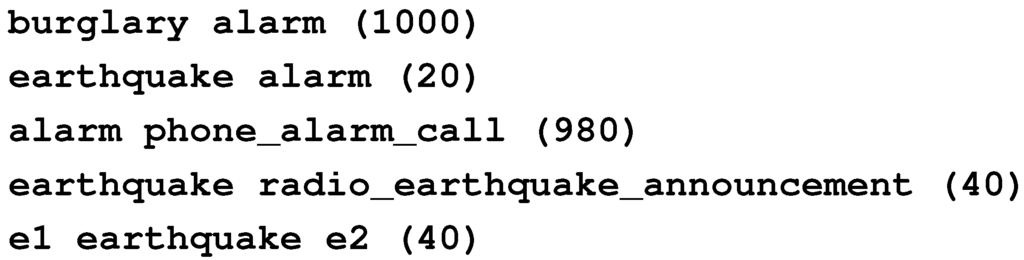

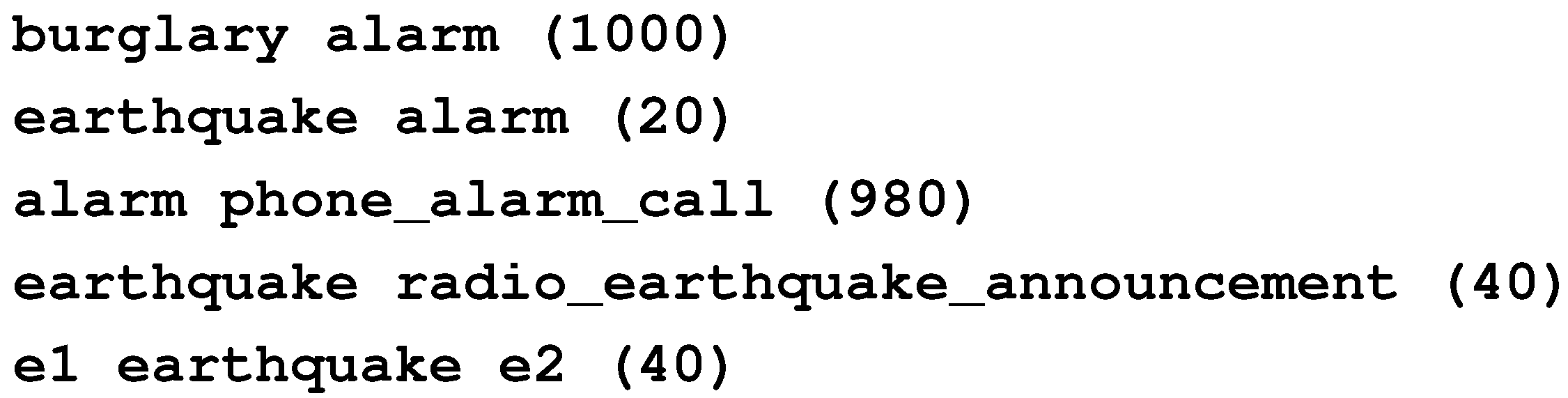

10.1. Nonmonotonic Reasoning and Reasoning with Default Values