Crowd Counting in Diverse Environments Using a Deep Routing Mechanism Informed by Crowd Density Levels

Abstract

1. Introduction

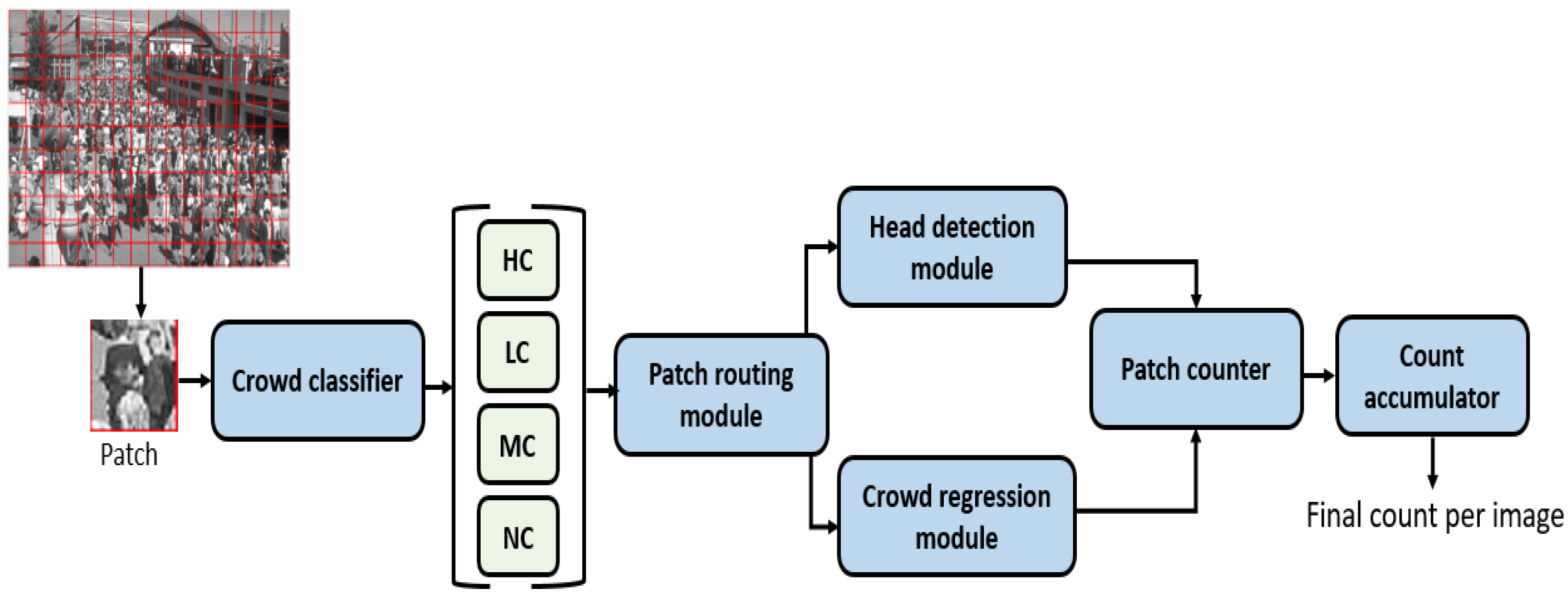

- A unified deep-learning framework is proposed that estimates crowd count in diverse scenes.

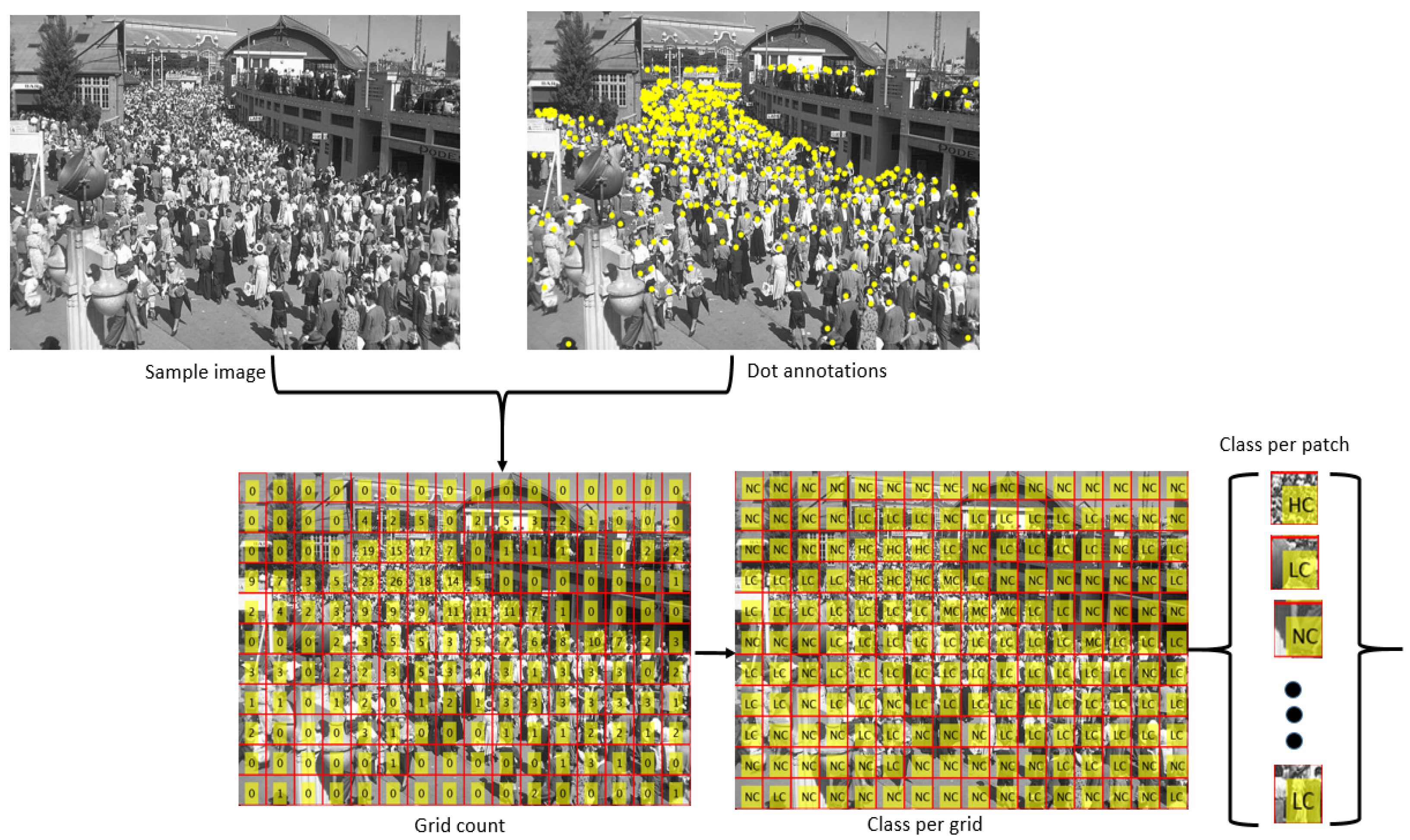

- We introduce a Crowd Classifier (CC) that classifies the patches into four categories, including Low Crowd, Medium Crowd, High Crowd, and No Crowd.

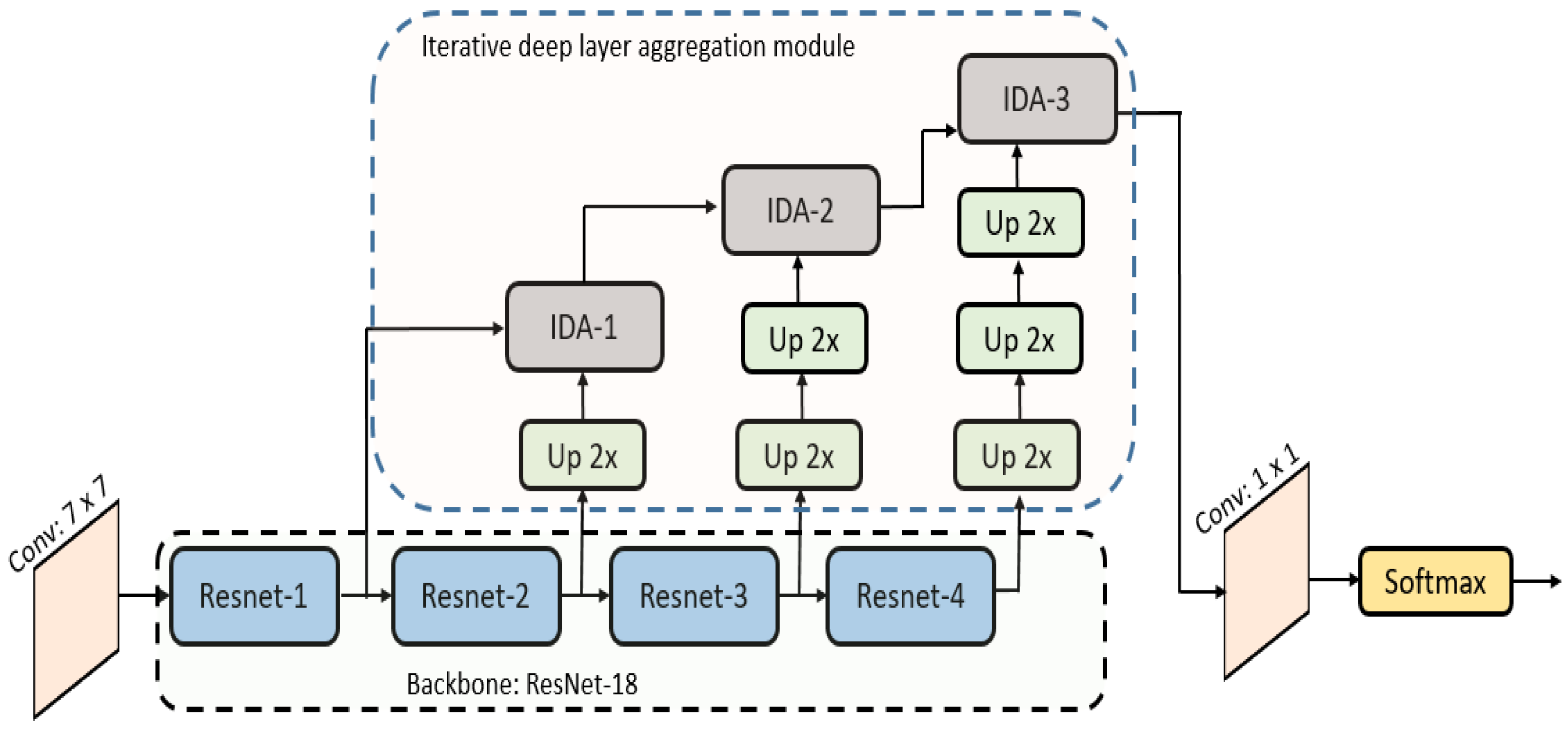

- A novel Head-Detection (HD) network is introduced for the efficient detection of human heads in complex scenes, leveraging iterative deep aggregation (IDA) to extract multi-scale features from various layers of the network.

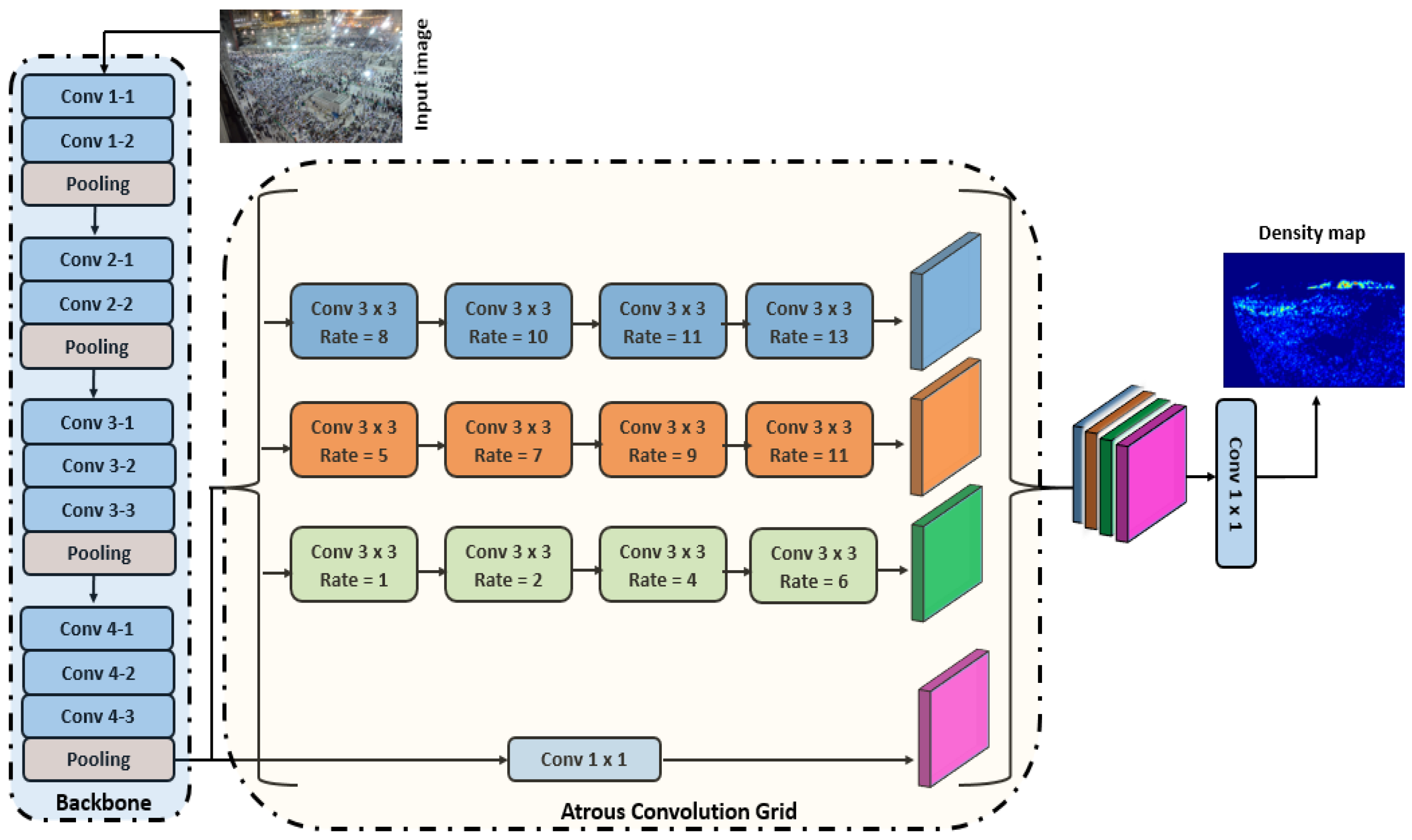

- A novel Crowd-Regression Module (CRM) is introduced, which utilizes an Atrous Convolution Grid (ACG) to densely sample a wide range of scales and contextual information for accurate crowd count estimation.

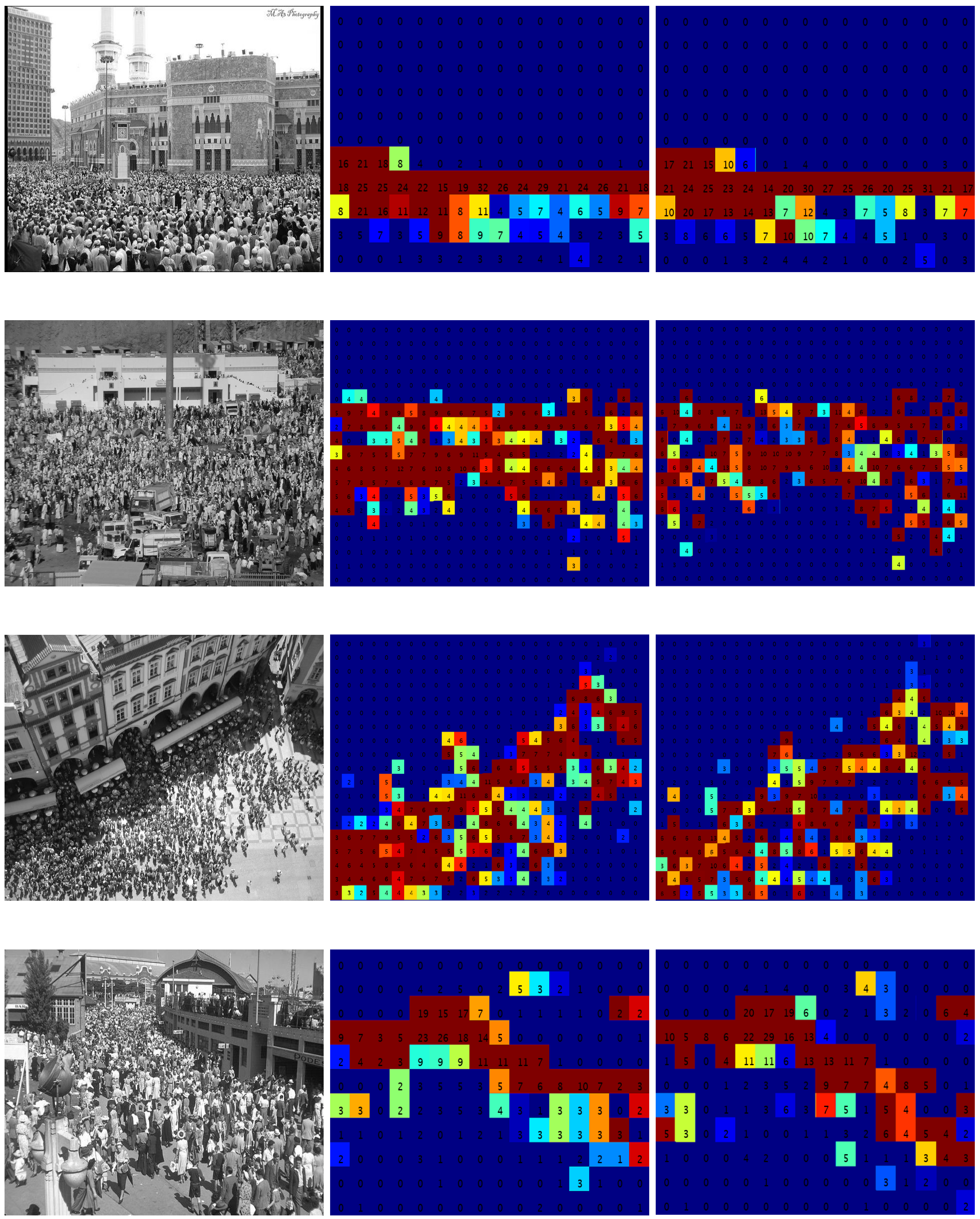

- An effective routing strategy is developed that efficiently routes the patches to either a detection network or regression module based on crowd density variations within an image.

2. Related Work

2.1. Regression-Based Methods

2.2. Detection-Based Methods

3. Proposed Methodology

3.1. Crowd Classifier

3.2. Patch-Routing Module

| Algorithm 1 Routing patches and counting during inference stage |

| Input: Image I, N, M |

| Output: Count Grid CG |

| Overlay N × M grid G over the input image. |

| Initialize count grid CG equal to the size of G. |

| for each i in N do |

| for each j in M do |

| Normalize and re-size patch |

| Re-size patch to 224 × 224 pixels |

| Classify in categories: LC, NC, HC, MC |

| if is HC then |

| = CountRegressor() |

| else if is NC then |

| = 0 |

| else |

| = HeadDetector() |

| end if |

| end for |

| end for |

3.3. Head-Detection Module

3.4. Crowd-Regression Module

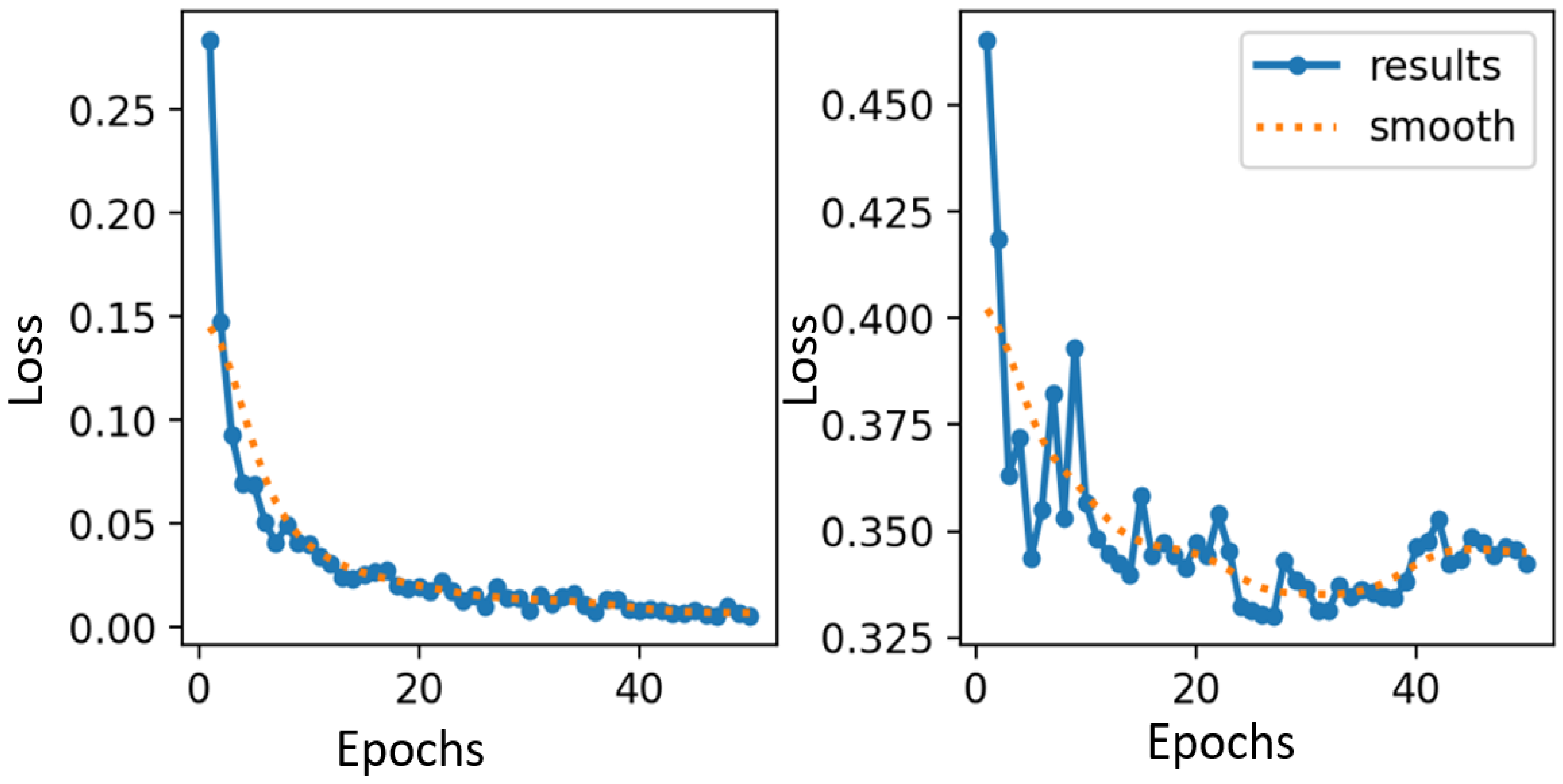

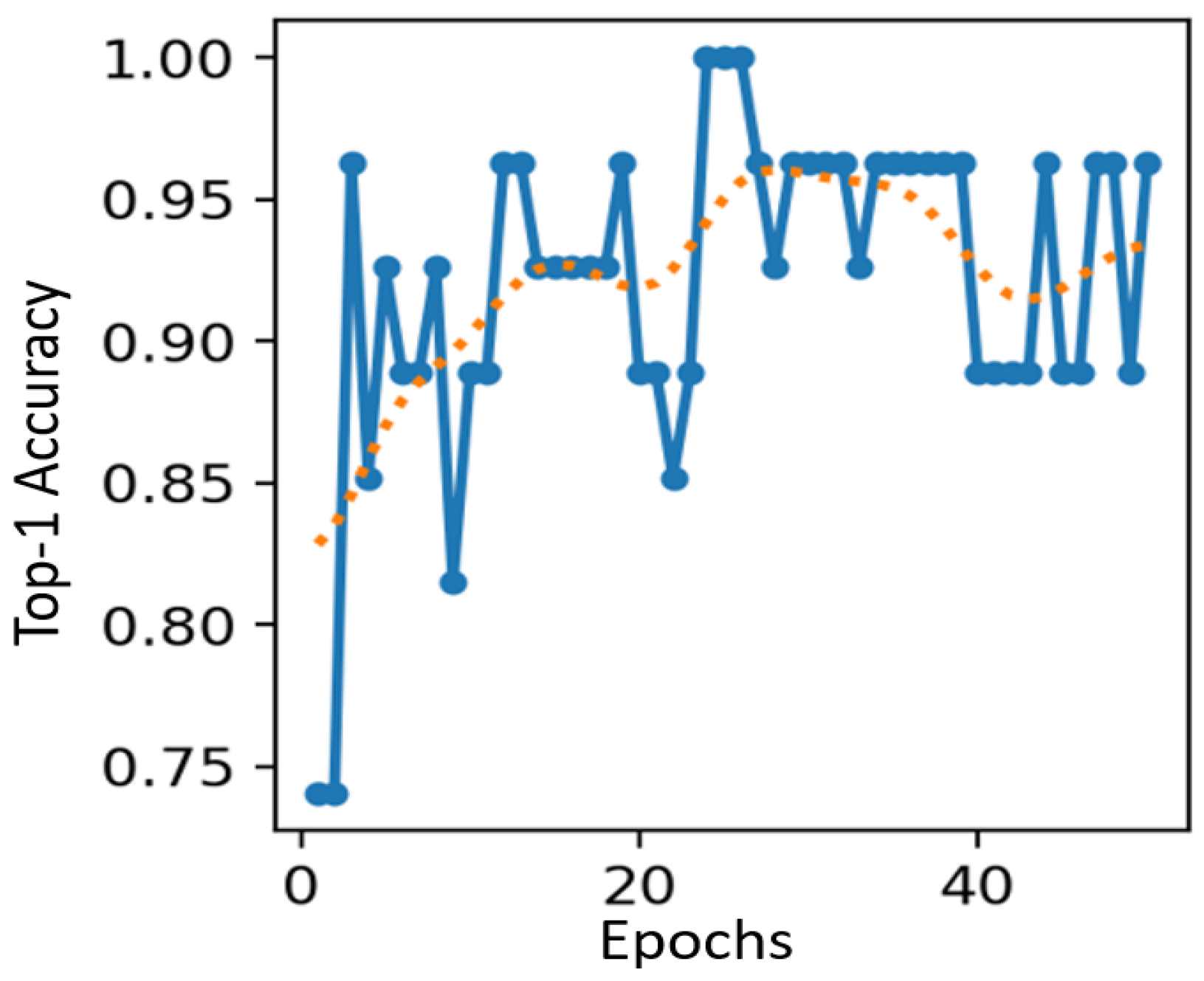

4. Experiment Results

4.1. Datasets

4.2. Evaluation Metrics

4.3. Performance Evaluation

4.4. Comparisons and Discussion

5. Ablation Study

- Method M1: This method comprises the Head-Detection and crowd-regression modules. However, the Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches, each containing one convolutional layer, resulting in a total of three convolutional layers with dilation rates of (6,12,18).

- Method M2: This method comprises Head-Detection and crowd-regression modules. Similar to M1, the Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches. Each branch contains two convolutional layers, resulting in a total of six convolutional layers with dilation rates of (3,6) in the first branch, (8,12) in the second branch, and (12,18) in the third branch.

- Method M3: Similar to previous methods, the M3 method comprises Head-Detection and a Crowd-Regression Module. The Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches. Each branch contains three convolutional layers, resulting in a total of nine (9) convolutional layers with dilation rates of (2,3,4) in the first branch, (5,7,11) in the second branch and (8,12,18) in the third branch.

- Method M4: Similar to previous methods, the M4 method comprises a Head-Detection and Crowd-Regression Module. However, the Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of only one branch. The branch contains three convolutional layers with dilation rates of (6,12,18).

- Method M5: The M5 method comprises only a Crowd-Regression Module and does not have a Head-Detection Module. The Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches. Each branch contains four convolutional layers, resulting in a total of twelve (12) convolutional layers with dilation rates of (1,2,3,4) in the first branch, (5,7,9,11) in the second branch and (8,10,11,13) in the third branch.

- Method M6: Similar to previous methods, the M6 method comprises Head-Detection and a Crowd-Regression Module. The Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches. Each branch contains five convolutional layers, resulting in a total of fifteen (15) convolutional layers with dilation rates of (2,4,6,8,9) in the first branch, (4,7,8,10,11) in the second branch and (8,12,16,18,20) in the third branch.

- Method M7: The M7 method is comprised of only head detection and does not have a Crowd-Regression Module.

- Method M8: The M8 method comprises Head-Detection and crowd-regression modules. The Atrous Convolution Grid (ACG) of the Crowd-Regression Module consists of three branches. Each branch contains four convolutional layers, resulting in a total of twelve (12) convolutional layers with dilation rates of (1,2,3,4) in the first branch, (5,7,9,11) in the second branch and (8,10,11,13) in the third branch.

6. Computational Complexity

7. Conclusions

- The proposed framework demonstrates superior performance across all datasets, demonstrating its effectiveness and versatility in addressing the challenges posed by various complex scenes.

- The proposed framework employs a unique way of handling the scale problem in crowd counting by adopting a routing strategy that directs image patches to one of two counting modules based on their density levels. In this way, based on the complexity of the crowd, the network can effectively handle the scale problem and achieve high performance across all datasets.

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Khan, S.D.; Tayyab, M.; Amin, M.K.; Nour, A.; Basalamah, A.; Basalamah, S.; Khan, S.A. Towards a crowd analytic framework for crowd management in Majid-al-Haram. arXiv 2017, arXiv:1709.05952. [Google Scholar]

- Gayathri, H.; Aparna, P.; Verma, A. A review of studies on understanding crowd dynamics in the context of crowd safety in mass religious gatherings. Int. J. Disaster Risk Reduct. 2017, 25, 82–91. [Google Scholar] [CrossRef]

- Khan, M.A.; Menouar, H.; Hamila, R. Revisiting crowd counting: State-of-the-art, trends, and future perspectives. Image Vis. Comput. 2023, 129, 104597. [Google Scholar] [CrossRef]

- Wang, M.; Cai, H.; Dai, Y.; Gong, M. Dynamic Mixture of Counter Network for Location-Agnostic Crowd Counting. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–7 January 2023; pp. 167–177. [Google Scholar]

- Basalamah, S.; Khan, S.D.; Felemban, E.; Naseer, A.; Rehman, F.U. Deep learning framework for congestion detection at public places via learning from synthetic data. J. King Saud Univ.-Comput. Inf. Sci. 2023, 35, 102–114. [Google Scholar] [CrossRef]

- Stadler, D.; Beyerer, J. Modelling ambiguous assignments for multi-person tracking in crowds. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2022; pp. 133–142. [Google Scholar]

- Li, Y. A deep spatiotemporal perspective for understanding crowd behavior. IEEE Trans. Multimed. 2018, 20, 3289–3297. [Google Scholar] [CrossRef]

- Grant, J.M.; Flynn, P.J. Crowd scene understanding from video: A survey. ACM Trans. Multimed. Comput. Commun. Appl. (TOMM) 2017, 13, 1–23. [Google Scholar] [CrossRef]

- Khan, S.D.; Bandini, S.; Basalamah, S.; Vizzari, G. Analyzing crowd behavior in naturalistic conditions: Identifying sources and sinks and characterizing main flows. Neurocomputing 2016, 177, 543–563. [Google Scholar] [CrossRef]

- Gao, G.; Gao, J.; Liu, Q.; Wang, Q.; Wang, Y. Cnn-based density estimation and crowd counting: A survey. arXiv 2020, arXiv:2003.12783. [Google Scholar]

- Zhang, C.; Li, H.; Wang, X.; Yang, X. Cross-scene crowd counting via deep convolutional neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 833–841. [Google Scholar]

- Zhang, Y.; Zhou, D.; Chen, S.; Gao, S.; Ma, Y. Single-image crowd counting via multi-column convolutional neural network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 589–597. [Google Scholar]

- Babu Sam, D.; Surya, S.; Venkatesh Babu, R. Switching convolutional neural network for crowd counting. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 5744–5752. [Google Scholar]

- Idrees, H.; Tayyab, M.; Athrey, K.; Zhang, D.; Al-Maadeed, S.; Rajpoot, N.; Shah, M. Composition loss for counting, density map estimation and localization in dense crowds. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 532–546. [Google Scholar]

- Sam, D.B.; Peri, S.V.; Sundararaman, M.N.; Kamath, A.; Babu, R.V. Locate, size and count: Accurately resolving people in dense crowds via detection. arXiv 2019, arXiv:1906.07538. [Google Scholar]

- Basalamah, S.; Khan, S.D.; Ullah, H. Scale driven convolutional neural network model for people counting and localization in crowd scenes. IEEE Access 2019, 7, 71576–71584. [Google Scholar] [CrossRef]

- Wang, Y.; Lian, H.; Chen, P.; Lu, Z. Counting people with support vector regression. In Proceedings of the 2014 10th International Conference on Natural Computation (ICNC), Xiamen, China, 19–21 August 2014; pp. 139–143. [Google Scholar]

- Chan, A.B.; Liang, Z.S.J.; Vasconcelos, N. Privacy preserving crowd monitoring: Counting people without people models or tracking. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–7. [Google Scholar]

- Pham, V.Q.; Kozakaya, T.; Yamaguchi, O.; Okada, R. Count forest: Co-voting uncertain number of targets using random forest for crowd density estimation. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 3253–3261. [Google Scholar]

- Idrees, H.; Saleemi, I.; Seibert, C.; Shah, M. Multi-source multi-scale counting in extremely dense crowd images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 2547–2554. [Google Scholar]

- Wan, J.; Chan, A. Adaptive density map generation for crowd counting. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1130–1139. [Google Scholar]

- Dong, L.; Zhang, H.; Ji, Y.; Ding, Y. Crowd counting by using multi-level density-based spatial information: A Multi-scale CNN framework. Inf. Sci. 2020, 528, 79–91. [Google Scholar] [CrossRef]

- Li, Y.; Zhang, X.; Chen, D. Csrnet: Dilated convolutional neural networks for understanding the highly congested scenes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 1091–1100. [Google Scholar]

- Xu, Y.; Zhong, Z.; Lian, D.; Li, J.; Li, Z.; Xu, X.; Gao, S. Crowd counting with partial annotations in an image. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, BC, Canada, 11–17 October 2021; pp. 15570–15579. [Google Scholar]

- Sindagi, V.A.; Patel, V.M. Generating high-quality crowd density maps using contextual pyramid cnns. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 1861–1870. [Google Scholar]

- Zhai, W.; Gao, M.; Souri, A.; Li, Q.; Guo, X.; Shang, J.; Zou, G. An attentive hierarchy ConvNet for crowd counting in smart city. Clust. Comput. 2023, 26, 1099–1111. [Google Scholar] [CrossRef]

- Zhang, J.; Ye, L.; Wu, J.; Sun, D.; Wu, C. A Fusion-Based Dense Crowd Counting Method for Multi-Imaging Systems. Int. J. Intell. Syst. 2023, 2023, 6677622. [Google Scholar] [CrossRef]

- Zhai, W.; Gao, M.; Li, Q.; Jeon, G.; Anisetti, M. FPANet: Feature pyramid attention network for crowd counting. Appl. Intell. 2023, 53, 19199–19216. [Google Scholar] [CrossRef]

- Guo, X.; Song, K.; Gao, M.; Zhai, W.; Li, Q.; Jeon, G. Crowd counting in smart city via lightweight ghost attention pyramid network. Future Gener. Comput. Syst. 2023, 147, 328–338. [Google Scholar] [CrossRef]

- Gao, M.; Souri, A.; Zaker, M.; Zhai, W.; Guo, X.; Li, Q. A comprehensive analysis for crowd counting methodologies and algorithms in Internet of Things. Clust. Comput. 2024, 27, 859–873. [Google Scholar] [CrossRef]

- Viola, P.; Jones, M.J. Robust real-time face detection. Int. J. Comput. Vis. 2004, 57, 137–154. [Google Scholar] [CrossRef]

- Ren, X. Finding people in archive films through tracking. In Proceedings of the Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8. [Google Scholar]

- Yan, J.; Lei, Z.; Wen, L.; Li, S.Z. The fastest deformable part model for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 2497–2504. [Google Scholar]

- Li, H.; Lin, Z.; Shen, X.; Brandt, J.; Hua, G. A convolutional neural network cascade for face detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 5325–5334. [Google Scholar]

- Yang, S.; Luo, P.; Loy, C.C.; Tang, X. From facial parts responses to face detection: A deep learning approach. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 3676–3684. [Google Scholar]

- Zhang, K.; Zhang, Z.; Wang, H.; Li, Z.; Qiao, Y.; Liu, W. Detecting faces using inside cascaded contextual cnn. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 3171–3179. [Google Scholar]

- Zhu, C.; Zheng, Y.; Luu, K.; Savvides, M. Cms-rcnn: Contextual multi-scale region-based cnn for unconstrained face detection. In Deep Learning for Biometrics; Springer: Berlin/Heidelberg, Germany, 2017; pp. 57–79. [Google Scholar]

- Hu, P.; Ramanan, D. Finding tiny faces. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 951–959. [Google Scholar]

- Khan, S.D.; Basalamah, S. Scale and density invariant head detection deep model for crowd counting in pedestrian crowds. Vis. Comput. 2021, 37, 2127–2137. [Google Scholar] [CrossRef]

- Shami, M.B.; Maqbool, S.; Sajid, H.; Ayaz, Y.; Cheung, S.C.S. People counting in dense crowd images using sparse head detections. IEEE Trans. Circuits Syst. Video Technol. 2018, 29, 2627–2636. [Google Scholar] [CrossRef]

- Lian, D.; Chen, X.; Li, J.; Luo, W.; Gao, S. Locating and counting heads in crowds with a depth prior. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 44, 9056–9072. [Google Scholar] [CrossRef]

- Zhou, T.; Yang, J.; Loza, A.; Bhaskar, H.; Al-Mualla, M. Crowd modeling framework using fast head detection and shape-aware matching. J. Electron. Imaging 2015, 24, 023019. [Google Scholar] [CrossRef]

- Saqib, M.; Khan, S.D.; Sharma, N.; Blumenstein, M. Crowd counting in low-resolution crowded scenes using region-based deep convolutional neural networks. IEEE Access 2019, 7, 35317–35329. [Google Scholar] [CrossRef]

- Arandjelovic, O. Crowd detection from still images 2008. In Proceedings of the British Machine Vision Conference, Leeds, UK, 1–4 September 2008. [Google Scholar]

- Sirmacek, B.; Reinartz, P. Automatic crowd analysis from airborne images. In Proceedings of the 5th International Conference on Recent Advances in Space Technologies-RAST2011, Istanbul, Turkey, 9–11 June 2011; pp. 116–120. [Google Scholar]

- Saqib, M.; Khan, S.D.; Blumenstein, M. Texture-based feature mining for crowd density estimation: A study. In Proceedings of the 2016 International Conference on Image and Vision Computing New Zealand (IVCNZ), Palmerston North, New Zealand, 21–22 November 2016; pp. 1–6. [Google Scholar]

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2980–2988. [Google Scholar]

- Wang, Y.; Hou, J.; Hou, X.; Chau, L.P. A self-training approach for point-supervised object detection and counting in crowds. IEEE Trans. Image Process. 2021, 30, 2876–2887. [Google Scholar] [CrossRef]

- Wang, Y.; Zhang, W.; Liu, Y.; Zhu, J. Two-branch fusion network with attention map for crowd counting. Neurocomputing 2020, 411, 1–8. [Google Scholar] [CrossRef]

- Yang, Y.; Li, G.; Du, D.; Huang, Q.; Sebe, N. Embedding perspective analysis into multi-column convolutional neural network for crowd counting. IEEE Trans. Image Process. 2020, 30, 1395–1407. [Google Scholar] [CrossRef]

- Dai, F.; Liu, H.; Ma, Y.; Zhang, X.; Zhao, Q. Dense scale network for crowd counting. In Proceedings of the 2021 International Conference on Multimedia Retrieval, Taipei, Taiwan, 21–24 August 2021; pp. 64–72. [Google Scholar]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848. [Google Scholar] [CrossRef]

- Paszke, A.; Gross, S.; Chintala, S.; Chanan, G.; Yang, E.; DeVito, Z.; Lin, Z.; Desmaison, A.; Antiga, L.; Lerer, A. Automatic Differentiation in Pytorch 2017. Available online: https://openreview.net/forum?id=BJJsrmfCZ (accessed on 18 March 2024).

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980. [Google Scholar]

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 2012, 25. [Google Scholar] [CrossRef]

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556. [Google Scholar]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar]

- Cheng, Z.Q.; Dai, Q.; Li, H.; Song, J.; Wu, X.; Hauptmann, A.G. Rethinking spatial invariance of convolutional networks for object counting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–27 June 2022; pp. 19638–19648. [Google Scholar]

- Huang, L.; Zhu, L.; Shen, S.; Zhang, Q.; Zhang, J. SRNet: Scale-aware representation learning network for dense crowd counting. IEEE Access 2021, 9, 136032–136044. [Google Scholar] [CrossRef]

- Zeng, X.; Wu, Y.; Hu, S.; Wang, R.; Ye, Y. DSPNet: Deep scale purifier network for dense crowd counting. Expert Syst. Appl. 2020, 141, 112977. [Google Scholar] [CrossRef]

- Wang, S.; Lu, Y.; Zhou, T.; Di, H.; Lu, L.; Zhang, L. SCLNet: Spatial context learning network for congested crowd counting. Neurocomputing 2020, 404, 227–239. [Google Scholar] [CrossRef]

- Sindagi, V.A.; Patel, V.M. Cnn-based cascaded multi-task learning of high-level prior and density estimation for crowd counting. In Proceedings of the 2017 14th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Lecce, Italy, 29 August–1 September 2017; pp. 1–6. [Google Scholar]

- Gao, J.; Wang, Q.; Li, X. Pcc net: Perspective crowd counting via spatial convolutional network. IEEE Trans. Circuits Syst. Video Technol. 2019, 30, 3486–3498. [Google Scholar] [CrossRef]

- Hafeezallah, A.; Al-Dhamari, A.; Abu-Bakar, S.A.R. U-ASD net: Supervised crowd counting based on semantic segmentation and adaptive scenario discovery. IEEE Access 2021, 9, 127444–127459. [Google Scholar] [CrossRef]

| Class | AlexNet [55] | VGG-16 [56] | ResNet-50 [57] | ResNet-152 [57] | ||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| P | R | F1 | P | R | F1 | P | R | F1 | P | R | F1 | |

| High Crowd | 0.92 | 0.9 | 0.91 | 0.95 | 0.96 | 0.95 | 0.97 | 0.94 | 0.95 | 0.98 | 0.98 | 0.98 |

| Low Crowd | 0.94 | 0.92 | 0.93 | 0.94 | 0.95 | 0.94 | 0.95 | 0.96 | 0.95 | 0.98 | 0.97 | 0.98 |

| Medium Crowd | 0.92 | 0.94 | 0.93 | 0.96 | 0.94 | 0.95 | 0.96 | 0.95 | 0.95 | 0.99 | 0.97 | 0.98 |

| No Crowd | 0.92 | 0.9 | 0.91 | 0.95 | 0.96 | 0.95 | 0.96 | 0.95 | 0.95 | 0.98 | 0.97 | 0.98 |

| Method | MAE | MSE |

|---|---|---|

| MCNN [12] | 277.0 | 426.0 |

| Idrees et al. [14] | 132.0 | 191.0 |

| CSRNet [23] | 119.2 | 211.4 |

| GauNet (MCNN) [58] | 204.2 | 280.4 |

| URC [24] | 128.1 | 218.1 |

| SCLNet [61] | 109.6 | 182.5 |

| SRNet [59] | 108.2 | 177.5 |

| Switching CNN [13] | 228.0 | 445.0 |

| DSPNet [60] | 107.5 | 182.7 |

| Khan et al. [39] | 112.0 | 173.0 |

| Proposed | 97.20 | 156.4 |

| Method | MAE | MSE |

|---|---|---|

| MCNN [12] | 377.6 | 509.1 |

| Idrees et al. [14] | 419.5 | 541.6 |

| CSRNet [23] | 266.1 | 397.5 |

| GauNet (MCNN) [58] | 282.6 | 387.2 |

| URC [24] | 294.0 | 443.1 |

| SCLNet [61] | 258.92 | 326.24 |

| Switching CNN [13] | 318.1 | 439.2 |

| Cascaded-MTL [62] | 322.8 | 397.9 |

| DSPNet [60] | 243.3 | 307.6 |

| Proposed | 201.6 | 286.4 |

| Method | MAE | MSE |

|---|---|---|

| MCNN [12] | 110.2 | 173.2 |

| CSRNet [23] | 68.2 | 115.0 |

| GauNet (MCNN) [58] | 94.2 | 141.8 |

| URC [24] | 72.8 | 111.6 |

| SCLNet [61] | 67.89 | 102.94 |

| Switching CNN [13] | 90.4 | 135.0 |

| Cascaded-MTL [62] | 101.3 | 152.4 |

| DSPNet [60] | 68.2 | 107.8 |

| CP-CNN [25] | 73.6 | 106.4 |

| PCC Net [63] | 73.5 | 124 |

| U-ASD Net [64] | 64.6 | 106.1 |

| Proposed | 57.7 | 97.5 |

| Method | Head Detection | Crowd Regression | MAE | MSE | |||||

|---|---|---|---|---|---|---|---|---|---|

| ACG-Row-1 | ACG-Row-2 | ACG-Row-3 | |||||||

| No. of Layers | Dilation Rate | No. of Layers | Dilation Rate | No. of Layers | Dilation Rate | ||||

| M1 | Yes | 1 × Conv | 6 | 1 × Conv | 12 | 1 × Conv | 18 | 127.03 | 194.36 |

| M2 | Yes | 2 × Conv | 3,6 | 2 × Conv | 8,12 | 2 × Conv | 12,18 | 117.54 | 186.28 |

| M3 | Yes | 3 × Conv | 2,3,4 | 3 × Conv | 5,7,11 | 3 × Conv | 8,12,18 | 105.72 | 172.10 |

| M4 | Yes | 3 × Conv | 6,12,18 | No | 125.20 | 192.72 | |||

| M4 | No | 4 × Conv | 1,2,3,4 | 4 × Conv | 5,7,9,11 | 4 × Conv | 8,10,11,13 | 132.42 | 195.37 |

| M5 | Yes | 5 × Conv | 2,4,6,8,9 | 5 × Conv | 4,7,8,10,11 | 5 × Conv | 8,12,16,18,20 | 107.82 | 178.75 |

| M6 | Yes | No | 187.23 | 221.14 | |||||

| M7 (Proposed) | Yes | 4 × Conv | 1,2,3,4 | 4 × Conv | 5,7,9,11 | 4 × Conv | 8,10,11,13 | 97.20 | 156.4 |

| Method | Inference Time (Milliseconds) | Frames per Second | MAE | MSE |

|---|---|---|---|---|

| Switching CNN [13] | 153 | 6.54 | 90.4 | 135 |

| CSRNet [23] | 330 | 3.0 | 68.2 | 115.0 |

| CP-CNN [25] | 5113 | 0.195 | 73.6 | 106.4 |

| PCC Net [63] | 89 | 11.24 | 73.5 | 124.0 |

| U-ASD Net [64] | 94 | 10.63 | 64.6 | 106.1 |

| Proposed | 146 | 6.84 | 57.7 | 97.5 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Alhawsawi, A.N.; Khan, S.D.; Ur Rehman, F. Crowd Counting in Diverse Environments Using a Deep Routing Mechanism Informed by Crowd Density Levels. Information 2024, 15, 275. https://doi.org/10.3390/info15050275

Alhawsawi AN, Khan SD, Ur Rehman F. Crowd Counting in Diverse Environments Using a Deep Routing Mechanism Informed by Crowd Density Levels. Information. 2024; 15(5):275. https://doi.org/10.3390/info15050275

Chicago/Turabian StyleAlhawsawi, Abdullah N, Sultan Daud Khan, and Faizan Ur Rehman. 2024. "Crowd Counting in Diverse Environments Using a Deep Routing Mechanism Informed by Crowd Density Levels" Information 15, no. 5: 275. https://doi.org/10.3390/info15050275

APA StyleAlhawsawi, A. N., Khan, S. D., & Ur Rehman, F. (2024). Crowd Counting in Diverse Environments Using a Deep Routing Mechanism Informed by Crowd Density Levels. Information, 15(5), 275. https://doi.org/10.3390/info15050275