1. Introduction

The SOcial LInk Data (Solid) protocol [

1,

2] is a draft specification for managing personal data on the Web. Solid was proposed to decentralise social networking and take data out of the hands of corporations while enhancing

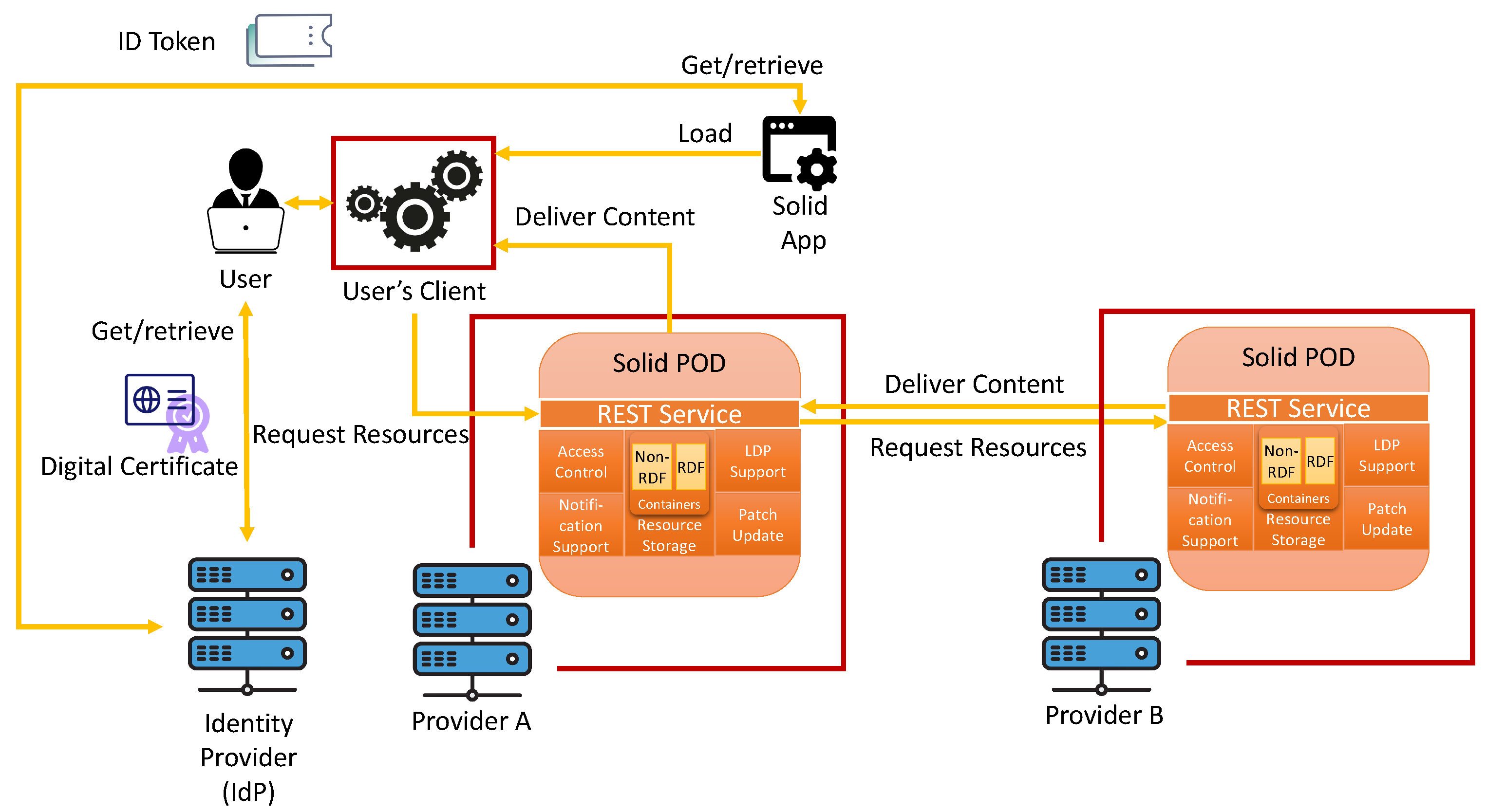

data sovereignty, i.e., empowering data owners regarding access to their own data by leveraging reusable W3C standards for the Semantic Web. The Solid protocol standardises interfaces between Solid apps that use data, Solid pods that store data, and services that issue identities to users and other agents participating in the Solid ecosystem. In doing so, Solid brings a layer of trust, authentication, and authorisation to the Web, which are intended to ensure the contextual integrity of information flows through the Solid ecosystem.

The ongoing development of the specifications defining the Solid protocol has been led by Tim Berners-Lee and the Solid Community Group at MIT since 2016. At the time of writing, the process of formalising the relevant standards as W3C recommendations has been initiated, making this paper a timely reflection on the current status of the drafts at a moment when the standards are expected to be tightened while taking into account, in particular, the experience of a network of developers who are already deploying Solid-based solutions. One such solution, described in this paper, is a concrete Solid-based quality-of-care survey on the We Are platform (https://we-are-health.be/en accessed on 12 July 2023) developed by the team of the authors based at VITO for use in conjunction with Belgian hospitals. We use this case to illustrate why privacy is paramount in applications handling personal data, thereby justifying the need for the more general privacy analysis that we provide.

This paper investigates the use of Solid in conjunction with in-force legislation, exemplifying the interrelation between the two with respect to the European General Data Protection Regulation (GDPR), the principal EU regulation for data protection and privacy. In addition, relevant industrial standards are considered in order to identify measures and controls to be integrated into the Solid project to provide security and privacy. In particular, this paper conducts an initial analysis to assess GDPR legal requirements in Solid, so as to enable legally acceptable uses of the protocol by whoever stores or processes personal data within the borders of the European Union. Our methodology is nevertheless general enough to be replicated for other regulations and/or technologies in the future.

While privacy is a stated goal of Solid, being compliant with specific legislation on the topic is unattainable given the current status of the Solid specification. Even if a specification contains some protection means to safeguard users’ privacy, compliance with GDPR requires the provision of detailed information about the purpose, legal basis, etc., of personal data processing, to follow specific procedures, e.g., about maintaining a record of processing activities or about notifying personal data breaches to the supervisory authority, and to guarantee rights such as the right to be forgotten, the right of data portability, etc.

Nevertheless, while GDPR provides a well-defined list of all such legal requirements, it does not specify how and to what extent these are to be implemented in real-world scenarios. The scenarios to which the regulation applies are too numerous, and, worse still, most of them were unpredictable at the time the regulation was drafted because they depend on IT technologies that evolve over time.

Incompleteness and vagueness of this kind are indeed found, to different degrees, in every legislative document [3]. Law usually contains plenty of uninformative expressions that must be interpreted in context, which makes it rather difficult to develop AI-based solutions truly useful for the legal profession [4, 5]. For example, GDPR specifies that controllers must take “reasonable steps” or “appropriate measures” to meet the legal requirements. However, it does not specify how these steps and measures are concretely implemented in context, in a way that allows compliance with these legal requirements to be checked.

For this reason, legislative documents usually specify which appointed authorities are in charge of monitoring the state of the art and defining operational requirements that implement the legal ones. For instance, GDPR appoints the European Data Protection Board (EDPB) and the Data Protection Authorities (DPAs) of the EU Member States to release further guidelines and recommendations on its norms (see, e.g., Art. 70(1)(d)), to encourage associations and other bodies representing categories of controllers/processors to prepare codes of conduct (see, e.g., Art. 40), etc.

Other authoritative bodies such as the International Organisation for Standardisation (ISO) or the National Institute of Standards and Technology (NIST) may release further guidance and standard practices that, although not legally binding, enable organisations that adopt them to argue in favour of their proactive attitude and best efforts to be compliant according to the state of the art in a certain domain [6, 7].

Ultimately, it is up to judges in courts to decide what is the most applicable legal interpretation of the norms in a certain context, although in the European legal framework, legal interpretations from jurisprudence are not legally binding either, as they might be subsequently overridden by other judges.

The contextual interpretation of GDPR legal requirements into operational requirements calls for a holistic methodology, which takes into account the additional non-legislative documents mentioned above (recommendations from appointed authorities, codes of conduct, jurisprudence, etc.). Most of these are not legally binding, but they can still provide a presumption of compliance, i.e., evidence of a proactive attitude and best efforts to safeguard personal data and their owners’ rights.

The methodology consists of two steps. The first step creates a table of legally binding requirements related to the technology under examination, in our case the Solid protocol. This table makes references to certification schemes and codes of conduct, which are texts that offer some specificity in view of the abstract GDPR requirements. In that regard, the documents that have been used as guidance in view of the abstract requirements of GDPR are the EU Cloud Code of Conduct (EU Cloud) [8], the Data Protection Code of Conduct for Cloud Infrastructure Service Providers (CISPE) [9], and the GDPR-CARPA certification scheme [10]. These texts are not legally binding, but they have been approved by the competent DPAs. In view of this, the first step of our research extracts GDPR obligations from these documents that have received authoritative approval.

The second step discusses, for each requirement collected in the first step, the extent to which Solid technically fulfils it. The discussion focuses on the concrete technological choices made in the protocol; their relation with international standards from ISO, NIST, etc.; critical analyses from contemporary literature in the field, etc.; and, of course, the pros and cons of possible mitigation measures to better address GDPR legal requirements.

Such a coverage analysis could also include jurisprudence; however, in the case of Solid, this jurisprudence is not yet available because the protocol is still an emerging technology not yet consolidated in the market.

The key contributions of this paper are as follows:

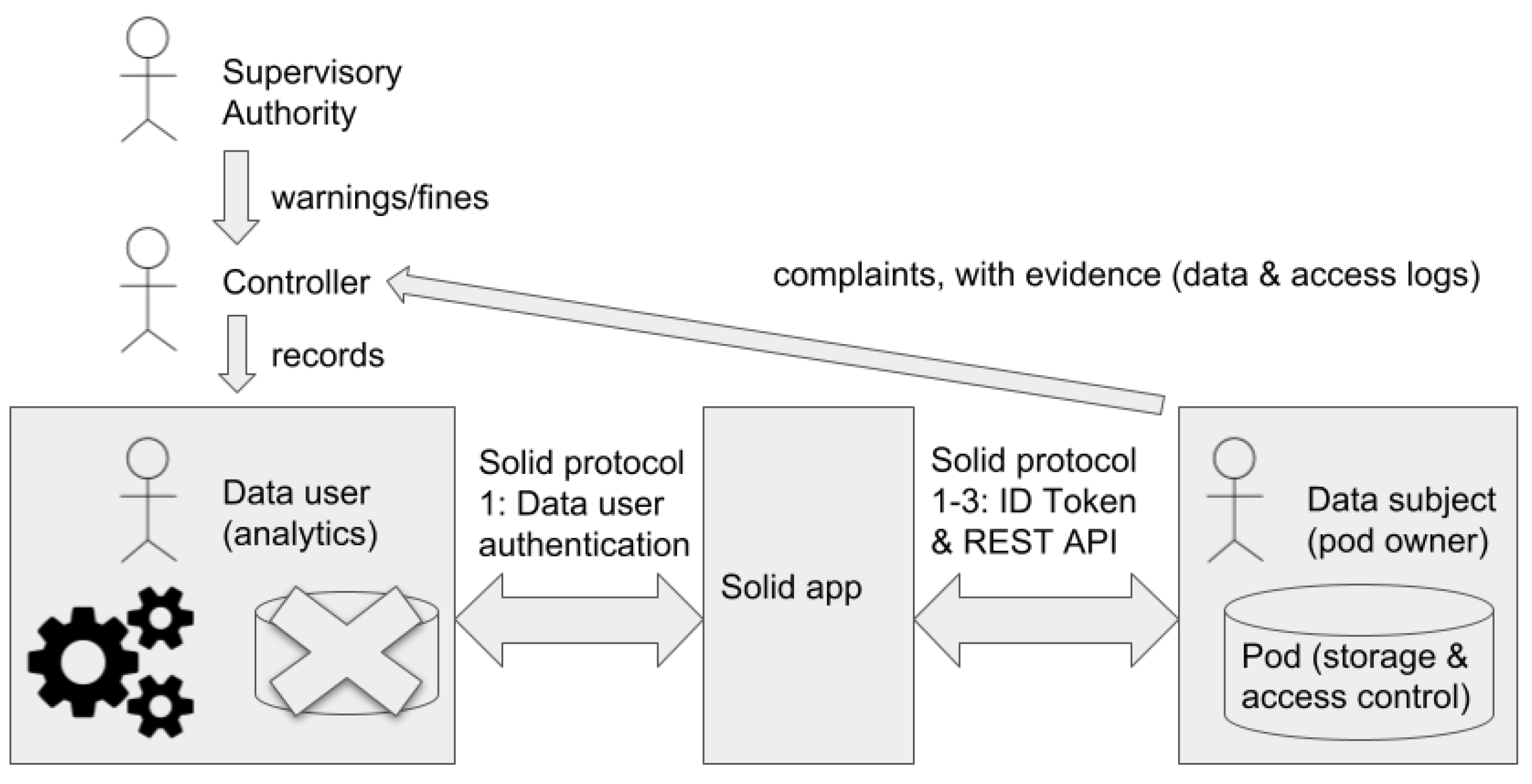

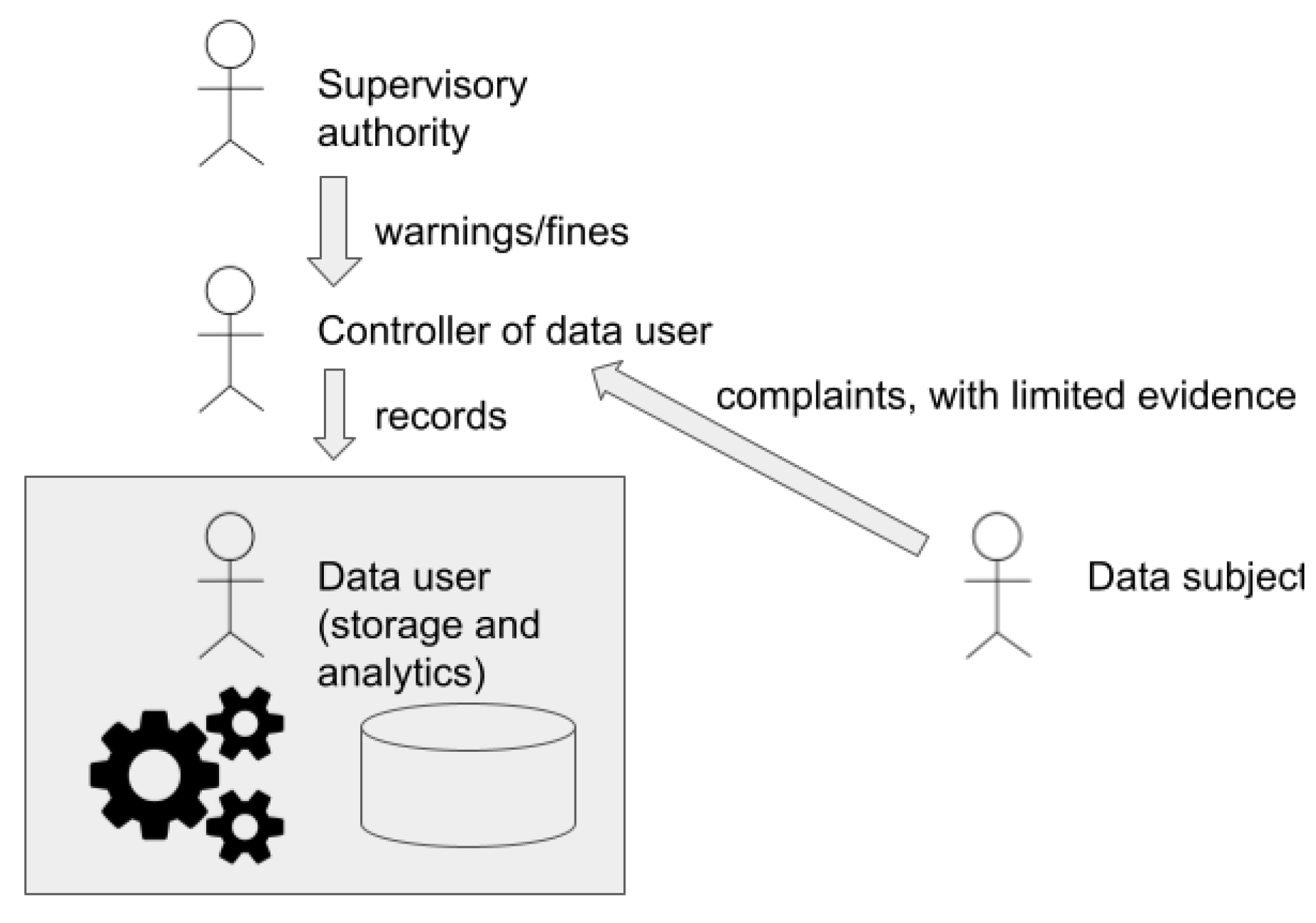

- The identification of a class of Solid-based systems where the data subject is the owner of a Solid pod, and the app or app user is involved in processing activities. We argue why this is the priority scenario where GDPR applies to Solid.
- A mapping between the actors in the Solid ecosystem and concepts in GDPR, drawing attention to the different controllers and their obligations in this distributed system. This mapping is used to extract requirements from GDPR that are grounded in officially approved documents.
- A substantial real-world Solid-based case study to which our mapping and requirements apply, demonstrating that our analysis is of a practical nature.
- A detailed technical security and privacy analysis of the requirements above, in relation to the draft specifications that define the Solid ecosystem at the time of writing. Existing measures to address the requirements in the Solid specifications and ISO standards are discussed.
- The above analysis is used to support the case for novel emerging measures proposed by the authors and in related work. Those measures address security and privacy concerns in the specification at the time of writing, and suggest how to enhance Solid as a tool for facilitating GDPR compliance of actors participating in the Solid ecosystem.

Outline. The paper is structured as follows.

Section 2 provides an overview of the current technical status of the Solid ecosystem, and explains why the scope of this work is restricted to scenarios where pods are used to store personal data about the owner of the pod.

Section 3 maps actors in the Solid ecosystem to roles in GDPR and extracts primary requirements from GDPR and related officially approved documents relevant to Solid. Notably, Section 3.2 describes a real-life case study involving personal health data and Solid in which our mappings are relevant, and indeed required, in order to justify that such systems are GDPR compliant.

Section 4 maps the set of requirements to security and privacy measures in the Solid ecosystem and also assesses emerging proposals by the authors and related researchers to improve coverage of requirements.

Section 5 draws attention to emerging legislation, yet to be officially approved, that may impact Solid in the future.

4. Security and Privacy Assessment of the Solid Protocol

We now consider at a technical level the requirements extracted from GDPR and other officially approved documents in the previous section by explaining how they are reflected in the Solid protocol. Privacy and data protection are closely related, although they do not refer to the same notion. Specifically, under the interpretation that privacy is related to preserving information in its intended context, free from interference or intrusion from outside that context [41], data protection impacts privacy since it concerns the governance of the context in which data may be used. Security is also not synonymous with privacy, despite considerable overlap. Security may be characterised as the well-informed balance between multiple risks and controls [42].

Authoritative bodies such as the International Organisation for Standardisation (ISO) or the National Institute of Standards and Technology (NIST) release guidance and standard practices for security and privacy that, although not legally binding, enable organisations that adopt them to argue in favour of their proactive attitude and best efforts to be compliant according to the state of the art in a certain domain. Most requirements in Table 1 are also reinforced by security standard ISO 27001:2017 [43], except perhaps those specific to personal data, the legal basis and purpose of processing (Req_05), and notification of data breaches (Req_10). Indeed, ISO 27701 [44] (an extension to ISO 27001 and ISO 27002 for privacy information management) presents, in Annex D, a mapping between the introduced ISO controls and those in GDPR. The ISO 27001 standard clearly supports GDPR Article 32 as ISO 27001 defines best practices for mitigating risks within the organisation, while Article 32 indicates that such risks must be taken into account.

The contribution of this section is to present, in Table 2, a systematic assessment of the degree of satisfaction of security and privacy requirements from Table 1 with respect to the current Solid specification and the implementations. We explain to what extent requirements are covered by the Solid specification, and where there are vulnerabilities or potential weaknesses in the system. We identify gaps in the current specifications that may be addressed constructively by evolving the Solid specifications. Some evolutions are under development in related work, while others are proposals of this work. Compromises may be required when requirements are in conflict, e.g., while security demands logs, the data subject has the right to be forgotten.

4.1. Ensure Access to Authorised Users Only (Req_01)

The normative authentication protocol for Solid mentioned in the Solid protocol specification at the time of writing is Solid OpenID Connect (Solid OIDC) [12, 13]. Adopting a flow of OpenID Connect for authentication alleviates some security challenges for Solid apps since they need not handle their own ad-hoc login logic nor store the passwords of users. It also enhances security for users since users need not hold separate identities and passwords across multiple sites, reducing associated risks [45]. The key feature that Solid OIDC brings to OpenID Connect is the use of public key cryptography between the app and issuer. This allows Solid apps with no previous trust relationship with an issuer (and hence no shared symmetric secret such as a password) to make use of that issuer. This is partly enabled by a PKI that maps HTTPS URIs, called WebIDs, to WebID documents containing the public keys of the actors involved, including the app itself. The app must also advertise secure callback endpoints in its WebID document; otherwise, authentication can be hijacked via a man-in-the-middle attack, where an attacker masquerades as an app of their choice and provides their own malicious callback URI to intercept secrets. These constraints are specified in the Solid OIDC specification and primer [12, 46].

OpenID Connect is widely deployed with robust libraries, but there remain vulnerabilities that may be addressed in the specification. We review some vulnerabilities that apply to Solid OIDC, below, and explain how they may be addressed by tightening the specification of Solid OIDC.

Issuer Mix-Up. Some flows of OpenID Connect are known to be vulnerable to issuer mix-up attacks [47, 48], and Solid OIDC is no exception. Recall that in Solid OIDC multiple issuers may be used, some of which may be previously unknown to the app. Even if the relevant Solid app is honest, an attacker may pose as a fake issuer. The attacker can then pretend to be the app in relation to an honest issuer, IdP (Issuer) in Figure 6, which some legitimate user of the app uses to log in, using the password registered by the app in its WebID document, that the honest issuer checks. The issuer then uses a secure callback URI, pre-registered by the honest app, to send a pseudo-randomised code to the honest app (along with the value identifying the user that logged in, but without an issuerID, as emphasised by it being struck out in Figure 6). At this point, the attacker has not yet intercepted the code; however, since the app was confused and originally believed it was talking to the attacker’s fake issuer, the app then tries to exchange the code with the attacker instead of the honest issuer. When exchanging the code, a cryptographic secret session key is also generated by the app (shown in Figure 6), which the app is supposed to be able to use later to prove that it has possession of what is received in exchange for the code. However, since the attacker has intercepted the code, it can instead make up its own secret session key (also shown in Figure 6). This enables the attacker to prove ownership of an ID token which was intended for the honest app but is in the possession of the attacker, at the next authorisation step of Solid OIDC involving the authorisation server of some Solid pod; that is, the attacker can now log in to a Solid pod as if it were the honest app with the honest user logged into it.

This attack can be mitigated by the issuer recording its own identity when the client is redirected back to the Solid app. A standard way of implementing this measure is to add an iss parameter to the authorisation response, as specified in RFC 9207 [49]. To see this, observe that if we introduce the issuerID in Figure 6, as suggested by restoring the field that is struck out, the app will block the attack since it will be able to spot that the fake issuer is not the issuer that responded. Such clarifications are not yet made in the draft specifications of Solid OIDC.
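The check that the app performs on the returned issuer identifier can be sketched as follows. This is a minimal illustration rather than part of any Solid OIDC library: it assumes the identifier arrives as an `iss` query parameter on the callback URI (as in RFC 9207), and the function name `check_issuer` and all URIs are hypothetical.

```python
from urllib.parse import urlparse, parse_qs


def check_issuer(callback_url: str, expected_issuer: str) -> str:
    """Return the authorisation code only if the response names the issuer
    that the app believes it started the flow with (hypothetical helper)."""
    params = parse_qs(urlparse(callback_url).query)
    iss = params.get("iss", [None])[0]
    # A missing or unexpected issuer identifier aborts the flow, so the code
    # is never sent to the token endpoint of a different (fake) issuer.
    if iss != expected_issuer:
        raise ValueError("issuer mismatch: possible mix-up attack")
    code = params.get("code", [None])[0]
    if code is None:
        raise ValueError("no authorisation code in response")
    return code
```

In the mix-up scenario, the app believes it is talking to the fake issuer while the response names the honest one, so the comparison fails and the code is not forwarded to the attacker.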

Further clarifications. We mention two further vulnerabilities known to be relevant to OIDC in general [48]. To protect the credentials of agents from malicious Solid apps attempting to steal passwords, the issuer, having received credentials from a user, must never use an HTTP 307 “TEMPORARY REDIRECT” status code since it replays the credentials in the body of the POST to the Solid app. Instead, the issuer MUST use an HTTP 302 “FOUND” or 303 “SEE OTHER” status code after handling credentials from a user. Indeed, the Solid OIDC draft suggests 302, since the HTTP semantics of 303 can be interpreted by some clients as a permanent change of the location of the issuer rather than a redirect as part of the flow of the protocol. Also, Solid should protect against session hijacking since URIs with external untrusted domains may appear in resources obtained from pods. Solid pods should implement a referrer policy that instructs the browser to strip away the state information from the referrer field of the header when accessing such external URIs.

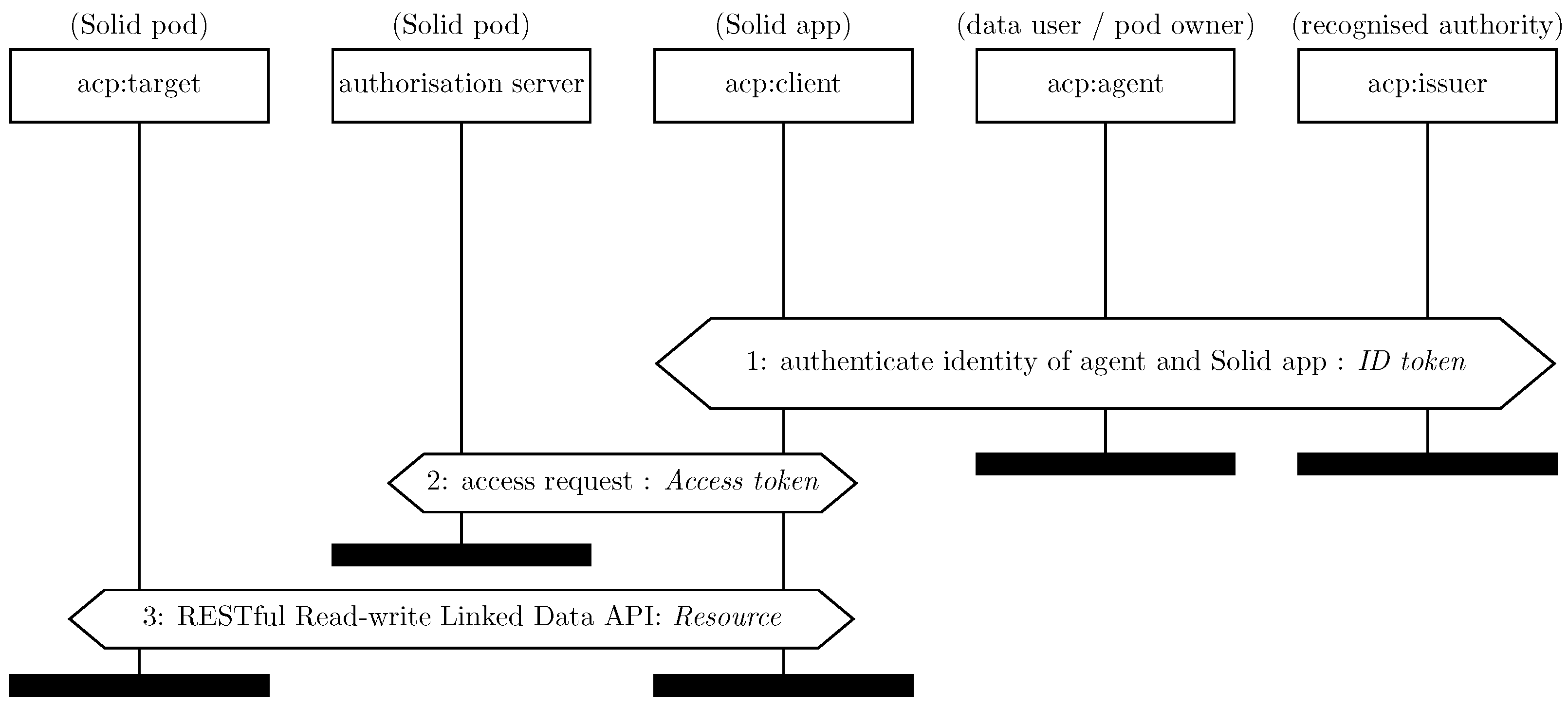

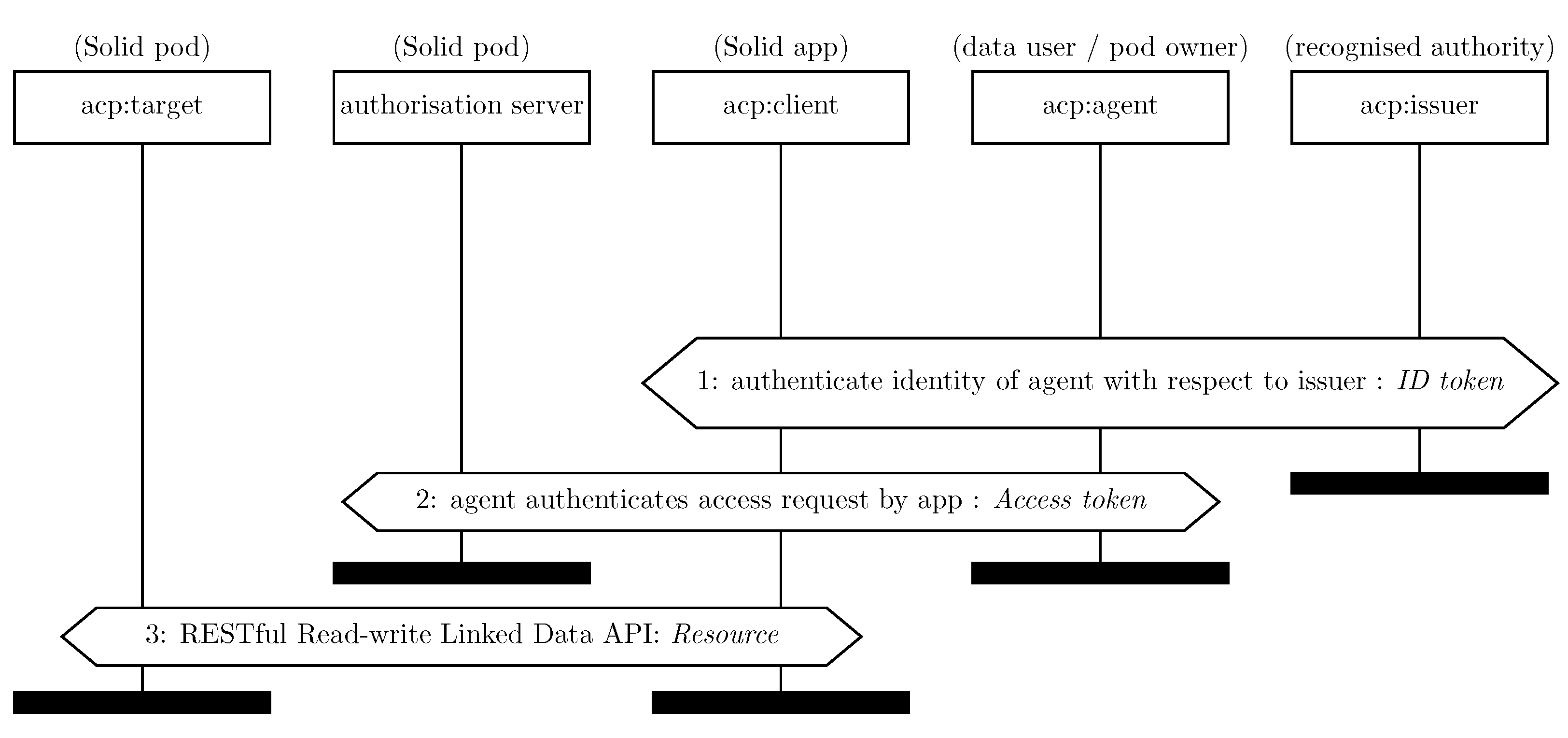

We observe here a security problem concerning the interaction between the authentication protocols such as Solid OIDC and access control mechanisms, present in the current Solid protocol specification at the time of writing. We believe the relationship between actors in the authentication protocols (currently Solid OIDC) and the access control specifications WAC and ACP should be made more explicit to avoid data breaches. In particular, the owner of a Solid pod, defining a policy, may not wish any Solid app with which an agent authenticates to access some resource intended for the agent in some other context. This means that naming only the agent in the access control policy is insufficient, which is currently the case in ACL, as used by WAC. Instead, ACP must be employed whenever an app is used to access a pod via Solid OIDC. Furthermore, the context graph in ACP, referred to by an authorisation graph that describes access control policies, must explicitly indicate that access is granted to a particular Solid app by using the property acp:client. This is not clearly stated in the specification of ACP or Solid. Indeed, the first example provided in the ACP specification at the time of writing indicates only an acp:agent, making it insecure for use with Solid OIDC, as we explain next.

Failing to address the above-mentioned issue enables the following attack vector.

1. An honest agent, say agent1, logs into an honest app, say app1, that is granted read access to a resource, say resource1, in a pod.
2. The authorisation graph indicates acl:agent agent1, in the case of WAC, or provides a context with acp:agent agent1 and does not provide a reference to app1.
3. The same user authenticates with any other app, say app2, that was not intended to receive resource1. That app may be compromised since not all apps may be trusted to be as secure as app1.
4. Since the compromised app is authenticated with an ID token referencing agent1, and since acp:client is absent from the policy of the given resource, the authorisation server protecting resource1 will grant read access to the resource when app2 requests access.
5. The access token issued by the authorisation server to app2 will be valid for app2 to use to retrieve resource1, resulting in a data breach.

One cannot trust all apps to be perfectly secure. A security failure in one app that an agent uses must not result in all the data intended for that agent, across all pods and apps, becoming compromised. The above attack vector must therefore be addressed to avoid data breaches.
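The attack vector above can be illustrated with a toy model of the authorisation decision. The sketch below is not the WAC or ACP matching algorithm; `Policy`, `Request`, and `grants_read` are hypothetical names, and the `client` field merely stands in for the role played by acp:client.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    resource: str
    agent: str
    client: Optional[str] = None  # None models a policy naming only the agent


@dataclass(frozen=True)
class Request:
    resource: str
    agent: str   # agent referenced by the ID token
    client: str  # app referenced by the ID token


def grants_read(policy: Policy, request: Request) -> bool:
    """Toy decision: the agent must match, and the app must match only if
    the policy names one."""
    if policy.resource != request.resource or policy.agent != request.agent:
        return False
    # An agent-only policy accepts every app that the agent logs into.
    return policy.client is None or policy.client == request.client


agent_only = Policy("resource1", "agent1")
agent_and_app = Policy("resource1", "agent1", client="app1")
via_app2 = Request("resource1", "agent1", "app2")

print(grants_read(agent_only, via_app2))     # True: app2 obtains resource1
print(grants_read(agent_and_app, via_app2))  # False: only app1 is accepted
```

Under these assumptions, tying the grant to a specific app is what prevents a compromised app2 from obtaining a resource intended for agent1 in the context of app1.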

The draft concerning Solid Application Interoperability [50] goes some way to address the above by indicating that the subject of an access grant may be an agent and app, indicated as follows.

An authorization subject [snip] is either an Agent [snip], a User-Piloted Application in use by any Agent, or a combination of a specific Agent using a specific User-Piloted Application.

The mechanism could be clarified since there is no explanation of how a combination of both should be indicated (via ACP for example). To address our observations above, we suggest such statements should be strengthened to ensure that a grant towards an agent must be tied to a specific app. There is a community discussion related to this: https://github.com/Solid/web-access-control-spec/issues/81 accessed 13 December 2022.

Going further, the issuer must also be indicated explicitly, to avoid accepting ID tokens where an honest issuer is bypassed entirely, and a malicious issuer manufactures their own ID token for any combination of honest app and user. Even if an authorisation server attempts to mitigate such an attack by looking up the WebID of the agent and checking the list of permitted issuers, there is no guarantee that there is only one issuer, nor that all issuers are equally secure. Neglecting to mention the issuer therefore means that the security of the agent is only as good as the security of the least secure issuer listed by the agent, and is not in the hands of the pod, which may trust one issuer more than another. This is a legitimate concern for Solid OIDC, which permits anyone to become an issuer.

A complementary measure is for the agent using the app to explicitly restrict the scope of an ID token granted to an app as part of the authentication protocol. As it stands, if the specification of the Solid OIDC protocol were to be implemented literally, without further refinements, the scope is limited to a single string “Solid” [12]. Thus, although the identities of the app, the agent, and the issuer are simultaneously authenticated by both the agent and the app, the app is implicitly delegated by the agent to use the resulting ID token to access in any way any resource owned by the agent in any Solid pod. A potential measure is to also include, instead of just “Solid”, a representation of a policy (e.g., an authorisation graph) indicating an explicitly narrower scope for which an agent has delegated access to a Solid app. The scope should be able to narrow down the set of pods, and resources within those pods, that the Solid app is approved to access on behalf of the user. To implement this, the authorisation server should only grant an operation for a resource if the policy indicated in the ID token also grants permission. This can help avert data breaches, and hence is pertinent with respect to GDPR from the perspective of any data user logging into a Solid app, who should be able to set appropriate policies themselves to avoid such breaches, and not depend exclusively on the owner of pods.
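The proposed rule, that an operation is granted only if both the pod's policy and the policy carried in the ID token permit it, amounts to a conjunction of two checks. The sketch below models this under simplifying assumptions: the token scope is represented as a set of (resource, mode) pairs rather than an authorisation graph, and `TokenScope` and `authorise` are hypothetical names that do not appear in Solid OIDC.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class TokenScope:
    # (resource URI, access mode) pairs the agent delegated to the app at login
    allowed: FrozenSet[Tuple[str, str]]


def authorise(pod_policy_allows: bool, scope: TokenScope,
              resource: str, mode: str) -> bool:
    """Grant only if the pod's own policy and the token-scoped policy agree."""
    return pod_policy_allows and (resource, mode) in scope.allowed


scope = TokenScope(frozenset({("https://pod.example/survey.ttl", "read")}))

# Within the delegated scope and permitted by the pod: granted.
print(authorise(True, scope, "https://pod.example/survey.ttl", "read"))
# Permitted by the pod but outside the delegated scope: refused.
print(authorise(True, scope, "https://pod.example/health.ttl", "read"))
```

The second call shows the intended effect: even when the pod owner's policy would allow the access, the narrower scope set by the data user at login prevents the app from reaching resources it was never delegated.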

4.2. Effective Use of Cryptography (Req_02)

In the Solid specification, cryptography is mandated via the use of HTTPS in a RESTful API for transmitting private data between data pods and authenticated and authorised clients and as part of the authentication protocol (Req_01). The use of HTTPS, in a RESTful API for sharing private data between data pods and authenticated/authorised clients, does not necessarily guarantee privacy; hence, we examine how effectively HTTPS is employed in the current Solid specifications. We focus here on which aspects of the RESTful API of Solid should be addressed due to privacy issues.

Regarding effective cryptography beyond HTTPS, GDPR stipulates cryptography can be used to improve trust in the access logs, which is not currently covered by the Solid protocol (see also Req_04). Moreover, Solid does not mandate the use of cryptography when storing data in pods (see also Req_03).

We illustrate profiling vulnerabilities here using a running example. Consider a scenario where a data subject makes resources (e.g., a health record) available to an agent (e.g., a doctor). In addition, to avoid profiling, the data subject would prefer that not even the existence of the resource should be revealed to third parties. Suppose that the resource in the pod is made available via https://john.provider.net/vaccinationdata.ttl. There is then a crude but effective profiling attack, impacting privacy, where the attacker poses as an app trying to access a resource and observes from the HTTP response whether a resource exists. Suppose that 404 “NOT FOUND” is the response for resources that do not exist, and 401 “UNAUTHORIZED” is used when the app has not yet been authenticated to use a resource. For pods implemented in this way, an attacker can determine that a data subject has a vaccination record even though the attacker has no access to the record, thereby violating privacy. Note that, at the time of writing, the Solid protocol specification indeed states, “when a POST method request targets a resource without an existing representation, the server MUST respond with the 404 status code”.

A resolution to the privacy problem identified above is for pods to respond with 401 “UNAUTHORIZED” whether or not the resource exists. That is, a pod should never respond with a 404 “NOT FOUND” even if a resource does not have an existing representation, and should instead respond to such a request with a dummy 401 “UNAUTHORIZED”, carrying a dummy token in its header, that is indistinguishable from a genuine 401. This way, pods remain compatible with the current flow of Solid OIDC, which makes use of the handle provided in a 401 “UNAUTHORIZED” response to indicate which resource needs to be accessed. It is important to make this resolution explicit for functionality reasons, so that apps take into account that a 401 could also mean that the resource does not exist. The existence of a resource can only be known by prior knowledge, e.g., by the data subject informing the data user, or by authenticating with valid credentials according to the policy of the resource. Non-existence cannot be determined, not even by attempting to authorise using incorrect credentials and observing the response.
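A minimal sketch of this behaviour follows. It is an illustration under stated assumptions rather than an excerpt from any pod implementation: the header name and its `ticket` value are placeholders for whatever handle a real 401 would carry, and `respond` and `unauthorised` are hypothetical names.

```python
import secrets
from typing import Dict, Set, Tuple


def unauthorised() -> Tuple[int, Dict[str, str]]:
    """Build a 401 whose shape does not depend on whether the resource exists."""
    ticket = secrets.token_urlsafe(16)  # fresh random handle of fixed length
    return 401, {"WWW-Authenticate": f'UMA ticket="{ticket}"'}


def respond(store: Dict[str, str], readable: Set[str],
            path: str) -> Tuple[int, Dict[str, str]]:
    """Answer a read request for the given path.

    store    maps the paths of existing resources to their content
    readable holds the paths the authenticated requester may read
    """
    if path in store and path in readable:
        return 200, {"Content-Type": "text/turtle"}
    # Missing and protected resources take the same branch, so the status
    # code and header structure reveal nothing about existence.
    return unauthorised()
```

A requester without access receives a response of the same status and header shape for `/vaccinationdata.ttl` whether or not it exists. The sketch only equalises the visible response; differences in response time would need to be addressed separately.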

Another issue is that the specification permits HTTP URIs. HTTP URIs are redirected to their HTTPS counterparts using a 301 “MOVED PERMANENTLY” status code and a location header. The specification states that “a data pod SHOULD use TLS connections through the HTTPS URI scheme to secure the communication between clients and servers.” However, “SHOULD” should be upgraded to “MUST” since the protocol for handling HTTP URIs reveals the URI that is being requested by honest clients to any eavesdropper. An eavesdropper may further exploit the HTTP URI by injecting their own malicious payload in place of the 301 returned by the honest server. Therefore, the above line should be erased from the specification, or, better still, all HTTP URIs must return a uniform error, such as 501 “NOT IMPLEMENTED”. To counter the argument that such status codes may impede users who habitually enter http: into their browser, note that it is Solid apps that access pods, and not naïve end users, and hence Solid pods can be expected to implement appropriate API usage.
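The suggested handling of plain HTTP URIs can be sketched as a single scheme check that returns the same error for every such request, with no Location header pointing at an HTTPS counterpart. The function name `plaintext_rejection` is hypothetical, and 501 is used only because it is the example status code given above.

```python
from typing import Optional
from urllib.parse import urlsplit


def plaintext_rejection(request_uri: str) -> Optional[int]:
    """Return a uniform error status for any http: URI, or None for other
    schemes, in which case normal request handling continues."""
    if urlsplit(request_uri).scheme == "http":
        # Same answer regardless of path: no redirect and no Location header.
        return 501
    return None
```

Because the answer does not depend on the path, the response itself carries no information about which resources exist, and there is no 301 for an eavesdropper to replace with a malicious payload.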

Even with the above issues addressed and effective cryptography in place, HTTPS traffic between pods and apps can reveal significant information. This is because an external attacker may nonetheless infer information about the resources accessed. An attacker can trivially infer the fact that john.provider.net is contacted by a particular app, hence the domain should not reveal the name of the data subject as a sub-domain (as is the case for Inrupt for example). To reinforce this, also consider that a man in the middle knows the following: the length of the URL being accessed, rounded up to the nearest block length in the cipher, say 128 bits; the length of the resource in the response; and the response time between initiation of the session and termination of the session, from which behaviours may be inferred. Indeed, in reasonably busy applications, such as those with a dynamic Ajax API, such information has been shown to reveal fine-grained information, such as keywords being typed [51]. Even coarse-grained information inferred in this way can be used to trace behaviours profiling a data subject. For instance, if a data subject is a candidate in an election, activity concerning their data may reveal information about their popularity without consent. Avoiding such profiling attacks would require radical steps that perhaps obfuscate the above information. We expect, for now, such measures are out of the scope of the state of the art and resources available to pod providers. The Solid protocol specification at the time of writing mentions protecting against timing attacks, without being specific.

4.5. Identification of Purpose and Legal Basis (Req_05)

The Solid protocol suggests that users have control of their data by directly managing WAC and ACP. The intention is that data subjects, who store their data in their own pod, can determine for themselves whether an entity requesting to access the data has a legal basis and purpose to use the data in a particular way. The limitations of such arguments are that, firstly, manipulating WAC and ACP is too low-level for most users, and secondly, the relevant legal information is inferred from the external context, independently of Solid.

As mentioned in Section 3.3.6, controllers are obliged to record specific information related to processing activities. For large organisations and public entities, the controller assigns a DPO for whom recording such processing activities is one of their formal duties. For example, the DPO of a university records in an internal information system the purpose and legal basis for processing activities related to each ongoing research project involving personal data. The question here is which agents in the Solid ecosystem are responsible for identifying the purpose and legal basis for processing personal data. The majority of data subjects will not have the legal expertise to make a judgement about what purpose and legal basis applies in a scenario. Ultimately, the responsibility for identifying the purpose and the legal basis lies with the controller responsible for the data user logging into an app, or the controller responsible for the app itself, depending on the use case. Thus, responsibility for a failure to record the correct purpose or legal basis for access by a data user at the time of each authorisation request lies with their controller, whether or not the data user correctly followed the guidelines recorded by their controller. Consequently, if a pod requires that the data user indicates their legal basis at the time of an authorisation request, the data subject has stronger grounds for holding the data user accountable, via their controller, in the case of a dispute.

Technical measures to address this requirement currently being explored include extending ACP to take into account for what purpose the personal data of a data subject is being processed [52, 53, 54, 55]; that is, an access control policy in ACP should record explicitly the legal basis under which agents and apps are granted access to a resource. The purpose can also be recorded in specific accesses granted, and if an access control log is retained, recording the history of accesses granted, then we obtain a history of the purposes and legal basis associated with each grant. Such steps are “encouraged” by GDPR (cf. Recital 100), “allowing data subjects to quickly assess the level of data protection of relevant products and services”.

In addition to recording the policy in the authorisation server of the pod, authorisation requests from Solid apps can also record the reason why the access is requested by making the purpose explicit in the scope of the ID token agreed upon with the agent logging in. This explains upfront how data users will process the data. The Data Protection Vocabulary (DPV) is a key step towards presenting such structured information so that the purpose may be taken into account by automated agents [56]. For example, personal data accessed may be used once in a computation to find the percentage of recovered COVID patients in a community, without revealing an individual’s health status. For data retention purposes, which is another requirement of GDPR, it would be beneficial if the policy stipulated whether the data must be destroyed after use by the Solid app, or whether the data is permitted to be used only within a particular time window.
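The two retention options mentioned above (destroy after use, or use only within a time window) can be sketched as a simple check. This is an illustrative sketch only; `RetentionTerms` and `may_still_hold` are hypothetical names and are not part of ACP or any Solid specification.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class RetentionTerms:
    """Hypothetical retention terms attached to a policy."""
    destroy_after_use: bool
    window: Optional[timedelta]  # None: no time window is stipulated


def may_still_hold(terms: RetentionTerms, granted_at: datetime,
                   now: datetime, use_completed: bool) -> bool:
    """Return True if, under the stated terms, the app may still hold the data."""
    if terms.destroy_after_use and use_completed:
        return False
    if terms.window is not None and now > granted_at + terms.window:
        return False
    return True
```

A check of this kind could only be enforced by the app itself or audited after the fact; the pod has no technical means of verifying that a copy was destroyed.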

There are several possible mappings from controllers to concepts in Solid, as discussed in Section 3.1. For example, it is natural for controllers responsible for multiple data users (e.g., their employees) to act as an issuer in authentication protocols, as discussed in Section 4.1. Since the WebID of the controller would then become cryptographically tied to the ID token, generic information that must be recorded, such as the contact details of the relevant DPO, can be recorded in the WebID. Similarly, if the processing activities of the app itself are of concern, the WebID of the app can record contact details of the relevant controller, and that WebID becomes cryptographically tied to a particular access. Since controllers can delegate some processing activities to processors that act on their behalf, but they remain responsible for them according to GDPR, authentication protocols could be adapted to provide the means for delegation of access to a processor (e.g., when a controller delegates part of its processing to a privacy-preserving service operated by another organisation). Authenticating chains of delegation is currently out of the scope of ACP and Solid OIDC, and would require more clarification than just making use of the acl:delegates term since such a pattern should be reflected in the authentication protocol.

4.6. Access Logs Recorded as Evidence of Policies and Accesses Granted (Req_06)

We now focus on access logs relevant to GDPR in the sense explained in Section 3.3.3. Access logs concern accesses to the pod of a data subject by data users, and the corresponding authorisation requests that established the policy under which a particular access was granted. Records in logs of authorisation servers and Solid apps can build on ACP [21], which can describe access policies and grants recording, for example, the user, app, and issuer used to log in; the resources accessed; operations granted; and the agreed terms of the policy granting access (see Req_04). Logs should also be retained by issuers to record the app, user, and scope of ID tokens approved.

To back up log entries with evidence, there are several aspects of each successful access that can also be logged by various actors in the Solid ecosystem. Assuming Solid OIDC is employed, the following cryptographic evidence is generated for each successful access request.

The Solid app can log instances of issuance of an ID token as cryptographic proof of access being granted by the user via an issuer.

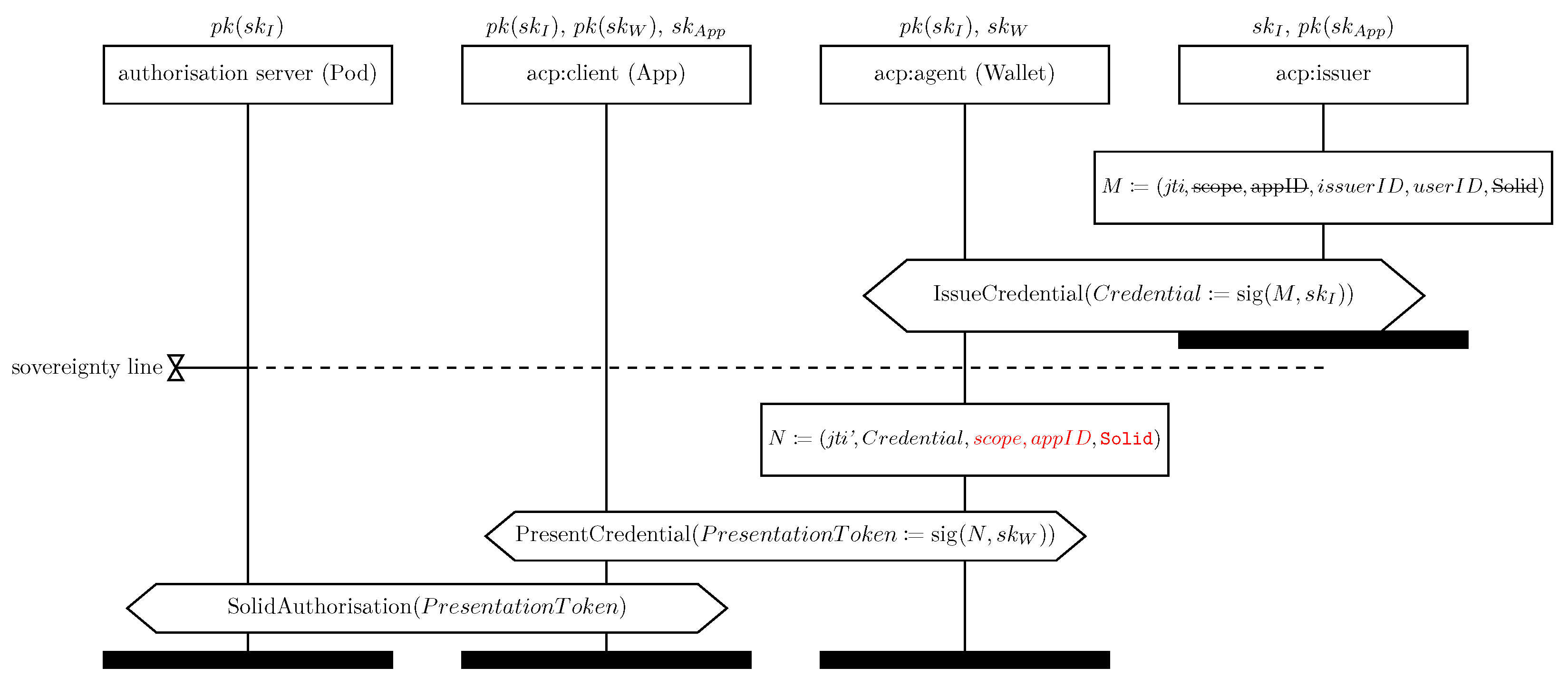

The issuer can record evidence of their contribution to authentication, e.g., ID tokens they have issued in Solid OpenID Connect (or the verifiable credential they issue in VC protocols).

The authorisation server can log cryptographic proof of access grants via the ID token and DPoP token used in the authorisation process, where the DPoP token contains context information about the scope of the access requested, notably the URI and method and a hash of a public session key, which cryptographically proves possession, by the app, of the ID token. The ID token cryptographically asserts the user, app, and issuer. The authorisation server may also log access tokens issued.

The resource server can log access tokens used to access a resource.
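As an illustration of the key hash mentioned for the authorisation server, the sketch below computes a JWK SHA-256 thumbprint in the style of RFC 7638, which is the form DPoP (RFC 9449) uses for its `jkt` confirmation value. It then compares logged evidence with the request context. The function `evidence_matches` and its parameters are hypothetical, and the sketch deliberately omits signature verification, which a real verifier must perform with a JOSE library.

```python
import base64
import hashlib
import json

# Members that enter the thumbprint for each key type (RFC 7638).
_REQUIRED_MEMBERS = {
    "EC": ("crv", "kty", "x", "y"),
    "RSA": ("e", "kty", "n"),
}


def jwk_thumbprint(jwk: dict) -> str:
    """SHA-256 thumbprint of a public JWK, base64url-encoded without padding."""
    members = {name: jwk[name] for name in _REQUIRED_MEMBERS[jwk["kty"]]}
    # Required members only, keys in lexicographic order, no whitespace.
    canonical = json.dumps(members, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def evidence_matches(logged_jkt: str, proof_jwk: dict, proof_htm: str,
                     proof_htu: str, method: str, uri: str) -> bool:
    """Check that logged evidence is consistent with the request context:
    the key hash bound to the token equals the thumbprint of the key in the
    DPoP proof, and the proof names the same HTTP method and URI.
    Signature checks are out of scope of this sketch."""
    return (logged_jkt == jwk_thumbprint(proof_jwk)
            and proof_htm == method
            and proof_htu == uri)
```

Because only the required members enter the hash, optional members such as `kid` and the ordering of members in the JWK do not affect the thumbprint.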

The formal property that we rely on for the above evidence to be trustworthy, so that logs can be used to assert that the agents involved really approved an access, is known as non-repudiation [57]; that is, when some agent presents their logged evidence, the other agents involved in the protocol cannot deny that they were involved in their part of approving the access under the terms agreed.

What is notable about the above observations is that data users, who are subject to GDPR as controllers or processors, do not directly obtain cryptographic evidence of their accesses by virtue of participating in the Solid OIDC flow, according to the current protocol specifications. This suggests that, when the Solid OIDC flow is employed, data users are reliant on the logs retained by the apps, pods, and issuers that they use for cryptographic proof of access. The current flow proposed for Solid OIDC therefore requires the data user to trust one or more of these actors to properly handle accesses. Without relying on other actors, the data user may only record informally, without cryptographic assertion, their attempts to log in to a particular app with a particular issuer and scope, in a personal ledger external to the Solid ecosystem. This is of course consistent with GDPR since trust in the logs may be inferred from the trustworthiness of the controller responsible for the data user; however, in legal disputes that bring into question the integrity of the data user, trust is enhanced if the data user can provide non-repudiable cryptographic proof to back up log entries.

In order to improve trust in the logs of data users, independently of an app they log into, a potential future solution is to support authentication flows in which the data user is involved directly in signing ID tokens (we further cover VC protocols with this property in Section 4.8). Going further, in order for a data user to observe accesses made by an app on their behalf, the data user may be involved in signing authorisation requests initiated by the app, where access is granted to a resource, as suggested in Figure 7. Thereby, each party receives cryptographic evidence of the agreement between the authorisation server, app, and data user, as part of the protocol, which they can independently log. If designed appropriately, such a flow can also provide an alternative resolution for some of the authentication concerns in Section 4.1 since the app is forced to check with the data user for each request to the authorisation server, permitting the data user to verify the access against their own policy for how the app may access data on behalf of the data user. This flow could be preferred by a data user if the data user does not fully trust the app to retain evidence and access logs (or indeed if the data user wishes to restrict the app). We are not aware of any such flow proposed for Solid.

Another notable observation concerning the availability of authenticated cryptographic evidence is that nothing in the Solid specifications cryptographically ties the access token used to access a resource via a resource server to the particular DPoP token with which access to the resource was requested from the authorisation server. Hence, the authorisation server must be trusted to correctly assert the pairing of access token and resource access instance if it is required to provide cryptographic evidence of fine-grained access information. Specifically, the resource server may record that an access was made by an app to a particular resource with a given operation at a particular moment, and not just that the access was granted. Since the form of access tokens is currently not specified, the resource server must be trusted in order to believe that an access token was indeed used to access a particular resource, if the specific operations performed by an app are challenged. This means that access grants logged by the authorisation server currently contain the most pertinent trustworthy information about access grants, rather than the accesses to the resource server itself. Thus, currently, with authorisation logs retained, one can prove that a user was allowed to access a resource but not that they did access it, which could be problematic legally, for instance regarding conflicts of interest.

Recall that ACP separates specific instances of a grant (access grant graphs) from the policy (the authorisation graph). It is therefore appropriate to retain two separate logs for the authorisation server, where the log containing policy entries (authorisation graphs) can be used to provide explanations of each instance of an access grant under some policy. Having the policies logged separately would permit replaying past access grant requests to explain not only currently granted accesses, but also patterns of access in the past, which could be useful for explaining abuses of contractual agreements. An alternative to retaining the policy could be to rely on relevant policy information being recorded in each access grant graph, which has the advantage that less information about a policy need be leaked if the policy employed is of a general nature that pertains to a broader context than requested, e.g., if the policy encompasses conditions under which other data users may access the data.
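The idea of replaying a past access grant request against a separately retained policy log can be sketched as follows. The sketch is a simplification made for illustration: `PolicyEntry`, `PolicyLog`, and `replay` are hypothetical names, a policy is reduced to sets of agents and access modes, and each logged policy is assumed to stay in force until the next entry replaces it.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import datetime
from typing import FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class PolicyEntry:
    """One logged version of a policy (a stand-in for an authorisation graph)."""
    in_force_from: datetime
    allowed_agents: FrozenSet[str]
    allowed_modes: FrozenSet[str]


class PolicyLog:
    """Append-only log of policy versions, kept in chronological order."""

    def __init__(self) -> None:
        self._entries: List[PolicyEntry] = []

    def append(self, entry: PolicyEntry) -> None:
        if self._entries and entry.in_force_from < self._entries[-1].in_force_from:
            raise ValueError("policy entries must be appended chronologically")
        self._entries.append(entry)

    def in_force_at(self, when: datetime) -> Optional[PolicyEntry]:
        """Latest policy version whose start time is not after `when`."""
        starts = [e.in_force_from for e in self._entries]
        index = bisect_right(starts, when)
        return self._entries[index - 1] if index else None


def replay(policy_log: PolicyLog, agent: str, mode: str,
           when: datetime) -> Tuple[bool, Optional[PolicyEntry]]:
    """Re-evaluate a past request against the policy that was in force then,
    returning the decision together with the policy that explains it."""
    policy = policy_log.in_force_at(when)
    permitted = (policy is not None
                 and agent in policy.allowed_agents
                 and mode in policy.allowed_modes)
    return permitted, policy
```

Comparing the replayed decision with the entry in the access grant log shows whether a past grant was consistent with the policy of its time.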

ACP can be extended with features that can help usage control. At a basic level, ACP does not currently specify how timing information is recorded, which would be included in access logs, but would be better still built into policies, as we explain. For policies, the invocation timestamp and expiry of a general policy can be useful to limit processing activities and for logging purposes. Also, recording the duration of instances permitted by a policy allows the expiry of each access grant instance to be set automatically in the resulting access grant graph. Thus access instances, recorded by access grant graphs, should record their time validity and revocation conditions. As with other policy information, this should also be authenticated via the authentication protocol employed, e.g., backed up cryptographically via appropriate timestamps in ID tokens and DPoP tokens. Going further, the access token issued by an authorisation server may be stateful and build in a particular control of usage, prescribing a process with specific URIs that may be accessed and operations that may be performed on them in a particular order.
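A minimal sketch of these two ideas follows: an access grant whose expiry is derived from the policy, and a stateful grant that only permits a prescribed sequence of operations. Neither is specified by ACP or Solid. The names `StatefulGrant` and `issue` are hypothetical, and taking the earlier of the instance expiry and the policy expiry is an assumption of this sketch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Tuple

Step = Tuple[str, str]  # (HTTP method, resource URI)


@dataclass
class StatefulGrant:
    """Hypothetical access grant with time validity, a revocation flag,
    and an ordered list of permitted operations."""
    expires_at: datetime
    steps: Tuple[Step, ...]
    revoked: bool = False
    position: int = 0

    def permit(self, method: str, uri: str, now: datetime) -> bool:
        """Allow the request only if the grant is still valid and the
        request is the next step in the prescribed order."""
        if self.revoked or now >= self.expires_at:
            return False
        if self.position >= len(self.steps):
            return False
        if self.steps[self.position] != (method, uri):
            return False
        self.position += 1
        return True


def issue(policy_expiry: datetime, instance_duration: timedelta,
          steps: Iterable[Step], now: datetime) -> StatefulGrant:
    """Set the expiry of a grant instance automatically from the policy:
    the instance duration applies, capped by the expiry of the policy."""
    expires_at = min(now + instance_duration, policy_expiry)
    return StatefulGrant(expires_at, tuple(steps))
```

A request that arrives out of order is refused without advancing the state, so the prescribed step can still be performed afterwards.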

By logging policies, non-compliance with obligations in such policies can be detected [58, 59]; however, proposals related to usage control are not yet incorporated into Solid specifications. Ensuring policies are logged caters for such future extensions to Solid, in addition to improving accountability in the short term.

As mentioned at the beginning of this section, logs should ideally be supported cryptographically, and Solid OIDC provides some, but not all, of the information required. Solid OIDC was never designed with the production of non-repudiable cryptographic evidence for logs in mind. Thus, the observations we make are a creative step to leverage the existing technology to support logging. A better solution would be to design the authentication protocols and the certificates they produce with the logging considerations we have mentioned in mind.

An alternative to producing trusted cryptographic evidence for logging is to use access logs that simply assert the relevant context graph, and for the access logs to be governed by a trusted third party. The obvious incarnation of this is that the authorisation server may be governed by a pod provider independently of the pod owner, meaning that logs presented as evidence of an access by the authorisation server can be trusted independently of claims made by the pod owner or data user. This assumes that the pod provider is legally external to the dispute and makes use of a trusted solution such as an encrypted database that they do not access themselves (in particular, the pod cannot be self-hosted by the pod owner). Whether using cryptography or a trusted third party, either approach to tamper-proofing access logs should guard against scenarios where a malicious data subject aims to incriminate a data user by falsely claiming that they accessed the system in violation of an agreed purpose; hence, the data subject owning the pod should not be able to forge a proof of an access that did not occur.
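One common way to make an append-only log tamper-evident is a hash chain, sketched below. This technique is not proposed in the Solid specifications and is offered here only as an illustration; the function names and the entry format are assumptions. A hash chain by itself does not prove who created an entry, and whoever holds the whole log can recompute the chain, so the latest hash (the head) must be held or countersigned by a party other than the log keeper, such as the trusted third party discussed above.

```python
import hashlib
import json
from typing import List, Optional

GENESIS = "0" * 64  # placeholder predecessor for the first entry


def entry_hash(prev_hash: str, entry: dict) -> str:
    """Hash an entry together with the hash of its predecessor."""
    payload = json.dumps({"prev": prev_hash, "entry": entry},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: List[dict], entry: dict) -> str:
    """Append an entry and return the new head of the chain."""
    prev = log[-1]["hash"] if log else GENESIS
    head = entry_hash(prev, entry)
    log.append({"entry": entry, "hash": head})
    return head


def verify(log: List[dict], expected_head: Optional[str] = None) -> bool:
    """Recompute the chain; optionally compare the result with a head
    that was deposited with an independent party."""
    prev = GENESIS
    for item in log:
        if item["hash"] != entry_hash(prev, item["entry"]):
            return False
        prev = item["hash"]
    return expected_head is None or prev == expected_head
```

With a deposited head, editing an earlier entry is detected even if the log keeper rewrites every later hash, because the recomputed head no longer matches the deposited one.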

Related work by Pandit [60] on GDPR and Solid also emphasises the need for different types of logs to be retained by different actors in the Solid ecosystem. Pandit correctly points out that the Solid interoperability draft [50] does introduce the notion of an access receipt that an agent can provide to another agent. This may be useful, for example, for presenting evidence of access to users. However, as observed by Pandit, the form of access receipts is not specified. Going further, we add that just providing a receipt as a message does not mean the receipt can be trusted; thus, such a receipt should be part of the authentication protocol, and properties such as non-repudiation of the receipt should be verified if the receipt is to be trusted as proof in the relevant contexts.

4.9. Protocols Facilitating the Rights of Data Subjects (Req_09)

Article 20 enshrines the right to data portability, allowing the data subject to obtain their personal data in a structured format. Thus, Article 20 is catered for by using Solid to store personal data, as in the use cases we focus on throughout this paper.

Articles 16–18 of GDPR enshrine the right of data subjects to rectify their personal data, erase their data entirely, or restrict their usage for data processing. By Article 19, the controller has the responsibility to notify the data subject of compliance with such requests. We discuss here how such rights may be supported by an additional protocol layer involving the controller and the pod.

In Solid, one may argue that since data subjects store their personal data in their own pod and control access, they have direct control of these processes. In the current version of Solid, the data subject always has full control of the data in their own pod, which means they are always able to write, modify, and remove data elements and files in their pods. A limitation with such an argument is that there are scenarios where erasing the data stored in the pod does not mean that the data is erased by data users connected to the pod since the data user also retains a copy, and the data in the pod is a courtesy to the pod owner for transparency purposes and to leverage Solid to facilitate compliance. Going further, there are scenarios where a contractual agreement does not allow the data subject to modify, remove, or restrict data stored in their own pod freely, even if they have full power to inspect the data, access control policies, and logs. One such scenario may be if data is required for billing purposes or medical records. In other scenarios, data subjects may prefer to permit management of part of their personal data to be handled most of the time by the relevant data user since they may lack the expertise or time to understand their data and modify them correctly.

In any of the above-mentioned situations, we propose that a protocol involving the data subject owning the pod and the controller responsible for data users would facilitate compliance with Article 19. Such a protocol would notify the controller about requests to erase (via an app with suitable access to the data concerned, for example), in order to facilitate the erasure of personal data across multiple locations, not only the pod. Such a protocol would also enable the controller to explain why certain requests for erasure may not be possible. This is likely an additional protocol layer built on top of the existing Solid specifications, rather than an enhancement of the existing REST operations.
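The shape of such a notification layer might look like the sketch below. No such protocol exists in the Solid specifications; the message types `ErasureRequest` and `ErasureOutcome`, the handler `handle_erasure_request`, and the representation of retention obligations as a mapping from resource to stated reason are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set, Tuple


@dataclass(frozen=True)
class ErasureRequest:
    """Hypothetical message sent on behalf of the data subject to a controller."""
    data_subject: str           # WebID of the data subject
    resources: Tuple[str, ...]  # resources whose copies should be erased


@dataclass(frozen=True)
class ErasureOutcome:
    """Hypothetical per-resource reply from the controller."""
    resource: str
    erased: bool
    reason: Optional[str]       # explanation when erasure is refused


def handle_erasure_request(request: ErasureRequest,
                           held_copies: Set[str],
                           retention_obligations: Dict[str, str]
                           ) -> List[ErasureOutcome]:
    """Controller-side handling: erase copies held outside the pod where
    possible, and explain each refusal (e.g., data needed for billing)."""
    outcomes = []
    for resource in request.resources:
        if resource in retention_obligations:
            reason = retention_obligations[resource]
            outcomes.append(ErasureOutcome(resource, False, reason))
        else:
            held_copies.discard(resource)  # no error if no copy was held
            outcomes.append(ErasureOutcome(resource, True, None))
    return outcomes
```

Returning one outcome per resource lets the data subject see which copies were erased and why any request was refused, which is the explanation the paragraph above calls for.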

Similarly to erasure, the right to restrict processing may only be triggered via Article 19 and not unilaterally by the data subject without notification of the request to change the purpose of processing. It would be incorrect just to change the purpose of processing in the access control policies of the pod unilaterally.

Article 21 ensures a data subject may always challenge processing activities. This is partly catered for by Solid, in that there is transparency about the data stored and the accesses, which can be used by the data subject to substantiate an objection. For example, it was reported (https://www.dublineconomy.ie/insights/american-football-game-linked-to-us-tourist-spending-surge-in-dublin-17703/ accessed on 8 December 2022) that Mastercard partnered with Dublin Council to process personal data on payments during sporting events hosted by the city. If someone was in Dublin during such events, they may be reassured by the fact that anonymisation was applied, but alternatively, they may wish to exercise their right not to be subject to such processing, or even question whether there was sufficient legal basis. In such scenarios, if the personal data held and the accesses to that data made by the organisation involved were made transparent by logging them through Solid, then resolutions according to the preferences of data subjects can be facilitated. Therefore, this right could be facilitated by agreeing on protocols for notifying a relevant controller about specific objections, and by the use of cryptography to ensure tamper-proof logs. Such a protocol should be able to produce evidence to back up a request from the data subject to the controller. This includes evidence recorded concerning accesses and the purpose of accesses extracted from access logs of entities wishing to comply, such as an authorisation server retaining logs on behalf of the data subject, as discussed in Section 4.6.

Similar provisions may be built into Solid to facilitate the right enshrined in Article 22, concerning the right not to be subject to decisions based solely on automated processing. Article 22 is, of course, related to emerging regulation concerning the ethical use of AI.

5. Open Issues and Challenges

Besides the open technical challenges highlighted in the previous section concerning the evolution of the Solid specifications in order to improve GDPR compliance and tool support, there is also the issue of keeping up with emerging legislative initiatives. We present here some emerging legislation that we expect to impact Solid. We leave a more detailed analysis as future work, to be carried out once the legislation is approved and its content is precisely known, since for some of these initiatives only drafts are circulating.

First of all, the topic of data protection in the EU has been approached using a pair of legal tools: a so-called lex generalis, issuing a set of generic and universal rights and obligations, and a lex specialis, overriding the general provisions and issuing specific norms with respect to a particular context. The relevant lex generalis is of course GDPR 2016/679, which superseded the Data Protection Directive 95/46/EC (DPD). Connected to them, we have Directive 2002/58/EC on Privacy and Electronic Communications, or ePrivacy Directive (ePD), which has been amended by Directive 2009/136. The ePD is narrow in scope, targeting only the confidentiality of network-enabled communications and the treatment of traffic data, spam, and cookies, and it specialises DPD. Therefore, the EU legislation is incomplete in the sense that a lex specialis counterpart of GDPR has not been approved yet. In the near future, we expect to witness a new regulation superseding the ePD, as GDPR has superseded DPD. This regulation, named the ePrivacy Regulation (ePR), will introduce important novelties that we explain next.

A limitation of ePD is that it addresses traditional telecom operators, and not necessarily other players in the digital society and ICT market, which are subject to GDPR. Furthermore, GDPR obligations are ambiguous regarding how they are applied by specific actors. As evidence, there are ongoing debates on how blockchain, cloud, or IoT can be compliant with GDPR. We expect ePR to address this issue by prescribing that such technologies meet security and privacy requirements comparable to those of telecom operators, who are already subject to ePD. This will be made possible by introducing the concept of data intermediaries that are involved in data processing on behalf of data users, and by specifying the legal obligations of such data intermediaries. We expect Solid pod providers and Solid app providers, for example, to be seen as intermediaries, and hence ePR will likely augment the requirements in this paper. For example, in ePR, consent gathering and handling is expected to be more user-friendly, and hence actors in the Solid ecosystem should gather, log, and manage consent according to this new model.

In addition to these legislative efforts to complete the EU data protection framework, novel legal tools related to cybersecurity and resiliency are emerging. The Cyber Resilience Act (CRA) aims to define common IT security standards for digital products connected to the network (so-called “IoT”) and related services. This will add to the recent NIS 2 Directive 2022/2555 that has been approved to respond to the growing threats posed by digitisation and the wave of cyberattacks. These novel EU legislative initiatives and tools will strengthen and enlarge the set of requirements we have listed in Table 2, for which actors in the Solid ecosystem will need to find solutions. Last but not least, the EU Council has also approved the Data Governance Act (DGA), applicable from 24 September 2023, which has been anticipated by elements of the Open Data Directive (2019/1024, ODD) concerning data altruism aimed at liberalising the data market. DGA aims to support a flourishing European data economy, but does not replace the rights and protections set in place by GDPR. New roles in DGA should be mapped to those in GDPR. Specifically, DGA defines novel types of data intermediaries we expect to be relevant to Solid. The role of data intermediaries as defined under DGA, together with an expansion of data subjects’ rights (more access control, transparency, and data portability) in the complementary Data Act (currently under review), seems to fit with principles underlying Solid. All of these legal aspects will surely give more business opportunities and growth to Solid and, at the same time, will impose novel challenges to be properly addressed, leading to an evolution of the standards and technologies governing the Solid project.

In light of these novelties, we expect Table 1 and Table 2 to evolve over time, along with technological and societal advancements and related forthcoming legislation to regulate them. In order to make this evolution feasible and effective from a practical point of view, we propose that such tables are published and maintained online, thus allowing external contributors to also join the discussion and point out relevant literature and supporting documentation. In addition, they might be stored and visualised as semantically enriched hypertext that also employs AI to check consistency/completeness of the coverage of legal requirements (see [7, 64] for the use of AI on similar use cases). The present paper can be a starting point for the creation of such an online repository and community.

6. Conclusions with Recommendation

In this paper, we elicited requirements from GDPR and officially approved documents, as summarised in Table 1, and showed how they relate to measures in the Solid protocol and its ongoing evolution, as summarised in Table 2. We also present, in Section 3.2, an overview of a Solid-based healthcare system developed by VITO, which concretely illustrates a typical use case where our legal analysis applies. Indeed, some of our analysis is necessary in order for the system to be deployed outside a sandbox, where the healthcare data of real data subjects is processed; notably, the controllers in the system and their responsibilities must be identified and catered for appropriately.

We reflect on challenges uncovered for strengthening privacy in Solid. Foremost, the access logs should be an integral part of Solid pods and Solid apps, as a record of accesses granted required for security and privacy audits and to support various rights of the data subject (cf. Req_04, Req_06, Req_08, Req_10). Evidence can be used by data subjects who own a pod to challenge the behaviour of data users and apps using data in the pod, by notifying the relevant controller while providing a relevant view of the logs that they can back up with cryptographic evidence that the accesses concerned were indeed granted in a given context (Req_08, Req_10). Providing logs externally to a pod, in a separate wallet, creates unnecessary risks as more privacy-critical interactions are exposed over the internet than required; instead, each actor in the Solid ecosystem (authorisation servers, resource servers, Solid apps, data users) should log locally their view of interactions during authentication, along with cryptographic evidence derived from messages they send and receive during the authentication protocol itself. Such cryptographic evidence, generated during the running of the authentication protocols used to log in and grant access to resources, elevates trust in logs. This way, controllers responsible for accesses violating their stated purpose may be held accountable, without the ability of the controller to cast doubt on the records of the data subject (Req_10). To support this, both access control policies and authentication protocols should be aligned on context information, including the purpose of an access (Req_01, Req_05). Indeed, failing to align context information in the authentication and access control mechanisms appropriately can leave Solid open to data breaches, as explained in Section 4.1. Current low-level APIs for granting access leave room for developers, who need not be privacy experts, to make mistakes.

This work also makes a case for Solid to support more normative authentication protocols beyond Solid OIDC (cf. Req_01, Req_04, Req_05, Req_06, Req_07, Req_08). The current normative proposal, Solid OIDC, is vulnerable to attacks violating authentication, although readily implementable measures are proposed to address those vulnerabilities, as explained when discussing Req_01. The greater challenge to address is that Solid OIDC cannot be upgraded directly to support data minimisation via unlinkability from the perspective of an issuer, as explained in the discussion surrounding Req_09. This leads us to suggest that Solid should support verifiable-credential-based protocols [17], but warn that such protocols should be carefully designed so that they indeed support unlinkability towards issuers, generate non-repudiable cryptographic evidence for parties involved in the protocol (see Section 4.6), align context information with ACP to avoid attacks (cf. Req_01), support alternative flows (cf. Figure 7), support delegation patterns for processors acting on behalf of controllers, etc. In addition to introducing new normative protocols, stronger multi-factor authentication methods should also be supported, e.g., for administrative functions (cf. Req_07).

Additional protocols between data subjects and controllers, external to those already defined in the Solid specifications, can be introduced to explicitly facilitate the rights of data subjects and data breach notifications (cf. Req_06, Req_09), and to streamline the compliance of controllers themselves. Such a layer would enhance Solid as a tool for facilitating compliance with GDPR, but is, thus far, not part of the considerations of the Solid ecosystem.

There are of course many privacy obligations that perhaps should not be catered for by the Solid protocol, but instead lie with pod providers. For example, additional physical and organisational measures typical of ISO 27001 should be enforced by pod providers (cf. Req_03). We quote GDPR Recital 100, which is pertinent to Solid:

“In order to enhance transparency and compliance with this Regulation, the establishment of certification mechanisms and data protection seals and marks should be encouraged.”

This suggests that the degree of GDPR compliance should be transparently asserted in relation to the technological solution. By tightening specifications we tighten all implementations, and can perhaps facilitate steps towards identifying what certification and seals and marks are appropriate for technologies and actors diligently adhering to standards. One may also leverage the analysis in this paper to prioritise considerations towards certification of such actors in the Solid ecosystem.

Finally, we emphasise that the security and privacy of any system is a moving target since new vulnerabilities are discovered in key standards and libraries on a regular basis. Some of the suggestions in this work (e.g., tightening Solid OIDC using RFC 9207 in Req_01) illustrate this kind of evolution. A possible path for addressing this is to separate the core Solid protocol from an evolving security and privacy review that is updated as vulnerabilities are disclosed. This suggestion can be seen as an evolution of the Security and Privacy Review section in the draft Solid protocol at the time of writing [2], which is a list covering generic self-review questions for Web platforms [65]. Such a review can serve as a policy benchmark for pod providers, app providers, and other developers to adhere to, thereby improving trust in the Solid ecosystem.