Interpretation of Bahasa Isyarat Malaysia (BIM) Using SSD-MobileNet-V2 FPNLite and COCO mAP

Abstract

1. Introduction

2. Related Work

2.1. SSD-MobileNet-V2 FPNLite

2.2. TensorFlow Lite Object Detection

2.3. MobileNets Architecture and Working Principle

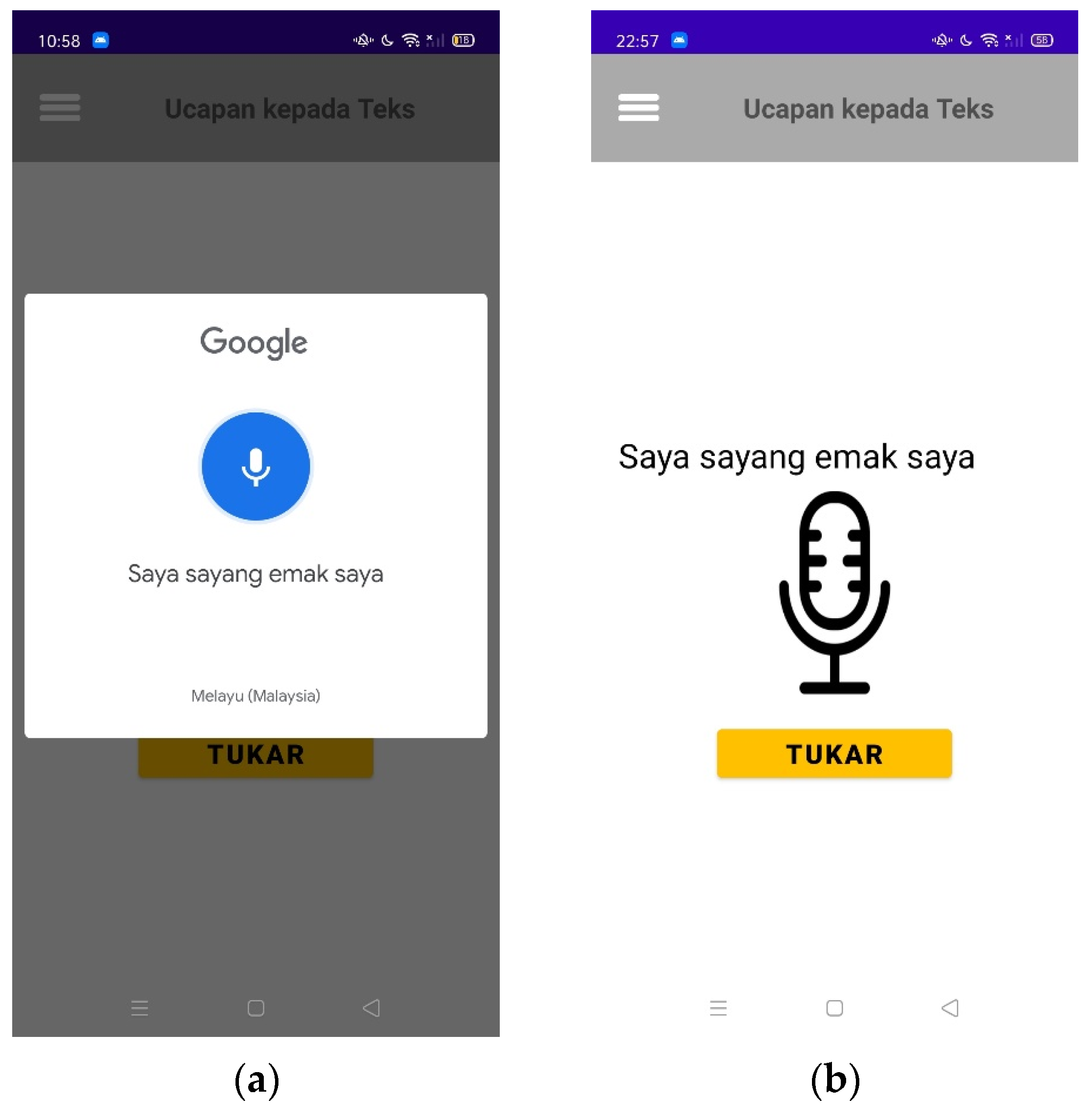

2.4. Android Speech-to-Text API

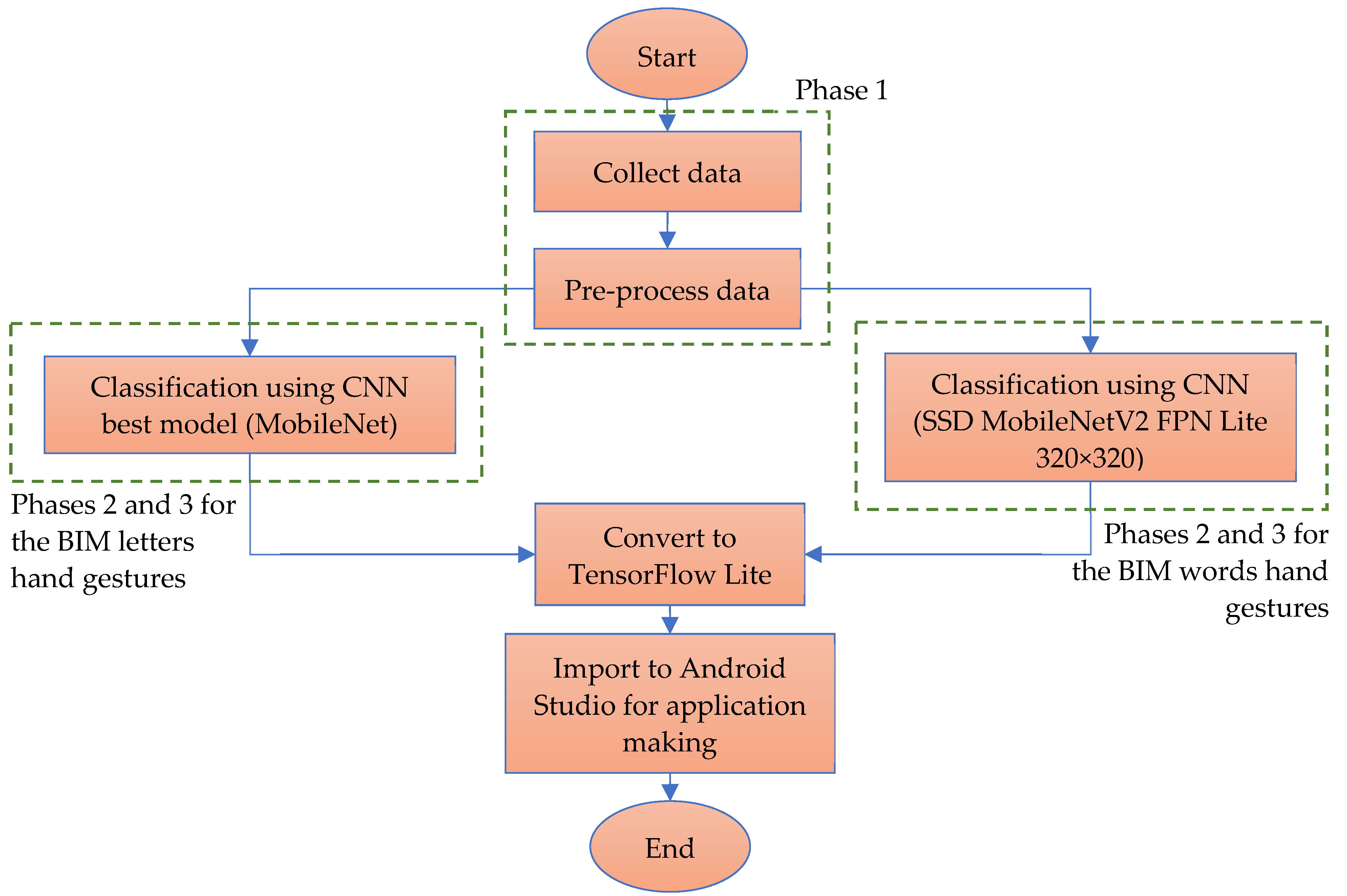

3. Materials and Methods

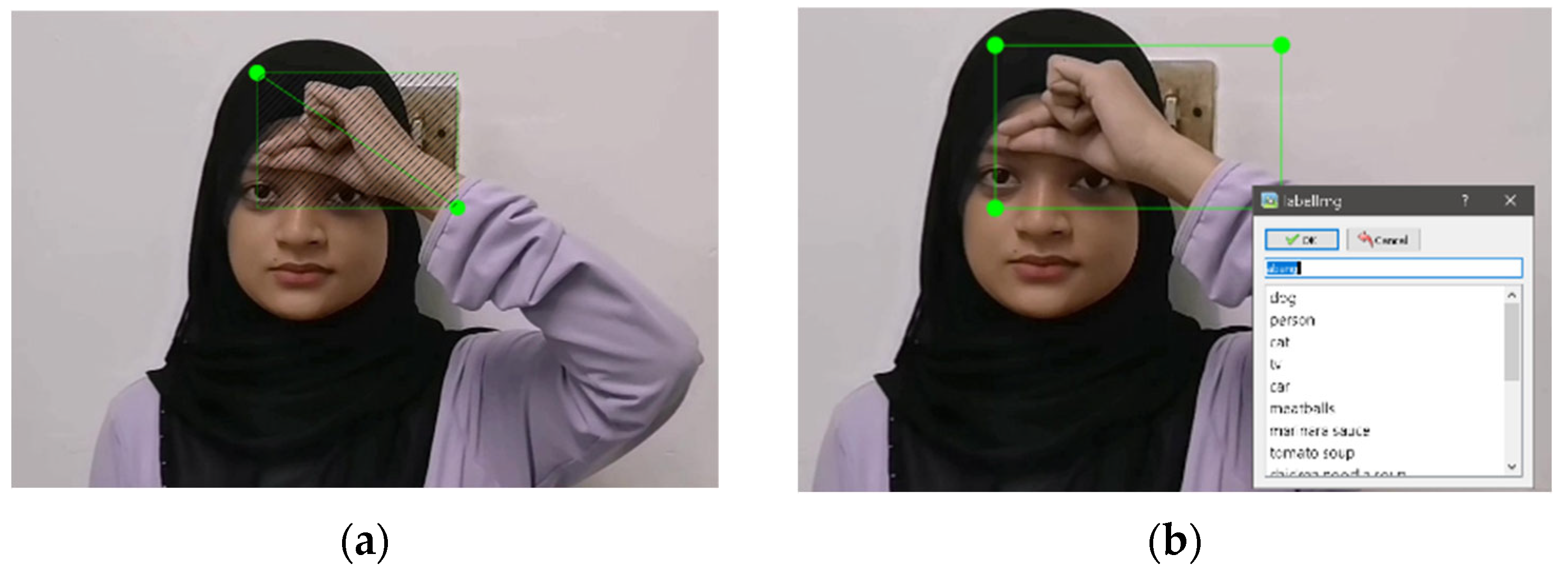

3.1. BIM Letters

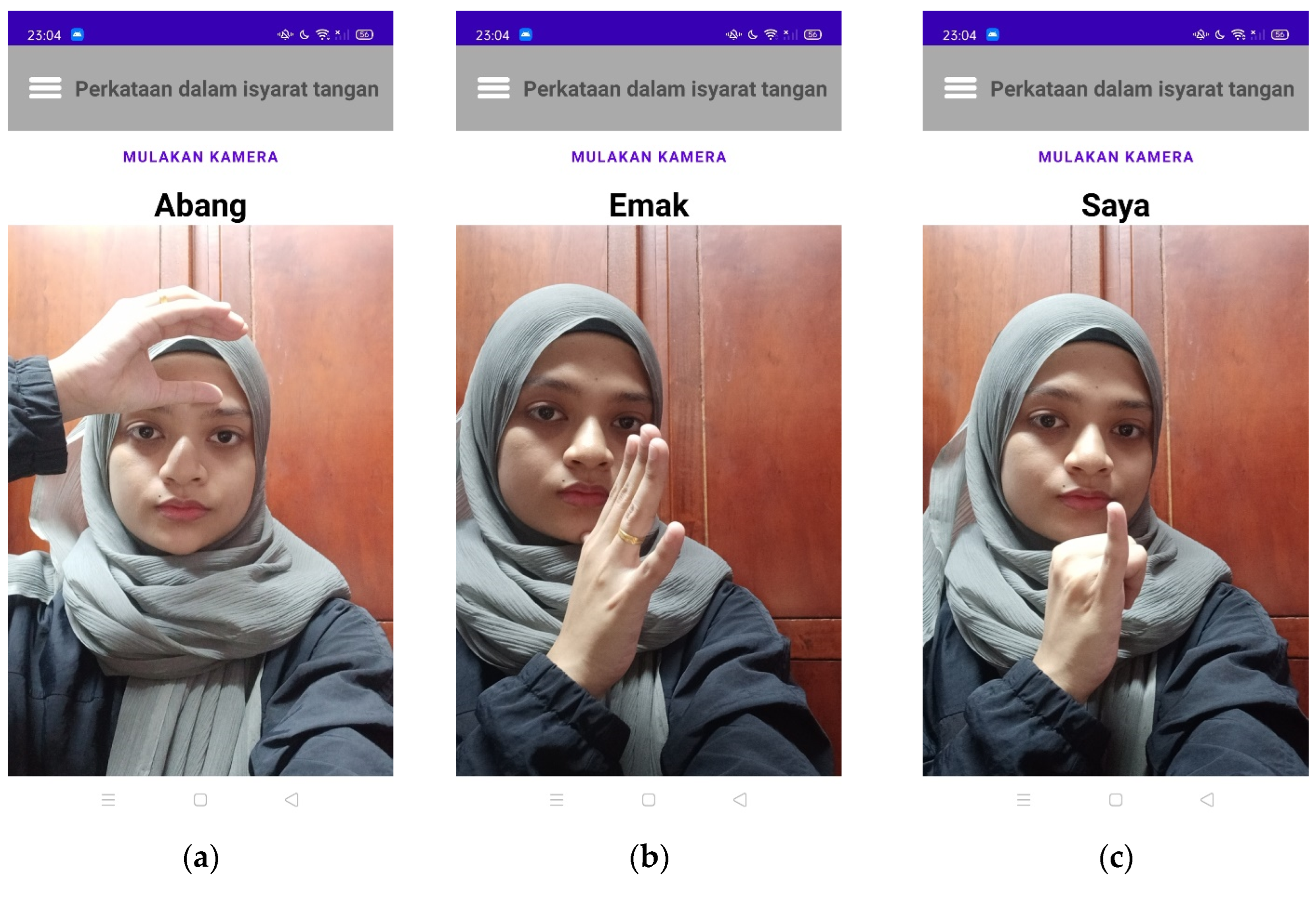

3.2. BIM Word Hand Gestures

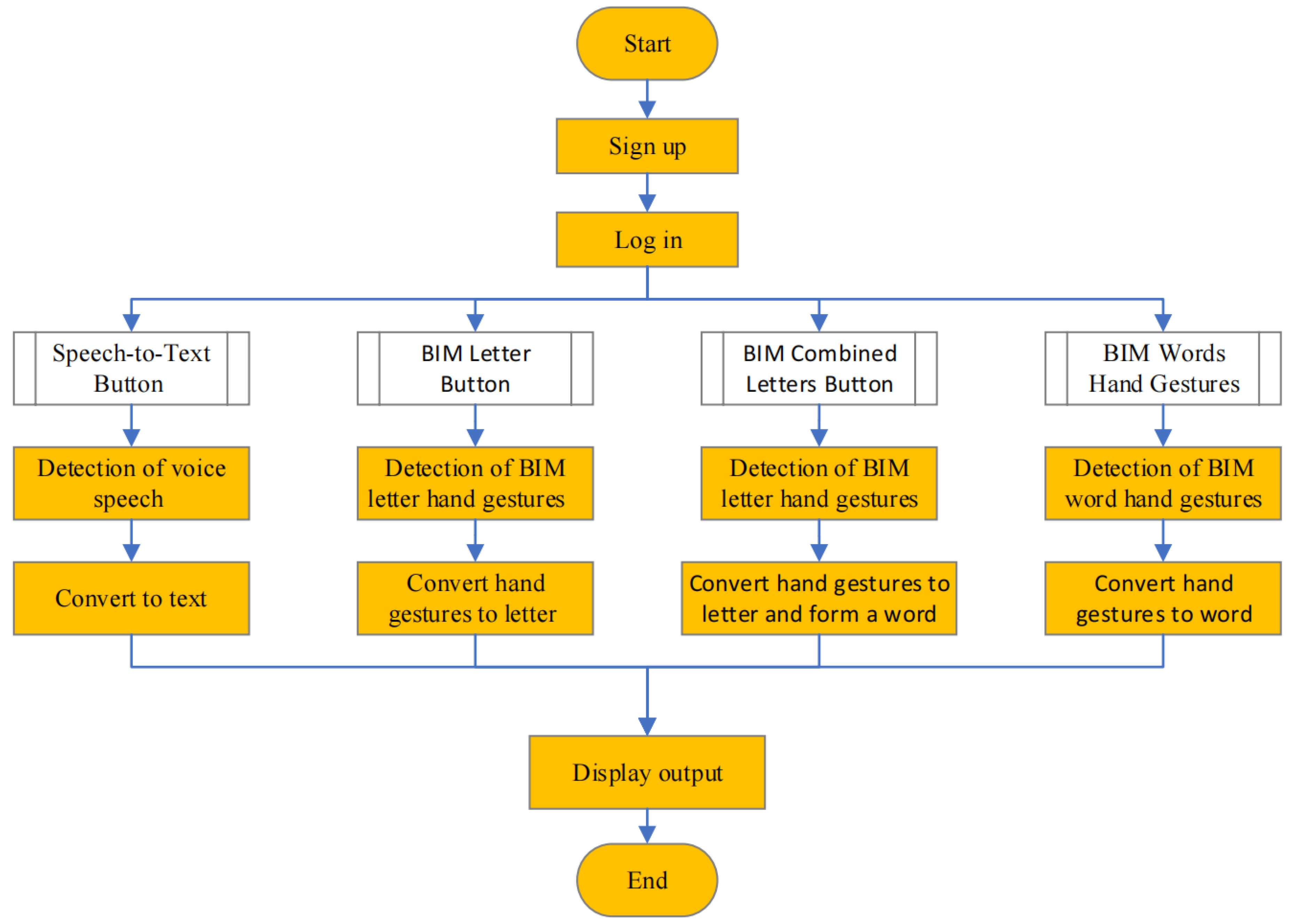

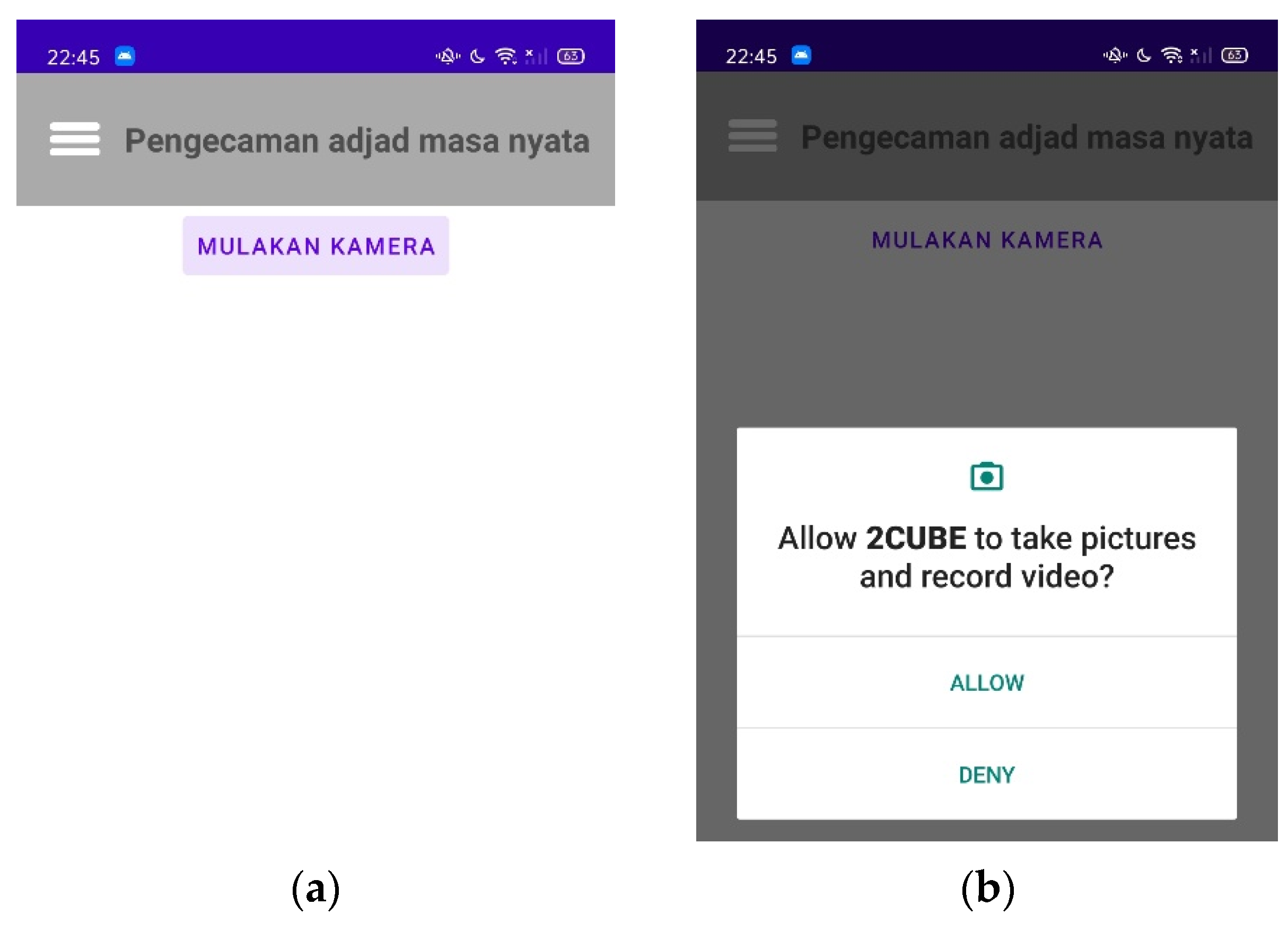

3.3. Android Application

- The user must have an active internet connection.

- The user must download and install the app on their smartphone.

- First-time users must register in the app (entering a name, email address, and password).

- Registered users must log in with their account (entering their name and password).

- The user must allow the app to use the camera and record audio.

4. Results

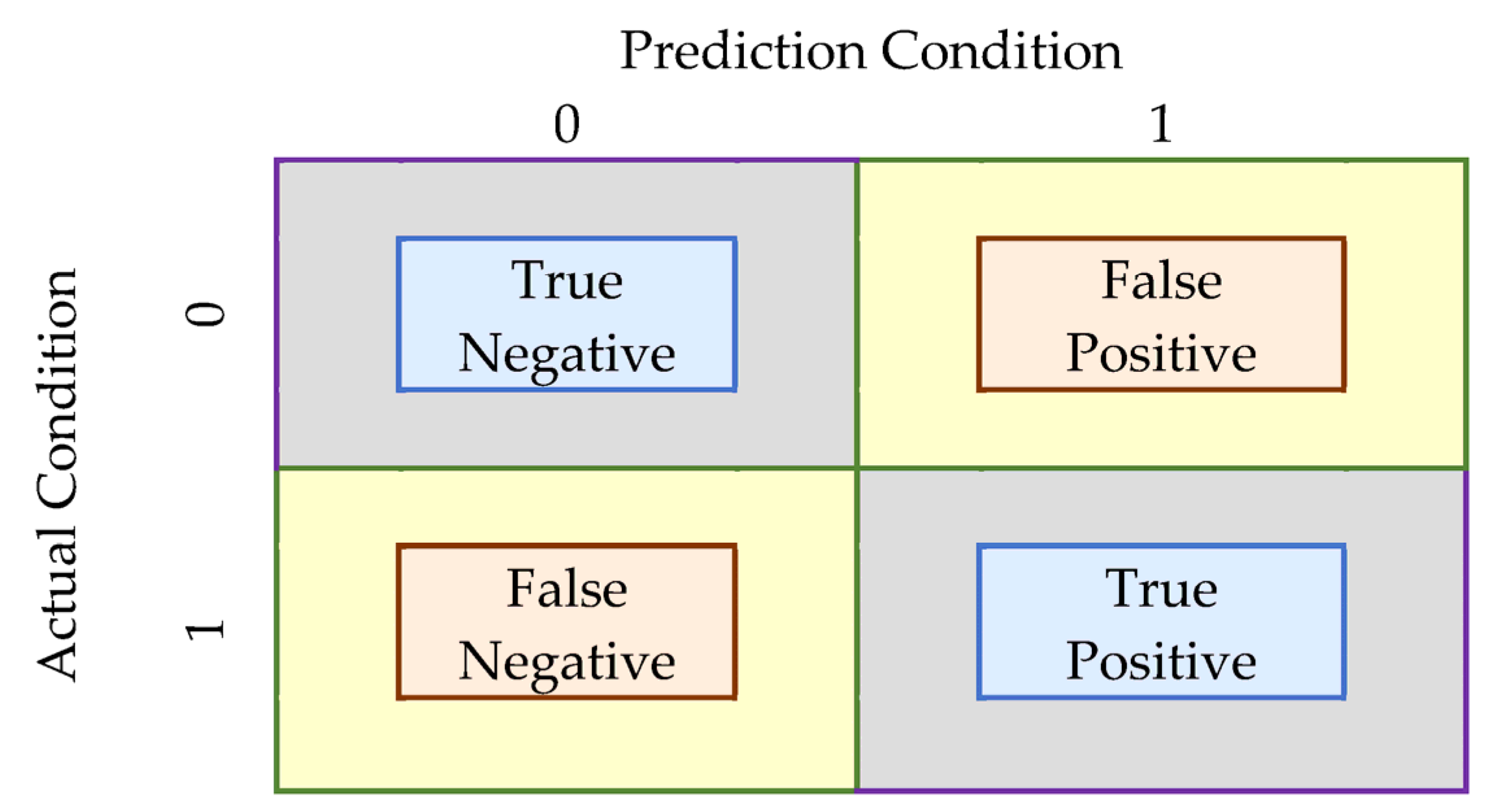

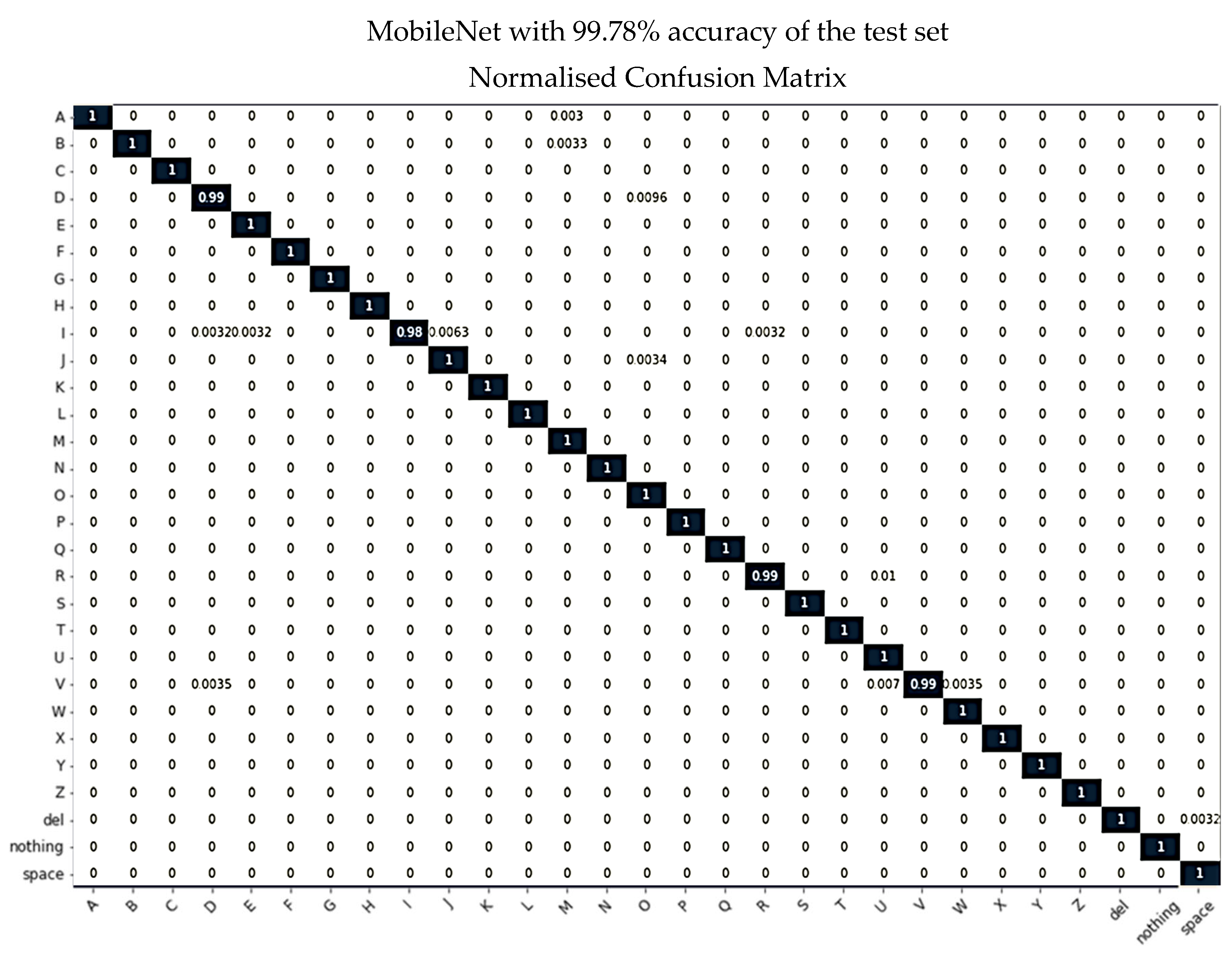

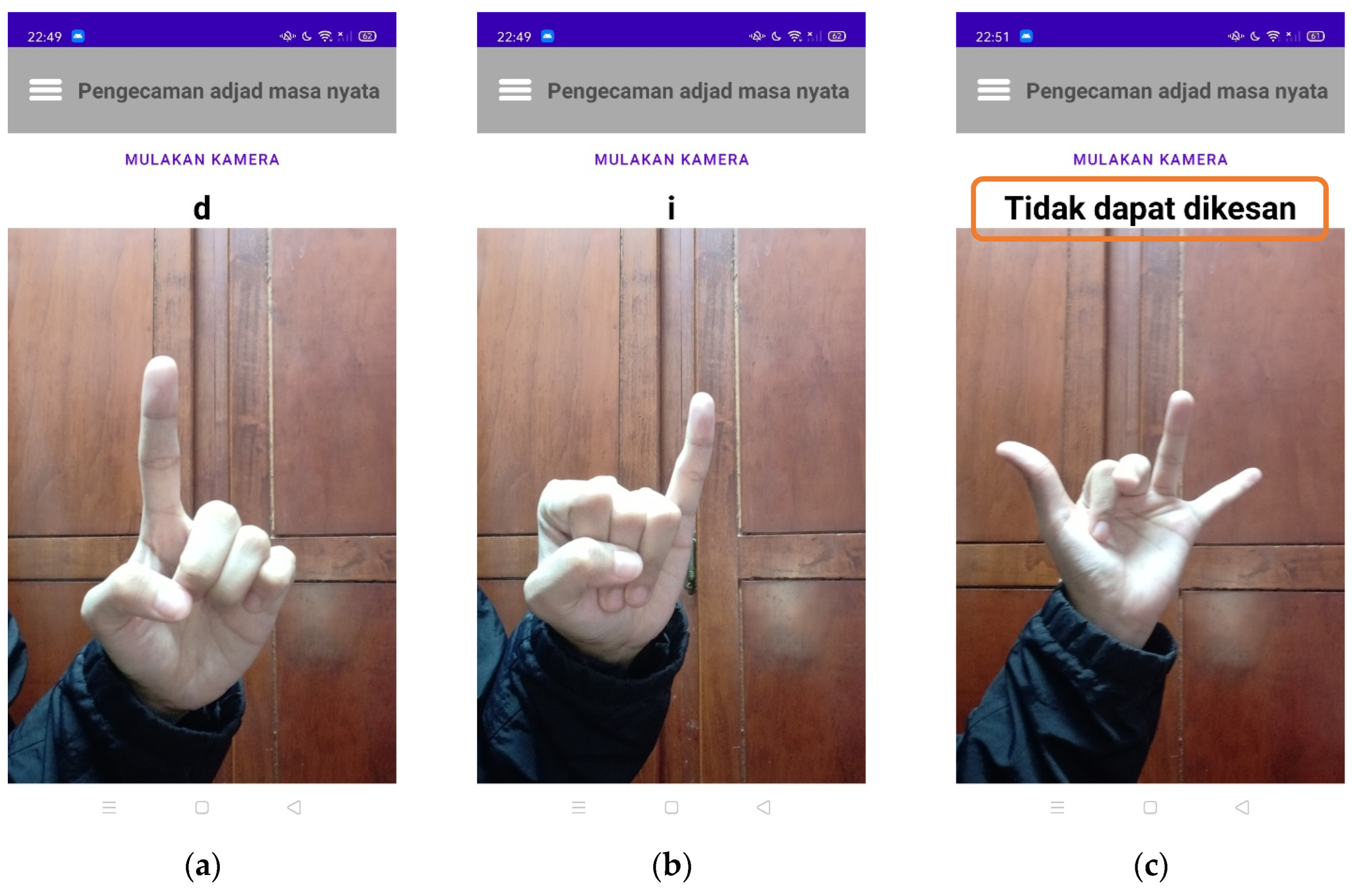

4.1. BIM Letters

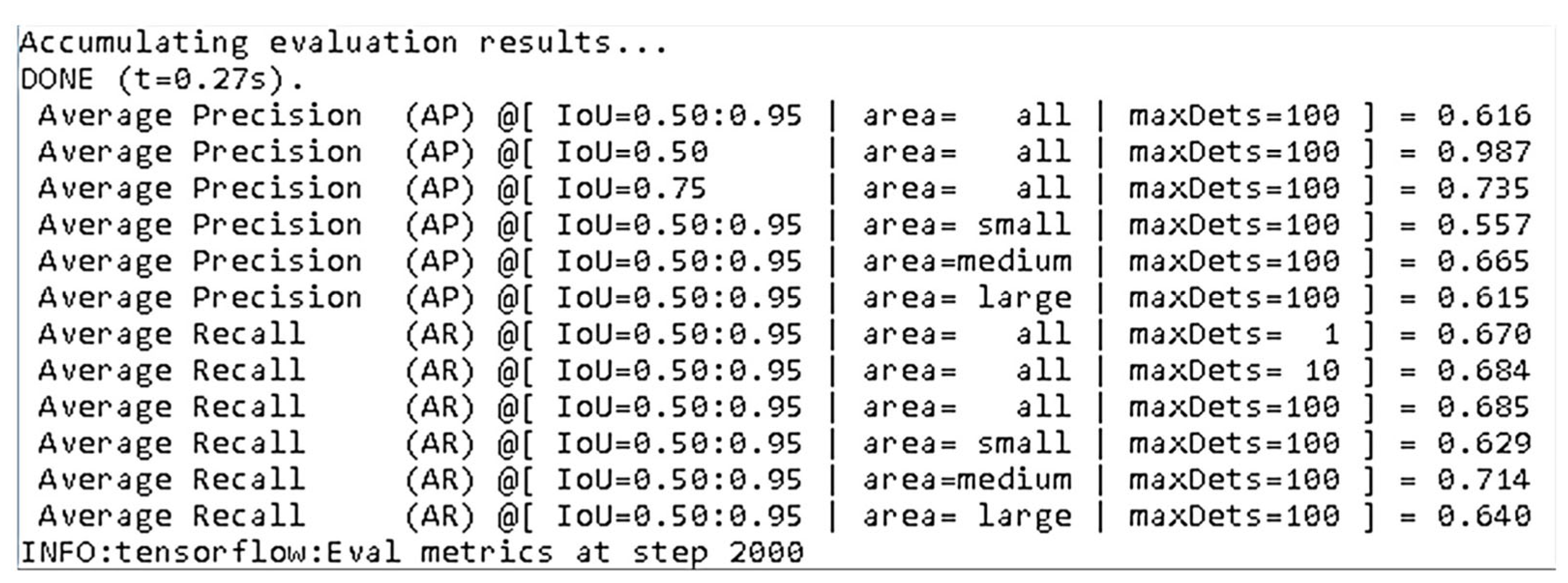

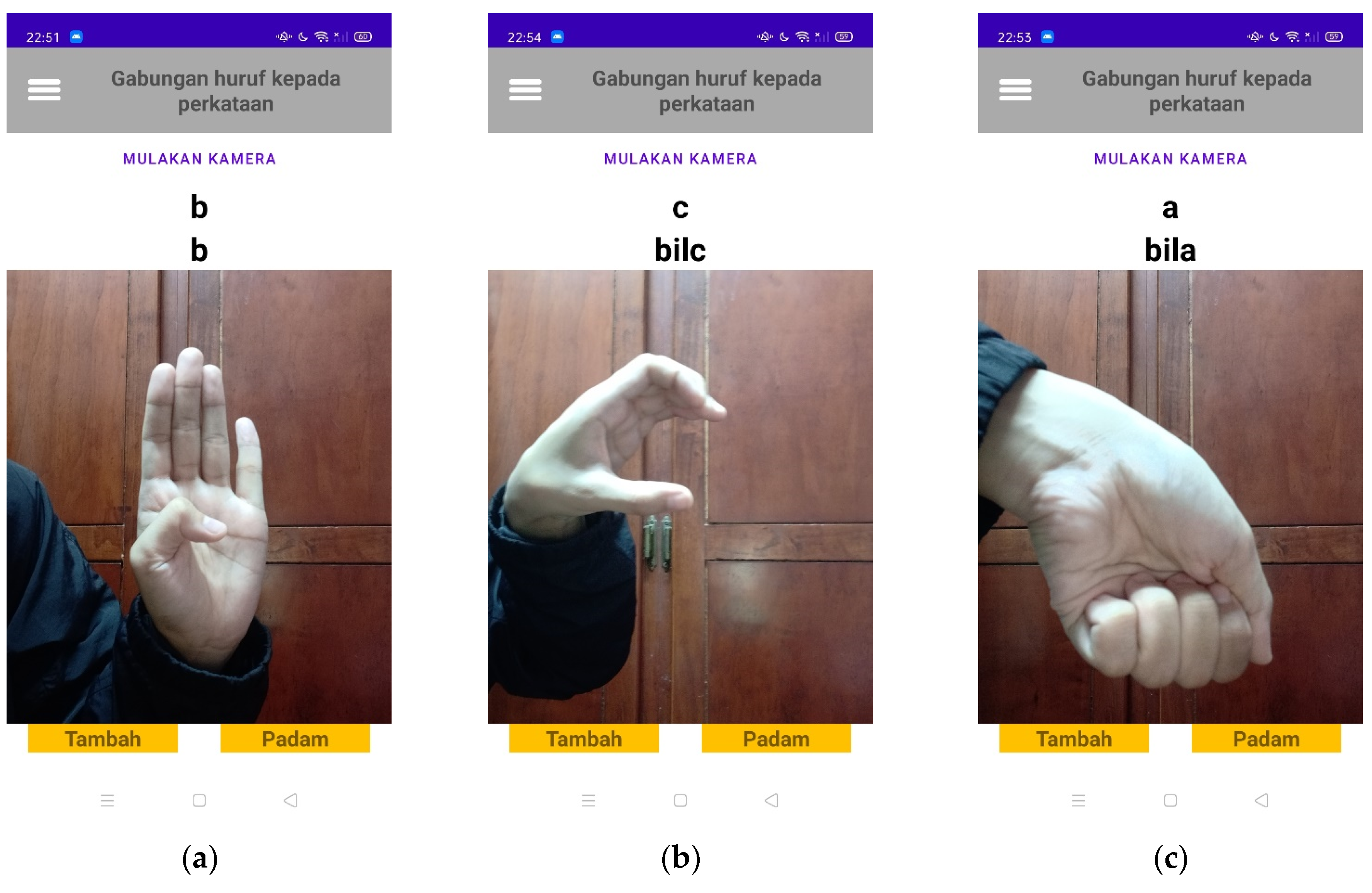

4.2. BIM Word Hand Gestures

4.3. Development of Android Application

4.4. Analysis of Android Application

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Model Name | Speed (ms) | COCO mAP | TensorFlow Version |
|---|---|---|---|
| SSD-MobileNet-V2 320 × 320 | 19 | 20.2 | 2 |
| SSD-MobileNet-V1-COCO | 30 | 21 | 1 |
| SSD-MobileNet-V2-COCO | 31 | 22 | 1 |
| Faster R-CNN ResNet50 V1 640 × 640 | 53 | 29.3 | 2 |
| Faster RCNN Inception V2 COCO | 58 | 28 | 1 |
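The speed and accuracy figures in the table above can be compared with a short script. The sketch below ranks the listed models by COCO mAP per millisecond of inference time. This ratio is an illustrative metric assumed here for comparison only; it does not come from the source, and the function names are hypothetical.

```python
# (speed in ms, COCO mAP) copied from the model comparison table above.
MODELS = {
    "SSD-MobileNet-V2 320x320": (19, 20.2),
    "SSD-MobileNet-V1-COCO": (30, 21.0),
    "SSD-MobileNet-V2-COCO": (31, 22.0),
    "Faster R-CNN ResNet50 V1 640x640": (53, 29.3),
    "Faster RCNN Inception V2 COCO": (58, 28.0),
}


def map_per_ms(speed_ms, coco_map):
    """Illustrative efficiency ratio (an assumption, not a metric from the source)."""
    return coco_map / speed_ms


def rank_by_efficiency(models):
    """Return model names sorted from highest to lowest mAP per millisecond."""
    return sorted(models, key=lambda name: map_per_ms(*models[name]), reverse=True)


def fastest(models):
    """Return the model name with the lowest inference time."""
    return min(models, key=lambda name: models[name][0])


if __name__ == "__main__":
    for name in rank_by_efficiency(MODELS):
        speed_ms, coco_map = MODELS[name]
        print(f"{name}: {map_per_ms(speed_ms, coco_map):.3f} mAP/ms")
```

Under this assumed ratio the SSD-MobileNet variants rank ahead of the Faster R-CNN models, which reflects their lower latency at the cost of a lower mAP.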

| API | Advantages | Disadvantages |
|---|---|---|
| Google Cloud API | It supports 80 different languages. | Not free. |
| | Can recognise audio uploaded in the request. | Requires higher-performance hardware. |
| | Returns text results in real time. | |
| | Accurate in noisy environments. | |
| | Works with apps across any device and platform. | |
| Android Speech-to-Text API | Free to use. | Needs the local language to be passed in to convert speech to text. |
| | Easy to use. | Not all devices support offline speech input. |
| | It does not require high-performance hardware. | It cannot pass an audio file to be recognised. |
| | Easy to develop. | It only works with Android phones. |

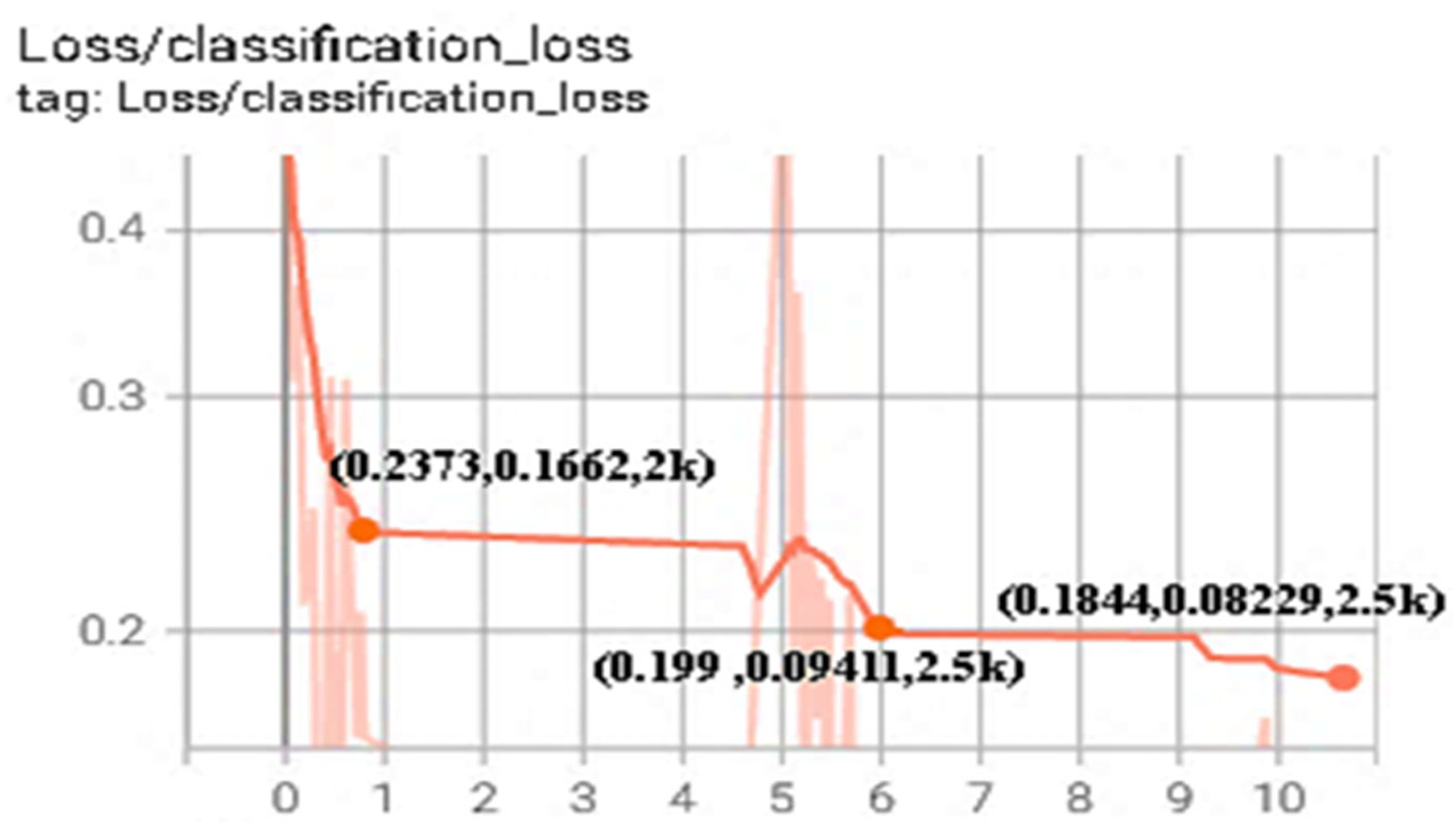

| Metric | 1st Training (2000 Steps): Smoothed | 1st Training (2000 Steps): Loss Value | 2nd Training (2500 Steps): Smoothed | 2nd Training (2500 Steps): Loss Value | 3rd Training (2500 Steps): Smoothed | 3rd Training (2500 Steps): Loss Value |
|---|---|---|---|---|---|---|
| Classification loss | 0.23730 | 0.16620 | 0.19900 | 0.09411 | 0.18440 | 0.08229 |
| Localisation loss | 0.16730 | 0.09643 | 0.15180 | 0.04301 | 0.13880 | 0.05348 |
| Regularisation loss | 0.15240 | 0.14820 | 0.15130 | 0.14510 | 0.15050 | 0.14510 |
| Total loss | 0.55700 | 0.41100 | 0.50210 | 0.28220 | 0.47360 | 0.28090 |
| Learning rate | 0.06807 | 0.07992 | 0.06560 | 0.06933 | 0.07206 | 0.07982 |
| Steps per second | 0.65980 | 0.74190 | 0.65720 | 0.63350 | 0.62930 | 0.06296 |
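In the training table above, each total loss appears to equal the sum of the classification, localisation, and regularisation losses, up to rounding. The following sketch checks that relationship against the tabulated values. The dictionary layout and function names are hypothetical; only the numbers come from the table.

```python
# Loss values copied from the training table above, one entry per column.
LOSSES = {
    "1st smoothed": {"cls": 0.23730, "loc": 0.16730, "reg": 0.15240, "total": 0.55700},
    "1st value": {"cls": 0.16620, "loc": 0.09643, "reg": 0.14820, "total": 0.41100},
    "2nd smoothed": {"cls": 0.19900, "loc": 0.15180, "reg": 0.15130, "total": 0.50210},
    "2nd value": {"cls": 0.09411, "loc": 0.04301, "reg": 0.14510, "total": 0.28220},
    "3rd smoothed": {"cls": 0.18440, "loc": 0.13880, "reg": 0.15050, "total": 0.47360},
    "3rd value": {"cls": 0.08229, "loc": 0.05348, "reg": 0.14510, "total": 0.28090},
}


def component_sum(column):
    """Sum of the three component losses for one table column."""
    return column["cls"] + column["loc"] + column["reg"]


def max_discrepancy(losses):
    """Largest absolute gap between a reported total and its component sum."""
    return max(abs(component_sum(col) - col["total"]) for col in losses.values())


if __name__ == "__main__":
    for name, col in LOSSES.items():
        print(f"{name}: sum={component_sum(col):.5f} reported={col['total']:.5f}")
    print(f"max discrepancy: {max_discrepancy(LOSSES):.5f}")
```

The reported totals agree with the component sums to within a few ten-thousandths, which is consistent with rounding in the displayed values.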

| Letter | A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Accuracy (%) | 60 | 100 | 70 | 100 | 50 | 90 | 70 | 90 | 100 | 80 | 70 | 90 | 100 | 70 | 80 | 80 | 70 | 60 | 90 | 70 | 60 | 100 | 90 | 70 | 90 | 60 |

| Word | Abang | Bapa | Emak | Saya | Sayang |
|---|---|---|---|---|---|
| Accuracy (%) | 100 | 90 | 80 | 80 | 100 |
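The per-letter and per-word accuracies in the two tables above can be summarised with a short script. This sketch computes the mean accuracy for each table and lists the letters that fall below a chosen threshold. The 70% threshold and the function names are assumptions made for illustration, not values from the source.

```python
from statistics import mean

# Accuracy (%) per BIM letter, copied from the letter table above.
LETTER_ACCURACY = dict(zip(
    "ABCDEFGHIJKLMNOPQRSTUVWXYZ",
    [60, 100, 70, 100, 50, 90, 70, 90, 100, 80, 70, 90, 100,
     70, 80, 80, 70, 60, 90, 70, 60, 100, 90, 70, 90, 60],
))

# Accuracy (%) per BIM word gesture, copied from the word table above.
WORD_ACCURACY = {"Abang": 100, "Bapa": 90, "Emak": 80, "Saya": 80, "Sayang": 100}


def mean_accuracy(scores):
    """Arithmetic mean of the accuracy values in a label -> accuracy mapping."""
    return mean(scores.values())


def below_threshold(scores, threshold):
    """Labels whose accuracy is strictly below the threshold, sorted by label."""
    return sorted(label for label, acc in scores.items() if acc < threshold)


if __name__ == "__main__":
    print(f"Mean letter accuracy: {mean_accuracy(LETTER_ACCURACY):.2f}%")
    print(f"Mean word accuracy: {mean_accuracy(WORD_ACCURACY):.2f}%")
    # 70 is an illustrative cut-off, not a threshold stated in the source.
    print("Letters below 70%:", below_threshold(LETTER_ACCURACY, 70))
```

A summary like this makes it easier to see which letters might benefit from additional training images.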


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Saiful Bahri, I.Z.; Saon, S.; Mahamad, A.K.; Isa, K.; Fadlilah, U.; Ahmadon, M.A.B.; Yamaguchi, S. Interpretation of Bahasa Isyarat Malaysia (BIM) Using SSD-MobileNet-V2 FPNLite and COCO mAP. Information 2023, 14, 319. https://doi.org/10.3390/info14060319