Usability Evaluation of an Adaptive Serious Game Prototype Based on Affective Feedback

Abstract

1. Introduction

2. Related Work

3. Method

3.1. Participants

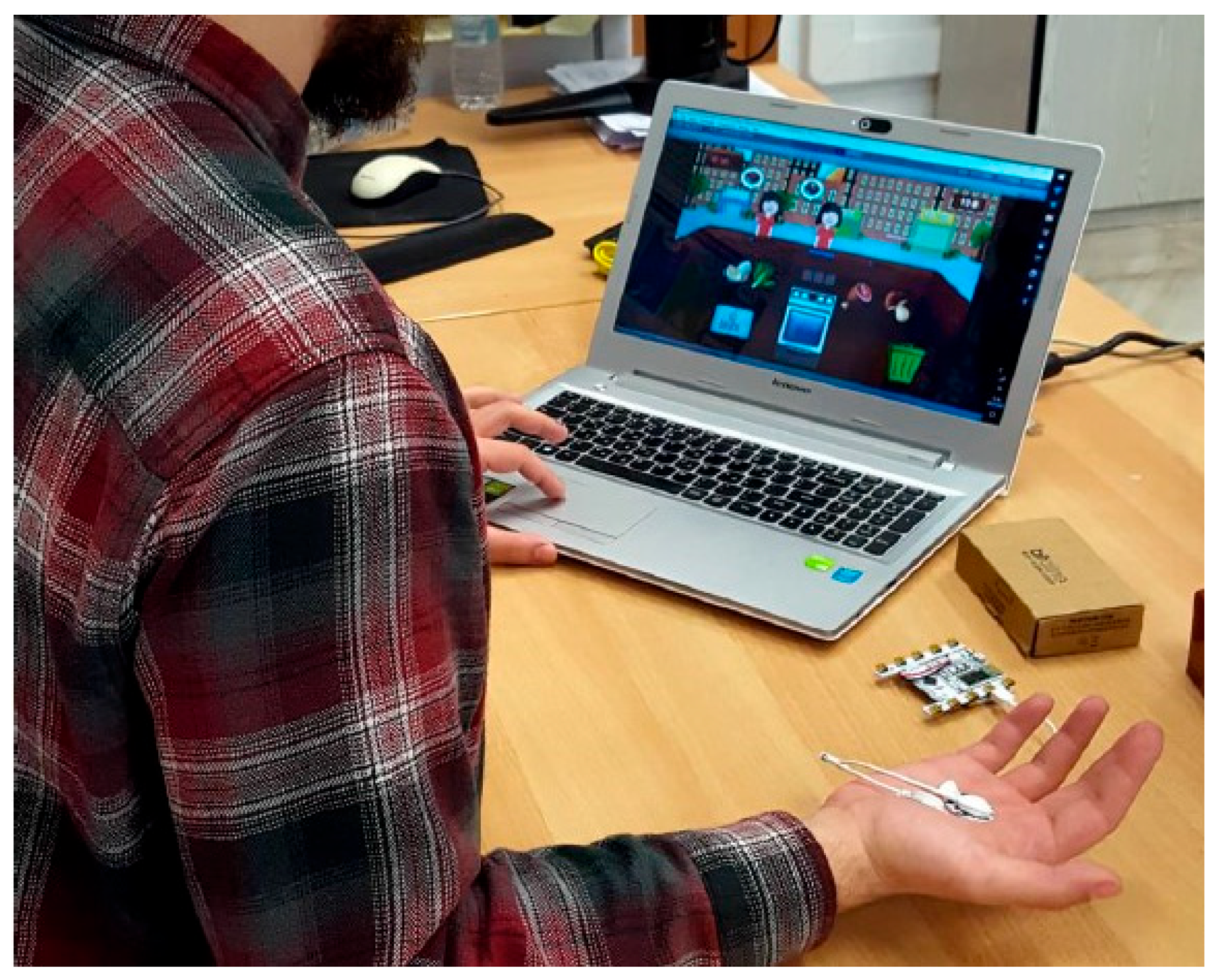

3.2. Materials

3.2.1. System Architecture

- Mean SCR (F1);
- Max SCR (F2);
- Min SCR (F3);
- Range SCR (F4);
- Skewness SCR (F5);
- Kurtosis SCR (F6).
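
The six features listed above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the function name `scr_features`, the dictionary keys, the use of population (divide-by-n) moments, and the choice of excess kurtosis are not taken from the article, which may compute these statistics differently.

```python
from typing import Dict, Sequence


def scr_features(window: Sequence[float]) -> Dict[str, float]:
    """Compute features F1-F6 for one window of SCR samples (hypothetical helper)."""
    n = len(window)
    if n < 2:
        raise ValueError("need at least two samples")
    mean = sum(window) / n
    # Central moments in population form (divided by n): an assumption.
    m2 = sum((x - mean) ** 2 for x in window) / n
    m3 = sum((x - mean) ** 3 for x in window) / n
    m4 = sum((x - mean) ** 4 for x in window) / n
    if m2 == 0:
        # A flat signal has no defined shape statistics; report 0.0 by convention.
        skewness, kurtosis = 0.0, 0.0
    else:
        skewness = m3 / m2 ** 1.5
        kurtosis = m4 / m2 ** 2 - 3.0  # excess kurtosis: a normal distribution gives 0
    return {
        "F1_mean": mean,
        "F2_max": max(window),
        "F3_min": min(window),
        "F4_range": max(window) - min(window),
        "F5_skewness": skewness,
        "F6_kurtosis": kurtosis,
    }
```

Each window of the signal would yield one six-element feature vector, which is the kind of input a classifier such as the one in Section 3.2.2 could consume.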

3.2.2. The Classification Model

- Baseline

- ○

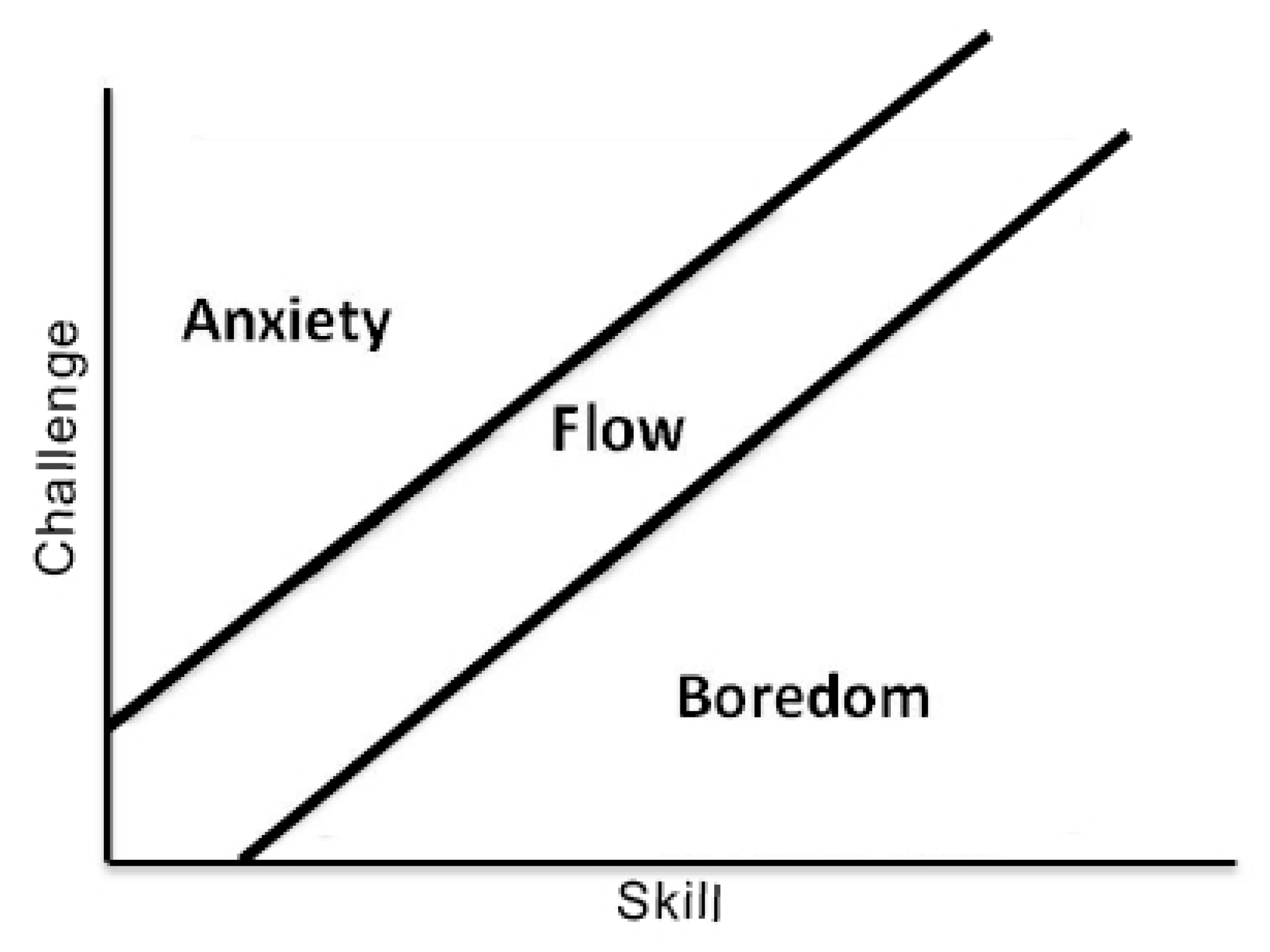

- Low, class: 0—close to boredom;

- ○

- Medium, class: 1—also close to boredom;

- ○

- High, class: 2—close to neutral.

- Amusement

- ○

- Low, class: 3—flow;

- ○

- Medium, class: 4—flow;

- ○

- High, class: 5—flow.

- Anxiety

- ○

- Low, class: 6—low anxiety;

- ○

- Medium, class: 7—anxiety;

- ○

- High, class: 8—anxiety.
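
The nine classes above map naturally onto a lookup table, and the reported "K Neighbors" accuracy suggests a k-nearest-neighbours classifier. The sketch below shows both ideas in plain Python. It is not the authors' implementation: `CLASSES`, `knn_predict`, the Euclidean distance, and the default `k=3` are assumptions made for illustration.

```python
from collections import Counter
from math import dist
from typing import Dict, Sequence, Tuple

# Class index -> (condition, intensity level, interpreted state), from the class list.
CLASSES: Dict[int, Tuple[str, str, str]] = {
    0: ("baseline", "low", "close to boredom"),
    1: ("baseline", "medium", "close to boredom"),
    2: ("baseline", "high", "close to neutral"),
    3: ("amusement", "low", "flow"),
    4: ("amusement", "medium", "flow"),
    5: ("amusement", "high", "flow"),
    6: ("anxiety", "low", "low anxiety"),
    7: ("anxiety", "medium", "anxiety"),
    8: ("anxiety", "high", "anxiety"),
}


def knn_predict(
    train_x: Sequence[Sequence[float]],
    train_y: Sequence[int],
    query: Sequence[float],
    k: int = 3,
) -> int:
    """Return the majority class among the k training vectors nearest to `query`."""
    # Sort training samples by Euclidean distance to the query and keep the k closest.
    nearest = sorted(zip(train_x, train_y), key=lambda pair: dist(pair[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def interpreted_state(class_index: int) -> str:
    """Look up the affective state associated with a predicted class index."""
    return CLASSES[class_index][2]
```

A predicted index can then be translated into the state that drives adaptation, for example `interpreted_state(knn_predict(train_x, train_y, features))`.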

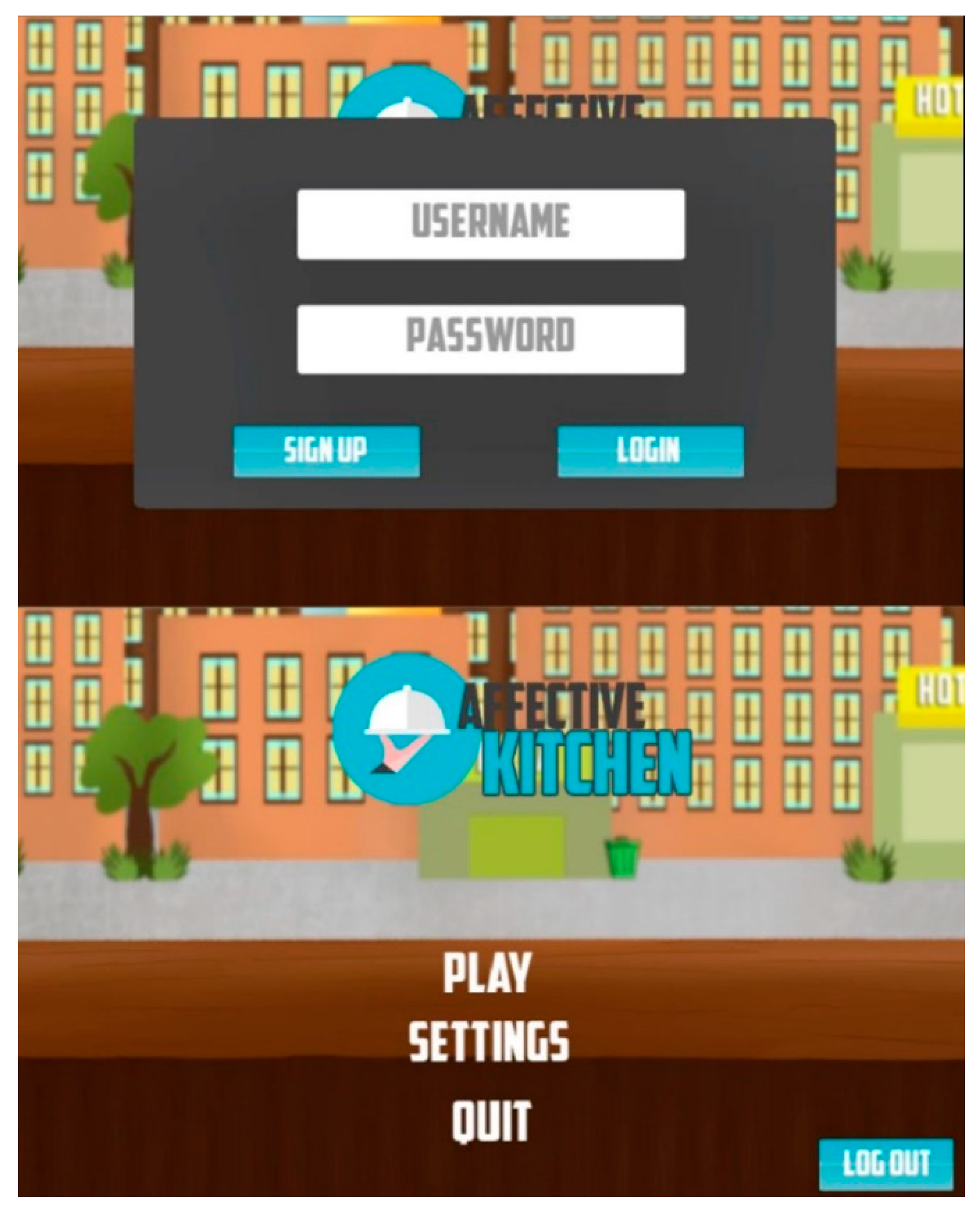

3.2.3. The Serious Game

3.3. Expert Evaluation Questionnaire

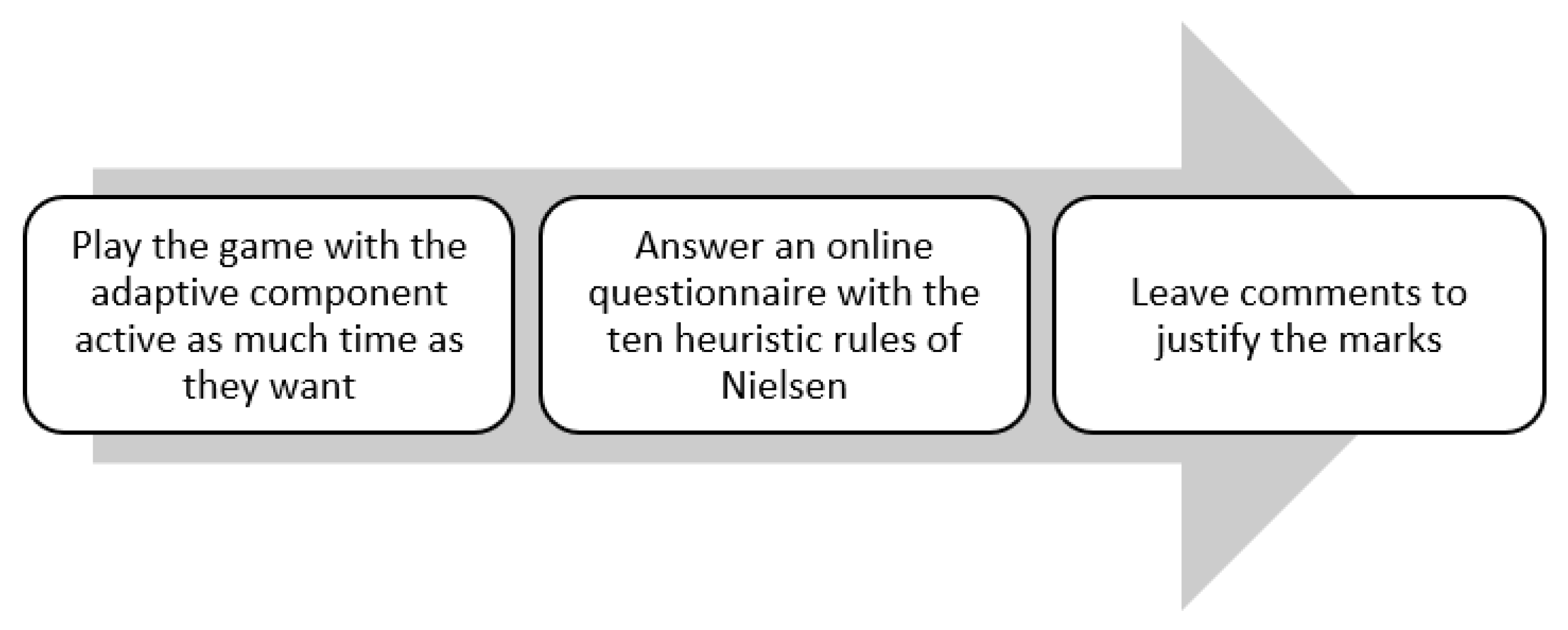

3.4. Procedure

- 0 = not a usability problem;
- 1 = cosmetic problem; need not be fixed unless extra time is available in the project;
- 2 = minor usability problem; fixing it should be given low priority;
- 3 = major usability problem; important to fix;
- 4 = usability catastrophe; must be fixed before the product can be released.
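
As a rough illustration of how the 0 to 4 severity scale above could be applied when collecting findings, the sketch below encodes the scale and orders reported problems by severity. The names `Severity`, `triage`, and `blocks_release`, as well as the example problem descriptions in the usage note, are hypothetical and do not come from the study.

```python
from enum import IntEnum
from typing import Dict, List, Tuple


class Severity(IntEnum):
    """The five-point severity scale used by the evaluators."""
    NOT_A_PROBLEM = 0
    COSMETIC = 1
    MINOR = 2
    MAJOR = 3
    CATASTROPHE = 4


def triage(ratings: Dict[str, int]) -> List[Tuple[str, Severity]]:
    """Order rated problems from most to least severe, skipping non-problems."""
    rated = [(name, Severity(score)) for name, score in ratings.items()]
    problems = [item for item in rated if item[1] is not Severity.NOT_A_PROBLEM]
    # sorted() is stable, so equally severe problems keep their input order.
    return sorted(problems, key=lambda item: item[1], reverse=True)


def blocks_release(ratings: Dict[str, int]) -> bool:
    """True if any problem is rated as a usability catastrophe (4)."""
    return any(Severity(score) is Severity.CATASTROPHE for score in ratings.values())
```

For instance, `triage({"missing exit button": 4, "small font": 1, "no issue": 0})` would list the missing exit button first and omit the entry rated 0.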

3.5. Data Analysis

4. Discussion

5. Limitations and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


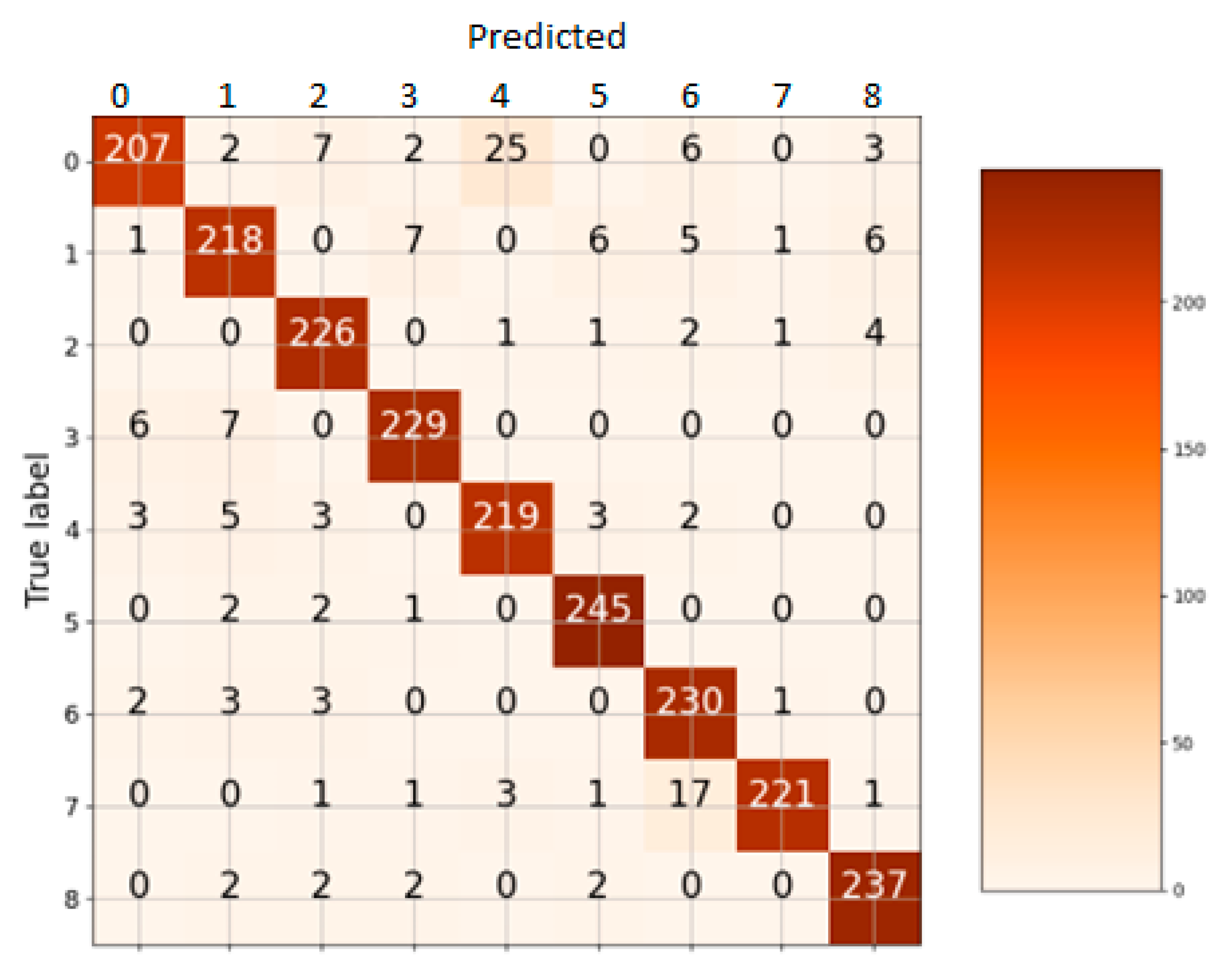

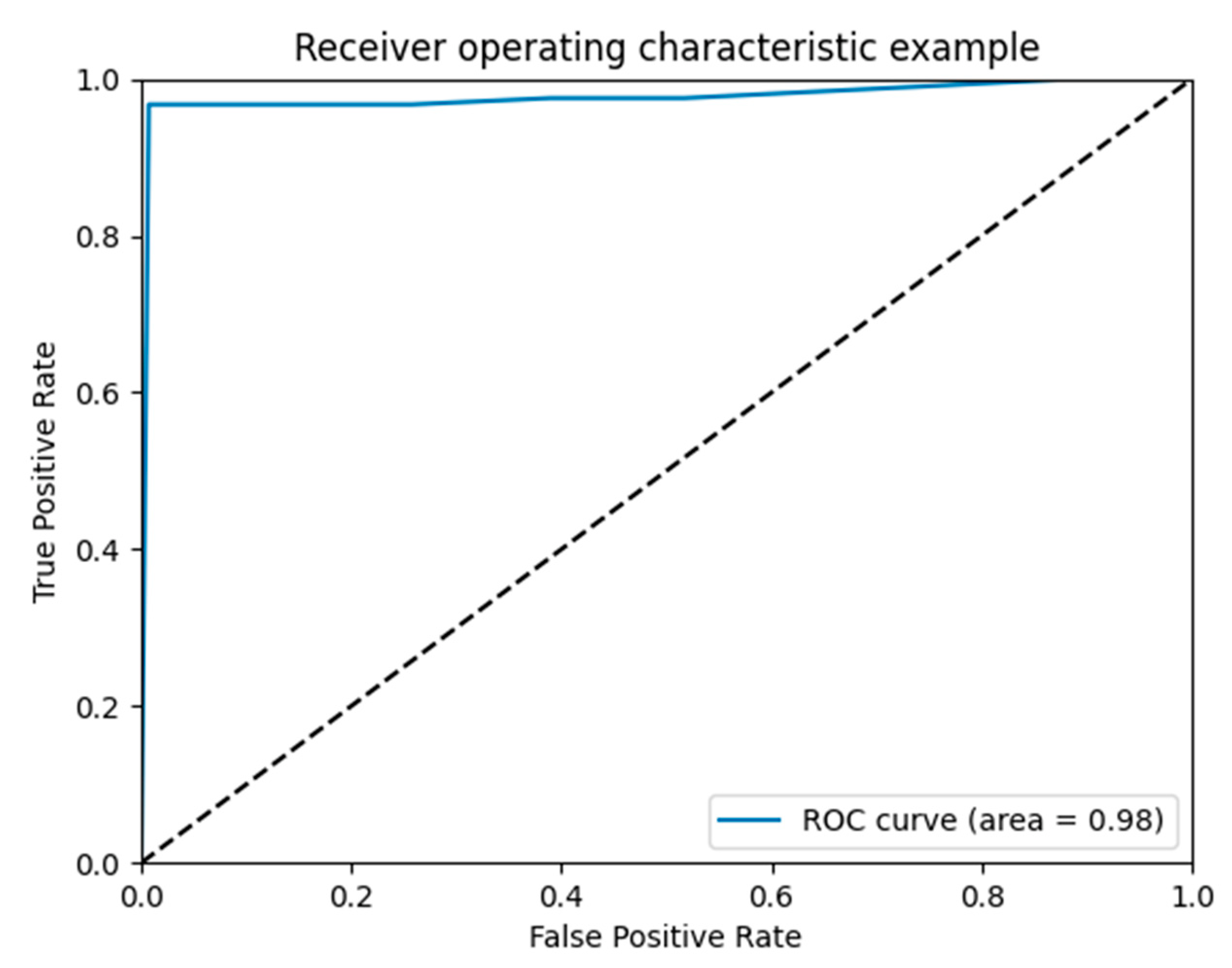

| Class | Precision | Recall | F1-Score |
|---|---|---|---|
| 0 | 0.95 | 0.82 | 0.88 |
| 1 | 0.91 | 0.89 | 0.90 |
| 2 | 0.93 | 0.96 | 0.94 |
| 3 | 0.95 | 0.95 | 0.95 |
| 4 | 0.88 | 0.93 | 0.91 |
| 5 | 0.95 | 0.98 | 0.96 |
| 6 | 0.88 | 0.96 | 0.92 |
| 7 | 0.99 | 0.90 | 0.94 |
| 8 | 0.94 | 0.97 | 0.96 |

K Neighbors accuracy score: 0.9291266575217193
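
The F1-score column in the classification report is the harmonic mean of precision and recall. The sketch below recomputes it from the rounded values in the table as a consistency check; the names `REPORT`, `f1_score`, `max_f1_discrepancy`, and `macro_f1` are illustrative. Because the inputs are already rounded to two decimals, the recomputed values can differ slightly from the reported ones.

```python
from typing import Dict, Tuple

# class -> (precision, recall, reported F1), copied from the classification report.
REPORT: Dict[int, Tuple[float, float, float]] = {
    0: (0.95, 0.82, 0.88),
    1: (0.91, 0.89, 0.90),
    2: (0.93, 0.96, 0.94),
    3: (0.95, 0.95, 0.95),
    4: (0.88, 0.93, 0.91),
    5: (0.95, 0.98, 0.96),
    6: (0.88, 0.96, 0.92),
    7: (0.99, 0.90, 0.94),
    8: (0.94, 0.97, 0.96),
}


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def max_f1_discrepancy(report: Dict[int, Tuple[float, float, float]]) -> float:
    """Largest absolute gap between recomputed and reported F1 across classes."""
    return max(abs(f1_score(p, r) - f1) for p, r, f1 in report.values())


def macro_f1(report: Dict[int, Tuple[float, float, float]]) -> float:
    """Unweighted mean of the reported per-class F1 scores."""
    return sum(f1 for _, _, f1 in report.values()) / len(report)
```

On these values the recomputed F1 scores stay within 0.01 of the reported column, which is what rounding of the inputs would allow.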

| Rule Number | Heuristic Rule |
|---|---|
| 1 | Simple and natural dialogue |
| 2 | Speak the user’s language |
| 3 | Minimize user memory load |
| 4 | Be consistent |
| 5 | Provide feedback |
| 6 | Provide clearly marked exits |
| 7 | Provide shortcuts |
| 8 | Good error messages |
| 9 | Prevent errors |
| 10 | Provide help and documentation |

| Characteristic | Category | Number | Percentage (%) |
|---|---|---|---|
| Sex | Female | 0 | 0 |
| Sex | Male | 6 | 100 |
| Age | 25–34 | 3 | 50.0 |
| Age | 35–44 | 2 | 33.3 |
| Age | >45 | 1 | 16.7 |
| Education level | Master’s degree | 5 | 83.3 |
| Education level | PhD | 1 | 16.7 |

| No. | Heuristic Rule | Ev.1 S | Ev.1 F | Ev.2 S | Ev.2 F | Ev.3 S | Ev.3 F | Ev.4 S | Ev.4 F | Ev.5 S | Ev.5 F | Ev.6 S | Ev.6 F |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | Simple and natural dialogue | 1 | 0 | 3 | 0 | 3 | 0 | 3 | 0 | 2 | 0 | 1 | 0 |
| 2 | Speak the user’s language | 1 | 0 | 3 | 0 | 2 | 0 | 3 | 0 | 1 | 0 | 1 | 0 |
| 3 | Minimize user memory load | 1 | 0 | 2 | 3 | 3 | 0 | 4 | 0 | 2 | 0 | 1 | 0 |
| 4 | Be consistent | 1 | 0 | 2 | 0 | 3 | 0 | 4 | 0 | 2 | 0 | 1 | 0 |
| 5 | Provide feedback | 1 | 0 | 3 | 1 | 3 | 1 | 3 | 2 | 2 | 0 | 2 | 0 |
| 6 | Provide clearly marked exits | 1 | 0 | 3 | 1 | 3 | 0 | 2 | 1 | 2 | 2 | 2 | 1 |
| 7 | Provide shortcuts | 1 | 0 | 2 | 2 | 3 | 0 | 2 | 0 | 2 | 0 | 1 | 0 |
| 8 | Good error messages | 1 | 0 | 3 | 0 | 3 | 0 | 2 | 0 | 1 | 0 | 1 | 0 |
| 9 | Prevent errors | 1 | 1 | 3 | 0 | 3 | 0 | 3 | 0 | 1 | 0 | 1 | 0 |
| 10 | Provide help and documentation | 1 | 1 | 3 | 2 | 3 | 0 | 4 | 2 | 3 | 2 | 3 | 1 |
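
One simple way to summarize the evaluators' table is to average the S column per heuristic rule and rank the rules. The sketch below does this with the S values copied from the table. It assumes that S holds a rating on the 0 to 4 severity scale of Section 3.4; the table as extracted here does not define S or F, so that reading, along with the names `S_RATINGS` and `rank_rules`, should be treated as an assumption rather than the authors' analysis.

```python
from statistics import mean
from typing import Dict, List, Tuple

# S values for evaluators Ev.1 to Ev.6, copied row by row from the table.
S_RATINGS: Dict[str, List[int]] = {
    "Simple and natural dialogue": [1, 3, 3, 3, 2, 1],
    "Speak the user's language": [1, 3, 2, 3, 1, 1],
    "Minimize user memory load": [1, 2, 3, 4, 2, 1],
    "Be consistent": [1, 2, 3, 4, 2, 1],
    "Provide feedback": [1, 3, 3, 3, 2, 2],
    "Provide clearly marked exits": [1, 3, 3, 2, 2, 2],
    "Provide shortcuts": [1, 2, 3, 2, 2, 1],
    "Good error messages": [1, 3, 3, 2, 1, 1],
    "Prevent errors": [1, 3, 3, 3, 1, 1],
    "Provide help and documentation": [1, 3, 3, 4, 3, 3],
}


def rank_rules(ratings: Dict[str, List[int]]) -> List[Tuple[str, float]]:
    """Return (rule, mean S) pairs ordered from highest to lowest mean."""
    means = [(rule, mean(values)) for rule, values in ratings.items()]
    # Stable sort: rules with equal means keep their table order.
    return sorted(means, key=lambda item: item[1], reverse=True)
```

With these values, "Provide help and documentation" has the highest mean S (17/6), so it would come first in the ranking.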

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Karavidas, L.; Apostolidis, H.; Tsiatsos, T. Usability Evaluation of an Adaptive Serious Game Prototype Based on Affective Feedback. Information 2022, 13, 425. https://doi.org/10.3390/info13090425
