Natural Language Processing Techniques for Text Classification of Biomedical Documents: A Systematic Review

Abstract

1. Introduction

2. Material and Methods

2.1. Data Collection

2.1.1. Searched Databases

2.1.2. Search Terms

2.1.3. Inclusion Criteria

2.1.4. Exclusion Criteria

2.2. Quality Metrics

3. Results

3.1. Quality Metric Result

3.2. Text Classification Methods Performance According to Datasets Used

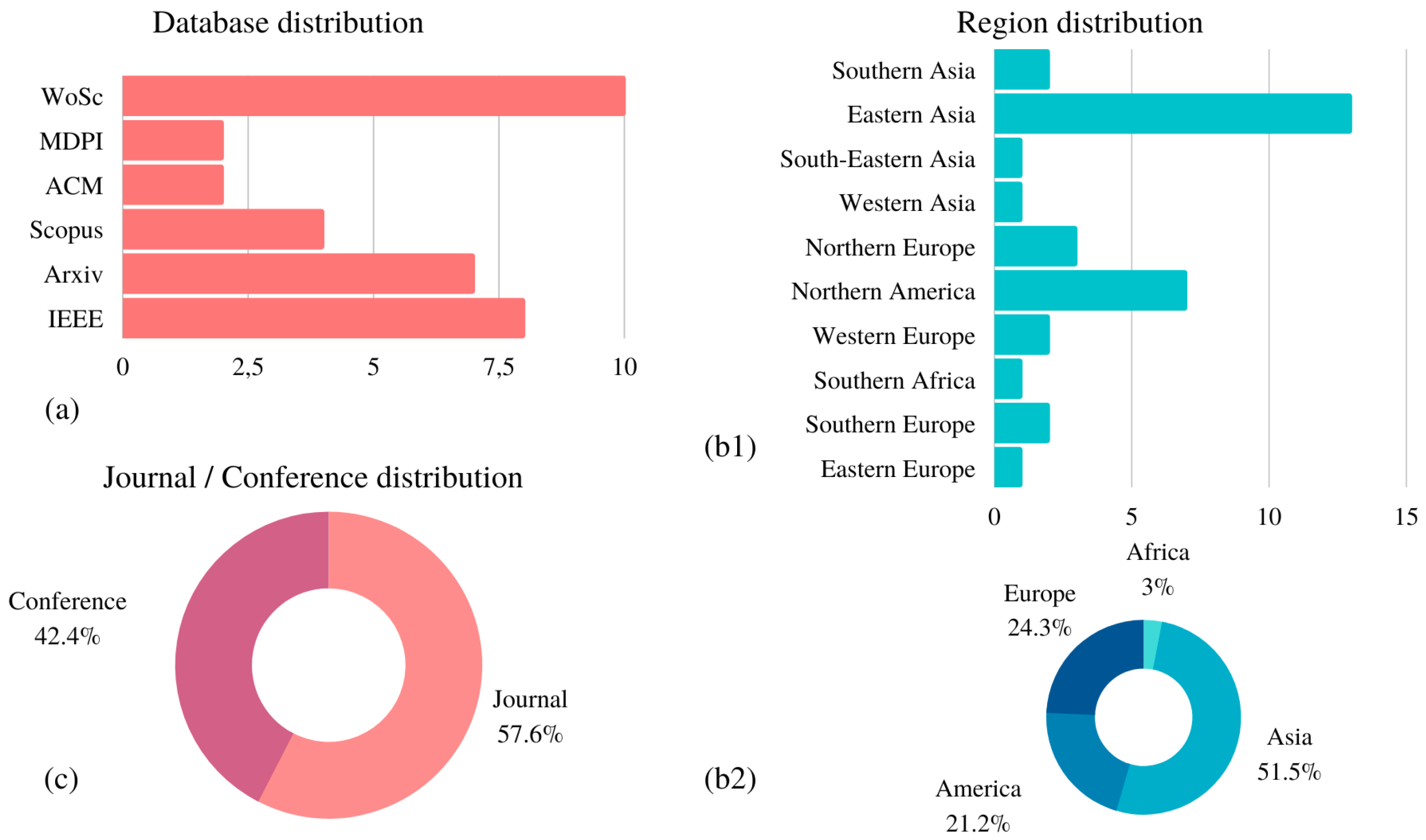

3.3. Frequency Result According to Geographical Distribution and Type of Publication

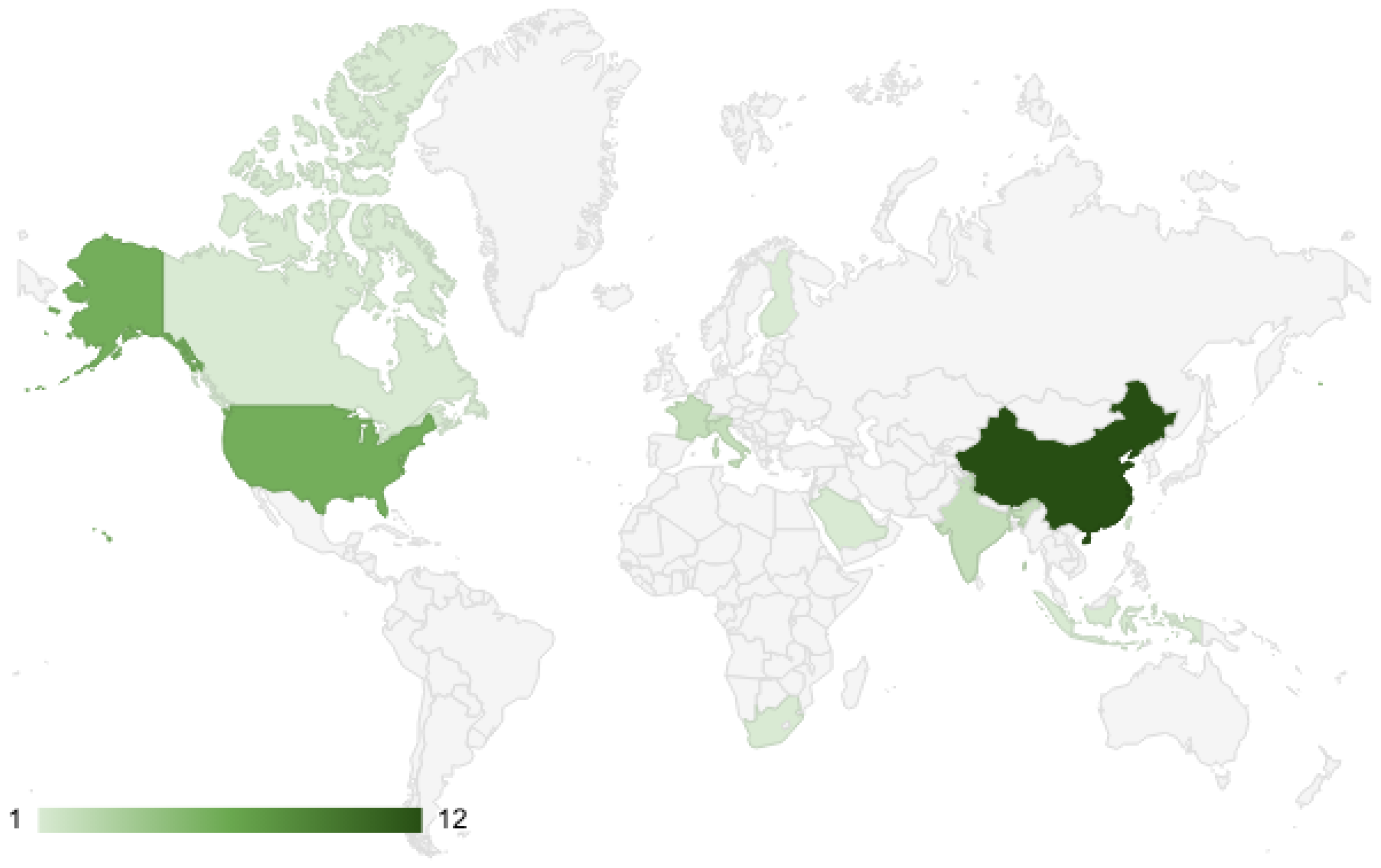

3.4. Paper Publication Map by Country

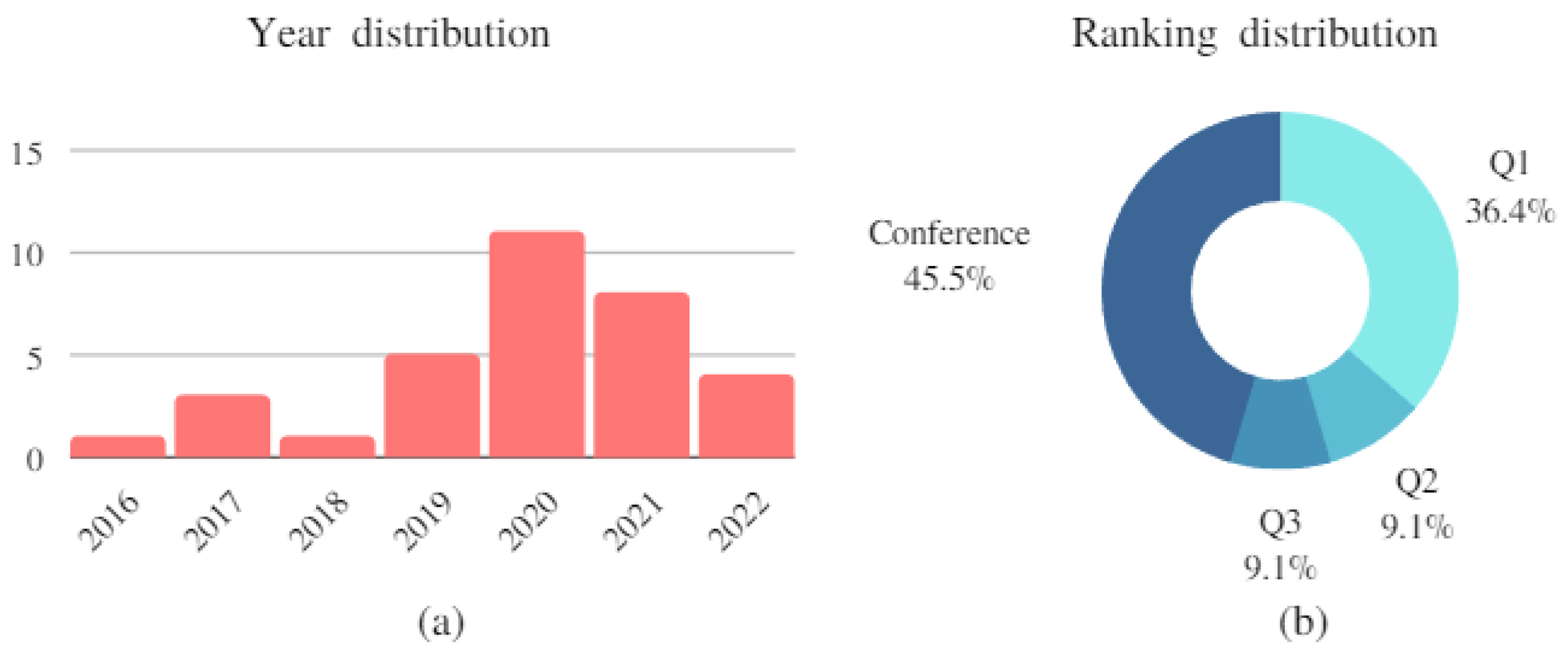

3.5. Frequency Result According to Year and Journal Ranking

4. Discussion

5. Conclusions and Perspectives

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Paper | Year | Type (J = journal, C = conference) | Location | Database | Methods | Dataset | Best Method | Metric (Best) | Rank | Citations |

|---|---|---|---|---|---|---|---|---|---|---|

| [10] | 2020 | J | India | Scopus | Fuzzy similarity-based data cleansing approach, supervised multi-label classification models, MLP, KNN, KNN as OvR, AGgregated using fuzzy Similarity (TAGS) | MIMIC-III | TAGS | Ac: 82.0 | Q1 | 18 |

| [23] | 2020 | J | India | IEEE | MLP, ConvNet, LSTM, Bi-LSTM, Conv-LSTM, Seg-GRU | EMR text data (benchmark) | Conv-LSTM | Ac: 83.3 | Q1 | 7 |

| [22] | 2020 | J | China | IEEE | BiLSTM, CNN, CRF layer. In particular, they used BiLSTM and CNN to learn text features and CRF as the last layer of the model; MT-MI-BiLSTM-ATT | EMR data set comes from a hospital (benchmark) | MT-MI-BiLSTM-ATT | Ac: 93.0 F1: 87.0 | Q1 | 3 |

| [57] | 2021 | C | China | IEEE | ResNet; BERT-BiGRU; ResNet-BERTBiGRU | Text-image data (benchmark) | ResNet-BERTBiGRU | Mavg.P: 98.0 Mavg.R: 98.0 Mavg.F1: 98.0 | None | 0 |

| [58] | 2021 | C | Indonesia | IEEE | SVM (Linear Kernel); SVM (Polynomial Kernel); SVM (RBF Kernel); SVM (Sigmoid Kernel) | EMR data from outpatient visits during 2017 to 2018 at a public hospital in Surabaya City, Indonesia (benchmark) | SVM (Sigmoid Kernel) | R: 76.46; P: 81.28; F1: 78.80; Ac: 91.0 | None | 0 |

| [24] | 2020 | C | China | IEEE | GM; Seq2Seq; CNN; LP; HBLA-A (This model can be seen as a combination of BERT and BiLSTM.) | ARXIV Academic Paper Dataset (AAPD); Reuters Corpus Volume I (RCV1-V2) | HBLA-A | Micro-P: 90.6; Micro-R: 89.2; Micro-F1: 89.9 | None | 1 |

| [15] | 2021 | C | China | IEEE | Text CNN; BERT; ALBERT | THUCNews; iFLYTEK | BERT | Ac: 96.63; P: 96.64; R: 96.63; F1: 96.61 | None | 0 |

| [16] | 2022 | J | Saudi Arabia | Scopus | BERT-base; BERT-large; RoBERTa-base; RoBERTa-large; DistilBERT; ALBERT-base-v2; XLM-RoBERTa-base; Electra-small; and BART-large | “COVID-19 fake news dataset” by Sumit Bank; extremist-non-extremist dataset | BERT-base | Ac: 99.71; P: 98.82; R: 97.84; F1: 98.33 | Q3 | 3 |

| [18] | 2020 | C | UK | WoSc | LSTM; Multilingual; BERT-base; SCIBERT; SCIBERT 2.0 | SQuAD | LSTM | P: 98.0; R: 98.0; F1: 98.0 | None | 10 |

| [13] | 2021 | J | China | WoSc | CNN, LSTM, BiLSTM, CNN-LSTM, CNN-BiLSTM, logistic regression, naïve Bayesian classifier (NBC), SVM, and BiGRU. QC-LSTM; BiGRU | Hallmarks dataset; AIM dataset | QC-LSTM | AC: 96.72 | Q3 | 1 |

| [25] | 2021 | J | China | WoSc | Seq2Seq; SQLNet; PtrGen; Coarse2Fine; TREQS; MedTS | MIMICSQL | MedTS | AC: 88.0 | Q2 | 0 |

| [26] | 2019 | J | China | WoSc | CNN; LSTM | DingXiangyisheng’s question and answer module (benchmark) | CNN | AC: 86.28 | Q1 | 1 |

| [27] | 2017 | J | USA | WoSc | Tf-Idf; CRNN | iDASH dataset; MGH dataset | CRNN | AUC: 99.1; F1: 84.5 | Q1 | 59 |

| [21] | 2017 | J | China | WoSc | CNN; LSTM; CNN-LSTM; GRU; DC-LSTM | cMedQA medical diagnosis dataset; Sentiment140 Twitter dataset | DC-LSTM | Ac: 97.2; P: 91.8; R: 91.8; F1: 91.0 | Q3 | 1 |

| [28] | 2020 | J | Taiwan | WoSc | CNN; CNN Based model | EMR Progress Notes from a medical center (benchmark) | CNN Based model | Ac: 58.0; P: 58.2; R: 57.9; F1: 58.0 | Q1 | 2 |

| [9] | 2019 | J | China | Scopus | CNN; RCNN; LSTM; AC-BiLSTM; SVM; Logistic-Regression | TCM—Traditional Chinese medicine dataset; CCKS dataset; Hallmarks—corpus dataset; AIM—Activating invasion and metastasis dataset | BIGRU | Hallmarks, Ac: 75.72; TCM, Ac: 89.09; CCKS, Ac: 93.75; AIM, Ac: 97.73 | Q2 | 15 |

| [59] | 2021 | J | China | WoSc | RoBERTa; ALBERT; transformers-sklearn based | TrialClassifcation, BC5CDR, DiabetesNER, and BIOSSES | transformers-sklearn based | Mavg-F1: 89.03 | Q1 | 2 |

| [7] | 2020 | C | UK | WoSc | BioBERT; BERT | MIMIC-III database | BioBERT | Ac: 90.05; P: 77.37; F1: 48.63 | None | 0 |

| [29] | 2019 | C | Finland | IEEE | BidirLSTM, LSTM, CNN, fastText, BoWLinearSVC, RandomForest, Word Heading Embeddings, Most Common, Random | clinical nursing shift notes (benchmark) | BidirLSTM | Avg-R: 54.35 | None | 3 |

| [19] | 2021 | J | South Africa | MDPI | Random forest, SVMLinear, SVMRadial | text dataset from NHLS-CDW | Random forest | F1: 95.34; R: 95.69 P: 94.60 Ac: 95.25 | Q2 | 2 |

| [30] | 2020 | J | Italy | Scopus | SVM | Medical records from digital health (benchmark) | SVM | Mavg-P: 88.6; Mavg-Ac: 80.0 | Q1 | 27 |

| [8] | 2020 | J | UK | WoSc | LSTM; LSTM-RNNs; SVM, Decision Tree; RF | MIMIC-III; CSU dataset | LSTM | F1: 91.0 | Q1 | 13 |

| [20] | 2020 | C | USA | ACM | CNN-MHA-BLSTM; CNN, LSTM | EMR text dataset (benchmark) | CNN-MHA-BLSTM | Ac: 91.99; F1: 92.03 | None | 22 |

| [31] | 2019 | C | USA | IEEE | MLP | EMR dataset (benchmark) | MLP | Ac: 82.0; F1: 82.0 | None | 1 |

| [12] | 2019 | C | USA | Arxiv | BERT-base, ELMo, BioBERT | PubMed abstract; MIMIC III | BERT-base | Ac: 82.3 | None | 0 |

| [32] | 2020 | J | France | Arxiv | MLP, CNN CNN 1D, MobileNetV2, MobileNetV2 (w/ DA) | RVL-CDIP dataset | MobileNetV2 | F1: 82.0 | Q1 | 55 |

| [33] | 2016 | C | USA | ACM | Med2Vec | CHOA dataset | Med2Vec | R: 91.0 | None | 378 |

| [52] | 2018 | C | Canada | Arxiv | word2vec, Hill, dict2vec | MENd dataset; SV-d dataset | word2vec | Spearman.C.C: 65.3 | None | 37 |

| [34] | 2017 | C | Switzerland | Arxiv | biGRU, GRU, DENSE | RCV1/RCV2 dataset | biGRU | F1: 84.0 | None | 34 |

| [11] | 2021 | J | China | Arxiv | Logistic regression; SWAM-CAML; SWAM-text CNN | MIMIC-III full dataset; MIMIC-III 50 dataset | SWAM-text CNN | F1: 60.0 | Q1 | 6 |

| [35] | 2022 | J | China | MDPI | LSTM, CNN, GRU, Capsule+GRU, Capsule+LSTM | Chinese electronic medical record dataset | Capsule+LSTM | F1: 73.51 | Q2 | 2 |

| [36] | 2022 | C | USA | Arxiv | BERTtiny; LinkBERTtiny, GPT-3, BioLinkBERT, UnifiedQA | MedQA-USMLE; MMLU-professional medicine | BioLinkBERT | Ac: 50.0 | None | 4 |

| [17] | 2022 | J | USA | Arxiv | CNN, LSTM, RNN, GRU, Bi-LSTM, Transformers, Bert-based | Harvard obesity 2008 challenge dataset | Bert-based | Ac: 94.7 | Q1 | 0 |

| P. | M1 | M2 | M3 | M4 | M5 | M6 | M7 | M8 | M9 | M10 | M11 | Result |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| [10] | 1 | 1 | 1 | 0 | 1 | 1 | 1 | 1 | 1 | 0 | 4 | 12/15 |

| [23] | 1 | 1 | 1 | 0 | 1 | 1 | 1 | 1 | 1 | 0 | 4 | 12/15 |

| [22] | 1 | 1 | 1 | 0 | 1 | 1 | 0 | 2 | 1 | 0 | 4 | 12/15 |

| [57] | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 2 | 0 | 0 | Conf. | 6/11 |

| [58] | 1 | 1 | 0 | 0 | 1 | 0 | 0 | 2 | 0 | 0 | Conf. | 5/11 |

| [24] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 1 | 0 | Conf. | 9/11 |

| [15] | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 2 | 0 | 0 | Conf. | 6/11 |

| [16] | 1 | 1 | 1 | 0 | 1 | 0 | 0 | 2 | 0.5 | 0 | 2 | 8.5/15 |

| [18] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 1 | 0 | Conf. | 9/11 |

| [13] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 0.5 | 1 | 2 | 11.5/15 |

| [25] | 1 | 1 | 1 | 0 | 1 | 0 | 1 | 2 | 1 | 0 | 3 | 11/15 |

| [26] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 1.5 | 0.5 | 0 | 4 | 12/15 |

| [27] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 1 | 1 | 4 | 14/15 |

| [21] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 0.5 | 0 | 3 | 11/15 |

| [28] | 1 | 1 | 0 | 1 | 1 | 0 | 1 | 0.5 | 0.5 | 0 | 4 | 9/15 |

| [9] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 1 | 0 | 3 | 12/15 |

| [59] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 1.5 | 0.5 | 0 | 4 | 12/15 |

| [7] | 1 | 1 | 1 | 1 | 0 | 0 | 1 | 1.5 | 0 | 0 | Conf. | 6.5/11 |

| [29] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 0 | 0.5 | 0 | Conf. | 6.5/11 |

| [19] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 0.5 | 0 | 3 | 11.5/15 |

| [30] | 1 | 1 | 0 | 1 | 1 | 1 | 0 | 1.5 | 1 | 0 | 4 | 11.5/15 |

| [8] | 1 | 1 | 1 | 1 | 1 | 0 | 0 | 2 | 1 | 0 | 4 | 12/15 |

| [20] | 1 | 1 | 1 | 1 | 1 | 1 | 0 | 2 | 1 | 0 | Conf. | 9/11 |

| [31] | 1 | 1 | 0 | 1 | 1 | 1 | 0 | 1.5 | 0.5 | 0 | Conf. | 7/11 |

| [12] | 1 | 1 | 1 | 1 | 1 | 0 | 0 | 0 | 0 | 1 | Conf. | 6/11 |

| [32] | 1 | 1 | 1 | 1 | 1 | 0 | 2 | 1.5 | 1 | 1 | 4 | 14.5/15 |

| [33] | 1 | 1 | 0 | 1 | 1 | 1 | 2 | 1 | 1 | 1 | Conf. | 10/11 |

| [52] | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | Conf. | 9.5/11 |

| [34] | 1 | 1 | 1 | 1 | 1 | 1 | 1.5 | 1 | 1 | 1 | Conf. | 10.5/11 |

| [11] | 1 | 1 | 1 | 1 | 1 | 0 | 0 | 1 | 1 | 1 | 4 | 12/15 |

| [35] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 1 | 0.5 | 1 | 3 | 11/15 |

| [36] | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0 | 0.5 | 1 | Conf. | 8/11 |

| [17] | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 2 | 0 | 1 | 4 | 13/15 |

References

- Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. Efficient estimation of word representations in vector space. arXiv 2013, arXiv:1301.3781.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the 31st Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA, 4–9 December 2017; Volume 30.

- World Health Organization. The International Classification of Diseases, 10th Revision. 2015. Available online: https://icd.who.int/browse10/2015/en (accessed on 4 August 2021).

- Chen, P.; Wang, S.; Liao, W.; Kuo, L.; Chen, K.; Lin, Y.; Yang, C.; Chiu, C.; Chang, S.; Lai, F. Automatic ICD-10 Coding and Training System: Deep Neural Network Based on Supervised Learning. JMIR Med. Inform. 2021, 9, e23230.

- Zahia, S.; Zapirain, M.B.; Sevillano, X.; González, A.; Kim, P.J.; Elmaghraby, A. Pressure injury image analysis with machine learning techniques: A systematic review on previous and possible future methods. Artif. Intell. Med. 2020, 102, 101742.

- Urdaneta-Ponte, M.C.; Mendez-Zorrilla, A.; Oleagordia-Ruiz, I. Recommendation Systems for Education: Systematic Review. Electronics 2021, 10, 1611.

- Amin-Nejad, A.; Ive, J.; Velupillai, S. Exploring Transformer Text Generation for Medical Dataset Augmentation. In Proceedings of the Twelfth Language Resources and Evaluation Conference (LREC), Palais du Pharo, Marseille, France, 11–16 May 2020; Available online: https://aclanthology.org/2020.lrec-1.578 (accessed on 4 August 2021).

- Venkataraman, G.R.; Pineda, A.L.; Bear Don’t Walk, O.J., IV; Zehnder, A.M.; Ayyar, S.; Page, R.L.; Bustamante, C.D.; Rivas, M.A. FasTag: Automatic text classification of unstructured medical narratives. PLoS ONE 2020, 15, e0234647.

- Qing, L.; Linhong, W.; Xuehai, D. A Novel Neural Network-Based Method for Medical Text Classification. Future Internet 2019, 11, 255.

- Gangavarapu, T.; Jayasimha, A.; Krishnan, G.S.; Kamath, S. Predicting ICD-9 code groups with fuzzy similarity based supervised multi-label classification of unstructured clinical nursing notes. Knowl.-Based Syst. 2020, 190, 105321.

- Hu, S.; Teng, F.; Huang, L.; Yan, J.; Zhang, H. An explainable CNN approach for medical codes prediction from clinical text. BMC Med. Inform. Decis. Mak. 2021, 21, 256.

- Peng, Y.; Yan, S.; Lu, Z. Transfer Learning in Biomedical Natural Language Processing: An Evaluation of BERT and ELMo on Ten Benchmarking Datasets. arXiv 2019, arXiv:1906.05474.

- Prabhakar, S.K.; Won, D.O. Medical Text Classification Using Hybrid Deep Learning Models with Multihead Attention. Comput. Intell. Neurosci. 2021, 2021, 9425655.

- Pappagari, R.; Zelasko, P.; Villalba, J.; Carmiel, Y.; Dehak, N. Hierarchical Transformers for Long Document Classification. In Proceedings of the 2019 IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), Sentosa, Singapore, 14–18 December 2019; pp. 838–844.

- Fang, F.; Hu, X.; Shu, J.; Wang, P.; Shen, T.; Li, F. Text Classification Model Based on Multi-head self-attention mechanism and BiGRU. In Proceedings of the 2021 IEEE Conference on Telecommunications, Optics and Computer Science (TOCS), Shenyang, China, 11–13 December 2021; pp. 357–361.

- Qasim, R.; Bangyal, W.H.; Alqarni, M.A.; Ali Almazroi, A. A Fine-Tuned BERT-Based Transfer Learning Approach for Text Classification. J. Healthc. Eng. 2022, 2022, 3498123.

- Lu, H.; Ehwerhemuepha, L.; Rakovski, C. A comparative study on deep learning models for text classification of unstructured medical notes with various levels of class imbalance. BMC Med. Res. Methodol. 2022, 22, 181.

- Schmidt, L.; Weeds, J.; Higgins, J. Data Mining in Clinical Trial Text: Transformers for Classification and Question Answering Tasks. arXiv 2020, arXiv:2001.11268.

- Achilonu, O.J.; Olago, V.; Singh, E.; Eijkemans, R.M.J.C.; Nimako, G.; Musenge, E. A Text Mining Approach in the Classification of Free-Text Cancer Pathology Reports from the South African National Health Laboratory Services. Information 2021, 12, 451.

- Shen, Z.; Zhang, S. A Novel Deep-Learning-Based Model for Medical Text Classification. In Proceedings of the 2020 9th International Conference on Computing and Pattern Recognition (ICCPR 2020), Xiamen, China, 30 October–1 November 2020; Association for Computing Machinery: New York, NY, USA, 2020; pp. 267–273.

- Liang, S.; Chen, X.; Ma, J.; Du, W.; Ma, H. An Improved Double Channel Long Short-Term Memory Model for Medical Text Classification. J. Healthc. Eng. 2021, 2021, 6664893.

- Wang, S.; Pang, M.; Pan, C.; Yuan, J.; Xu, B.; Du, M.; Zhang, H. Information Extraction for Intestinal Cancer Electronic Medical Records. IEEE Access 2020, 8, 125923–125934.

- Gangavarapu, T.; Krishnan, G.S.; Kamath, S.; Jeganathan, J. FarSight: Long-Term Disease Prediction Using Unstructured Clinical Nursing Notes. IEEE Trans. Emerg. Top. Comput. 2021, 9, 1151–1169.

- Cai, L.; Song, Y.; Liu, T.; Zhang, K. A Hybrid BERT Model That Incorporates Label Semantics via Adjustive Attention for Multi-Label Text Classification. IEEE Access 2020, 8, 152183–152192.

- Pan, Y.; Wang, C.; Hu, B.; Xiang, Y.; Wang, X.; Chen, Q.; Chen, J.; Du, J. A BERT-Based Generation Model to Transform Medical Texts to SQL Queries for Electronic Medical Records: Model Development and Validation. JMIR Med. Inform. 2021, 9, e32698.

- Liu, K.; Chen, L. Medical Social Media Text Classification Integrating Consumer Health Terminology. IEEE Access 2019, 7, 78185–78193.

- Weng, W.H.; Wagholikar, K.B.; McCray, A.T.; Szolovits, P.; Chueh, H.C. Medical subdomain classification of clinical notes using a machine learning-based natural language processing approach. BMC Med. Inform. Decis. Mak. 2017, 17, 155.

- Hsu, J.-L.; Hsu, T.-J.; Hsieh, C.-H.; Singaravelan, A. Applying Convolutional Neural Networks to Predict the ICD-9 Codes of Medical Records. Sensors 2020, 20, 7116.

- Moen, H.; Hakala, K.; Peltonen, L.M.; Suhonen, H.; Ginter, F.; Salakoski, T.; Salanterä, S. Supporting the use of standardized nursing terminologies with automatic subject heading prediction: A comparison of sentence-level text classification methods. J. Am. Med. Inform. Assoc. 2020, 27, 81–88.

- Chintalapudi, N.; Battineni, G.; Canio, M.D.; Sagaro, G.G.; Amenta, F. Text mining with sentiment analysis on seafarers’ medical documents. Int. J. Inf. Manag. Data Insights 2021, 1, 100005.

- Al-Doulat, A.; Obaidat, I.; Lee, M. Unstructured Medical Text Classification using Linguistic Analysis: A Supervised Deep Learning Approach. In Proceedings of the 2019 IEEE/ACS 16th International Conference on Computer Systems and Applications (AICCSA), Abu Dhabi, United Arab Emirates, 3–7 November 2019; pp. 1–7.

- Audebert, N.; Herold, C.; Slimani, K.; Vidal, C. Multimodal Deep Networks for Text and Image-Based Document Classification. In Proceedings of the Joint European Conference on Machine Learning and Knowledge Discovery in Databases, Würzburg, Germany, 16–20 September 2020.

- Choi, E.; Bahadori, M.T.; Searles, E.; Coffey, C.; Thompson, M.; Bost, J.; Tejedor-Sojo, J.; Sun, J. Multi-layer Representation Learning for Medical Concepts. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD ’16), San Francisco, CA, USA, 13–17 August 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 1495–1504.

- Pappas, N.; Popescu-Belis, A. Multilingual hierarchical attention networks for document classification. arXiv 2017, arXiv:1707.00896.

- Zhang, Q.; Yuan, Q.; Lv, P.; Zhang, M.; Lv, L. Research on Medical Text Classification Based on Improved Capsule Network. Electronics 2022, 11, 2229.

- Yasunaga, M.; Leskovec, J.; Liang, P. LinkBERT: Pretraining Language Models with Document Links. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Dublin, Ireland, 22–27 May 2022; Association for Computational Linguistics: Dublin, Ireland, 2022; pp. 8003–8016.

- Zhang, D.; Mishra, S.; Brynjolfsson, E.; Etchemendy, J.; Ganguli, D.; Grosz, B.; Lyons, T.; Manyika, J.; Niebles, J.C.; Sellitto, M.; et al. “The AI Index 2022 Annual Report,” AI Index Steering Committee; Stanford Institute for Human-Centered AI, Stanford University: Stanford, CA, USA, 2022.

- Le, Q.; Mikolov, T. Distributed representations of sentences and documents. In Proceedings of the International Conference on Machine Learning (PMLR), Beijing, China, 22–24 June 2014; pp. 1188–1196.

- Joulin, A.; Grave, E.; Bojanowski, P.; Mikolov, T. Bag of Tricks for Efficient Text Classification. arXiv 2016, arXiv:1607.01759.

- Radford, A.; Narasimhan, K.; Salimans, T.; Sutskever, I. Improving Language Understanding by Generative Pre-Training. 2018. Available online: https://www.cs.ubc.ca/~amuham01/LING530/papers/radford2018improving.pdf (accessed on 10 October 2022).

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019, arXiv:1907.11692.

- Abreu, J.; Fred, L.; Macêdo, D.; Zanchettin, C. Hierarchical Attentional Hybrid Neural Networks for Document Classification. In Proceedings of the International Conference on Artificial Neural Networks, Munich, Germany, 17–19 September 2019.

- Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.R.; Le, Q.V. XLNet: Generalized Autoregressive Pretraining for Language Understanding. arXiv 2019, arXiv:1906.08237.

- Fries, J.A.; Weber, L.; Seelam, N.; Altay, G.; Datta, D.; Garda, S.; Kang, M.; Su, R.; Kusa, W.; Cahyawijaya, S.; et al. BigBIO: A Framework for Data-Centric Biomedical Natural Language Processing. arXiv 2022, arXiv:2206.15076.

- Zunic, A.; Corcoran, P.; Spasic, I. Sentiment Analysis in Health and Well-Being: Systematic Review. JMIR Med. Inform. 2020, 8, e16023.

- Aattouchi, I.; Elmendili, S.; Elmendili, F. Sentiment Analysis of Health Care: Review. E3S Web Conf. 2021, 319, 01064.

- Tai, K.S.; Socher, R.; Manning, C.D. Improved Semantic Representations From Tree-Structured Long Short-Term Memory Networks. arXiv 2015, arXiv:1503.00075.

- Nii, M.; Tsuchida, Y.; Kato, Y.; Uchinuno, A.; Sakashita, R. Nursing-care text classification using word vector representation and convolutional neural networks. In Proceedings of the 2017 Joint 17th World Congress of International Fuzzy Systems Association and 9th International Conference on Soft Computing and Intelligent Systems (IFSA-SCIS), Otsu, Japan, 27–30 June 2017; pp. 1–5.

- Qian, Y.; Woodland, P.C. Very Deep Convolutional Neural Networks for Robust Speech Recognition. arXiv 2016, arXiv:1607.01759.

- Zhang, Y.; Wallace, B. A sensitivity analysis of (and practitioners’ guide to) convolutional neural networks for sentence classification. arXiv 2015, arXiv:1510.03820.

- Hossin, M.; Sulaiman, M.N. A Review on Evaluation Metrics for Data Classification Evaluations. Int. J. Data Min. Knowl. Manag. Process 2015, 5, 1–11.

- Bosc, T.; Vincent, P. Auto-Encoding Dictionary Definitions into Consistent Word Embeddings. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, 31 October–4 November 2018; pp. 1522–1532.

- Spearman, C. ‘General Intelligence,’ Objectively Determined and Measured. Am. J. Psychol. 1904, 15, 201–292.

- Zhan, X.; Wang, F.; Gevaert, O. Reliably Filter Drug-Induced Liver Injury Literature With Natural Language Processing and Conformal Prediction. IEEE J. Biomed. Health Inform. 2022, 26, 5033–5041.

- Rathee, S.; MacMahon, M.; Liu, A.; Katritsis, N.; Youssef, G.; Hwang, W.; Wollman, L.; Han, N. DILIc: An AI-based classifier to search for Drug-Induced Liver Injury literature. bioRxiv 2022.

- Oh, J.H.; Tannenbaum, A.R.; Deasy, J.O. Automatic identification of drug-induced liver injury literature using natural language processing and machine learning methods. bioRxiv 2022.

- Chen, Y.; Zhang, X.; Li, T. Medical Records Classification Model Based on Text-Image Dual-Mode Fusion. In Proceedings of the 2021 4th International Conference on Artificial Intelligence and Big Data (ICAIBD), Chengdu, China, 28–31 May 2021; pp. 432–436.

- Jamaluddin, M.; Wibawa, A.D. Patient Diagnosis Classification based on Electronic Medical Record using Text Mining and Support Vector Machine. In Proceedings of the 2021 International Seminar on Application for Technology of Information and Communication (iSemantic), Semarang, Indonesia, 18–19 September 2021; pp. 243–248.

- Yang, F.; Wang, X.; Ma, H.; Li, J. Transformers-sklearn: A toolkit for medical language understanding with transformer-based models. BMC Med. Inform. Decis. Mak. 2021, 21, 90.

| | Question | Purpose |
|---|---|---|
| Q1 | What are the best NLP methods used in medical text classification? | To describe the best methods used in the medical classification framework, based on the evaluation metrics, and to identify the challenges. |
| Q2 | How are medical text classification datasets constructed? | To study the composition and description of medical texts in the classification task. |
| Q3 | In terms of data, what are the most common problems that medical text classification can solve? | To understand and highlight the common problems and challenges addressed in medical text-based problem solving. |
| Q4 | What are the most used evaluation metrics in medical document classification? | To identify the metrics most commonly used in medical document classification. |

| Criteria | Description |
|---|---|
| Date | The publications included in this research were screened between 1 January 2016 and 10 July 2022. This range was dictated by the quantity of relevant articles to filter, given the fast advancement of machine learning and deep learning models. |
| Type of publication | Filtering was performed on two categories of publications: papers published at international conferences and articles published in international journals. |
| Ranking | To select the best articles, we systematically used the ranking of the journals in which they were published, considering the rankings Q1, Q2, and Q3. This criterion was not applied to papers presented at conferences. |
| Type of problem | Only articles on biomedical text or image-text classification were evaluated under this criterion. |
| Citations | This criterion was given less weight, particularly for recently published articles, such as those from 2021 and 2022. |
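The hard criteria in the table above (date window, publication type, journal ranking, type of problem) can be read as a simple filter. The following is a minimal sketch under stated assumptions, not the authors' screening procedure: the `Candidate` record, its field names, and the function `meets_inclusion_criteria` are hypothetical, the date window is assumed to apply to the publication date, and the citation criterion is left out because the table describes it only as a low-weight consideration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Date window and accepted journal rankings taken from the criteria table.
WINDOW_START = date(2016, 1, 1)
WINDOW_END = date(2022, 7, 10)
ACCEPTED_RANKS = {"Q1", "Q2", "Q3"}


@dataclass
class Candidate:
    """Hypothetical record for one screened publication."""
    title: str
    published: date
    venue_type: str              # "journal" or "conference"
    journal_rank: Optional[str]  # "Q1".."Q4" for journals, None otherwise
    on_topic: bool               # biomedical text or image-text classification


def meets_inclusion_criteria(c: Candidate) -> bool:
    """Apply the date, topic, publication-type, and ranking criteria."""
    if not (WINDOW_START <= c.published <= WINDOW_END):
        return False
    if not c.on_topic:
        return False
    if c.venue_type == "conference":
        # The ranking criterion is not applied to conference papers.
        return True
    if c.venue_type == "journal":
        return c.journal_rank in ACCEPTED_RANKS
    return False
```

For example, a Q1 journal article from 2020 on clinical-note classification passes, while an otherwise identical Q4 article does not.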

| Category | Metric | Description | Value | Weight |
|---|---|---|---|---|
| Metrics based on the text content of the paper (5 points) | M1 | Provides a clear and balanced summary according to the context of the problem solved in the paper | No/Yes [0, 1] | 1 |
| | M2 | Provides details of the model’s performance metrics and the entire evaluation process | [0, 1] | 1 |
| | M3 | Implements one or more medical text classification models | [0, 1] | 1 |
| | M4 | Compares the results with other similar work and presents the limitations of this work | [0, 1] | 1 |
| | M5 | Contains a deep and rich discussion of the results obtained | [0, 1] | 1 |
| Other quality metrics (10 points) | M6 | Innovation | [0, 1] | 1 |
| | M7 | The dataset used in the research is a benchmark or has been made publicly available | No/Yes [0, 1] | 1 |
| | M8 | Performance (accuracy) | Reported result of 60–70%: 0.5; 71–80%: 1; 81–90%: 1.5; 91% or more: 2; otherwise: 0 | 2 |
| | M9 | Citations | Cited 0 times: 0; 1–4 times: 0.5; 6+ times: 1 | 1 |
| | M10 | Availability of source code | [0, 1] | 1 |
| | M11 | Journal ranking | Q1: 4; Q2: 3; Q3: 2; Q4: 1 | 4 |
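The scoring rules for M8, M9, and M11 in the table above amount to a small piece of arithmetic. The sketch below is one possible reading, offered under stated assumptions rather than as the authors' procedure: the function names are hypothetical, values that fall between the stated bands (for example 70.5%) are assigned to the lower band, a citation count of exactly 5 (which the table leaves undefined) is treated as 0.5, and conference papers are scored out of 11 because M11 does not apply to them.

```python
from typing import Optional, Sequence, Tuple

# M11 points per journal quartile, as listed in the quality-metric table.
RANK_POINTS = {"Q1": 4.0, "Q2": 3.0, "Q3": 2.0, "Q4": 1.0}


def performance_points(result_pct: float) -> float:
    """M8: map a reported performance percentage to 0-2 points."""
    if result_pct >= 91:
        return 2.0
    if result_pct >= 81:
        return 1.5
    if result_pct >= 71:
        return 1.0
    if result_pct >= 60:
        return 0.5
    return 0.0


def citation_points(citations: int) -> float:
    """M9: 0 citations -> 0, 1-4 -> 0.5, 6+ -> 1 (5 is assumed to score 0.5)."""
    if citations >= 6:
        return 1.0
    if citations >= 1:
        return 0.5
    return 0.0


def quality_score(
    m1_to_m7: Sequence[float],
    result_pct: float,
    citations: int,
    code_available: bool,
    journal_rank: Optional[str] = None,
) -> Tuple[float, int]:
    """Return (score, maximum); the maximum is 15 for journals, 11 otherwise."""
    if len(m1_to_m7) != 7:
        raise ValueError("expected seven values for M1..M7")
    score = sum(m1_to_m7)
    score += performance_points(result_pct)
    score += citation_points(citations)
    score += 1.0 if code_available else 0.0
    if journal_rank is None:
        return score, 11
    return score + RANK_POINTS[journal_rank], 15


# Row [27] of the appendix: M1..M7 = 1,1,1,1,1,0,1, AUC 99.1, 59 citations,
# source code available (M10 = 1), Q1 journal.
score, maximum = quality_score([1, 1, 1, 1, 1, 0, 1], 99.1, 59, True, "Q1")
print(f"{score:g}/{maximum}")
```

With these inputs the sketch reproduces the 14/15 recorded for paper [27]; other appendix rows may differ where the reviewers scored a metric by judgment rather than by the stated bands.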

| Score | No. of Journal Papers | No. of Conference Papers | Total |

|---|---|---|---|

| Excellence | 3 | 6 | 9 |

| Very good | 14 | 7 | 21 |

| Good | 2 | 1 | 3 |

| Sufficient | 0 | 0 | 0 |

| Deficient | 0 | 0 | 0 |

| Total | 19 | 14 | 33 |

| Methods | Dataset | Accuracy | Precision | Recall | F1-Score |

|---|---|---|---|---|---|

| TAGS [10] | MIMIC-III dataset | 82.00% | - | - | - |

| SWAM-text CNN [11] | MIMIC-III dataset | - | - | - | 60.00% |

| BioBERT [7] | MIMIC-III database | 90.05% | 77.37% | - | 48.63% |

| BERT-base [12] | MIMIC III dataset | 82.30% | - | - | 82.20% |

| LSTM [8] | MIMIC-III dataset | - | - | - | 91.00% |

| QC-LSTM; BiGRU [13] | AIM dataset | 96.72% | - | - | - |

| BIGRU [9] | AIM dataset | 97.73% | - | - | - |

| Methods | Dataset | Accuracy | Precision | Recall | F1-Score |

|---|---|---|---|---|---|

| TAGS [10] | MIMIC-III | 82.00% | - | - | - |

| SWAM-text CNN [11] | MIMIC-III full dataset; MIMIC-III 50 dataset | - | - | - | 60.00% |

| BioBERT [7] | MIMIC-III database | 90.05% | 77.37% | - | 48.63% |

| BERT-base [12] | PubMed abstract; MIMIC III | 82.30% | - | - | 82.20% |

| LSTM [8] | MIMIC-III; CSU dataset | - | - | - | 91.00% |

| QC-LSTM; BiGRU [13] | Hallmarks dataset; AIM dataset | 96.72% | - | - | - |

| BIGRU [9] | TCM—Traditional Chinese medicine dataset; CCKS dataset; Hallmarks—corpus dataset; AIM—Activating invasion and metastasis dataset | 97.73% | - | - | - |

| Conv-LSTM [23] | EMR text data (benchmark) | 83.30% | - | - | - |

| MT-MI-BiLSTM-ATT [22] | EMR data set comes from a hospital (benchmark) | 93.00% | - | - | 87.00% |

| SVM (Sigmoid Kernel) [58] | EMR data from outpatient visits during 2017 to 2018 at a public hospital in Surabaya City, Indonesia (benchmark) | 88.40% | 81.28% | 76.46% | 78.80% |

| BERT [15] | THUCNews; iFLYTEK | 96.63% | 96.64% | 96.63% | 96.61% |

| BERT-base [16] | “COVID-19 fake news dataset” by Sumit Bank; extremist-non-extremist dataset | 99.71% | 98.82% | 97.84% | 98.33% |

| LSTM [18] | SQuAD | - | 98.00% | 98.00% | 98.00% |

| MedTS [25] | MIMICSQL | 88.00% | - | - | - |

| CNN [26] | DingXiangyisheng’s question and answer module (benchmark) | 86.28% | - | - | - |

| CRNN [27] | iDASH dataset; MGH dataset | - | - | - | 84.50% |

| Double-channel (DC-LSTM) [21] | cMedQA medical diagnosis dataset; Sentiment140 Twitter dataset | 97.20% | 91.80% | 91.80% | 91.00% |

| CNN Based model [28] | EMR Progress Notes from a medical center (benchmark) | 58.00% | 58.20% | 57.90% | 58.00% |

| BidirLSTM [29] | clinical nursing shift notes (benchmark) | - | - | - | - |

| Random forest [19] | Text dataset from NHLS-CDW | 95.25% | 94.60% | 95.69% | 95.34% |

| SVM [30] | Medical records from digital health (benchmark) | 80.00% | - | - | - |

| CNN-MHA-BLSTM [20] | EMR text dataset (benchmark) | 91.99% | - | - | 92.03% |

| MLP [31] | EMR dataset (benchmark) | 82.00% | - | - | 82.00% |

| MobileNetV2 [32] | RVL-CDIP dataset | - | - | - | 82.00% |

| Med2Vec [33] | CHOA dataset | - | - | 91.00% | - |

| biGRU [34] | RCV1/RCV2 dataset | - | - | - | 84.00% |

| Capsule+LSTM [35] | Chinese electronic medical record dataset | - | - | - | 73.51% |

| BioLinkBERT [36] | MedQA-USMLE; MMLU-professional medicine | 50.00% | - | - | - |

| Bert-based [17] | Harvard obesity 2008 challenge dataset | 94.70% | - | - | - |
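The four column headings in the table above are standard classification metrics. As a minimal sketch, assuming the usual single-class definitions from confusion-matrix counts (the reviewed papers may instead report micro- or macro-averaged variants), they can be computed as follows; the function names are illustrative only.

```python
def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Share of all predictions that are correct."""
    total = tp + fp + fn + tn
    return (tp + tn) / total if total else 0.0


def precision(tp: int, fp: int) -> float:
    """Share of predicted positives that are truly positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of true positives that the classifier recovers."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p: float, r: float) -> float:
    """Harmonic mean of precision p and recall r."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Illustrative counts (hypothetical, not from any reviewed paper).
tp, fp, fn, tn = 8, 2, 4, 6
p, r = precision(tp, fp), recall(tp, fn)
print(accuracy(tp, fp, fn, tn), p, r, f1_score(p, r))

# Consistency check against the SVM (Sigmoid Kernel) row of the table:
# precision 81.28% and recall 76.46% give an F1-score of about 78.80%.
print(round(f1_score(81.28, 76.46), 2))
```

Because F1 is a harmonic mean, a row that reports precision and recall can be cross-checked this way; rows that report averaged metrics will not necessarily satisfy the identity.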

| Parameter | Category | No. of Papers | Percentage |
|---|---|---|---|
| Location | Southern Africa | 1 | 3.0% |
| | Africa (subtotal) | 1 | 3.0% |
| | Eastern Asia | 13 | 39.4% |
| | Southern Asia | 2 | 6.1% |
| | Western Asia | 1 | 3.0% |
| | South-Eastern Asia | 1 | 3.0% |
| | Asia (subtotal) | 17 | 51.5% |
| | Northern Europe | 3 | 9.1% |
| | Eastern Europe | 1 | 3.0% |
| | Southern Europe | 2 | 6.1% |
| | Western Europe | 2 | 6.1% |
| | Europe (subtotal) | 8 | 24.3% |
| | Northern America | 7 | 21.2% |
| | America (subtotal) | 7 | 21.2% |
| Database | Arxiv | 7 | 21.2% |
| | ACM | 2 | 6.1% |
| | MDPI | 2 | 6.1% |
| | WoSc | 10 | 30.3% |
| | Scopus | 4 | 12.1% |
| | IEEE | 8 | 24.2% |
| Type of publication | Conference | 14 | 42.4% |
| | Journal | 19 | 57.6% |

| Parameter | Category | No. of Papers | Percentage |
|---|---|---|---|
| Year | 2016 | 1 | 3.0% |
| | 2017 | 3 | 9.1% |
| | 2018 | 1 | 3.0% |
| | 2019 | 5 | 15.1% |
| | 2020 | 11 | 33.3% |
| | 2021 | 8 | 24.3% |
| | 2022 | 4 | 12.1% |
| Paper ranking | Q1 | 12 | 36.4% |
| | Q2 | 3 | 9.1% |
| | Q3 | 3 | 9.1% |
| | Conference | 15 | 45.5% |
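The frequency columns above are counts and their share of the 33 reviewed papers. A minimal sketch of that tabulation follows; the helper name `frequency_table` is hypothetical, and plain rounding to one decimal is an assumption. Under that assumption a few entries come out 0.1 away from the printed values (for example 5 of 33 rounds to 15.2%), which suggests, but does not confirm, that the printed percentages were adjusted so that each block sums to 100%.

```python
from collections import Counter
from typing import Dict, Hashable, Iterable, Tuple


def frequency_table(values: Iterable[Hashable]) -> Dict[Hashable, Tuple[int, float]]:
    """Map each category to (count, percentage of total rounded to 0.1)."""
    counts = Counter(values)
    total = sum(counts.values())
    return {
        key: (n, round(100 * n / total, 1))
        for key, n in sorted(counts.items())
    }


# Publication years rebuilt from the per-year counts in the table above.
years = (
    [2016] * 1 + [2017] * 3 + [2018] * 1 + [2019] * 5
    + [2020] * 11 + [2021] * 8 + [2022] * 4
)
by_year = frequency_table(years)
for year, (n, pct) in by_year.items():
    print(year, n, f"{pct}%")
```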

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kesiku, C.Y.; Chaves-Villota, A.; Garcia-Zapirain, B. Natural Language Processing Techniques for Text Classification of Biomedical Documents: A Systematic Review. Information 2022, 13, 499. https://doi.org/10.3390/info13100499
