Cognitive Digital Twins for Resilience in Production: A Conceptual Framework

Abstract

1. Introduction and Motivation

2. Cognition in Manufacturing

3. Industrial Production Cases

3.1. Industrial Case Descriptions

3.1.1. Oil Refinery: LPG Off-Specs Production Recovery

3.1.2. Waste-to-Fuel Transformation: Mitigation of Clogging Problems and New Feedstock Set-Up

3.1.3. Automotive Electronics: Production Scheduling with Predictive Maintenance

3.1.4. Steel Processing: Production Scheduling Based on Machine and Crane Performance

3.1.5. Textile Industry: Production Scheduling with New Orders and Disruptions Caused by Looms or Yarns

3.2. Industrial Case Needs

- Real-time monitoring of the production processes and the corresponding manufacturing elements,

- Anomaly detection with respect to the monitored manufacturing elements,

- Root-cause identification focused on the corresponding events that disrupt production,

- Impact assessment of the disruptive events on the current production schedule/plan to evaluate the need for recovery actions,

- Decision support to drive the corresponding recovery actions and

- Evaluation of alternatives to enable informed decision making.
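To make the chain of needs listed above more concrete, the following is a minimal sketch of how three of them (anomaly detection, impact assessment and evaluation of alternatives) might be connected in code. It is illustrative only and is not the framework's implementation: the `Reading` type, the function names, the z-score threshold, the element id `loom-3`, the delay table and the scoring rule are all assumptions introduced here. Root-cause identification and decision support are deliberately left out, since they depend on case-specific models.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Reading:
    element: str  # hypothetical manufacturing element id, e.g. "loom-3"
    value: float  # monitored quantity (the unit is an assumption)


def detect_anomalies(history, latest, threshold=3.0):
    """Flag readings whose z-score against the element's history exceeds threshold."""
    flagged = []
    for r in latest:
        past = history.get(r.element, [])
        if len(past) < 2:
            continue  # not enough data to estimate the spread
        mu, sigma = mean(past), stdev(past)
        if sigma > 0 and abs(r.value - mu) / sigma > threshold:
            flagged.append(r)
    return flagged


def assess_impact(anomalies, delay_per_element):
    """Sum the assumed schedule delay (hours) attributed to each anomalous element."""
    return sum(delay_per_element.get(a.element, 0.0) for a in anomalies)


def evaluate_alternatives(alternatives, impact_hours):
    """Rank recovery actions by assumed cost plus the delay left unrecovered.

    Adding cost and hours directly is a simplification made for this sketch.
    """
    def score(alt):
        residual = max(impact_hours - alt["recovers_hours"], 0.0)
        return alt["cost"] + residual
    return sorted(alternatives, key=score)


# Hypothetical data: a loom whose latest reading departs from its history.
history = {"loom-3": [10.0, 10.2, 9.9, 10.1, 10.0]}
latest = [Reading("loom-3", 14.0)]

anomalies = detect_anomalies(history, latest)
impact = assess_impact(anomalies, {"loom-3": 6.0})
ranked = evaluate_alternatives(
    [
        {"name": "reschedule", "cost": 2.0, "recovers_hours": 5.0},
        {"name": "wait", "cost": 0.0, "recovers_hours": 0.0},
    ],
    impact,
)
```

A simple statistical threshold stands in for anomaly detection here only because it is easy to follow; any detector that returns the affected elements could feed the same impact and ranking steps.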

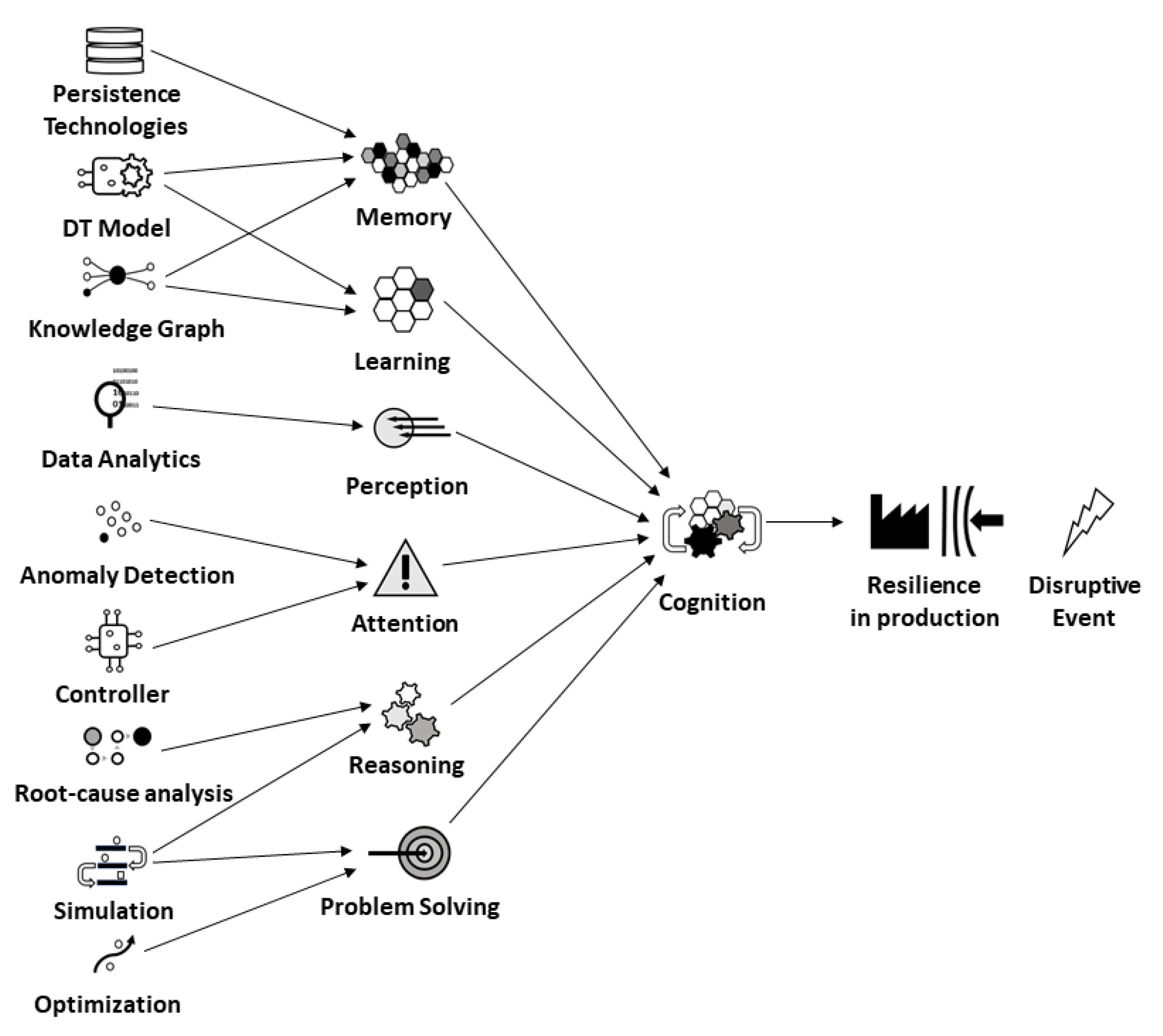

4. Resilience in Production through Cognitive Capabilities

5. Implementing CDTs for Resilience in Production

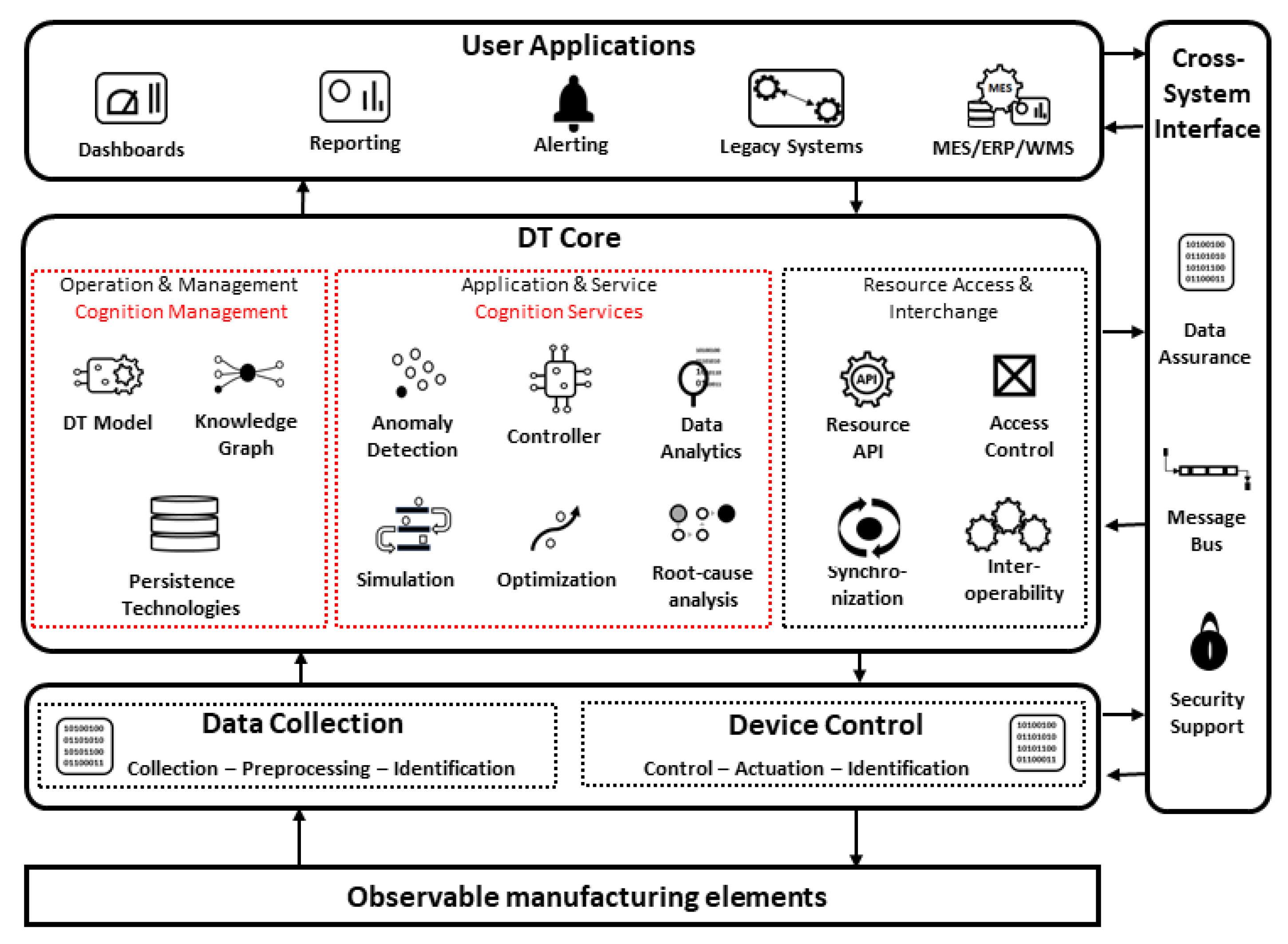

5.1. Conceptual Architecture

5.2. Cognition in the DT Core

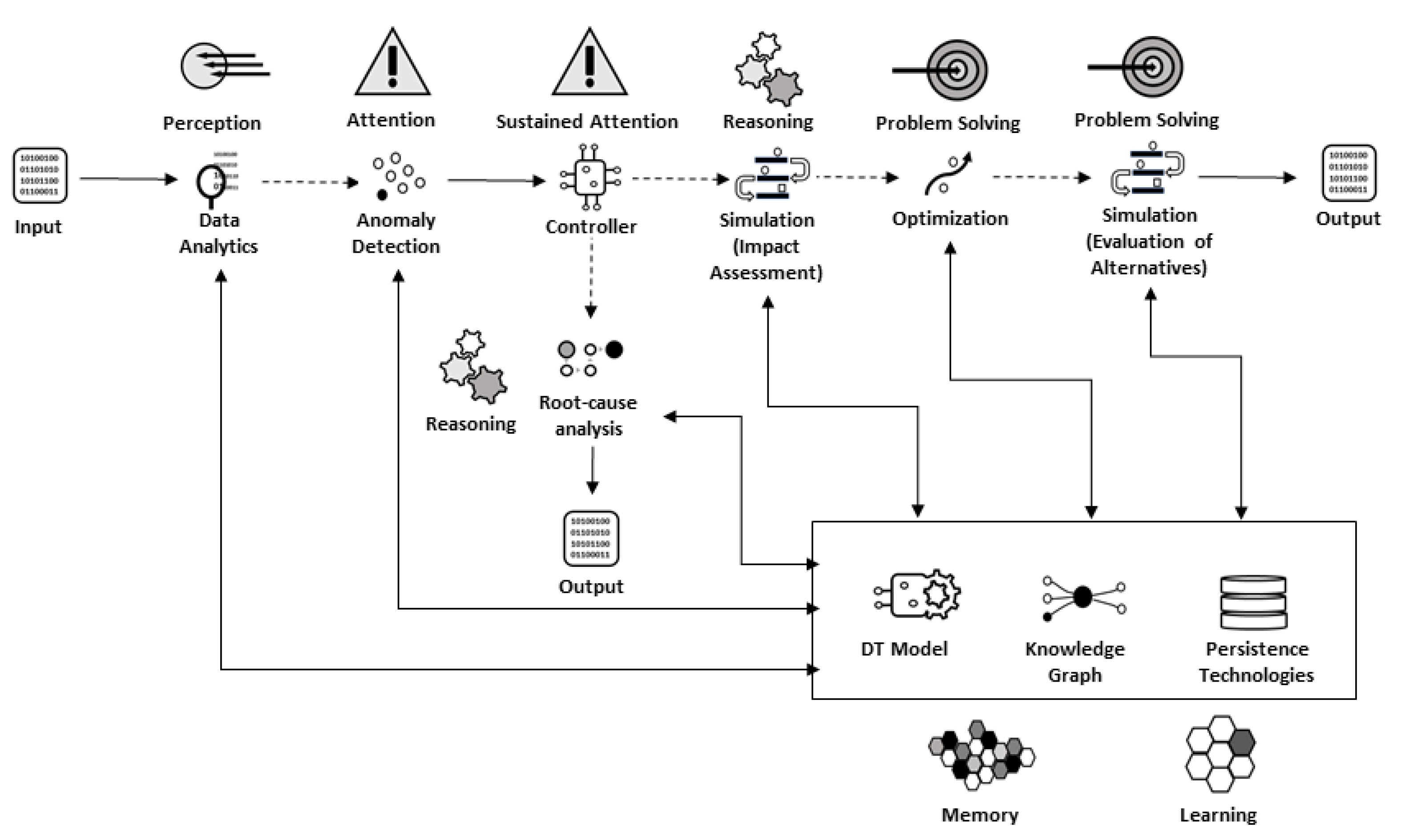

5.3. Pathways to Resilience in Production

6. Discussion

7. Conclusions and Future Research

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| | Oil Refinery | Waste-to-Fuel | Automotive Electronics | Steel Processing | Textile Industry |
|---|---|---|---|---|---|
| Involved manufacturing elements | Process units, input feeds and output tank | Process units and pipes | Machines and production lines | Machines and cranes | Looms and yarns |
| Disruptive events | Anomalies in process units, off-specs LPG in final tank | Clogging in pipes, new feedstock | Machine breakdowns and predictive maintenance | Machine and crane malfunctions and bottlenecks | Machine malfunctions, yarn breakage and new order |
| Impact alleviation measures | Anomaly detection, root-cause and optimal process parameters for on-specs recovery | Clogging detection and root-cause, optimized process parameters for new raw feedstock | Machine monitoring and need for predictive maintenance, root-cause and production reschedule | Machine and crane movement monitoring, root-cause and production reschedule | Machine and yarn breakage monitoring, new orders with priorities and production reschedule |

| | Oil Refinery | Waste-to-Fuel | Automotive Electronics | Steel Processing | Textile Industry |
|---|---|---|---|---|---|
| Real-time monitoring | Process units and the final tank | Process units and pipes | Machines’ status and maintenance needs | Machines’ status and process times and crane movement | Looms’ and yarns’ status, new orders and priorities |
| Anomaly detection | Anomalies in process units and off-specs production | Clogging of pipes | Machine anomalies and prediction of potential failure | Machine anomalies, bottlenecks in cranes’ movement | Yarn breakage and loom stoppage |
| Root-cause identification | Reason for off-specs production | Reason for clogging | Reason for potential failure | Reason for machine malfunction and crane bottleneck | Reason for loom malfunction and yarn breakage |
| Impact assessment | Evaluation of impact of anomaly to final tank impurities | Evaluation of impact of clogging or new feedstock | Evaluation of impact of anomaly or predictive maintenance | Evaluation of impact of machine or crane bottleneck or malfunction | Evaluation of impact of machine malfunction, yarn breakage, new orders |
| Decision support | Optimal process parameters for all units for on-specs recovery | Selection of optimal process parameters for new feedstock | Production reschedule with machine failures and predictive maintenance | Production reschedule with actual process times and availability of machines and cranes | Production reschedule with machine failures, new orders and priorities |
| Evaluation of alternatives | Evaluation of alternative recovery plans | Evaluation of alternative process parameters | Evaluation of alternative production schedules | Evaluation of alternative production schedules | Evaluation of alternative production schedules |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Eirinakis, P.; Lounis, S.; Plitsos, S.; Arampatzis, G.; Kalaboukas, K.; Kenda, K.; Lu, J.; Rožanec, J.M.; Stojanovic, N. Cognitive Digital Twins for Resilience in Production: A Conceptual Framework. Information 2022, 13, 33. https://doi.org/10.3390/info13010033
