P2ISE: Preserving Project Integrity in CI/CD Based on Secure Elements †

Abstract

1. Introduction

- Define security and functional requirements of a tool that is meant to provide developers with CI/CD features following a security by design approach.

- Propose P2ISE, a solution for integrity preservation for software projects within CI/CD environments based on the use of secure elements, in particular the TPM chipset. To the best of our knowledge, this is the first paper that proposes a tool to bridge the identified security gap.

- Assess the proposed P2ISE’s performance and qualitatively reason about its security properties. For this purpose, we have implemented and evaluated it against various projects.

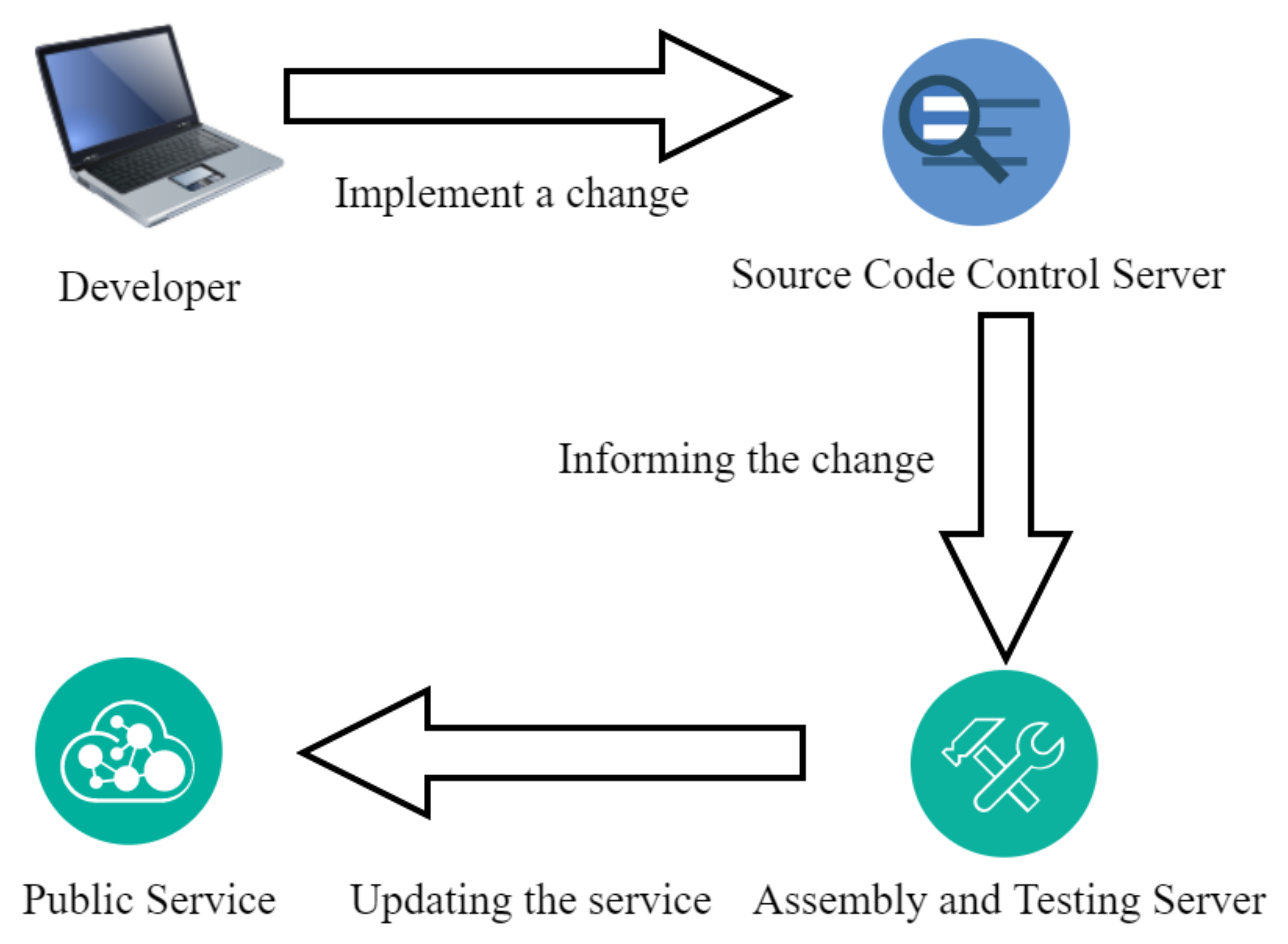

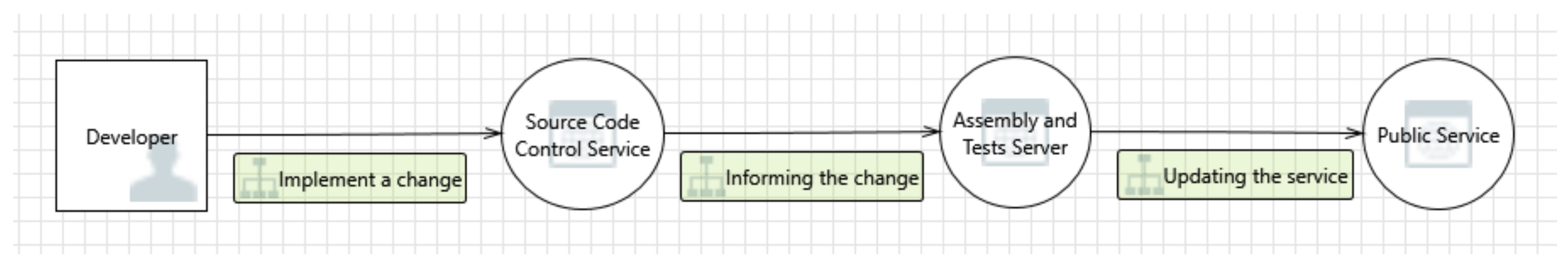

2. The CI/CD Concept

2.1. Definition and Participants

2.2. Motivation

2.3. Threat Analysis

- T1. Elevation Using Impersonation: Source Code Control Service may be able to impersonate the context of a Developer in order to gain additional privilege.

- T2. Elevation Using Impersonation: Assembly and Test Server may be able to impersonate the context of Source Code Control Service in order to gain additional privilege.

- T3. Weak Authentication Scheme: Custom authentication schemes are susceptible to common weaknesses such as weak credential change management, credential equivalence, easily guessable credentials, null credentials, or downgrade authentication.

- T4. Source Code Control Service Process Memory Tampered: If Source Code Control Service is given access to memory, such as shared memory or pointers, or is given the ability to control what Assembly and Test Server executes (for example, by passing back a function pointer), then Source Code Control Service can tamper with Assembly and Test Server. Consider whether the function could work with less access to memory, such as passing data rather than pointers. Copy in the data provided, and then validate it.

- T5. Collision Attacks: Attackers who can send a series of packets or messages may be able to overlap data. For example, packet 1 may be 100 bytes starting at offset 0. Packet 2 may be 100 bytes starting at offset 25. Packet 2 will overwrite 75 bytes of packet 1.

- T6. Assembly and Test Server Process Memory Tampered: If Assembly and Test Server is given access to memory, such as shared memory or pointers, or is given the ability to control what Public Service executes (for example, by passing back a function pointer), then Assembly and Test Server can tamper with Public Service. Consider whether the function could work with less access to memory, such as passing data rather than pointers. Copy in the data provided, and then validate it.

- T7. Replay Attacks: Packets or messages without sequence numbers or timestamps can be captured and replayed in a wide variety of ways. Implement or utilize an existing communication protocol that supports anti-replay techniques (investigate sequence numbers before timers) and strong integrity.

- T8. Elevation Using Impersonation: Public Service may be able to impersonate the context of Assembly and Test Server in order to gain additional privilege.

- T9. Cross Site Scripting: The web server Public Service could be subject to a cross-site scripting attack because it does not sanitize untrusted input.
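The mitigation named under T7 (sequence numbers combined with strong integrity) can be illustrated with a minimal sketch. It is not taken from the paper: the `seal` function, the `Receiver` class, and the choice of HMAC-SHA-256 over a shared key are assumptions made only for illustration.

```python
import hashlib
import hmac
import struct


def seal(key: bytes, seq: int, payload: bytes) -> bytes:
    """Return an HMAC-SHA-256 tag binding the payload to its sequence number."""
    # The sequence number is encoded as an unsigned 64-bit big-endian integer.
    return hmac.new(key, struct.pack(">Q", seq) + payload, hashlib.sha256).digest()


class Receiver:
    """Hypothetical receiver that accepts each authenticated message at most once."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._last_seq = -1  # no message accepted yet

    def accept(self, seq: int, payload: bytes, tag: bytes) -> bool:
        # Integrity first: reject anything whose tag does not verify.
        if not hmac.compare_digest(tag, seal(self._key, seq, payload)):
            return False
        # Anti-replay: the sequence number must be strictly increasing.
        if seq <= self._last_seq:
            return False
        self._last_seq = seq
        return True
```

A captured message that is sent a second time carries a sequence number that is no longer fresh, so `accept` rejects it even though its tag is valid.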

2.4. Security and Functional Requirements

2.4.1. Security Requirements

- S1. Data confidentiality: Code of a project within the CI/CD environment should be available only to the responsible developers. No adversary should be able to read or edit the code of the software project.

- S2. Data integrity: All code transactions (e.g., push commands) among the engaging entities (developers) should be protected against malicious alterations. Each process should be monitored and verified.

- S3. Non-repudiation: Once a developer completes an action (e.g., code changes), they should not be able to deny it; each actor should be responsible for their actions. This means that each action should be monitored and securely recorded.

- S4. Accountability: A developer should be held accountable for their actions.
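One way to picture the "securely recorded" part of S3 is a hash-chained action log, sketched below. This is an illustrative assumption, not the mechanism described in the paper: the entry fields, `append_entry`, and `verify_log` are hypothetical names, and a hash chain only makes tampering evident. Actual non-repudiation would additionally require each entry to be signed with a key bound to the developer.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder digest that precedes the first entry


def _digest(actor: str, action: str, prev: str) -> str:
    body = {"actor": actor, "action": action, "prev": prev}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, str]], actor: str, action: str) -> Dict[str, str]:
    """Append an entry whose digest also covers the previous entry's digest."""
    prev = log[-1]["digest"] if log else GENESIS
    entry = {"actor": actor, "action": action, "prev": prev,
             "digest": _digest(actor, action, prev)}
    log.append(entry)
    return entry


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        expected = _digest(entry["actor"], entry["action"], entry["prev"])
        if entry["prev"] != prev or entry["digest"] != expected:
            return False
        prev = entry["digest"]
    return True
```

Because every digest depends on the one before it, rewriting an old entry invalidates all later entries unless the whole tail of the log is recomputed.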

2.4.2. Functional Requirements

- F1.

- Passive storage with no shared access: Data that should be accessed only by entities that have been authorized by the owner for specific actions needs to be protected against access attempts by unauthorized entities or to unauthorized actions, while maintaining availability for authorized users.

- F2.

- Privileged activity tracking: All modification attempts should be monitored.

- F3.

- Integrity verification: Each modification attempt should be verified via hash function before being deployed for avoiding malicious activities.

- F4.

- Key utilization: The keys that are used for modifications should have been created and stored only inside the TPM preventing possible hardware attacks and data leakage.

- F5.

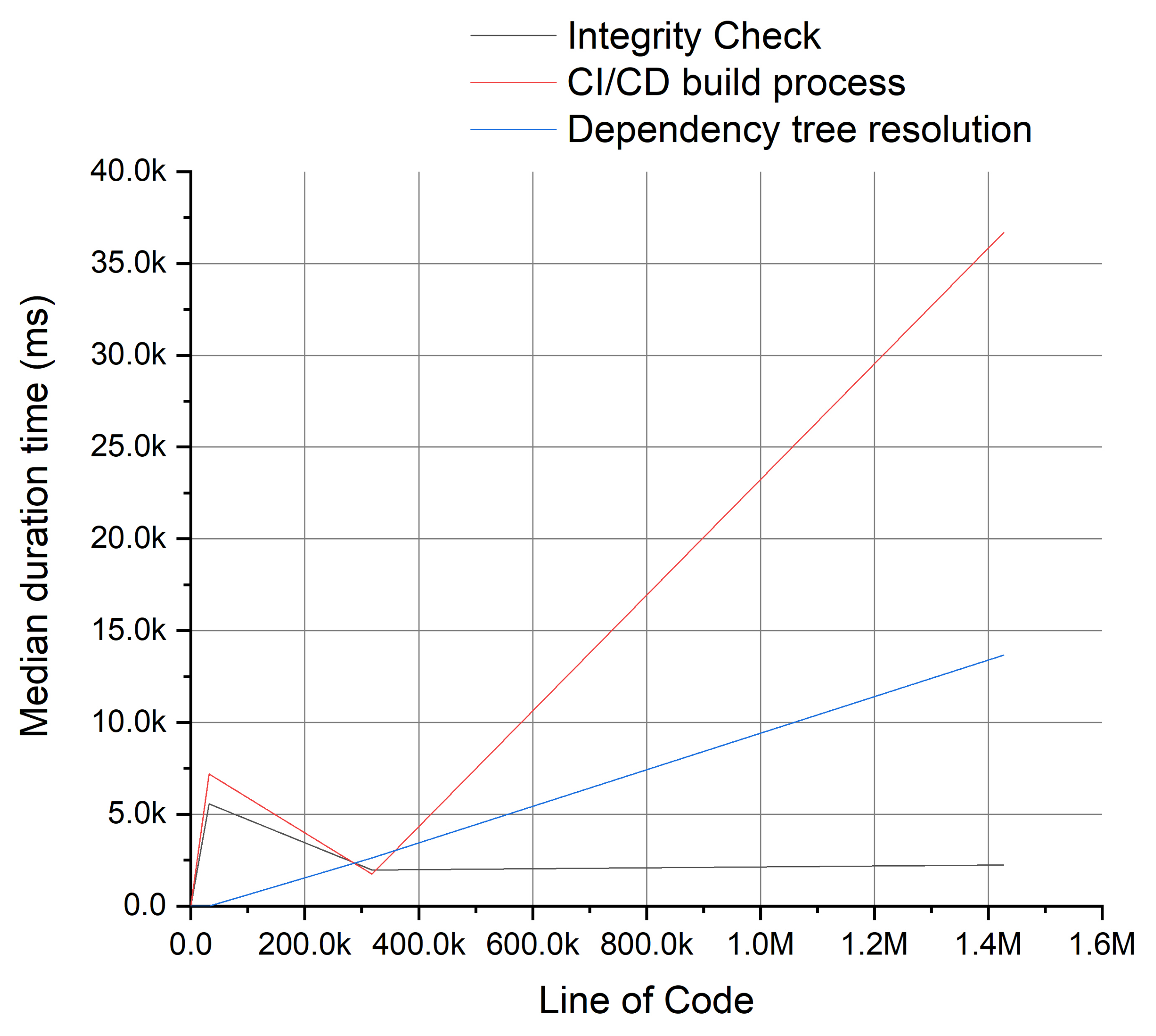

- Time consuming: Since the deployment of project modifications depends on the project’s size, the time added due to the extra verification should not negatively affect the CI/CD performance.
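Requirements F3 and F5 can be read together as "verify a digest before deployment and keep track of the time this adds". The sketch below illustrates that reading under stated assumptions: it uses Python's software SHA-256 rather than a TPM, and `verify_before_deploy` is a hypothetical helper, not part of P2ISE.

```python
import hashlib
import hmac
import time
from typing import Iterable, Tuple


def verify_before_deploy(chunks: Iterable[bytes], expected_hex: str) -> Tuple[bool, float]:
    """Hash the artifact chunk by chunk; return (digest matches, seconds spent)."""
    started = time.perf_counter()
    digest = hashlib.sha256()
    for chunk in chunks:
        digest.update(chunk)  # streaming keeps memory use independent of size
    # compare_digest avoids leaking the mismatch position through timing.
    matches = hmac.compare_digest(digest.hexdigest(), expected_hex.lower())
    return matches, time.perf_counter() - started
```

The returned duration is the overhead that F5 asks to keep small relative to the build itself.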

3. Related Work

3.1. Security Approach in CI/CD Environment

3.2. Secure Element as Trust Anchor

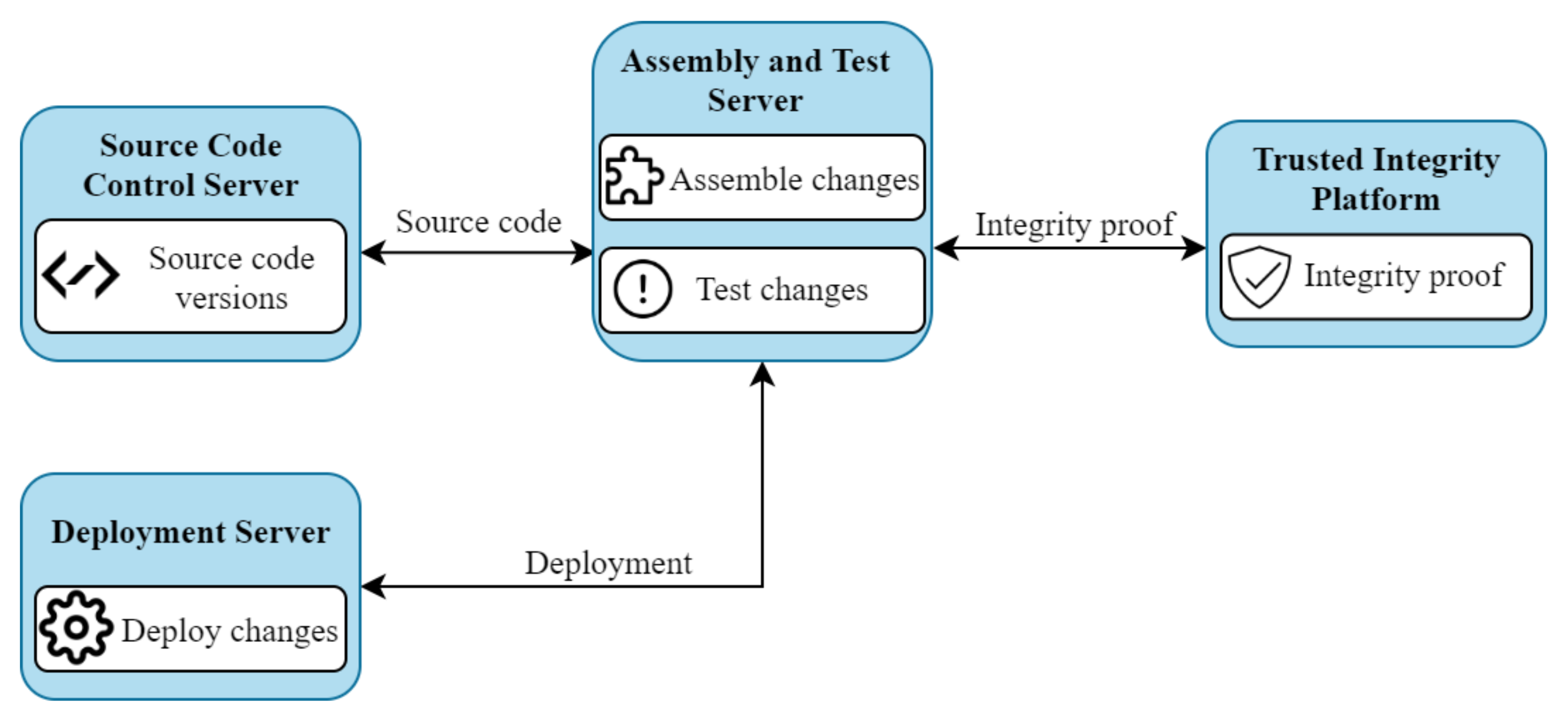

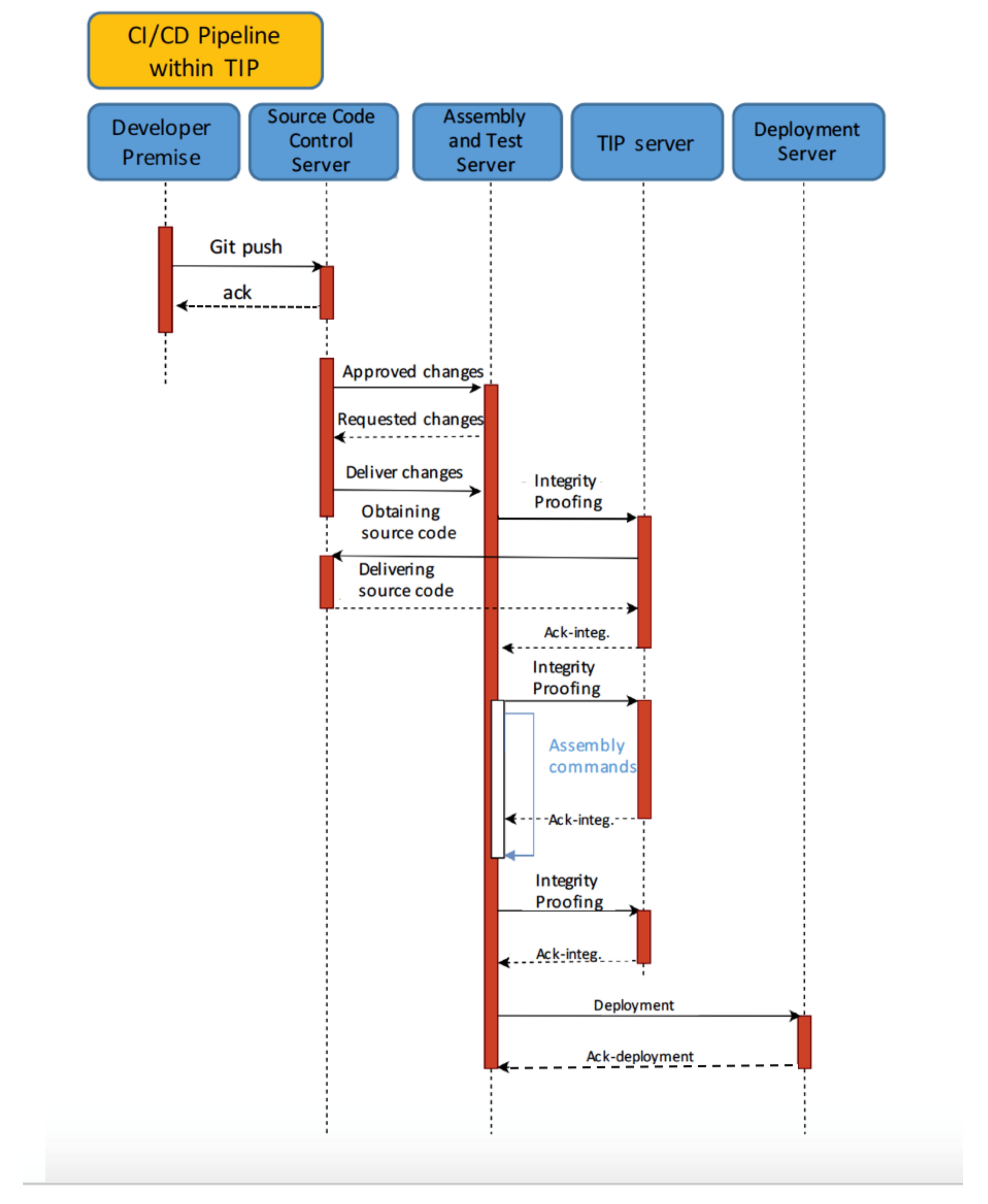

4. The P2ISE Concept

4.1. P2ISE

- First integrity proof measure: taken before installing all dependencies required for the project; it guarantees that the source code on the Assembly and Test Server is identical to that on the Source Code Control Server.

- Second integrity proof measure: it guarantees that the source code under assembly has not been changed by external agents on the Assembly and Test Server.

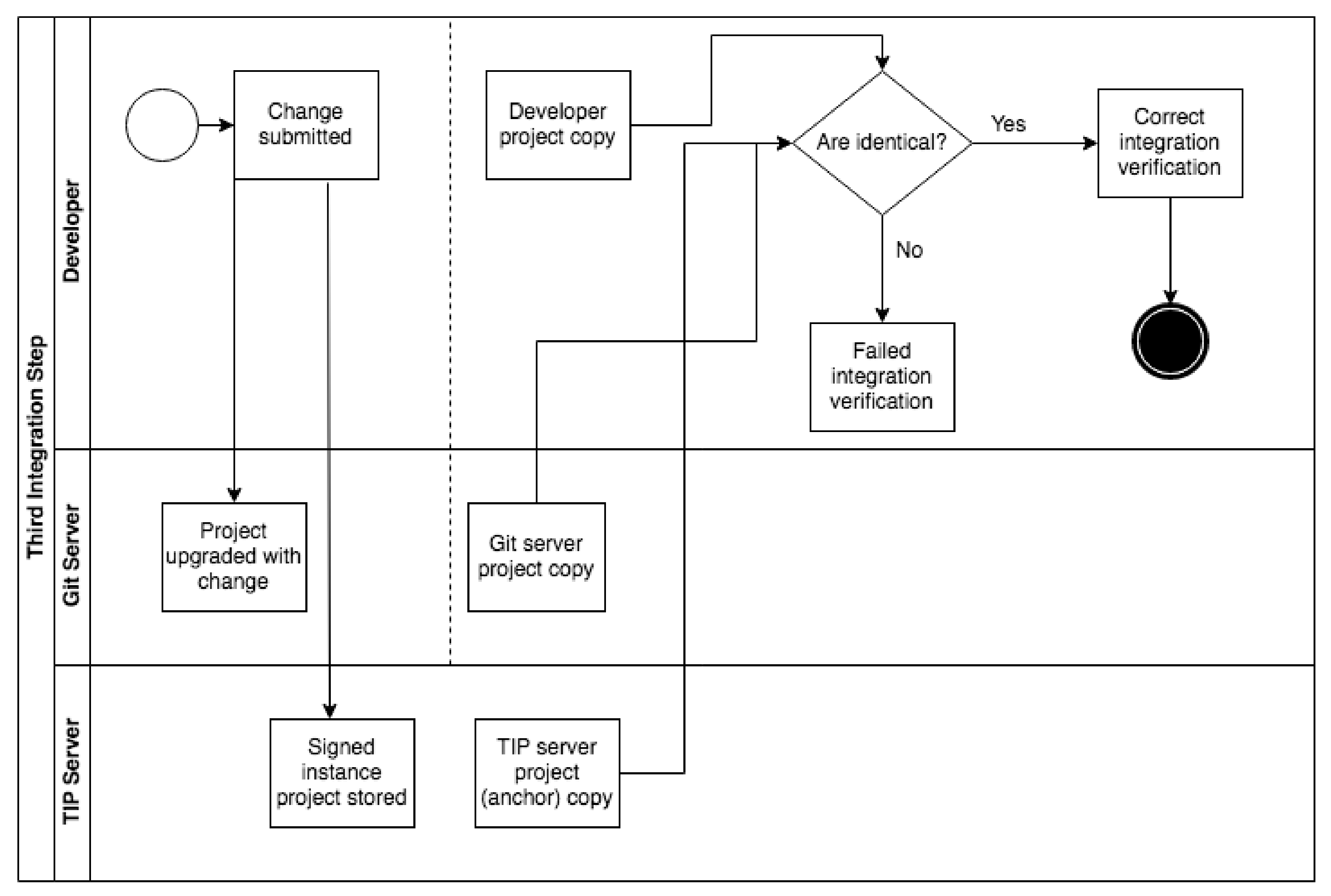

- Third integrity proof measure: it guarantees that the whole process was successfully completed without undesired modifications after the project was assembled.

- Suspicious code reception: The Assembly and Test Server forwards a compressed file with the suspicious source code to the TIP (Trusted Integrity Platform) server. If decompression fails, the file is discarded and the integrity proof is considered invalid.

- Trusted code reception: The TIP server retrieves the source code from the Git repository, which is considered trusted.

- BigHash proofs: The TIP server verifies, using the respective TPM functionalities, that the content of the compressed file and the corresponding source code from the repository are identical. This is done by consulting every file hash from the Git server. These Git-registered metadata are linked into a single chain named bigHash, and the TPM hash functions are used to verify bigHash values. Therefore, when both bigHash values (the project’s bigHash and the compressed file’s bigHash) are identical, the integrity proof is considered successful.
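The three steps above can be sketched as follows. This is a minimal illustration under several assumptions and not the authors' implementation: hashing is done in software with `hashlib` instead of TPM hash functions, the compressed file is assumed to be a gzip-compressed tar archive, the trusted code is passed in as an in-memory mapping rather than fetched from Git, and the way per-file hashes are chained (sorted paths, SHA-256) is one plausible reading of bigHash. All function names are hypothetical.

```python
import hashlib
import hmac
import io
import tarfile
import zlib
from typing import Dict, Optional


def big_hash(files: Dict[str, bytes]) -> str:
    """Chain the per-file hashes, in sorted path order, into a single digest."""
    chain = hashlib.sha256()
    for path in sorted(files):
        file_digest = hashlib.sha256(files[path]).hexdigest()
        chain.update(f"{path}\0{file_digest}\n".encode("utf-8"))
    return chain.hexdigest()


def files_from_archive(blob: bytes) -> Optional[Dict[str, bytes]]:
    """Decompress the received archive in memory; return None if that fails."""
    try:
        with tarfile.open(fileobj=io.BytesIO(blob), mode="r:gz") as tar:
            return {member.name: tar.extractfile(member).read()
                    for member in tar.getmembers() if member.isfile()}
    except (tarfile.TarError, OSError, EOFError, zlib.error):
        return None


def integrity_proof(trusted: Dict[str, bytes], suspicious_archive: bytes) -> bool:
    """Succeed only if both bigHash values are identical."""
    received = files_from_archive(suspicious_archive)
    if received is None:
        return False  # failed decompression invalidates the proof
    return hmac.compare_digest(big_hash(trusted), big_hash(received))
```

Including the path in each chain element means that renaming or moving a file changes the bigHash even when the file contents are untouched.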

4.2. Technical Approach and Methodology

4.2.1. First Integrity Validation Check

4.2.2. Second Integrity Validation Check

4.2.3. Third Integrity Validation Check

4.3. Security Appraisal

5. Performance Evaluation

6. Security Analysis

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Information Assets | Physical Assets |
|---|---|
| User credential | Server |
| Authorization mechanism | Computer (Developer’s PC) |
| Log information | |
| Project code | |
| Product (Public Service) | |

| Entity | Description |
|---|---|
| Developer | A developer who initiates commands. |
| Source Code Control Server | Tracks changes in source code. |
| Assembly and Test Server | Receives changes and assembles them. |
| Deployment Server | Deploys changes. |
| Trusted Integrity Platform | Proves the software project’s integrity. |

| Process Duration (in Seconds) | Caddy Server v2 | Nuxt + Vuetify Server | Svelte Server |
|---|---|---|---|
| Integrity check | 5.5 | 2.2 | 1.9 |
| CI/CD build process | 7.1 | 36.6 | 1.7 |
| Dependency tree resolution | n/a | 13.6 | 2.6 |
| Baseline | 1.1 | 39.12 | 2.1 |

| Software Project | P2ISE Process | CPU Utilization | Memory Consumption |
|---|---|---|---|
| Caddy Server v2 | Integrity Check | 10.6% | 26.4% |
| Caddy Server v2 | CI/CD build process | 38.3% | 26.4% |
| Caddy Server v2 | Dependency tree resolution | n/a | n/a |
| Nuxt + Vuetify Server | Integrity Check | 6.5% | 23.2% |
| Nuxt + Vuetify Server | CI/CD build process | 10.2% | 26.1% |
| Nuxt + Vuetify Server | Dependency tree resolution | 26.6% | 23.6% |
| Svelte Server | Integrity Check | 14.8% | 25.7% |
| Svelte Server | CI/CD build process | 14.2% | 26% |
| Svelte Server | Dependency tree resolution | 25% | 25.9% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Muñoz, A.; Farao, A.; Correia, J.R.C.; Xenakis, C. P2ISE: Preserving Project Integrity in CI/CD Based on Secure Elements. Information 2021, 12, 357. https://doi.org/10.3390/info12090357
