5.1. General Settings

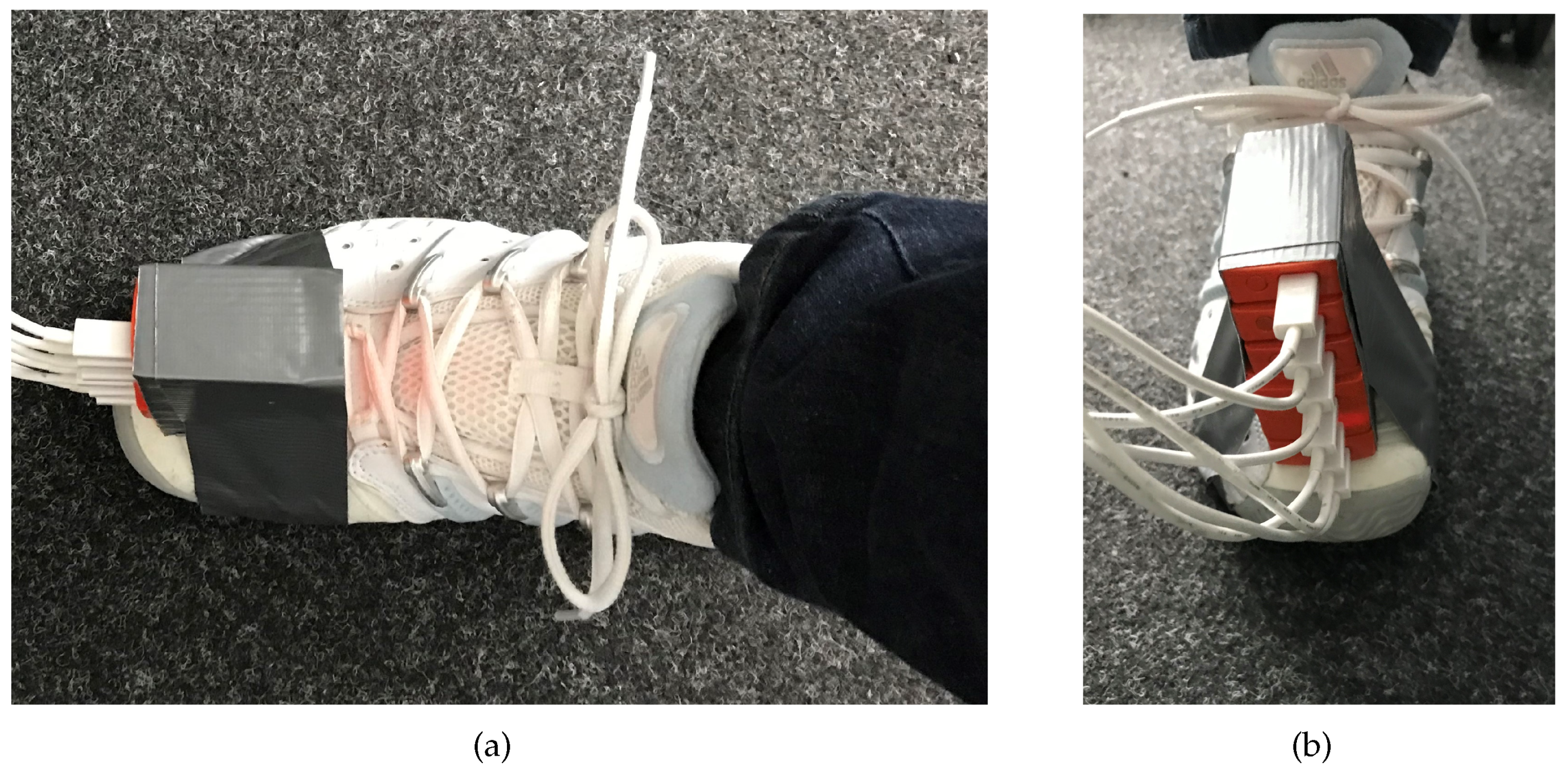

In our experiments, we collected data from different walks with a total of 6 sensors mounted on the foot of one pedestrian, as depicted in Figure 2. It has been shown that the ZUPT phase lasts longest above the toes of the foot; we therefore used this placement. Our vision is to integrate the sensor in the sole of the shoe, but other placements such as the ankle are also possible. The placement of the sensors should be chosen carefully because sensor misplacement influences the ground reaction force estimation, as shown in [28], and with it the results of the PDR. Because the sensor measurement errors vary, and the ZUPT detection varies with them, we observed varying drifts similar to the drifts of trajectories from different pedestrians reported in [29]. We are therefore convinced that the results would be similar when using trajectories of different pedestrians. It should be noted that we use general parameters for each sensor type inside the UKF and do not need to calibrate each sensor individually.

During the walk, we crossed several GTPs to provide a reference. The positions of the indoor GTPs were measured with a laser distance measurer (LDM, millimeter accuracy) and a tape measure (sub-centimeter accuracy), whereas outdoors we used a Leica tachymeter as described in [30].

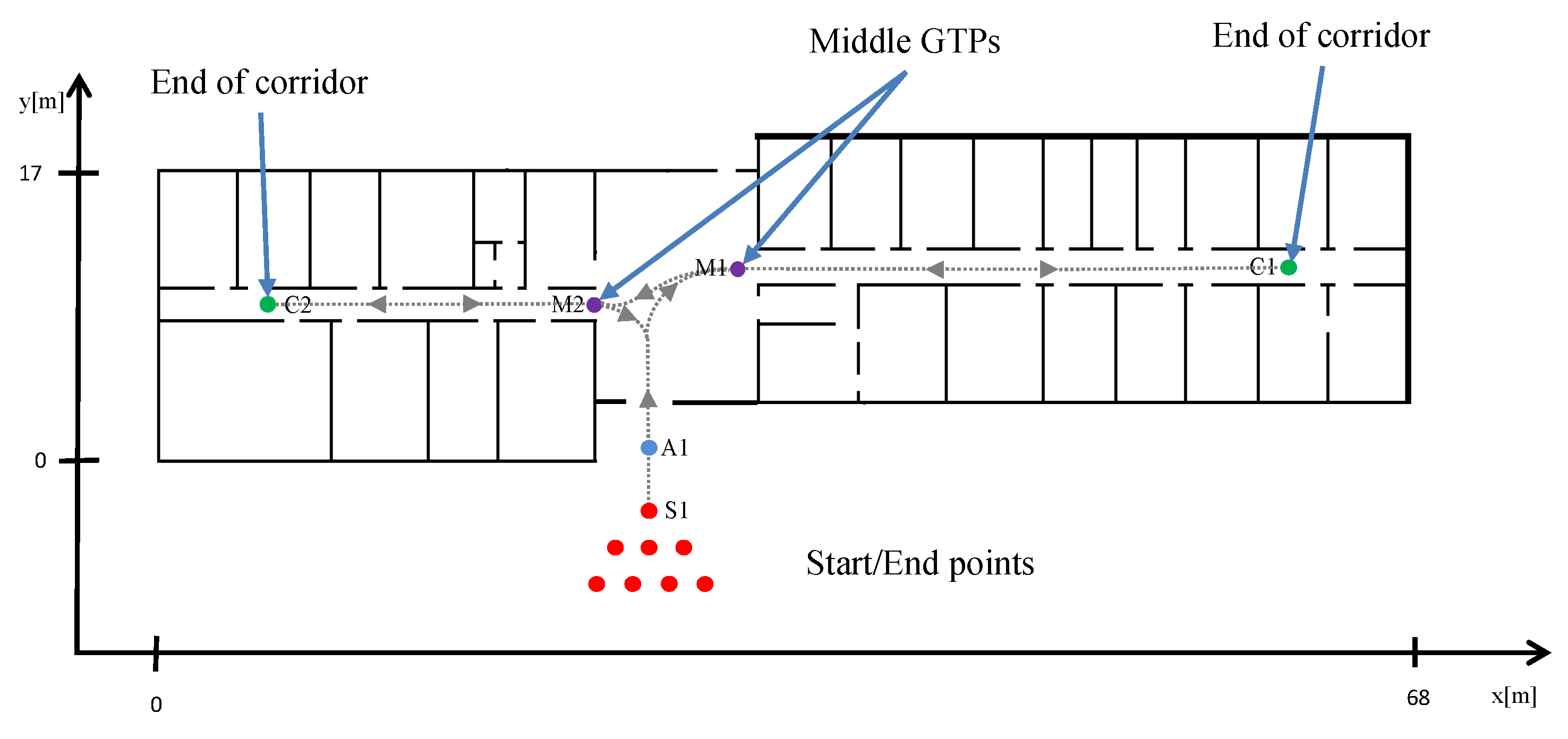

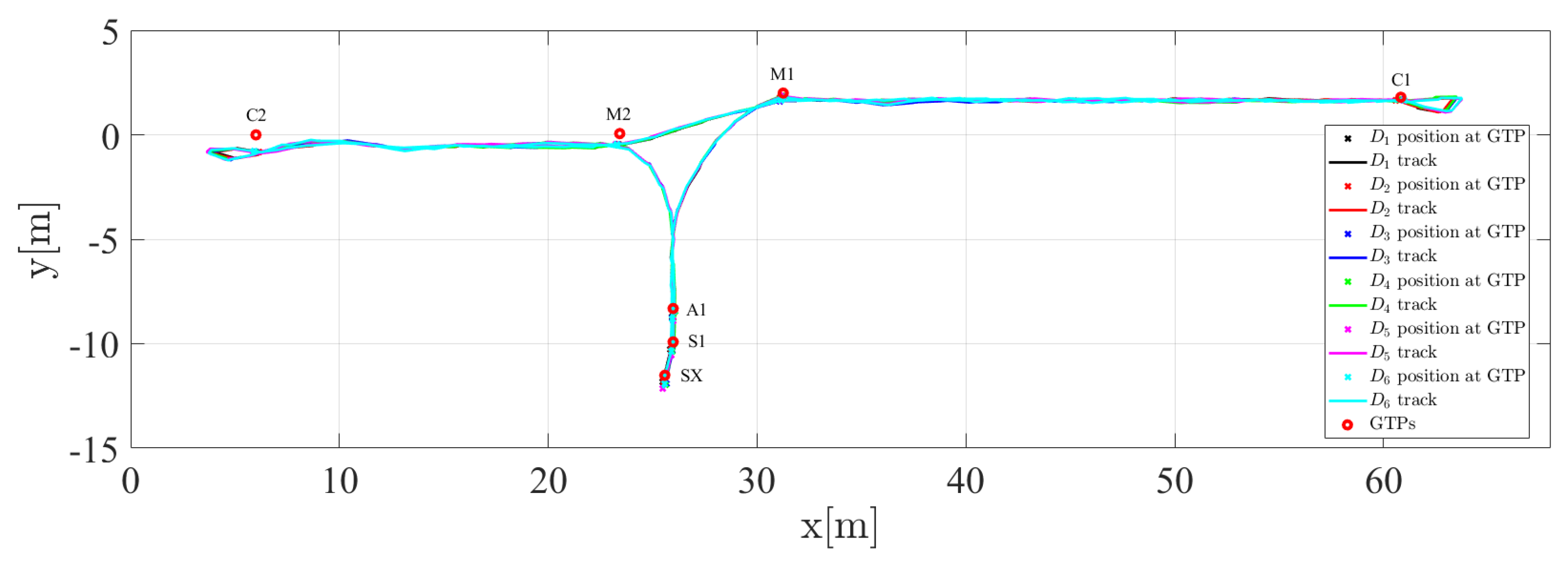

The GTPs used on the ground floor are depicted in Figure 3. The pedestrian started at different starting points in front of the building to emulate a group of emergency forces entering a building in triangle formation with a distance of

m between the forces. This is assumed as a sample formation. Depending on the situation, special forces are grouped in different formations, e.g., triangle or line formations. We chose the triangle formation because it is more challenging due to the different starting headings. When entering the building, the forces are assumed to walk one behind the other because the door and corridors leave no space for entering side by side in that formation. Unless otherwise stated, the GTPs depicted in Figure 3 are used in the following order:

. When we started from a starting point other than

, we additionally crossed the respective starting point at the beginning and end of the walk. The points

and

are used for the adjustment of the first heading angle. As mentioned before, the distance between

and

was

m. The path was intentionally chosen without entering rooms, because entering and exiting rooms results in loop closures that help the SLAM to perform better. We wanted to test the longest path inside the building without loop closures. The pedestrian stopped at each GTP for 2–3 s, and the time of passing the GTP was logged.

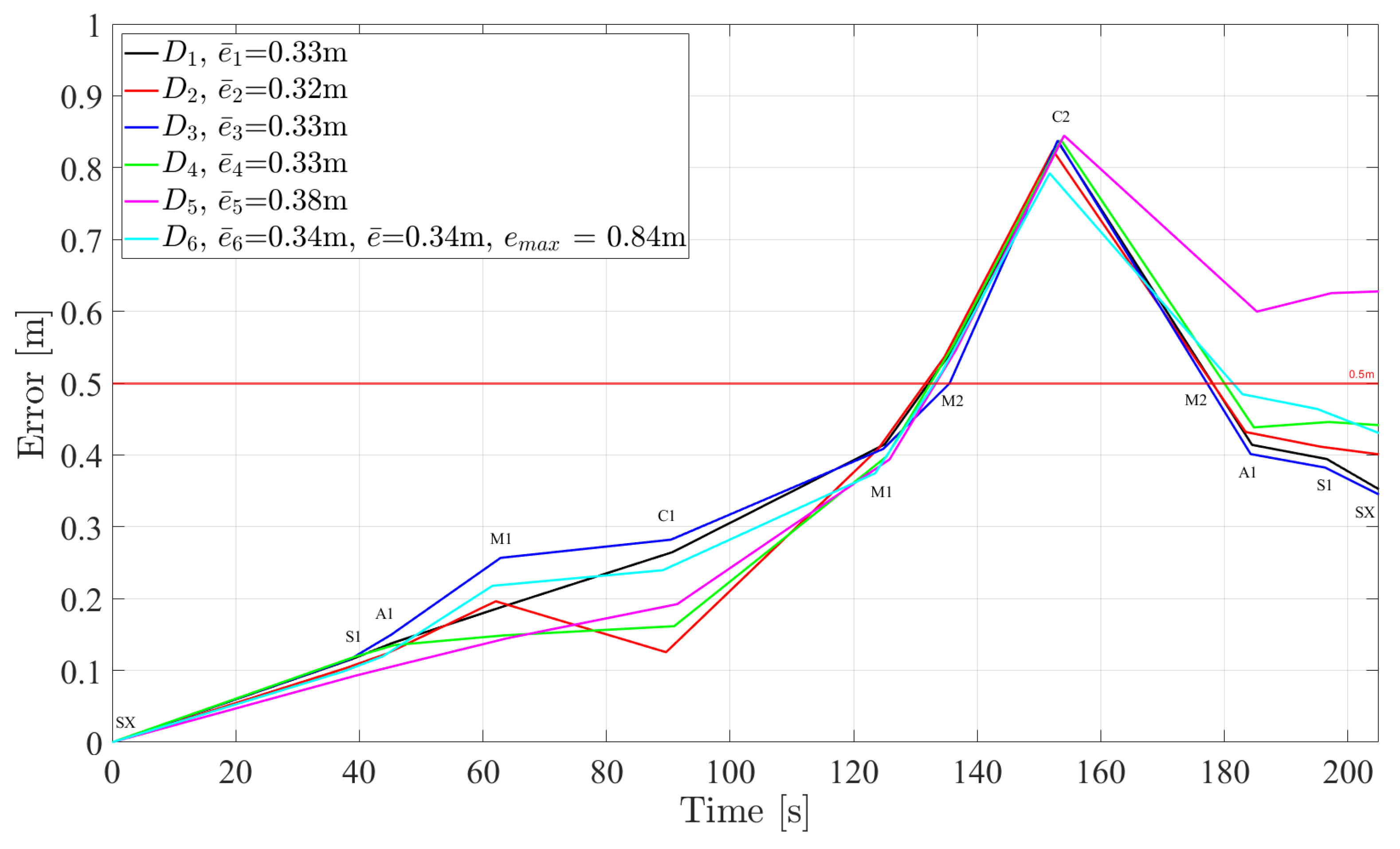

The total number of walks was 10, which resulted in a total of 60 trajectories. The walks lasted 3.8–7.4 min with estimated distances of 137–226 m. In the first two walks, the trajectories inside the building are repeated. Additionally, to test the algorithm for the motion mode “running”, we performed 3 running walks with the 6 sensors mounted on the foot, following the same trajectory, which resulted in 18 different estimated trajectories. The duration of the walks was

min and the estimated distances were 136–139 m. The six sensors used are one Xsens MTi-G sensor, three new Xsens MTw sensors, and two older Xsens MTw sensors. The raw IMU data are processed by a lower UKF [24], and the following parameters are applied to the upper FeetSLAM algorithm:

particles and

m for the hexagon radius.

For the SCs, we set the following parameter values: Depending on the window, the mean -positions and heading are either the known starting position or the respective values of the best particle. The only parameter that we set manually was the mean scale: We set it to for the Xsens MTi-G and the old-generation MTw sensors, because they are factory calibrated and already provide a good estimation in -distances. To our knowledge, the new-generation Xsens MTw sensors, which are cheaper, are not factory calibrated. We observed that we had to adjust the mean scale to for all of them in order to get comparable results.

The sigma values of the SCs used in the experiments are given in Table 1. For windows starting at

, we set the sigma values to zero to force the particles at the beginning to that position, heading, and scale. This does not mean that the particles have the same position, heading, and scale at the beginning, because after initialization we sample from the FootSLAM error model and add the drawn error vector to the initial position, heading, and scale. We refer the reader to [27], page 37, where a comprehensive description of the error model is provided. For the windows starting after

, we set the sigma values to fixed values as given in Table 1. Because the sigma values of the best particles are sometimes very high and lead to disturbed results, we set most of the sigma values to fixed values in order to provide a reasonable position and heading of the particles. The sigma values for the

-positions are set to small values because the position of the best particle might be inaccurate. The same holds for the heading angle. We set the sigma value of the scale to zero because the UKF already provides good estimates of the delta step measurements. Please note that we used these sigma values only for the first iteration of window

. After that, we set all sigma values to zero.
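The initialization described above can be sketched as follows. This is only an illustration under stated assumptions: the function names, the state layout (x, y, heading, scale), and in particular `placeholder_error_model` are hypothetical; the actual FootSLAM error model is the one described in [27] and is not reproduced here.

```python
import random

def init_particles(n, mean, sigma, error_sampler, rng):
    """Draw n particle states (x, y, heading, scale) around a starting condition.

    A sigma of zero pins the corresponding component to its mean value.
    The error vector drawn from error_sampler is then added to every particle.
    """
    particles = []
    for _ in range(n):
        state = [rng.gauss(m, s) if s > 0 else float(m) for m, s in zip(mean, sigma)]
        error = error_sampler(rng)
        particles.append([v + e for v, e in zip(state, error)])
    return particles

def placeholder_error_model(rng):
    # Hypothetical stand-in for the FootSLAM error model: small Gaussian
    # perturbations of x, y, heading, and scale (values chosen arbitrarily).
    return [rng.gauss(0.0, 0.01), rng.gauss(0.0, 0.01),
            rng.gauss(0.0, 0.02), rng.gauss(0.0, 0.001)]

def zero_error_model(rng):
    # No perturbation; used only to show the effect of zero sigma values.
    return [0.0, 0.0, 0.0, 0.0]

rng = random.Random(0)
start_mean = (0.0, 0.0, 0.0, 1.0)    # assumed starting position, heading, scale
zero_sigma = (0.0, 0.0, 0.0, 0.0)    # sigma values set to zero

pinned = init_particles(5, start_mean, zero_sigma, zero_error_model, rng)
spread = init_particles(5, start_mean, zero_sigma, placeholder_error_model, rng)
```

With zero sigma values and no added error, all particles in `pinned` coincide with the starting condition, whereas the particles in `spread` differ from each other only through the added error vector.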

In the following, we explain the different error metrics that are used in the experimental results sections. The 2D-error

of data set l at the hth GTP

,

, where

is the number of GTPs, is calculated as the Euclidean distance to the known GTP:

where

are the known and

are the estimated

-positions at GTP

, respectively.
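Written out, the Euclidean 2D-error takes the following form. The symbols are assumed notation chosen for illustration: $e_l(h)$ denotes the 2D-error of data set $l$ at the $h$th GTP, $(x_h, y_h)$ the known position of that GTP, and $(\hat{x}_{l,h}, \hat{y}_{l,h})$ the position estimated from data set $l$.

$$
e_l(h) = \sqrt{\left(x_h - \hat{x}_{l,h}\right)^2 + \left(y_h - \hat{y}_{l,h}\right)^2}
$$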

The mean error value

is the mean over all n mean error results

of the n data sets:

The maximum error

is the maximum over all maximum errors of the n error curves of the different data set results:

It reflects the maximum error that may occur in one of the results for one data set.
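The three metrics described above can be sketched in a few lines of code. This is a minimal illustration, assuming that every data set provides one estimated position per GTP; the function names and the toy coordinates are hypothetical and are not taken from the experiments.

```python
import math
from statistics import mean

def gtp_errors(known_xy, estimated_xy):
    """Euclidean 2D-error at each GTP for one data set."""
    return [math.hypot(xe - xk, ye - yk)
            for (xk, yk), (xe, ye) in zip(known_xy, estimated_xy)]

def summarize(known_xy, data_sets):
    """Return (mean of the per-data-set mean errors, maximum of the per-data-set maxima)."""
    curves = [gtp_errors(known_xy, est) for est in data_sets]
    mean_error = mean(mean(curve) for curve in curves)
    max_error = max(max(curve) for curve in curves)
    return mean_error, max_error

# Toy example: three GTPs and two data sets (coordinates in meters, made up).
known = [(0.0, 0.0), (10.0, 0.0), (10.0, 5.0)]
data_sets = [
    [(0.0, 0.0), (10.0, 1.0), (13.0, 9.0)],  # errors: 0, 1, 5
    [(0.0, 2.0), (10.0, 0.0), (10.0, 5.0)],  # errors: 2, 0, 0
]
mean_error, max_error = summarize(known, data_sets)
```

In this toy example the per-data-set mean errors are 2 m and 2/3 m, so the mean error is 4/3 m, and the maximum error is 5 m.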

It should be noted that we used 2D FeetSLAM in this paper. Although it has been shown that the system also runs in 3D environments, we concentrated on the 2D case to show that the approach works. We are convinced that the algorithm performs similarly in 3D environments. In future work, we will extend it to 3D environments.