Terminology Translation in Low-Resource Scenarios

Abstract

1. Introduction

- We present a faster and less expensive annotation scheme that semi-automatically creates a reusable gold standard test set for evaluating terminology translation in MT, avoiding the slow and expensive process of fully manual evaluation.

- We highlight various linguistic phenomena encountered during annotation for English and a low-resource language, Hindi.

- We present an automatic metric for evaluating terminology translation in MT, namely TermEval, and a classification framework, TermCat, that automatically classifies terminology translation-related errors in MT. TermEval correlates very highly with human judgements, which makes it a promising metric. TermCat achieves competitive performance in the terminology translation error classification task.

- We compare PB-SMT and NMT on terminology translation in two translation directions: English-to-Hindi and Hindi-to-English. We also present the challenges of automating terminology translation evaluation and error classification in the low-resource scenario.

2. Related Work

2.1. Terminology Annotation

2.2. Terminology Translation Evaluation Methods

2.3. Terminology Translation in PB-SMT & NMT

3. MT Systems

3.1. PB-SMT System

3.2. NMT System

3.3. Data Used

3.4. PB-SMT versus NMT

4. Creating Gold Standard Evaluation Set

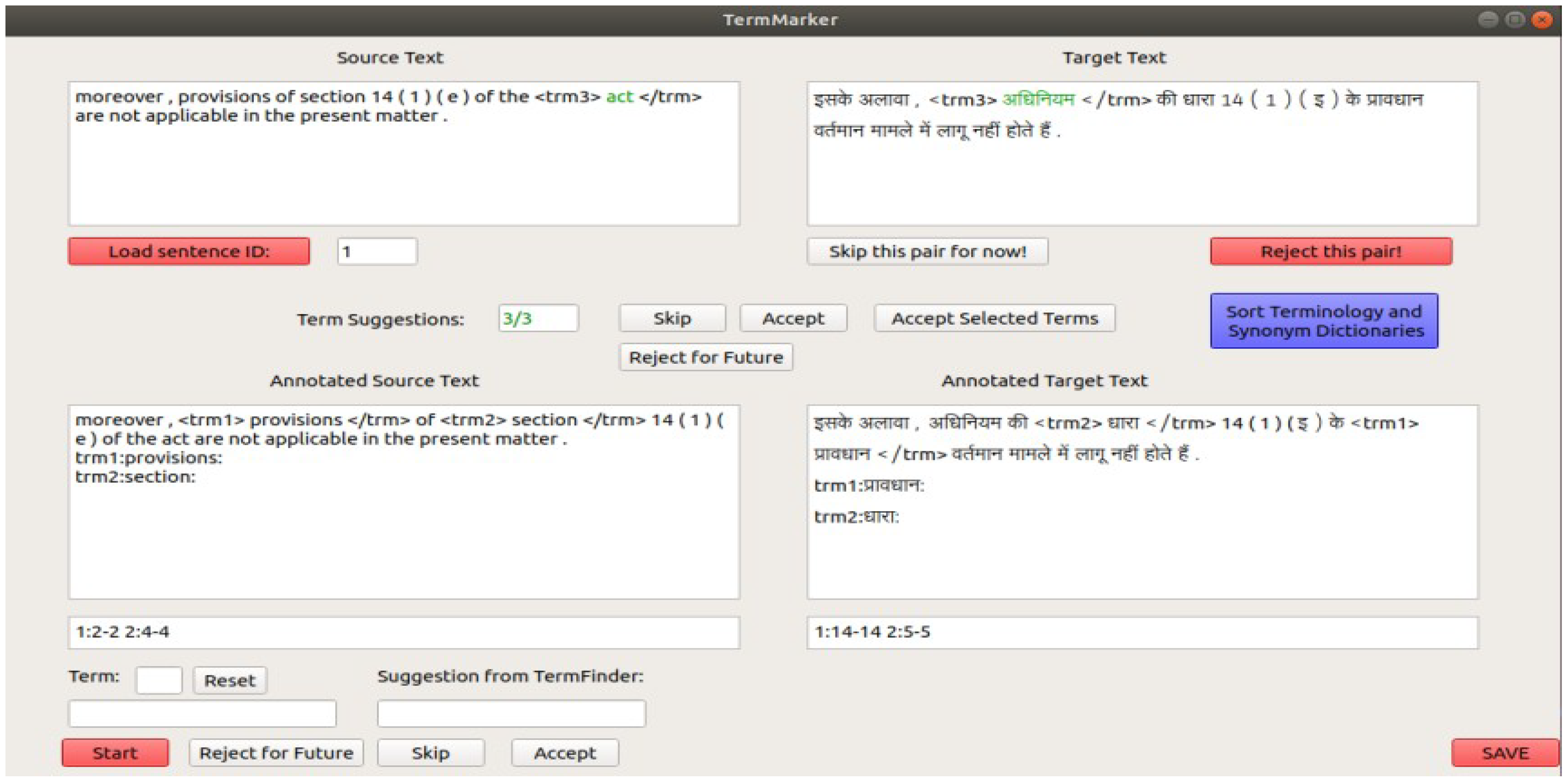

4.1. Annotation Suggestions from Bilingual Terminology

4.2. Variations of Terms

4.3. Consistency in Annotation

4.4. Ambiguity in Terminology Translation

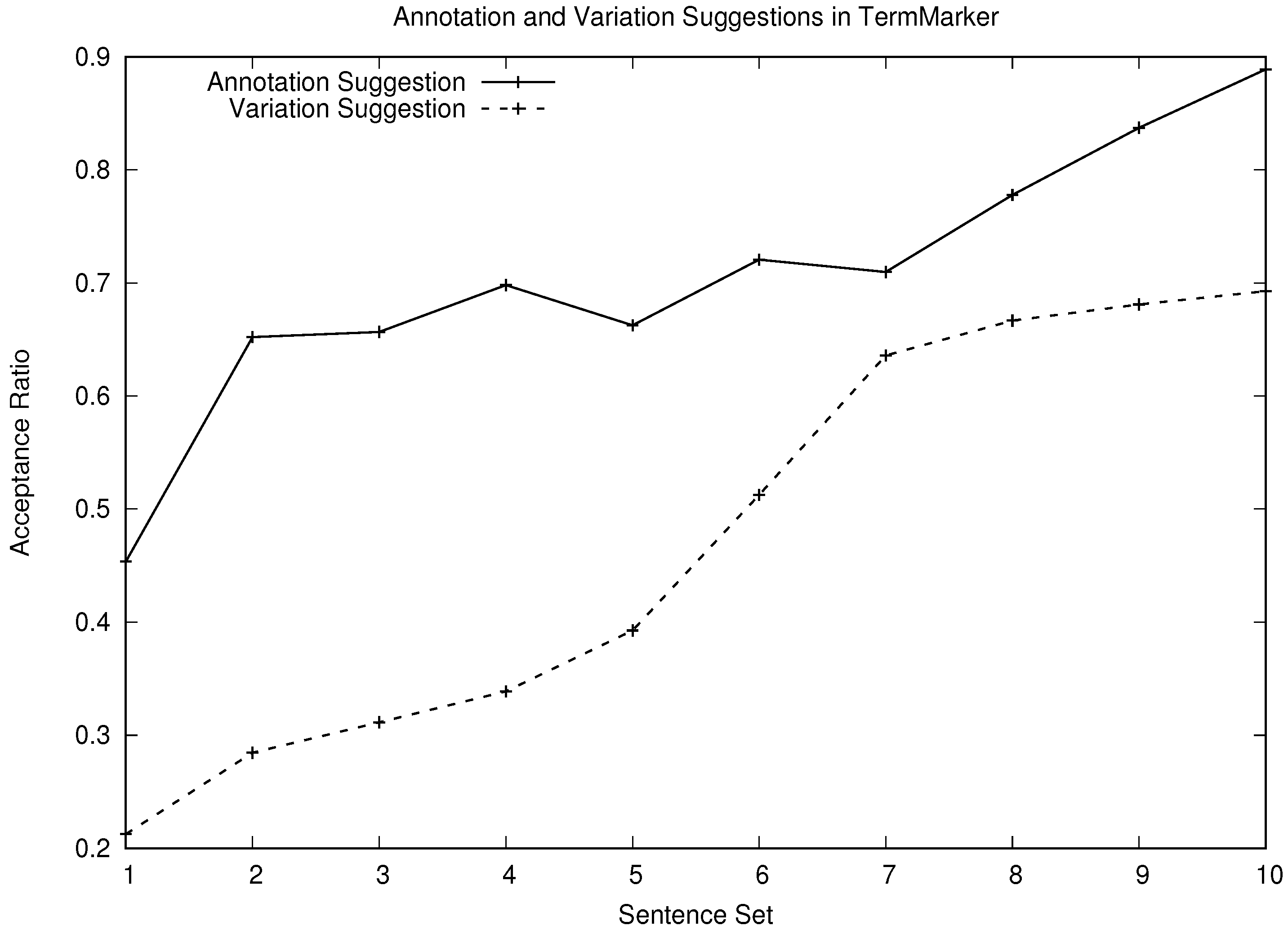

4.5. Measuring the Performance of TermMarker

5. TermEval

| Symbol | Description |
|---|---|
| N | the number of sentences in the test set |
| S | the number of source terms in the n-th source sentence |
| V | the number of reference translations (including LIVs) for the s-th source term |
| | the v-th reference term for the s-th source term |
| | the translation of the n-th source input sentence |
| NT | the total number of terms in the test set |

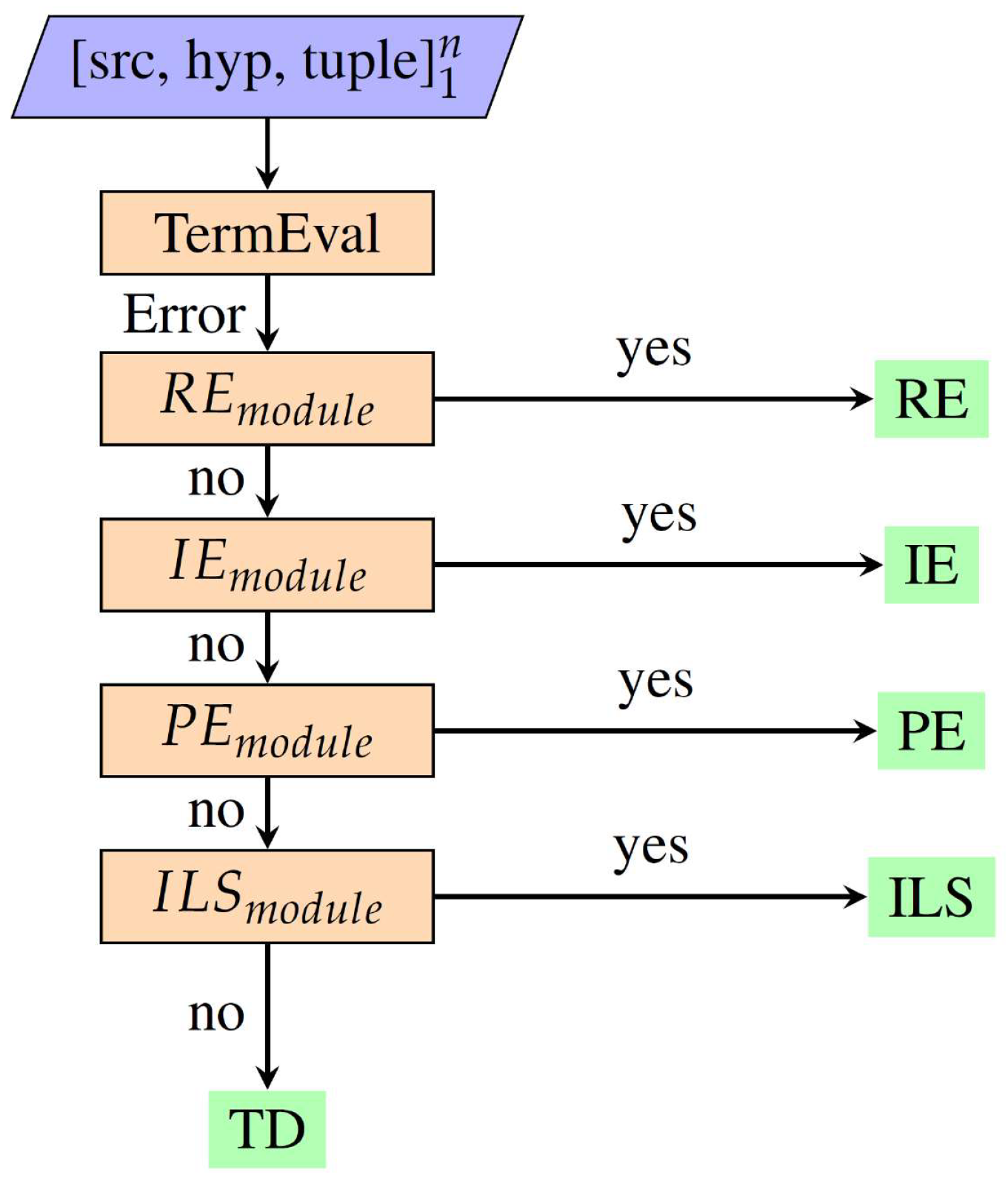
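The notation above suggests a simple counting procedure: a source term is credited when any of its V reference variants (including LIVs) occurs in the translation of its sentence, and the number of credited terms is divided by NT. The Python sketch below follows that reading. The function names `contains_phrase` and `term_eval`, the nested-list input layout, and the whitespace-token matching are illustrative assumptions and do not reproduce the authors' implementation or the published formula.

```python
from typing import List, Sequence


def contains_phrase(tokens: Sequence[str], phrase: Sequence[str]) -> bool:
    """Return True if `phrase` occurs as a contiguous token run in `tokens`."""
    n, m = len(tokens), len(phrase)
    if m == 0:
        return False
    return any(list(tokens[i:i + m]) == list(phrase) for i in range(n - m + 1))


def term_eval(hypotheses: List[str],
              reference_terms: List[List[List[str]]]) -> float:
    """Fraction of source terms whose translation is found in the hypothesis.

    reference_terms[n][s] holds the V acceptable target variants for the
    s-th source term of the n-th sentence (assumed layout).
    """
    matched = 0
    total_terms = 0  # plays the role of NT
    for hyp, terms in zip(hypotheses, reference_terms):
        hyp_tokens = hyp.split()
        for variants in terms:
            total_terms += 1
            # Credit the term once, as soon as any variant matches.
            if any(contains_phrase(hyp_tokens, v.split()) for v in variants):
                matched += 1
    return matched / total_terms if total_terms else 0.0


# Toy data (hypothetical): two sentences, three source terms in total.
hyps = [
    "all the appellants pleaded not guilty to the charge",
    "the court issued a decree",
]
refs = [
    [["pleaded not guilty", "pleads not guilty"]],
    [["decree"], ["tariff orders", "tariff order"]],
]
score = term_eval(hyps, refs)
print(score)  # two of the three terms are matched
```

Matching on token runs rather than raw substrings avoids crediting a term that only appears inside a longer word. A real implementation would also need to decide how to handle a term that occurs more than once in a sentence.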

6. TermCat

6.1. Error Classes

- Reorder error (RE): the translation of a source term has the wrong word order in the target language.

- Inflectional error (IE): the translation of a source term contains a morphological error (e.g., an inflectional morpheme that does not fit the context of the target translation, which causes a grammatical error).

- Partial error (PE): the MT system correctly translates part of a source term into the target language and commits an error for the remainder of the source term.

- Incorrect lexical selection (ILS): the translation of a source term is an incorrect lexical choice.

- Term drop (TD): the MT system omits the source term in translation.

6.2. Classification Framework

6.2.1. Reorder Error Identification Module

6.2.2. Inflectional Error Identification Module

6.2.3. Partial Error Identification Module

6.2.4. Incorrect Lexical Selection Identification Module

7. Results, Discussion and Analysis

7.1. Manual Evaluation

7.2. Automatic Evaluation Metrics

7.3. Validating TermEval

7.4. TermEval: Discussion and Analysis

7.4.1. False Positives

7.4.2. False Negatives

- Term transliteration: The translation-equivalent of a source term is the transliteration of the source term itself. We observed this only when the target language was Hindi. In practice, many English terms are used in Hindi text in transliterated form (e.g., “decree” as “dikre”, “tariff orders” as “tarif ordars”, “exchange control manual” as “eksachenj kantrol mainual”).

- Terminology translation coreferred: The translation-equivalent of a source term was not found in the hypothesis; however, it was rightly coreferred in the target translation.

- Semantically-coherent terminology translation: The translation-equivalent of a source term was not seen in the hypothesis, but its meaning was correctly transferred into the target language. As an example, consider the source Hindi sentence “sabhee apeelakartaon ne aparaadh sveekaar nahin kiya aur muqadama chalaaye jaane kee maang kee” and the reference English sentence “all the appellants pleaded not guilty to the charge and claimed to be tried” from the gold-test set, where the reference is the literal translation of the source. Here, “aparaadh sveekaar nahin” is a Hindi term, and its English translation is “pleaded not guilty”. The Hindi-to-English NMT system produced the translation “all the appellants did not accept the crime and sought to run the suit”, which preserves the meaning of the source term “aparaadh sveekaar nahin” without using the reference term.

7.5. Validating TermCat

7.6. TermCat: Discussion and Analysis

7.6.1. Reorder Error

7.6.2. Inflectional Error

7.6.3. Partial Error

7.6.4. Incorrect Lexical Selection

7.6.5. Term Drop

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| TW | Translation workflow |
| TSP | Translation service provider |
| MT | Machine translation |
| LIV | Lexical and inflectional variation |
| SMT | Statistical machine translation |
| NMT | Neural machine translation |
| PB-SMT | Phrase-based statistical machine translation |
| RE | Reorder error |
| IE | Inflectional error |
| PE | Partial error |
| ILS | Incorrect lexical selection |
| TD | Term drop |
| CT | Correct translation |
| REM | Remaining class |


| English–Hindi Parallel Corpus | Sentences | Words (English) | Words (Hindi) |
|---|---|---|---|
| Training set | 1,243,024 | 17,485,320 | 18,744,496 |
| (Vocabulary) | | 180,807 | 309,879 |
| Judicial | 7374 | 179,503 | 193,729 |
| Development set | 996 | 19,868 | 20,634 |
| Test set | 2000 | 39,627 | 41,249 |

| Monolingual Corpus | Sentences | Words |
|---|---|---|
| Used for PB-SMT Language Model | | |
| English | 11 M | 222 M |
| Hindi | 10.4 M | 199 M |
| Used for NMT Back Translation | | |
| English | 1 M | 20.2 M |
| Hindi | 903 K | 14.2 M |

| | BLEU | METEOR | TER |
|---|---|---|---|
| EHPS | 28.8 | 30.2 | 53.4 |
| EHNS | 36.6 (99.9%) | 33.5 (99.9%) | 46.3 (99.9%) |
| HEPS | 34.1 | 36.6 | 50.0 |
| HENS | 39.9 (99.9%) | 38.5 (98.6%) | 42.0 (99.9%) |

| Number of Source–Target Term-Pairs | | 3064 |
|---|---|---|
| English | Terms with LIVs | 2057 |
| | LIVs/Term | 5.2 |
| Hindi | Terms with LIVs | 2709 |
| | LIVs/Term | 8.4 |

| | English-to-Hindi PB-SMT | English-to-Hindi NMT | Hindi-to-English PB-SMT | Hindi-to-English NMT |
|---|---|---|---|---|
| CT | 2761 | 2811 | 2668 | 2711 |
| RE | 15 | 5 | 18 | 5 |
| IE | 79 | 77 | 118 | 76 |
| PE | 52 | 47 | 65 | 73 |
| ILS | 77 | 44 | 139 | 90 |
| TD | 53 | 56 | 38 | 86 |
| REM | 27 | 24 | 18 | 23 |

| | Correct Translation | TermEval |
|---|---|---|
| English-to-Hindi Task | | |
| PB-SMT | 2610 | 0.852 |
| NMT | 2680 | 0.875 |
| Hindi-to-English Task | | |
| PB-SMT | 2554 | 0.834 |
| NMT | 2540 | 0.829 |
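The TermEval scores above agree with dividing each count of correct translations by the 3064 source–target term pairs in the test set. The short check below reproduces that arithmetic. The dictionary layout and variable names are illustrative assumptions.

```python
# Total number of source-target term pairs in the gold test set.
NT = 3064

# Terms counted as correctly translated, per task and system.
correct = {
    ("English-to-Hindi", "PB-SMT"): 2610,
    ("English-to-Hindi", "NMT"): 2680,
    ("Hindi-to-English", "PB-SMT"): 2554,
    ("Hindi-to-English", "NMT"): 2540,
}

# Score = matched terms / total terms, rounded to three decimals.
scores = {key: round(count / NT, 3) for key, count in correct.items()}

for (task, system), value in scores.items():
    print(f"{task:17s} {system:7s} {value:.3f}")
```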

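| Task | System | Human judgement | Row total | TermEval: correct | TermEval: incorrect |
|---|---|---|---|---|---|
| English-to-Hindi | PB-SMT | Column total | | 2610 | 454 |
| English-to-Hindi | PB-SMT | Correct | 2761 | 2602 | 159 |
| English-to-Hindi | PB-SMT | Incorrect | 303 | 8 | 295 |
| English-to-Hindi | NMT | Column total | | 2680 | 384 |
| English-to-Hindi | NMT | Correct | 2811 | 2677 | 134 |
| English-to-Hindi | NMT | Incorrect | 253 | 3 | 250 |
| Hindi-to-English | PB-SMT | Column total | | 2554 | 510 |
| Hindi-to-English | PB-SMT | Correct | 2668 | 2554 | 114 |
| Hindi-to-English | PB-SMT | Incorrect | 396 | 0 | 396 |
| Hindi-to-English | NMT | Column total | | 2540 | 524 |
| Hindi-to-English | NMT | Correct | 2711 | 2540 | 171 |
| Hindi-to-English | NMT | Incorrect | 353 | 0 | 353 |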

| | PB-SMT | NMT |
|---|---|---|
| English-to-Hindi Task | | |
| Precision | 0.997 | 0.999 |
| Recall | 0.942 | 0.953 |
| F1 | 0.968 | 0.975 |
| Hindi-to-English Task | | |
| Precision | 1.0 | 1.0 |
| Recall | 0.957 | 0.937 |
| F1 | 0.978 | 0.967 |
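These scores can be recomputed from the contingency counts reported for TermEval against the human judgements. The sketch below does so for the Hindi-to-English task. It assumes that a "positive" is a term TermEval marks as correctly translated, so a false positive is a term TermEval accepts but the evaluators reject, and a false negative is the reverse. The helper name `prf` is hypothetical.

```python
from typing import Tuple


def prf(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall and F1 from true/false positive and false negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hindi-to-English PB-SMT: 2554 terms accepted by TermEval, all judged
# correct by the evaluators; 114 human-correct terms were missed.
he_pbsmt = tuple(round(x, 3) for x in prf(tp=2554, fp=0, fn=114))

# Hindi-to-English NMT: 2540 accepted, none wrongly; 171 missed.
he_nmt = tuple(round(x, 3) for x in prf(tp=2540, fp=0, fn=171))

print(he_pbsmt)
print(he_nmt)
```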

| False Negative (Due to Term Reordering) | PB-SMT | NMT | PB-SMT ∩ NMT |
|---|---|---|---|
| English-to-Hindi | 4 | 7 | 4 |
| Hindi-to-English | 13 | 11 | 4 |

| False Negative (Due to Term’s Morphological Variations) | PB-SMT | NMT | PB-SMT ∩ NMT |
|---|---|---|---|
| English-to-Hindi | 112 | 87 | 31 |
| Hindi-to-English | 75 | 109 | 48 |

| False Negative (Due to Miscellaneous Reasons) | PB-SMT | NMT | PB-SMT ∩ NMT |
|---|---|---|---|
| English-to-Hindi | 8 | 4 | - |
| Hindi-to-English | 2 | 18 | - |

| False Negative (Due to Missing LIVs) | PB-SMT | NMT | PB-SMT ∩ NMT |
|---|---|---|---|
| English-to-Hindi | 33 | 36 | 10 |
| Hindi-to-English | 24 | 33 | 5 |

| English-to-Hindi | PB-SMT RE | PB-SMT IE | PB-SMT PE | PB-SMT ILS | PB-SMT TD | NMT RE | NMT IE | NMT PE | NMT ILS | NMT TD |
|---|---|---|---|---|---|---|---|---|---|---|
| Predictions | 19 | 167 | 83 | 113 | 72 | 14 | 132 | 72 | 67 | 99 |
| Correct Predictions | 14 | 69 | 48 | 67 | 45 | 5 | 62 | 42 | 34 | 53 |
| Reference (cf. Table 4) | 15 | 79 | 52 | 77 | 53 | 5 | 77 | 47 | 44 | 56 |
| Precision | 73.7 | 40.8 | 57.8 | 59.3 | 62.5 | 35.7 | 39.9 | 58.3 | 50.7 | 54.5 |
| Recall | 93.3 | 87.3 | 92.3 | 87.1 | 84.9 | 100.0 | 81.2 | 89.4 | 77.3 | 94.6 |
| F1 | 82.3 | 55.6 | 71.1 | 70.6 | 72.0 | 51.3 | 53.1 | 75.1 | 61.2 | 57.6 |
| macroP | 58.8 | | | | | 47.8 | | | | |
| macroR | 88.9 | | | | | 88.3 | | | | |
| macroF1 | 70.3 | | | | | 59.6 | | | | |

| Hindi-to-English | PB-SMT RE | PB-SMT IE | PB-SMT PE | PB-SMT ILS | PB-SMT TD | NMT RE | NMT IE | NMT PE | NMT ILS | NMT TD |
|---|---|---|---|---|---|---|---|---|---|---|
| Predictions | 32 | 173 | 77 | 136 | 92 | 16 | 162 | 88 | 89 | 169 |
| Correct Predictions | 18 | 101 | 62 | 112 | 36 | 5 | 64 | 71 | 57 | 82 |
| Reference (cf. Table 4) | 18 | 118 | 65 | 139 | 38 | 5 | 76 | 73 | 90 | 86 |
| Precision | 56.3 | 62.4 | 80.5 | 82.4 | 39.1 | 31.5 | 39.5 | 80.7 | 62.9 | 48.5 |
| Recall | 100.0 | 91.5 | 95.4 | 80.6 | 94.7 | 100.0 | 84.2 | 78.8 | 62.2 | 95.4 |
| F1 | 72.0 | 74.2 | 87.3 | 80.5 | 55.4 | 47.9 | 53.8 | 79.8 | 62.6 | 64.3 |
| macroP | 64.1 | | | | | 52.6 | | | | |
| macroR | 92.4 | | | | | 84.1 | | | | |
| macroF1 | 73.8 | | | | | 61.7 | | | | |
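The per-class figures relate to the counts in the usual way: precision is correct predictions over all predictions, and recall is correct predictions over the reference count. The sketch below checks this for the Hindi-to-English PB-SMT term drop (TD) class. It then recomputes macroP and macroR on the assumption that they are unweighted means of the five per-class percentages. The helper name `class_scores` is hypothetical, and macroF1 is left out because the reported values can differ in the last digit from a plain mean of the rounded per-class scores.

```python
from statistics import mean
from typing import Tuple


def class_scores(correct: int, predicted: int, reference: int) -> Tuple[float, float, float]:
    """Per-class precision, recall and F1, expressed as percentages."""
    precision = 100.0 * correct / predicted if predicted else 0.0
    recall = 100.0 * correct / reference if reference else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hindi-to-English PB-SMT, TD class: 92 predictions, 36 correct, 38 in the reference.
td = tuple(round(x, 1) for x in class_scores(correct=36, predicted=92, reference=38))

# Per-class percentages for Hindi-to-English PB-SMT (order: RE, IE, PE, ILS, TD).
precision_by_class = [56.3, 62.4, 80.5, 82.4, 39.1]
recall_by_class = [100.0, 91.5, 95.4, 80.6, 94.7]

# Macro-averaging (assumed): unweighted mean over the classes.
macro_p = round(mean(precision_by_class), 1)
macro_r = round(mean(recall_by_class), 1)

print(td, macro_p, macro_r)
```

Macro-averaging gives every error class the same weight regardless of how many instances it has, so a rare class such as RE influences the summary as much as a frequent one such as ILS.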

| | True-Positives | | False-Positives | |
|---|---|---|---|---|
| PB-SMT | 138 [177] | 78.0% | 50 [66] | 75.7% |
| NMT | 51 [84] | 60.7% | 44 [60] | 73.3% |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Haque, R.; Hasanuzzaman, M.; Way, A. Terminology Translation in Low-Resource Scenarios. Information 2019, 10, 273. https://doi.org/10.3390/info10090273
