Multi-Modal Emotion Aware System Based on Fusion of Speech and Brain Information

Abstract

1. Introduction

2. Related Work

2.1. Literature Review on Speech Emotion Recognition

2.2. Literature Review on EEG-Based Emotion Recognition

2.3. Literature Review on Multi-Modal Emotion Recognition

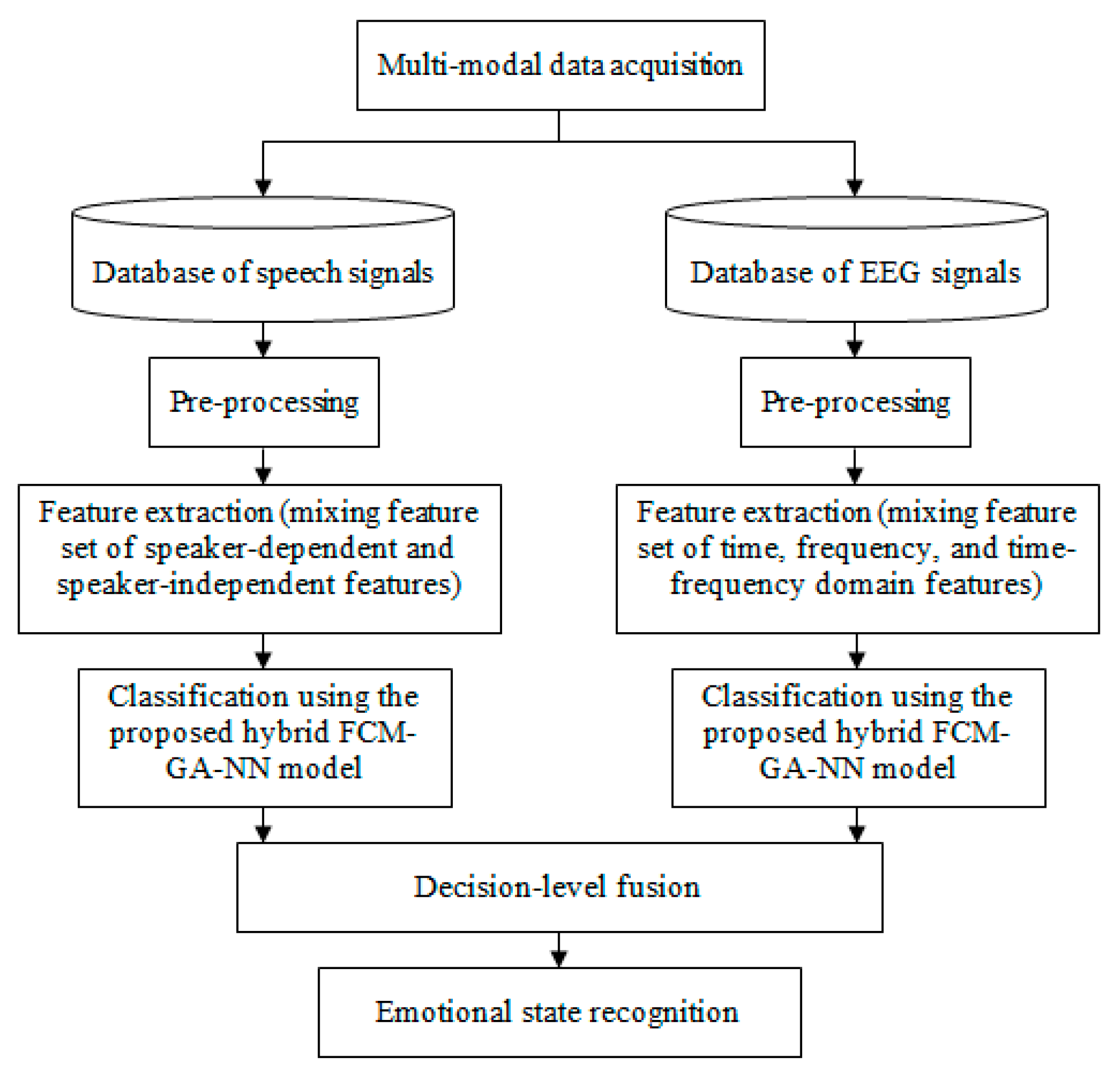

3. Proposed Methodology

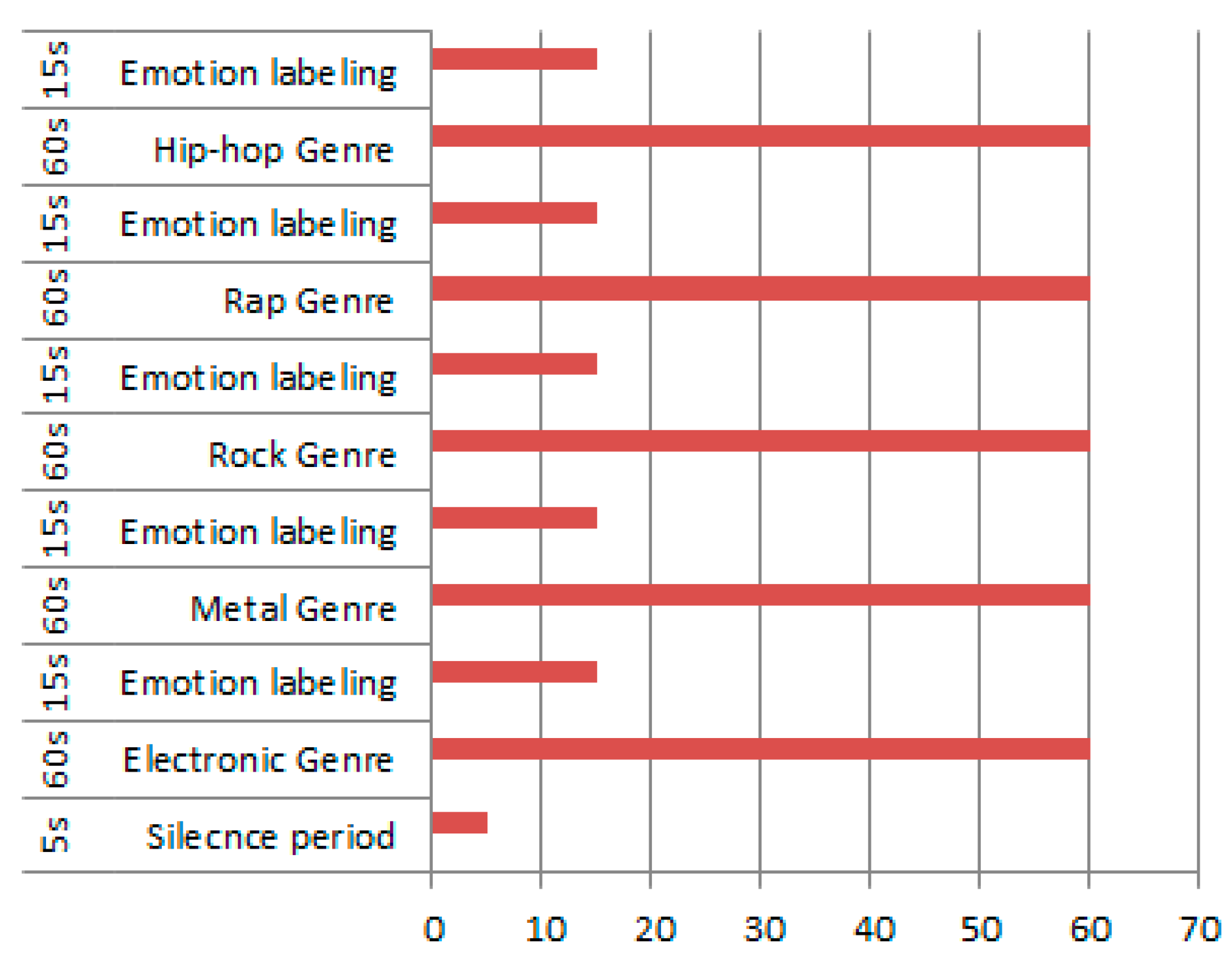

3.1. Multimodal Data Acquisition

- Before the experiment, students were informed of its purpose, and each completed a consent form after an introduction to the steps of the simulation.

- The identities of the subjects were kept anonymous and confidential; personal information will never be associated with, or disclosed alongside, any answer.

- Information acquired from the subjects was used only for the purposes of the current research.

- The accuracy and suitability of the health and medical information used in the current research were confirmed by experts.

3.2. Speech Signal Pre-Processing

3.3. EEG Signal Pre-Processing

3.4. Speech Feature Extraction

- (a)

- (b)

- Speaker-independent features: These features are used to suppress the speaker’s personal characteristics. In [20], the speaker-independent features extracted from emotional speech include the average change ratio of the fundamental frequency, among others.

3.5. EEG Feature Extraction

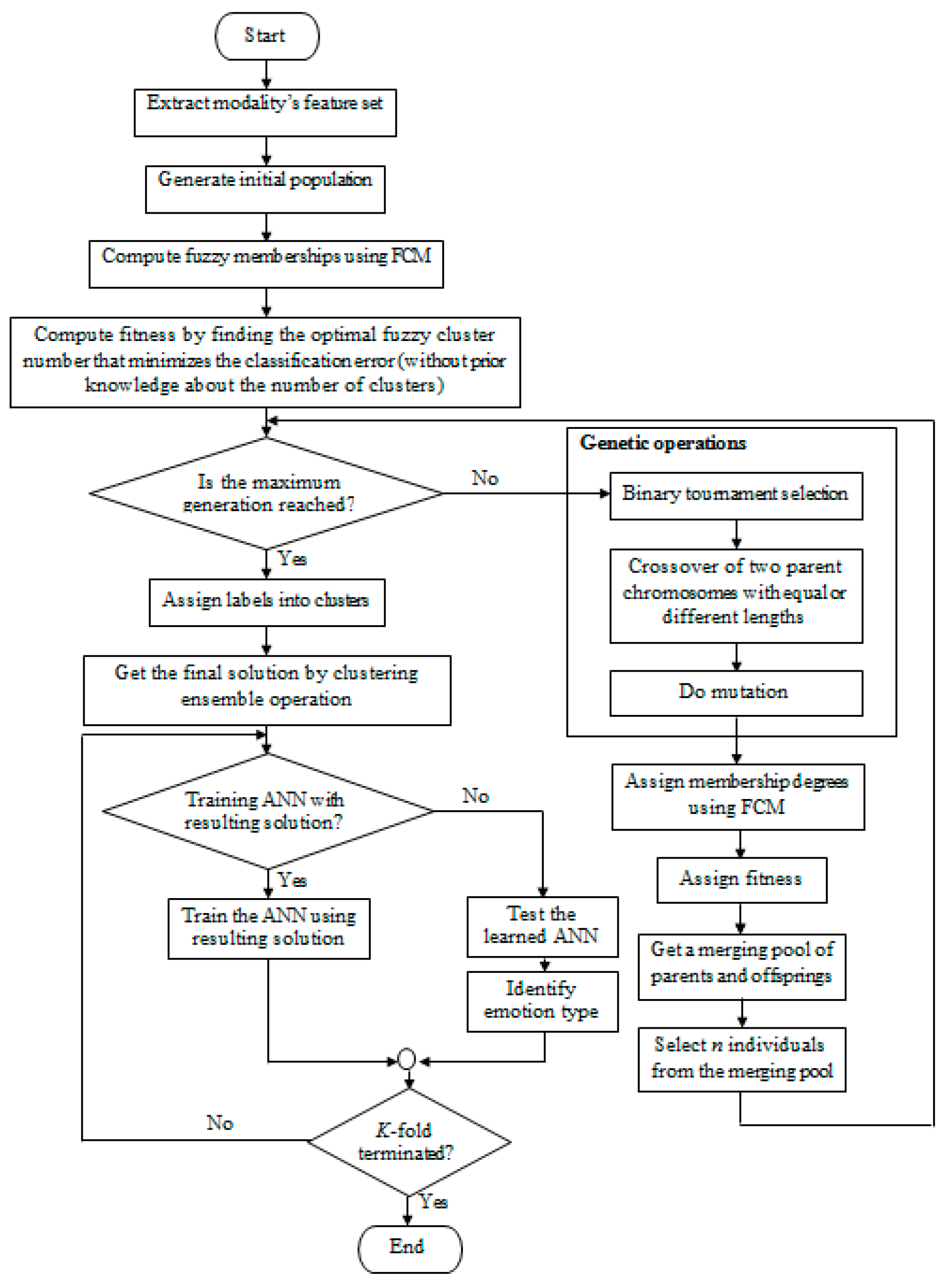

3.6. The Classifier

3.6.1. The Basic Classifier

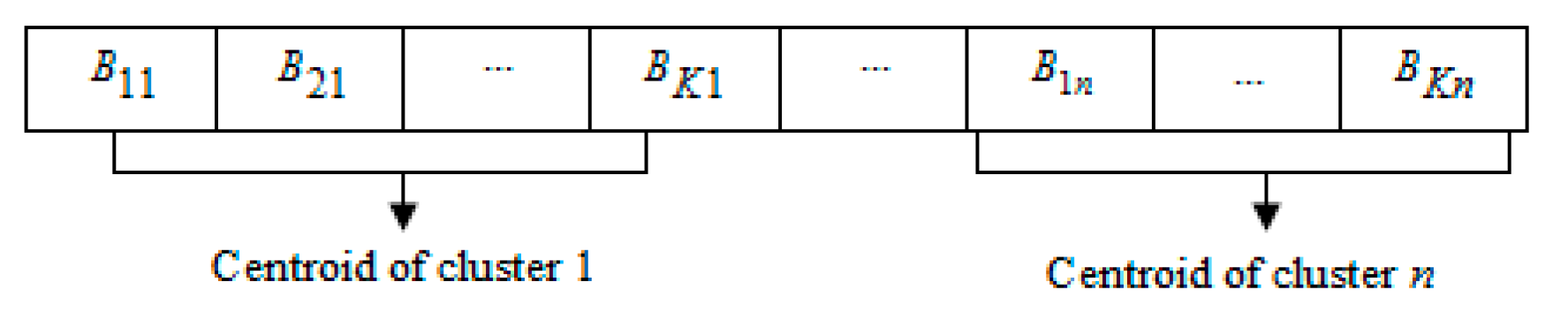

3.6.2. Proposed Classifier Design

- the number of emotional classes in each modality’s dataset,

- the emotion feature vector of the sample ,

- the emotion feature vector’s membership grade belonging to cluster at time , ,

- decides time complexity and precision for clustering. (in this paper, it was set as ).
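
The membership grades listed above can be illustrated with the textbook fuzzy c-means (FCM) update. The following is a minimal sketch, assuming Euclidean distance and a fuzzifier `m` of 2.0; the function names, the default value of `m`, and the toy data are illustrative assumptions and are not taken from the paper.

```python
import math


def fcm_memberships(points, centers, m=2.0):
    """Return u[i][j], the membership of point j in cluster i (textbook FCM update)."""
    exponent = 2.0 / (m - 1.0)
    memberships = [[0.0] * len(points) for _ in centers]
    for j, x in enumerate(points):
        dists = [math.dist(x, c) for c in centers]
        zeros = [i for i, d in enumerate(dists) if d == 0.0]
        if zeros:
            # The point coincides with one or more centers: share membership among them.
            for i in zeros:
                memberships[i][j] = 1.0 / len(zeros)
            continue
        for i, d_i in enumerate(dists):
            memberships[i][j] = 1.0 / sum((d_i / d_k) ** exponent for d_k in dists)
    return memberships


def fcm_centers(points, memberships, m=2.0):
    """Recompute each center as the mean of the points weighted by membership ** m."""
    dim = len(points[0])
    centers = []
    for row in memberships:
        weights = [u ** m for u in row]
        total = sum(weights)  # assumed non-zero: every cluster attracts some membership
        centers.append(
            [sum(w * p[d] for w, p in zip(weights, points)) / total for d in range(dim)]
        )
    return centers


# Toy data (hypothetical): three 2-D feature vectors and two cluster centers.
points = [[0.0, 0.0], [1.0, 0.0], [10.0, 0.0]]
centers = [[0.0, 0.0], [10.0, 0.0]]
u = fcm_memberships(points, centers)
new_centers = fcm_centers(points, u)
```

For every sample the memberships over all clusters sum to one, which is the constraint that distinguishes fuzzy from hard clustering.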

4. Experimental Results

4.1. Performance Evaluation Metrics

- the data points number.

- the output predicted by the model.

- the sample actual value.

- the mean of .

- the mean of .
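
The quantities listed above (number of data points, predicted output, actual value, and the two means) are the ingredients of a correlation coefficient, which the results section reports. The sketch below computes the Pearson correlation coefficient together with two common error measures. The choice of root-mean-square error and mean absolute percentage error is an assumption, since the metric names are not recoverable from this section; all function names are illustrative.

```python
import math


def rmse(actual, predicted):
    """Root-mean-square error between actual and predicted values."""
    n = len(actual)
    return math.sqrt(sum((p - a) ** 2 for a, p in zip(actual, predicted)) / n)


def mape(actual, predicted):
    """Mean absolute percentage error, in percent (actual values must be non-zero)."""
    n = len(actual)
    return 100.0 / n * sum(abs((a - p) / a) for a, p in zip(actual, predicted))


def pearson_r(actual, predicted):
    """Pearson correlation coefficient between actual and predicted values."""
    n = len(actual)
    mean_a = sum(actual) / n
    mean_p = sum(predicted) / n
    cov = sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, predicted))
    var_a = sum((a - mean_a) ** 2 for a in actual)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    return cov / math.sqrt(var_a * var_p)
```

A perfectly scaled prediction, for example `pearson_r([1, 2, 3, 4], [2, 4, 6, 8])`, yields a correlation of one even though its error is non-zero, which is why a correlation measure is usually reported alongside an error measure.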

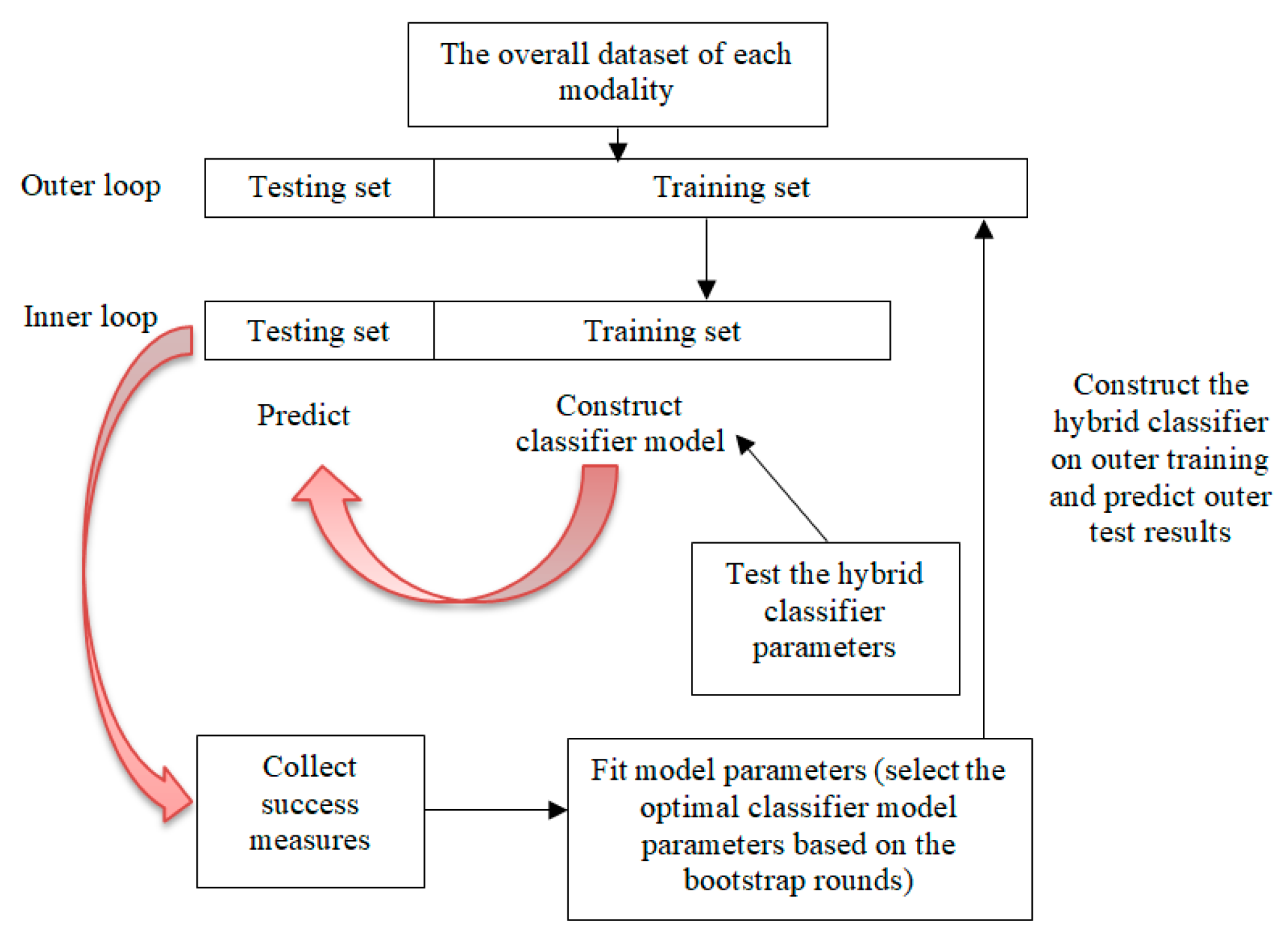

4.2. Cross Validation (CV)

4.3. First Experiment: Comparison of the Proposed FCM-GA-NN Model to the Developed

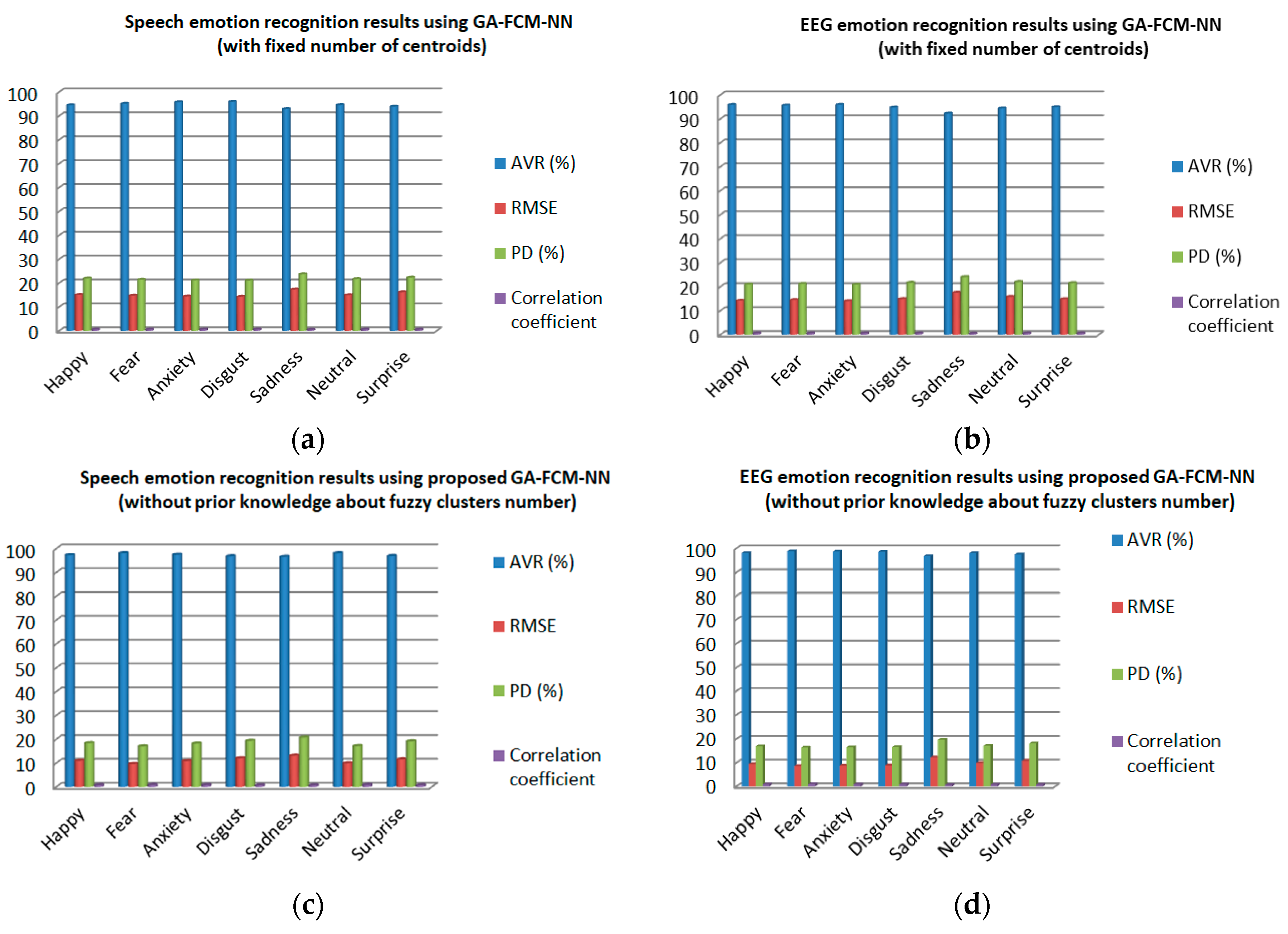

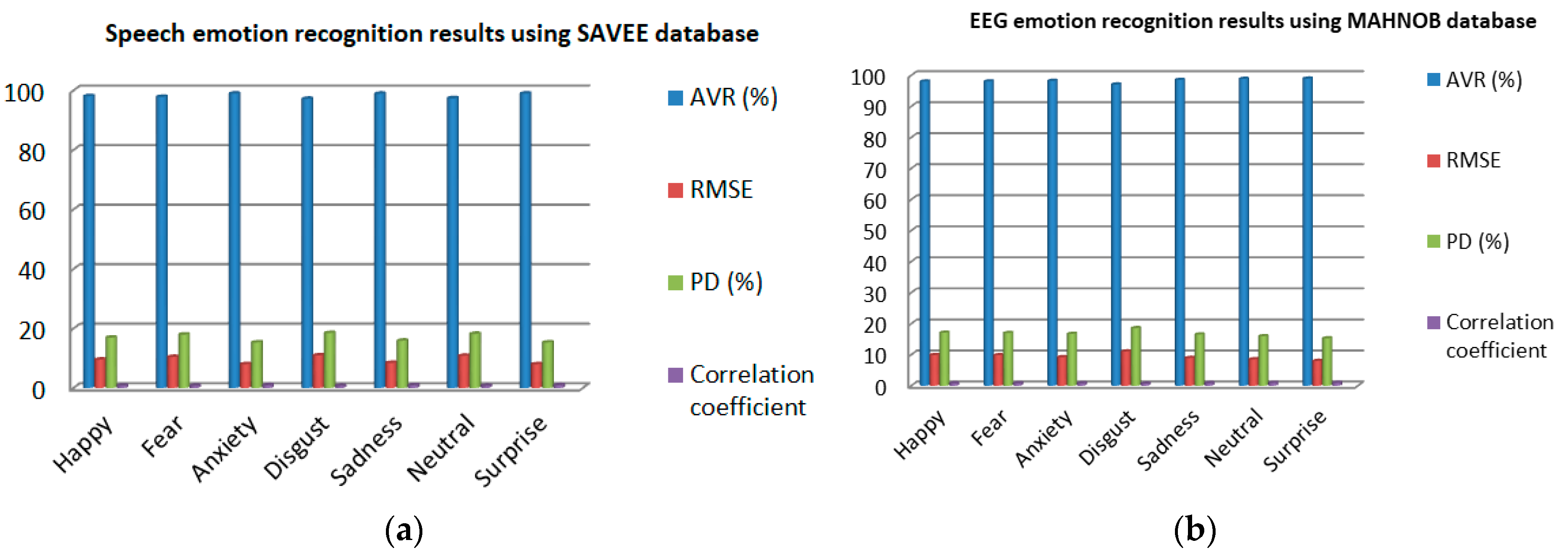

4.4. Second Experiment: Speech Emotion Recognition

- (a)

- From Figure 9c, it is clear that, on the collected dataset, the proposed model achieved higher AVRs of 98.08%, 98.03%, 97.44%, 97.24%, 96.87%, 96.76%, and 96.55% for the fear, neutral, anxiety, happy, surprise, disgust, and sadness classes, respectively. Likewise, it can be observed that during training the proposed model exhibits lower of 9.6233, 9.9299, 10.8219, 10.9501, 11.5206, 12.0312, and 13.1127, respectively, for the aforementioned classes. The model also gives lower of 16.99%, 17.15%, 18.19%, 18.35%, 19.13%, 19.32%, and 20.67%, respectively, for the same classes.

- (b)

- From Figure 11a, the proposed model achieved higher AVRs on the SAVEE dataset: 98.98%, 98.96%, 98.93%, 98.11%, 97.83%, 97.42%, and 97.21% for the surprise, anxiety, sadness, happy, fear, neutral, and disgust classes, respectively. The minimum and maximum occur at the surprise class (15.31%) and the disgust class (18.49%), respectively. The highest correlation coefficient, 0.9801, is found for the surprise class, while the lowest, 0.733, is noted for the disgust class.

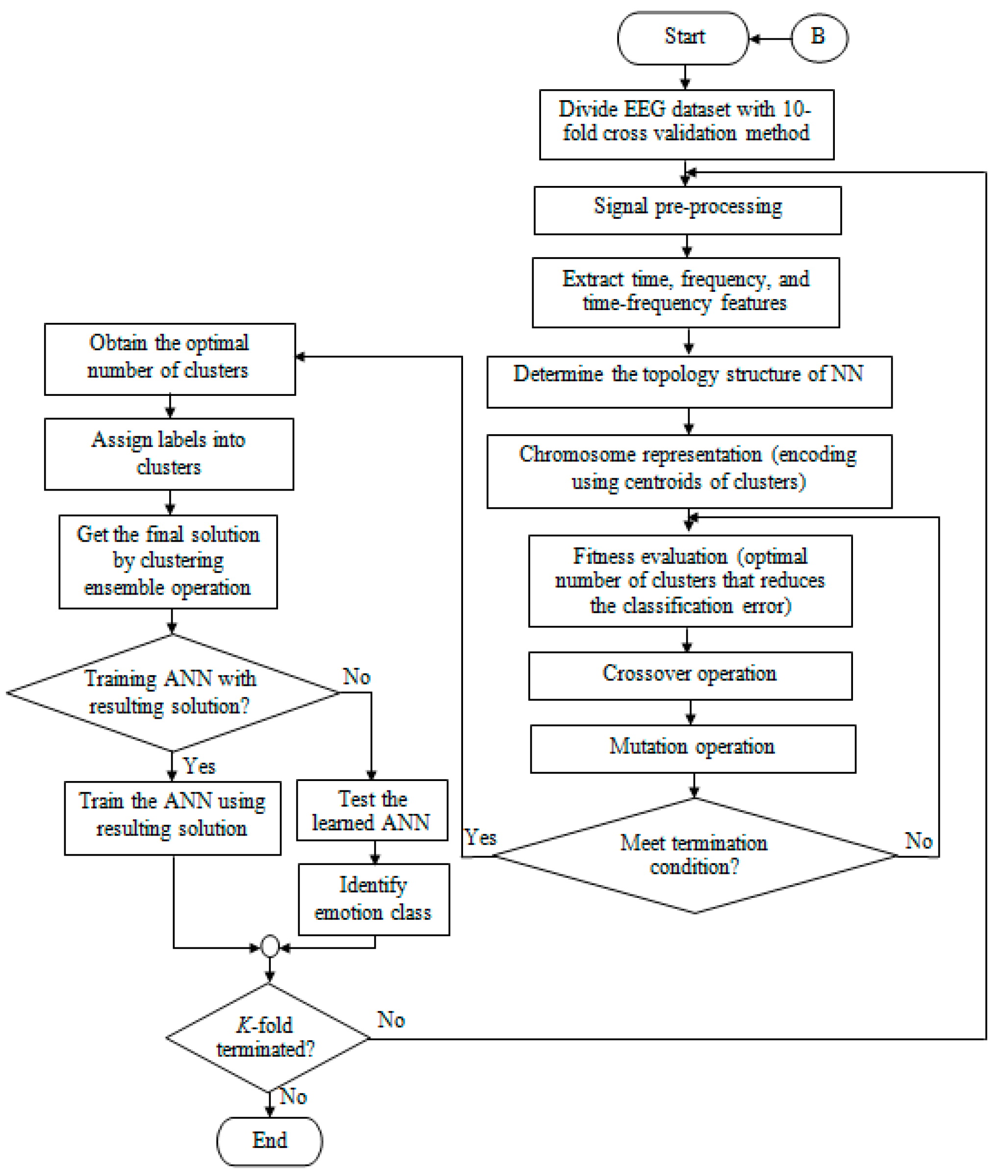

4.5. Third Experiment: EEG Emotion Recognition

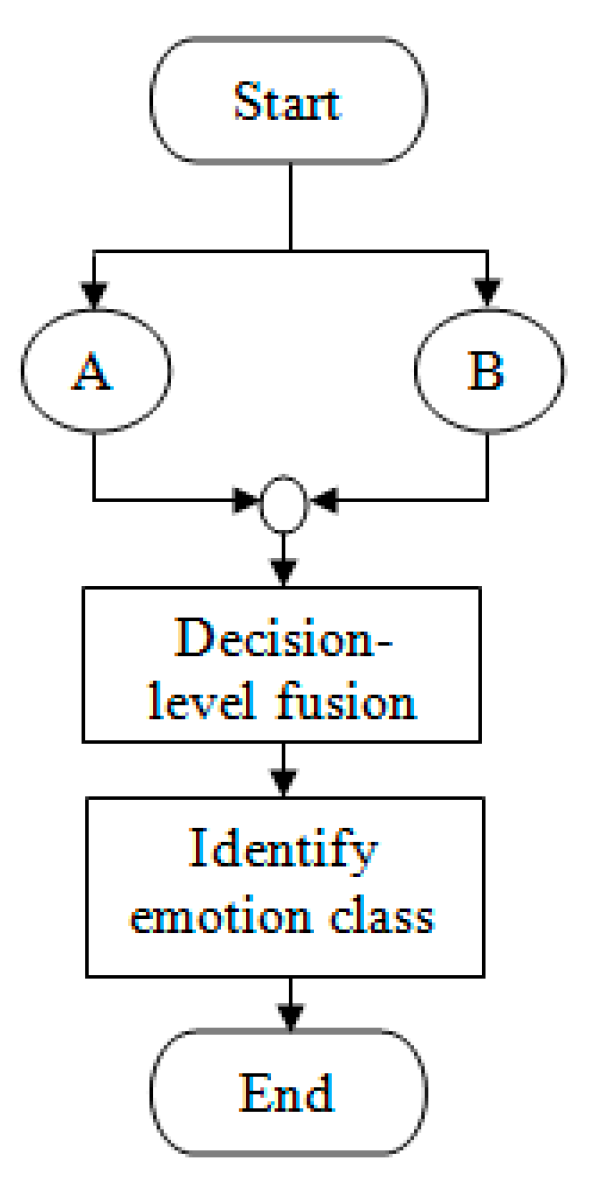

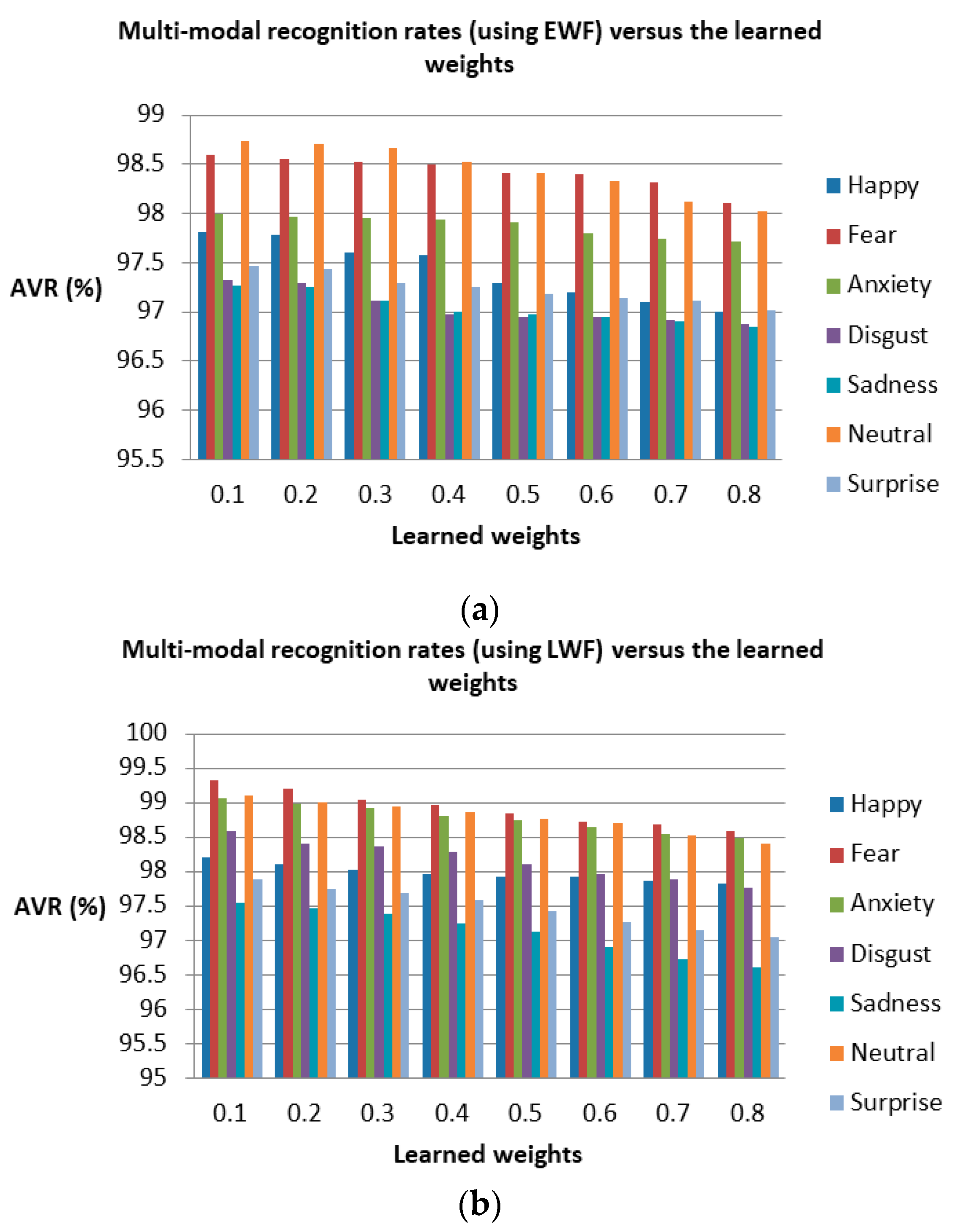

4.6. Fourth Experiment: Multi-Modal Emotion Recognition

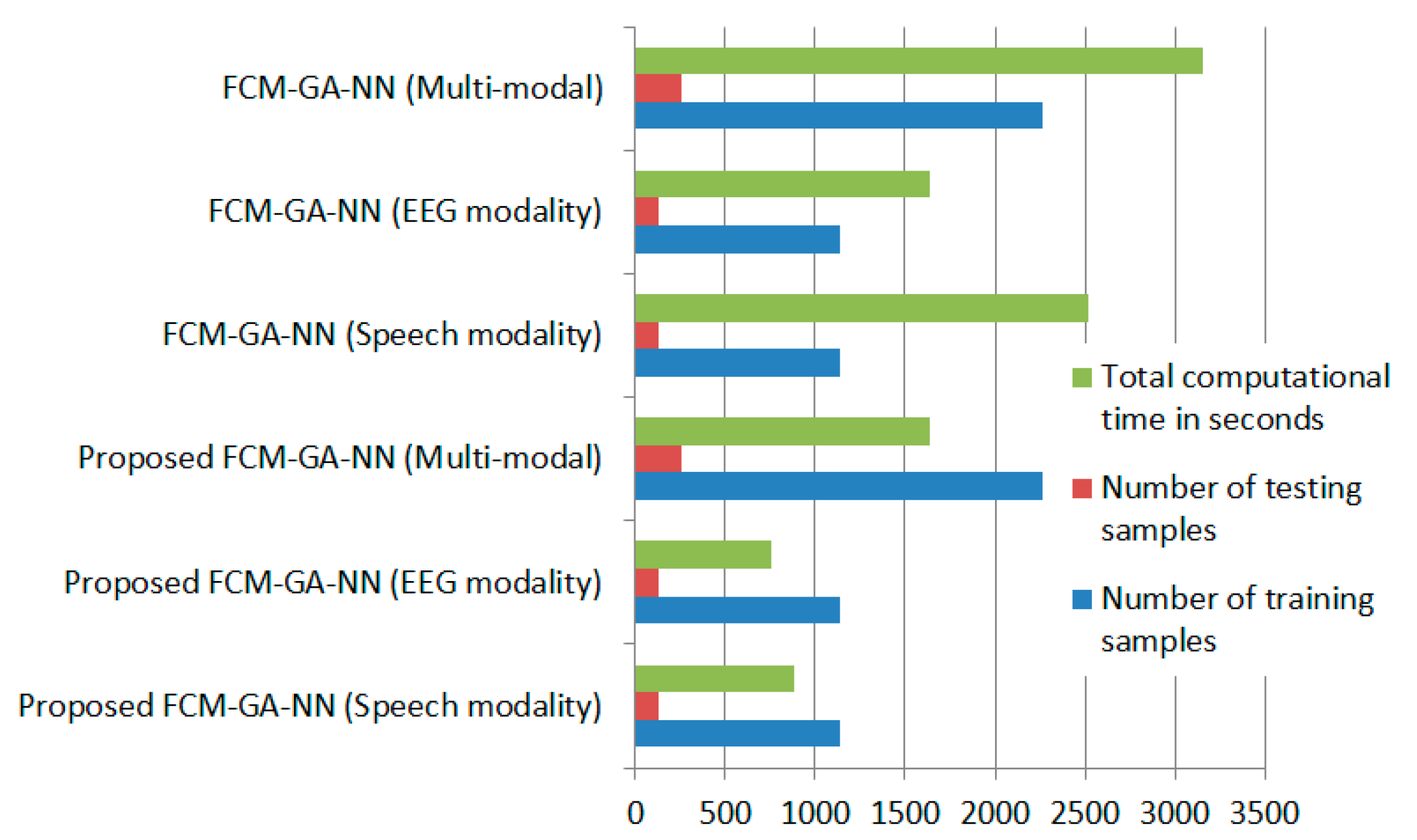

4.7. Computational Time Comparisons

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

Appendix A. Algorithms

| Algorithm A1: The proposed FCM-GA-NN model for emotion classification. |
|---|
| Inputs: (A) Parent population of each single modality (speech and EEG), indicated as . |
| Output: Offspring population where each offspring contains an optimal fuzzy cluster set that minimizes the classification error. |

| Algorithm A2: The clustering algorithm. |
|---|

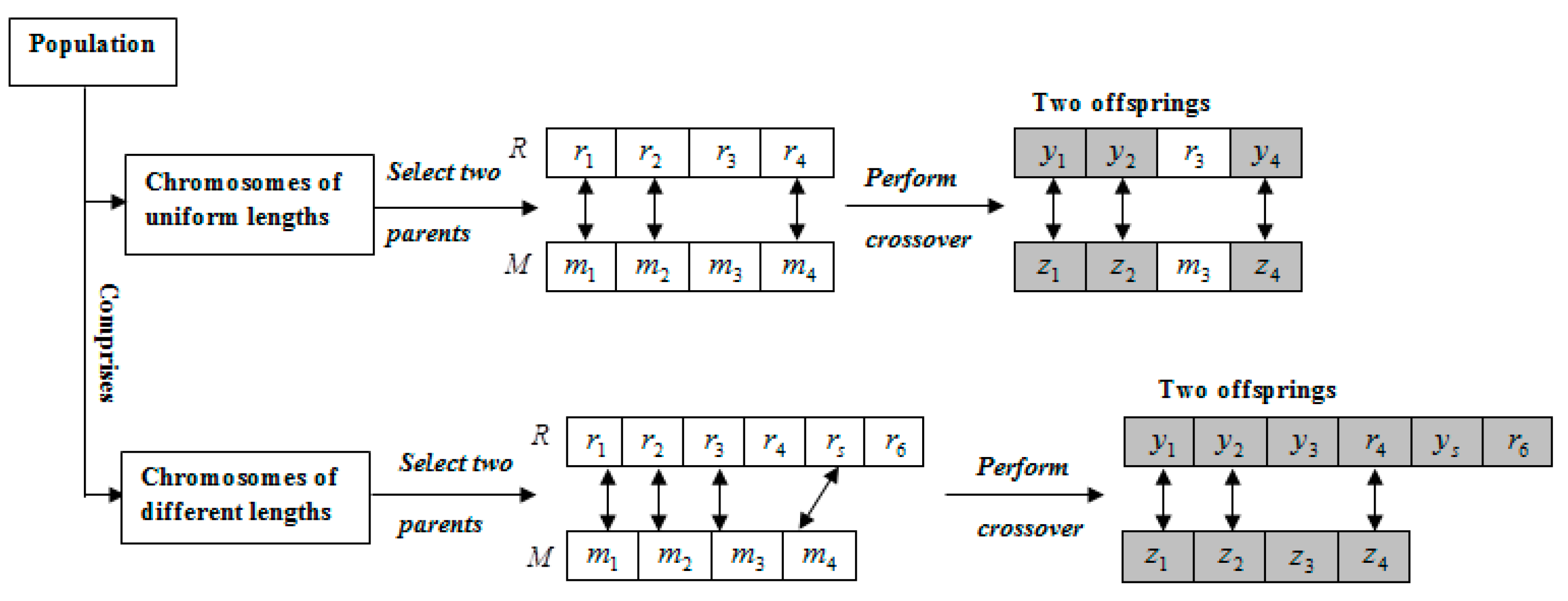

| Algorithm A3: Crossover algorithm. |
|---|
| Inputs: Parents and assigned as , and , respectively, where , and |
| Output: Children , and |

| Algorithm A4: Mutation algorithm. |
|---|
| Inputs: Set of crossover-subjected chromosomes , |
| Output: mutated chromosomes |
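
The steps of Algorithms A3 and A4 are not recoverable from this section, so the following is only a generic sketch of the two genetic operators they name. It assumes real-valued chromosomes of equal length, single-point crossover, and Gaussian mutation; these operator choices, the function names, and `sigma` are assumptions. The default rates reuse the crossover rate (0.5) and mutation rate (0.2) listed in the parameter table.

```python
import random


def single_point_crossover(parent_a, parent_b, rng, crossover_rate=0.5):
    """Swap the tails of two equal-length chromosomes with probability crossover_rate."""
    a, b = list(parent_a), list(parent_b)
    if len(a) != len(b):
        raise ValueError("parents must have the same length")
    if len(a) < 2 or rng.random() >= crossover_rate:
        return a, b  # no crossover: children are copies of the parents
    cut = rng.randrange(1, len(a))  # cut point strictly inside the chromosome
    return a[:cut] + b[cut:], b[:cut] + a[cut:]


def gaussian_mutation(chromosome, rng, mutation_rate=0.2, sigma=0.1):
    """Perturb each gene with zero-mean Gaussian noise with probability mutation_rate."""
    return [
        gene + rng.gauss(0.0, sigma) if rng.random() < mutation_rate else gene
        for gene in chromosome
    ]


# Usage with a seeded generator so that runs are repeatable (toy chromosomes).
rng = random.Random(0)
child_a, child_b = single_point_crossover([1.0] * 4, [2.0] * 4, rng, crossover_rate=1.0)
mutated = gaussian_mutation(child_a, rng)
```

Passing an explicit `random.Random` instance keeps the operators free of global state, which makes individual generations of the search reproducible.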

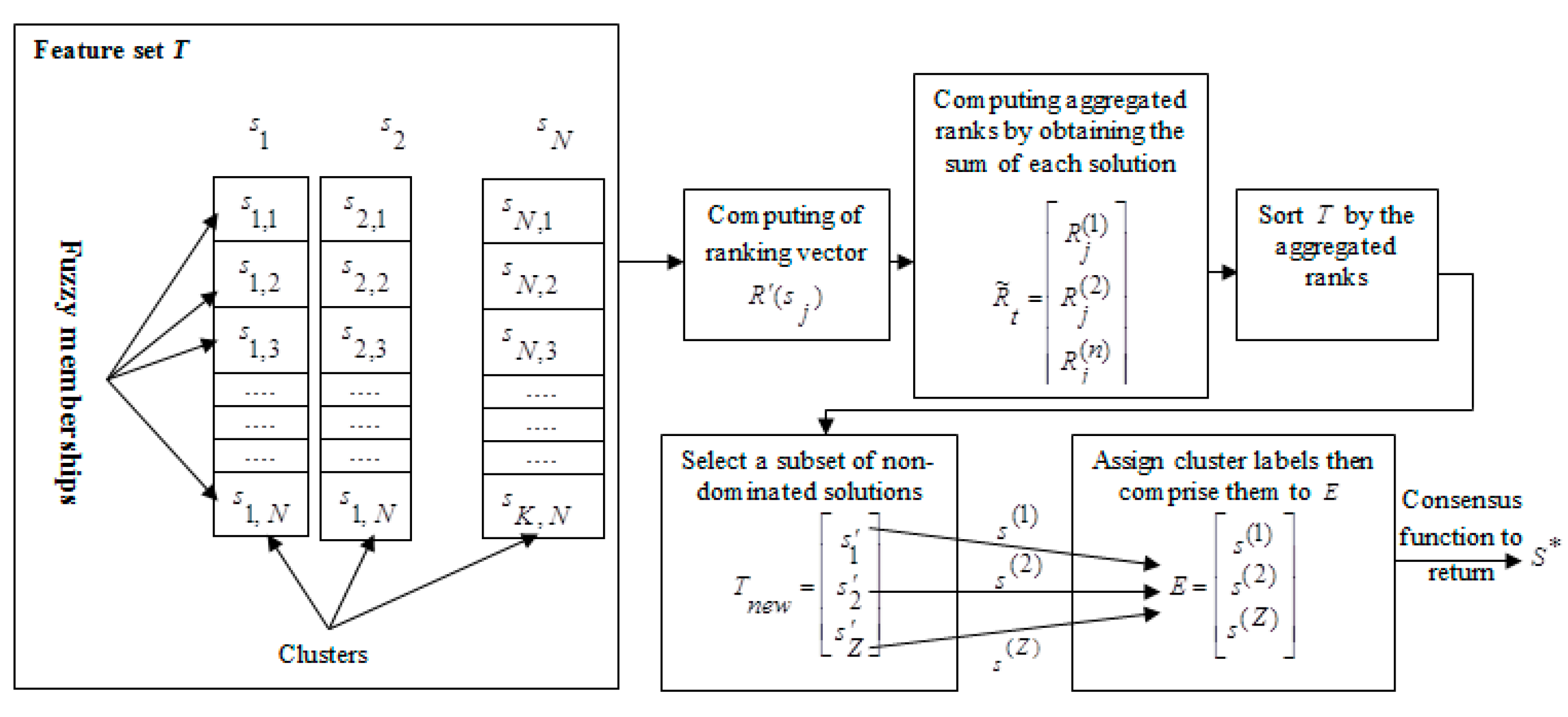

| Algorithm A5: Final solution computation by clustering ensemble. |
|---|
| Inputs: Solutions set indicating fuzzy memberships. |
| Output: Final cluster label . |
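
Algorithm A5 takes a set of fuzzy-membership solutions and returns a final cluster label. Its steps are not recoverable here, so the sketch below shows one simple way to form such a consensus: average the membership matrices and assign each sample to the cluster with the highest average membership. This averaging rule is an assumption and is not necessarily the authors' ensemble method; it also assumes that cluster indices are already aligned across solutions. The function name and toy data are hypothetical.

```python
def ensemble_labels(solutions):
    """Consensus labels from aligned membership matrices.

    solutions[s][i][j] is the membership of sample j in cluster i for solution s.
    """
    n_solutions = len(solutions)
    n_clusters = len(solutions[0])
    n_samples = len(solutions[0][0])
    labels = []
    for j in range(n_samples):
        # Average membership of sample j in each cluster across all solutions.
        avg = [sum(sol[i][j] for sol in solutions) / n_solutions for i in range(n_clusters)]
        labels.append(max(range(n_clusters), key=avg.__getitem__))
    return labels


# Two toy solutions with two clusters and three samples.
solution_1 = [[0.9, 0.4, 0.2], [0.1, 0.6, 0.8]]
solution_2 = [[0.8, 0.7, 0.1], [0.2, 0.3, 0.9]]
labels = ensemble_labels([solution_1, solution_2])
```

In the toy data the second sample is assigned differently by the two solutions, and the average resolves the disagreement in favour of the more confident one.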

References

1. Kolodyazhniy, V.; Kreibig, S.D.; Gross, J.J.; Roth, W.T.; Wilhelm, F.H. An affective computing approach to physiological emotion specificity: Toward subject-independent and stimulus-independent classification of film-induced emotions. Psychophysiology 2011, 48, 908–922.

2. Liu, Y.-J.; Yu, M.; Zhao, G.; Song, J.; Ge, Y.; Shi, Y. Real-Time Movie-Induced Discrete Emotion Recognition from EEG Signals. IEEE Trans. Affect. Comput. 2018, 9, 550–562.

3. Menezes, M.L.R.; Samara, A.; Galway, L.; Sant’Anna, A.; Verikas, A.; Alonso-Fernandez, F.; Wang, H.; Bond, R. Towards emotion recognition for virtual environments: an evaluation of EEG features on benchmark dataset. Pers. Ubiquitous Comput. 2017, 21, 1003–1013.

4. Gharavian, D.; Bejani, M.; Sheikhan, M. Audio-visual emotion recognition using FCBF feature selection method and particle swarm optimization for fuzzy ARTMAP neural networks. Multimed. Tools Appl. 2016, 76, 2331–2352.

5. Li, Y.; He, Q.; Zhao, Y.; Yao, H. Multi-modal Emotion Recognition Based on Speech and Image. Adv. Multimed. Inf. Process. – PCM 2017 Lecture Notes Comput. Sci. 2018, 844–853.

6. Rahdari, F.; Rashedi, E.; Eftekhari, M. A Multimodal Emotion Recognition System Using Facial Landmark Analysis. Iran. J. Sci. Tech. Trans. Electr. Eng. 2018, 43, 171–189.

7. Wan, P.; Wu, C.; Lin, Y.; Ma, X. Optimal Threshold Determination for Discriminating Driving Anger Intensity Based on EEG Wavelet Features and ROC Curve Analysis. Information 2016, 7, 52.

8. Poh, N.; Bengio, S. How do correlation and variance of base-experts affect fusion in biometric authentication tasks? IEEE Trans. Signal Process. 2005, 53, 4384–4396.

9. Majumder, N.; Hazarika, D.; Gelbukh, A.; Cambria, E.; Poria, S. Multimodal sentiment analysis using hierarchical fusion with context modeling. Knowl.-Based Syst. 2018, 161, 124–133.

10. Adeel, A.; Gogate, M.; Hussain, A. Contextual Audio-Visual Switching For Speech Enhancement in Real-World Environments. Inf. Fusion 2019.

11. Gogate, M.; Adeel, A.; Marxer, R.; Barker, J.; Hussain, A. DNN Driven Speaker Independent Audio-Visual Mask Estimation for Speech Separation. In Proceedings of the Interspeech 2018, Hyderabad, India, 2–6 September 2018.

12. Huang, F.; Zhang, X.; Zhao, Z.; Xu, J.; Li, Z. Image–text sentiment analysis via deep multimodal attentive fusion. Knowl.-Based Syst. 2019, 167, 26–37.

13. Ahmadi, E.; Jasemi, M.; Monplaisir, L.; Nabavi, M.A.; Mahmoodi, A.; Jam, P.A. New efficient hybrid candlestick technical analysis model for stock market timing on the basis of the Support Vector Machine and Heuristic Algorithms of Imperialist Competition and Genetic. Expert Syst. Appl. 2018, 94, 21–31.

14. Melin, P.; Miramontes, I.; Prado-Arechiga, G. A hybrid model based on modular neural networks and fuzzy systems for classification of blood pressure and hypertension risk diagnosis. Expert Syst. Appl. 2018, 107, 146–164.

15. Engberg, I.; Hansen, A. Documentation of the Danish Emotional Speech Database DES. 1996. Available online: http://kom.aau.dk/~tb/speech/Emotions/des (accessed on 4 July 2019).

16. Wang, K.; An, N.; Li, B.N. Speech emotion recognition using Fourier parameters. IEEE Trans. Affect. Comput. 2015, 6, 69–75.

17. Eyben, F.; Scherer, K.R.; Schuller, B.W.; Sundberg, J.; Andre, E.; Busso, C.; Devillers, L.Y.; Epps, J.; Laukka, P.; Narayanan, S.S.; et al. The Geneva Minimalistic Acoustic Parameter Set (GeMAPS) for Voice Research and Affective Computing. IEEE Trans. Affect. Comput. 2016, 7, 190–202.

18. Tahon, M.; Devillers, L. Towards a Small Set of Robust Acoustic Features for Emotion Recognition: Challenges. IEEE/ACM Trans. Audio Speech Lang. Process. 2016, 24, 16–28.

19. Zhao, J.; Mao, X.; Chen, L. Speech emotion recognition using deep 1D & 2D CNN LSTM networks. Biomed. Signal Process. Control 2019, 47, 312–323.

20. Liu, Z.-T.; Wu, M.; Cao, W.-H.; Mao, J.-W.; Xu, J.-P.; Tan, G.-Z. Speech emotion recognition based on feature selection and extreme learning machine decision tree. Neurocomputing 2018, 273, 271–280.

21. Alonso, J.B.; Cabrera, J.; Medina, M.; Travieso, C.M. New approach in quantification of emotional intensity from the speech signal: Emotional temperature. Expert Syst. Appl. 2015, 42, 9554–9564.

22. Cao, H.; Verma, R.; Nenkova, A. Speaker-sensitive emotion recognition via ranking: Studies on acted and spontaneous speech. Comput. Speech Lang. 2015, 29, 186–202.

23. Griol, D.; Molina, J.M.; Callejas, Z. Combining speech-based and linguistic classifiers to recognize emotion in user spoken utterances. Neurocomputing 2019, 326–327, 132–140.

24. Yogesh, C.K.; Hariharan, M.; Ngadiran, R.; Adom, A.; Yaacob, S.; Polat, K. Hybrid BBO_PSO and higher order spectral features for emotion and stress recognition from natural speech. Appl. Soft Comput. 2017, 56, 217–232.

25. Shon, D.; Im, K.; Park, J.-H.; Lim, D.-S.; Jang, B.; Kim, J.-M. Emotional Stress State Detection Using Genetic Algorithm-Based Feature Selection on EEG Signals. Int. J. Environ. Res. Public Health 2018, 15, 2461.

26. Mert, A.; Akan, A. Emotion recognition based on time–frequency distribution of EEG signals using multivariate synchrosqueezing transform. Digit. Signal Process. 2018, 81, 106–115.

27. Zoubi, O.A.; Awad, M.; Kasabov, N.K. Anytime multipurpose emotion recognition from EEG data using a Liquid State Machine based framework. Artif. Intell. Med. 2018, 86, 1–8.

28. Zhang, Y.; Ji, X.; Zhang, S. An approach to EEG-based emotion recognition using combined feature extraction method. Neurosci. Lett. 2016, 633, 152–157.

29. Bhatti, A.M.; Majid, M.; Anwar, S.M.; Khan, B. Human emotion recognition and analysis in response to audio music using brain signals. Comput. Human Behav. 2016, 65, 267–275.

30. Ma, Y.; Hao, Y.; Chen, M.; Chen, J.; Lu, P.; Košir, A. Audio-visual emotion fusion (AVEF): A deep efficient weighted approach. Inf. Fusion 2019, 46, 184–192.

31. Hossain, M.S.; Muhammad, G. Emotion recognition using deep learning approach from audio–visual emotional big data. Inf. Fusion 2019, 49, 69–78.

32. Huang, Y.; Yang, J.; Liao, P.; Pan, J. Fusion of Facial Expressions and EEG for Multimodal Emotion Recognition. Comput. Intell. Neurosci. 2017, 2017, 1–8.

33. Abhang, P.A.; Gawali, B.W. Correlation of EEG Images and Speech Signals for Emotion Analysis. Br. J. Appl. Sci. Tech. 2015, 10, 1–13.

34. MacQueen, J.B. Some methods for classification and analysis of multivariate observations. In Proceedings of the Fifth Berkeley Symposium on Mathematical Statistics and Probability, Berkeley, CA, USA, 21 June–18 July 1965 and 27 December 1965–7 January 1966; pp. 281–297.

35. Bezdek, J. Corrections for “FCM: The fuzzy c-means clustering algorithm”. Comput. Geosci. 1985, 11, 660.

36. Duda, R.O.; Hart, P.E.; Stork, D.G. Pattern Classification, 2nd ed.; Wiley: Hoboken, NJ, USA, 2001.

37. Ripon, K.; Tsang, C.-H.; Kwong, S. Multi-Objective Data Clustering using Variable-Length Real Jumping Genes Genetic Algorithm and Local Search Method. In Proceedings of the 2006 IEEE International Joint Conference on Neural Network, Vancouver, BC, Canada, 16–21 July 2006.

38. Karaboga, D.; Ozturk, C. A novel clustering approach: Artificial Bee Colony (ABC) algorithm. Appl. Soft Comput. 2011, 11, 652–657.

39. Zabihi, F.; Nasiri, B. A Novel History-driven Artificial Bee Colony Algorithm for Data Clustering. Appl. Soft Comput. 2018, 71, 226–241.

40. Islam, M.Z.; Estivill-Castro, V.; Rahman, M.A.; Bossomaier, T. Combining K-Means and a genetic algorithm through a novel arrangement of genetic operators for high quality clustering. Expert Syst. Appl. 2018, 91, 402–417.

41. Song, R.; Zhang, X.; Zhou, C.; Liu, J.; He, J. Predicting TEC in China based on the neural networks optimized by genetic algorithm. Adv. Space Res. 2018, 62, 745–759.

42. Krzywanski, J.; Fan, H.; Feng, Y.; Shaikh, A.R.; Fang, M.; Wang, Q. Genetic algorithms and neural networks in optimization of sorbent enhanced H2 production in FB and CFB gasifiers. Energy Convers. Manag. 2018, 171, 1651–1661.

43. Vakili, M.; Khosrojerdi, S.; Aghajannezhad, P.; Yahyaei, M. A hybrid artificial neural network-genetic algorithm modeling approach for viscosity estimation of graphene nanoplatelets nanofluid using experimental data. Int. Commun. Heat Mass Transf. 2017, 82, 40–48.

44. Sun, W.; Xu, Y. Financial security evaluation of the electric power industry in China based on a back propagation neural network optimized by genetic algorithm. Energy 2016, 101, 366–379.

45. Soleymani, M.; Lichtenauer, J.; Pun, T.; Pantic, M. A Multimodal Database for Affect Recognition and Implicit Tagging. IEEE Trans. Affect. Comput. 2012, 3, 42–55.

46. Harley, J.M.; Bouchet, F.; Hussain, M.S.; Azevedo, R.; Calvo, R. A multi-componential analysis of emotions during complex learning with an intelligent multi-agent system. Comput. Human Behav. 2015, 48, 615–625.

47. Ozdas, A.; Shiavi, R.; Silverman, S.; Silverman, M.; Wilkes, D. Investigation of Vocal Jitter and Glottal Flow Spectrum as Possible Cues for Depression and Near-Term Suicidal Risk. IEEE Trans. Biomed. Eng. 2004, 51, 1530–1540.

48. Muthusamy, H.; Polat, K.; Yaacob, S. Particle Swarm Optimization Based Feature Enhancement and Feature Selection for Improved Emotion Recognition in Speech and Glottal Signals. PLoS ONE 2015, 10, e0120344.

49. Jebelli, H.; Hwang, S.; Lee, S. EEG Signal-Processing Framework to Obtain High-Quality Brain Waves from an Off-the-Shelf Wearable EEG Device. J. Comput. Civil Eng. 2018, 32, 04017070.

50. Ferree, T.C.; Luu, P.; Russell, G.S.; Tucker, D.M. Scalp electrode impedance, infection risk, and EEG data quality. Clin. Neurophys. 2001, 112, 536–544.

51. Delorme, A.; Makeig, S. EEGLAB: An open source toolbox for analysis of single-trial EEG dynamics including independent component analysis. J. Neurosci. Methods 2004, 134, 9–21.

52. Ayadi, M.E.; Kamel, M.S.; Karray, F. Survey on speech emotion recognition: Features, classification schemes, and databases. Pattern Recogn. 2011, 44, 572–587.

53. Candra, H.; Yuwono, M.; Chai, R.; Handojoseno, A.; Elamvazuthi, I.; Nguyen, H.T.; Su, S. Investigation of window size in classification of EEG-emotion signal with wavelet entropy and support vector machine. In Proceedings of the 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Milano, Italy, 25–29 August 2015.

54. Ou, G.; Murphey, Y.L. Multi-class pattern classification using neural networks. Pattern Recogn. 2007, 40, 4–18.

55. Yang, J.; Yang, X.; Zhang, J. A Parallel Multi-Class Classification Support Vector Machine Based on Sequential Minimal Optimization. In Proceedings of the First International Multi-Symposiums on Computer and Computational Sciences (IMSCCS06), Hangzhou, China, 20–24 June 2006.

56. Ghoniem, R.M.; Shaalan, K. FCSR - Fuzzy Continuous Speech Recognition Approach for Identifying Laryngeal Pathologies Using New Weighted Spectrum Features. In Proceedings of the 2017 International Conference on Advanced Intelligent Systems and Informatics (AISI), Cairo, Egypt, 9–11 September 2017; pp. 384–395.

57. Tan, J.H. On Cluster Validity for Fuzzy Clustering. Master’s Thesis, Applied Mathematics Department, Chung Yuan Christian University, Taoyuan, Taiwan, 2000.

58. Wikaisuksakul, S. A multi-objective genetic algorithm with fuzzy c-means for automatic data clustering. Appl. Soft Comput. 2014, 24, 679–691.

59. Strehl, A.; Ghosh, J. Cluster ensembles—a knowledge reuse framework for combining multiple partitions. J. Mach. Learn. Res. 2002, 3, 583–617.

60. Koelstra, S.; Patras, I. Fusion of facial expressions and EEG for implicit affective tagging. Image Vision Comput. 2013, 31, 164–174.

61. Dora, L.; Agrawal, S.; Panda, R.; Abraham, A. Nested cross-validation based adaptive sparse representation algorithm and its application to pathological brain classification. Expert Syst. Appl. 2018, 114, 313–321.

62. Oppedal, K.; Eftestøl, T.; Engan, K.; Beyer, M.K.; Aarsland, D. Classifying Dementia Using Local Binary Patterns from Different Regions in Magnetic Resonance Images. Int. J. Biomed. Imaging 2015, 2015, 1–14.

63. Gao, X.; Lee, G.M. Moment-based rental prediction for bicycle-sharing transportation systems using a hybrid genetic algorithm and machine learning. Comput. Ind. Eng. 2019, 128, 60–69.

64. Liu, Z.-T.; Xie, Q.; Wu, M.; Cao, W.-H.; Mei, Y.; Mao, J.-W. Speech emotion recognition based on an improved brain emotion learning model. Neurocomputing 2018, 309, 145–156.

65. Özseven, T. A novel feature selection method for speech emotion recognition. Appl. Acoust. 2019, 146, 320–326.

66. Ghoniem, R.M. Deep Genetic Algorithm-Based Voice Pathology Diagnostic System. In Proceedings of the Natural Language Processing and Information Systems Lecture Notes in Computer Science, Salford, UK, 26–28 June 2019; pp. 220–233.

67. Ghoniem, R.M.; Shaalan, K. A Novel Arabic Text-independent Speaker Verification System based on Fuzzy Hidden Markov Model. Procedia Comput. Sci. 2017, 117, 274–286.

68. Nakisa, B.; Rastgoo, M.N.; Tjondronegoro, D.; Chandran, V. Evolutionary computation algorithms for feature selection of EEG-based emotion recognition using mobile sensors. Expert Syst. Appl. 2018, 93, 143–155.

69. Munoz, R.; Olivares, R.; Taramasco, C.; Villarroel, R.; Soto, R.; Barcelos, T.S.; Merino, E.; Alonso-Sánchez, M.F. Using Black Hole Algorithm to Improve EEG-Based Emotion Recognition. Comput. Intell. Neurosci. 2018, 2018, 1–21.

70. Munoz, R.; Olivares, R.; Taramasco, C.; Villarroel, R.; Soto, R.; Alonso-Sánchez, M.F.; Merino, E.; Albuquerque, V.H.C.D. A new EEG software that supports emotion recognition by using an autonomous approach. Neural Comput. Appl. 2018.

| Reference | Publication Year | Corpus | Speech Analysis | Feature Selection | Classifier | Classification Accuracy |
|---|---|---|---|---|---|---|
| [19] | 2019 | Berlin EmoDB | The local feature learning blocks (LFLB) and the LSTM are used to learn the local and global features from the raw signals and log-mel spectrograms. | - | 1D & 2D CNN LSTM networks. | 95.33% and 95.89% for speaker-dependent and speaker-independent results, respectively. |
| | | IEMOCAP | | | | 89.16% and 52.14% for speaker-dependent and speaker-independent results, respectively. |
| [20] | 2018 | Chinese speech database from Chinese academy of sciences (CASIA) | Speaker-dependent features, and speaker-independent features. | A correlation analysis and Fisher-based method. | Extreme learning machine (ELM) | 89.6% |
| [21] | 2015 | BES | Two prosodic features and four paralinguistic features of the pitch and spectral energy balance. | None | Support vector machines (SVM) | 94.9% |
| | | LDC Emotional Prosody Speech and Transcripts | | | | 88.32% |
| | | Polish Emotional Speech Database | | | | 90% |
| [22] | 2015 | BES | Spectral and prosodic features. | None | Ranking SVMs | 82.1% |
| | | LDC | | | | 52.4% |
| | | FAU Aibo | | | | 39.4% |

| Reference | Corpus | Publication Year | Feature Extraction | Feature Selection | Classifier | Emotion Classes | Classification Accuracy |
|---|---|---|---|---|---|---|---|
| [26] | DEAP database | 2018 | Multivariate synchrosqueezing transform. | Non-negative matrix factorization and independent component analysis. | Artificial Neural Network (ANN) | Arousal/valence states (high arousal-high valence, high arousal-low valence, low arousal-low valence, low arousal-high valence). | 82.03% and 82.11% for valence and arousal state recognition. |
| [27] | DEAP database | 2018 | Liquid State Machines (LSM) | - | Decision Trees | Valence, arousal, and liking classes. | 84.63%, 88.54%, and 87.03% for valence, arousal, and liking classes, respectively. |
| [28] | DEAP database | 2016 | Empirical mode decomposition and sample entropy. | None | SVM | Arousal/valence states (high arousal-high valence, high arousal-low valence, low arousal-low valence, low arousal-high valence). | 94.98% for binary-class tasks, and 93.20% for the multi-class task. |
| [29] | Collected database | 2016 | Features of time (e.g., Latency to Amplitude Ratio, Peak to Peak Signal Value), frequency (e.g., Power Spectral Density and Band Power), and wavelet domain. | None | Three different classifiers (ANN, k-nearest neighbor, and SVM) | Happiness, sadness, love, and anger | 78.11% for ANN classifier. |

| Reference | Publication Year | Corpus | Fused Modalities | Feature Extraction | Fusion Approach | Classifier | Classification Accuracy |
|---|---|---|---|---|---|---|---|
| [30] | 2019 | RML, Enterface05, and BAUM-1s | Speech and image features | 2D convolutional neural network for audio signals, and 3D convolutional neural network for image features. | Deep belief network | SVM | 85.69% for Enterface05 dataset in case of multi-modal classification based upon six discrete emotions. |
| | | | | | | | 91.3% and 91.8%, respectively, for binary arousal-valence model, in case of multi-modal classification using Enterface05 dataset. |
| [31] | 2019 | Private database, namely, Big Data and a publicly available database of Enterface05. | Speech and video | For speech feature extraction, Mel-spectrogram is obtained. For video feature extraction, a number of representative frames are selected from a video segment. | Extreme Learning Machines (ELM) | The CNN is separately fed with speech and video features, respectively. | An accuracy of 99.9% and 86.4% for the favor of ELM fusion using the Big Data and Enterface05, respectively. |
| [32] | 2017 | MAHNOB-HCI database | Facial expressions and EEG | As for facial expressions, the appearance features are estimated from each frame block then the expression percentage feature is computed. The EEG features are calculated using the Welch’s Averaged Periodogram. | Feature-level fusion by concatenating all features within a single vector, in addition to decision-level fusion by processing each modality in a separate way, and amalgamating results from their special classifiers in the recognition stage. | For decision-level fusion, LWF and EWF are used. For feature-level fusion, the paper used several statistical fusion methods like Canonical Correlation Analysis (CCA) and Multiple Feature Concatenation (MFC). | For decision-level fusion, LWF achieved recognition rates of 66.28% and 63.22%, for valence and arousal classes, respectively. For feature-level fusion, MFC achieved recognition rates of 57.47% and 58.62%, for valence and arousal classes, respectively. |
| [33] | 2015 | Private database, which was collected from university students. | EEG images along with speech signals | For EEG images, the features were extracted using the threshold, the Sobel edge detection, and some statistical measures (e.g., mean, variance, standard deviation, etc.). While the intensity, the RMS Energy, and the pitch were used for speech signal feature extraction. | None (the study investigated correlation of EEG images as well as Speech signals) | None | The significance accuracy of correlation coefficient was about 95% for the favor of said emotional status. |

| Arousal | Positive valence: emotion | Num. of music tracks inducing emotion | Number of samples in database | Negative valence: emotion | Num. of music tracks inducing emotion | Number of samples in database | Others: class | Num. of music tracks inducing emotion | Number of samples in database |
|---|---|---|---|---|---|---|---|---|---|
| High arousal | Happy | 5 | 5 music tracks × 36 participants = 180 samples | Fear | 5 | 5 music tracks × 36 participants = 180 samples | Neutral | 5 | 5 music tracks × 36 participants = 180 samples |
| | | | | Anxiety | 5 | 180 | Surprise | 5 | 180 |
| | | | | Disgust | 5 | 180 | | | |
| Low arousal | | | | Sadness | 5 | 180 | | | |
| Total | 180 samples representing positive valence-high arousal emotion classes of each single modality × 2 modalities (speech and EEG) = 360 | | | 540 samples representing negative valence-high arousal emotion classes of each single modality × 2 modalities = 1080; 180 samples representing negative valence-low arousal emotions of each single modality × 2 modalities = 360 | | | 360 samples for each single modality × 2 modalities = 720 | | |
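
The totals in the table follow from simple counting, which the short check below reproduces. The grouping of emotions into valence and arousal categories is read off the table rows; the variable names are illustrative, and the grand total is derived here rather than stated in the table.

```python
TRACKS_PER_EMOTION = 5   # music tracks inducing each emotion
PARTICIPANTS = 36
MODALITIES = 2           # speech and EEG

# Emotion classes per category, as laid out in the table.
groups = {
    "positive valence, high arousal": ["happy"],
    "negative valence, high arousal": ["fear", "anxiety", "disgust"],
    "negative valence, low arousal": ["sadness"],
    "others": ["neutral", "surprise"],
}

samples_per_emotion = TRACKS_PER_EMOTION * PARTICIPANTS  # per modality
totals = {
    name: len(emotions) * samples_per_emotion * MODALITIES
    for name, emotions in groups.items()
}
grand_total = sum(totals.values())
```

Each emotion contributes 180 samples per modality, so the seven classes give 1260 samples per modality and 2520 samples over both modalities.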

| Speech Features | Prosodic: fundamental frequency-related | Prosodic: energy-related | Prosodic: time length correlation-related | Speech Quality Features | Spectrum Features |
|---|---|---|---|---|---|
| Speaker-dependent | Fundamental frequency | Short-time maximum amplitude | Short-time average crossing zero ratio | Breath sound | 12-order MFCC |
| | Fundamental frequency maximum | Short-time average energy | Speech speed | Throat sound | SEDC for 12 frequency bands (equally-spaced) |
| | Fundamental frequency four-bit value | Short-time average amplitude | | Maximum and average values of the first, second, and third formant frequencies | Linear Predictor Coefficients (LPC) |
| Speaker-independent | Fundamental frequency average change rate | The Short-Time-Energy average rate | Partial time ratio | Average variation of 1st, 2nd, and 3rd formant frequency rate | 1st-order difference MFCC |
| | Standard deviation of fundamental frequency | The amplitude of short-time energy | | Standard deviation of 1st, 2nd, and 3rd formant frequency rate | 2nd-order difference MFCC |
| | Change rate of four-bit point frequency | | | Every sub-point value of 1st, 2nd, and 3rd formant frequencies change ratio | |
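
A few of the short-time features named in the table can be sketched directly on a framed signal. The example below computes the short-time average energy, the short-time zero-crossing ratio, and a plain first-order difference of a feature sequence. The frame length, hop size, and function names are hypothetical, and the plain difference is a simplification: difference (delta) coefficients for MFCCs are often computed in practice with a regression over several neighbouring frames.

```python
def frames(signal, frame_len, hop):
    """Split a signal into overlapping frames of frame_len samples, hop samples apart."""
    return [signal[s:s + frame_len] for s in range(0, len(signal) - frame_len + 1, hop)]


def short_time_energy(frame):
    """Average energy of one frame."""
    return sum(x * x for x in frame) / len(frame)


def zero_crossing_ratio(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)


def first_order_difference(values):
    """Plain first-order difference of a feature sequence."""
    return [b - a for a, b in zip(values, values[1:])]


# Toy signal that alternates in sign at every sample.
signal = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0]
framed = frames(signal, frame_len=4, hop=2)
energies = [short_time_energy(f) for f in framed]
zcr = [zero_crossing_ratio(f) for f in framed]
```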

| Domain of Analysis | EEG Feature | Explanation | Equation |
|---|---|---|---|
| Time domain | Cumulative maximum | Highest amplitude of channel until sample | |
| | Cumulative minimum | Lowest amplitude of channel until sample | |
| | Mean | Average absolute amplitude value over the various EEG channels | |
| | Median | Signal median over the various channels | |
| | Standard deviation | Deviation of the EEG signals over the various channels in every window | |
| | Variance | EEG signal amplitude variance over the various channels | |
| | Kurtosis | Reveals the sharpness of the EEG signal peak | |
| | Smallest window components | Lowest amplitude over the various channels | |
| | Moving median using a window size of | Signal median with channel and a window of -samples size | |
| | Maximum-to-minimum difference | Difference between the highest and lowest EEG signal amplitude over the various channels | |
| | Peak | Highest amplitude of the EEG signal over the various channels in the time domain | |
| Frequency domain | Peak to Peak | Time between the EEG signal’s peaks over the various windows | |
| | Peak location | Location of the highest EEG amplitude over channels | |
| | Root-mean-square level | EEG signal’s 2-norm divided by the square root of the number of samples over the various EEG channels | |
| | Root-sum-of-squares level | EEG signal’s norm over the distinct channels in every window | |
| | Peak-magnitude-to-root-mean-square ratio | Highest amplitude of the EEG signal divided by the root-mean-square level | |
| | Total zero crossing number | Number of points where the EEG amplitude sign changes | |
| | Alpha mean power | EEG signal power in channel in an interval of | |
| | Beta mean power | EEG signal power in Beta interval | |
| | Delta mean power | EEG signal power in Delta interval | |
| | Theta mean power | EEG signal power in Theta interval | |
| | Median frequency | Signal power half of channel which is distributed over the frequencies lower than . | |
| Time-frequency domain | Spectrogram | The spectrogram (short-time Fourier transform) is computed by multiplying the time signal by a sliding time-window, referred to as . The time dimension is added by the window location, and the output is a time-varying frequency analysis. refers to time location and is the discrete frequencies number. | |
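
Several of the single-channel features described in the table can be written in a few lines. The sketch below follows the verbal explanations (for example, the root-mean-square level as the 2-norm divided by the square root of the number of samples) because the original equations are not available in this section. The function names, the naive discrete Fourier transform, and the band limits used in the usage example (8 to 13 Hz for alpha, 14 to 30 Hz for beta) are assumptions.

```python
import cmath
import math
from itertools import accumulate


def eeg_time_features(x):
    """A few amplitude features of one EEG channel window x (list of floats)."""
    n = len(x)
    rss = math.sqrt(sum(v * v for v in x))   # root-sum-of-squares level (2-norm)
    rms = rss / math.sqrt(n)                 # root-mean-square level
    peak = max(abs(v) for v in x)
    return {
        "cumulative_max": list(accumulate(x, max)),
        "cumulative_min": list(accumulate(x, min)),
        "max_to_min": max(x) - min(x),
        "rss": rss,
        "rms": rms,
        "peak_to_rms": peak / rms,
        "zero_crossings": sum(1 for a, b in zip(x, x[1:]) if a * b < 0),
    }


def band_mean_power(x, fs, f_lo, f_hi):
    """Mean power of the DFT bins whose frequency lies in [f_lo, f_hi] Hz."""
    n = len(x)
    powers = []
    for k in range(n // 2 + 1):
        freq = k * fs / n
        if f_lo <= freq <= f_hi:
            coeff = sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(x))
            powers.append(abs(coeff) ** 2 / n ** 2)
    return sum(powers) / len(powers) if powers else 0.0


# Usage: a 10 Hz sine sampled at 64 Hz carries its power in the assumed alpha band.
fs = 64
wave = [math.sin(2 * math.pi * 10 * t / fs) for t in range(fs)]
alpha = band_mean_power(wave, fs, 8, 13)
beta = band_mean_power(wave, fs, 14, 30)
features = eeg_time_features([1.0, -2.0, 3.0, -1.0])
```

The naive transform costs quadratic time in the window length, so it is suitable only for illustration; a fast Fourier transform would normally be used on real recordings.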

| Parameter | Value |
|---|---|
| Number of layers for each NN | 3 (input, hidden, output) |
| Number of hidden nodes in each NN | 20 |
| Activation functions for the hidden and output layers | tansig-purelin |
| Learning rule | Back-propagation |
| Learning rate | 0.1 |
| Momentum constant | 0.7 |
| Units of population | 150 |
| Maximum generations | 50 |
| Mutation rate | 0.2 |
| Crossover rate | 0.5 |
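
The network settings in the table can be made concrete with a small forward-pass sketch. `tansig` is mathematically equivalent to the hyperbolic tangent and `purelin` is the identity, so a three-layer network with 20 hidden nodes reduces to the code below. The input size of 3, the weight initialisation range, and all function names are hypothetical; the seven outputs correspond to the seven emotion classes reported in the experiments.

```python
import math
import random

CONFIG = {
    "hidden_nodes": 20,
    "learning_rate": 0.1,
    "momentum": 0.7,
    "population_size": 150,
    "max_generations": 50,
    "mutation_rate": 0.2,
    "crossover_rate": 0.5,
}


def init_layer(n_in, n_out, rng):
    """One weight row per output node; the last weight in each row is the bias."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)] for _ in range(n_out)]


def forward(x, hidden_w, output_w):
    """tanh (tansig) hidden layer followed by a linear (purelin) output layer."""
    inputs = list(x) + [1.0]
    hidden = [math.tanh(sum(w * v for w, v in zip(row, inputs))) for row in hidden_w]
    hidden_b = hidden + [1.0]
    return [sum(w * h for w, h in zip(row, hidden_b)) for row in output_w]


def momentum_step(weight, gradient, velocity, lr=0.1, momentum=0.7):
    """Gradient-descent update with momentum for a single weight."""
    velocity = momentum * velocity - lr * gradient
    return weight + velocity, velocity


rng = random.Random(0)
hidden_w = init_layer(3, CONFIG["hidden_nodes"], rng)   # 3 inputs: hypothetical
output_w = init_layer(CONFIG["hidden_nodes"], 7, rng)   # 7 emotion classes
scores = forward([0.1, 0.2, 0.3], hidden_w, output_w)
```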

| Corpus | Reference | Year of Publication | Feature Extraction | Feature Selection | Classifier | Classification Accuracy |
|---|---|---|---|---|---|---|
| SAVEE | [24] | 2017 | The study used 50 higher order features (28 Bispectral features + 22 Bicoherence), which were combined with Inter-Speech 2010 features for improving the recognition rate. | Feature selection included two phases: Multi-cluster feature selection, and a proposed hybrid method of Biogeography-based Optimization and Particle Swarm Optimization. | SVM and ELM. | The speaker-independent accuracies were 62.38% and 50.60% for SVM and ELM, respectively, whereas the speaker-dependent accuracies were 70.83% and 69.51%, respectively. |
| | [64] | 2018 | Feature set of 21 statistics | Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA), PCA + LDA. | Genetic algorithm-Brain Emotional Learning model. | The speaker-independent accuracy was 44.18% when using PCA as feature selector |
| | [65] | 2019 | The openSMILE toolbox was used to extract 1582 features from each speech sample | Proposed feature selection method relies on the changes in emotions according to acoustic features. | SVM, k-nearest neighbor (k-NN), and NN. | 77.92%, 73.62%, and 57.06% for SVM, k-NN, and NN, respectively. |
| | Proposed speech emotion recognition model | | Mixing feature set of speaker-dependent and speaker-independent characteristics. | Hybrid of FCM and GA. | Proposed hybrid optimized multi-class NN | 98.21% |

| Dataset | AVR (%), fixed window | AVR (%), sliding window |
|---|---|---|
| Collected database | 98.06 | 96.93 |
| MAHNOB | 98.26 | 97.41 |
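
The table contrasts fixed and sliding windows. A minimal sketch of the two segmentation schemes is given below, assuming that "fixed" means non-overlapping windows and "sliding" means overlapping windows advanced by a smaller step; this interpretation, the window size, the step, and the function names are assumptions rather than details taken from the paper.

```python
def fixed_windows(signal, size):
    """Non-overlapping windows: each window starts where the previous one ends."""
    return [signal[s:s + size] for s in range(0, len(signal) - size + 1, size)]


def sliding_windows(signal, size, step):
    """Overlapping windows: each window starts step samples after the previous one."""
    return [signal[s:s + size] for s in range(0, len(signal) - size + 1, step)]


samples = list(range(10))
fixed = fixed_windows(samples, size=4)
sliding = sliding_windows(samples, size=4, step=2)
```

With ten samples and a window of four, the fixed scheme yields two windows and discards the trailing two samples, while the sliding scheme with a step of two yields four overlapping windows.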

| Corpus | Reference | Year of Publication | Feature Extraction | Feature Selection | Classifier | Classification Accuracy |
|---|---|---|---|---|---|---|
| MAHNOB | [68] | 2017 | Features of time, frequency, and time–frequency. | Genetic Algorithm, Ant Colony Optimization, Particle Swarm Optimization, and Differential Evolution. | Probabilistic Neural Network | 96.97 ± 1.893% |
| | [69] | 2018 | Empirical Mode Decomposition (EMD) and the Wavelet Transform. | Heuristic Black Hole Algorithm | Multi-class SVM | 92.56% |
| | [70] | 2018 | EMD and the Bat algorithm | Autonomous Bat Algorithm | Multi-class SVM | 95% |
| | Proposed model | | | Hybrid of FCM and GA. | Optimized multi-class NN | 98.06% |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ghoniem, R.M.; Algarni, A.D.; Shaalan, K. Multi-Modal Emotion Aware System Based on Fusion of Speech and Brain Information. Information 2019, 10, 239. https://doi.org/10.3390/info10070239
