1. Introduction

In recent years, autonomous surface vehicles (ASVs) have garnered considerable attention and experienced rapid development in both military and civilian domains [1,2]. With the continuous advancement of vehicle intelligence, the operation and application of ASVs have expanded from safe environments focused on reconnaissance and surveillance to complex scenarios involving combat and confrontation [3,4]. Target encirclement represents a crucial research direction in the cooperative control of multiple ASVs, where non-cooperative targets are enclosed within a specific circular domain, thereby diminishing their threat capabilities [5,6]. However, targets in real-world scenarios are not stationary; in missions such as pollutant spill containment, illegal fishing control, or autonomous interdiction, they can move and disperse [7]. Sequential encirclement of such targets is frequently ineffective and prone to uncontrolled spreading or escape, leading to excessive duration and mission failure. Therefore, the study of cooperative encirclement for ASVs against multiple targets has significant practical value.

Existing target encirclement control methods can be broadly categorized into three approaches: bio-inspired encirclement [8], differential game-based encirclement [9], and reinforcement learning-based encirclement [10]. Specifically, bio-inspired approaches primarily mimic the dynamic interactions observed between predators and prey in nature. For example, Ref. [11] simulates the cooperative behaviors of biological groups to design a bio-inspired distributed encirclement controller, achieving efficient encirclement of non-cooperative targets. Ref. [12] develops a switching encirclement control strategy based on animal group behavior. Ref. [13] designs a bio-inspired optimization algorithm based on orca predation behavior, simulating orcas’ search, encirclement, and attack behaviors. By combining agent position-based area partitioning with bio-inspired neural networks, Ref. [14] proposes a cooperative encirclement method using an improved crayfish optimization algorithm. However, these methods exhibit limited adaptability to complex environments, and abrupt changes in target behavior often leave the system unable to adjust the encirclement strategy effectively.

Differential game-based methods construct pursuit-evasion models and derive optimal encirclement strategies by solving Hamilton–Jacobi equations [15,16]. For example, Ref. [17] constructs a cooperative encirclement framework based on differential games, which is solved analytically via the Hamilton–Jacobi–Isaacs equations. Ref. [18] proposes a hybrid differential game-based encirclement guidance method, enabling pursuit-evasion missions under static obstacle constraints. Ref. [19] develops a pursuit-evasion matching framework through global game decomposition. Ref. [20] designs a cooperative control method based on stochastic potential games and multi-agent reinforcement learning that estimates Nash equilibrium strategies using temporal relative motion information. However, as the size of the agent group increases, these methods require extensive assumptions and rules, making them less feasible for real-time control in large-scale scenarios.

Owing to the rapid advancement of artificial intelligence technology, deep reinforcement learning has been extensively applied in decision-making, control, and optimization [21,22]. Reinforcement learning-based encirclement methods optimize encirclement strategies through agent–environment interactions, effectively handling high-dimensional state spaces and demonstrating strong adaptability in complex environments [23,24]. For example, Ref. [25] balances individual and collective benefits through knowledge-embedded encirclement rewards. Ref. [26] establishes an obstacle-assisted cooperative encirclement method based on multi-agent reinforcement learning. Ref. [27] conducts decentralized encirclement training via curriculum learning. Ref. [28] utilizes a centralized training and decentralized execution framework to solve the pursuit-evasion problem. Ref. [29] proposes a reinforcement learning-based adaptive encirclement control method for obstacle environments. Ref. [30] uses virtual barriers and curriculum learning techniques during training, improving the generalization capability and convergence speed of the capture policy for ASVs with limited perception. However, the reward functions employed in these approaches often lead to insufficient learning motivation for individual agents: some agents may exploit collective rewards and cease optimizing their encirclement strategies, thereby prolonging the training process. Moreover, most existing studies focus on single-target encirclement, with limited attention paid to multi-target cooperative encirclement scenarios.

Motivated by these research gaps, this article investigates the cooperative encirclement problem for ASVs against multiple targets based on multi-agent reinforcement learning (MARL). By integrating dynamic target allocation and multi-stage reward guidance, the method utilizes a MARL framework to train ASVs for cooperative target encirclement. Simulation results for a six-on-two scenario show that the proposed control method converges faster and achieves a higher encirclement success rate than a traditional reward design. The main contributions are as follows: (1) A dynamic target allocation algorithm based on proximity principles and encirclement distance is developed to optimize the target selection process for ASVs. (2) A curriculum learning-inspired multi-stage reward function is designed, comprising search, besiegement, and capture stages, which increases the success rate of target encirclement. (3) A cooperative control solution is proposed by employing a multi-agent soft actor-critic reinforcement learning framework with long short-term memory networks, resulting in more efficient and stable ASV encirclement maneuvers.

The rest of this article is organized as follows. Section 2 formulates the encirclement problem of ASVs against multiple targets. The dynamic target assignment, cooperative encirclement reward, and reinforcement learning control are presented in Section 3. Section 4 verifies the performance of the designed control method by simulations. Finally, Section 5 concludes this article.

Notations: Throughout this article, $\|\cdot\|$ denotes the Euclidean norm of a vector, $t$ denotes the time step, $\mathbb{E}[\cdot]$ denotes the mathematical expectation, $\odot$ denotes the Hadamard product, and $\nabla$ denotes the gradient operator.

2. Problem Statement

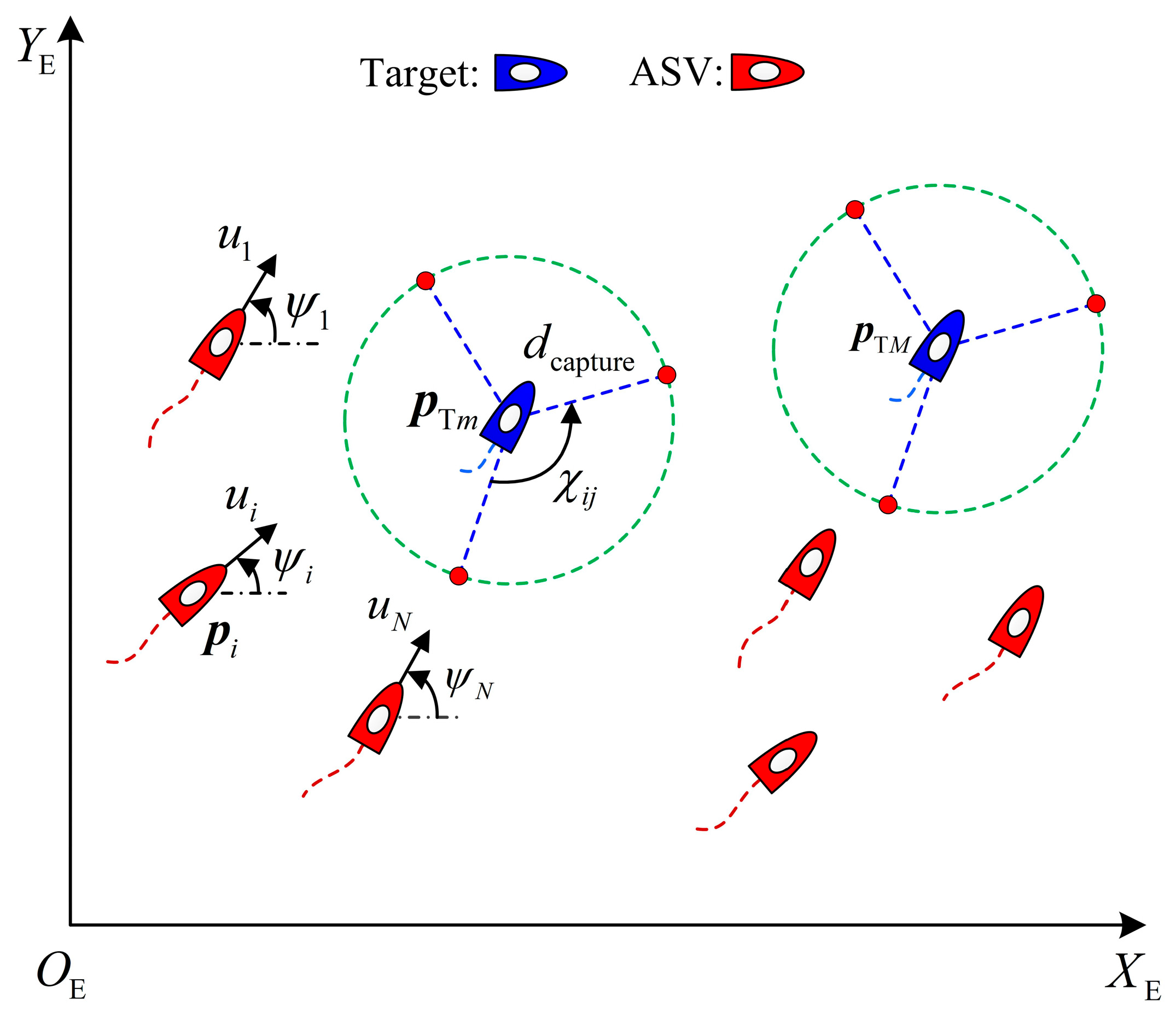

As shown in Figure 1, consider multiple high-speed ASVs and multiple low-speed targets in the horizontal plane.

denotes the position of the

ASV with

.

denotes the position of the

target with

.

denotes the encirclement radius.

denotes encirclement angles formed by adjacent ASVs.

According to Ref. [31], the kinematic and kinetic equations of the

ASV can be expressed as

where

denotes the position and heading angle information of the ASV in the earth-fixed frame.

denotes the surge, sway and yaw velocities of the ASV in the body-fixed frame.

denotes the input of the ASV including surge forces and yaw moments.

denotes the time-varying environmental disturbances.

denotes the rotation matrix from the body-fixed frame to the earth-fixed frame, which can be written as

while the inertial matrix

, the nominal dynamics

,

,

,

,

,

and

denote the fluid damping.

,

and

denote the inertial component on the three degrees of freedom.
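As an illustration of the kinematic part of the model above, the following minimal sketch assumes the standard three-degree-of-freedom convention, in which the earth-fixed pose is (x, y, ψ), the body-fixed velocity is (u, v, r), and the rotation matrix is the usual planar rotation about the vertical axis. The function names, the explicit Euler integration, and the step size are illustrative assumptions and are not taken from this article.

```python
import math


def rotation_matrix(psi):
    """Planar rotation from the body-fixed frame to the earth-fixed frame."""
    c, s = math.cos(psi), math.sin(psi)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]


def kinematics_step(eta, nu, dt):
    """One explicit Euler step of eta_dot = R(psi) * nu.

    eta = [x, y, psi] in the earth-fixed frame,
    nu  = [u, v, r]   in the body-fixed frame.
    The Euler integrator is an assumption made for illustration.
    """
    rot = rotation_matrix(eta[2])
    eta_dot = [sum(rot[i][j] * nu[j] for j in range(3)) for i in range(3)]
    return [eta[i] + dt * eta_dot[i] for i in range(3)]


# Example: a vehicle heading along the earth-fixed y axis with pure surge speed.
pose = kinematics_step([0.0, 0.0, math.pi / 2], [1.0, 0.0, 0.0], 0.1)
print(pose)
```

With a heading of π/2 and pure surge velocity, the vehicle advances along the earth-fixed y axis, which is a quick sanity check on the sign convention of the rotation matrix.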

In this article, a MARL-based cooperative encirclement control method is constructed for ASVs to guarantee that vehicles are evenly distributed around the targets with desired distances and angles under the premise of safety. To be specific, the control objective can be formalized as

where

denotes the encirclement alliance for the

target.

denotes the minimum distance between the ASV and the target.

denotes the desired encirclement angle with

being the number of ASVs in one alliance.

is a small positive constant.
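The control objective can be read as a per-alliance check on distances and angular spacing. The sketch below is one possible interpretation, under the assumptions that every ASV must lie between the minimum distance and the encirclement radius, and that the desired angle between adjacent ASVs equals 2π divided by the alliance size. The function name, argument names, and the band-shaped distance condition are hypothetical and may differ from the formal objective in this article.

```python
import math


def encirclement_achieved(asvs, target, radius, d_min, eps_angle):
    """Return True if one alliance satisfies the assumed encirclement criteria.

    asvs: list of (x, y) ASV positions; target: (x, y) target position.
    Assumed criteria: d_min <= distance <= radius for every ASV, and each
    angle between adjacent ASVs within eps_angle of 2*pi / len(asvs).
    """
    n = len(asvs)
    desired_angle = 2.0 * math.pi / n

    # Distance condition: each ASV stays inside the band around the target.
    for x, y in asvs:
        d = math.hypot(x - target[0], y - target[1])
        if not (d_min <= d <= radius):
            return False

    # Angle condition: sort bearings seen from the target, compare neighbours.
    bearings = sorted(math.atan2(y - target[1], x - target[0]) for x, y in asvs)
    for k in range(n):
        gap = (bearings[(k + 1) % n] - bearings[k]) % (2.0 * math.pi)
        if abs(gap - desired_angle) > eps_angle:
            return False
    return True


# Example: three ASVs evenly spaced on a circle of radius 9 m around (5, 5).
ring = [(5 + 9 * math.cos(a), 5 + 9 * math.sin(a))
        for a in (0.0, 2 * math.pi / 3, 4 * math.pi / 3)]
print(encirclement_achieved(ring, (5.0, 5.0), 10.0, 5.0, 1e-6))
```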

Remark 1.

In this paper, the encirclement mission of ASVs is not subject to geographical boundary constraints. To guarantee the existence of a feasible solution, we focus exclusively on high-speed ASVs and low-speed targets. Moreover, considering the minimum number of nodes required for a closed loop in the two-dimensional plane, there exists .

Remark 2.

By virtue of the artificial potential field method, the maneuvering strategy of targets based on position information is generated by

where .
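To make the idea of a potential field-based escape rule concrete, the following sketch shows one common repulsive formulation. It is not a reproduction of Equation (4): the inverse-distance weighting, the function name, and the constant-speed normalization are assumptions, and the 50 m sensing range is borrowed from the simulation setup in Section 4.

```python
import math


def escape_velocity(target, asvs, speed, sense_range=50.0):
    """Velocity command that pushes a target away from nearby ASVs.

    Each ASV inside sense_range contributes a repulsive term that points from
    the ASV to the target and is weighted by 1 / distance (an assumption).
    The summed direction is scaled to the given constant target speed.
    """
    fx = fy = 0.0
    for x, y in asvs:
        dx, dy = target[0] - x, target[1] - y
        d = math.hypot(dx, dy)
        if 0.0 < d < sense_range:
            # Unit vector (dx/d, dy/d) weighted by 1/d.
            fx += dx / (d * d)
            fy += dy / (d * d)
    norm = math.hypot(fx, fy)
    if norm == 0.0:
        return (0.0, 0.0)  # no ASV in range, or repulsions cancel exactly
    return (speed * fx / norm, speed * fy / norm)


# Example: a single ASV 10 m east of the target drives the target west.
print(escape_velocity((0.0, 0.0), [(10.0, 0.0)], speed=2.0))
```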

3. Reinforcement Learning-Based Cooperative Encirclement Control

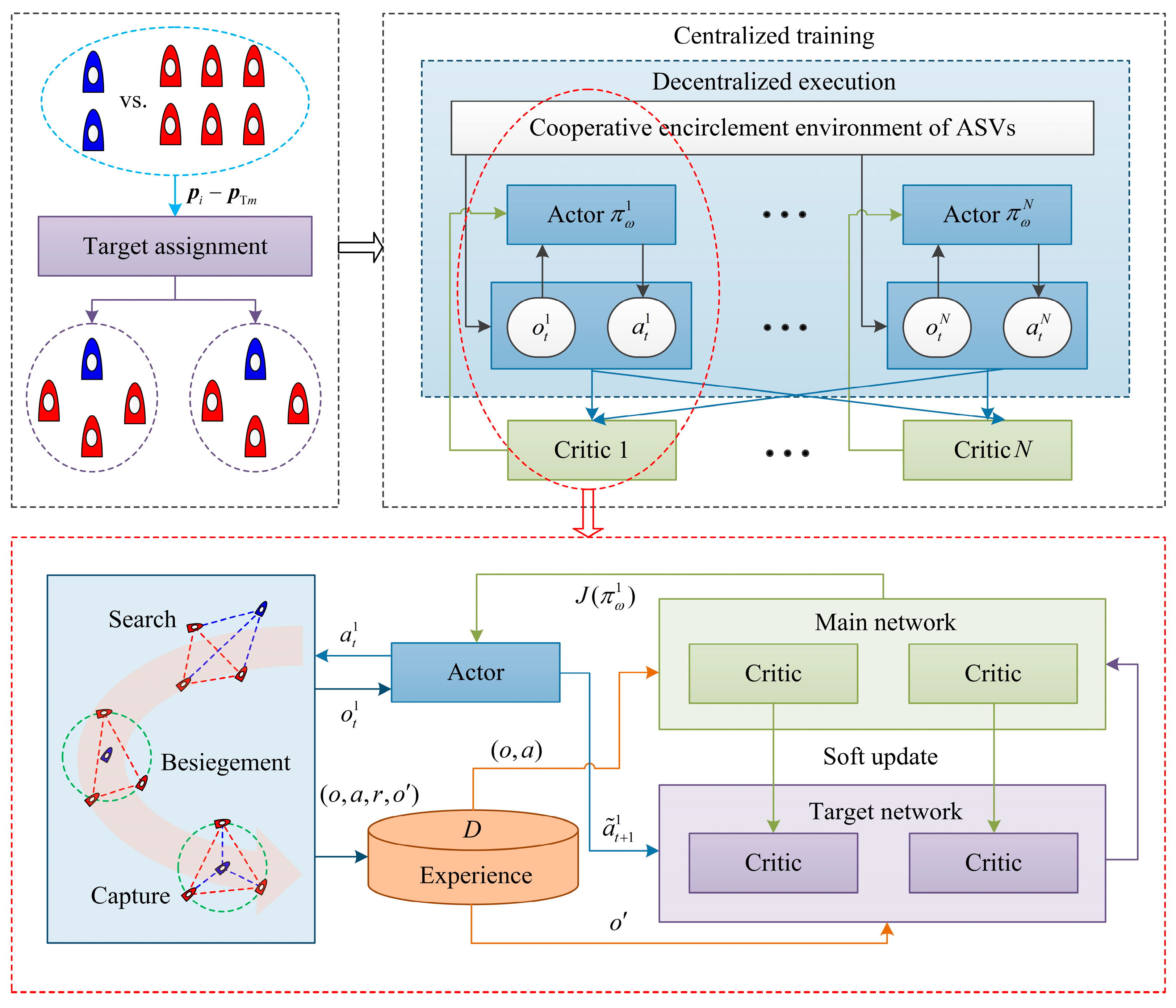

This section presents the proposed cooperative encirclement control design. The overall control architecture is illustrated in Figure 2, which includes dynamic target assignment, cooperative encirclement reward, and reinforcement learning control modules. Specifically, the assignment module assigns targets to the ASVs based on the shortest encirclement distance. The cooperative encirclement reward module guides ASVs to approach and capture the assigned targets. The multi-agent soft actor-critic reinforcement learning module trains ASVs with unknown dynamics, optimizing encirclement performance through agent–environment interactions.

3.1. Dynamic Target Assignment

In this part, a dynamic task allocation algorithm is designed using the total encirclement distance. To obtain the minimal encirclement distance at any time, the position-based objective function is designed as

with

satisfying

Note that in the cooperative encirclement process, each ASV can choose one target to encircle, while each target requires at least vehicles to form an effective encirclement. If the ASV is matched with a target, then . Otherwise, .

The optimal assignment between ASVs and targets at each time step is obtained by solving the maximization of Equation (5). The dynamic task allocation is presented in Algorithm 1, where

denotes assignment matrices that satisfy Equation (6).

| Algorithm 1: Target allocation for cooperative encirclement. |

Inputs: Target position , ASV position , the number of ASVs/targets and .

Initialization: Optimal allocation relation matrix , extreme value .

1: Calculating the Euclidean distance between ASVs and targets.

2: for do

3:

4:

5:

6: end if

7: end for

Outputs: Optimal allocation relation matrix . |
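The allocation step can be sketched as an exhaustive search over assignments, which is tractable for small teams such as the six-on-two case (64 candidates). The sketch assumes that the objective is the total ASV-to-target Euclidean distance, that a lower total is better, and that each target needs at least three ASVs. The function name, the default of three, and the brute-force search are illustrative assumptions rather than the exact procedure of Algorithm 1.

```python
import itertools
import math


def assign_targets(asvs, targets, min_per_target=3):
    """Pick the feasible assignment with the smallest total distance.

    asvs, targets: lists of (x, y) positions.
    Returns (assignment, cost), where assignment[i] is the target index
    chosen for ASV i, or (None, inf) if no feasible assignment exists.
    """
    best_cost, best = math.inf, None
    n_targets = len(targets)
    # Each ASV picks exactly one target: enumerate all combinations.
    for choice in itertools.product(range(n_targets), repeat=len(asvs)):
        counts = [choice.count(j) for j in range(n_targets)]
        if min(counts) < min_per_target:
            continue  # some target would have too few ASVs
        cost = sum(math.dist(asvs[i], targets[j]) for i, j in enumerate(choice))
        if cost < best_cost:
            best_cost, best = cost, choice
    return best, best_cost


# Example: two clusters of three ASVs, each near a different target.
vehicles = [(0, 0), (1, 0), (0, 1), (100, 0), (101, 0), (100, 1)]
goals = [(0.5, 0.5), (100.5, 0.5)]
print(assign_targets(vehicles, goals))
```

Re-running this search at every time step gives a dynamic allocation in which an ASV can switch targets when the relative geometry changes. For larger teams, an exhaustive search would need to be replaced by a more scalable solver.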

3.2. Cooperative Encirclement Reward

In this part, to overcome the learning inefficiency caused by sparse rewards, a curriculum learning-based encirclement reward function is developed for one alliance, which divides the whole process into three stages: search, besiegement, and capture, progressively enhancing the cooperative encirclement capability of ASVs.

To be specific, the condition of the search stage is defined as

where

denotes the polygonal area formed by connecting all ASVs. The search stage-induced reward is designed as

where

.
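The polygonal area formed by connecting the ASV positions can be evaluated with the shoelace formula. The short sketch below assumes that the vertices are listed in order along the polygon boundary. The function name is hypothetical, and how the area enters the search-stage condition is left to the definitions above.

```python
def polygon_area(vertices):
    """Area of a simple polygon from ordered (x, y) vertices (shoelace formula)."""
    n = len(vertices)
    twice_area = 0.0
    for k in range(n):
        x1, y1 = vertices[k]
        x2, y2 = vertices[(k + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


# Example: three ASVs forming a right triangle with legs of 4 m and 3 m.
print(polygon_area([(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]))
```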

The condition of the besiegement stage is defined as

where

,

denotes the Euclidean distance between two vehicles. The besiegement stage-induced reward is designed as

where

,

, and

.

The condition of the capture stage is defined as

where

denotes the maximum value. The capture stage-induced reward is designed as

where

and

.

By combining the rewards in Equations (8), (10), and (12), the stage-induced reward of cooperative target encirclement is written as

Moreover, to prevent collision among ASVs, the collision avoidance reward is designed as

where

and

.

Moreover, to reduce the control input chattering, the constraint reward is designed as

In this context, the cooperative target encirclement reward function for the

ASV at time step

can be computed by

3.3. Reinforcement Learning Control

Within a centralized training with decentralized execution framework, a maximum entropy-based multi-agent soft actor-critic algorithm is developed for ASVs against multiple targets. In centralized training, the critic network of each ASV evaluates the global encirclement status by incorporating observations and actions from all vehicles, which alleviates the coordination inefficiencies caused by local observations. In decentralized execution, the actor network of each ASV executes its policy independently, using only its local observations and without relying on behavioral information from other vehicles.

Firstly, the observation and action of the

ASV are defined as

which satisfies

and

according to the maneuverability of ASVs.

The actor network

of the

ASV fits the policy function

, whose outputs are the mean and standard deviation of a Gaussian distribution. The action is sampled via the reparameterization trick

where

denotes the Gaussian noise,

denotes the mean value,

denotes the standard deviation and

is the parameter of the actor network for the

ASV.
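The reparameterized sampling described above can be sketched as follows. The sketch assumes the form commonly used in soft actor-critic implementations, in which Gaussian noise is scaled element-wise by the standard deviation, shifted by the mean, and squashed by a hyperbolic tangent so that the action stays bounded. The tanh squashing and the function name are assumptions, not details confirmed by this article.

```python
import math
import random


def sample_action(mean, std, rng=random):
    """Sample a bounded action as tanh(mean + std * eps), with eps ~ N(0, 1).

    mean, std: per-dimension outputs of the actor network.
    Returns the action and the noise, so that the sample stays a
    deterministic function of (mean, std) once the noise is fixed.
    """
    action, noise = [], []
    for m, s in zip(mean, std):
        eps = rng.gauss(0.0, 1.0)
        noise.append(eps)
        action.append(math.tanh(m + s * eps))  # element-wise scale and shift
    return action, noise


# Example: a two-dimensional action, e.g. normalized surge force and yaw moment.
generator = random.Random(0)
print(sample_action([0.0, 0.5], [1.0, 0.2], generator))
```

Because the randomness is isolated in the noise term, gradients can flow through the mean and standard deviation during training, which is the purpose of the reparameterization.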

Within the reinforcement learning framework, the maximum entropy mechanism is adopted, enabling ASVs to enhance the random exploration ability of the policy while maximizing cumulative rewards. Consequently, the objective function of the actor networks is designed based on the policy entropy and the action value function

where

,

,

.

represents the smaller value generated by the two critic networks in the main network, where

represents the parameter of two critic networks.

denotes the experience replay buffer, and each set of experience data is stored in the form of tuple

.

represents the reward set of all ASVs.

denotes the observation information set at the next moment.

denotes the regularization coefficient, which is updated by minimizing the loss function

where

is the predefined entropy threshold.

The critic network of the

ASV evaluates the current encirclement status based on the global observation and action information, which is updated by minimizing the following loss function

with

where

represents the smaller value generated by two target critic networks.

denotes the parameters of the target critic network.

with

being obtained by real-time sampling, that is

, rather than from the experience replay buffer.
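For reference, in the standard soft actor-critic formulation the critic loss and its target take the form below, which matches the description above: the minimum over two target critics, an entropy term weighted by the regularization coefficient, and next actions sampled from the current policy rather than read from the buffer. The symbols follow common usage and are assumptions; they are not necessarily the notation of this article.

```latex
\begin{aligned}
L(\theta_{i,k}) &= \mathbb{E}_{(o, a, r, o') \sim \mathcal{D}}
  \left[ \left( Q_{\theta_{i,k}}(o, a) - y_i \right)^2 \right], \quad k = 1, 2, \\
y_i &= r_i + \gamma \left( \min_{k = 1, 2} Q_{\bar{\theta}_{i,k}}(o', a')
  - \alpha \log \pi_{\phi_i}(a_i' \mid o_i') \right), \quad
  a_i' \sim \pi_{\phi_i}(\cdot \mid o_i').
\end{aligned}
```

Here $\gamma$ is the discount factor, $\mathcal{D}$ the experience replay buffer, $\alpha$ the entropy regularization coefficient, and $\bar{\theta}_{i,k}$ the parameters of the target critic networks.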

The target network of the

ASV is updated using soft update

where

denotes update rate.
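The soft update can be sketched as a convex combination of the main and target network parameters. The flat list of floats stands in for the network weights, and the function name is hypothetical.

```python
def soft_update(target_params, main_params, rate):
    """Move each target parameter a fraction `rate` toward the main parameter."""
    return [rate * w + (1.0 - rate) * w_target
            for w, w_target in zip(main_params, target_params)]


# Example: with a small update rate, the target network changes slowly.
print(soft_update([0.0, 1.0], [1.0, 1.0], rate=0.1))
```

A small update rate keeps the target critic close to its previous values, which stabilizes the bootstrapped targets used in the critic loss.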

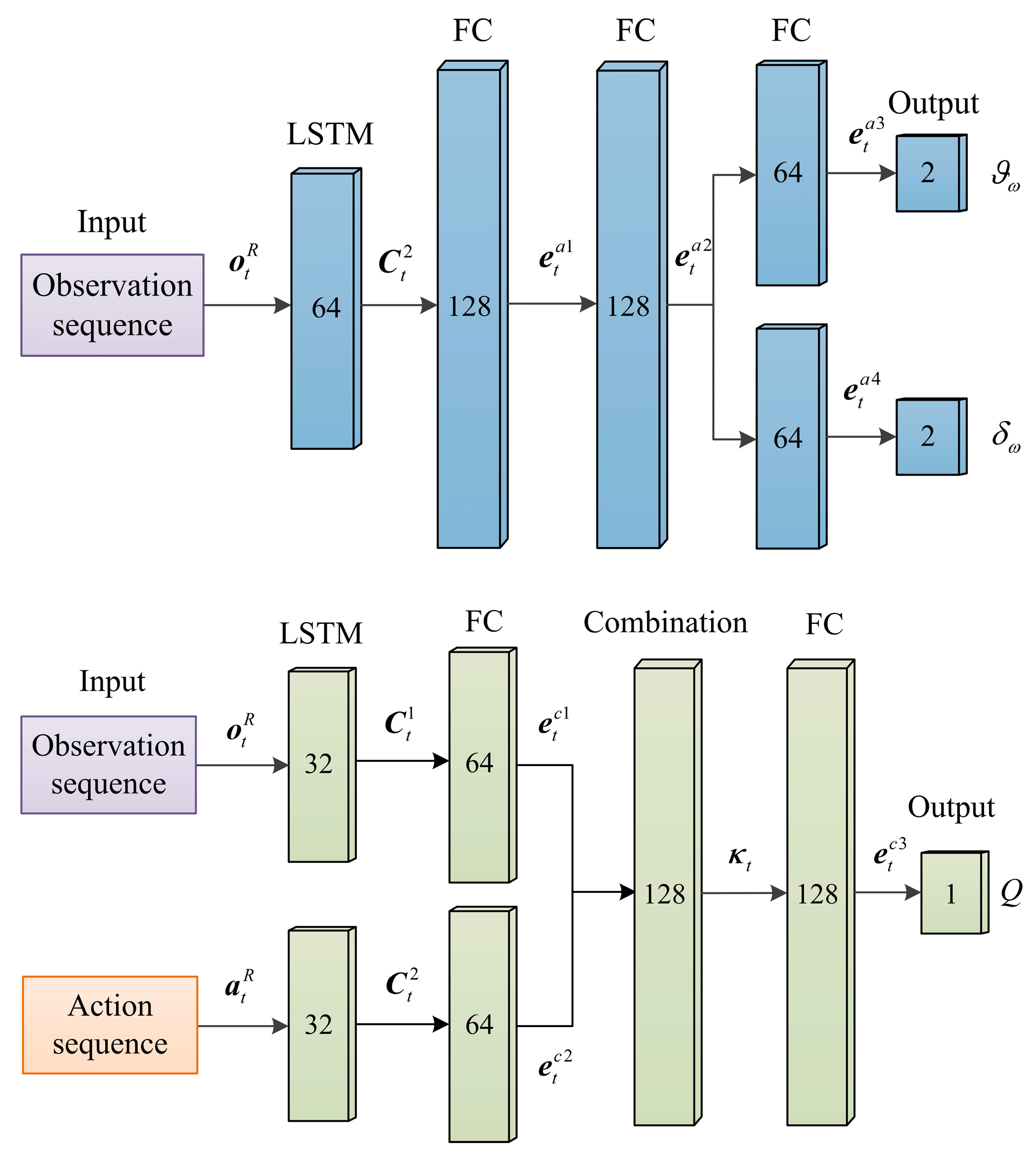

Moreover, to enhance the sequential modeling capability of the deep network structure for historical encirclement information, the actor and critic networks are constructed using long short-term memory (LSTM), as shown in Figure 3, thereby capturing the temporal dependencies in the encirclement process.

The computation process of the actor network is as follows

where

denotes the historical observation sequence.

and

denote the hidden state and cell state generated by the LSTM layer.

denotes fully connected (FC) layer.

denotes the output of the fully connected layer.

denotes output layer.

denotes learnable network weights.

The computation process of the critic network is as follows

where

denotes the historical action sequence.

and

denote the hidden state and cell state generated by the two LSTM layers.

denotes fully connected layer.

denotes the output of the fully connected layer.

denotes connection layer and

is its output.

denotes output layer.

denotes learnable network weights.

The specific training process is presented in Algorithm 2, where

denotes learning rates and satisfies

.

| Algorithm 2: Cooperative encirclement control via MARL. |

| Inputs: Actor network parameters , critic network parameters and . |

| Initialization: Target network parameters and , experience replay buffer , cooperative encirclement environment. |

| 1: for do |

| 2: for do |

| 3: Sample action from policy based on observation |

| 4: Compute reward and obtain |

| 5: Store into the experience replay buffer |

| 6: end for |

| 7: Replay mini-batch samples from |

8: Update network parameters using the gradient descent/ascent and soft update

for

for |

| 9: end for |

| Outputs: Network parameters , and . |

4. Simulation Results

In order to demonstrate the effectiveness of the proposed cooperative encirclement control method, simulation studies together with comprehensive comparisons are conducted. Principal parameters of the ASV are as follows:

,

and

. The nominal dynamics is given by

,

and

[32]. Time-varying environmental disturbances are added to the ASV model at the beginning of each episode and persist throughout the episode; they are given by

where

is a positive constant.

represents a Gaussian white noise process,

.

represents the transfer function.

The simulation scenario is as follows. Consider a networked system composed of six ASVs and two targets in the horizontal plane without geographical boundary constraints. If the distance between an ASV and a target is less than 50 m, the target adopts the maneuver strategy given by Equation (4). When the criteria in Equation (3) are met, the target encirclement mission is deemed complete.

At the beginning of each episode, the positions and heading angles of ASVs are randomly initialized within a specified range. To be specific,

,

,

. The initial positions of the two targets are

and

, and initial heading angles are both

. Let the encirclement radius be

and the minimum distance between ASVs and targets be

. After numerous simulations and parameter adjustments, the reward coefficients are set as

,

,

, and

. The training hyperparameters are listed in

Table 1.

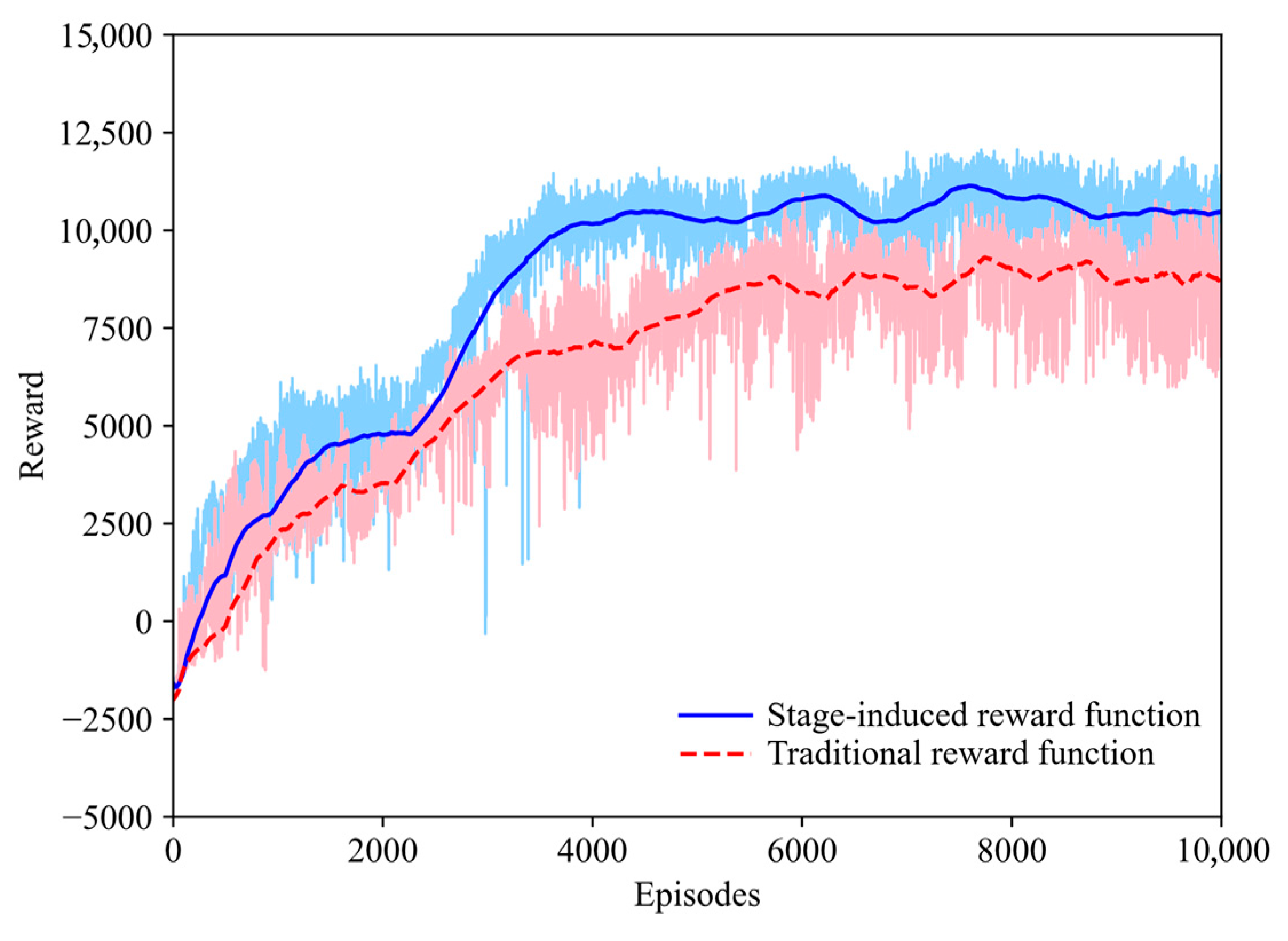

To demonstrate the superiority of the proposed stage-induced reward function, the traditional reward method in Ref. [33] is used as a baseline for comparison. Specifically, the traditional encirclement reward function is governed by

with

if

and

if

, where

and

are the encirclement angles of the

vehicle and the neighbor vehicle, respectively.

is the standard deviation of the encirclement angle. Note that a comparative evaluation of the LSTM structure can be found in our previous work [34].

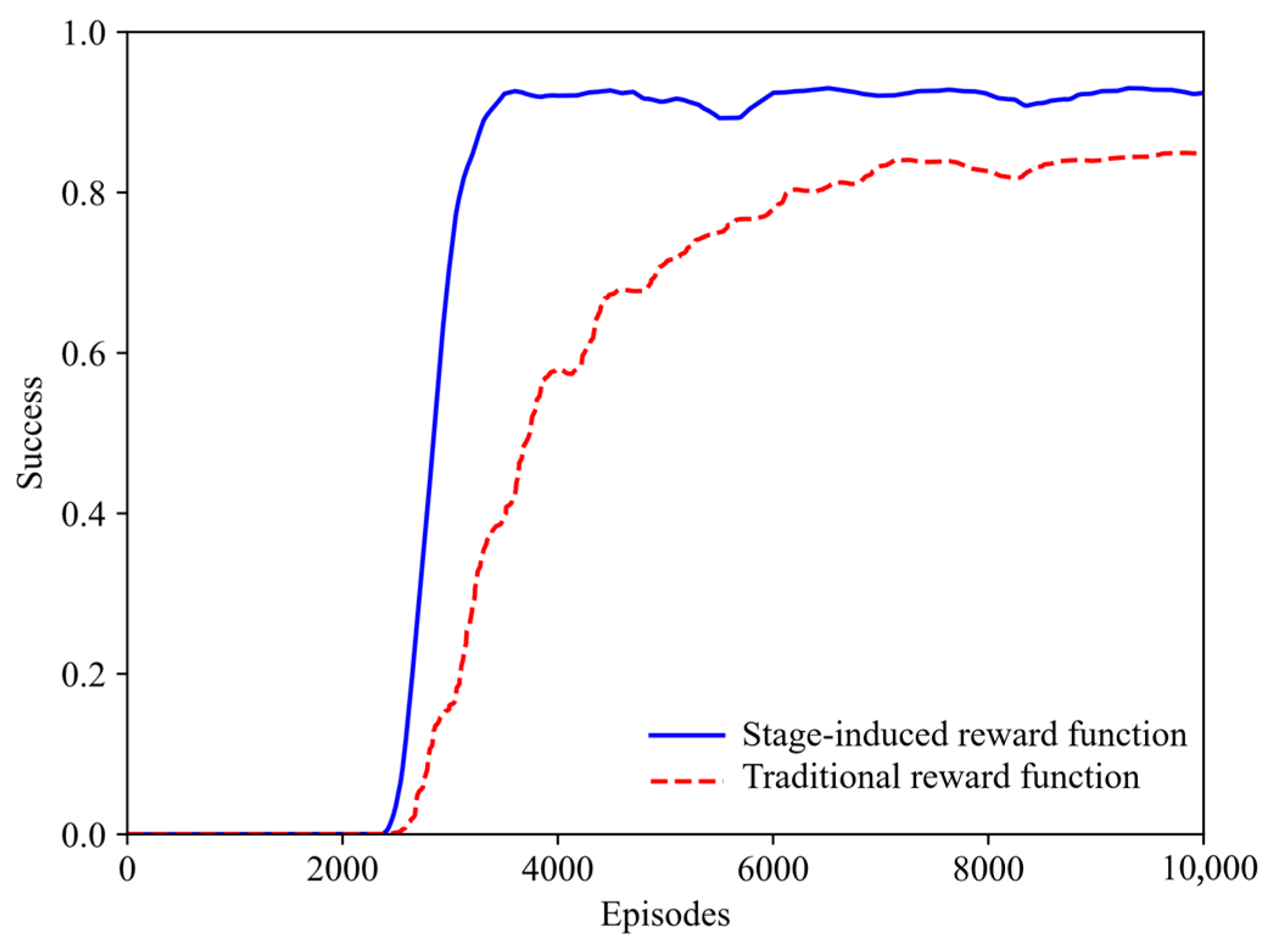

The encirclement performance is evaluated from two aspects: episode rewards and task success rates. Episode rewards of cooperative target encirclement using different reward functions are shown in Figure 4, where the shaded areas represent the standard deviation. As illustrated, all methods converge within the training steps. However, policy training using the stage-induced reward function exhibits faster convergence and higher reward values, which can be explained by the fact that ASVs using the curriculum rewards gradually learn to search, besiege, and capture, avoiding unnecessary exploration of the dynamic environment. The success rate of cooperative target encirclement using different reward functions is shown in Figure 5. During the first 2000 episodes, the success rate is close to zero, indicating that the ASVs are still exploring and have not yet developed a successful policy for cooperative target encirclement. After 2500 episodes, with the assistance of curriculum learning, the success rate achieved by the stage-induced reward function continues to increase (approaching 90%), while the success rate of the comparison method rises more slowly.

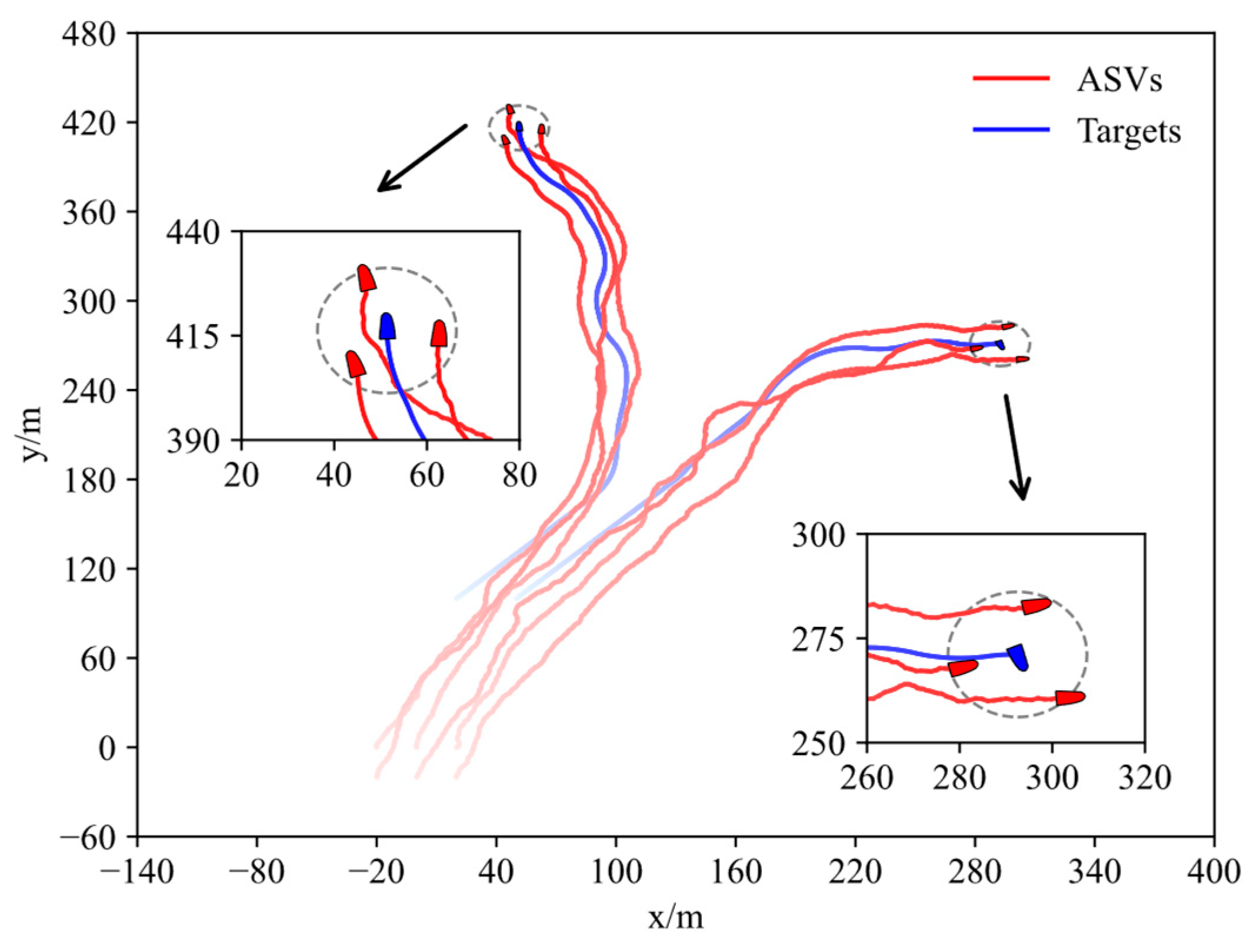

After the training is completed, we save the network parameters with the highest reward value, and conduct a six-on-two cooperative target encirclement test. The vehicles’ initial positions are set as , , , , , and . The other values are the same as those in the training process. Note that if the target meets the encirclement condition (3), the encirclement mission will be immediately terminated.

The trajectories of both ASVs and targets throughout the encirclement process are depicted in

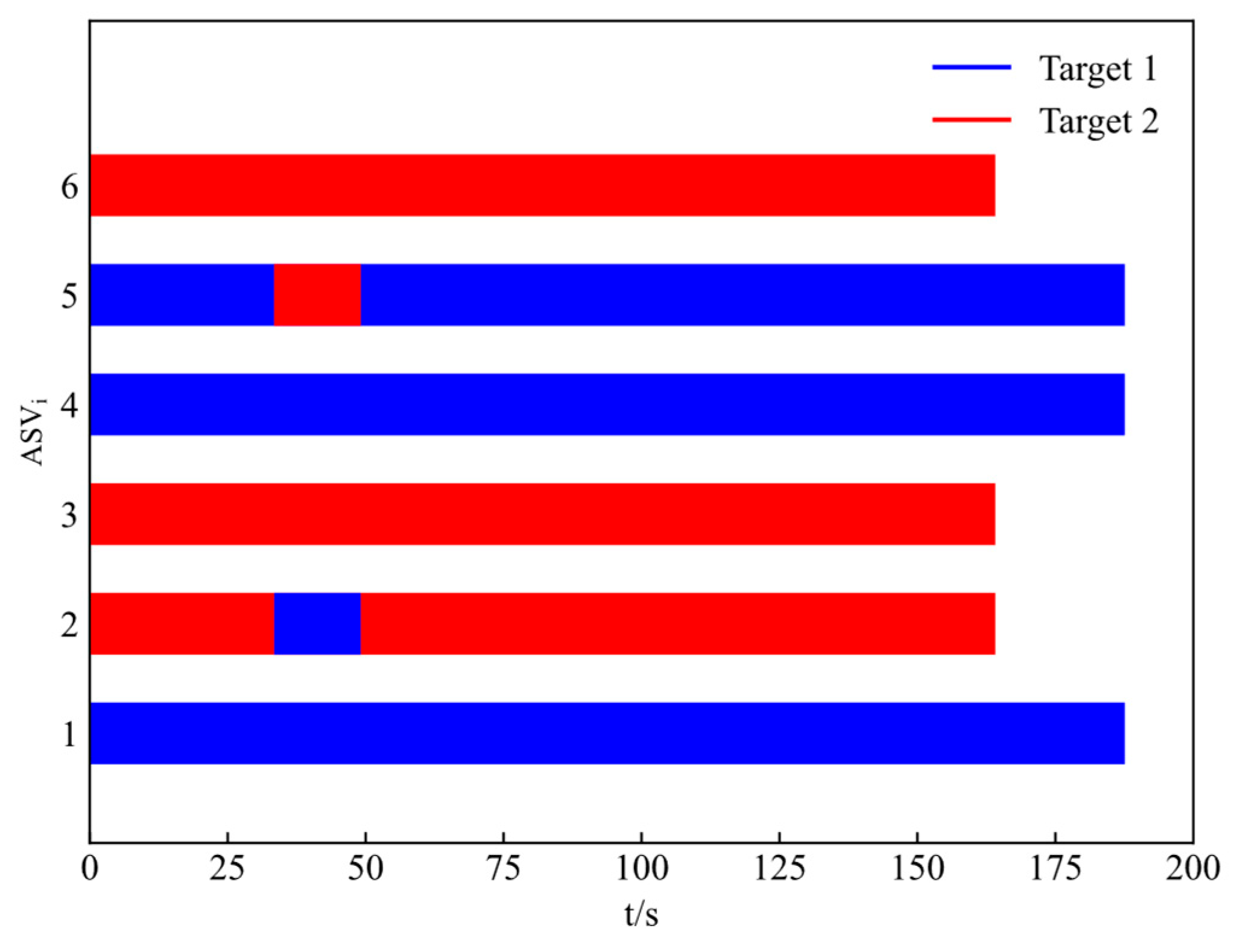

Figure 6. Notably, six vehicles set off from the initial positions and gradually capture the two moving targets, respectively, forming an encirclement circle. Worth of mention is that six vehicles are gradually divided into two encirclement alliances. Each alliance has three ASVs, and an effective encirclement for the two moving targets is achieved in the end. The target allocation result is shown in

Figure 7, where the second ASV and the fifth ASV switch the encirclement target at about 40 s. Finally, the first, fourth, and fifth ASVs encircle the first target, while the second, third, and sixth ASVs encircle the second target. In addition, different encirclement alliances have differential termination times.

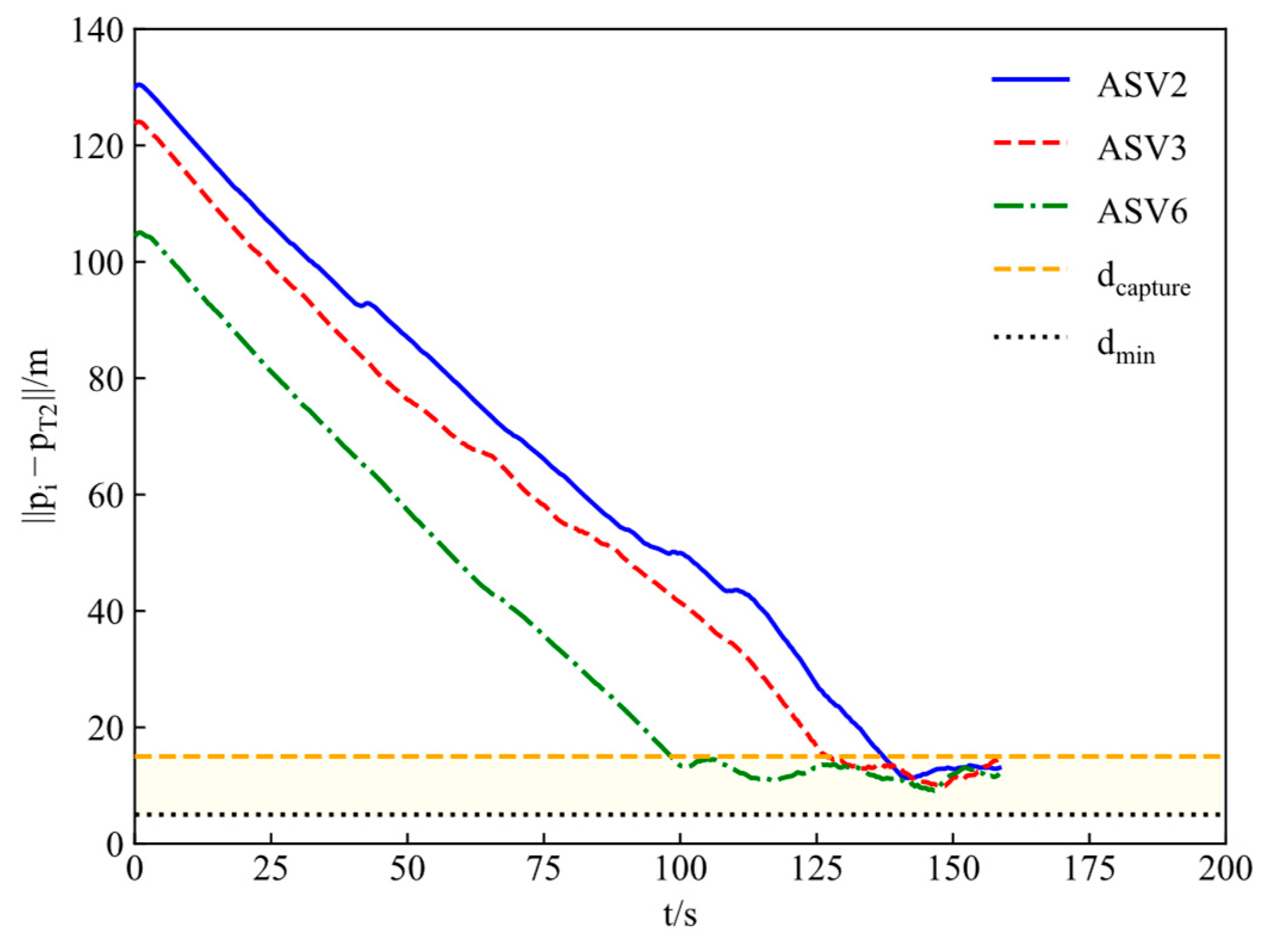

The distances between ASVs and the corresponding targets are shown in

Figure 8 and

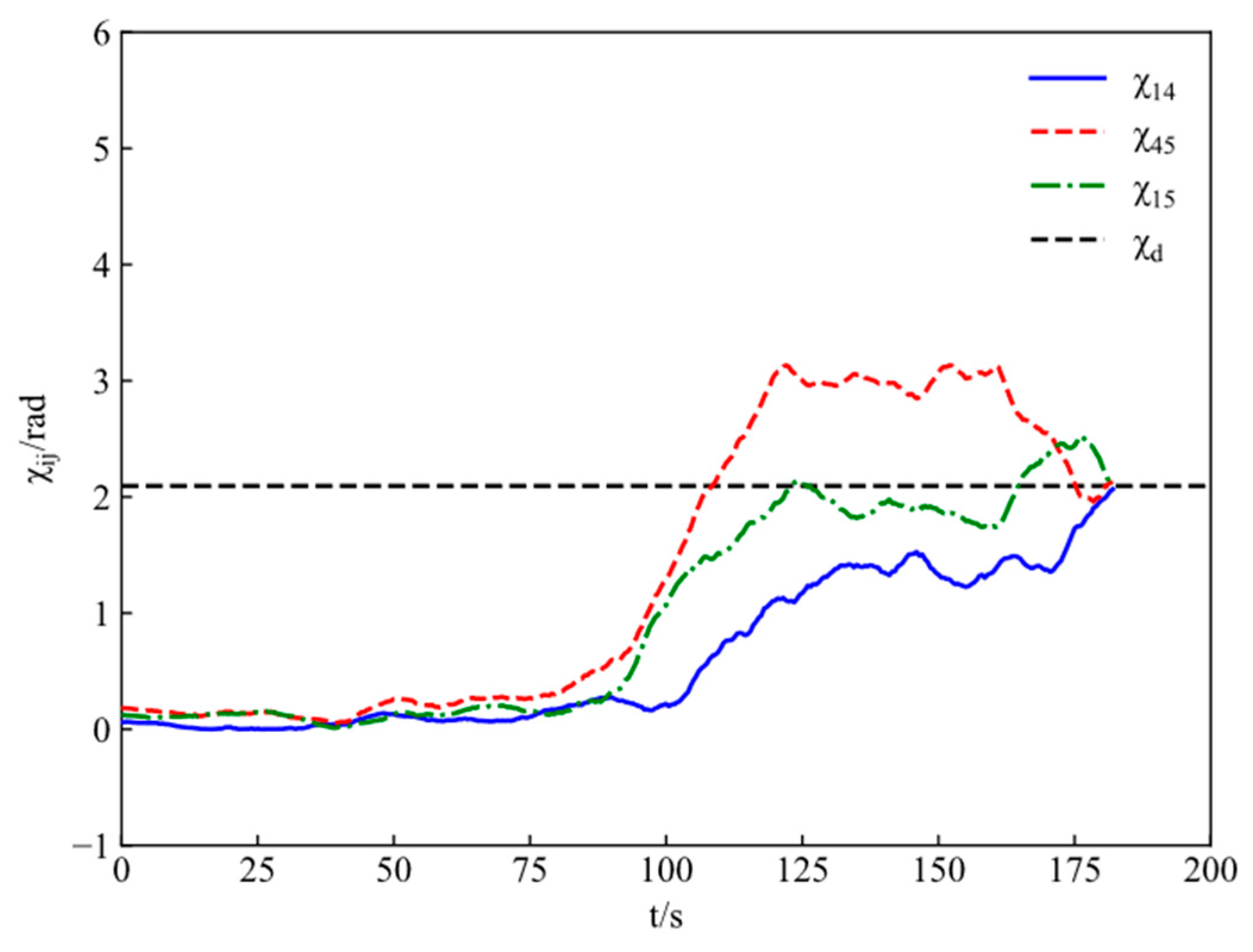

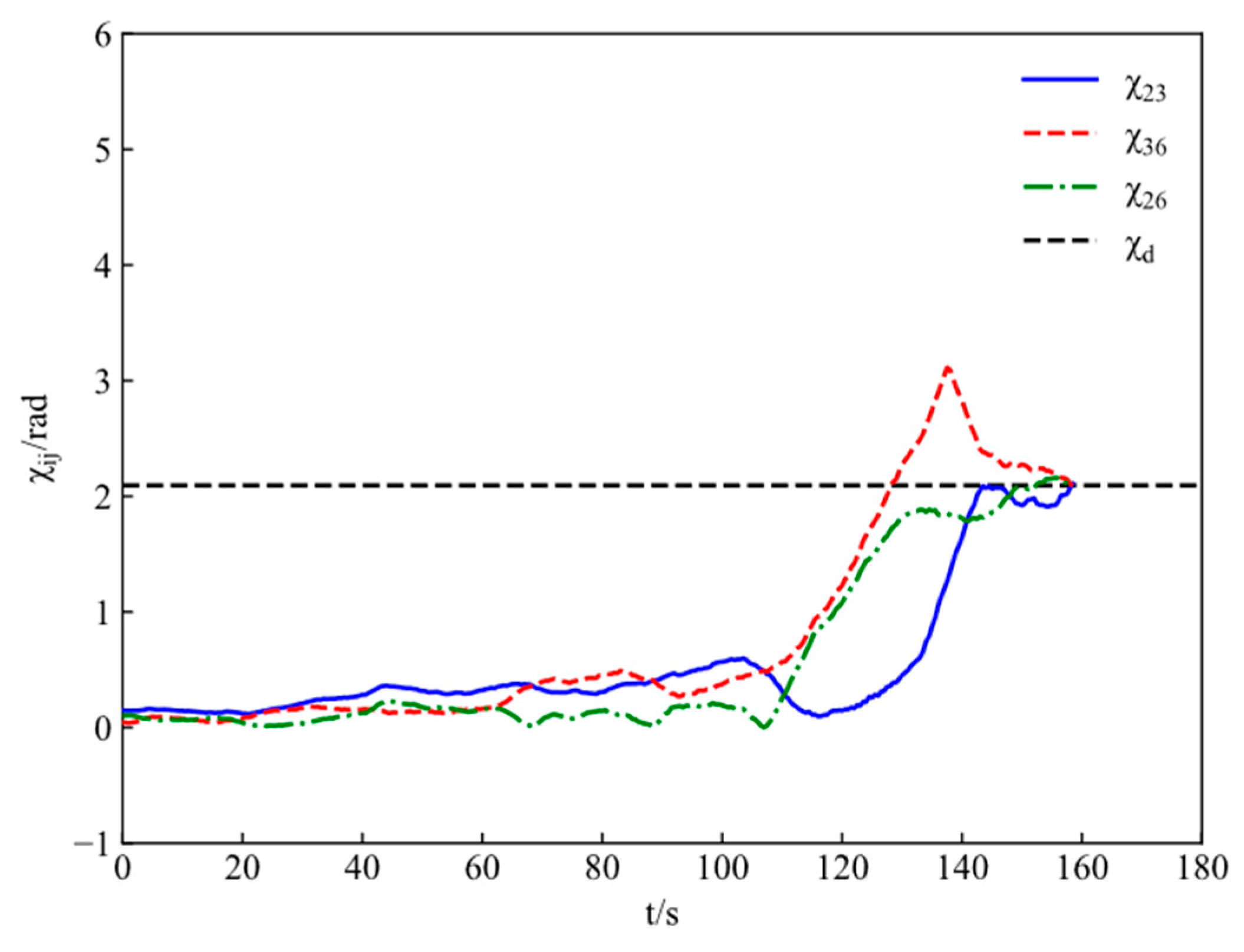

Figure 9. The encirclement angles of adjacent ASVs are shown in

Figure 10 and

Figure 11. One can conclude that both distances and angles satisfy the desired requirements. Moreover, under the incentive of curriculum rewards, ASVs first meet the distance constraint and then the angle constraint when conducting the cooperative encirclement, achieving the given control objective from the easier to the more difficult.

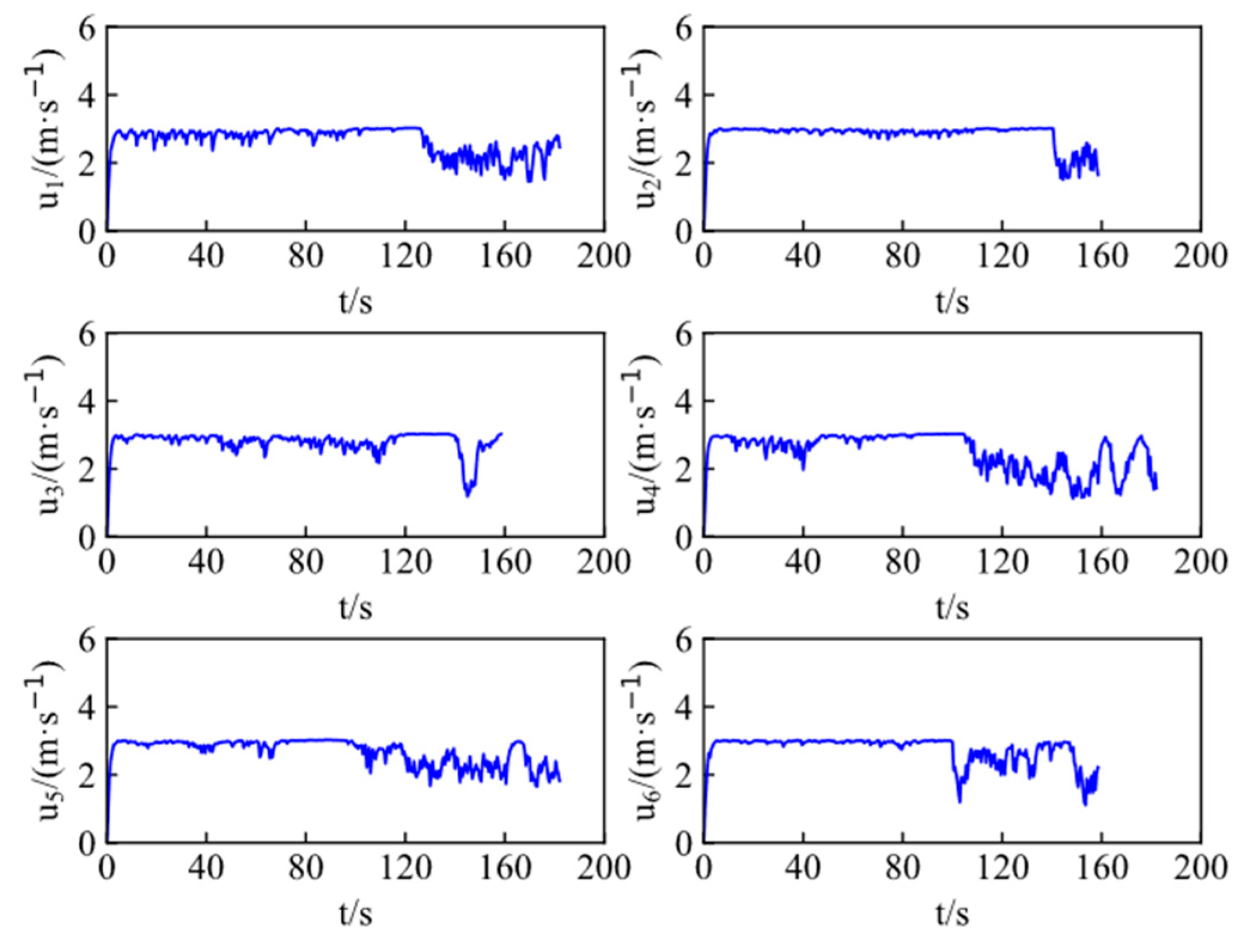

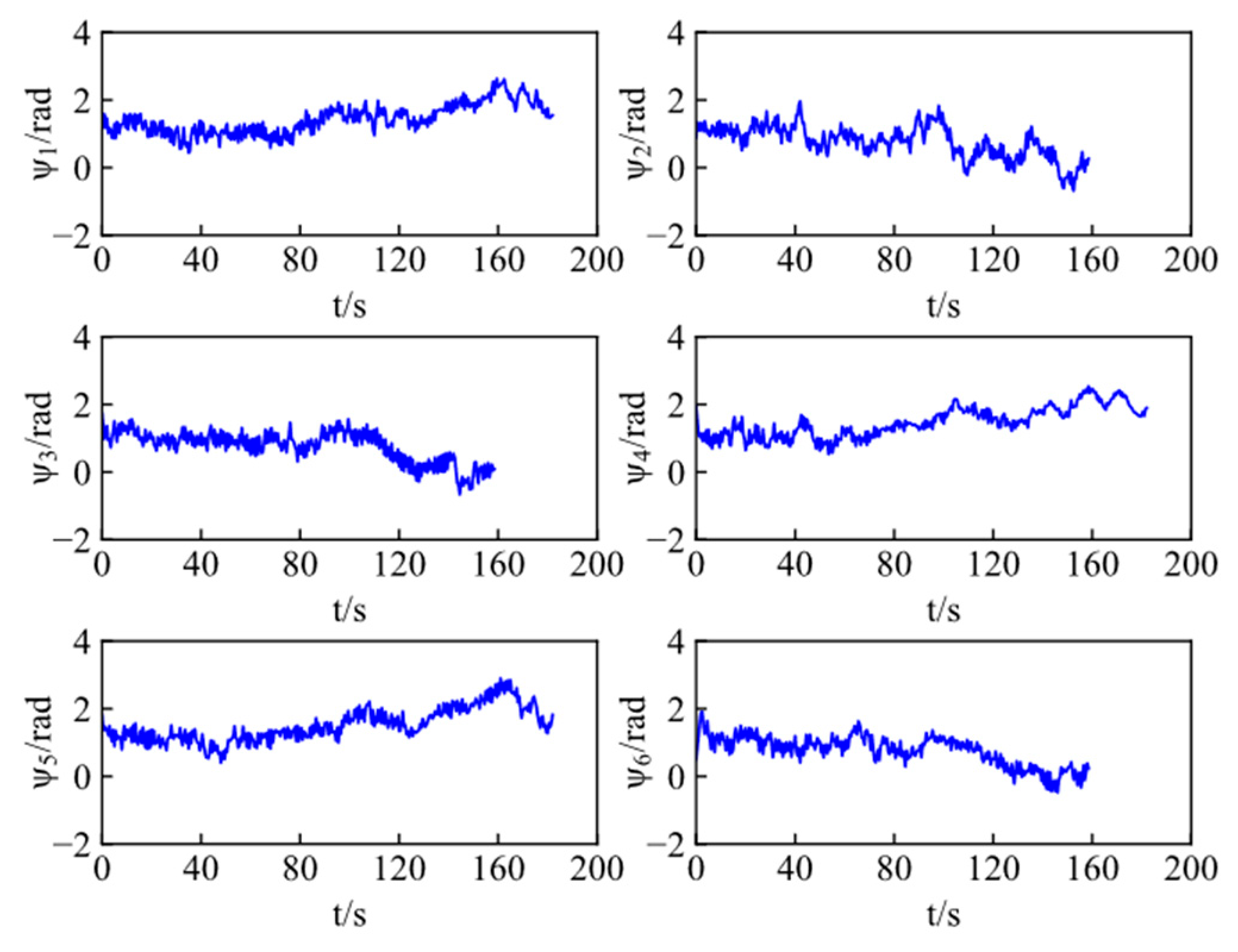

Figure 12 and Figure 13 present the velocities and heading angles of ASVs, respectively, where each vehicle adjusts its velocity and heading in real time according to the moving target.

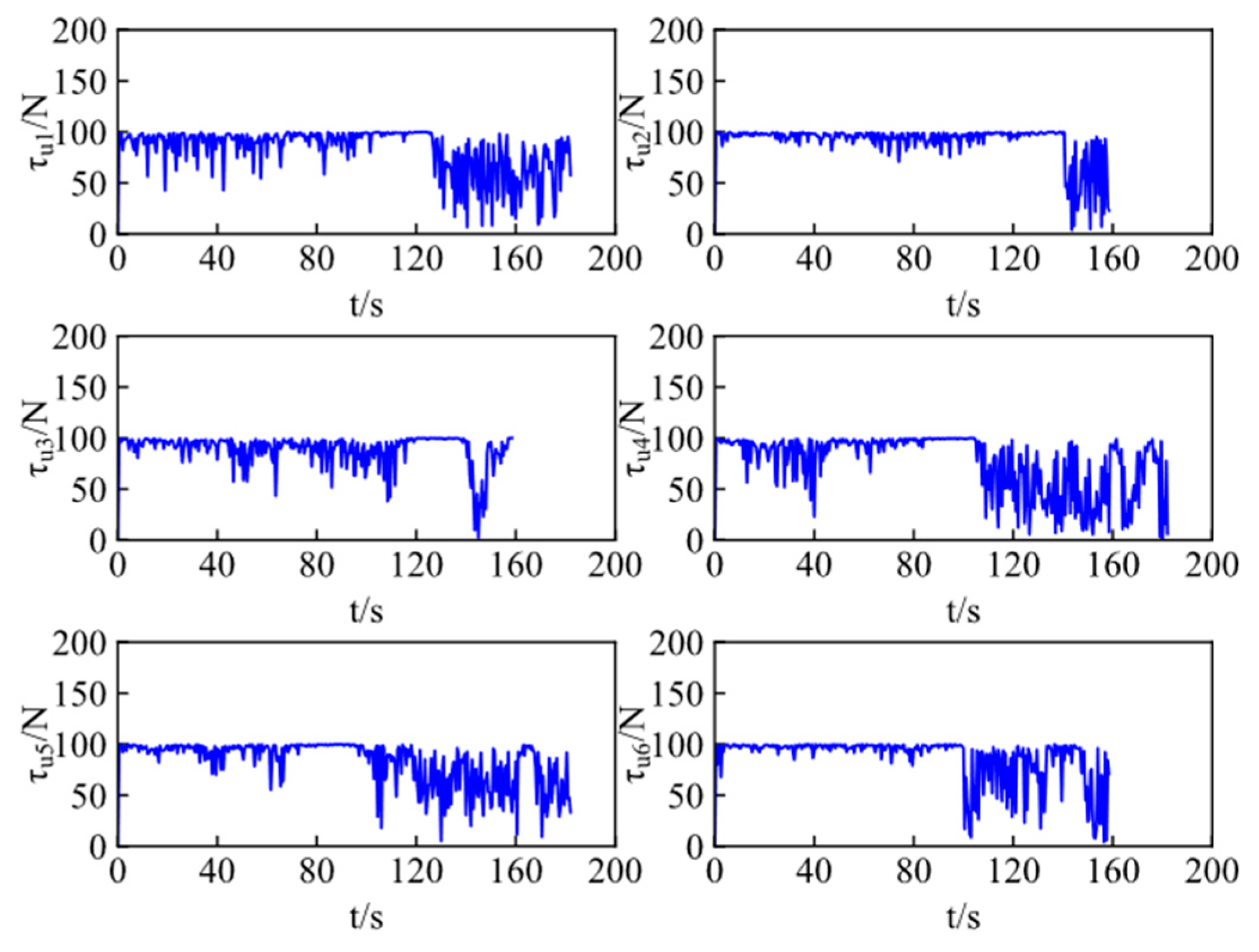

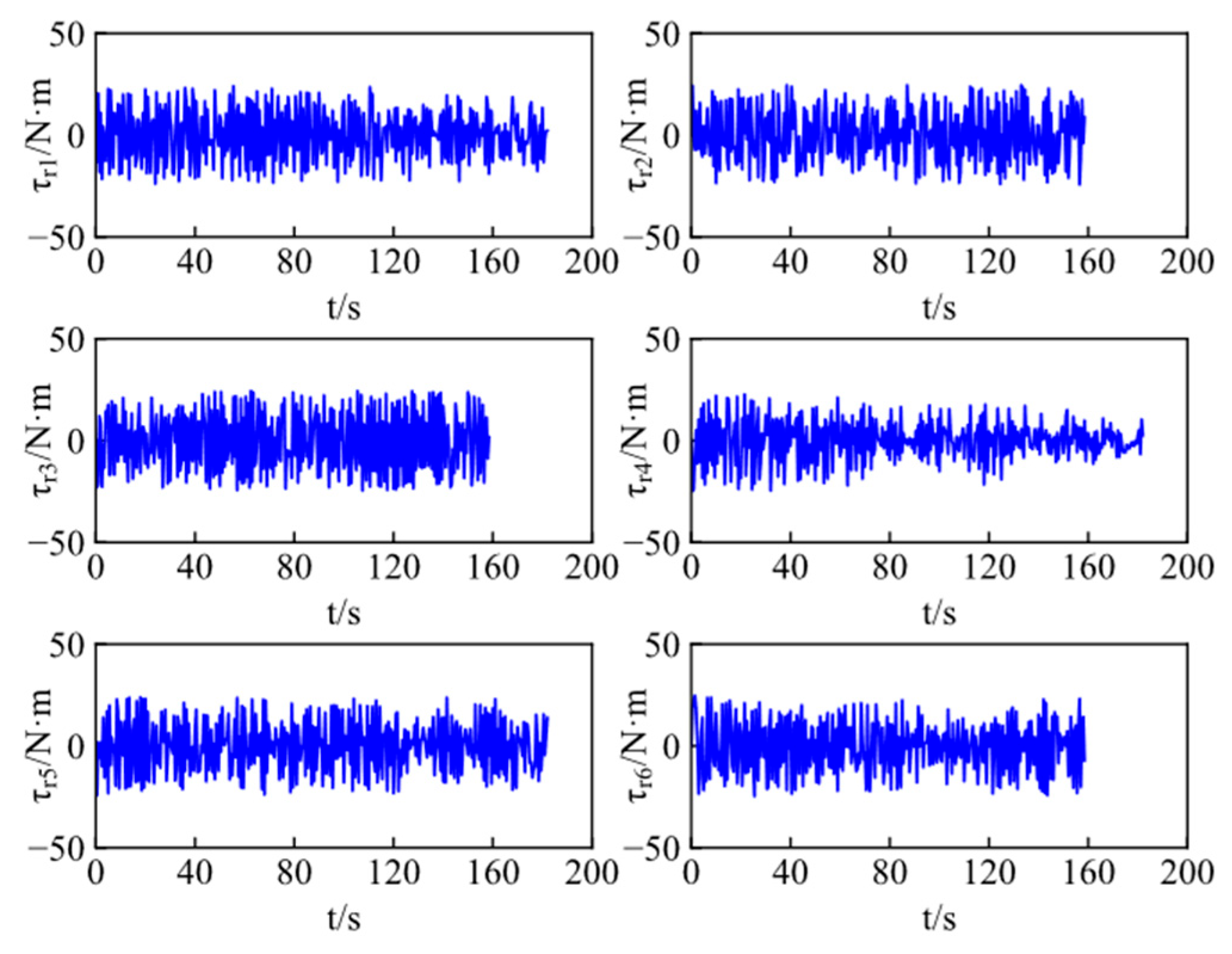

Figure 14 and Figure 15 show the control inputs of ASVs, including the surge forces and yaw moments, which are bounded and realistic from a practical viewpoint. Both exhibit minor fluctuations during cooperative target encirclement, which arise from the time-varying environmental disturbances.

5. Conclusions

This article focuses on the cooperative encirclement problem for multiple ASVs and proposes a multi-target cooperative encirclement control method using MARL. Considering practical mission requirements and operational constraints, the conditions for successful cooperative encirclement by multiple ASVs are established. A dynamic target allocation algorithm, based on the positional information of both vehicles and targets, is designed to optimize target selection in real time. To meet the demands of high-performance cooperative training, a curriculum learning-based multi-stage encirclement reward function is developed, guiding ASVs to approach targets progressively from simpler to more complex tasks. Within a centralized training and decentralized execution framework, a multi-agent soft actor-critic algorithm incorporating long short-term memory is designed to compute the control inputs of vehicles, enabling effective multi-target encirclement. Finally, simulation results validate the effectiveness and superiority of the proposed control method.

Further investigations may aim at the cooperative encirclement of ASVs against unknown targets. It is also desirable to investigate the practical implementation of the proposed method for target encirclement with hardware constraints, communication delays, and measurement errors.