1. Introduction

Vessels often operate in remote maritime areas with limited shore-based support. Reliable operation of onboard equipment is therefore essential for operational efficiency, crew safety, and protection of the marine environment. Traditionally, the reliability of marine equipment is defined as a system’s ability to perform its intended functions under specified conditions over a given period. In this study, we refine this definition as the probability of failure-free operation within a defined interval. We focus on two core elements: (1) the watchkeeping practices mandated by the IMO’s International Convention on Standards of Training, Certification, and Watchkeeping for Seafarers (STCW), and (2) the Planned Maintenance System (PMS) frameworks implemented by shipowners and management companies to maintain ongoing regulatory compliance (see [1, 2]).

Reliability assessments of marine equipment and systems guide critical decisions on ship design, equipment selection, and vendor evaluation, and they underpin emergency response planning and maintenance scheduling. Consequently, developing robust reliability assessment methodologies has become a central research focus in modern shipping, drawing significant attention from both scholars and industry practitioners.

Several Bayesian and hybrid approaches have been proposed for marine equipment reliability assessment. Toroody et al. [3] combined Bayesian inference with Markov chain Monte Carlo to estimate ship systems’ reliability. Kang et al. [4] developed a hierarchical fault detection and diagnosis framework for engine systems. Xiao et al. [5] integrated probabilistic simulation with experimental data fusion to evaluate local structural reliability in ships. Wang et al. [6] introduced a dynamic Bayesian network merging reverse diagnosis and forward reasoning for subsea mud-lifting system reliability. Bicen et al. [7] used the SOHRA (Shipboard Operation Human Reliability Analysis) model to assess operator reliability during crankshaft replacement. More recently, Chen et al. [8] proposed an algorithm for predictive health monitoring of ship equipment.

Traditional metrics such as mean time between failures (MTBF) and theoretical reliability calculations provide basic estimates for marine equipment. However, they often overlook the combined impacts of human factors, equipment condition, and harsh marine environments—where ships face unique challenges like severe weather, humidity, and salt spray, and lack the maintenance support available to land-based systems. Furthermore, manufacturer data, especially for commercial off-the-shelf (COTS) equipment, typically reflect limited definitions, further constraining reliability assessments. To address these issues, this paper proposes a reliability assessment framework based on actual deployment scenarios, which integrates multidimensional data, better matches the real operational needs of ships, and provides strong support for scientific decision-making by various stakeholders.

Moreover, a complete reliability evaluation must quantify not only the probability of failure but also the potential consequences of failure, including impact severity, repair feasibility under constrained onboard resources, repair duration, economic cost, and human–machine coordination efficiency. For example, shipowners often trade off reliability against operating costs, whereas regulatory bodies such as the Maritime Safety Administration (MSA) and Port State Control (PSC) place greater emphasis on personnel safety and environmental protection. Given the diverse priorities and evaluation criteria of these stakeholders, assessing ship equipment’s reliability is inherently a multi-attribute, group decision-making process.

In multi-attribute decision contexts, engaging multiple decision-makers (DMs) is often essential. Group decision-making (GDM) [9, 10] provides a framework for collective evaluation, yet DMs’ differing knowledge, experience, and expertise result in uneven contributions and influence. Consequently, accurately determining each DM’s weight remains a central challenge in GDM research (see [11]).

Entropy, introduced by Shannon [12], quantifies uncertainty within a system. Entropy-based weighting methods, which view the evaluation matrix as an interrelated system, have attracted significant attention [13, 14]. Wang and Wan [15] proposed a cross-entropy approach for decision-maker weights; Liu et al. [16] developed a socially influenced informational entropy method; Yang et al. [17] formulated a linear programming model; Yang and Pang [18] introduced a cross-entropy measure in a T-spherical fuzzy setting; and Yue [11, 19] and He et al. [20] advanced hybrid entropy-based frameworks. Parnell et al. [21] presented a range of entropy-based weighting methods, providing essential theoretical support for determining decision-makers’ weights. However, these existing methods may not fully address the nuances of group decision-making in marine equipment reliability assessment (see Examples 3–4 in Section 5). To address this gap, we propose a novel entropy-based weighting scheme specifically tailored to marine equipment reliability evaluation.

In 1965, Zadeh introduced the concept of fuzzy sets to systematically represent imprecise information in decision-making processes [22]. However, fuzzy sets have inherent limitations in handling higher-order uncertainty. To address this, Atanassov [23, 24] extended fuzzy sets to intuitionistic fuzzy sets (IFSs) by introducing membership ($\mu$) and non-membership ($\nu$) degrees constrained such that $\mu + \nu \le 1$, thereby enriching the modeling of vagueness. Xu [25] further developed IFS aggregation operators, but IFSs still cannot fully represent options such as abstention or refusal, as in voting scenarios. To overcome this, Cuong and Kreinovich [26] proposed picture fuzzy sets (PFSs), which incorporate positive ($\mu$), neutral ($\eta$), negative ($\nu$), and refusal ($\pi$) membership degrees, enabling the representation of four possible outcomes. Since their introduction, research on PFS similarity measures has further advanced the field of fuzzy decision-making.

Building on picture fuzzy sets (PFSs), Wei [27] first introduced a cosine-based similarity measure using PFSs’ four membership degrees for strategic decision-making. Wei and Gao [28] then proposed a normalized Dice similarity measure with an adjustable parameter for material selection. Luo and Zhang [29] developed a novel PFS similarity metric combining membership, non-membership, and neutral degrees, with applications in pattern recognition and medical diagnosis. Thao [30] conducted a systematic entropy-based evaluation of various PFS similarity measures. Luo et al. [31] presented a relationship-matrix-derived similarity measure for multi-attribute decision tasks. More recently, Ganie and Singh [32] formulated a direct measure operating on all four PFS degrees and embedded it within a new multi-attribute decision-making method.

To the best of our knowledge, picture fuzzy sets (PFSs) have not yet been applied to marine equipment reliability assessment. To fill this gap, we extend PFSs into a shipboard reliability evaluation framework. After reviewing fuzzy information representation techniques and various aggregation operators, we observe that preprocessing or normalization steps inherent to these operators can inadvertently discard critical decision information. To overcome this limitation, we develop a PFS VIKOR framework that bypasses aggregation operators and derives evaluation results directly from raw decision data.

The VIKOR (VlseKriterijumska Optimizacija I Kompromisno Resenje) method [33] is a well-established compromise ranking approach that operates without explicit aggregation operators. It accommodates varying sample sizes and heterogeneous criteria, each with its own weight, interdependence, and potentially conflicting objectives. By simultaneously balancing group utility and group regret measures, VIKOR delivers a decision framework that seeks to maximize overall satisfaction while minimizing collective regret. Moreover, VIKOR’s compromise ranking can group alternatives with similar outcomes into the same rank, aiding decision interpretability.

Marine equipment reliability assessment is highly complex. On the one hand, it requires balancing multiple conflicting objectives, uncertain information, and the potential for catastrophic failures. On the other hand, it involves integrating the perspectives of various stakeholders, including shipowners, classification societies, regulatory authorities, and onboard personnel. Common multi-criteria decision-making (MCDM) methods each have limitations in this context: the Analytic Hierarchy Process (AHP) relies on extensive pairwise comparisons, which become impractical when faced with numerous and conflicting criteria; the Technique for Order of Preference by Similarity to the Ideal Solution (TOPSIS) considers only the distances to ideal and anti-ideal solutions, neglecting “regret” under extreme scenarios and, thus, offering limited value for safety redundancy and risk warning; COPRAS, although able to integrate benefits and costs, does not account for collective regret, which may result in underestimating high-risk equipment; and the Best–Worst Method (BWM), while efficient for weight elicitation, still requires an additional ranking step and provides limited support for dynamic and complex scenarios.

To address these limitations, this study adopts and extends the VIKOR method. VIKOR’s compromise programming framework simultaneously optimizes group utility ($S$) and group regret ($R$), achieving a dynamic balance between average performance and worst-case risk, an approach well suited to the dual demands for safety and reliability in marine operations. Previous studies [34, 35, 36, 37] have further demonstrated that VIKOR maintains robust ranking stability even under indicator perturbations and fuzzy input conditions. In addition, VIKOR relies only on the normalized $S$ and $R$ vectors for ranking, allowing for seamless integration with picture fuzzy numbers and the novel projection metric proposed in this paper, without requiring additional aggregation operators.
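As a point of reference for the group utility and group regret measures described above, the following is a minimal sketch of the classical crisp VIKOR computation. It is not the extended picture fuzzy method proposed in this paper: the function name `vikor`, the sample scores, the weights, and the compromise coefficient `v = 0.5` are illustrative assumptions, and all criteria are assumed to be benefit criteria.

```python
from typing import List, Tuple


def vikor(matrix: List[List[float]], weights: List[float],
          v: float = 0.5) -> Tuple[List[float], List[float], List[float]]:
    """Classical crisp VIKOR for benefit criteria (larger is better).

    Returns the group utility S, group regret R, and compromise index Q
    for each alternative (row). Lower Q means a better compromise rank.
    """
    n_crit = len(weights)
    best = [max(row[j] for row in matrix) for j in range(n_crit)]
    worst = [min(row[j] for row in matrix) for j in range(n_crit)]

    S, R = [], []
    for row in matrix:
        # Weighted, range-normalized gap to the best value on each criterion.
        gaps = [
            weights[j] * (best[j] - row[j]) / (best[j] - worst[j])
            if best[j] != worst[j] else 0.0
            for j in range(n_crit)
        ]
        S.append(sum(gaps))  # group utility: total gap over all criteria
        R.append(max(gaps))  # group regret: largest single-criterion gap

    def scale(x: float, lo: float, hi: float) -> float:
        return (x - lo) / (hi - lo) if hi != lo else 0.0

    Q = [v * scale(s, min(S), max(S)) + (1 - v) * scale(r, min(R), max(R))
         for s, r in zip(S, R)]
    return S, R, Q


# Hypothetical scores: 3 alternatives (rows) on 3 benefit criteria (columns).
scores = [[0.9, 0.6, 0.7],
          [0.7, 0.8, 0.9],
          [0.5, 0.5, 0.6]]
weights = [0.4, 0.3, 0.3]

S, R, Q = vikor(scores, weights)
ranking = sorted(range(len(Q)), key=Q.__getitem__)  # best alternative first
print(ranking)
```

In this toy example the third alternative is worst on every criterion, so it receives both the largest utility gap and the largest regret.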

Extensive research [38] has also demonstrated that the VIKOR method exhibits strong adaptability in a wide range of fuzzy environments. In intuitionistic fuzzy contexts, several GDM models have been developed [39, 40, 41], while Pythagorean fuzzy extensions further improve decision accuracy [42, 43, 44]. Interval type-2 fuzzy environments have inspired specialized VIKOR-based frameworks [45, 46, 47, 48], and subsequent work has incorporated linguistic information models into VIKOR-GDM approaches [49, 50, 51]. Ren et al. [52] proposed a dual-hesitant fuzzy VIKOR method, and Belošević et al. [53] applied VIKOR with triangular fuzzy numbers to early-stage infrastructure evaluation. More recently, Singh and Kumar [54] introduced an integral-based VIKOR technique tailored to complex group decisions, further broadening VIKOR’s application scope.

These approaches have significantly advanced group decision-making research. However, the classical VIKOR method depends on only two reference points—namely, the positive ideal solution (maximum utility matrix) and the negative ideal solution (minimum utility matrix)—which limits its applicability. When additional reference information is available—for example, supplementary datasets, vectors, or matrices—incorporating it into the model allows us to capture richer decision characteristics and address more complex problems.

In this study, we present an extended VIKOR method that integrates multidimensional reference information (supplementary datasets, vectors, and matrices) within a picture fuzzy group decision-making framework to substantially improve the robustness and reliability of evaluation outcomes.

Traditionally, VIKOR employs Euclidean and Hamming distances [55] to assess each alternative’s proximity to the ideal solution. However, these metrics are scale-dependent: a 1 cm deviation is negligible on a 28 m basketball court but significant for an ant only a few millimeters long. This suggests that a more adaptable proximity measure may improve evaluation precision.

In this work, we replace the conventional Euclidean and Hamming distance measures in the classic VIKOR method with a novel normalized projection metric, thereby refining the decision-making process and providing a more integrated evaluation framework.

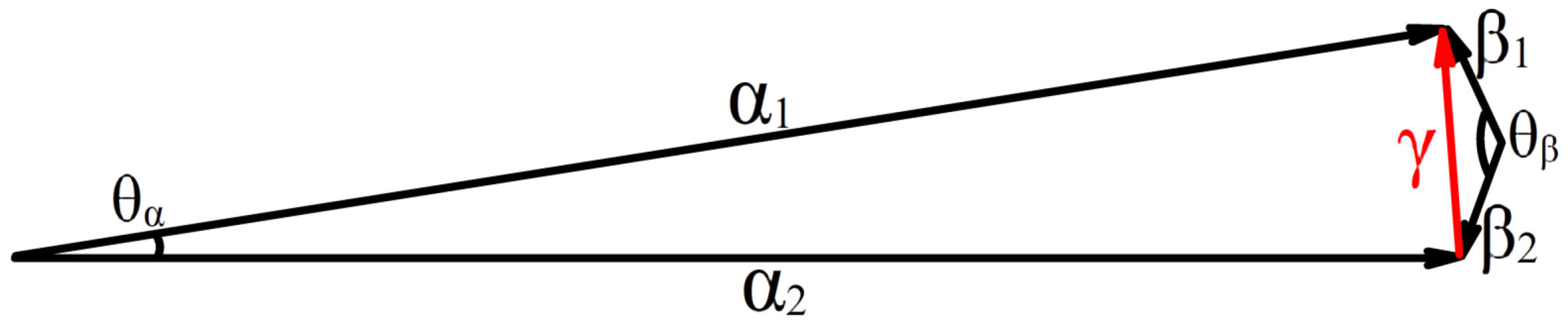

Figure 1 shows two pairs of vectors whose distances are both equal to the length of the red vector. In the first pair, the two vectors have identical lengths and form a small angle, whereas in the second pair the vectors have identical lengths but are nearly opposite, forming a large angle. Therefore, under equal distances, the vectors of the first pair are evidently more similar than those of the second. Relying solely on distance to assess vector proximity is thus incomplete; angular information should also be considered. Inner product and projection techniques provide a more comprehensive similarity measure (see [19, 56, 57]).
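The observation about Figure 1 can be checked numerically. The sketch below uses two hypothetical pairs of two-dimensional vectors (not the vectors drawn in Figure 1) that have the same Euclidean distance but very different angles, so that the cosine of the angle separates cases that distance alone cannot.

```python
import math
from typing import Sequence


def distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two vectors."""
    return math.dist(u, v)


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two nonzero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


# Hypothetical pairs: equal lengths within each pair, equal distance across pairs.
pair_small_angle = ((10.0, 1.0), (10.0, -1.0))  # long vectors, small angle
pair_opposite = ((1.0, 0.0), (-1.0, 0.0))       # short vectors, opposite directions

for name, (u, v) in (("small angle", pair_small_angle),
                     ("opposite", pair_opposite)):
    print(f"{name}: distance={distance(u, v):.3f}, cosine={cosine(u, v):.3f}")
```

Both pairs are exactly 2 units apart, yet the first pair points in almost the same direction while the second points in opposite directions.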

Group decision-making (GDM) studies that employ projection metrics follow two distinct tracks. Track 1 adopts conventional projection measures: Yue and Jia [58] extended TOPSIS to mixed intuitionistic–fuzzy data by replacing distance with the classic projection metric, Xu and Liu [59] combined interval multiplication with fuzzy preference relations, and Yue [60] used projection-based TOPSIS to rank user satisfaction and loyalty. Track 2 employs standardized projection metrics, whose values are normalized to [0, 1]. Because this range facilitates cross-criterion comparison, interest in standardized projection has increased. Yue and Jia [61] applied it to partner selection with real and interval data, while Jia [62] used it to assess offshore equipment’s reliability. To remedy the incompatibility of traditional projection within TOPSIS-based GDM, Yue [56, 63, 64] proposed several standardized projection frameworks for software quality evaluation.

A gap remains in that existing projection metrics can be unsuitable in a picture fuzzy setting (see Example 1, Section 4). To close this gap, we have developed a new projection metric that is fully compatible with picture fuzzy sets and yields more reasonable similarity assessments.

Therefore, this study aims to propose a group decision-making framework that integrates picture fuzzy sets, an extended VIKOR method, a standardized projection metric, and an innovative entropy-based weighting scheme, so as to enhance the scientific rigor and objectivity of marine equipment reliability assessment.

The main contributions of this paper are as follows:

- (1) A novel standardized projection metric formula is introduced, enabling the direct assessment of proximity between two picture fuzzy matrices. This development effectively addresses the shortcomings of both classical and standardized projection metrics identified in the existing literature.

- (2) To enhance decision-making accuracy, a weighted geometric mean operator is introduced to calculate the average decision matrix for all participants. Additionally, a method is proposed for determining expert weights based on the proximity between individual decision matrices and the collective average matrix, thus reducing subjectivity and mitigating the dominance of influential authorities.

- (3) By incorporating the projection measure of two picture fuzzy numbers, a complementary matrix is constructed for the maximum evaluation matrix. This results in the generation of three reference matrices for the VIKOR-based group decision-making (GDM) model: one positive and two negative reference matrices for calculating the group utility measure, and two negative reference matrices for determining the group regret measure. By representing the evaluation information as picture fuzzy numbers, we present a novel multi-attribute group decision-making (MAGDM) approach based on the developed VIKOR method, detailing the specific steps for its implementation.

- (4) The proposed extended VIKOR-based GDM method is applied to the reliability assessment of marine equipment. Furthermore, this study introduces new ranking methods tailored for both static and dynamic environments. The effectiveness and advantages of the proposed model are validated through comparisons with existing methods using the same original decision data.

The structure of this paper is as follows: After the Introduction in Section 1, Section 2 details the data collection methods and questionnaire design. Section 3 outlines the fundamental concepts underpinning the study. Section 4 introduces a standardized projection measure. Section 5 describes the weighting method for decision-makers. Section 6 presents the proposed approach for assessing marine equipment reliability. Section 7 applies the method to a case study, demonstrating its effectiveness and providing a discussion based on experimental analysis. Finally, Section 8 concludes the paper and highlights directions for future research.

2. Data Collection

Assessment activities such as tenure reviews and research grant selections are central to professional and scientific practice. As decision problems grow more complex, a single decision-maker (DM) can rarely weigh every relevant factor [65, 66, 67]; therefore, major choices, ranging from national policy to corporate strategy, now rely on group decision-making. Voting is long-standing and simple, yet each ballot covers only one issue and, thus, conveys limited information. Questionnaires yield richer input, but lengthy item lists or handwritten responses often deter experts due to the extra time and effort involved. In marine equipment reliability assessment, balancing information richness with respondent burden is essential for accurate and efficient data gathering. In light of the limitations of both voting and questionnaire methods, this section presents our data collection and survey design, which are based on the following three principles:

- (1) User Engagement: A high level of participant involvement captures the diverse concerns of ship equipment stakeholders, improves evaluation accuracy, broadens sample representativeness and, consequently, enhances assessment reliability.

- (2) Questionnaire Clarity: A user-friendly, well-organized survey enables respondents to answer quickly and accurately; such clarity encourages participation, minimizes response bias, and increases data reliability.

- (3) Streamlined Data Processing: Efficient post-survey handling avoids information distortion or loss, preserves data integrity, and maximizes analytical value.

To improve evaluation efficiency, we adopt a hybrid approach that combines the simplicity of voting with the information richness of a questionnaire. The questionnaire is the main data collection instrument, and each response is encoded symbolically to provide a structured input format. The complete questionnaire layout appears in Table 1.

Each equipment alternative occupies one row of the questionnaire matrix, and each reliability attribute occupies one column with three symbolic options. Respondents note their judgment by selecting the appropriate symbol in a cell: (✓) denotes agreement, meaning that the attribute is present or strong; (◯) indicates neutrality or uncertainty; and (✗) signifies disagreement, meaning that the attribute is absent or weak. Blank cells, multiple selections, or unreturned forms are treated as non-responses.
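The symbolic encoding described above can be illustrated with a short sketch that tallies the responses collected for a single questionnaire cell (one alternative and one attribute). The data structure (a set of marks per respondent, or `None` for an unreturned form), the function names, and the sample responses are assumptions made for illustration only.

```python
from collections import Counter
from typing import Dict, Iterable, Optional, Set

AGREE, NEUTRAL, DISAGREE = "✓", "◯", "✗"
CATEGORY = {AGREE: "agree", NEUTRAL: "neutral", DISAGREE: "disagree"}


def classify(marks: Optional[Set[str]]) -> str:
    """Map one respondent's marks in a cell to a response category.

    Blank cells, multiple selections, and unreturned forms (None)
    are all treated as non-responses.
    """
    if not marks or len(marks) != 1:
        return "non-response"
    (mark,) = marks
    return CATEGORY.get(mark, "non-response")


def tally(cell_responses: Iterable[Optional[Set[str]]]) -> Dict[str, int]:
    """Count the responses of all respondents for a single cell."""
    counts = Counter(classify(marks) for marks in cell_responses)
    return {key: counts.get(key, 0)
            for key in ("agree", "neutral", "disagree", "non-response")}


# Hypothetical responses of seven respondents for one cell.
responses = [{"✓"}, {"✓"}, {"◯"}, {"✗"}, set(), {"✓", "✗"}, None]
cell_counts = tally(responses)
print(cell_counts)
```

Keeping the non-response count alongside the three symbolic categories preserves the information needed later to express the cell as a picture fuzzy number.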

The evaluation experiment was conducted onboard an educational training vessel. This vessel has an overall length of 116 m, a maximum beam of 18 m, a molded depth of 8.35 m, a design draught of 5.4 m, a gross tonnage of 6106, and an endurance of approximately 10,000 nmi under unrestricted navigation certification. It is equipped with an advanced integrated bridge system, an AUTO-O class engine-room automation system, a controllable-pitch propeller, shaft and diesel generators, a bow thruster, and a retractable fin stabilizer. Although it lacks cargo holds, the vessel can accommodate up to 196 cadets per voyage, making it an ideal platform for immersive maritime training.

To quantify the group’s preference, we computed a voting percentage, defined as the ratio of “agree” votes to the total number of valid ballots for each option. This metric provides a clear numerical measure of consensus. The complete voting results were presented in picture fuzzy set (PFS) format, which captures agreement, neutrality, and disagreement simultaneously. Using PFSs preserves the full pattern of responses while retaining all information relevant to subsequent decision analysis.

Each decision-maker (DM) assigns a single symbol (✓ for agree, ◯ for neutral, or ✗ for disagree) to every attribute–alternative pair, thereby recording individual evaluations in a concise and uniform manner. The resulting symbolic matrix captures personal preferences while avoiding linguistic ambiguity. Aggregating these entries integrates the panel’s expertise, provides an equitable portrayal of collective priorities, and mitigates bias arising from overly optimistic or pessimistic assessments. Because the three symbols coexist in one structure, the matrix transparently shows the degree of consensus. Full details of the questionnaire design and data collection protocol can be found in Section 7.1.

3. Preliminaries

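Definition 1. A picture fuzzy set (PFS) [26] $A$ on a universal set $X$ is defined as $A = \{\langle x, \mu_A(x), \eta_A(x), \nu_A(x) \rangle \mid x \in X\}$, where $\mu_A(x)$ represents the degree of positive membership, $\eta_A(x)$ denotes the degree of neutrality, and $\nu_A(x)$ corresponds to the degree of negative membership. These parameters satisfy the conditions $\mu_A(x), \eta_A(x), \nu_A(x) \in [0, 1]$, and $\mu_A(x) + \eta_A(x) + \nu_A(x) \le 1$ for all $x \in X$. Additionally, the refusal degree is determined as $\pi_A(x) = 1 - \mu_A(x) - \eta_A(x) - \nu_A(x)$. Notably, when $\eta_A(x) = 0$, the PFS reduces to an intuitionistic fuzzy set (IFS) [23]. Furthermore, if both $\eta_A(x) = 0$ and $\pi_A(x) = 0$, the PFS further simplifies to a conventional fuzzy set [68].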

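For convenience, Wei [69] called $\alpha = (\mu_\alpha, \eta_\alpha, \nu_\alpha)$ a picture fuzzy number (PFN), where $\mu_\alpha, \eta_\alpha, \nu_\alpha \in [0, 1]$, $\mu_\alpha + \eta_\alpha + \nu_\alpha \le 1$, and $\pi_\alpha = 1 - \mu_\alpha - \eta_\alpha - \nu_\alpha$.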

A picture fuzzy number (PFN) can be illustrated through the following practical scenario: In a democratic election held at a polling station, the council distributes 100 ballots for a specific candidate. The voting outcomes are classified into four distinct categories: “vote for” (30 ballots), “abstain” (50 ballots), “vote against” (10 ballots), and “refusal to vote” (10 ballots). The “abstain” category represents blank ballots, signifying that the voter neither endorses nor opposes the candidate while still participating in the election. Meanwhile, the “refusal to vote” category includes both invalid ballots and cases where voters opted not to cast a vote. These voting results can be mathematically represented as the PFN $\alpha = (0.3, 0.5, 0.1)$, where the refusal degree is determined as follows: $\pi_\alpha = 1 - 0.3 - 0.5 - 0.1 = 0.1$.
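The ballot example can be reproduced with a few lines of code. The sketch below defines a small `PFN` class that enforces the constraints of a picture fuzzy number and derives the refusal degree; the class, its field names, and the helper `pfn_from_ballots` are illustrative assumptions rather than part of the original formulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PFN:
    """Picture fuzzy number: positive, neutral, and negative degrees."""
    mu: float   # positive membership ("vote for")
    eta: float  # neutral membership ("abstain")
    nu: float   # negative membership ("vote against")

    def __post_init__(self) -> None:
        for degree in (self.mu, self.eta, self.nu):
            if not 0.0 <= degree <= 1.0:
                raise ValueError("each degree must lie in [0, 1]")
        if self.mu + self.eta + self.nu > 1.0 + 1e-12:
            raise ValueError("the three degrees must sum to at most 1")

    @property
    def refusal(self) -> float:
        """Refusal degree: the share not covered by the three degrees."""
        return 1.0 - self.mu - self.eta - self.nu


def pfn_from_ballots(votes_for: int, abstain: int, against: int,
                     distributed: int) -> PFN:
    """Convert ballot counts into a PFN relative to all distributed ballots."""
    if distributed <= 0:
        raise ValueError("at least one ballot must be distributed")
    return PFN(votes_for / distributed, abstain / distributed,
               against / distributed)


candidate = pfn_from_ballots(votes_for=30, abstain=50, against=10,
                             distributed=100)
print(candidate, round(candidate.refusal, 10))
```

Dividing by the number of distributed ballots, rather than by the number of valid ballots, is what makes invalid and uncast ballots appear as the refusal degree.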

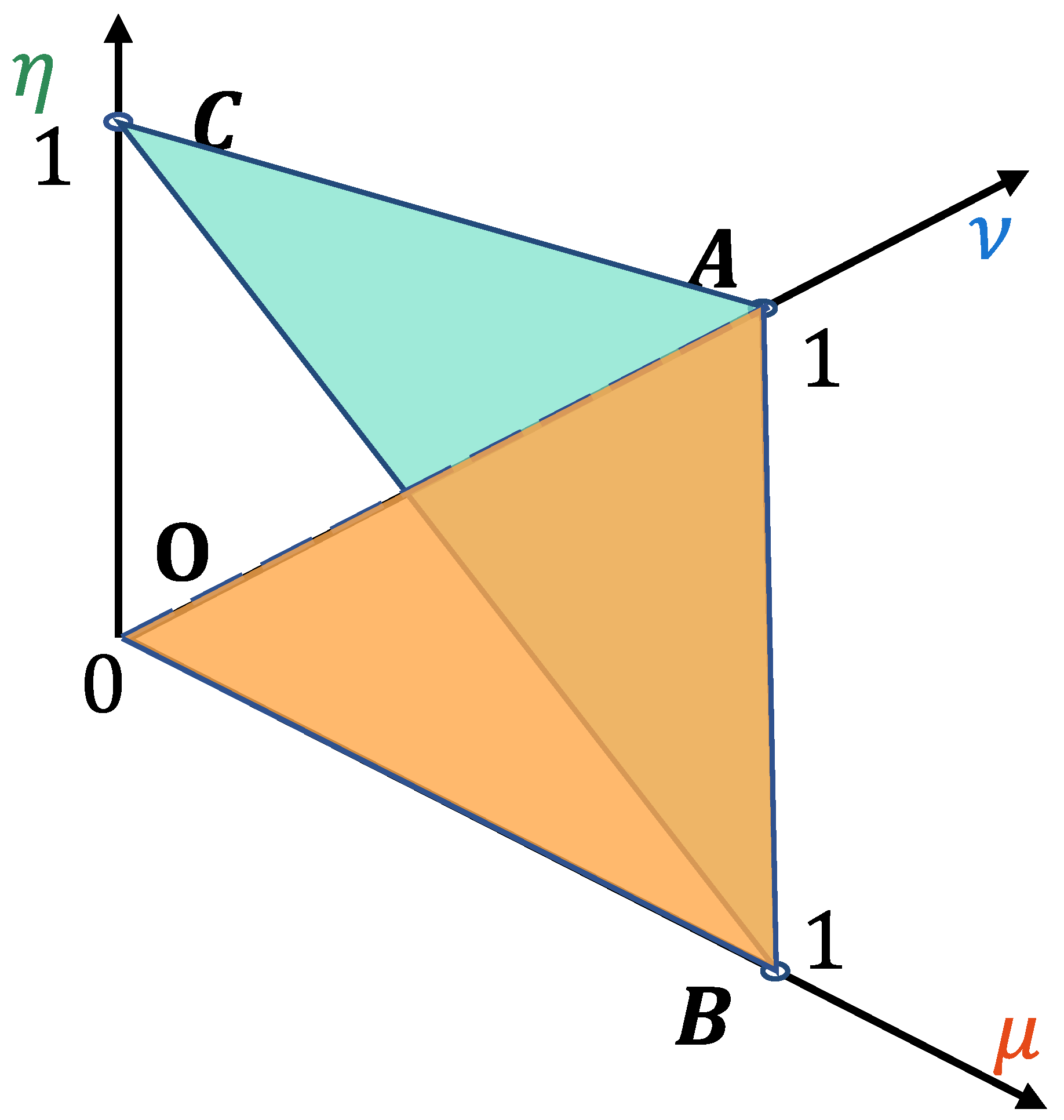

In addition to its numerical formulation, a PFS can be intuitively visualized in the three-dimensional space spanned by $\mu$, $\eta$, and $\nu$. Under the above constraints, its feasible domain forms a tetrahedron OABC whose vertices are the origin $O$, the unit points $A$ and $B$ on the $\mu$- and $\nu$-axes, and the unit point $C$ on the $\eta$-axis. The triangular face OAB (where $\eta = 0$) corresponds to the conventional intuitionistic fuzzy set (IFS); when a nonzero neutral membership degree ($\eta > 0$) is allowed, the feasible region expands to the entire tetrahedron, as illustrated in Figure 2.

Some operations on PFNs are introduced [69, 70] as follows:

Definition 2. Let and be two PFNs, then

- 1.

;

- 2.

;

- 3.

;

- 4.

.

Definition 3. Let $M = (\alpha_{ij})_{m \times n}$ be a matrix, where each element $\alpha_{ij}$ is a picture fuzzy number (PFN). In this context, $M$ is referred to as a picture fuzzy matrix (PFM). Notably, when either $m = 1$ or $n = 1$, $M$ is designated as a picture fuzzy vector (PFV).

For the aggregation of PFNs, Zhang et al. [70] introduced the following operator:

Definition 4. Let $\alpha = (\alpha_1, \alpha_2, \ldots, \alpha_n)$ be a picture fuzzy vector (PFV), where each element is defined as $\alpha_j = (\mu_j, \eta_j, \nu_j)$ for $j = 1, 2, \ldots, n$. Suppose $w = (w_1, w_2, \ldots, w_n)$ denotes the corresponding weight vector, satisfying $w_j \ge 0$ and $\sum_{j=1}^{n} w_j = 1$. Then, the picture fuzzy weighted averaging (PFWA) operator is formulated as follows:

Additionally, the PFWA operator can be equivalently expressed as .

In particular, if the weight vector is uniformly distributed, i.e., $w_j = 1/n$ for every $j$, the PFWA operator simplifies to the picture fuzzy arithmetic averaging operator, given by

Moreover, Peng et al. [71] introduced a seven-point linguistic rating scale, which establishes a mapping between linguistic variables, corresponding numerical values, and picture fuzzy numbers (PFNs), as summarized in Table 2.
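To make the PFWA operator of Definition 4 concrete, the following sketch implements a form of picture fuzzy weighted averaging that is widely used in the PFS literature: the positive degree is aggregated as one minus a weighted product of complements, and the neutral and negative degrees as weighted products. This specific form is an assumption and may differ from the expression given by Zhang et al. [70]; the function name `pfwa` and the sample values are hypothetical.

```python
import math
from typing import Sequence, Tuple

PFN = Tuple[float, float, float]  # (positive, neutral, negative) degrees


def pfwa(pfns: Sequence[PFN], weights: Sequence[float]) -> PFN:
    """Picture fuzzy weighted averaging (assumed, commonly used form).

    mu  = 1 - prod((1 - mu_j) ** w_j)
    eta = prod(eta_j ** w_j)
    nu  = prod(nu_j ** w_j)
    """
    if len(pfns) != len(weights):
        raise ValueError("one weight is required per PFN")
    if any(w < 0 for w in weights) or not math.isclose(sum(weights), 1.0):
        raise ValueError("weights must be non-negative and sum to 1")
    mu = 1.0 - math.prod((1.0 - p[0]) ** w for p, w in zip(pfns, weights))
    eta = math.prod(p[1] ** w for p, w in zip(pfns, weights))
    nu = math.prod(p[2] ** w for p, w in zip(pfns, weights))
    return (mu, eta, nu)


# Hypothetical ratings of one attribute by two decision-makers.
ratings = [(0.6, 0.1, 0.2), (0.2, 0.3, 0.4)]
uniform = [0.5, 0.5]  # uniform weights give the arithmetic averaging case

aggregated = pfwa(ratings, uniform)
print(tuple(round(x, 4) for x in aggregated))
```

Under this form, aggregating identical PFNs returns the same PFN, which is a convenient sanity check for any implementation.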

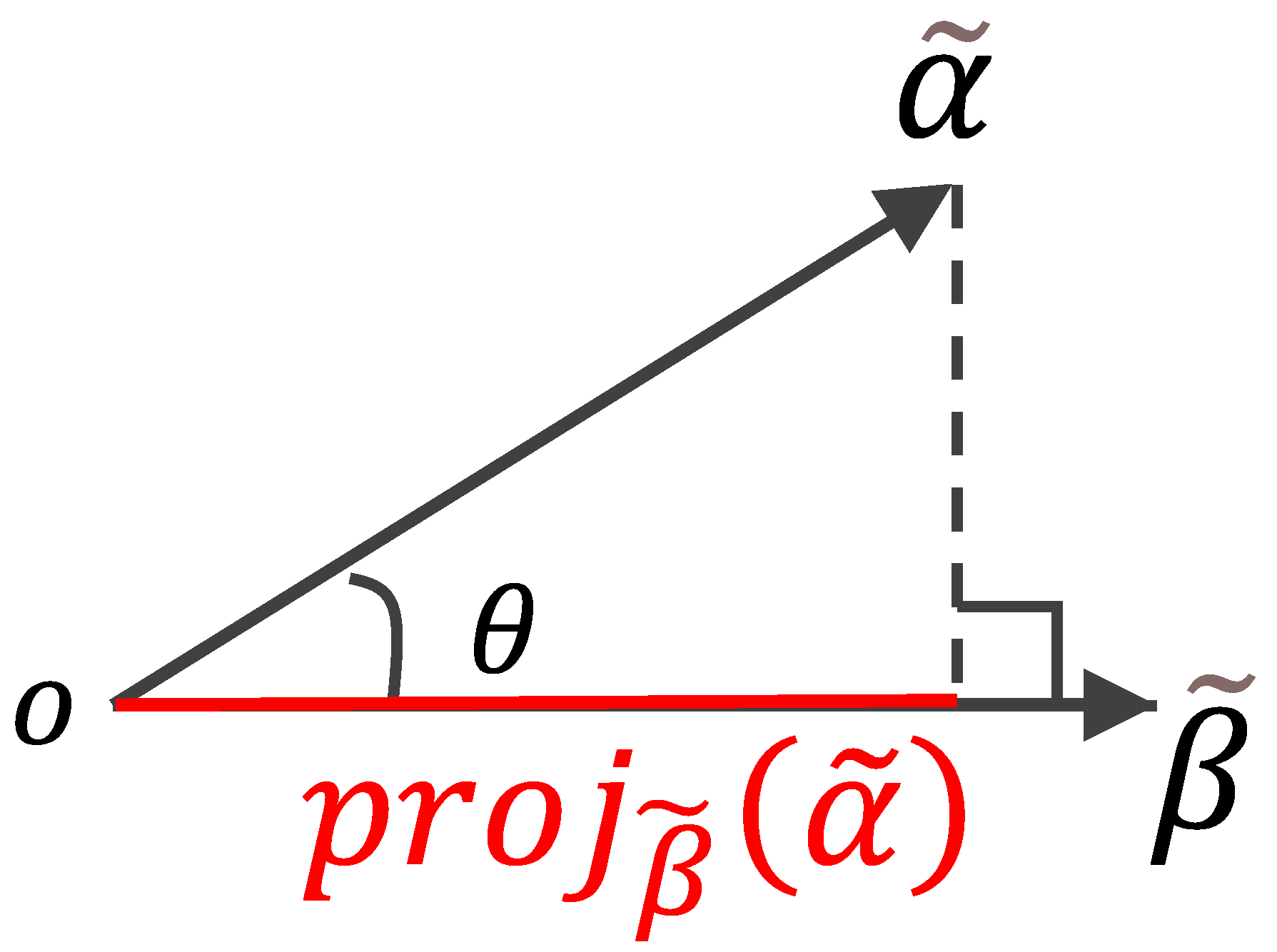

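The projection measure plays a pivotal role in evaluating the degree of similarity between different evaluation matrices. In this regard, Wei et al. [72] introduced a projection-based approach to quantify the proximity of one picture fuzzy vector (PFV) to another, as outlined in Definition 5:

Definition 5. Let $\alpha = (\alpha_1, \alpha_2, \ldots, \alpha_n)$ and $\beta = (\beta_1, \beta_2, \ldots, \beta_n)$ represent two picture fuzzy vectors (PFVs), where $\alpha_j = (\mu_{\alpha_j}, \eta_{\alpha_j}, \nu_{\alpha_j})$ and $\beta_j = (\mu_{\beta_j}, \eta_{\beta_j}, \nu_{\beta_j})$ for $j = 1, 2, \ldots, n$. The inner product between $\alpha$ and $\beta$ is defined as follows:

$$\alpha\beta = \sum_{j=1}^{n} \left( \mu_{\alpha_j}\mu_{\beta_j} + \eta_{\alpha_j}\eta_{\beta_j} + \nu_{\alpha_j}\nu_{\beta_j} + \pi_{\alpha_j}\pi_{\beta_j} \right), \tag{4}$$

where $\pi_{\alpha_j} = 1 - \mu_{\alpha_j} - \eta_{\alpha_j} - \nu_{\alpha_j}$ and $\pi_{\beta_j} = 1 - \mu_{\beta_j} - \eta_{\beta_j} - \nu_{\beta_j}$, for $j = 1, 2, \ldots, n$.

The modulus of $\alpha$ is given by

$$|\alpha| = \sqrt{\sum_{j=1}^{n} \left( \mu_{\alpha_j}^2 + \eta_{\alpha_j}^2 + \nu_{\alpha_j}^2 + \pi_{\alpha_j}^2 \right)}. \tag{5}$$

The projection of $\alpha$ onto $\beta$, denoted as $\mathrm{Prj}_{\beta}(\alpha)$, is defined as follows:

$$\mathrm{Prj}_{\beta}(\alpha) = \frac{\alpha\beta}{|\beta|}. \tag{6}$$

Figure 3 presents a graphical representation of the projection of $\alpha$ onto $\beta$.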

The projection measure, as described in Equation (6), is intended to satisfy the following criterion:

Criterion 1. The greater the value of , the closer is to .

4. Presented Projection Measure

As discussed in the Introduction, projection serves as a fundamental and widely adopted measure for assessing relationships between fuzzy vectors. However, this study uncovers a limitation in its existing formulation, demonstrating that the projection in Equation (6) does not consistently adhere to Criterion 1. The following example illustrates this inconsistency:

Example 1. Let and be two picture fuzzy vectors (PFVs). It is evident that . The projection measure is expected to satisfy the condition . However, applying Equation (4) results in , while the norms calculated using Equation (5) are and . By substituting these values into Equation (6), the projection values are obtained as and . This outcome, where , contradicts the expected relationship, highlighting a fundamental inconsistency in the existing projection formulation.

From Example 1, a series of pertinent research questions (RQs) arise:

RQ 1. The proximity of one picture fuzzy vector (PFV) to another, as determined by Equation (6), does not always adhere to Criterion 1.

RQ 2. The projection defined in Equation (6) is not a normalized measure. Specifically, it fails to consistently satisfy the condition . For instance, in Example 1, , which exceeds the upper bound of 1.

RQ 3. The projections and , as derived from Equation (6), may not be equal. In Example 1, for instance, based on Equation (4), and according to Equation (5). Consequently, and as calculated from Equation (6), clearly indicating that .
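The three issues above can be reproduced numerically with a classical projection of the form "inner product divided by the modulus of the target vector". The sketch below assumes that the inner product and modulus include the refusal degree as a fourth component; this assumption, the function names, and the two vectors are illustrative and are not the data of Example 1.

```python
import math
from typing import List, Sequence, Tuple

PFN = Tuple[float, float, float]  # (positive, neutral, negative) degrees


def expand(vec: Sequence[PFN]) -> List[Tuple[float, ...]]:
    """Append the refusal degree 1 - mu - eta - nu to every PFN."""
    return [(*p, 1.0 - sum(p)) for p in vec]


def inner(a: Sequence[PFN], b: Sequence[PFN]) -> float:
    """Inner product over all four degrees (assumed form)."""
    return sum(x * y
               for p, q in zip(expand(a), expand(b))
               for x, y in zip(p, q))


def modulus(a: Sequence[PFN]) -> float:
    return math.sqrt(inner(a, a))


def projection(a: Sequence[PFN], onto: Sequence[PFN]) -> float:
    """Classical, non-normalized projection of a onto the target vector."""
    return inner(a, onto) / modulus(onto)


# Hypothetical picture fuzzy vectors with two components each.
alpha = [(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
beta = [(0.9, 0.0, 0.0), (0.9, 0.0, 0.0)]

alpha_on_beta = projection(alpha, beta)
beta_on_beta = projection(beta, beta)
beta_on_alpha = projection(beta, alpha)
print(alpha_on_beta, beta_on_beta, beta_on_alpha)
```

With these inputs the projection of `alpha` onto `beta` is larger than the projection of `beta` onto itself, exceeds 1, and differs from the projection in the reverse direction, which mirrors RQ 1, RQ 2, and RQ 3, respectively.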

These observations lead to the following research objectives (ROs):

RO 1. Develop a projection measure for a PFV relative to another PFV such that for all PFVs.

RO 2. Ensure that an increase in corresponds to a closer proximity of to .

RO 3. Guarantee that for all PFVs.

In response to these research questions, a novel projection measure is proposed:

Definition 6. Let and represent two picture fuzzy vectors (PFVs), where and for . The normalized projection of the vector onto is defined as follows:where represents the inner product between and , is the square of the norm of , and is the square of the norm of . Furthermore, and for .

Criterion 2. The closer the in Equation (7) is to 1, the closer the vector is to .

For instance, consider the picture fuzzy vectors (PFVs) and in Example 2. The values are computed as follows: , , , and . The minimum value among is , while the maximum value plus the adjustment term gives . Hence, the projection of vector onto is . Additionally, since and , the projection of vector onto itself is . Similarly, the projection of onto itself is .

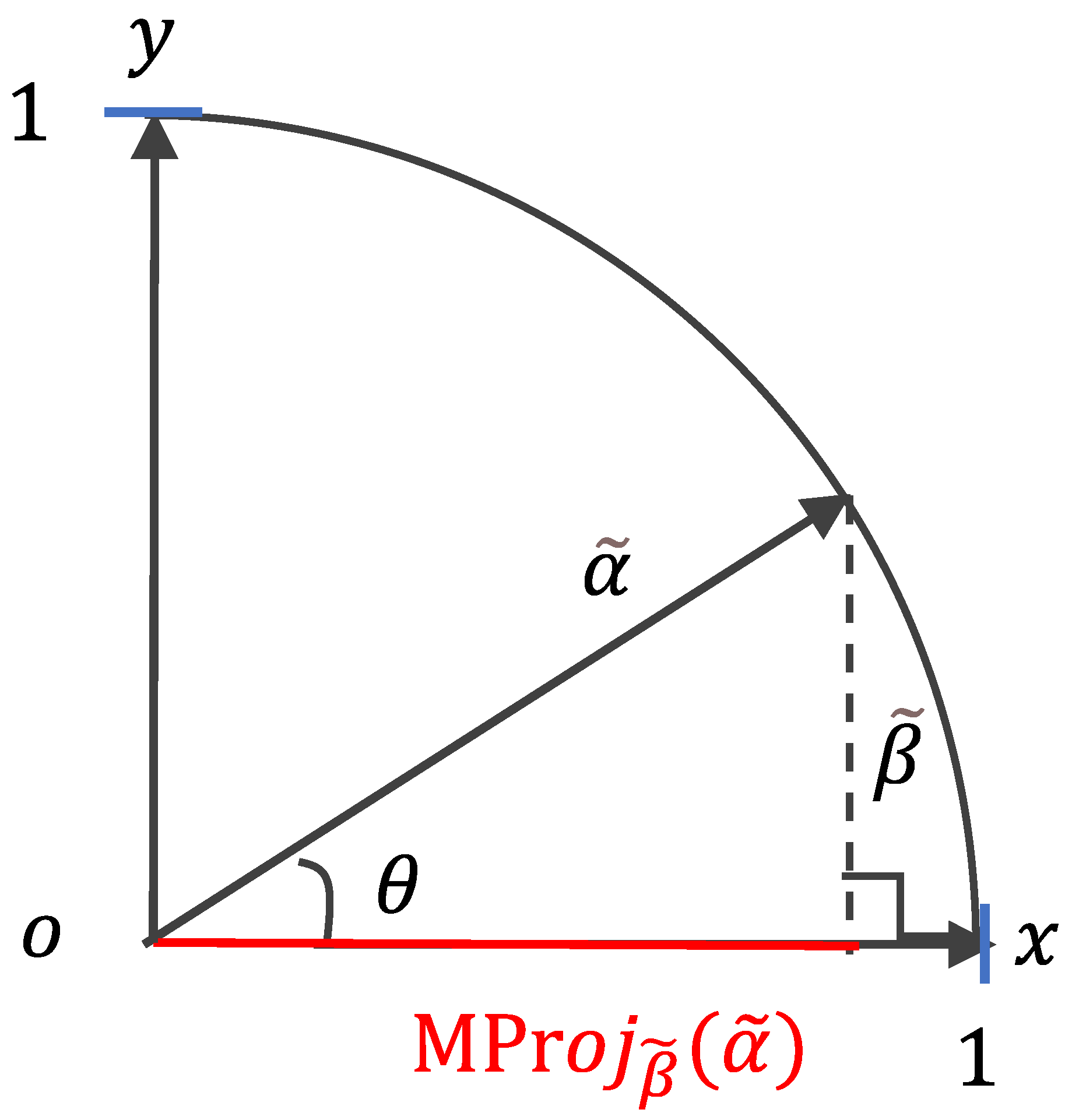

Equation (7) satisfies the criteria set forth in ROs 1–3. As illustrated in Figure 4, the normalized projection value is constrained within the interval [0, 1]; the vector shown is positioned in the first quadrant, with its endpoint lying on the circumference of a unit circle (radius = 1).

The projection measure defined in Equation (7) is inherently bidirectional: for any pair of vectors, the normalized projection of the first onto the second equals the normalized projection of the second onto the first. A visual depiction of this bidirectional relationship is provided in Figure 5.

Definition 7. Let and be two PFMs, where , ; thenis the normalization projection of matrix onto , where , , , and , .

The degree of similarity between two decision matrices, and , is assessed according to Criterion 3.

Criterion 3. The closer the value of is to 1, the greater the closeness of matrix to .

5. A Novel Entropy Measure for Determining Decision Weights

The determination of decision-maker (DM) and attribute weights is a critical component in resolving multi-attribute group decision-making (MAGDM) challenges [73, 74, 75]. In this study, decision data were collected via structured questionnaires distributed among four expert groups in the maritime industry: ship crew members, technical staff from shipping companies, shipyard maintenance personnel, and regulatory or classification authority representatives such as those from maritime safety administrations (MSAs). Given their varying backgrounds in knowledge, expertise, and practical experience, the influence and contribution of each DM differ. Accordingly, quantifying each DM’s impact, reflected through their assigned weight, remains a key concern in group decision-making (GDM) research (see [11]).

Shannon entropy [12] is a widely used metric for quantifying the degree of disorder within a system, with entropy values increasing as the system becomes more disordered. In multi-criteria decision-making, the decision matrix can be regarded as a decision system, the quality of which can be assessed through an entropy-based approach. Decision-makers (DMs) who provide higher-quality decision matrices, characterized by lower entropy, are assigned greater weights. This highlights the role of entropy as a comprehensive measure for determining the weights of DMs. The relationship between the degree of disorder and entropy is defined as follows:

Criterion 4. As the level of disorder within a system increases, entropy correspondingly rises; conversely, a higher degree of order results in lower entropy.
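Criterion 4 can be illustrated with the classical Shannon entropy of the empirical distribution of ratings. The sketch below is only an illustration of the disorder–entropy relationship; it is not the picture fuzzy entropy used later in this section, and the rating levels (integers on a seven-point scale) are hypothetical.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def shannon_entropy(ratings: Sequence[Hashable]) -> float:
    """Shannon entropy (in bits) of the empirical rating distribution."""
    total = len(ratings)
    counts = Counter(ratings)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical rating levels for a 2 x 3 decision matrix, flattened.
ordered = [1, 1, 1, 1, 1, 1]     # every cell receives the same rating
disordered = [7, 1, 4, 2, 6, 3]  # every cell receives a different rating

print(shannon_entropy(ordered), shannon_entropy(disordered))
```

The uniform matrix has zero entropy, while the matrix with six different ratings reaches the maximum for six cells, log2(6) bits.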

The correlation between entropy and the weight assigned to a decision-maker (DM) is defined as follows:

Criterion 5. A greater entropy value within the evaluation matrix corresponds to a lower weight assigned to the respective DM.

By integrating Criteria 4 and 5, the following principle is established:

Criterion 6. A DM who provides a more structured and orderly evaluation matrix is assigned a higher weight.
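A common way to turn Criteria 5 and 6 into numbers is to make each DM's weight proportional to one minus the entropy of their evaluation matrix. The sketch below shows this conventional scheme for entropies normalized to [0, 1]; it is an illustrative baseline, not the novel weighting scheme proposed in this paper, and the entropy values are hypothetical.

```python
from typing import List, Sequence


def entropy_weights(entropies: Sequence[float]) -> List[float]:
    """Weights proportional to 1 - entropy (conventional baseline).

    Assumes each entropy is normalized to [0, 1]. If every matrix has
    entropy 1, the weights fall back to a uniform distribution.
    """
    if any(not 0.0 <= e <= 1.0 for e in entropies):
        raise ValueError("entropies must be normalized to [0, 1]")
    diversity = [1.0 - e for e in entropies]
    total = sum(diversity)
    if total == 0.0:
        return [1.0 / len(entropies)] * len(entropies)
    return [d / total for d in diversity]


# Hypothetical normalized entropies of three DMs' evaluation matrices.
dm_entropies = [0.2, 0.5, 0.8]
dm_weights = entropy_weights(dm_entropies)
print([round(w, 4) for w in dm_weights])
```

Under such a baseline, a DM who assigns the same rating to every cell obtains a very low entropy and hence a high weight, which is exactly the behavior questioned in Example 3.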

Example 3. Consider two decision matrices, and , provided by decision-makers (DMs) and , respectively, as follows:where and represent two alternatives, and , , and are three benefit attributes (where higher values are preferred), expressed using linguistic variables. Examining , it is evident that all assessment values are categorized as “Absolutely Poor” (AP), indicating a highly structured and uniform evaluation. According to Criterion 6, a well-ordered decision matrix should result in a higher assigned weight for the corresponding DM. However, in this case, DM has provided only “Absolutely Poor” ratings, seemingly without substantive consideration. Consequently, the weight assigned to should, in fact, be significantly lower. This apparent contradiction with Criterion 6 underscores a limitation in the current weighting mechanism.

Similarly, the evaluation values in range from “Very Good” to “Absolutely Poor” in an apparently arbitrary fashion, indicating that DM may not have exercised careful judgment. Consequently, the weight assigned to should be relatively low. However, since the assessment values in are well ordered, Criterion 6 suggests that should be assigned a high weight. This contradiction further underscores an inconsistency in the weighting mechanism.

Example 4 introduces two additional assessment matrices, where

,

,

,

, and

remain consistent with those presented in Example 3. The assessment values in

were generated randomly by decision-maker

using the MATLAB function

(7, 2, 3) (MATLAB R2023b). This function produces discrete random numbers drawn from a uniform distribution, with a maximum value of 7, corresponding to the total number of linguistic rating scales specified in

Table 2. Parameters 2 and 3 denote the row and column dimensions of the matrix

, respectively. The resulting matrix

is shown in Example 4:

Example 4. The numerical values in are translated into linguistic variables, as shown in matrix . It is clear that the assessment values provided by decision-maker seem to be assigned in an arbitrary manner, resulting in a highly disordered set of values. As a result, the weight assigned to should be relatively low.
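The random assignment described above can be reproduced with a short sketch. It is written in Python rather than MATLAB and draws integers uniformly from 1 to 7 for a 2 × 3 matrix. The function name `random_rating_matrix` and the seed are illustrative assumptions, and the values it produces are not those of the matrix in Example 4.

```python
import random

# Number of linguistic rating levels (the text states a maximum value of 7).
NUM_LEVELS = 7


def random_rating_matrix(rows, cols, levels=NUM_LEVELS, seed=None):
    """Return a rows x cols matrix of integers drawn uniformly from 1..levels.

    Hypothetical helper: it mimics a decision-maker who assigns ratings
    arbitrarily, without considering the alternatives.
    """
    rng = random.Random(seed)
    return [[rng.randint(1, levels) for _ in range(cols)] for _ in range(rows)]


# Two alternatives (rows) rated on three attributes (columns).
matrix = random_rating_matrix(2, 3, seed=0)
for row in matrix:
    print(row)
```

Each integer would then be mapped to its linguistic term, and from there to a PFN, using the scale in Table 2.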

These anomalies can be analyzed through the entropy values of , , and in Examples 3 and 4. To support this evaluation, the linguistic variables in , , and are transformed into picture fuzzy numbers (PFNs), with the conversion method outlined in Table 2. This transformation results in the matrices , , and , as presented below. As outlined by Thao [

30], the entropy value of a picture fuzzy variable (PFV) is defined as follows:

Definition 8. Let be a PFV, where ; thenis defined as the entropy of , where .

Note: In accordance with Arya and Kumar [76], special cases are addressed as follows: if , then ; if , then ; and if , then , as specified in Equation (9). The entropy value of a picture fuzzy matrix (PFM) can be defined analogously:

Definition 9. Let be a PFM, where ; thenis defined as the entropy of , where .

Special cases are processed according to the conditions outlined in the note to Definition 8.

Based on Equation (10), we can calculate the entropies of

,

, and

as follows:

Let us now revisit the assessment values in , , and , as presented in Examples 3 and 4. In the case of , all assessment values are classified as “Absolutely poor.” Correspondingly, the values in matrix remain constant at . As such, the entropy value is relatively high.

As noted earlier, the assessment values in are similarly well ordered. Consequently, the entropy value is also relatively high.

Finally, we examine the assessment values in , which, as previously mentioned, are randomly assigned by . While the entropy value is deemed acceptable, the corresponding weight, , is problematic. This is due to the fact that the assessment values were assigned without careful consideration. Consequently, it is suggested that the weight be adjusted to zero.

In conclusion, the rationale for investigating more effective approaches to deriving decision-maker (DM) weights lies in the understanding that, when each decision matrix is appropriately provided (as opposed to being randomly assigned, for instance), the average matrix , along with its corresponding entropy, should more closely align with expectations. If the entropy of a given decision matrix is closer to that of , it suggests that the weight assigned to the respective DM should be greater than that of the other DMs.

In summary, the approach proposed in this study is that the weight assigned to each decision-maker is based on the similarity between the individual decision matrix and the average decision matrix. The average decision matrix is computed using the PFS-weighted arithmetic mean operator. The proximity between the evaluation matrix of the

-th decision-maker and the average decision matrix determines the weight assigned to that decision-maker. If the proximity value is higher, it indicates that the evaluation matrix of the expert is more consistent with the average decision matrix, thereby giving more weight to their opinion. As a result, the

-th decision-maker is assigned a higher weight. This approach will be implemented in

Section 6.

6. Research Methodology

Building on the previously developed framework, this section presents a group decision-making approach based on the VIKOR method, specifically tailored for evaluating the reliability of marine equipment.

The notations presented in

Table 3 will be employed throughout the model developed in this paper, with the following set of decision-maker (DM) indices:

We assume that the individual decision matrix is formulated by the

-th DM

, as outlined below:

where

is the PFM. That is, the attribute values

are PFNs for all

,

, and

.

Assuming that

denotes the weight vector of the attributes, the corresponding weighted assessment matrices are given by:

where

and

based on Equation (2).

In accordance with Equation (3), for

in Equation (12), the arithmetic mean matrix of

(where

) is formulated as follows:

where

and

,

,

,

,

for all

,

[

77].

In accordance with Equation (10), the entropy value of each weighted decision matrix

is determined as follows:

where

,

, and

are the same as in Equation (12),

, and

[

78].

In accordance with Equation (10), the entropy value of the arithmetic mean matrix

is determined as follows:

where

,

, and

are the same as in Equation (13),

, and

.

Expanding on the concept outlined in

Section 5, we define the following criterion governing the relationship between entropy values and DM weights:

Criterion 10. As the entropy value approaches , the corresponding weight assigned to the DM should increase.

The relative closeness is formulated as follows:

The weight attributed to DM is established based on the following criterion:

Criterion 11. As the relative closeness approaches 1, a higher weight is assigned to DM .

In accordance with Criterion 11, the DM weights

can be defined as follows:

It is evident that and .
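Criteria 10 and 11 can be illustrated with a minimal sketch. The exact forms of Equations (16) and (17) are not reproduced here, so the code assumes one plausible choice: relative closeness is taken as one minus the absolute difference between a DM's entropy and the entropy of the arithmetic mean matrix, and the weights are the closeness values normalized to sum to 1. The function name `dm_weights` and the entropy values are hypothetical.

```python
def dm_weights(entropies, mean_entropy):
    """Derive DM weights from entropy values (illustrative assumption).

    closeness_k = 1 - |E_k - E_mean|   (assumed stand-in for Equation (16))
    weight_k    = closeness_k / sum    (assumed stand-in for Equation (17))
    Entropy values are assumed to lie in [0, 1].
    """
    closeness = [1.0 - abs(e - mean_entropy) for e in entropies]
    total = sum(closeness)
    if total <= 0:
        raise ValueError("closeness values must have a positive sum")
    weights = [c / total for c in closeness]
    return closeness, weights


# Hypothetical entropy values for four DMs and for the mean matrix.
entropies = [0.62, 0.70, 0.55, 0.90]
mean_entropy = 0.68

closeness, weights = dm_weights(entropies, mean_entropy)
for k, (c, w) in enumerate(zip(closeness, weights), start=1):
    print(f"DM {k}: closeness={c:.3f}, weight={w:.3f}")
```

Under this assumption the weights are non-negative and sum to 1, and the DM whose entropy lies nearest to that of the mean matrix receives the largest weight, which is the behaviour Criteria 10 and 11 require.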

The DM weight vector

, as defined in Equation (17), facilitates the assignment of

to each corresponding decision

in Equation (12). As a result, the individual weighted decision matrix for both attributes and DMs, denoted by

, is expressed as follows:

where

according to Definition 2.

To determine the preferential ranking of alternatives, the individual weighted decisions

are transformed into a group-weighted decision

for each alternative

, as follows:

where

, and the element

is the same as the element

in

of Equation (18).

According to the idea of the VIKOR technique, the maximal assessment matrix is determined as follows:

where

according to Definition 2.

The minimal assessment matrix is defined as follows:

where

according to Definition 2.

The complement of

logically represents an alternative minimal assessment matrix, which is defined as follows:

where

according to Definition 2.

The normalized projection of each group decision

on the

, according to Equation (8), is calculated as follows:

where

,

,

,

,

,

.

According to Criterion 3, we have the following Criterion 12:

Criterion 12. The closer the is to 1, and the closer the matrix is to , the better the ship equipment is.

Similarly, the normalized projection of each group decision

on

, according to Equation (8), is calculated as follows:

where

,

,

. The

and

are the same as in Equation (23).

The -based decision criterion is as follows:

Criterion 13. The closer the is to 1, and the closer the matrix is to , the worse the ship equipment is.

The normalized projection of

onto

, based on Equation (8), is calculated as follows:

where

,

,

.

and

are the same as in Equation (23).

The -based decision criterion is as follows:

Criterion 14. The closer the is to 1, and the closer the matrix is to , the worse the ship equipment is.

The group utility measure for marine equipment

is calculated using the following expression:

where

is from Equation (23),

is the same as in Equation (24),

is the same as in Equation (25),

is a parameter with

,

is the weight of

, and

is the weight of

.

The -based decision criterion is as follows:

Criterion 15. The closer the is to 1, the better the ship equipment is.

Inspired by the literature [

79], let

, while

.

is called the largest group utility, while

is called the smallest group utility. The normalized group utility is defined as follows:

Based on Criterion 14, we have the following Criterion 16:

Criterion 16. The closer the is to 1, the better the ship equipment is.

The group regret measure for marine equipment

is defined by the following equation:

where

is defined as in Equation (24), and

is given as in Equation (25). The parameter

lies within the range

.

represents the weight of

, while

corresponds to the weight of

.

The -based decision criterion is as follows:

Criterion 17. The closer the is to 1, the worse the ship equipment is.

Similar to Equation (28), if we let

,

. The

is called the smallest group regret, while the

is called the largest group regret. The normalized group regret of

is defined as follows:

Building on Criterion 16, we propose the following:

Criterion 18. The closer the value of is to 1, the more preferable the marine equipment becomes.
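The two normalizations can be sketched as follows. Because Equations (27) and (29) are not reproduced here, the code assumes standard min-max forms chosen to agree with Criteria 16 and 18: the normalized utility increases with the group utility, while the normalized regret decreases with the group regret, so that a value near 1 is preferable in both cases. The function names and input values are hypothetical.

```python
def normalize_utility(utilities):
    """Min-max normalization where a larger utility maps closer to 1 (assumed form)."""
    lo, hi = min(utilities), max(utilities)
    if hi == lo:
        # Assumption: identical utilities are all treated as equally good.
        return [1.0 for _ in utilities]
    return [(s - lo) / (hi - lo) for s in utilities]


def normalize_regret(regrets):
    """Min-max normalization where a smaller regret maps closer to 1 (assumed form)."""
    lo, hi = min(regrets), max(regrets)
    if hi == lo:
        # Assumption: identical regrets are all treated as equally good.
        return [1.0 for _ in regrets]
    return [(hi - r) / (hi - lo) for r in regrets]


# Hypothetical group utility and group regret values for three alternatives.
s_norm = normalize_utility([0.2, 0.5, 0.8])
r_norm = normalize_regret([0.9, 0.3, 0.6])
print(s_norm)
print(r_norm)
```

Stretching the values over the full unit interval is what allows alternatives with nearly identical raw utilities or regrets to be separated in the subsequent grading step.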

Accordingly, a comprehensive VIKOR-based measure for equipment

can be derived using the following expression:

where

denotes the compromise coefficient, with

. This represents the weight assigned to the normalized group utility

, while

corresponds to the weight of the normalized group regret

.

If , it indicates that experts tend to prioritize group utility in their decision-making. Conversely, if , group regret becomes the dominant consideration. When , a balanced and compromise-oriented strategy is adopted. In most cases, is employed to reflect a neutral stance. By integrating Criteria 16 and 18, we propose the following Criterion 19:

The -based decision criterion is as follows:

Criterion 19. A larger comprehensive relative closeness means the ship equipment is better.
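As a minimal sketch of Criterion 19, the comprehensive measure can be computed as a convex combination of the normalized group utility and the normalized group regret, with the compromise coefficient weighting the utility term, as described in the text. The names `comprehensive_scores`, `rank_descending`, and `gamma`, and the input values, are illustrative assumptions.

```python
def comprehensive_scores(s_norm, r_norm, gamma=0.5):
    """Combine normalized utility and regret with compromise coefficient gamma.

    Assumed form: Q_i = gamma * S_i + (1 - gamma) * R_i, where both inputs
    are normalized so that larger values are better.
    """
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must lie in [0, 1]")
    return {a: gamma * s_norm[a] + (1.0 - gamma) * r_norm[a] for a in s_norm}


def rank_descending(scores):
    """Return alternative names ordered from the largest score to the smallest."""
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical normalized values for three alternatives.
s_norm = {"A1": 1.0, "A2": 0.4, "A3": 0.0}
r_norm = {"A1": 0.0, "A2": 1.0, "A3": 0.3}

scores = comprehensive_scores(s_norm, r_norm, gamma=0.5)
ranking = rank_descending(scores)
print(scores)
print(ranking)
```

With the neutral setting of 0.5, an alternative that is moderate on both components can outrank one that is best on utility but worst on regret, which is the compromise behaviour the coefficient is meant to control.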

All of the alternatives are ranked by the index . Suppose that denotes the alternative ranked at the th position by for all , . This alternative is written as for . The alternatives then compose a ranking , in descending order.

For two sets of ship equipment and , if , where is the quantity of ship equipment, , then we say that the distinction between and is small. In this case, the ship equipment is classified into the same grade. Beginning with , the ship equipment is ranked in phases according to the following procedure:

- Phase 1:

If and , where then the alternatives are considered to be tied for the top rank in the ranking list.

- Phase 2:

Starting from

, if

and

, where

, then

are tied for second place. In this case, a partial order is established as follows:

This ranking procedure continues iteratively for the remaining alternatives.

Phase Conclusion: According to the method in Phase 2, the process concludes once is included in the ranking list.
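The phased grading procedure can be sketched as below. The threshold is stated in the text only as a quantity that depends on the number of alternatives; the sketch assumes the classical VIKOR value of 1/(m − 1), where m is the number of alternatives. Each grade is opened by the best remaining alternative, and every following alternative whose score lies within the threshold of that grade leader is tied with it. The function name and the scores are hypothetical.

```python
def grade_alternatives(scores, threshold=None):
    """Group alternatives into tied grades, from best to worst.

    scores: mapping from alternative name to a measure where larger is better.
    threshold: minimum gap that separates two grades; assumed to default to
    1 / (m - 1), the classical VIKOR acceptable-advantage threshold.
    """
    ordered = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    m = len(ordered)
    if m < 2:
        return [[name for name, _ in ordered]] if ordered else []
    if threshold is None:
        threshold = 1.0 / (m - 1)

    grades = []
    leader_score = None
    for name, score in ordered:
        if grades and leader_score - score < threshold:
            # Gap to the current grade leader is small: same grade.
            grades[-1].append(name)
        else:
            # Gap is large enough: this alternative opens a new grade.
            grades.append([name])
            leader_score = score
    return grades


# Hypothetical comprehensive scores for three alternatives (threshold = 0.5).
grades = grade_alternatives({"A1": 0.9, "A2": 0.6, "A3": 0.2})
print(grades)
```

In this hypothetical case the first two alternatives differ by less than the threshold and share first place, while the third falls into a second grade.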

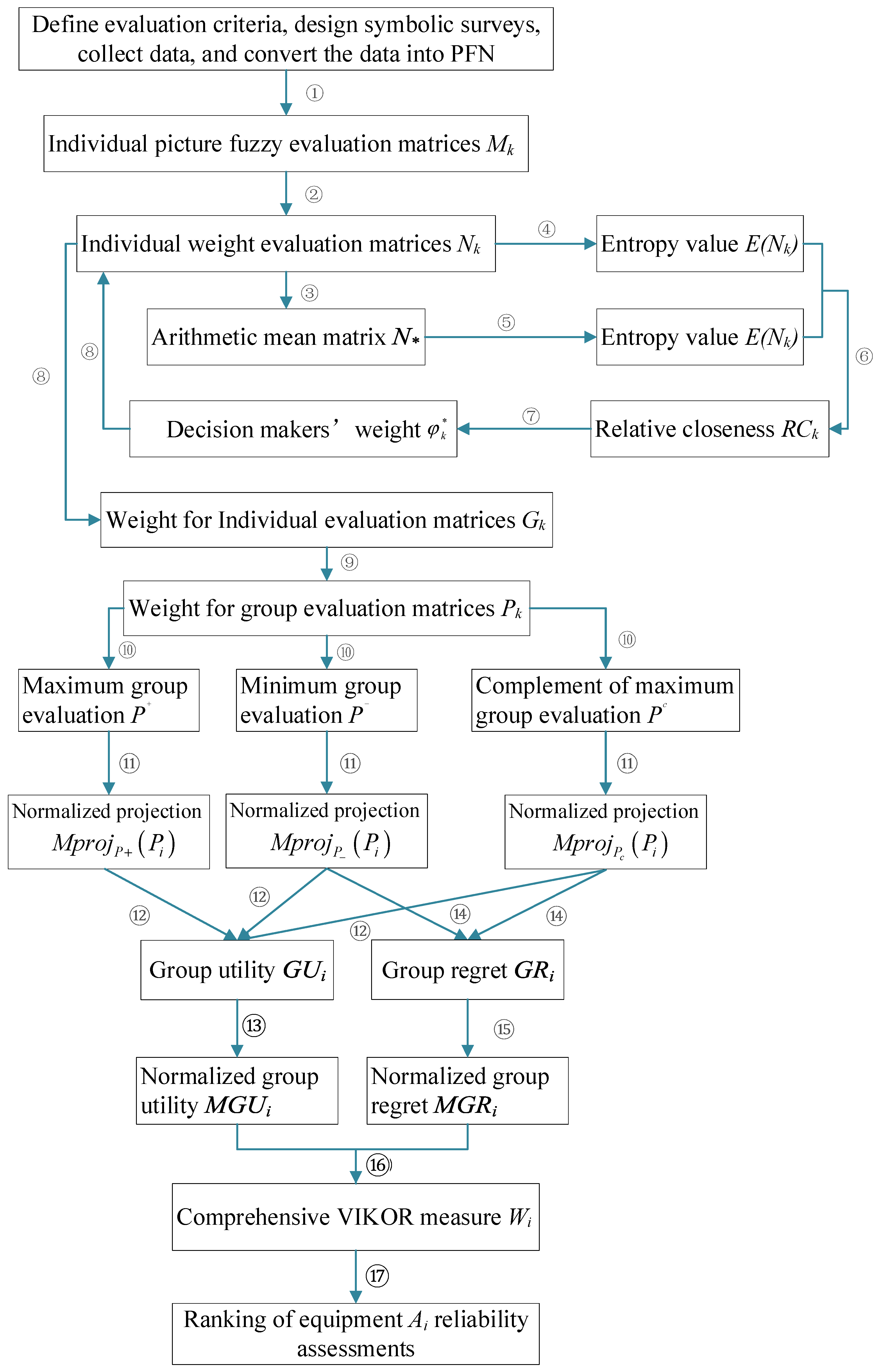

Procedure for Proposed Approach

Based on the expert voting data described in

Section 2 and the attribute definitions specified in

Section 7.1, the proposed method is implemented as follows:

- Step 1:

Establish individual decisions.

The individual assessment matrix for evaluating the reliability of ship equipment is formulated based on Equation (11), where each element is expressed as a picture fuzzy number (PFN). Specifically, , where , , and denote the degrees of membership, non-membership, and hesitation, respectively, associated with the evaluation provided by the -th DM.

- Step 2:

Construction of weighted attribute decision matrices.

Given the attribute weight vector , the corresponding weighted attribute decision matrix for each DM is computed in accordance with Equation (12).

- Step 3:

Construct the arithmetic mean matrix.

The arithmetic mean matrix , derived from the weighted decision matrices , is constructed in accordance with Equation (13).

- Step 4:

Calculation of the entropy values for weighted decisions.

The entropy value of the weighted decision matrices (where ) is computed in accordance with Equation (14).

- Step 5:

Calculation of the entropy value for the arithmetic mean matrix.

The entropy value of the arithmetic mean matrix is computed using Equation (15).

- Step 6:

Calculation of the relative closeness between the weighted decision entropy and the arithmetic mean matrix entropy.

The relative closeness of the weighted decision entropy to the entropy value of the arithmetic mean matrix is determined using Equation (16).

- Step 7:

Determining the DM weights.

The entropy-based weight for each decision-maker (DM), where , is computed using Equation (17).

- Step 8:

Assign DM weights to individual weighted decisions.

The weight (where ) of each DM is applied to the corresponding weighted decision (where ). The weighted decision for each DM, (where ), is given by Equation (18).

- Step 9:

Transformation of individual decision matrices into group decision matrices.

In accordance with Equation (18), the individual decision matrices (where ) are transformed into group decision matrices (where ), as shown in Equation (19).

- Step 10:

Determine the maximum assessment matrix , the minimum assessment matrix , and the complement of the maximum assessment matrix.

The maximum assessment matrix is calculated using Equation (20). The minimum assessment matrix is established according to Equation (21). The complement of the maximum assessment matrix is formed based on Equation (22).

- Step 11:

Calculate the normalized projections of onto , , and .

The normalized projections , , and are calculated using Equations (23), (24), and (25), respectively.

- Step 12:

Calculation of the group utility measure.

The group utility is computed based on Equation (26).

- Step 13:

Normalization of the group utility.

The normalized group utility is derived using Equation (27).

- Step 14:

Calculation of the group regret measure.

The group regret value is determined as per Equation (28).

- Step 15:

Normalization of the group regret.

The normalized group regret is obtained according to Equation (29).

- Step 16:

Compute the comprehensive VIKOR measure.

The comprehensive VIKOR score for the th ship equipment is calculated using Equation (30).

- Step 17:

Rank the alternatives.

The alternatives are ranked in descending order according to Criterion 19.

For enhanced clarity, the proposed method’s comprehensive flowchart is presented in

Figure 6.

7. Experimental Analysis

7.1. Case Study

This section describes a practical evaluation of the reliability of marine equipment conducted onboard a training vessel operated by a renowned maritime university in Liaoning Province, China. Based on the actual operating environment of the training vessel and expert consultations, three sets of marine equipment were selected as evaluation alternatives, denoted by the set . The decision-makers (DMs) comprised four groups of experts with extensive professional backgrounds and practical experience, represented as the set .

(Marine Mechanical Engineering Experts; 100 personnel in total): Graduates in naval architecture and ocean engineering or related fields, experienced in the design, manufacturing, and testing of ships’ mechanical systems, possessing comprehensive expertise in reliability analysis and performance optimization.

(Marine Electrical and Electronic Engineering Experts; 80 personnel in total): Graduates in marine electrical and electronic engineering, proficient in ship automation, power, and control systems, with extensive practical experience in electrical system planning, integration, and fault diagnosis.

(Marine Maintenance Engineering Experts; 77 personnel in total): Graduates in maintenance or reliability engineering, having served as equipment maintenance engineers in shipyards or onboard vessels, skilled in fault diagnosis procedures, maintenance operations, and planning, with practical expertise in equipment lifespan assessment and reliability improvement.

(Maritime Regulatory and Certification Experts; 82 personnel in total): Graduates in maritime management or related fields, employed by the Maritime Safety Administration (MSA) or classification societies, actively involved in formulating and implementing international maritime safety regulations and inspection standards, and specializing in compliance reviews and risk assessments.

This categorization framework effectively integrates the diverse positions and perspectives of stakeholders, ensuring a comprehensive and balanced evaluation process.

The evaluation attribute system was established based on literature review, expert discussions, and practical experience, resulting in four core attributes defined as the set {good reputation, low cost, quick repair, easy recovery}, which are detailed as follows:

A good reputation reflects the well-established standing of the equipment or system within the maritime industry, signifying a history of reliable, fault-free performance. Experts assess this attribute based on their professional experience, machinery logs, maintenance records, and other relevant sources. A higher proportion of votes marked with a check (✓) and a lower proportion marked with a cross (✗) or circle (◯) indicate superior performance of this attribute. As such, it is classified as a benefit attribute.

Low cost denotes the relatively minimal financial burden involved in maintaining the reliability of ship equipment or systems, encompassing expenses related to spare parts and materials. Experts assess this attribute based on their professional experience and available inventory of spare parts. As such, low cost is categorized as a benefit attribute.

Quick repair denotes the minimal time required to restore ship equipment or systems to full operational capacity. This attribute reflects the equipment’s ability to swiftly recover functionality following a failure, which may be enhanced by features such as robust self-diagnostic capabilities or an optimized modular design. As such, it is classified as a benefit attribute.

Easy recovery refers to the capacity of ship equipment or systems to be reinstated with minimal effort following a failure. It reflects the ship’s ability to restore its equipment using only onboard resources and the expertise of the crew, without the need for external support from shipyards or docks. It is therefore classified as a benefit attribute.

All of the experts received unified instructions on the evaluation method and scoring criteria before the assessment. They then evaluated the ship equipment according to the defined assessment attributes. The raw scoring data are presented in

Table 4.

In the first step, the evaluation values can be determined by picture fuzzy numbers (PFNs) calculated based on the symbolic voting data.

For example, the total number of participants was 100, among whom

,

,

. The evaluation matrices for three sets of marine equipment, denoted as

, are presented in

Table 5.
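The conversion from symbolic votes to a PFN can be sketched as follows. The mapping is an assumption: the share of check marks (✓) is taken as the positive degree, the share of circles (◯) as the neutral degree, and the share of crosses (✗) as the negative degree, with any remaining voters counted as refusals. The type `PFN`, the function `votes_to_pfn`, and the vote counts are hypothetical and do not come from Table 4.

```python
from typing import NamedTuple


class PFN(NamedTuple):
    """Picture fuzzy number: positive, neutral, and negative degrees."""
    positive: float
    neutral: float
    negative: float

    @property
    def refusal(self):
        """Remaining degree not covered by the three components."""
        return 1.0 - (self.positive + self.neutral + self.negative)


def votes_to_pfn(n_check, n_circle, n_cross, total):
    """Convert vote counts into a PFN by taking proportions (assumed mapping)."""
    if total <= 0:
        raise ValueError("total must be positive")
    if min(n_check, n_circle, n_cross) < 0:
        raise ValueError("vote counts must be non-negative")
    if n_check + n_circle + n_cross > total:
        raise ValueError("vote counts exceed the number of participants")
    return PFN(n_check / total, n_circle / total, n_cross / total)


# Hypothetical counts for a group of 100 experts.
pfn = votes_to_pfn(n_check=60, n_circle=15, n_cross=20, total=100)
print(pfn, round(pfn.refusal, 2))
```

Because the three degrees are proportions of the same electorate, their sum cannot exceed 1, which is the constraint a PFN must satisfy.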

Through a negotiation process, the decision-makers (DMs) determine the attribute weights, yielding the weight vector

. In Step 2, the weighted group decisions

,

,

, and

are outlined, as shown in

Table 6. Subsequently, the arithmetic mean matrix

, derived from all weighted decisions, is established in Step 3. For clarity, the matrix

is also provided in

Table 6.

In Step 4, the entropy values

for the weighted matrices

(where

) are computed, with the results presented in

Table 6. Step 5 involves the calculation of the entropy value

for the arithmetic mean matrix

, which yields

. The relative closeness is determined in Step 6, and in Step 7, the entropy-based weights of the decision-makers (DMs) are derived. The entropy values

, relative closeness

, DMs’ weights

, and the corresponding rankings of the DMs are comprehensively summarized in

Table 7.

In

Table 7, the decision-makers’ (DMs) weight vector

is presented. In Step 8, these weights,

, are assigned to the individual decisions

. This results in the weighted decision matrices

, which are displayed in

Table 8.

In Step 9, the individual assessment matrices

are transformed into the group assessment matrices

, as shown in

Table 9.

Based on Step 10, three reference matrices,

,

, and

, are established, as shown in

Table 10.

In Step 11, the normalized projections of each group assessment matrix onto the maximum assessment matrix , the minimum assessment matrix , and the complementary assessment matrix are calculated.

The normalized projections and the rankings of the three sets of marine equipment based on these projections are summarized in

Table 11.

Let

, and the group utility values

of marine equipment

are computed in Step 12, based on Equation (26). In Step 13, the normalized group utility values

are determined using Equation (27). Let

, and the group regret values

of marine equipment

are calculated in Step 14, according to Equation (28). Step 15 yields the normalized group regret values

using Equation (29). Let

, and the comprehensive VIKOR values

of marine equipment

are computed in Step 16, as specified by Equation (30). The VIKOR indices

,

,

,

, and

, along with the rankings of the three sets of marine equipment, are summarized in

Table 12.

From

Table 12, we can see that

and

are both less than

(where

, so

). According to Step 17, we find that the compromise solution with classification is

. That is,

are in joint first place in the ranking list. According to the traditional rule, the ranking of the three marine equipment sets is

. According to the new VIKOR technique, there is no significant difference among the three marine equipment sets. The ability to identify such ties is one of the advantages of the VIKOR technique.

7.2. Static Analysis

In the experimental analysis presented above, the current model incorporates several evaluation metrics, including , , , , , , and . These metrics are individually employed to rank the marine equipment , with the ranking process closely resembling the approach used for the comprehensive VIKOR measure .

According to the grading rule defined in

Section 6 (Phase 1), the group utility

, as defined in Equation (23), serves as a measure, hereafter referred to as

for convenience. The rankings derived from

are presented in

Table 11. Step 11 yields the following values:

. Notably, the difference

is less than

, implying that the three alternatives

must be classified into the same grade based on the

measure.

Similar to the results obtained with , the application of the measures , , , and also leads to the classification of three sets of marine equipment into a single grade.

The three sets of marine equipment are assigned to a single grade based on the five aforementioned measures. This outcome is due to the minimal differences observed in the measured values. To mitigate this limitation, the values of the measures are normalized using Equation (27) and Equation (29), respectively.

From

Table 12, we can see that

,

,

. From the difference,

, and

, so we have

according to the measure

.

If the regret measure

is normalized, then the

in

Table 12 shows that

,

,

. From the difference

and

, we know that

according to the measure

.

The rankings with classification based on the measures

,

,

,

,

,

, and

are summarized in

Table 13.

It can be observed from

Table 13 that the ranking of the three sets of marine equipment is

. In other words,

and

are tied for first place, followed by

.

7.3. Comparison with a Different VIKOR-Based GDM Approach

As introduced by Yue [

80], the group utility measure is defined as follows:

where

is the same as defined in Equation (23). The authors of [

80] defined the group regret for marine equipment

as follows:

where

is specified as shown in Equation (31).

If the group utility measure is redefined according to Equation (32), the comprehensive VIKOR index

is subsequently modified as follows:

where

,

,

.

,

,

.

The subsequent experimental data are likewise derived from the illustrative example presented in

Section 7.1.

The measurements derived from the VIKOR indices, namely,

,

,

,

, and

, along with the precise rankings of the three sets of marine equipment, are presented in

Table 14.

Table 14 shows that the differences in the measurements

and

are all smaller than

. Therefore, they should be classified into one grade

. This ranking is equivalent to no classification being made.

Similar to the above experimental analysis, the three sets of marine equipment can be distinguished by

,

, or

. We can see that

, and

. Therefore, we can observe that

based on the measure

in Equation (27). The measurements

and

are similar to

.

Table 14 reveals that the rankings with classification are all

according to the measures

,

, and

. These three rankings are consistent. This result is shown in

Table 15.

Thus, the measure

has a limited impact on

in Equation (33). Furthermore, these rankings are also radically different from the ranking

in

Table 13 in

Section 7.2. Compared with Equation (33), we can say that the VIKOR measure

in Equation (30) is more comprehensive.

The primary cause of the difference lies in the absence of specific regret information in the model of [80]. In this model, the group regret of marine equipment

is directly measured by the complement of the group utility

. This represents an imperfection in the approach.

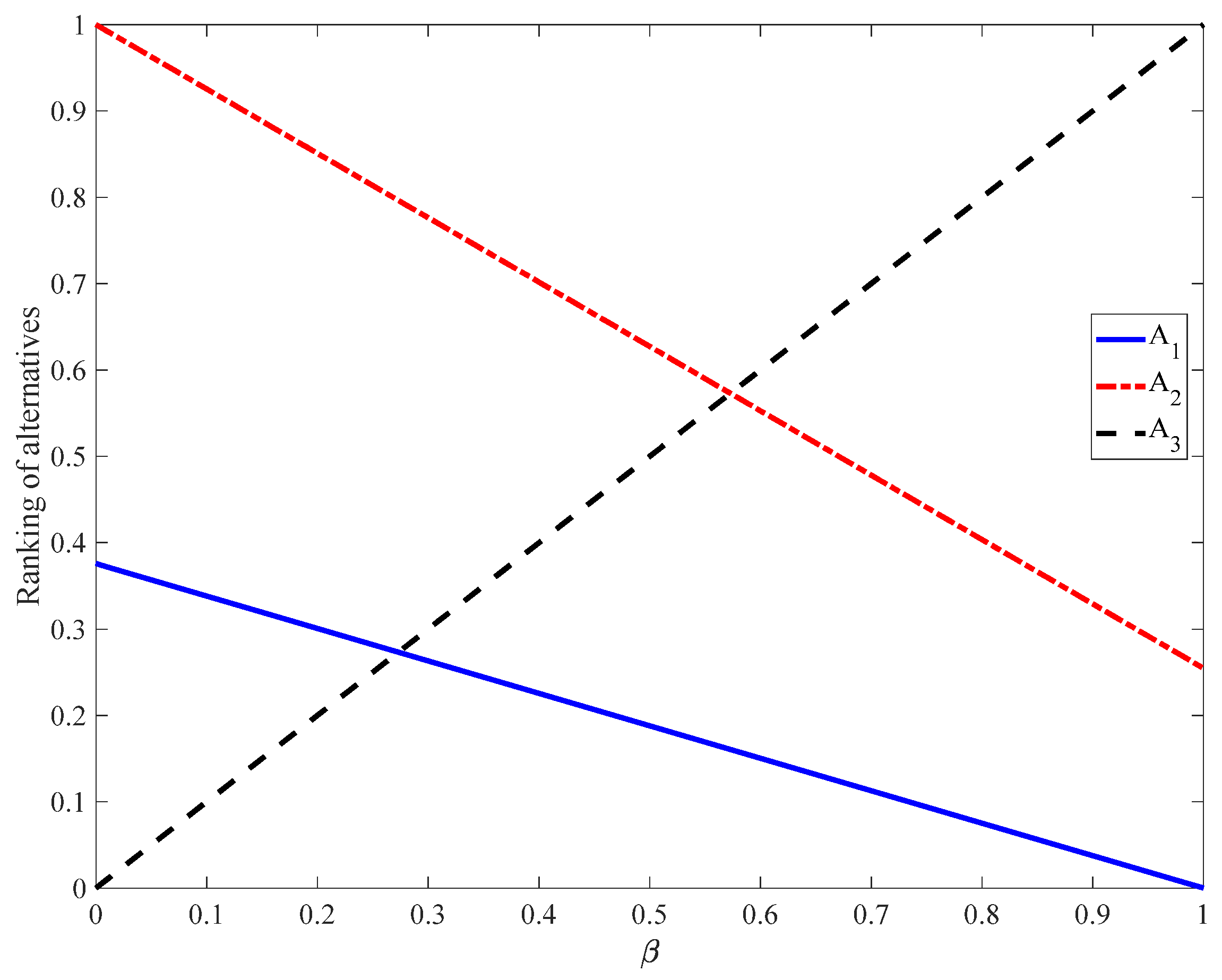

7.4. Dynamic Experiment for the Comprehensive VIKOR Measure

This section presents a dynamic analysis of the comprehensive VIKOR measure

as defined in Equation (30), focusing on the impact of varying compromise coefficients

within the interval [0, 1]. To ensure consistency and facilitate a robust comparative evaluation, the same dataset used in

Section 7.1 is applied in this experiment.

First, the compromise coefficients

and

in Equation (30) represent the respective weights of

and

. As

increases from 0 to 1, the resulting rankings for the three sets of marine equipment (

,

, and

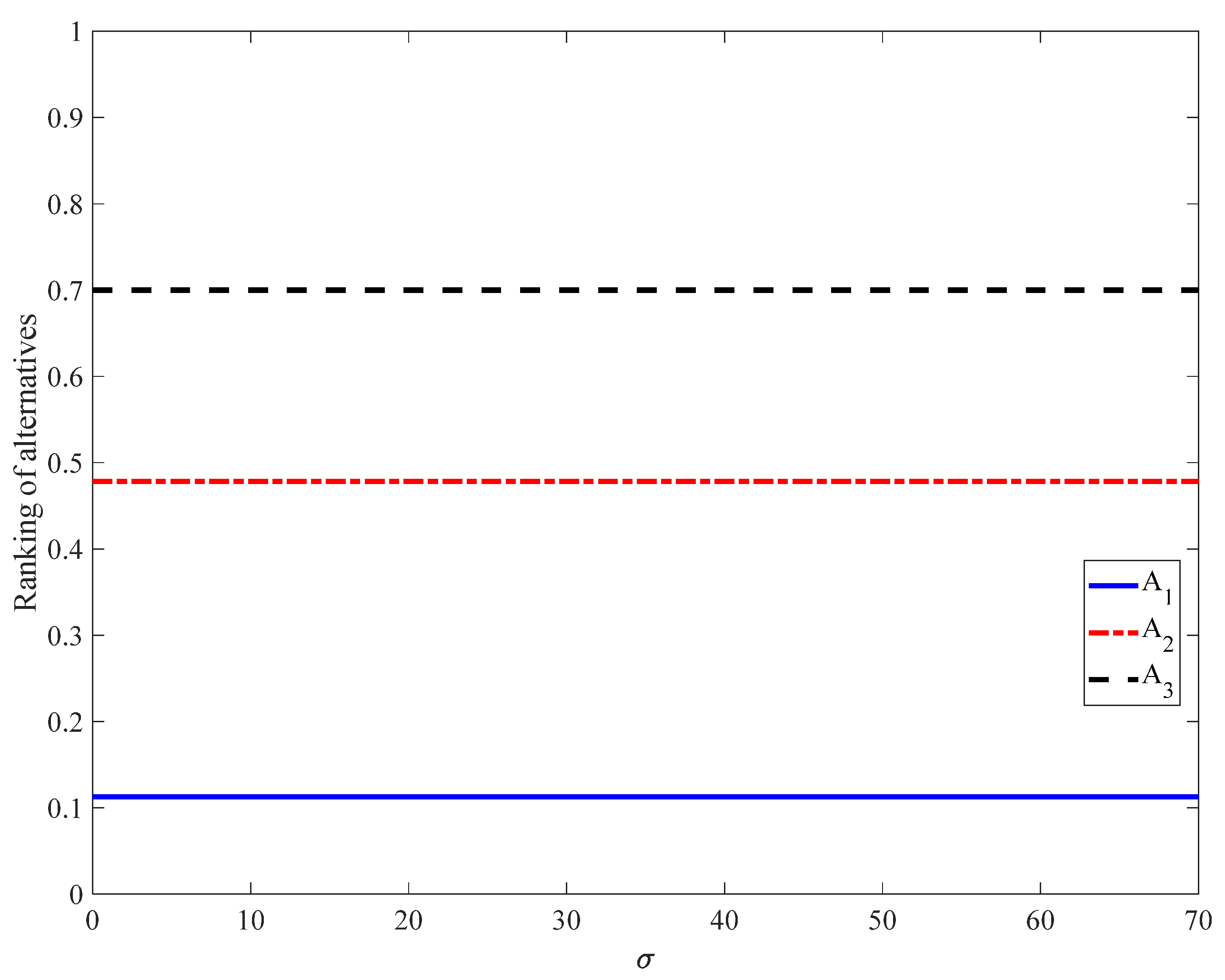

) are depicted in

Figure 7.

From

Figure 7, it can be observed that the ranking sequence is

when

lies within the range [0, 0.28];

when

lies within the range [0.28, 0.57]; and

when

lies within the range [0.57, 1]. How can the overall ranking of the three marine equipment sets be defined throughout this process? Following the approach outlined in [

11], if the ranking curves for two alternatives (

and

) intersect, both

and

are classified into the same category. In this context, the three marine equipment sets (

,

, and

) are considered to be tied for first place in the overall ranking.
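The sweep over the compromise coefficient can be sketched as follows. The code assumes the comprehensive measure is a convex combination of the normalized utility and the normalized regret, evaluates it on an evenly spaced grid over [0, 1], records the intervals on which the ranking is constant, and lists the pairs of alternatives whose curves cross. All function names and input values are hypothetical and are not the values behind Figure 7.

```python
from itertools import combinations


def score(gamma, s, r):
    """Assumed comprehensive measure: gamma * S + (1 - gamma) * R."""
    return gamma * s + (1.0 - gamma) * r


def ranking_segments(s_norm, r_norm, steps=100):
    """Return (gamma_start, gamma_end, ranking) for each constant-ranking interval."""
    segments = []
    for i in range(steps + 1):
        gamma = i / steps
        values = {a: score(gamma, s_norm[a], r_norm[a]) for a in s_norm}
        order = tuple(sorted(values, key=values.get, reverse=True))
        if segments and segments[-1][2] == order:
            segments[-1][1] = gamma      # extend the current interval
        else:
            segments.append([gamma, gamma, order])
    return [tuple(seg) for seg in segments]


def crossing_pairs(s_norm, r_norm, steps=100):
    """Return pairs of alternatives whose relative order changes during the sweep."""
    pairs = set()
    for a, b in combinations(sorted(s_norm), 2):
        signs = set()
        for i in range(steps + 1):
            gamma = i / steps
            diff = score(gamma, s_norm[a], r_norm[a]) - score(gamma, s_norm[b], r_norm[b])
            if diff > 0:
                signs.add(1)
            elif diff < 0:
                signs.add(-1)
        if len(signs) == 2:
            pairs.add((a, b))
    return pairs


# Hypothetical normalized utility and regret values.
s_norm = {"A1": 1.0, "A2": 0.4, "A3": 0.0}
r_norm = {"A1": 0.0, "A2": 1.0, "A3": 0.3}

segments = ranking_segments(s_norm, r_norm)
crossings = crossing_pairs(s_norm, r_norm)
for start, end, order in segments:
    print(f"gamma in [{start:.2f}, {end:.2f}]: {' > '.join(order)}")
print(sorted(crossings))
```

Following the rule cited from [11], any pair returned by `crossing_pairs` would be placed in the same category, since neither alternative dominates the other across the whole interval.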

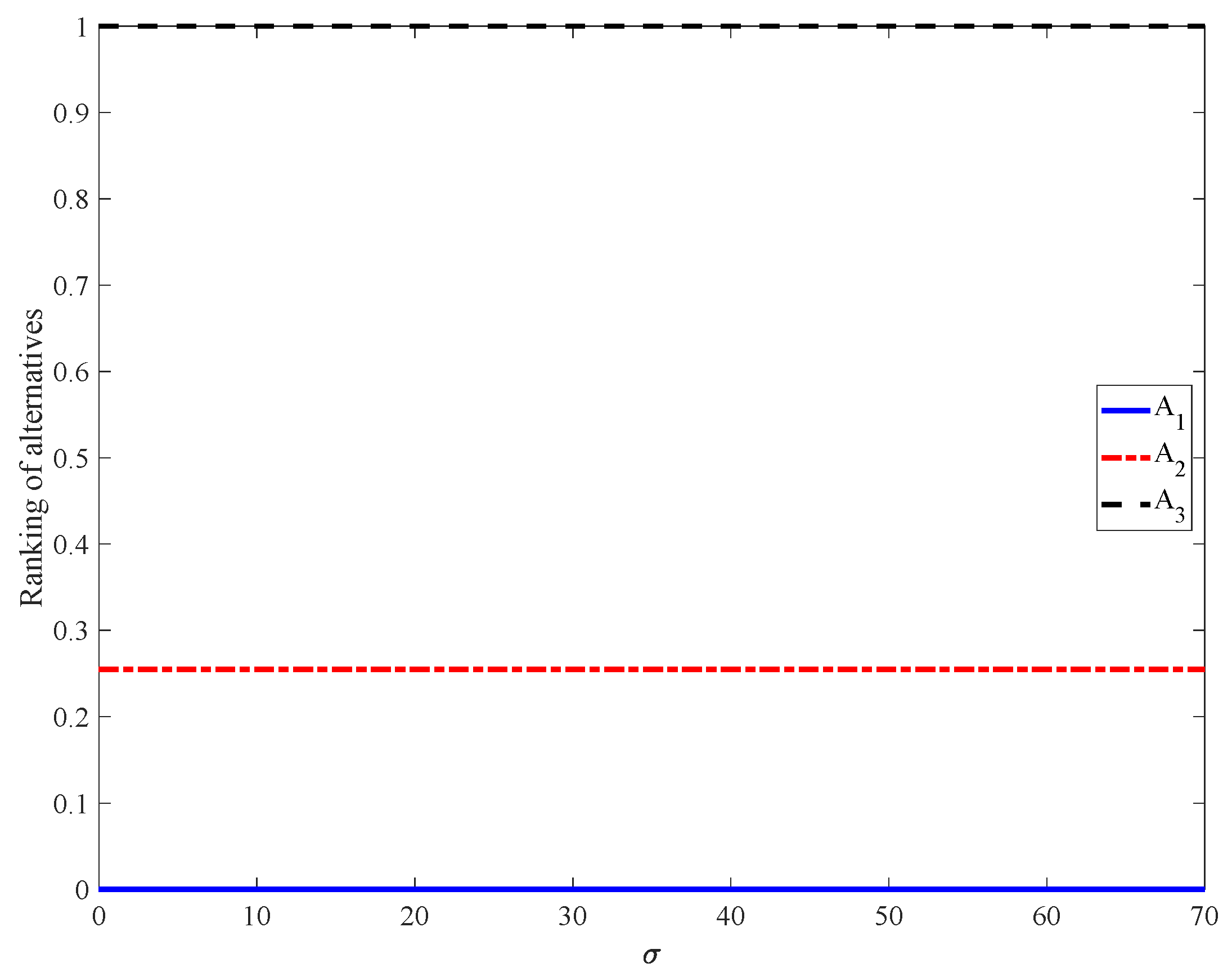

Second, the proposed method is further examined under a dynamic environment. Specifically, we consider the assessment value

in matrix

from

Table 5. To simulate variability, we define

,

, where the dynamic parameter

. All other evaluation values remain consistent with those in

Table 5. By setting

in Equation (30) and allowing

to vary from 0 to 69, the dynamic ranking behavior of the three marine equipment alternatives

,

, and

, based on the comprehensive VIKOR index

, is illustrated in

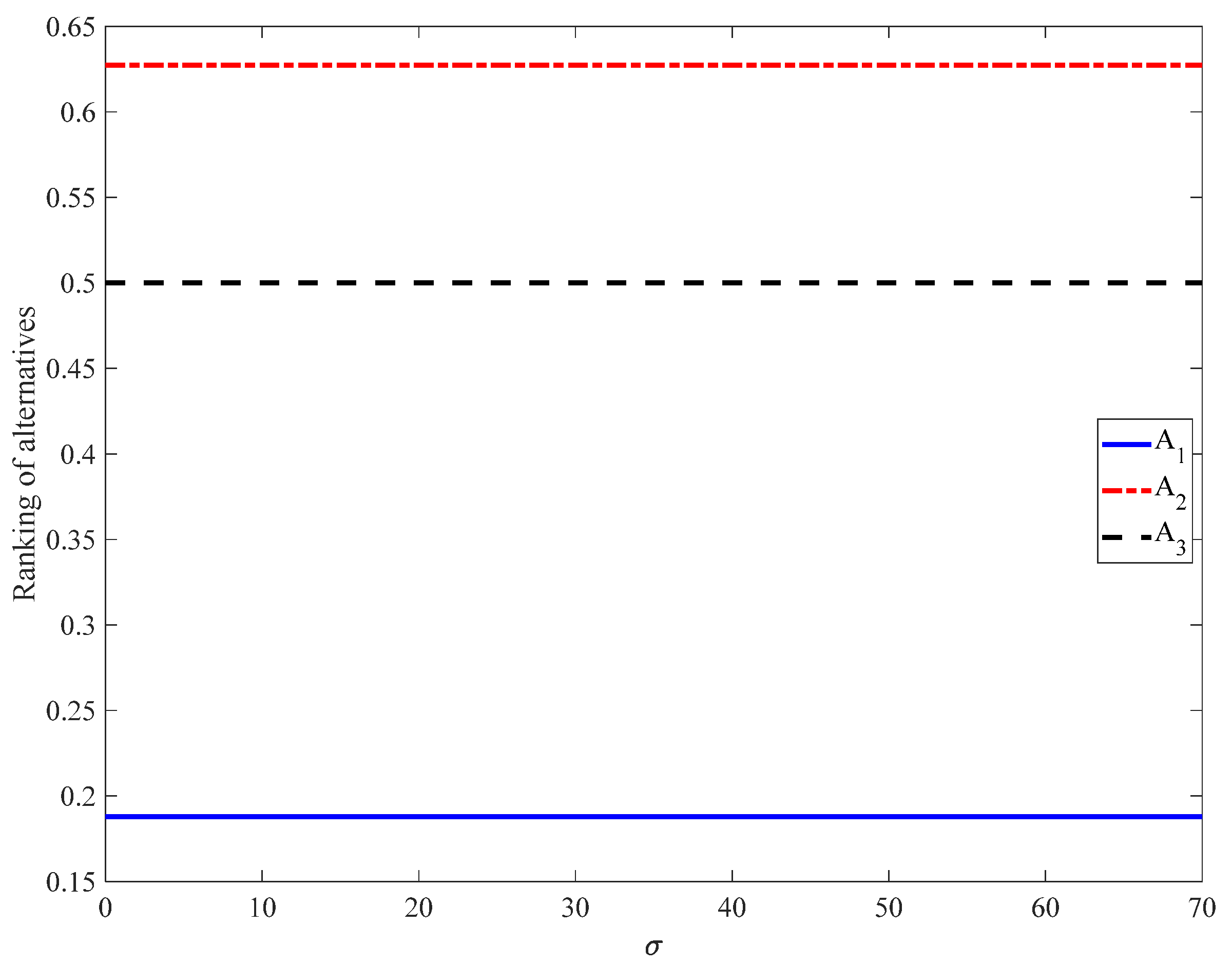

Figure 8.

Figure 8 shows that, for

, the

values are constant:

,

, and

. Thus, the ranking is

. To further investigate the impact of

, rankings evaluated under the same dynamic conditions for

values of 0.2, 0.6, and 0.7 are presented in

Figure 9,

Figure 10 and

Figure 11, respectively.

Figure 9 shows that, for

, the

values are constant:

,

, and

. Thus, the ranking is

.

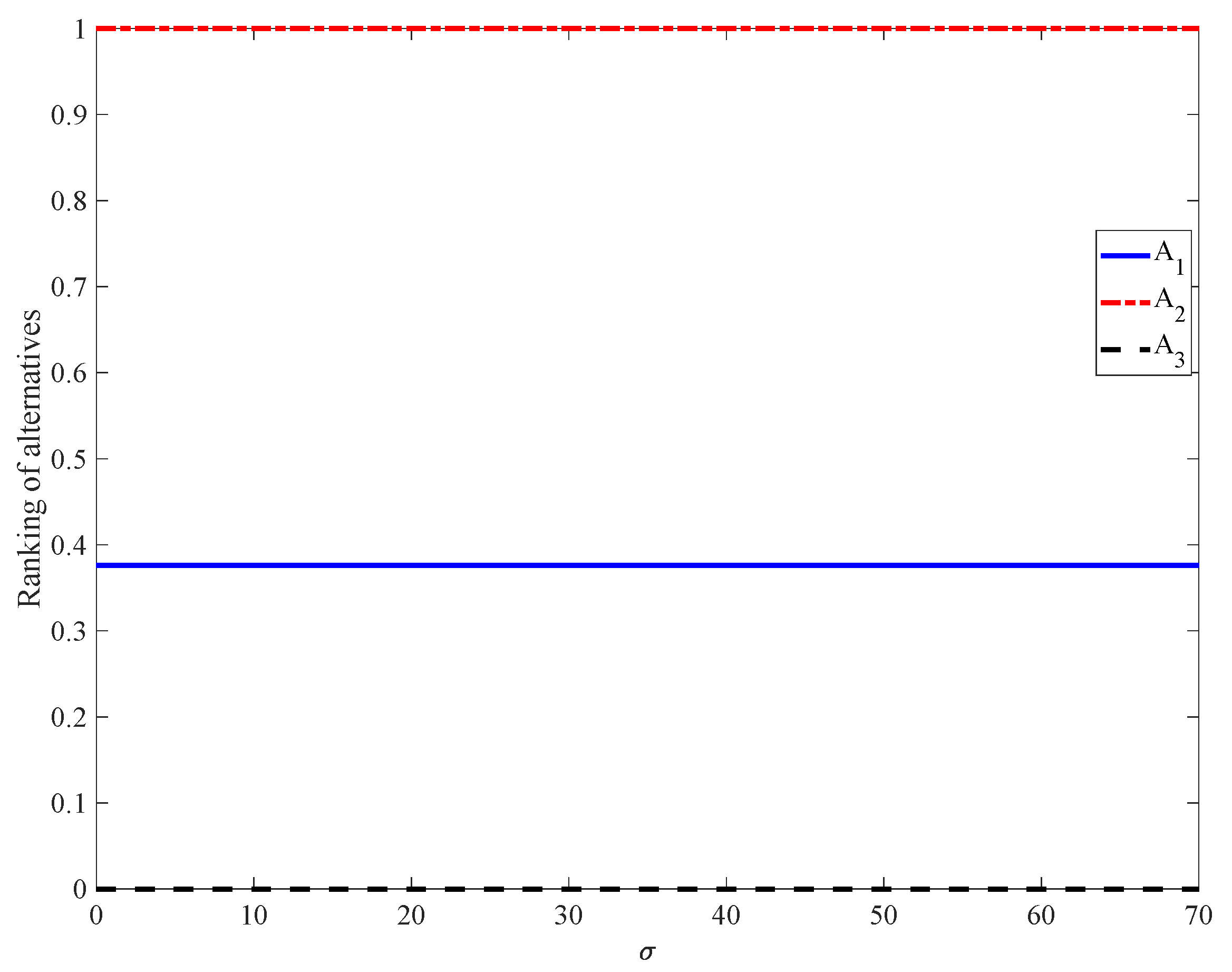

Figure 10 shows that, for

, the

values are constant:

,

, and

. Thus, the ranking is

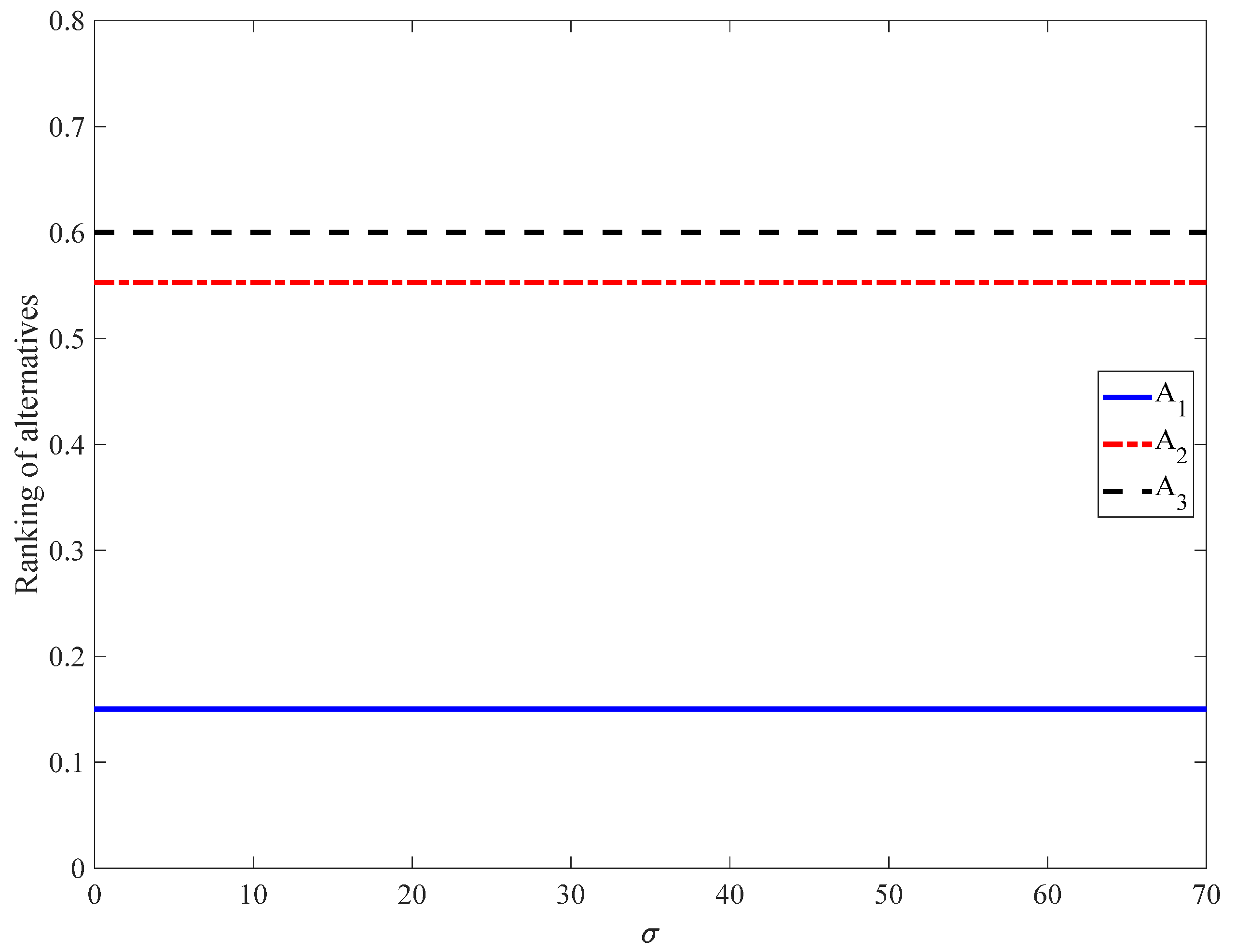

.

Figure 11 shows that, for

, the

values are constant:

,

, and

. Thus, the ranking is

. When

is set to 1,

in Equation (30) reduces to

. Conversely, when

is set to 0,

simplifies to

. To further explore dynamic behavior, rankings based on

in Equation (27) under the same dynamic

as

Figure 8 and setting

in Equation (26) are presented in

Figure 12.

Figure 12 shows that, for

in Equation (27), the values are constant:

,

, and

. Thus, the ranking is

. For the dynamic parameter

as defined in

Figure 8 and setting

in Equation (28), rankings based on

in Equation (29) are presented in

Figure 13.

Figure 13 shows that, for

in Equation (29), the values are constant:

,

, and

. Thus, the ranking is

. The dynamic changes in

were further investigated. Their ranking results were calculated according to Equation (26) and are presented in

Figure 14. The experiment used parameter σ as defined in

Figure 8 and setting

in Equation (26).

Figure 14 illustrates that the

curves do not intersect, showing distinct performance levels. Thus, the ranking is

.

For the VIKOR group regret index

, a smaller value indicates better performance. To ensure consistency,

is transformed as follows:

A higher

corresponds to a superior performance.

Figure 15 presents the rankings based on the transformed index, under the same dynamic conditions as depicted in

Figure 8.

Figure 15 demonstrates that the three curves do not intersect. Consequently, it can be concluded that the rankings of the three sets of marine equipment are

based on

in Equation (34) under this dynamic context.

Based on the above experimental analysis, it is evident that (1) different models may yield varying results, and (2) different experimental conditions may lead to diverse outcomes. A natural conclusion is that the ranking occurring most frequently should be regarded as the most reliable result. The rankings derived from the aforementioned experimental results are presented in

Table 16.
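The majority rule stated above amounts to a frequency count over the rankings obtained from the individual measures and experiments. A minimal sketch follows; the ranking strings are placeholders and are not the entries of Table 16.

```python
from collections import Counter


def most_frequent_ranking(rankings):
    """Return the ranking that occurs most often, together with its count."""
    if not rankings:
        raise ValueError("at least one ranking is required")
    counts = Counter(rankings)
    return counts.most_common(1)[0]


# Hypothetical rankings collected from different measures and experiments.
observed = [
    "A1 ~ A2 > A3",
    "A1 ~ A2 > A3",
    "A1 ~ A2 ~ A3",
    "A1 ~ A2 > A3",
    "A2 > A1 > A3",
]

best, count = most_frequent_ranking(observed)
print(best, count)
```

Representing each ranking as a canonical string keeps the count simple; a real implementation would need to ensure that equivalent orderings are written identically before counting.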

Table 16 shows that

and

are the preferred solutions based on the evaluation of all measures.

The experimental results demonstrate that the ranking methods developed in this study for both static and dynamic environments are reasonable. The order relationships of the three sets of marine equipment based on these ranking methods remain stable. These ranking methods constitute a new VIKOR-based group decision-making (GDM) model.

In the long term, investing in reliable equipment is cost-effective, as it reduces the frequency of breakdowns, repairs, and replacements. Companies with a reputation for reliable operations also gain a competitive edge in the maritime industry, attracting clients and ensuring long-term economic viability. To achieve these goals, a scientific and objective evaluation method is crucial. The method proposed in this paper provides powerful support for this purpose: compared to existing methods, its reliability grading of key equipment is clearer, effectively distinguishing between previously indistinguishable equipment grades, and its decision ranking is more stable. This model can accurately identify low-reliability and high-risk equipment, support maintenance prioritization and risk warnings, and help operational departments rationally allocate maintenance resources to improve equipment safety and management efficiency.