2.1. Methods Based on Traditional Machine Learning

UATR technology originally depended on the manual analysis of acoustic signal characteristics from various perspectives. Skilled sonar operators could identify acoustic signals by analyzing beats, timbres, and other features in conjunction with various spectrograms. However, this approach is prone to environmental influences and subjective interpretations, leading to inconsistent accuracy and notable constraints. With advancements in science and technology, the underwater acoustic field has witnessed the introduction of machine learning methods based on statistical classification, as illustrated in Figure 1. This development marks a significant shift toward more objective and stable recognition techniques.

Since the 1990s, researchers have been integrating signal analysis theory with machine learning techniques, using handcrafted feature extractors to derive attributes from underwater acoustic signals. These features include the Zero-crossing Rate (ZCR), Wavelet Transform (WT) [8], Hilbert–Huang Transform (HHT), Higher-order Spectral Estimation, and Mel-frequency Cepstral Coefficient (MFCC) [9]. Machine learning classifiers such as Bayesian classifiers, Decision Trees, and Support Vector Machines (SVMs) [10] are then employed to identify and classify underwater targets. Notably, Moura et al. trained an SVM model on a dataset of ship-radiated noise collected from a real marine environment, employing LOFAR images as input and achieving an accuracy of 73.18% [11]. In another study, a Gaussian Mixture Model (GMM) classifier, trained using the standard Expectation–Maximization algorithm, attained a classification accuracy of 75.4% [12]. In [13], the BAT algorithm was used to optimize kernel parameters, with MFCC features as input, and achieved higher classification accuracy. Compared with other parameter optimization algorithms, such as genetic algorithms (GAs) and particle swarm optimization (PSO), the BAT algorithm has the advantage of conducting global and local searches simultaneously, which helps it avoid falling into a local optimum. The results show that the accuracy of the classifier optimized with the BAT algorithm is six percentage points higher than that of the classifier optimized with PSO. Yang et al. [14] introduced a novel AdaBoost SVM model based on a weighted sample and feature selection method (WSFSelect-SVME) to improve the accuracy of UATR while reducing extra computational and storage costs. The proposed model addresses two limitations of traditional ensemble SVM methods: (1) training data are often of poor quality, which leads to errors between actual and theoretical results; and (2) ensemble recognition systems usually have higher complexity and computational costs. Experimental results on the UCI sonar dataset and a real-world underwater acoustic target dataset show that the WSFSelect-SVME model obtains better recognition performance and robustness than the AdaBoost SVM ensemble algorithm. Kim et al. used synthesized sonar signals as input to avoid the problem of data acquisition and applied a multi-aspect target classification scheme based on a hidden Markov model [15]. Meng et al. introduced an SVM classification method based on waveform structure, reaching an accuracy of 81.20% [16]. While traditional machine learning approaches have demonstrated commendable recognition capabilities in less complex marine settings, their capacity to accurately fit the sample distribution and generalize across datasets that require intricate feature extraction remains limited.
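To make the notion of a handcrafted feature concrete, the following is a minimal sketch of the zero-crossing rate computed over fixed-length frames. It is an illustration only and is not taken from any of the cited works; the function names `zero_crossing_rate` and `frame_signal`, the frame length, the hop size, and the toy signal are all assumptions chosen for readability.

```python
def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    if len(frame) < 2:
        return 0.0
    crossings = sum(
        1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(frame) - 1)


def frame_signal(signal, frame_len, hop):
    """Split a 1-D signal into frames; a trailing partial frame is dropped."""
    return [
        signal[start:start + frame_len]
        for start in range(0, len(signal) - frame_len + 1, hop)
    ]


# Toy signal (assumed): an alternating stretch followed by a constant stretch.
signal = [1.0, -1.0, 1.0, -1.0, 0.5, 0.5, 0.5, 0.5]
features = [zero_crossing_rate(f) for f in frame_signal(signal, frame_len=4, hop=4)]
print(features)
```

In a pipeline of the kind described above, per-frame values such as these would typically be combined with other features (for example MFCC) and passed to a classifier such as an SVM.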

2.2. Methods Based on Deep Learning

In recent years, rapid advancements in deep learning technology have significantly impacted computer vision and pattern recognition, heralding a new era marked by self-optimization and deep feature mining capabilities. These advancements have found applications across a diverse range of fields [17,18,19]. UATR essentially falls under the umbrella of pattern recognition, and techniques based on deep learning are particularly pertinent and promising within this domain.

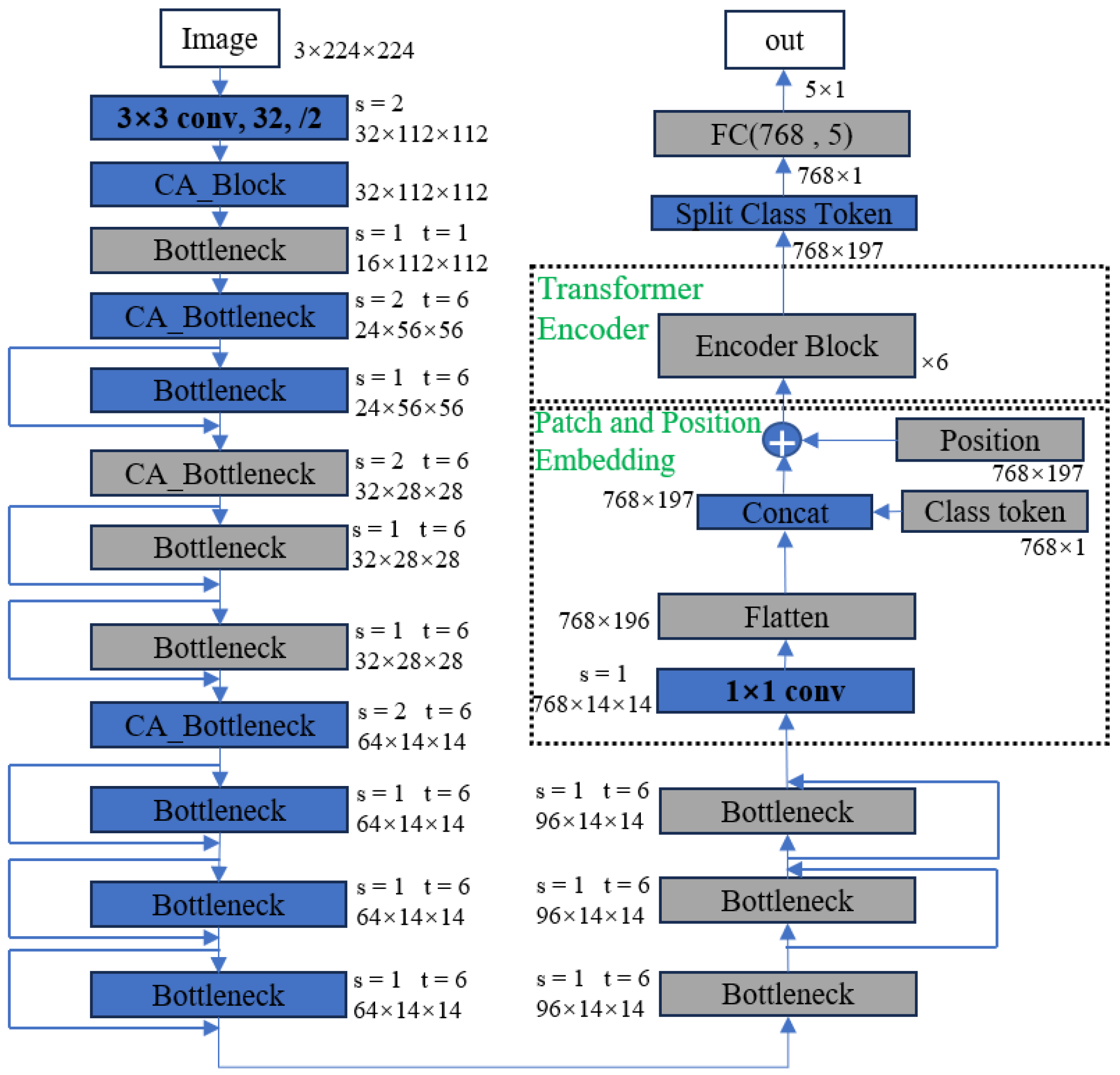

Figure 2 delineates the recognition process, showcasing how deep learning methodologies can be effectively applied to UATR.

Deep learning has brought significant improvements to UATR, with researchers employing a variety of approaches to enhance recognition accuracy. Sabara et al. utilized spectrograms as inputs for aquatic target recognition and classification through convolutional neural networks (CNNs), achieving an accuracy of 80% [20]. Hu et al. divided the ShipsEar dataset into three classes by ship size (large, medium, and small) and fed the original one-dimensional time-domain signals into a novel deep neural network model. This model, which combines depthwise separable convolution with time-dilated convolution, attained a classification accuracy of 90.09% [21]. Zhao et al. introduced a multiscale residual unit (MSRU) to develop a deep convolutional stack network, demonstrating the MSRU algorithm’s effectiveness within a generative adversarial network framework and achieving an accuracy of 83.15% [22]. Li et al. proposed a method that leverages a deep neural network alongside an optimized loss function to reach 84.00% accuracy [23]. Ke et al. enhanced neural network performance through transfer learning, achieving a recognition accuracy of 93.28% [24]. Luo et al. employed Restricted Boltzmann Machines (RBMs), a type of stochastic neural network, for recognition, achieving an accuracy of 93.17% [25]. Another study extracted MFCC and LOFAR spectrogram features of underwater acoustic signals as network inputs, comparing CNN, LSTM, and SVM classifiers across different signal-to-noise ratios. The combination of LOFAR inputs with the CNN emerged as the most effective, reaching 95% accuracy. The three classifiers attained accuracies of 99.14%, 98.92%, and 95.36%, respectively, translating to a 22% recognition rate improvement. For simulated ship-radiated noise signals, both the CNN and LSTM models achieved recognition rates of nearly 80% at a signal-to-noise ratio of −10 dB [26].

While many of the methodologies previously outlined are somewhat basic and overlook comprehensive information integration, recent scholarly work has delved into UATR methods that leverage both feature-level and decision-level fusion. This approach has been shown to significantly enhance recognition accuracy by combining different types of information. Han et al. adopted a feature-level one-dimensional fusion strategy, feeding the combined feature vectors into a joint CNN and LSTM neural network, resulting in a classification accuracy of 92.14% [27]. Hong et al. implemented a feature-level three-dimensional fusion recognition method based on ResNet18, achieving an accuracy of 94.30% [28]. Feng et al. employed decision-level fusion by separately inputting three types of features, including MFCC, into the network, reaching a recognition accuracy of 98.34% [29]. These advancements underscore the efficacy of information fusion in improving recognition accuracy. However, while CNNs excel in local feature extraction, they fall short in global feature representation and in clearly separating line spectra from background noise. Similarly, while LSTMs address certain sequence dependencies, they struggle to capture long-term dependencies in time series and cannot be computed in parallel across time steps, which makes training inefficient. Addressing these limitations, Li et al. pioneered the introduction of the Transformer model into UATR. Utilizing the Mel spectrum as input, their STM model achieved an accuracy of 97.70% [30]. This innovative approach, grounded in information fusion, demonstrates enhanced effectiveness in classification and recognition in the underwater acoustic domain, marking a significant advancement over traditional and single-feature methods.
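The difference between the feature-level and decision-level fusion strategies discussed above can be sketched as follows. This is a simplified illustration under stated assumptions, not the procedure of any cited work: feature-level fusion is shown as plain concatenation, decision-level fusion is shown as averaging class probabilities (one of several possible combination rules), and all function names and numeric values are hypothetical.

```python
def feature_level_fusion(feature_vectors):
    """Concatenate per-representation feature vectors into one classifier input."""
    fused = []
    for vec in feature_vectors:
        fused.extend(vec)
    return fused


def decision_level_fusion(prob_vectors):
    """Average the class-probability vectors produced by separate classifiers."""
    n_models = len(prob_vectors)
    n_classes = len(prob_vectors[0])
    return [
        sum(p[c] for p in prob_vectors) / n_models
        for c in range(n_classes)
    ]


# Hypothetical features from two representations of the same recording.
mfcc_like = [0.2, 0.7]
spectrum_like = [0.1, 0.4, 0.9]
fused_input = feature_level_fusion([mfcc_like, spectrum_like])

# Hypothetical per-class probabilities from three separately trained classifiers.
probs = [
    [0.6, 0.3, 0.1],
    [0.5, 0.4, 0.1],
    [0.1, 0.8, 0.1],
]
fused_probs = decision_level_fusion(probs)
predicted_class = max(range(len(fused_probs)), key=fused_probs.__getitem__)
print(fused_input, fused_probs, predicted_class)
```

In the feature-level case a single model sees all representations at once, whereas in the decision-level case each representation has its own model and only the outputs are combined.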

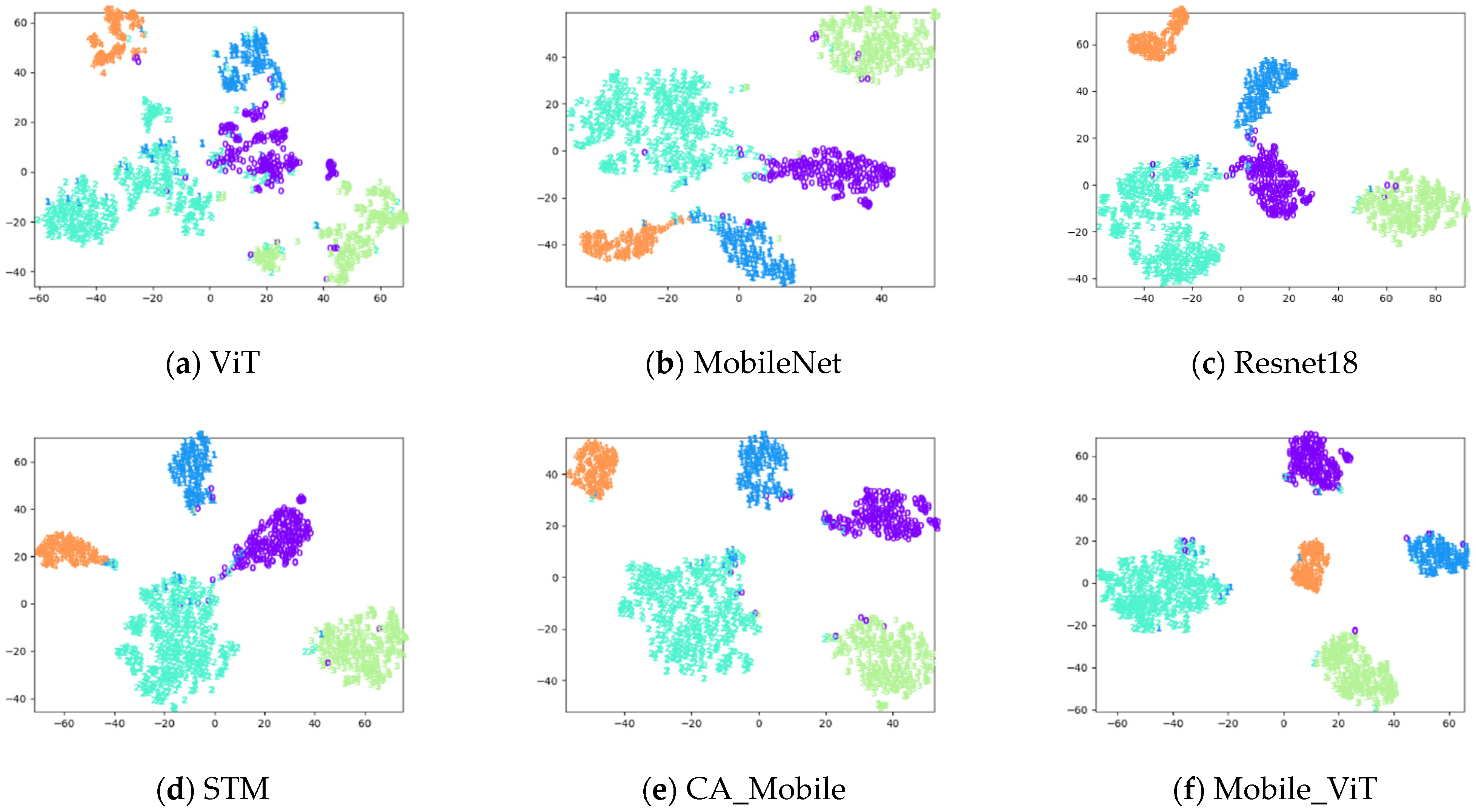

In summary, three considerations motivate combining a CNN with a Transformer. First, CNNs excel at capturing local features, while Transformers are skilled at capturing global features, so combining them could improve both the local and global feature extraction capabilities of the model. Second, Transformers are strong at modeling long-range dependencies in sequence data, and underwater acoustic signals exhibit richer features over long-range scales; by cascading a Transformer after MobileNet, the model can effectively handle long-sequence data and capture these dependencies. Third, the combination of MobileNet and a Transformer is adaptable to various types of data, including images, text, and speech, and is lighter than other Transformer models. Integrating MobileNet with a Transformer therefore leverages their complementary strengths, facilitating effective processing of sequence data and yielding improved model performance across diverse tasks.
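The claim that a Transformer stage captures global, long-range structure can be illustrated with a minimal scaled dot-product self-attention sketch. It is not the model described in this paper: the learned query, key, and value projections are omitted (identity projections are assumed), the function names are hypothetical, and the toy frame vectors merely stand in for the per-frame output of a CNN stage such as MobileNet.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(frames):
    """Scaled dot-product self-attention with Q = K = V = frames (assumption)."""
    dim = len(frames[0])
    outputs, weights = [], []
    for query in frames:
        # Each query is scored against every frame, near or far.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in frames
        ]
        attn = softmax(scores)
        weights.append(attn)
        # The output is a weighted mix of all frames in the sequence.
        outputs.append([
            sum(w * value[d] for w, value in zip(attn, frames))
            for d in range(dim)
        ])
    return outputs, weights


# Hypothetical per-frame feature vectors; frames 0 and 2 are identical.
frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
outputs, weights = self_attention(frames)
print(weights[0])
```

In this toy case frame 0 attends to the non-adjacent frame 2 as strongly as to itself, whereas a convolution with a small kernel would only mix neighboring frames; this is the complementarity between local and global feature extraction referred to above.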