Theoretical Models of Consciousness: A Scoping Review

Abstract

1. Introduction

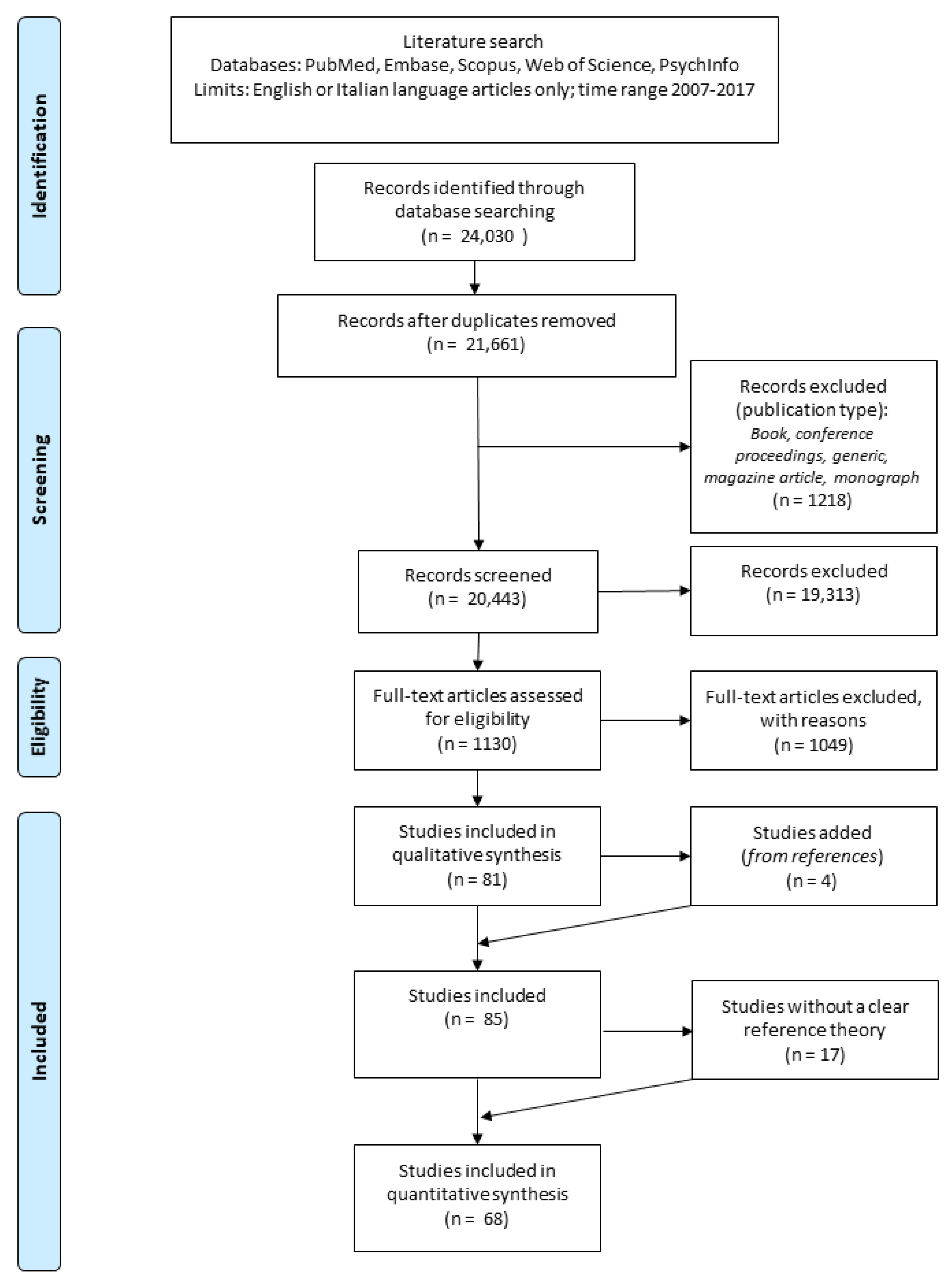

2. Materials and Methods

2.1. Search Strategies and Information Sources

2.2. General Selection Criteria

2.3. Screening and Eligibility Criteria for Each Step

2.3.1. Step 1: Abstract Selection

2.3.2. Step 2: Full-Text Selection

2.3.3. Step 3: Data Extraction and Management

2.4. Outcome Measures

2.5. Main Dimensions Analyzed

2.5.1. Neural Correlates of Consciousness (NCCs)

2.5.2. Association between Consciousness and Other Cognitive Functions

2.5.3. Translation from Theory to Clinical Practice

2.5.4. Quantitative Measures of Consciousness

2.5.5. Consciousness, Sensory Processes, and the Autonomic Nervous System

2.5.6. Subjectivity

2.6. Outcomes

2.6.1. Quantitative Outcomes

2.6.2. Qualitative Outcomes

- Main definitions of consciousness. Raters were asked to report whether the article gave a main definition of consciousness (e.g., consciousness is/represents/serves/refers to/consists of/results in/is defined as/has to do with) linked to the main theory it described. Definitions proposed by other authors and merely cited in the text were not reported unless strictly related to the theory presented in the article. Definitions of what consciousness is not were also included.

- Definitions of consciousness’ components/parts/sub-elements. Raters noted any parts or elements of consciousness identified by the article, together with their definitions (if available).

- Related terms/features. Raters reported specific terms and/or features concerning the nature of consciousness, as described by the individual article/theory, that complement the main definition.

3. Results

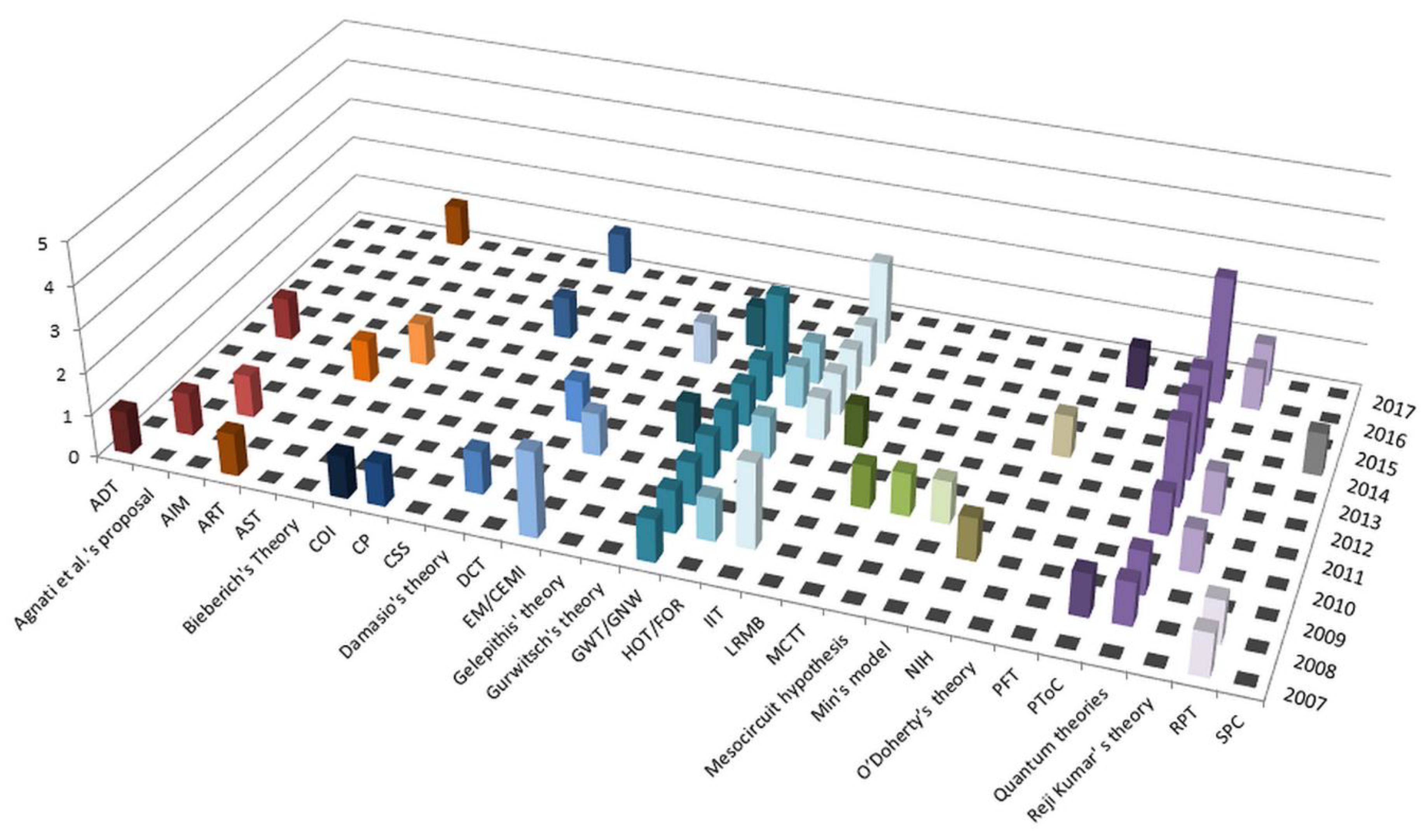

3.1. Review Results

3.2. Analytical Description of Each Theory

3.2.1. The Apical Dendrite Theory (ADT)

3.2.2. Agnati et al.’s Proposal

3.2.3. REM Sleep–Dream Protoconsciousness Hypothesis (AIM)

3.2.4. Adaptive Resonance Theory (ART)

3.2.5. Attention Schema Theory (AST)/Graziano’s Theory

3.2.6. Bieberich’s Theory

3.2.7. The Cross-Order Integration (COI) Theory

3.2.8. The Centrencephalic Proposal (CP)

3.2.9. The Consciousness State Space Model (CSS)

3.2.10. Damasio’s Theory

3.2.11. The Thalamic Dynamic Core Theory (DCT)

3.2.12. The Electromagnetic Field Theories

- (i) Hypothesis 7. The neural foundations and the nature, actions, and properties of consciousness are all describable in terms of a system of resonator elements by which consciousness participates in energy exchanges with the brain, which mediate both the generation of conscious awareness and the active modulation of volitional processes in the brain. All resonator elements produce P → M transformation and engage in collective partially-free M–M selections, but some (perceptual) may produce lesser or no M → P transformation”;

- (ii) Hypothesis 8. (a) The capacity for consciousness is undergirded by the physical matter of resonator elements, which are target structures of a consciousness gene. (b) Consciousness itself is a dispositional form of energy (DE) that may relate to physical forms of energy, similar to how the phase state of a gas relates in a material way to its liquid phase. Conscious awareness and its selective release are parts of DE and under the partially-free regulation of home resonator DE advised by collective systemic DE. (c) The functional identity of conscious perceptions and feelings is intrinsically determined by the anatomical locations of neurons which either house resonators or are connected directly to non-neural cells which house resonators (the labeled line hypothesis).

3.2.13. Gelepithis’s Theory

3.2.14. The Global Workspace Theory

3.2.15. Gurwitsch’s Theory

- Drawing on results on inattentional blindness and change blindness, in which subjects sometimes fail to report seeing anything, they hypothesized that a peripheral experience also exists.

- They distinguished several types of relevance and showed how one of them (i.e., “causal relevance”) can be tested empirically. They also introduced a new type of relevance, called “predictive relevance”.

- They developed the idea that the theme has a “variable size”: it can expand, contract, and sometimes disappear.

3.2.16. The Representational Theories: High-Order (HOT) and First-Order (FOR) Models

3.2.17. The Integrated Information Theory (IIT)

- Existence: consciousness exists; it is an undeniable aspect of reality. Paraphrasing Descartes: “I think, therefore I am”.

- Composition: consciousness is compositional (structured); each experience consists of multiple aspects in various combinations. Within the same experience, one can see, for example, left and right, red and blue, a triangle and a square, a red triangle on the left, a blue square on the right, and so on.

- Information: consciousness is informative; each experience differs from other possible experiences. Thus, an experience of pure darkness is what it is by differing, in its particular way, from an immense number of other possible experiences. A small subset of these possible experiences includes, for example, all the frames of all possible movies.

- Integration: consciousness is integrated; each experience is (strongly) irreducible to non-interdependent components. Thus, experiencing the Italian word “SONO” (i.e., I am) written in the middle of a blank page is irreducible to an experience of the Italian word “SO” (i.e., I know) at the right border of a half-page, plus an experience of the Italian word “NO” (i.e., no) on the left border of another half-page; the experience is whole. Similarly, seeing a red triangle is irreducible to seeing a triangle but no red color, plus a red patch but no triangle.

- Exclusion: consciousness is exclusive; each experience excludes all others. At any given time there is only one experience having its full content, rather than a superposition of multiple partial experiences; each experience has definite borders (certain things can be experienced while others cannot); and each experience has a particular spatial and temporal grain: it flows at a particular speed and has a certain resolution, such that some distinctions are possible whereas finer or coarser distinctions are not.

- Existence: mechanisms in a state exist. A system is a set of mechanisms.

- Composition: elementary mechanisms can be combined into higher-order ones.

- Information: a mechanism can contribute to consciousness only if it specifies “differences that make a difference” within a system. That is, a mechanism in a state generates information only if it constrains the states of the system that can be its possible causes and effects (its cause–effect repertoire). The more selective the possible causes and effects, the higher the cause–effect information specified by the mechanism.

- Integration: a mechanism can contribute to consciousness only if it specifies a cause–effect repertoire (information) that is irreducible to independent components. Integration/irreducibility φ is assessed by partitioning the mechanism and measuring what difference this makes to its cause–effect repertoire.

- Exclusion: a mechanism can contribute at most one cause–effect repertoire to consciousness, namely the one having the maximum value of integration/irreducibility φMax. This is its maximally irreducible cause–effect repertoire (MICE, or quale sensu stricto, i.e., in the narrow sense of the word). If a MICE exists, the mechanism constitutes a concept.
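The information and integration postulates can be made concrete on a toy system. The sketch below is a simplified illustration in the spirit of the effective-information formulation of Balduzzi and Tononi (2008), not the IIT 3.0 calculus. It assumes binary nodes with deterministic, synchronous update rules and a uniform prior over past states, measures information loss with the Kullback–Leibler divergence in bits, and takes the minimum over bipartitions without IIT's normalization. It also looks only at cause (past-state) repertoires, whereas IIT 3.0 evaluates both cause and effect repertoires and uses the earth mover's distance. All function names and the two example networks are hypothetical.

```python
import itertools
import math
from typing import Callable, Dict, Iterator, List, Sequence, Tuple

State = Tuple[int, ...]
# Hypothetical representation: each node's rule maps the whole previous binary
# state X0 to that node's next value (deterministic, synchronous update).
Rule = Callable[[State], int]


def _states(n: int) -> List[State]:
    return list(itertools.product((0, 1), repeat=n))


def whole_repertoire(rules: Sequence[Rule], x1: State) -> Dict[State, float]:
    """p(X0 | x1): uniform prior over X0, keeping only states that lead to x1."""
    n = len(rules)
    hits = [x0 for x0 in _states(n) if tuple(r(x0) for r in rules) == tuple(x1)]
    if not hits:
        raise ValueError("x1 is unreachable: no prior state leads to it")
    return {x0: (1.0 / len(hits) if x0 in hits else 0.0) for x0 in _states(n)}


def part_repertoire(rules: Sequence[Rule], part: List[int], x1: State) -> Dict[State, float]:
    """Repertoire of one part, treating inputs from outside the part as uniform noise."""
    n = len(rules)
    outside = [i for i in range(n) if i not in part]
    weights: Dict[State, int] = {}
    for m0 in itertools.product((0, 1), repeat=len(part)):
        count = 0
        for o0 in itertools.product((0, 1), repeat=len(outside)):
            x0 = [0] * n
            for i, v in zip(part, m0):
                x0[i] = v
            for i, v in zip(outside, o0):
                x0[i] = v
            # Count outside inputs under which the part's own nodes reach x1.
            if all(rules[i](tuple(x0)) == x1[i] for i in part):
                count += 1
        weights[m0] = count
    total = sum(weights.values())
    return {m0: w / total for m0, w in weights.items()}


def kl_bits(p: Dict[State, float], q: Dict[State, float]) -> float:
    """Relative entropy H[p || q] in bits (assumes q > 0 wherever p > 0)."""
    return sum(pv * math.log2(pv / q[s]) for s, pv in p.items() if pv > 0)


def effective_information(rules: Sequence[Rule], x1: State) -> float:
    """How strongly x1 constrains its possible causes, relative to a uniform prior."""
    p = whole_repertoire(rules, x1)
    uniform = {s: 1.0 / len(p) for s in p}
    return kl_bits(p, uniform)


def bipartitions(n: int) -> Iterator[Tuple[List[int], List[int]]]:
    for k in range(1, n):
        for a in itertools.combinations(range(n), k):
            if 0 in a:  # list each bipartition only once
                yield list(a), [i for i in range(n) if i not in a]


def phi_toy(rules: Sequence[Rule], x1: State) -> float:
    """Smallest information loss over bipartitions (needs at least two nodes)."""
    whole = whole_repertoire(rules, x1)
    losses = []
    for a, b in bipartitions(len(rules)):
        pa, pb = part_repertoire(rules, a, x1), part_repertoire(rules, b, x1)
        product = {x0: pa[tuple(x0[i] for i in a)] * pb[tuple(x0[i] for i in b)] for x0 in whole}
        losses.append(kl_bits(whole, product))
    return min(losses)


swap = [lambda x: x[1], lambda x: x[0]]   # each node copies the other
loops = [lambda x: x[0], lambda x: x[1]]  # each node copies itself
print(effective_information(swap, (1, 1)), phi_toy(swap, (1, 1)))    # 2.0 2.0
print(effective_information(loops, (1, 1)), phi_toy(loops, (1, 1)))  # 2.0 0.0
```

In both networks the whole system specifies 2 bits about its past state. In the swap network each node's past is fixed only by the other node, so cutting the system loses all 2 bits; in the self-loop network the two parts already account for everything, so the cut costs nothing and φ is 0. This mirrors the integration postulate: what counts is information that is irreducible to independent parts.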

3.2.18. Layered Reference Model of the Brain (LRMB)

3.2.19. The Memory Consciousness and Temporality Theory (MCTT)

3.2.20. The Mesocircuit Hypothesis

3.2.21. Min’s Model

3.2.22. The Network Inhibition Hypothesis (NIH)

3.2.23. O’Doherty’s Theory

3.2.24. Passive-Frame Theory (PFT)

3.2.25. A Psychological Theory of Consciousness (PToC)

3.2.26. Q-Theories

3.2.27. Reji Kumar’s Theory

3.2.28. The Radical Plasticity Thesis (RPT)

3.2.29. The Semantic Pointer Competition Theory of Consciousness (SPC)

4. Discussion

4.1. Descriptive Analysis of the Results for Each Dimension

4.2. A Definition of Consciousness: Problems and Perspectives

4.3. Limitations

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Sommerhoff, G. Consciousness explained as an internal integrating system. J. Conscious. Stud. 1996, 3, 139–157.

- Monzavi, M.; Murad, M.H.S.A.; Rahnama, M.; Shamshirband, S. Historical path of traditional and modern idea of ‘conscious universe’. Qual. Quant. 2016, 51, 1183–1195.

- Capra, F. The Tao of Physics; Shambhala Publications: Boston, MA, USA, 1974.

- Clark, A. Supersizing the Mind: Embodiment, Action, and Cognitive Extension; Oxford University Press: Oxford, UK, 2008.

- Yu, L.; Blumenfeld, H. Theories of Impaired Consciousness in Epilepsy. Ann. N. Y. Acad. Sci. 2009, 1157, 48–60.

- Georgiev, D. Quantum No-Go Theorems and Consciousness. Axiomathes 2013, 23, 683–695.

- Hardie, W.F.R. Concepts of Consciousness in Aristotle. Mind 1976, 85, 388–411.

- Crick, F.; Koch, C. Towards a neurobiological theory of consciousness. Semin. Neurosci. 1990, 2, 263–275.

- Koch, C. The Quest for Consciousness: A Neurobiological Approach. Eng. Sci. 2004, 67, 28–34.

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; The PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 2009, 6, e1000097.

- Aru, J.; Bachmann, T.; Singer, W.; Melloni, L. Distilling the neural correlates of consciousness. Neurosci. Biobehav. Rev. 2012, 36, 737–746.

- Facco, E.; Lucangeli, D.; Tressoldi, P. On the Science of Consciousness: Epistemological Reflections and Clinical Implications. Explore 2017, 13, 163–180.

- Fekete, T.; van Leeuwen, C.; Edelman, S. System, Subsystem, Hive: Boundary Problems in Computational Theories of Consciousness. Front. Psychol. 2016, 7, 1041.

- Lee, U.; Blain-Moraes, S.; Mashour, G.A. Assessing levels of consciousness with symbolic analysis. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2015, 373, 20140117.

- Tononi, G.; Koch, C. Consciousness: Here, there and everywhere? Philos. Trans. R. Soc. B Biol. Sci. 2015, 370, 20140167.

- Koch, C.; Massimini, M.; Boly, M.; Tononi, G. Neural correlates of consciousness: Progress and problems. Nat. Rev. Neurosci. 2016, 17, 307–321.

- Cohen, M.A.; Dennett, D.C. Consciousness cannot be separated from function. Trends Cogn. Sci. 2011, 15, 358–364.

- LaBerge, D.; Kasevich, R. The apical dendrite theory of consciousness. Neural Netw. 2007, 20, 1004–1020.

- Cook, N. The neuron-level phenomena underlying cognition and consciousness: Synaptic activity and the action potential. Neuroscience 2008, 153, 556–570.

- Agnati, L.F.; Guidolin, D.; Cortelli, P.; Genedani, S.; Cela-Conde, C.; Fuxe, K. Neuronal correlates to consciousness. The “Hall of Mirrors” metaphor describing consciousness as an epiphenomenon of multiple dynamic mosaics of cortical functional modules. Brain Res. 2012, 1476, 3–21.

- Hobson, J.A. REM sleep and dreaming: Towards a theory of protoconsciousness. Nat. Rev. Neurosci. 2009, 10, 803–814.

- Grossberg, S. Consciousness CLEARS the mind. Neural Netw. 2007, 20, 1040–1053.

- Grossberg, S. Towards solving the hard problem of consciousness: The varieties of brain resonances and the conscious experiences that they support. Neural Netw. 2017, 87, 38–95.

- Graziano, M.S.A.; Kastner, S. Awareness as a perceptual model of attention. Cogn. Neurosci. 2011, 2, 125–127.

- Bieberich, E. Introduction to the Fractality Principle of Consciousness and the Sentyon Postulate. Cogn. Comput. 2012, 4, 13–28.

- Kriegel, U. A cross-order integration hypothesis for the neural correlate of consciousness. Conscious. Cogn. 2007, 16, 897–912.

- Merker, B. Consciousness without a cerebral cortex: A challenge for neuroscience and medicine. Behav. Brain Sci. 2007, 30, 63–81.

- Berkovich-Ohana, A.; Glicksohn, J. The consciousness state space (CSS)—A unifying model for consciousness and self. Front. Psychol. 2014, 5, 341.

- Berkovich-Ohana, A.; Wittmann, M. A typology of altered states according to the consciousness state space (CSS) model: A special reference to subjective time. J. Conscious. Stud. 2017, 24, 37–61.

- Bosse, T.; Jonker, C.M.; Treur, J. Formalisation of Damasio’s theory of emotion, feeling and core consciousness. Conscious. Cogn. 2008, 17, 94–113.

- Ward, L.M. The thalamic dynamic core theory of conscious experience. Conscious. Cogn. 2011, 20, 464–486.

- McFadden, J. Conscious Electromagnetic (CEMI) Field Theory. NeuroQuantology 2007, 5, 262–270.

- Pockett, S. Difficulties with the Electromagnetic Field Theory of Consciousness: An Update. NeuroQuantology 2007, 5, 271–275.

- Lewis, E.R.; MacGregor, R.J. A Natural Science Approach to Consciousness. J. Integr. Neurosci. 2010, 9, 153–191.

- Gelepithis, P.A.M. A Novel Theory of Consciousness. Int. J. Mach. Conscious. 2014, 6, 125–139.

- Yoshimi, J.K. Phenomenology and Connectionism. Front. Psychol. 2011, 2, 288.

- Yoshimi, J.; Vinson, D.W. Extending Gurwitsch’s field theory of consciousness. Conscious. Cogn. 2015, 34, 104–123.

- Baars, B.J.; Franklin, S. An architectural model of conscious and unconscious brain functions: Global Workspace Theory and IDA. Neural Netw. 2007, 20, 955–961.

- Prakash, R.; Prakash, O.; Prakash, S.; Abhishek, P.; Gandotra, S. Global workspace model of consciousness and its electromagnetic correlates. Ann. Indian Acad. Neurol. 2008, 11, 146–153.

- Baars, B.J.; Franklin, S. Consciousness is Computational: The LIDA Model of Global Workspace Theory. Int. J. Mach. Conscious. 2009, 1, 23–32.

- Raffone, A.; Pantani, M. A global workspace model for phenomenal and access consciousness. Conscious. Cogn. 2010, 19, 580–596.

- Dehaene, S.; Changeux, J.-P.; Naccache, L. The global neuronal workspace model of conscious access: From neuronal architectures to clinical applications. Res. Perspect. Neurosci. 2011.

- Sergent, C.; Naccache, L. Imaging neural signatures of consciousness: “What”, “When”, “Where” and “How” does it work? Arch. Ital. Biol. 2012, 150, 91–106.

- Baars, B.J.; Franklin, S.; Ramsøy, T.Z. Global Workspace Dynamics: Cortical “Binding and Propagation” Enables Conscious Contents. Front. Psychol. 2013, 4, 200.

- Dehaene, S.; Charles, L.; King, J.-R.; Marti, S. Toward a computational theory of conscious processing. Curr. Opin. Neurobiol. 2014, 25, 76–84.

- Bartolomei, F.; McGonigal, A.; Naccache, L. Alteration of consciousness in focal epilepsy: The global workspace alteration theory. Epilepsy Behav. 2014, 30, 17–23.

- Lau, H.C. A higher order Bayesian decision theory of consciousness. Prog. Brain Res. 2007, 168, 35–48.

- Lau, H.; Rosenthal, D. Empirical support for higher-order theories of conscious awareness. Trends Cogn. Sci. 2011, 15, 365–373.

- Friesen, L. Higher-Order Thoughts and the Unity of Consciousness. J. Mind Behav. 2014, 35, 201–224.

- Mehta, N.; Mashour, G.A. General and specific consciousness: A first-order representationalist approach. Front. Psychol. 2013, 4, 407.

- Balduzzi, D.; Tononi, G. Integrated Information in Discrete Dynamical Systems: Motivation and Theoretical Framework. PLoS Comput. Biol. 2008, 4, e1000091.

- Tononi, G. Consciousness as Integrated Information: A Provisional Manifesto. Biol. Bull. 2008, 215, 216–242.

- Tononi, G. Integrated information theory of consciousness: An updated account. Arch. Ital. Biol. 2012, 150, 293–329.

- Casali, A.G.; Gosseries, O.; Rosanova, M.; Boly, M.; Sarasso, S.; Casali, K.R.; Casarotto, S.; Bruno, M.-A.; Laureys, S.; Tononi, G.; et al. A theoretically based index of consciousness independent of sensory processing and behavior. Sci. Transl. Med. 2013, 5.

- Oizumi, M.; Albantakis, L.; Tononi, G. From the Phenomenology to the Mechanisms of Consciousness: Integrated Information Theory 3.0. PLoS Comput. Biol. 2014, 10, e1003588.

- Tononi, G.; Boly, M.; Massimini, M.; Koch, C. Integrated information theory: From consciousness to its physical substrate. Nat. Rev. Neurosci. 2016, 17, 450–461.

- Tsuchiya, N.; Taguchi, S.; Saigo, H. Using category theory to assess the relationship between consciousness and integrated information theory. Neurosci. Res. 2016.

- Wang, Y. The Cognitive Mechanisms and Formal Models of Consciousness. Int. J. Cogn. Inform. Nat. Intell. 2012, 6, 23–40.

- Barba, G.D.; Boisse, M.-F. Temporal consciousness and confabulation: Is the medial temporal lobe “temporal”? Cogn. Neuropsychiatry 2010, 15, 95–117.

- Schiff, N.D. Recovery of consciousness after brain injury: A mesocircuit hypothesis. Trends Neurosci. 2010, 33, 1–9.

- Min, B.-K. A thalamic reticular networking model of consciousness. Theor. Biol. Med. Model. 2010, 7, 10.

- O’Doherty, F. A Contribution to Understanding Consciousness: Qualia as Phenotype. Biosemiotics 2012, 6, 191–203.

- Morsella, E.; Godwin, C.A.; Jantz, T.K.; Krieger, S.C.; Gazzaley, A. Homing in on consciousness in the nervous system: An action-based synthesis. Behav. Brain Sci. 2015, 39, e168.

- Shannon, B. A Psychological Theory of Consciousness. J. Conscious. Stud. 2008, 15, 5–47.

- Klemm, D.E.; Klink, W.H. Consciousness and Quantum Mechanics: Opting from Alternatives. Zygon 2008, 43, 307–327.

- Das, T. Theory of Consciousness. NeuroQuantology 2009, 7.

- Di Biase, F. Quantum-Holographic Informational Consciousness. NeuroQuantology 2009, 7, 657–664.

- Koehler, G. Q-consciousness: Where is the flow? Nonlinear Dyn. Psychol. Life Sci. 2011, 15, 335–357.

- Argonov, V.Y. Neural correlate of consciousness in a single electron: Radical answer to “quantum theories of consciousness”. NeuroQuantology 2012, 10, 276–285.

- Li, J. A Timeless and Spaceless Quantum Theory of Consciousness. NeuroQuantology 2013, 11.

- Hameroff, S.; Penrose, R. Consciousness in the universe: A review of the “Orch OR” theory. Phys. Life Rev. 2014, 11, 39–78.

- Hoffman, D.D.; Prakash, C. Objects of consciousness. Front. Psychol. 2014, 5, 577.

- Kak, A.; Gautam, A.; Kak, S. A Three-Layered Model for Consciousness States. NeuroQuantology 2016, 14, 166–174.

- Sieb, R.A. Human Conscious Experience is Four-Dimensional and has a Neural Correlate Modeled by Einstein’s Special Theory of Relativity. NeuroQuantology 2016, 14, 630–644.

- Brabant, O. More Than Meets the Eye: Toward a Post-Materialist Model of Consciousness. Explore 2016, 12, 347–354.

- Reji Kumar, K. Modeling of Consciousness: Classification of Models. Adv. Stud. Biol. 2010, 2, 141–146.

- Ahmad, F.; Khan, Q. Can mathematical cognition formulate consciousness? In Proceedings of the ICOSST 2012—International Conference on Open Source Systems and Technologies, Lahore, Pakistan, 20–22 December 2012; pp. 7–11.

- Reji Kumar, K. Mathematical modeling of consciousness: A foundation for information processing. In Proceedings of the IEEE International Conference on Emerging Technological Trends in Computing, Communications and Electrical Engineering, ICETT 2016, Turku, Finland, 24–26 August 2016; Institute of Electrical and Electronics Engineers Inc.: Piscataway, NJ, USA, 2016.

- Reji Kumar, K. Mathematical modeling of consciousness: Subjectivity of mind. In Proceedings of the IEEE International Conference on Circuit, Power and Computing Technologies, ICCPCT 2016, Nagercoil, India, 18–19 March 2016; Institute of Electrical and Electronics Engineers Inc.: Piscataway, NJ, USA, 2016.

- Cleeremans, A.; Timmermans, B.; Pasquali, A. Consciousness and metarepresentation: A computational sketch. Neural Netw. 2007, 20, 1032–1039.

- Cleeremans, A. Consciousness: The radical plasticity thesis. Prog. Brain Res. 2007, 168, 19–33.

- Thagard, P.; Stewart, T.C. Two theories of consciousness: Semantic pointer competition vs. information integration. Conscious. Cogn. 2014, 30, 73–90.

- Mountcastle, V.B. Modality and Topographic Properties of Single Neurons of Cat’s Somatic Sensory Cortex. J. Neurophysiol. 1957, 20, 408–434.

- Sevush, S. Single-neuron theory of consciousness. J. Theor. Biol. 2006, 238, 704–725.

- Edelman, G.M. Bright Air, Brilliant Fire: On the Matter of the Mind; Basic Books: New York, NY, USA, 1992.

- Tononi, G.; Edelman, G. Consciousness and the integration of information in the brain. In Consciousness: At the Frontiers of Neuroscience; Jasper, H.H., Descarries, L., Castellucci, V.F., Rossignol, S., Eds.; Lippincott-Raven: Philadelphia, PA, USA, 1998; pp. 245–279.

- Pompeiano, O. The neurophysiological mechanisms of the postural and motor events during desynchronized sleep. Res. Publ. Assoc. Res. Nerv. Ment. Dis. 1967, 45, 351–423.

- Aston-Jones, G.; Bloom, F. Activity of norepinephrine-containing locus coeruleus neurons in behaving rats anticipates fluctuations in the sleep-waking cycle. J. Neurosci. 1981, 1, 876–886.

- Hobson, J.A.; McCarley, R.W. The brain as a dream state generator: An activation-synthesis hypothesis of the dream process. Am. J. Psychiatry 1977, 134, 1335–1348.

- Baumeister, R.F.; Masicampo, E.J. Conscious thought is for facilitating social and cultural interactions: How mental simulations serve the animal–culture interface. Psychol. Rev. 2010, 117, 945–971.

- Carruthers, P. How we know our own minds: The relationship between mindreading and metacognition. Behav. Brain Sci. 2009, 32, 121–138.

- Baars, B.J. A Cognitive Theory of Consciousness; Cambridge University Press: Cambridge, UK, 1993.

- Rosenthal, D.M. A Theory of consciousness. In The Nature of Consciousness; Block, N., Flanagan, O.J., Guzeldere, G., Eds.; ZiF Technical Report 40; MIT Press: Cambridge, MA, USA, 1997.

- Rosenthal, D.M. Explaining consciousness. In Philosophy of Mind: Classical and Contemporary Readings; Chalmers, D.J., Ed.; Oxford University Press: Oxford, UK, 2002; pp. 406–421.

- Treisman, A.; Schmidt, H. Illusory conjunctions in the perception of objects. Cogn. Psychol. 1982, 14, 107–141.

- Penfield, W.; Jasper, H. Epilepsy and the Functional Anatomy of the Human Brain; Little Brown & Co: Boston, MA, USA, 1954.

- Damasio, A. The Feeling of What Happens: Body and Emotion in the Making of Consciousness; Houghton Mifflin Harcourt: New York, NY, USA, 1999.

- Jonker, C.M.; Treur, J. Compositional Verification of Multi-Agent Systems: A Formal Analysis of Pro-activeness and Reactiveness. Int. J. Coop. Inf. Syst. 1998, 11, 51–91.

- Edelman, G.; Tononi, G. A Universe of Consciousness; Basic Books: New York, NY, USA, 2000.

- Jones, E.G. Thalamic circuitry and thalamocortical synchrony. Philos. Trans. R. Soc. B Biol. Sci. 2002, 357, 1659–1673.

- McFadden, J. The Conscious Electromagnetic Information (CEMI) Field Theory: The Hard Problem Made Easy? J. Conscious. Stud. 2002, 9, 45–60.

- McFadden, J. Synchronous Firing and Its Influence on the Brain’s Electromagnetic Field. J. Conscious. Stud. 2002, 9, 23–50.

- Nagel, T. What Is It Like to Be a Bat? Philos. Rev. 1974, 83, 435.

- Damasio, A.R. The somatic marker hypothesis and the possible functions of the prefrontal cortex. Philos. Trans. R. Soc. B Biol. Sci. 1996, 351, 1413–1420.

- Gelepithis, P.A.M. A concise comparison of selected studies of consciousness. Cogn. Syst. 2001, 5, 373–392.

- Baars, B.J. In the theatre of consciousness. Global workspace theory, a rigorous scientific theory of consciousness. J. Conscious. Stud. 1997, 4, 292–309.

- Block, N. Consciousness, accessibility, and the mesh between psychology and neuroscience. Behav. Brain Sci. 2007, 30, 481–499.

- Baars, B.J.; Ramsøy, T.Z.; Laureys, S. Brain, conscious experience and the observing self. Trends Neurosci. 2003, 26, 671–675.

- Dehaene, S.; Changeux, J.-P. Experimental and Theoretical Approaches to Conscious Processing. Neuron 2011, 70, 200–227.

- Dehaene, S. Towards a cognitive neuroscience of consciousness: Basic evidence and a workspace framework. Cognition 2001, 79, 1–37.

- Dehaene, S.; Changeux, J.-P. Ongoing Spontaneous Activity Controls Access to Consciousness: A Neuronal Model for Inattentional Blindness. PLoS Biol. 2005, 3, e141.

- Del Cul, A.; Baillet, S.; Dehaene, S. Brain Dynamics Underlying the Nonlinear Threshold for Access to Consciousness. PLoS Biol. 2007, 5, e260.

- De Lange, F.P.; van Gaal, S.; Lamme, V.A.F.; Dehaene, S. How Awareness Changes the Relative Weights of Evidence during Human Decision-Making. PLoS Biol. 2011, 9, e1001203.

- Sergent, C.; Baillet, S.; Dehaene, S. Timing of the brain events underlying access to consciousness during the attentional blink. Nat. Neurosci. 2005, 8, 1391–1400.

- De Lange, F.P.; Jensen, O.; Dehaene, S. Accumulation of Evidence during Sequential Decision Making: The Importance of Top-Down Factors. J. Neurosci. 2010, 30, 731–738.

- Schwiedrzik, C.M.; Singer, W.; Melloni, L. Subjective and objective learning effects dissociate in space and in time. Proc. Natl. Acad. Sci. USA 2011, 108, 4506–4511.

- Gurwitsch, A. The Field of Consciousness; Duquesne University Press: Pittsburgh, PA, USA, 1964.

- Gurwitsch, A. Marginal Consciousness; Embree, L., Ed.; Ohio University Press: Athens, OH, USA, 1985.

- Kant, I. Critique of Pure Reason; Guyer, P., Wood, A., Eds.; Cambridge University Press: Cambridge, UK, 1787.

- Nidditch, P. John Locke: An Essay Concerning Human Understanding; Oxford University Press: Oxford, UK, 1975.

- Dretske, F. Naturalizing the Mind; Bradford/MIT Press: Cambridge, MA, USA, 1995.

- Tye, M. Ten Problems of Consciousness: A Representational Theory of the Phenomenal Mind; MIT Press: Cambridge, MA, USA, 1995.

- Tye, M. Consciousness, Color, and Content; MIT Press: Cambridge, MA, USA, 2000.

- Rosenthal, D.M. Consciousness and Mind; Clarendon Press: Oxford, UK, 2005.

- Rounis, E.; Maniscalco, B.; Rothwell, J.C.; Passingham, R.E.; Lau, H. Theta-burst transcranial magnetic stimulation to the prefrontal cortex impairs metacognitive visual awareness. Cogn. Neurosci. 2010, 1, 165–175. [Google Scholar] [CrossRef]

- Gennaro, R. The Consciousness Paradox: Consciousness, Concepts, and Higher-Order Thoughts (Representation and Mind); MIT Press: Cambridge, MA, USA, 2011. [Google Scholar]

- Wang, Y.; Patel, S.; Patel, D. A layered reference model of the brain (LRMB). IEEE Trans Syst. Man Cybern. Part C Appl. Rev. 2006, 36, 124–133. [Google Scholar] [CrossRef]

- Wang, Y. Formal description of the cognitive process of memorization. In Transactions on Computational Science V; Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Berlin/Heidelberg, Germany, 2009; pp. 81–98. [Google Scholar]

- Wang, Y. On Cognitive Informatics. Brain Mind 2003, 4, 151–167. [Google Scholar] [CrossRef]

- Wang, Y. The Theoretical Framework of Cognitive Informatics. Int. J. Cogn. Inform. Nat. Intell. 2007, 1, 1–27. [Google Scholar] [CrossRef]

- Payne, D.; Wenger, M. Cognitive Psychology; Houghton Mifflin College Division: Boston, MA, USA, 1998. [Google Scholar]

- Smith, R. Psychology; West Publishing Company: St. Paul, MN, USA, 1993. [Google Scholar]

- Sternberg, R.J. In Search of the Human Mind, 2nd ed.; Harcourt Brace College Publishers: Cambridge, MA, USA, 1998. [Google Scholar]

- Wang, Y. On abstract intelligence and brain informatics: Mapping the cognitive functions onto the neural architectures. In Proceedings of the IEEE 11th International Conference on Cognitive Informatics and Cognitive Computing, Kyoto, Japan, 22–24 August 2012; IEEE: Pistcataway, NJ, USA, 2012; pp. 5–6. [Google Scholar]

- Castaigne, P.; Lhermitte, F.; Buge, A.; Escourolle, R.; Hauw, J.J.; Lyon-Caen, O. Paramedian thalamic and midbrain infarcts: Clinical and neuropathological study. Ann. Neurol. 1981, 10, 127–148.

- Fridman, E.A.; Schiff, N.D. Neuromodulation of the conscious state following severe brain injuries. Curr. Opin. Neurobiol. 2014, 29, 172–177.

- Schiff, N.D. Central thalamic deep brain stimulation to support anterior forebrain mesocircuit function in the severely injured brain. J. Neural Transm. 2016, 123, 797–806.

- Schiff, N.D. Central thalamic deep brain stimulation for support of forebrain arousal regulation in the minimally conscious state. In Handbook of Clinical Neurology; Elsevier B.V.: Amsterdam, The Netherlands, 2013; pp. 295–306.

- Lee, K.H.; Meador, K.J.; Park, Y.D.; King, D.W.; Murro, A.M.; Pillai, J.J.; Kaminski, R.J. Pathophysiology of altered consciousness during seizures: Subtraction SPECT study. Neurology 2002, 59, 841–846.

- Blumenfeld, H.; McNally, K.A.; Vanderhill, S.D.; Paige, A.L.; Chung, R.; Davis, K.; Norden, A.D.; Stokking, R.; Studholme, C.; Novotny, E.J.; et al. Positive and Negative Network Correlations in Temporal Lobe Epilepsy. Cereb. Cortex 2004, 14, 892–902.

- Blumenfeld, H. Impaired consciousness in epilepsy. Lancet Neurol. 2012, 11, 814–826.

- Gray, J. Consciousness: Creeping Up on the Hard Problem; Oxford University Press: Oxford, UK, 2004.

- Godwin, C.A.; Gazzaley, A.; Morsella, E. Homing in on the brain mechanisms linked to consciousness: Buffer of the perception-and-action interface. In The Unity of Mind, Brain and World: Current Perspectives on a Science of Consciousness; Cambridge University Press: Cambridge, UK, 2013; pp. 43–76.

- Husserl, E. The Phenomenology of Internal Time-Consciousness; Churchill, J., Ed.; Martinus Nijhoff: The Hague, The Netherlands, 1964.

- Natsoulas, T. Basic problems of consciousness. J. Pers. Soc. Psychol. 1981, 41, 132–178.

- Petkov, V. Minkowski Spacetime: A Hundred Years Later; Springer: Berlin, Germany, 2010.

- O’Keefe, J.; Dostrovsky, J. The hippocampus as a spatial map. Preliminary evidence from unit activity in the freely-moving rat. Brain Res. 1971, 34, 171–175.

- O’Keefe, J. Place units in the hippocampus of the freely moving rat. Exp. Neurol. 1976, 51, 78–109.

- MacDonald, C.J.; Lepage, K.Q.; Eden, U.T.; Eichenbaum, H. Hippocampal “Time Cells” Bridge the Gap in Memory for Discontiguous Events. Neuron 2011, 71, 737–749.

- Sieb, R. Four-Dimensional Consciousness. Act. Nerv. Super. 2017, 59, 43–60.

- Di Biase, F. A holoinformational model of consciousness. Quantum Biosyst. 2009, 3, 207–220.

- Pribram, K.; Yasue, K.; Jibu, M. Brain and Perception: Holonomy and Structure in Figural Processing; Psychology Press: London, UK, 1991.

- Bohm, D.; Hiley, B. The Undivided Universe: An Ontological Interpretation of Quantum Theory; Routledge: London, UK, 1993.

- Pribram, K.H. Rethinking Neural Networks: Quantum Fields and Biological Data; Lawrence Erlbaum Associates: Mahwah, NJ, USA, 1993.

- Eccles, J. A unitary hypothesis of mind-brain interaction in the cerebral cortex. Proc. R. Soc. Lond. Ser. B Biol. Sci. 1990, 240, 433–451.

- Hameroff, S. Consciousness, the brain, and spacetime geometry. Ann. N. Y. Acad. Sci. 2006, 929, 74–104.

- Gödel, K. Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I. Monatsh. Math. Phys. 1931, 38, 173–198.

- Penrose, R. Shadows of the Mind: A Search for the Missing Science of Consciousness; Oxford University Press: Oxford, UK, 1994.

- Schrödinger, E. An Undulatory Theory of the Mechanics of Atoms and Molecules. Phys. Rev. 1926, 28, 1049–1070.

- Penrose, R. On Gravity’s role in Quantum State Reduction. Gen. Relativ. Gravit. 1996, 28, 581–600.

- Robinson, W. Epiphenomenalism. In Stanford Encyclopedia of Philosophy; Zalta, E., Nodelman, U., Allen, C., Eds.; Stanford University: Stanford, CA, USA, 2012.

- Wegner, D. The Illusion of Conscious Will; The MIT Press: Cambridge, MA, USA, 2002.

- Tegmark, M. Importance of quantum decoherence in brain processes. Phys. Rev. E Stat. Phys. Plasmas Fluids Relat. Interdiscip. Top. 2000, 61, 4194–4206.

- Bell, J.S. On the Problem of Hidden Variables in Quantum Mechanics. Rev. Mod. Phys. 1966, 38, 447–452.

- Kochen, S.; Specker, E. The problem of hidden variables in quantum mechanics. J. Math. Mech. 1967, 17, 59–87.

- Frankfurt, H. Equality as a Moral Ideal. Ethics 1987, 98, 21–43.

- Widerker, D.; Frankfurt, H. Alternate possibilities and moral responsibility. In Moral Responsibility and Alternative Possibilities: Essays on the Importance of Alternative Possibilities, 1st ed.; Frankfurt, H., Ed.; Taylor and Francis: Abingdon, UK, 2018; pp. 17–25.

- Draguhn, A.; Traub, R.D.; Schmitz, D.; Jefferys, J.G.R. Electrical coupling underlies high-frequency oscillations in the hippocampus in vitro. Nature 1998, 394, 189–192.

- Gilbert, C.D.; Sigman, M. Brain States: Top-Down Influences in Sensory Processing. Neuron 2007, 54, 677–696.

- Lambert, N.; Chen, Y.-N.; Cheng, Y.-C.; Li, C.-M.; Chen, G.-Y.; Nori, F. Quantum biology. Nat. Phys. 2012, 9, 10–18.

- Kak, S. Biological Memories and Agents as Quantum Collectives. NeuroQuantology 2013, 11, 391–398.

- Bohm, D. Wholeness and the Implicate Order; Psychology Press: London, UK, 2002.

- Mensky, M.B. Mathematical Models of Subjective Preferences in Quantum Concept of Consciousness. NeuroQuantology 2011, 9, 614–620.

- DeWitt, B. The Many Worlds Interpretation of Quantum Mechanics; Princeton University Press: Princeton, NJ, USA, 1973.

- Hoffman, D.D.; Singh, M.; Prakash, C. The Interface Theory of Perception. Psychon. Bull. Rev. 2015, 22, 1480–1506.

- Hoffman, D.D. The construction of visual reality. In Hallucinations: Research and Practice; Springer: New York, NY, USA, 2012; pp. 7–15.

- Hoffman, D.D. The Interface Theory of Perception (To appear in The Stevens’ Handbook of Experimental Psychology and Cognitive Neuroscience). In Object Categorization: Computer and Human Vision Perspectives; Dickinson, S., Tarr, M., Leonardis, A., Schiele, B., Eds.; Cambridge University Press: New York, NY, USA, 2009; pp. 148–165.

- Hoffman, D.D. “The sensory desktop”. In This Will Make You Smarter: New Scientific Concepts to Improve Your Thinking; Brockman, J., Ed.; Harper Perennial: New York, NY, USA, 2012; pp. 135–138.

- Coleman, S. The Real Combination Problem: Panpsychism, Micro-Subjects, and Emergence. Erkenntnis 2013, 79, 19–44.

- Bargh, J.A.; Morsella, E. The Unconscious Mind. Perspect. Psychol. Sci. 2008, 3, 73–79.

- Hoffman, D.D. Public Objects and Private Qualia. In The Wiley-Blackwell Handbook of Experimental Phenomenology; Albertazzi, L., Ed.; Wiley-Blackwell: New York, NY, USA, 2013; pp. 71–89.

- Dayan, P.; Abbott, L. Theoretical Neuroscience: Computational and Mathematical Modeling of Neural Systems; MIT Press: Cambridge, MA, USA, 2001.

- Eliasmith, C.; Anderson, C. Neural Engineering: Computation, Representation, and Dynamics in Neurobiological Systems; MIT Press: Cambridge, MA, USA, 2004.

- O’Reilly, R.; Munakata, Y. Computational Explorations in Cognitive Neuroscience: Understanding the Mind by Simulating the Brain; MIT Press: Cambridge, MA, USA, 2000.

- Blouw, P.; Solodkin, E.; Thagard, P.; Eliasmith, C. Concepts as Semantic Pointers: A Framework and Computational Model. Cogn. Sci. 2015, 40, 1128–1162.

- Damasio, A. Self Comes to Mind: Constructing the Conscious Brain; Vintage: New York, NY, USA, 2012.

- Northoff, G.; Lamme, V. Neural signs and mechanisms of consciousness: Is there a potential convergence of theories of consciousness in sight? Neurosci. Biobehav. Rev. 2020, 118, 568–587.

- Drigas, A.S.; Pappas, M.A. The Consciousness-Intelligence-Knowledge Pyramid: An 8 × 8 Layer Model. Int. J. Recent Contrib. Eng. Sci. IT 2017, 5, 14–25.

- Drigas, A.; Mitsea, E. The Triangle of Spiritual Intelligence, Metacognition and Consciousness. Int. J. Recent Contrib. Eng. Sci. IT 2020, 8, 4–23.

- Shannon, C.E. A Mathematical Theory of Communication. Bell Syst. Tech. J. 1948, 27, 379–423.

- Madden, A.D. A definition of information. Aslib Proc. 2000, 52, 343–349.

- Capurro, R.; Hjørland, B. The concept of information. Annu. Rev. Inf. Sci. Technol. 2005, 37, 343–411.

- Drigas, A.; Mitsea, E. The 8 Pillars of Metacognition. Int. J. Emerg. Technol. Learn. 2020, 15, 162–178.

- Blum, M.; Blum, L. A Theoretical Computer Science Perspective on Consciousness. J. Artif. Intell. Conscious. 2021, 8, 1–42.

- Rudrauf, D.; Bennequin, D.; Granic, I.; Landini, G.; Friston, K.; Williford, K. A mathematical model of embodied consciousness. J. Theor. Biol. 2017, 428, 106–131.

| Theory | Authors (year) | Main Definition | Other Terms/Subcategorization Related to Consciousness (Definitions Reported If Available) |
|---|---|---|---|
| ADT | LaBerge and Kasevich (2007) [18] | Consciousness is an activity that is extended in time and typically continues from the time we awake to the time we fall asleep. | - Background consciousness: some processes in the brain create a “cognitive event that may be called ‘having an impression’ of something”. - Elevated consciousness for selected aspects of background consciousness is assumed to arise when sustained activity of a primary sensory area is sent to higher sensory areas, and where a selected part of the sensory scene is amplified by attentional activity controlled from the frontal lobes. - Foreground consciousness: the elevated attentional activity of a part of the sensory scene in higher sensory areas. - Content of consciousness: the simultaneous impressions from both foreground and background consciousness together. |
| Agnati et al.’s proposal | Cook (2008) [19] | nf | |
| | Agnati et al. (2012) [20] | Consciousness may be thought of as the global result of integrative processes taking place at different levels of miniaturization in plastic mosaics. | |
| AIM | Hobson (2009) [21] | Waking consciousness can be defined as the awareness of the external world, our bodies, and ourselves (including the awareness of our awareness) that humans experience when awake. | - Primary consciousness can be defined as simple awareness that includes perception and emotion. - Secondary consciousness depends on language and includes such features as self-reflective awareness, abstract thinking, volition, and metacognition. |
| ART | Grossberg (2007) [22] | nf | |
| | Grossberg (2017) [23] | Consciousness is not just a whir of information-processing. | - Core consciousness (see Damasio’s theory) |
| AST | Graziano and Kastner (2011) [24] | Consciousness is not an emergent property or a metaphysical emanation but is itself information computed by an expert system. […] Consciousness = awareness (i.e., a perceptual model of attention). | |
| Bieberich’s theory | Bieberich (2012) [25] | Consciousness determines what is perceived as reality. | |
| COI | Kriegel (2007) [26] | When a subject has a higher-order representation that is unified with the first-order representation it represents, their representational unity constitutes a conscious state. Consciousness determines what is perceived as reality. | |
| CP | Merker (2007) [27] | Consciousness may be regarded most simply as the “medium” of all possible experience: the state or condition presupposed by any experience whatsoever. | - Reflective consciousness: one of many contents of consciousness available to creatures with sophisticated cognitive capacities; it is “a luxury of consciousness on the part of certain big-brained species, and not its defining property”; self-consciousness, unselfconsciously. |
| CSS | Berkovich-Ohana et al. (2014) [28] | Consciousness is an experienced property of mental states and processes, which is lost during dreamless deep sleep, deep anesthesia, or coma. | - Minimal self (MS): a self that is short of temporal extension and endowed with a sense of agency, ownership, and non-conceptual first-person content. - Narrative self (NS): it involves personal identity and continuity across time, as well as conceptual thought. - Core consciousness (CC): it supports the MS; its scope is the here and now. - Extended consciousness (EC): it supports the NS and involves memory of the past, imagination of the future, and verbal thought. It deals with holding in mind, over time, a multiplicity of neural patterns that describe the autobiographical self. |
| | Berkovich-Ohana et al. (2017) [29] | nf | |
| Damasio’s theory | Bosse et al. (2008) [30] | Proto-self: The proto-self is a coherent collection of neural patterns which map, moment by moment, the state of the physical structure of the organism in its many dimensions. Core consciousness (or feeling a feeling) is what emerges when the organism detects that its representation of its own body state (the proto-self) has been changed by the occurrence of the stimulus. Thus, it becomes (consciously) aware of the feeling, i.e., extended consciousness. | |
| DCT | Ward (2011) [31] | Phenomenal consciousness is generated by synchronized neural activity in the dendritic trees of dorsal thalamic neurons: a thalamic dynamic core. | Meta-consciousness and self-consciousness are viewed as contents of consciousness that are built from recursive application of primary consciousness to conscious contents. |
| EMI/CEMI | McFadden (2007) [32] | Consciousness is that component of the brain’s electromagnetic information field that is downloaded to motor neurons and is thereby capable of communicating its state to the outside world. Consciousness is what electromagnetic field information feels like from the inside. | |
| | Pockett (2007) [33] | nf | |
| | Lewis and MacGregor (2010) [34] | Consciousness itself is a dispositional form of energy that may relate to physical forms of energy as the phase state of a gas relates in a material way to its liquid phase. | |
| Gelepithis’ theory | Gelepithis (2014) [35] | nf | |
| Gurwitsch’s theory | Yoshimi (2011) [36] | nf | |
| | Yoshimi and Vinson (2015) [37] | Consciousness is the totality of co-present data […] Any field of consciousness can be parsed into these three co-present domains of data: theme, thematic field, and margin. | Marginal consciousness: unattended data not relevant to the theme. Theme: data at the focus of attention organized according to Gestalt laws. Thematic field: unattended data relevant to the theme. |
| GWT/GNW | Baars and Franklin (2007) [38] | Consciousness serves as a lookout function to spot potential dangers or opportunities so that there is a particularly close relationship between conscious content and significant sensory input. | |
| | Prakash et al. (2008) [39] | nf | |
| | Baars and Franklin (2009) [40] | nf | |
| | Raffone and Pantani (2010) [41] | nf | Phenomenal consciousness consists in phenomenally conscious states that are not cognitively accessible. Access consciousness represents content information that is ‘broadcast’ in the GW. |
| | Dehaene and Changeux (2011) [42] | The availability of information is what we subjectively experience in a conscious state. “Conscious” is an ambiguous word. In its intransitive use (e.g., “the patient was still conscious”) it refers to the state of consciousness, also called wakefulness or vigilance, which is thought to vary continuously from coma and slow-wave sleep to full vigilance. In its transitive use (e.g., “I was not conscious of the red light”), it refers to conscious access to and/or conscious processing of a specific piece of information. | |
| | Sergent and Naccache (2012) [43] | Subjective reports can be used to probe the content of consciousness; therefore, any representation that the subject does not report, even when questioned about it, but whose existence can be demonstrated through behavioral and/or functional brain-imaging measures, can be defined as non-conscious. | Phenomenal consciousness is a much larger domain of conscious contents than the one accessible through reports; perceptual consciousness; micro-consciousness |
| | Baars et al. (2013) [44] | Conscious experiences reflect a flexible “binding and broadcasting” function in the brain, which mobilizes a large, distributed collection of specialized cortical networks and processes that are not conscious by themselves. | Sensory consciousness; perceptual consciousness. |
| | Dehaene et al. (2014) [45] | Conscious access is the process by which a piece of information becomes conscious content. Conscious processing refers to the various operations that can be applied to a conscious content (as when multiplying two numbers mentally). Conscious report is the process by which a conscious content can be described verbally or by various gestures. Such reportability remains the main criterion for whether a piece of information is or is not conscious, i.e., I can report something if and only if I am aware of it. | Self-consciousness is a particular instance of conscious accessibility where the conscious ‘spotlight’ is oriented toward internal states […] The state of consciousness, associated with fluctuations in wakefulness or vigilance, refers to the brain’s very ability to entertain a stream of conscious contents. |
| | Bartolomei et al. (2014) [46] | The global availability of information through the workspace is what we subjectively experience as a conscious state. | |
| HOT | Lau (2007) [47] | Perceptual consciousness depends on the representation of the probability distributions that describe the behavior of the internal signal. | |
| | Lau and Rosenthal (2011) [48] | Consciousness consists in perceptual processes that occur with subjective experience, of which we are aware and about which we can report under normal circumstances. This contrasts with perceptual processes that occur without subjectivity, of which we are unaware and about which we cannot report. The term ‘conscious awareness’ can also apply to thoughts and volitional states of which one is subjectively aware. | |
| | Friesen (2014) [49] | nf | |
| | Mehta and Mashour (2013) [50] | Consciousness is the story that our perceptual system tells us about the world. Any conscious state is a representation of what it is like to be in a conscious state, which is wholly determined by the content of that representation. | - General consciousness pertains to the levels of consciousness. - Specific consciousness pertains to the content of consciousness. |
| IIT | Balduzzi and Tononi (2008) [51] | Consciousness has to do with a system’s capacity to generate integrated information. This suggestion stems from considering two basic properties of consciousness: (i) each conscious experience generates a large amount of information by ruling out alternative experiences; (ii) the information is integrated, meaning it cannot be decomposed into independent parts. | |
| | Tononi (2008) [52] | Consciousness is integrated information and its quality is given by the informational relationships generated by a complex of elements. | The quantity of consciousness generated by a complex of elements is determined by the amount of integrated information it generates above and beyond its parts. The quality of consciousness is determined by the set of all the informational relationships its mechanisms generate. |
| | Tononi (2012) [53] | Consciousness is what vanishes every night when we fall into dreamless sleep and reappears when we wake up or when we dream. Consciousness is synonymous with experience. | |
| | Casali et al. (2013) [54] | Conscious experience is both differentiated (i.e., it has many specific features that distinguish it from a large repertoire of other experiences) and integrated (i.e., it cannot be divided into discrete, independent components). | |
| | Oizumi et al. (2014) [55] | An experience (i.e., consciousness) is thus an intrinsic property of a complex of elements in a state: how they constrain, in a compositional manner, its space of possibilities in the past and in the future. | |
| | Tononi and Koch (2015) [15] | Consciousness is a fundamental property possessed by physical systems having specific causal properties. Consciousness is a fundamental, observer-independent property that can be accounted for by the intrinsic cause–effect power of certain mechanisms in a state, that is, how they give form to the space of possibilities in their past and their future. Consciousness is a fundamental property of certain physical systems, one that requires having real cause–effect power, specifically the power of shaping the space of possible past and future states in a way that is maximally irreducible intrinsically. | |
| | Tononi et al. (2016) [56] | Consciousness is subjective experience. | The quality or content of consciousness is identical to the form of the conceptual structure specified by the physical substrate of consciousness (PSC). The quantity or level of consciousness corresponds to its irreducibility (integrated information Φ). |
| | Tsuchiya et al. (2016) [57] | Consciousness usually refers to either the level or the contents of consciousness. | The level of consciousness ranges from very high in the aroused awake state to low, as in coma, vegetative states, deep dreamless sleep, and deep general anesthesia. At a given level of consciousness, every experiential moment contains various contents of consciousness. The contents of consciousness are synonymous with other concepts such as qualia (singular: quale) or phenomenal consciousness. |
| LRMB | Wang (2012) [58] | Consciousness is a collective mental state of self-awareness that represents the bodily and mental status and their relations to the external environment, which is inductively generated or synthesized from the levels of metabolic homeostasis, unconsciousness, subconsciousness, and consciousness from the bottom up. Consciousness is the basic characteristic of life and the mind, which is the state of being aware of oneself, perceptive of both internal and external worlds, and responsive to one’s surroundings. Consciousness is a collective state at the perception layer of the 7-layer LRMB model, which comprises the sensation, action, memory, perception, metacognition, inference, and cognitive layers from the bottom up. Consciousness is the sense of self and sign of life in natural intelligence. Consciousness is a collective and general state of advanced living organisms encompassing all cognitive attributes, which is generated based on the physiological structures, memory, and the brain’s power for acquiring and manipulating sensory and mental information. | - Subconsciousness |
| MCTT | Dalla Barba and Boissè (2010) [59] | Consciousness is not a generic and a specific dimension that then becomes the consciousness of its object. It is immediately conscious of something. We would also add that consciousness is always consciousness of something in a certain way. This means that consciousness takes a certain point of view of its object and can take various points of view of the same object. | Knowing consciousness (KC) describes what is usually referred to as semantic memory. KC is temporal since it is a synthesis of what I have been. At the same time, KC is also atemporal in the sense that the time of which it is made is unrecognizable. Temporal consciousness (TC) is an organized, original, and irreducible form of consciousness for addressing the world. Unlike KC, TC transcends the mere presence of the object to set it in time. |
| Mesocircuit Hypothesis | Schiff (2010) [60] | Consciousness is the combination of different components: arousal, which relates to behavioral and physiological observations and establishes a threshold level that allows other aspects of higher consciousness to occur; and awareness and motivation, which presuppose the will to interact with the environment and therefore can be intense as a “tension towards something”. | |
| Min’s Model | Min (2010) [61] | Consciousness is a mental state embodied through TRN-modulated synchronization of thalamocortical networks. Consciousness consists of mental units, each an individual thalamocortical looping mechanism, no matter what cognitive stages it involves. Consciousness is referred to as thalamocortical response modes controlled by the TRN and is embodied in the form of dynamically synchronized thalamocortical networks ready for upcoming attentional processes. | Awareness: conscious perception of an attended mental representation by strengthening relevant neural networks through thalamocortical reiterating. |
| NIH | Yu and Blumenfeld (2009) [5] | Consciousness is the ability to maintain an alert state, attention, and awareness of self and environment. | |
| O’Doherty’s theory | O’Doherty (2013) [62] | Consciousness represents the storage of past events for use in future situations and it is altered by external experience of the organism. Consciousness results from the gradual evolutionary development of the human information processing function. Consciousness is a phenomenon resulting from interactions between organisms rather than being located within an organism. Consciousness may consist of a higher level of signal detection that has evolved through categorization and storage of past events. | |
| PFT | Morsella et al. (2015) [63] | Consciousness is a phenomenon associated with perceptual-like processing and interfacing with the somatic nervous system. | |
| PToC | Shannon (2008) [64] | Consciousness is subjective experience, and there are three types of consciousness: the sensed being, mental awareness, and meta-mentation. | Sensed being or sentience concerns the primitive and elementary aspect of consciousness that distinguishes living organisms from inanimate matter. It has no specific context or structure; it is pervasive, and it is present throughout our life. Mental awareness, typical of higher-order mammals, relates to subjective experience that is distinct and differentiated, and to the contents and forms of those experiences, such as mental images, ideations, flows of consciousness, and internal verbal monologues. Meta-mentation is the mind’s ability to take its own productions as objects for further reflection. This ability has different manifestations, such as meta-observation, reflection, monitoring, and control. |
| Quantum theories | Klemm and Klink (2008) [65] | Consciousness is the capacity of a system to opt among presented alternatives. | Unreflected consciousness refers to conscious acts and meanings in their immediate experiential and direct givenness. |
| | Das (2009) [66] | Consciousness is a property of the Nambu-Goldstone bosons created by Yukawa coupling between the Nambu-Goldstone boson scalar field and the electron Dirac field. | |
| | Di Biase (2009) [67] | Consciousness is non-local information with a status equal to matter and energy. | |
| | Koehler (2011) [68] | nf | |
| | Argonov (2012) [69] | Consciousness in the phenomenal sense is a synonym of “subjective reality”, i.e., perception, volition, mental images, and emotions. | |
| | Georgiev (2013) [6] | Consciousness is a collective term that refers to the subjective character of our mental states, and of our ability to experience or to feel. A conscious state is a state of experience. | |
| | Li (2013) [70] | nf | |
| | Hameroff and Penrose (2014) [71] | Consciousness consists of a sequence of discrete events, each being a moment of ‘objective reduction’ (OR) of a quantum state (according to the DP scheme), where it is taken that these quantum states exist as parts of quantum computations carried on primarily in neuronal microtubules. Such OR events would have to be ‘orchestrated’ in an appropriate way (Orch OR), for genuine consciousness to arise. | |
| | Hoffman and Prakash (2014) [72] | A conscious agent is a six-tuple C = ((X, 𝒳), (G, 𝒢), P, D, A, N), where (X, 𝒳) and (G, 𝒢) are measurable spaces; P: W × 𝒳 → [0, 1], D: X × 𝒢 → [0, 1], and A: G × 𝒲 → [0, 1] are Markovian kernels; and N is an integer. | |
| | Kak et al. (2016) [73] | States of consciousness are mediated by languages and metalanguages with varying capacity or ability to recruit memories. | |
| | Sieb (2016) [74] | Conscious experience is defined as the direct observation of conscious events. A conscious event [...] is the fundamental entity of conscious experience (observed physical reality) represented by three coordinates of space and one coordinate of time in the space–time continuum: conscious event that consists of a set of qualia; conscious experience that is intimately tied to perception; postulated for conscious experience; conscious experience that is essentially an orientation in space and time. | |
| | Brabant (2016) [75] | nf | |
| Reji Kumar’s theory | Reji Kumar (2010) [76] | Human consciousness is built with models and these models are involved in the development of consciousness at a very basic level. As such, consciousness is subject to all advantages and limitations of models. Consciousness is the result of all information processing activities that occur in the mind. | |
| | Ahmad and Khan (2012) [77] | Consciousness is the core component of the mind. Consciousness can be defined as the spirit of consciousness and the transitivity of an information system. Transitivity can be defined as a certain correspondence between the system of information and outside matters. Consciousness is the final output of all information processing activities that occur in the mind. | |
| | Reji Kumar (2016) [78] | Consciousness is the result of all processes that occur in the brain. | |
| | Reji Kumar (2016) [79] | Consciousness is the totality of all effects of the functions of the brain and the supporting nervous system that produces the feeling of the objective world and the subjective mind or self. | |
| RPT | Cleeremans et al. (2007) [80] | Consciousness is the brain’s theory about itself, gained through experience interacting with the world, and, crucially, with itself […] Conscious experience occurs if and only if an information processing system has learned about its own representations of the world […] Experience is, almost by definition (“what it feels like”), something that takes place not in any physical entity but rather only in special physical entities, namely cognitive agents. | |
| | Cleeremans (2008) [81] | nf | |
| SPC | Thagard and Stewart (2014) [82] | Consciousness is a neural process resulting from three mechanisms: representation by firing patterns in neural populations, binding of representations into more complex representations called semantic pointers, and competition among semantic pointers to capture the most important aspects of an organism’s current state. | |
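The Hoffman and Prakash (2014) entry is the only definition in the table stated as a formal object, so it can be made concrete. The sketch below is a minimal illustration under stated assumptions, not the authors' code: it takes W (the world), X and G to be finite sets, each with its power-set σ-algebra (written `sigma(...)` in the comments), so every Markovian kernel reduces to a row-stochastic matrix. It reads P, D and A as perception, decision and action kernels, following the usual reading of this model, and stores N without interpreting it. The names `Kernel`, `compose`, `ConsciousAgent` and `world_to_world` are hypothetical.

```python
from dataclasses import dataclass
from typing import List

# A finite Markovian kernel K: S x sigma(T) -> [0, 1], stored as a row-stochastic
# matrix: K[s][t] is the probability of reaching state t of T from state s of S.
Kernel = List[List[float]]


def is_markov_kernel(k: Kernel, tol: float = 1e-9) -> bool:
    """True if every row is a probability distribution (non-negative, sums to 1)."""
    return all(
        all(p >= 0.0 for p in row) and abs(sum(row) - 1.0) <= tol for row in k
    )


def compose(k1: Kernel, k2: Kernel) -> Kernel:
    """Chain two kernels: (k1 k2)[a][c] = sum over b of k1[a][b] * k2[b][c]."""
    mid = len(k2)
    if any(len(row) != mid for row in k1):
        raise ValueError("kernel dimensions do not chain")
    out = len(k2[0])
    return [
        [sum(row[b] * k2[b][c] for b in range(mid)) for c in range(out)]
        for row in k1
    ]


@dataclass
class ConsciousAgent:
    """Finite stand-in for C = ((X, sigma(X)), (G, sigma(G)), P, D, A, N)."""

    P: Kernel  # W x sigma(X) -> [0, 1]: |W| rows, |X| columns (perception)
    D: Kernel  # X x sigma(G) -> [0, 1]: |X| rows, |G| columns (decision)
    A: Kernel  # G x sigma(W) -> [0, 1]: |G| rows, |W| columns (action)
    N: int = 0  # the tuple's integer; stored here but not interpreted

    def is_valid(self) -> bool:
        return all(is_markov_kernel(k) for k in (self.P, self.D, self.A))

    def world_to_world(self) -> Kernel:
        """One perceive-decide-act cycle W -> X -> G -> W, itself a kernel on W."""
        return compose(compose(self.P, self.D), self.A)


# Toy usage: two world states, two experiences, two actions (arbitrary numbers).
agent = ConsciousAgent(
    P=[[0.9, 0.1], [0.2, 0.8]],
    D=[[1.0, 0.0], [0.0, 1.0]],  # identity decision kernel, chosen for simplicity
    A=[[0.7, 0.3], [0.4, 0.6]],
)
cycle = agent.world_to_world()
print(agent.is_valid(), is_markov_kernel(cycle))  # True True
print([[round(p, 2) for p in row] for row in cycle])  # [[0.67, 0.33], [0.46, 0.54]]
```

The design point the sketch makes is that chaining the three kernels again yields a Markovian kernel, here from W back to W; the toy matrices carry no empirical meaning.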

| Term | No. of Citations | Theories |
|---|---|---|
| Activity | 4 | ADT, DCT |
| Awareness | 13 | AIM, AST, HOT, LRMB, Mesocircuit Hypothesis, NIH, PToC |
| Brain | 6 | EMI/CEMI, GWT/GNW, LRMB, Reji Kumar’s theory, RPT |
| Cognitive | 4 | LRMB, Min’s Model, RPT |
| Collective | 6 | LRMB, Quantum theories |
| Component | 4 | EMI/CEMI, IIT, Mesocircuit Hypothesis, Reji Kumar’s theory |
| Content | 7 | GWT/GNW, HOT, IIT |
| Events | 7 | O’Doherty’s theory, Quantum theories |
| Experience | 26 | AIM, CP, CSS, GWT/GNW, HOT, IIT, O’Doherty’s theory, PToC, Quantum theories, RPT |
| External | 4 | AIM, LRMB, O’Doherty’s theory |
| Feeling | 7 | Damasio’s theory, Reji Kumar’s theory |
| Field | 6 | EMI/CEMI, Gurwitsch’s theory, Quantum theories |
| Form | 4 | EMI/CEMI, IIT, Min’s Model |
| Function | 5 | GWT/GNW, O’Doherty’s theory, Reji Kumar’s theory |
| Information | 20 | ART, AST, EMI/CEMI, GWT/GNW, IIT, LRMB, O’Doherty’s theory, Reji Kumar’s theory, RPT |
| Integrated | 5 | IIT |
| Level | 6 | Agnati et al.’s proposal, IIT, LRMB, Mesocircuit Hypothesis, O’Doherty’s theory, Reji Kumar’s theory |
| Mental | 10 | CSS, GWT/GNW, LRMB, Min’s Model, Quantum theories |
| Mind | 5 | LRMB, Reji Kumar’s theory |
| Neural | 4 | Damasio’s theory, DCT, SPC |
| Object | 5 | MCTT, Quantum theories, Reji Kumar’s theory |
| Organism | 7 | Damasio’s theory, LRMB, O’Doherty’s theory, SPC |
| Past | 5 | IIT, O’Doherty’s theory |
| Perception | 5 | LRMB, Quantum theories |
| Perceptual | 5 | AST, GWT/GNW, HOT, PFT |
| Physical | 7 | AST, Damasio’s theory, EMI/CEMI, IIT, Quantum theories, RPT |
| Possible | 5 | CP, GWT/GNW, IIT |
| Power | 4 | IIT, LRMB |
| Processes | 17 | Agnati et al.’s proposal, CSS, GWT/GNW, HOT, Min’s Model, Reji Kumar’s theory |
| Property | 9 | AST, CSS, IIT, Quantum theories |
| Reality | 4 | Bieberich’s theory, COI, Quantum theories |
| Representation | 12 | COI, Damasio’s theory, GWT/GNW, HOT, RPT, SPC |
| Something | 5 | GWT/GNW, MCTT, Mesocircuit Hypothesis, RPT |
| Space | 6 | IIT, Quantum theories |
| State | 28 | CP, Damasio’s theory, EMI/CEMI, GWT/GNW, HOT, LRMB, Min’s Model, Quantum theories |
| Subjective | 14 | GWT/GNW, HOT, IIT, PToC, Quantum theories, Reji Kumar’s theory |
| System | 11 | AST, HOT, IIT, PFT, PToC, Reji Kumar’s theory, RPT |
| Thalamocortical | 4 | Min’s Model |
| World | 7 | AIM, EMI/CEMI, HOT, LRMB, Reji Kumar’s theory, RPT |
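The citation counts in the terms table record how often each term occurs across the collected definitions. The counting rules are not spelled out in this section, so the following is only a sketch under stated assumptions: it lowercases each definition, splits it into runs of letters, and treats any token that starts with the term as a hit (a crude stand-in for lemmatization, so "representation" also matches "representations"). It is applied only to the three definitions quoted above (Reji Kumar, RPT, SPC), so its totals are not expected to match the table, which covers every theory. The labels and the helpers `tokenize` and `tally` are hypothetical.

```python
import re

# Three definitions quoted in the table above, keyed by a short (hypothetical) label.
DEFINITIONS = {
    "Reji Kumar": (
        "Consciousness is the totality of all effects of the functions of the brain "
        "and the supporting nervous system that produces the feeling of the objective "
        "world and the subjective mind or self"
    ),
    "RPT": (
        "Consciousness is the brain’s theory about itself, gained through experience "
        "interacting with the world, and, crucially, with itself […] Conscious "
        "experience occurs if and only if an information processing system has "
        "learned about its own representations of the world […] Experience is, "
        "almost by definition (“what it feels like”), something that takes place "
        "not in any physical entity but rather only in special physical entities, "
        "namely cognitive agents."
    ),
    "SPC": (
        "Consciousness is a neural process resulting from three mechanisms: "
        "representation by firing patterns in neural populations, binding of "
        "representations into more complex representations called semantic "
        "pointers, and competition among semantic pointers to capture the most "
        "important aspects of an organism’s current state."
    ),
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and return its runs of ASCII letters."""
    return re.findall(r"[a-z]+", text.lower())


def tally(term: str, definitions: dict[str, str]) -> tuple[int, set[str]]:
    """Count tokens starting with `term` (assumed prefix match) across all
    definitions and collect the labels of definitions with at least one hit."""
    stem = term.lower()
    total = 0
    theories: set[str] = set()
    for label, text in definitions.items():
        hits = sum(1 for token in tokenize(text) if token.startswith(stem))
        if hits:
            total += hits
            theories.add(label)
    return total, theories
```

For example, `tally("world", DEFINITIONS)` returns `(3, {"Reji Kumar", "RPT"})`: one occurrence in Reji Kumar’s definition and two in Cleeremans et al.’s.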

| Brain Structures | Percentage of Theories (Single Structure) | Theories | Percentage of Theories (Category) |
|---|---|---|---|
| Micro-structures | | | 24% |
| Single neuron | 3.44% | Agnati’s theory | |
| Pyramidal neurons | 6.89% | ADT, Bieberich’s theory | |
| GABAergic inhibitory neurons | 3.44% | Min’s model | |
| Periaqueductal gray neurons | 3.44% | CP | |
| Astroglial-pyramidal cells | 3.44% | CEMI | |
| Glial cells | 3.44% | Agnati’s theory | |
| Dendritic cells | 10.34% | ADT, Bieberich’s theory, Quantum theories | |
| Neural microtubules | 3.44% | Quantum theories | |
| Ionic channels | 10.34% | Agnati’s theory, Bieberich’s theory, Quantum theories | |
| Cortical minicolumns | 3.44% | ADT | |
| Cortical single structures | | | 48% |
| Prefrontal cortex (PFC) | 24.13% | Agnati’s theory, CP, GWT, HOT, Min’s model, Quantum theories, RPT | |
| Orbitofrontal areas | 3.44% | ART | |
| Dorsolateral prefrontal cortex (dlPFC) | 6.89% | COI, CSS | |
| Medial prefrontal cortex (mPFC) | 10.34% | AST, COI, CSS | |
| Cingulate cortex | 13.79% | CSS, Damasio’s theory, GWT, NIH | |
| Anterior cingulate cortex | 13.79% | Agnati’s theory, COI, CSS, GWT | |
| Dorsal anterior cingulate cortex (dACC) | 3.44% | CSS | |
| Medial cingulate gyrus | 3.44% | CP | |
| Posterior cingulate cortex (PCC) | 3.44% | CSS | |
| Precuneus | 6.89% | CSS, NIH | |
| Medial frontal cortex | 3.44% | NIH | |
| Supplementary motor area (SMA) | 3.44% | CSS | |
| Premotor cortex | 6.89% | CSS, GWT | |
| Parietal cortex | 10.34% | CSS, GWT | |
| Superior parietal lobule | 3.44% | CSS | |
| Inferior parietal lobule | 3.44% | CSS | |
| Posterior parietal cortex (PPC) | 10.34% | CP, HOT, Quantum theories | |
| Temporoparietal junction | 6.89% | AST, CSS | |
| Temporal lobe | 17.24% | ART, AST, CSS, GWT, MCTT | |
| Occipital cortex | 3.44% | GWT | |
| Subcortical single structures | | | 52% |
| Basal ganglia | 10.34% | ART, CP, Quantum theories | |
| Nucleus accumbens | 3.44% | Agnati’s theory | |
| Claustrum | 3.44% | Agnati’s theory | |
| Insula | 6.89% | Agnati’s theory, CSS | |
| Amygdala | 3.44% | ART | |
| Hippocampus | 17.24% | ART, CSS, GWT, Quantum theories, RPT | |
| Cerebellum | 10.34% | GWT, LRMB, Reji Kumar’s theory | |
| Thalamus | 24.13% | Agnati’s theory, CP, Damasio’s theory, DCT, GWT, HOT, LRMB | |
| Anterior pretectal nucleus | 3.44% | DCT | |
| Medial thalamic nuclei | 3.44% | NIH | |
| Thalamic reticular nucleus | 6.89% | DCT, Min’s model | |
| Pulvinar | 3.44% | DCT | |
| Hypothalamus | 6.89% | ART, CP | |
| Midbrain | 3.44% | CP | |
| Superior colliculus | 6.89% | CP, Damasio’s theory | |
| Ventral tegmental area | 3.44% | Agnati’s theory | |
| Brainstem | 3.44% | NIH | |
| Raphe nuclei | 3.44% | Agnati’s theory | |
| Brain systems/regions | | | 28% |
| Cortico-thalamic system | 17.24% | ART, AIM, IIT, Mesocircuit Hypothesis, Quantum theories | |
| Fronto-parietal areas | 6.89% | CSS, GWT | |
| Sensory cortical areas | 3.44% | GWT | |
| Perceptual regions | 3.44% | PFT | |
| Limbic system | 3.44% | AIM | |
| Striate regions | 3.44% | GWT | |
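The percentages in the brain-structures table appear consistent with a denominator of 29 theories: single-structure values look truncated to two decimals (1/29 = 3.448…% is reported as 3.44%, 7/29 = 24.137…% as 24.13%), while category values look rounded to whole percentages after counting each theory once per group. Neither the denominator nor the rounding rule is stated in this section, so the sketch below is a reconstruction under those assumptions. The constant `N_THEORIES` and the helper functions are hypothetical, and only two groups of rows are copied in.

```python
import math

# Assumption: 29 theories in the denominator, inferred from the reported
# values (e.g., 1/29 = 3.448...% appears as 3.44%); not stated in the text.
N_THEORIES = 29

# Two groups of rows copied from the table: structure -> theories citing it.
MICRO_STRUCTURES = {
    "Single neuron": ["Agnati's theory"],
    "Pyramidal neurons": ["ADT", "Bieberich's theory"],
    "GABAergic inhibitory neurons": ["Min's model"],
    "Periaqueductal gray neurons": ["CP"],
    "Astroglial-pyramidal cells": ["CEMI"],
    "Glial cells": ["Agnati's theory"],
    "Dendritic cells": ["ADT", "Bieberich's theory", "Quantum theories"],
    "Neural microtubules": ["Quantum theories"],
    "Ionic channels": ["Agnati's theory", "Bieberich's theory", "Quantum theories"],
    "Cortical minicolumns": ["ADT"],
}
BRAIN_SYSTEMS = {
    "Cortico-thalamic system": ["ART", "AIM", "IIT", "Mesocircuit Hypothesis", "Quantum theories"],
    "Fronto-parietal areas": ["CSS", "GWT"],
    "Sensory cortical areas": ["GWT"],
    "Perceptual regions": ["PFT"],
    "Limbic system": ["AIM"],
    "Striate regions": ["GWT"],
}


def truncated_percentage(k: int, n: int = N_THEORIES) -> float:
    """Return 100 * k / n truncated (not rounded) to two decimal places."""
    return math.floor(k * 10000 / n) / 100


def structure_percentages(rows: dict[str, list[str]]) -> dict[str, float]:
    """Per-structure share of theories, one entry per table row."""
    return {name: truncated_percentage(len(theories)) for name, theories in rows.items()}


def category_percentage(rows: dict[str, list[str]], n: int = N_THEORIES) -> int:
    """Share of theories citing at least one structure in the group,
    counting each theory once and rounding to a whole percentage."""
    unique_theories = set().union(*rows.values())
    return round(100 * len(unique_theories) / n)


micro = structure_percentages(MICRO_STRUCTURES)
print(micro["Single neuron"], micro["Pyramidal neurons"], micro["Ionic channels"])
print(category_percentage(MICRO_STRUCTURES), category_percentage(BRAIN_SYSTEMS))
```

Run as written, this prints `3.44 6.89 10.34` and then `24 28`; the last two values agree with the category percentages reported for micro-structures (7 distinct theories) and brain systems/regions (8 distinct theories).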

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Sattin, D.; Magnani, F.G.; Bartesaghi, L.; Caputo, M.; Fittipaldo, A.V.; Cacciatore, M.; Picozzi, M.; Leonardi, M. Theoretical Models of Consciousness: A Scoping Review. Brain Sci. 2021, 11, 535. https://doi.org/10.3390/brainsci11050535
