1. Introduction

Usability refers to the degree to which a product can be used to achieve specific goals in a specific context of use [1]. The standard ISO/IEC 25000 defines the usability of a software product as its ability to be understood, learned, used, and attractive to the user under specific conditions of use. These definitions suggest that specific protocols and instruments can be used to measure the level of usability [2]. Multiple efforts are currently underway to improve the security, performance, and reliability of software products [3,4,5], but less attention has been given to usability problems in software of any type, including software intended for medical assistance such as telerehabilitation platforms [6,7]. These platforms offer focused rehabilitation services to patients who cannot go to a rehabilitation centre because they have limited mobility or should avoid mobilization [8].

Usability problems can prevent the user from completing the intended tasks [9]. A software product that has not gone through a usability evaluation process cannot guarantee that users will take advantage of the qualities and benefits of the application. To avoid user abandonment of telerehabilitation platforms, it is necessary to carry out extensive usability evaluations.

The project “Telerehabilitation system for education of patients after a hip replacement surgery (ePHoRT)” provides a telerehabilitation platform for patients who have undergone arthroplasty surgery. Hip fracture has a high worldwide incidence, mainly in people over 65 years old, as a result of degenerative joint disease or progressive wear of the joint. In 1990, hip fractures occurred in 1.66 million elderly patients, and studies estimate that hip arthroplasty for fracture and joint replacement will surpass six million in 2050 [10,11]. It is important to perform such replacements in anticipation of potential fractures, which carry a high mortality because of the risk of sepsis.

The ePHoRT platform allows hip arthroplasty patients recovering from fracture or joint replacement to carry out part of the rehabilitation treatment at home and to communicate the evolution of the recovery process to the physiotherapist [8]. The platform makes use of the Kinect game controller, a device capable of capturing the movement of the human skeleton, recognizing it, and positioning it using a set of components: a depth sensor, an infrared sensor (transmitter and receiver), an RGB camera, and an array of microphones.

The ePHoRT platform has a ’practice module’ organized into three stages that are significant for the patient’s recovery process. These stages are characterized by an increasing intensity of the exercises. Stage 1 takes place during the week that follows the surgery and consists mainly of exercises performed in a lying-down position. The standing-up rehabilitation movements start in stage 2 (the second week after the surgery). Finally, stage 3 is characterized by functional exercises, which prepare the patient to return to regular walking [12].

The ePHoRT platform is in the design stage and follows an agile and collaborative development process focused on the user. In this context, a formative evaluation is the most appropriate [13]. Published research recommends combining two inspection methods for usability assessment: heuristic evaluation and cognitive walkthrough [14,15,16,17].

In this study, an exploratory prototype of the ePHoRT platform was used to perform the usability evaluation. An exploratory prototype is an initial version of a software product that is used to validate requirements, demonstrate concepts, try out design options, and check the usability level of the product being designed according to the stakeholders’ needs [18,19]. As described by Jakob Nielsen in the Usability Engineering Lifecycle, the prototyping process should be done in cycles of iterative refinement [20,21].

2. Related Research

This section first gives brief definitions of heuristic evaluation and cognitive walkthrough and then summarizes the most relevant contributions from the related publications that served as context for this study. Heuristic evaluation is a usability inspection method that identifies usability problems based on usability principles or heuristics [20]. The cognitive walkthrough is a usability evaluation method in which one or more evaluators work through a set of tasks, ask a set of questions from the perspective of the user, and check whether the system design supports the effective accomplishment of the proposed goals [16].

The authors in References [2,22] mentioned that, once the usability guidelines are defined, it is important to select the usability attributes to be measured, that is, the methods and metrics best suited to answering the research questions and objectives concerning the user interface.

Furthermore, another study [23] mentioned that a side benefit of performing the cognitive walkthrough was that the technique served as a structured tool for collaboration between the medical partners and the technical partners. However, the authors noted that performing the heuristic evaluation separately affected the consistency of the usability evaluation results.

Other studies [14,15,16,17] have highlighted the advantages of combining heuristic evaluation and cognitive walkthrough. The main advantages that the authors mention are that the combination identifies major usability issues, gives immediate responses, is non-intrusive, is inexpensive in time and resources, can be done in a laboratory setting, is good for refining requirements, and does not require a fully functional prototype. It is therefore applicable to the design, coding, testing, and deployment stages of the software development lifecycle.

The authors in References [16,24,25] presented the disadvantages of performing a heuristic evaluation and cognitive walkthrough. The disadvantages to consider are: expert variability; the need for evaluators with experience and adequate knowledge to evaluate the product interface; the possibility that evaluators do not understand the tasks performed by the product, which makes it difficult to identify usability problems; the identification of usability problems without a direct solution; limited task applicability; variation in the set of heuristics; and bias from the current mindset of the evaluators.

Other studies [17,26] presented usability evaluation results obtained with prototypes. The advantages considered were that the prototype facilitates the development of future tasks for final users and facilitates the learning and development of an iterative process. The disadvantage was that the online prototype presents only a part of the target platform: the prototype is functional but limited in terms of colors and interactive elements.

On the basis of the quantitative results and recommendations of the authors [14,27], two usability evaluation methods were selected: heuristic evaluation [20] and cognitive walkthrough [16], as defined at the beginning of this section. Authors [25,28] recommended the use of a testing technique with a customized instrument and an interview as the inquiry method.

Most of the previous studies do not systematically present the evolution of usability through an orderly and cyclical process. This study intended to tackle this issue to show that it is possible to improve the usability of telerehabilitation platforms without jeopardizing patient safety.

3. Materials and Methods

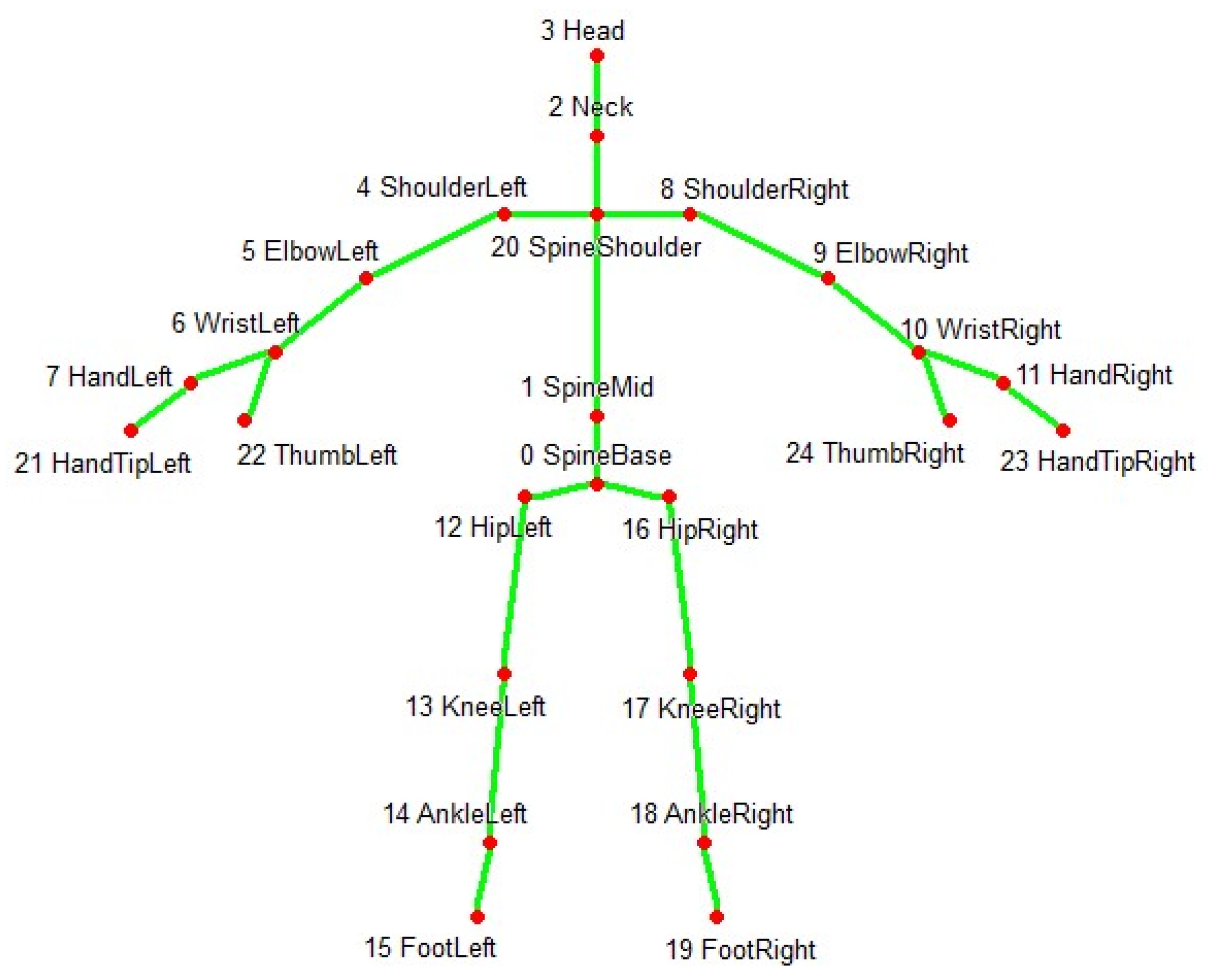

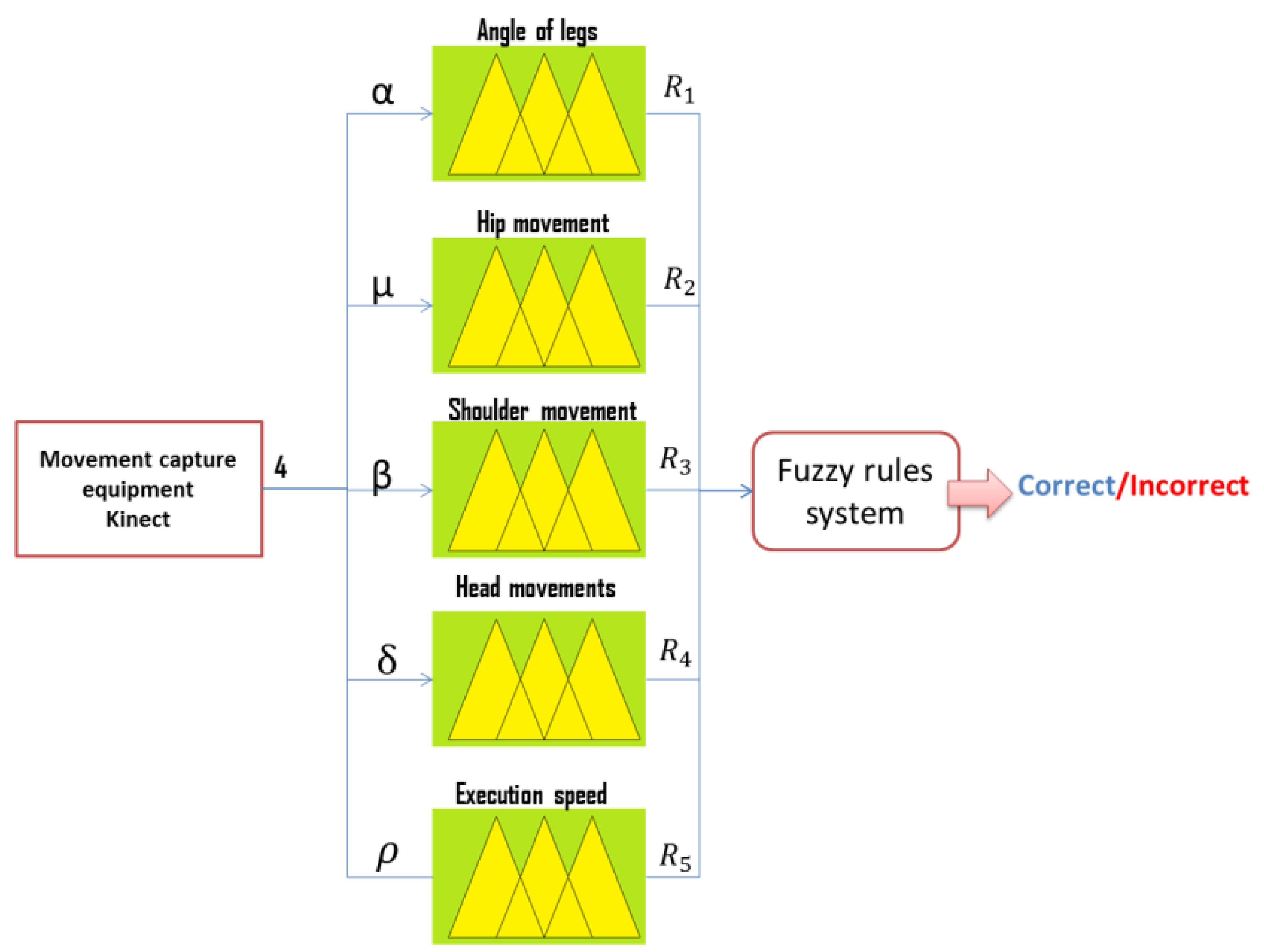

In the rehabilitation process, patients permitted the capture of their correct and incorrect movements with the help of the Kinect equipment. This equipment captures the three-dimensional coordinates (X, Y, Z) of the 25 main joints of the human body, as shown in Figure 1. Information preprocessing was carried out to identify the relevant information, eliminating repeated data as well as noise, as presented in Reference [29].

According to a study conducted by Nielsen [30], it is not possible to detect 100% of usability problems. In this study, we started with the usability evaluation of an exploratory prototype. This evaluation was a first agile approach aimed at detecting the majority of usability problems and was subsequently refined to identify any remaining minor issues. For the subsequent analysis, it was decided to have the collaboration of a minimum of three usability experts in the first, second, and third iterations, and of six experts in the fourth iteration. According to the Nielsen study, this should be sufficient to find about 75% of the usability problems.
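The relation between panel size and the share of problems detected can be illustrated with the problem-discovery model of Nielsen and Landauer, in which each evaluator independently finds a fixed proportion λ of the problems. The sketch below is illustrative only: the function name is hypothetical, and the rate λ = 0.31 is the cross-project average reported in that literature, assumed here rather than measured for ePHoRT.

```python
def proportion_found(evaluators: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems found by independent evaluators.

    Implements 1 - (1 - lambda)^n. The default rate of 0.31 is an assumed
    literature average, not a value estimated from this study.
    """
    if evaluators < 0:
        raise ValueError("evaluators must be non-negative")
    if not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("discovery_rate must lie in [0, 1]")
    return 1.0 - (1.0 - discovery_rate) ** evaluators


if __name__ == "__main__":
    for n in (3, 6):  # panel sizes used across the four iterations
        print(f"{n} experts: {proportion_found(n):.1%} of problems expected")
```

Under the assumed rate, a panel of three experts would be expected to find roughly two-thirds of the problems and a panel of six close to 90%; a different λ shifts both figures.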

To perform the heuristic evaluation, Nielsen’s 10 heuristic principles [24] were used, complemented with three specific heuristics as suggested by Reference [31]; the full set is detailed in Table 1. The cognitive walkthrough was carried out with the help of volunteers, who completed a 40-question demographic survey covering their gender, age, experience using computers, ability to learn software packages, experience using computer games, and experience using Kinect or similar gesture-based user interface devices. The first phase was composed of two exploratory iterations, in which a prototype was used to validate the user’s requirements.

The second phase was composed of two refinement iterations. In addition, the NASA-TLX instrument [32,33] was added to evaluate the workload of patients when performing tasks on the ePHoRT platform. The platform is designed to work with patients after hip replacement surgery. However, government regulations require a complete version of the platform in order to authorize experimentation. For this reason, the evaluation was carried out with the help of volunteers.

The first iteration began with the selection of three relevant interfaces from the exploratory prototype: Login, Questionnaire, and Exercise Assessment. These interfaces were selected using the following criteria [27]:

The login interface is the main gate to the ePHoRT platform. The interface also provides security of the resources assigned to the user and user identification for progress monitoring purposes.

The questionnaire interface must be completed by the user before doing the rehabilitation exercise. This interface defines whether the user is sufficiently healthy to practice.

The exercise assessment interface is one of the set of interfaces that presents the 129 physical exercises and provides instructions to the patient.

The results of the evaluation of the sample interfaces were organized as a list of improvements ordered by severity. These results constituted the baseline for the second iteration performed with an improved version of the sample interface. The second iteration began with a second usability evaluation with the aim of improving the results of the previous evaluation. In this iteration, the same inspection methods and instruments were used, and the results were catalogued and ordered according to their degree of severity.

The third iteration began with the design of 14 mock-ups based on the list of improvements obtained from the first phase. The fourth evaluation was carried out using a set of 17 web interfaces that were developed taking into consideration the results of the previous iterations.

Table 2 shows the final list of the evaluated mock-ups.

Following Nielsen’s recommendations, three to six usability experts collaborated in each iteration.

Table 1 shows the set of heuristics used.

A rating scale proposed by Nielsen was used to rate the severity of usability problems.

Table 3 shows the scale used for the heuristic evaluations.
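Ordering the findings by severity, as done at the end of each iteration, can be sketched as follows. This is a minimal illustration that assumes Table 3 follows Nielsen's five-point (0 to 4) severity scale; the `Finding` class, the `prioritize` helper, and the sample entries are hypothetical and do not reproduce the study's data.

```python
from dataclasses import dataclass

# Nielsen's 0-4 severity ratings (assumed to match Table 3).
SEVERITY_LABELS = {
    0: "not a usability problem",
    1: "cosmetic problem",
    2: "minor usability problem",
    3: "major usability problem",
    4: "usability catastrophe",
}


@dataclass(frozen=True)
class Finding:
    interface: str  # e.g. "Questionnaire"
    heuristic: str  # e.g. "HP1"
    severity: int   # rating on the 0-4 scale
    note: str       # evaluator's description


def prioritize(findings):
    """Return findings ordered from most to least severe (ties keep input order)."""
    for finding in findings:
        if finding.severity not in SEVERITY_LABELS:
            raise ValueError(f"severity out of range: {finding.severity}")
    return sorted(findings, key=lambda finding: -finding.severity)


if __name__ == "__main__":
    # Hypothetical findings, for illustration only.
    sample = [
        Finding("Login", "HP1", 2, "no indication of wrong password"),
        Finding("Questionnaire", "SH1", 4, "no confirmation after sending"),
        Finding("Questionnaire", "HP3", 3, "input is not validated"),
    ]
    for finding in prioritize(sample):
        print(SEVERITY_LABELS[finding.severity], "|", finding.interface, "|", finding.note)
```

Because Python's sort is stable, findings with the same rating keep the order in which the evaluators reported them.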

The usability evaluation was complemented with a workload assessment, and to perform this, an experiment was designed composed of three tasks with different levels of cognitive demand.

Table 4 shows the tasks designed for the experiment.

The experiment had 39 participants (32 males and seven females) in the third iteration and 12 participants (seven males and five females) in the fourth iteration, aged 18–40 years, all without experience in telerehabilitation platforms but with experience in the use of computers, computer games, and browsers. A baseline was established to measure the usability attributes. This was an important part of the usability evaluation, because the baseline determined the current status of the interfaces and identified usability deficiencies based on recognized heuristic principles for specific applications, which in this case were web interfaces. Following the recommendation to start the usability analysis with Nielsen’s 10 heuristic principles [24], these were complemented with three heuristics specific to this platform [27]. In an effort to further improve the usability of the ePHoRT platform, the process proposed for the first phase was replicated during the second phase (third and fourth iterations) [27]: (1) Sort and prioritize the results by severity; (2) Select a suitable methodology for developing improvements; (3) Make a change control request form and record the improvements to be made; (4) Re-evaluate and compare results; (5) Test and deploy the new improvements.

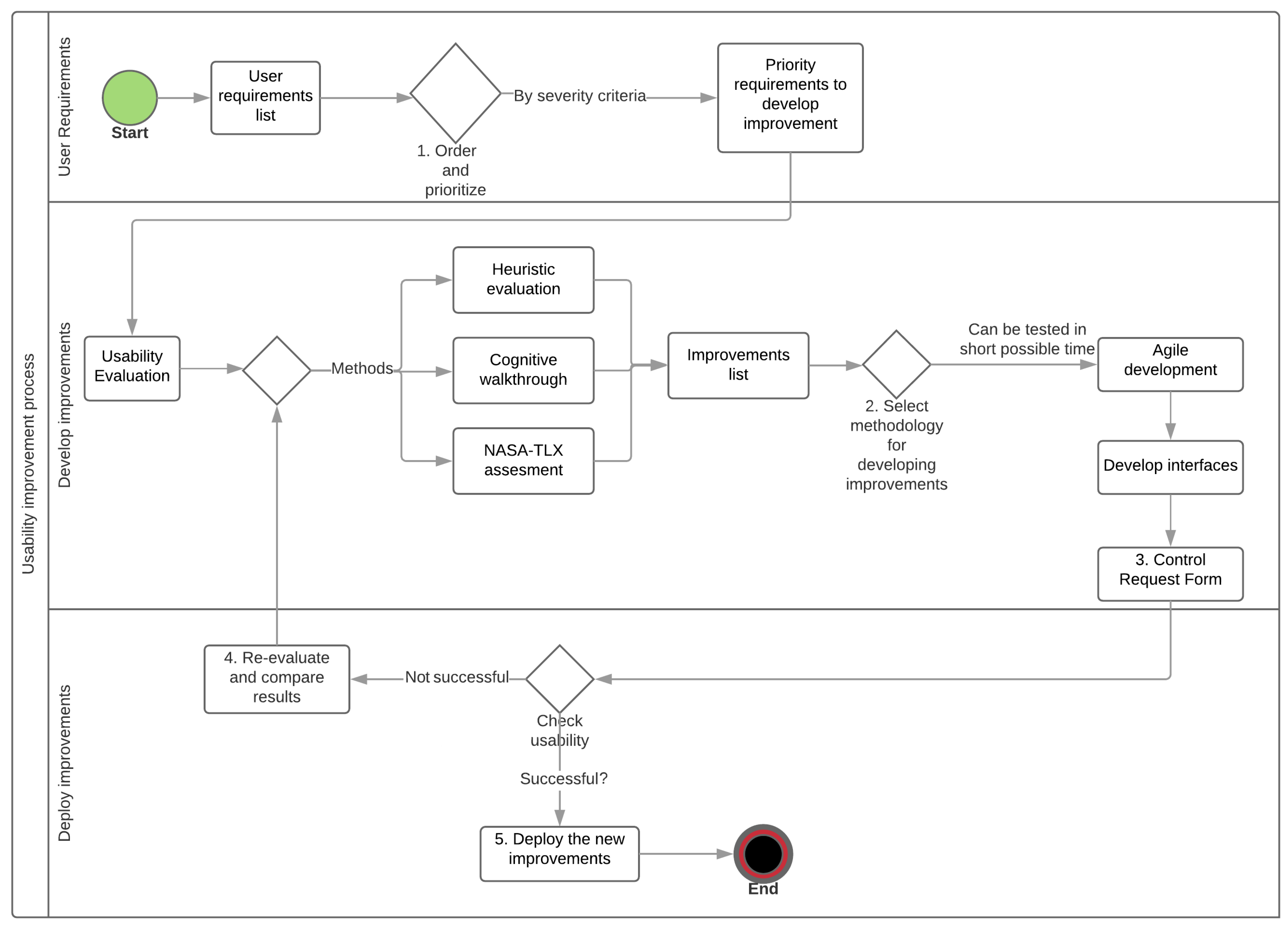

The proposed process was improved for this study. Figure 2 illustrates the new schema for the usability improvement method. The new process has three phases. In the first phase, called user requirements, a list of requirements is drawn up, ordered, and prioritized by level of severity. In the second phase, the development of improvements, the iterative schema uses the agile software development method [34] to improve the interfaces. In this way, the level of usability of the ePHoRT platform evolves through several iterations while a record of the changes made is kept. In the third phase, the deployment of improvements, the improved interfaces are evaluated to check whether the desired level of usability has been reached. If this is not the case, it is necessary to re-evaluate and compare the results.

3.1. Heuristic Evaluation

The heuristic evaluation was performed with the following protocol: (1) Mock-ups of the interfaces were developed using the Balsamiq software [35]. These mock-ups were presented in a document with simulated navigation using hyperlinks. The Balsamiq software provided controls to add functionality to the mock-up set, such as the interaction with the user and flow between pages; (2) A document for the usability experts was designed. This document contained the set of instructions and a guide to the heuristics defined for this study; (3) A document with the instructions for the tasks to be completed in each of the web interfaces of the platform was designed; (4) An online survey was developed. This survey consisted of a screenshot of each interface, a matrix to select one or several violations of the defined heuristics, and two text areas where the evaluators could explain the usability problem found and share their recommendations; (5) The results were processed and reported in the results section.

3.2. Cognitive Walkthrough

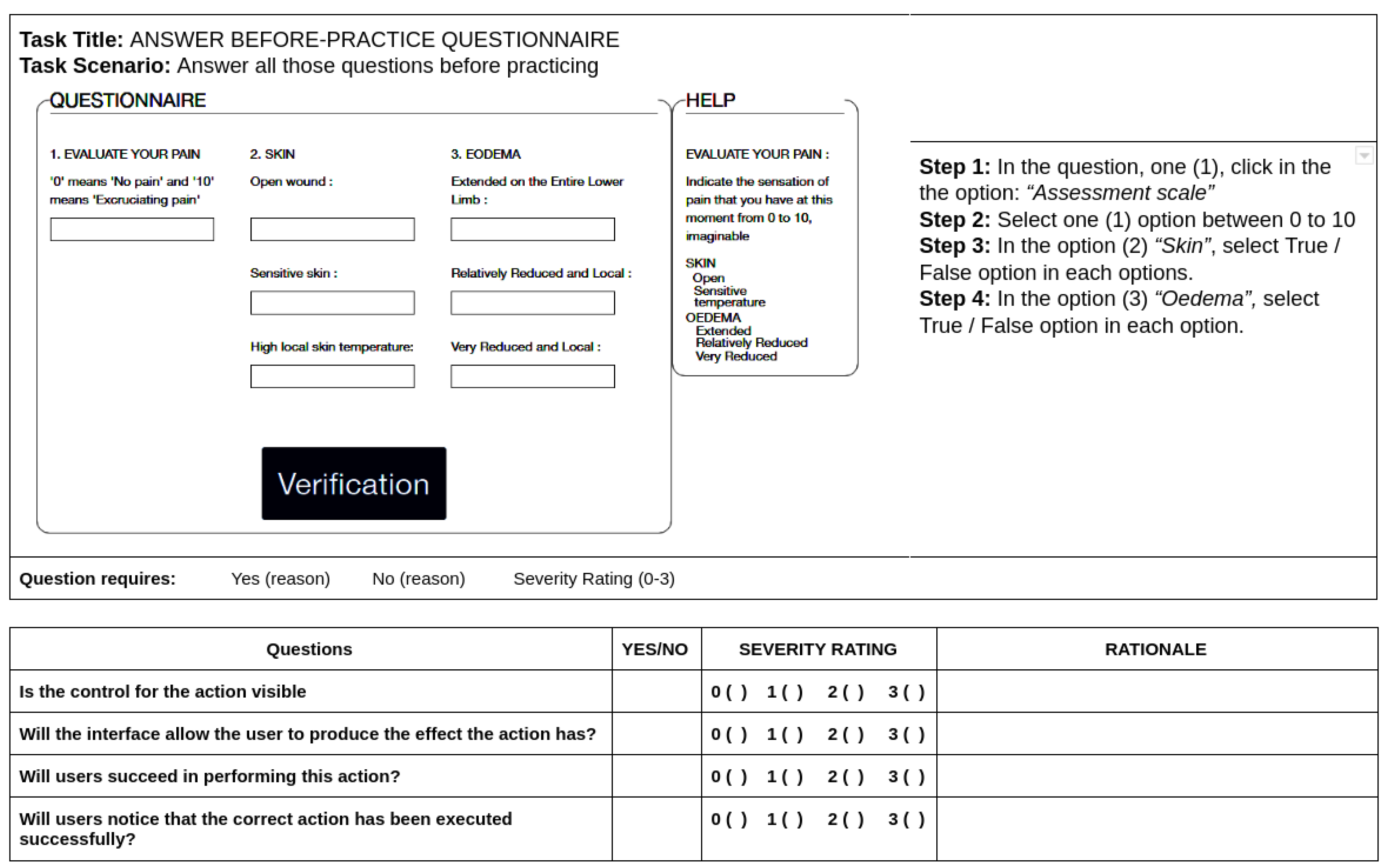

The cognitive walkthrough was performed with the following protocol: (1) A documentation package was developed containing the set of instructions, a guide for the survey and the tasks that the user would be expected to carry out; (2) A consent form was designed for volunteers taking part in the experiment; (3) An online demographic survey for experiment participants was created; (4) The cognitive walkthrough used the same mock-ups developed using the Balsamiq software. For the cognitive walkthrough, two online surveys were used to perform the evaluation. For the first and second iteration, a customized survey was designed.

Figure 3 presents the cognitive walkthrough survey instrument.

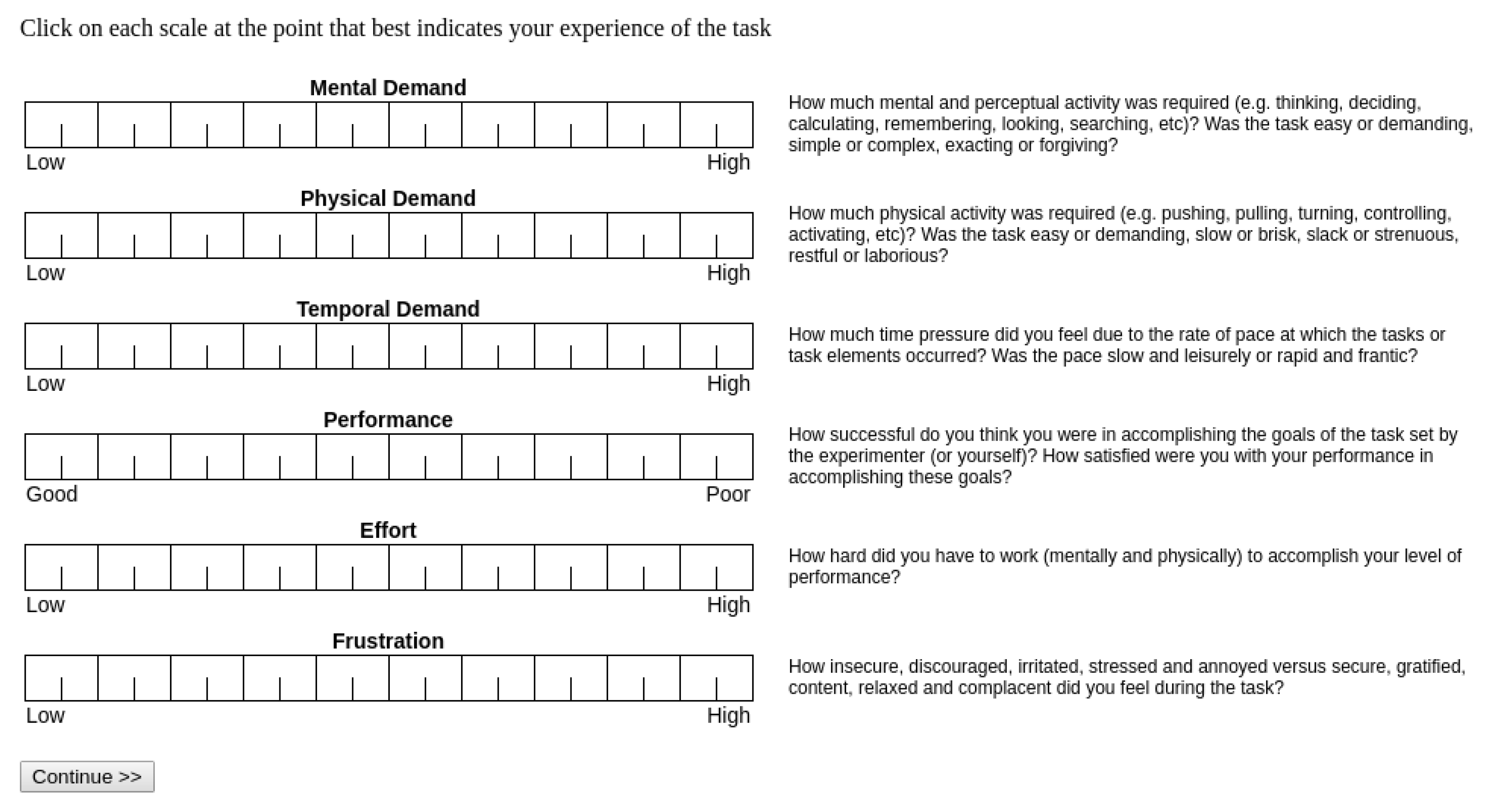

The instrument consisted of two sections. The first section comprised a screenshot of the questionnaire interface and a list of the steps to perform the task proposed for the users. The second section contained four questions related to the difficulty that the user experienced when completing the task, the degree of severity, and their rationale. In the third and fourth iterations, a workload assessment tool was used. The NASA Task Load Index (NASA-TLX) is a subjective workload assessment tool that allows the assessment of users working with various human-machine interface systems. NASA-TLX is composed of six dimensions: mental demand, physical demand, temporal demand, performance, effort, and frustration level; the relative contribution of each dimension is quantified by comparing the dimensions with one another [32,36]. The evaluation has two stages. In the first stage, the users assign a weighting to every dimension before the execution of the task. In the second stage, the users assign a score to every one of the six dimensions immediately after the execution of the task. With this background, this research used NASA-TLX with the goal of predicting the workload generated by the web interfaces, thus adding one more dimension of usability to the platform with the aim of guaranteeing greater patient safety.

Figure 4 shows the NASA-TLX assessment tool.
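The two-stage procedure maps onto a simple weighted average. The sketch below assumes the standard NASA-TLX scoring, in which the weights come from 15 pairwise comparisons (so each weight lies between 0 and 5 and the weights sum to 15) and each dimension is rated from 0 to 100. The function name and the sample values are hypothetical and are not taken from the study.

```python
DIMENSIONS = (
    "mental demand",
    "physical demand",
    "temporal demand",
    "performance",
    "effort",
    "frustration",
)


def weighted_tlx(ratings: dict, weights: dict) -> float:
    """Overall workload on a 0-100 scale: sum(weight * rating) / 15."""
    if set(ratings) != set(DIMENSIONS) or set(weights) != set(DIMENSIONS):
        raise ValueError("ratings and weights must cover all six dimensions")
    if any(not 0 <= ratings[d] <= 100 for d in DIMENSIONS):
        raise ValueError("ratings must lie between 0 and 100")
    if sum(weights.values()) != 15:
        raise ValueError("pairwise-comparison weights must sum to 15")
    return sum(weights[d] * ratings[d] for d in DIMENSIONS) / 15


if __name__ == "__main__":
    # Hypothetical participant, for illustration only.
    ratings = dict(zip(DIMENSIONS, (40, 10, 30, 50, 35, 20)))
    weights = dict(zip(DIMENSIONS, (4, 1, 3, 3, 3, 1)))
    print(f"weighted workload: {weighted_tlx(ratings, weights):.2f}/100")
```

A dimension with a weight of zero drops out of the score entirely, which is why the weighting stage matters as much as the ratings themselves.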

4. Results and Discussion

The results obtained with the sensor tests include 500 entries for each user, of which 200 corresponded to correct movements and 300 to incorrect movements. According to the proposed fuzzy model illustrated in Figure 5 and the movement detection scheme explained in Reference [29], patients performed an exercise with the correct corresponding angle only 18% of the time, and only 27% performed it at an acceptable speed. During the testing stage, the optimal exercise detection rate obtained was 97.4%, with a low false positive rate of 2.6%.
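Checking whether an exercise reaches the correct angle requires deriving a joint angle from the captured (X, Y, Z) coordinates. The sketch below shows one common way to do so, using the angle between the two segments that meet at a joint. It is an assumption for illustration and does not reproduce the fuzzy model of Reference [29]; the function name and the sample coordinates are hypothetical.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at joint b between the segments b->a and b->c.

    Each argument is an (x, y, z) coordinate triple.
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    norm_ba = math.sqrt(sum(v * v for v in ba))
    norm_bc = math.sqrt(sum(v * v for v in bc))
    if norm_ba == 0.0 or norm_bc == 0.0:
        raise ValueError("joints must not coincide")
    cosine = sum(x * y for x, y in zip(ba, bc)) / (norm_ba * norm_bc)
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding drift
    return math.degrees(math.acos(cosine))


if __name__ == "__main__":
    # Hypothetical hip, knee, and ankle positions in metres.
    hip, knee, ankle = (0.0, 1.0, 2.0), (0.0, 0.5, 2.0), (0.3, 0.5, 2.0)
    print(f"knee angle: {joint_angle(hip, knee, ankle):.1f} degrees")
```

A detection scheme could then compare such an angle with a target range for each exercise; the thresholds themselves would have to come from the clinical protocol.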

The usability improvements are presented through four iterations. Each iteration contributed with a list of improvements that were implemented according to the level of severity.

4.1. First Iteration

The first iteration began in November 2017 with a literature review to establish the methods and instruments to use for the usability evaluation. An exploratory prototype was designed, and the questionnaire interface was used as a starting point for the evaluation. In the questionnaire web interface, three experts found 39 heuristic violations distributed as follows: 12 usability catastrophes, 16 major usability problems, and 11 minor usability problems. The heuristics with higher incidence and severity were: HP1, HP11, SH1, and SH3.

For the first cognitive walkthrough evaluation, 25 users found 19 usability problems in the questionnaire interface. The usability problems were classified according to the features defined in the instrument and arranged by severity rating (catastrophe, major, minor). The most severe issues led to the following recommendations [27]:

Implement messages/animations that confirm that the form has been sent correctly.

Validate the consistency of the information entered.

Change lists to check-box controls for information input.

4.2. Second Iteration

The second iteration began in February 2018 and the same methods as in the first iteration were used. However, a new team of three experts was invited to participate, and the evaluation was performed using a mock-up. The improvement list obtained in the previous iteration was incorporated in the new mock-up set.

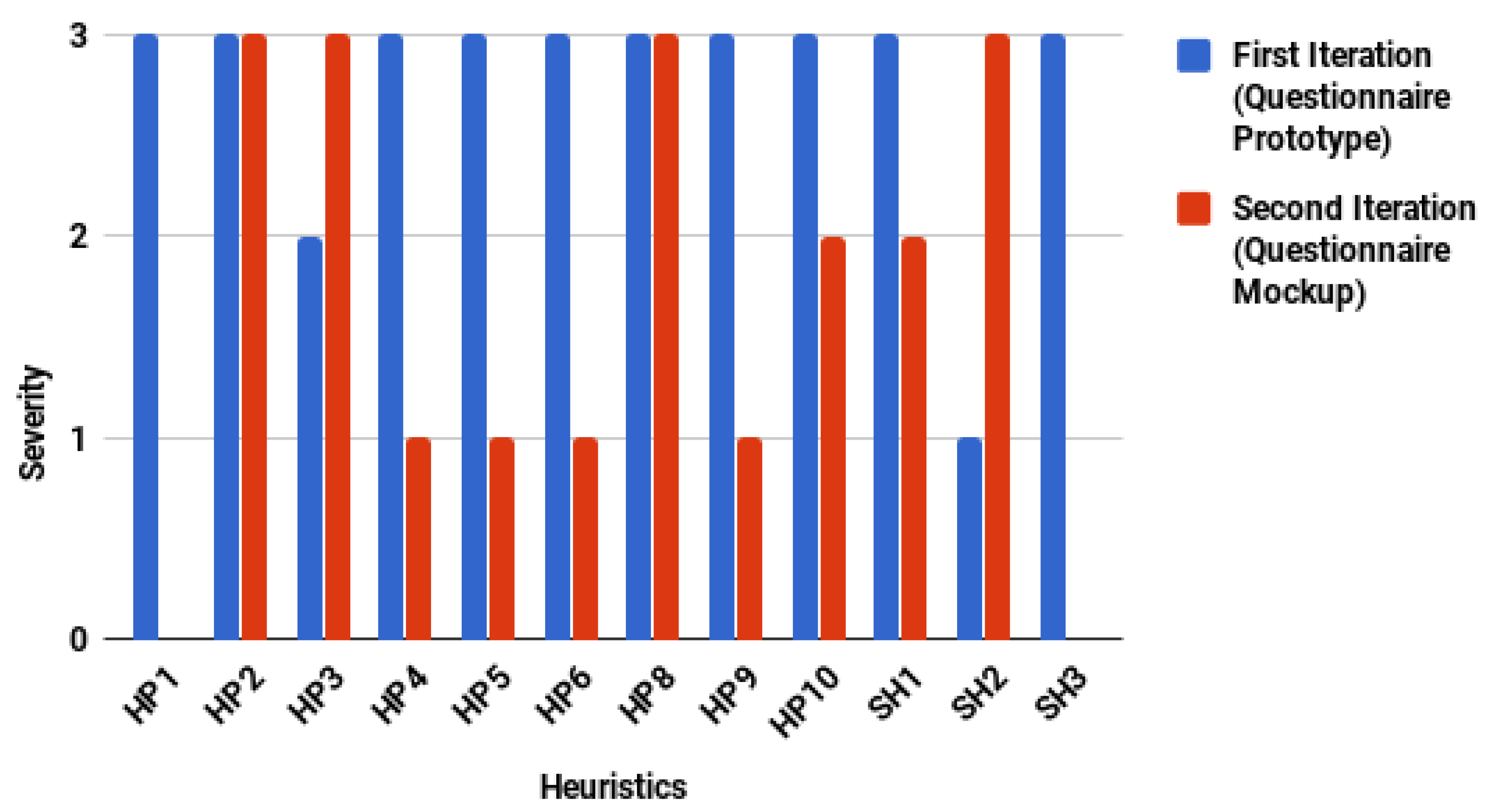

Figure 6 shows a comparative chart of the heuristic violations found in the two iterations for the exploratory prototype and mock-up of the questionnaire interface.

In general, the number of usability problems decreased. However, two atypical cases arose: (1) The number of usability problems increased for HP3 and SH2; (2) The number of usability problems did not vary for the heuristics HP2 and HP8. It is necessary to understand the experts’ rationale to explain these atypical cases. For the first case, the experts recommended increasing the font size and not saturating the space. For the second case, the experts recommended using appropriate terms (medical terms) and validation controls for information input (medical criteria). Thus, the recommendations of the experts reflected a refinement of the interface rather than an increase in the number of usability problems attributable to the construction of the mock-up.

For the cognitive walkthrough, a customized survey was used to guide participants in completing a list of the tasks proposed. Experts acted as observers and registered user actions performed to complete the tasks. For the experiment, 19 volunteers participated and four questions were defined:

Are the controls for the action visible? This question evaluated if the controls developed to complete a task were easily accessible or identified by the user.

Will the interface allow the user to produce the effect the action has? This question evaluated if there was concordance between the objective of the task and the objective reached by the user.

Will users succeed in performing this action? This question sought to quantify the number of users who fulfilled the objective of the task.

Will users notice that the correct action has been executed successfully? This question sought to quantify the number of users who identified that their actions performed to complete the task were correct.

Table 5 shows the comparative results for first and second iterations.

The results for the second iteration showed that the controls were visible and that 100% of users fulfilled the objective of the task. However, the interface facilitated concordance between the objective of the task and the objective reached by the user for only 67% of users.
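Percentages of this kind follow from tallying the yes/no outcome recorded for each participant on each of the four questions. The sketch below shows one way to compute them; the question keys, the function name, and the three sample participants are hypothetical and do not reproduce the data in Table 5.

```python
QUESTIONS = (
    "controls_visible",     # Are the controls for the action visible?
    "effect_matches_goal",  # Does the interface produce the intended effect?
    "action_succeeds",      # Did the user succeed in performing the action?
    "feedback_noticed",     # Did the user notice the action was correct?
)


def success_rates(responses):
    """Percentage of positive outcomes per question, rounded to one decimal."""
    if not responses:
        raise ValueError("at least one participant is required")
    return {
        question: round(
            100.0 * sum(1 for r in responses if r[question]) / len(responses), 1
        )
        for question in QUESTIONS
    }


if __name__ == "__main__":
    # Hypothetical observations for three participants.
    sample = [
        dict(zip(QUESTIONS, (True, True, True, True))),
        dict(zip(QUESTIONS, (True, False, True, True))),
        dict(zip(QUESTIONS, (True, True, True, False))),
    ]
    for question, rate in success_rates(sample).items():
        print(f"{question}: {rate}%")
```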

4.3. Third Iteration

The third iteration began in May 2018. In this iteration, 14 mock-ups were designed taking into consideration the feedback from the previous iteration. For the third iteration, three experts and 39 participants were invited. In the heuristic evaluation, there were a total of 92 heuristic violations in all the mock-ups. The questionnaire interface had only four usability problems of major and minor severity. The usability problems were decreasing in quantity and severity throughout the evaluation process, complying in this way with the proposed objective of improving the global usability of the ePHoRT platform.

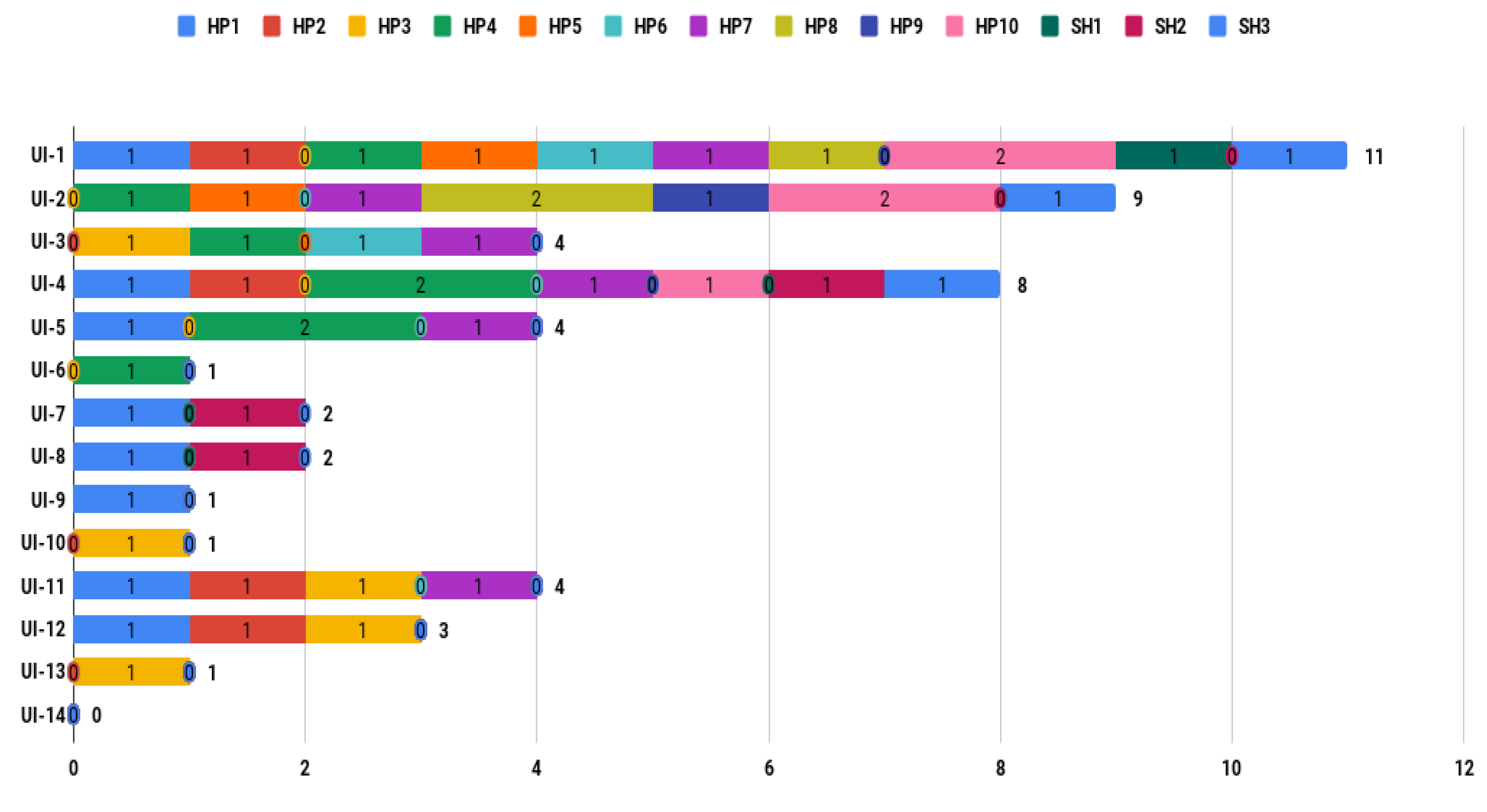

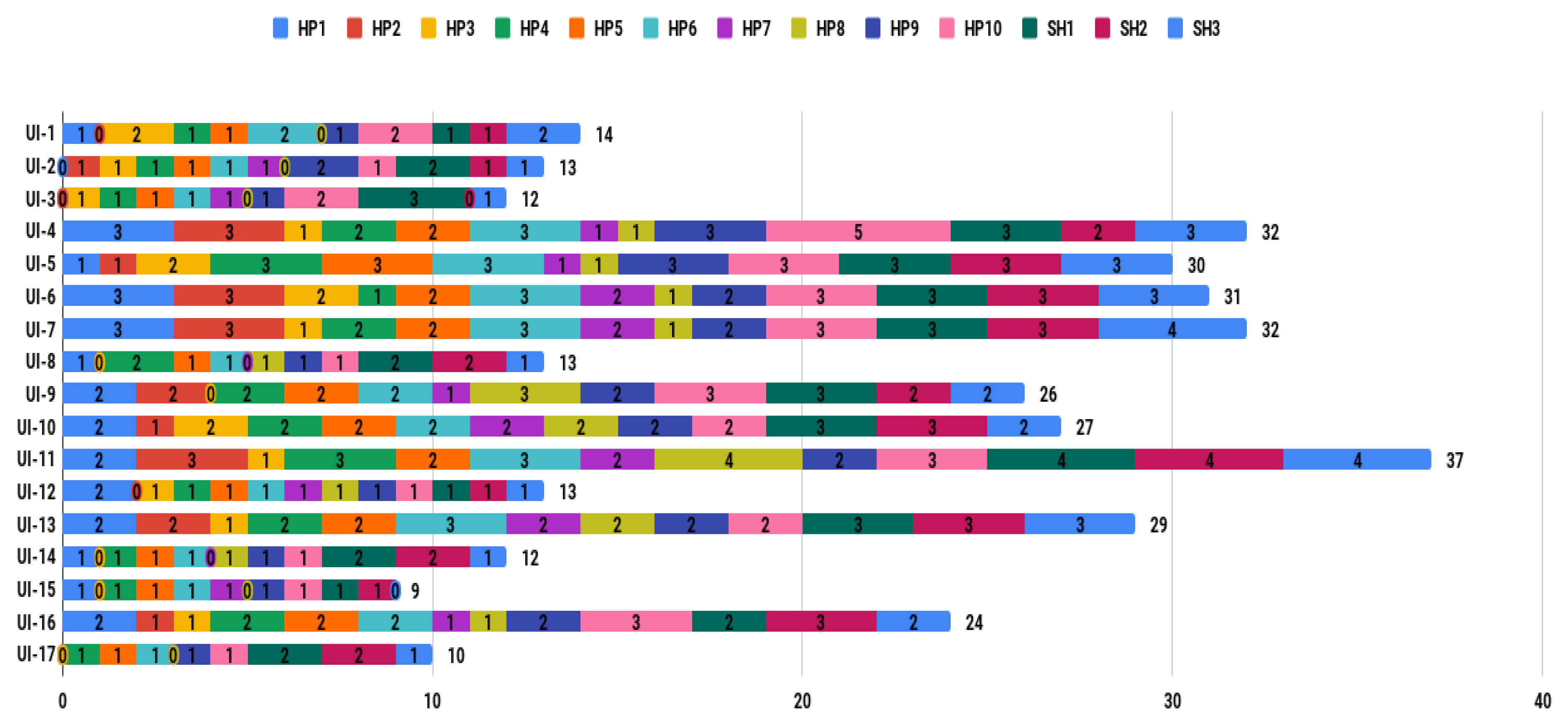

Figure 7 shows the frequency of heuristic violations per interface. According to the results obtained from the heuristic evaluation, the experts reported that eight interfaces (57.14%) did not achieve appropriate feedback from the users. Therefore, the platform incurred a clear violation of the HP1 heuristic. In addition, of these eight interfaces, the experts reported the HP10 heuristic violation at the UI-1, UI-2, and UI-4 interfaces. Finally, the experts considered that six interfaces (42.86%) were not flexible or efficient in their use.

The questionnaire interface was evaluated in all the iterations. It is the interface with the most controls, whereas the login interface had the most usability problems despite having very few controls. Comparing the evaluation results of these two interfaces clearly shows the positive effect of the iterative evaluation process.

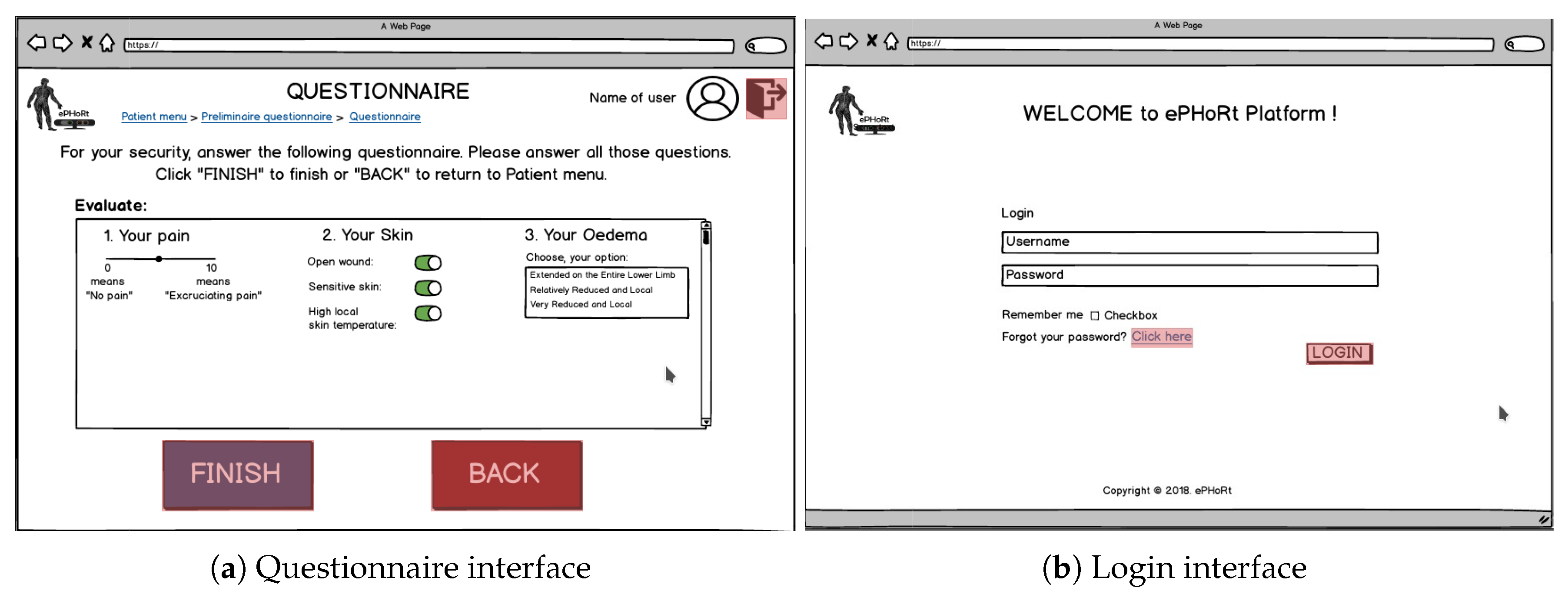

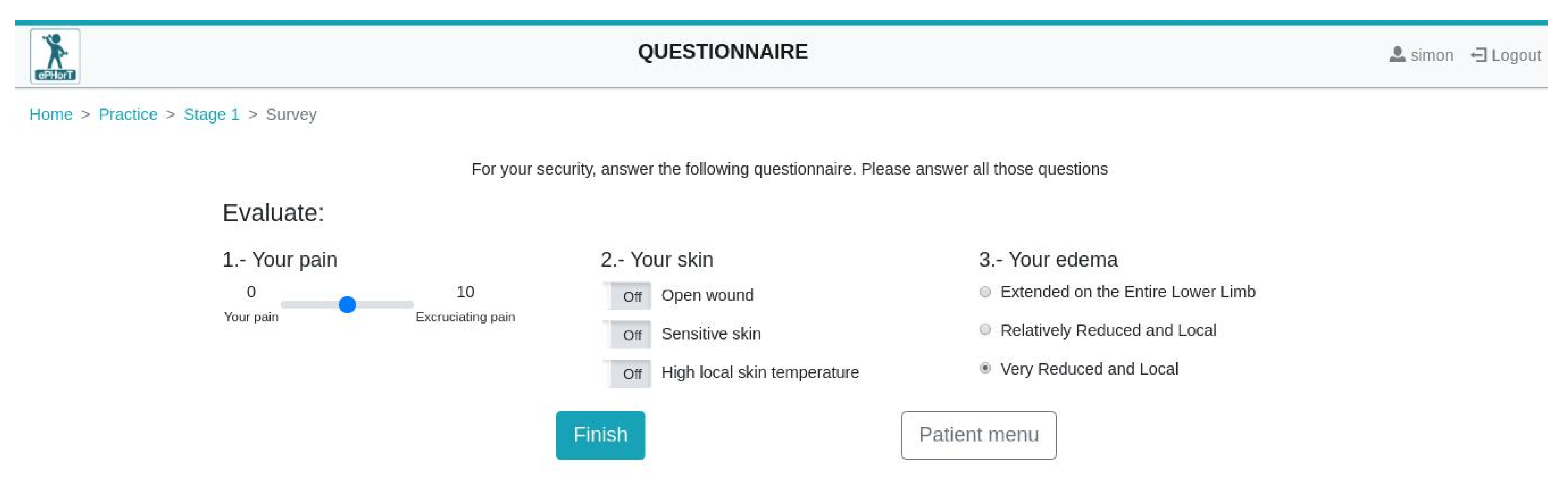

Figure 8 shows the questionnaire and login interfaces.

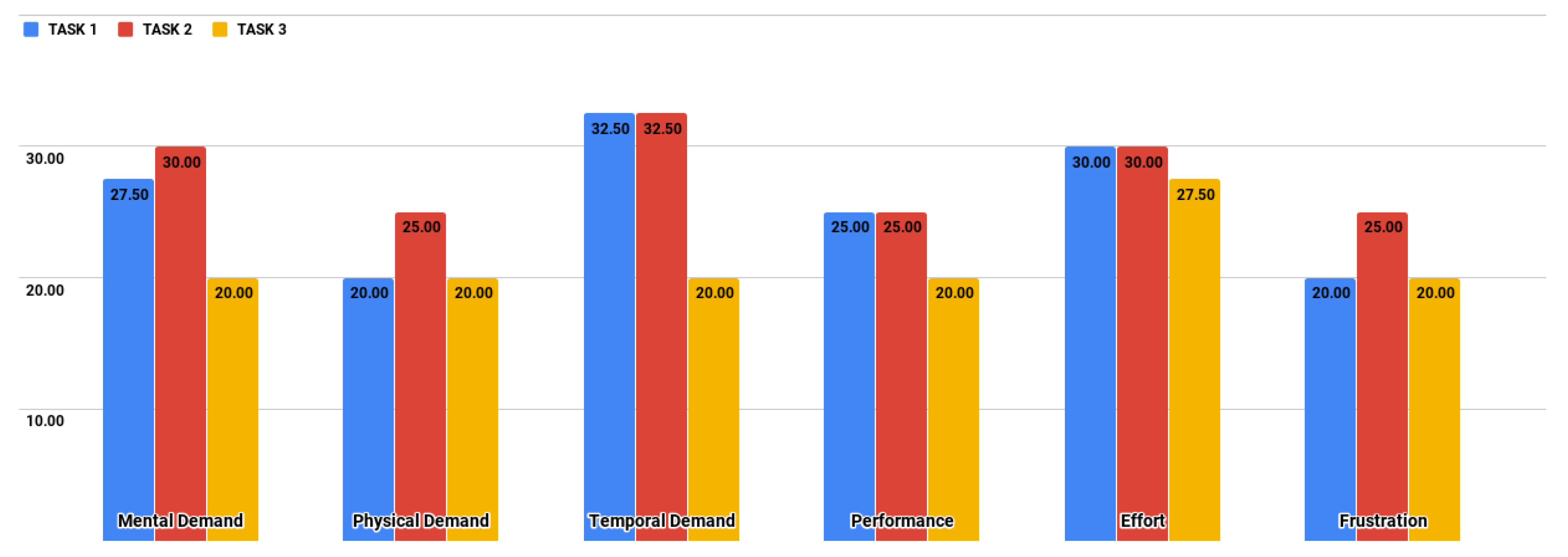

The evaluation was complemented with the NASA-TLX assessment in order to measure experimentally the cognitive workload of the users when using the ePHoRT platform. The users performed the three proposed tasks (Table 4), and the first results showed that task T1 (31.33/100) had the highest weighted global workload, followed by task T2 (30.67/100) and task T3 (22.17/100). The results indicated a low cognitive workload in the three proposed tasks. However, these results are only a prediction of what would be expected when performing the same evaluation on the final interfaces with real patients. As shown in Figure 9, task T2 places the greater workload on the users. The dimensions with the highest workload are Effort and Temporal Demand. The results indicate that in tasks T1 and T2 users needed a greater mental effort to complete a task on time as a result of the lack of feedback at the interface level.

4.4. Fourth Iteration

The fourth iteration began in August 2018. In this iteration, 17 mock-ups were re-designed taking into account the feedback from the previous iteration. The same usability evaluation methods and instruments as the previous iteration were used. Six experts and 12 participants (five female and seven male) were invited for the fourth iteration.

In the heuristic evaluation, the experts reported a total of 364 heuristic violations in the mock-ups. However, the questionnaire interface had only three usability problems of minor severity. This value had been decreasing in severity throughout the whole evaluation process.

Figure 10 shows the frequency of heuristic violations per interface.

The results show an increase in the number of usability problems. The increase is due to the greater number of interfaces evaluated and the greater number of experts who participated in the evaluation. The advantage of having a greater diversity of criteria was that a greater number of usability problems was detected. The disadvantage was the risk of the process becoming an infinite cycle of refinements. The Control Request Form proposed in a previous study [27] was used to avoid this risk. The form keeps a record of the changes performed in each iteration and prevented the recommendations of each new group of experts from becoming repetitive or contradictory. Experts attached greater importance to interfaces UI-9, UI-10, UI-11, UI-12, and UI-13, which are more closely related to the objectives of the project.

Table 6 shows the comparative results for iterations three and four regarding heuristic violations according to the severity scale described in Table 3. In the third iteration, the experts reported 38 minor usability problems (41.30%), 23 major usability problems (25.00%), and four usability catastrophes (4.35%) in the 14 mock-up interfaces. The three interfaces with the largest number of heuristic violations were UI-1 (11), UI-2 (9), and UI-4 (8). In the fourth iteration, the experts reported 141 minor usability problems (38.74%), 10 major usability problems (2.75%), and zero usability catastrophes in the 17 mock-up interfaces. The three interfaces with the largest number of heuristic violations were UI-11 (37), UI-7 (32), and UI-6 (31).
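The percentages above are shares of the total number of heuristic violations reported in each iteration (92 in the third and 364 in the fourth). The short sketch below reproduces that arithmetic from the counts given in the text; the dictionary layout and the function name are illustrative choices.

```python
# Counts reported in the text for iterations three and four.
REPORTED = {
    "third": {"total": 92, "minor": 38, "major": 23, "catastrophe": 4},
    "fourth": {"total": 364, "minor": 141, "major": 10, "catastrophe": 0},
}


def severity_shares(counts: dict) -> dict:
    """Percentage of all violations in each severity class (two decimals)."""
    total = counts["total"]
    return {
        level: round(100.0 * n / total, 2)
        for level, n in counts.items()
        if level != "total"
    }


if __name__ == "__main__":
    for iteration, counts in REPORTED.items():
        print(iteration, severity_shares(counts))
```

The three classes account for about 71% of the reported violations in the third iteration and about 41% in the fourth; the remainder is not broken down in the text.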

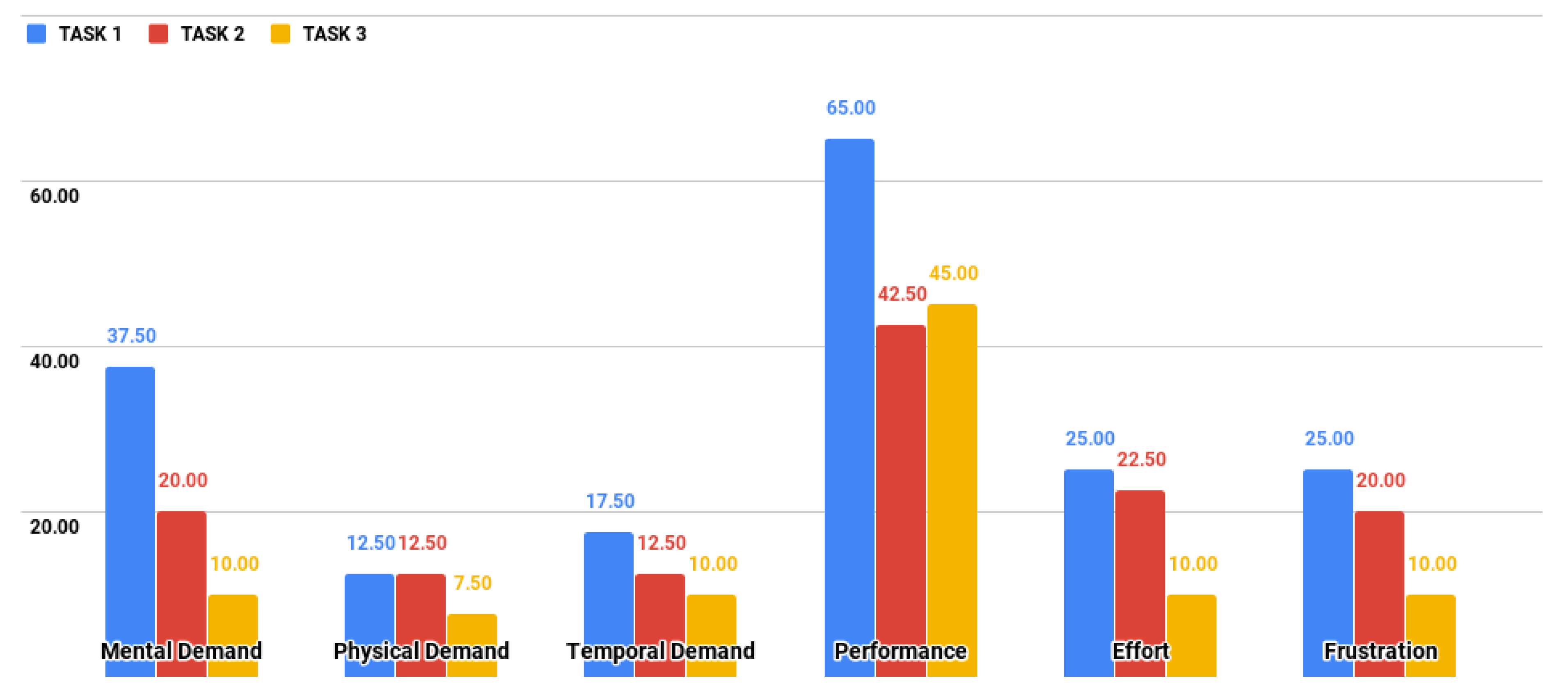

In the fourth iteration, the NASA-TLX test was also executed.

Table 7 shows the comparative results of iterations three and four for the user workload when performing the task T2 “Perform an active exercise” using the interface UI12 “Acute Rehabilitation: Active Exercise (3/3)”.

Figure 11 shows some details of the distribution of the global workload through the six dimensions of NASA-TLX. The most significant workload is located in the Performance dimension of the three tasks defined for the experiment. Task T1 had the highest workload through the six dimensions. Since the Performance dimension measures the degree of user dissatisfaction with their level of performance when completing a task, the authors deduce that this result is attributable to the inexperience of users with telerehabilitation systems and rehabilitation processes. This result does not necessarily mean that patients experience the same level of workload, since there are several studies on patients’ satisfaction with teletreatment that show patients were satisfied with the use of the technology [37], as long as an acceptable level of usability is reached during the operation of the platform.

The results show that users in the fourth iteration reported a higher degree of dissatisfaction with their level of performance when carrying out the three proposed tasks. The score rose from a mean of 23.33 (third iteration) to 50.66 (fourth iteration). Conversely, in the other five of the six dimensions, the scores decreased, which means a lower contribution to the workload. This variation could be due to two factors: (1) The difference of criteria, because it was a different group of users; (2) The redesign of the interfaces to reduce the time and effort required (third iteration), which affected the Performance dimension of NASA-TLX in the fourth iteration. The most convenient solution for the second factor seemed to be using the agile methodology, because the great diversity of patients using the platform makes it impossible to carry out refinements for each particular case.

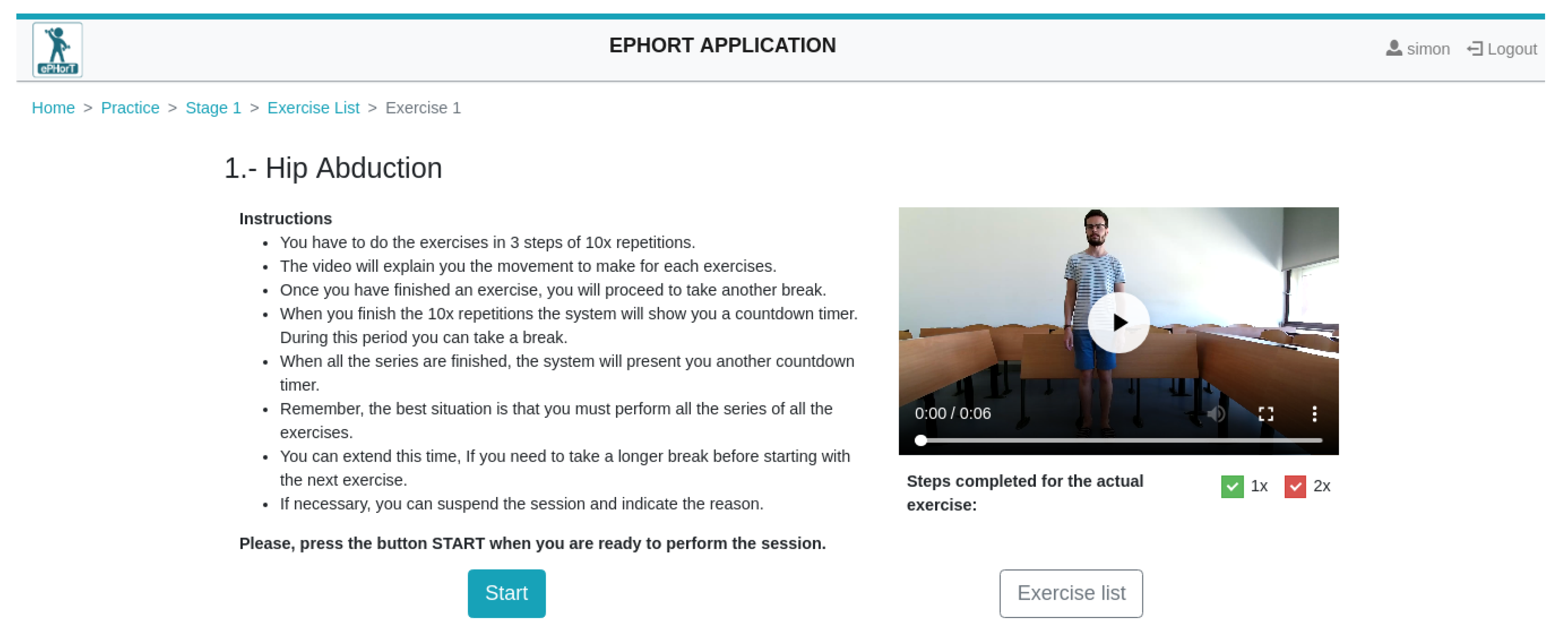

The results obtained from the heuristic evaluation, the cognitive walkthrough, and the NASA-TLX assessments were used as a guide to develop the functional prototype for the ePHoRT platform in this first development cycle. The questionnaire and exercise interfaces, shown as examples in Figure 12 and Figure 13, are part of the functional prototype.

The questionnaire interface allows the assessment of the patient’s current situation before performing the exercises. This evaluation is based on three parameters: (1) Pain level; (2) Condition of the skin at the operation site; and (3) State of oedema. This information is sent to the therapists for their evaluation and allows them to decide whether or not a specific set of exercises can be provided to the patient.

The exercise interface provides the patient with instructions/recommendations to start the exercise. The instructions can be in video, audio, or text. The patient is authorized to perform the physical exercises following the results of the questionnaire (Figure 12).

Applying NASA-TLX provided an additional level of usability testing besides the normal method of observing a user interacting with the platform [38]. In this analysis, applying NASA-TLX to a prototype serves to predict the minimum workload that a patient will have when using the interfaces and to identify the interfaces that generate a large workload, so that they can be refined before the platform is tested on real patients. Usability experts shared their recommendations for the improvement of the interfaces as follows:

Increase help tools that describe the operation of the controls of each interface.

Improve the contrast of colors used in the interfaces.

Implement error messages for invalid data entry.

In addition to the text that already appears at the top of each window, add a hypertext menu that informs users where they are located.

The messaging interface should allow attachment of images of the current state of the patient.

There should be some indication of whether the username and/or the password is correct.

For special users, there should be an alternative way to log in and enter the password.

For visually impaired people, there should be a way either to vocally access the interface or to increase the size of fields and labels on demand.

For future evaluation, it is recommended to use task-based usability inspection.
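
The workload estimate discussed in this section can be illustrated with the standard NASA-TLX scoring procedure: six subscales rated from 0 to 100, combined either as a plain mean (Raw TLX) or weighted by tallies from 15 pairwise comparisons. The following is a minimal Python sketch under assumptions: the function names, the example interface names, and the threshold used to flag a high-workload interface are hypothetical and are not taken from the study, which does not state here which scoring variant was applied.

```python
# The six NASA-TLX subscales.
SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def _check_ratings(ratings):
    """Validate that every subscale has a rating in the 0-100 range."""
    for name in SUBSCALES:
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"{name} rating must be between 0 and 100")


def raw_tlx(ratings):
    """Raw TLX: the unweighted mean of the six subscale ratings."""
    _check_ratings(ratings)
    return sum(ratings[name] for name in SUBSCALES) / len(SUBSCALES)


def weighted_tlx(ratings, weights):
    """Weighted TLX: ratings weighted by pairwise-comparison tallies.

    Each weight is the number of times a subscale was chosen in the
    15 pairwise comparisons, so the weights must sum to 15.
    """
    _check_ratings(ratings)
    if sum(weights[name] for name in SUBSCALES) != 15:
        raise ValueError("weights must sum to 15")
    return sum(ratings[name] * weights[name] for name in SUBSCALES) / 15


def flag_high_workload(scores_by_interface, threshold):
    """Return interface names at or above the threshold, highest score first.

    The threshold is a hypothetical cut-off chosen by the evaluator.
    """
    flagged = [(score, name) for name, score in scores_by_interface.items()
               if score >= threshold]
    return [name for score, name in sorted(flagged, reverse=True)]
```

Computing one score per interface and ranking them, as `flag_high_workload` does, mirrors the stated goal of identifying the interfaces that generate a large workload so they can be refined before testing with real patients.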

5. Conclusions

The purpose of the ePHoRT platform is to provide therapeutic assistance through a system focused on patients who have undergone hip arthroplasty. For this reason, it is important to have a usability assessment study that motivates patients to use the platform with safety, effectiveness, efficiency, and satisfaction, as suggested by the International Organization for Standardization [1].

The usability evaluation process started with a minimum set of methods and instruments to establish a baseline that allowed the identification of the improvements that needed to be incorporated in the final software application. The usability evaluation has to be an ordered, planned, and iterative process that keeps a historical record of the changes made, which allows the evolution and improvement of the usability of the software product to be verified within its development cycle. Performing the first iterations with an exploratory prototype allowed the research team to polish the logic of the process and to improve the usability of the platform without having to write a single line of code. Improving the usability of a platform within the software development cycle also contributes to the quality of the software product by establishing a more secure path to user satisfaction once the platform is operational. The feedback obtained from the experts during the usability evaluation process confirms that it is advisable to start a software development project with a usability analysis before coding the application, thereby avoiding rework and assuring the safe rehabilitation of the patient. The results from the first iteration motivated the refinement of the exploratory prototype into mock-ups based on the list of improvements.

Currently, there is an ongoing discussion regarding the advantages and disadvantages of testing software in the laboratory [39] as opposed to when the development cycle is over [40]. This study considers these two approaches complementary; they must be applied according to the user’s needs and intended context of use. For this reason, this study used exploratory prototypes, and the testing was performed in the lab and during the development cycle, with the aim of protecting the integrity of the patients. The first contribution of this study is to propose a cyclical, orderly process for the development of improvements and the keeping of a history to avoid conflicts between the different criteria of the experts obtained in each of the iterations. The second contribution is to present the process of combining the heuristic evaluation, cognitive walkthrough, and NASA-TLX assessment to identify and eliminate usability barriers and estimate the workload that a patient using the platform will experience. The outcomes of each iteration provide the developers and e-Health stakeholders with relevant feedback, a usability improvement method to optimize the implementation of telerehabilitation platforms, and a prediction of the cognitive workload. In a complementary study [33], the authors provide empirical evidence that the IBM Computer System Usability Questionnaire (CSUQ) is another assessment that can be added to the proposed process to measure the perceived usability of an e-Health platform.

Future studies should perform a cost-benefit analysis that estimates the cost of applying a usability evaluation in each iteration throughout the development of the software. Metrics such as the platform cost, the cognitive walkthrough evaluators’ cost, the heuristic experts’ cost, and the materials cost should be considered. Another idea for future studies is to perform complementary iterations of usability evaluation with respect to specific attributes based on public health standards, for example, the biomedical records of the evolution of patients when applying the therapeutic protocol.

Some methods and tools have been developed to improve the usability of web platforms; for example, this study was based on mock-ups, which allowed an agile assessment of usability without putting the patient’s safety at risk. Nevertheless, future studies will apply further methods and tools to perform a more in-depth usability evaluation of an advanced version of the prototype. For these final evaluations, it will be mandatory to involve real patients and analyze the system usability during the completion of an entire rehabilitation program.