Respiration Monitoring for Premature Neonates in NICU

Abstract

1. Introduction

2. Related Work

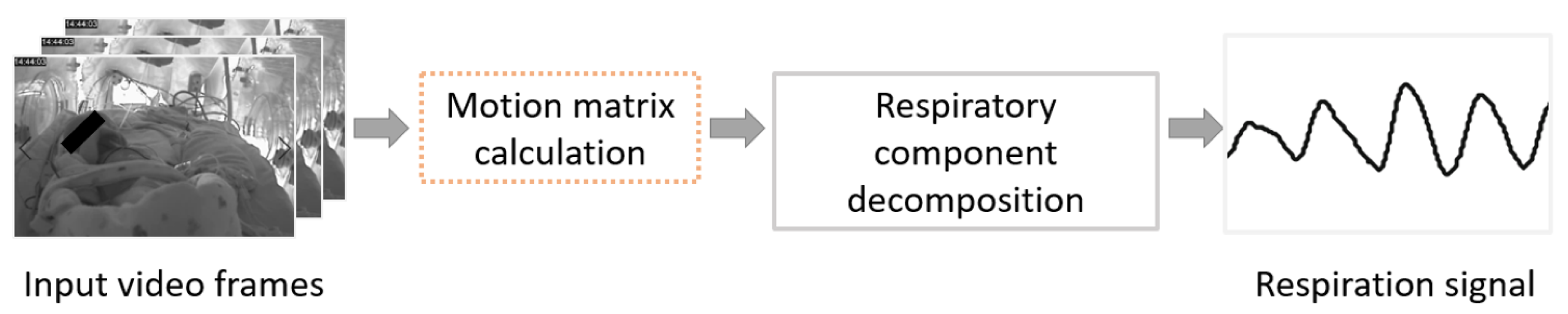

3. Methods

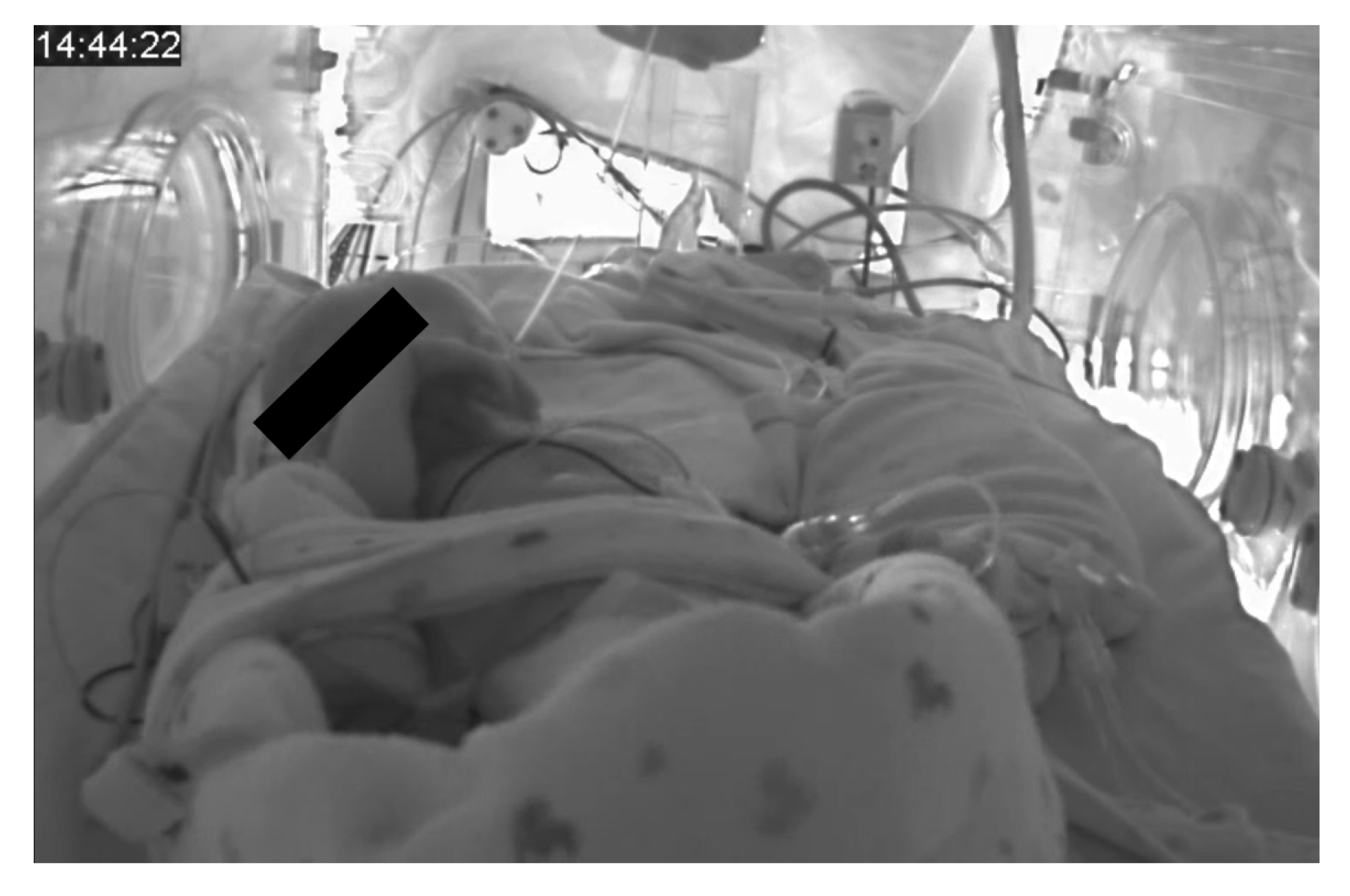

3.1. Material

3.2. Motion Matrix Calculation

3.2.1. Conventional Optical Flow

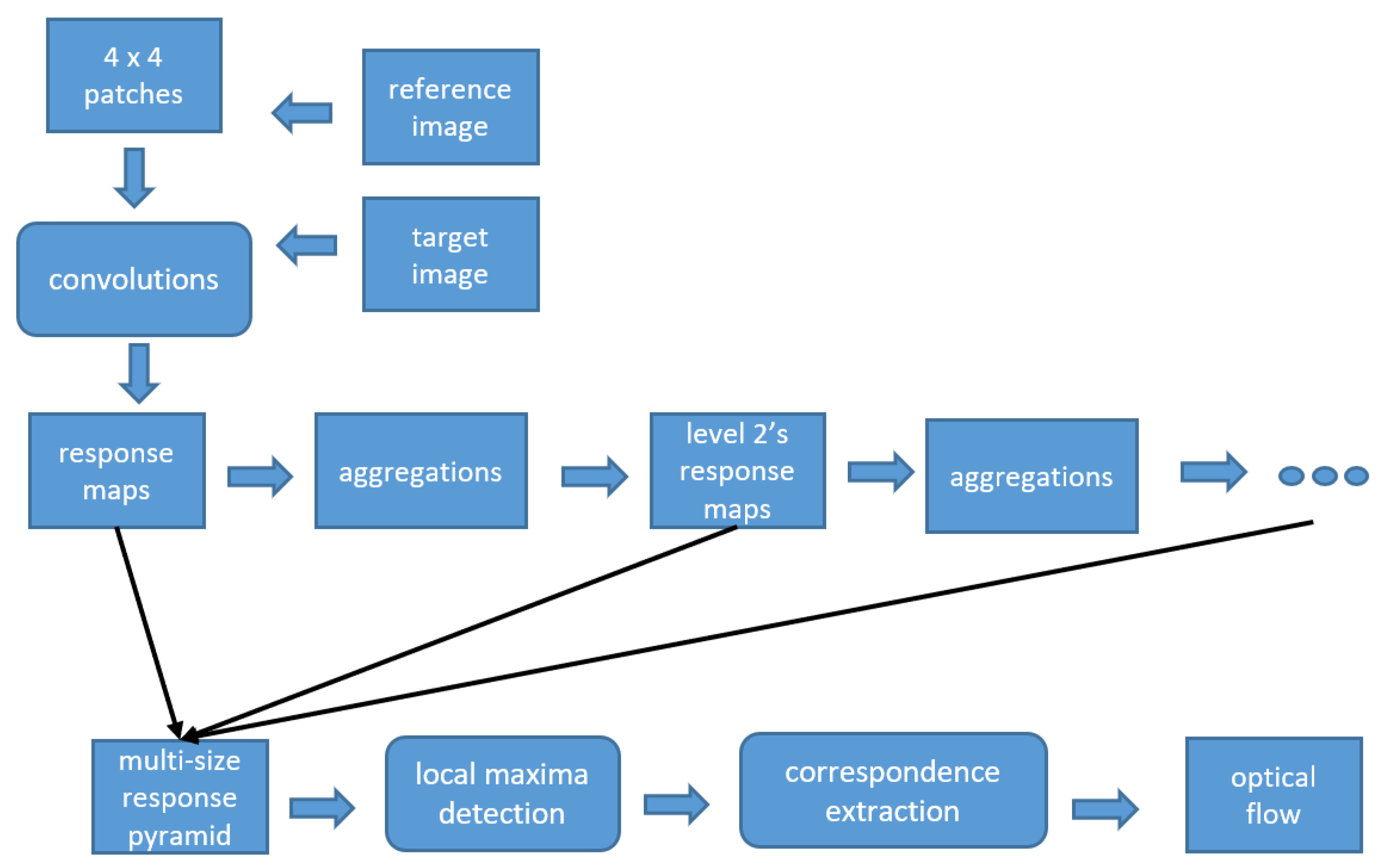

3.2.2. Deep Flow

3.3. Respiratory Description

3.4. Evaluation

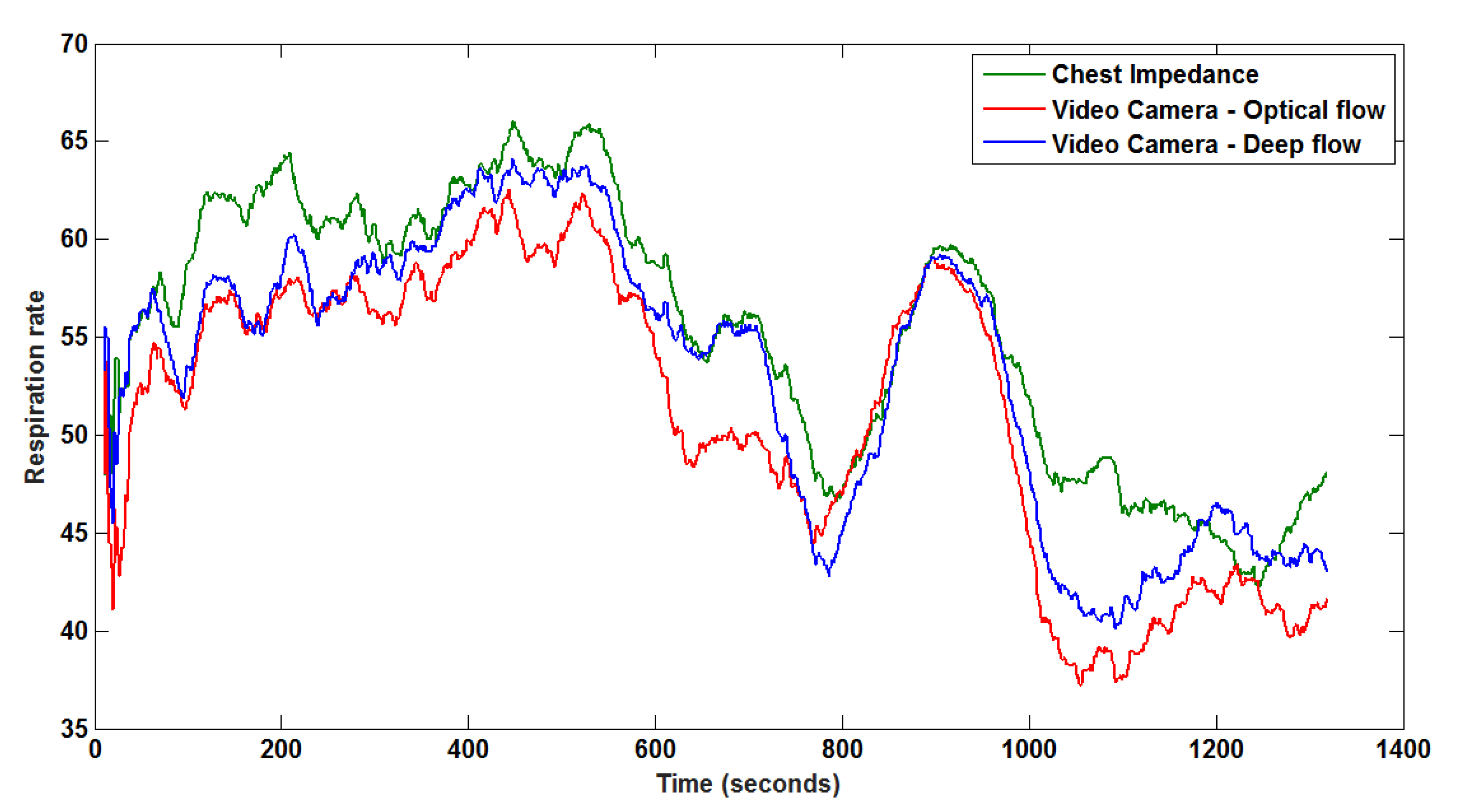

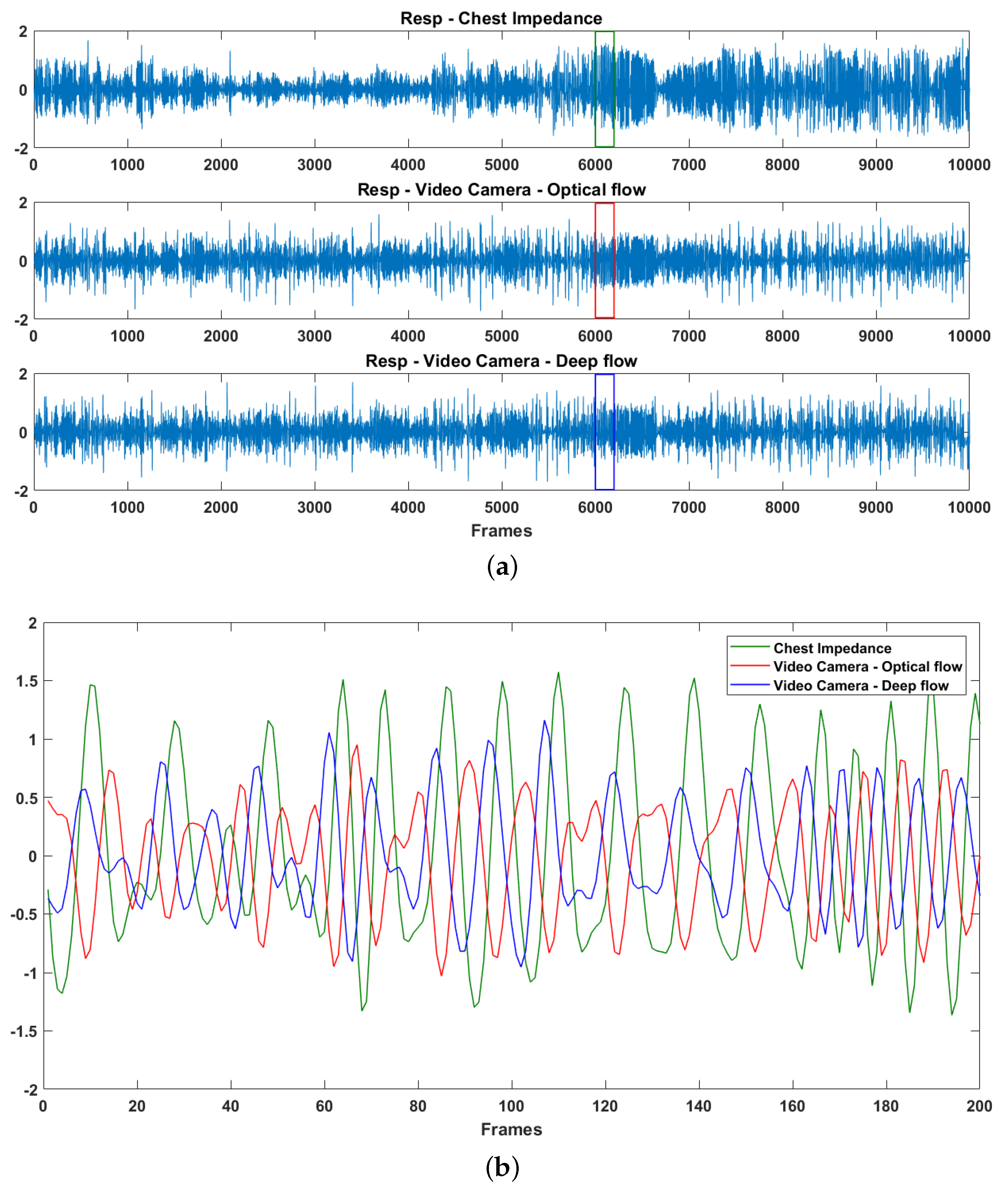

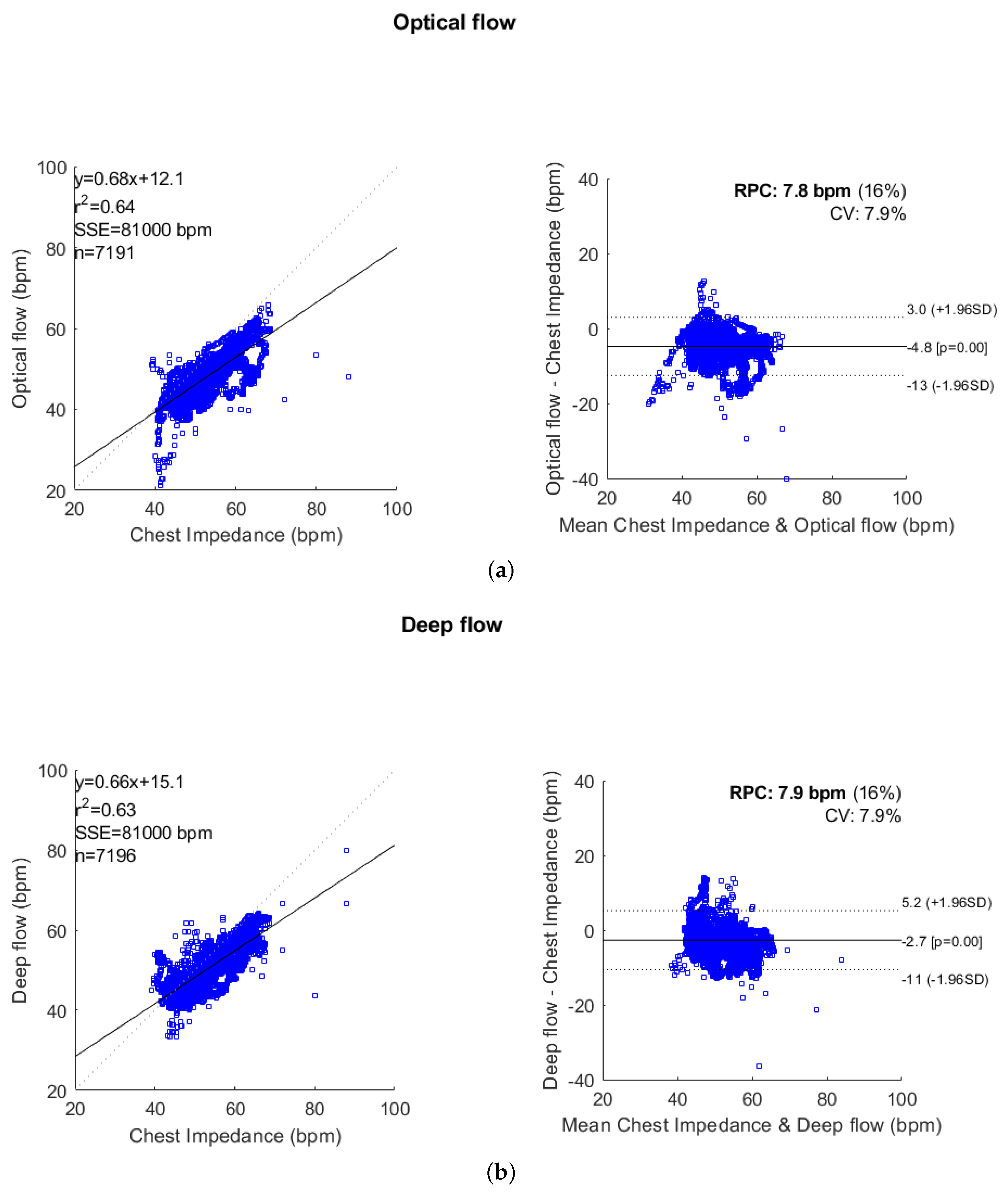

4. Experimental Results and Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Patient ID | Duration (h:m:s) | Reference (CI), mean ± std | Optical Flow, mean ± std | Deep Flow, mean ± std |
|---|---|---|---|---|
| 1 | 00:50:13 | | | |
| 2 | 00:22:00 | | | |
| 3 | 00:26:54 | | | |
| 4 | 00:09:22 | | | |
| 5 | 00:06:15 | | | |
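
As a small worked example, the per-patient recording durations listed in the table above can be summed to give the total length of the recordings. The sketch below assumes the `h:m:s` strings are exactly as listed; `parse_hms` is a hypothetical helper name introduced only for this illustration.

```python
from datetime import timedelta

# Durations (h:m:s) copied from the table above, patients 1 to 5.
durations = ["00:50:13", "00:22:00", "00:26:54", "00:09:22", "00:06:15"]


def parse_hms(text):
    """Convert an 'h:m:s' string into a timedelta (hypothetical helper)."""
    hours, minutes, seconds = (int(part) for part in text.split(":"))
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


# Sum all recordings, starting from a zero-length timedelta.
total = sum((parse_hms(d) for d in durations), timedelta())
print(total)  # 1:54:44
```

Under these assumptions the five recordings add up to just under two hours.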

| Patient ID | RMSE (Optical Flow) | RMSE (Deep Flow) | CC coefficient (Optical Flow) | CC coefficient (Deep Flow) |
|---|---|---|---|---|
| 1 | 5.09 | 4.32 | 0.85 | 0.82 |
| 2 | 4.94 | 3.10 | 0.94 | 0.95 |
| 3 | 3.86 | 3.51 | 0.73 | 0.75 |
| 4 | 5.75 | 5.11 | 0.47 | 0.52 |
| 5 | 10.85 | 6.71 | 0.49 | 0.66 |
| Average | 6.10 | 4.55 | 0.70 | 0.74 |
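
The two metrics in the table above can be sketched as follows. This is a minimal illustration, not the authors' evaluation code: it assumes RMSE is the usual root-mean-square error between an estimated and a reference series, and that "CC" denotes a Pearson-style correlation coefficient, which the table does not state explicitly. The function names `rmse` and `pearson_cc` are hypothetical. The last part only recomputes the "Average" row from the per-patient values in the table.

```python
import math


def rmse(estimate, reference):
    """Root-mean-square error between two equally long sequences."""
    if len(estimate) != len(reference):
        raise ValueError("sequences must have the same length")
    squared = ((e - r) ** 2 for e, r in zip(estimate, reference))
    return math.sqrt(sum(squared) / len(estimate))


def pearson_cc(x, y):
    """Pearson correlation coefficient (assumed meaning of 'CC')."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Per-patient values copied from the table above (patients 1 to 5).
table = {
    "rmse_optical_flow": [5.09, 4.94, 3.86, 5.75, 10.85],
    "rmse_deep_flow": [4.32, 3.10, 3.51, 5.11, 6.71],
    "cc_optical_flow": [0.85, 0.94, 0.73, 0.47, 0.49],
    "cc_deep_flow": [0.82, 0.95, 0.75, 0.52, 0.66],
}

# Recompute the "Average" row as the plain mean over the five patients.
averages = {name: sum(vals) / len(vals) for name, vals in table.items()}
for name, value in averages.items():
    print(f"{name}: {value:.2f}")
```

The recomputed means agree with the "Average" row to two decimals, so that row is consistent with a simple unweighted mean over patients rather than a duration-weighted one.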

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sun, Y.; Wang, W.; Long, X.; Meftah, M.; Tan, T.; Shan, C.; Aarts, R.M.; de With, P.H.N. Respiration Monitoring for Premature Neonates in NICU. Appl. Sci. 2019, 9, 5246. https://doi.org/10.3390/app9235246
