1. Introduction

Discriminating speech from singing is not an easy task even for humans, who need segments approximately one second long to discriminate singing and speaking voices with more than 95% accuracy [1]. When speech is repeated, rhythm patterns appear and the repeated spoken segments are perceived as singing [2,3]. Even though singing and speech are closely related and difficult for humans to distinguish, speech technologies developed for spoken speech are not directly applicable to sung speech. In general, results deteriorate heavily when classic automatic speech recognition [4] or phonetic alignment [5] methods are applied to singing. Therefore, when dealing with recordings that contain both types of voice, a tool is needed to identify them so that the technique suitable for each type can be applied. Many works have addressed the problem of speech and music discrimination [6,7,8,9], but these techniques are not directly applicable to a cappella singing because they exploit the presence of music.

There are two different tasks related to the automatic discrimination of singing and speech: classification, where each segment (or file) belongs to only one class, and segmentation, where both classes are present in the same file and have to be first separated and then classified. For the classification step, short-term and long-term features extracted from the audio signal have traditionally been used. With short-term features, the signal is classified at frame level and the decisions for the individual frames must then be combined to obtain a single decision for each segment. Most works, however, rely on long-term parameters related to pitch to discriminate speech and singing. For instance, the distributions of different pitch-based features were tested in [10], and most of them correctly classified more than half of the database. The best results in that work were obtained with the autocorrelation of pitch between syllables. Pitch- and energy-related features were also fed to a multi-layer Support Vector Machine (SVM) in [11], and the pitch-related features contributed the most to the discrimination. Thomson [12] proposed using the discrete Fourier transform of the pitch histogram to estimate the distribution of pitch deviations, which should have lower variance for singing than for speech, and obtained very good results.
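
The combination of frame-level decisions into one decision per segment, mentioned above for short-term features, can be illustrated with a simple majority vote. This is only a minimal sketch under our own assumptions: the cited works may combine frame decisions differently (for example, by accumulating likelihoods), and the function name `segment_decision` and the label strings are hypothetical.

```python
from collections import Counter
from typing import Sequence


def segment_decision(frame_labels: Sequence[str]) -> str:
    """Combine per-frame labels into one segment label by majority vote."""
    if not frame_labels:
        raise ValueError("segment has no frames")
    counts = Counter(frame_labels)
    # most_common(1) returns [(label, count)] for the most frequent label;
    # on a tie, the label that appeared first in the sequence wins.
    return counts.most_common(1)[0][0]


# Hypothetical frame-level classifier output for one segment.
frames = ["speech", "singing", "singing", "speech", "singing"]
print(segment_decision(frames))  # singing
```

A vote of this kind ignores the confidence of each frame decision, which is one reason why segment-level (long-term) features are often preferred.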

Some works combine short-term features related to the spectral envelope and long-term features related to prosody to train Gaussian Mixture Models (GMM) that distinguish speech from singing. In [1], the authors found that short-term features work better for segments shorter than 1 s and that pitch-related features obtain the best results for segments longer than 1 s. In contrast, the work in [13] finds that spectral features work better than pitch-based ones when used alone on its database, which is composed of segments between 17 and 26 s long. Regardless, the best results were obtained when both types of features were combined. In [14], a large set of 276 attributes related to spectral envelope, pitch, harmonic-to-noise ratio and other characteristics is combined with an ensemble of classifiers to classify singing voice, speech and polyphonic music, with very good results.

As mentioned before, a related problem that has been studied in more depth is the separation of the singing voice from the background music. Different strategies have been applied in this area. Many works are based on pitch-related information. For instance, with the goal of melody extraction, [15] proposes a joint detection and classification network that simultaneously estimates pitch and detects the singing voice segments in a music signal. Pitch information is also exploited iteratively to detect singing voice in a music signal using a tandem algorithm in [16]. Other works apply voice activity detection, like [17], where the authors propose separating the singing voice from the instrumental accompaniment using vocal activity information derived with robust principal component analysis. Spectral information has also been used, as in [18], where Long Short-Term Memory (LSTM) networks are applied to separate singing from the musical accompaniment online. Deep neural networks are also considered in [19], where a Bidirectional Long Short-Term Memory (BLSTM) network is applied to enhanced vocal and music components separated by Harmonic/Percussive Source Separation [20]. However, these techniques usually exploit the differences between vocal signals and signals produced by musical instruments. In general, they are not well suited to speech and singing separation [11,12], which is the problem we want to address in this work.

We are interested in singing and speech segmentation because it is a basic tool needed to process recordings of bertsolarism. Bertsolarism (bertsolaritza in Basque) is the art of improvising sung poems live, typical of the Basque Country. The host of the bertsolarism show proposes the topic for the improvised verses and introduces the singer. The singer then has to create a rhymed verse about the proposed topic and sing it with a melody that fits the defined metre. The singers are called bertsolari and they have to perform a cappella, without the support of any musical instrument. The main goal is to produce good-quality verses rather than to sing in tune, so most bertsolaris are not professional singers. Bertsolarism associations provide access to many recorded live sessions of bertsolaritza together with the corresponding transcriptions and metadata. The recordings of the live shows include speech from the host, sung verses and overlapping applause; consequently, a good segmentation system is required to isolate the segments of interest.

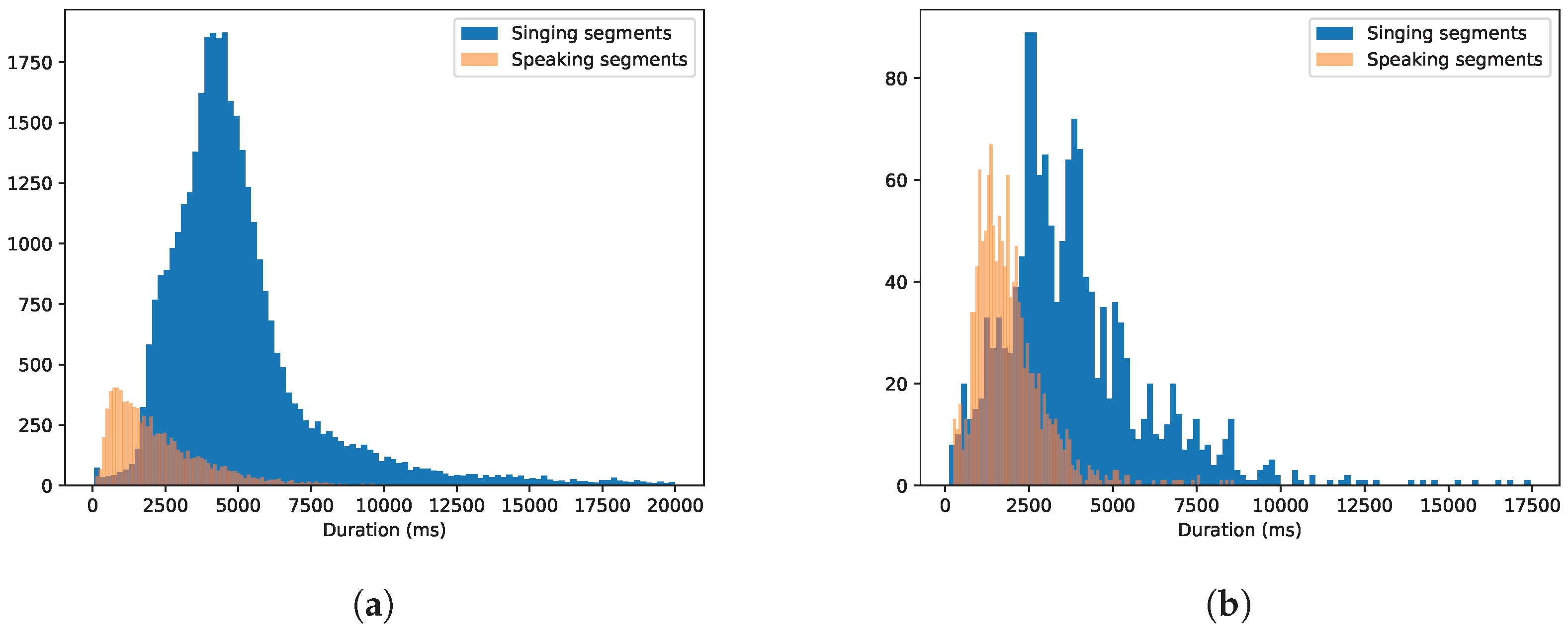

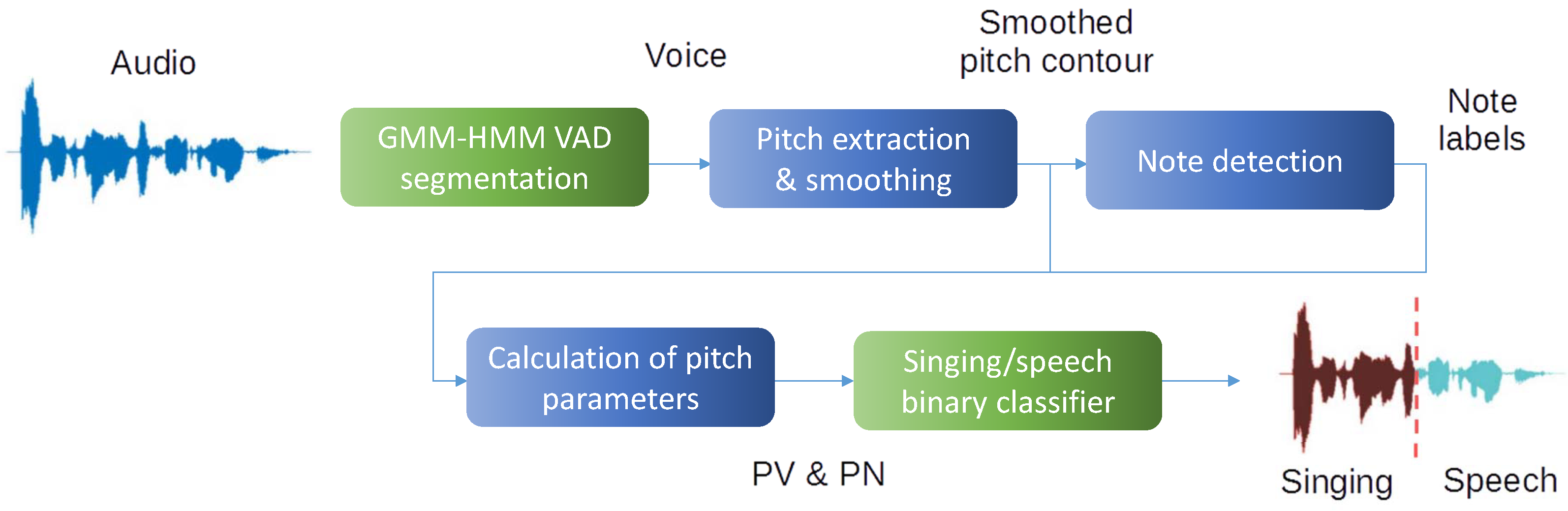

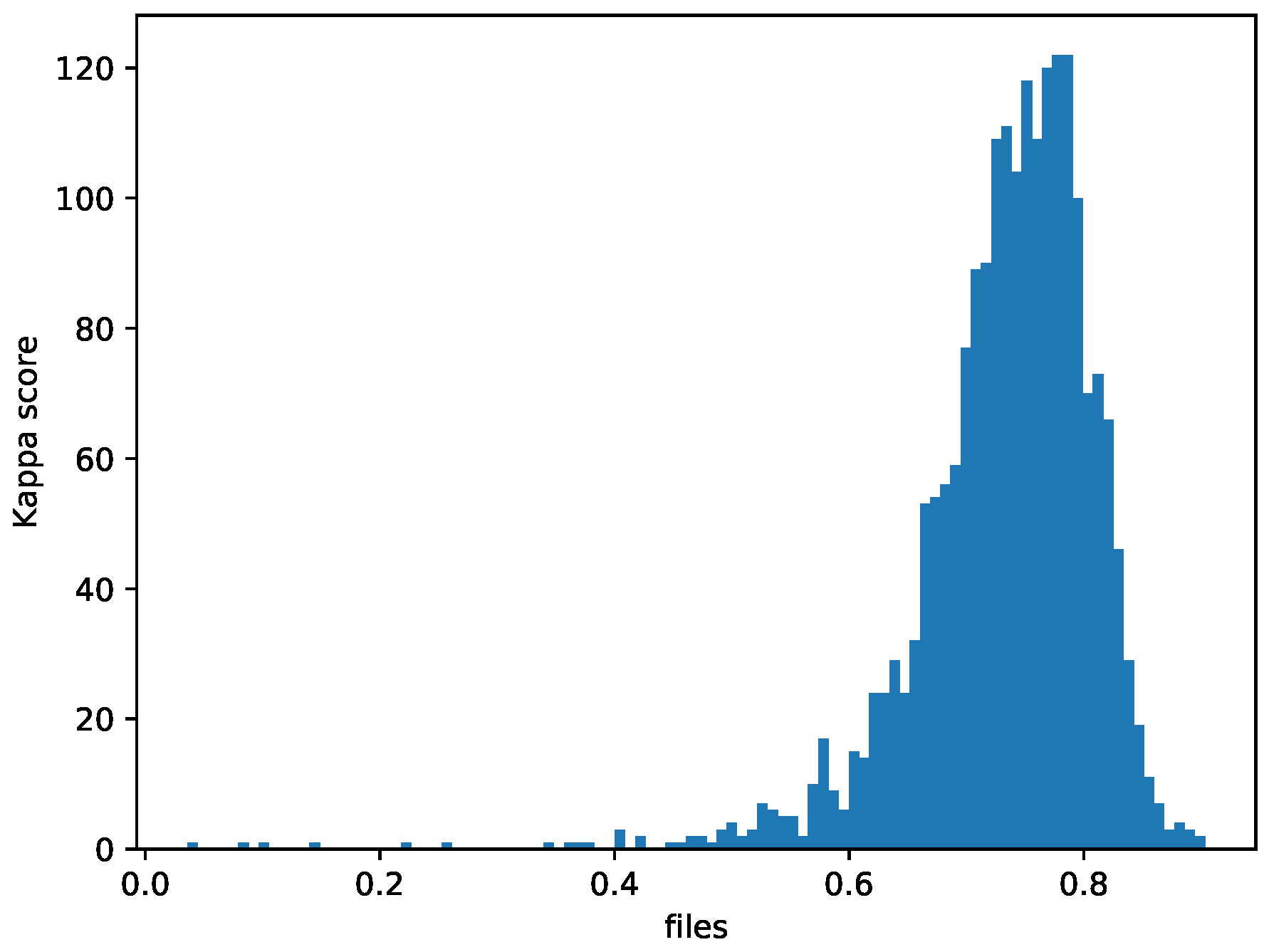

In this paper, we propose a new technique to segment speech and singing using only two parameters derived from the pitch curve. The technique is applied to a Bertsolaritza database and to a database of popular English songs, and it is compared with other techniques proposed in the literature. Experiments show that the proposed segmentation algorithm is fast and robust and obtains good results. A novel algorithm to automatically label notes in the audio file is also proposed.

The rest of the paper is organized as follows.

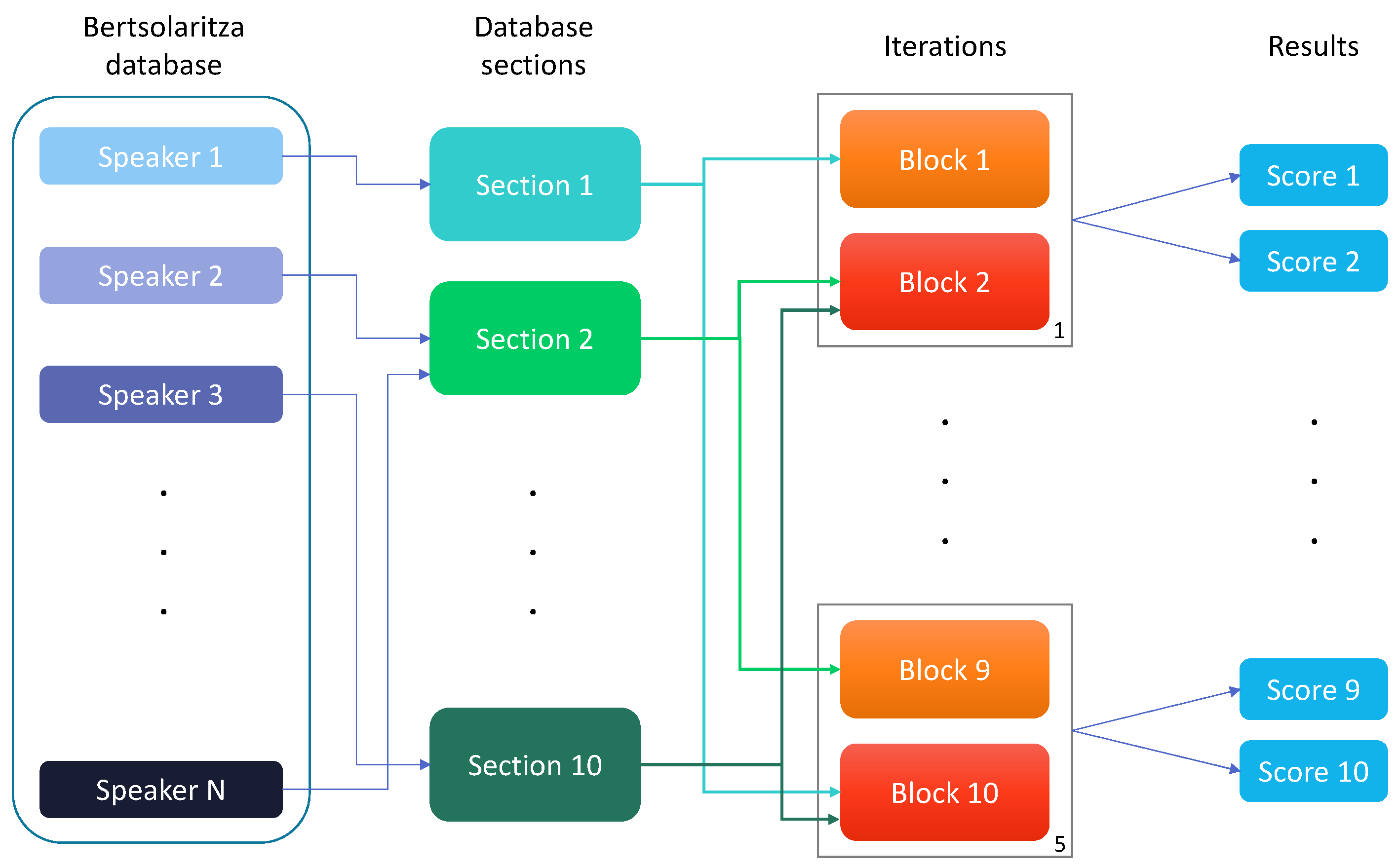

Section 2 presents the datasets and describes the proposed segmentation system, including the algorithm developed to assign a musical note label to each audio frame.

Section 3 describes the experiments and results of both algorithms and compares them to other techniques for note labelling and speech/singing voice classification. Finally,

Section 4 discusses our findings.

4. Discussion

In this article, we present a novel method for discriminating speech and singing segments using only two parameters derived from the pitch curve. The system has been tested on two databases with different characteristics and compared with two systems that are also based on pitch analysis and with another one based on spectral information. The proposed method obtains better results than the other systems that use pitch parameters and results equivalent to those of the spectrum analysis system. It has also been shown that the proposed method generalises to other databases without bias towards the singing class and has a lower computation time.

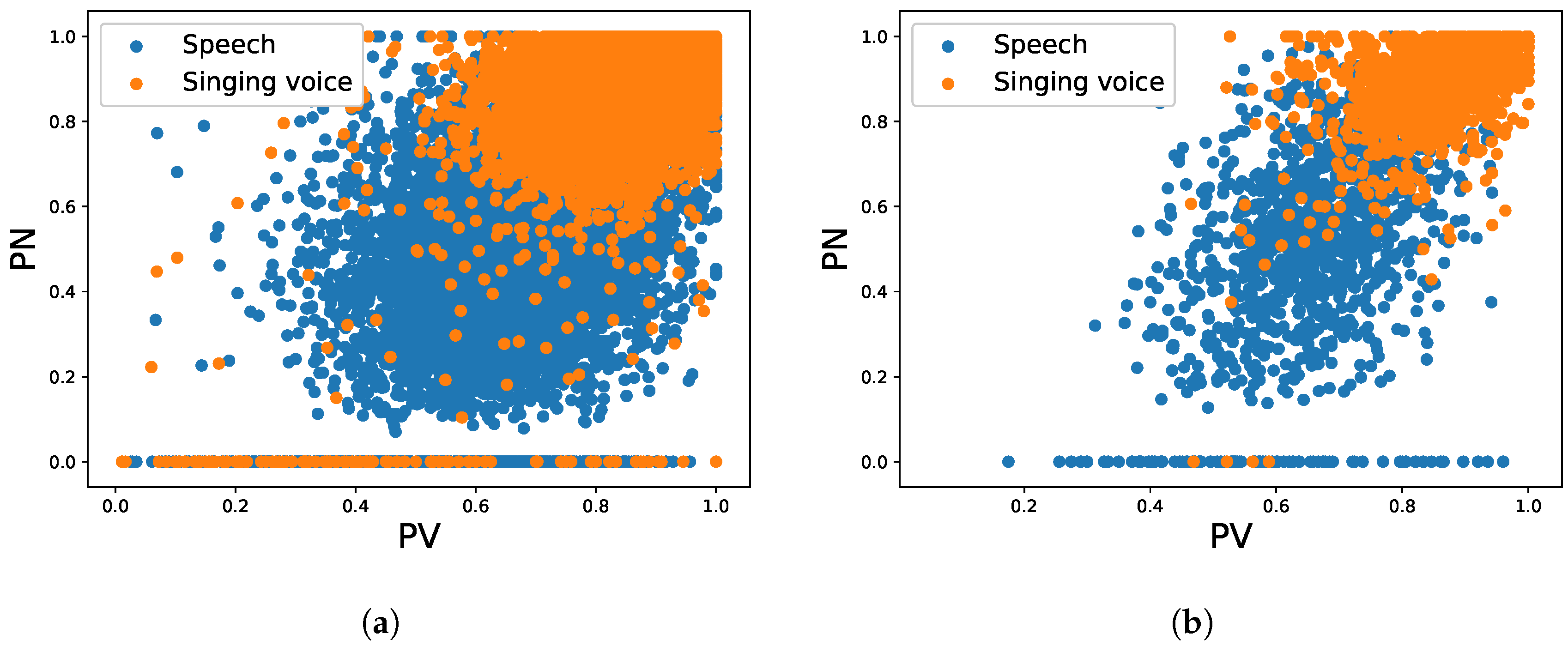

We observed that the two parameters used for classification in the proposed system, PV and PN, are very discriminative in databases with diverse characteristics: different languages, singing proficiency levels, recording conditions and quality, etc. These parameters can also classify short voice segments, which gives the classification system high flexibility.

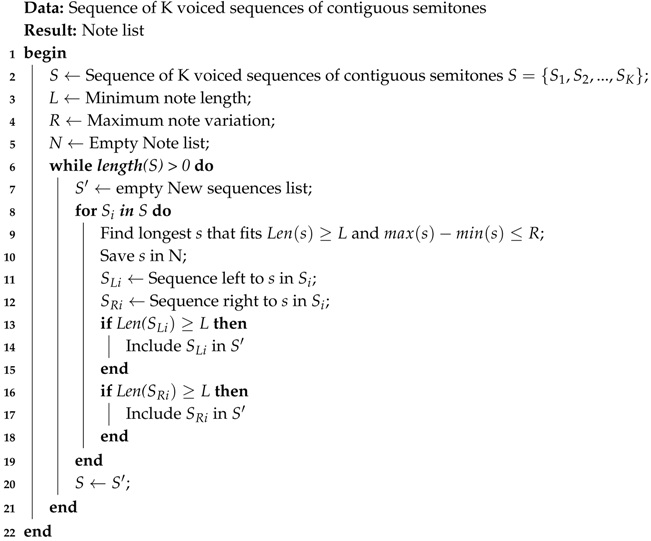

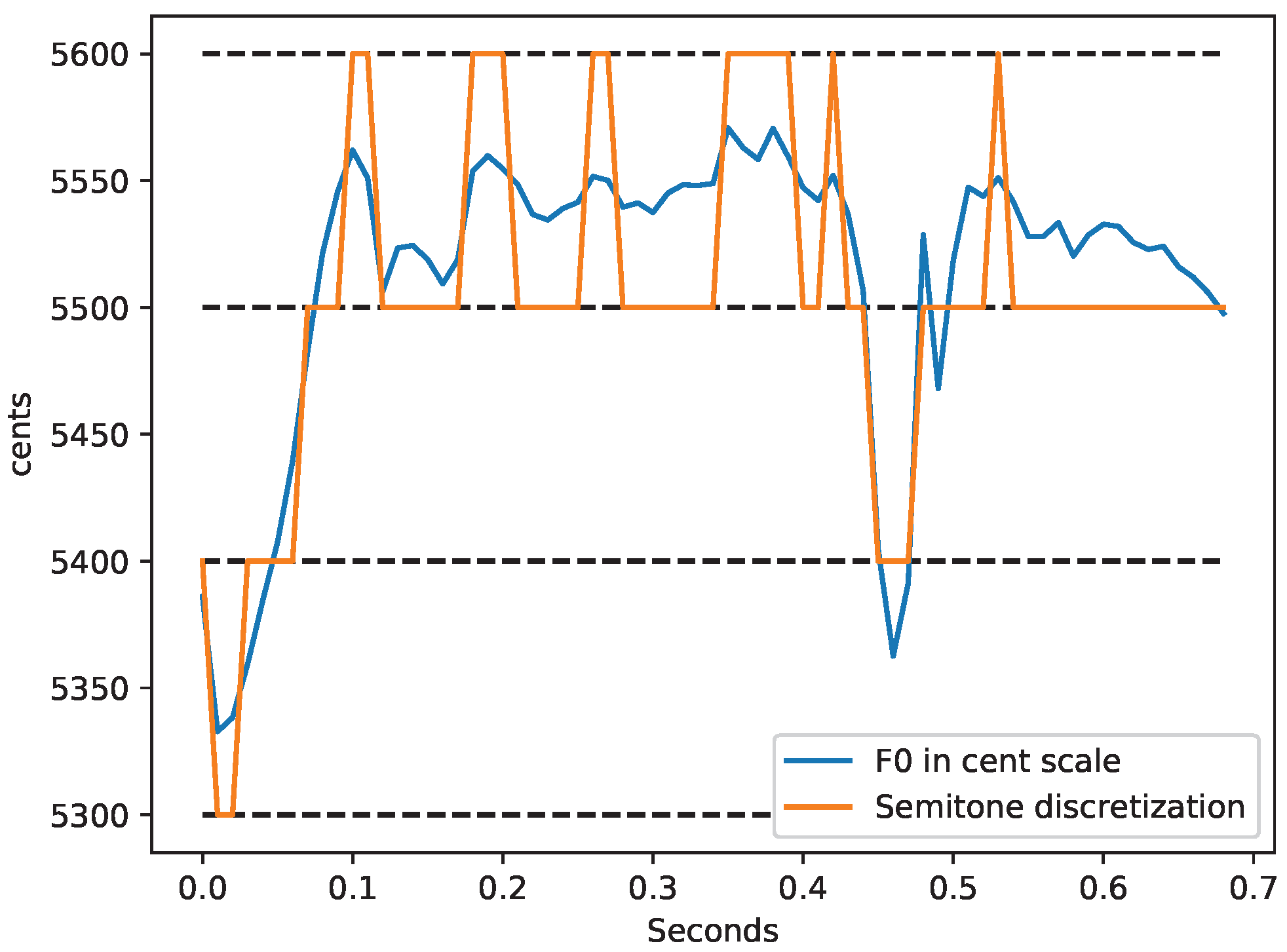

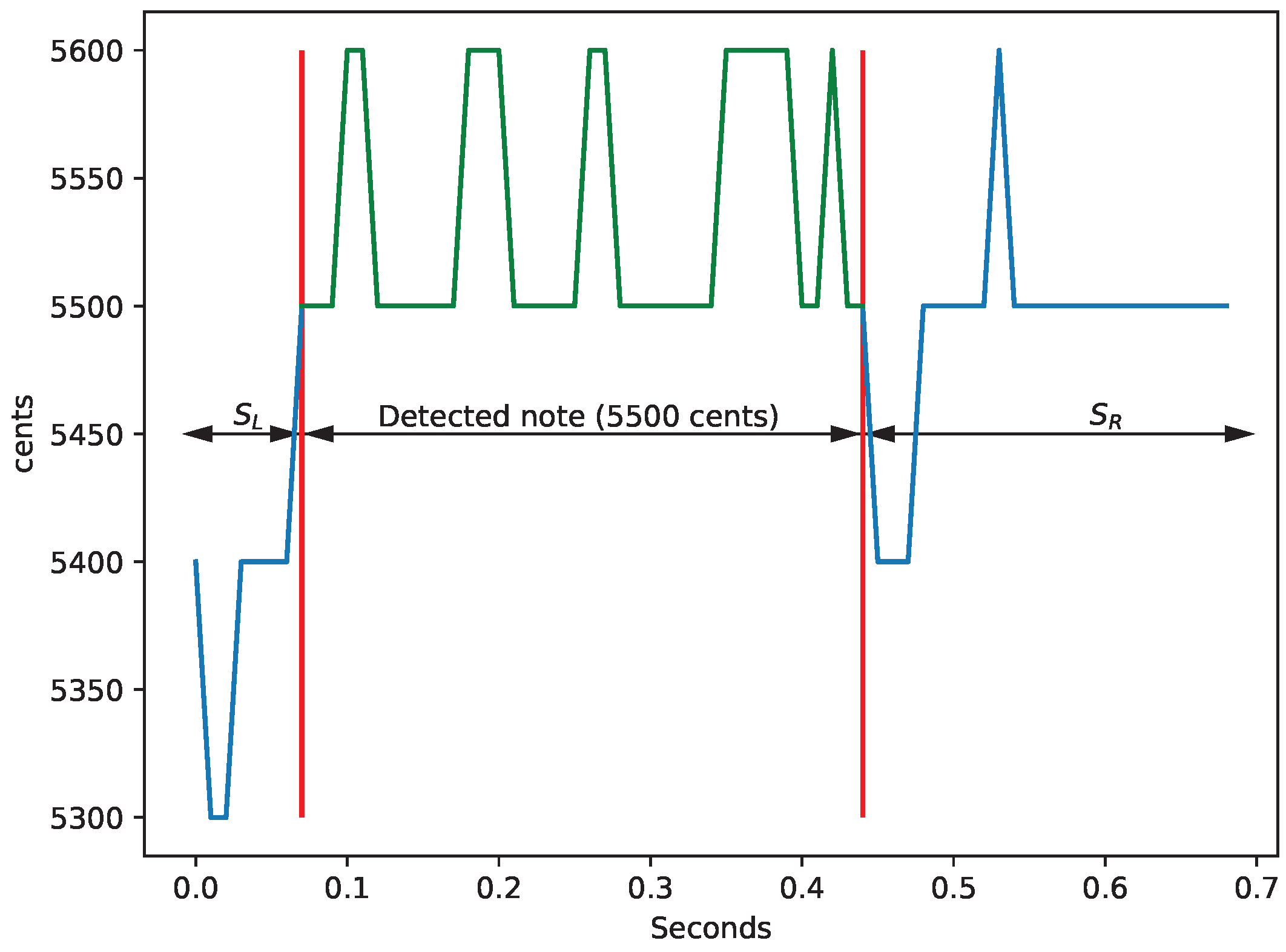

As a by-product, an algorithm to detect notes in a singing voice has been created. The algorithm is easy and intuitive to tune: it uses only three configuration parameters, which are directly related to the characteristics of a specific music style, in this case Western music. The tests have shown that the algorithm is able to cope with audio files containing music and speech segments. It is capable of obtaining musical information from the singing voice without trying to classify speech as singing. Other existing note detection algorithms are tuned to deal with music signals and therefore try to find notes in every segment they process. In contrast, our algorithm has been designed expressly for detecting notes in signals containing speech and music, and it is not biased towards either class. We compared our note detection algorithm with the state-of-the-art algorithm of Tony and found strong agreement between the two methods regarding note detection.
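
To make the idea of a Western note label concrete, the sketch below maps a pitch value in Hz to the nearest equal-tempered note using the standard MIDI convention (A4 = 440 Hz = MIDI note 69). It illustrates only the final labelling step and is not the note detection algorithm described in this paper; the function names and the sharps-only spelling of note names are our own assumptions.

```python
import math
from typing import Optional

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def hz_to_midi(f0_hz: float, a4_hz: float = 440.0) -> float:
    """Convert a frequency in Hz to a (fractional) MIDI note number."""
    return 69.0 + 12.0 * math.log2(f0_hz / a4_hz)


def note_label(f0_hz: float) -> Optional[str]:
    """Return the nearest equal-tempered note name, or None if unvoiced."""
    if f0_hz <= 0.0:  # assumption: unvoiced frames are marked with f0 <= 0
        return None
    midi = int(round(hz_to_midi(f0_hz)))
    octave = midi // 12 - 1  # MIDI note 60 is C4 in this convention
    return f"{NOTE_NAMES[midi % 12]}{octave}"


print(note_label(440.0))   # A4
print(note_label(261.63))  # C4
```

Working on the semitone scale rather than in Hz makes pitch ranges comparable across voices with different registers.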

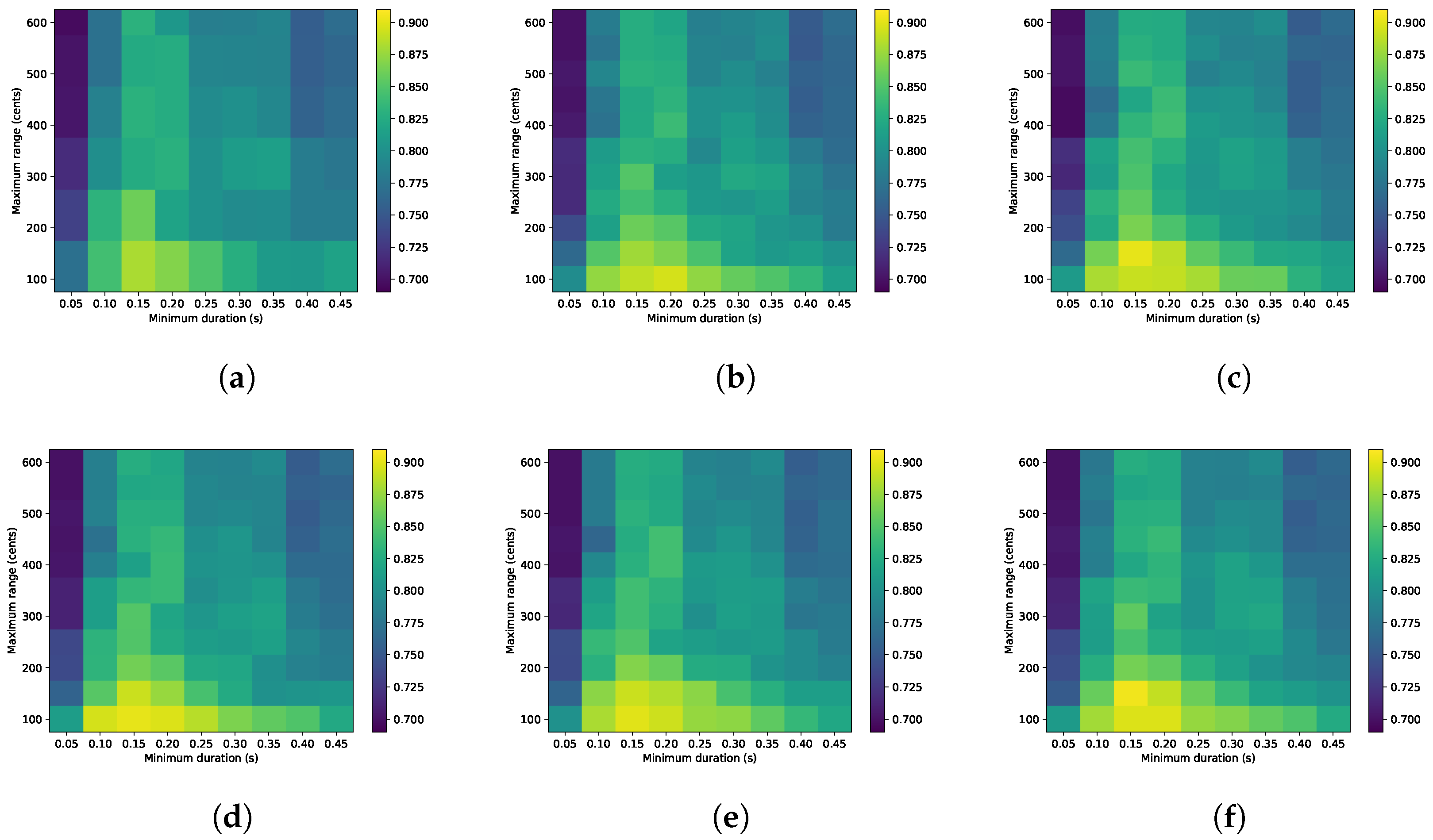

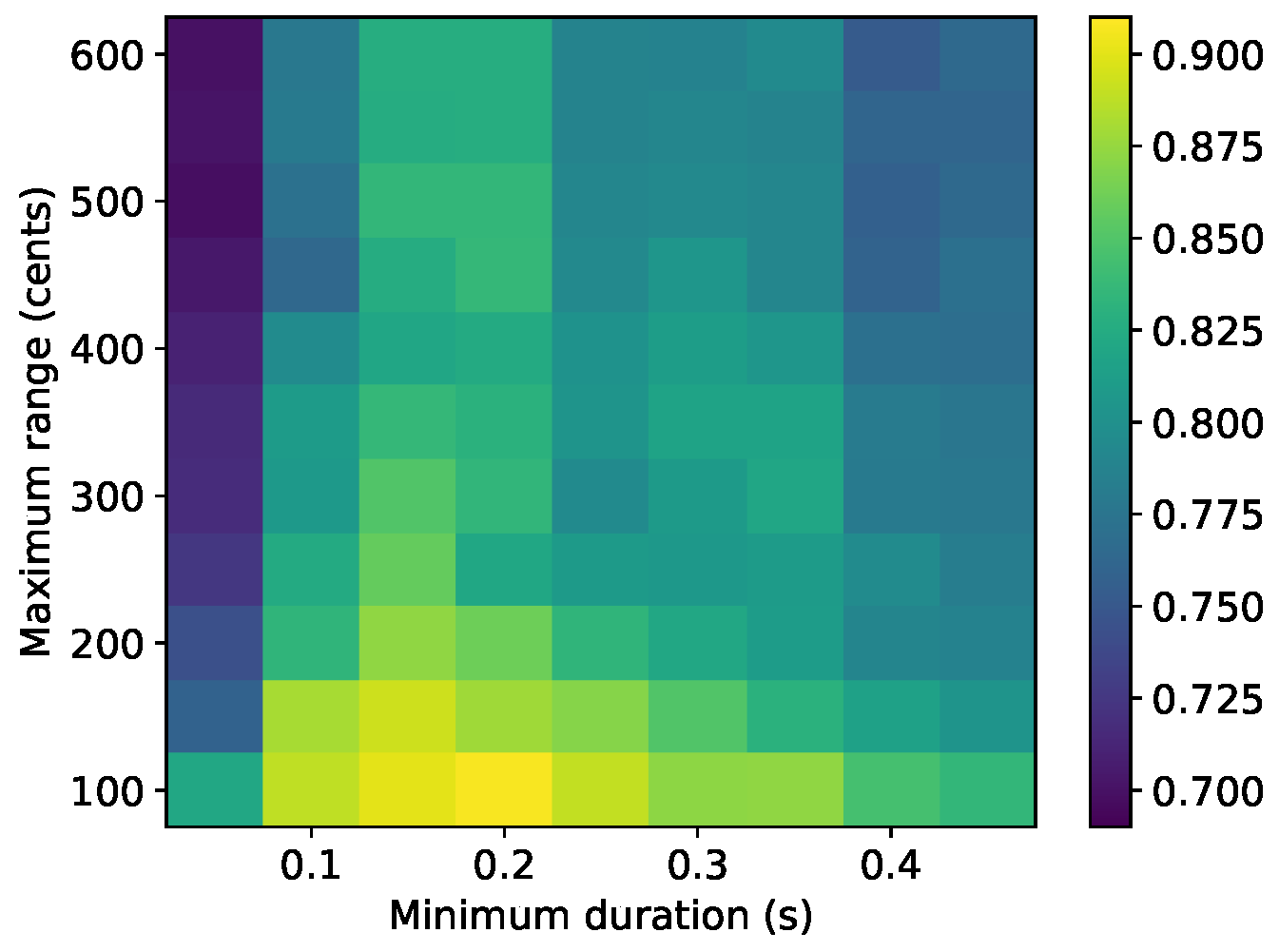

The parameter sensitivity analysis of the note detection algorithm showed that changes in the minimum duration have more effect on the speech/singing classification results than changes in the maximum pitch range. If the maximum pitch range is high, all the pitch variations in the signal fall within the range and the only parameter that influences the results is the minimum length. When the minimum length is very small, the pitch variation inside a segment is usually not very large, so all segments are treated as musical notes, which gives poor speech/singing classification results regardless of the maximum pitch range applied. In general, the singing voice has a greater pitch range than speech, but it has smaller local pitch changes once the vibrato has been smoothed in the pitch curve.
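
The interaction between the two parameters discussed above can be reproduced with a toy example. The sketch below is a simple greedy segmentation written for illustration, not the algorithm proposed in this paper: it grows a run of voiced frames while the pitch stays within `max_range` semitones and keeps the run as a note candidate only if it lasts at least `min_frames` frames. The function name, the parameter names and the test curve are hypothetical, and the third configuration parameter of the actual algorithm is not modelled because it is not named in this section.

```python
from typing import List, Optional, Sequence, Tuple


def find_stable_segments(
    semitones: Sequence[Optional[float]],
    max_range: float,
    min_frames: int,
) -> List[Tuple[int, int]]:
    """Return (start, end) frame indices, end exclusive, of stable-pitch runs."""
    segments: List[Tuple[int, int]] = []
    start: Optional[int] = None
    lo = hi = 0.0
    # A trailing None acts as a sentinel that closes the last open run.
    for i, value in enumerate(list(semitones) + [None]):
        if value is not None and start is not None:
            new_lo, new_hi = min(lo, value), max(hi, value)
            if new_hi - new_lo <= max_range:
                lo, hi = new_lo, new_hi  # frame fits, extend the current run
                continue
        # The run ends here: unvoiced frame, range exceeded, or no open run.
        if start is not None and i - start >= min_frames:
            segments.append((start, i))
        if value is None:
            start = None
        else:
            start, lo, hi = i, value, value
    return segments


# Toy pitch curve in semitones: a held note, a glide, another held note.
curve = [60.0, 60.1, 59.9, 60.0, 62.0, 64.0, 66.0, 64.0, 64.1, 63.9, None]

print(find_stable_segments(curve, max_range=0.5, min_frames=3))
# [(0, 4), (7, 10)]
print(find_stable_segments(curve, max_range=0.5, min_frames=1))
# [(0, 4), (4, 5), (5, 6), (6, 7), (7, 10)]
print(find_stable_segments(curve, max_range=100.0, min_frames=3))
# [(0, 10)]
```

With `min_frames=1`, every voiced frame ends up inside some note candidate, including the frames of the glide, which mirrors the observation that a very small minimum length treats all segments as notes. With a very large `max_range`, the range check never fails and only the minimum length decides the outcome.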

For future work, we consider it interesting to apply the note labelling algorithm to different music styles and datasets and to find the optimal values of the configuration parameters for other singing styles. It would also be very interesting to develop new objective measures for the evaluation of note detection algorithms. In addition, we are considering testing the potential of LSTMs for note detection.