Dual-Branch Deep Convolution Neural Network for Polarimetric SAR Image Classification

Abstract

1. Introduction

2. Basics of the CNN

2.1. The Forward Propagation

2.2. The Backward Propagation

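- Selection of cost function. The quadratic function is a common cost function. However, learning is slow when the neurons make an obvious mistake during the training process. Alternatively, we take the cross-entropy ($C$) as the cost function, which is determined by Equation (2):

  $$C = -\frac{1}{n}\sum_{x}\sum_{j=1}^{N}\left[y_j \ln a_j^L + \left(1 - y_j\right)\ln\left(1 - a_j^L\right)\right] \qquad (2)$$

  where $n$ is the total number of training samples $x$, and $N$ is the number of neurons in the output layer, corresponding to the $N$ classes. $y_j$ is the target value for the $j$-th neuron of the output layer, and $a_j^L$ is the actual output value of that neuron.

- Calculation of error vectors. The error vector of the output layer $L$ is defined by

  $$\delta^L = \frac{\partial C}{\partial z^L}$$

  where the symbol $\partial$ represents the partial derivative operation and $z^L$ is the weighted input to the output layer. The error vector $\delta^L$ is then back-propagated. For each $l = L-1, L-2, \ldots, 2$, $\delta^l$ can be computed by the chain rule as:

  $$\delta^l = \left(\left(W^{l+1}\right)^{T}\delta^{l+1}\right) \circ \sigma'\left(z^l\right)$$

  where the symbol $\circ$ is the Hadamard product (or Schur product), which denotes the element-wise product of two vectors, and $\sigma$ is the activation function.

- Updates of weights and the bias matrix. The gradients of $W^l$ and $b^l$ are denoted as $\nabla W^l$ and $\nabla b^l$, respectively. The partial derivatives of $C$ with respect to $W^l$ and $b^l$ can be calculated with Equations (1) and (3):

  $$\nabla W^l = \frac{\partial C}{\partial W^l} = \delta^l \left(a^{l-1}\right)^{T}, \qquad \nabla b^l = \frac{\partial C}{\partial b^l} = \delta^l$$

  The change values of $W^l$ and $b^l$, namely $\Delta W^l$ and $\Delta b^l$, can be calculated respectively by

  $$\Delta W^l = -\eta \nabla W^l, \qquad \Delta b^l = -\eta \nabla b^l$$

  where $\eta$ represents the learning rate.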
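The three steps listed above (cross-entropy cost, back-propagated error vectors, gradient update with a learning rate) can be sketched for a small fully connected network. This is a minimal NumPy illustration, not the authors' implementation: the sigmoid activation, the layer sizes, the random data, and the names `forward`, `backward`, and `train_step` are all assumptions made for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cross_entropy(a_out, y):
    """Cross-entropy cost averaged over the n samples (one sample per column)."""
    n = y.shape[1]
    eps = 1e-12  # avoids log(0); an implementation detail, not from the paper
    return -np.sum(y * np.log(a_out + eps) + (1 - y) * np.log(1 - a_out + eps)) / n

def forward(weights, biases, x):
    """Return the activations of every layer, starting with the input."""
    activations = [x]
    for W, b in zip(weights, biases):
        activations.append(sigmoid(W @ activations[-1] + b))
    return activations

def backward(weights, activations, y):
    """Back-propagate the error vectors and return the gradients per layer."""
    n = y.shape[1]
    # With sigmoid outputs and the cross-entropy cost, dC/dz at the output is a - y.
    delta = activations[-1] - y
    grads_W, grads_b = [], []
    for l in range(len(weights) - 1, -1, -1):
        grads_W.insert(0, delta @ activations[l].T / n)
        grads_b.insert(0, delta.sum(axis=1, keepdims=True) / n)
        if l > 0:
            sigma_prime = activations[l] * (1 - activations[l])
            delta = (weights[l].T @ delta) * sigma_prime  # Hadamard product
    return grads_W, grads_b

def train_step(weights, biases, x, y, eta):
    """One gradient-descent update; returns the cost before the update."""
    activations = forward(weights, biases, x)
    grads_W, grads_b = backward(weights, activations, y)
    for l in range(len(weights)):
        weights[l] -= eta * grads_W[l]  # change value: -eta * dC/dW
        biases[l] -= eta * grads_b[l]   # change value: -eta * dC/db
    return cross_entropy(activations[-1], y)

# Hypothetical toy problem: 20 random samples, 4 inputs, 3 classes.
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 20))
Y = np.eye(3)[:, rng.integers(0, 3, size=20)]  # one-hot targets as columns
sizes = [4, 5, 3]
weights = [rng.normal(scale=0.5, size=(m, k)) for k, m in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros((m, 1)) for m in sizes[1:]]

costs = [train_step(weights, biases, X, Y, eta=0.5) for _ in range(200)]
print(f"cost: {costs[0]:.3f} -> {costs[-1]:.3f}")
```

The sketch averages gradients over the whole batch; a convolutional network follows the same pattern, with the matrix products replaced by convolutions.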

2.3. Feature Extraction

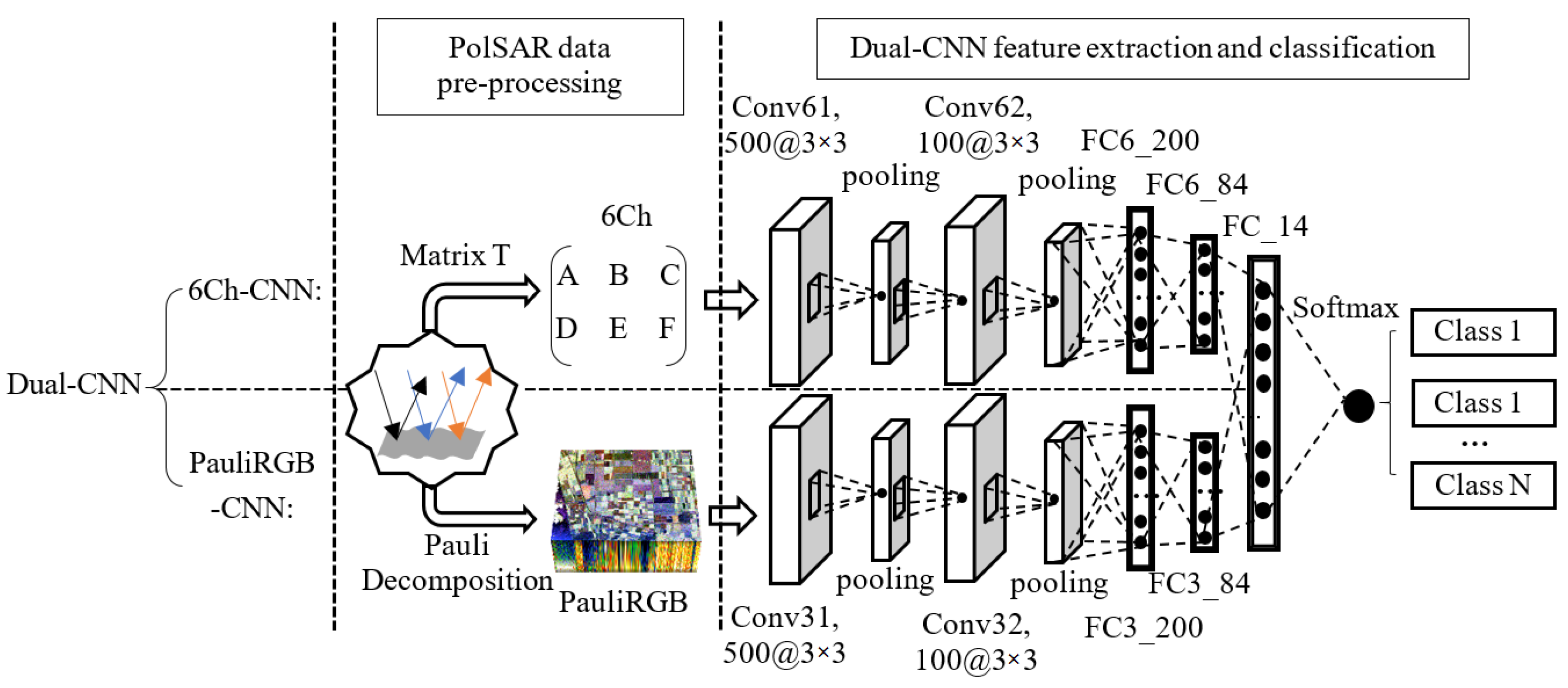

3. The Proposed Method

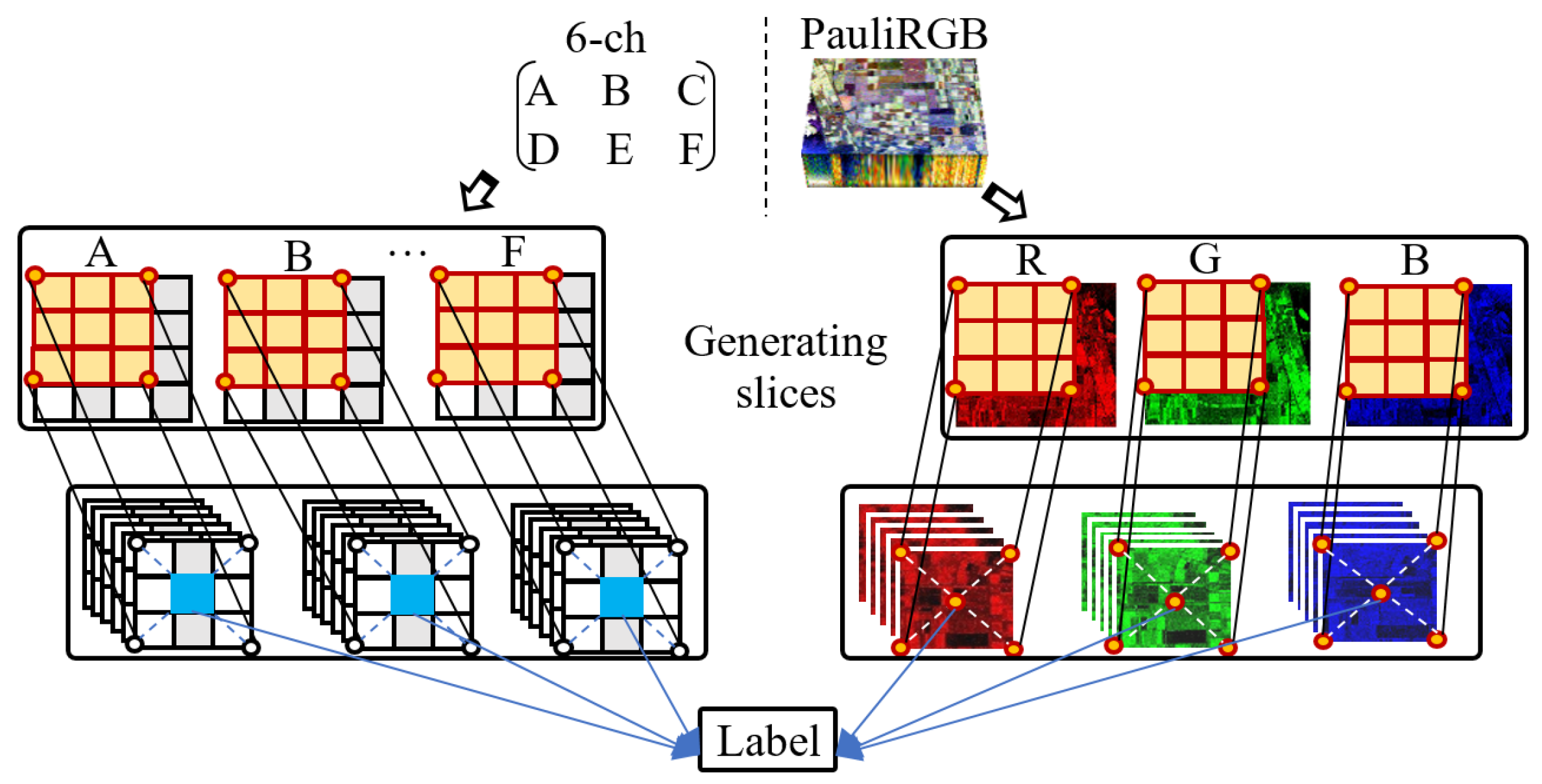

3.1. PolSAR Data Pre-Processing

3.1.1. Creating 6Ch to Represent the Polarimetric Data

3.1.2. Generating Pauli RGB Image to Obtain the Spatial Feature
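The Pauli RGB composite named in this heading is conventionally built from the Pauli scattering vector: red from |S_HH − S_VV|, green from |S_HV|, and blue from |S_HH + S_VV|. The sketch below shows that general convention only. It assumes complex scattering-matrix channels are available as arrays, and the function name `pauli_rgb` and the global-maximum scaling are illustrative choices, not details taken from this paper.

```python
import numpy as np

def pauli_rgb(s_hh, s_hv, s_vv):
    """Map complex scattering channels to a Pauli RGB composite scaled to [0, 1]."""
    red = np.abs(s_hh - s_vv) / np.sqrt(2)   # double-bounce component
    green = np.sqrt(2) * np.abs(s_hv)        # cross-polarized (volume) component
    blue = np.abs(s_hh + s_vv) / np.sqrt(2)  # surface (odd-bounce) component
    rgb = np.stack([red, green, blue], axis=-1)
    peak = rgb.max()
    # Assumed normalization: divide by the global maximum of the composite.
    return rgb / peak if peak > 0 else rgb

# Two hypothetical pixels: a pure surface scatterer and a pure double-bounce scatterer.
s_hh = np.array([[1 + 0j, 1 + 0j]])
s_vv = np.array([[1 + 0j, -1 + 0j]])
s_hv = np.zeros((1, 2), dtype=complex)
rgb = pauli_rgb(s_hh, s_hv, s_vv)
print(rgb.shape)
```

In practice the amplitudes are often clipped or log-scaled before display, because a few very bright pixels would otherwise darken the rest of the image.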

3.1.3. Patching the Images with Fixed Size

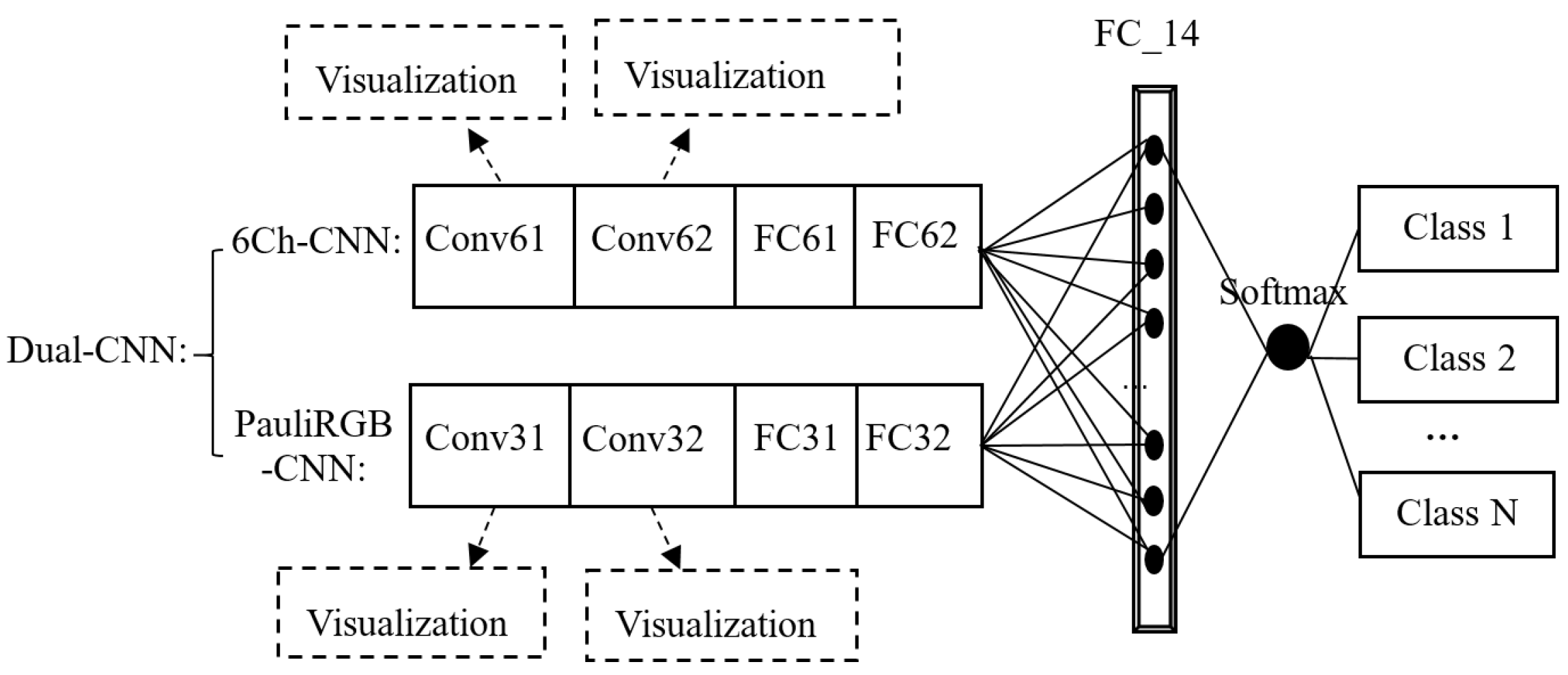

3.2. Feature Extraction and Classification Based on the Dual-CNN Model

3.2.1. The Forward Propagation of the Dual-CNN Model

3.2.2. The Backward Propagation of the Dual-CNN Model

4. Experiment

- Comparing our method with the single-branch networks, i.e., the 6Ch-CNN and PauliRGB-CNN models.

- Comparing our method with some classical algorithms and some recently proposed classification algorithms on the same dataset.

- Discussing how the size of the slices influences the performance of our method, and then examining the visual representation of the features.

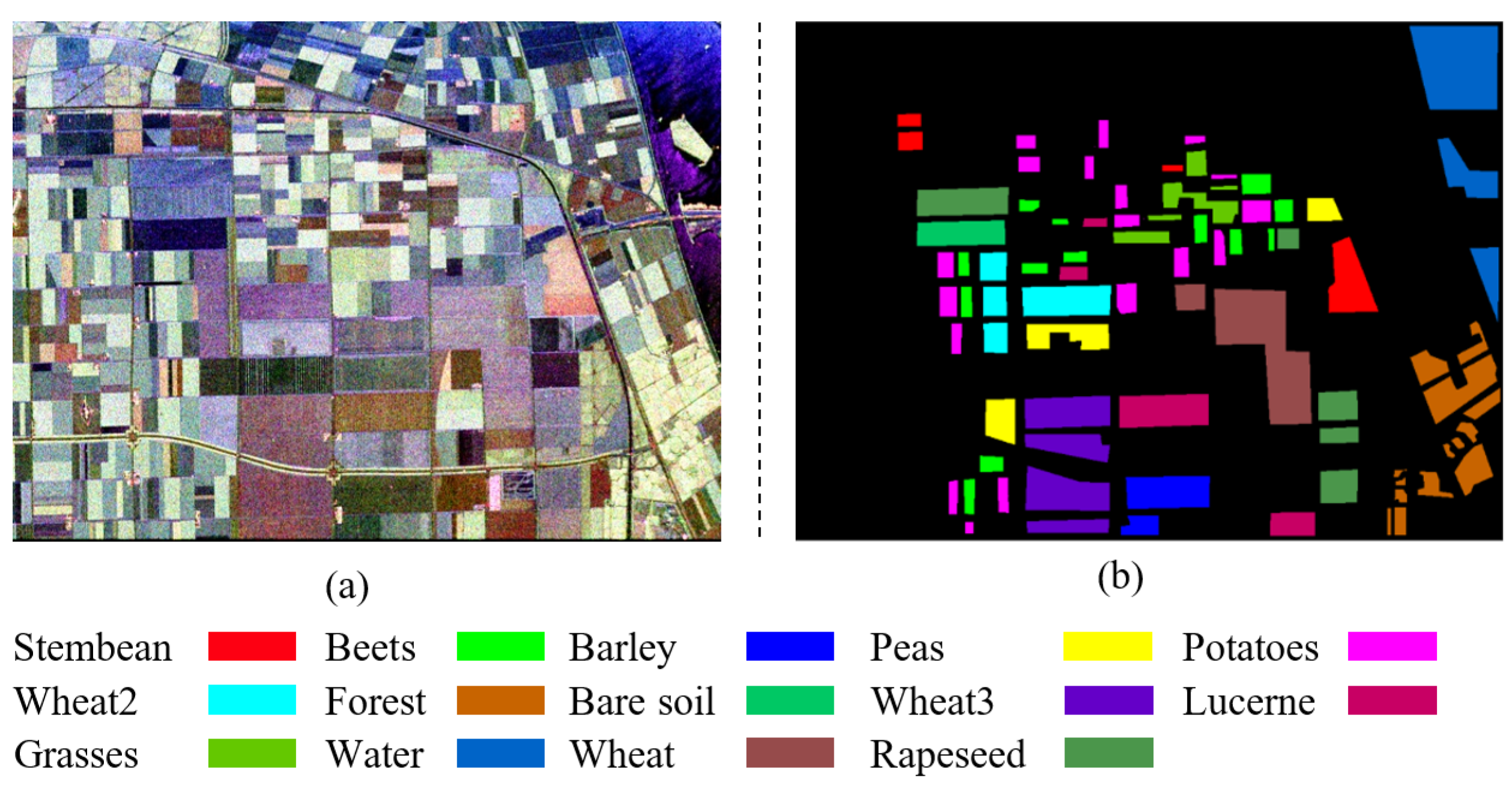

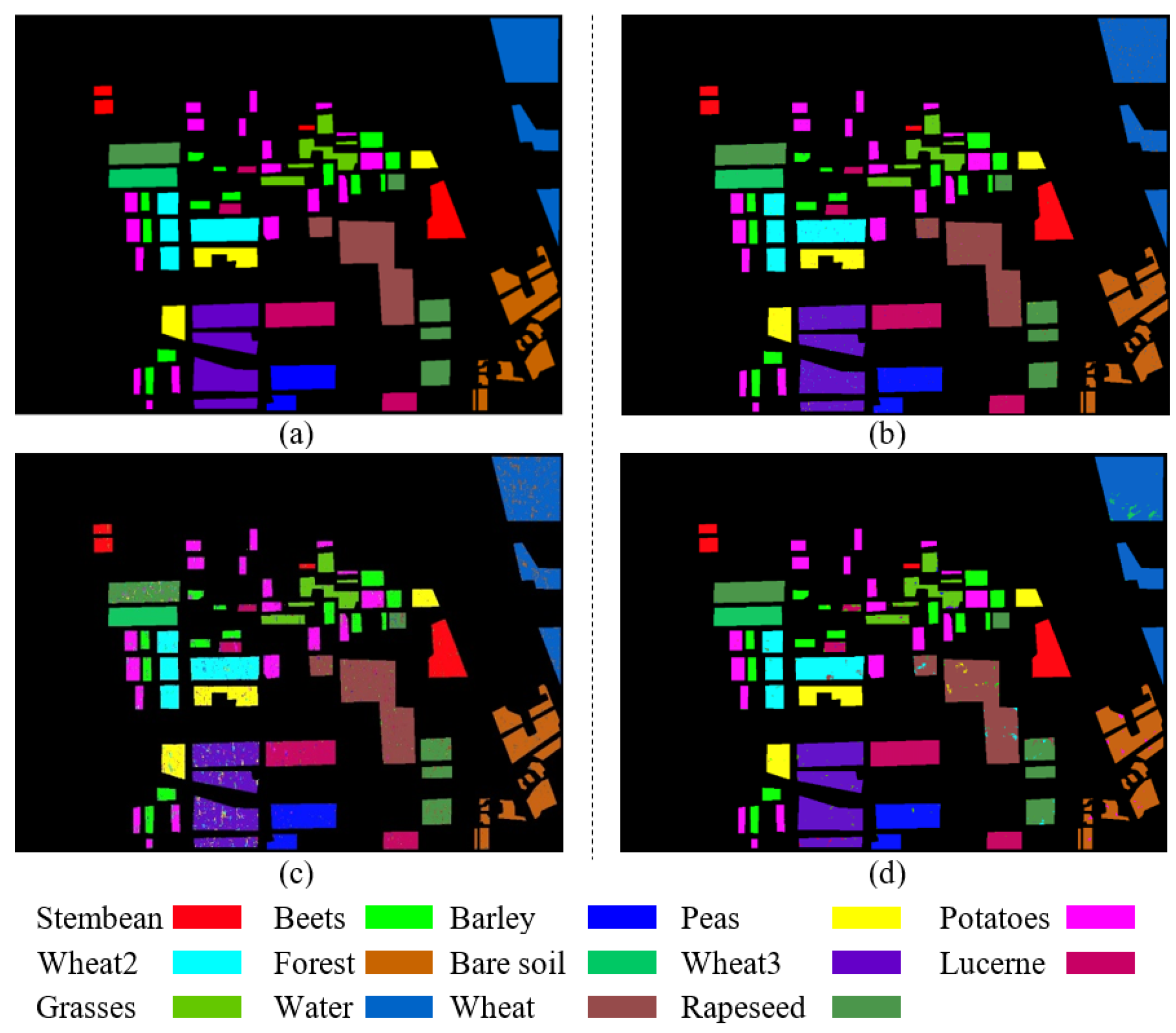

4.1. Flevoland Data

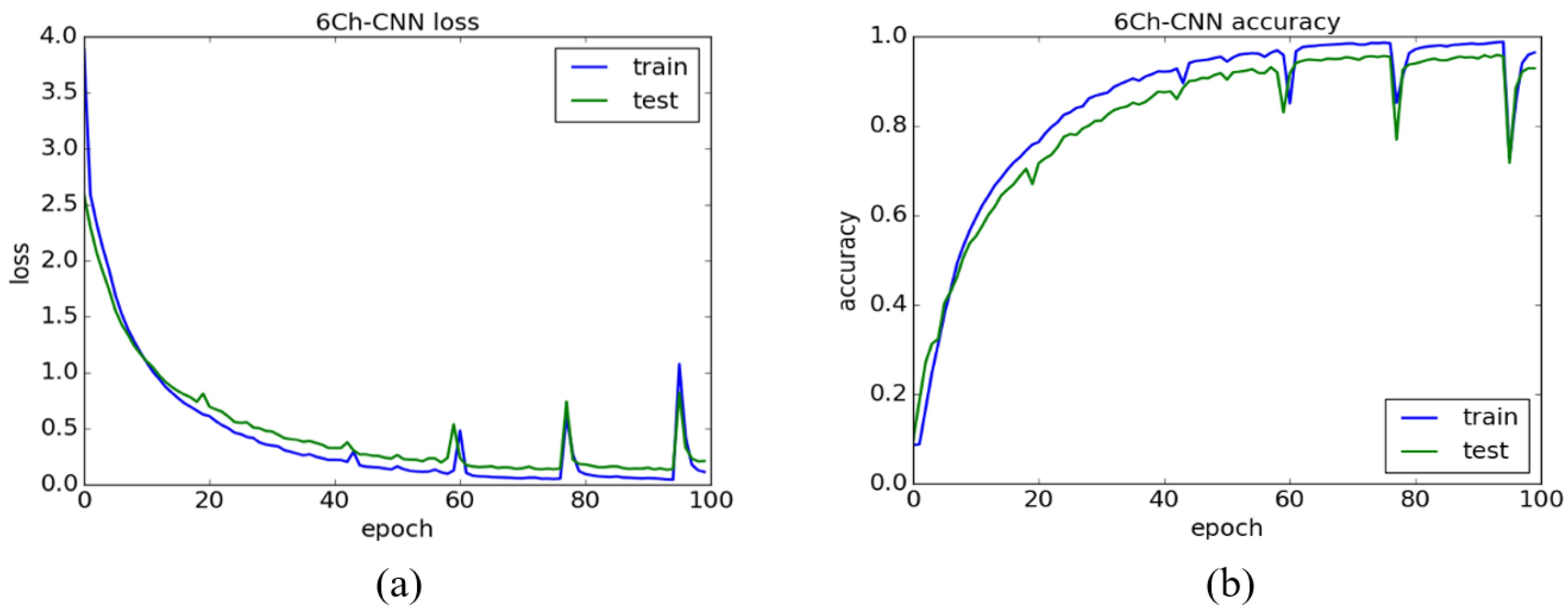

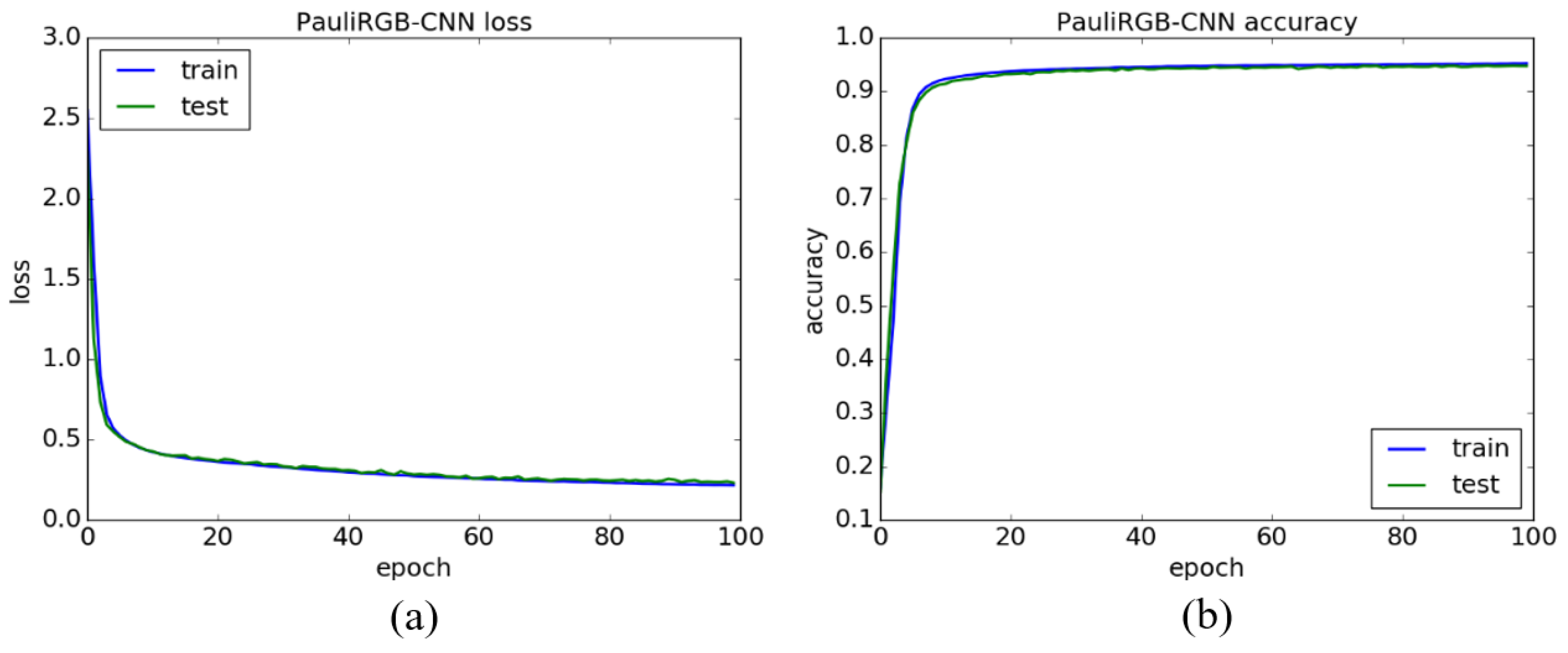

4.2. Comparing with One-CNN

4.3. Comparing with Other Methods

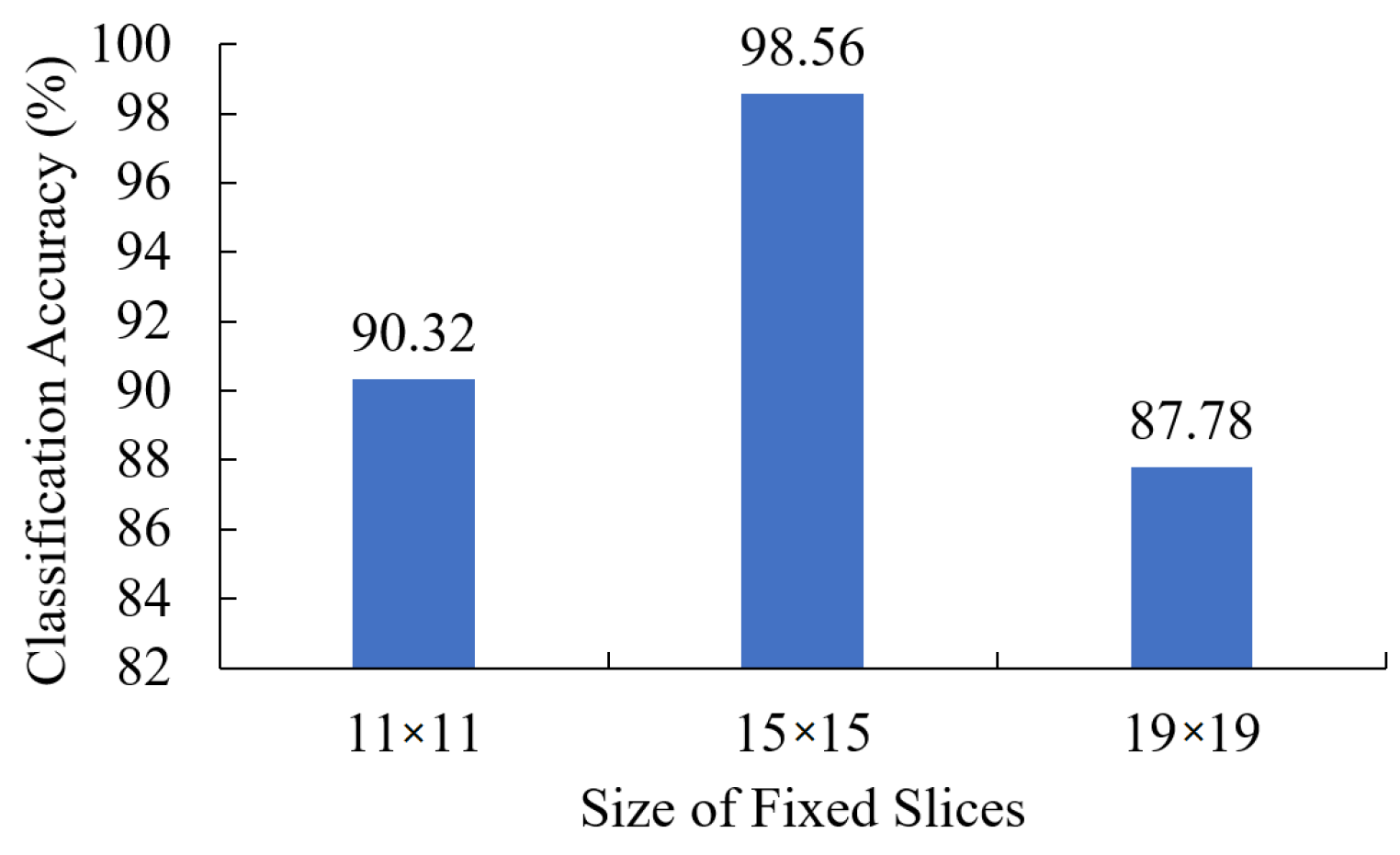

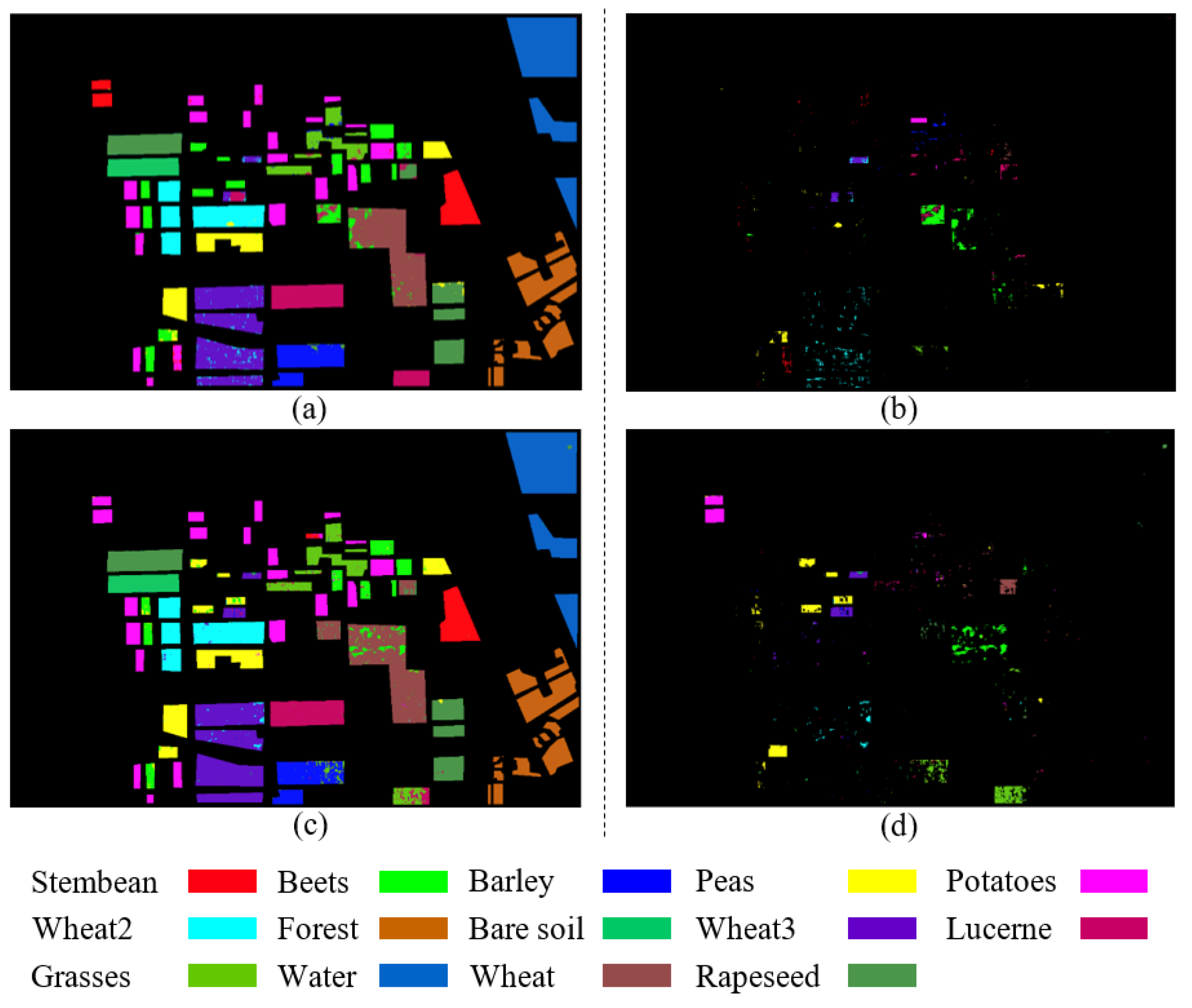

4.4. Different Fixed Size Slices and Visualization of Feature Maps

4.4.1. The Effect of Slicing Size on Classification Accuracy

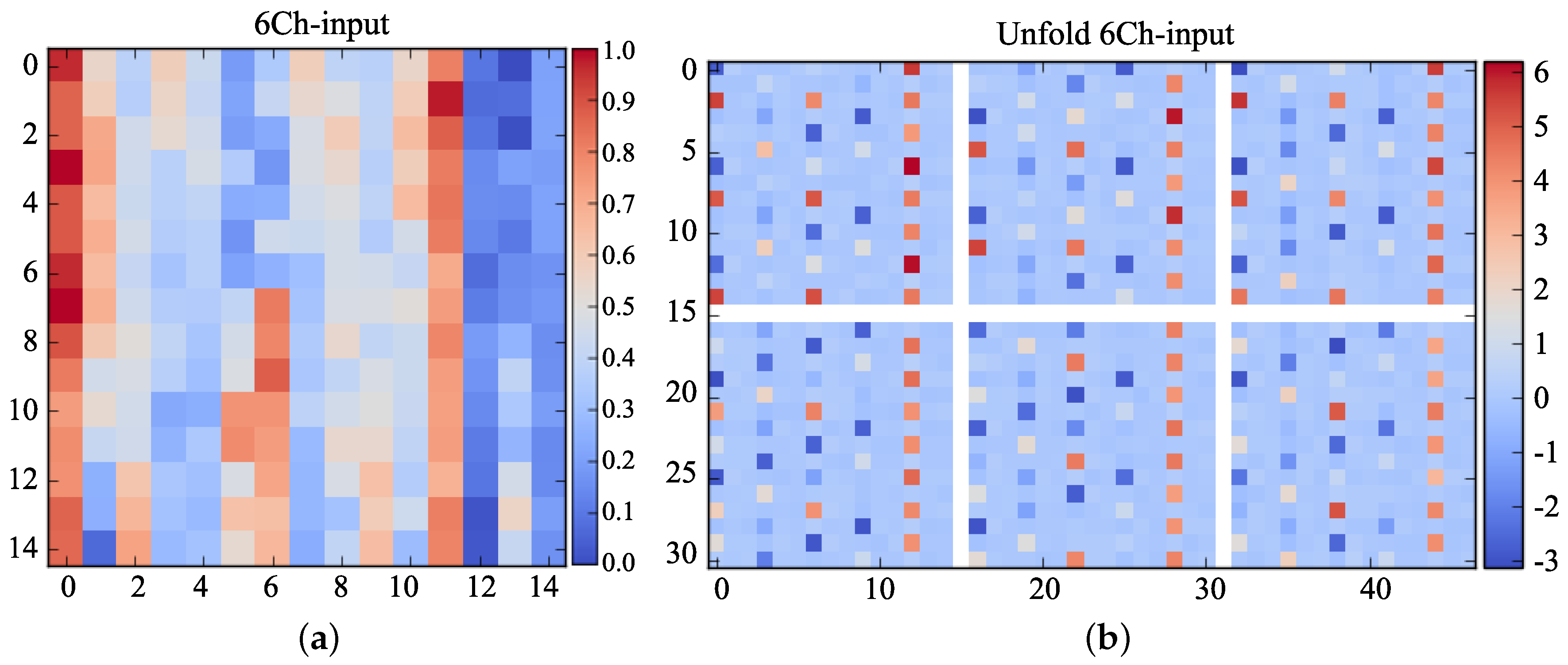

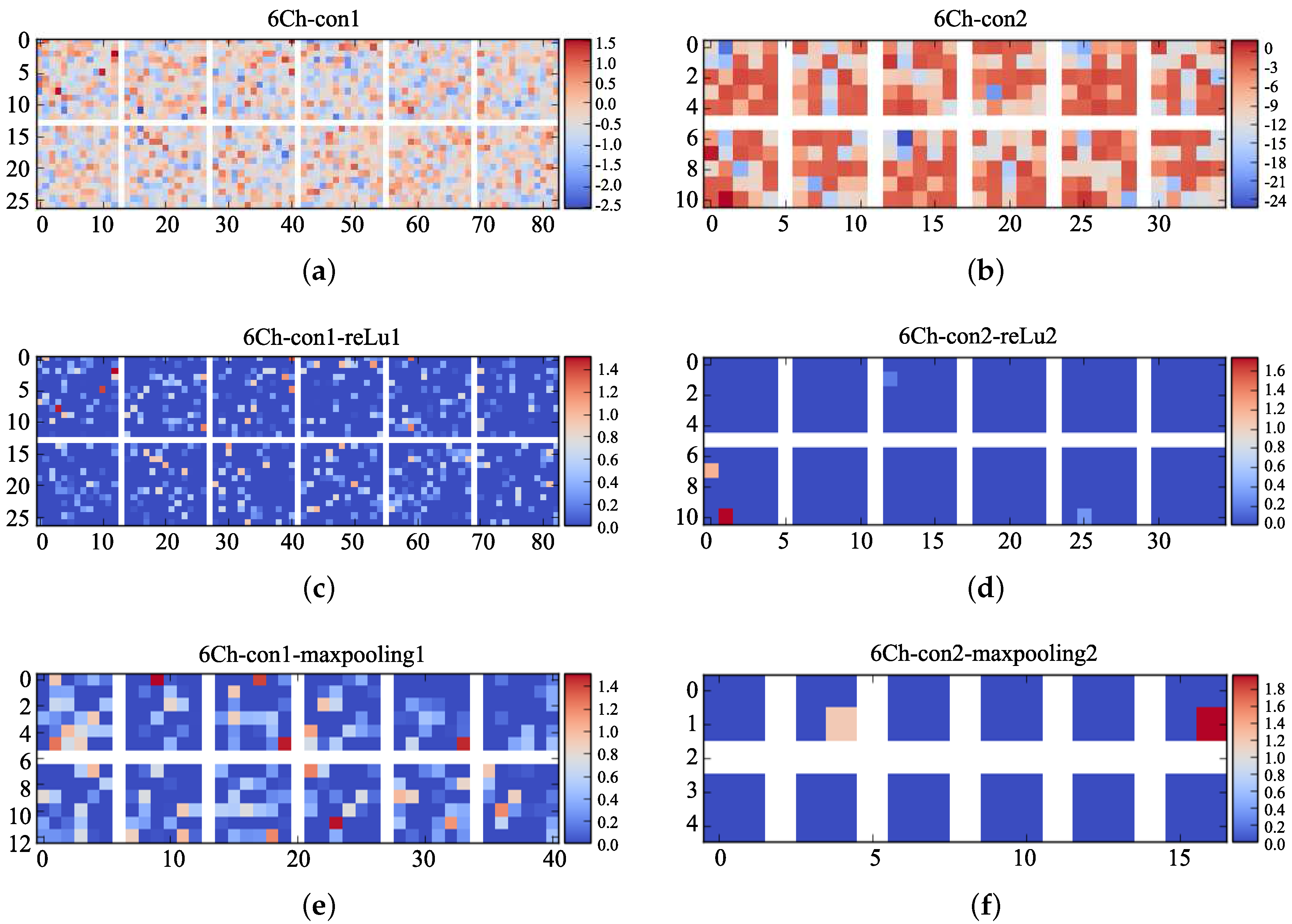

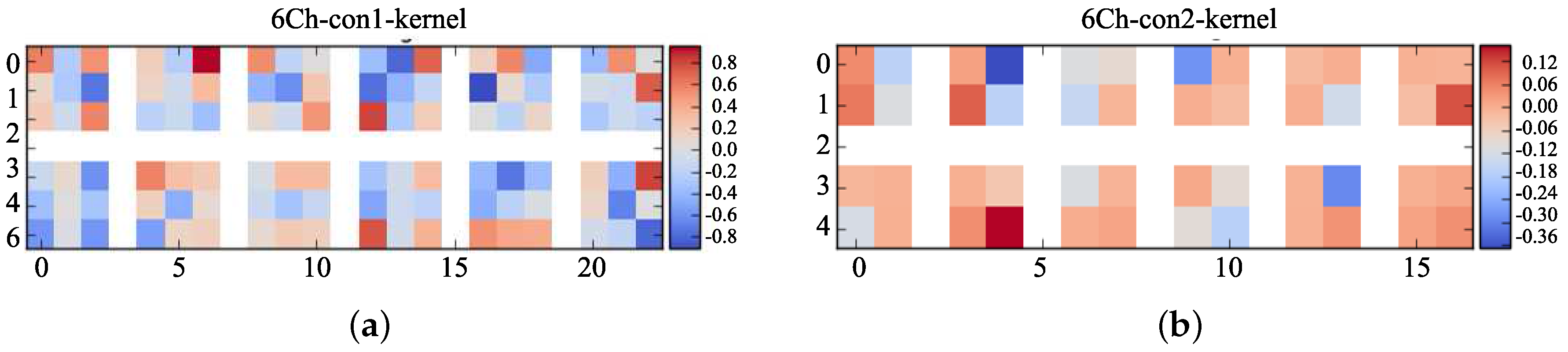

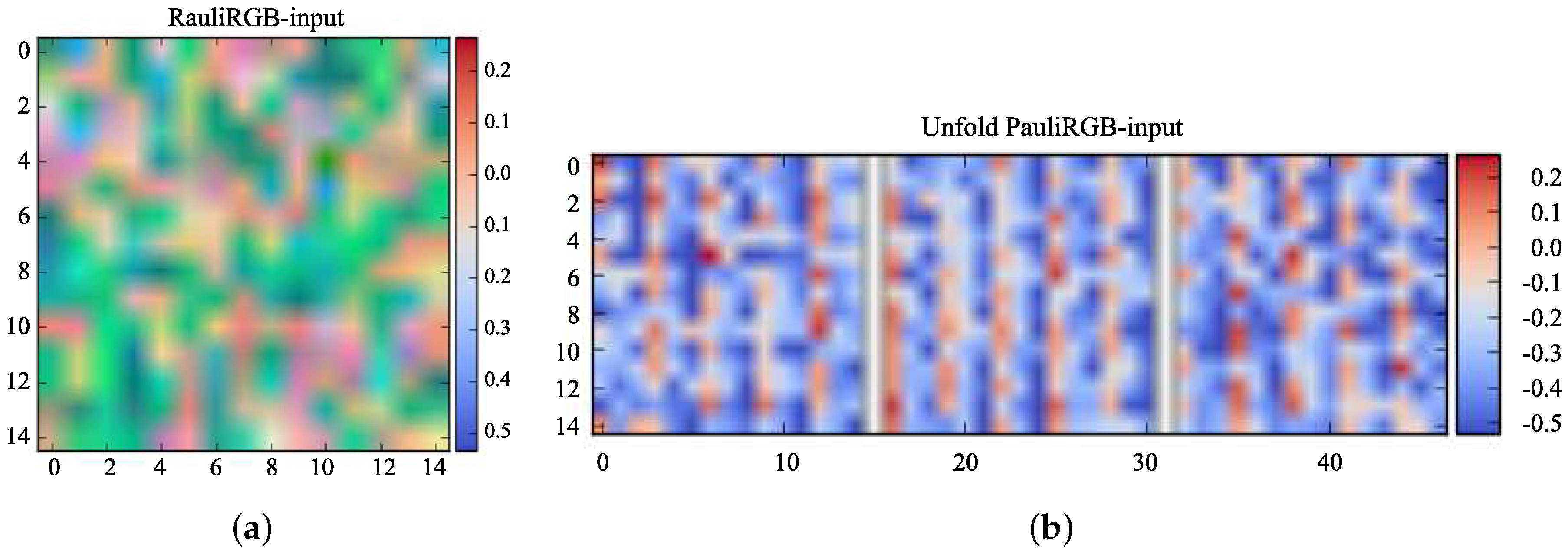

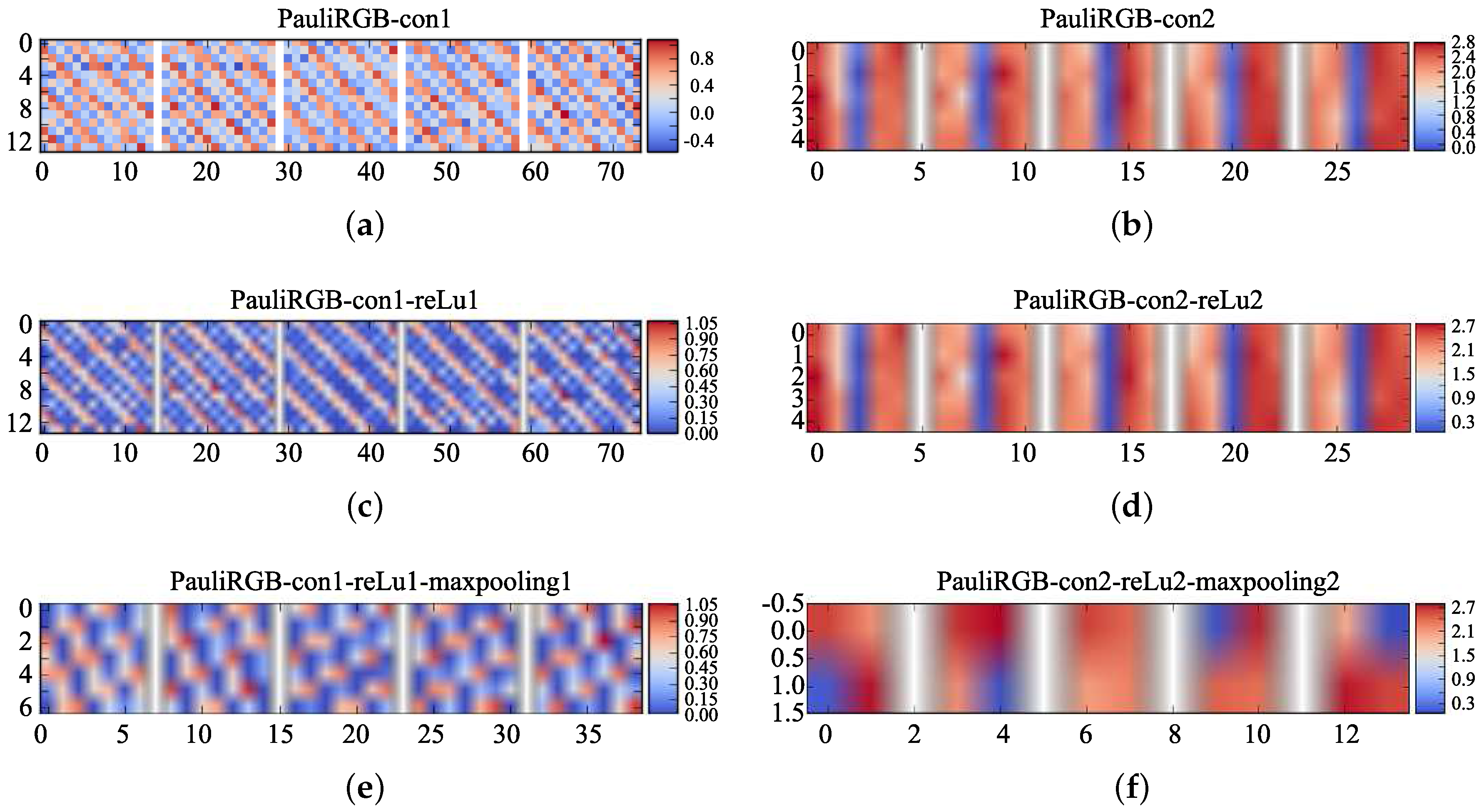

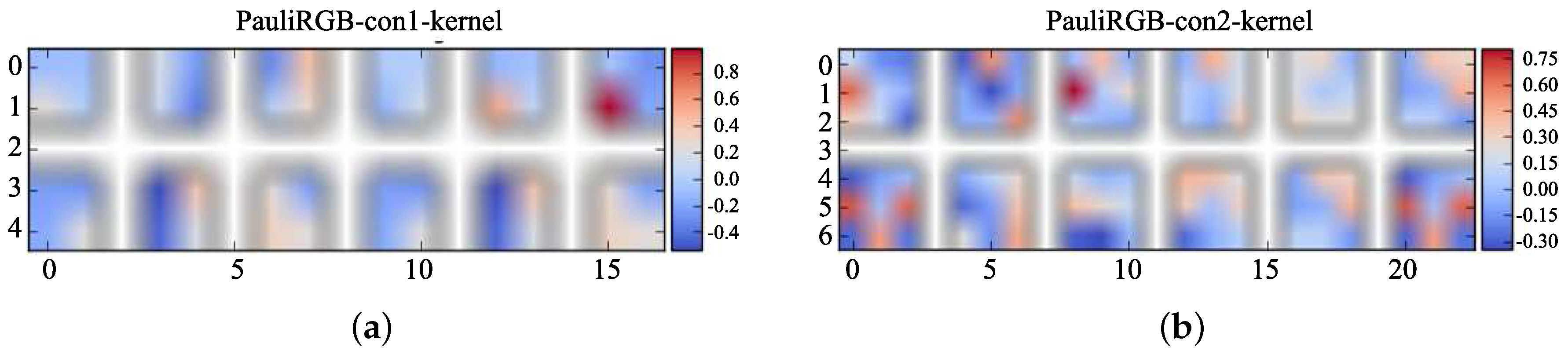

4.4.2. The Visualization of Feature Maps

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


| Label | Type | Train (6Ch) | Train (PauliRGB) | Test (6Ch) | Test (PauliRGB) |
|---|---|---|---|---|---|
| 1 | Stembeans | 5082 | 5082 | 1693 | 1693 |
| 2 | Beets | 6039 | 6039 | 2012 | 2012 |
| 3 | Barley | 5106 | 5106 | 1701 | 1701 |
| 4 | Peas | 5530 | 5530 | 1843 | 1843 |
| 5 | Potatoes | 9180 | 9180 | 3060 | 3060 |
| 6 | Wheat2 | 7343 | 7343 | 2447 | 2447 |
| 7 | Forest | 10,093 | 10,093 | 3364 | 3364 |
| 8 | Bare soil | 3299 | 3299 | 1099 | 1099 |
| 9 | Wheat3 | 12,663 | 12,663 | 4221 | 4221 |
| 10 | Lucerne | 6872 | 6872 | 2290 | 2290 |
| 11 | Grasses | 4200 | 4200 | 1399 | 1399 |
| 12 | Water | 14,739 | 14,739 | 4913 | 4913 |
| 13 | Wheat | 12,361 | 12,361 | 4120 | 4120 |
| 14 | Rapeseed | 9013 | 9013 | 2838 | 2838 |
| Total | – | 111,520 | 111,520 | 37,000 | 37,000 |

| Label | Dual-CNN (%) | 6Ch-CNN (%) | PauliRGB-CNN (%) |
|---|---|---|---|
| 1 | 97.77 | 96.04 | 95.64 |
| 2 | 98.21 | 90.85 | 90.70 |
| 3 | 97.88 | 93.94 | 94.17 |
| 4 | 96.72 | 91.91 | 93.67 |
| 5 | 95.96 | 88.56 | 92.57 |
| 6 | 100 | 95.05 | 94.26 |
| 7 | 99.94 | 97.08 | 95.97 |
| 8 | 100 | 95.54 | 93.45 |
| 9 | 95.95 | 87.84 | 90.48 |
| 10 | 99.51 | 92.70 | 94.07 |
| 11 | 98.85 | 95.40 | 95.42 |
| 12 | 99.92 | 91.34 | 96.74 |
| 13 | 99.85 | 93.20 | 93.48 |
| 14 | 99.39 | 90.45 | 95.53 |
| Overall | 98.56 | 92.85 | 94.01 |
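As a quick arithmetic check on the accuracy table above, the short script below recomputes each column's unweighted mean over the 14 classes and the gain of the Dual-CNN over each single-branch network. The per-class values are copied from the table. That the overall row matches the unweighted mean to within 0.01 is an observation from these numbers, not something the text states, and the variable names are illustrative.

```python
# Per-class accuracies (%) copied from the table, labels 1 to 14.
per_class = {
    "Dual-CNN": [97.77, 98.21, 97.88, 96.72, 95.96, 100.0, 99.94,
                 100.0, 95.95, 99.51, 98.85, 99.92, 99.85, 99.39],
    "6Ch-CNN": [96.04, 90.85, 93.94, 91.91, 88.56, 95.05, 97.08,
                95.54, 87.84, 92.70, 95.40, 91.34, 93.20, 90.45],
    "PauliRGB-CNN": [95.64, 90.70, 94.17, 93.67, 92.57, 94.26, 95.97,
                     93.45, 90.48, 94.07, 95.42, 96.74, 93.48, 95.53],
}
# Values from the table's overall row.
reported_overall = {"Dual-CNN": 98.56, "6Ch-CNN": 92.85, "PauliRGB-CNN": 94.01}

# Unweighted mean of the per-class accuracies for each model.
mean_accuracy = {name: sum(vals) / len(vals) for name, vals in per_class.items()}

# Gain of the dual-branch model over each single-branch model, in percentage points.
gains = {
    name: round(reported_overall["Dual-CNN"] - reported_overall[name], 2)
    for name in ("6Ch-CNN", "PauliRGB-CNN")
}

for name, value in mean_accuracy.items():
    print(f"{name}: mean {value:.3f}, reported overall {reported_overall[name]:.2f}")
print("gain in percentage points:", gains)
```

On these figures the Dual-CNN improves on the 6Ch-CNN by 5.71 percentage points and on the PauliRGB-CNN by 4.55 percentage points.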

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Gao, F.; Huang, T.; Wang, J.; Sun, J.; Hussain, A.; Yang, E. Dual-Branch Deep Convolution Neural Network for Polarimetric SAR Image Classification. Appl. Sci. 2017, 7, 447. https://doi.org/10.3390/app7050447
