1. Introduction

Human-environment interaction is carried out by means of the five senses: sight, hearing, taste, smell, and touch. In some cases, these senses, understood as information channels, become overloaded, weakened, or even lost. This is an issue for individuals with deaf-blindness because their principal information channels for interacting with their environment are noticeably weakened or, in many cases, lost. Therefore, these individuals need different channels to manage external information and a specific approach to communicate with other individuals and express themselves.

Communication is achieved through one of the unimpaired senses; in this case, touch. Haptic communication has been one of the most common strategies applied to improve deaf-blind individuals’ interaction with the environment [1]. The solutions include the Braille system [2] and related devices such as the finger-Braille [3,4] or the body-Braille [5], the Tadoma method [6], the tactile sign language, the Malossi alphabet [7], spectral displays [1], tactile displays [8], electro-tactile displays [9], or the stimulation of the mechano-receptive systems of the skin, among others. In the case of residual sight, sign language or lipreading [10] can be used. On the other hand, for some deaf individuals, acoustic nerve stimulating devices can be implanted when suitable. Commercial products for deaf-blind people adapted to the telephone are available, based on teletype writer (TTY) systems adapted with a telephone device for the deaf (TDD). Some examples are the PortaView 20 Plus TTY system from Krown manufacturing, the FSTTY (Freedom Scientific’s TTY) adapted to the deaf-blind users with the FaceToFace proprietary software, or the more recent Interpretype Deaf-Blind Communication System [11,12]. These systems rely on the Braille code by means of tactile displays or input devices.

In order to design a communication channel based on touch, it is necessary to know the basic physiology of the skin [8]. Skin is classified either as non-hairy (glabrous) or hairy. This classification is relevant for haptic stimulation because each type of skin possesses different sensory receptor systems and, thus, different perception mechanisms [13]. Also, it is important to consider that each mechano-receptive fibre plays a specific role in the perception of external stimuli [8].

Although there are several methods [14], tactile stimulation is usually based on either a moving coil or a direct current (DC) motor with an eccentric weight mounted on it [15]. Therefore, the stimuli generate a vibrotactile sensation that is related to the stimulation frequency and its amplitude [16]. The literature on vibrotactile methods describes two physiological systems, according to the fibre grouping: the Pacinian system and the non-Pacinian system. The Pacinian system involves a large receptive field that can be stimulated by higher frequencies (40–500 Hz). On the contrary, the non-Pacinian system has a small receptive field that can be stimulated by lower frequencies: 0.4–3 Hz and 3–40 Hz [8,17]. Vibration is highly suitable because the user tends to adapt rapidly to stationary touch stimuli [18], in such a way that a repeated stimulus produces a sensation that remains in conscious awareness.

Tactile stimulation through vibration has been widely studied. These studies include the evaluation of different place and space effects on the abdomen [19], torso [20], upper leg [21], arm [22,23], and palm and fingers [13]. In addition, other parameters were taken into account when evaluating vibrotactile sensitivity: age [24], skin temperature [25], menstrual cycle [26], body fat [27], contactor area [28], several spatial parameters involved in the stimulation of mechano-receptors [29,30], or the influence of having yet another sense weakened or impaired, e.g., sight or hearing [31].

Blind individuals are usually familiar with the Braille code, but deaf-blindness is often degenerative, as in Usher’s syndrome, where visual loss is progressive. Hence, learning the Braille code at an older age may take too long, or the user may never feel comfortable with it. On the other hand, communication by concepts is faster than letter-by-letter spelling, as in Braille. Thus, learning a small set of concepts is simpler, and the set can always be progressively enlarged. This paper analyses the feasibility of communication by concepts for deaf-blind individuals through a vibrotactile system and introduces TactileCom, a communication system of substitution based on an efferent human-machine interface (HMI) implemented as a glove. The term efferent refers to the direction of the HMI interaction: if the interface performs a stimulation function it is an efferent HMI, but if the interface performs an acquisition function it is an afferent HMI [32]. TactileCom delivers information to the user by means of vibrotactile stimulation. This information is coded in an abstract stimulus-meaning relationship [33], similar to sign language, i.e., each stimulation pattern corresponds to a concept or idea. For instance, some information that can be relayed is “we are going to the hospital” or “you will be left alone.” This facilitates the learning of the code because deaf-blind individuals are often familiar with that kind of communication. The entire system was developed taking into account deaf-blind individuals’ preferences, gathered in discussions with potential users. Two community associations of deaf-blind individuals were consulted: ASOCYL (Association of Deaf-Blind People Castilla y León) and ASPAS (Association of Parents and Friends of the Deaf).

Although TactileCom is presented as a communication system for individuals with deaf-blindness, unimpaired subjects can also benefit from it. Its application in environments with difficult verbal communication is straightforward, e.g., noisy workplaces where people from different countries collaborate, as in major infrastructure works or oil rigs, or for multimodal action-specific warnings [34]. The coded work instructions and coordination between the workers would be language independent. The wireless link to the HMI overcomes the noise limitations of the environment and even the lack of visual contact between sender and receiver.

Case Scenario

TactileCom is aimed at deaf-blind individuals, so it is specifically helpful when somebody wants to convey a message to a person with deaf-blindness.

Figure 1 presents an overview of the scenario with all the parts involved. On one side (that of the deaf-blind user), the system is composed of the vibrotactile glove to receive messages and a small keyboard to send messages. On the other side, the unimpaired interlocutor sends and receives messages by means of a computer, i.e., a tablet or smartphone, which implements a voice recognition system.

In daily life, the interlocutor of a deaf-blind individual can send a message or idea by choosing one of the three selection modes included in the mobile application: an icon menu, typing, or voice recognition. The message is transmitted via the wireless link to the efferent HMI, i.e., the glove. The messages are coded in an abstract representation [33] and delivered on the fingers by a stimulation pattern.
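As a minimal sketch of the concept-to-pattern coding described above, the following Python fragment maps messages to stimulation patterns. The two messages are the examples given in the introduction; the patterns assigned to them, the finger names, and the `encode` helper are hypothetical and only illustrate an abstract stimulus-meaning relationship, not the actual TactileCom code table.

```python
# Hypothetical codebook: each concept maps to a pattern given as
# {finger: keying frequency in Hz}. The assignments are illustrative only.
CODEBOOK = {
    "we are going to the hospital": {"thumb": 1},
    "you will be left alone": {"index": 10, "middle": 10},
}


def encode(message):
    """Return the stimulation pattern for a message, or None if unknown."""
    key = " ".join(message.lower().split())  # normalise case and spacing
    return CODEBOOK.get(key)
```

Because the relationship is abstract, extending the vocabulary in such a scheme would only require adding entries to the table.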

The codification strategy was suggested by potential users. The main principles behind the suggested representation are: (i) the users are already familiar with this kind of communication, and (ii) the users only need a small number of concepts to communicate. It was the latter that prompted the selection of the stimulation patterns or tactons [35] analysed in the following sections. Hence, the simplest tactons were chosen as a starting point to facilitate the everyday use of the HMI. Additionally, due to the abstract meaning-stimulus relationship, this set of tactons scales better than those that use a more mnemonic meaning-stimulus relationship [33]. Also, this method is faster and easier to decode than those that only transmit characters.

The proposed system leads to an improvement in communication compared with the tactile sign language, which requires proximity between the two interlocutors. Moreover, the wireless link between the two interfaces represents a clear benefit in terms of portability.

2. Methods

The vibrotactile glove was developed for this research project to assess the feasibility of vibrotactile communication of concepts. This efferent HMI is aimed at improving the interaction of the deaf-blind user with both the environment and other people. The glove is part of the TactileCom system, which is explained in this section along with the assessment procedure. The assessment is focused on the efferent HMI, as it is the main and most innovative part of TactileCom.

2.1. Apparatus

The system allows the interaction between an unimpaired user A and a deaf-blind user B. User A does not need any previous knowledge of any adapted language, e.g., tactile sign language. The system design follows a methodology based on the involvement of all parties concerned [36]. This means that potential deaf-blind users, care-givers, and engineers collaborated closely in the discussion of trade-offs, achieving the implementation laid out here.

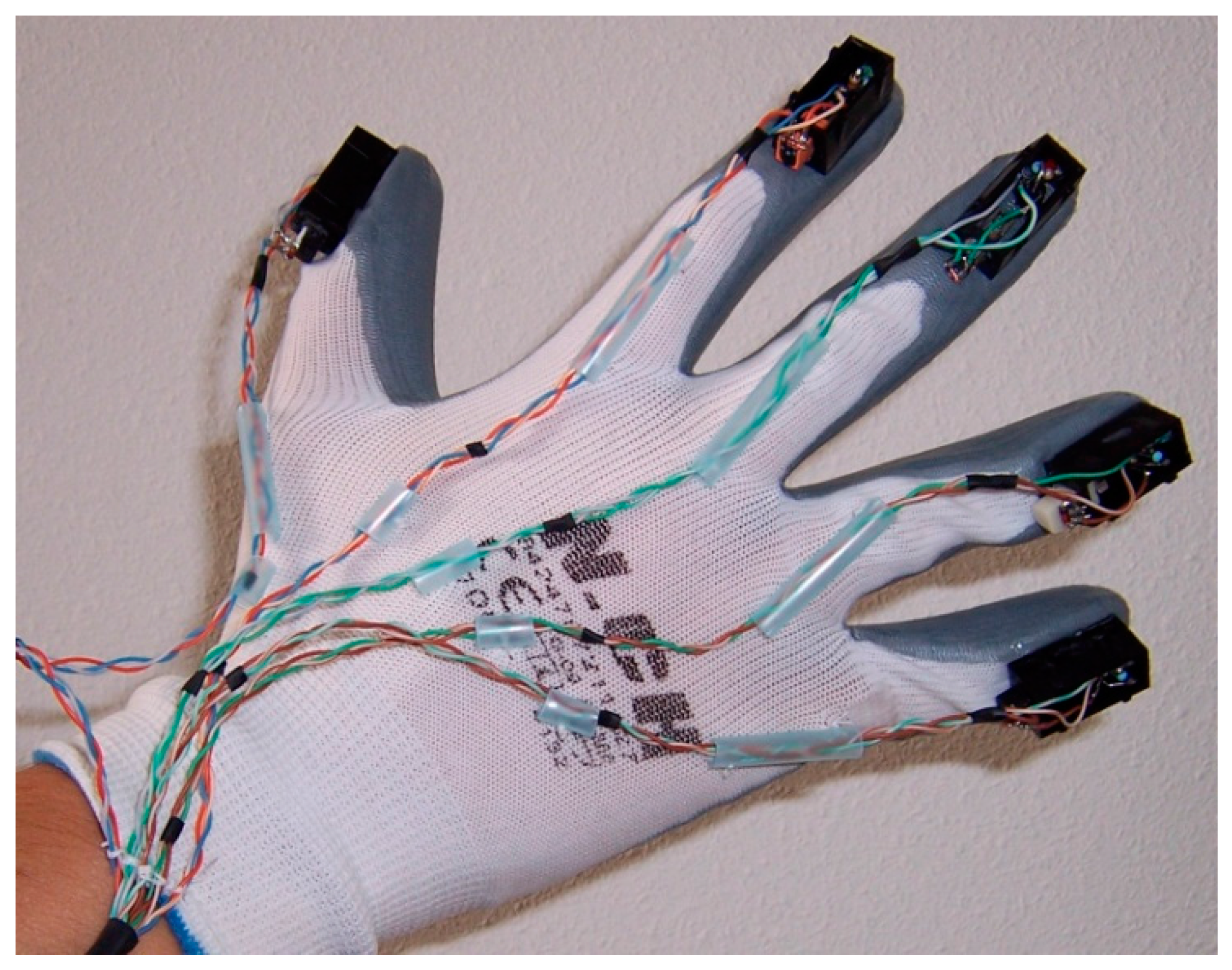

The HMI consists of a glove that stimulates the fingers with a predefined pattern, as shown in

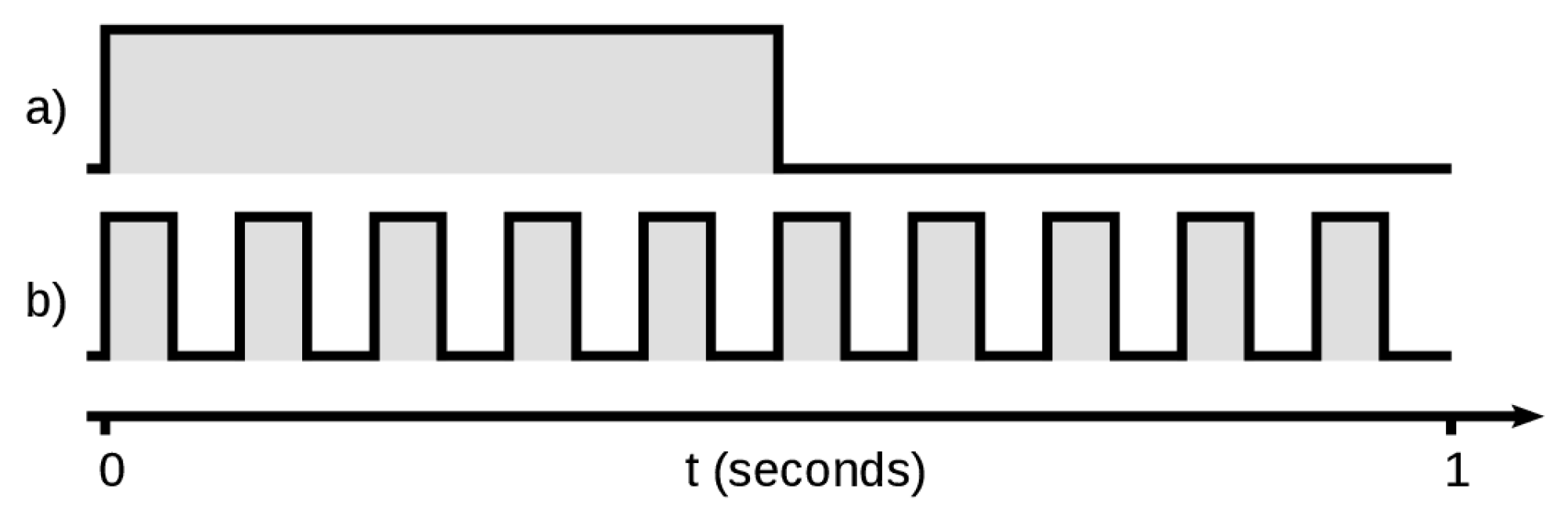

Figure 2. Each finger has a tactor attached, i.e., a DC motor with an eccentric weight mounted on it, so there are five tactors. The DC motor is a CEBEK C6070, used as a vibrator in cellular phones. The motors are controlled by an Arduino Micro board through a set of drivers to deliver the requested current. The Arduino board has a Bluetooth link to connect with the interlocutor. Tactor activation is coded with two on-off keying frequencies—1 Hz and 10 Hz—that modulate the activation or not of the tactors, as shown in

Figure 3. The transient time to achieve the full vibration is negligible at 10 Hz. And this starting transient is compensated with the stopping transient.

Hence, a two-dimensional stimulus mapping is obtained, i.e., tactor activation (location) and tactor keying frequency (rhythm). This mapping is consistent with the amount of information that can be received, processed, and remembered taking into account the span of absolute judgment and the span of immediate memory [37]. As previous studies have demonstrated, users perform better with two dimensions than with three or more [38].

According to previous studies [39,40], sensitivity towards the location of a touch or toward the separation between touched locations is greater when the body site is more mobile. Finger stimulation in this case fulfils this principle, and this was the main reason for choosing the glove as the efferent HMI. Thus, the fingers are used to stimulate the subject. On the other hand, it has been shown that repeated stimulation of a specific part of the body can lead to an improvement of tactile discrimination performance for that part of the body [41]. This allows the device to meet the user’s wishes regarding the location of the HMI in another target body part. Hence, other possible HMI locations can be proposed, though proper training is required.

The tactor produces a stimulating burst generated by the 170 Hz tactor rotation frequency. This stimulating frequency avoids the rapid adaptation to stationary touch stimuli [18]. The ratio between the applied amplitude and the achieved stimulus should be taken into account, as it is related to the tactor rotation frequency. The obtained 170 Hz is close to the range of frequencies with the lowest perception threshold amplitudes and, at that frequency, there is a sensitivity peak at the fingers that reinforces the effect [42]. This makes the HMI vibrotactile stimuli remain in conscious awareness. Hence, this set of characteristics matches the requirements of the system.

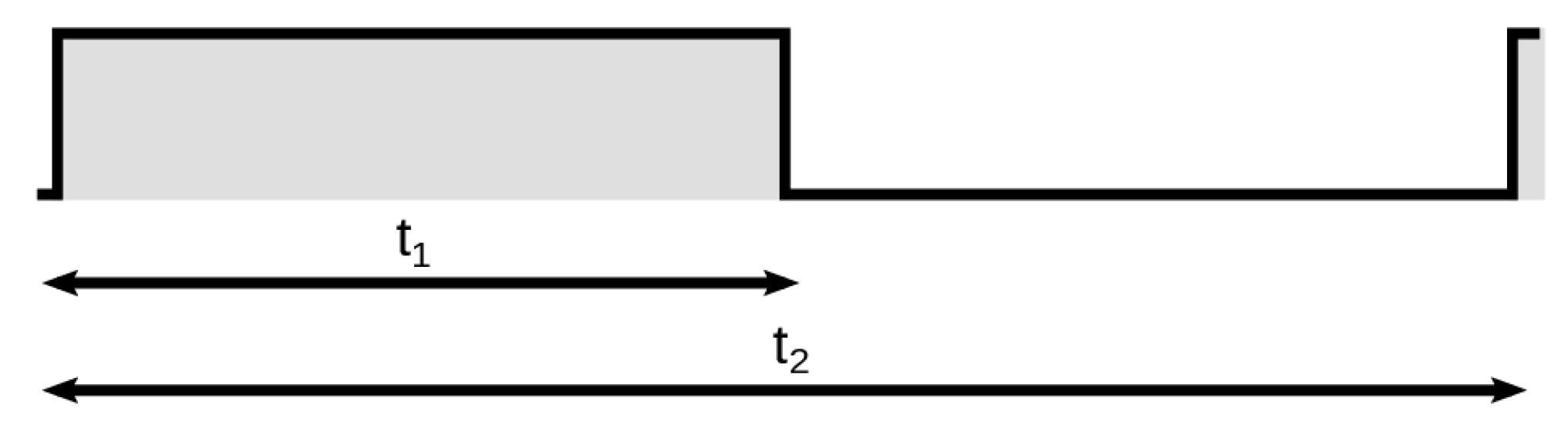

The rotation frequency described above is modulated by means of an on-off keying signal in order to achieve two keying frequencies, i.e., 1 Hz or 10 Hz, as shown in Figure 3. Thus, two possible stimuli are implemented: 170 Hz modulated with 1 Hz or 170 Hz modulated with 10 Hz. Two performance parameters should be taken into account: the time between the onset and the end of a burst, t1, and the time between the onsets of two bursts, t2 (Figure 4). As the burst duty cycle is 50%, t2 is two times t1. The 1 Hz and 10 Hz frequencies, in combination with the 50% duty cycle, meet the t1 and t2 timing constraints to achieve an optimum performance [20].
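The relationship between the keying frequency, the duty cycle, and the two timing parameters can be sketched in a few lines of Python. This is only an illustration under the stated 50% duty cycle; the function names and the one-millisecond sampling step are assumptions made for the example and do not describe the glove firmware.

```python
def burst_timing_ms(keying_hz, duty_cycle=0.5):
    """Return (t1, t2) in milliseconds for an on-off keying signal."""
    if keying_hz <= 0 or not 0 < duty_cycle <= 1:
        raise ValueError("keying_hz must be > 0 and duty_cycle in (0, 1]")
    t2 = round(1000 / keying_hz)   # time between the onsets of two bursts
    t1 = round(t2 * duty_cycle)    # time between onset and end of a burst
    return t1, t2


def keying_envelope(keying_hz, duration_ms=1000, duty_cycle=0.5):
    """Sample the on/off envelope once per millisecond (1 = tactor driven)."""
    t1, t2 = burst_timing_ms(keying_hz, duty_cycle)
    return [1 if (ms % t2) < t1 else 0 for ms in range(duration_ms)]


def count_bursts(envelope):
    """Count rising edges, i.e. burst onsets, in a sampled envelope."""
    previous = [0] + envelope[:-1]
    return sum(1 for prev, cur in zip(previous, envelope) if cur and not prev)
```

Under these assumptions, 1 Hz keying gives t1 = 500 ms and t2 = 1000 ms, and 10 Hz keying gives t1 = 50 ms and t2 = 100 ms, so a one-second tacton contains one or ten bursts, respectively.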

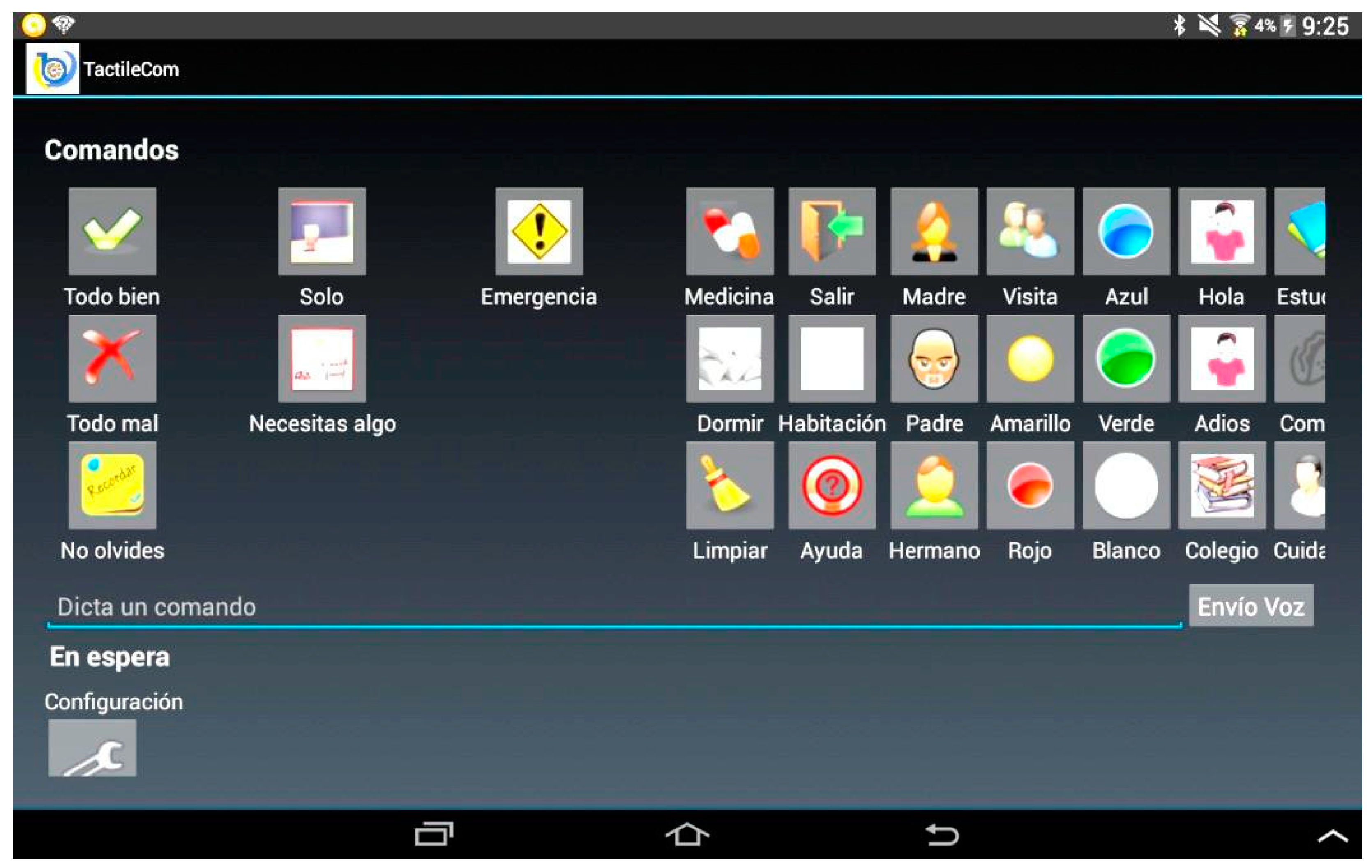

At the other end of the communication channel, the afferent interface is based on a general purpose mobile phone or tablet. Contemporary mobile phones are like small computers, which allows for a broad set of applications. Therefore, an application that provides the user with a set of pieces of information, i.e., concepts or ideas, was developed, as shown in Figure 5. This set contains the pieces of information that somebody might want to convey to a deaf-blind subject. The unimpaired user can select these messages or ideas from an icon menu, by typing them or by voice recognition. Thus, the previous afferent interface based on a personal computer [36] is substituted by a more portable one.

The channel between the vibrotactile HMI and the mobile phone is a Bluetooth link. Thus, only one mobile phone at a time can be linked to the efferent HMI that delivers the vibrotactile information to the deaf-blind subject.

2.2. Population

The experimental trials were performed, on the one hand, on eighteen gender-balanced control subjects (CS): nine male and nine female. The CS were students or employees at the University of Valladolid, and their ages ranged from 18 to 58 years old. The experiments were non-invasive, performed with a glove with vibrators, and no physical or psychological risks were involved. The experiments were approved by the Research Ethics Committee of the university (CEI, Comité Ético de Investigación de la Universidad de Valladolid). The subjects were not familiar with psychophysical studies or with the use of this kind of vibrotactile device to get information from their environment. Seventeen of them were right-handed and only one was left-handed.

The study also included four deaf-blind subjects (DBS), three male and one female, aged 42 to 45 years, with Usher’s syndrome, which is the most frequent cause of deaf-blindness in developed countries [43]. Usher’s syndrome is an autosomal recessive disorder characterized by sensorineural hearing loss and progressive visual loss secondary to retinitis pigmentosa [43]. Three of the subjects were familiar with other haptic and vibrotactile devices because they suffered advanced deaf-blindness, whereas the other one was not familiar with these systems because he still had some remnant sight. The deaf-blind population is fortunately not very large in Castilla y León (Spain). With a population of 2.5 million, there are 26 individuals affiliated to the deaf-blind associations and their availability is very limited. Therefore, our sample of four represents 15% of the individuals with deaf-blindness in this area.

2.3. Procedure

Twenty stimulation patterns or tactons were implemented on the HMI for the assessment. These patterns include the stimulation of one or more fingers with any combination of the two possible keying frequencies, as shown in Table 1. The number of patterns can be easily increased according to the user’s needs, with a maximum of 242. All of these tactons have a one-second duration, as shown in Figure 3. In the experiment, each tacton is repeated continuously four times to achieve a four-second duration. The repetitions can be reduced as training improves the user’s performance, understood as correct identification of the perceived messages.
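The maximum of 242 patterns can be checked by enumeration: each of the five tactors is either off or keyed at one of the two frequencies, and the all-off combination is excluded. The short Python sketch below illustrates this count; the finger names, their order, and the dictionary representation of a tacton are assumptions made for the example.

```python
from itertools import product

FINGERS = ("thumb", "index", "middle", "ring", "little")
STATES = (None, 1, 10)  # tactor off, 1 Hz keying, 10 Hz keying


def all_tactons():
    """List every pattern with at least one active tactor."""
    patterns = []
    for combo in product(STATES, repeat=len(FINGERS)):
        active = {f: hz for f, hz in zip(FINGERS, combo) if hz is not None}
        if active:  # skip the all-off combination
            patterns.append(active)
    return patterns


def single_tactor_tactons():
    """Patterns that activate exactly one tactor."""
    return [p for p in all_tactons() if len(p) == 1]
```

The enumeration yields 3^5 - 1 = 242 patterns, ten of which activate a single tactor.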

This pattern map was chosen after several meetings with the deaf-blind people and their caregivers. A basic set of messages was proposed for the daily activities; twenty messages was the number agreed upon. To relay these messages, the following design procedure was adopted: (i) stimuli in space—during the meetings, the hand was chosen because of its sensitivity and as a known instrument for them to communicate; (ii) stimuli in time—vibration frequency (170 Hz), adequate for a good perception, and on-off frequencies (1 or 10 Hz), that meet the timing constraints for optimum performance, as explained; and (iii) complexity of the stimuli—deaf-blind people and caregivers reported that the one-finger stimulus was the simplest, simultaneous stimulus of all the fingers is also very simple, and the next complexity level was the combinations of two and three fingers, to be studied in this work.

The same HMI glove was employed with all subjects. Due to the glove’s elastic material, it fitted most of the hands under test, although in some cases when the glove was not tight enough, an elastic bandage was also applied.

Table 1 displays the location and rhythm of the stimulation patterns.

2.4. Initial Training

Before starting the actual assessment with the subjects in the experiment, both CS and DBS went through training to understand how the system worked. The training was both passive (i.e., explanation about the system operation) and active (i.e., wearing the HMI and making a demonstration to get the feeling of the two keying frequencies). There was also some visual feedback, as each glove has a light that blinks on the finger at the same pace as the tacton. The contents of the explanation were the same for both the CS and the DBS; however, the explanation was oral for the CS and then translated into tactile sign language for the DBS by a language assistant.

2.5. Trials with CS and DBS

After the initial training, the assessment began. The subjects were asked to guess the stimulation pattern, i.e., which tactors were turned on and which were the tactor keying frequencies. The subjects were told to guess the tacton as fast and accurately as possible in three attempts. The vibrotactile glove is a communication system, so we expect a high success rate in the first attempt. However, as in oral communication, sporadic repetitions are permitted to clarify the message. Hence, we allowed two more attempts in the procedure. For this task, eight patterns were randomly selected for the experiment, marked as * in Table 1. If the stimulation pattern was correctly identified, the next pattern was then supplied. Otherwise, if the four-second tacton was wrongly guessed, it was repeated, up to a total of three attempts. The repetition allowed the subject to overcome communication failures when the guessing speed was not a limiting factor. Hence, a pattern correctly guessed in any of the three attempts was counted as a right guess. If the pattern was not accurately recognized in those three attempts, it was definitively marked as a wrong identification. Additionally, if the pattern was wrongly recognized by the subject under test, feedback was provided to the user as an aid. The feedback consisted of a new stimulation with the unrecognized pattern after the user was informed about the active tactors and their keying frequencies. This clue was selected because we detected that the main difficulty was guessing which fingers were involved. The subjects were informed in the same way as in the initial training.
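The scoring rule of this procedure can be summarized with a small Python sketch. It assumes that each response is recorded in the same form as the target pattern; the function names and the data representation are hypothetical and serve only to make the three-attempt rule explicit.

```python
MAX_ATTEMPTS = 3


def score_trial(target, responses):
    """Return the attempt number (1-3) of the first correct response, else None."""
    for attempt, response in enumerate(responses[:MAX_ATTEMPTS], start=1):
        if response == target:
            return attempt
    return None


def success_rate(outcomes, within=MAX_ATTEMPTS):
    """Fraction of trials identified within the given number of attempts."""
    hits = sum(1 for o in outcomes if o is not None and o <= within)
    return hits / len(outcomes)
```

With `within=1` the function gives the first-attempt success rate, whereas the default counts a pattern as recognized if any of the three attempts is correct.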

These tests took place in a quiet and favourable environment (i.e., an isolated laboratory) in order to eliminate potentially distracting ambient sounds. The CS were blindfolded to avoid visual distractions. Their gloved right hand remained in the air without any physical contact with any surface or other objects. This avoided the bias that might have been produced in cases of contact with any other surface due to vibration propagation [30].

2.6. Instruction Procedure for the DBS

After performing the assessment test, the DBS were actively instructed on all possible stimulations, as displayed in Table 1, and on the concepts or ideas associated with the stimulation provided. As DBS are familiar with concept relay, such as tactile sign language, this apprenticeship adds a new communication skill. This means that the subjects do not need to learn another code in order to understand different concepts or expressions that they do not previously know. This happens with Braille code: the user has to learn letters, words, and a grammar to communicate. We found that deaf-blind people are often not receptive to learning this new code. Additionally, it has been shown that this kind of abstract representation with messages scales better [33] and makes communication faster and more customizable.

2.7. Extended Trials with the DBS

Afterward, the DBS were requested to guess the whole set of patterns shown in Table 1. The task procedure was repeated in the same manner, i.e., with three possible attempts, in the same environment and without any physical contact of the HMI hand with any other object.

3. Results

In this section, the performance of both groups is compared, starting with the trials after the initial training for understanding the system operation. The results achieved by the DBS in the extended trials are analysed according to the different stimulation parameters, i.e., the number of tactors, the keying frequencies or the fingers involved. The performance is analysed in terms of the success rate. For the statistical analysis, normality is assumed in the populations. A normality test was not considered because of the low number of subjects and the high number of ties achieved during the trials, i.e., repeated values in the sample. With these assumptions, the populations are compared with the t-test, the F-test or the ANOVA test when suitable. Finally, a discussion of the results is presented.

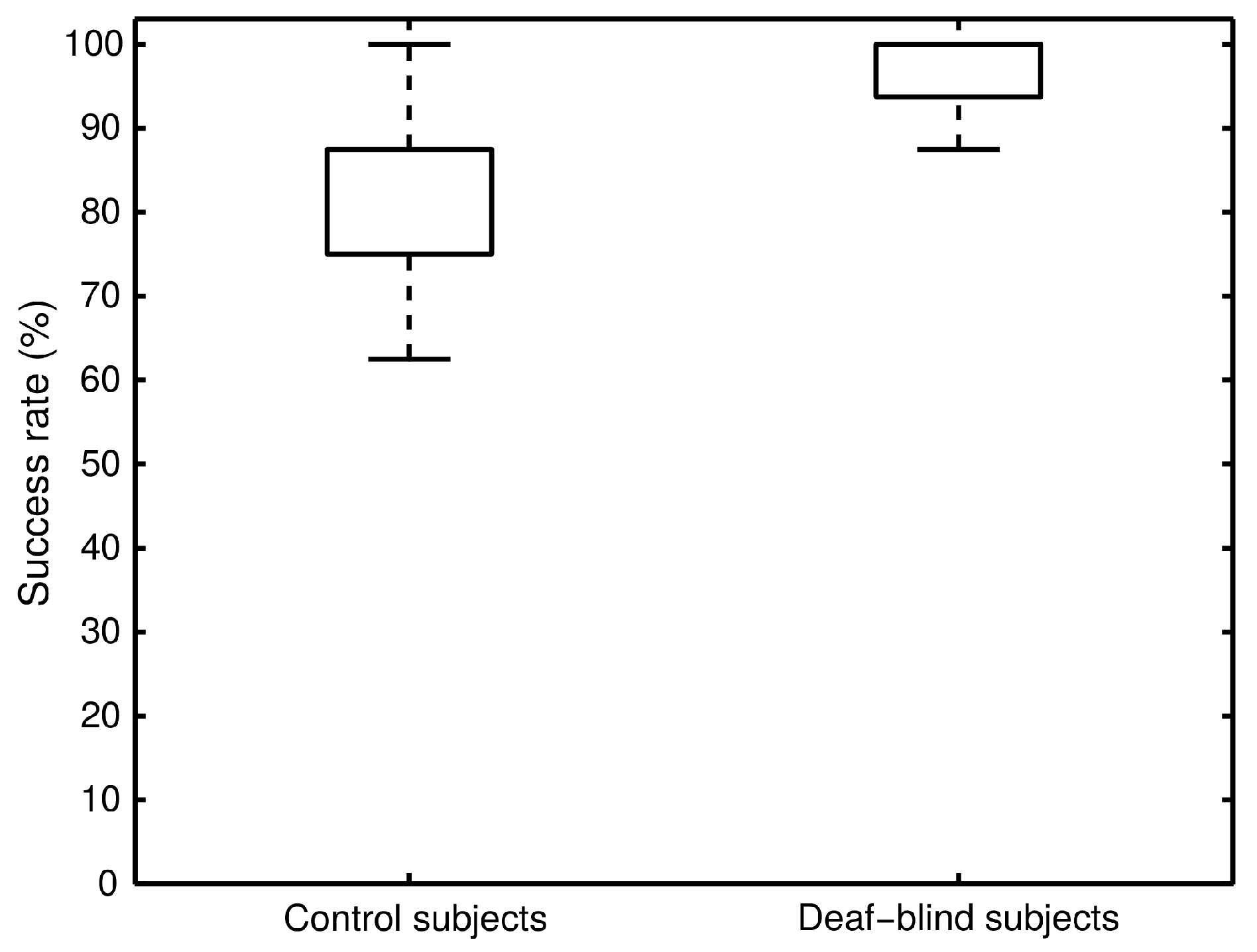

The results of the trials performed on both CS and DBS show a significant difference, as shown in Figure 6. The figure presents a boxplot with the overall success rate of the eight stimulation patterns under test. It takes into account the three recognition attempts for each pattern. Note that there is no separate median line inside the boxes because the median coincides with the 25th percentile for the CS and with the 75th percentile for the DBS.

The performance was analysed with an unpaired t-test, which shows different behaviour between the CS and the DBS (p < 0.01). A large difference was observed in the mean success rates: 81% for CS and 97% for DBS. The t-test was applied to the two data sets assuming that they have the same variance, as the result of the F-test showed that this assumption was reasonable (F(17,3) = 3.399, p = 0.171).
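For readers who wish to reproduce this kind of comparison, the two statistics can be sketched with the Python standard library alone. The sketch computes only the test statistics and their degrees of freedom, not the p-values, and the function names are assumptions made for the example; it does not reproduce the authors' analysis. With 18 CS and 4 DBS, the degrees of freedom are (17, 3) for the F-test, as reported above, and 20 for the pooled t-test.

```python
from math import sqrt
from statistics import mean, variance


def f_statistic(a, b):
    """Ratio of sample variances and its degrees of freedom (len - 1 each)."""
    return variance(a) / variance(b), (len(a) - 1, len(b) - 1)


def pooled_t_statistic(a, b):
    """Unpaired equal-variance t statistic and its degrees of freedom."""
    na, nb = len(a), len(b)
    df = na + nb - 2
    # Pooled variance: weighted average of the two sample variances.
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / df
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, df
```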

After the comparative analysis between population samples, the extended trials performed on the DBS were analysed. Table 2 shows the raw scores achieved during these trials. Their tactile pattern recognition improved in the first attempt from 63% to 71% after the instruction. However, the overall average success rate over the three attempts remained at 97%, while the worst score improved from 87% to 90%, and the statistical mode was 100%. Thus, for the HMI practical purposes, the twenty possible patterns can be recognized with high effectiveness.

Table 2 displays the four possible cases when guessing each stimulation pattern within the three attempts. Tactons are defined in Table 1. The influence of having either one or more active tactors at the same time is clearly reflected in the pattern recognition performance. At first glance, this outcome can be drawn from the data because the patterns applied to a single tactor show a success rate of 100%. In contrast, a lower efficiency is achieved with those patterns that activate more than one tactor. The paired t-test applied to these experimental data (one active tactor vs. multiple active tactors) reinforces this impression (p = 0.04). In fact, it was noticed that the patterns that simultaneously stimulate the middle finger and the ring finger confuse the subjects, so further analysis should be done.

The two keying frequencies chosen, 1 Hz and 10 Hz, were used either isolated or combined. As explained previously, the chosen values present great vibrotactile acuity on the skin. Moreover, the 170 Hz burst stimulus contributes to the performance because of the high vibrotactile sensitivity of the fingers at that frequency [42]. Thus, the results should be consistent with these statements. Indeed, the one-way ANOVA test (factor: frequencies) shows no significant difference in recognition among the isolated keying frequencies (1 Hz and 10 Hz) and their combination (p = 0.730).

The patterns listed in Table 1 are applied evenly to all five fingers of the right hand. The one-way ANOVA test (factor: fingers) shows that all the fingers perform in the same way or, at least, performance was qualitatively similar between fingers (p = 0.938).

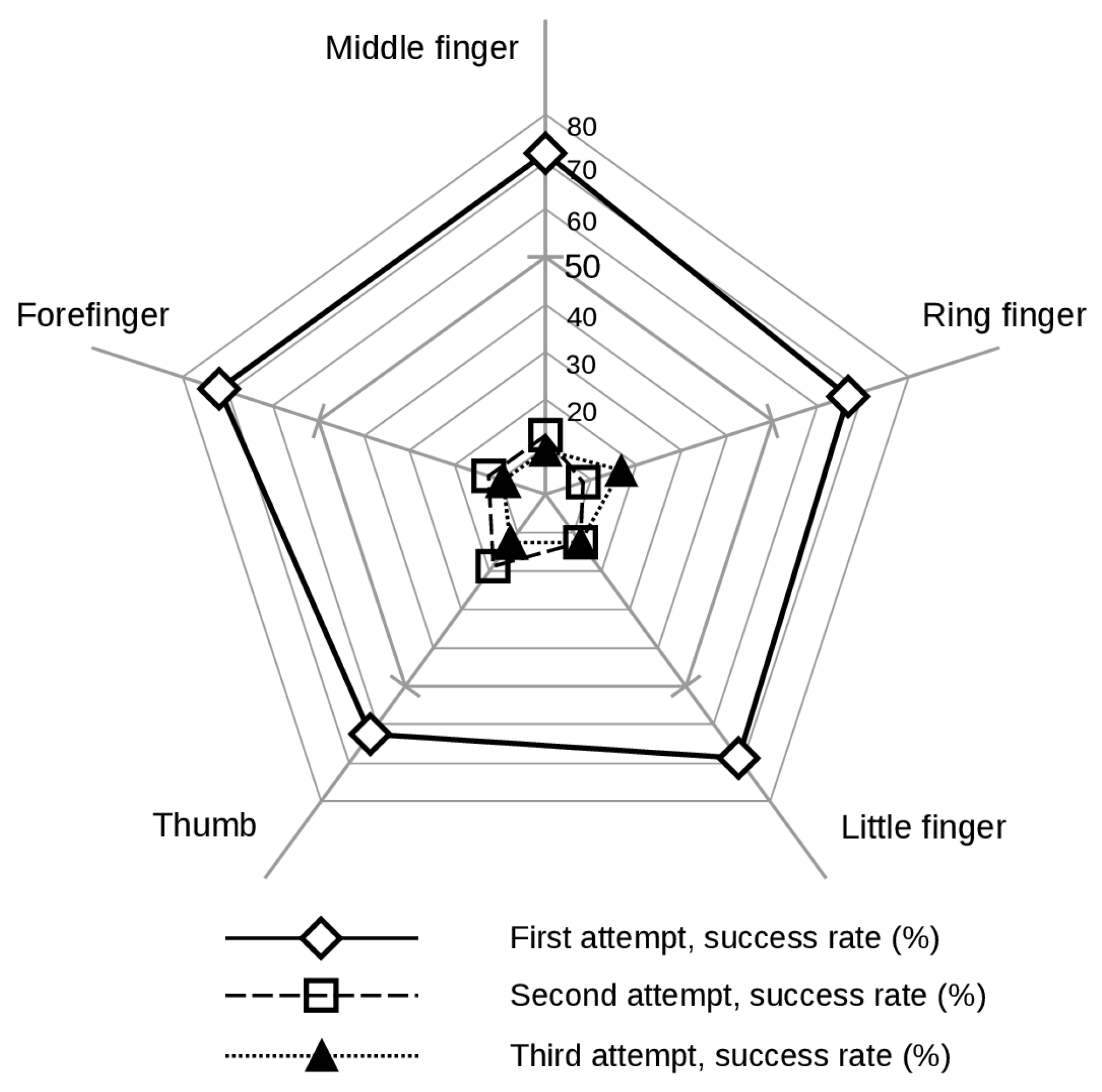

Figure 7 displays the success rate of each of the five fingers for each of the three recognition attempts.

Figure 7 includes data from all the stimulation patterns, i.e., the ones that are applied to a single tactor and those that activate more than one. A wrong guess in multiple-finger stimulation is considered in Figure 7 as an incorrect detection for all the stimulated fingers. The figure represents four disjoint events particularized to each finger: guess in the first attempt, guess in the second attempt, guess in the third attempt, and no guess in any attempt. So the rates associated with each event sum to 100%. The success rate for each of the three attempts shows that all fingers perform in a similar way. Subjects guess the finger nearly 70% of the time on the first attempt; a second attempt is required about 15% of the time; and a third attempt is required about 10% of the time. These numbers are consistent with the overall success rate of 97%. Notice that in these numbers a guess on the first attempt excludes other attempts, and a guess on the second attempt excludes the third one. The statistical analysis reinforces the fingers’ similar performance mentioned above (p = 0.999).
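The per-finger accounting described above can be illustrated with a short Python sketch. Each trial is assumed to be recorded as the set of stimulated fingers together with its outcome (the attempt number of the correct guess, or `None` for no guess); this record format and the function name are hypothetical. An outcome is attributed to every finger in the pattern, so a wrong guess on a multiple-finger pattern counts against all of its fingers.

```python
from collections import Counter, defaultdict

EVENTS = (1, 2, 3, None)  # guessed on attempt 1, 2, 3, or never


def per_finger_rates(trials):
    """Percentage of each disjoint event, particularized to each finger."""
    counts = defaultdict(Counter)
    for fingers, outcome in trials:
        for finger in fingers:
            counts[finger][outcome] += 1
    rates = {}
    for finger, counter in counts.items():
        total = sum(counter.values())
        rates[finger] = {e: 100.0 * counter[e] / total for e in EVENTS}
    return rates
```

Because the four events are disjoint and exhaustive, the percentages computed for any finger add up to 100%.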

4. Discussion

In order to represent a wide range of the population, the CS were selected on a gender-balanced basis, with ages ranging from 18 to 58 years. The upper age was limited to avoid the decrease in tactile sensitivity that comes with age [24]. Judging by their mean age, the CS are younger adults, so a better sensitivity is expected from them. This makes the comparative test more challenging for the DBS.

Compared to the CS, the DBS performed better. This was not totally unexpected, as reported in the scientific literature: people with some kind of sense impairment often achieve better acuity through another sense (for example, touch) [31,44]. Therefore, future studies on vibrotactile recognition can be carried out by focusing on the performance and the learning of unimpaired subjects. This is because most DBS develop better touch sensitivity than unimpaired people; it is therefore convenient to validate the vibrotactile analyses with unimpaired individuals. This will allow us to increase the population sample, make face-to-face tests, and minimize communication issues. However, the tests also need to be performed on DBS because we cannot disregard their feedback, as they are the potential users. They should also participate in the system development sequence [32].

Pattern recognition improves with the activation of a single tactor. Therefore, the codification of stimuli with a single tactor can be prioritized so as to associate them with the most important concepts or ideas. Thus, two keying frequencies applied to the five fingers give 10 stimuli combinations. This number of combinations is deemed to be good enough to communicate the most important everyday concepts for the interaction between DBS and their care-givers. This is a significant advance for those DBS with no knowledge of adapted language or for those who already handle one but do not require a large number of concepts.

The number of orders with a single tactor can be increased by adding a third keying frequency. This new frequency could be either (a) in the range from 1 Hz to 10 Hz that meets the aforementioned timing constraints [20], such as 5 Hz, or (b) a 0 Hz keying, i.e., a 170 Hz stimulus burst with no interruption. These keying frequencies can be adapted to each user by choosing a custom set aimed at achieving the best performance. Hence, the new frequency expands the stimulation pattern set and maintains the two-dimensional mapping, i.e., tactor activation and tactor keying frequency. This expanded map fits with the span of absolute judgment and the span of immediate memory [37]. Thus, the inclusion of a new dimension that could decrease the users’ performance is avoided [38].
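The effect of an additional keying frequency on the size of the pattern set can be estimated with simple counting. The Python sketch below assumes that each tactor is either off or keyed at exactly one of the available frequencies and that the all-off combination is not a pattern; the function names are chosen for the example.

```python
def single_tactor_patterns(n_fingers, n_keying):
    """Patterns that activate exactly one tactor."""
    return n_fingers * n_keying


def total_patterns(n_fingers, n_keying):
    """All patterns with at least one active tactor."""
    return (n_keying + 1) ** n_fingers - 1
```

With five fingers, two keying frequencies give 10 single-tactor patterns and 242 patterns in total, in agreement with the figures given earlier. Under the same assumptions, a third keying frequency would give 15 single-tactor patterns and 1023 patterns in total.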

When the stimulation pattern set is expanded by increasing the keying frequencies, those frequencies that meet the timing constraints improve recognition. The experiments show a similar detection performance for both 1 Hz and 10 Hz and their combination. However, the increase in the keying frequency can introduce the skin effect. This effect integrates specific spatio-temporal stimuli, so spatial and temporal masking can be produced [20]. Thus, timing constraints should be met in order to achieve a balanced recognition performance among the keying frequencies as well as a high success rate.

Great recognition efficiency can be achieved by stimulating the fingers. According to classical studies, as explained in the introduction section, the fingers are one of the most sensitive parts of the body. The developed system takes advantage of these features by means of the vibrotactile stimulation of the five fingers of the right hand. This technique raises a problem because, although the HMI glove allows for the performance of all or almost all regular daily tasks, it is not comfortable for continuous use. This disadvantage was reported by both CS and DBS. Changing the placement of the efferent HMI was considered; however, new locations in the body were not as suitable as the fingers. Moreover, the deaf-blind group reported that they did not want to look different from other people, so the HMI would need to be covered by their clothing. Following the literature guidelines, our research experience and the indications of potential users, our research group is already working on a vibrotactile belt. Even though there is a sensitivity loss, the spatial separability is increased, so the belt does achieve a high degree of recognition [19], the stimuli can be organized to meet the human cognitive restrictions [37], and, so far, our preliminary tests show very satisfactory results.

From the results obtained, the system is also suitable for unimpaired individuals. Its success rate, although a bit lower than that of the DBS, is high enough to consider it efficient. This fact supports TactileCom as a communication alternative for noisy and/or multilingual working environments.

5. Conclusions

Communication of concepts through a vibrotactile device is feasible. Responsiveness is much higher with this device than with letter-by-letter communication. A bidirectional communication system named TactileCom was developed for this purpose. The system is aimed at helping the deaf-blind population. However, other communication applications are possible, e.g., in noisy or multilingual environments. The analysed HMI is a vibrotactile glove that was tested with deaf-blind and unimpaired subjects. The achieved recognition performance is high enough to consider it a valid system: 97% for the DBS and 81% for the CS. These results were achieved without previous training with the HMI. The ability to discriminate vibrotactile patterns was more acute in the deaf-blind individuals than in unimpaired subjects.

A dedicated experiment focused on DBS was performed in a second stage. The success rate improved from 63% to 71% on the first attempt. These results show that adaptability to the HMI is fast and learning its functioning is easy. The response of the DBS was also analysed in terms of pattern complexity, the two keying frequencies, and the sensitivity of each finger. The outcome showed a 100% success rate when the stimulus was applied to a single tactor and a lower performance when multiple tactors were activated.

As the device is aimed primarily at aiding the deaf-blind population, the development procedure needs to take into account opinions and suggestions by these potential users. The subjects of the trials expressed their satisfaction with the general HMI operation and suggested improvements related to practical issues concerning everyday life activities and aesthetics. Their feedback led us to the design of new vibrotactile HMIs to wear under the clothing.

For the potential user, TactileCom is a communication system of substitution that can codify concepts or ideas. This allows faster and more efficient communication than character-by-character coding. In addition, tactor usage is more efficient than in tactile displays used to present a figure. Finally, the person who communicates with the deaf-blind individuals does not require previous knowledge of the coding language, as opposed to tactile sign language.