Dynamic Defense Strategy Selection Through Reinforcement Learning in Heterogeneous Redundancy Systems for Critical Data Protection

Abstract

1. Introduction

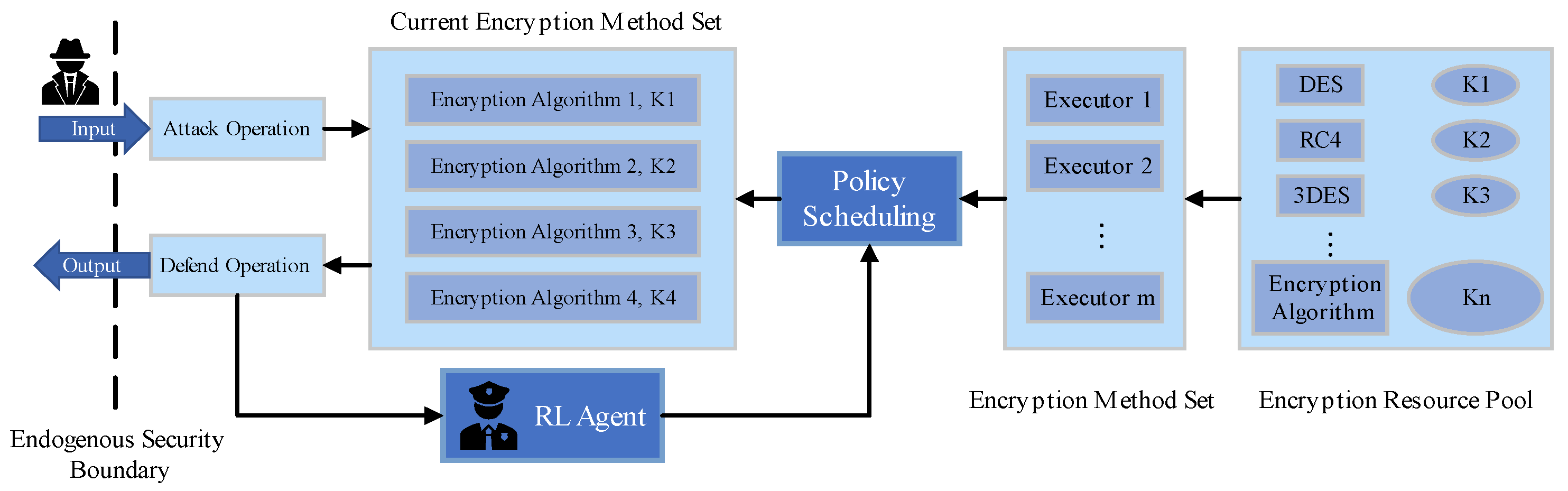

- This work introduces a reinforcement learning-based framework that enhances the dynamic and adaptive defense capabilities of the Dynamic Heterogeneous Redundancy (DHR) architecture. Reinforcement learning controls the strategy scheduling of the DHR architecture, so the framework selects defense strategies from observed environmental information and can respond to emerging threats.

- It provides a simulation environment that mimics attack–defense scenarios, offering a robust platform to validate the proposed approach.

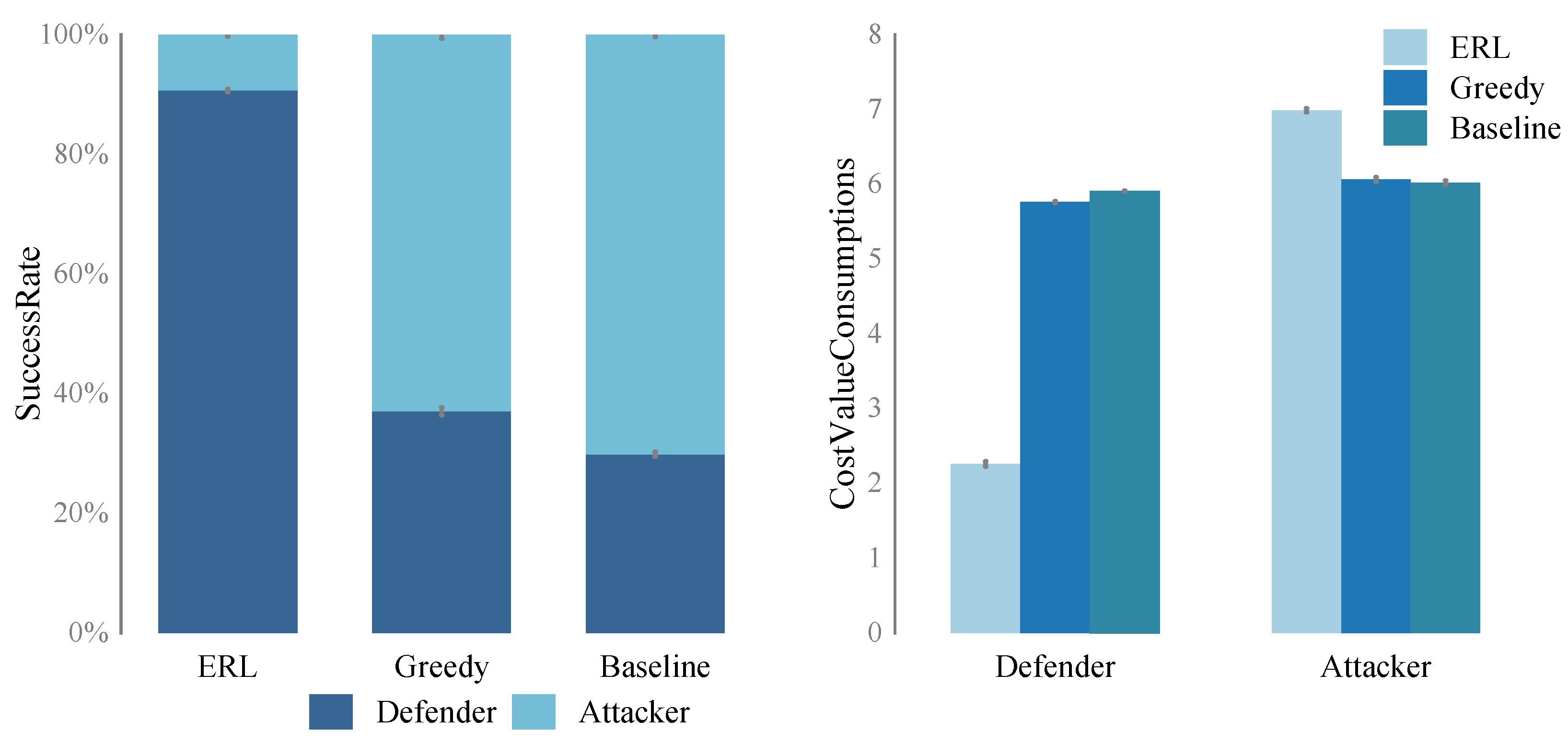

- It presents a comparative analysis showing that the proposed reinforcement learning-enhanced mimic defense system outperforms traditional static and heuristic approaches.

2. Related Work

2.1. Defense Strategies for Critical Data Protection in Network Systems

2.2. Application of Reinforcement Learning in Network Security

3. Methodology

3.1. Attack and Defense Scenario System Design

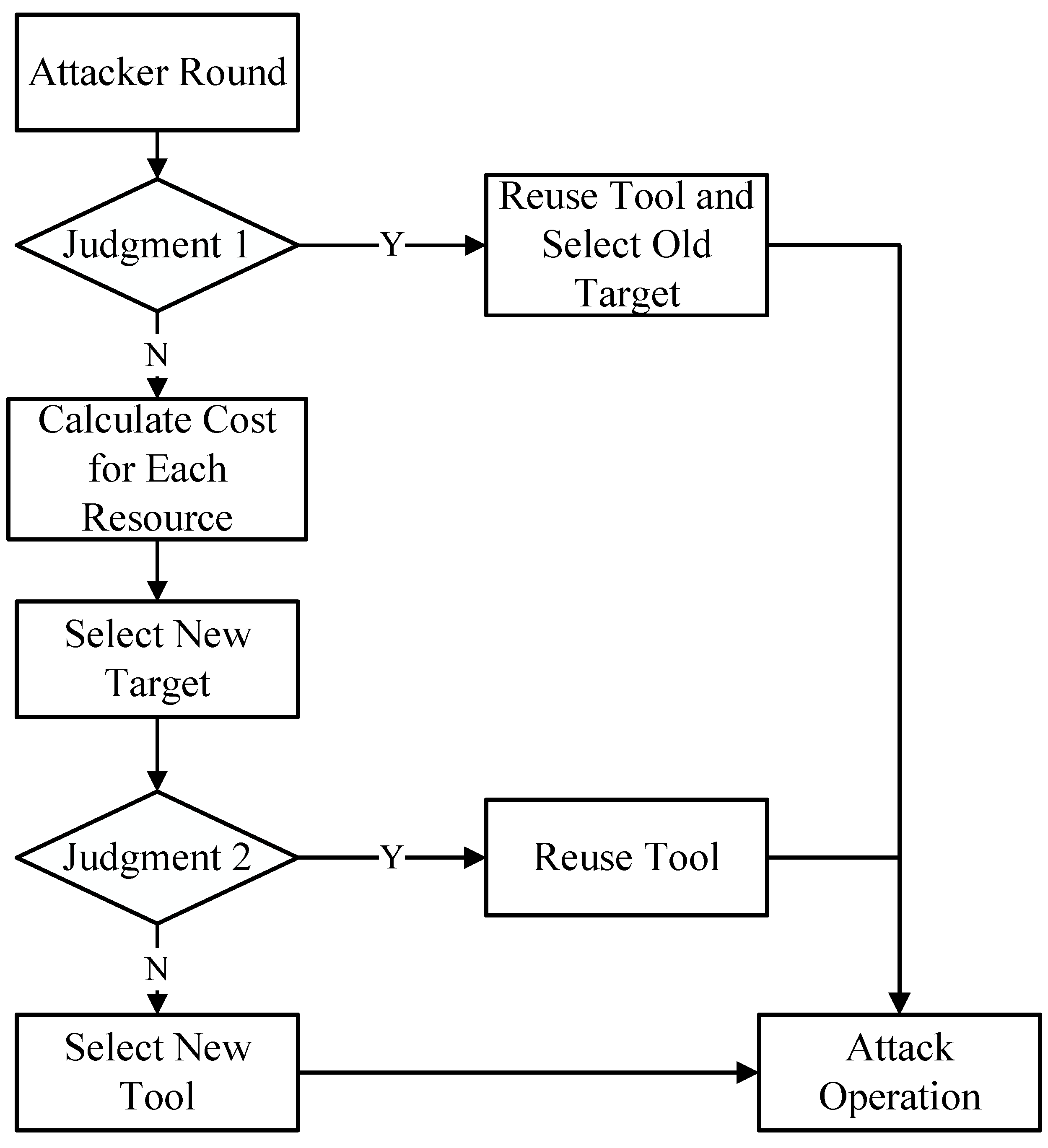

- (1) Enter the attacker’s round.

- (2) The attacker checks (corresponding to “Judgment 1” in Figure 2) whether the tool selected in the previous round can be reused for the previously chosen target resource. If yes, the attacker reuses the tool to continue attacking the previous target resource, ending the round. If no, proceed to the next step.

- (3) For the outermost layer of each resource, determine whether to reuse the tool from the previous round. If yes, calculate the value of the resource after removing the outermost layer; if no, directly calculate the value including the outermost layer. This step corresponds to “Calculate for Each Resource” in Figure 2.

- (4) Compare the values of each resource and select the resource with the smallest value as the target resource for this round. This step corresponds to “Select New Target” in Figure 2.

- (5) The above process determines the target resource for the attacker’s round. Once the target resource is determined, choosing the attack tool becomes relatively straightforward. Next, determine whether the value of the new target calculated in Step (3) was derived after removing the outermost layer (corresponding to “Judgment 2” in Figure 2). If the calculation was based on reusing the tool from the previous round and removing the outermost layer, the attacker reuses the tool from the previous round (corresponding to “Reuse Tool” in Figure 2). If not, the attacker selects a tool with higher reusability for the top 2 to 3 layers. Here, the function can be directly used to match the top 2 or 3 layers. If the function reveals that the top 2 or 3 layers of the new target resource cannot be reused, the attacker directly selects an attack tool of length 2 that can attack the outermost layer (corresponding to “Select New Tool” in Figure 2).

- (6) After confirming both the target resource and the tool, the attacker launches the attack, ending the round.
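Steps (1) to (6) can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' Algorithm 1: the encoding of a resource as a list of layer names (outermost first), the encoding of a tool as a tuple of layer names it can break, the `can_reuse` rule, and the use of the remaining layer count as the resource "value" are all hypothetical stand-ins, because the original value function and matching function are not reproduced in this text.

```python
from typing import Optional

Layers = list[str]        # assumed encoding: defense layers, outermost first
Tool = tuple[str, ...]    # assumed encoding: layer types the tool can break


def can_reuse(tool: Optional[Tool], layers: Layers) -> bool:
    """Assumed rule: a tool is reusable if it can break the outermost layer."""
    return tool is not None and bool(layers) and layers[0] in tool


def resource_value(tool: Optional[Tool], layers: Layers) -> tuple[int, bool]:
    """Step (3): return (value, reused). The value is a hypothetical cost
    proxy, namely the number of layers the attacker still has to break."""
    if can_reuse(tool, layers):
        return len(layers) - 1, True   # value after removing the outermost layer
    return len(layers), False          # value including the outermost layer


def attacker_round(resources: dict[str, Layers],
                   prev_target: Optional[str],
                   prev_tool: Optional[Tool]) -> tuple[str, Optional[Tool]]:
    """One attacker round; assumes at least one resource still has layers."""
    # Step (2), "Judgment 1": keep attacking the previous target if possible.
    if prev_target in resources and can_reuse(prev_tool, resources[prev_target]):
        return prev_target, prev_tool

    # Step (3), "Calculate for Each Resource".
    values = {name: resource_value(prev_tool, layers)
              for name, layers in resources.items() if layers}

    # Step (4), "Select New Target": smallest value wins (first one on ties).
    target = min(values, key=lambda name: values[name][0])
    _, reused = values[target]

    # Step (5), "Judgment 2" and "Reuse Tool".
    if reused:
        return target, prev_tool

    # Step (5), "Select New Tool": prefer a tool built from the top 3 or
    # top 2 layers that would also be reusable against another resource.
    layers = resources[target]
    for k in (3, 2):
        candidate = tuple(layers[:k])
        if len(candidate) == k and any(
                can_reuse(candidate, other)
                for name, other in resources.items() if name != target):
            return target, candidate

    # Fallback: a tool of length (at most) 2 that breaks the outermost layer.
    # In this simplified encoding it coincides with the top-2 candidate.
    return target, tuple(layers[:2])
```

For example, with `resources = {"db": ["fw", "ids", "enc"], "web": ["waf", "fw"]}` and no previous target or tool, the sketch selects `"web"` because it has the fewest layers, and then builds a new tool from its top two layers.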

Algorithm 1. Target Resource Selection Algorithm

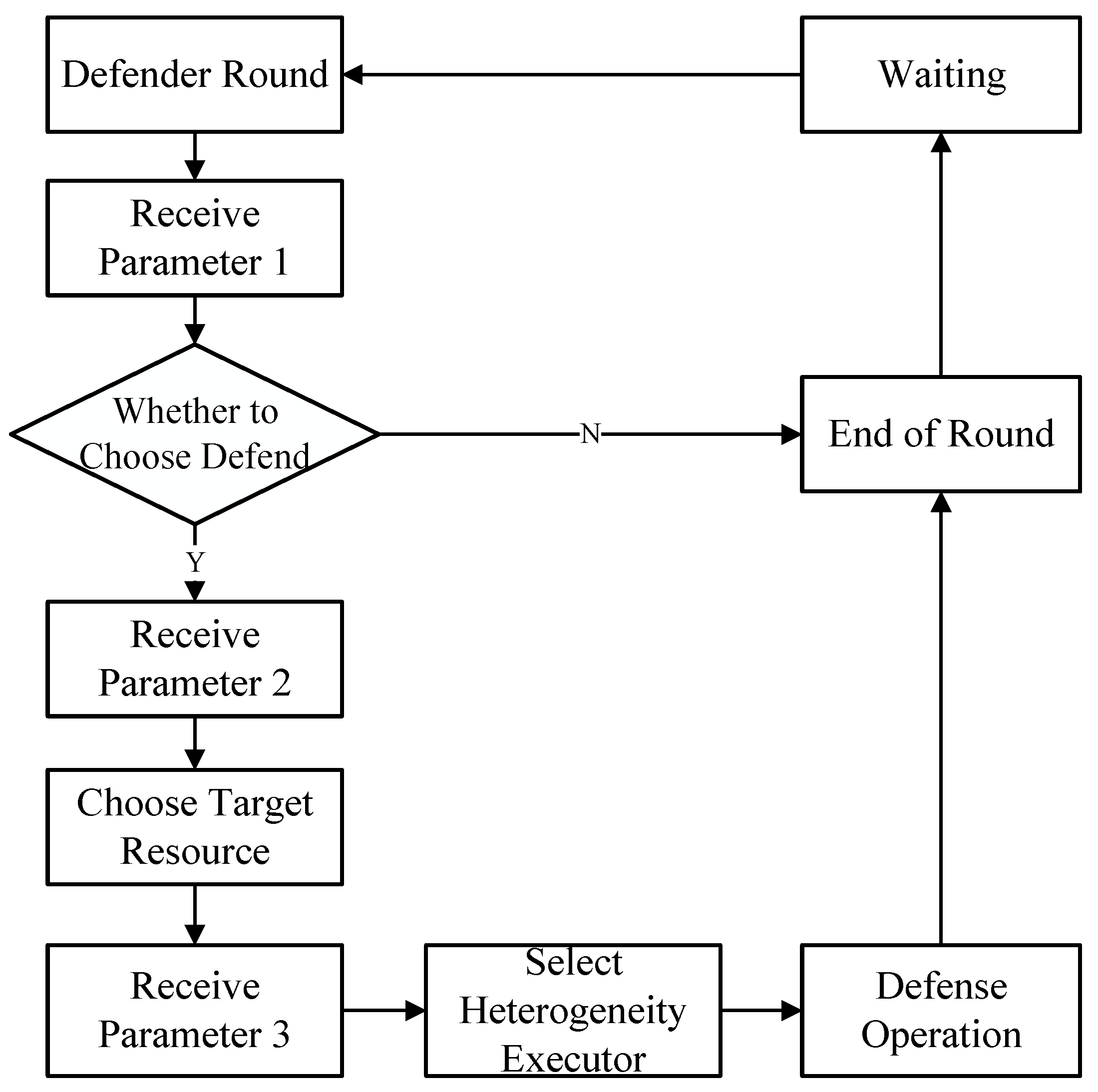

3.2. Reinforcement Learning Framework Platform Construction

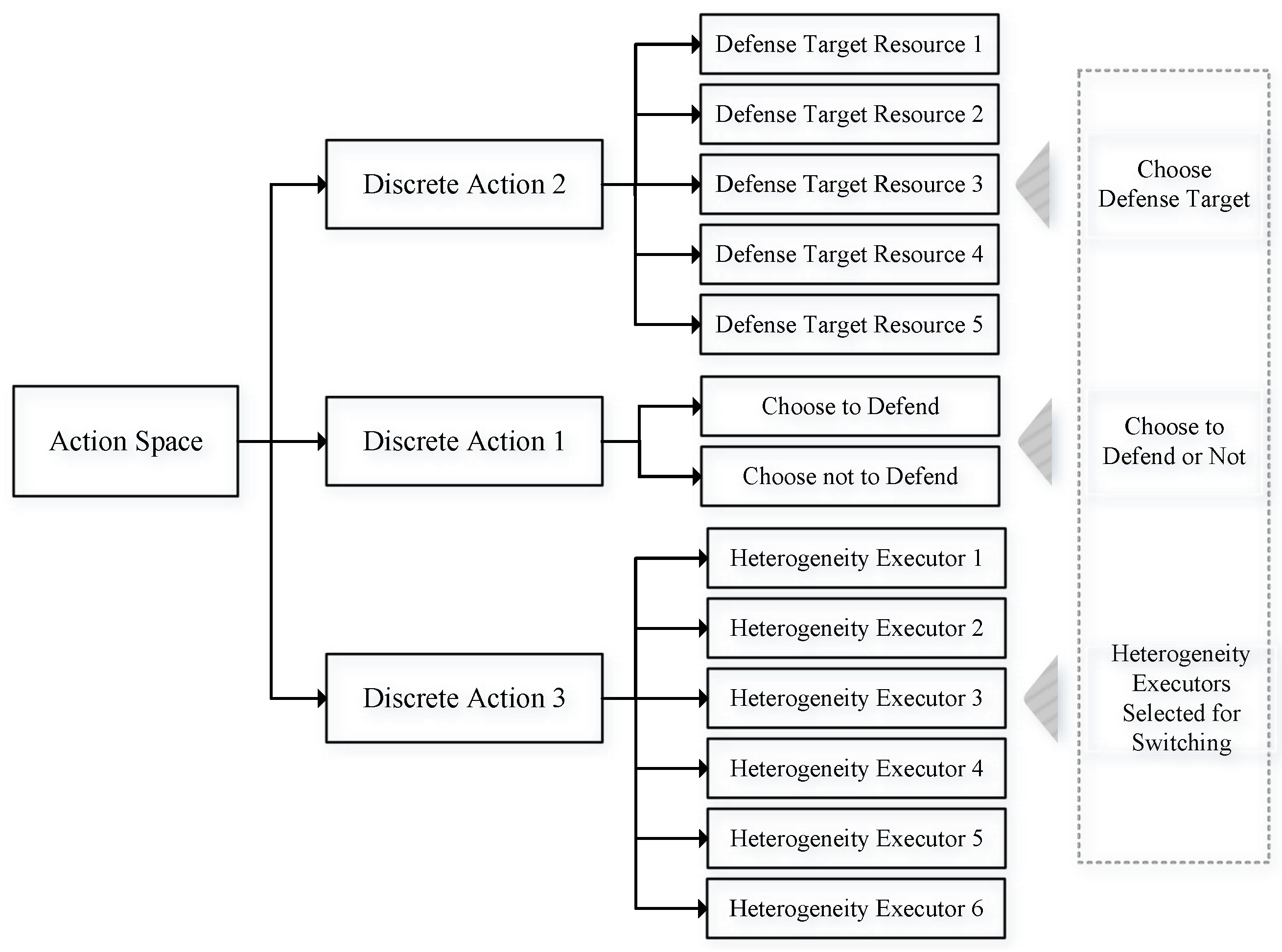

3.2.1. Action Space Design

- (1) Discrete Action Space: In this type of action space, the agent’s selectable actions form a discrete set. For example, in board games, the action space might include all legal moves of the pieces or specific positions on the board. In a discrete action space, each action represents a specific choice.

- (2) Continuous Action Space: In this type of action space, the agent can choose a series of continuous values as actions. For instance, in robot control problems, the action space might consist of a continuous action vector, where each element represents the angle or speed of different robot joints. In a continuous action space, the agent has an infinite number of possible action choices, typically achieved by selecting specific values within a continuous range.
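The difference between the two kinds of action space can be illustrated with a small Python sketch. The class names, the example defense actions, and the joint-angle bounds below are hypothetical and chosen only for illustration; they are not the action space used in the paper.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscreteActionSpace:
    """A finite set of choices; each action is one element of the set."""
    actions: tuple[str, ...]

    def sample(self, rng: random.Random) -> str:
        return rng.choice(self.actions)

    def contains(self, action: str) -> bool:
        return action in self.actions


@dataclass(frozen=True)
class ContinuousActionSpace:
    """A box of real-valued vectors; each element has its own [low, high] range."""
    low: tuple[float, ...]
    high: tuple[float, ...]

    def sample(self, rng: random.Random) -> tuple[float, ...]:
        return tuple(rng.uniform(lo, hi) for lo, hi in zip(self.low, self.high))

    def contains(self, action: tuple[float, ...]) -> bool:
        return len(action) == len(self.low) and all(
            lo <= a <= hi for a, lo, hi in zip(action, self.low, self.high))


rng = random.Random(0)

# Hypothetical discrete defense actions: exactly three possible choices.
discrete = DiscreteActionSpace(("keep_layers", "rotate_outer_layer", "replace_all_layers"))
chosen = discrete.sample(rng)

# Hypothetical continuous action: two joint angles in radians, as in the robot example.
continuous = ContinuousActionSpace(low=(-3.14, -1.57), high=(3.14, 1.57))
angles = continuous.sample(rng)
```

A discrete space can be enumerated, so a policy can output one score per action; a continuous space cannot, so a policy typically outputs the values themselves (or the parameters of a distribution over them) within the allowed range.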

3.2.2. State Space Design

3.2.3. Reward Function Design

4. Experiment

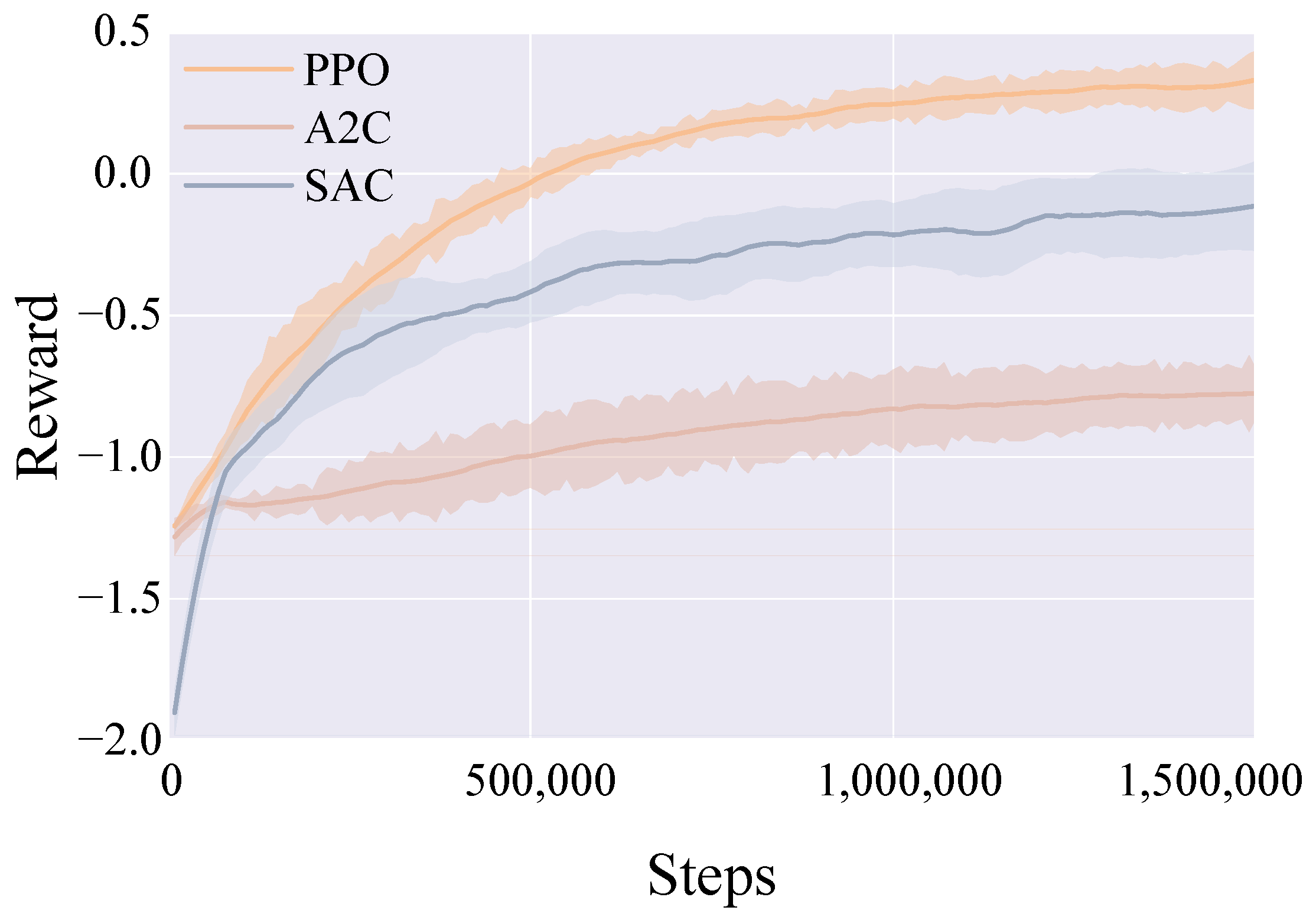

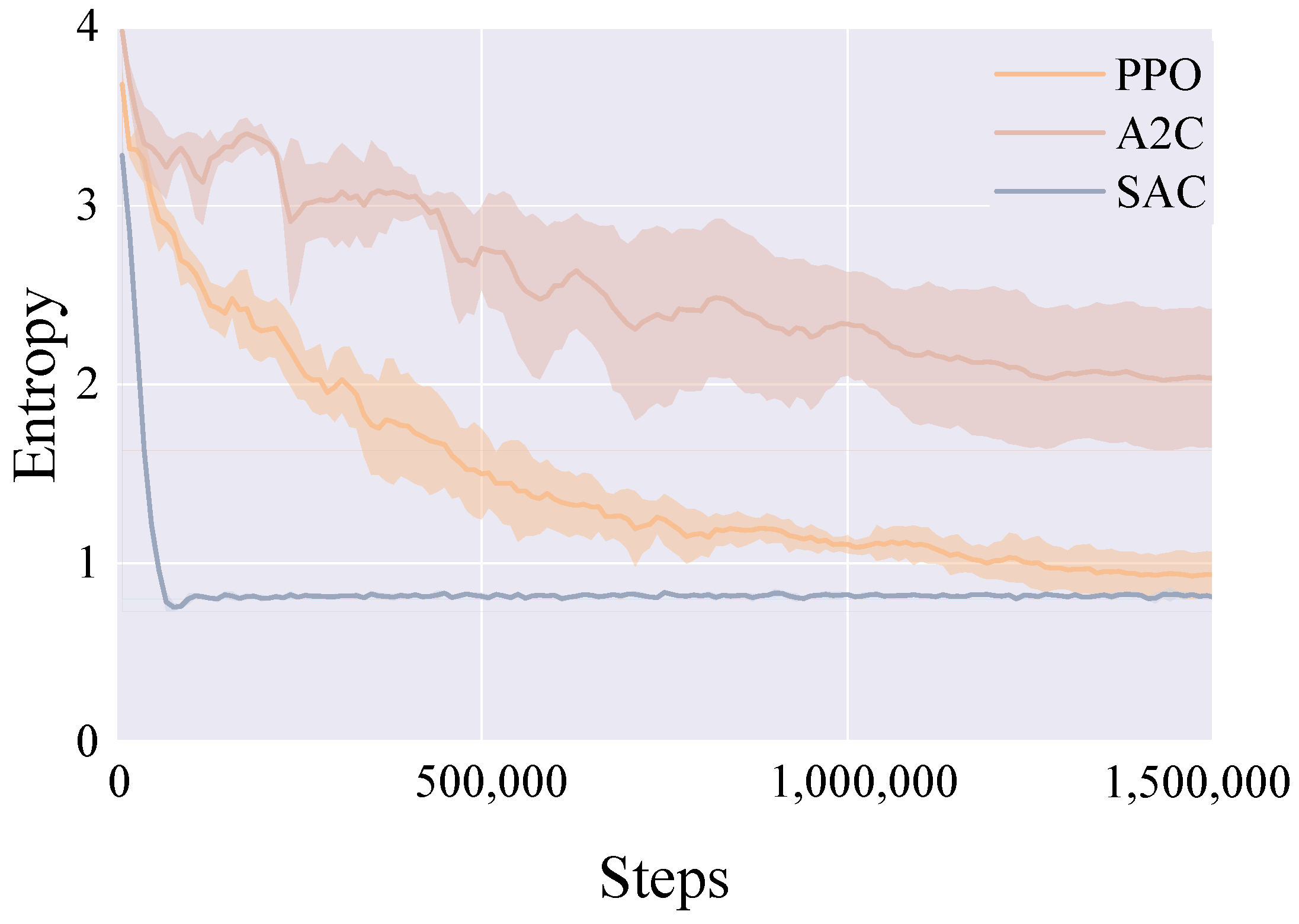

4.1. Preliminary Model Experiment

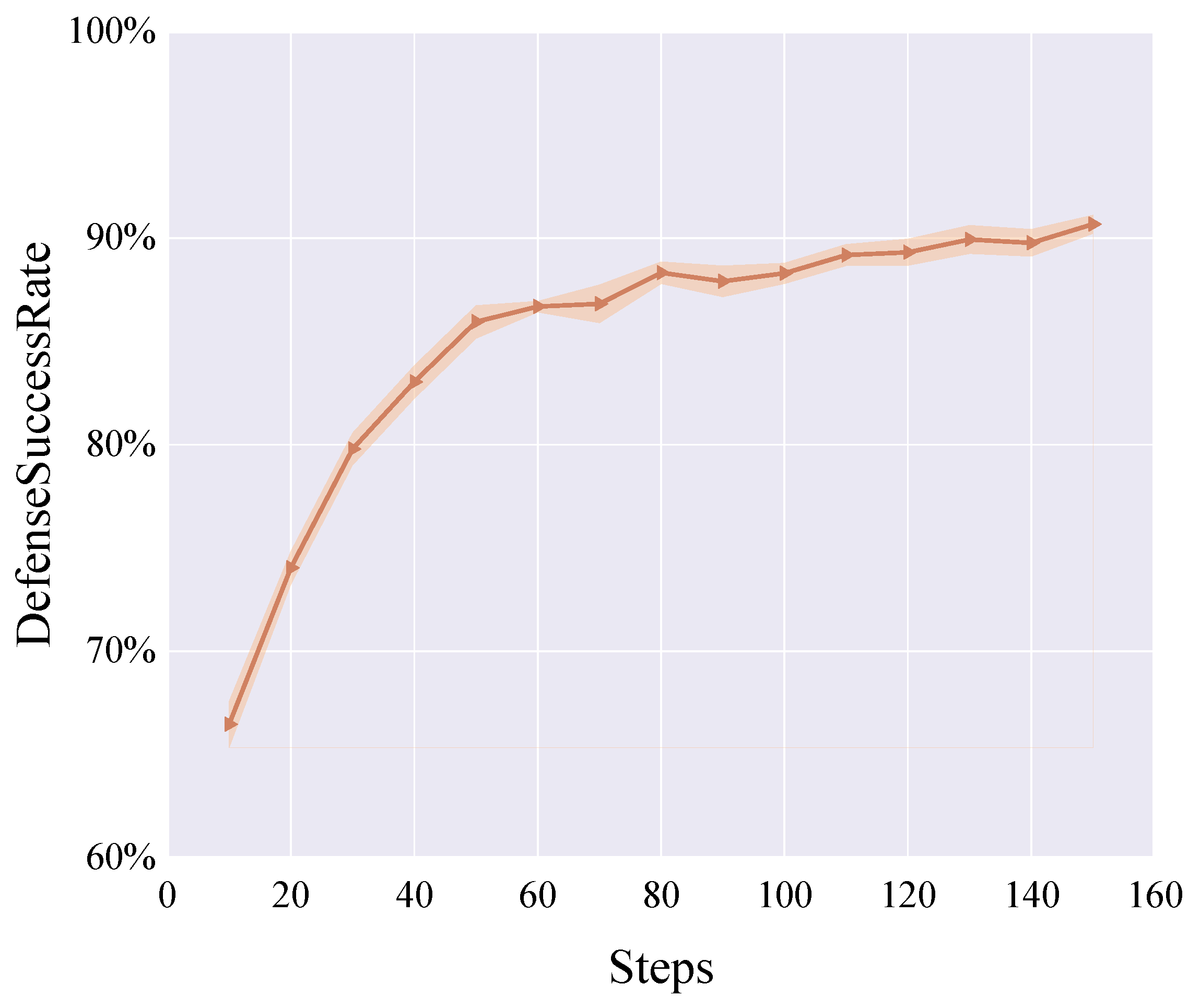

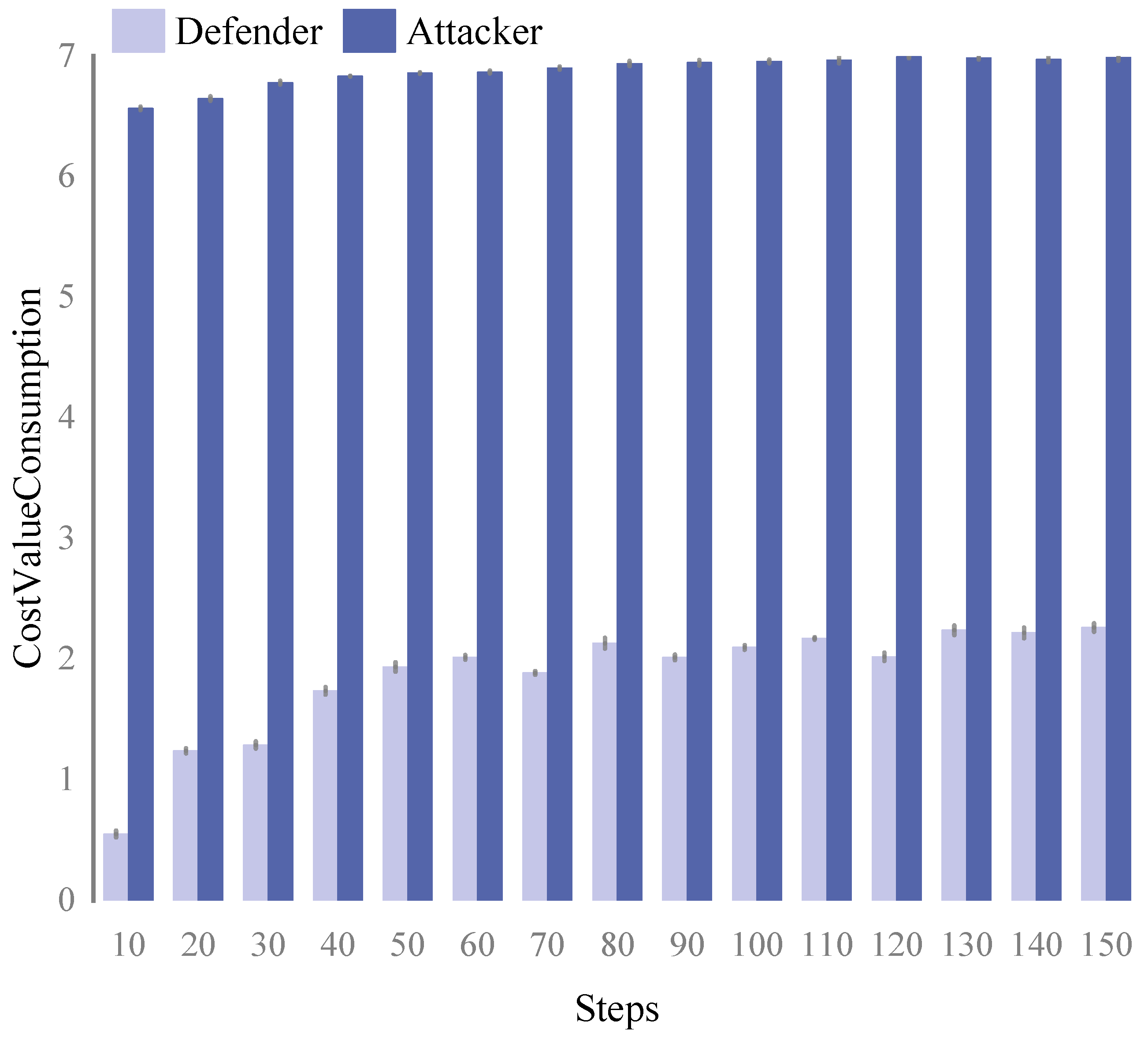

4.2. Performance of the Model at Different Training Stages in Reinforcement Learning

4.3. Comparison of ERL Model Strategies with Other Strategies

5. Discussion

5.1. Contributions

5.2. Ethical and Legal Considerations

5.3. Limitations

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| DHR | Dynamic Heterogeneous Redundancy |

| APTs | Advanced Persistent Threats |

| RL | Reinforcement Learning |

| DDoS | Distributed Denial of Service |

| AI | Artificial Intelligence |

| DRL | Deep Reinforcement Learning |

| IDS | Intrusion Detection Systems |

| SDN | Software Defined Networking |

| QoS | Quality of Service |

| CSOCs | Cybersecurity Operation Centers |

| XRL | Explainable Reinforcement Learning |

| ERL | Reinforcement Learning-based Endogenous Security |

References

1. Blanco, J.M.; Del Alamo, J.M.; Duenas, J.C.; Cuadrado, F. A Formal Model for Reliable Data Acquisition and Control in Legacy Critical Infrastructures. Electronics 2024, 13, 1219.

2. Lallie, H.S.; Shepherd, L.A.; Nurse, J.R.; Erola, A.; Epiphaniou, G.; Maple, C.; Bellekens, X. Cyber security in the age of COVID-19: A timeline and analysis of cyber-crime and cyber-attacks during the pandemic. Comput. Secur. 2021, 105, 102248.

3. Yoo, S.K.; Baik, D.K. Comprehensive damage assessment of cyberattacks on defense mission systems. IEICE Trans. Inf. Syst. 2019, 102, 402–405.

4. Williams, B.; Qian, L. Semi-Supervised Learning for Intrusion Detection in Large Computer Networks. Appl. Sci. 2025, 15, 5930.

5. Park, T.; Kim, K. Strengthening network-based moving target defense with disposable identifiers. IEICE Trans. Inf. Syst. 2022, 105, 1799–1802.

6. Wu, J.; Ji, X.; He, L.; Cao, Z.; Xie, Y. Endogenous Security Enabled Cyber Resilience Research. Inf. Commun. Technol. 2023, 17, 4–11.

7. Yu, A.; Guan, G. The Research of Computer Network Security Protection Strategy. Comput. Study 2010, 5, 47–49.

8. Yungaicela-Naula, N.M.; Vargas-Rosales, C.; Pérez-Díaz, J.A.; Zareei, M. Towards security automation in Software Defined Networks. Comput. Commun. 2022, 183, 64–82.

9. Sheikh, Z.A.; Singh, Y.; Singh, P.K.; Ghafoor, K.Z. Intelligent and secure framework for critical infrastructure (CPS): Current trends, challenges, and future scope. Comput. Commun. 2022, 193, 302–331.

10. Mutambik, I.; Almuqrin, A. Balancing Efficiency and Efficacy: A Contextual Bandit-Driven Framework for Multi-Tier Cyber Threat Detection. Appl. Sci. 2025, 15, 6362.

11. Ko, K.; Kim, S.; Kwon, H. Selective Audio Perturbations for Targeting Specific Phrases in Speech Recognition Systems. Int. J. Comput. Intell. Syst. 2025, 18, 103.

12. Ko, K.; Kim, S.; Kwon, H. Multi-targeted audio adversarial example for use against speech recognition systems. Comput. Secur. 2023, 128, 103168.

13. Ko, K.; Gwak, H.; Thoummala, N.; Kwon, H.; Kim, S. SqueezeFace: Integrative face recognition methods with LiDAR sensors. J. Sensors 2021, 2021, 4312245.

14. Gao, N.; Gao, L.; He, Y.; Le, Y.; Gao, Q. Dynamic Security Risk Assessment Model Based on Bayesian Attack Graph. J. Sichuan Univ. Sci. Ed. 2016, 48, 111–118.

15. Huang, L.; Feng, D.; Lian, Y.; Chen, K.; Zhang, Y.; Liu, Y. Method of DDoS Countermeasure Selection Based on Multi-Attribute Decision Making. J. Softw. 2015, 26, 1742–1756.

16. Si, J.; Zhang, B.; Man, D.; Yang, W. Approach to making strategies for network security enhancement based on attack graphs. J. Commun. 2009, 30, 123–128.

17. Jin, Z.; Li, D.; Zhang, X. Research on dynamic searchable encryption method based on Bloom filter. Appl. Sci. 2024, 14, 3379.

18. Wu, J. Research on Cyber Mimic Defense. J. Cyber Secur. 2016, 1, 1–10.

19. Wu, J.; Zou, H.; Xue, X.; Zhang, F.; Shang, Y. Cyber Resilience Enabled by Endogenous Security and Safety: Vision, Techniques, and Strategies. Strateg. Study Chin. Acad. Eng. 2023, 25, 106–115.

20. Yuan, H.; Guo, J.; Mingyang, X. Research on honeypot based on endogenous safety and security architecture. Appl. Res. Comput. 2023, 40, 1194–1202.

21. Chen, H.; Han, X.; Zhang, Y. Endogenous Security Formal Definition, Innovation Mechanisms, and Experiment Research in Industrial Internet. Tsinghua Sci. Technol. 2024, 29, 492–505.

22. Cai, N.; He, G. Multi-cloud resource scheduling intelligent system with endogenous security. Electron. Res. Arch. 2024, 32, 1380–1405.

23. Hu, Z.; Zhu, M.; Liu, P. Adaptive Cyber Defense Against Multi-Stage Attacks Using Learning-Based POMDP. ACM Trans. Priv. Secur. 2020, 24, 1–25.

24. Minsky, M. Steps toward Artificial Intelligence. Proc. IRE 1961, 49, 8–30.

25. Bellman, R. Dynamic programming. Science 1966, 153, 34–37.

26. Ruszczyński, A. Risk-averse dynamic programming for Markov decision processes. Math. Program. 2010, 125, 235–261.

27. Waltz, M.; Fu, K. A heuristic approach to reinforcement learning control systems. IEEE Trans. Autom. Control 1965, 10, 390–398.

28. Kröse, B.J. Learning from delayed rewards. Robot. Auton. Syst. 1995, 15, 233–235.

29. Bueff, A.; Belle, V. Logic + Reinforcement Learning + Deep Learning: A Survey. In Proceedings of the 15th International Conference on Agents and Artificial Intelligence, Lisbon, Portugal, 22–24 February 2023; SCITEPRESS-Science and Technology Publications.

30. Nguyen, T.T.; Reddi, V.J. Deep Reinforcement Learning for Cyber Security. IEEE Trans. Neural Netw. Learn. Syst. 2023, 34, 3779–3795.

31. Oh, S.H.; Jeong, M.K.; Kim, H.C.; Park, J. Applying Reinforcement Learning for Enhanced Cybersecurity against Adversarial Simulation. Sensors 2023, 23, 3000.

32. Louati, F.; Ktata, F.B.; Amous, I. Big-IDS: A decentralized multi agent reinforcement learning approach for distributed intrusion detection in big data networks. Clust. Comput. 2024, 27, 6823–6841.

33. Khayat, M.; Barka, E.; Serhani, M.A.; Sallabi, F.; Shuaib, K.; Khater, H.M. Reinforcement Learning with Deep Features: A Dynamic Approach for Intrusion Detection in IOT Networks. IEEE Access 2025, 13, 92319–92337.

34. Kumar, A.; Chakravarty, S.; Nanthaamornphong, A. Investigation of the satellite internet of things and reinforcement learning via complex software defined network modeling. Int. J. Electr. Comput. Eng. (2088-8708) 2025, 15, 3506–3518.

35. Huang, W.; Gui, W.; Li, Y.; Lv, Q.; Zhang, J.; He, X. Faulty Links’ Fast Recovery Method Based on Deep Reinforcement Learning. Algorithms 2025, 18, 241.

36. Xiao, L.; Liu, H.; Lv, Z.; Chen, Y.; Lin, Z.; Du, Y. Reinforcement Learning Based APT Defense for Large-scale Smart Grids. IEEE Internet Things J. 2024, 12, 11917–11925.

37. Chen, J.; Lan, X.; Zhang, Q.; Ma, W.; Fang, W.; He, J. Defending Against APT Attacks in Cloud Computing Environments Using Grouped Multi-Agent Deep Reinforcement Learning. IEEE Internet Things J. 2025, 12, 19459–19470.

38. Shah, A.; Sinha, A.; Ganesan, R.; Jajodia, S.; Cam, H. Two can play that game: An adversarial evaluation of a cyber-alert inspection system. ACM Trans. Intell. Syst. Technol. (TIST) 2020, 11, 1–20.

39. Yasmin, N.; Gupta, R. Modified lightweight cryptography scheme and its applications in IoT environment. Int. J. Inf. Technol. 2023, 15, 4403–4414.

40. Shen, X.; Li, X.; Yin, H.; Cao, C.; Zhang, L. Lattice-based multi-authority ciphertext-policy attribute-based searchable encryption with attribute revocation for cloud storage. Comput. Netw. 2024, 250, 110559.

41. Zhang, L.; Wang, Z.; Lu, J. Differential-Neural Cryptanalysis on AES. IEICE Trans. Inf. Syst. 2024, 107, 1372–1375.

42. Benhamou, E. Similarities Between Policy Gradient Methods (PGM) in Reinforcement Learning (RL) and Supervised Learning (SL). SSRN Electron. J. 2019.

43. Juliani, A.; Berges, V.P.; Teng, E.; Cohen, A.; Harper, J.; Elion, C.; Goy, C.; Gao, Y.; Henry, H.; Mattar, M.; et al. Unity: A general platform for intelligent agents. arXiv 2020, arXiv:1809.02627.

| Domain | Work | RL Type | Dynamic | Heterogeneous | Redundant |
|---|---|---|---|---|---|
| Intrusion Detection Systems | [32] | MARL | YES | NO | NO |
| Intrusion Detection Systems | [33] | DQN | YES | NO | NO |
| Software Defined Networking | [34] | DRL | YES | NO | YES |
| Software Defined Networking | [35] | DDPG | YES | YES | NO |
| Advanced Persistent Threats | [36] | Actor-Critic | YES | NO | YES |
| Advanced Persistent Threats | [37] | MADRL | YES | YES | NO |
| Adversarial Cyber-attack | [31] | DRL | YES | NO | NO |
| Adversarial Cyber-attack | [38] | ARL | YES | YES | NO |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yu, X.; He, L.; Geng, J.; Liang, Z.; Gan, Z.; Zhao, H. Dynamic Defense Strategy Selection Through Reinforcement Learning in Heterogeneous Redundancy Systems for Critical Data Protection. Appl. Sci. 2025, 15, 9111. https://doi.org/10.3390/app15169111
