1. Introduction

The evolution of personal transportation is being significantly reshaped by emerging automotive technologies. With the rise of automated and autonomous vehicles, User Interface (UI) development for cars is shifting notably, predominantly at SAE level 2, or “Partial Driving Automation” [1]. This progression requires drivers to adapt to more sophisticated Advanced Driver Assistance Systems (ADAS) that manage vehicle dynamics but still rely on the driver for object detection and response, which increases cognitive load. Moreover, current Human–Machine Interface (HMI) and Human–Computer Interaction (HCI) concepts designed for manually controlled vehicles may inadvertently increase manual and visual distraction, undermining the safety levels promised by ADAS [2,3]. In this context, the human driver’s situational awareness remains crucial, because ADAS, despite recent advances, are not infallible and may require human intervention to correct errors or misjudgments in certain scenarios [4].

1.1. Driving Studies

The categorization of driving research methodologies into Driving Simulators, Naturalistic Driving (ND) studies, Instrumented Vehicle Studies (IVS), and Controlled Driving Studies (CDS) serves as a critical framework for dissecting and understanding the different aspects of driving behavior, vehicle maneuvering, and interactions within the traffic ecosystem. This categorization is also instrumental in integrating eye-tracking (ET) research.

Driving simulators offer a controlled environment for safely assessing driver behavior and cognitive abilities [5,6]. These studies highlight both the absolute and relative validity of simulators in mimicking real-road conditions, albeit with noted limitations in replicating the full spectrum of driving errors, particularly those related to vehicle positioning and speed regulation. The controlled nature of simulators allows for the manipulation of specific variables and the safe assessment of driver responses to hypothetical scenarios, which are impractical or dangerous to test in real-world settings.

ND studies are pivotal for capturing real-world driving behavior by equipping vehicles with cameras and sensors [7]. This unobtrusive data collection approach offers authentic insights into the dynamic interactions between drivers, vehicles, and traffic environments. The strength of ND studies lies in their ability to provide a rich, contextual understanding of driving behavior without the artificial constraints imposed by experimental settings.

IVS are similar to ND studies but utilize real vehicles outfitted with advanced measurement tools [8]. However, IVS can be more focused in their objectives, often geared towards quantifying specific aspects of driving behavior or vehicle performance under naturalistic conditions. This approach enables a detailed analysis of driver strategy, vehicle usage, and decision-making processes. IVS can serve as a bridge between the authentic environments of ND studies and the controlled conditions of driving simulators.

CDS involve the operation of real cars within a managed setting, such as a closed circuit [9]. This method allows researchers to study driving behavior under adjustable conditions, facilitating a focused examination of specific hypotheses about driver performance or the effectiveness of interventions. While lacking the ecological validity of ND studies, controlled driving allows for the precise manipulation of experimental variables, making it a valuable tool for testing specific driving aids or interventions under semi-naturalistic conditions.

1.2. Driver Distraction

Driver distraction, defined as the shift of attention from essential driving tasks to other activities, forms part of the broader concept of driver inattention [10]. Inattention encompasses several forms, including Driver Diverted Attention, which is synonymous with distraction, whether attention is diverted to Driving-Related Tasks or to Non-Driving-Related Tasks (NDRT). Distractions can impede activities necessary for safe driving [11]. Eye tracking and algorithmic analysis of glance behavior help to quantify driver inattention, even when drivers have visual spare capacity or make off-target glances [12]. The National Highway Traffic Safety Administration classifies distractions into four types: visual, auditory, biomechanical (manual or physical), and cognitive. Visual distractions involve a loss of road awareness due to a blocked field of vision or a focus on non-road visual targets [13], while auditory distractions arise from sounds or auditory signals that divert attention [14]. Manual distractions involve handling devices or interfaces instead of the steering wheel, which increases reaction time [15]. Cognitive distractions are thoughts that limit focus on driving, often caused by external factors or cognitive overload, leading to the “Look at but not see” problem.

NDRTs require the allocation of visual attention and therefore give rise to visual distraction, which can be quantified through gaze tracking and expressed as Total Eyes-Off-Road Time (TEORT) [16]. TEORT is the duration during which the driver’s gaze is not directed towards the road but is instead focused on an Area of Interest (AOI), such as the In-Vehicle Information System (IVIS) interface, or on any other NDRT, including phone use or eating and drinking.

1.3. Eye-Tracking

ET technology is a valuable tool for user testing because it enables the precise assessment of a subject’s perception and behavior during task execution. ET is widely used in both real-world and simulated settings for diverse measurements and has proven especially beneficial for examining human behavior in aviation and vehicle driving [17]. ET is a key method for determining driver distraction through the classification of glance targets. There are three primary approaches to interpreting ET data in driver attention research [18]: 1. Direction-Based Approach: evaluates gaze direction (e.g., forward, up, down) to calculate indicators such as Eyes-Off-Road time; its limitation is that it does not consider the context of the driver’s gaze; 2. Target-Based Approach: identifies the objects intersected by the driver’s gaze through manual video coding or deep learning; while it distinguishes glance targets, it may overlook the context; 3. Purpose-Based Approach: focuses on areas deemed essential for driving attentiveness, integrating traffic rules and situational context to evaluate the relevance and adequacy of the gaze. These approaches offer different perspectives on analyzing eye-tracking data for driver attention, each with its own strengths and limitations in context sensitivity and specificity. ET systems can record detailed user interactions and provide accurate measurements of gaze shifts, AOI dwell times, and pupillometry, which can even be used to assess cognitive state. Previous CDS measurements relied on a wearable eye tracker that provides fixation points of the driver’s gaze and detects changes in pupil diameter to monitor distraction and to estimate higher cognitive load in comparison tests [19]. Others introduced the Index of Pupillary Activity, a novel ET measure that assesses cognitive load via pupil oscillation frequency [20]. This replicable method helps differentiate task difficulty and cognitive load.

1.4. Area of Interest

Several key studies stand out in the literature on AOI detection across applications. Early research demonstrated the importance of ET for evaluating interface usability through eye movements, establishing a methodology that has become fundamental in usability studies [21]. This work emphasized the importance of understanding how users interact with interfaces, guiding design improvements that enhance user experience. One study highlighted differences in gaze patterns between natural environments and laboratory settings, emphasizing the need to consider natural settings in ET research because the setting significantly influences gaze behavior [22]. This study underscored the variability of eye movement data and the importance of designing experiments that mimic real-world settings as closely as possible. Another study tackled the AOI problem in ET research by proposing a noise-robust solution for analyzing gaze data, especially for faces and sparse stimuli; this methodology significantly improved the accuracy and reliability of ET analyses across multiple disciplines [23]. A further study introduced a specialized system for ET in video lecture contexts, showing its utility for educational research through engagement analysis and the understanding of cognitive processes [24]. Research on dynamic AOIs in ET incorporates various methodologies. New methods were introduced for filtering eye movements from dynamic AOIs, marking a significant advance in real-time ET analysis [25]. This methodology allows a more precise and automated analysis of gaze data in scenarios where the objects of interest are not static [20]. One study presented guidelines for integrating dynamic AOIs in setups involving moving objects, such as aircraft [26]; it is important for research areas that require automated and structured analysis of eye movements in dynamic environments and offers a blueprint for setting up such experiments. Another method uses ArUco fiducial markers to map gaze data in dynamic settings, resolving issues of object occlusion and overlap and improving the accuracy of gaze tracking in complex environments [27]. A different approach introduced open-source software for determining dynamic AOIs, enhancing tracking in studies with moving stimuli [28]. From a signal detection perspective, one study investigated the impact of AOI size on measuring object attention and cognitive processes; its findings contribute to a better understanding of the factors that influence the interpretation of ET data [29]. Others presented a toolkit for wide-screen dynamic AOI measurements using the Pupil Labs Core eye tracker, applicable in psychology and transportation research, such as multi-display driving simulators [30]. This toolkit expands researchers’ ability to conduct sophisticated analyses of eye movements in diverse and dynamic visual environments. Together, these studies illustrate the diverse applications of, and advances in, AOI detection using ET technology.

1.5. Image Binarization

Several articles have explored the complexities of image binarization, especially in the context of historical documents. The evolution of methodologies has highlighted the incorporation of machine learning to enhance preservation efforts [31]. One review underscores the challenges faced in preserving and digitizing historical documents, which often suffer from degradation, variable text quality, and background noise; this work is pivotal in guiding future research towards more robust and adaptive binarization techniques. The AprilTag 2 system was developed and optimized for better efficiency and accuracy, significantly improving the detection of fiducial markers, which are essential for robotics and augmented reality applications [32]. These markers serve as reference points in physical space for various technological applications, including navigation, object tracking, and interaction in augmented reality environments. Such advances substantially improve the operational capabilities of systems that rely on fiducial markers and show the potential for a more seamless integration of virtual and physical elements. In the agricultural sector, for instance, researchers developed an image recognition system for cow identification that uses YOLO for cow head detection and CNNs for ear tag recognition, supporting improved herd management in precision dairy farming [33]. By employing advanced image recognition techniques, the study demonstrates the applicability and benefits of such technologies in agriculture, particularly for enhancing the management and welfare of livestock through improved identification and tracking. These studies demonstrate the growing range and ongoing improvement of image analysis techniques, such as binarization, across diverse fields.

1.6. Present Study

Recent advances in ET technology have greatly improved the understanding of driver behavior and distraction, especially on-road, where many diverse environmental variables are present. Although traditional methods can provide valuable insights, they may not fully address the complexities of real-world driving because of reliability issues and their inability to adapt to the three-dimensional nature of a driver’s Field of View (FOV). This gap in the literature underscores a pressing need for innovative solutions capable of overcoming these complicating factors. Specifically, there is a critical demand for methods that can accurately define and detect AOIs within the cabin of passenger vehicles, where the windshield’s tilt and the dashboard architecture introduce unique spatial considerations. Conventional flat-surface, marker-based identification systems are insufficient because of potential obstructions, such as the steering wheel or the driver’s hands, and external factors such as changing light conditions and glare, which reduce detection efficiency. To address these challenges, our study presents a novel approach based on a mathematical model implemented in MATLAB. This model is designed to detect markers with high efficiency and to accurately identify complex AOIs within the driver’s operational environment. Our method stands out because it accounts for the spatial dynamics of in-vehicle interfaces and for the external environmental factors that affect visibility and detection accuracy.

This research aims to bridge the gap in AOI detection methodologies by providing a robust tool that enhances the reliability and applicability of eye-tracking studies in CDS. Our contribution is expected to have a significant impact on the field by offering a practical solution to one of the most pressing challenges in understanding driver distraction and cognitive load in real-world conditions.

2. Methods

This research focused on analyzing driver behavior and distraction levels caused by IVIS, with a particular emphasis on traffic safety. The objective was to conduct data acquisition using an ET device to identify instances of gaze diversion from the road (gaze-off-the-road) and precisely quantify visual distractions from onboard interfaces.

2.1. The Experiment

Visual distraction was measured using an ET system with 10 volunteer participants (2 females, 8 males, aged 20–44 years) who had varying levels of driving experience and regularly used IVIS-equipped cars. The test took place on the “High-Speed Handling Course” at the ZalaZONE Test Center, Hungary. The study aimed to assess visual distraction during a brief NDRT using two distinct interface types. Uniform test conditions (the same test car and lighting conditions) were maintained for all participants, and the brevity of the task minimized environmental influences on the results and ensured comparability between tasks. Conducting the test on a consistent, straight track section under stable weather conditions minimized external distractions such as road curvature and variable sunlight. The task involved adjusting the car’s interior temperature using the climate control system; before the test, each participant received standardized verbal and practical instructions on using the interface.

2.2. The Measurement System

For detecting visual, manual, and cognitive distractions, the study used the Pupil Labs Core, a wearable ET device complemented with high-definition cameras [34]. This head-mounted device, chosen for its superior accuracy compared to alternatives such as the SMI ETG 2.6 and Tobii Pro Glasses 2, featured binocular glasses equipped with two infrared eye cameras and a wide-angle Red-Green-Blue (RGB) world-view camera [35]. The ET data include video recordings (from the world-view camera and the Eye0 and Eye1 eye cameras) and raw data components (such as timestamps, pupil positions, pupil diameters, and calculated gaze positions given as x and y coordinates). The setup consisted of an extensible, open-source mobile ET system with software for recording and analyzing data. Calibration was semi-automated, and to enhance post-processing, ID tag markers (AprilTag, tag36h11 family [36]), 50 mm × 50 mm in size and printed on hard plastic, were positioned around the vehicle’s dashboard center console and in the driver’s windscreen area, as shown in Figure 1. The parameters of the ET system are presented in Table 1.
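As a sketch of how exported gaze positions can be related to the world-camera video, the snippet below converts a normalized gaze point into pixel coordinates and drops low-confidence samples. It assumes the Pupil Core convention for normalized coordinates (origin at the bottom-left of the world image, (1, 1) at the top-right); the `GazeSample` type, the function names, and the 0.6 confidence threshold are illustrative choices, not part of the study’s pipeline.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GazeSample:
    timestamp: float   # seconds
    norm_x: float      # 0 = left edge, 1 = right edge of the world image
    norm_y: float      # 0 = bottom edge, 1 = top edge (assumed Pupil Core convention)
    confidence: float  # detection confidence in [0, 1]


def to_pixels(sample: GazeSample, width: int, height: int) -> Tuple[float, float]:
    """Convert a normalized gaze point to pixel coordinates (origin top-left, y pointing down)."""
    x_px = sample.norm_x * width
    y_px = (1.0 - sample.norm_y) * height  # flip y: the normalized origin is bottom-left
    return x_px, y_px


def reliable(samples: List[GazeSample], min_confidence: float = 0.6) -> List[GazeSample]:
    """Keep only samples whose confidence reaches the (illustrative) threshold."""
    return [s for s in samples if s.confidence >= min_confidence]
```

For an example 1280 × 720 world frame, a gaze sample at normalized (0.5, 0.25) maps to pixel (640.0, 540.0), i.e., into the lower half of the image.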

2.3. Processing of Measurement

The aim of the measurements is to determine whether the driver is looking at the road by tracking their gaze and fixation points using the ‘Pupil Labs Software’. The gaze is compared with the positions of the ID tags on and around the windscreen. Although these tags are initially detected only sparsely, additional post-processing video analysis steps improve their detection. The critical aspect of ID tag detection is identifying the AOIs that represent the driver’s usable field of view and the IVIS. Accurately determining these areas allows a precise assessment of whether the driver’s gaze is on the road or on the dashboard, providing essential data for measuring eyes-off-the-road times.

2.3.1. Tag Identification

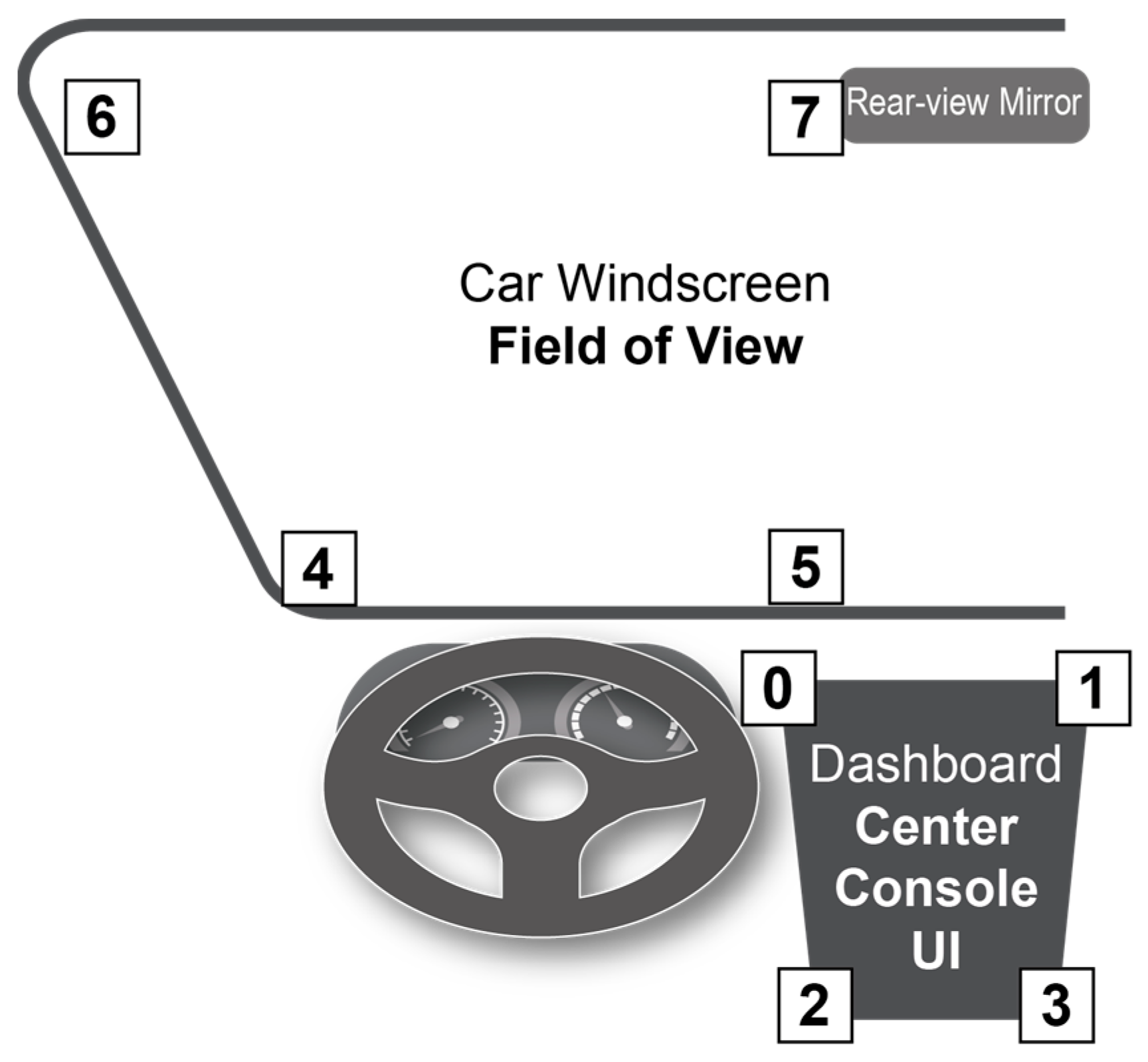

During our previous ET studies, we encountered limitations with the ‘Pupil Labs Software’ when using Pupil Labs ET glasses: the software was unable to detect tags on the vehicle accurately because of sudden obstructions or distortions in the raw video images. The tags, from the 36h11 family, were initially read using MATLAB’s AprilTag function. To mark the corners of the investigated AOIs, the ID tags were positioned as follows, as shown in Figure 2:

Area of driver’s view of the road (windscreen); ID tag numbers: 6, 7, 4, 5 (from left to right, top to bottom);

Centre console surface (dashboard1); ID tag numbers: 0, 1, 2, 3 (from left to right, top to bottom).

One of the challenges encountered was the inconsistent lighting and varying viewing angles, which made it difficult to identify tags in the unedited video. In CDS scenarios, it is difficult to avoid external light variations that may degrade camera images; changes in lighting conditions have been observed to cause flares, optical distortions, and glare. Because the head-mounted ET glasses move with the subject’s head, the ID tag positions in the world-camera image constantly shift and change relative to each other, distorting the AOI areas. Another problem arises when a hand, an arm, or a control element (e.g., the steering wheel) obscures an ID tag. These phenomena are less likely to occur in a simulation environment.

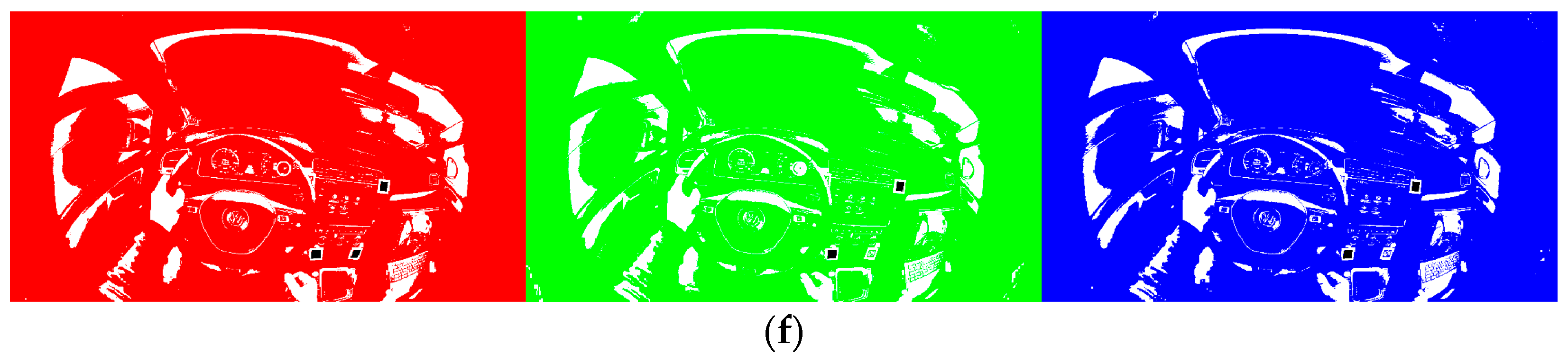

In our research, we devised a novel approach to identify ID tags in video frames effectively, which we named BAIT. The process first separates each video frame into its constituent RGB color components. We then apply binarization to each of these components at several levels of sensitivity. Binarization here refers to converting the color pixels into a binary format, i.e., turning each pixel either black or white. ‘Sensitivity’ in this context describes the threshold on a pixel’s color intensity for the pixel to be considered black. For example, at a sensitivity of 0%, only pixels with the maximum color intensity value of 255 are rendered black; conversely, at a sensitivity of 100%, even a minimal color intensity value of 1 is sufficient to turn a pixel black. The original RGB frame is also analyzed, so each analysis considers 19 frames: the original frame and 18 binarized frames (three color components, each binarized at six sensitivity levels).
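The channel splitting and multi-level binarization described above can be sketched as follows. This is a minimal plain-Python illustration, not the authors’ MATLAB implementation: the six sensitivity values are hypothetical (the text does not list them), and the linear mapping from sensitivity to threshold is an assumption chosen only to reproduce the two end points stated above (0% blackens only intensity 255; 100% blackens any intensity of at least 1). “Black” follows the text’s description and is encoded as 0. The AprilTag detector would then be run on every variant (not shown).

```python
from typing import List, Optional, Sequence, Tuple

Channel = List[List[int]]                  # 2-D grid of 0..255 intensities
Frame = List[List[Tuple[int, int, int]]]   # 2-D grid of (R, G, B) pixels

# Hypothetical sensitivity levels: the study uses six but their values are assumed here.
SENSITIVITIES = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)


def black_threshold(sensitivity: float) -> int:
    """Lowest intensity rendered black: 255 at 0 % and 1 at 100 % (linear map, assumed)."""
    return round(255 - sensitivity * 254)


def binarize(channel: Channel, sensitivity: float) -> Channel:
    """Return a binary grid with 0 = black and 1 = white."""
    t = black_threshold(sensitivity)
    return [[0 if v >= t else 1 for v in row] for row in channel]


def split_channels(frame: Frame) -> List[Channel]:
    """Split an RGB frame into its R, G and B intensity grids."""
    return [[[px[c] for px in row] for row in frame] for c in range(3)]


def frame_variants(frame: Frame, sensitivities: Sequence[float] = SENSITIVITIES):
    """Original frame plus one binarized grid per (channel, sensitivity) pair."""
    variants: List[Tuple[str, Optional[float], object]] = [("original", None, frame)]
    for name, channel in zip("RGB", split_channels(frame)):
        for s in sensitivities:
            variants.append((name, s, binarize(channel, s)))
    return variants
```

With six levels, `frame_variants` yields 1 + 3 × 6 = 19 images per frame, matching the count given above.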

Once the frames have undergone this binarization process, we can then identify the ID tags within each frame. These identifications are conducted separately for each binarized frame, and the findings are subsequently amalgamated.
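One simple way to amalgamate the per-variant detections is to keep every tag ID found in at least one of the 19 variants and to average its corner coordinates over the variants that found it. The averaging rule and the `merge_detections` name are assumptions for illustration; the text does not specify how the results are combined.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Corners = List[Tuple[float, float]]  # four (x, y) corner points of one tag


def merge_detections(per_variant: List[Dict[int, Corners]]) -> Dict[int, Corners]:
    """Union of tag IDs over all variants; corners averaged over the variants that saw each tag."""
    sums: Dict[int, List[List[float]]] = defaultdict(lambda: [[0.0, 0.0] for _ in range(4)])
    counts: Dict[int, int] = defaultdict(int)
    for detections in per_variant:
        for tag_id, corners in detections.items():
            for i, (x, y) in enumerate(corners):
                sums[tag_id][i][0] += x
                sums[tag_id][i][1] += y
            counts[tag_id] += 1
    return {
        tag_id: [(sx / counts[tag_id], sy / counts[tag_id]) for sx, sy in acc]
        for tag_id, acc in sums.items()
    }
```

A median instead of a mean would be more robust if a single variant occasionally produced a misplaced detection.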

Figure 3 shows the original video frame.

Figure 4 shows the results of our binarization process on the three separate RGB components of a frame, performed at different levels of sensitivity. The ID tags successfully identified in each iteration are accentuated in black.

Figure 4 underscores the efficacy of our methodology in recognizing ID tags under diverse illumination conditions. This aspect is crucial, as it demonstrates the system’s adaptability and reliability in accurately detecting ID tags in a range of lighting environments, a key requirement for consistent AOI detection in dynamic settings. It is important to note that this component of our method is most effective when the ID tags are in clear view and not obscured, even in scenarios where the subject’s head is constantly moving while wearing the eye tracking glasses.

2.3.2. Gaze on Road

In our research, we developed a method to ensure reliable assessment of driver attention. Four ID tags are positioned on the windshield to delineate the driver’s road view as an AOI; together, they form a boundary that frames the driver’s view of the road. When the driver’s gaze lies within this boundary, the driver is considered to be focusing on the road. To determine whether the gaze lies within the boundary, we check whether it falls to the left of each boundary line, the lines being obtained by connecting the tags in counterclockwise order. Because these ID tags are not situated exactly at the corners of the windshield, specific adjustments are applied to their positions, as described in Figure 5. This adjustment is critical for accurately determining whether the driver’s gaze is directed towards the road.
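The “left of every boundary line” test corresponds to the standard cross-product check for a point inside a convex polygon whose vertices are listed counterclockwise. The sketch assumes a y-up coordinate frame (such as normalized gaze coordinates); in pixel coordinates with y pointing down, the same corners appear in clockwise order, so the corner list should be reversed. The function names are illustrative.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive when b lies left of the directed line o -> a."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def gaze_on_road(gaze: Point, corners_ccw: Sequence[Point]) -> bool:
    """True if the gaze is left of (or on) every edge of a convex, counterclockwise polygon."""
    n = len(corners_ccw)
    return all(cross(corners_ccw[i], corners_ccw[(i + 1) % n], gaze) >= 0 for i in range(n))
```

Points lying exactly on a boundary line count as on-road here; using `> 0` instead would treat them as off-road.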

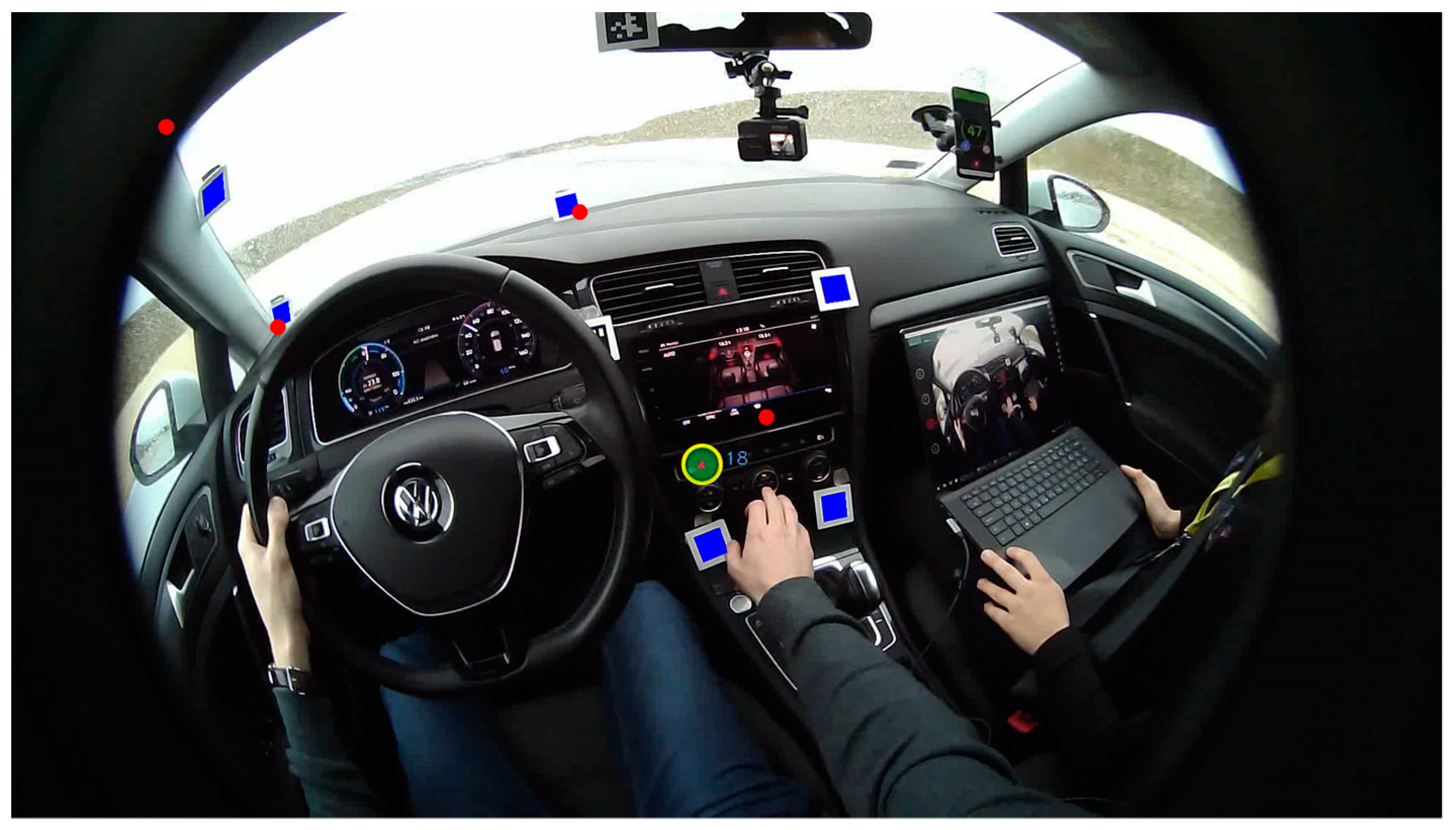

In our experimental setup, marking points at the four corners of the windshield define the AOI. When the driver’s gaze lies within this area, it is interpreted as focusing on the road ahead, and the visualization shows green indicators. A central green mark indicates the actual position of the driver’s gaze. The larger green circle with a yellow frame represents the gaze position and fixation state identified by the ‘Pupil Labs Software’; it appears in the raw videos to aid visualization and is independent of our own analysis.
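Because the windshield corner marking points are offset from the tags, each point can be stored once in a tag-local frame and re-projected whenever the tag is detected again. The sketch below uses an affine approximation (tag center plus multiples of two tag edges), which ignores perspective distortion; the corner ordering (corner 0 to corner 1 along one edge, corner 0 to corner 3 along the adjacent edge) and the function names are assumptions, not the study’s implementation.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def tag_frame(corners: List[Point]):
    """Tag center and two edge vectors spanning the tag's local (u, v) basis."""
    (x0, y0), (x1, y1), _, (x3, y3) = corners
    cx = sum(p[0] for p in corners) / 4
    cy = sum(p[1] for p in corners) / 4
    return (cx, cy), (x1 - x0, y1 - y0), (x3 - x0, y3 - y0)


def to_tag_coords(point: Point, corners: List[Point]) -> Tuple[float, float]:
    """Express a point as center + a*u + b*v by solving the 2x2 system (Cramer's rule)."""
    (cx, cy), u, v = tag_frame(corners)
    dx, dy = point[0] - cx, point[1] - cy
    det = u[0] * v[1] - u[1] * v[0]
    if det == 0:
        raise ValueError("degenerate tag corners")
    a = (dx * v[1] - dy * v[0]) / det
    b = (u[0] * dy - u[1] * dx) / det
    return a, b


def from_tag_coords(ab: Tuple[float, float], corners: List[Point]) -> Point:
    """Re-project stored tag-local coordinates using the tag's current corners."""
    (cx, cy), u, v = tag_frame(corners)
    a, b = ab
    return (cx + a * u[0] + b * v[0], cy + a * u[1] + b * v[1])
```

A corner point located 2.5 tag widths to the right of a unit tag is re-projected 5 pixels to the right of the tag center when the tag later appears twice as large.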

A key aspect of our methodology is an interpolation procedure between successive video frames, which allows us to track the positions of these points accurately even when the associated ID tag is not detected in a particular frame. However, this interpolation is most effective when the tag is absent from the frame only briefly. Moreover, our approach accounts for scenarios in which the ID tag itself lies outside the FOV; in such cases, the corner points can still be determined from their known positions relative to the ID tags. Figure 6 demonstrates this scenario, showing that the method tracks the gaze position regardless of the direct visibility of the ID tags; the red color of the corner points indicates the gaze-off-road condition. This underscores the robustness of our system in maintaining accurate gaze tracking under various conditions.
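The inter-frame interpolation can be illustrated as linear interpolation of a point’s position over short runs of frames in which it is missing. The `max_gap` limit reflects the observation above that interpolation works best for brief absences; its default of five frames is a hypothetical value, not one reported in the study, and gaps at the start or end of a recording are left unfilled.

```python
from typing import List, Optional, Tuple

Pos = Tuple[float, float]


def interpolate_track(track: List[Optional[Pos]], max_gap: int = 5) -> List[Optional[Pos]]:
    """Linearly fill runs of None of length <= max_gap that lie between two known positions."""
    out = list(track)
    known = [i for i, p in enumerate(track) if p is not None]
    for left, right in zip(known, known[1:]):
        gap = right - left - 1
        if 0 < gap <= max_gap:
            (x0, y0), (x1, y1) = track[left], track[right]
            for k in range(1, gap + 1):
                t = k / (gap + 1)  # fraction of the way from the left to the right frame
                out[left + k] = (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
    return out
```

Longer gaps stay as `None`, so downstream code can treat those frames as undeterminable rather than trusting an extrapolated boundary.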

In our study, as an alternative analytical approach, any gaze that does not fall within the specified boundary (the gaze-off-road case) is treated as an “Eyes-Off-The-Road” event. This demarcation is essential for identifying instances when the driver is not looking at the road, a critical factor in monitoring visual distraction in a given CDS. If the experiment requires the subject to perform complex activities, the boundaries of the usable FOV should be modified (e.g., by adding the mirrors) to make the measurement more accurate. Building on this, TEORT can be measured, providing a quantitative assessment of how long the driver’s attention is diverted away from the road and thereby offering valuable insights for visual distraction monitoring in driving scenarios.
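Once each gaze sample is labeled on-road or off-road, TEORT can be accumulated from the sample timestamps. The sketch below assumes that each sample lasts until the next timestamp (so the final sample contributes no duration) and also returns the individual eyes-off-road episodes; the function name and this duration convention are illustrative, not the study’s exact procedure.

```python
from typing import List, Sequence, Tuple


def eyes_off_road(timestamps: Sequence[float], on_road: Sequence[bool]) -> Tuple[float, List[Tuple[float, float]]]:
    """Return (TEORT in seconds, list of (start, end) eyes-off-road episodes)."""
    if len(timestamps) != len(on_road):
        raise ValueError("timestamps and labels must have the same length")
    teort = 0.0
    episodes: List[Tuple[float, float]] = []
    start = None
    for i in range(len(timestamps) - 1):
        dt = timestamps[i + 1] - timestamps[i]  # sample i lasts until sample i + 1
        if not on_road[i]:
            teort += dt
            if start is None:
                start = timestamps[i]
        elif start is not None:
            episodes.append((start, timestamps[i]))  # episode ends when gaze returns
            start = None
    if start is not None:
        episodes.append((start, timestamps[-1]))
    return teort, episodes
```

For samples at 0.0, 0.5, 1.0, 1.5 and 2.0 s labeled on, off, off, on, on, the function returns a TEORT of 1.0 s and one episode from 0.5 s to 1.5 s.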

2.3.3. Gaze on Dashboard

To improve driver attention monitoring and increase the accuracy of detecting instances when the eyes are off the road, it is important to develop a method for examining interactions with the central console controls on the dashboard. In our study, the climate control button array and the integrated touchscreen on the central console of the passenger car are the UI elements of interest, and analyzing the driver’s visual distraction while operating these controls (NDRTs) is a key focus. This requires identifying the central console as an AOI. In our case, the console’s slight orientation towards the driver, the perpendicular angle of incidence between the driver’s gaze and the plane of the central console, and the positioning of the physical button array and the touchscreen in the same plane made it easy to mark the corner points with ID tags. However, one ID tag typically becomes obscured by the steering wheel or the driver’s hand. Therefore, the dashboard boundaries were demarcated using only the data from ID tag 1 and ID tag 2. To compensate for the missing left-hand corner points, predefined offsets were applied: 300 pixels for the upper corner and 170 pixels for the lower corner, both parallel to the line connecting ID tag 4 and the marked corner points of ID tag 5.

Figure 7 provides an example of this approach.
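The offset construction for the missing left-hand corner points can be written as a shift along the unit direction of a reference line. The sketch uses the 300 px and 170 px offsets stated above; which detected points are shifted, and the assumption that the shift runs from ID tag 5 towards ID tag 4 (i.e., leftwards in the image), are illustrative guesses that depend on the actual tag layout, and the function names are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def shift_along(point: Point, p_from: Point, p_to: Point, distance_px: float) -> Point:
    """Move `point` by distance_px along the unit vector pointing from p_from to p_to."""
    dx, dy = p_to[0] - p_from[0], p_to[1] - p_from[1]
    norm = math.hypot(dx, dy)
    if norm == 0:
        raise ValueError("reference points coincide")
    return (point[0] + distance_px * dx / norm, point[1] + distance_px * dy / norm)


def missing_left_corners(upper_known: Point, lower_known: Point, tag4: Point, tag5: Point,
                         upper_offset: float = 300.0, lower_offset: float = 170.0):
    """Estimate the obscured left-hand corners from the known upper and lower boundary points.

    Assumption: tag 4 lies to the left of tag 5, so the shift direction is tag5 -> tag4.
    """
    upper_left = shift_along(upper_known, tag5, tag4, upper_offset)
    lower_left = shift_along(lower_known, tag5, tag4, lower_offset)
    return upper_left, lower_left
```

Tying the offsets to the direction of a detected tag pair, rather than to the image x-axis, keeps the estimated corners aligned with the dashboard when the driver tilts their head.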

The method can be applied universally, but the specific predefined plane figure and geometric rules used to calculate the position of missing or obscured UI boundary ID tags are determined by the measurement environment. Since the analysis and processing of these points occur post-experiment, the method can be refined based on the specific environmental conditions present. This approach allows for a tailored analysis that accommodates varying dashboard layouts and driver interaction dynamics, ensuring a more accurate assessment of driver attention and UI interaction in diverse driving scenarios.

3. Results

Table 2 presents the success rates of identifying windscreen boundary tags in 10 different measurements. These rates are compared between the standard software provided with Pupil Core ET glasses and the proprietary algorithm developed for the study, highlighting the effectiveness of each method in tag detection under varying conditions.
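Table 2 does not restate how a success rate is computed. One plausible definition, assumed here for illustration, is the percentage of video frames in which a given tag is identified; the function name and input format are hypothetical.

```python
from typing import Dict, Iterable, List, Set


def success_rates(frames_detected: List[Set[int]], tag_ids: Iterable[int]) -> Dict[int, float]:
    """Percentage of frames in which each tag ID was identified (assumed definition)."""
    n = len(frames_detected)
    if n == 0:
        raise ValueError("no frames")
    return {t: 100.0 * sum(t in f for f in frames_detected) / n for t in tag_ids}
```

Applied per measurement to the BAIT output and to the Pupil Labs output, this yields one paired value per tag and measurement, which is the form of data the tests below compare.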

To compare the performance of ‘Pupil Labs software’ and ‘BAIT’, we conducted a comprehensive statistical analysis. We first assessed the normality of our data distributions using the Shapiro–Wilk test. The results showed a significant deviation from normality for both ‘Pupil Labs software’ (p = 0.0005) and ‘BAIT’ (p < 0.0001), indicating that neither dataset followed a normal distribution. This finding highlights the need for caution when using tests that assume normality. Additionally, Levene’s test for the equality of variances indicated a significant difference in variances between the groups (p = 0.00065), suggesting a violation of the homogeneity of variances assumption. Based on these preliminary findings, we chose statistical methods that do not depend on the assumption of equal variances between groups.

Because of the deviations from normality and homogeneity of variances, we used the Wilcoxon Signed-Rank Test for our analysis. Its results indicate a highly significant difference in median values between ‘Pupil Labs software’ and ‘BAIT’ (p < 0.00001), confirming a statistically significant difference in performance between the two conditions. The ‘Pupil Labs Software’ failed to recognize ID tag 7 in 5 out of 10 instances, while ‘BAIT’ performed worst in measurement 9, where the participant’s head movements (looking down) prevented the camera from capturing the ID tag. ‘BAIT’ consistently outperformed the ‘Pupil Labs software’ in accuracy and reliability across all measured conditions. It effectively addresses challenges such as brief occlusions or slight changes in ID tag viewing angle, which are common in tracking scenarios. Through interpolation, our algorithm compensates for moments when an ID tag is temporarily obscured or viewed from different angles, thereby minimizing tracking errors and ensuring continuous and precise ID tag identification. This capability is crucial for applications requiring uninterrupted monitoring and exact location tracking.
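For readers who want to see what the test computes, the sketch below implements the two-sided Wilcoxon signed-rank test with an exact null distribution in plain Python: zero differences are dropped, tied absolute differences receive average ranks, and the p-value is the share of all 2^n sign assignments whose W+ lies at least as far from its expected value as the observed one. It is intended only for small samples and is not the analysis code used in the study; in practice, `scipy.stats.wilcoxon` is the maintained option.

```python
from typing import Sequence, Tuple


def wilcoxon_signed_rank(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (W+, exact two-sided p) for paired samples x and y (differences y - x)."""
    d = [b - a for a, b in zip(x, y) if b != a]  # drop zero differences
    n = len(d)
    if n == 0:
        raise ValueError("all differences are zero")
    order = sorted(range(n), key=lambda i: abs(d[i]))
    ranks2 = [0] * n  # doubled ranks, so averaged ranks of ties stay integers
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(d[order[j + 1]]) == abs(d[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks2[order[k]] = i + j + 2  # 2 * average of the 1-based ranks i+1 .. j+1
        i = j + 1
    w_plus2 = sum(r for r, di in zip(ranks2, d) if di > 0)
    total2 = sum(ranks2)
    # Exact null distribution: each rank is positive or negative with probability 1/2.
    counts = {0: 1}
    for r in ranks2:
        nxt = {}
        for s, c in counts.items():
            nxt[s] = nxt.get(s, 0) + c
            nxt[s + r] = nxt.get(s + r, 0) + c
        counts = nxt
    dev = abs(2 * w_plus2 - total2)  # distance from the null mean, in doubled units
    extreme = sum(c for s, c in counts.items() if abs(2 * s - total2) >= dev)
    return w_plus2 / 2, extreme / 2 ** n
```

For example, five made-up pairs whose differences are 1, 2, 3, 4 and 5 give W+ = 15 and p = 2/32 = 0.0625, the smallest two-sided p-value attainable with five non-zero pairs.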

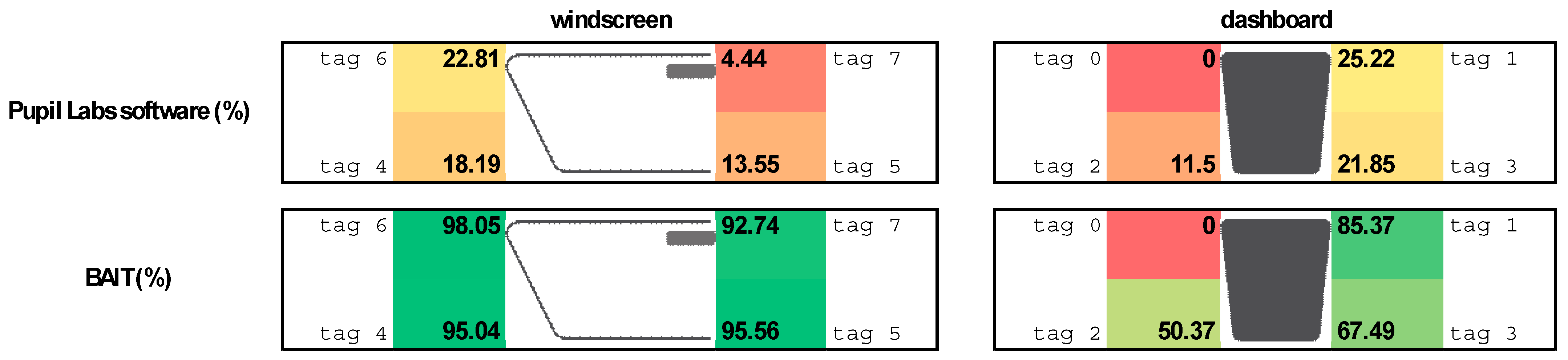

Analysis of the performance data across ID tags 4 to 7 revealed that the mean success percentage of ‘BAIT’ ranged from 92.74% to 98.05%, with relatively low mean deviations and standard deviations, indicating consistent performance across the trials, as shown in Table 3. In contrast, the ‘Pupil Labs software’ showed lower mean performance with greater variability.
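The summary statistics reported per tag (mean, mean deviation, standard deviation and 95% confidence interval) can be computed along the following lines. The sketch assumes that “mean deviation” means the mean absolute deviation from the mean and that the confidence interval is t-based; the default critical value 2.262 corresponds to 9 degrees of freedom (10 measurements) and must be changed for other sample sizes.

```python
import math
import statistics
from typing import Dict, Sequence


def describe(rates: Sequence[float], t_crit: float = 2.262) -> Dict[str, object]:
    """Mean, mean absolute deviation, sample SD and a t-based 95 % confidence interval."""
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two values")
    mean = statistics.fmean(rates)
    mean_dev = sum(abs(r - mean) for r in rates) / n
    sd = statistics.stdev(rates)  # sample standard deviation (n - 1 denominator)
    half = t_crit * sd / math.sqrt(n)
    return {"mean": mean, "mean_dev": mean_dev, "sd": sd, "ci95": (mean - half, mean + half)}
```

Excluding zero-valued entries before calling `describe`, e.g. `[r for r in rates if r > 0]`, mirrors the filtering described for the dashboard tags.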

Table 4 compares the effectiveness of ‘Pupil Labs software’ with a custom-developed algorithm in detecting dashboard boundary tags across 10 distinct measurements. This comparison serves to demonstrate the relative efficiency of each method in ID tag identification under various conditions.

To evaluate the comparative performance of ‘Pupil Labs software’ and ‘BAIT’, we conducted a comprehensive statistical analysis. To ensure the robustness of our findings, we first ran the Shapiro–Wilk test to assess the normality of our data distributions. The results show that ‘Pupil Labs software’ did not significantly deviate from a normal distribution (p = 0.097); however, ‘BAIT’ exhibited a significant deviation from normality (p = 0.011), indicating that its data did not follow a normal distribution. Therefore, caution should be exercised when applying tests based on this assumption. Levene’s test for equality of variances revealed a significant difference in variances between the groups (p = 0.0065), violating the homogeneity of variances assumption. Therefore, statistical methods that do not rely on equal variances between groups were selected.

Based on these preliminary findings, we chose to use the Wilcoxon Signed-Rank Test. This test showed a significant difference in median values between ‘Pupil Labs software’ and ‘BAIT’ (p < 0.00001), demonstrating a statistically significant difference in performance between the two. ‘BAIT’ outperformed the ‘Pupil Labs software’ in accuracy and reliability across all measured conditions. During the measurements, ID tag 0 was always obscured. Table 3 shows that identifying ID tag 3 was also difficult, often because drivers obscured it with their hands while interacting with the dashboard.

The efficacy of the proposed method depends on a high ID tag identification rate and short non-identifiable intervals, such as periods when ID tags are obscured. Consequently, reliable detection of the dashboard boundaries throughout an entire measurement was feasible only in measurements 1 and 10. In measurements 2 to 7, detection was only partially successful because the driver’s hand obstructed the dashboard boundary ID tags for extended periods. In measurements 8 and 9, the driver frequently obscured the markers required for detection, so the dashboard area could not be determined in these measurements.

The performance metrics in Table 5 show that the ‘BAIT’ software consistently outperformed the ‘Pupil Labs software’ for the dashboard ID tags, with the exception of ID tag 0, which was recognized by neither solution because it was obscured. ‘BAIT’ achieved substantially higher mean identification rates (e.g., 85.37% vs. 25.22% for ID tag 1). Its standard deviations, however, were higher than those of the ‘Pupil Labs software’ (e.g., 23.35% vs. 10.96% for ID tag 2), indicating greater variability across measurements. The 95% confidence intervals of the two solutions do not overlap for any ID tag except ID tag 0, supporting the conclusion that the true mean identification rates of ‘BAIT’ are higher.
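The columns of Tables 3 and 5 can be recomputed from the per-measurement rates in Tables 2 and 4. The sketch below assumes that “mean deviation” is the mean absolute deviation from the mean, that the standard deviation is the sample (n − 1) estimate, and that the “CI 95%” column is the half-width 1.96·s/√n. These definitions are our assumption, but with them the function reproduces the Pupil Labs row for ID tag 4 in Table 3. The function name `describe` is illustrative.

```python
from __future__ import annotations

import math
from statistics import mean, stdev


def describe(rates: list[float], z: float = 1.96) -> dict[str, float]:
    """Mean, mean deviation, SD and 95% CI half-width (assumed definitions)."""
    m = mean(rates)
    s = stdev(rates)  # sample SD: n - 1 in the denominator
    return {
        "mean": m,
        "mean_deviation": mean(abs(r - m) for r in rates),  # mean absolute deviation
        "sd": s,
        "ci95": z * s / math.sqrt(len(rates)),  # normal-approximation half-width
    }


# ID tag 4, Pupil Labs software, measurements 1-10 (Table 2).
tag4_pupil = [20.0, 26.15, 23.28, 28.26, 24.52, 15.99, 22.54, 19.30, 1.88, 0.0]
stats = describe(tag4_pupil)
print({k: round(v, 2) for k, v in stats.items()})
# → {'mean': 18.19, 'mean_deviation': 7.34, 'sd': 9.75, 'ci95': 6.04}
```

Non-overlapping intervals, as in Table 5, can then be checked as `mean_a - ci_a > mean_b + ci_b`.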

Our comparative analysis of ID tag detection algorithms highlights significant performance disparities between the ‘BAIT’ and the ‘Pupil Labs software’, as shown in Table 6.

For the windscreen, the ‘Pupil Labs software’ failed entirely to detect some ID tags that BAIT did detect: for example, ID tag 7 in measurements 1, 2, 3, and 8, and all four windscreen tags in measurement 10, each at 0% (Table 2). We also observed that ID tag 0 remained unidentifiable by both methods, with a recorded value of 0. These zero values distorted the summary statistics. We therefore recomputed the statistics after excluding all instances with a value of 0, as shown in Table 7.
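Excluding zeros shifts the dashboard statistics considerably because ID tag 0 contributes ten 0% values. The sketch below pools the BAIT dashboard rates of Table 4 and recomputes the mean with and without zeros. For these data the only zeros are the ten ID tag 0 entries, so pooling all 40 tag-by-measurement rates reproduces the BAIT dashboard means of Table 6 and Table 7. It does not attempt to recompute the other columns of Table 7, and the variable names are illustrative.

```python
from statistics import mean

# BAIT dashboard identification rates (%) from Table 4, measurements 1-10.
bait_dashboard = {
    0: [0.0] * 10,  # ID tag 0: obscured in every measurement
    1: [93.75, 84.64, 93.38, 93.70, 90.19, 85.53, 78.41, 66.67, 68.71, 98.75],
    2: [79.5, 77.36, 31.13, 9.35, 65.12, 65.23, 66.35, 36.55, 38.82, 34.27],
    3: [98.25, 77.90, 73.53, 81.96, 66.49, 72.34, 60.63, 24.85, 28.94, 90.03],
}

pooled = [r for rates in bait_dashboard.values() for r in rates]
filtered = [r for r in pooled if r != 0]  # drop never-identified entries

print(len(pooled), round(mean(pooled), 2))      # 40 50.81
print(len(filtered), round(mean(filtered), 2))  # 30 67.74
```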

In terms of consistency, ‘BAIT’ performed more stably in the windscreen condition, where its mean deviation was considerably lower than that of the ‘Pupil Labs software’, suggesting steadier focus detection. In the dashboard condition, however, ‘BAIT’ showed greater variability, as indicated by a higher standard deviation. The confidence intervals further characterize the precision of ID tag detection: ‘BAIT’ had a narrower interval for windscreen focus, implying more reliable estimates, whereas its dashboard estimates were less precise, with a wider confidence interval than both its own windscreen estimates and those of the ‘Pupil Labs software’.

Overall, these findings underline the robustness of ‘BAIT’ in windscreen focus detection while signaling its variable performance in dashboard focus assessment, offering critical insights for its application and potential optimization, as visualized in Figure 8.

4. Discussion

In our research, we developed and implemented a bespoke ID tag identification algorithm called BAIT, which proved notably more effective than a conventional, standard software solution (Pupil Labs software). This enhanced performance was particularly evident in the algorithm’s ability to compensate for certain challenges inherent in the ID tag identification process. The following methods were used to increase identification efficiency:

Binarization of each of the three RGB components of a frame at several sensitivity levels.

Interpolation between successive video frames.

Addition of a predefined offset.
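The multi-channel, multi-level binarization listed first can be sketched as follows: each RGB channel is thresholded at several sensitivity levels (the six levels shown in Figure 4), and a tag detector runs on every resulting binary image, so a tag missed under one lighting condition may still be found in another channel or at another level. The mapping from sensitivity to threshold, the rule of keeping the first detection per tag ID, and the `detector` callable (standing in for a tag36h11 detector) are all assumptions, not the BAIT implementation.

```python
from typing import Callable, Dict, Iterable, List, Tuple

Pixel = Tuple[int, int, int]     # (R, G, B), 0-255
Mask = List[List[int]]           # 1 = set, 0 = not set
Detection = Tuple[int, object]   # (tag ID, tag corners or other payload)

LEVELS = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)  # sensitivities shown in Figure 4


def binarize_channels(image: List[List[Pixel]], levels=LEVELS) -> Dict[Tuple[int, float], Mask]:
    """One binary mask per (RGB channel, sensitivity level).

    Assumption: level s maps to the threshold 255 * s, and a pixel is set
    when its channel value is at or above that threshold.
    """
    masks = {}
    for channel in range(3):  # 0 = R, 1 = G, 2 = B
        for s in levels:
            threshold = 255 * s
            masks[(channel, s)] = [
                [1 if px[channel] >= threshold else 0 for px in row] for row in image
            ]
    return masks


def detect_across_masks(
    masks: Dict[Tuple[int, float], Mask],
    detector: Callable[[Mask], Iterable[Detection]],
) -> Dict[int, object]:
    """Run a tag detector on every mask and keep the first hit per tag ID."""
    found: Dict[int, object] = {}
    for mask in masks.values():
        for tag_id, corners in detector(mask):
            found.setdefault(tag_id, corners)
    return found


image = [[(10, 200, 100), (250, 50, 0)]]  # a 1 x 2 toy frame
masks = binarize_channels(image)
print(masks[(1, 0.4)])  # [[1, 0]]
```

The 18 masks (3 channels × 6 levels) multiply the detector’s work, which is the price paid for robustness to glare and shadow.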

A key limitation encountered in ID tag tracking is the obstruction of the ID tag’s visibility. Naturally, when an ID tag is completely obscured from the camera’s view, its identification becomes unfeasible using direct visual methods. However, our algorithm demonstrates a significant strength in dealing with transient occlusions or minor alterations in the viewing angle during such occlusions. This is achieved through a robust interpolation technique, which is a critical component of our algorithm. The interpolation method employed is designed to predict the position of an ID tag during short periods when it is not visible, using the data from frames where the tag is clearly identified.
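The text does not specify the interpolation scheme. A minimal sketch, assuming linear interpolation of a tag’s image position between the last and next frames in which it was detected, and a hypothetical `max_gap` limit (15 frames, i.e., 0.5 s if the world camera records at 30 Hz, see Table 1) beyond which the tag is treated as lost:

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def interpolate_track(track: List[Optional[Point]], max_gap: int = 15) -> List[Optional[Point]]:
    """Fill short runs of missing tag positions by linear interpolation.

    track[i] is the tag position in frame i, or None if undetected.
    Gaps longer than max_gap frames, and gaps at the start or end of the
    track, are left as None (no extrapolation).
    """
    out = list(track)
    known = [i for i, p in enumerate(track) if p is not None]
    for a, b in zip(known, known[1:]):
        gap = b - a - 1
        if 0 < gap <= max_gap:
            (xa, ya), (xb, yb) = track[a], track[b]
            for i in range(a + 1, b):
                t = (i - a) / (b - a)  # fraction of the way from frame a to b
                out[i] = (xa + t * (xb - xa), ya + t * (yb - ya))
    return out


# Two frames of occlusion between detections at (0, 0) and (3, 6).
filled = interpolate_track([(0.0, 0.0), None, None, (3.0, 6.0)])
```

Applied to each of a tag’s four corners, the same function would carry the whole tag outline through a short occlusion; it cannot recover a tag that stays hidden, which matches the limitation described above.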

Our study evaluates the performance of the ‘BAIT’ against the ‘Pupil Labs software’ in attention detection, revealing key differences in accuracy and consistency across different focus areas like the windscreen and dashboard, and highlighting potential areas for further research in algorithm optimization for specific tasks as follows:

Algorithm Performance: The ‘BAIT’ demonstrated a markedly higher mean attention percentage on the windscreen, suggesting enhanced detection capabilities in this area compared to the ‘Pupil Labs software’. While the ‘BAIT’ also exhibited a higher mean attention percentage for the dashboard, the increment was not as substantial as observed in the windscreen condition.

Consistency of Results: The reduced mean deviation in the ‘BAIT’ for the windscreen condition implies a more consistent measurement of focus across trials than the ‘Pupil Labs software’. However, the larger mean deviation in the dashboard condition for the ‘BAIT’ indicates greater variability, which could be attributed to specific challenges in this setting or inherent algorithmic differences.

Variability of Data: Standard deviation assessments reveal that the ‘BAIT’ yields more consistent results for the windscreen condition. Conversely, the ‘Pupil Labs software’ appears to provide more consistent outcomes when measuring focus on the dashboard.

Confidence in Estimates: The narrower confidence interval for the ‘BAIT’ in windscreen observations suggests a higher level of precision in these estimates, potentially reflecting a more reliable performance in this specific task.

Implications for Future Research: Future research could benefit from further investigation into the conditions and parameters under which each algorithm operates most effectively, as suggested by the observed variability in standard deviation and confidence intervals.

Algorithmic Suitability: The data suggest that the ‘BAIT’ may be more suitable for applications requiring precise attention detection on the windscreen, while the ‘Pupil Labs software’ may be preferable for tasks that demand consistent dashboard focus measurements.

The BAIT algorithm is a significant advancement in ID tag identification and has potential for practical applications. Its robustness and precision make it suitable for integration into research studies in both controlled environments and naturalistic driving scenarios. The BAIT algorithm can significantly enhance the accuracy and reliability of visual distraction measurements in the automotive industry, particularly in semi-naturalistic studies conducted under varying environmental conditions and custom cockpit setups.

The study is limited by its specialized focus and environmental settings: it used ET data from only 10 participants, with 8 ID tags per measurement. The custom algorithm, tailored to driving scenarios and targeting specific areas such as the FOV and UI in cars, may not perform as well in non-driving contexts. Its effectiveness has been demonstrated primarily in the driving scenarios used in CDS, which may limit its utility in other settings. Its reliance on ET technology and its tuning to specific vehicle interiors and IVIS also constrain its applicability. Furthermore, its focus on visual distraction in driving restricts its use beyond driver behavior analysis, limiting its generalizability.

5. Conclusions

In conclusion, this research presents an AOI identification method specifically designed for measuring the visual distraction of passenger car drivers using a wearable ET device in CDS. The BAIT algorithm demonstrates higher identification accuracy than the Pupil Labs software across various ID tags in a driving context. BAIT applies binarization to the RGB components at multiple sensitivity levels and uses an innovative interpolation method between video frames to maintain tracking even during transient occlusions.

In our analysis, the BAIT algorithm achieved considerably higher mean accuracy than the Pupil Labs software, with 95.35% vs. 14.75% on the windscreen and 50.81% vs. 14.64% on the dashboard, suggesting a robust capacity for attention detection in CDS. It also showed a lower mean deviation on the windscreen, indicating more consistent measurements there. On the dashboard, however, BAIT showed greater variability, with a higher standard deviation (36.42%) than on the windscreen (8.82%), pointing to a potential area for improvement under complex or variable conditions. A notable limitation for any algorithm, including BAIT, is the obstruction of the ID tags’ visibility. This challenge is particularly acute for dashboard and UI AOI detection, where occlusions are more prevalent and significantly affect tracking accuracy and consistency.

These results indicate that while the BAIT algorithm offers substantial improvements in certain aspects, such as accuracy and consistency for windscreen-focused tasks, it requires further development to enhance its reliability for dashboard-related tasks. Future work should compare BAIT with other ET software and devices, and should focus on refining the algorithm to reduce variability and improve confidence in its dashboard application.

Author Contributions

Conceptualization, V.N., P.F. and G.I.; methodology, G.I.; software, G.I.; validation, V.N. and G.I.; formal analysis, G.I.; investigation, V.N.; resources, V.N.; data curation, V.N.; writing—original draft preparation, V.N. and G.I.; writing—review and editing, V.N.; visualization, V.N. and G.I.; supervision, V.N., P.F. and G.I. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Ethical review was not required as participants joined voluntarily and without compensation, and no personal data was collected or shared. The study prioritised participant privacy and minimised risks, adhering to ethical standards.

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

Acknowledgments

The research was supported by the European Union within the framework of the National Laboratory for Artificial Intelligence (RRF-2.3.1-21-2022-00004). The research was carried out as part of the Cooperative Doctoral Program supported by the National Research, Development and Innovation Office and the Ministry of Culture and Innovation.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

| Abbreviation | Definition |
|---|---|
| ADAS | Advanced Driving Assistant Systems |
| AOI | Area of Interest |
| BAIT | Binarized Area of Interest Tracking |
| CDS | Controlled Driving Study |
| ET | Eye-tracking |
| FOV | Field of View |
| HCI | Human–Computer Interaction |
| HMI | Human–Machine Interface |
| ID tag | Identification tag |
| IVIS | In-Vehicle Information System |
| IVS | Instrumented Vehicle Study |
| ND | Naturalistic Driving |
| NDRT | Non-Driving-Related Task |
| RGB | Red-Green-Blue |
| TEORT | Total Eyes-Off-Road Time |
| UI | User Interface |

References

- Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles. 2018. Available online: https://www.sae.org/standards/content/j3016_202104/ (accessed on 2 March 2024).

- Greenlee, E.T.; DeLucia, P.R.; Newton, D.C. Driver Vigilance in Automated Vehicles: Hazard Detection Failures Are a Matter of Time. Hum. Factors 2018, 60, 465–476.

- Strayer, D.L.; Cooper, J.M.; Goethe, R.M.; McCarty, M.M.; Getty, D.J.; Biondi, F. Assessing the Visual and Cognitive Demands of In-Vehicle Information Systems. Cogn. Res. Princ. Implic. 2019, 4, 18.

- Kovács, G. Human Factor Aspects of Situation Awareness in Autonomous Cars—A Psychological Approach. Acta Polytech. Hung. 2020, 18, 7.

- Shechtman, O.; Classen, S.; Awadzi, K.; Mann, W. Comparison of Driving Errors between On-the-Road and Simulated Driving Assessment: A Validation Study. Traffic Inj. Prev. 2009, 10, 379–385.

- Mayhew, D.R.; Simpson, H.M.; Wood, K.M.; Lonero, L.; Clinton, K.M.; Johnson, A.G. On-Road and Simulated Driving: Concurrent and Discriminant Validation. J. Safety Res. 2011, 42, 267–275.

- van Schagen, I.; Sagberg, F. The Potential Benefits of Naturalistic Driving for Road Safety Research: Theoretical and Empirical Considerations and Challenges for the Future. Procedia Soc. Behav. Sci. 2012, 48, 692–701.

- Rizzo, M.; Jermeland, J.; Severson, J. Instrumented Vehicles and Driving Simulators. Gerontechnology 2002, 1, 291–296.

- Bach, K.M.; Jaeger, G.; Skov, M.B.; Thomassen, N.G. Interacting with In-Vehicle Systems: Understanding, Measuring, and Evaluating Attention. In Proceedings of the People and Computers XXIII Celebrating People and Technology (HCI), Cambridge, UK, 1–5 September 2009.

- Regan, M.A.; Lee, J.D.; Young, K. (Eds.) Driver Distraction: Theory, Effects, and Mitigation; CRC Press: Boca Raton, FL, USA, 2008.

- Regan, M.A.; Hallett, C.; Gordon, C.P. Driver Distraction and Driver Inattention: Definition, Relationship and Taxonomy. Accid. Anal. Prev. 2011, 43, 1771–1781.

- Kircher, K.; Ahlstrom, C. Minimum Required Attention: A Human-Centered Approach to Driver Inattention. Hum. Factors 2017, 59, 471–484.

- Ito, H.; Atsumi, B.; Uno, H.; Akamatsu, M. Visual Distraction While Driving. IATSS Res. 2001, 25, 20–28.

- Victor, T. Analysis of Naturalistic Driving Study Data: Safer Glances, Driver Inattention, and Crash Risk. In Analysis of Naturalistic Driving Study Data: Safer Glances, Driver Inattention, and Crash Risk; National Academies: Washington, DC, USA, 2014.

- The Royal Society for the Prevention of Accidents (ROSPA). Road Safety Factsheet Driver Distraction Factsheet. 28 Calthorpe Road, Edgbaston, Birmingham B15 1RP. 2020. Available online: https://www.rospa.com/media/documents/road-safety/driver-distraction-factsheet.pdf (accessed on 2 March 2024).

- Purucker, C.; Naujoks, F.; Prill, A.; Neukum, A. Evaluating Distraction of In-Vehicle Information Systems While Driving by Predicting Total Eyes-off-Road Times with Keystroke Level Modeling. Appl. Ergon. 2017, 58, 543–554.

- Madlenak, R.; Masek, J.; Madlenakova, L.; Chinoracky, R. Eye-Tracking Investigation of the Train Driver’s: A Case Study. Appl. Sci. 2023, 13, 2437.

- Ahlström, C.; Kircher, K.; Nyström, M.; Wolfe, B. Eye Tracking in Driver Attention Research—How Gaze Data Interpretations Influence What We Learn. Front. Neuroergonomics 2021, 2, 778043.

- Nagy, V.; Kovács, G.; Földesi, P.; Kurhan, D.; Sysyn, M.; Szalai, S.; Fischer, S. Testing Road Vehicle User Interfaces Concerning the Driver’s Cognitive Load. Infrastructures 2023, 8, 49.

- Krejtz, K.; Duchowski, A.T.; Niedzielska, A.; Biele, C.; Krejtz, I. Eye Tracking Cognitive Load Using Pupil Diameter and Microsaccades with Fixed Gaze. PLoS ONE 2018, 13, e0203629.

- Goldberg, J.H.; Kotval, X.P. Computer Interface Evaluation Using Eye Movements: Methods and Constructs. Int. J. Ind. Ergon. 1999, 24, 631–645.

- Foulsham, T.; Walker, E.; Kingstone, A. The Where, What and When of Gaze Allocation in the Lab and the Natural Environment. Vision Res. 2011, 51, 1920–1931.

- Hessels, R.S.; Kemner, C.; van den Boomen, C.; Hooge, I.T.C. The Area-of-Interest Problem in Eyetracking Research: A Noise-Robust Solution for Face and Sparse Stimuli. Behav. Res. Methods 2016, 48, 1694–1712.

- Zhang, X.; Yuan, S.M.; Chen, M.D.; Liu, X. A Complete System for Analysis of Video Lecture Based on Eye Tracking. IEEE Access 2018, 6, 49056–49066.

- Jayawardena, G.; Jayarathna, S. Automated Filtering of Eye Movements Using Dynamic AOI in Multiple Granularity Levels. Int. J. Multimed. Data Eng. Manag. 2021, 12, 49–64.

- Friedrich, M.; Rußwinkel, N.; Möhlenbrink, C. A Guideline for Integrating Dynamic Areas of Interests in Existing Set-up for Capturing Eye Movement: Looking at Moving Aircraft. Behav. Res. Methods 2017, 49, 822–834.

- Peysakhovich, V.; Dehais, F.; Duchowski, A.T. ArUco/Gaze Tracking in Real Environments. In Eye Tracking for Spatial Research, Proceedings of the 3rd International Workshop (ET4S), Zurich, Switzerland, 14 January 2018; pp. 70–71.

- Bonikowski, L.; Gruszczynski, D.; Matulewski, J. Open-Source Software for Determining the Dynamic Areas of Interest for Eye Tracking Data Analysis. Procedia Comput. Sci. 2021, 192, 2568–2575.

- Orquin, J.L.; Ashby, N.J.S.; Clarke, A.D.F. Areas of Interest as a Signal Detection Problem in Behavioral Eye-Tracking Research. J. Behav. Decis. Mak. 2016, 29, 103–115.

- Faraji, Y.; van Rijn, J.W.; van Nispen, R.M.A.; van Rens, G.H.M.B.; Melis-Dankers, B.J.M.; Koopman, J.; van Rijn, L.J. A Toolkit for Wide-Screen Dynamic Area of Interest Measurements Using the Pupil Labs Core Eye Tracker. Behav. Res. Methods 2022, 55, 3820–3830.

- Tensmeyer, C.; Martinez, T. Historical Document Image Binarization: A Review. SN Comput. Sci. 2020, 1, 173.

- Wang, J.; Olson, E. AprilTag 2: Efficient and Robust Fiducial Detection. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Republic of Korea, 9–14 October 2016; pp. 4193–4198.

- Zin, T.T.; Misawa, S.; Pwint, M.Z.; Thant, S.; Seint, P.T.; Sumi, K.; Yoshida, K. Cow Identification System Using Ear Tag Recognition. In Proceedings of the LifeTech 2020—2020 IEEE 2nd Global Conference on Life Sciences and Technologies, Kyoto, Japan, 10–12 March 2020; pp. 65–66.

- Kassner, M.; Patera, W.; Bulling, A. Pupil: An Open Source Platform for Pervasive Eye Tracking and Mobile Gaze-Based Interaction. In Proceedings of the 2014 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Washington, DC, USA, 13–17 September 2014; pp. 1151–1160.

- MacInnes, J. Wearable Eye-Tracking for Research: Comparisons across Devices. bioRxiv 2018.

- Olson, E. AprilTag: A Robust and Flexible Visual Fiducial System. In Proceedings of the IEEE International Conference on Robotics and Automation, Shanghai, China, 9–13 May 2011; pp. 3400–3407.

Figure 1.

Test apparatus: Pupil Core eye-tracking device installed on the head of driver; ID tags in the view of driver (around windscreen and center console).

Figure 2.

ID tag positioning (around windscreen and center console).

Figure 3.

The original frame.

Figure 4.

The binary RGB color components with 0% (a), 20% (b), 40% (c), 60% (d), 80% (e), and 100% (f) sensitivity.

Figure 5.

Gaze position (yellow circle with green fill), detected tags (blue squares), compensated tag positions (dots in the corners) and “gaze-on-road” indication (dots are green not red).

Figure 6.

Gaze position (yellow circle with green fill), detected tags (blue squares), compensated tag positions (dots in the corners) and “gaze-on-road” indication (dots are red not green).

Figure 7.

Incorrect recognition of the dashboard area: gaze position (yellow circle with green fill), detected tags (blue squares), compensated tag positions (dots in the corners) and “gaze on dashboard” indication (red dots).

Figure 8.

The results are visualized as percentages, with the position of the ID tags reflecting their actual placement.

Table 1.

Eye-tracking system datasheet.

| Feature | Specification |
|---|---|
| Eye Tracking System | Pupil Core eye-tracking system |
| Infrared (IR) Eye Cameras | 2 cameras, 120 Hz @ 400 × 400 px |
| RGB World-View Camera | 1 camera, 30 Hz @ 1080p/60 Hz @ 720p, 139° × 83° wide-angle lens |
| Recording Management | Pupil Capture software v3.5.1 (Pupil Labs GmbH, Berlin, Germany) |
| Workstation PC Specifications | 11th Gen Intel Core i7-11800H, 32 GB DDR4 RAM, NVIDIA GeForce RTX 3050 Ti Laptop GPU |
| Calibration Process | Semi-automated, using calibration circles |
| ID-Tag Markers | tag36h11 family printed on 50 mm × 50 mm hard plastic plates (ID tags: 0 to 7) |
| Post-processing | Pupil Player software v3.5.1 (Pupil Labs GmbH, Berlin, Germany), hereinafter called ‘Pupil Labs Software’ |

Table 2.

The rates of successful identification of the windscreen boundary ID tags in percentage.

| Measurement No. | ID Tag No. | Pupil Labs Software (%) | BAIT (%) |
|---|---|---|---|
| 1 | tag 4 | 20 | 99.50 |
| 1 | tag 5 | 20 | 99.75 |
| 1 | tag 6 | 20 | 100 |
| 1 | tag 7 | 0 | 100 |
| 2 | tag 4 | 26.15 | 100 |
| 2 | tag 5 | 25.07 | 100 |
| 2 | tag 6 | 26.15 | 100 |
| 2 | tag 7 | 0 | 100 |
| 3 | tag 4 | 23.28 | 100 |
| 3 | tag 5 | 23.53 | 99.75 |
| 3 | tag 6 | 23.77 | 100 |
| 3 | tag 7 | 0 | 100 |
| 4 | tag 4 | 28.26 | 93.91 |
| 4 | tag 5 | 23.91 | 93.91 |
| 4 | tag 6 | 11.30 | 96.09 |
| 4 | tag 7 | 2.83 | 97.39 |
| 5 | tag 4 | 24.52 | 90.74 |
| 5 | tag 5 | 16.89 | 89.92 |
| 5 | tag 6 | 23.98 | 98.09 |
| 5 | tag 7 | 0.27 | 98.91 |
| 6 | tag 4 | 15.99 | 96.45 |
| 6 | tag 5 | 1.27 | 96.45 |
| 6 | tag 6 | 34.01 | 99.49 |
| 6 | tag 7 | 22.84 | 98.98 |
| 7 | tag 4 | 22.54 | 84.44 |
| 7 | tag 5 | 12.38 | 84.44 |
| 7 | tag 6 | 26.98 | 90.79 |
| 7 | tag 7 | 10.48 | 89.52 |
| 8 | tag 4 | 19.30 | 97.95 |
| 8 | tag 5 | 10.53 | 97.37 |
| 8 | tag 6 | 28.95 | 99.12 |
| 8 | tag 7 | 0 | 100 |
| 9 | tag 4 | 1.88 | 87.76 |
| 9 | tag 5 | 1.88 | 95.53 |
| 9 | tag 6 | 32.94 | 96.94 |
| 9 | tag 7 | 8.00 | 48.24 |
| 10 | tag 4 | 0 | 99.69 |
| 10 | tag 5 | 0 | 98.44 |
| 10 | tag 6 | 0 | 100 |
| 10 | tag 7 | 0 | 94.39 |

Table 3.

Statistical values of the identified ID tags 4 to 7 (windscreen boundaries).

| ID Tag No. | Pupil Labs Software: Mean (%) | Pupil Labs Software: Mean Deviation (%) | Pupil Labs Software: Standard Deviation (%) | Pupil Labs Software: CI 95% (±) | BAIT: Mean (%) | BAIT: Mean Deviation (%) | BAIT: Standard Deviation (%) | BAIT: CI 95% (±) |
|---|---|---|---|---|---|---|---|---|
| tag 4 | 18.19 | 7.34 | 9.75 | 6.04 | 95.04 | 4.66 | 5.64 | 3.49 |
| tag 5 | 13.55 | 8.33 | 9.85 | 6.11 | 95.56 | 3.68 | 5.00 | 3.10 |
| tag 6 | 22.81 | 7.42 | 10.31 | 6.39 | 98.05 | 2.07 | 2.91 | 1.80 |
| tag 7 | 4.44 | 5.60 | 7.50 | 4.65 | 92.74 | 9.55 | 16.00 | 9.92 |

Table 4.

The rates of successful identification of the dashboard boundary ID tags in percentage.

| Measurement No. | ID Tag No. | Pupil Labs Software (%) | BAIT (%) |
|---|---|---|---|
| 1 | tag 0 | 0 | 0 |
| 1 | tag 1 | 20 | 93.75 |
| 1 | tag 2 | 8.25 | 79.5 |
| 1 | tag 3 | 19.5 | 98.25 |
| 2 | tag 0 | 0 | 0 |
| 2 | tag 1 | 26.15 | 84.64 |
| 2 | tag 2 | 23.18 | 77.36 |
| 2 | tag 3 | 8.89 | 77.90 |
| 3 | tag 0 | 0 | 0 |
| 3 | tag 1 | 23.28 | 93.38 |
| 3 | tag 2 | 0 | 31.13 |
| 3 | tag 3 | 23.77 | 73.53 |
| 4 | tag 0 | 0 | 0 |
| 4 | tag 1 | 29.78 | 93.70 |
| 4 | tag 2 | 6.74 | 9.35 |
| 4 | tag 3 | 30.65 | 81.96 |
| 5 | tag 0 | 0 | 0 |
| 5 | tag 1 | 26.70 | 90.19 |
| 5 | tag 2 | 31.88 | 65.12 |
| 5 | tag 3 | 10.08 | 66.49 |
| 6 | tag 0 | 0 | 0 |
| 6 | tag 1 | 27.16 | 85.53 |
| 6 | tag 2 | 3.81 | 65.23 |
| 6 | tag 3 | 32.49 | 72.34 |
| 7 | tag 0 | 0 | 0 |
| 7 | tag 1 | 20.95 | 78.41 |
| 7 | tag 2 | 8.25 | 66.35 |
| 7 | tag 3 | 26.98 | 60.63 |
| 8 | tag 0 | 0 | 0 |
| 8 | tag 1 | 26.90 | 66.67 |
| 8 | tag 2 | 23.98 | 36.55 |
| 8 | tag 3 | 3.51 | 24.85 |
| 9 | tag 0 | 0 | 0 |
| 9 | tag 1 | 8 | 68.71 |
| 9 | tag 2 | 8.94 | 38.82 |
| 9 | tag 3 | 24.94 | 28.94 |
| 10 | tag 0 | 0 | 0 |
| 10 | tag 1 | 43.30 | 98.75 |
| 10 | tag 2 | 0 | 34.27 |
| 10 | tag 3 | 37.69 | 90.03 |

Table 5.

Statistical values of the identified ID tags 0 to 3 (dashboard boundaries).

| ID Tag No. | Pupil Labs Software: Mean (%) | Pupil Labs Software: Mean Deviation (%) | Pupil Labs Software: Standard Deviation (%) | Pupil Labs Software: CI 95% (±) | BAIT: Mean (%) | BAIT: Mean Deviation (%) | BAIT: Standard Deviation (%) | BAIT: CI 95% (±) |
|---|---|---|---|---|---|---|---|---|
| tag 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| tag 1 | 25.22 | 5.73 | 8.84 | 5.48 | 85.37 | 8.61 | 10.97 | 6.80 |
| tag 2 | 11.50 | 8.91 | 10.96 | 6.79 | 50.37 | 20.34 | 23.35 | 14.47 |
| tag 3 | 21.85 | 9.08 | 11.20 | 6.94 | 67.49 | 17.81 | 24.00 | 14.87 |

Table 6.

Statistical values of the comparison.

| Statistic | Windscreen: Pupil Labs Software (%) | Windscreen: BAIT (%) | Dashboard: Pupil Labs Software (%) | Dashboard: BAIT (%) |
|---|---|---|---|---|
| Mean | 14.75 | 95.35 | 14.64 | 50.81 |
| Mean Deviation | 10.23 | 5.04 | 12.05 | 32.99 |
| Standard Deviation | 11.37 | 8.82 | 13.20 | 36.42 |
| CI 95% (±) | 3.52 | 2.73 | 4.09 | 11.29 |

Table 7.

Filtered statistical values of comparison where 0 values were excluded.

| Statistic | Windscreen: Pupil Labs Software (%) | Windscreen: BAIT (%) | Dashboard: Pupil Labs Software (%) | Dashboard: BAIT (%) |
|---|---|---|---|---|
| Mean | 17.88 | 95.35 | 19.53 | 67.74 |
| Mean Deviation | 8.40 | 5.04 | 9.89 | 19.08 |
| Standard Deviation | 9.99 | 8.82 | 11.65 | 24.43 |
| CI 95% (±) | 3.10 | 2.73 | 3.61 | 7.57 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).