A New Method of Time-Series Event Prediction Based on Sequence Labeling

Abstract

1. Introduction

- This paper transforms the event prediction problem into a sequence labeling problem and captures the dependencies between subsequence sets;

- The CX-LC model developed in this paper effectively extracts features from the original data set and refines the prediction results through smoothing;

- Experiments on five data sets show that the CX-LC model produces more accurate predictions.
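The sequence labeling formulation in the first contribution can be sketched as follows. This is a minimal illustration, not the authors' implementation: the helper names `split_into_subsequences` and `sliding_windows`, the toy dimensions, and the choice of labeling each subsequence in a window with the event of the subsequence one step ahead are all assumptions made for the example.

```python
import numpy as np


def split_into_subsequences(series, sub_len):
    """Cut a (T, D) series into K = T // sub_len subsequences of shape (sub_len, D)."""
    n_steps, n_dims = series.shape
    n_subs = n_steps // sub_len
    return series[: n_subs * sub_len].reshape(n_subs, sub_len, n_dims)


def sliding_windows(subseqs, events, history):
    """Build M = K - history windows of `history` consecutive subsequences.

    Assumption: the label sequence of a window holds the events of the
    subsequences shifted one step ahead, so the last label is the event
    of the subsequence that follows the window.
    """
    n_subs = subseqs.shape[0]
    inputs, labels = [], []
    for m in range(n_subs - history):
        inputs.append(subseqs[m : m + history])
        labels.append(events[m + 1 : m + history + 1])
    return np.stack(inputs), np.stack(labels)


rng = np.random.default_rng(0)
series = rng.normal(size=(60, 2))                      # toy series: T = 60, D = 2
subseqs = split_into_subsequences(series, sub_len=5)   # K = 12 subsequences
events = rng.integers(0, 2, size=subseqs.shape[0])     # one toy binary event per subsequence
X, y = sliding_windows(subseqs, events, history=4)
print(X.shape, y.shape)  # (8, 4, 5, 2) (8, 4)
```

Each row of `y` is a label sequence aligned with the subsequences in the corresponding window of `X`, which is what distinguishes this setup from predicting a single class per window.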

2. Definitions and Methods

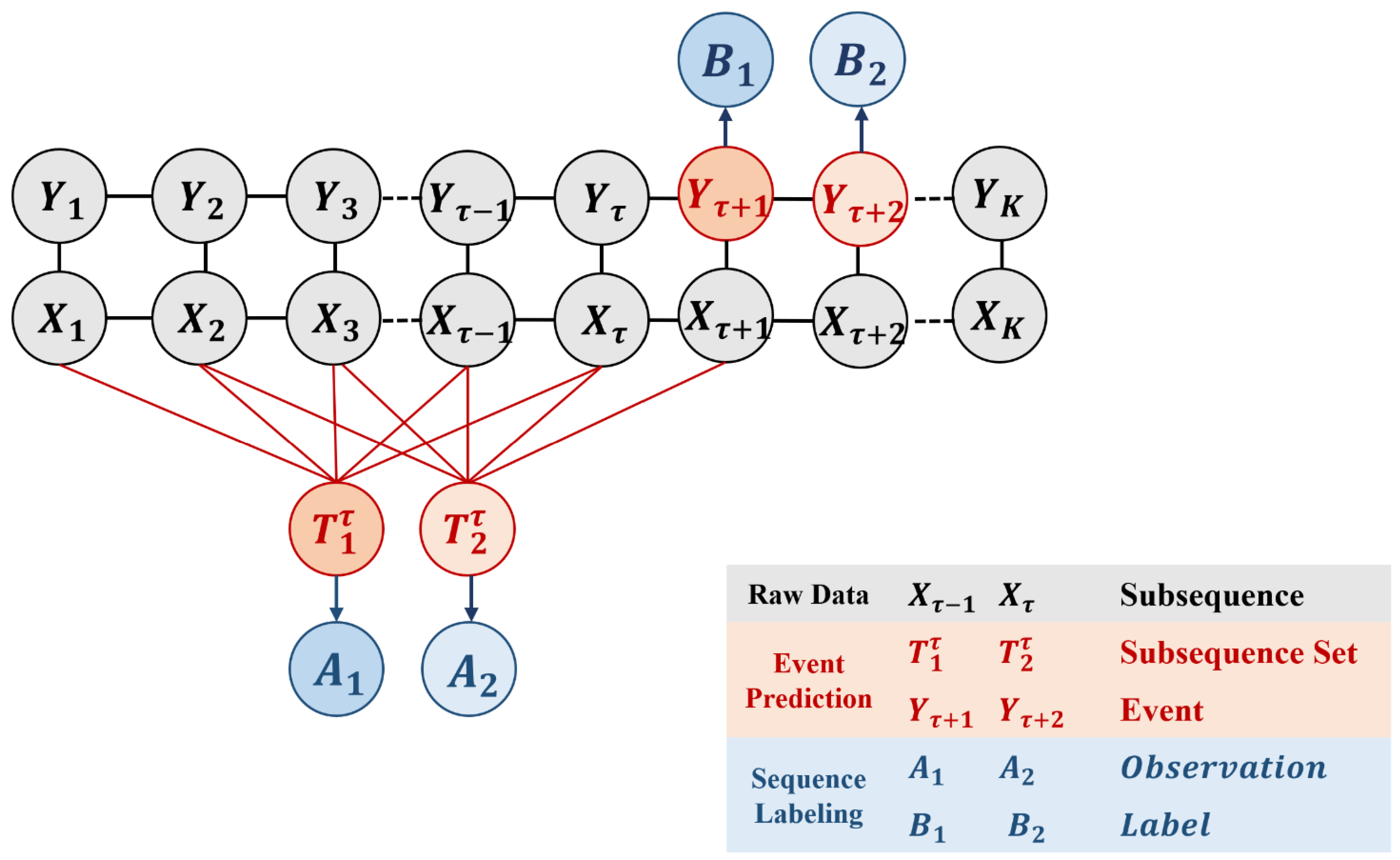

2.1. Definitions

- Each time series is independent and identically distributed;

- Patterns observed in past events persist into the future;

- The chosen subsequence division is appropriate for describing the event.

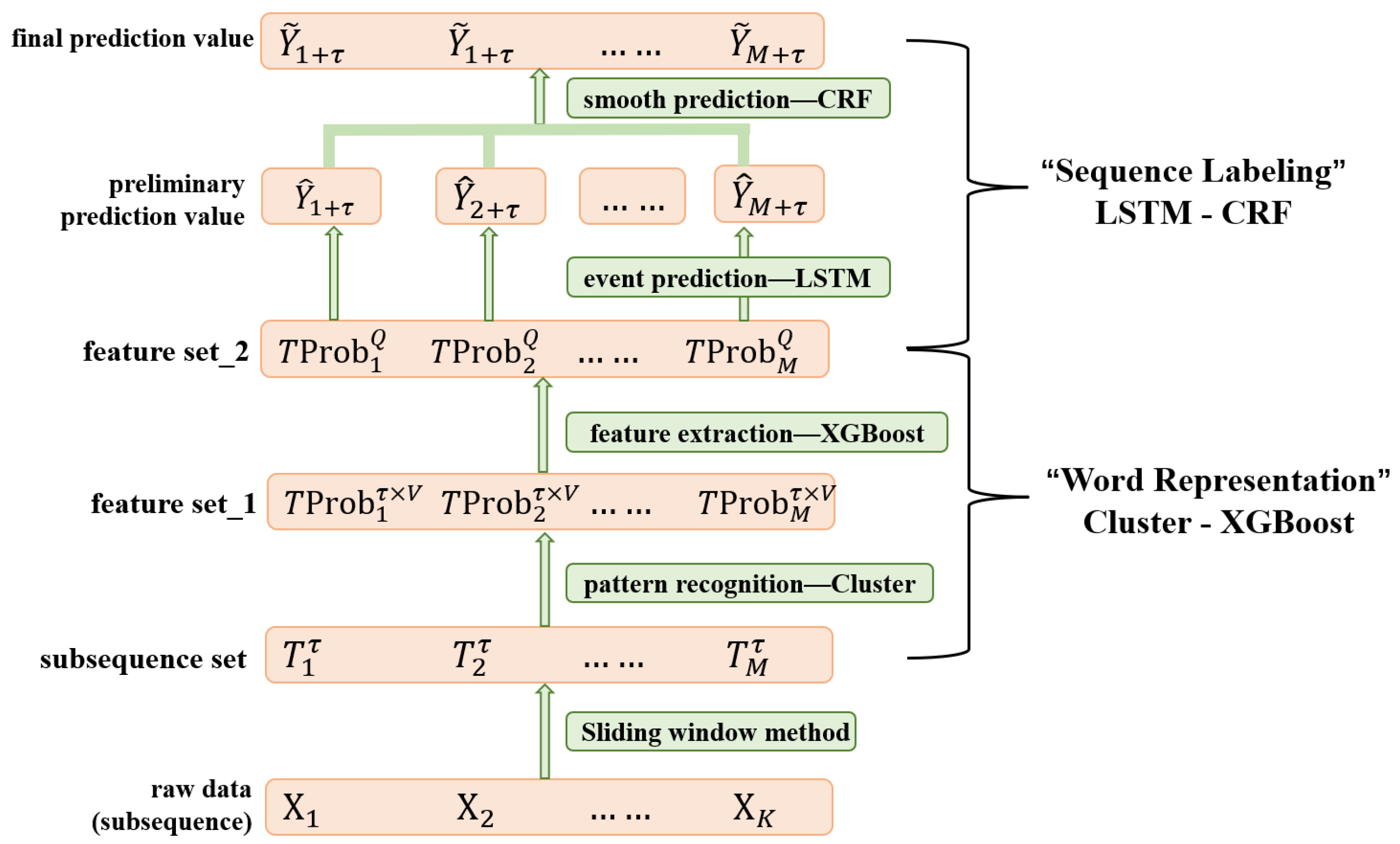

2.2. CX-LC Framework

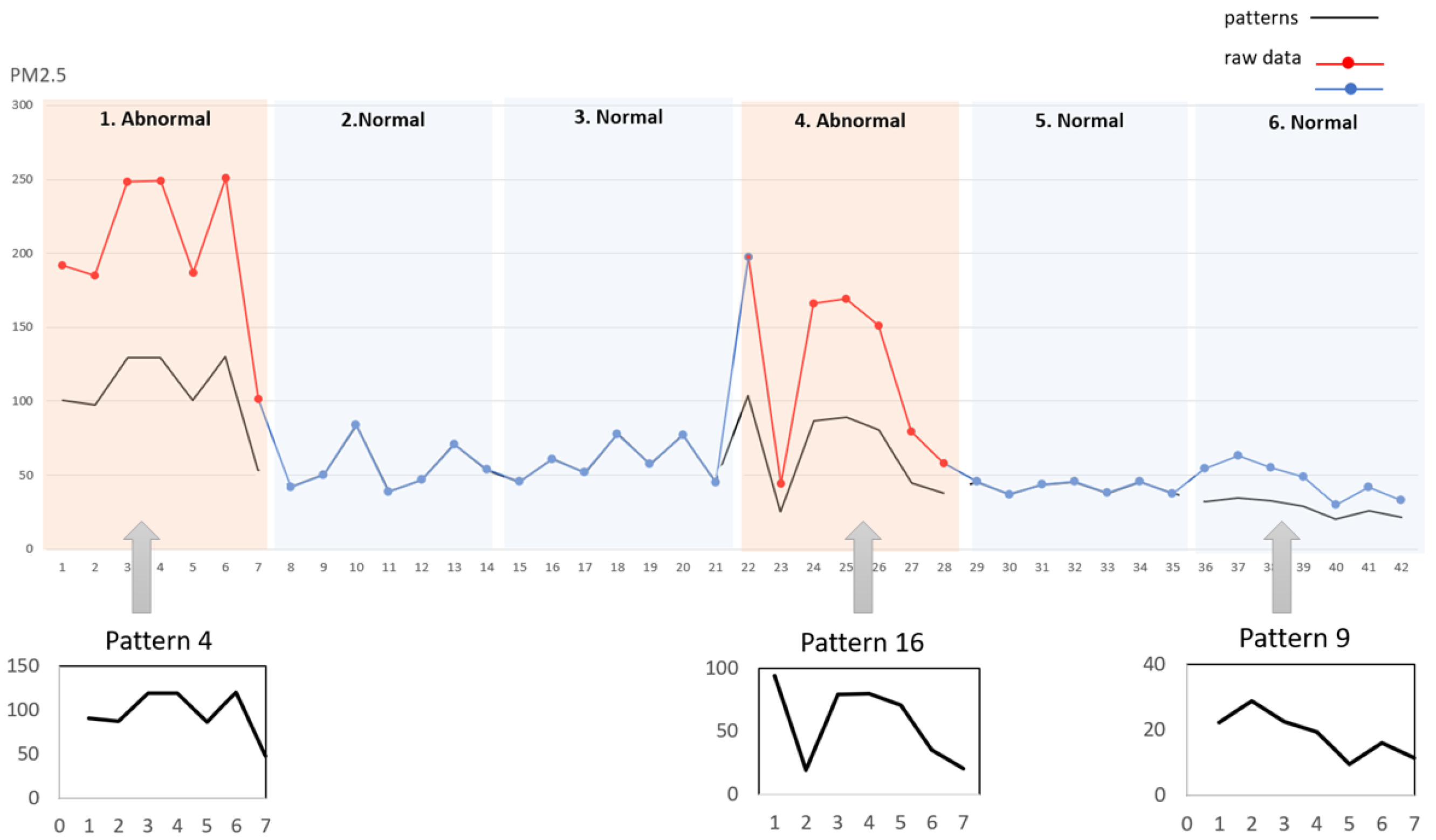

2.2.1. Pattern Recognition

2.2.2. Feature Extraction

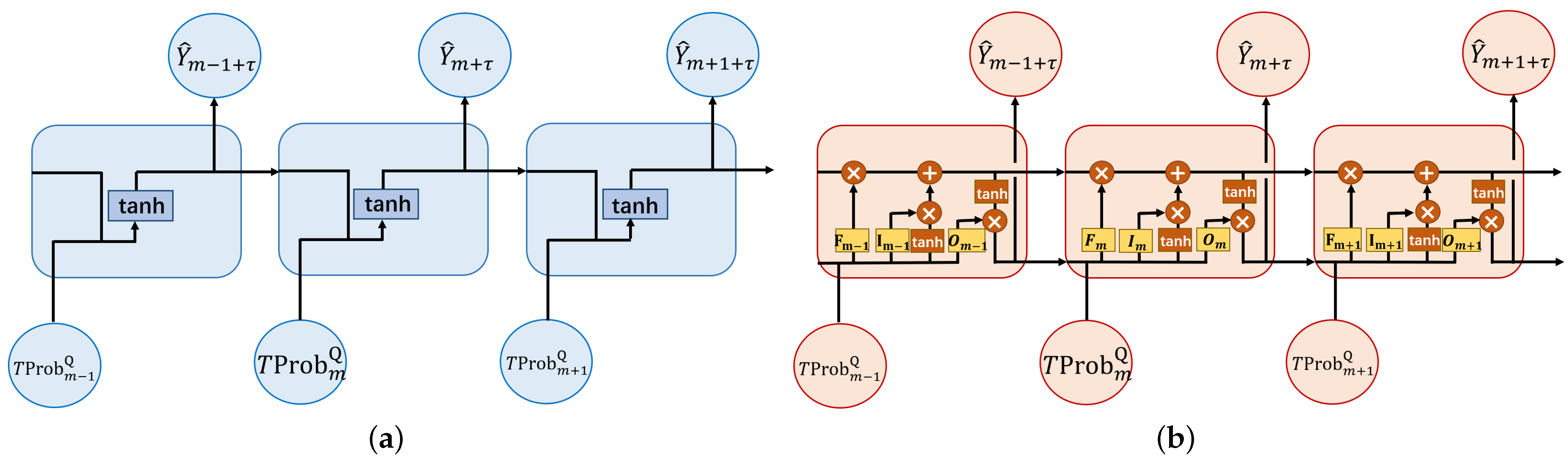

2.2.3. Event Prediction

2.2.4. Smooth Prediction

Algorithm 1. The learning procedure of CX-LC.

3. Experiment

- Q1: Compared with other advanced classification event prediction methods, how does CX-LC perform on the same prediction task?

- Q2: Do the pattern recognition, feature selection, and smoothing refinement parts of the CX-LC framework really improve the prediction results?

3.1. Introduction to Data Sets and Prediction Tasks

1. DJIA 30 Stock Time Series;

2. Web Traffic Time Series Forecasting;

3. Air Quality Data in India (2015–2020);

4. Daily Climate time series data.

3.2. Baseline Method

3.3. Implementation Details

3.4. Performance Comparison and Discussion

3.4.1. Performance Comparison

3.4.2. Discussion

4. Conclusions and Future Work

1. When extracting features from subsequence sets, the pattern changes between subsequences could be taken into account and a pattern evolution graph constructed to enrich the feature information;

2. The LSTM model is a relatively simple and conventional sequence labeling algorithm that considers only the sequential structure during computation. If the pattern evolution graph is constructed successfully, a more expressive network structure, such as a GNN or GCN, could be tried;

3. Event prediction did not explicitly consider the periodicity and seasonality of the original data, although such characteristics can be captured when fitting the CX-LC model. Reflecting these effects directly in the model structure may improve the forecasting performance;

4. The results in Section 3.4 show that CX-LC outperforms the other models on small-sample data sets, but its predictions on large data sets still need improvement.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| Variable Symbol | Description |
|---|---|
| | The i-th time series sample, which can be expressed as . |
| | is the k-th subsequence of , which can be expressed as . |
| | is the observation event of the k-th subsequence of . |
| | is a D-dimensional observation value of the time series at time l. |
| | For , use the sliding window method to obtain M data sets containing subsequences from , denoted as . |
| | The m-th subsequence set of , . |
| | Based on subsequence set to predict the event of subsequence . |
| | The representative subsequence pattern in the time series. |
| | Euclidean distance between states and subsequences . |
| | The probability of recognizing the subsequence as the state . |
| | Each subsequence can be calculated to obtain V identification weights, expressed as . |
| | The feature set of the subsequence set . |
| | The pattern feature of the filtered subsequence set is reduced to a Q-dimensional variable, and there is . |
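The rows above on the Euclidean distance, the recognition probability, and the V identification weights can be illustrated with a small sketch. The source states only that a distance is computed between states and subsequences and that each subsequence receives V weights; the softmax over negative distances used here, the function name `recognition_weights`, and the toy states are assumptions for illustration, and the exact form used in CX-LC may differ.

```python
import numpy as np


def recognition_weights(subseqs, states):
    """Map K subsequences to V identification weights each.

    subseqs: array of shape (K, L, D)
    states:  array of shape (V, L, D), the representative patterns
    Returns an array of shape (K, V) whose rows sum to 1.
    """
    flat_subs = subseqs.reshape(len(subseqs), -1)
    flat_states = states.reshape(len(states), -1)
    # Euclidean distance between every subsequence and every state.
    dist = np.linalg.norm(flat_subs[:, None, :] - flat_states[None, :, :], axis=-1)
    # Assumed conversion to probabilities: softmax over negative distances,
    # so that closer states receive larger weights.
    logits = -dist
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    return weights / weights.sum(axis=1, keepdims=True)


# Toy example with V = 2 states, L = 3, D = 1.
states = np.stack([np.zeros((3, 1)), np.ones((3, 1))])
subseqs = np.stack([np.zeros((3, 1)), np.ones((3, 1)), np.full((3, 1), 0.9)])
weights = recognition_weights(subseqs, states)
print(weights.round(3))
```

In this toy case the first subsequence is weighted towards the first state and the other two towards the second. In practice the states would be learned from data, for example as cluster centres, rather than fixed by hand.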

| Hyper-parameter | DJIA30 | Web | PM2.5 | CO | Temp |
|---|---|---|---|---|---|
| #(samples), N | 30 | 50,000 | 38 | 24 | 100 |
| #(subsequences), K | 518 (wk) | 26 (mth) | 130 (wk) | 111 (day) | 222 (wk) |
| subsequence length, L | 5 (day) | 30 (day) | 7 (day) | 24 (h) | 7 (day) |
| observation dimension, D | 4 | 2 | 1 | 1 | 1 |
| history length | 50 (wk) | 12 (mth) | 20 (wk) | 20 (day) | 50 (wk) |
| positive ratio (%) | 19.5 | 28.2 | 32.1 | 37.0 | 38.5 |

| Type | Model | DJIA30 P | DJIA30 R | DJIA30 F1 | Web P | Web R | Web F1 | PM2.5 P | PM2.5 R | PM2.5 F1 | CO P | CO R | CO F1 | Temp P | Temp R | Temp F1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| LBL | CX-LC | 35.79 | 31.73 | 33.64 | 47.68 | 38.96 | 42.88 | 70.43 | 60.19 | 64.91 | 75.47 | 66.06 | 70.45 | 86.56 | 74.07 | 79.83 |
| LBL | X-LC | 27.55 | 29.41 | 28.45 | 40.23 | 38.24 | 39.21 | 69.07 | 58.42 | 63.30 | 57.36 | 54.04 | 55.65 | 86.23 | 72.92 | 79.02 |
| LBL | C-LC | 27.91 | 27.22 | 27.56 | 39.04 | 38.07 | 38.55 | 64.55 | 56.24 | 60.11 | 51.33 | 50.76 | 51.03 | 73.47 | 70.66 | 72.04 |
| LBL | CX-L | 34.50 | 30.35 | 32.29 | 41.37 | 44.01 | 42.65 | 64.98 | 59.14 | 61.92 | 70.71 | 68.52 | 69.62 | 85.81 | 71.81 | 78.19 |
| CLS | EvoNet_M | 36.55 | 30.87 | 33.47 | 46.12 | 50.17 | 48.06 | 60.59 | 58.10 | 59.32 | 70.89 | 61.18 | 65.68 | 85.49 | 74.03 | 79.35 |
| CLS | EvoNet_S | 33.78 | 27.90 | 30.56 | 35.67 | 26.26 | 30.25 | 60.11 | 56.60 | 58.32 | 62.19 | 62.15 | 62.17 | 79.32 | 65.38 | 71.68 |
| CLS | Time2Graph | 30.27 | 26.38 | 28.19 | 42.31 | 41.34 | 41.82 | 60.09 | 57.22 | 58.62 | 64.91 | 63.06 | 63.97 | 80.17 | 73.24 | 76.55 |
| CLS | X-HMM | 29.63 | 26.77 | 28.13 | 40.11 | 39.71 | 39.91 | 59.04 | 56.78 | 57.89 | 63.45 | 64.87 | 64.15 | 79.04 | 68.98 | 73.67 |
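As a reading aid for the table above, the F1 column can be recomputed from the precision (P) and recall (R) columns using the standard definition F1 = 2PR / (P + R). The sketch below does this for the CX-LC row only; the function name `f1_score` and the dictionary layout are illustrative choices, and the numbers are copied from the table.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    return 2 * precision * recall / (precision + recall)


# (P, R, reported F1) for CX-LC on each data set, copied from the table.
cx_lc = {
    "DJIA30": (35.79, 31.73, 33.64),
    "Web": (47.68, 38.96, 42.88),
    "PM2.5": (70.43, 60.19, 64.91),
    "CO": (75.47, 66.06, 70.45),
    "Temp": (86.56, 74.07, 79.83),
}

for name, (p, r, reported) in cx_lc.items():
    recomputed = f1_score(p, r)
    print(f"{name}: recomputed F1 = {recomputed:.2f}, reported F1 = {reported:.2f}")
```

The recomputed values agree with the reported F1 scores to two decimal places, which confirms that the three columns are internally consistent for this row.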

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhong, Z.; Lv, S.; Shi, K. A New Method of Time-Series Event Prediction Based on Sequence Labeling. Appl. Sci. 2023, 13, 5329. https://doi.org/10.3390/app13095329
