1. Introduction

Object recognition is one of the fundamental problems in the field of machine vision, with deep neural networks achieving state-of-the-art performance in the last decade. These advances led to successes in numerous applications, such as autonomous vehicles, surveillance and mapping tasks, and industrial object detection. Nonetheless, in all the aforementioned fields, the reliability of these algorithms is paramount, and further research is necessary to increase generalization and robustness to various disturbances to ensure the applicability of these methods in real-world situations such as robotics applications or autonomous vehicle sensor systems.

The generalization capabilities of deep neural networks have been a major focus of recent research, resulting in substantial knowledge on the effect of training methods, efficient architectures or data augmentation strategies. Nonetheless, the robustness to various input disturbances has not been explored to the same degree. We argue that, due to the tendency of neural networks to overfit to data, these effects can affect performance significantly.

Due to the data augmentation strategies frequently used during the training of neural networks, some simple disturbances are relatively well-handled by these methods. These usually include various geometric and color transformations and random noise. However, some more complex environmental disturbances, such as rain, unique lighting conditions or fog are rarely investigated. The focus of our paper is partial occlusion, as it is one of the most significant and common sources of disturbance [

1,

2].

Naturally, the reason for the relative absence of these factors from deep learning training procedures is their cost; Acquiring an accurately labelled dataset containing the aforementioned natural disturbances would result in considerable difficulty. In order to resolve this issue, we propose using a high-quality synthetic dataset, generated using state-of-the-art rendering techniques, as that allows us to create high-quality, automatically labelled datasets containing ground truth object silhouettes and occluded areas with minimal costs.

Furthermore, most current object detection algorithms are passive methods, meaning that they use a single image to predict the location and classes of object in the scene, while they cannot perform actions to influence their environment. This limitation renders these methods unable to resolve some of these disturbances. Yet, humans are not brain-in-the-jar systems, as we are able to engage with our environment, creating what is called a Perception Action Loop (PAL). This allows us to combine information from multiple observations that were taken deliberately to maximize our understanding of the scene.

In this paper, a novel method is presented that is capable of identifying occlusion, and efficiently avoiding it by moving the camera to a new position and orientation. In order to achieve this, an OpenAI Gym-based reinforcement learning environment is developed that contains multiple objects in occlusion, and provides an RGB-D image for the agent. The environment also uses differentiable rendering to allow us to compute the derivative of the occlusion area with respect to the camera position (or other scene parameters). We show that, by using this gradient, the occlusion can be avoided using simple gradient-based optimization. Previous methods, e.g., Refs. [

3,

4,

5], rely on an end-to-end neural network-based solution for occlusion detection, which do not allow for such an optimization-based occlusion-minimization.

We also extend our work [

6] to propose two neural-network based methods that are able to resolve occlusion without relying on gradients extracted from the simulated environment. The first of such methods uses self-supervised learning to predict the occlusion map in the image, while it also predicts the gradient direction, using only an RGB-D image as input. The second method uses end-to-end reinforcement learning to avoid occlusion in a minimal amount of steps, using the same input. To demonstrate the effectiveness of the proposed learning methods, the Bayesian

t-test was used on 50 random runs of the environment to show that these methods credibly outperformed the gradient method, as well as the random movement policy.

To summarize, our main contributions are the following:

A novel, open-source reinforcement learning environment is developed using PyTorch3D that allows the rendering of images that contain occluded objects. The environment uses differential rendering to provide researchers with a differentiable reward function measuring the amount of occlusion in the scene.

The usability of this environment is demonstrated by performing Stochastic Gradient Descent (SGD) optimization of the camera pose using this differential reward to successfully resolve occlusion in scenes with multiple objects.

A novel solution is presented for occlusion avoidance using only RGB-D images as input. This method is trained to predict occlusion maps and gradient directions using self-supervised learning by generating example scenes in the environment.

Furthermore, a reinforcement learning (RL) agent is also trained to successfully avoid occlusion in the environment between several objects. This agent also uses RGB-D images as input, and is trained to avoid occlusion in the minimal amount of steps.

Finally, the effectiveness of the different methods is evaluated and compared via a series of experiments. We show the superiority of the RL-based method using the Bayesian t-test.

In

Section 2, the most relevant related works are reviewed, including methods for object detection under occlusion, as well as active perception methods. Then, in

Section 3 the environment is presented, detailing the action and observation spaces, as well as the reward function. In

Section 4 gradient-based optimization is presented, while in

Section 5 neural network-based methods are described.

Section 6 details the results of experimental evaluation, including the Bayesian

t-test, while

Section 7 summarizes our work, and details planned further research.

2. Previous Work

In this section, research connected to the topic of this paper is presented. First, works on object detection under partial occlusion are explored, then, in the latter part of this section, a selection of works that realize parts of a full perception–prediction–action loop are discussed in detail.

2.1. Object Detection in Occluded Scenes

Object detection is one of the fundamental problems in computer vision. In recent years, significant advances have been made in this field. Deep neural networks have revolutionized this field, starting with region-based methods [

7,

8,

9]. Then, single-shot detectors, such as SSD or YOLO, provided significantly more efficient detectors [

10,

11,

12], with their more advanced variants [

13,

14,

15] achieving SOTA results on standard object detection tasks. Transformer-based methods [

16] have also made advances recently, while, finally, anchor-free methods, such as CenterNet [

17], aim to solve the problems of post-processing steps, such as Non-Maximum Suppression (NMS).

Still, mostf these detection methods do not directly address the problem of detecting input disturbances, especially partial occlusion, in a supervised manner. Instead, most works focus on improving their results when occlusion is present without explicitly detecting it. This is partially due to the fact that, to the best of our knowledge, no large-scale datasets exist, where occlusion labels (e.g., masks defining the exact overlap area between two object silhouettes) are available, making direct supervised learning of occlusion maps infeasible. Notably, two datasets that contain partially occluded objects have been released recently. These sets of images with occluded objects and the adhering ground truth masks, describing the regions of the individual objects, are entitled the Occluded COCO [

18] and OVIS datasets [

19]. However, these datasets do not contain occlusion masks, i.e., the exact overlap area between two object silhouettes. This property limits the usability of both datasets for machine learning-based occlusion map detection.

Object detection methods that aim to deal with partial occlusion need to rely on some form of self-supervised learning, meaning that the labels have to be generated automatically [

20]. The most straightforward way of doing this is to generate synthetic occlusion in natural images, and train neural network-based methods to make correct predictions in spite of these artificial occlusions. Examples of this approach include Follmann et al. [

3], where a semantic segmentation method is trained to predict the full object segments correctly, despite parts of the image being masked out. Zhan et al. [

21] presented a generative approach. They trained a Generate Adversarial Network (GAN) to accurately restore masked-out parts of an image, thus, successfully restoring information lost by occlusion.

While the performance of these methods is superb, unfortunately, the use of synthetic occlusions introduces a critical weakness. Since the distribution of these masked-out image parts is vastly different from the distribution of a natural image, detecting the occluded parts becomes significantly easier, and, thus, the generalization capabilities of these methods in regard to natural occlusions are questionable.

This is not the case for Compositional Neural Networks (CompNets) [

4,

5], which are one of the most notable methods of object detection under partial occlusion. This method employs unsupervised learning to learn the distribution of occluding parts and distinguish these from the distributions of parts containing relevant objects. This allows the method to be trained in natural images containing natural occlusions, thus, avoiding the aforementioned problems.

While unsupervised learning of partial occlusion is impressive, we argue, however, that using supervised learning from annotated data might lead to superior performance. In a previous work, Pásztor [

22] used a simulation created via the Unity game engine to render realistic images with natural occlusion. They also used the game engine to extract weak occlusion labels for training. The intersections of the object bounding boxes were labeled as occlusion. They then trained a semantic segmentation network to predict the occlusion masks directly from RGB images, and found—quite surprisingly—that the network had a tendency to learn the true occlusion mask, despite being only provided with the inaccurate mask.

2.2. Active Detection Methods

While the object detection methods detailed above have achieved considerable success, they also share a limitation. All these methods envision object detection as a passive task, where the method is presented in a single frame (or in some cases multiple frames from a video), and responds with a single prediction. Even in multi-view systems, the collection of observations is independent from the detection method. In reality, however, most natural object detection systems (i.e., people and animals) integrate the task of data collection and object detection, and the needs of the latter influence the former, as these agents are able to change the parameters of the observed scene. This way, these autonomous agents can derive more robust predictions by implementing the so-called Perception–Action Cycle (PAC).

However, to implement such an active observation procedure, every agent needs to combine two essential skills. First, the agent needs to understand and correctly predict the effect of its own actions on the environment. This can be achieved by employing some form of unsupervised or—more likely due to the nature of the problem—self-supervised learning. Notably, this relatively novel type of machine learning has been a major focus of research recently, and is already being used to predict realistic possible futures in frame sequences [

23].

Second, the agent needs to be able to perform a sequence of actions that bring it to a desired state in a complex, interactive environment. This is usually accomplished via Reinforcement Learning (RL) [

24,

25], in which the agent is trained to maximize a reward function by performing action in the environment. This is usually a rather difficult problem due to difficulties, like the credit assignment problem and the exploration-exploitation dilemma. In summary, for the algorithm to be able to perform active object detection, it needs to combine all three forms of learning: reinforcement learning for determining actions, self-supervised for predicting their effects, and supervised for the object detection itself.

In the literature, there are a number of notable methods that combine the above types of learning in the same algorithm. For instance, Nair et al. [

26] published a solution that combines reinforcement and self-supervised learning, where the agent imagines goals for itself, and derives an improved understanding of its environment by trying to achieve them. Similarly, curiosity-based reinforcement learning methods [

27,

28,

29] include forward stepping prediction goals, while they also encourage agents to reach states, where their prediction is flawed, thereby facilitating the exploration of novel states. Finally, World Models [

30] combines perception methods with prediction and action modules, coming relatively close to implementing a perception–action loop, although the goal of the method is different.

Notably, several methods exist in the literature that use frames from multiple views to perform object detection [

31]. The skill of combining multiple information sources is a necessary component of active perception. It is worth mentioning that, in a previous work [

32,

33], we implemented a reinforcement learning environment including multiple cooperating agents that each had noisy partial observations. In this scenario, the communicating agents were rewarded for being able to identify scene objects accurately, and, therefore, they developed policies that took certain actions just to improve the agent’s vision of the scene.

3. The RL Environment

In this section, the reinforcement learning environment is presented in detail, including its observation and action spaces, and the reward function. The environment itself is based on differentiable rendering, to create the scene and observations, Meta’s PyTorch3D [

34] framework is used. We chose to use PyTorch and PyTorch3D for this project mainly due to the frameworks’ versatility and ease of use, as well as the enormous ecosystem of open-source projects, tools and knowledge transfer systems available. This library enables machine learning on 3D data, extending the popular PyTorch framework [

35]. The framework provides advanced mesh storage and batching methods, essential for 3D computer vision research. Furthermore, PyTorch3D also includes several differentiable renderers, which enabled us not only to calculate a reward function, based on the number of occluded pixels, but also to compute the derivative of this reward with regard to the camera pose.

A single instance of the environment renders a scene containing three different objects, selected randomly from the ShapeNet dataset [

36]. The objects are placed in different locations along the z axis. The first object is placed in the origin, the others are displaced in the negative direction by default values of

and 2. The camera is placed 4 units away on the negative z axis. This creates a base setup that guarantees occlusion between objects in the scene.

To avoid trivial solutions, and to allow more disturbances, the latter two objects—i.e., the ones not placed in the origin—are offset from the z axis horizontally. The size of the displacement is randomly chosen from a standard normal distribution, such that the camera is always able to observe some occlusion between the objects. The horizontal offset of the two objects are equal in magnitude but are opposite to each other—i.e., if the object closer to the camera is offset to the right, then the second object is moved to the left.

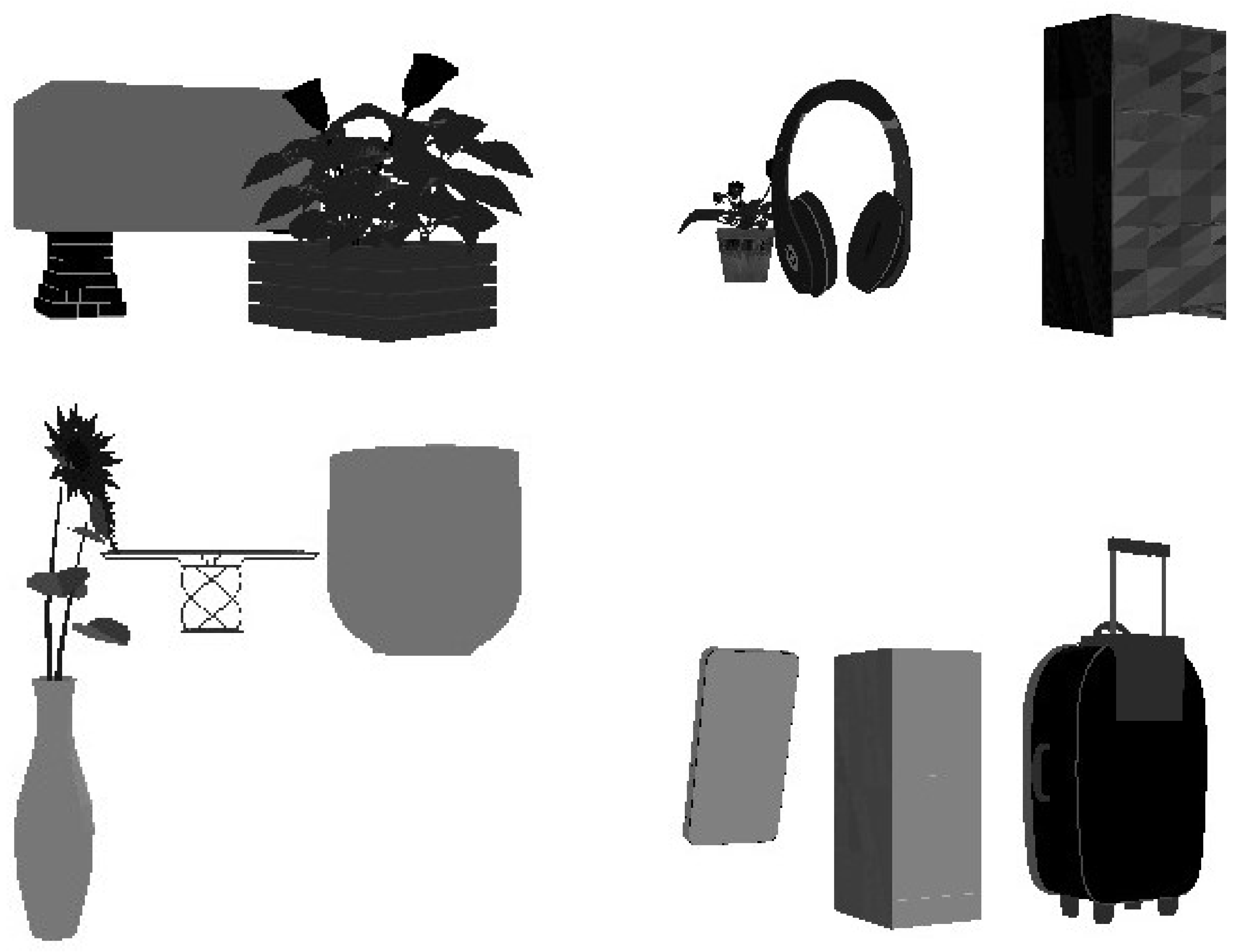

Figure 1 shows a few typical environment setups.

3.1. Action Space

As the goal of the environment is to train the agent to take a more advantageous position that ensures better visibility of the objects, the actions themselves are movements of the virtual camera used for rendering. Now, it is worth noting that if we intend to minimize the amount of occlusion measured in the image (using pixels or any other unit), then several trivial solutions present themselves. The agent could simply turn the camera away from the scene, thus ensuring no occlusion is seen by not seeing anything at all. Alternatively, the agent could just move the camera infinitely far away from the scene, thus reducing the visible objects to a size smaller than a single pixel, again minimizing the occlusion of the scene.

Thankfully, both trivial solutions are easily avoided by restricting the camera position to a spherical surface with a fixed radius, while ensuring that the camera is always in a look-at orientation. For this reason, the two actions the agent can take are to move the camera along this sphere using the azimuth (

) and elevation (

) angles. Since PyTorch3D expects the camera position to be given in the Cartesian coordinate system, the three parameters (

, and the radius

) have to be converted, and the look-at orientation has to be computed using PyTorch3D’s built-in functions. The spherical-to-Cartesian conversion is calculated as follows:

The initial extrinsic camera parameters in the spherical coordinate system are given by the equations below:

3.2. Observation Space

As stated previously, we intended to create an environment that allows end-to-end learning from images. However, having depth information would presumably improve the agent’s performance, so rendering RGB-D images of the scene would be desirable. Due to the wide availability of commercial RGB-D cameras, this environment is not unrealistic. Depth images can be simply acquired from the Z buffers of the renderer. It is worth noting, however, that depth images generated this way are somewhat “too perfect”, given that real depth cameras usually have gaps at object boundaries due to the parallax effect.

Once the new camera position is calculated, it is turned into Cartesian coordinates, and the look-at orientation is computed. Then, the camera pose is converted into a standard homogeneous format , and, then, a Hard Flat Shader is used to render the RGB image. By default, the RGB and depth images are rendered at a resolution, while other render settings are set to PyTorch3D’s defaults.

During our experiments, we noticed that all of PyTorch3D’s renderers tended to incorrectly calculate the maximum amount of triangle faces to use per bin, resulting in a final render with parts of certain more detailed objects missing. We solved this issue by automatically calculating a much higher limit for this variable, based on the number of triangles in the scene. However, this resulted in considerably higher render times in scenes that contained more complex geometries.

3.3. Reward Function

The final element of the environment is the reward function that is used for training the reinforcement learning agent. Here, our goal was to ensure that the agent found a pose that avoided all occlusion in as few steps as possible. To ensure this, in every step when the occlusion is not resolved, we give a small penalty () to the agent. Once the occlusion has been successfully avoided, the agent receives a reward of 5.

However, we felt that giving only sparse rewards at the end of the optimization would result in subpar performance compared to using a dense reward system. By giving direct feedback to the RL agent after every step, the training procedure can establish the relationship between actions and rewards much more quickly. To do this, we compare the amount of occlusion before and after the action is taken, and add this to the reward.

However, this addition presents a new problem. The amount of occlusion is measured in pixels and has a variance that is several orders of magnitude higher than the fixed rewards introduced earlier. Moreover, the amount of occlusion varies significantly due to the size of the objects in the environment, which is out of the agent’s control. To solve this problem, we normalize the size of this component with the size of the objects in the given scene. For our test. we investigated the following two normalization schemes:

Union-based normalization: the occlusion-based reward is divided by the total area of the object silhouettes in the first frame.

Intersection-based normalization: the occlusion-based reward is divided by the area of the occlusion in the first frame.

To summarize, the reward function is defined as:

where

is the amount of occluded pixels in timestep

t, and

P is the additional penalty term.

4. Optimization

As described above, one of the main objectives of our work was the creation and training of an agent capable of avoiding occlusions and successfully observing all objects in the simulated environment. Since this was a rather complex task to be accomplished by an autonomous agent purely relying on reinforcement learning, we developed a model-based learning method, which enables the agent to predict the alterations of the occlusion map following an action, i.e., a camera movement. This method allows the agent to predict the consequences of its actions and plan ahead—thus, achieving much faster convergence.

We found, however, that a superb solution was also achievable. The differential rendering capability provided by PyTorch3D enables the differentiation of the occlusion map with regard to the camera movement. This attribute enables the direct prediction of the locally optimal camera movement using the RGB-D images. Nevertheless, we needed to investigate whether such a loss/reward function was viable.

To this end, we defined an optimization method that uses Gradient Descent (GD) to achieve zero occlusion—that is, we move the camera to a pose where the amount of occluded pixels becomes 0. As mentioned above, we wanted to prevent degenerate solutions, and, therefore, the camera positions were restricted to a spherical surface. The surface was defined by its non-variable radius. Hence, the only parameters that the agent was able to change during optimization were the elevation () and azimuth () angles. The solutions yielded by this optimization could still be optimal, as moving radially, i.e., directly towards, or outwards from, the center of the sphere does not yield the complete disappearance of occlusions.

The loss function was defined as follows:

The differential rendering engine accessible via the PyTorch3D-based environment enabled the rendering of the silhouettes for all the individual objects in the scene.

The full occlusion map was created by an element-wise multiplication of these images.

The sum of the pixels of the full occlusion map was calculated yielding the fully differentiable loss function.

Alternatively, the loss can be expressed in equation form as:

where

N is the number of objects,

is the silhouette of the

Ith object, and ⨀ is element-wise multiplication.

Note that, in the cases where one of the objects fully contained the other object, i.e., the latter was not visible to the agent, the derivative of the loss function evaluated to 0 and no additional information was earned. To forego this problem, we introduced a number of large-kernel smoothing operations on the individual silhouette images. This caused them to be translated into a form where the pixel values corresponded to their distances from the original object silhouettes. Using this modification, we were able to ensure smooth gradients. However, this problem was solved in PyTorch3D, since it already produces grayscale silhouette renders.

The extrinsic camera parameters, i.e., elevation and azimuth angles, were then optimized using the occlusion map and a learning rate of . During optimization a common error scenario occurred when the output gradient of the render engine was NaN. We analyzed the occurrence of such errors, but found no evident pattern and concluded that the phenomenon resulted from within the PyTorch3D implementation of the engine. In order to avoid further complications resulting from NaN gradients, we introduced a workaround, which was that, whenever this occurred, the optimization was rerun after randomly perturbing the camera position.

5. Architecture and Training

In this section, the architecture and training procedure are presented. The goal of our neural network was to implement a strategy for occlusion avoidance, which would reduce the problem of occluded object detection to an occlusion-free scenario. This step is necessary, since the direct gradient-based optimization method is only utilizable in the original, synthetic environment. However, a CNN-based method does not require environment-calculated gradients, and only requires the input images for its functionality. This allows the below-described approach to be usable in real-world environments, given the right configuration. The Convolutional Neural Network (CNN) presented in this section was also trained to use the RGB-D images for predicting the occlusion map and gradient directions, as we predicted that these would aid the network in learning useful representations of the input.

5.1. Model Architecture

Since our goal with the CNN-based method was to predict the outputs for the images rendered in the environment, we designed a multi-head structure that, once properly trained using multi-task learning, would be capable of producing the occlusion masks, as well as the prediction for the environment gradients showing the optimal camera movement derived via differential rendering. The three parts of the architecture are:

a shared convolutional encoder,

a convolutional decoder, responsible for predicting the occlusion maps, and

a dense head predicting the gradient directions.

Our architecture is designed as a U-Net-like [

37] structure, containing both an encoder and decoder subsystem Both the encoder and decoder subsystems are designed as U-Net-like structures. The resulting architecture makes use of 5 downscaling and upscaling blocks, each with 2 consecutive,

convolutional layers, followed by ReLU and BatchNorm. For downscaling and upscaling, strided and transposed convolution blocks were used, respectively. The initial channel count of 8 was doubled on every downscaling step, and skip connections between the encoder and decoder networks were utilized at every level. We designed and trained numerous different variations of these parts. We analyzed the impact of having residual connections in our convolutional blocks between the two parts in the R-UNet variant, and then introduced depth-wise separable convolution blocks in the SR-UNet version. We also tested the effect of atrous convolutions using the SAR-UNet variant. The proposed architecture is visualized in

Figure 2.

5.2. Pre-Training

We created a labeled dataset using the above-defined environment for performing pre-training. For the database, all the ShapeNet-provided object categories were used. A total of 20 images for 6500 random scenes were generated, which gave us a total of 130,000 256 × 256 RGB-D images with the corresponding gradient directions and occlusion masks. Moreover, the camera positions for each image were saved. The algorithm used for dataset generation is shown in Algorithm 1.

| Algorithm 1 Dataset generation |

Input: # of scenes: # of images per scene: Output: Dataset: D - 1:

forj in range() do - 2:

Initialize Camera - 3:

for Object in range(3) do - 4:

Initialize Object → Objects - 5:

for k in range() do - 6:

Render Camera Image → - 7:

Calculate Occlusion Mask → - 8:

Calculate Camera Gradient via SGD → - 9:

Zip Data as [, , ] → - 10:

Move Camera Position Randomly - 11:

Zip Data over k→ - 12:

Zip Data over j→D - 13:

returnD

|

Backface culling and HardFlatShader were used, respectively, for rasterizing and shading the 3D models. We then split the dataset randomly using an 80–20% ratio. We did so while keeping all 20 images resulting from the individual scenes in the same split—that is either training or validation. The resulting task was, therefore, more complex than if the dataset split jad been carried out along the individual images.

The resulting dataset provided images with occluded objects as well as occlusion maps for three objects. Moreover, it provided the environment gradient for the optimal step at each environment state, i.e., relative camera and object positions. In contrast with previously published occlusion datasets [

18,

19], this array of data at each given datapoint allowed the training of a PAL agent.

We evaluated the model following every epoch during the training time, using the validation split, and assured that the best performing models were saved. We augmented the dataset using Color Jitter and randomized horizontal flipping, and trained the model using an AdamW optimizer with Cosine Annealing Learning Rate Schedule. For the two heads of the network we used different loss functions. The occlusion mask prediction was evaluated using the Soft Dice Coefficient (SDC), whereas the gradient direction was optimized with MSE loss between the ground truth and predicted values. In practice, the sine and cosine values of the gradient direction were used for calculating the error. To summarize, the loss function used for pre-training is the following:

where

f and

g are the segmenter and gradient predictor networks parameterized by

and

, respectively.

is the input image, while

and

are the ground truth gradient direction and occlusion mask. ⨀ is an element-wise multiplication, and

and

are the relative weights of the two loss terms.

Table 1 shows the hyperparameters we used during training.

5.3. Training

The reinforcement learning agent was based on this pre-trained model, as it used the CNN as its backbone. We trained action and value heads of the RL agent using Proximal Policy Optimization [

38]. Two variations were trained: one with union-based and one with intersection-based normalization.

Table 2 shows the hyperparameters we used for training the reinforcement-learning agents.

The training was performed using the following loss function:

where

is the advantage function,

is the PPO hyperparameter,

and

are the actor and critic networks, respectively, while

is the target value function derived from the reward

R (defined in Equation (

7)).

Figure 3 shows the number of steps taken to reach the unoccluded state and the rewards received by the agents. Note that the training was successful, since both RL agents were able to learn the solution of the given problem and avoid occlusion in a relatively low amount of steps.

6. Experimental Results

In this section, the results of our experiments are presented. First, we discuss the results of pre-training, and, then, we compare the results of the fully neural approaches with the direct, environment-supplied gradient-based occlusion avoidance. Our experiments were performed using an Intel i5 8400U CPU, an NVIDIA Titan XP GPU, and 16 GB DDR4 RAM, running Ubuntu 20.24.

6.1. Occlusion and Gradient Estimation

The results of the training procedures introduced in

Section 5 are shown in

Table 3. The best loss values of the training are presented, i.e., the dice-loss (denoted with Loss) and the MSE loss of the multi-task occlusion and gradient estimator heads. In addition, the pixel-wise accuracy and the Intersection over Union (IoU) metrics are also given for the occlusion predictions. The results of the four previously introduced network variants are compared in the table. Moreover, during one of our experiments, the smooth L1 loss was used (with

) to train the gradient direction estimator head.

Based on the results shown in

Table 3, we concluded that the SAR-UNet-L1 architecture slightly outperformed the other 4 network variants on the metrics related to the occlusion mask prediction task, and strongly surpassed them on the gradient direction estimation task. We strongly suspected that this increased accuracy was caused by slowly vanishing the smooth L1 loss around zero that allowed the persistence of useful gradients around small loss values. We found that separable convolutions caused the generalization capabilities of the network to improve, while only slightly deterring the performance of the network. As a result we decided to keep these modifications as implemented for the later experiments. Although a

IoU measure is generally not considered great, we emphasize that, in this task, the agent often had to predict the occlusion mask for fully occluded objects—a completely impossible task. For this reason, we accepted the training results, since the the neural network often had little to no clue about the environment and, therefore, was met with a task incorporating increased complexity as a result of fully occluded objects.

6.2. Occlusion Avoidance

With the neural network-based agent, we performed numerous experiments using the environment described in

Section 3. For the reproducibility of the experiments, we designed two simple test scenarios in which the object categories were fixed. First, we tested capabilities of the agents using 2 identical chairs, and, then, using 2 identical tables in the environment.

Figure 4 shows the initial environments for these test cases.

6.2.1. Optimization Using Environment Gradients

The test cases were repeated numerous times, where we varied the initial azimuth (

) value between [

;

) with a step size of

. The results shown in

Figure 5 visualize the main advantage of the pre-trained method. With objects characterized by complex geometries, such as the chairs and the tables used, the environment-supplied gradient-based method took a considerably higher amount of steps to reach an unoccluded state, especially if the initial azimuth value was set to be low, i.e., between −2

and 2

. We argue that this was caused by the environment gradients moving the camera towards local minima, which were more common in cases of objects containing structural holes and beams. This phenomenon can be seen from the variance of the steps taken in

Figure 5. During the two chairs test case, when the initial azimuth value was set to 0, the direct gradient-based agent took 2377 steps to find an unoccluded state, whereas completing the task for two tables only took 163 steps. Note that, in the figure, the maximum number of steps was limited to 2000 to enhance visibility.

We found that the methods usually yielded somewhat symmetrical results for negative and positive initial

values. This symmetry can also be observed in

Figure 5. We argue that this was caused by the symmetrical structure of the used table and chair objects. The slight differences were most likely caused by the slight imperfections of the surfaces of the models, that caused the silhouette renders used for gradient calculation to become unsymmetrical. These imperfections, however, can also be expected from real-life, physical objects. Therefore, we think that this feature of the ShapeNet dataset had a positive effect on the generalization capabilities of our solution. The previously mentioned problem of the render engine causing the sporadic appearance of NaN gradients could also lead to an increased number of steps taken by the agents.

6.2.2. Comparative Analysis

To compare the newly developed reinforcement learning agent with our prior solutions, we performed experiments using five different agents:

The agents were only able to perform normalized length () steps. For these experiments, we set up the environment to contain three random objects, but the initial azimuth values were now always set to . We ran each setting of the environment with the five aforementioned agents for 50 randomly initialized environments.

The outcomes of the experiments are displayed in

Table 4. From the shown results, it can be observed that the reinforcement learning agents were, in fact, capable of arriving at an unoccluded state in a reliably lower amount of steps than all the other agents used. The superiority of the robustness of this latter method could be observed in both the mean value and the variance of the number of steps taken by the agents. The intersection normalized RL agent outperformed all the other methods in our test scenario; however, the reasons contributing to this result are subject to further tests and examination.

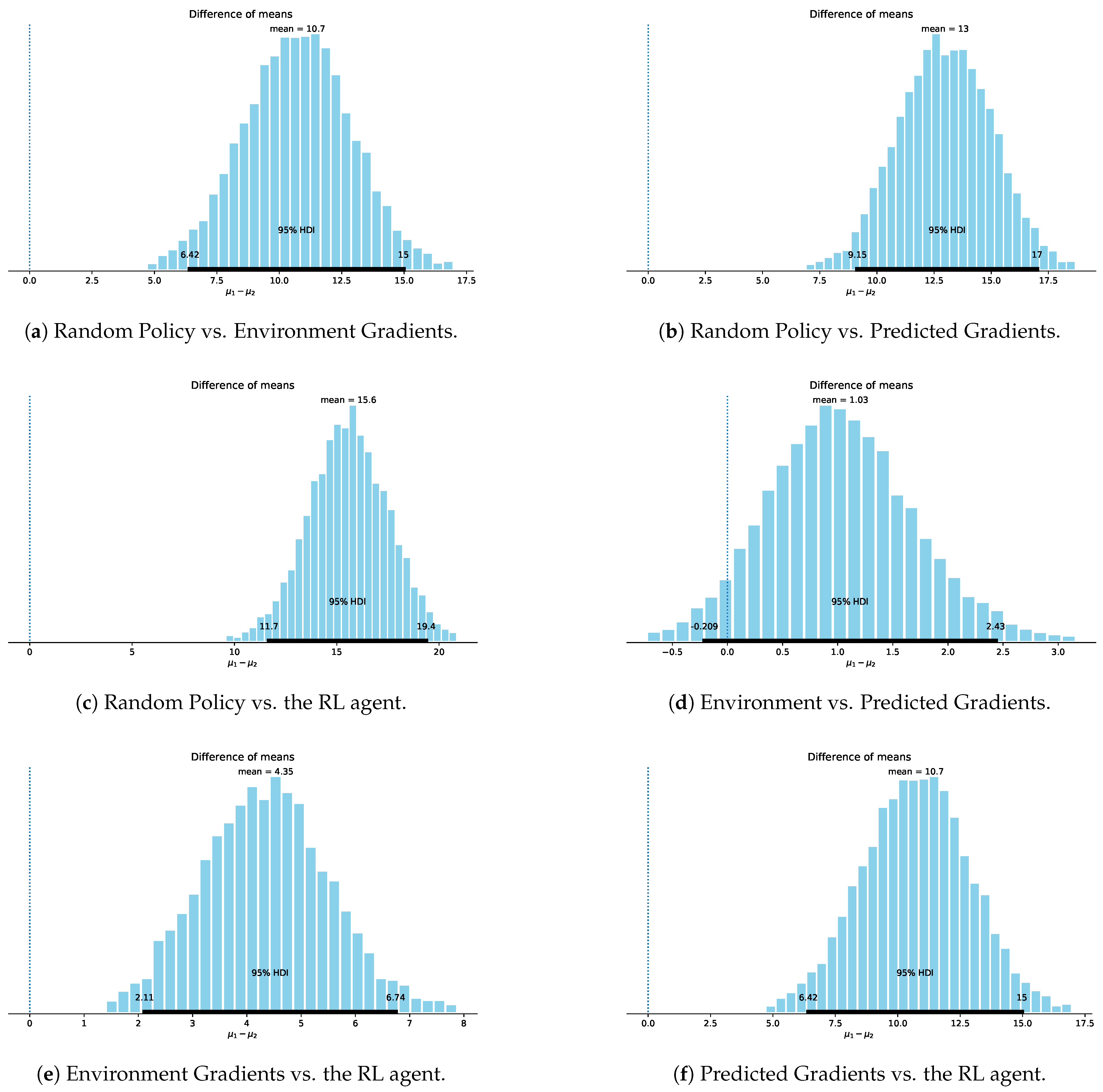

We also performed a paired sample Bayesian

t-test [

39] using the BEST library to provide statistical proof of our method’s efficacy. The test used 50 paired samples performed with the aforementioned four methods. For simplicity’s sake, we only used the Intersection-normalized version of the RL agent, as the two versions were very close in performance. The summarized results of the tests are presented in

Table 5, while the full results are shown in

Figure A1 in the

Appendix A.

The results demonstrated that all three proposed methods credibly surpassed the random policy with significant effect sizes. In practice, this meant that our methods reached the unoccluded state in significantly fewer steps. It was also apparent that the two neural network-based methods achieved higher results than the method using the environment gradients. However, the predicted-gradient method only surpassed the former with a confidence of , barely falling short of the commonly accepted threshold. The RL agent-based solution, however, credibly outperformed all other solutions, albeit the average difference between the two best performing methods was less than a single step. Still, this difference resulted in a confidence of , which, we argue, was possible due to the significantly lower variance of the RL agent’s performance.

7. Discussion

In this paper, a novel method was presented that successfully implemented occlusion avoidance as a perception–action–loop. The method was trained using a combination of self-supervised and reinforcement learning techniques, and was able to efficiently optimize the camera position in an OpenAI Gym-compatible virtual environment. The environment was created using PyTorch3D’s differentiable renderer and provided three random occluded objects from the ShapeNet dataset. In total, the following four agents were presented: the first used the gradients calculated analytically using differentiable rendering; the second employed a random policy; the third used self-supervised learning to predict the optimal direction of movement; the fourth employed reinforcement learning to avoid occlusion in a minimal number of steps. The first and second agents were used as baselines for comparison. The third and fourth methods used the RGB-D images as input.

Our experiments demonstrated that all agents performed well in the environment; however, the agents that employed learning managed to significantly outperform all others. The agent using reinforcement learning proved to be the most reliable, as demonstrated by its credibly superior performance according to the Bayesian t-test performed during our investigations. The RL-based agent also had the smallest variance in the number of steps taken to avoid occlusion, further cementing it as the highest performing solution.

Naturally, the methods come with some limitations, namely that they have been trained and tested in synthetic environments, where both the color and depth images used as inputs were devoid of any errors. This likely meant that, in order to use this agent in a real environment, sim-to-real transfer would have to be done. Furthermore, the background used in the environment would also need to be updated to include a more realistic background, with walls, floor and ceiling, as the existence of these in a real setting may confuse an agent trained on fully synthetic data. Our datasets and the code used have been made available at

https://github.com/MILAB-IIT-CV/OcclusionEnv (accessed on 27 January 2023).