1. Introduction

Numerical computations based on structured grids play an important role in scientific research and industrial applications. The commonly used finite element, finite difference, and finite volume methods are all computed on grids. Sparse matrix-vector multiplication (SpMV) and Gauss–Seidel iteration are important kernel functions [1] that have a significant impact on performance in practical applications. Both functions are memory-intensive and require indirect memory access through an index.

All Krylov subspace methods call SpMV, and Gauss–Seidel iterations are commonly used as smoothing operators and as the bottom solver in multigrid solvers. The original Gauss–Seidel iteration has very limited parallelism because of its strict data dependencies. These dependencies can be broken, and fine-grained parallelism exposed, by multicolor reordering [2]. Fine-grained parallel Gauss–Seidel algorithms are usually closely tied to the processor architecture. A two-level blocked Gauss–Seidel algorithm was used on the SW26010-Pro processor [2]. A Gauss–Seidel algorithm with 8-color block reordering was applied to the SW26010 [3]. A red–black reordered Gauss–Seidel algorithm was designed for the CPU–MIC architecture of the Tianhe-2 supercomputer [4]. On the K supercomputer, which has a homogeneous architecture, the performance of the Gauss–Seidel algorithm with 8-color point reordering and with 4-color row reordering was compared [5].

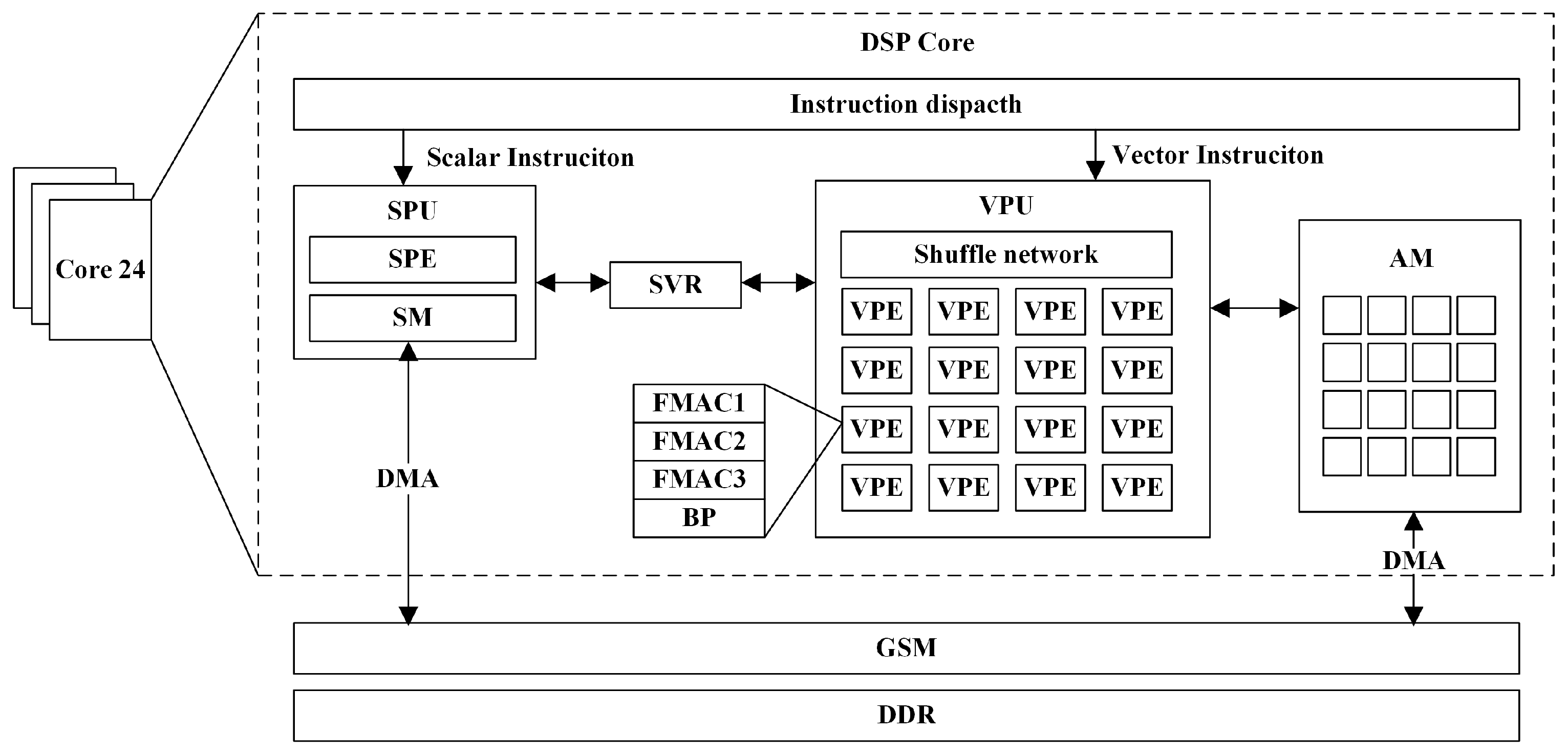

Currently, the computing performance of high-performance supercomputers has reached the exascale (E-level). Power consumption and heat dissipation are important constraints on supercomputers. The general-purpose digital signal processor (GPDSP) is an important class of embedded processor. It offers ultra-low power consumption owing to its very long instruction words and on-chip temporary memory [6]. GPDSPs that have been introduced for high-performance computing include TI’s C66X series [7,8] and Phytium’s Matrix series [9,10,11,12,13].

The Matrix-DSP is a GPDSP developed by Phytium that can be used either as an accelerator alongside a CPU or as a standalone processor. It has a complex architecture with multilevel memory structures and multilevel parallel components, which support instruction-level parallelism, vectorized parallelism, and multicore parallelism. It is difficult to fully exploit the performance of the Matrix-DSP by simply porting existing algorithms designed for single-core processors [12]. Zhao et al. mapped the Winograd algorithm for accelerating convolutional neural networks onto the Matrix-DSP [14]. Z. Liu et al. designed a multicore vectorized GEMM for the Matrix-DSP [12]. Yang et al. evaluated the efficiency of a DCNN on the Matrix-DSP [15]. Because the Matrix-DSP is a new processor, memory-intensive programs for it are still underdeveloped, and no published work on sparse matrix computation for the Matrix-DSP is available yet.

In this paper, we designed multi-core vector algorithms based on structured grid SpMV and Gauss–Seidel iterations for the Matrix-DSP and evaluated our algorithms on the Matrix-DSP platform. The main contributions are as follows:

(1) We improved the data locality and indirect memory access speed by blocking, using a multicolor reordering method to develop the fine-grained parallelism of the Gauss–Seidel algorithm, and dividing the data finely according to the memory structure of the Matrix-DSP.

(2) In terms of the data transfer, we used a double-buffered DMA scheme, which overlaps the computation and transfer time. We also implemented general mixed-precision algorithms, which reduced the memory access.

(3) We tested various grid cases on the Matrix-DSP. The experimental results show that our optimized SpMV and Gauss–Seidel algorithms make effective use of the Matrix-DSP bandwidth, reaching 72% and 81% of the theoretical bandwidth, respectively. Compared with the unoptimized methods, the SpMV and Gauss–Seidel iterations achieved speedups of 41× and 47×, respectively. Our mixed-precision work further improved the performance by 1.60× and 1.45×, respectively.

The rest of the paper is organized as follows: Section 2 introduces the background. In Section 3, the Matrix-DSP architecture is described in detail. Section 4 and Section 5 describe the algorithm design and the performance test results, respectively. Finally, a conclusion is presented in Section 6.

4. SpMV and Gauss–Seidel on Matrix-DSP

In this section, we analyze the Matrix-DSP architecture and optimize the structured grid-based SpMV and Gauss–Seidel algorithms with several methods.

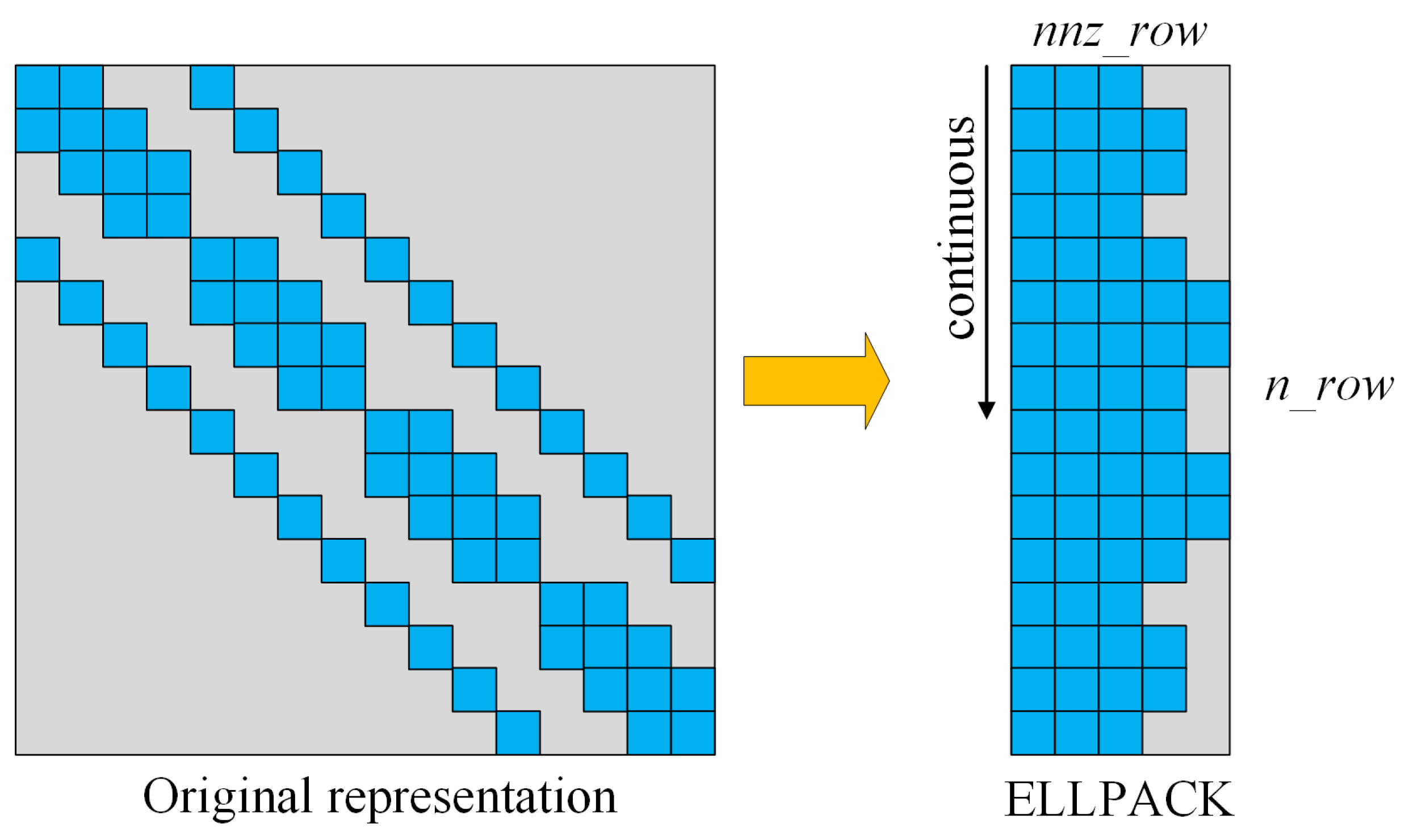

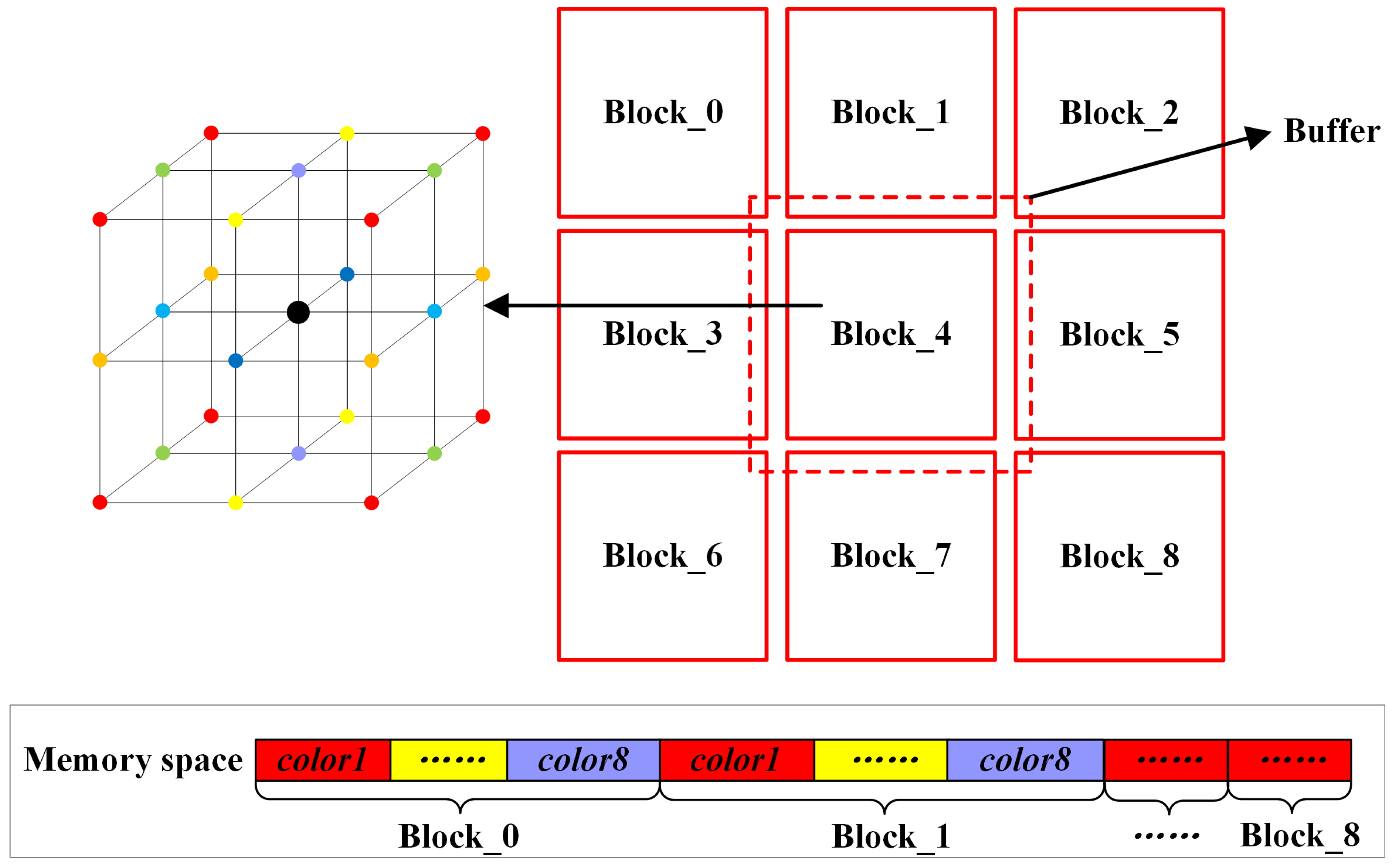

4.1. Blocking Method

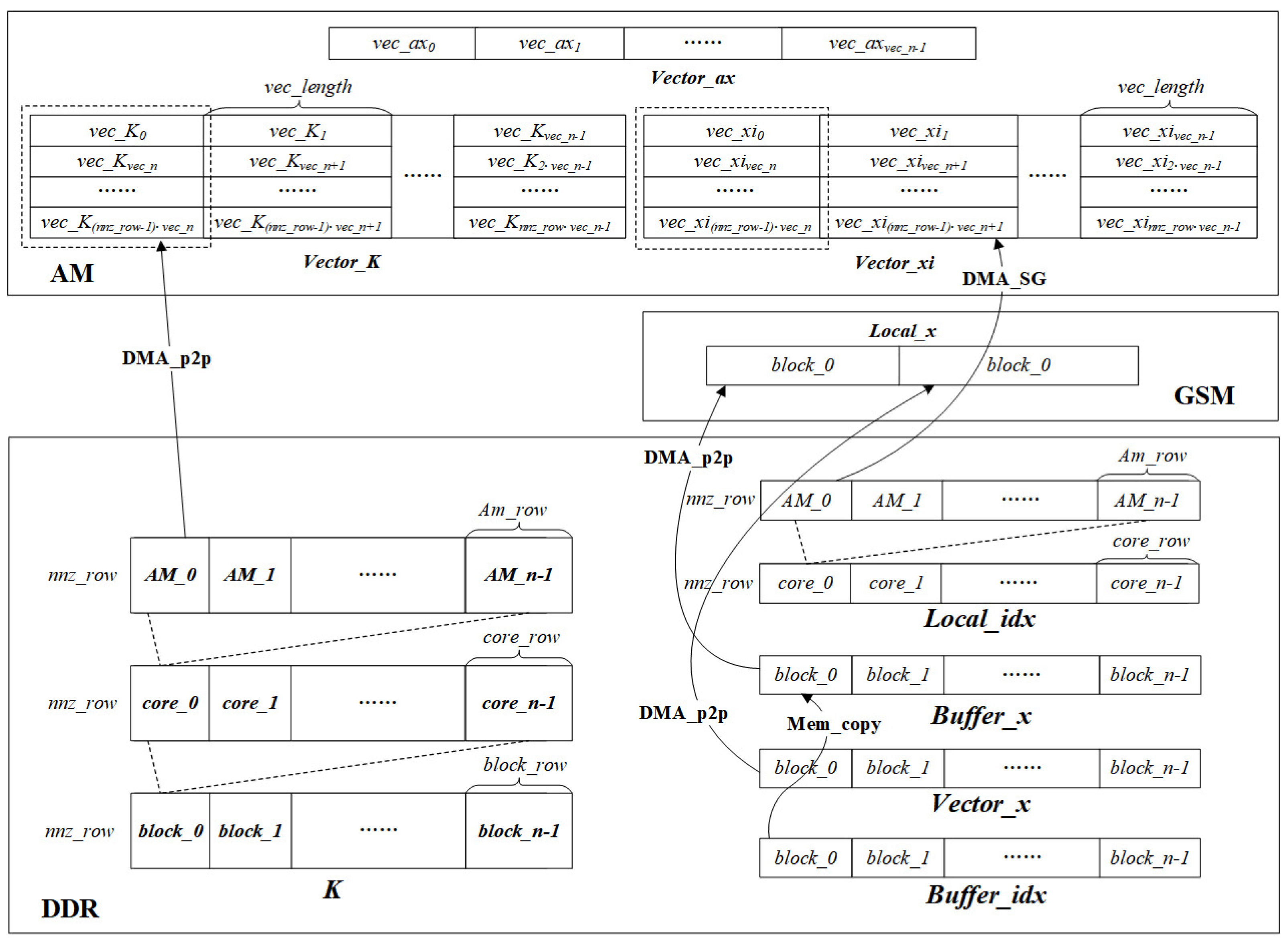

Both the SpMV and Gauss–Seidel algorithms repeatedly address the vector through an index, and the number of repetitions depends on the discrete format. The speed of this indirect addressing strongly affects the computational performance; thus, we designed a blocking method to increase data locality. As shown on the right of Figure 5, the overall grid is divided into grid blocks of equal size, and the vector entries addressed by any block lie within the block and its buffer layer.

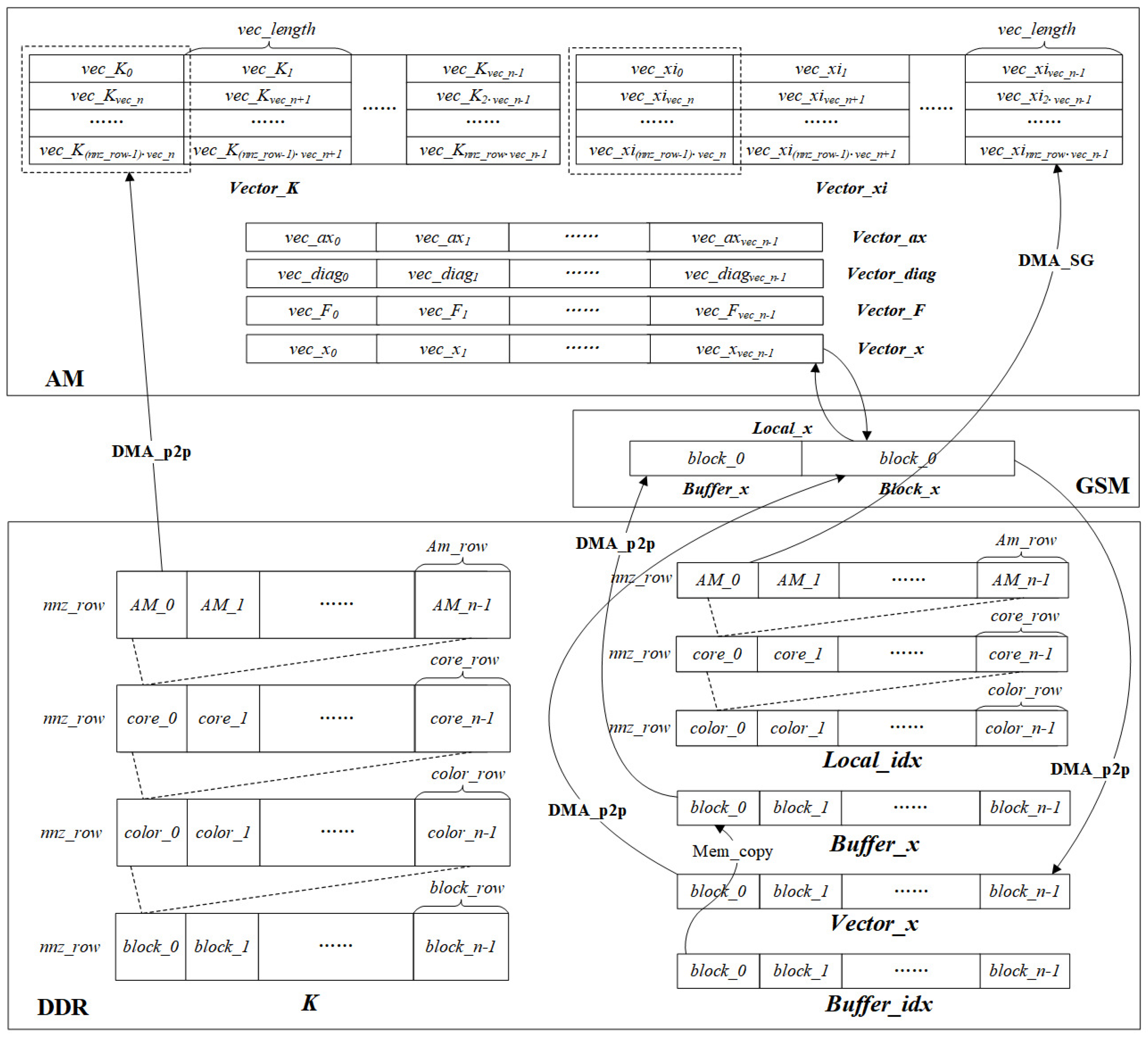

Figure 6 and Figure 7 describe the data division and the data transfer direction for the two functions, respectively. As shown in the lower right of Figure 6 and Figure 7, the local vector Local_x of block_0, which needs to be addressed repeatedly, is first placed in the GSM, which provides a higher bandwidth than the external memory. The Local_x of block_0 consists of the Vector_x of block_0 and the Buffer_x of block_0, where the latter is indexed by the Buffer_idx of block_0. The memory size of the Local_x cannot exceed the GSM memory, as shown in Equation (2).

Theoretically, the higher the GSM memory usage, the higher the performance. In addition, the blocking algorithm gives every block the same local index Local_idx, so there is no need to store the global column index array idx.
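The shared local index can be illustrated with a minimal Python sketch. It assumes one DOF per node, a 27-point format, and a one-point buffer layer around each block; the function names `local_stencil_index` and `blocked_spmv` are hypothetical and are not taken from the Matrix-DSP implementation.

```python
from itertools import product

def local_stencil_index(bx, by, bz):
    """Local column indices for every point of a bx*by*bz block.

    The block sits inside a (bx+2)*(by+2)*(bz+2) local array that includes a
    one-point buffer layer, so all 27 neighbours of a point are local.
    """
    lx, ly = bx + 2, by + 2                      # padded extents, x fastest
    offsets = list(product((-1, 0, 1), repeat=3))
    index = []
    for k, j, i in product(range(1, bz + 1), range(1, by + 1), range(1, bx + 1)):
        index.append([(k + dk) * ly * lx + (j + dj) * lx + (i + di)
                      for dk, dj, di in offsets])
    return index

def blocked_spmv(coeff, local_x, local_idx):
    """y[r] = sum over n of coeff[r][n] * local_x[local_idx[r][n]] for one block."""
    return [sum(c * local_x[col] for c, col in zip(row, cols))
            for row, cols in zip(coeff, local_idx)]

# The same index table serves every 2x2x2 block, whatever its position.
local_idx = local_stencil_index(2, 2, 2)
coeff = [[1.0] * 27 for _ in local_idx]          # hypothetical coefficients
local_x = [1.0] * (4 * 4 * 4)                    # block values plus buffer layer
y = blocked_spmv(coeff, local_x, local_idx)
```

Because `local_idx` depends only on the block shape, it can be built once and reused, which is the property that removes the need for a global column index array.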

4.2. Multicolor Reordering Method

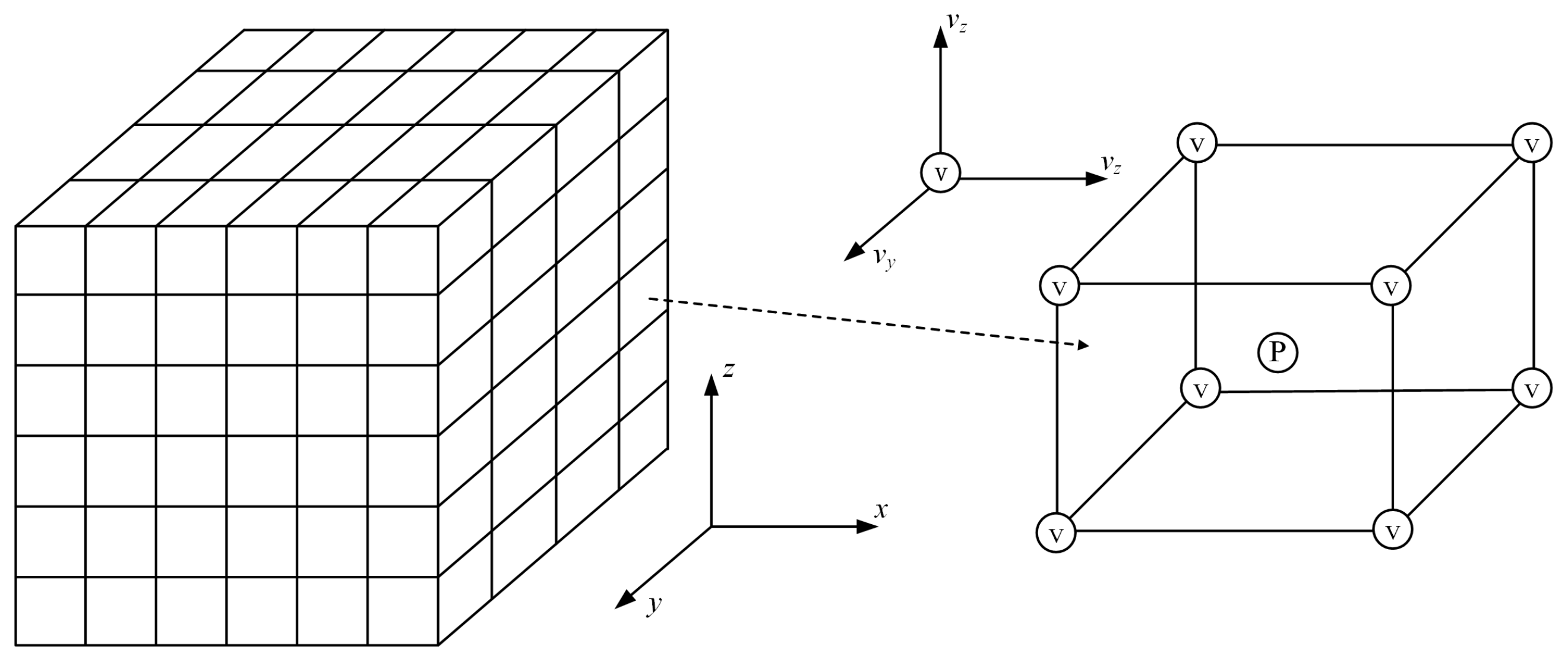

In a structured grid, a matrix row corresponds to a DOF on a node or an edge, and the data dependency between matrix rows in the Gauss–Seidel algorithm corresponds to the neighboring relationship between DOFs. On the blocked grid, we applied multicolor reordering, which is commonly used to expose fine-grained parallelism in the Gauss–Seidel algorithm.

An example of multicolor reordering based on a 27-point discrete format is given on the left of Figure 5 [5]. The grid points in the 3D space are divided into eight colors, and every node adjacent to a given node has a different color from that node. After reordering, the nodes of color1 are numbered first, then the nodes of color2, and so on until color8. In this way, there is no data dependency between points of the same color during calculation, and they can be calculated in parallel, which transforms the dependency between nodes into a dependency between colors. At the same time, to keep memory accesses contiguous, the matrix rows corresponding to points of the same color are stored contiguously, as shown in Figure 5.

The overall calculation order follows the data arrangement order shown in Figure 5. First, color1 to color8 are calculated in the first block; then, color1 to color8 are calculated in the next block, and so on until all the blocks are calculated.
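As an illustration of the reordering, the following Python sketch colors a small grid by the parity of its three coordinates and checks that no two 27-point neighbours share a color. Parity coloring is an assumption made here for illustration; the text does not state how the eight colors are assigned, and the function names are hypothetical.

```python
from itertools import product

def color_of(i, j, k):
    """Colour 0..7 from the parity of the three grid coordinates."""
    return (i % 2) + 2 * (j % 2) + 4 * (k % 2)

def multicolor_order(nx, ny, nz):
    """Grid points sorted so that the points of each colour are contiguous."""
    points = list(product(range(nx), range(ny), range(nz)))
    return sorted(points, key=lambda p: color_of(*p))  # stable sort

def same_color_neighbors(nx, ny, nz):
    """Count 27-point neighbour pairs that share a colour."""
    clashes = 0
    for i, j, k in product(range(nx), range(ny), range(nz)):
        for di, dj, dk in product((-1, 0, 1), repeat=3):
            ni, nj, nk = i + di, j + dj, k + dk
            inside = 0 <= ni < nx and 0 <= nj < ny and 0 <= nk < nz
            if (di, dj, dk) != (0, 0, 0) and inside:
                clashes += color_of(i, j, k) == color_of(ni, nj, nk)
    return clashes

order = multicolor_order(4, 4, 4)
clashes = same_color_neighbors(4, 4, 4)
```

Any neighbour differs by one in at least one coordinate, which flips that coordinate's parity, so points of the same color never depend on each other and can be updated together.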

4.3. Data Division Method

The SpMV of a block and the Gauss–Seidel update of one color within a block are completely data independent and therefore suitable for data parallelism. Because every matrix row has the same number of nonzero elements, there is no load imbalance, and the matrix is partitioned by a one-dimensional static method. As shown at the bottom of Figure 6 and Figure 7, the matrix K and Local_idx are distributed equally among the DSP cores that are used. In the SpMV, the number of matrix rows assigned to one DSP core is the number of matrix rows in one block divided by the number of DSP cores used; in the Gauss–Seidel, it is the number of matrix rows of one color in one block divided by the number of DSP cores used. Specifically, the first such group of consecutive row IDs is assigned to the first DSP core, the next group to the second DSP core, and so on.

However, the AM space has a capacity of only 768 KB, so it may not be possible to process all the matrix rows assigned to a DSP core at once, and these rows need to be divided further. We define the AM row count as the maximum number of matrix rows that the AM space can process at one time, and the AM loop count as the number of loops needed, which is the number of rows assigned to the core divided by the AM row count. Specifically, in the SpMV, the AM space needs to store the product vector Vector_ax, which has one entry per row, together with the coefficient matrix vector Vector_K and the index addressing vector Vector_xi, which each have one entry per nonzero element of those rows. The Gauss–Seidel additionally needs to store the iteration vector Vector_x, the right-hand term vector Vector_F, and the diagonal element vector Vector_diag, each with one entry per row. Therefore, in the SpMV the total size of its three vectors must not exceed 768 KB, and in the Gauss–Seidel the total size of all its vectors must not exceed 768 KB. Theoretically, the higher the utilization of the AM memory, the lower the overhead of DMA transfers, and the higher the utilization of the bandwidth.

In the AM space, data are stored as vectors. The SIMD vector length is the number of elements that one SIMD instruction can process, and the number of vectors in one AM loop is the number of matrix rows processed in that loop divided by the SIMD vector length. Therefore, the number of rows per AM loop must also be a multiple of the SIMD vector length.
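The capacity and alignment constraints can be sketched as a small sizing routine in Python. Everything numeric except the 768 KB AM capacity is an assumption for illustration: 81 nonzeros per row (27 points with three DOFs each), 8-byte values, a SIMD length of 16, and the helper name `am_partition` are all hypothetical.

```python
def am_partition(rows_per_core, nnz_per_row, simd_len, per_row_vectors,
                 am_bytes=768 * 1024, value_bytes=8, copies=1):
    """Split the rows of one core into AM-sized loops.

    per_row_vectors: number of AM vectors holding one entry per row
    (assumed 1 for SpMV and 4 for Gauss-Seidel in this sketch).
    Vector_K and Vector_xi are assumed to hold nnz_per_row entries per row.
    copies: 2 if every vector is double buffered, else 1.
    """
    bytes_per_row = copies * (2 * nnz_per_row + per_row_vectors) * value_bytes
    am_rows = am_bytes // bytes_per_row       # rows that fit in the AM
    am_rows -= am_rows % simd_len             # keep a multiple of the SIMD length
    if am_rows == 0:
        raise ValueError("AM is too small for one SIMD group of rows")
    loops = -(-rows_per_core // am_rows)      # ceiling division
    return am_rows, loops

spmv_rows, spmv_loops = am_partition(10000, 81, 16, per_row_vectors=1)
gs_rows, gs_loops = am_partition(10000, 81, 16, per_row_vectors=4, copies=2)
```

With these assumed values, a single-buffered SpMV would process 592 rows per AM loop and need 17 loops for 10,000 rows; doubling the buffers roughly halves the rows per loop.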

As shown in Figure 6 and Figure 7, in each AM loop, the Vector_K for the rows of that loop is transferred from the DDR to the AM through the DMA_p2p, and the corresponding Vector_xi is transferred from the GSM to the AM through the DMA_SG. For the Gauss–Seidel, it is additionally necessary to transfer the Vector_diag, the Vector_F, and the Vector_x for those rows to the AM. After the data transfer is complete, the SpMV or Gauss–Seidel computation is performed. Finally, the Vector_ax and Vector_x are returned to the DDR and GSM, respectively.

4.4. DMA Double Buffering Method

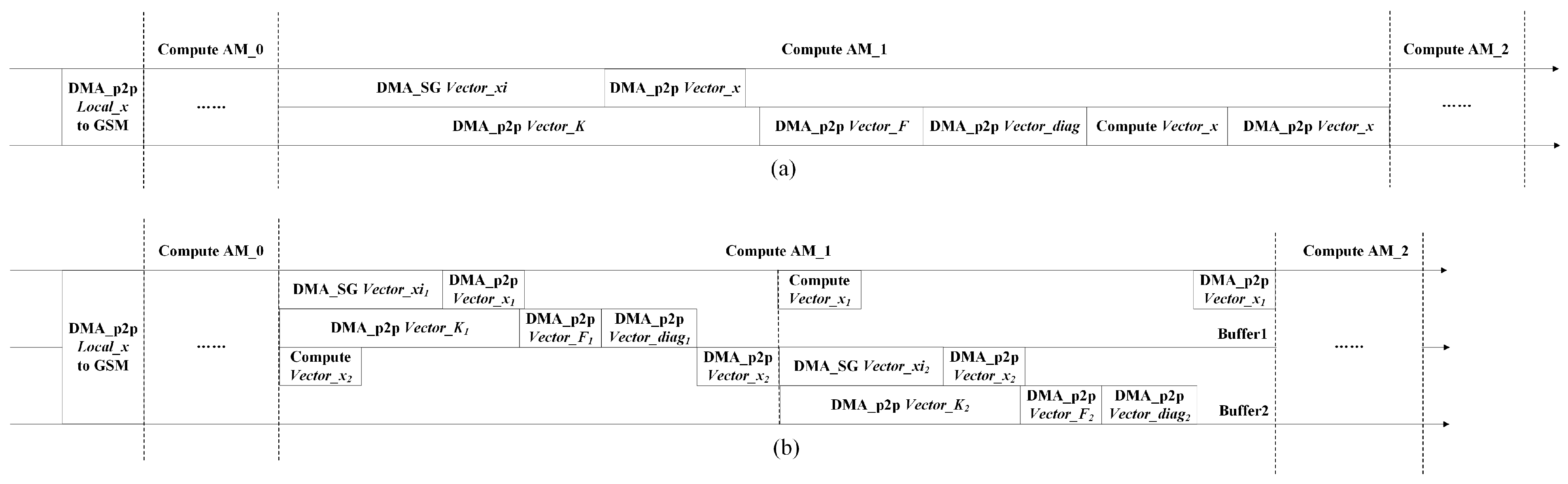

The Matrix-DSP accelerator supports simultaneous data transfer and computation, so we designed a DMA double-buffering method in the AM space to overlap computation with transfer. Figure 8 and Figure 9a,b describe the flow of the conventional memory access calculation and the flow of the double-buffered memory access calculation, respectively. We created two buffers for the Vector_K, Vector_xi, and Vector_ax in the SpMV and for the Vector_K, Vector_xi, Vector_diag, Vector_F, and Vector_x in the Gauss–Seidel. As a result, all of the computation time except that of the last loop can be hidden behind the transfer time. In both algorithms, however, the DMA memory access time accounts for a large proportion of the total and the calculation time for a small proportion, so the performance improvement is limited.
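A simple timing model, written here in Python, shows why the gain is limited when transfers dominate. It is a simplified sketch that assumes a fixed transfer time and a fixed compute time per AM loop and ignores the write-back transfer; the function names and the time units are hypothetical.

```python
def serial_time(n_loops, t_transfer, t_compute):
    """Each loop first transfers its data and then computes on it."""
    return n_loops * (t_transfer + t_compute)

def double_buffered_time(n_loops, t_transfer, t_compute):
    """The transfer for loop i+1 overlaps the computation of loop i.

    Only the first transfer and the last computation are exposed; every
    other step costs the longer of the two overlapped activities.
    """
    return t_transfer + (n_loops - 1) * max(t_transfer, t_compute) + t_compute

# Transfer-dominated case: computation is one tenth of each loop.
gain_memory_bound = serial_time(10, 9, 1) / double_buffered_time(10, 9, 1)
# Balanced case: transfer and computation take equally long.
gain_balanced = serial_time(10, 5, 5) / double_buffered_time(10, 5, 5)
```

In this model the transfer-dominated case improves by roughly 10%, whereas the balanced case approaches a factor of two, which is consistent with double buffering helping more when computation takes a larger share of each loop.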

4.5. Multicore Vectorization Algorithm

Combining the above optimization methods yields the final multicore vectorized algorithms shown in Algorithms 3 and 4. Both algorithms compute the blocks sequentially (Line 1 in Algorithms 3 and 4), and the first steps generate the Local_x and transfer it to the GSM (Lines 2–4 in Algorithms 3 and 4). In the SpMV, each DSP core computes its subblocks in parallel with the other cores, and double buffering is performed in the AM loop (Lines 6–17 in Algorithm 3). The Vector_K and Vector_xi are transferred into the AM (Lines 8–9 in Algorithm 3) for vector computation (Lines 10–15 in Algorithm 3). Finally, the computed Vector_ax is returned to the DDR (Line 16 in Algorithm 3). In the Gauss–Seidel, all DSP cores compute one color in parallel (Lines 5–24 in Algorithm 4). In each AM loop with double buffering, the Vector_x, Vector_K, Vector_xi, Vector_diag, and Vector_F are transferred into the AM (Lines 9–13 in Algorithm 4); then, the vector iteration is performed (Lines 14–21 in Algorithm 4), and, finally, the updated Vector_x is transferred back to the GSM (Line 22 in Algorithm 4). When all colors have been completed, the Vector_x in the GSM is transferred back to the DDR (Line 25 in Algorithm 4).

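| Algorithm 3 Optimized SpMV on Matrix-DSP |
| --- |
| 1: for each block do |
| 2: MEM_copy: index by Buffer_idx, get Buffer_x |
| 3: DMA_p2p: transfer Vector_x from DDR to GSM |
| 4: DMA_p2p: transfer Buffer_x from DDR to GSM |
| 5: #DSP core parallel: |
| 6: for each AM loop do |
| 7: #Double buffering: |
| 8: DMA_p2p: transfer Vector_K from DDR to AM |
| 9: DMA_SG: index by Local_idx, transfer Vector_xi from GSM to AM |
| 10: for each vector in the AM loop do |
| 11: for each nonzero element of a row do |
| 12: #vectorized parallel: |
| 13: Vector_ax += Vector_K × Vector_xi |
| 14: end for |
| 15: end for |
| 16: DMA_p2p: transfer Vector_ax from AM to DDR |
| 17: end for |
| 18: end for |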

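| Algorithm 4 Optimized Gauss–Seidel on Matrix-DSP |
| --- |
| 1: for each block do |
| 2: MEM_copy: index by Buffer_idx, get Buffer_x |
| 3: DMA_p2p: transfer Vector_x from DDR to GSM |
| 4: DMA_p2p: transfer Buffer_x from DDR to GSM |
| 5: for each color do |
| 6: #DSP core parallel: |
| 7: for each AM loop do |
| 8: #Double buffering: |
| 9: DMA_p2p: transfer Vector_x from GSM to AM |
| 10: DMA_p2p: transfer Vector_K from DDR to AM |
| 11: DMA_SG: index by Local_idx, transfer Vector_xi from GSM to AM |
| 12: DMA_p2p: transfer Vector_diag from DDR to AM |
| 13: DMA_p2p: transfer Vector_F from DDR to AM |
| 14: for each vector in the AM loop do |
| 15: for each nonzero element of a row do |
| 16: #vectorized parallel: |
| 17: accumulate Vector_K × Vector_xi into a partial sum |
| 18: end for |
| 19: #vectorized parallel: |
| 20: update Vector_x from Vector_F, the partial sum, and Vector_diag |
| 21: end for |
| 22: DMA_p2p: transfer Vector_x from AM to GSM |
| 23: end for |
| 24: end for |
| 25: DMA_p2p: transfer Vector_x from GSM to DDR |
| 26: end for |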
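The color-by-color structure of the Gauss–Seidel algorithm can be checked against a small serial reference in Python. This is only a sketch of the sweep order, not of the DMA or vector code: it assumes the off-diagonal entries are stored separately from the diagonal, uses a 1D two-color (red–black) test problem rather than the 27-point format, and all names are hypothetical.

```python
def colored_gauss_seidel_sweep(rows, diag, rhs, x, color_groups):
    """One sweep; rows[r] lists the (column, value) off-diagonal entries of row r."""
    for group in color_groups:                 # colours are processed in order
        # Rows of one colour do not reference each other, so these updates
        # could be distributed over cores and SIMD lanes.
        updates = [(rhs[r] - sum(v * x[c] for c, v in rows[r])) / diag[r]
                   for r in group]
        for r, value in zip(group, updates):
            x[r] = value
    return x

# 1D Poisson test problem whose exact solution is all ones.
n = 8
rows = [[(c, -1.0) for c in (r - 1, r + 1) if 0 <= c < n] for r in range(n)]
diag = [2.0] * n
rhs = [1.0 if r in (0, n - 1) else 0.0 for r in range(n)]
color_groups = [list(range(0, n, 2)), list(range(1, n, 2))]  # even, then odd
x = [0.0] * n
for _ in range(300):
    colored_gauss_seidel_sweep(rows, diag, rhs, x, color_groups)
```

Computing all updates of a color before writing any of them back makes the independence explicit: the result is the same as a sequential Gauss–Seidel pass over that color.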

4.6. Mixed Precision Algorithm

Mixed precision is a method that can effectively improve computational speed; its main idea is to use low precision in the computation-intensive part while maintaining the final computational accuracy. We implemented a conventional mixed-precision scheme on the Matrix-DSP that stores the coefficient matrix in float type while using double type for computation. This scheme has been used in some open-source software and papers [21,22]. The specific process is as follows: First, the generated coefficient matrix is stored in the external memory in float type. Second, the matrix elements are transferred to the AM through the DMA. Third, the VPU reads the data from the AM using a high half-word read instruction. Fourth, the 32-bit float in the high half of the vector register is converted to a 64-bit double in the vector register by the high half-word precision enhancement instruction. Finally, the SpMV and Gauss–Seidel computations are performed, and the results are transferred back to the external memory in double type.
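The effect of storing coefficients as float while computing in double can be illustrated with a short Python sketch. It emulates float storage by rounding through the 4-byte IEEE 754 format with the standard `struct` module; the sample values and the helper names are hypothetical, and the sketch says nothing about the DSP instructions themselves.

```python
import struct

def to_float32(value):
    """Round a Python float (a double) to the nearest float32 value."""
    return struct.unpack("f", struct.pack("f", value))[0]

def row_product(coeffs, x):
    """Dot product of one matrix row with x, accumulated in double."""
    return sum(c * v for c, v in zip(coeffs, x))

coeffs = [0.1, -0.7, 2.3]                      # hypothetical matrix row
x = [1.5, 0.25, -3.0]
stored = [to_float32(c) for c in coeffs]       # 4 bytes each instead of 8
exact = row_product(coeffs, x)
mixed = row_product(stored, x)                 # widened back to double
rel_err = abs(mixed - exact) / abs(exact)
```

Only the stored coefficients lose precision; the products and the accumulation still run in double, so the relative error of the row result stays near the float rounding level.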

5. Experimental Evaluation

The Matrix-DSP supports two programming modes, which are the assembly mode and C mode. Assembly programming has the advantage of being manually pipelined, but the workload is high. Computation-intensive programs rely on good assembly programs to obtain high performance. The C programming model involves a relatively low workload. However, for access-intensive programs, the percentage of computation time is low and is masked by double buffering, so the C language mode can achieve a similarly high performance. Therefore, we chose the C programming mode.

We chose sparse matrices from a 27-point discrete format with three DOFs at each point for the test. The tests were performed on grids of three scales. The small-scale grid can hold the vector x in the GSM without blocking, while the other two grids are divided into blocks of equal size, for which the local vector held in the GSM occupies 3.390 MB. The remaining parameters follow from the formulas in Section 4.3.

Figure 10 depicts the results of the optimized SpMV and Gauss–Seidel. Without any optimization, the peak performance of the SpMV was only 0.128 GFLOPS, 0.128 GFLOPS, and 0.126 GFLOPS on the three grids, from small to large, and that of the Gauss–Seidel was 0.127 GFLOPS, 0.128 GFLOPS, and 0.127 GFLOPS, respectively.

After multicore acceleration, the performance of the SpMV reached 1.88 GFLOPS, 1.91 GFLOPS, and 1.88 GFLOPS, respectively, an improvement of about 14× on all three grids. For the Gauss–Seidel, the peak performance improved by 8.5× on the small-scale grid and by about 13× on the two larger grids, reaching 1.08 GFLOPS, 1.78 GFLOPS, and 1.64 GFLOPS, respectively.

Vectorization affects the SpMV and Gauss–Seidel in the same way: the fewer the cores used, the larger the percentage increase in FLOPS, and the performance plateaus when the number of cores exceeds six. This is mainly because the computational capability grows linearly with the number of DSP cores, whereas the DDR bandwidth becomes the bottleneck early. The performance on the three grids was 2.18 GFLOPS, 1.96 GFLOPS, and 1.95 GFLOPS for the SpMV and 2.11 GFLOPS, 2.37 GFLOPS, and 2.33 GFLOPS for the Gauss–Seidel, respectively.

The blocking method makes efficient use of the high bandwidth of the GSM. It can be seen from Figure 10 that the performance improvement from blocking became more pronounced for both functions as the number of DSP cores increased. This is mainly because, when many DSP cores are used, the DDR memory bandwidth available to each core is limited, which makes the advantage of the GSM’s high bandwidth especially visible. On the three grids, from small to large, the SpMV achieved speedups of 2.41×, 2.75×, and 2.70×, reaching 5.26 GFLOPS, 5.41 GFLOPS, and 5.27 GFLOPS, respectively. The Gauss–Seidel achieved speedups of 2.21×, 2.58×, and 2.56×, reaching 4.67 GFLOPS, 6.13 GFLOPS, and 6.00 GFLOPS, respectively.
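The blocking speedups quoted above can be cross-checked against the throughput figures reported before and after blocking. The short Python sketch below only divides the reported values; the dictionary layout and the function name are hypothetical, and the small differences from the quoted speedups are presumably due to rounding in the reported numbers.

```python
# Throughput before blocking (after vectorization) and after blocking,
# on the three grids from small to large, as reported in the text.
vectorized = {"SpMV": [2.18, 1.96, 1.95], "Gauss-Seidel": [2.11, 2.37, 2.33]}
blocked = {"SpMV": [5.26, 5.41, 5.27], "Gauss-Seidel": [4.67, 6.13, 6.00]}
quoted = {"SpMV": [2.41, 2.75, 2.70], "Gauss-Seidel": [2.21, 2.58, 2.56]}

def speedups(before, after):
    """Element-wise ratio of the throughput after and before an optimization."""
    return [a / b for b, a in zip(before, after)]

computed = {name: speedups(vectorized[name], blocked[name]) for name in blocked}
largest_gap = max(abs(c - q)
                  for name in quoted
                  for c, q in zip(computed[name], quoted[name]))
```

The recomputed ratios agree with the quoted speedups to within about 0.02.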

As shown in Figure 10, the performance improvement from double buffering was very small, with neither the Gauss–Seidel nor the SpMV improving by more than 2%. However, when only 1–2 DSP cores were used, double buffering increased performance by 30–40%. This is because, when few DSP cores are used, each core receives a larger share of the DDR bandwidth, so computation accounts for a larger fraction of the run time. If the Matrix-DSP were equipped with a higher-bandwidth external memory, the effect of double buffering would be more significant. In summary, the optimized SpMV and Gauss–Seidel algorithms obtained total speedups of 41× and 47×, respectively, compared to the unoptimized algorithms.

Bandwidth efficiency is another important evaluation metric and is defined as the ratio of the valid bandwidth to the theoretical bandwidth, where the valid bandwidth is obtained from the total memory accessed, which is defined separately for the SpMV and for the Gauss–Seidel. As shown in Figure 11, the bandwidth efficiency increased with the number of DSP cores, which can be explained by the fact that the bandwidth of indirect access to the GSM through the DMA_SG increased with the number of DSP cores. The bandwidth efficiencies of the SpMV on the three grids were all around 72%, and the Gauss–Seidel had an efficiency of 67% on the small-scale grid and about 81% on the other two grids. For reference, the bandwidth efficiency of using the DMA to transfer contiguous memory data was 85%.
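The efficiency metric can be written as a two-line computation. The Python sketch below assumes that the valid bandwidth is the total number of bytes accessed divided by the measured run time; the sample byte count, run time, and theoretical bandwidth are hypothetical and are not values from the experiments.

```python
def bandwidth_efficiency(bytes_accessed, seconds, theoretical_bytes_per_s):
    """Ratio of the achieved (valid) bandwidth to the theoretical bandwidth."""
    valid_bandwidth = bytes_accessed / seconds
    return valid_bandwidth / theoretical_bytes_per_s

# Hypothetical run: 7.2 GB accessed in 1 s on a 10 GB/s memory system.
efficiency = bandwidth_efficiency(7.2e9, 1.0, 10e9)
```

Under these assumed numbers the kernel would be running at roughly 72% of the theoretical bandwidth.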

Figure 12 shows the test results of the mixed-precision algorithms described in Section 4.6. Both the SpMV and Gauss–Seidel achieved speedups of about 1.2× on the small-scale grid. On the other two grids, the speedups were about 1.6× for the SpMV and 1.45× for the Gauss–Seidel, and all the resulting performance figures were around 8.9 GFLOPS.