Real-Time Anomaly Detection with Subspace Periodic Clustering Approach

Abstract

1. Introduction

1.1. Origin of the Problem

1.2. Motivation and Contribution

2. Related Works

3. Problem Definitions

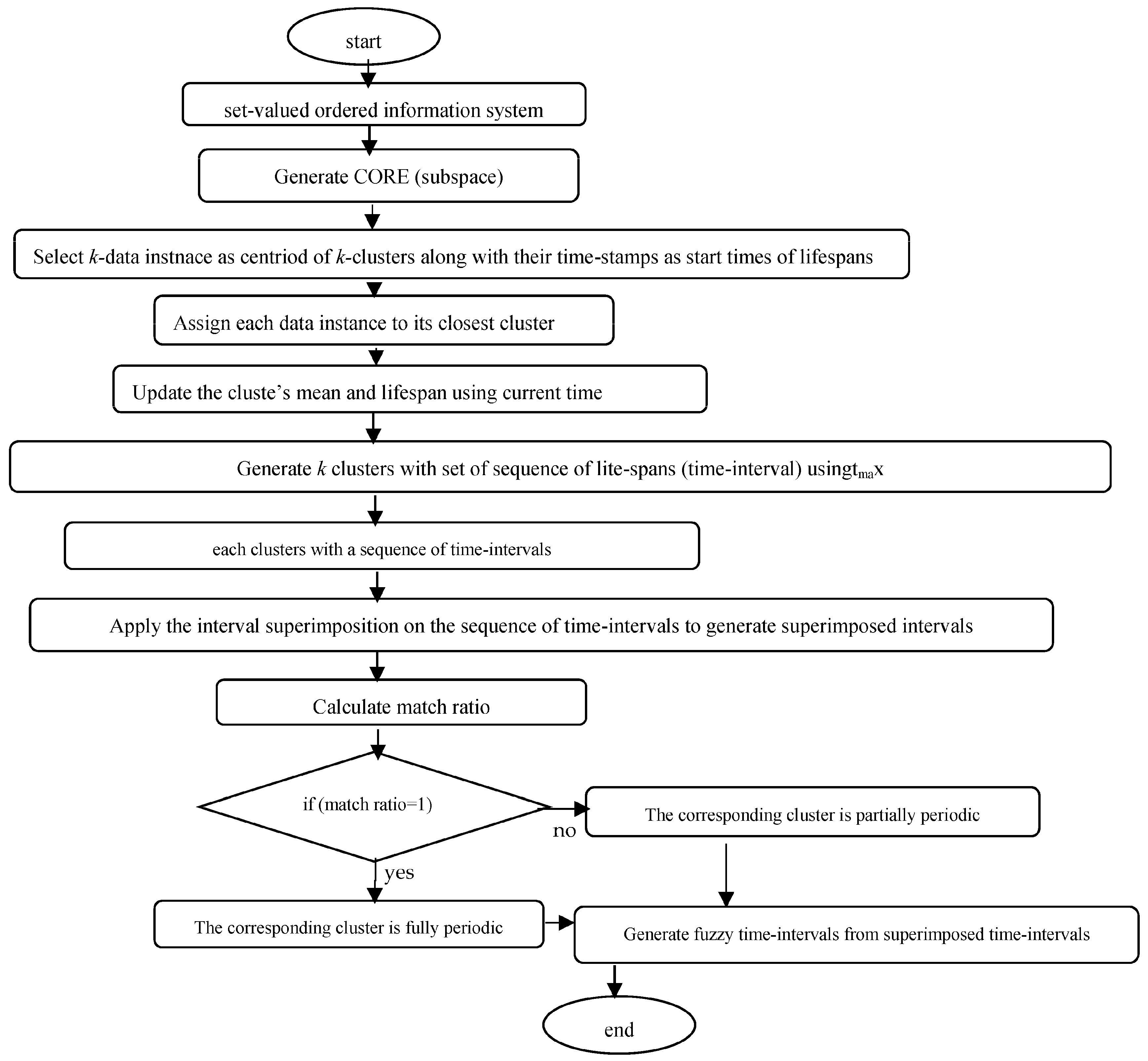

4. Proposed Algorithm

Algorithm 1: Subspace Generation

Input: (U, A): the information system consisting of n objects, where the attribute set A is divided into conditional attributes C and decision attributes D.

Output: Subspace of (U, A).

- Step 1. Generate a dominance relation on U corresponding to C and X ⊆ U.
- Step 2. Generate the nano topology and its basis.
- Step 3. for each x ∈ and
- Step 4. if ( )
- Step 5. then drop x from C,
- Step 6. else form criterion reduction
- Step 7. end for
- Step 8. Generate CORE(C) = ∩ {criterion reductions}.
- Step 9. Generate the subspace of the given information system.
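The reduction loop of Algorithm 1 can be illustrated with a minimal Python sketch. Several parts are assumptions rather than the authors' definitions: the loop set in Step 3 and the condition in Step 4 are not legible above, so the sketch assumes that each conditional attribute is tested in turn and is dropped when removing it leaves the basis of the nano topology unchanged. The lower and upper approximations are taken object-wise over dominance classes, and the function names and toy table are hypothetical.

```python
def dominance_class(table, x, attrs):
    """Objects that score at least as high as x on every attribute in attrs."""
    return {y for y in table if all(table[y][a] >= table[x][a] for a in attrs)}


def nano_basis(table, attrs, X):
    """Basis {U, lower approximation, boundary region} of the nano topology of X."""
    U = set(table)
    lower = {x for x in U if dominance_class(table, x, attrs) <= X}
    upper = {x for x in U if dominance_class(table, x, attrs) & X}
    return {frozenset(U), frozenset(lower), frozenset(upper - lower)}


def core_attributes(table, attrs, X):
    """Keep the attributes whose removal changes the basis (assumed Step 4 test)."""
    full = nano_basis(table, attrs, X)
    return [a for a in attrs
            if nano_basis(table, [b for b in attrs if b != a], X) != full]


# Hypothetical toy information system: objects o1..o3, conditional attributes a, b, c.
table = {
    "o1": {"a": 1, "b": 1, "c": 0},
    "o2": {"a": 2, "b": 1, "c": 0},
    "o3": {"a": 3, "b": 2, "c": 0},
}
core = core_attributes(table, ["a", "b", "c"], {"o2", "o3"})

# Step 9: project every object onto the retained attributes.
subspace = {obj: {a: row[a] for a in core} for obj, row in table.items()}
```

In this toy table, attribute c is constant and b adds nothing beyond a for the chosen X, so only a survives the reduction.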

Algorithm 2: Dynamic k-means clustering algorithm

Input: E: the information system consisting of n objects and attribute set CORE(A) ⊆ A; tmax: the maximum time gap between consecutive time-stamps; tmin: the minimum length of a lifespan.

Output: Set of clusters, where each cluster is associated with a sequence of time intervals as its lifespans.

- Step 1. Given the d1-dimensional dataset CORE(A)
- Step 2. Select C[i] = {x[i], tp[i]}; i = 1, 2, …, k, where x[i] are the data instances or means of the clusters and tp[i] points to the list of time intervals maintained for each cluster, which contains the time-stamps (start-time) of x[i]; initially start-time = last-time
- Step 3. for each incoming data instance x with current time-stamp current-time { if d(x, Cj) ≤ d(x, Ci), i ≠ j; i = 1, 2, …, k
- Step 4. { Add x to Cj
- Step 5. Update mean(Cj)
- Step 6. if (|current-time − last-time[j]| ≤ tmax)
- Step 7. { if (last-time[j] ≤ current-time)
- Step 8. extend lifespan(Cj) by setting last-time[j] = current-time
- Step 9. else go to Step 3
- Step 10. }
- Step 11. else if |last-time[j] − start-time[j]| ≥ tmin
- Step 12. { Add [start-time[j], last-time[j]] to tp[j]
- Step 13. set last-time[j] = start-time[j] = current-time
- Step 14. }
- Step 15. }
- Step 16. }
- Step 17. if (assign does not occur) go to Step 19
- Step 18. else go to Step 3
- Step 19. Output cluster set
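The assignment and lifespan bookkeeping of Algorithm 2 can be sketched in Python as follows. This is an illustration under stated assumptions, not the authors' implementation: the `Cluster` class and `assign` function are hypothetical names, the distance d is taken to be Euclidean, the mean is updated incrementally, and a new lifespan is started after every gap longer than tmax, even when the closed interval is shorter than tmin and is therefore discarded.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Cluster:
    mean: List[float]
    start_time: float
    last_time: float
    count: int = 1  # the initial mean counts as one instance
    lifespans: List[Tuple[float, float]] = field(default_factory=list)


def assign(clusters, x, now, tmax, tmin):
    """Add instance x, seen at time `now`, to its nearest cluster; return the cluster index."""
    j = min(range(len(clusters)), key=lambda i: math.dist(x, clusters[i].mean))
    c = clusters[j]

    # Incremental update of the cluster mean.
    c.count += 1
    c.mean = [m + (v - m) / c.count for m, v in zip(c.mean, x)]

    if abs(now - c.last_time) <= tmax:
        # Small gap: extend the current lifespan (out-of-order stamps are ignored).
        if c.last_time <= now:
            c.last_time = now
    else:
        # Large gap: record the finished lifespan if it is long enough ...
        if abs(c.last_time - c.start_time) >= tmin:
            c.lifespans.append((c.start_time, c.last_time))
        # ... and start a new one (assumption: done even for short lifespans).
        c.start_time = c.last_time = now
    return j


clusters = [Cluster([0.0], 0, 0), Cluster([10.0], 0, 0)]
stream = [([0.5], 1), ([0.2], 2), ([9.5], 2), ([0.1], 10)]
labels = [assign(clusters, x, t, tmax=3, tmin=1) for x, t in stream]
```

After the last instance, the first cluster has one closed lifespan and a new one that starts at time 10, while the second cluster is still inside its first lifespan.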

Algorithm 3: Algorithm for finding periodic (fully/partially) and fuzzy periodic clusters

Input: Set of clusters along with their lifespans (set of sequences of time intervals).

Output: Set of fuzzy periodic clusters.

- Step 1. For each cluster c with list of lifespans L.
- Step 2. initially Lc = null // Lc is the list of superimposed intervals
- Step 3. lt = L.get() // lt points to the 1st time interval (lifespan) in L
- Step 4. Lc.append(lt)
- Step 5. m = 1 // m = number of intervals superimposed
- Step 6. while ((lt = L.get()) != null)
- Step 7. { flag = 0
- Step 8. while ((lct = Lc.get()) != null)
- Step 9. if (compsuperimp(lt, lct))
- Step 10. flag = 1
- Step 11. if (flag == 0)
- Step 12. Lc.append(lt) }
- Step 13. }
- Step 14. }
- Step 15. compsuperimp(lt, lct)
- Step 16. if (intersect(lct, lt) != null)
- Step 17. { superimp(lct, lt)
- Step 18. m++
- Step 19. return 1
- Step 20. }
- Step 21. return 0
- Step 22. Compute match ratio = m/n // n = number of periods in the whole dataset
- Step 23. if (match ratio = 1)
- Step 24. the cluster c is fully periodic
- Step 25. else partially periodic
- Step 26. Generate fuzzy time intervals from the superimposed time intervals to get fuzzy periodic clusters.
- Step 27. End
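A small Python sketch of the superimposition and match-ratio steps of Algorithm 3 follows. It rests on assumptions that the algorithm box does not state: lifespans are already expressed relative to the start of their period (for example, as hours of the day), a lifespan is superimposed on a group when it intersects the common core of that group, and the fuzzy membership of a time point is the fraction of superimposed lifespans that cover it. All function names are hypothetical.

```python
def core(group):
    """Common part of all lifespans in a group: (largest start, smallest end)."""
    return (max(lo for lo, _ in group), min(hi for _, hi in group))


def superimpose(lifespans):
    """Group period-relative lifespans; each group holds mutually overlapping intervals."""
    groups = []
    for lo, hi in lifespans:
        for group in groups:
            c_lo, c_hi = core(group)
            if max(lo, c_lo) <= min(hi, c_hi):  # non-empty intersection with the core
                group.append((lo, hi))
                break
        else:
            groups.append([(lo, hi)])  # nothing matched: start a new group
    return groups


def match_ratio(group, n_periods):
    """m / n: superimposed lifespans over the number of periods in the dataset."""
    return len(group) / n_periods


def classify(group, n_periods):
    return "fully periodic" if match_ratio(group, n_periods) == 1 else "partially periodic"


def membership(group, t):
    """Fuzzy membership of time t: share of the group's lifespans that contain t."""
    return sum(lo <= t <= hi for lo, hi in group) / len(group)


# Hypothetical lifespans of one cluster, as hours of the day, observed over 4 days.
lifespans = [(8, 10), (9, 11), (8.5, 10.5), (20, 22)]
groups = superimpose(lifespans)
ratio = match_ratio(groups[0], n_periods=4)
kind = classify(groups[0], n_periods=4)
```

Here the first three lifespans share the core [9, 10] and form one group, so membership is 1 inside the core and falls off towards the outer end points, which is the shape of a fuzzy time interval.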

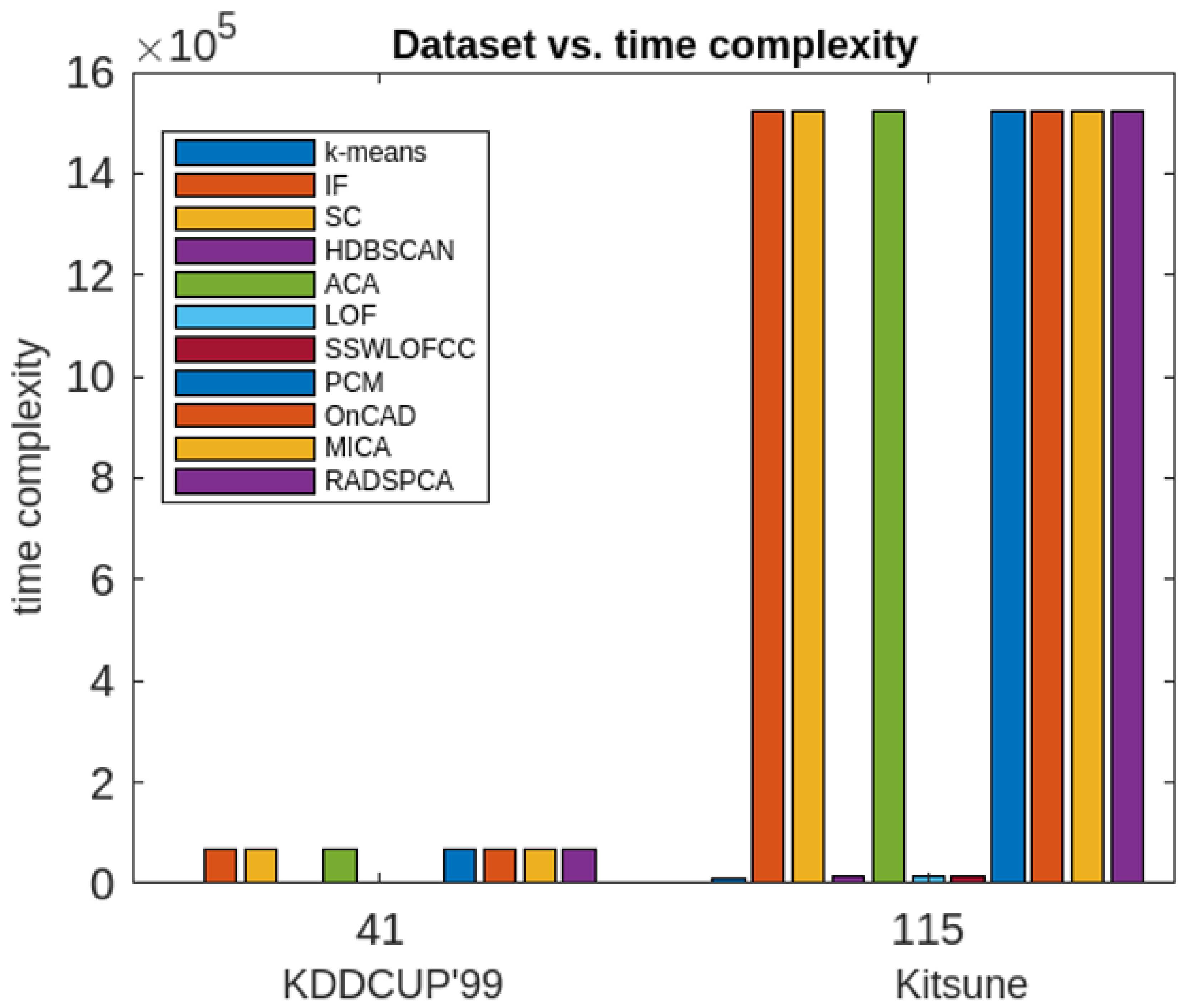

5. Complexity Analysis

6. Experimental Analysis and Results

7. Conclusions, Limitations and Lines for Future Works

7.1. Conclusions

7.2. Limitations and Future Directions of Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| No. | Algorithm | Recall | Precision | F1-Score | Execution Time (in Seconds) | Periodic Clusters Obtained |
|---|---|---|---|---|---|---|
| 1 | k-means | 0.9605 | 0.9400 | 0.9500 | 28 | × |
| 2 | IF model | 0.8301 | 0.850 | 0.8400 | 19 | × |
| 3 | SC | 0.6220 | 0.6004 | 0.6110 | 44 | × |
| 4 | HDBSCAN | 0.2530 | 0.2300 | 0.2410 | 95 | × |
| 5 | ACA | 0.8400 | 0.8010 | 0.8200 | 16 | × |
| 6 | LOF | 0.9550 | 0.9390 | 0.9470 | 14 | × |
| 7 | SSWLOFCC | 0.9665 | 0.9460 | 0.9560 | 12 | × |
| 8 | PCM | 0.8800 | 0.8420 | 0.8600 | 26 | × |
| 9 | OnCAD | 0.9751 | 0.9650 | 0.9700 | 30 | × |
| 10 | MICA | 0.9822 | 0.9780 | 0.9800 | 28 | × |
| 11 | Proposed Approach (RADSPCA) | 0.9812 | 0.9790 | 0.9800 | 58 | √ |

| No. | Algorithm | Recall | Precision | F1-Score | Execution Time (in Seconds) | Periodic Clusters Obtained |
|---|---|---|---|---|---|---|
| 1 | k-means | 0.8701 | 0.8501 | 0.8600 | 95 | × |
| 2 | IF model | 0.7300 | 0.7502 | 0.7400 | 64.5 | × |
| 3 | SC | 0.6645 | 0.6420 | 0.6530 | 149.5 | × |
| 4 | HDBSCAN | 0.3899 | 0.3793 | 0.3850 | 150 | × |
| 5 | ACA | 0.7410 | 0.7010 | 0.7200 | 54.4 | × |
| 6 | LOF | 0.90401 | 0.9000 | 0.9020 | 47.6 | × |
| 7 | SSWLOFCC | 0.9280 | 0.9499 | 0.9390 | 40 | × |
| 8 | PCM | 0.7430 | 0.7810 | 0.7600 | 88 | × |
| 9 | OnCAD | 0.8450 | 0.8353 | 0.8400 | 102 | × |
| 10 | MICA | 0.9832 | 0.9770 | 0.9800 | 68 | × |
| 11 | Proposed Approach (RADSPCA) | 0.9860 | 0.9801 | 0.9830 | 88.5 | √ |
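The F1-Score columns can be cross-checked against the Recall and Precision columns with the standard harmonic-mean formula F1 = 2PR/(P + R). The short Python sketch below does this for two rows, the k-means row of the first table and the RADSPCA row of the second; the function name `f1_score` is a hypothetical helper, and the comparison assumes the reported scores are rounded to about three decimal places.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the result tables.
rows = {
    "k-means (first table)": (0.9400, 0.9605, 0.9500),
    "RADSPCA (second table)": (0.9801, 0.9860, 0.9830),
}

for name, (p, r, reported) in rows.items():
    computed = f1_score(p, r)
    print(f"{name}: computed {computed:.4f}, reported {reported:.4f}")
```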

| Acronym | Full Form and Purpose |
|---|---|
| IF | Isolation Forest: an anomaly detection method based on binary trees. |
| SC | Spectral Clustering: a clustering method that has often been used for outlier detection. |
| HDBSCAN | Hierarchical Density-based Spatial Clustering of Applications with Noise: a density-based hierarchical clustering approach that has often been used for anomaly detection, with limited efficacy. |
| ACA | Agglomerative Clustering Algorithm: a hierarchical clustering approach for anomaly detection. |
| LOF | Local Outlier Factor: an algorithm that identifies outliers based on their local neighborhood. |
| SSWLOFCC | Streaming Sliding Window Local Outlier Factor Coreset Clustering Algorithm: an algorithm that focuses on real-time detection of anomalies using big data technologies. |
| PCM | Partitioning Clustering with Merging: an algorithm for finding anomalies that uses both partitioning and hierarchical approaches. |
| OnCAD | Online Clustering and Anomaly Detection: a clustering-based anomaly detection approach for data streams that considers the temporal as well as the spatial proximity of observations to detect real-time anomalies. |
| MICA | Mixed Clustering Algorithm: an algorithm for finding real-time anomalies that uses both partitioning and hierarchical approaches. |
| RADSPCA | Real-time Anomaly Detection with Subspace Periodic Clustering Approach: the method proposed in this article. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Mazarbhuiya, F.A.; Shenify, M. Real-Time Anomaly Detection with Subspace Periodic Clustering Approach. Appl. Sci. 2023, 13, 7382. https://doi.org/10.3390/app13137382
