Automated Beehive Acoustics Monitoring: A Comprehensive Review of the Literature and Recommendations for Future Work

Abstract

1. Introduction

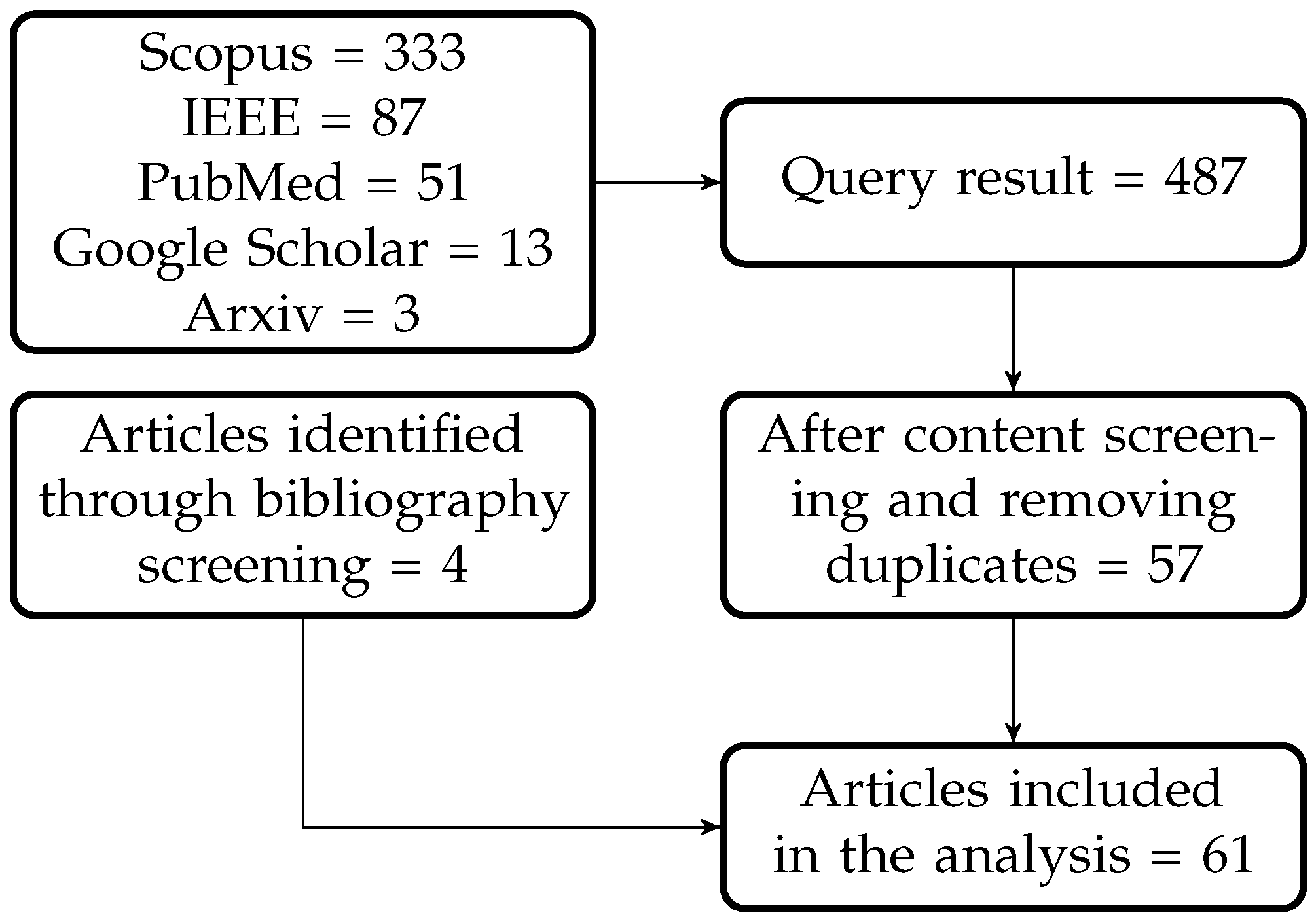

2. Methods

- Apis mellifera;

- Apis cerana;

- Apis dorsata;

- Apis bombus;

- Bumblebee;

- Bee;

- Hive;

- Honeybee;

- Apiculture;

- Sound;

- Audio;

- Acoustics;

- ZigBee.

3. Results and Discussion

3.1. Origin of the Selected Articles

3.2. Study Goal

3.2.1. Honeybees

3.2.2. Bombus and Other Bee Species

3.3. Experiment Setup

3.3.1. Bee Species

3.3.2. Number of Beehives

3.3.3. Time of the Recordings

3.3.4. Sampling Rate of the Audio Signals

3.3.5. Microphone Characteristics

3.3.6. Audio Preprocessing

3.4. Audio Analysis Method

3.4.1. Features

3.4.2. Machine Learning Algorithm

3.4.3. Performance Metrics

3.5. Reproducibility

3.5.1. Data Availability

3.5.2. Code Availability

3.6. Recommendations

- Provide exact information about bee species: Many studies did not state which bee species was used (e.g., [45,51,59]), which makes their findings hard to replicate. Future work should report the species so that results can be compared and the acoustic behaviour of each species better understood.

- Apply audio denoising methods: Very few studies reported a noise removal step prior to feature analysis. In realistic settings, ambient noise can contaminate the beehive audio and potentially lead to erroneous decisions. Future work should explore noise removal algorithms, test whether existing tools remove discriminatory information from the recordings, and determine whether custom algorithms need to be built.

- Consider environmental factors: Environmental factors, such as temperature, humidity, and pollution, can affect bee behaviour. Studies of foraging behaviour showed that it is important to report the flowering species, as this can affect beehive acoustics. Few works reported environmental factors; future studies should incorporate additional sensor information to provide this context.

- Account for hive openings during inspections: Most of the reviewed articles included one or more hive inspections. Opening a hive changes its acoustics, internal temperature, and humidity, and triggers the bees’ defensive behaviour and reactions to smoke; the resulting outliers could lead to erroneous decisions. Future studies should collect hive-opening metadata so that hive-opening detectors can be built.

- Provide detailed information about the recording procedure: The experiment setup has a direct effect on the audio samples and their quality. Reporting microphone frequency ranges, sampling rates, and any preprocessing is therefore important for comparing works. Very few works reported these details; future works should place more emphasis on them.

- Describe the machine learning pipeline clearly: The procedure used to train and test machine learning algorithms is crucial information for replication. For example, changing the testing setup (e.g., cross-validation versus a train/test split versus leave-one-hive-out) can lead to very different models, as can different hyperparameter tuning strategies, normalization schemes, or regularization methods. These details were seldom reported in the surveyed papers; future work should strive to provide a complete description of the testing setup.

- Share data and code: As this is a new research field, many questions remain unanswered. Sharing data and code will be crucial for the field to grow and for studies to be replicated and benchmarked. Future works should prioritize sharing any newly collected data, as well as any newly developed code. Researchers could also consider running data processing and machine learning challenges, which have been shown to help advance emerging fields (e.g., see [120]).
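To illustrate why the testing setup matters, the following is a minimal sketch of a leave-one-hive-out split, one of the setups named in the machine learning pipeline recommendation above. It is not taken from any surveyed paper; the function name `leave_one_hive_out` and the toy hive labels are hypothetical. Each fold holds out every clip from one hive, so a model is never tested on a colony it has already heard during training, which a random clip-level train/test split does not guarantee.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Sequence, Tuple


def leave_one_hive_out(
    hive_ids: Sequence[str],
) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_hive, train_indices, test_indices) for each hive.

    hive_ids[i] is the hive in which audio clip i was recorded.
    """
    # Group clip indices by the hive they were recorded in.
    clips_by_hive: Dict[str, List[int]] = defaultdict(list)
    for index, hive in enumerate(hive_ids):
        clips_by_hive[hive].append(index)

    # One fold per hive: test on that hive, train on all the others.
    for held_out in sorted(clips_by_hive):
        test = clips_by_hive[held_out]
        train = [i for i, hive in enumerate(hive_ids) if hive != held_out]
        yield held_out, train, test


# Hypothetical example: six clips recorded in three hives.
clip_hives = ["A", "A", "B", "B", "C", "C"]
folds = list(leave_one_hive_out(clip_hives))
for held_out, train, test in folds:
    print(f"held-out hive {held_out}: train={train} test={test}")
```

Reporting which of these protocols was used, together with the hive identifier of every clip, is what would allow another group to reproduce the same folds.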

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- FAO; Apimondia; CAAS; IZSLT. Good Beekeeping Practices for Sustainable Apiculture; FAO: Rome, Italy, 2021.

- Liao, Y.; McGuirk, A.; Biggs, B.; Chaudhuri, A.; Langlois, A.; Deters, V. Noninvasive Beehive Monitoring through Acoustic Data Using SAS® Event Stream Processing and SAS® Viya®; SAS Institute Inc.: Belgrade, Serbia, 2020.

- Van der Zee, R.; Pisa, L.; Andonov, S.; Brodschneider, R.; Charriere, J.D.; Chlebo, R.; Coffey, M.F.; Crailsheim, K.; Dahle, B.; Gajda, A.; et al. Managed honey bee colony losses in Canada, China, Europe, Israel and Turkey, for the winters of 2008–2009 and 2009–2010. J. Apic. Res. 2012, 51, 100–114.

- Jacques, A.; Laurent, M.; Consortium, E.; Ribière-Chabert, M.; Saussac, M.; Bougeard, S.; Budge, G.E.; Hendrikx, P.; Chauzat, M.P. A pan-European epidemiological study reveals honey bee colony survival depends on beekeeper education and disease control. PLoS ONE 2017, 12, e0172591.

- Kulhanek, K.; Steinhauer, N.; Rennich, K.; Caron, D.M.; Sagili, R.R.; Pettis, J.S.; Ellis, J.D.; Wilson, M.E.; Wilkes, J.T.; Tarpy, D.R.; et al. A national survey of managed honey bee 2015–2016 annual colony losses in the USA. J. Apic. Res. 2017, 56, 328–340.

- Brodschneider, R.; Gray, A.; Adjlane, N.; Ballis, A.; Brusbardis, V.; Charrière, J.D.; Chlebo, R.; Coffey, M.F.; Dahle, B.; de Graaf, D.C.; et al. Multi-country loss rates of honey bee colonies during winter 2016/2017 from the COLOSS survey. J. Apic. Res. 2018, 57, 452–457.

- Gray, A.; Brodschneider, R.; Adjlane, N.; Ballis, A.; Brusbardis, V.; Charrière, J.D.; Chlebo, R.; Coffey, M.F.; Cornelissen, B.; Amaro da Costa, C.; et al. Loss rates of honey bee colonies during winter 2017/18 in 36 countries participating in the COLOSS survey, including effects of forage sources. J. Apic. Res. 2019, 58, 479–485.

- Gray, A.; Adjlane, N.; Arab, A.; Ballis, A.; Brusbardis, V.; Charrière, J.D.; Chlebo, R.; Coffey, M.F.; Cornelissen, B.; Amaro da Costa, C.; et al. Honey bee colony winter loss rates for 35 countries participating in the COLOSS survey for winter 2018–2019, and the effects of a new queen on the risk of colony winter loss. J. Apic. Res. 2020, 59, 744–751.

- Oldroyd, B.P. What’s killing American honey bees? PLoS Biol. 2007, 5, e168.

- Porrini, C.; Mutinelli, F.; Bortolotti, L.; Granato, A.; Laurenson, L.; Roberts, K.; Gallina, A.; Silvester, N.; Medrzycki, P.; Renzi, T.; et al. The status of honey bee health in Italy: Results from the nationwide bee monitoring network. PLoS ONE 2016, 11, e0155411.

- Stanimirović, Z.; Glavinić, U.; Ristanić, M.; Aleksić, N.; Jovanović, N.; Vejnović, B.; Stevanović, J. Looking for the causes of and solutions to the issue of honey bee colony losses. Acta Vet. 2019, 69, 1–31.

- Cecchi, S.; Spinsante, S.; Terenzi, A.; Orcioni, S. A Smart Sensor-Based Measurement System for Advanced Bee Hive Monitoring. Sensors 2020, 20, 2726.

- Meikle, W.; Holst, N. Application of continuous monitoring of honeybee colonies. Apidologie 2015, 46, 10–22.

- Braga, A.R.; Gomes, D.G.; Rogers, R.; Hassler, E.E.; Freitas, B.M.; Cazier, J.A. A method for mining combined data from in-hive sensors, weather and apiary inspections to forecast the health status of honey bee colonies. Comput. Electron. Agric. 2020, 169, 105161.

- Stalidzans, E.; Berzonis, A. Temperature changes above the upper hive body reveal the annual development periods of honey bee colonies. Comput. Electron. Agric. 2013, 90, 1–6.

- Edwards-Murphy, F.; Magno, M.; Whelan, P.M.; O’Halloran, J.; Popovici, E.M. b+ WSN: Smart beehive with preliminary decision tree analysis for agriculture and honey bee health monitoring. Comput. Electron. Agric. 2016, 124, 211–219.

- Bromenshenk, J.J.; Henderson, C.B.; Seccomb, R.A.; Rice, S.D.; Etter, R.T. Honey Bee Acoustic Recording and Analysis System for Monitoring Hive Health. U.S. Patent 7,549,907, 23 June 2009.

- Michelsen, A.; Kirchner, W.H.; Lindauer, M. Sound and vibrational signals in the dance language of the honeybee, Apis mellifera. Behav. Ecol. Sociobiol. 1986, 18, 207–212.

- Hunt, J.; Richard, F.J. Intracolony vibroacoustic communication in social insects. Insectes Sociaux 2013, 60, 403–417.

- Zlatkova, A.; Kokolanski, Z.; Tashkovski, D. Honeybees swarming detection approach by sound signal processing. In Proceedings of the 2020 XXIX International Scientific Conference Electronics (ET), Sozopol, Bulgaria, 16–18 September 2020; pp. 1–3.

- Žgank, A. Acoustic monitoring and classification of bee swarm activity using MFCC feature extraction and HMM acoustic modeling. In Proceedings of the 2018 ELEKTRO, Mikulov, Czech Republic, 21–23 May 2018; pp. 1–4.

- A Closer Look: Piping, Tooting, Quacking. Available online: https://www.beeculture.com/a-closer-look-piping-tooting-quacking/ (accessed on 31 October 2021).

- Michelsen, A.; Kirchner, W.H.; Andersen, B.B.; Lindauer, M. The tooting and quacking vibration signals of honeybee queens: A quantitative analysis. J. Comp. Physiol. A 1986, 158, 605–611.

- Kirchner, W. Acoustical communication in social insects. In Orientation and Communication in Arthropods; Springer: Berlin, Germany, 1997; pp. 273–300.

- Thom, C.; Gilley, D.C.; Tautz, J. Worker piping in honey bees (Apis mellifera): The behavior of piping nectar foragers. Behav. Ecol. Sociobiol. 2003, 53, 199–205.

- Pratt, S.; Kühnholz, S.; Seeley, T.D.; Weidenmüller, A. Worker piping associated with foraging in undisturbed queenright colonies of honey bees. Apidologie 1996, 27, 13–20.

- Seeley, T.D.; Tautz, J. Worker piping in honey bee swarms and its role in preparing for liftoff. J. Comp. Physiol. A 2001, 187, 667–676.

- Qandour, A.; Ahmad, I.; Habibi, D.; Leppard, M. Remote Beehive Monitoring Using Acoustic Signals. Acoust. Aust. 2014, 42, 205.

- Sarma, M.S.; Fuchs, S.; Werber, C.; Tautz, J. Worker piping triggers hissing for coordinated colony defence in the dwarf honeybee Apis florea. Zoology 2002, 105, 215–223.

- Ramsey, M.T.; Bencsik, M.; Newton, M.I.; Reyes, M.; Pioz, M.; Crauser, D.; Delso, N.S.; Le Conte, Y. The prediction of swarming in honeybee colonies using vibrational spectra. Sci. Rep. 2020, 10, 1–17.

- Robles-Guerrero, A.; Saucedo-Anaya, T.; González-Ramírez, E.; Galván-Tejada, C.E. Frequency Analysis of Honey Bee Buzz for Automatic Recognition of Health Status: A Preliminary Study. Res. Comput. Sci. 2017, 142, 89–98.

- Farina, A.; Gage, S.H. The duality of sounds: Ambient and communication. In Ecoacoustics; Farina, A., Gage, S.H., Eds.; Wiley: Hoboken, NJ, USA, 2017; pp. 13–29.

- Woods, E.F. Electronic prediction of swarming in bees. Nature 1959, 184, 842–844.

- Woods, E.F. Means for Detecting and Indicating the Activities of Bees and Conditions in Beehives. U.S. Patent 2806082A, 10 September 1957.

- Apivox. Available online: https://apivox-smart-monitor.weebly.com/ (accessed on 31 October 2021).

- Bee Health Guru. Available online: https://www.beehealth.guru/ (accessed on 31 October 2021).

- Arnia. Available online: https://www.arnia.co/ (accessed on 31 October 2021).

- Nectar. Available online: https://www.nectar.buzz/ (accessed on 31 October 2021).

- Cecchi, S.; Terenzi, A.; Orcioni, S.; Riolo, P.; Ruschioni, S.; Isidoro, N. A preliminary study of sounds emitted by honey bees in a beehive. In Proceedings of the Audio Engineering Society Convention 144, Milan, Italy, 23–26 May 2018.

- Open Source Beehives Project. Available online: https://www.osbeehives.com/pages/about-us (accessed on 13 October 2021).

- Kulyukin, V.; Mukherjee, S.; Amlathe, P. Toward audio beehive monitoring: Deep learning vs. standard machine learning in classifying beehive audio samples. Appl. Sci. 2018, 8, 1573.

- Barlow, S.E.; O’Neill, M.A. Technological advances in field studies of pollinator ecology and the future of e-ecology. Curr. Opin. Insect Sci. 2020, 38, 15–25.

- Eskov, E. Generation, perception, and use of acoustic and electric fields in honeybee communication. Biophysics 2013, 58, 827–836.

- Terenzi, A.; Cecchi, S.; Spinsante, S. On the importance of the sound emitted by honey bee hives. Vet. Sci. 2020, 7, 168.

- Dubois, S.; Choveton-Caillat, J.; Kane, W.; Gilbert, T.; Nfaoui, M.; El Boudali, M.; Rezzouki, M.; Ferré, G. Bee Detection For Fruit Cultivation. In Proceedings of the 2021 IEEE International Symposium on Circuits and Systems (ISCAS), Daegu, Korea, 22–28 May 2021; pp. 1–5.

- Heise, D.; Miller-Struttmann, N.; Galen, C.; Schul, J. Acoustic detection of bees in the field using CASA with focal templates. In Proceedings of the 2017 IEEE Sensors Applications Symposium (SAS), Montréal, QC, Canada, 11–13 August 2017; pp. 1–5.

- Kim, J.; Oh, J.; Heo, T.Y. Acoustic Scene Classification and Visualization of Beehive Sounds Using Machine Learning Algorithms and Grad-CAM. Math. Probl. Eng. 2021, 2021, 5594498.

- Nolasco, I.; Benetos, E. To bee or not to bee: Investigating machine learning approaches for beehive sound recognition. arXiv 2018, arXiv:1811.06016.

- Zhang, T.; Zmyslony, S.; Nozdrenkov, S.; Smith, M.; Hopkins, B. Semi-Supervised Audio Representation Learning for Modeling Beehive Strengths. arXiv 2021, arXiv:2105.10536.

- Ruvinga, S.; Hunter, G.J.; Duran, O.; Nebel, J.C. Use of LSTM Networks to Identify “Queenlessness” in Honeybee Hives from Audio Signals. In Proceedings of the 2021 17th International Conference on Intelligent Environments (IE), Dubai, United Arab Emirates, 20–23 June 2021; pp. 1–4.

- Peng, R.; Ardekani, I.; Sharifzadeh, H. An Acoustic Signal Processing System for Identification of Queen-less Beehives. In Proceedings of the 2020 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC), Online, 7–10 December 2020; pp. 57–63.

- Terenzi, A.; Cecchi, S.; Orcioni, S.; Piazza, F. Features extraction applied to the analysis of the sounds emitted by honey bees in a beehive. In Proceedings of the 2019 11th International Symposium on Image and Signal Processing and Analysis (ISPA), Dubrovnik, Croatia, 23–25 September 2019; pp. 3–8.

- Nolasco, I.; Terenzi, A.; Cecchi, S.; Orcioni, S.; Bear, H.L.; Benetos, E. Audio-based identification of beehive states. In Proceedings of the ICASSP 2019-2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 12–17 May 2019; pp. 8256–8260.

- Terenzi, A.; Ortolani, N.; Nolasco, I.; Benetos, E.; Cecchi, S. Comparison of Feature Extraction Methods for Sound-based Classification of Honey Bee Activity. IEEE/ACM Trans. Audio Speech Lang. Process. 2021, 30, 112–122.

- Robles-Guerrero, A.; Saucedo-Anaya, T.; González-Ramírez, E.; De la Rosa-Vargas, J.I. Analysis of a multiclass classification problem by lasso logistic regression and singular value decomposition to identify sound patterns in queenless bee colonies. Comput. Electron. Agric. 2019, 159, 69–74.

- Cejrowski, T.; Szymański, J.; Mora, H.; Gil, D. Detection of the bee queen presence using sound analysis. In Proceedings of the Asian Conference on Intelligent Information and Database Systems, Dong Hoi City, Vietnam, 19–21 March 2018; pp. 297–306.

- Howard, D.; Duran, O.; Hunter, G.; Stebel, K. Signal processing the acoustics of honeybees (Apis mellifera) to identify the “queenless” state in Hives. Proc. Inst. Acoust. 2013, 35, 290–297.

- Gatto, B.B.; Colonna, J.G.; Santos, E.M.D.; Koerich, A.L.; Fukui, K. Discriminative Singular Spectrum Classifier with Applications on Bioacoustic Signal Recognition. arXiv 2021, arXiv:2103.10166.

- Krzywoszyja, G.; Rybski, R.; Andrzejewski, G. Bee swarm detection based on comparison of estimated distributions samples of sound. IEEE Trans. Instrum. Meas. 2018, 68, 3776–3784.

- Anand, N.; Raj, V.B.; Ullas, M.; Srivastava, A. Swarm Detection and Beehive Monitoring System using Auditory and Microclimatic Analysis. In Proceedings of the 2018 3rd International Conference on Circuits, Control, Communication and Computing (I4C), Bangalore, India, 3–5 October 2018; pp. 1–4.

- Zlatkova, A.; Gerazov, B.; Tashkovski, D.; Kokolanski, Z. Analysis of parameters in algorithms for signal processing for swarming of honeybees. In Proceedings of the 2020 28th Telecommunications Forum (TELFOR), Belgrade, Serbia, 15–16 November 2020; pp. 1–4.

- Cecchi, S.; Terenzi, A.; Orcioni, S.; Piazza, F. Analysis of the sound emitted by honey bees in a beehive. In Proceedings of the Audio Engineering Society Convention 147. Audio Engineering Society, New York, NY, USA, 16–19 October 2019.

- Zgank, A. IoT-based bee swarm activity acoustic classification using deep neural networks. Sensors 2021, 21, 676.

- Zgank, A. Bee swarm activity acoustic classification for an IoT-based farm service. Sensors 2020, 20, 21.

- Hord, L.; Shook, E. Determining Honey Bee Behaviors from Audio Analysis. 2019. Available online: https://www.google.com.hk/url?sa=t&rct=j&q=&esrc=s&source=web&cd=&ved=2ahUKEwi77vbYhpD3AhUXx4sBHe3HBmgQFnoECAQQAQ&url=https%3A%2F%2Fcs.appstate.edu%2Fret%2Fpapers%2FDeterminingHoneyBeeBehaviorsAudioAnalysis.docx&usg=AOvVaw0mL2ZJS0YDGono-DYA_AcT (accessed on 10 March 2022).

- Tashakkori, R.; Buchanan, G.B.; Craig, L.M. Analyses of Audio and Video Recordings for Detecting a Honey Bee Hive Robbery. In Proceedings of the 2020 SoutheastCon, Online, 12–15 March 2020; pp. 1–6.

- Sharif, M.Z.; Wario, F.; Di, N.; Xue, R.; Liu, F. Soundscape Indices: New Features for Classifying Beehive Audio Samples. Sociobiology 2020, 67, 566–571.

- Zhao, Y.; Deng, G.; Zhang, L.; Di, N.; Jiang, X.; Li, Z. Based investigate of beehive sound to detect air pollutants by machine learning. Ecol. Inform. 2021, 61, 101246.

- Pérez, N.; Jesús, F.; Pérez, C.; Niell, S.; Draper, A.; Obrusnik, N.; Zinemanas, P.; Spina, Y.M.; Letelier, L.C.; Monzón, P. Continuous monitoring of beehives’ sound for environmental pollution control. Ecol. Eng. 2016, 90, 326–330.

- Hunter, G.; Howard, D.; Gauvreau, S.; Duran, O.; Busquets, R. Processing of multi-modal environmental signals recorded from a “smart” beehive. Proc. Inst. Acoust. 2019, 41, 339–348.

- Henry, E.; Adamchuk, V.; Stanhope, T.; Buddle, C.; Rindlaub, N. Precision apiculture: Development of a wireless sensor network for honeybee hives. Comput. Electron. Agric. 2019, 156, 138–144.

- Zubrzak, B.; Bieńkowski, P.; Cała, P.; Płaskota, P.; Rudno-Rudziński, K.; Nowakowski, P. Thermal and acoustic changes in bee colony due to exposure to microwave electromagnetic field–preliminary research. Przegląd Elektrotechniczny 2018, 94.

- Kawakita, S.; Ichikawa, K.; Sakamoto, F.; Moriya, K. Sound recordings of Apis cerana japonica colonies over 24 h reveal unique daily hissing patterns. Apidologie 2019, 50, 204–214.

- Kawakita, S.; Ichikawa, K.; Sakamoto, F.; Moriya, K. Hissing of A. cerana japonica is not only a direct aposematic response but also a frequent behavior during daytime. Insectes Sociaux 2018, 65, 331–337.

- Wehmann, H.N.; Gustav, D.; Kirkerud, N.H.; Galizia, C.G. The sound and the fury—Bees hiss when expecting danger. PLoS ONE 2015, 10, e0118708.

- Hong, W.; Xu, B.; Chi, X.; Cui, X.; Yan, Y.; Li, T. Long-Term and Extensive Monitoring for Bee Colonies Based on Internet of Things. IEEE Internet Things J. 2020, 7, 7148–7155.

- Zacepins, A.; Kviesis, A.; Ahrendt, P.; Richter, U.; Tekin, S.; Durgun, M. Beekeeping in the future—Smart apiary management. In Proceedings of the 2016 17th International Carpathian Control Conference (ICCC), High Tatras, Slovakia, 29 May–1 June 2016; pp. 808–812.

- Murphy, F.E.; Srbinovski, B.; Magno, M.; Popovici, E.M.; Whelan, P.M. An automatic, wireless audio recording node for analysis of beehives. In Proceedings of the 2015 26th Irish Signals and Systems Conference (ISSC), Carlow, Ireland, 24–25 June 2015; pp. 1–6.

- Imoize, A.L.; Odeyemi, S.D.; Adebisi, J.A. Development of a Low-Cost Wireless Bee-Hive Temperature and Sound Monitoring System. Indones. J. Electr. Eng. Inform. 2020, 8, 476–485.

- Kawakita, S.; Ichikawa, K. Automated classification of bees and hornet using acoustic analysis of their flight sounds. Apidologie 2019, 50, 71–79.

- Gradišek, A.; Slapničar, G.; Šorn, J.; Luštrek, M.; Gams, M.; Grad, J. Predicting species identity of bumblebees through analysis of flight buzzing sounds. Bioacoustics 2017, 26, 63–76.

- Gjoreski, M.; Budna, B.; Gradišek, A.; Gams, M. JSI Sound—A machine-learning tool in Orange for simple biosound classification. In Proceedings of the 26th International Joint Conference on Artificial Intelligence, Melbourne, Australia, 19–25 August 2017.

- Ribeiro, A.P.; da Silva, N.F.F.; Mesquita, F.N.; Araújo, P.D.C.S.; Rosa, T.C.; Mesquita-Neto, J.N. Machine learning approach for automatic recognition of tomato-pollinating bees based on their buzzing-sounds. PLoS Comput. Biol. 2021, 17, e1009426.

- Heise, D.; Miller, Z.; Wallace, M.; Galen, C. Bumble Bee Traffic Monitoring Using Acoustics. In Proceedings of the 2020 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Online, 25 May–25 June 2020; pp. 1–6.

- Heise, D.; Miller, Z.; Harrison, E.; Gradišek, A.; Grad, J.; Galen, C. Acoustically Tracking the Comings and Goings of Bumblebees. In Proceedings of the 2019 IEEE Sensors Applications Symposium (SAS), Sophia Antipolis, France, 11–13 March 2019; pp. 1–6.

- Gradišek, A.; Cheron, N.; Heise, D.; Galen, C.; Grad, J. Monitoring bumblebee daily activities using microphones. In Proceedings of the 21st Annual International Multiconference Information Society–IS 2018, Ljubljana, Slovenia, 10–14 October 2018; pp. 5–8.

- Van Goethem, S.; Verwulgen, S.; Goethijn, F.; Steckel, J. An IoT solution for measuring bee pollination efficacy. In Proceedings of the 2019 IEEE 5th World Forum on Internet of Things (WF-IoT), Limerick, Ireland, 15–18 April 2019; pp. 837–841.

- Miller-Struttmann, N.E.; Heise, D.; Schul, J.; Geib, J.C.; Galen, C. Flight of the bumble bee: Buzzes predict pollination services. PLoS ONE 2017, 12, e0179273.

- Cejrowski, T.; Szymański, J.; Logofătu, D. Buzz-based recognition of the honeybee colony circadian rhythm. Comput. Electron. Agric. 2020, 175, 105586.

- Galen, C.; Miller, Z.; Lynn, A.; Axe, M.; Holden, S.; Storks, L.; Ramirez, E.; Asante, E.; Heise, D.; Kephart, S.; et al. Pollination on the dark side: Acoustic monitoring reveals impacts of a total solar eclipse on flight behavior and activity schedule of foraging bees. Ann. Entomol. Soc. Am. 2019, 112, 20–26.

- De Luca, P.A.; Giebink, N.; Mason, A.C.; Papaj, D.; Buchmann, S.L. How well do acoustic recordings characterize properties of bee (Anthophila) floral sonication vibrations? Bioacoustics 2020, 29, 1–14.

- Kulyukin, V.A.; Reka, S.K. Toward Sustainable Electronic Beehive Monitoring: Algorithms for Omnidirectional Bee Counting from Images and Harmonic Analysis of Buzzing Signals. Eng. Lett. 2016, 24, 317–327.

- Cane, J.H. The oligolectic bee Osmia brevis sonicates Penstemon flowers for pollen: A newly documented behavior for the Megachilidae. Apidologie 2014, 45, 678–684.

- Schlegel, T.; Visscher, P.K.; Seeley, T.D. Beeping and piping: Characterization of two mechano-acoustic signals used by honey bees in swarming. Naturwissenschaften 2012, 99, 1067–1071.

- Tayal, M.; Kariyat, R. Examining the Role of Buzzing Time and Acoustics on Pollen Extraction of Solanum elaeagnifolium. Plants 2021, 10, 2592.

- Cejrowski, T.; Szymański, J. Buzz-based honeybee colony fingerprint. Comput. Electron. Agric. 2021, 191, 106489.

- Miller, Z.J. What’s the Buzz About? Progress and Potential of Acoustic Monitoring Technologies for Investigating Bumble Bees. IEEE Instrum. Meas. Mag. 2021, 24, 21–29.

- Switzer, C.M.; Hogendoorn, K.; Ravi, S.; Combes, S.A. Shakers and head bangers: Differences in sonication behavior between Australian Amegilla murrayensis (blue-banded bees) and North American Bombus impatiens (bumblebees). Arthropod Plant Interact. 2016, 10, 1–8.

- De Luca, P.A.; Cox, D.A.; Vallejo-Marín, M. Comparison of pollination and defensive buzzes in bumblebees indicates species-specific and context-dependent vibrations. Naturwissenschaften 2014, 101, 331–338.

- Huber, P.J. Robust estimation of a location parameter. In Breakthroughs in Statistics; Springer: Berlin, Germany, 1992; pp. 492–518.

- Šekulja, D.; Pechhacker, H.; Licek, E. Drifting behavior of honey bees (Apis Mellifera Carnica Pollman, 1879) in the epidemiology of American foulbrood. Zb. Veleučilišta U Rijeci 2014, 2, 345–358.

- Tashakkori, R.; Hernandez, N.P.; Ghadiri, A.; Ratzloff, A.P.; Crawford, M.B. A honeybee hive monitoring system: From surveillance cameras to Raspberry Pis. In Proceedings of the SoutheastCon 2017, Charlotte, NC, USA, 1 April 2017; pp. 1–7.

- Kale, D.J.; Tashakkori, R.; Parry, R.M. Automated beehive surveillance using computer vision. In Proceedings of the SoutheastCon 2015, Fort Lauderdale, FL, USA, 9–12 April 2015.

- Grenier, É.; Giovenazzo, P.; Julien, C.; Goupil-Sormany, I. Honeybees as a biomonitoring species to assess environmental airborne pollution in different socioeconomic city districts. Environ. Monit. Assess. 2021, 193, 740.

- Como, F.; Carnesecchi, E.; Volani, S.; Dorne, J.; Richardson, J.; Bassan, A.; Pavan, M.; Benfenati, E. Predicting acute contact toxicity of pesticides in honeybees (Apis mellifera) through a k-nearest neighbor model. Chemosphere 2017, 166, 438–444.

- Robertson, H.M.; Wanner, K.W. The chemoreceptor superfamily in the honey bee, Apis mellifera: Expansion of the odorant, but not gustatory, receptor family. Genome Res. 2006, 16, 1395–1403.

- Fuchs, S.; Tautz, J. Colony defence and natural enemies. In Honeybees of Asia; Springer: Berlin, Germany, 2011; pp. 369–395.

- Kirchner, W.; Röschard, J. Hissing in bumblebees: An interspecific defence signal. Insectes Sociaux 1999, 46, 239–243.

- Heidelbach, J.; Böhm, H.; Kirchner, W. Sound and vibration signals in a bumble bee colony (Bombus terrestris). Zoology 1998, 101, 82.

- Bencsik, M.; Bencsik, J.; Baxter, M.; Lucian, A.; Romieu, J.; Millet, M. Identification of the honey bee swarming process by analysing the time course of hive vibrations. Comput. Electron. Agric. 2011, 76, 44–50.

- Davis, S.; Mermelstein, P. Comparison of parametric representations for monosyllabic word recognition in continuously spoken sentences. IEEE Trans. Acoust. Speech Signal Process. 1980, 28, 357–366.

- Yegnanarayana, B.; Prasanna, S.M.; Zachariah, J.M.; Gupta, C.S. Combining evidence from source, suprasegmental and spectral features for a fixed-text speaker verification system. IEEE Trans. Speech Audio Process. 2005, 13, 575–582.

- Huang, N.E. Introduction to the Hilbert–Huang transform and its related mathematical problems. In Hilbert–Huang Transform and Its Applications; World Scientific: Singapore, 2014; pp. 1–26.

- Atal, B.S.; Hanauer, S.L. Speech analysis and synthesis by linear prediction of the speech wave. J. Acoust. Soc. Am. 1971, 50, 637–655.

- Szabó, B.T.; Denham, S.L.; Winkler, I. Computational models of auditory scene analysis: A review. Front. Neurosci. 2016, 10, 524.

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Breiman, L. Bagging predictors. Mach. Learn. 1996, 24, 123–140.

- Breiman, L.; Friedman, J.H.; Olshen, R.A.; Stone, C.J. Classification and Regression Trees; Routledge: Oxfordshire, UK, 2017.

- Chen, T.; Guestrin, C. Xgboost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 April 2016; pp. 785–794.

- Sridhar, K.; Cutler, R.; Saabas, A.; Parnamaa, T.; Loide, M.; Gamper, H.; Braun, S.; Aichner, R.; Srinivasan, S. ICASSP 2021 Acoustic Echo Cancellation Challenge: Datasets, Testing Framework, and Results. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; pp. 151–155.

| Criterion | Item |
|---|---|
| Inclusion–Studies using audio to: | Detect bees |
| | Predict hive strength |
| | Detect swarming |
| | Detect queen bee missing |
| | Detect mite attack |
| | Identify bee species |
| | Measure pollination efficacy |
| | Detect environmental effects |
| | Detect arrival and departure of bees |
| Exclusion–Studies focusing on: | Software development of hive monitoring |
| | Hardware development of hive monitoring |

| Category | Data Item | Description |
|---|---|---|
| Origin of article | Type of publication | Whether the study was published as a journal article, a conference paper, or in an electronic preprint repository. |
| | Venue | Publishing venue, such as the name of a journal or conference. |
| | Country of first author affiliation | Location of the affiliated university, institute, or research body of the first author. |
| Study rationale | Study goal | Application or aim of the article. |
| Experiment setup | Species of bee | Exact name of the bee species. |
| | Number of beehives or bees | Exact number of beehives or single bees. |
| | Time of the recordings | Month and hour of the day for audio recordings. |
| | Sampling rate of audio files | Sampling rate of the audio files. |
| | Microphone information | Resolution and frequency response of the microphone. |
| | Audio preprocessing | Steps applied to the raw data to prepare it for use by the architecture or for feature extraction. |
| Audio analysis method | Features | Features extracted from audio files for visualization or a machine learning framework. |
| | Machine learning algorithm | Name of the machine learning algorithm, if a machine learning framework is used. |
| | Performance metrics | Metrics used in the study to report performance (e.g., accuracy, F1-score), if a machine learning framework is used. |
| Reproducibility | Dataset | Whether the data used for the experiment come from private recordings or from a publicly available dataset. |
| | Code | Whether the code used for the experiment is available online or not, and if so, where. |
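
To make the "Performance metrics" data item concrete, the following is a minimal sketch of how accuracy and F1-score can be derived from binary confusion counts. The function name `classification_metrics` and the example counts are illustrative assumptions, not values taken from any reviewed study.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Return accuracy, precision, recall and F1 from binary confusion counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts for a queenless-hive detector (illustrative numbers only).
metrics = classification_metrics(tp=40, fp=10, fn=5, tn=45)
print(metrics)
```

Reporting F1 alongside accuracy is useful when the classes are imbalanced, since accuracy alone can look high for a classifier that mostly predicts the majority class.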

| Study Goal | Articles |
|---|---|
| Bee detection | [41,45,46,47,48] |
| Strength of beehives | [49] |
| Queen absence | [31,39,50,51,52,53,54,55,56,57,58] |
| Swarming detection | [20,21,59,60,61,62,63,64,65] |
| Hive robbery | [66] |
| Infestation detection | [28] |
| Detecting environmental pollution and chemicals | [67,68,69,70] |
| Smoke reaction | [39] |
| Effect of electromagnetic exposure | [71,72] |
| Hissing analysis | [73,74,75] |
| Hive monitoring | [76,77,78,79] |
| Identifying bee species | [80,81,82,83] |
| Bee arrival and departure | [84,85,86] |
| Measuring bee pollination efficacy | [87,88] |
| Identification of bees' circadian rhythm | [89] |
| Impacts of a total solar eclipse on flight behavior | [90] |
| Bee audio analysis | [91,92,93,94,95,96,97,98,99] |

| Bee Species | Articles |
|---|---|
| Apis mellifera | [20,28,49,56,60,61,62,67,68,69,71,75,78,79,89] |
| A. cerana | [76] |
| A. mellifera ligustica | [39,41,50,52,53,54,57,58,65,66,94] |
| A. cerana japonica | [73,74] |
| A. mellifera carnica | [31,41,55,57,72] |
| Augochloropsis brachycephala | [83] |
| Amegilla murrayensis | [98] |
| Bombus spp. | [82,90,91] |
| B. impatiens | [91] |
| B. ardens | [80] |
| B. latreille | [90] |
| B. balteatus | [88] |
| B. terrestris | [81,87,99] |
| B. pascuorum | [81,85,86,99] |
| B. sylvicola | [84,85,88] |
| B. morio and B. atratus | [83] |
| B. humilis and B. hypnorum | [81,86] |
| B. argillaceus, B. jonellus, B. ruderarius, B. sylvarum | [81] |
| B. hortorum, B. lapidarius, B. pratorum, B. lucorum | [81,99] |
| B. nevadensis, B. frigidus, B. flavifrons, B. mixtus | [84] |
| Centris tarsata and Centris trigonoides | [83] |
| Eulaema nigrita | [83] |
| Exomalopsis analis and Exomalopsis minor | [83] |
| Halictus | [90,95] |
| Megachile | [90,95] |
| Melipona bicolor and Melipona quadrifasciata | [83] |
| Osmia brevis (oligolectic) | [93] |
| Pseudaugochlora graminea | [83] |
| Slovenian Apis mellifera carnica | [50] |
| T. nipponensis and V. s. xanthoptera | [80] |
| Xylocopa nigrocincta and Xylocopa suspecta | [83] |

| Microphone Frequency Range (Hz) | Articles |
|---|---|
| 6–20,000 | [64] |
| 15–20,000 | [41] |
| 20–20,000 | [55] |
| 40–20,000 | [75] |
| 50–10,000 | [88] |
| 70–16,000 | [94] |
| 100–15,000 | [39,71] |
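
The upper limits of these microphone ranges interact with the sampling rate of the audio files: by the Nyquist criterion, a recording can only represent frequencies below half its sampling rate. The sketch below checks which of the upper limits listed above could be fully represented at a given rate. The helper names and the two candidate rates (22,050 Hz and 44,100 Hz) are assumptions chosen for illustration and are not taken from the reviewed studies.

```python
def min_sampling_rate_hz(f_max_hz: float) -> float:
    """Nyquist bound: the sampling rate must exceed twice the highest frequency."""
    return 2.0 * f_max_hz


def can_represent(sampling_rate_hz: float, f_max_hz: float) -> bool:
    """True if content up to f_max_hz can be represented without aliasing."""
    return sampling_rate_hz > min_sampling_rate_hz(f_max_hz)


# Upper frequency limits (Hz) of the microphone ranges in the table above.
upper_limits_hz = [10_000, 15_000, 16_000, 20_000]

# Common audio sampling rates, used here purely as examples.
candidate_rates_hz = [22_050, 44_100]

coverage = {
    rate: [f for f in upper_limits_hz if can_represent(rate, f)]
    for rate in candidate_rates_hz
}
for rate, covered in coverage.items():
    print(f"{rate} Hz covers microphone upper limits: {covered}")
```

In practice, a lower sampling rate may still be adequate when the bee sounds of interest occupy only the lower part of the microphone's range.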

| Feature | Articles |
|---|---|
| Fast Fourier transform and s-transform | [57] |
| Spectrogram (STFT) | [28,41,45,54,57,61,62,69,75,76,77,93] |
| Mel-scaled spectrogram | [41,47,48,53,54] |
| Mel-frequency cepstral coefficients | [21,31,47,48,50,53,54,55,62,63,64,67,68,80,81,82,83,89] |
| Improved mel-frequency cepstral coefficients | [51] |
| Discrete wavelet transform | [52,54,62] |
| Continuous wavelet transform | [54,62] |
| Hilbert–Huang transform | [52,53,54,62] |
| Linear predictive coding | [56,64] |
| Power spectral density | [20,78] |
| Constant-Q transform | [47] |
| Temporal features | [71,79,90,91] |
| Spectral features | [28,84,99] |
| Computational auditory scene analysis | [46,85,88] |
| Sound indices | [67,96] |
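
Several of the features above (mel-scaled spectrograms and mel-frequency cepstral coefficients) rely on the mel frequency scale. As a minimal sketch, the conversion below uses one common convention, mel = 2595 · log10(1 + f/700); other variants exist, and the reviewed studies may have used different ones. The function names and the example band edges (0 to 8000 Hz, 10 points) are illustrative assumptions.

```python
import math


def hz_to_mel(f_hz: float) -> float:
    """Convert frequency in Hz to mels (2595 * log10(1 + f/700) convention)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_spaced_frequencies(f_min_hz: float, f_max_hz: float, n_points: int) -> list:
    """Return n_points frequencies (Hz) equally spaced on the mel scale."""
    if n_points < 2:
        raise ValueError("n_points must be at least 2")
    m_min, m_max = hz_to_mel(f_min_hz), hz_to_mel(f_max_hz)
    step = (m_max - m_min) / (n_points - 1)
    return [mel_to_hz(m_min + i * step) for i in range(n_points)]


# Illustrative band edges; not taken from any reviewed study.
centres = mel_spaced_frequencies(0.0, 8000.0, 10)
print([round(f, 1) for f in centres])
```

Because the points are equally spaced in mels, they are denser at low frequencies in Hz, which gives finer resolution in the lower part of the spectrum.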

| Classifier | Articles |
|---|---|
| Linear discriminant analysis | [28] |
| Logistic regression | [31,50,55,83] |
| Support vector machines | [28,41,47,49,53,56,68,80,81,82,83,87,89] |
| K-nearest neighbours | [68] |
| Naive Bayes | [81,82] |
| Decision tree | [81,82,83] |
| Random forest | [47,67,68,81,82,83] |
| XGBoost | [47] |
| Multi-layer perceptron | [49,50,51,63] |
| Convolutional neural networks | [41,47,49,53,54] |
| Long short-term memory | [50] |
| Gaussian mixture models | [21,64] |
| Hidden Markov models | [21,63,64] |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Abdollahi, M.; Giovenazzo, P.; Falk, T.H. Automated Beehive Acoustics Monitoring: A Comprehensive Review of the Literature and Recommendations for Future Work. Appl. Sci. 2022, 12, 3920. https://doi.org/10.3390/app12083920
