An Organizational and Governance Model to Support Mass Collaborative Learning Initiatives

Abstract

1. Introduction

- It allows the interaction of broad groups of heterogeneous people (who might be dispersed through time and space) in diverse ways, and they can reap the power of collaboration.

- It connects the systems, organizations, and people that are keen to work and learn across boundaries.

- It leads to public engagement in casual and research-oriented study of subjects of interest.

- It enables learners to share knowledge and experiences and learn from each other, thereby promoting their capabilities in obtaining rapid yet significant progress.

- It facilitates fast cycle learning toward impact at scale.

- It increases global interactions with experts and peers.

- It makes the process of learning relatively creative, cost-effective, and flexible.

- It provides an easily accessible public digital data repository for all its members.

- There is insufficient evidence about the successful application of MCL in various fields.

- The concept, organizational structure, and associated mechanism of MCL are still evolving.

- The practice of mass collaboration in the learning community is not clearly formalized.

- There are some ambiguities about the key strategies for stimulating people to join the community and to keep them motivated in providing contributions.

- Filling part of the gap mentioned above;

- Identifying the influential factors that support the creation, operation, and development of MCL initiatives;

- Gaining some insights into the main features of an MCL community.

2. A Brief Overview of Related Work

2.1. Identifying the Positive and Negative Factors in Existing and Emerging Examples of Mass Collaboration

- Wikipedia—a web-based, free-content, and Internet-based encyclopedia written and maintained by a community of volunteer editors in which users can freely share their knowledge [21]:

- Positive factors—it is free and its content is contributed by volunteers. It offers open access and easy inclusion, and anyone can participate. Users can play different roles and perform different tasks. It has no power hierarchy. Users are treated (almost) equally. Articles are continuously developed, updated, and checked. Consensus can be reached through friendly and open discussion.

- Negative factors—Wikipedia editors are anonymous. The quantity or frequency of contributions is not controlled. Not all content will be accurate. The scientific level of articles varies. Contents are not free from bias. Anyone can vandalize the articles. Some users might have fake credentials.

- Digg—a social news website that enables users to submit their interesting stories to be selected and voted (“Digg” or “Bury”) by other users [22,23]:

- Positive factors—it is a user-driven website, and it is open to anybody. It has easy inclusion. Login is mandatory, and users need to create a Digg user account. Users are volunteers, and they can play different roles and participate in different tasks. Users can add friends and develop their relationships. Users’ information and contributions are associated with their Digg profile. Stories are classified into different groups based on topics; good stories will be promoted. Contents are checked by the system. Digg raises capital from investors.

- Negative factors—there is no editorial control on submissions. An influential group of users can affect information credibility by using promotions, burying information, and votes. Users cannot share their opinions because Digg lacks commenting features on the website.

- Yahoo! Answers—this was a Q&A platform or knowledge market that allowed users to ask questions on any topic and/or answer others’ questions by sharing facts, opinions, and experiences [24] (Yahoo! Answers was shut down on 4 May 2021):

- Positive factors—it was an open learning community, available in 12 languages, and open to all. Users could connect, share info, add comments, ask questions, answer others’ questions, and/or vote. There were some categories with multiple sub-categories for organizing questions. There was a “Point System” (scoring) and a “Voting System”. Users could receive a “badge” under their name, e.g., naming them as a “Top Contributor”. Staff could reach different levels of authority and site access. Supportive users were featured on the Yahoo! Answers Blog. The “user moderation system” handled misuse. Posts could be removed if they received a sufficient negative weight. It was supported by funds and financial aid. It provided diverse supportive services.

- Negative factors—users could use any name and photo for opening an account. There was no system to filter out incorrect answers. Answers could contain improper grammar and incorrect spelling. Once the “best answer” was chosen, no further answers could be added, which left no room for improvement.

- SETI@home—a computing project and scientific experiment that allows anyone with a computer and an Internet connection to search for signs of extraterrestrial intelligence [25]:

- Positive factors—it is open to anybody. It features easy inclusion. Participants are volunteers and can build teams and hold competitions. It has a “Voting System” to determine the validity of the results. Its “Credit System” can monitor how much work was performed. It can raise financial donations.

- Negative factors—the risk of cheating (for gaining credit) is high. Some participants might misuse the resources of the projects to gain work-unit results. The projects cannot share their resources.

- Scratch—an online community and free programming language tool that allows users to create their own stories, animations, games, art, and music [26]:

- Positive factors—it is open to anybody and available in 70+ languages. It can be used in different settings: schools, libraries, community centers, museums, and homes. Users can ask questions, share their creative ideas, stories, and projects, obtain feedback, and collaborate with others. If something breaks the community’s rules, Scratch will take the corresponding action (e.g., sends a warning to the account, removes it, or blocks the account).

- Negative factors—without creating an account, users can make contributions (e.g., create their own projects, read, and upload comments). Users can create several accounts.

- Galaxy Zoo—a citizen science and crowdsourced astronomy project that invites people to help classify the morphology of more than a million galaxies [27]:

- Positive factors—it has easy inclusion. Users are volunteers and creating a user account is necessary. The username is associated with the user’s contributions. It uses computer technologies and human intelligence for the classification of galaxies. It monitors and analyses some of the contributions and transactions. Information is stored in a secured database. It uses “Amazon Web Services” to rapidly serve the website to a large number of people. It raises funds.

- Negative factors—using the real name is not necessary for registration. Personal information cannot be completely removed from the system. The classification system cannot provide feedback about the process of classification.

- Foldit—a crowdsourcing computer game and protein-folding puzzle for which its solutions help scientists in targeting and eradicating diseases and in creating biological innovations [28]:

- Positive factors—it is open to all. It has easy inclusion, engaging the general public and scientific teams in online research. Players can use the Foldit forum for collaborations, e.g., to train new players. It relies on human-computer interaction. It has a “Ranking and Awarding System”. The website records, monitors, and stores the posts and interactions. It publishes all important scientific discoveries. The results can be used in scientific publications. It benefits from grants.

- Negative factors—players can play without an account, so there are many anonymous identifiers in the community. It is not easy to learn and play Foldit. Playing Foldit needs a reasonably powerful computer.

- Applications of the Delphi method—a systematic and qualitative method that evaluates the results of multiple rounds of questionnaires sent to a panel of experts. This method can be used to estimate the likelihood and outcome of future events [29]:

- Positive factors—there are different types of Delphi. Each panel will be selected and invited. The experts can discuss or comment on others’ forecasts, and all the experts and their forecasts are given equal weight. It can be applied in several different fields of science. It can raise funds.

- Negative factors—the potential experts might not agree or be available for participation. The method is not able to make complex forecasts with multiple factors. The response times might take several days or weeks.

- Climate Colab—an open problem-solving platform that harnesses the collective intelligence of thousands of people to find solutions for global climate change [30]:

- Positive factors—it benefits from the contribution of experts and crowds, and it has easy inclusion. Users are volunteers and can play different roles and perform different tasks. Users can collaborate on the platform with whoever is interested in similar topics. Users can comment on others’ proposals. It has a “Voting System”, “Rewarding System”, “Messaging System”, and “expert advisory board”. On the website, there is a list of community members and their points, roles, activities, and membership date. It raises funds and financial support.

- Negative factors—it must continuously identify, invite, and maintain a large number of different experts. It uses a top-down approach in the community.

- Assignment Zero—an experiment in crowd-sourced journalism that enables professionals and amateurs to engage in collaborative reporting [31]:

- Positive factors—it is open to all. Users are volunteers. Users must create a user account by providing their real full name and a valid email address. There is a list of tasks that users can perform. Users can contribute to different topics. Users are encouraged to make themselves known to the public by providing their biography. It gives credit to the contributions. It is supported by funds.

- Negative factors—users might produce and share stories recognized as useless; interviews often take place face-to-face, so the candidates must live close to the interviewee.

- DonationCoder—an online community of programmers and developers that help people by organizing and financing software development [32]:

- Positive factors—it provides free tools and services. Registration needs a valid email address. There are different forms of communication. All users are considered equal. It benefits from grants and donations.

- Negative factors—users can sign up at the website by using different emails and names. Some sections of the website are available only to donators. For participation in the forum, participants are required to first donate and then they receive the license key; they then register a forum account and finally upgrade their forum account. The contracting and consulting services are not cheap.

- Experts Exchange (EE)—a trusted community of technology experts that help people solve their technology problems [33]:

- Positive factors—users must register with an accurate email address. Users are not allowed to have more than one account. Users are volunteers. EE covers over 230 tech topics and prioritizes the contents based on usefulness. Users can receive recognition and secure credentials with “Credly” (a digital badge platform that provides digital credentials to individuals through working with credible organizations). EE provides a variety of professional training courses on a wide variety of topics, and it produces various video tutorials.

- Negative factors—EE provides answers only via paid mode. If a user account is past due, EE might cancel the account for non-payment.

- Waze—a community-driven GPS and navigational app that connects drivers to one another, allowing them to work together to find directions and avoid traffic jams [34]:

- Positive factors—it is a user-generated community. It is free to download and can be used anywhere. It relies on crowdsourced information. Users need registration. Users can connect and work together. It offers points to users. Advertising is the main source of generating revenue.

- Negative factors—using Waze needs enough initial and active users to collectively create the local maps and continuously update data to make it useful. A very limited number of countries (13) have a full base map; in others, either the map is incomplete or not yet used. Waze currently supports only private cars and not public transportation, bicycles, or trucks.

- Makerspaces—a collaborative workspace in which people have access to different resources that enable them to explore, research, learn, and create products and services [35]:

- Positive factors—it is member-driven. It can take different forms (physical and virtual), shapes, and sizes for different purposes. Most Makerspaces need registration. Users are people with common interests. Users can meet, socialize, collaborate (on projects), co-create, learn new skills, share, research, explore and invent prototypes, solve problems, play, and even boost self-confidence. It benefits from funds and financial support.

- Negative factors—some Makerspaces have membership fees. Physical Makerspaces have been criticized for their high costs associated with tools and materials.

- SAP Community Network—an open, online, and collaborative community of software users, developers, consultants, mentors, and students who use the network to ask for help, share ideas, learn, innovate, and connect with others [36]:

- Positive factors—it serves as a resource repository and a platform for SAP users to collaborate with each other. Software users, developers, consultants, mentors, and students use it as it is open to all. Users are volunteers. It offers/hosts discussion forums, tutorials, expert blogs, SAP code sharing gallery, utilities, technical library, wiki, article downloads, e-learning catalogs, and other facilities through which users can contribute their knowledge. It has its own channel on YouTube. Its users’ knowledge contribution to the community can be quantified. It has a contributor recognition program (CRP) that awards points to community users for contributions. SAP publicly recognizes its most active contributors. It has over 430 spaces (sub-groups).

- Negative factors—knowledge flows are not measurable. The questions asked before are not easily accessible. It is impossible to read the list of problems in the scope of the theme. There is no control to navigate to the blogs section directly. It is difficult to find the important and most liked blogs.

2.2. Adopting Contributions from Collaborative Networks in Terms of Structural and Behavioral Models

- Synthesizing and organizing the base concepts and key principles of the network;

- Representing the entities, relations, and communication channels of the network;

- Defining the recommended functions, processes, and practices to be performed at different levels of the network;

- Representing the component of the network;

- Addressing the governance problems that should be solved.

- At the individual level, some specific behavioral features are at the center of attention; these include personal characteristics (e.g., participant’s personality, cognitive abilities, competencies, perceived usefulness, and perceived ease of use of the supporting platform) and social characteristics (social incentive/pressure, attitudes towards collaboration, collaboration willingness, and readiness).

2.3. Establishing Adequate Learning and Performance Assessment Indicators and Metrics

- Input indicators—which refer to the resources required for implementing the learning program (e.g., enough financial support, equipped environment, and staff);

- Activity indicators—which refer to the activities and operations in the learning program (e.g., motivation factors and active collaboration);

- Output indicators—which refer to the expected effects/changes achieved by the learning program in the short, intermediate, and long term (e.g., improved knowledge and developed relationships);

- Impact indicators—which refer to the learning program’s contribution to higher-level strategic plans (e.g., civic awareness and promotion of collaborative learning).
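The four indicator categories above can be represented as a small taxonomy. The following is only an illustrative sketch: the names `IndicatorType`, `Indicator`, and `group_by_type` are hypothetical and not part of the study, and the example indicators are the ones given in parentheses in the list.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class IndicatorType(Enum):
    INPUT = "input"        # resources required to implement the learning program
    ACTIVITY = "activity"  # activities and operations within the program
    OUTPUT = "output"      # effects/changes achieved by the program
    IMPACT = "impact"      # contribution to higher-level strategic plans


@dataclass(frozen=True)
class Indicator:
    name: str
    kind: IndicatorType


def group_by_type(indicators):
    """Return a dict mapping each IndicatorType to the names of its indicators."""
    grouped = defaultdict(list)
    for indicator in indicators:
        grouped[indicator.kind].append(indicator.name)
    return dict(grouped)


# Example indicators taken from the list above.
INDICATORS = [
    Indicator("financial support", IndicatorType.INPUT),
    Indicator("equipped environment", IndicatorType.INPUT),
    Indicator("motivation factors", IndicatorType.ACTIVITY),
    Indicator("active collaboration", IndicatorType.ACTIVITY),
    Indicator("improved knowledge", IndicatorType.OUTPUT),
    Indicator("developed relationships", IndicatorType.OUTPUT),
    Indicator("civic awareness", IndicatorType.IMPACT),
]

grouped = group_by_type(INDICATORS)
```

Grouping indicators by category in this way makes it straightforward to check that an assessment plan covers all four levels, from resources through to strategic impact.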

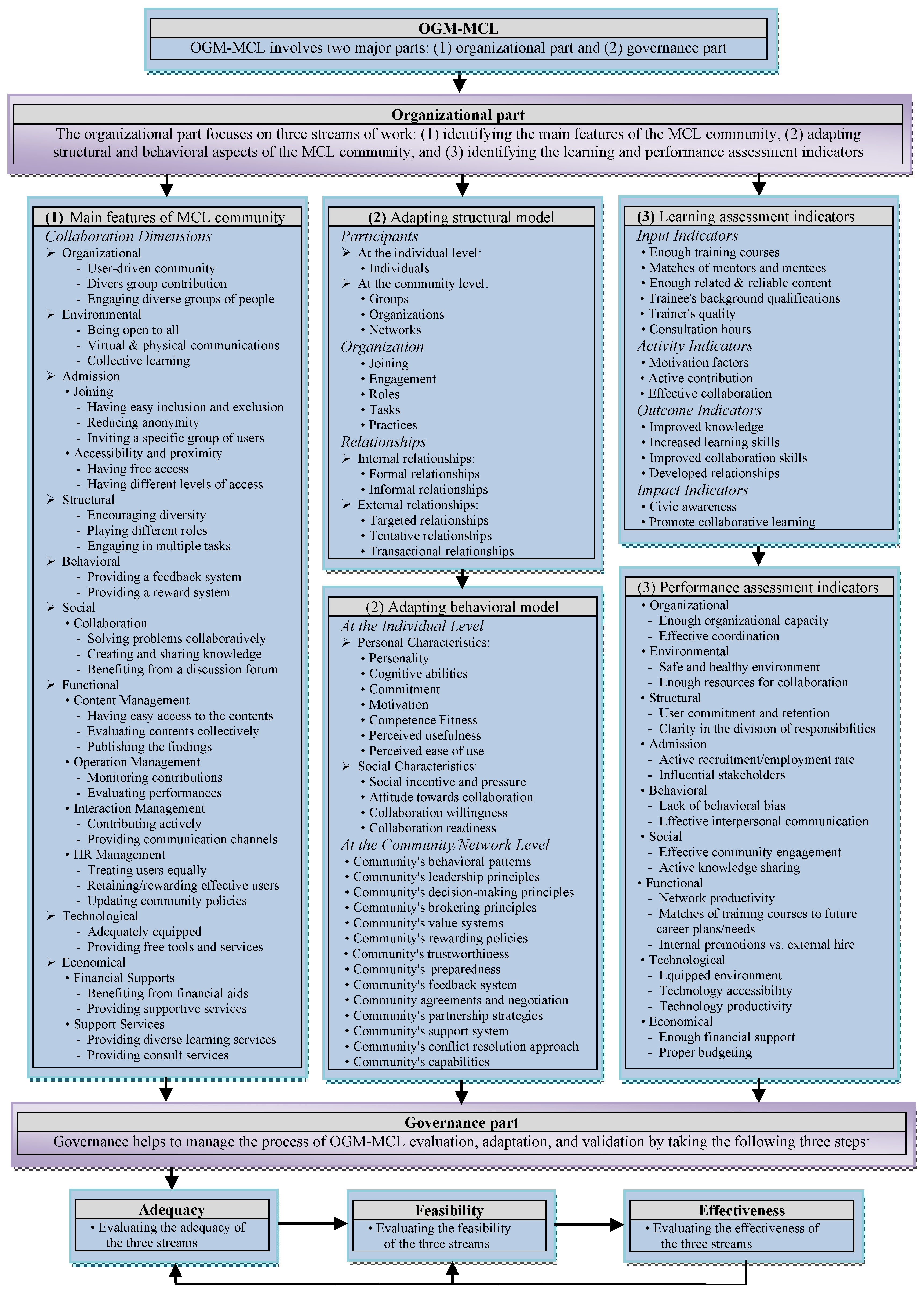

3. Proposed Model

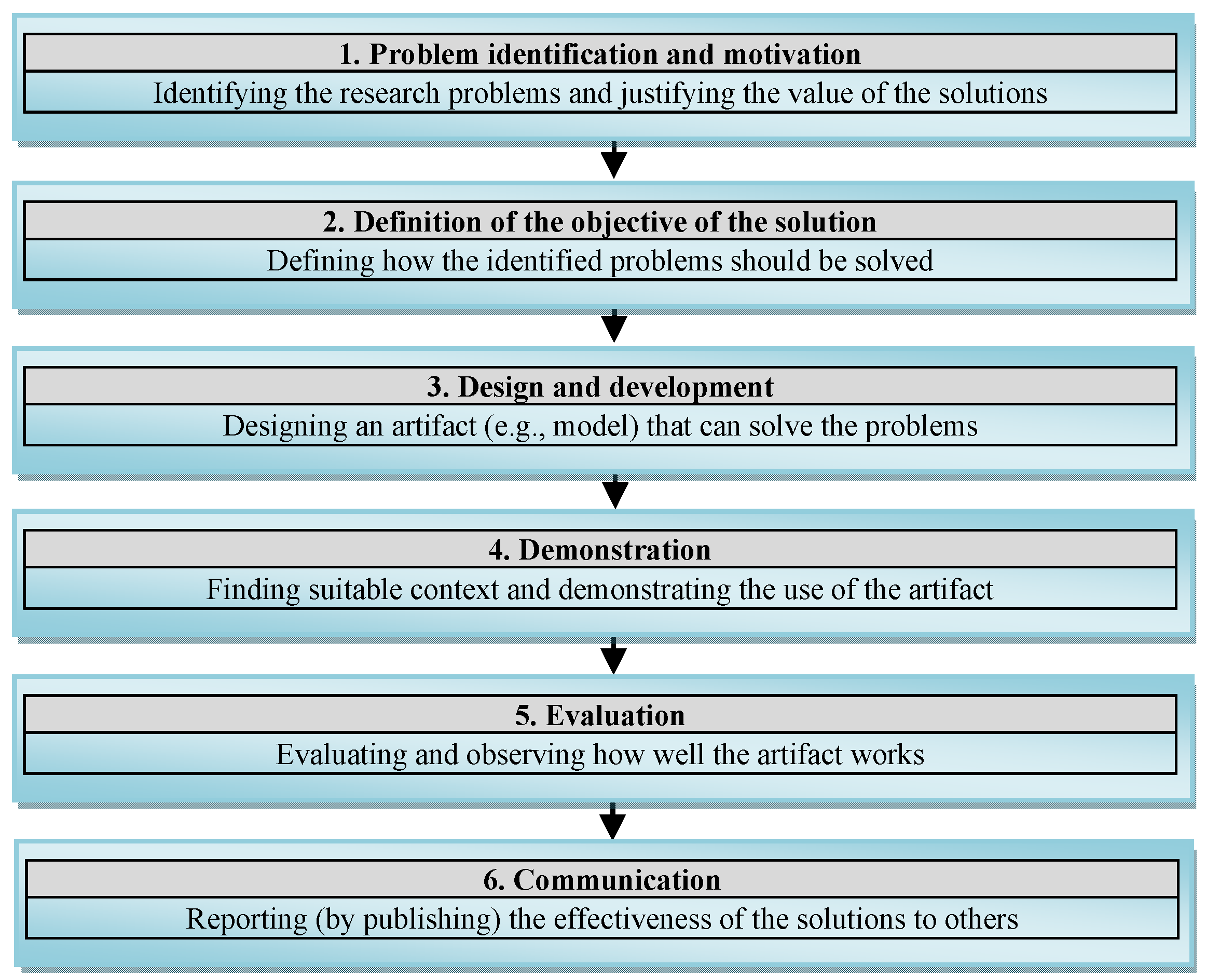

- Problem identification and motivation—as pointed out in the introduction, there is insufficient organized information about the structure, main components, dimensions, and features of MCL initiatives. Thus, more input is needed that provides a basis for supporting community learning through mass collaboration. The contributions from this step focus on (1) identifying the main features and specifications of the MCL community, (2) addressing the structural and behavioral aspects of the MCL community, and (3) finding potential indicators that help assess the learning and performance of individuals and community.

- Definition of the objectives of the solution—this step defines the objectives of the research being conducted and indicates the intended solutions. The objectives of this study are defined and explained in Section 1 and Section 2. In line with this, an extensive literature review was conducted to understand the background of the area and to consolidate what is already known about the concerned issues. In performing this analysis, the principles and models of collaborative networks were used as an underlying framework. Such a review helped us gain a better view of the existing knowledge, latest developments, and current solutions for the identified problems. Consequently, a model is proposed as the main objective, which intends to provide helpful guidelines and directions for supporting and developing the MCL initiatives.

- Design and development—in response to the identified problems (mentioned in step 1), we proposed OGM-MCL (presented in Section 3.1). OGM-MCL is the main contribution of this study, which is inspired by the contributions and solutions reported in the literature (and partially mentioned in Section 2) in combination with our background knowledge and experience. The OGM-MCL comprises (a) three streams of work corresponding to the organizational part and (b) three phases of evaluation, representing the governance part (see Figure 2).

- Demonstration—this step demonstrates the efficacy of the OGM-MCL in solving the problems described in the Introduction. Generally, the demonstration can be fulfilled through, for example, experimentation, simulation, application to a case study, proof, an illustration, or a project. In this study, the demonstration took place in an EU project. This step is presented in Section 4.

- Evaluation—the evaluation step compares the objectives of the solution to the actual observed results from the use of the OGM-MCL in the demonstration. In this step (presented in Section 5), we observe and measure to what extent the OGM-MCL can achieve its goals in the use-case project.

- Communication—the inputs and outputs of this study (including the identified problems and their importance, the proposed model, the design and development processes, and the assessment of the OGM-MCL) are shared with others through publications.

3.1. The Proposed Organizational and Governance Model for Mass Collaborative Learning

- Organizational part—which embraces three streams of characterization work:

- (Stream 1) main features of the MCL community—in this stream, the eight main dimensions of collaboration and also their related factors and features are addressed. These dimensions are suggested by considering the types and classification of the factors and features of the 15 case studies of MC mentioned above.

- (Stream 2) adapting structural and behavioral models—in this stream, the proposed structural and behavioral models along with their components are introduced. They can align and relate different parts of the MCL communities and bring about consistency.

- (Stream 3) learning and performance assessment indicators—in this stream, some important indicators are selected that can be used for assessing the learning of members and the performance of the MCL community. Such assessment indicators can help determine whether or not the objectives of the community have been achieved.

- Governance part (or evaluation process)—which contains three main evaluation steps, namely adequacy, feasibility, and effectiveness. Through these steps, the three streams of the organizational part are evaluated from the adequacy, feasibility, and effectiveness points of view. This evaluation shows to what extent the addressed elements in each stream could be adapted and applied to a concrete case of MCL. More detailed information about the governance part is presented in Section 4.1.

4. Demonstration

4.1. Governance Process

- Specification phase—encompasses steps 1 to 6. It refers to the identification, selection, and documentation of the specific objectives, dimensions, requirements, and functions to be considered for a specific MCL initiative:

- (Step 1)—Creating a list of the initiative’s objectives and outcomes required—these objectives indicate what the MCL initiative wants to achieve through applying the OGM-MCL. This task can be performed by the decision makers and developers in the initiative.

- (Step 2)—Identifying and selecting the potential items (factors, features, and elements) that can be considered for the creation and development of the MCL initiative—the potential items can be identified by reviewing and analyzing the related literature, examples, cases, etc. The selected items should be then customized based on the specific objectives, requirements, and functions of the MCL initiative.

- (Step 3)—Defining the main functions of the MCL initiative—the functions refer to actions’ execution and transactions of the MCL initiative. Considering the objectives and requirements of the MCL initiative, different functions can be defined by decision makers and developers.

- (Step 4)—Evaluating the “adequacy” of both the selected items and the defined functions—this step first evaluates whether or not the selected items can reasonably and adequately meet the objectives of the MCL initiative. Similarly, the adequacy of the defined functions can be collaboratively evaluated in relation to the objectives of the MCL initiative.

- (Step 5)—Evaluating the “feasibility” of both the selected items and the defined functions—the first part of this step of evaluation tries to uncover the strengths and weaknesses of the selected items from the feasibility point of view. The feasibility of the items can be assessed by considering the technical capabilities of the MCL initiative and the budget available for implementing them. The feasibility of the functions is pertinent to adjusting the number of functions that could be possibly performed by the MCL initiative and developing their descriptions.

- (Step 6)—Evaluating the “effectiveness” of both the selected items and the defined functions—this step of evaluation first evaluates the effectiveness of selected items, aiming at reducing the amount of resources wasted in developing the OGM-MCL as well as reaching the desired results. The effectiveness of defined functions can be assessed through, for example, some round-group discussions or questionnaires by the decision makers and developers.

- Implementation phase—embraces step 7. It focuses on designing, developing, and implementing the assets (the selected items and the defined functions):

- (Step 7)—deals with (a) making the desired changes and justifications on selected items and then realizing and designing them, and (b) implementing (e.g., programming) the defined functions to make the functions/services available for users.

- Exploration phase: includes the last step (8) of the governance/evaluation process. It takes care of the operation and function of the MCL initiative and the efficiency of its performance at different levels.

- (Step 8)—oversees the operation of the MCL initiative and helps bring it into operation as a service. Once the MCL initiative has operated for a certain period, its efficiency should be evaluated, for example, by following the same procedures used in previous steps. In this step, if any problem related to different parts of the MCL initiative is detected (indicating that the MCL initiative does not work as efficiently as expected), it should then be re-evaluated collaboratively.
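The eight steps and three phases described above can be sketched as a simple lookup structure. This is an illustrative sketch, not the authors' implementation: the names `Phase`, `STEPS`, `steps_in`, and `next_step` are hypothetical, and the rule that a problem found in step 8 returns the process to step 4 is an assumption, since the text only states that the initiative "should then be re-evaluated collaboratively".

```python
from enum import Enum


class Phase(Enum):
    SPECIFICATION = "specification"
    IMPLEMENTATION = "implementation"
    EXPLORATION = "exploration"


# Step number -> (phase, short description), following the list above.
STEPS = {
    1: (Phase.SPECIFICATION, "list objectives and required outcomes"),
    2: (Phase.SPECIFICATION, "identify and select potential items"),
    3: (Phase.SPECIFICATION, "define the main functions"),
    4: (Phase.SPECIFICATION, "evaluate adequacy"),
    5: (Phase.SPECIFICATION, "evaluate feasibility"),
    6: (Phase.SPECIFICATION, "evaluate effectiveness"),
    7: (Phase.IMPLEMENTATION, "adjust items and implement functions"),
    8: (Phase.EXPLORATION, "operate the initiative and evaluate efficiency"),
}


def steps_in(phase):
    """Return the step numbers that belong to the given phase, in order."""
    return [n for n, (p, _) in sorted(STEPS.items()) if p is phase]


def next_step(current, works_efficiently=True):
    """Return the next step number, or None when the process is complete.

    Assumption: an inefficiency detected in step 8 sends the process back
    to the first evaluation step (4). The source does not fix this target.
    """
    if current == 8:
        return None if works_efficiently else 4
    return current + 1
```

For example, `steps_in(Phase.SPECIFICATION)` yields steps 1 to 6, matching the specification phase as defined in the text.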

4.1.1. Creating a List of the EDENCP’s Objectives (Step 1)

- (Objective 1)—determining skills requirements that address the main general and specific skills as well as transversal and transferable competencies that are applicable to both initial and continuing education (based on the principle of education–industry cooperation);

- (Objective 2)—co-designing, developing, and training—which provides guidelines and resources that can be used in training events for either people from the educational side who want to implement education–enterprise actions or for people from the business side who need to reinforce their links with the educational system;

- (Objective 3)—detecting, assessing, and clarifying (policy recommendations)—which represents the synthesis of the experiences developed during the project in the five regions targeted by ED-EN HUB. The recommendations will be drafted by classifying and comparing the main policy objectives to which the creation of the joint structure is associated, the range of developed functions and concrete results achieved, difficulties in the start-up, funding and collaboration models, solutions found in consolidating collaboration, models of public-private collaboration, and governance developed;

- (Objective 4)—creating career guidance that presents a complete and secured accompanied pathway for people from their first choice of orientation (at the age of 14, when compulsory education positions the pupil with regard to choosing) to professional reorientation, through the question of guidance and training in collaboration between the stakeholders in education/training and the company;

- (Objective 5)—organizational benchmarking (benchmarking process description)—which clarifies what collaboration activities are expected to take place by means of the EDENCP.

4.1.2. Identifying and Selecting the Potential Factors, Features, and Elements (Step 2)

4.1.3. Defining the Main Functions of EDENCP (Step 3)

- (Function 1)—developing an appropriate search engine;

- (Function 2)—determining the aspects, components, and features of collaboration;

- (Function 3)—managing training process;

- (Function 4)—providing training execution support;

- (Function 5)—designing curriculum;

- (Function 6)—inserting new competence demands;

- (Function 7)—providing suitable tools to evaluate the performances;

- (Function 8)—providing a proper database/service that introduces and offers promising, validated, and trusted tools.

4.1.4. Evaluating the Adequacy of Selected Factors, Features, and Elements (Step 4)

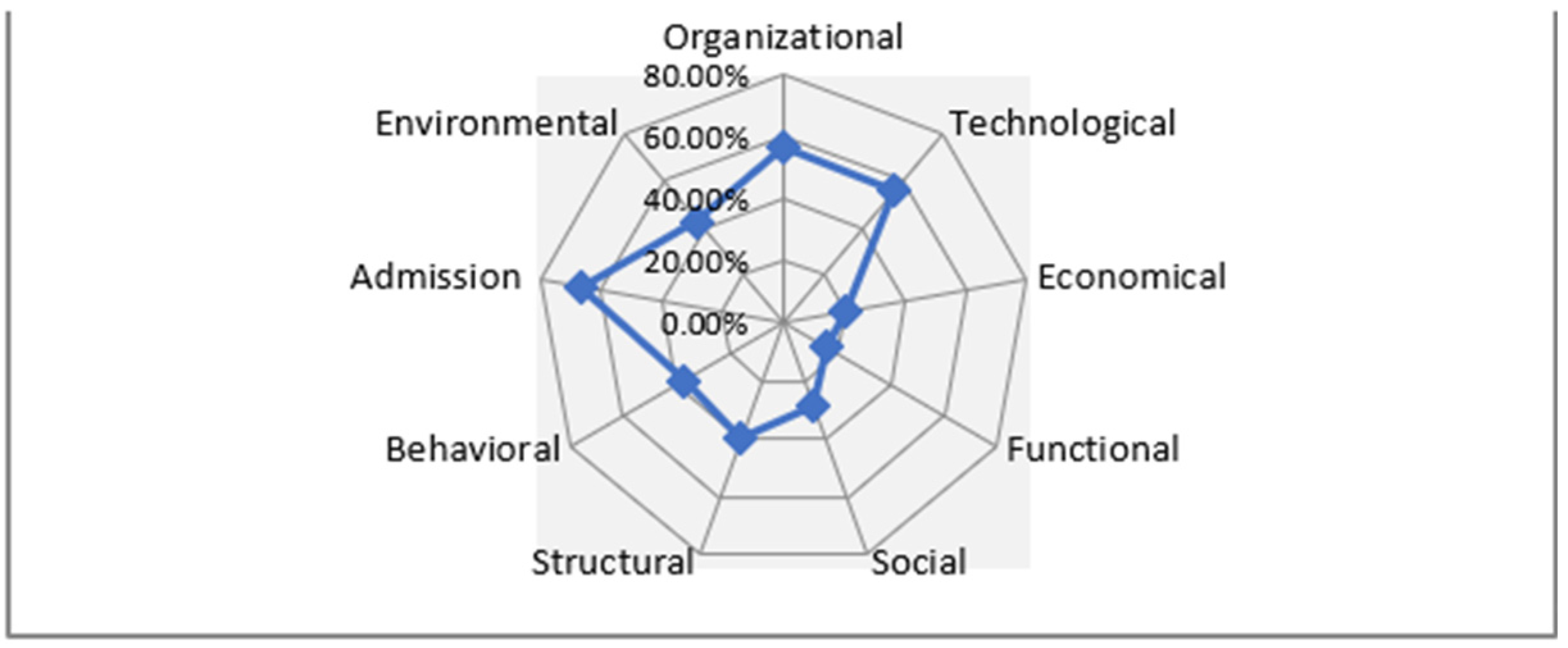

- The nine considered dimensions are generally accepted by all evaluators (partners) because the average popularity given to all dimensions is above 50% (an indicator of acceptance).

- Among the considered dimensions, the organizational dimension and its respective items received the highest average of popularity (83.57%), whereas the economical dimension received the lowest average of popularity (58.50%) from the respondents’ point of view.

- Analyzing all responses given to every single question (in all dimensions) shows that some of the selected and adapted items (those whose average popularity is lower than 50%) need to be revised, improved, changed, or, in some cases, omitted before moving to the next phase of evaluations. In this direction, the feedback provided by the partners offered very good ideas about what other important points need to be addressed in further developments.
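The adequacy check described above can be sketched in a few lines. This sketch assumes that the popularity of an item is the percentage of evaluators who endorse it and that a dimension's average popularity is the mean over its items; the text does not spell out either definition here. The function names and the example ratings are hypothetical and are not data from the study; only the 50% acceptance threshold comes from the text.

```python
from statistics import mean

ACCEPTANCE_THRESHOLD = 50.0  # percent; acceptance indicator stated in the text


def dimension_average(item_popularity):
    """Average popularity (in percent) over all items of one dimension."""
    return mean(item_popularity.values())


def items_to_revise(item_popularity, threshold=ACCEPTANCE_THRESHOLD):
    """Names of items whose popularity falls below the threshold."""
    return sorted(name for name, pct in item_popularity.items() if pct < threshold)


# Hypothetical ratings for one dimension (illustrative only).
example_dimension = {"item A": 80.0, "item B": 60.0, "item C": 40.0}

average = dimension_average(example_dimension)
accepted = average > ACCEPTANCE_THRESHOLD
flagged = items_to_revise(example_dimension)
```

In this hypothetical case the dimension as a whole is accepted, while "item C" is flagged for revision, which mirrors the distinction the text draws between dimension-level acceptance and item-level revision.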

4.1.5. Evaluating the Adequacy of Functions (Step 4)

4.1.6. Evaluating the Feasibility of Selected Factors, Features, and Elements (Step 5)

- The dimension of admission has the highest percentage of popularity (75%), followed by the organizational dimension (71.42%). However, the lowest percentage of popularity (given by the evaluators) belongs to the economical dimension (20%).

- The low percentage of the popularity of some dimensions (in both situations, before and after considering the threshold), namely, economical, functional, and behavioral, shows that these dimensions did not receive high attention (in comparison with other considered dimensions) from the evaluators’ point of view.

- Those dimensions that gained higher percentages of popularity, namely, admission, organizational, and technological, indicate that they have a high potential of feasibility (from the evaluators’ perspective) to be implemented in EDENCP. Thus, the focus of attention should be given to these dimensions.

- The low average of popularity given to the economical dimension (16.66%) and technological dimension (41.26%) shows that the majority of addressed factors, features, and elements in these two dimensions do not have much chance to be implemented in the EDENCP from the feasibility point of view.

- The selected factors, features, and elements should be prioritized in the process of implementation on EDENCP. That is, those that received a higher percentage of popularity (e.g., factors, features, and elements in the social dimension used for function 5, with 100%) need to be given more attention and emphasis.

- The dimensions also should be prioritized in the process of implementation in EDENCP. For example, among the addressed dimensions, the environmental dimension received a higher percentage of popularity (68.25%). In this manner, developers and decision makers can manage the resources for implementation according to dimensions’ feasibility and popularity.

4.1.7. Evaluating the Feasibility of Functions (Step 5)

- (Function 1)—search engine for finding available information and tools on EDENCP: provides a software system that is designed to carry out specific searches related to particular competencies (of members), courses, activities, and supporting tools.

- (Function 2)—collaboration: deals with collaborative practices whereby some members work together to complete a task, solve a problem, and/or achieve a shared goal.

- (Function 3)—managing training: focuses on flexible strategies that can properly manage different aspects of training from program creation to evaluation and prioritizing learning needs.

- (Function 4)—training execution support: provides the needed support for (a) training execution, (b) learning engagement strategies, and (c) implementation of performance-based assessment for training proficiency.

- (Function 5)—designing curricula: is a cyclical and analytical process that helps create a training framework that is able to facilitate and guide the creation of training programs in a particular domain. It integrates different training elements such as learning strategies, processes, materials, and experiences that may help design and develop such training program instructions.

- (Function 6)—inserting new competence demands: is the process of identifying and adding the key competencies and basic skills (e.g., cognitive skills of critical thinking, problem-solving, and interpersonal skills) required to perform teaching and training with success. This function supports the identification of competencies that are in high demand by companies. It is designed for three main situations: (a) when an employer recognizes that the company needs new workers, but the new workers have only just arrived from university and do not have the specifically needed competencies; (b) when the employer recognizes that the existing workers need to improve their competencies or gain new ones for specific tasks; or (c) when workers recognize by themselves that they need to gain some particular competencies. The main outcome of this function is to improve the existing curricula or add new ones based on the demands of the companies.

- (Function 7)—tools to evaluate the performances (benchmarking): This is the function to evaluate the performance of (a) EDENCP in relation to its functions and (b) the workers of the companies against the transversal competencies that they have already gained. For doing so, it first needs a definition of some specific key performance indicators (KPIs) related to each performance.

4.1.8. Evaluating the Effectiveness of Selected Factors, Features, and Elements (Step 6)

- Generally, the results of this evaluation step show how effective the dimensions are from the evaluators’ perspective. Among the addressed dimensions, the organizational dimension obtained the highest average popularity (90%), whereas the technological dimension received the lowest (75%).

- Dimensions and related items whose percentage of popularity in this evaluation is below 80% (the threshold considered for this step) are not regarded as sufficiently effective to be implemented on the EDENCP. Accordingly, the items with a popularity of 75% (highlighted in gray in Table 5) were all removed from the list of considerations.

- In function implementation (step 7), dimensions with a higher percentage of popularity should be prioritized. For example, in the implementation of function 1, priority (subject to available resources and capabilities) should be given to the organizational (95%), structural (95%), admission (90%), and social (80%) dimensions.
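The threshold and prioritization rules in the list above can be illustrated with a minimal Python sketch. It uses the popularity values quoted for function 1 and, purely to show an excluded item, the 75% average reported for the technological dimension. The names `THRESHOLD`, `filter_effective`, and `prioritize` are hypothetical and are not part of the EDENCP.

```python
THRESHOLD = 80.0  # popularity threshold (%) stated for this evaluation step


def filter_effective(scores, threshold=THRESHOLD):
    """Keep only the items whose popularity is at or above the threshold."""
    return {name: pct for name, pct in scores.items() if pct >= threshold}


def prioritize(scores):
    """Order item names from highest to lowest popularity.

    Python's sort is stable, so items with equal popularity keep their input order.
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [name for name, _ in ranked]


# Values quoted in the text for function 1. The 75% entry is the technological
# average, added here only as an illustration of an item below the threshold.
function_1 = {
    "organizational": 95.0,
    "structural": 95.0,
    "admission": 90.0,
    "social": 80.0,
    "technological": 75.0,
}

effective = filter_effective(function_1)
priority_order = prioritize(effective)
print(priority_order)
```

Under these assumptions, the 75% item is dropped and the remaining dimensions are ordered by popularity, which matches the priority order given for function 1.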

4.1.9. Evaluating the Effectiveness of Functions (Step 6)

- Can the functions achieve the desired/targeted goals?

- Can the functions gain a certain degree of success?

- Can the functions produce the desired effect?

- Can the functions be operated according to the project plan?

4.1.10. Adjusting the Selected Factors, Features, and Elements (Step 7)

4.1.11. Implementing the Functions (Step 7)

- Individual/student level—provides services such as job seeking, internal and external mobility, certification, guidance, reconnection to the market, self-assessment, and social recognition.

- Company/enterprise level—delivers services such as job and skill planning, internal mobility, recruitment, planning internships, sharing the vision with training and education actors, co-training, and developing partnerships.

- Educational institutes level—provides services such as planning internships, finding required skills, meeting societal and employment actual and future needs, sharing the vision with companies, co-training, and developing partnerships.

5. Evaluation

Validation of the Organizational and Governance Model for Use in EDENCP

- Determining the objectives of OGM-MCL validation;

- Determining the needed tools for evaluation (questionnaires and interviews);

- Determining the suitable criteria and parameters for evaluation of the OGM-MCL appropriateness (completeness, purposefulness, perceived usefulness, perceived ease of use, cost-effectiveness, and reasonability);

- Developing related survey questionnaires;

- Identifying the potential evaluators (experts, partners, and stakeholders in the projects);

- Preparing and conducting required interviews with evaluators;

- Performing validation tests;

- Analyzing the collected feedback;

- Reporting the results of the validation.

- All criteria and parameters considered for evaluating the validity and appropriateness of the OGM-MCL obtained an average percentage above 50%, which is a reasonable indicator of general acceptance;

- Among the eleven questions in the survey questionnaire, only the three questions related to the criteria “perceived ease of use” and “cost-effective” had a percentage between 56% and 63%. The other eight questions had a percentage ≥ 68.75%. The average percentage across all criteria and parameters is 72%, which is a convincing indicator of model compliance.

- The feedback shows no “strong disagreement” with any of the addressed points (criteria and parameters). In fact, there are only seven “disagreements” in total, which is not high.

- Taken together, there were 39 “agreements” and 28 “strong agreements”, which indicates a considerably positive attitude towards the addressed criteria and parameters and towards the validity and appropriateness of the OGM-MCL.

- In total, only 10 answers were “I am not sure” and 4 were “I don’t know” (14 of the 88 answers collected), which is a low rate.
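The figures in the list above can be cross-checked against the answer counts in the validation results table with a short Python sketch. The scoring scheme is an assumption, not something the article states: SDA=1, DA=2, A=3, SA=4, with “I am not sure” (IANS) and “I don’t know” (IDK) scored as 0 and the sum divided by the number of feedback forms. This scheme reproduces the reported weighted averages for several rows (for example Q3 and Q4) but not every row, so it should be read as an illustration only. All identifiers are hypothetical.

```python
# Assumed scoring (not confirmed by the source): SDA=1, DA=2, A=3, SA=4;
# "I am not sure" (IANS) and "I don't know" (IDK) contribute 0.
ORDER = ("SDA", "DA", "A", "SA", "IANS", "IDK")
WEIGHTS = {"SDA": 1, "DA": 2, "A": 3, "SA": 4, "IANS": 0, "IDK": 0}
MAX_SCORE = 4

# Answer counts per question, copied from the validation results table.
RESPONSES = {
    "Q1": (0, 0, 3, 3, 1, 1),
    "Q2": (0, 0, 3, 3, 1, 1),
    "Q3": (0, 1, 4, 3, 0, 0),
    "Q4": (0, 1, 4, 2, 1, 0),
    "Q5": (0, 0, 5, 3, 0, 0),
    "Q6": (0, 1, 5, 2, 0, 0),
    "Q7": (0, 1, 3, 2, 2, 0),
    "Q8": (0, 1, 4, 1, 2, 0),
    "Q9": (0, 2, 1, 2, 1, 2),
    "Q10": (0, 0, 3, 4, 1, 0),
    "Q11": (0, 0, 4, 3, 1, 0),
}


def weighted_average(counts):
    """Average score per feedback form under the assumed weights."""
    score = sum(WEIGHTS[option] * n for option, n in zip(ORDER, counts))
    return score / sum(counts)


def percentage(counts):
    """Weighted average expressed as a percentage of the maximum score."""
    return 100 * weighted_average(counts) / MAX_SCORE


def totals(responses):
    """Total number of answers per option across all questions."""
    return {
        option: sum(counts[i] for counts in responses.values())
        for i, option in enumerate(ORDER)
    }


answer_totals = totals(RESPONSES)
print(answer_totals)
print(weighted_average(RESPONSES["Q3"]), percentage(RESPONSES["Q3"]))
```

The totals per answer option agree with the counts quoted in the list (7 disagreements, 39 agreements, 28 strong agreements, 10 “I am not sure”, and 4 “I don’t know”).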

6. Discussion and Conclusions

- We identified that some concepts (e.g., MC, MCL, OGM-MCL, and governance process) are often vague and confusing for the partners and stakeholders of the project.

- Theoretically and conceptually evaluating different aspects of the OGM-MCL is an arduous and daunting task.

Appendix A

| The Questionnaire Used for Assessing the Adequacy of OGM-MCL on EDENCP | | |
|---|---|---|
| Considered Elements | Main Features That Might Be Integrated into EDENCP | Checklist |
| Organizational dimension | It is important that the EDENCP be a user-driven service (users may be co-creators of the service). | SDA / DA / A / SA / I don’t know / I’m not sure |
| Organizational dimension | It is important that the EDENCP engages diverse groups (e.g., the general public, experts, and professionals) in the process of learning. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Environmental dimension | It is important that the EDENCP be open for all people to contribute. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Environmental dimension | It is important that the EDENCP provides three levels of access (for three groups of users: partners, administrators, and general users). | SDA / DA / A / SA / I don’t know / I’m not sure |
| Admission dimension (Inclusion) | It is important that the EDENCP facilitates the process of joining (inclusion) the groups, hubs, and communities. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Admission dimension (Inclusion) | It is important that the EDENCP provides free access for all users. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Admission dimension (Accessibility and Proximity) | To promote the quality of contributions and develop transparency, it is important that the EDENCP reduces anonymity. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Admission dimension (Accessibility and Proximity) | It is important that the username be associated with the user’s contributions (to facilitate the monitoring of contributions). | SDA / DA / A / SA / I don’t know / I’m not sure |
| Social dimension (Collaboration) | It is important that the EDENCP builds a network for career development. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Social dimension (Collaboration) | It is important that the EDENCP could provide a "discussion forum" for collaboration. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Content Management) | It is important that the users could support the process of creating, sharing, and developing the content. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Content Management) | It is important that the EDENCP could continually develop and update the content. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Operation Management) | It is important that the EDENCP could save users’ personal information and contributions to their profiles. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Operation Management) | It is important that the EDENCP could provide a "monitoring system" to constantly monitor the transactions. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Interaction Management) | It is important that the EDENCP could provide an appropriate service for internal interactions such as sharing the resources, training, and learning materials. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Interaction Management) | It is important that the EDENCP could provide an appropriate service for external interactions such as exchanging expertise and findings. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Human Resource Management) | The users should be treated equally. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Functional dimension (Human Resource Management) | It is important that the EDENCP could provide an advisory board. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Economical dimension (Supports and Services) | How important do you think the following 2 services could be for the economic sustainability of the platform: | |
| Economical dimension (Supports and Services) | Benefiting from private and public funding, grants, financial aids and donations, capital from investors and sponsors, and advertising. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Economical dimension (Supports and Services) | Providing supportive training and learning services for schools, organizations, institutions, businesses, and companies. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Technological dimension | It is important that the EDENCP could provide web-based communication. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Technological dimension | It is important that the EDENCP could provide a search engine that helps participants to find particular information and services provided in the hub. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Structural dimension (Participants) | It is important that users from any age, background, culture, and gender could contribute to EDENCP. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Structural dimension (Participants) | Users will not be paid and they will contribute to the volunteer base. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Structural dimension (Roles and Tasks) | It is important that the users could play different roles (e.g., expert, advisor, trainer, trainee, editorial, researcher, technical, managerial) based on their qualifications. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Structural dimension (Roles and Tasks) | It is important that the users could engage in multiple tasks (e.g., training execution, providing learning content, delivering the contents, exchanging the contents, executing, providing support, commenting, reporting). | SDA / DA / A / SA / I don’t know / I’m not sure |
| Behavioral dimension | The partners and administrators have the authority to bring about structural changes in the EDENCP. | SDA / DA / A / SA / I don’t know / I’m not sure |
| Behavioral dimension | The general users can contribute to decision-making processes. | SDA / DA / A / SA / I don’t know / I’m not sure |

Appendix B

| The Questionnaire Used for Assessing the Feasibility of OGM-MCL on EDENCP | | | |
|---|---|---|---|
| Functions | Considered Factors and Features That Might be Implemented on EDENCP | Answers | |
| F1 | It should be open to all and be used for multiple purposes (e.g., searching, sorting info) | YES | NO |
| F1 | It should have different access levels and provide a comprehensive list of available info | YES | NO |
| F2 | It should be able to engage different members in multiple communication tasks | YES | NO |
| F2 | It should be able to create collaboration spaces around different domains/topics | YES | NO |
| F3 | It should be able to use different characteristics to manage the training planning | YES | NO |
| F3 | It should be able to consider the use of different training modes in the training planning | YES | NO |
| F4 | It should be able to define clear inclusive rules for admission | YES | NO |
| F4 | It should be able to provide Learning Management System (e.g., Moodle) | YES | NO |
| F5 | It should be able to create diverse groups profile for contribution | YES | NO |
| F5 | It should be able to make open to all the processes of curriculum design | YES | NO |
| F6 | It should make open to all the insertion of new competencies demands | YES | NO |
| F6 | It should be able to suggest concepts for competence demand writing | YES | NO |
| F7 | It should be able to authorize users for taking different roles in the evaluation process | YES | NO |
| F7 | It should have easy and free access to verification of the evaluation results | YES | NO |

Appendix C

| Questionnaire for Evaluating the Effectiveness of Considered Dimensions of Collaboration and Their Related Factors, Features, and Elements that Have the Potential to be Implemented on EDENCP | |||||

|---|---|---|---|---|---|

| 1. Questions for Search Engines for Finding Information and Tools | 0 | 1 | 2 | 3 | 4 |

| 1. It is effective to be used for multiple purposes (e.g., searching, sorting info) | |||||

| 2. It is effective to be flexible to change (e.g., open to add new info or keywords for searching) | |||||

| 3. It is effective to have cross-functional capabilities (e.g., searching multiple features) | |||||

| 4. It is effective to be customizable (e.g., contains searching features to adapt to users’ profiles) | |||||

| 5. It is effective to use advanced functions (e.g., search in other external hubs) | |||||

| 2. Questions for Collaboration | |||||

| 1. It is effective to engage a diverse group of people in multiple communication tasks (e.g., chat forum) | |||||

| 2. It is effective to create collaboration spaces around different domains/topics | |||||

| 3. It is effective to promote the available skills or make a set of needed competences | |||||

| 4. It is effective to invite users to voluntarily contribute to collaborative activities based on their interest | |||||

| 3. Questions for Managing Training | |||||

| 1. It is effective to use different characteristics to manage the training planning (e.g., cost, success rate, learning needs) | |||||

| 2. It is effective to use different training modes (blended learning) in the training planning | |||||

| 3. It is effective to consider inclusive training and learning | |||||

| 4. It is effective to give freedom to choose the courses (help to prioritize learning needs based on the user’s profile) | |||||

| 5. It is effective to create a training plan such as evaluation of the contents collectively (have a procedure to perform) | |||||

| 4. Questions for Training Execution Support | |||||

| 1. It is effective to analyze what kind of approach or balance can be best used in training execution for different training modes (virtual vs. traditional classes) | |||||

| 2. It is effective to define clear inclusive rules for admission | |||||

| 3. It is effective to use analytics over collected performance data to improve further training execution and its learning engagement strategies (i.e., knowledge management) | |||||

| 5. Questions for Design Curriculum | |||||

| 1. It is effective to create diverse groups profile for contribution | |||||

| 2. It is effective to design a curriculum based on the demands of companies | |||||

| 3. It is effective to share training elements (e.g., learning strategies, processes, materials, and experiences) for collaborative designing curriculum | |||||

| 4. It is effective to facilitate the process of continuous curriculum adaption | |||||

| 6. Questions for Insertion of New Competencies Demands | |||||

| 1. It is effective to make open to all the insertion of new competencies demands | |||||

| 2. It is effective to create an easy invitation procedure for the contribution of new companies | |||||

| 3. It is effective to encourage volunteer and active participation in finding new competence demands | |||||

| 4. It is effective to facilitate open discussion about the new competence demands | |||||

| 7. Questions for Tools to Evaluate Performance | |||||

| 1. It is effective to create different levels of evaluation | |||||

| 2. It is effective to authorize users for taking different roles in the evaluation process | |||||

| 3. It is effective to have easy and free access to verification of the evaluation results | |||||

| 4. It is effective to create different roles for the evaluation procedure (e.g., evaluators, users, programmers) | |||||

References

- Zamiri, M.; Camarinha-Matos, L.M. Mass Collaboration and Learning: Opportunities, Challenges, and Influential Factors. Appl. Sci. 2019, 9, 2620.

- Camarinha-Matos, L.M.; Afsarmanesh, H. Collaborative networks: A new scientific discipline. Intell. Manuf. 2005, 16, 439–452.

- Cress, U.; Jeong, H.; Moskaliuk, J. Mass Collaboration as an Emerging Paradigm for Education? Theories, Cases, and Research Methods. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Zamiri, M.; Camarinha-Matos, L.M. Organizational Structure for Mass Collaboration and Learning. In Technological Innovation for Industry and Service Systems; DoCEIS 2019. IFIP Advances in Information and Communication Technology; Springer: Cham, Switzerland, 2019; Volume 553, pp. 14–23.

- West, R.E.; Williams, G.S. I don’t think that word means what you think it means: A proposed framework for defining learning communities. Educ. Technol. Res. Dev. 2017, 65, 1569–1582.

- Zamiri, M.; Camarinha-Matos, L.M. A Mixed Method for Assessing the Reliability of Shared Knowledge in Mass Collaborative Learning Community. In Technological Innovation for Applied AI Systems; DoCEIS 2021. IFIP Advances in Information and Communication Technology; Springer: Cham, Switzerland, 2021; Volume 626.

- Hew, K.F. Determinants of success for online communities: An analysis of three communities in terms of members’ perceived professional development. Behav. Inf. Technol. 2009, 28, 433–445.

- Wang, P.; Ramiller, N.C. Community Learning in Information Technology Innovation. MIS Q. 2009, 33, 709–734.

- Hipp, K.K.; Huffman, J.B. Professional Learning Communities: Assessment-Development-Effects. In Proceedings of the International Congress for School Effectiveness and Improvement, Sydney, Australia, 5–8 January 2003.

- Trust, T. Professional Learning Networks Designed for Teacher Learning. J. Digit. Learn. Teach. Educ. 2012, 28, 133–138.

- Wenger, E. Communities of Practice: Learning, Meaning, and Identity, 1st ed.; Cambridge University Press: Cambridge, UK, 1999.

- Habernal, I.; Daxenberger, J.; Gurevych, I. Mass Collaboration on the Web: Textual Content Analysis by Means of Natural Language Processing. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Fischer, G. Exploring, Understanding, and Designing Innovative Socio-Technical Environments for Fostering and Supporting Mass Collaboration. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Eimler, S.C.; Neubaum, G.; Mannsfeld, M.; Krämer, N.C. Altogether now! Mass and small group collaboration in (open) online courses—A case study. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Cress, U.; Feinkohl, I.; Jirschitzka, J.; Kimmerle, J. Mass collaboration as co-evolution of cognitive and social systems. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Fu, W.T. From distributed cognition to collective intelligence: Supporting cognitive search to facilitate massive collaboration. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Halatchliyski, I. Theoretical and empirical analysis of networked knowledge. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Morris, T.; Mietchen, D. Collaborative Structuring of Knowledge by Experts and the Public. In Proceedings of the 5th Open Knowledge Conference, London, UK, 24 April 2010.

- Diver, P.; Martinez, I. MOOCs as a massive research laboratory: Opportunities and challenges. Distance Educ. 2015, 36, 5–25.

- Flanagin, A.J.; Metzger, M.J. From Encyclopædia Britannica to Wikipedia—Generational Differences in the Perceived Credibility of Online Encyclopedia Information. Inf. Commun. Soc. 2011, 14, 355–374.

- Wikipedia. Available online: https://en.wikipedia.org/wiki/Wikipedia (accessed on 10 May 2022).

- Gunelius, S. Overview of Digg. Available online: https://www.thoughtco.com/overview-of-digg-3476441 (accessed on 10 May 2022).

- Digg: What Is Digg? Available online: https://www.wordstream.com/digg (accessed on 10 May 2022).

- Yahoo. Available online: https://www.yahoo.com/ (accessed on 10 May 2022).

- SETI@home. Available online: https://setiathome.berkeley.edu/ (accessed on 10 May 2022).

- Scratch. Available online: https://scratch.mit.edu/ (accessed on 10 May 2022).

- GalaxyZoo. Available online: https://www.zooniverse.org/projects/zookeeper/galaxy-zoo/ (accessed on 10 May 2022).

- Foldit. Available online: https://fold.it/ (accessed on 10 May 2022).

- Wikipedia. Available online: https://en.wikipedia.org/wiki/Delphi_method (accessed on 10 May 2022).

- Climate CoLab. Available online: https://www.climatecolab.org/page/about (accessed on 10 May 2022).

- Wikipedia. Available online: https://en.wikipedia.org/wiki/Assignment_Zero (accessed on 10 May 2022).

- DonationCoder. Available online: https://www.donationcoder.com/help/faqs (accessed on 10 May 2022).

- Expert Exchange. Available online: https://go.experts-exchange.com/plansandpricing?mixedRegTrack=headerHome (accessed on 10 May 2022).

- Waze. Available online: https://www.waze.com/live-map/ (accessed on 10 May 2022).

- Makerspace. Available online: https://www.makerspaces.com/what-is-a-makerspace/ (accessed on 10 May 2022).

- SAP Community. Available online: https://community.sap.com/ (accessed on 10 May 2022).

- Camarinha-Matos, L.M.; Afsarmanesh, H. Collaborative Networks: Value creation in a knowledge society. In Proceedings of the International Conference on Programming Languages for Manufacturing (PROLAMAT’06), Shanghai, China, 14–16 June 2006.

- Camarinha-Matos, L.M.; Afsarmanesh, H.; Ermilova, E.; Ferrada, F.; Klen, A.; Jarimo, T. ARCON reference models for collaborative networks. In Collaborative Network: Reference Modeling; Springer: Berlin/Heidelberg, Germany, 2008; pp. 83–112.

- Ellmann, S.; Eschenbaecher, J. Collaborative Network Models: Overview and Functional Requirements. In Virtual Enterprise Integration: Technological and Organizational Perspectives; Idea Group Pub: Hershey, PA, USA, 2005; pp. 102–123.

- Camarinha-Matos, L.M.; Afsarmanesh, H. Behavioral aspects in collaborative enterprise networks. In Proceedings of the 9th IEEE International Conference on Industrial Informatics, Lisbon, Portugal, 26–29 July 2011.

- Esteves, J.; Curto, J.A. Risk and Benefits Behavioral Model to Assess Intentions to Adopt Big Data. Intell. Stud. Bus. 2013, 3, 37–46.

- Li, P.C.; Lu, H.K.; Liu, S.C. Towards an Education Behavioral Intention Model for E-Learning Systems: An Extension of UTAUT. Theor. Appl. Inf. Technol. 2013, 47, 1120–1127.

- Leading SDG 4—Education 2030. Available online: https://en.unesco.org/themes/education2030-sdg4 (accessed on 10 May 2022).

- Maki, P.L. Developing an assessment plan to learn about student learning. Acad. Librariansh. 2002, 28, 8–13.

- Harlen, W. The Quality of Learning: Assessment alternatives for primary education. In Primary Review Research Survey 3/4; Faculty of Education, University of Cambridge: Cambridge, UK, 2007.

- Nagowah, S.D.; Nagowah, L. Assessment Strategies to enhance Students’ Success. In Proceedings of the IASK International Conference “Teaching and Learning”, Porto, Portugal, 7–9 December 2009.

- Aihara, S. Assessment Indicators as a Tool of Process Monitoring, Benchmarking and Learning Outcomes Assessment: Features of Two Types Indicators. Inf. Eng. Express 2016, 2, 45–54.

- Sae-Khow, J. Developing of Indicators of an E-Learning Benchmarking Model for Higher Education Institutions. Turk. Online J. Educ. Technol. 2014, 13, 35–43.

- Scheffel, M.; Drachsler, H.; Stoyanov, S.; Specht, M. Quality Indicators for Learning Analytics. Educ. Technol. Soc. 2014, 17, 117–132.

- Ferreira, R.P.; Silva, J.N.; Strauhs, F.R.; Soares, A.L. Performance Management in Collaborative Networks: A Methodological Proposal. Univers. Comput. Sci. 2011, 17, 1412–1429.

- Dlouhá, J.; Barton, A.; Janoušková, S.; Dlouhý, J. Social learning indicators in sustainability-oriented regional learning networks. J. Clean. Prod. 2013, 49, 64–73.

- Shavelson, R.J.; Zlatkin-Troitschanskaia, O.; Mariño, J.P. Performance indicators of learning in higher education institutions: An overview of the field. In Research Handbook on Quality, Performance and Accountability in Higher Education; Hazelkorn, E., Coates, H., McCormick, A.C., Eds.; Edward Elgar Publishing: Cheltenham, UK, 2018.

- Ferreira, P.S.; Shamsuzzoha, A.H.M.; Toscano, C.; Cunha, P. Framework for performance measurement and management in a collaborative business environment. Int. J. Product. Perform. Manag. 2012, 61, 672–690.

- Bititci, U.; Garengo, P.; Dörfler, V.; Nudurupati, S. Performance measurement: Challenges for tomorrow. Int. J. Manag. Rev. 2012, 14, 305–327.

- Varamäki, E.; Kohtamäki, M.; Järvenpää, M.; Vuorinen, T.; Laitinen, E.K.; Sorama, K.; Wingren, T.; Vesalainen, J.; Helo, P.; Tuominen, T.; et al. A framework for network level performance measurement system in SME networks. Int. J. Netw. Virtual Organ. 2008, 3, 415–435.

- Adel, R. Collaborative networks’ performance index. Middle East J. Manag. 2015, 2, 212–230.

- Camarinha-Matos, L.M.; Abreu, A. Performance indicators for collaborative networks based on collaboration benefits. Prod. Plan. Control. 2007, 18, 592–609.

- Haddadi, F.; Yaghoobi, T. Key indicators for organizational performance measurement. Manag. Sci. Lett. 2014, 4, 2021–2030.

- Peffers, K.; Tuunanen, T.; Gengler, C.E.; Rossi, M.; Hui, W.; Virtanen, V.; Bragge, J. The Design Science Research Process: A Model for Producing and Presenting Information Systems Research. In Proceedings of the 1st International Conference on Design Science Research in Information Systems and Technology, Claremont, CA, USA, 24–25 February 2006.

- Geerts, G.L. A design science research methodology and its application to accounting information systems research. Int. J. Account. Inf. Syst. 2011, 12, 142–151.

- ed-en hub. Available online: http://edenhub.eu/ (accessed on 10 May 2022).

- Davis, F.D.; Bagozzi, R.P.; Warshaw, P.R. User Acceptance of Computer Technology: A Comparison of Two Theoretical Models. Manag. Sci. 1989, 35, 982–1003.

- Ulrich, H.H.; Harrer, A.; Göhnert, T.; Hecking, T. Applying Network Models and Network Analysis Techniques to the Study of Online Communities. In Mass Collaboration and Education; Computer-Supported Collaborative Learning Series; Cress, U., Moskaliuk, J., Jeong, H., Eds.; Springer: Cham, Switzerland, 2016; Volume 16.

- Richardson, M.; Domingos, P. Building Large Knowledge Bases by Mass Collaboration. In Proceedings of the International Conference on Knowledge Capture—K-CAP ’03, Sanibel Island, FL, USA, 23–25 October 2003; pp. 129–137.

- Zamiri, M.; Camarinha-Matos, L.M.; Sarraipa, J. Meta-Governance Framework to Guide the Establishment of Mass Collaborative Learning Communities. Computers 2022, 11, 12.

| Considered Dimensions | Number of Questions Per Dimension | Average of Popularity Gained from Adequacy Evaluation |
|---|---|---|
| Organizational | 7 | 83.57% |
| Environmental | 7 | 69.28% |
| Admission | 12 | 82.91% |
| Social | 7 | 70.71% |
| Functional | 25 | 66% |
| Economical | 10 | 58.50% |
| Technological | 9 | 76.66% |
| Structural | 15 | 71% |
| Behavioral | 8 | 63.75% |

| ED-EN HUB Main Processes | |||||

|---|---|---|---|---|---|

| EDENCP Functions | EDENCP Objectives | ||||

| Determine Skills Requirements | Co-Design, Develop, and Training | Detect, Assess, and Certify | Career Guidance | Organizational Benchmarking | |

| F1. Search engine | x | x | x | x | x |

| F2. Collaboration | x | x | x | x | x |

| F3. Managing training | x | ||||

| F4. Training execution support | x | ||||

| F5. Design Curriculum | x | x | |||

| F6. Insertion of new competencies demands | x | x | x | ||

| F7. Tools to evaluate performance (benchmarking) | x | x | x | ||

| F8. Database/service offering different tools | x | x | x | x | x |

| Considered Dimensions | Results of Adequacy Evaluation | Number of Questions per Dimension | Number of Questions per Dimension with Popularity ≥ 80% | Percentage of Questions with Popularity ≥ 80% | The Base for Consideration in Feasibility Evaluation |
|---|---|---|---|---|---|
| Organizational | 83.57% | 7 | 5 | 71.42% | |
| Environmental | 69.28% | 7 | 3 | 42.85% | |
| Admission | 82.91% | 12 | 9 | 75% | |
| Behavioral | 63.75% | 8 | 3 | 37.50% | |
| Structural | 71% | 15 | 7 | 46.66% | |
| Social | 70.71% | 7 | 4 | 57.14% | |
| Functional | 66% | 25 | 7 | 28% | |
| Economical | 58.50% | 10 | 2 | 20% | |
| Technological | 76.66% | 9 | 6 | 66.66% | |
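The percentage column in the table above appears to be the number of questions meeting the 80% threshold divided by the number of questions in the dimension. The following Python sketch recomputes it. It assumes the values are truncated (not rounded) to two decimals, which is an inference from entries such as 5/7 being reported as 71.42%; the function name `share_meeting_threshold` is hypothetical.

```python
def share_meeting_threshold(meeting, total):
    """Percentage of questions meeting the threshold, truncated to two decimals.

    Integer arithmetic is used so that truncation is exact (assumed behavior,
    inferred from the reported values rather than stated in the source).
    """
    return (meeting * 10000 // total) / 100


# (questions meeting the threshold, questions per dimension), from the table above.
QUESTIONS = {
    "Organizational": (5, 7),
    "Environmental": (3, 7),
    "Admission": (9, 12),
    "Behavioral": (3, 8),
    "Structural": (7, 15),
    "Social": (4, 7),
    "Functional": (7, 25),
    "Economical": (2, 10),
    "Technological": (6, 9),
}

shares = {name: share_meeting_threshold(m, t) for name, (m, t) in QUESTIONS.items()}
for name, share in shares.items():
    print(f"{name}: {share}%")
```

Under the truncation assumption, the recomputed shares agree with every row of the table.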

| Functions | Average of Popularity of Functions | Organizational | Environmental | Admission | Behavioral | Structural | Social | Functional | Economical | Technological |

|---|---|---|---|---|---|---|---|---|---|---|

| F1. Search engine | 55.55% | 83.33% | 55.56% | 66.67% | 50.00% | 66.67% | 66.67% | 33.33% | 16.67% | 61.11% |

| F2. Collaboration | 53.08% | 72.22% | 83.33% | 66.67% | 11.11% | 72.22% | 44.44% | 44.44% | 33.33% | 50.00% |

| F3. Managing training | 50.61% | 72.22% | 94.44% | 22.22% | 66.67% | 61.11% | 38.89% | 72.22% | 11.11% | 16.67% |

| F4. Training execution support | 52.46% | 50.00% | 83.33% | 50.00% | 66.67% | 50.00% | 61.11% | 55.56% | 11.11% | 44.44% |

| F5. Design curriculum | 53.70% | 72.22% | 38.89% | 83.33% | 55.56% | 38.89% | 100.00% | 61.11% | 5.56% | 27.78% |

| F6. Competences demands | 52.46% | 50.00% | 61.11% | 50.00% | 83.33% | 61.11% | 61.11% | 38.89% | 22.22% | 44.44% |

| F7. Evaluate performance | 51.85% | 55.56% | 61.11% | 61.11% | 66.67% | 61.11% | 44.44% | 55.56% | 16.67% | 44.44% |

| Av | 52.81% | 65.07% | 68.25% | 57.14% | 57.14% | 58.73% | 59.52% | 50.58% | 16.66% | 41.26% |

| Functions | Organizational | Environmental | Admission | Behavioral | Structural | Social | Functional | Economical | Technological |
|---|---|---|---|---|---|---|---|---|---|
| F1. Search engine | 95% | 90% | 95% | 80% | 75% | | | | |
| F2. Collaboration | 90% | 95% | 75% | 90% | | | | | |
| F3. Managing training | 95% | 95% | 80% | 75% | 90% | | | | |
| F4. Training execution support | 90% | 80% | 80% | | | | | | |
| F5. Design curriculum | 80% | 95% | 95% | 75% | | | | | |
| F6. Competences demands | 80% | 90% | 80% | 75% | | | | | |
| F7. Evaluate performance | 75% | 80% | 90% | 80% | | | | | |
| Sum | 90% | 87% | 85% | 85% | 84% | 82.5% | 82.5% | 75% | |

| Criteria and Parameters | Questions | SDA | DA | A | SA | IDK | IANS |
|---|---|---|---|---|---|---|---|
| Completeness | | | | | | | |
| | | | | | | | |
| Purposefulness | | | | | | | |
| | | | | | | | |
| Perceived usefulness | | | | | | | |
| | | | | | | | |
| Perceived ease of use | | | | | | | |
| | | | | | | | |
| Cost-effective | | | | | | | |
| Reasonability | | | | | | | |
| | | | | | | | |

| Criteria and Parameters | Feedback Number | Questions | Weighted Average | Percentages | SDA | DA | A | SA | IANS | IDK |
|---|---|---|---|---|---|---|---|---|---|---|
| Completeness | 8 | Q1 | 3 | 75% | 0 | 0 | 3 | 3 | 1 | 1 |
| | 8 | Q2 | 3 | 75% | 0 | 0 | 3 | 3 | 1 | 1 |
| Purposefulness | 8 | Q3 | 3.25 | 81.25% | 0 | 1 | 4 | 3 | 0 | 0 |
| | 8 | Q4 | 2.75 | 68.75% | 0 | 1 | 4 | 2 | 1 | 0 |
| Perceived usefulness | 8 | Q5 | 3.28 | 82% | 0 | 0 | 5 | 3 | 0 | 0 |
| | 8 | Q6 | 3.13 | 78.25% | 0 | 1 | 5 | 2 | 0 | 0 |
| Perceived ease of use | 8 | Q7 | 2.38 | 59.50% | 0 | 1 | 3 | 2 | 2 | 0 |
| | 8 | Q8 | 2.25 | 56.25% | 0 | 1 | 4 | 1 | 2 | 0 |
| Cost-effective | 8 | Q9 | 2.50 | 62.50% | 0 | 2 | 1 | 2 | 1 | 2 |
| Reasonability | 8 | Q10 | 3.14 | 78.50% | 0 | 0 | 3 | 4 | 1 | 0 |
| | 8 | Q11 | 3 | 75% | 0 | 0 | 4 | 3 | 1 | 0 |
| Average | - | - | 2.88 | 72% | 0 | 7 | 39 | 28 | 10 | 4 |
| Max | - | - | 4.00 | 100% | 55 | 55 | 55 | 55 | 55 | 55 |
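The Percentages column in the table above is consistent with dividing each weighted average by the maximum score of 4 shown in the Max row. The following is a minimal sketch of that conversion, together with the overall mean of the eleven weighted averages. It assumes a 4-point maximum and rounding to two decimals; the names `to_percentage`, `WEIGHTED_AVERAGES`, and `MAX_SCORE` are hypothetical and not taken from the article.

```python
# Weighted averages per question, copied from the table above.
WEIGHTED_AVERAGES = {
    "Q1": 3.0, "Q2": 3.0, "Q3": 3.25, "Q4": 2.75,
    "Q5": 3.28, "Q6": 3.13, "Q7": 2.38, "Q8": 2.25,
    "Q9": 2.50, "Q10": 3.14, "Q11": 3.0,
}

# Assumption: the maximum attainable score is 4, as given in the "Max" row.
MAX_SCORE = 4.0


def to_percentage(weighted_average: float, max_score: float = MAX_SCORE) -> float:
    """Express a weighted average as a percentage of the maximum score."""
    return round(weighted_average / max_score * 100, 2)


# Per-question percentages.
percentages = {q: to_percentage(v) for q, v in WEIGHTED_AVERAGES.items()}

# Overall mean of the weighted averages and its percentage equivalent.
overall_average = round(sum(WEIGHTED_AVERAGES.values()) / len(WEIGHTED_AVERAGES), 2)
overall_percentage = to_percentage(overall_average)

for question, percentage in percentages.items():
    print(f"{question}: {percentage}%")
print(f"Average: {overall_average} ({overall_percentage}%)")
```

Under these assumptions, the sketch reproduces the per-question percentages and the Average row (2.88 and 72%). It does not attempt to re-derive the weighted averages from the response counts, because the scoring scheme for the answer options is not stated in this part of the text.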

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zamiri, M.; Sarraipa, J.; Camarinha-Matos, L.M.; Jardim-Goncalves, R. An Organizational and Governance Model to Support Mass Collaborative Learning Initiatives. Appl. Sci. 2022, 12, 8356. https://doi.org/10.3390/app12168356
