A General Framework Based on Machine Learning for Algorithm Selection in Constraint Satisfaction Problems

Abstract

1. Introduction

2. Background

1. The first layer contains the domain-related aspects such as the instances, their characterization, and the available algorithms to choose from.

2. The second layer “learns” which algorithm to apply given the features of the problem at hand.

3. The third layer applies the selected algorithm to solve unseen instances.

2.1. Constraint Satisfaction Problems

Variable Ordering Heuristics

- Domain (DOM) selects the variable with the smallest number of available values in its domain. The idea is to take the most restricted variable among those that have not been instantiated yet and, in doing so, reduce the branching factor of the search [50].

- Kappa (KAPPA) selects the variable in such a way that the resulting subproblem minimizes the value of the parameter $\kappa$ of the instance [51]. With this heuristic, the search branches on the variable that is estimated to be the most constrained, yielding the least constrained subproblem, that is, the one with the smallest $\kappa$. For an instance, $\kappa$ is calculated as $$\kappa = \frac{-\sum_{c \in C} \log_2(1 - p_c)}{\sum_{x \in X} \log_2(|D_x|)},$$ where $C$ is the set of constraints, $X$ is the set of variables, $p_c$ is the fraction of forbidden pairs of values in constraint $c$, and $D_x$ is the domain of variable $x$.

- Weighted Degree (WDEG) captures previous states of the search that have already been exploited [52]. To do so, WDEG attaches a weight to every constraint in the instance. The weights are updated whenever a dead end occurs (no more values are available for the current variable). This heuristic first examines the locally inconsistent, or hard, parts of the instance by prioritizing variables with the largest weighted degrees.
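The three heuristics above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the dictionary-based instance representation (`domains`, `scopes`, `tightness`, `weights`) and all function names are hypothetical, `kappa` assumes the usual definition from [51] (minus the sum of log2(1 - p_c) over constraints, divided by the sum of log2 of the domain sizes), and `select_wdeg` simplifies the weighted degree to the plain sum of the weights of every constraint that involves the variable.

```python
import math

def select_dom(domains, unassigned):
    """DOM: return the unassigned variable with the fewest remaining values."""
    return min(unassigned, key=lambda var: len(domains[var]))

def kappa(tightness, domains):
    """kappa = -sum_c log2(1 - p_c) / sum_x log2(|D_x|).

    Assumes every p_c < 1 and at least one domain with more than one value.
    """
    numerator = -sum(math.log2(1.0 - p) for p in tightness.values())
    denominator = sum(math.log2(len(values)) for values in domains.values())
    return numerator / denominator

def record_dead_end(weights, constraint):
    """WDEG bookkeeping: bump the weight of the constraint that caused a dead end."""
    weights[constraint] += 1

def select_wdeg(weights, scopes, unassigned):
    """WDEG (simplified): return the variable with the largest weighted degree."""
    def weighted_degree(var):
        return sum(w for c, w in weights.items() if var in scopes[c])
    return max(unassigned, key=weighted_degree)

# Hypothetical toy instance: three variables and two binary constraints.
domains = {"x": {1, 2}, "y": {1, 2, 3, 4}, "z": {1, 2, 3}}
scopes = {"c1": ("x", "y"), "c2": ("y", "z")}
tightness = {"c1": 0.5, "c2": 0.75}
weights = {"c1": 1, "c2": 1}

record_dead_end(weights, "c2")  # pretend the search failed on c2 once
dom_choice = select_dom(domains, ["x", "y", "z"])
wdeg_choice = select_wdeg(weights, scopes, ["x", "y", "z"])
instance_kappa = kappa(tightness, domains)
```

A full KAPPA heuristic would evaluate `kappa` on the subproblem that results from branching on each candidate variable and keep the variable that yields the smallest value; that step depends on the solver's propagation and is omitted here.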

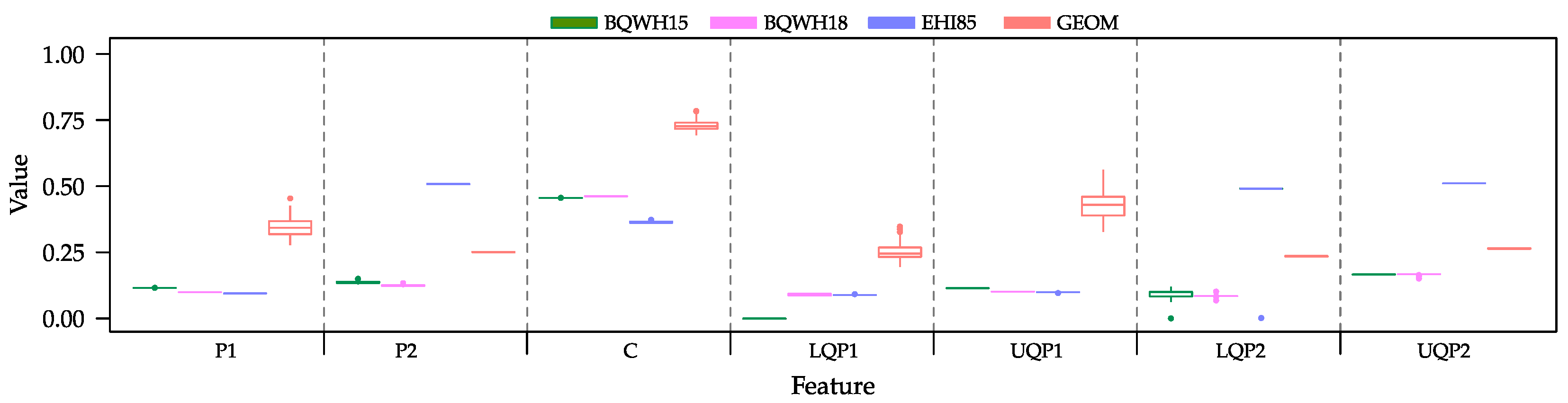

2.2. Instance Characterization

1. Average constraint density is the average of the constraint densities of all the variables within the instance. Using the five constraint densities of our example CSP (0.25, 0.25, 0.25, 0.5, and 0.75), the average constraint density is calculated as $(0.25 + 0.25 + 0.25 + 0.5 + 0.75)/5 = 0.40$.

2. Average constraint tightness is the average of the constraint tightnesses of all the constraints within the instance. Using the four constraint tightnesses of our example CSP (0.20, 0.48, 0.80, and 0.88), the average constraint tightness is calculated as $(0.20 + 0.48 + 0.80 + 0.88)/4 = 0.59$.

3. Average clustering coefficient is the average of the clustering coefficients of all the variables within the instance. The average clustering coefficient of our example CSP is calculated as

4. Lower quartile of the constraint density is the middle number between the smallest number and the median of the constraint densities of all the variables within the instance. First, we need to sort the constraint densities in our example CSP: 0.25, 0.25, 0.25, 0.5, and 0.75. Based on these values, the median is 0.25 and, in this case, the lower quartile is also 0.25.

5. Upper quartile of the constraint density is the middle number between the median and the largest of the constraint densities of all the variables within the instance. Based on the constraint densities sorted in the previous feature, the upper quartile is 0.50.

6. Lower quartile of the constraint tightness is the middle number between the smallest number and the median of the constraint tightnesses of all the constraints within the instance. After we sort the constraint tightnesses in our example CSP, we obtain 0.20, 0.48, 0.80, and 0.88. Based on these values, the median is 0.64 and the lower quartile is 0.34.

7. Upper quartile of the constraint tightness is the middle number between the median and the largest of the constraint tightnesses of all the constraints within the instance. Based on the constraint tightnesses sorted in the previous feature, the upper quartile is 0.84.
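The quartile values quoted in this list depend on the quartile convention. The sketch below assumes the median-of-halves rule in which the median element belongs to both halves when the number of values is odd; this is an assumption, chosen because it reproduces the figures quoted for the example CSP, whereas a linear-interpolation convention would give 0.41 instead of 0.34 for the lower quartile of the tightness. The function name `quartiles` is hypothetical.

```python
from statistics import mean, median

def quartiles(values):
    """Return (lower quartile, median, upper quartile) using median-of-halves.

    When the number of values is odd, the median element is included in
    both halves (an assumed convention, see the lead-in).
    """
    xs = sorted(values)
    n = len(xs)
    half = (n + 1) // 2  # size of each half, median included when n is odd
    return median(xs[:half]), median(xs), median(xs[n - half:])

# Values quoted in the text for the example CSP.
densities = [0.25, 0.25, 0.25, 0.5, 0.75]
tightnesses = [0.20, 0.48, 0.80, 0.88]

density_q1, density_median, density_q3 = quartiles(densities)
tightness_q1, tightness_median, tightness_q3 = quartiles(tightnesses)
average_density = mean(densities)
average_tightness = mean(tightnesses)
```

With these inputs, the sketch gives 0.25 and 0.50 for the density quartiles and 0.34 and 0.84 for the tightness quartiles, up to floating-point rounding.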

2.3. Benchmark Instances

2.4. Machine Learning Techniques

- Multiclass Logistic Regression (MLR) is a well-known method used to predict the probability of an outcome and is particularly famous for classification tasks. The algorithm predicts the probability of occurrence of an event by fitting data to a logistic function.

- Multiclass Neural Network (MNN) is a set of interconnected layers in which the inputs lead to outputs using a series of weighted edges and nodes. A training process takes place to adjust the weights of the edges based on the input data and the expected outputs. The information within the graph flows from inputs to outputs, passing through one or more hidden layers. All the nodes in the graph are connected by the weighted edges to nodes in the next layer.

- Multiclass Decision Forest (MDF) is an ensemble learning method for classification. The algorithm works by building multiple decision trees and then by voting on the most popular output class. Voting is a form of aggregation, in which each tree outputs a non-normalized frequency histogram of labels. The aggregation process sums these histograms and normalizes the result to get the “probabilities” for each label. The trees that have high prediction confidence have a greater weight in the final decision of the ensemble.

- Multiclass Decision Jungle (MDJ) is an extension of decision forests. A decision jungle consists of an ensemble of decision Directed Acyclic Graphs (DAGs). By allowing tree branches to merge, a decision DAG typically has a better generalization performance than a single decision tree, albeit at the cost of a somewhat higher training time. DAGs have the advantage of performing integrated feature selection and classification and are resilient in the presence of noisy features.
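Two of the mechanisms described above are easy to illustrate without any Machine Learning library. The sketch below is not the Azure ML implementation used in this work: `softmax` shows the usual multiclass generalization of the logistic function (an assumption about how MLR turns per-class scores into probabilities), and `aggregate_histograms` shows the voting step described for MDF, in which per-tree label histograms are summed and then normalized. The function names and the toy histograms are hypothetical.

```python
import math

def softmax(scores):
    """Map one real-valued score per class to probabilities that sum to 1."""
    shift = max(scores.values())  # subtract the maximum for numerical stability
    exps = {label: math.exp(s - shift) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

def aggregate_histograms(histograms):
    """Sum non-normalized per-tree label histograms, then normalize the result."""
    totals = {}
    for histogram in histograms:
        for label, count in histogram.items():
            totals[label] = totals.get(label, 0) + count
    grand_total = sum(totals.values())
    return {label: count / grand_total for label, count in totals.items()}

# Hypothetical output of three trees for one CSP instance.
trees = [
    {"DOM": 3, "KAPPA": 1},
    {"KAPPA": 4},
    {"DOM": 1, "KAPPA": 2, "WDEG": 1},
]
probabilities = aggregate_histograms(trees)
prediction = max(probabilities, key=probabilities.get)
uniform = softmax({"DOM": 0.0, "KAPPA": 0.0})
```

In this toy case the second tree puts all four of its counts on a single label, so it contributes more to the summed histogram for that label than a tree whose counts are spread across several labels.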

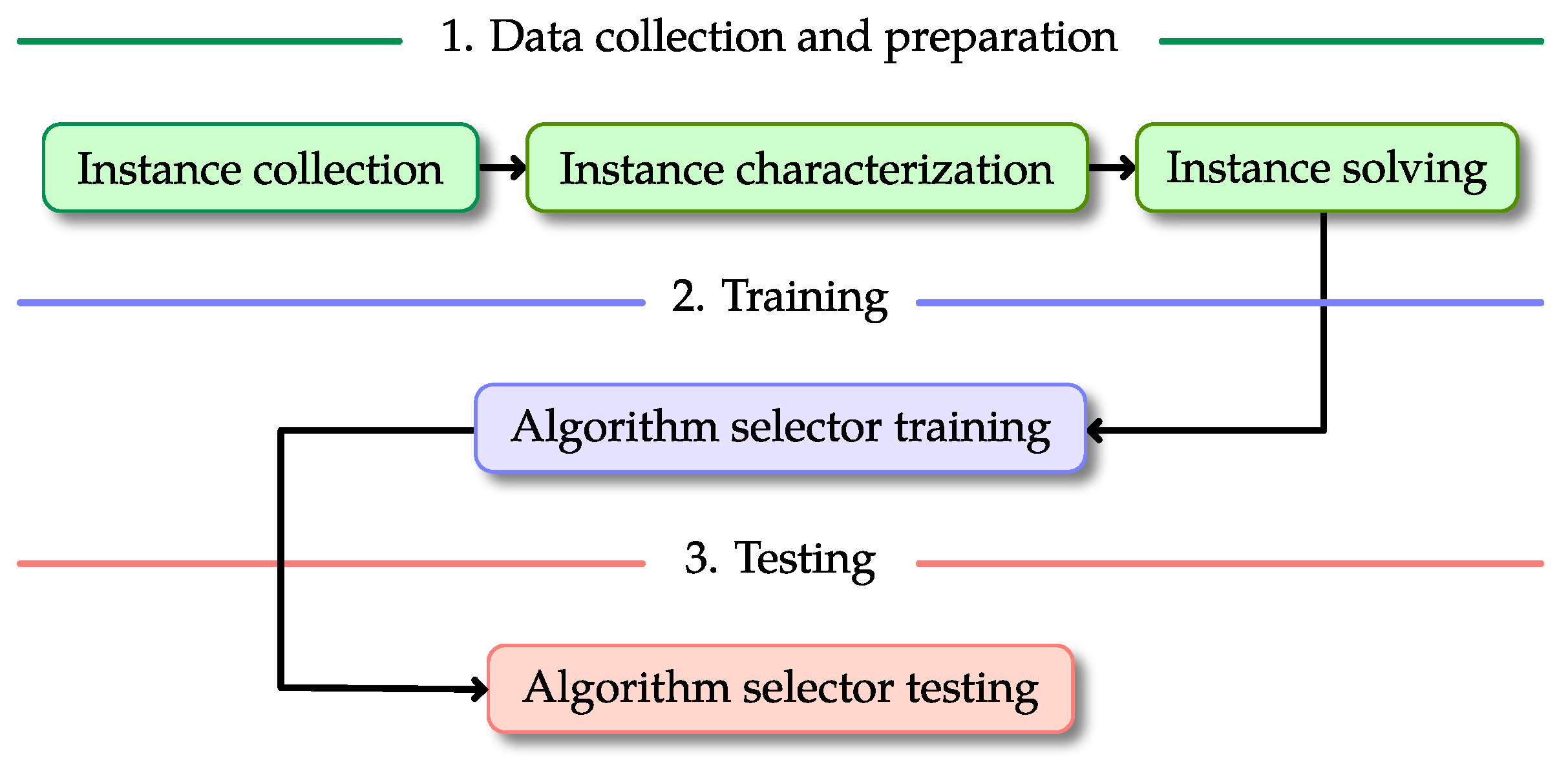

3. Solution Model

1. Data collection and preparation. The first step of the process involves gathering and solving the CSP instances in such a way that we can construct the tables for training and testing. These tables must contain the characterization of each instance as well as the cost of using the heuristics on such instances. Additionally, in our case, a column with a label for the best choice of heuristic is required since we considered supervised Machine Learning methods. However, this column may not be necessary if the methods are unsupervised. The data collection and preparation step is divided into three tasks that are applied sequentially.

   (a) Instance collection. The first step of the solution model consists of gathering a set of instances where the methods are trained and tested. It is advisable that these instances contain categories or classes, such that some patterns can be extracted from the instances. For this research, we collected 400 CSP instances, classified into four categories (see Section 2.3 for more details).

   (b) Instance characterization. Once the instances have been collected, the model requires characterizing those instances based on the values of some specific features. This characterization allows the algorithm selector to identify patterns that minimize the cost of solving unseen instances. In this work, we considered seven features for characterizing CSPs (see Section 2.2 for more details).

   (c) Instance solving. Once the instances have been characterized, the next step consists of solving those instances with each available heuristic (see Section 2.1 for more details). The process requires preserving the result of each heuristic and labelling the best performing one for each individual instance. This information will be used later on to produce the algorithm selector. We estimate heuristic performance by counting the number of consistency checks required to solve each instance.

2. Algorithm selector training. This step generates the mapping from a problem state (defined by the values of the features of the instance) to one suitable algorithm. Once the instances have been solved, the next step is to split the instances (with their corresponding results) into training and test sets. To produce the algorithm selectors described in this work, we first shuffled the instances in each one of the sets described in Section 2.3. Then, we took the first 60% of the instances in each set and merged them into a single training set (the same training set was used for the four algorithm selectors). To avoid biasing the results, we kept the remaining 40% of the instances exclusively for testing purposes. By using the information from the instances and their best performers, we trained four algorithm selectors by using the Machine Learning techniques described in Section 2.4. The result of this process was four mechanisms for discriminating among heuristics for CSPs, based on their problem features. Since we claim that our approach favors the transparency of the research and its reproducibility, we invite the reader to consult the complete process for creating these algorithm selectors. This information is publicly available at https://bit.ly/3hvzwlY (accessed on 16 March 2021).

3. Algorithm selector testing. We used the data from the test set to evaluate the performance of the algorithm selectors (see Section 4 for more details).
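The labelling and splitting steps described above can be sketched as follows. This is a minimal illustration, not the published pipeline: the function names, the random seed, and the toy data are hypothetical, and the example assumes four sets of 100 instances each, which is only one way of distributing the 400 instances among the four categories.

```python
import random

def label_best(costs):
    """Return the heuristic that needed the fewest consistency checks."""
    return min(costs, key=costs.get)

def split_per_set(instances_by_set, train_fraction=0.6, seed=0):
    """Shuffle each set, then send its first 60% to training and the rest to test."""
    rng = random.Random(seed)
    train, test = [], []
    for instances in instances_by_set.values():
        shuffled = list(instances)
        rng.shuffle(shuffled)
        cut = round(train_fraction * len(shuffled))
        train.extend(shuffled[:cut])  # merged into a single training set
        test.extend(shuffled[cut:])   # kept exclusively for testing
    return train, test

# Hypothetical instance identifiers: four sets of 100 instances each.
instances_by_set = {
    name: [f"{name}-{i}" for i in range(100)]
    for name in ("BQWH15", "BQWH18", "EHI85", "GEOM")
}
train, test = split_per_set(instances_by_set)

# Hypothetical consistency-check counts for a single instance.
best = label_best({"DOM": 5200, "KAPPA": 3100, "WDEG": 9800})
```

Splitting each set separately keeps the four categories equally represented in the training and test sets, which a single global shuffle would not guarantee.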

4. Experiments and Results

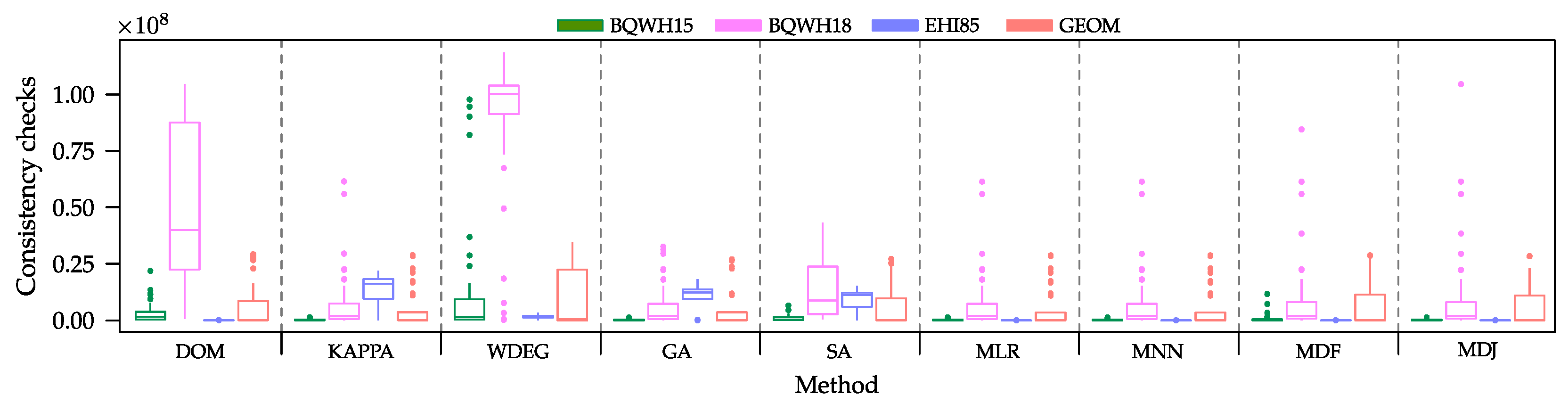

4.1. Challenging the Heuristics

- GA uses a steady-state genetic algorithm to produce algorithm selectors [40]. The evolutionary process starts with 25 randomly initialized individuals and runs for 150 cycles. The crossover and mutation rates are 1.0 and 0.1, respectively. The crossover and mutation operators are tailored for the task. For selecting the individuals for mating, a tournament selection of size two was used.

- SA relies on simulated annealing to generate an algorithm selector [11]. This selector was originally proposed for solving job-shop scheduling problems, but we adapted it for CSPs. The initial temperature is set to 100 and decreases following a cooling schedule. The maximum number of iterations of the process is set to 200. The solution representation as well as the mutation operators were taken from [40].
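To make the GA settings above concrete, the following sketch runs a generic steady-state genetic algorithm with the stated parameters: 25 individuals, 150 cycles, crossover rate 1.0, mutation rate 0.1, and tournaments of size two. Everything else is an assumption made for illustration: the bit-string representation, the one-point crossover, the bit-flip mutation, the replace-the-worst policy, and the toy cost function stand in for the task-specific representation and operators of [40], which are not reproduced here.

```python
import random

def tournament(population, cost, rng, size=2):
    """Return the cheapest of `size` individuals drawn at random."""
    return min(rng.sample(population, size), key=cost)

def steady_state_ga(cost, random_individual, crossover, mutate,
                    pop_size=25, cycles=150, crossover_rate=1.0,
                    mutation_rate=0.1, seed=0):
    """Generic steady-state GA: one offspring per cycle replaces the worst."""
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    for _ in range(cycles):
        a = tournament(population, cost, rng)
        b = tournament(population, cost, rng)
        child = crossover(a, b, rng) if rng.random() < crossover_rate else list(a)
        if rng.random() < mutation_rate:
            child = mutate(child, rng)
        worst = max(range(pop_size), key=lambda i: cost(population[i]))
        if cost(child) <= cost(population[worst]):  # assumed replacement policy
            population[worst] = child
    return min(population, key=cost)

# Placeholder representation and operators (hypothetical, for illustration only).
def random_bits(rng, length=10):
    return [rng.randint(0, 1) for _ in range(length)]

def one_point(a, b, rng):
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def flip_one(individual, rng):
    i = rng.randrange(len(individual))
    return individual[:i] + [1 - individual[i]] + individual[i + 1:]

# Toy cost: number of ones in the bit string (lower is better).
best = steady_state_ga(sum, random_bits, one_point, flip_one)
```

In the setting of this work, the cost of an individual would instead be the number of consistency checks that the encoded algorithm selector requires on the training instances.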

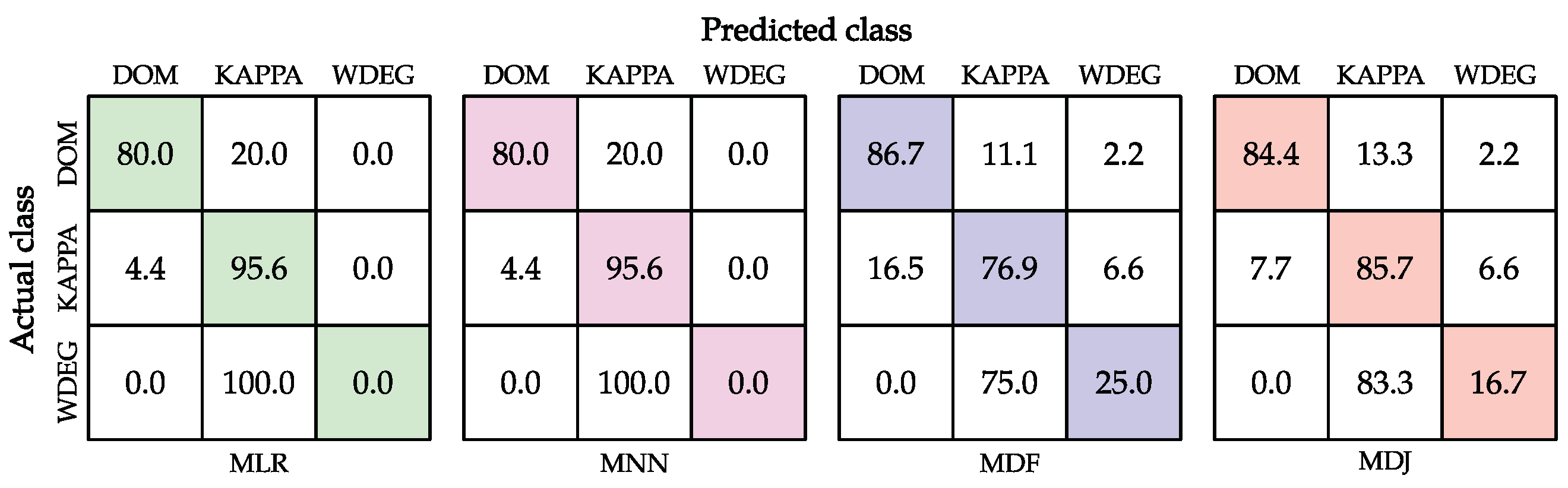

4.2. Challenging the Oracle

4.3. A Glance at the Classifiers

4.4. Discussion

- Data collection and preparation. We solved the instances on a Linux Mint 19 PC with 12 GB of RAM, giving the solvers a time-out of 60 s as the stopping criterion for each instance. When the time-out was reached, the search stopped and the number of consistency checks performed so far was reported. The time needed to solve the instances in both the training and testing sets using the three heuristics was 242 min. This information was later used to train the classifiers. We also used this platform to produce the algorithm selectors used for comparison purposes. GA and SA required 485 and 1270 min, respectively, to generate their corresponding algorithm selectors.

- Training and testing. Training and testing of the classifiers were executed directly on the Microsoft Azure ML platform (free account). With the data gathered from the previous layer, training and testing the four Machine-Learning-based algorithm selectors took less than four minutes. By contrast, GA and SA required 485 and 1270 min, respectively, to generate their metaheuristic-based algorithm selectors, and their overall performance was below that of the Machine-Learning-based algorithm selectors.

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Wolpert, D.H.; Macready, W.G. No free lunch theorems for optimization. IEEE Trans. Evol. Comput. 1997, 1, 67–82.

2. Rice, J.R. The Algorithm Selection Problem. Adv. Comput. 1976, 15, 65–118.

3. Epstein, S.L.; Freuder, E.C.; Wallace, R.; Morozov, A.; Samuels, B. The Adaptive Constraint Engine. In Proceedings of the 8th International Conference on Principles and Practice of Constraint Programming (CP ’02), Ithaca, NY, USA, 9–13 September 2002; Springer: Berlin/Heidelberg, Germany, 2002; pp. 525–542.

4. Lindauer, M.; Hoos, H.H.; Hutter, F.; Schaub, T. AutoFolio: An Automatically Configured Algorithm Selector. J. Artif. Int. Res. 2015, 53, 745–778.

5. Loreggia, A.; Malitsky, Y.; Samulowitz, H.; Saraswat, V. Deep Learning for Algorithm Portfolios. In Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence (AAAI’16), Phoenix, AZ, USA, 12–17 February 2016; AAAI Press: Palo Alto, CA, USA, 2016; pp. 1280–1286.

6. Malitsky, Y.; Sabharwal, A.; Samulowitz, H.; Sellmann, M. Non-Model-Based Algorithm Portfolios for SAT. In Theory and Applications of Satisfiability Testing—SAT 2011; Sakallah, K.A., Simon, L., Eds.; Springer: Berlin/Heidelberg, Germany, 2011; pp. 369–370.

7. O’Mahony, E.; Hebrard, E.; Holland, A.; Nugent, C.; O’Sullivan, B. Using Case-based Reasoning in an Algorithm Portfolio for Constraint Solving. In Proceedings of the 19th Irish Conference on Artificial Intelligence and Cognitive Science, Dublin, Ireland, 7–8 December 2008; pp. 1–10.

8. Amaya, I.; Ortiz-Bayliss, J.C.; Rosales-Pérez, A.; Gutiérrez-Rodríguez, A.E.; Conant-Pablos, S.E.; Terashima-Marín, H.; Coello, C.A.C. Enhancing Selection Hyper-Heuristics via Feature Transformations. IEEE Comput. Intell. Mag. 2018, 13, 30–41.

9. Branke, J.; Hildebrandt, T.; Scholz-Reiter, B. Hyper-heuristic Evolution of Dispatching Rules: A Comparison of Rule Representations. Evol. Comput. 2015, 23, 249–277.

10. Drake, J.H.; Kheiri, A.; Özcan, E.; Burke, E.K. Recent Advances in Selection Hyper-heuristics. Eur. J. Oper. Res. 2020, 285, 405–428.

11. Garza-Santisteban, F.; Sanchez-Pamanes, R.; Puente-Rodriguez, L.A.; Amaya, I.; Ortiz-Bayliss, J.C.; Conant-Pablos, S.; Terashima-Marin, H. A Simulated Annealing Hyper-heuristic for Job Shop Scheduling Problems. In Proceedings of the 2019 IEEE Congress on Evolutionary Computation (CEC), Wellington, New Zealand, 10–13 June 2019; pp. 57–64.

12. van der Stockt, S.A.; Engelbrecht, A.P. Analysis of selection hyper-heuristics for population-based meta-heuristics in real-valued dynamic optimization. Swarm Evol. Comput. 2018, 43, 127–146.

13. Malitsky, Y.; Sabharwal, A.; Samulowitz, H.; Sellmann, M. Algorithm Portfolios Based on Cost-sensitive Hierarchical Clustering. In Proceedings of the Twenty-Third International Joint Conference on Artificial Intelligence (IJCAI’13), Beijing, China, 3–9 August 2013; AAAI Press: Palo Alto, CA, USA, 2013; pp. 608–614.

14. Malitsky, Y. Evolving Instance-Specific Algorithm Configuration. In Instance-Specific Algorithm Configuration; Springer International Publishing: Berlin/Heidelberg, Germany, 2014; pp. 93–105.

15. Bischl, B.; Kerschke, P.; Kotthoff, L.; Lindauer, M.; Malitsky, Y.; Fréchette, A.; Hoos, H.; Hutter, F.; Leyton-Brown, K.; Tierney, K.; et al. ASlib: A benchmark library for algorithm selection. Artif. Intell. 2016, 237, 41–58.

16. Ochoa, G.; Hyde, M.; Curtois, T.; Vazquez-Rodriguez, J.A.; Walker, J.; Gendreau, M.; Kendall, G.; McCollum, B.; Parkes, A.J.; Petrovic, S.; et al. HyFlex: A Benchmark Framework for Cross-Domain Heuristic Search. In Evolutionary Computation in Combinatorial Optimization; Hao, J.K., Middendorf, M., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 136–147.

17. Xu, L.; Hutter, F.; Hoos, H.H.; Leyton-Brown, K. SATzilla: Portfolio-based algorithm selection for SAT. J. Artif. Intell. Res. 2008, 32, 565–606.

18. Goldberg, D.; Holland, J. Genetic algorithms and machine learning. Mach. Learn. 1988, 3, 95–99.

19. Kirkpatrick, S.; Gelatt, C.D., Jr.; Vecchi, M.P. Optimization by Simulated Annealing. Science 1983, 220, 671–680.

20. Kotthoff, L. Algorithm Selection for Combinatorial Search Problems: A Survey. In Data Mining and Constraint Programming: Foundations of a Cross-Disciplinary Approach; Bessiere, C., De Raedt, L., Kotthoff, L., Nijssen, S., O’Sullivan, B., Pedreschi, D., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 149–190.

21. Lindauer, M.; van Rijn, J.N.; Kotthoff, L. The algorithm selection competitions 2015 and 2017. Artif. Intell. 2019, 272, 86–100.

22. Amaya, I.; Ortiz-Bayliss, J.C.; Conant-Pablos, S.; Terashima-Marin, H. Hyper-heuristics Reversed: Learning to Combine Solvers by Evolving Instances. In Proceedings of the 2019 IEEE Congress on Evolutionary Computation (CEC), Wellington, New Zealand, 10–13 June 2019; pp. 1790–1797.

23. Aziz, Z.A. Ant Colony Hyper-heuristics for Travelling Salesman Problem. Procedia Comput. Sci. 2015, 76, 534–538.

24. Kendall, G.; Li, J. Competitive travelling salesmen problem: A hyper-heuristic approach. J. Oper. Res. Soc. 2013, 64, 208–216.

25. Sabar, N.R.; Zhang, X.J.; Song, A. A math-hyper-heuristic approach for large-scale vehicle routing problems with time windows. In Proceedings of the 2015 IEEE Congress on Evolutionary Computation (CEC), Sendai, Japan, 25–28 May 2015; pp. 830–837.

26. Amaya, I.; Ortiz-Bayliss, J.C.; Gutierrez-Rodriguez, A.E.; Terashima-Marin, H.; Coello, C.A.C. Improving hyper-heuristic performance through feature transformation. In Proceedings of the 2017 IEEE Congress on Evolutionary Computation (CEC), Donostia, Spain, 5–8 June 2017; pp. 2614–2621.

27. Berlier, J.; McCollum, J. A constraint satisfaction algorithm for microcontroller selection and pin assignment. In Proceedings of the IEEE SoutheastCon 2010 (SoutheastCon), Concord, NC, USA, 18–21 March 2010; pp. 348–351.

28. Bochkarev, S.V.; Ovsyannikov, M.V.; Petrochenkov, A.B.; Bukhanov, S.A. Structural synthesis of complex electrotechnical equipment on the basis of the constraint satisfaction method. Russ. Electr. Eng. 2015, 86, 362–366.

29. Smith-Miles, K.A. Cross-disciplinary perspectives on meta-learning for algorithm selection. ACM Comput. Surv. CSUR 2009, 41, 6.

30. Smith-Miles, K.; Lopes, L. Measuring instance difficulty for combinatorial optimization problems. Comput. Oper. Res. 2012, 39, 875–889.

31. Gutierrez-Rodríguez, A.E.; Conant-Pablos, S.E.; Ortiz-Bayliss, J.C.; Terashima-Marín, H. Selecting meta-heuristics for solving vehicle routing problems with time windows via meta-learning. Expert Syst. Appl. 2019, 118, 470–481.

32. Makkar, S.; Devi, G.N.R.; Solanki, V.K. Applications of Machine Learning Techniques in Supply Chain Optimization. In Proceedings of the International Conference on Intelligent Computing and Communication Technologies, Hyderabad, India, 9–11 January 2019; Springer: Berlin/Heidelberg, Germany, 2019; pp. 861–869.

33. Haykin, S. Neural Networks: A Comprehensive Foundation; Prentice-Hall, Inc.: Upper Saddle River, NJ, USA, 2007.

34. Wright, R.E. Logistic regression. In Reading & Understanding Multivariate Statistics; American Psychological Association: Washington, DC, USA, 1995; pp. 217–244.

35. Ho, T.K. Random decision forests. In Proceedings of the 3rd International Conference on Document Analysis And Recognition, Montreal, QC, Canada, 14–16 August 1995; Volume 1, pp. 278–282.

36. Shotton, J.; Sharp, T.; Kohli, P.; Nowozin, S.; Winn, J.; Criminisi, A. Decision jungles: Compact and rich models for classification. In Proceedings of the Advances in Neural Information Processing Systems, Lake Tahoe, NV, USA, 5–10 December 2013; pp. 234–242.

37. Burke, E.K.; Gendreau, M.; Hyde, M.; Kendall, G.; Ochoa, G.; Özcan, E.; Qu, R. Hyper-heuristics: A survey of the state of the art. J. Oper. Res. Soc. 2013, 64, 1695–1724.

38. Pillay, N.; Qu, R. Hyper-Heuristics: Theory and Applications; Natural Computing Series; Springer International Publishing: Cham, Switzerland, 2018.

39. Ortiz-Bayliss, J.C.; Terashima-Marín, H.; Conant-Pablos, S.E. Learning vector quantization for variable ordering in constraint satisfaction problems. Pattern Recogn. Lett. 2013, 34, 423–432.

40. Ortiz-Bayliss, J.C.; Terashima-Marín, H.; Conant-Pablos, S.E. Combine and conquer: An evolutionary hyper-heuristic approach for solving constraint satisfaction problems. Artif. Intell. Rev. 2016, 46, 327–349.

41. Kiraz, B.; Etaner-Uyar, A.Ş.; Özcan, E. An Ant-Based Selection Hyper-heuristic for Dynamic Environments. In Proceedings of the Applications of Evolutionary Computation: 16th European Conference, EvoApplications 2013, Vienna, Austria, 3–5 April 2013; Esparcia-Alcázar, A.I., Ed.; Springer: Berlin/Heidelberg, Germany, 2013; pp. 626–635.

42. Bischl, B.; Mersmann, O.; Trautmann, H.; Preuß, M. Algorithm Selection Based on Exploratory Landscape Analysis and Cost-sensitive Learning. In Proceedings of the 14th Annual Conference on Genetic and Evolutionary Computation, GECCO ’12, Philadelphia, PA, USA, 7–11 July 2012; ACM: New York, NY, USA, 2012; pp. 313–320.

43. López-Camacho, E.; Terashima-Marín, H.; Ochoa, G.; Conant-Pablos, S.E. Understanding the structure of bin packing problems through principal component analysis. Int. J. Prod. Econ. 2013, 145, 488–499.

44. Jussien, N.; Lhomme, O. Local Search with Constraint Propagation and Conflict-Based Heuristics. Artif. Intell. 2002, 139, 21–45.

45. Tsang, E.P.K.; Borrett, J.E.; Kwan, A.C.M.; Sq, C.C. An Attempt to Map the Performance of a Range of Algorithm and Heuristic Combinations. In Proceedings of Adaptation in Artificial and Biological Systems (AISB’95); IOS Press: Amsterdam, The Netherlands, 1995; pp. 203–216.

46. Petrovic, S.; Epstein, S.L. Random Subsets Support Learning a Mixture of Heuristics. Int. J. Artif. Intell. Tools 2008, 17, 501–520.

47. Bittle, S.A.; Fox, M.S. Learning and using hyper-heuristics for variable and value ordering in constraint satisfaction problems. In Proceedings of the 11th Annual Conference Companion on Genetic and Evolutionary Computation Conference: Late Breaking Papers; ACM: New York, NY, USA, 2009; pp. 2209–2212.

48. Crawford, B.; Soto, R.; Castro, C.; Monfroy, E. A hyperheuristic approach for dynamic enumeration strategy selection in constraint satisfaction. In Proceedings of the 4th International Conference on Interplay Between Natural and Artificial Computation: New Challenges on Bioinspired Applications—Volume Part II (IWINAC’11), Mallorca, Spain, 10–14 June 2011; Springer: Berlin/Heidelberg, Germany, 2011; pp. 295–304.

49. Soto, R.; Crawford, B.; Monfroy, E.; Bustos, V. Using Autonomous Search for Generating Good Enumeration Strategy Blends in Constraint Programming. In Computational Science and Its Applications—ICCSA 2012, Proceedings of the 12th International Conference, Salvador de Bahia, Brazil, 18–21 June 2012; Springer: Berlin/Heidelberg, Germany, 2012; pp. 607–617.

50. Moreno-Scott, J.H.; Ortiz-Bayliss, J.C.; Terashima-Marín, H.; Conant-Pablos, S.E. Experimental Matching of Instances to Heuristics for Constraint Satisfaction Problems. Comput. Intell. Neurosci. 2016, 2016, 1–15.

51. Gent, I.; MacIntyre, E.; Prosser, P.; Smith, B.; Walsh, T. An empirical study of dynamic variable ordering heuristics for the constraint satisfaction problem. In Proceedings of the International Conference on Principles and Practice of Constraint Programming (CP’96), Cambridge, MA, USA, 19–22 August 1996; pp. 179–193.

52. Boussemart, F.; Hemery, F.; Lecoutre, C.; Sais, L. Boosting Systematic Search by Weighting Constraints. In Proceedings of the European Conference on Artificial Intelligence (ECAI’04), Valencia, Spain, 23–27 August 2004; pp. 146–150.

53. Minton, S.; Johnston, M.D.; Phillips, A.; Laird, P. Minimizing Conflicts: A Heuristic repair Method for CSP and Scheduling Problems. Artif. Intell. 1992, 58, 161–205.

54. Bacchus, F. Extending Forward Checking. In Proceedings of the International Conference on Principles and Practice of Constraint Programming (CP’00), Louvain-la-Neuve, Belgium, 7–11 September 2000; Springer: Berlin/Heidelberg, Germany, 2000; pp. 35–51.

55. Rossi, F.; Petrie, C.; Dhar, V. On the Equivalence of Constraint Satisfaction Problems. In Proceedings of the 9th European Conference on Artificial Intelligence, Stockholm, Sweden, 6–10 August 1990; pp. 550–556.

56. Refalo, P. Impact-Based Search Strategies for Constraint Programming. In Principles and Practice of Constraint Programming—CP 2004; Wallace, M., Ed.; Springer: Berlin/Heidelberg, Germany, 2004; pp. 557–571.

57. Michel, L.; Van Hentenryck, P. Activity-Based Search for Black-Box Constraint Programming Solvers. In Integration of AI and OR Techniques in Contraint Programming for Combinatorial Optimzation Problems; Beldiceanu, N., Jussien, N., Pinson, É., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 228–243.

58. Al-Obeidat, F.; Belacel, N.; Spencer, B. Combining Machine Learning and Metaheuristics Algorithms for Classification Method PROAFTN. In Enhanced Living Environments: Algorithms, Architectures, Platforms, and Systems; Ganchev, I., Garcia, N.M., Dobre, C., Mavromoustakis, C.X., Goleva, R., Eds.; Springer International Publishing: Cham, Switzerland, 2019; pp. 53–79.

59. Talbi, E. Machine Learning for Metaheuristics—State of the Art and Perspectives. In Proceedings of the 2019 11th International Conference on Knowledge and Smart Technology (KST), Phuket, Thailand, 23–26 January 2019; p. XXIII.

| Method | BQWH15 | BQWH18 | EHI85 | GEOM | All Sets |
|---|---|---|---|---|---|
| DOM | 3,454,102.95 | 50,005,020.88 | 50,618.45 | 6,840,338.70 | 15,087,520.24 |
| KAPPA | 267,675.63 | 7,917,330.05 | 12,943,721.48 | 4,887,645.25 | 6,504,093.10 |
| WDEG | 15,950,318.70 | 87,374,458.50 | 1,637,849.73 | 10,807,434.65 | 28,942,515.39 |
| GA | 267,675.63 | 6,580,857.28 | 10,101,026.05 | 5,880,428.30 | 5,707,496.81 |
| SA | 969,515.73 | 15,215,961.18 | 8,765,488.58 | 6,420,713.60 | 7,842,919.77 |
| MLR | 267,675.63 | 7,917,330.05 | 50,618.45 | 4,887,645.25 | 3,280,817.34 |
| MNN | 267,675.63 | 7,917,330.05 | 50,618.45 | 4,887,645.25 | 3,280,817.34 |
| MDF | 853,809.85 | 10,148,086.18 | 50,618.45 | 5,897,675.00 | 4,237,547.37 |
| MDJ | 267,675.63 | 10,787,268.33 | 50,618.45 | 5,338,607.935 | 4,111,042.58 |

| Method | Accuracy (%) | p-Value |
|---|---|---|
| DOM | 27.500 | 4.809 |
| KAPPA | 57.500 | 9.370 |
| WDEG | 15.000 | 7.283 |
| GA | N/A | 3.176 |
| SA | N/A | 2.679 |
| MLR | 76.875 | 0.771068 |
| MNN | 76.875 | 0.771068 |
| MDF | 71.875 | 0.269258 |
| MDJ | 75.000 | 0.345435 |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ortiz-Bayliss, J.C.; Amaya, I.; Cruz-Duarte, J.M.; Gutierrez-Rodriguez, A.E.; Conant-Pablos, S.E.; Terashima-Marín, H. A General Framework Based on Machine Learning for Algorithm Selection in Constraint Satisfaction Problems. Appl. Sci. 2021, 11, 2749. https://doi.org/10.3390/app11062749
