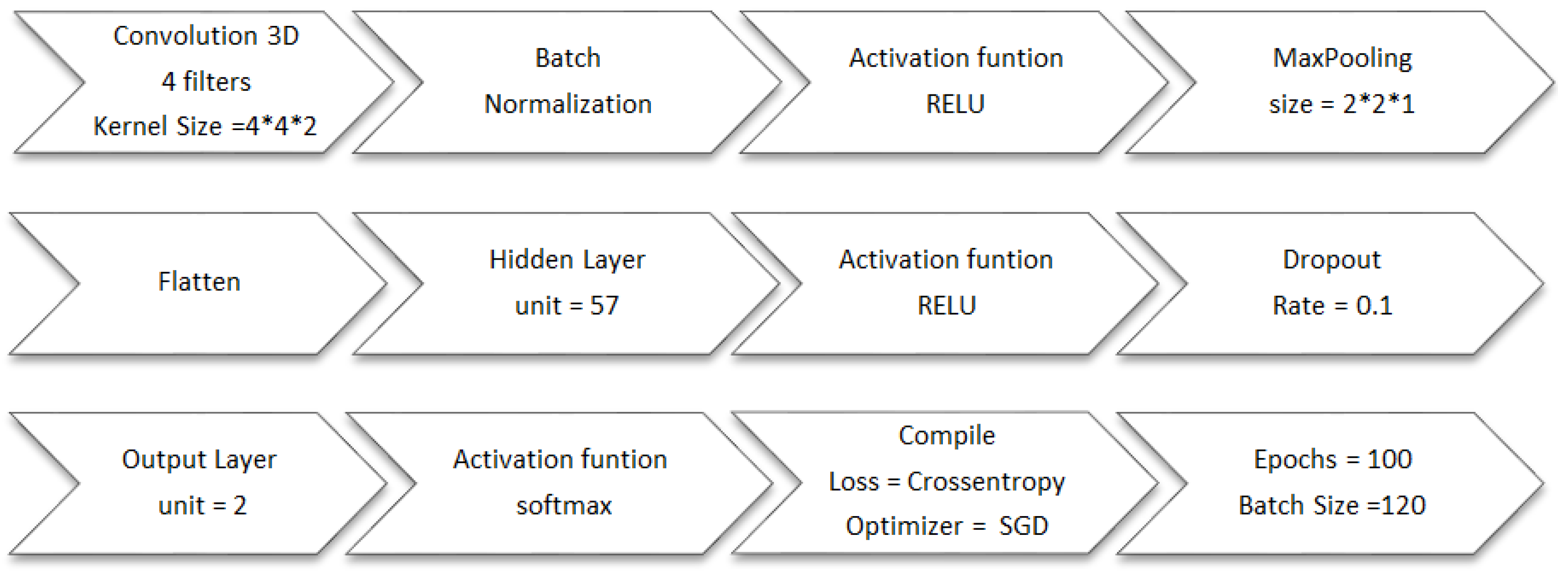

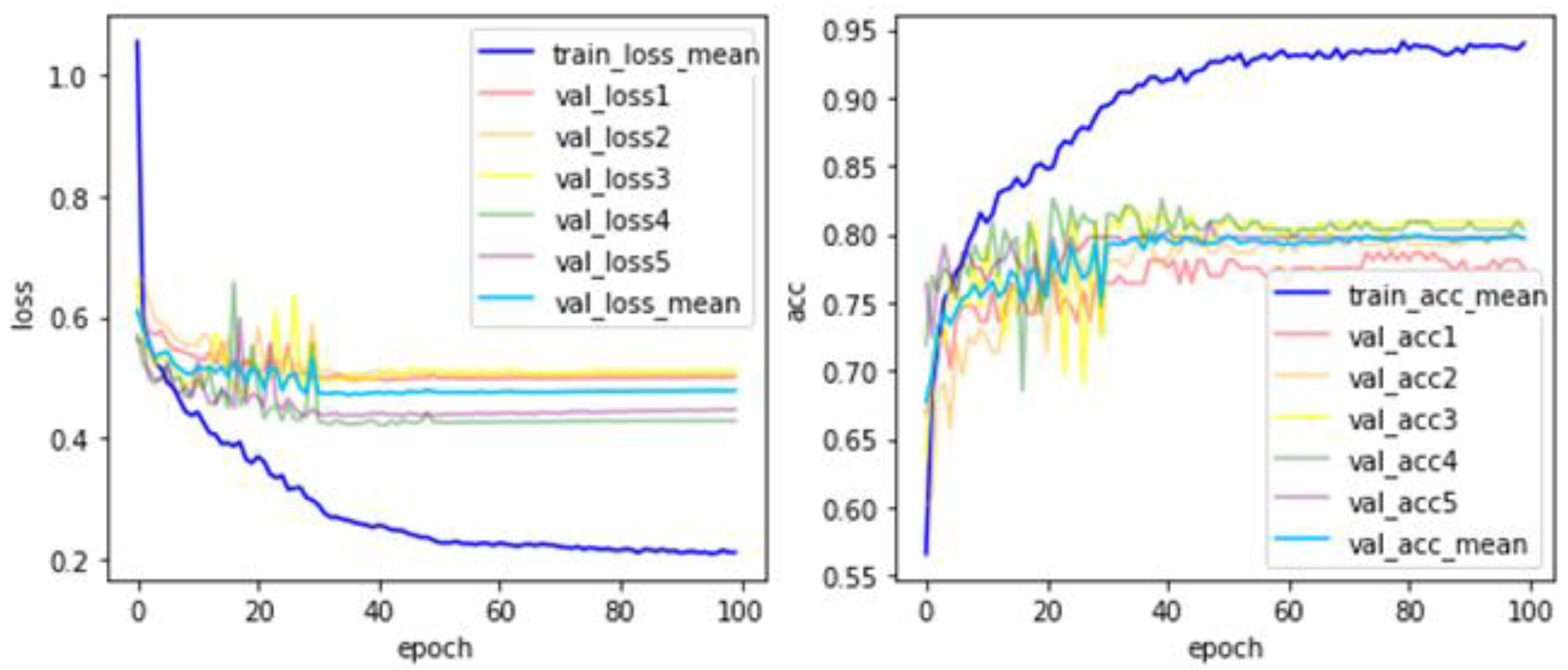

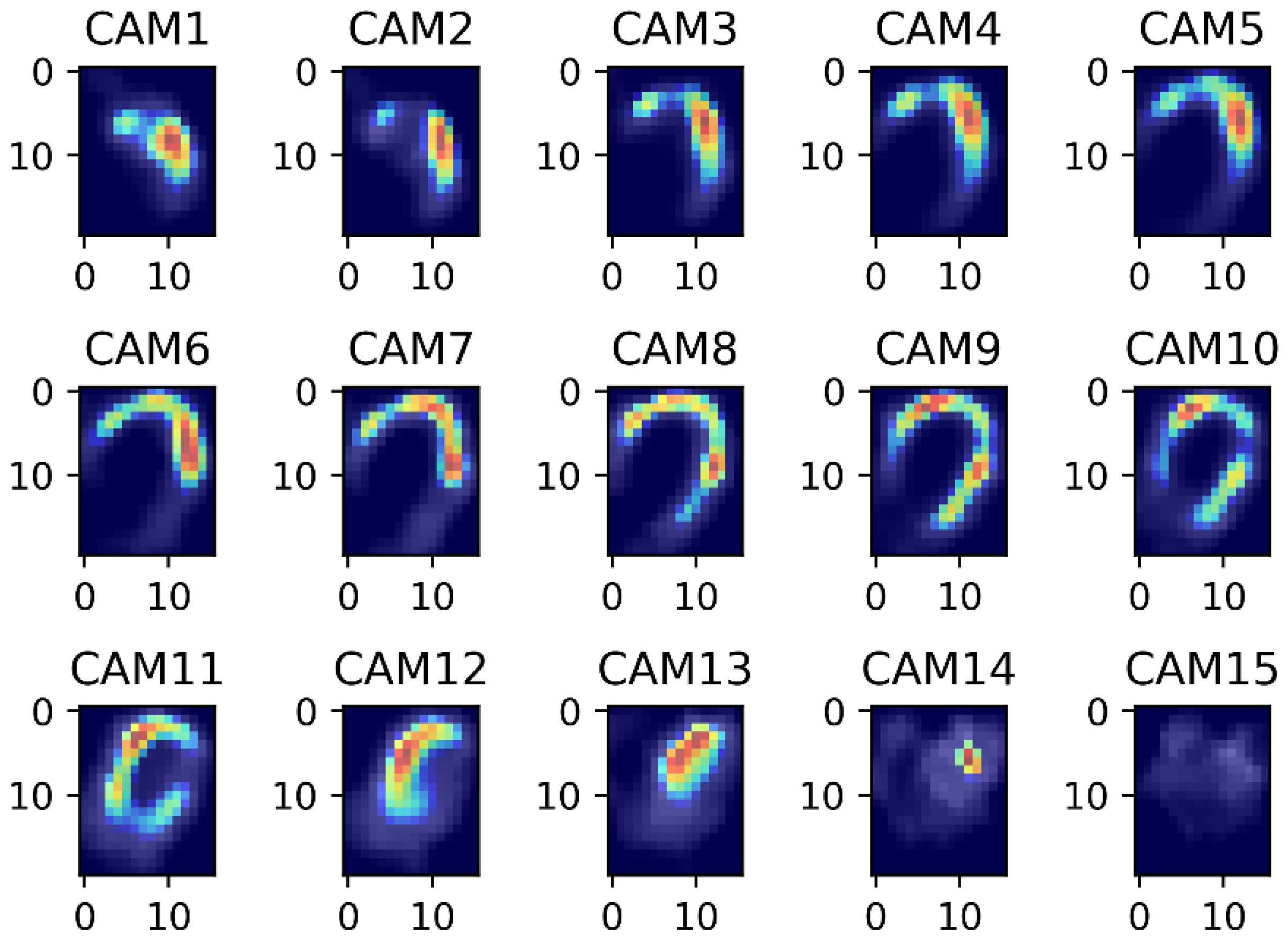

2.1. Image Preprocessing

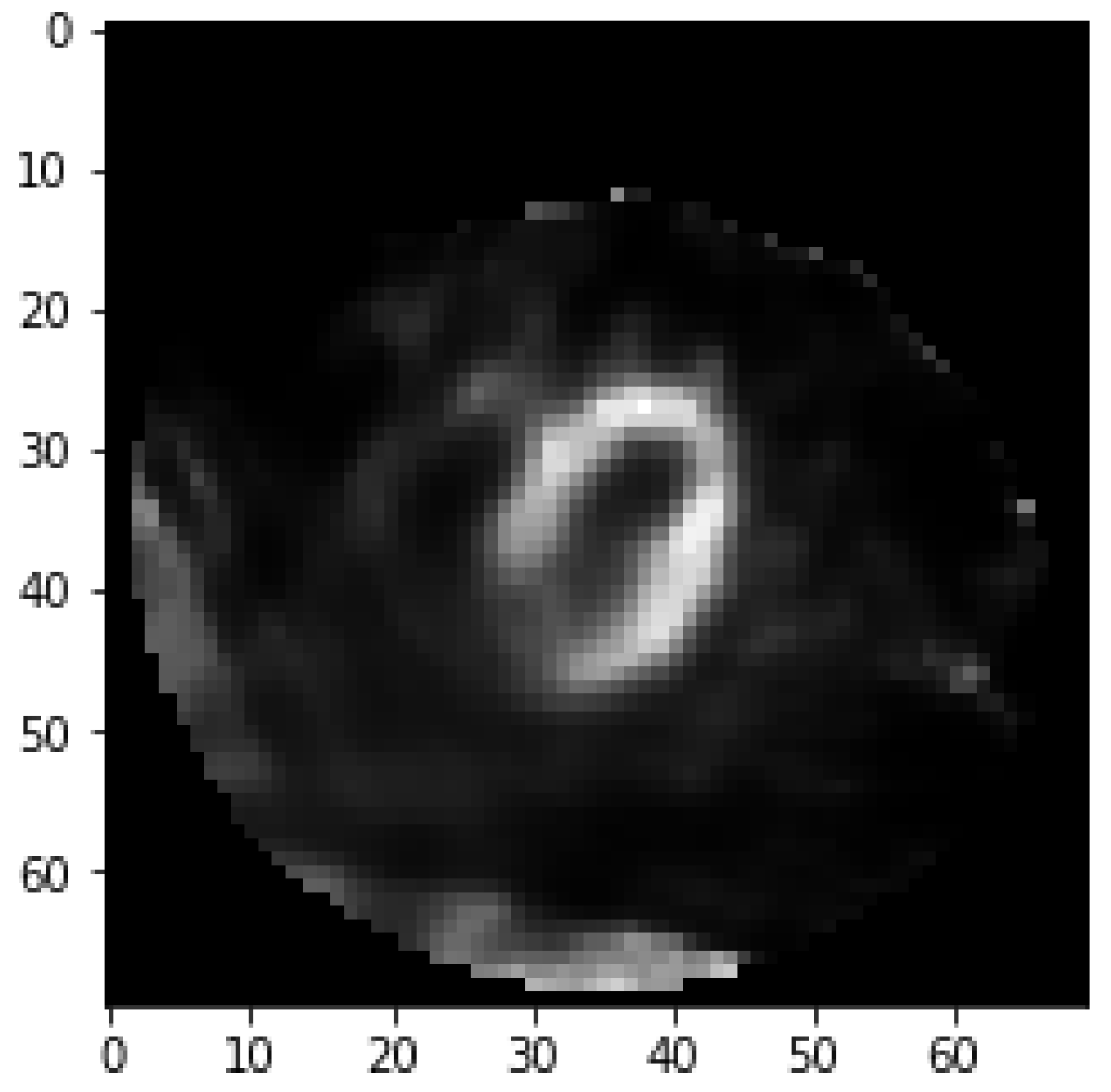

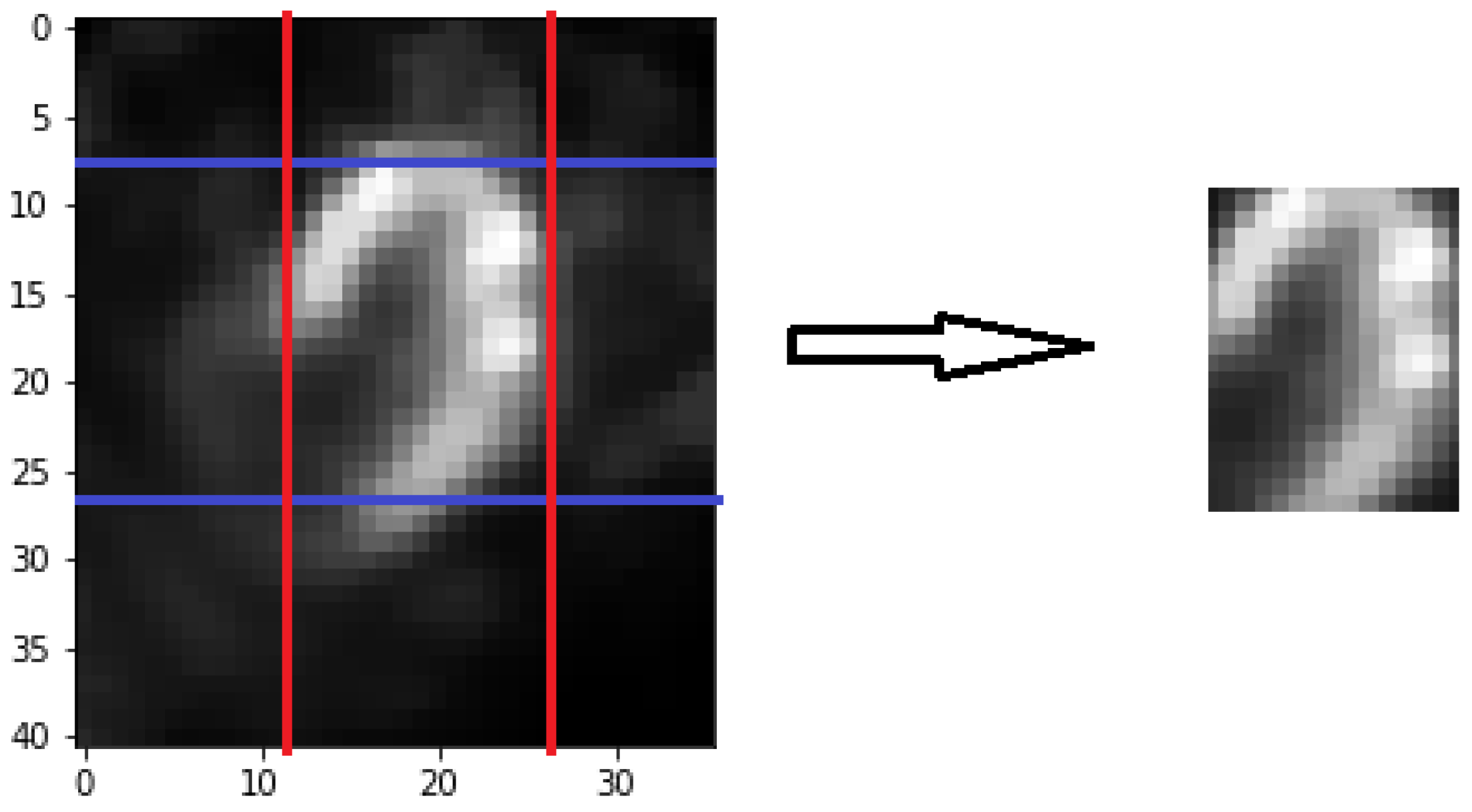

Each subject has 50 cross-sectional heart images, and each image is 70 × 70 pixels. The myocardium supplied by the coronary arteries appears as a ring-shaped (circumferential) region with an opening at the lower left. In clinical diagnosis, physicians determine whether a subject has a myocardial defect from how saturated the brightness is along this myocardial ring. A subject tends to be healthy if the brightness along the ring is saturated, as shown in Figure 1.

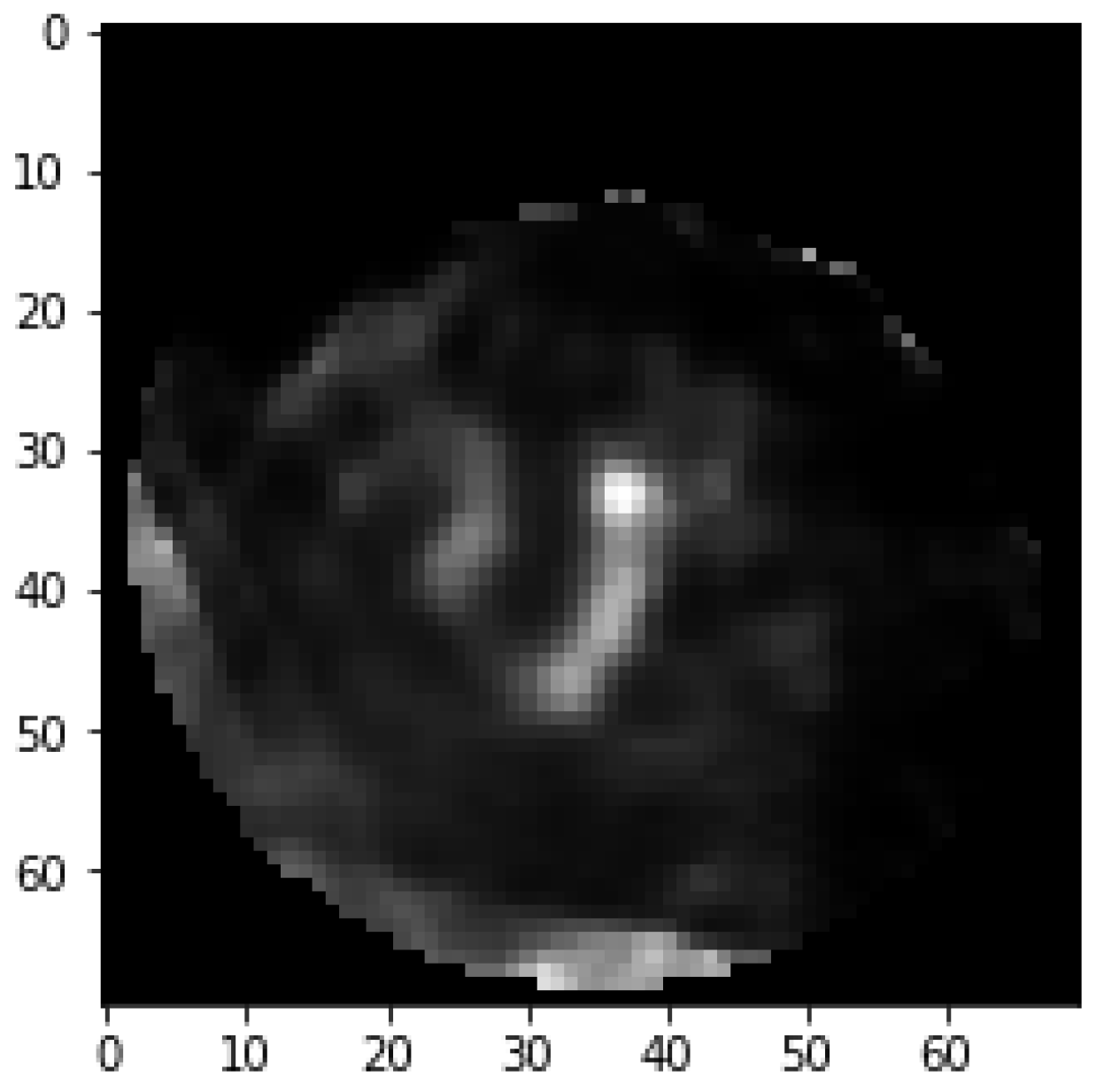

On the other hand, if there is an apparent dark part, it means that the subject suffers from coronary heart disease that is shown in

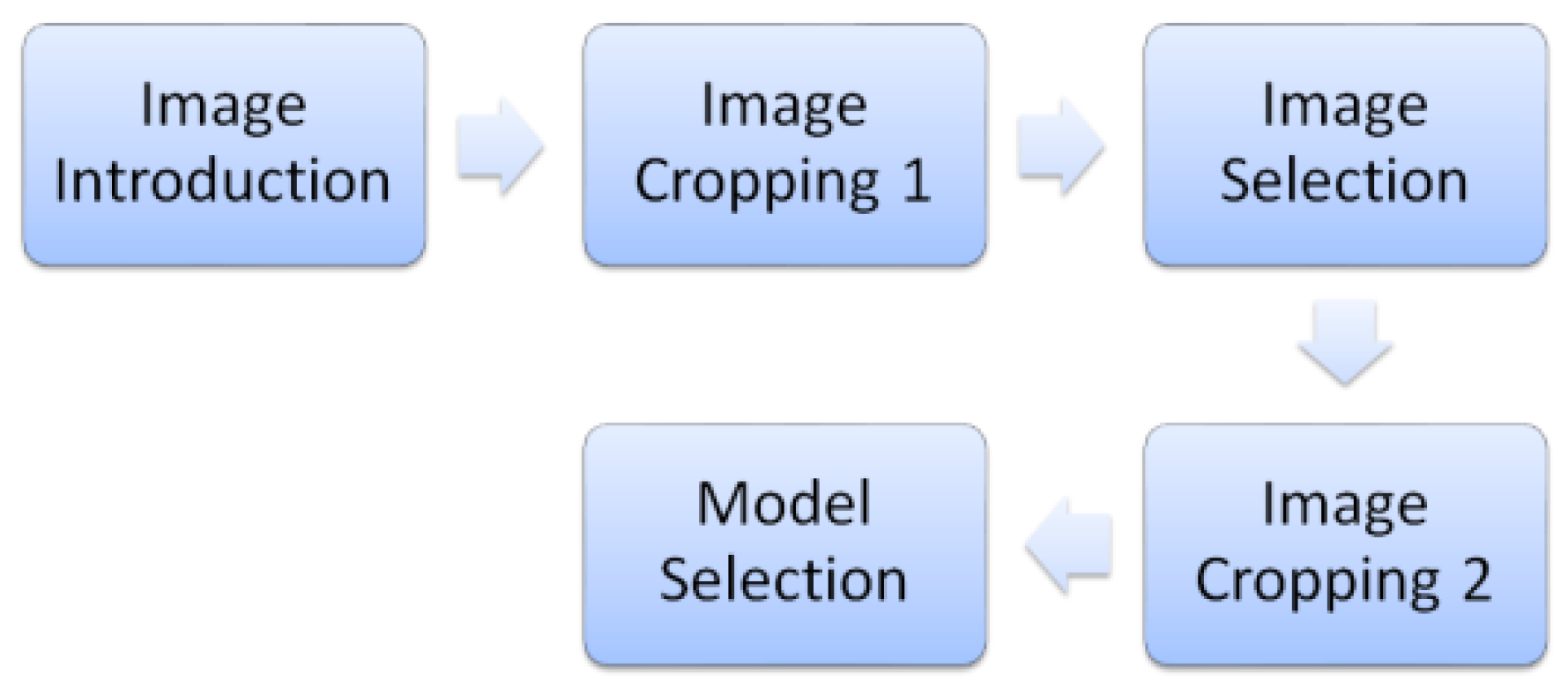

Figure 2. This set of data requires a series of image preprocessing to take out the myocardium circumference area and remove the surrounding noise. First, remove surrounding non-myocardial parts by initial cropping. Second, select the images near the center for cross-sectional images by the image selection method. Finally, align the myocardium in the center by image cropping again. The image process is shown in

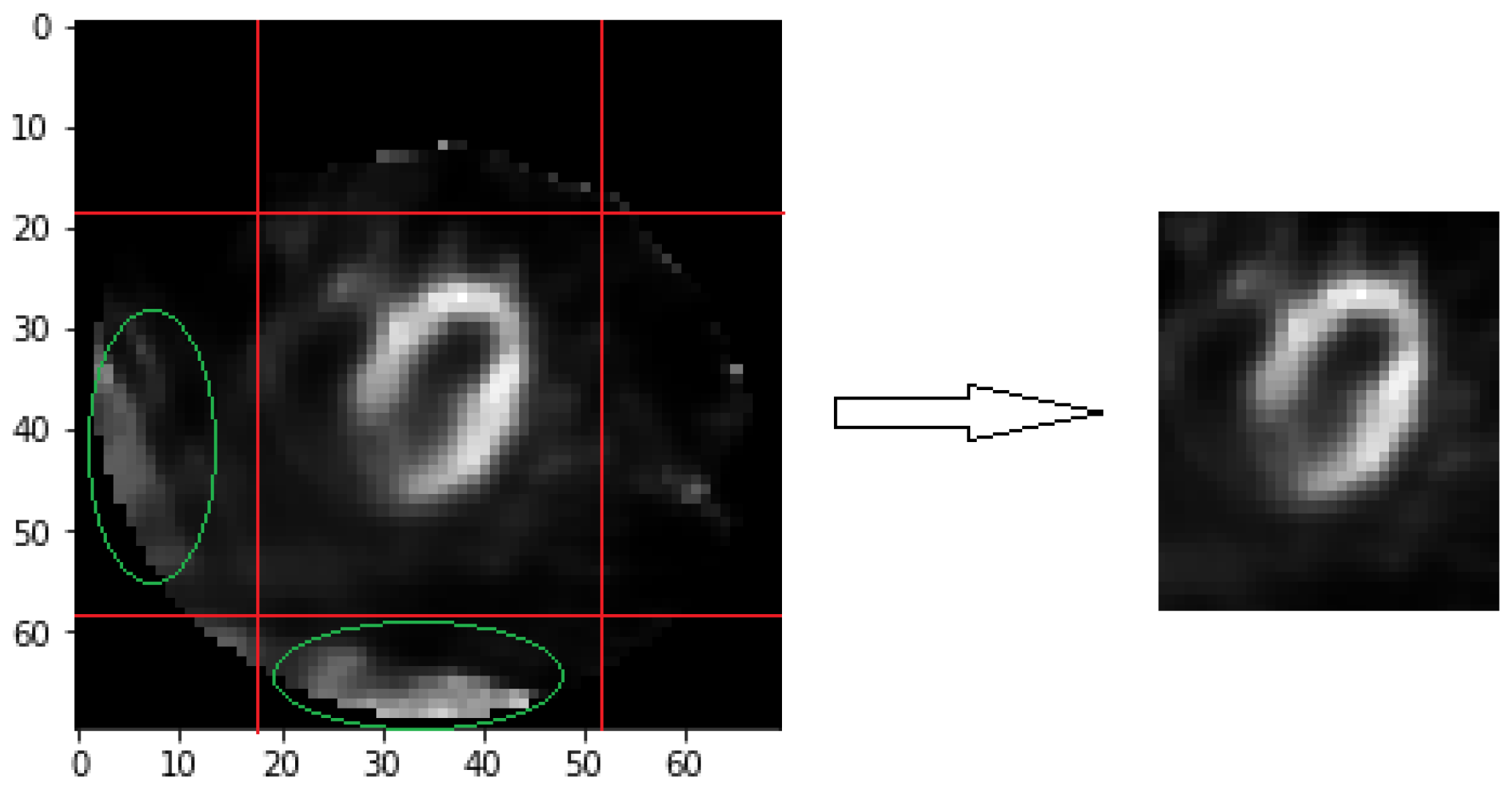

Figure 3. Organs around the coronary arteries contain non-myocardial parts in these cross-sectional images [

13]. The muscles reflect an image when it has absorbed the tracer. All process methods are related to brightness for image selection and cropping. Therefore, the brightness of these organs leads to interference. We retain specific columns and rows for each image as initial image cropping. Each image has 41 × 36 pixels after cropping, as shown in

Figure 4. It removes most non-myocardial muscles, which are unimportant parts.

The closer an image is to the center of the sequence, the more area the myocardium covers. Disease symptoms are usually more obvious near the sequence center, which means that the image features of healthy and unhealthy subjects differ most there. Because heart positions are not aligned, the center of the heart sequence does not necessarily fall in the middle of each subject's 50 images. We use the following two methods to find the sequence center image for each subject.

The first method is based on the brightness sum. In the first step, for each image we add up all pixel values of that image and of the images immediately before and after it, as in Equation (1), where $x_{ijk}$ denotes the pixel value in the $i$-th row and $j$-th column of the $k$-th image of a subject:

$$S_k = \sum_{m=k-1}^{k+1} \sum_{i} \sum_{j} x_{ijm} \tag{1}$$

This step avoids selecting an image that is very bright while its neighboring images are very dark, a situation inconsistent with the characteristics of the heart sequence center image. In the second step, we find the image with the maximum brightness sum. This image is regarded as the sequence center image $k_1$ obtained by the first method, as in Equation (2):

$$k_1 = \arg\max_{k} S_k \tag{2}$$
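The brightness sum method of Equations (1) and (2) can be sketched in Python with NumPy as follows. This is a minimal illustration, not the authors' code: it assumes one subject's images are stacked in an array of shape (K, H, W), that the window is the image plus one neighbor on each side, and that a missing neighbor at either end of the sequence contributes zero. The function name `brightness_sum_center` is hypothetical.

```python
import numpy as np


def brightness_sum_center(images: np.ndarray) -> int:
    """Return k1: the index of the image whose brightness, summed with its
    immediate neighbours, is largest (Equations (1)-(2)).

    Assumptions: `images` has shape (K, H, W) with K >= 3; the window is the
    image itself plus one neighbour on each side; at the two ends of the
    sequence the missing neighbour contributes zero.
    """
    # Total brightness of each image: sum over rows i and columns j.
    per_image = images.sum(axis=(1, 2), dtype=np.float64)
    # Moving sum over images k-1, k, k+1; mode="same" keeps one value per
    # image and implicitly zero-pads the two ends of the sequence.
    window_sums = np.convolve(per_image, np.ones(3), mode="same")
    # Equation (2): the image with the largest windowed brightness sum.
    return int(np.argmax(window_sums))


if __name__ == "__main__":
    imgs = np.zeros((50, 41, 36))
    imgs[19:22] = 2.0  # three moderately bright consecutive images
    imgs[35] = 5.0     # one very bright image with dark neighbours
    # The windowed sum favours image 20, although image 35 is brightest alone.
    print(brightness_sum_center(imgs))
```

Summing over a three-image window is what lets the method ignore a single bright image with dark neighbors, as the text describes.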

The second method is based on a brightness threshold. When the initial cropping cannot completely remove the non-heart organs from an image, these organs appear particularly bright and account for a large proportion of the image's total brightness. Of the two image selection methods, the first is therefore more easily misled into selecting the wrong sequence center. These non-heart organs are usually located at the edges of the image, so even when they are very bright they occupy only a small number of pixels. Because the second method depends on the number of rows and columns containing pixels above a threshold, it can still select the correct heart sequence center image. Conversely, when the myocardial area that absorbs the tracer well is small for a subject, the brightness sum method can choose the correct heart sequence center image.

In the first step, every pixel is divided by a number that is the average of these images that belong to a subject. In the next step, the pixel that passes the threshold value was taken out to calculate the number of rows and columns that contain these pixels. Suppose there are two matrixes—C and R.

is the

j-th column stored values and

is the

i-th row stored value in

k-th image are used in Equations (3) and (4). The

or

will be set as one when there is at least one pixel that pass threshold value in this row or column. The threshold value is set as 3 by tuning in this paper. In the final step, we will multiply the number of rows and columns that pass the threshold to obtains the multiple values that are named “threshold area” for each image. The maximum of these threshold areas will be treated as heart sequence center image

by this method in Equation (5). The two methods take out their respective heart images, which are numbered

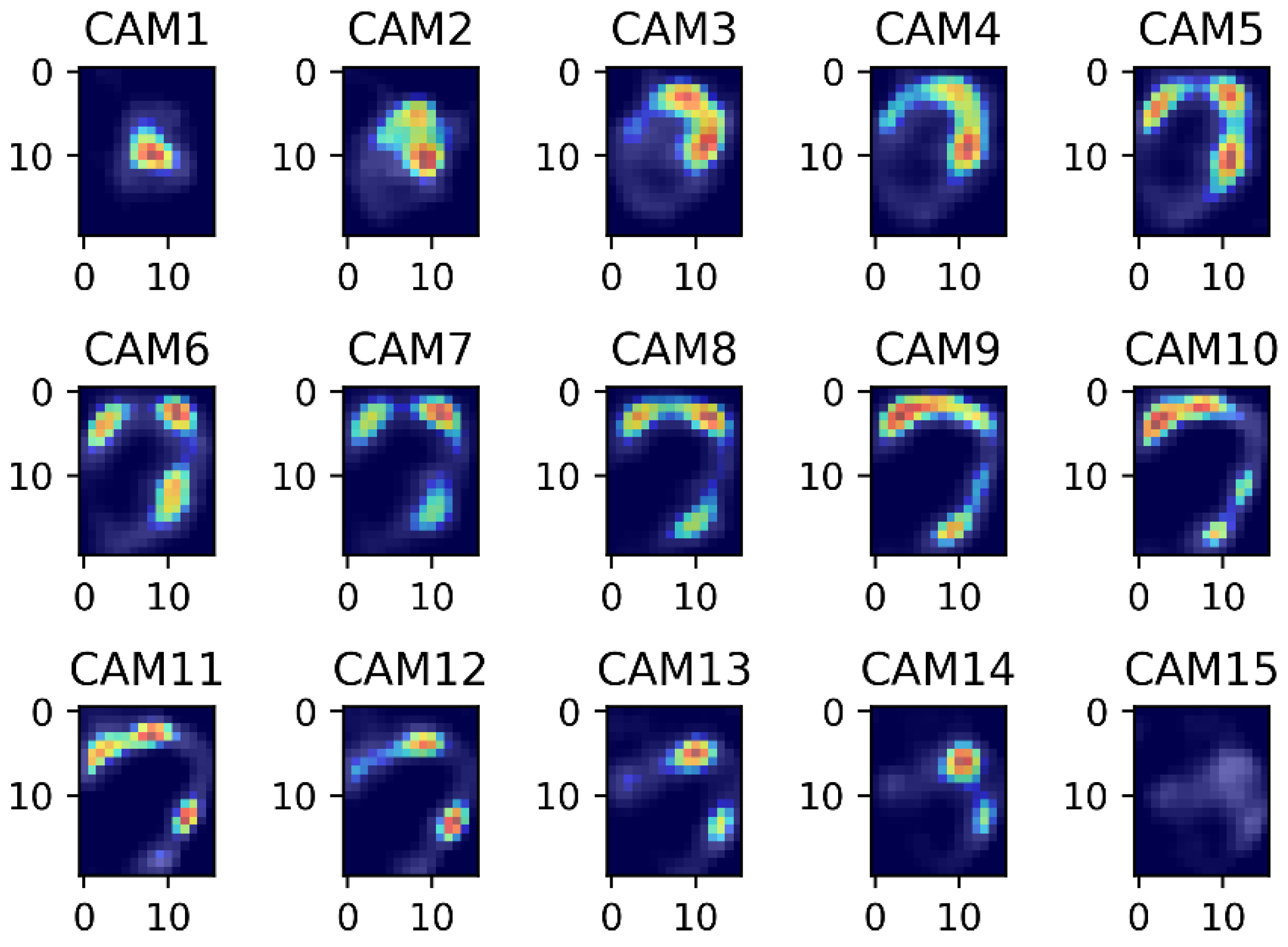

and

. The average value of

and

is taken as the final central image of the sequence. From near the central image of the sequence, select 15 images that will be placed in the final model. In the experimental chapter, we will verify that 15 images are the best choice.
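Equations (3) to (5), together with averaging the two center indices and selecting 15 images, can be sketched as follows. This is an illustrative NumPy sketch rather than the authors' code: the function names are hypothetical, the input is assumed to be one subject's images in an array of shape (K, H, W), and rounding the average down and shifting the window inward at the sequence ends are assumptions the text does not settle.

```python
import numpy as np


def threshold_area_center(images: np.ndarray, threshold: float = 3.0) -> int:
    """Return k2: the image with the largest "threshold area" (Equations (3)-(5)).

    `images` has shape (K, H, W): K images of H rows and W columns.
    """
    # Step 1: divide every pixel by the mean pixel value of all the subject's images.
    normalized = images / images.mean()
    above = normalized > threshold  # (K, H, W) mask of pixels passing the threshold
    # R[k, i] = 1 if row i of image k has at least one pixel above the threshold (Eq. 4).
    rows_hit = above.any(axis=2)
    # C[k, j] = 1 if column j of image k has at least one pixel above the threshold (Eq. 3).
    cols_hit = above.any(axis=1)
    # Threshold area = (rows hit) * (columns hit), one value per image.
    area = rows_hit.sum(axis=1) * cols_hit.sum(axis=1)
    return int(np.argmax(area))  # Eq. (5)


def select_central_slices(images, k1: int, k2: int, n_slices: int = 15):
    """Average k1 and k2 and return `n_slices` consecutive images around that centre.

    Assumptions (not specified in the text): the average is rounded down, and
    the window is shifted inward if it would run past either end of the sequence.
    """
    center = (k1 + k2) // 2
    start = center - n_slices // 2
    start = max(0, min(start, len(images) - n_slices))
    return images[start:start + n_slices]
```

On a toy sequence in which one image carries a single extremely bright edge pixel, the per-image brightness total favors that image, whereas the threshold area favors an image with a large bright region, which mirrors the motivation given for the second method.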

Even after initial cropping and image selection, the myocardium is not centered in most images, because the subjects' heart positions differ slightly. These images therefore need to be cropped again, separately for rows and columns. At this point, every image has 41 × 36 pixels. In the first step, we define two vectors, $A$ and $B$, in Equations (6) and (7). $A_p$ is the sum of the pixel values in the 19 consecutive rows starting at the $p$-th row and serves as that row's representative brightness sum; $B_q$ is the sum of the pixel values in the 16 consecutive columns starting at the $q$-th column and serves as that column's representative brightness sum. With $x_{ij}$ denoting the pixel in the $i$-th row and $j$-th column of an image:

$$A_p = \sum_{i=p}^{p+18} \sum_{j=1}^{36} x_{ij}, \quad p = 1, \dots, 23 \tag{6}$$

$$B_q = \sum_{j=q}^{q+15} \sum_{i=1}^{41} x_{ij}, \quad q = 1, \dots, 21 \tag{7}$$

The window sizes of 19 rows and 16 columns were set by tuning; by observation, windows of this size contain the entire myocardium. The purpose is to find the starting point of the crop. In Equations (8) and (9), the row with the maximum value in $A$ is chosen as the first cropping row and the column with the maximum value in $B$ as the first cropping column; together they form the top-left corner of the crop:

$$p^{*} = \arg\max_{p} A_p \tag{8}$$

$$q^{*} = \arg\max_{q} B_q \tag{9}$$

In the second step, a number of rows and columns, determined by experiment, are selected forward from the first cropping row and column. Figure 5 shows a fully cropped image, in which the myocardium appears in the middle. Finally, every image is pixel-normalized, which helps keep the neural network model from getting stuck during training.
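The second cropping of Equations (6) to (9), followed by pixel normalization, might look like the NumPy sketch below. It is not the authors' implementation: the function names `recrop` and `normalize` are hypothetical, the final crop size (which the text leaves to experiment) defaults to the 19 × 16 window here, and min-max scaling stands in for the unspecified normalization scheme.

```python
import numpy as np


def recrop(image: np.ndarray, win_rows: int = 19, win_cols: int = 16,
           out_rows: int = 19, out_cols: int = 16) -> np.ndarray:
    """Second cropping (Equations (6)-(9)) for one H x W image, e.g. 41 x 36.

    A[p] sums rows p .. p+win_rows-1 and B[q] sums columns q .. q+win_cols-1;
    the arg-max of each gives the top-left corner of the crop.
    Assumption: the crop size (out_rows, out_cols) is not given in the text and
    defaults to the window size. Keep it no larger than the window, otherwise
    the crop could run past the image border and be cut short.
    """
    row_sums = image.sum(axis=1)  # brightness of each row
    col_sums = image.sum(axis=0)  # brightness of each column
    # mode="valid" yields one entry per window that fits entirely in the image.
    A = np.convolve(row_sums, np.ones(win_rows), mode="valid")  # Eq. (6)
    B = np.convolve(col_sums, np.ones(win_cols), mode="valid")  # Eq. (7)
    p = int(np.argmax(A))  # first cropping row, Eq. (8)
    q = int(np.argmax(B))  # first cropping column, Eq. (9)
    return image[p:p + out_rows, q:q + out_cols]


def normalize(image: np.ndarray) -> np.ndarray:
    """Min-max scale pixels to [0, 1]. The text does not name its normalization
    scheme; min-max scaling is an assumption made for this sketch."""
    lo, hi = float(image.min()), float(image.max())
    if hi == lo:
        return np.zeros(image.shape, dtype=np.float64)
    return (image - lo) / (hi - lo)
```

Convolving the row and column sums with a vector of ones evaluates Equations (6) and (7) for every valid start index in one pass, so the zero-based `p` and `q` returned here correspond to $p^{*} - 1$ and $q^{*} - 1$ in the one-based notation above.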