An Enhanced Multimodal Stacking Scheme for Online Pornographic Content Detection

Abstract

1. Introduction

2. Literature Review

3. Methods

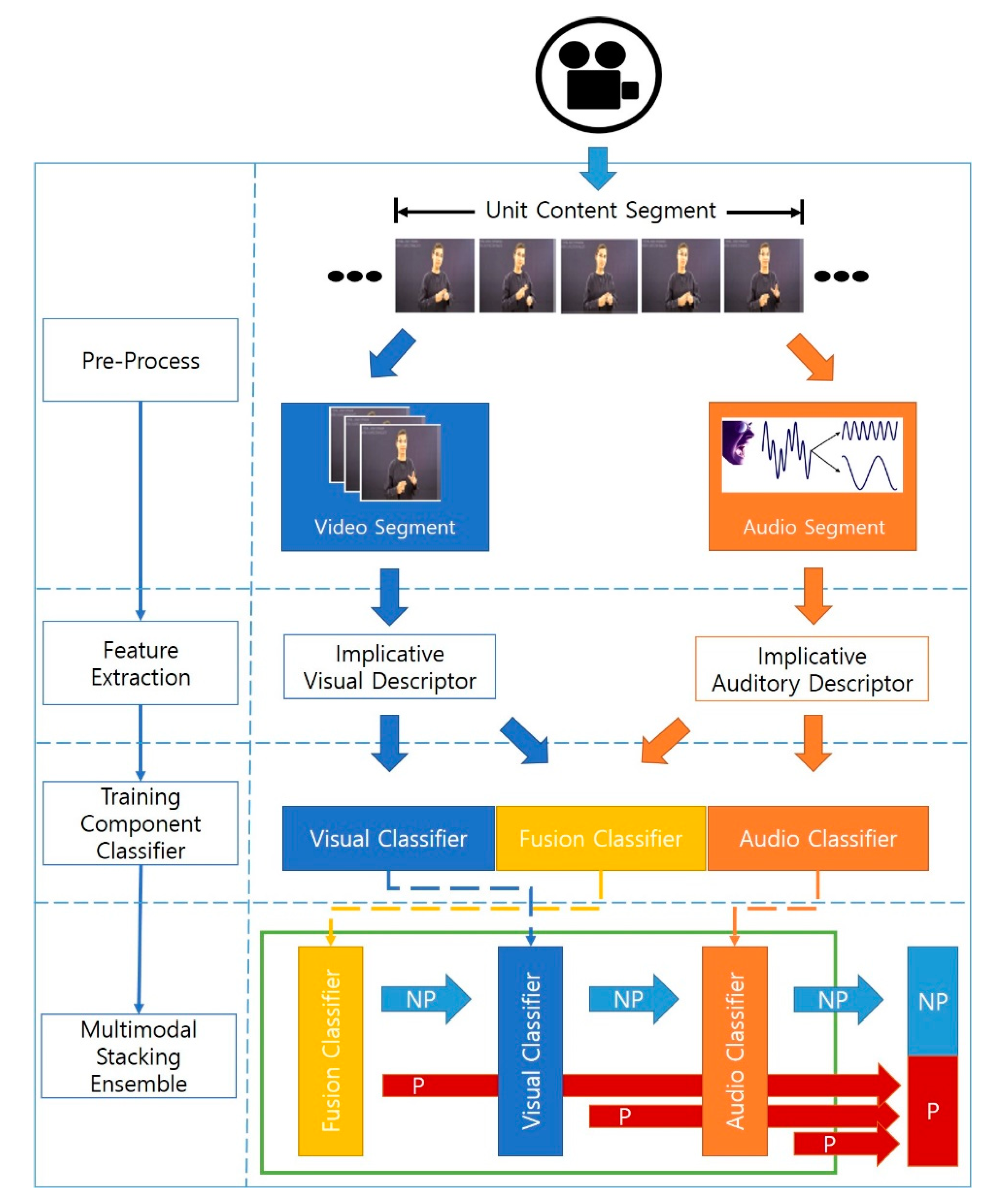

3.1. Overall Procedure of the Enhanced Multimodal Stacking Scheme

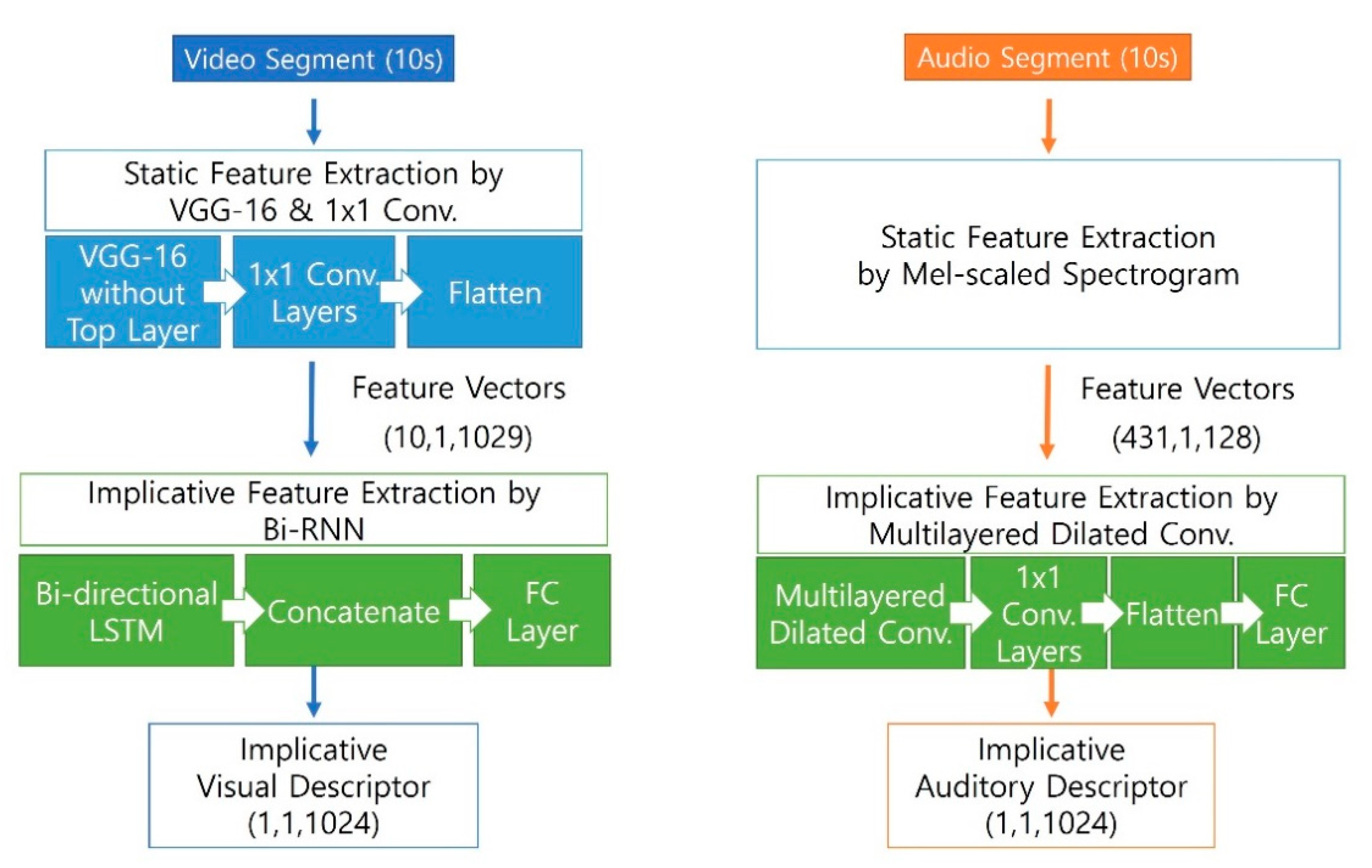

3.2. Extraction of Implicative Features

3.3. Training Component Classifiers

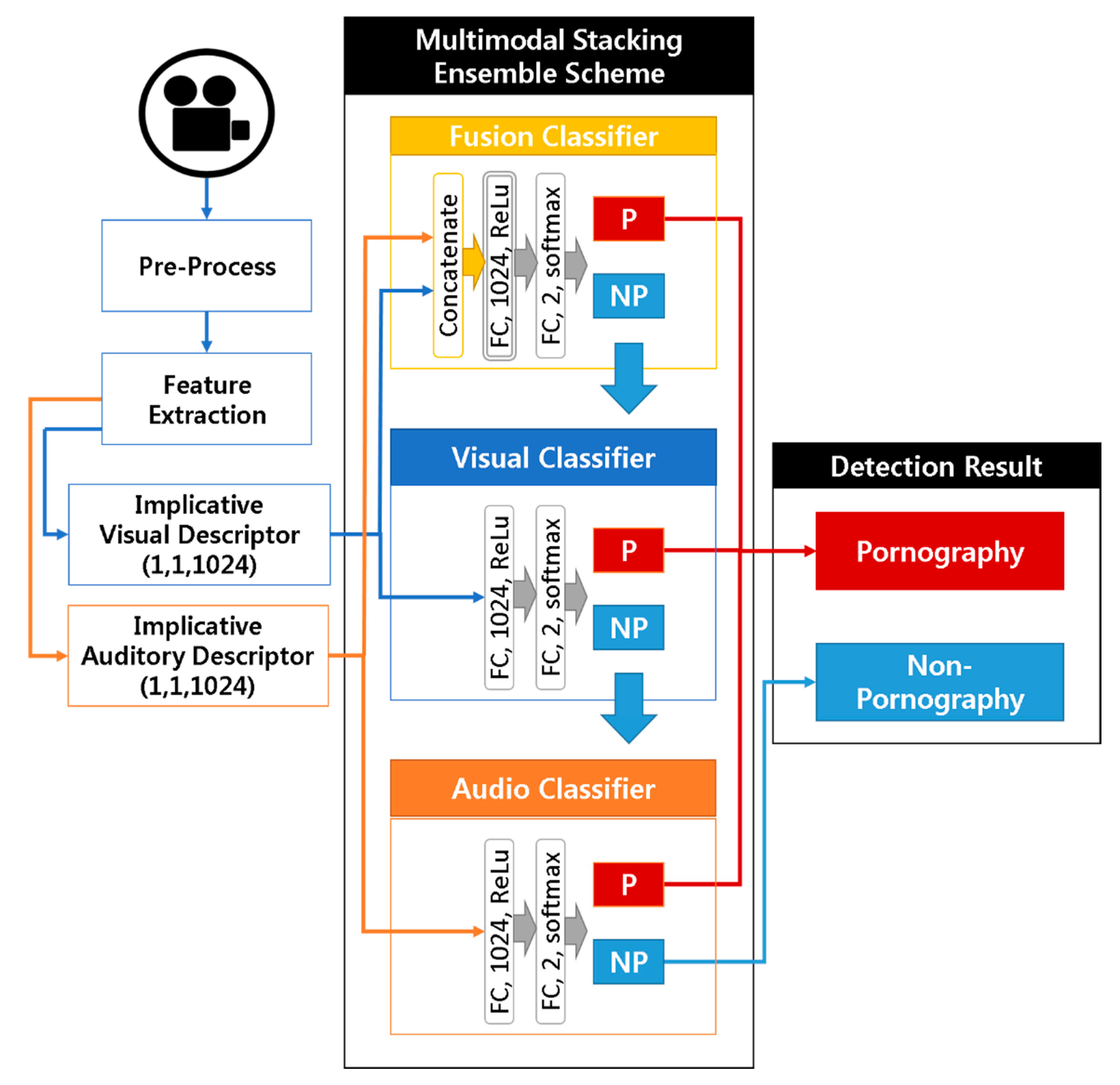

3.4. Ensembling Component Classifiers

3.5. Implementation and Optimizations

4. Experiment Results

4.1. Performance Evaluations

4.2. Analysis Results for Video Classifier

4.3. Analysis Results for Audio Classifier

4.4. Analysis Results for Multimodal Ensemble

4.5. Analysis Results for Detection Time

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Korea Communications Commission. They Try to Block the Distribution Source of Pornographic Content. Available online: http://it.chosun.com/news/article.html?no=2843689 (accessed on 20 December 2019).

2. Agarwal, S.; Farid, H.; Gu, Y.; He, M.; Nagano, K.; Li, H. Protecting world leaders against deep fakes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Long Beach, CA, USA, 15–21 June 2019; pp. 38–45.

3. Moustaf, N.M. Applying deep learning to classify pornographic images and videos. arXiv 2015, arXiv:1511.08899.

4. Moreira, D.; Avila, S.; Perez, M.; Moraes, D.; Testoni, V.; Valle, E.; Goldenstein, S.; Rocha, A. Pornography Classification: The Hidden Clues in Video Space-Time. Forensic Sci. Int. 2016, 268, 46–61.

5. Song, K.; Kim, Y. Pornographic Video Detection Scheme using Video Descriptor based on Deep Learning Architecture. In Proceedings of the 4th International Conference on Emerging Trends in Academic Research, Bali, Indonesia, 27–28 November 2017; pp. 59–65.

6. Varges, M.; Marana, A.N. Spatiotemporal CNNs for Pornography Detection in Videos. In Progress in Pattern Recognition, Image Analysis, Computer Vision, and Applications; Springer: Cham, Switzerland, 2019; pp. 547–555.

7. Jin, X.; Wang, Y.; Tan, X. Pornographic Image Recognition via Weighted Multiple Instance Learning. IEEE Trans. Cybern. 2019, 49, 4412–4420.

8. Xiao, X.; Xu, Y.; Zhang, C.; Li, X.; Zhang, B.; Bian, Z. A new method for pornographic video detection with the integration of visual and textual information. In Proceedings of the IEEE 3rd Advanced Information Management, Communicates, Electronic and Automation Control Conference (IMCEC), Chongqing, China, 11–13 October 2019; pp. 1600–1604.

9. Farooq, M.S.; Khan, M.A.; Abbas, S.; Athar, A.; Ali, N.; Hassan, A. Skin Detection based Pornography Filtering using Adaptive Back Propagation Neural Network. In Proceedings of the 8th International Conference on Information and Communication Technologies (ICICT), Karachi, Pakistan, 16–17 November 2019; pp. 106–112.

10. Kusrini, H.A.; Fatta, S.P.; Widiyanto, W.W. Prototype of Pornographic Image Detection with YCbCr and Color Space (RGB) Methods of Computer Vision. In Proceedings of the 2019 International Conference on Information and Communications Technology (ICOIACT), Yogyakarta, Indonesia, 24–25 July 2019; pp. 117–122.

11. Ashan, B.; Cho, H.; Liu, Q. Performance Evaluation of Transfer Learning for Pornographic Detection. In Proceedings of the International Conference on Natural Computation, Fuzzy Systems and Knowledge Discovery (ICNC-FSKD 2019), Kunming, China, 20–22 July 2019; pp. 403–414.

12. Gangwar, A.; Fidalgo, E.; Alegre, E.; González-Castro, V. Pornography and child sexual abuse detection in image and video: A comparative evaluation. In Proceedings of the 8th International Conference on Imaging for Crime Detection and Prevention (ICDP 2017), Madrid, Spain, 13–15 December 2017; pp. 37–42.

13. He, Y.; Shi, J.; Wang, C.; Huang, H.; Liu, J.; Li, G.; Liu, R.; Wang, J. Semi-supervised Skin Detection by Network with Mutual Guidance. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27–28 October 2019; pp. 2111–2120.

14. Macedo, J.; Costa, F.; dos Santos, J.A. A benchmark methodology for child pornography detection. In Proceedings of the 2018 31st SIBGRAPI Conference on Graphics, Patterns and Images (SIBGRAPI), Parana, Brazil, 29 October–1 November 2018; pp. 455–462.

15. Perez, M.; Avila, S.E.; Moreira, D.; Moraes, D.; Testoni, V.; Valle, E.; Goldenstein, S.; Rocha, A. Video pornography detection through deep learning techniques and motion information. Neurocomputing 2017, 230, 279–293.

16. Liu, Y.; Yang, Y.; Xie, H.; Tang, S. Fusing audio vocabulary with visual features for pornographic video detection. Future Gener. Comput. Syst. 2014, 31, 69–76.

17. Song, K.; Kim, Y. Pornographic Video Detection Scheme Using Multimodal Features. J. Eng. Appl. Sci. 2018, 13, 1174–1182.

18. Liu, Q.; Feng, C.; Song, Z.; Louis, J.; Zhou, J. Deep Learning Model Comparison for Vision-Based Classification of Full/Empty-Load Trucks in Earthmoving Operations. Appl. Sci. 2019, 9, 4871.

19. Zeyer, A.; Doetsch, P.; Voigtlaender, P.; Schlüter, R.; Ney, H. A comprehensive study of deep bidirectional LSTM RNNs for acoustic modeling in speech recognition. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 2462–2466.

20. Song, K.; Kim, Y. A Fusion Architecture of CNN and Bi-directional RNN for Pornographic Video Detection. In Proceedings of the 7th International Conference on Big Data Applications and Services, Jeju, Korea, 21–24 August 2019; pp. 102–114.

21. Google Deepmind. WaveNet: A generative model for raw audio. arXiv 2016, arXiv:1609.03499v2.

22. Farha, Y.A.; Gall, J. MS-TCN: Multi-stage temporal convolutional network for action segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 3575–3584.

23. Song, K.; Kim, Y. Pornographic Contents Detection Scheme using Bi-Directional Relationships in Audio Signal. J. Korea Contents Assoc. 2020, accepted.

24. Adnan, A.; Nawaz, M. RGB and Hue Color in Pornography Detection. Inf. Technol. New Gener. 2016, 448, 1041–1050.

25. Jang, S.; Huh, M. Human Body Part Detection Representing Harmfulness of Images. J. Korean Inst. Inf. Technol. 2013, 11, 51–58.

26. Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural Comput. 1997, 9, 1735–1780.

27. Sak, H.; Senior, A.W.; Beaufays, F. Long short-term memory recurrent neural network architectures for large scale acoustic modeling. In Proceedings of the 15th Annual Conference of the International Speech Communication Association, Singapore, 14–18 September 2014.

28. Zhang, X.; Yao, J.; He, Q. Research of STRAIGHT Spectrogram and Difference Subspace Algorithm for Speech Recognition. In Proceedings of the 2nd International Congress on Image and Signal Processing, Tianjin, China, 17–19 October 2009; pp. 1–4.

29. Keras: Deep Learning Library for Theano and Tensorflow. Available online: https://keras.io (accessed on 13 April 2020).

30. Kingma, D.; Ba, J. Adam: A method for stochastic optimization. arXiv 2017, arXiv:1412.6980v9.

| Visual Classifier with Different Visual Feature Extractions | Accuracy |
|---|---|
| Visual classifier using VGG-16 and bidirectional RNN | 95.33% |
| Stacking ensemble with only the three visual classifiers used in [17] | 94.63% |

| Audio Classifier with Different Auditory Feature Extractions | Accuracy |
|---|---|
| Audio classifier using a multilayered dilated convolution network | 89.16% |
| Audio classifier in [17] | 61.83% |

| Multimodal Ensemble Scheme | Accuracy | False Negative Rate |
|---|---|---|
| Enhanced multimodal stacking | 92.33% | 4.60% |
| Previous multimodal stacking used in [17] | 88.17% | 5.67% |
| Majority-voting ensemble | 84.30% | 27.33% |

| Scheme | True Positives: 1st Classifier | True Positives: 2nd Classifier | True Positives: 3rd Classifier | True Positives: 4th Classifier | True Positives: Sum | False Negatives |
|---|---|---|---|---|---|---|
| Enhanced stacking | Fusion classifier: 1398/1500 (93.20%) | Video classifier: 29/102 (28.43%) | Audio classifier: 4/73 (5.47%) | None | 1431/1500 (95.40%) | 69/1500 (4.60%) |
| Previous stacking [17] | Video classifier: 1162/1500 (77.47%) | Image classifier: 97/338 (28.70%) | Motion classifier: 85/241 (35.27%) | Audio classifier: 71/156 (45.51%) | 1415/1500 (94.33%) | 85/1500 (5.67%) |
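
The per-stage counts in the table above are consistent with a sequential cascade in which each classifier only sees the videos that every earlier stage failed to flag, so the denominator at each stage is the remainder left by the previous one. The following is a minimal sketch under that reading, not the authors' implementation; the function name `cascade_summary` is hypothetical, and the only inputs are the counts reported in the table.

```python
from typing import List, Tuple


def cascade_summary(
    total: int, detected_per_stage: List[int]
) -> Tuple[List[Tuple[int, int]], int, int]:
    """Replay a sequential cascade.

    Returns the (detected, seen) pair for each stage, the total number of
    true positives, and the number of false negatives left at the end.
    """
    remaining = total
    stages = []
    for detected in detected_per_stage:
        # Each stage only sees what earlier stages did not detect.
        stages.append((detected, remaining))
        remaining -= detected
    true_positives = total - remaining
    return stages, true_positives, remaining


# Counts taken from the table: 1500 pornographic test videos per scheme.
enhanced = cascade_summary(1500, [1398, 29, 4])
previous = cascade_summary(1500, [1162, 97, 85, 71])

print(enhanced)
print(previous)
```

Under this reading, the replayed denominators (102 and 73 for the enhanced scheme; 338, 241, and 156 for the previous one) and the final sums match the reported figures.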

| Scheme | 1st Classifier | 2nd Classifier | 3rd Classifier | 4th Classifier | Weighted Average Detection Time |
|---|---|---|---|---|---|
| Enhanced stacking | 0.13 | 0.13 (0.26) | 0.04 (0.30) | None | 0.14 |
| Previous stacking [17] | 0.10 | 0.55 (0.65) | 0.50 (1.15) | 0.03 (1.18) | 0.37 |
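
The values in parentheses in the table above appear to be running totals: the time spent once a video has passed through that stage and every stage before it. A short sketch of that arithmetic follows, assuming this interpretation. The helper name `cumulative_times` is hypothetical, and the time unit is whatever the table uses, since the table itself does not state one.

```python
from itertools import accumulate
from typing import List


def cumulative_times(stage_times: List[float]) -> List[float]:
    """Running total of per-stage detection times, rounded to two decimals."""
    return [round(t, 2) for t in accumulate(stage_times)]


# Per-stage times as listed in the table.
enhanced_cumulative = cumulative_times([0.13, 0.13, 0.04])
previous_cumulative = cumulative_times([0.10, 0.55, 0.50, 0.03])

print(enhanced_cumulative)
print(previous_cumulative)
```

The running totals reproduce the parenthesized values for both schemes. The weighting behind the last column is not specified in the table, so it is not recomputed here.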

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Song, K.; Kim, Y.-S. An Enhanced Multimodal Stacking Scheme for Online Pornographic Content Detection. Appl. Sci. 2020, 10, 2943. https://doi.org/10.3390/app10082943
