1. Introduction

Transformations are a frequently encountered procedure in geodesy, Global Positioning System (GPS) science and technology, geographical information science (GIS), and other scientific fields. For example, (1) a 3D similarity transformation is usually applied to transform GPS- (World Geodetic System 84) WGS84-based coordinates to those in a local coordinate system using a bunch of common points with coordinate values in both systems. (2) In GIS, digital data produced by tracing old paper maps over a digitizing tablet need to be converted from the tablet’s non-georeferenced plane data into georeferenced plane data that can be georegistered with other digital data layers. (3) For the purpose of monitoring a whole dam, the combining of multiple point clouds from different laser stations is needed by transformations. This process is called registration of the scans/images in photogrammetry and remote sensing. (4) In computer vision, mapping a single shape obtained from one sensor to a single shape obtained from another by computing an appropriate transformation between them makes the image 3D visible.

A common approach to solving the transformation problem is the least squares (LS) adjustment of the transformation parameters from a redundant set of nonlinear forms of the Gauss–Markov (GM) model. However, the GM model assumes that only the coordinates of the target points are random in the observation vector within the GM model. It is obvious that uncertainties from the source coordinate system are missing in the coefficient matrix within the GM model [

1,

2].

Taking the uncertainties in the coefficient matrix into account, the symmetric transformation problems are referred to as the errors-in-variables (EIV) model, and the LS estimation within the EIV model is called total least squares (TLS) estimation [

3,

4,

5,

6,

7,

8]. There are several classes of methods to obtain TLS solutions: 1) Procrustes analysis (e.g., [

9,

10]); 2) quaternions (e.g., [

5,

11,

12]); (3) the Lagrange approach (e.g., [

8,

13,

14,

15,

16]); and 4) unconstrained optimization (e.g., [

3,

17,

18]). From the numerical point of view, although the analytical method is available for certain special covariance matrices [

19], transformation problems are solved by iterative methods in general, for example, the Gauss–Helmert model, the iterative GM model, or the sequential quadratic program (SQP).

Although the aforementioned transformation problems have been successfully addressed, they all focus on the similarity transformations. When we incorporate constraints into the EIV model, all kinds of transformations, including affine, orthogonal, and rigid types, can be formulated. [

17] proposed SQP to provide the constrained TLS solution for certain types of transformations. [

13] implemented variance component estimation for the EIV model with constraints. However, no one has solved the constrained TLS problem using a Gauss–Newton (GN)-type solver, which is much easier than SQP, and the statistical characteristics of the parameter estimates are straightforwardly available.

In this paper, we propose a constrained TLS algorithm by iteratively using the constrained TLS normal equation. The algorithm is suitable for solving all kinds of transformation problems, either in 2D or in 3D. Furthermore, the statistical characteristics of the parameter estimates are explicitly given by the inverted constrained normal matrix.

The remainder of this paper is organized as follows: First, we give the mathematical formulation of the constrained TLS problem and its relation to the transformation models in

Section 2. Second, we solve the TLS problem algebraically using Lagrange multipliers in

Section 3. Third, in

Section 4, we design the unconstrained and constrained TLS algorithm for all kinds of transformations. Two examples are given in

Section 5 to demonstrate the performance of the proposed robust methods in 2D and 3D. Finally, we draw conclusions in

Section 6.

2. Adaptation of the Transformation Models to the Constrained/Unconstrained TLS Problem

In the following, we introduce the constrained TLS problem and the transformation models in 2D and 3D, and finally, all types of the coordinate transformations which adapt to the constrained/unconstrained EIV model.

2.1. The Constrained TLS Problem

Let us start with the functional part of the errors-in-variables model with constraints:

In the above equation, and are the observation vector and its associated random error vector, respectively. The coefficient matrix is random or partly random, and the matrices are the associated random error matrices. Vector is the unknown parameter vector. The constraints are available.

The stochastic part of the EIV model to describe the statistical properties of all random errors is as follows:

The complete error vector is defined by a vectorization operator which reshapes the matrix to the long vector by column order. The symbol represents the unknown unit variance. Matrix is the non-negative definite cofactor matrix of the error vector .

2.2. Adaptation of 2D Transformations to the Functional Model of the TLS Problem

The 2D affine transformation with six unknown parameters for a single point is introduced as:

are the coordinates of the target system, while are the coordinates of the source system. and are the scale factors, and are the rotation angles, and are the translations. The sign is used as we omit the random errors in the equation for the sake of simple formulation.

When all six parameters are replaced by

, the above equation is rewritten as:

with transformation matrix

.

is the row vectorization operator according to the row order, i.e., the nth row stacks after the (n-1)th row.

Considering all control points, the unconstrained EIV model of the affine transformation in 2D can be given by:

Equation (5) explicitly defines the matrix and the vector in Eq (1) for all other transformations in 2D.

The operator denotes the Kronecker Product. and are the source x and y coordinate vectors and the target x and y coordinate vectors, respectively, for all control points. The vector denotes the vector of ones with length the control point number.

The orthogonal, similarity, and rigid transformation models for the single point:

are simplified versions of Equation (3). Moreover, it is obvious that Equations (6)–(8) can be reformulated using Equation (3) with three different type constraints, respectively:

Equation (5) explicitly defines the matrix and the vector in Equation (1) for all other transformations in 2D.

When the original unknown parameters are fewer in the transformation models, the number of constraints increases.

2.3. Adaptation of 3D Transformations to the Functional Model of the TLS Problem

In full analogy with the 2D affine transformation model (5), the 3D affine transformation model can be explicitly extended as follows:

with

.

Equation (12) explicitly defines the matrix and the vector in equation (1) for all other transformations in 3D.

When the other three kinds of transformations are considered, the three, five, and six constraints:

can be correspondingly incorporated for orthogonal, similarity, and rigid transformations, respectively.

2.4. Adaptation of the Transformation Models to the Stochastic Model of the TLS Problem

In

Section 2.2 and

Section 2.3, we showed that the four kinds of transformation models can be formulated using a constrained or unconstrained EIV model. In order to adapt the stochastic part of the EIV model, we propagate the covariance matrix of the observed coordinates in both the source and target systems to the covariance matrix of the EIV model for all 2D cases:

where D denotes the dispersion operator, and the Jacobian matrix for 2D is:

Similarly, we give the stochastic model of the EIV model for all kinds of 3D transformations:

where D denotes the dispersion operator, and the Jacobian matrix for 3D is:

3. A Fixed-Point Solution to the Constrained TLS Problem

In order to solve the constrained TLS optimization problem:

the traditional Lagrange approach [

20] is applied as follows:

where

and

are the vectors of the Lagrange multipliers associated with the functional part of the EIV model and the constraints, respectively. The matrix

is expressed via Kronecker product operator.

By calculating the first derivative of Equation (21), the necessary condition of the objective function (20) is given as follows:

with

.

From Equation (23), we know that

By inserting Equation (26) into Equation (24), the vector of the Lagrange multipliers is:

Combining Equations (23) and (27), the error vector can be predicted:

which also implies that the error matrix

is predicted.

Equations (22) and (25) are immediately reformulated by:

which is equivalent to:

In order to simplify the above equation,

are defined.

Now, we establish the constrained nonlinear normal equation of the constrained TLS problem:

and the corresponding solution is:

When no constraints are available (e.g., for affine transformation), the estimator of the unknown parameter is

The estimator of the unknown parameter can be expressed alternatively as:

where

denotes that the solution is of fixed-point type and can therefore be solved iteratively.

Furthermore, the statistical properties of the estimator can be also approximately given by:

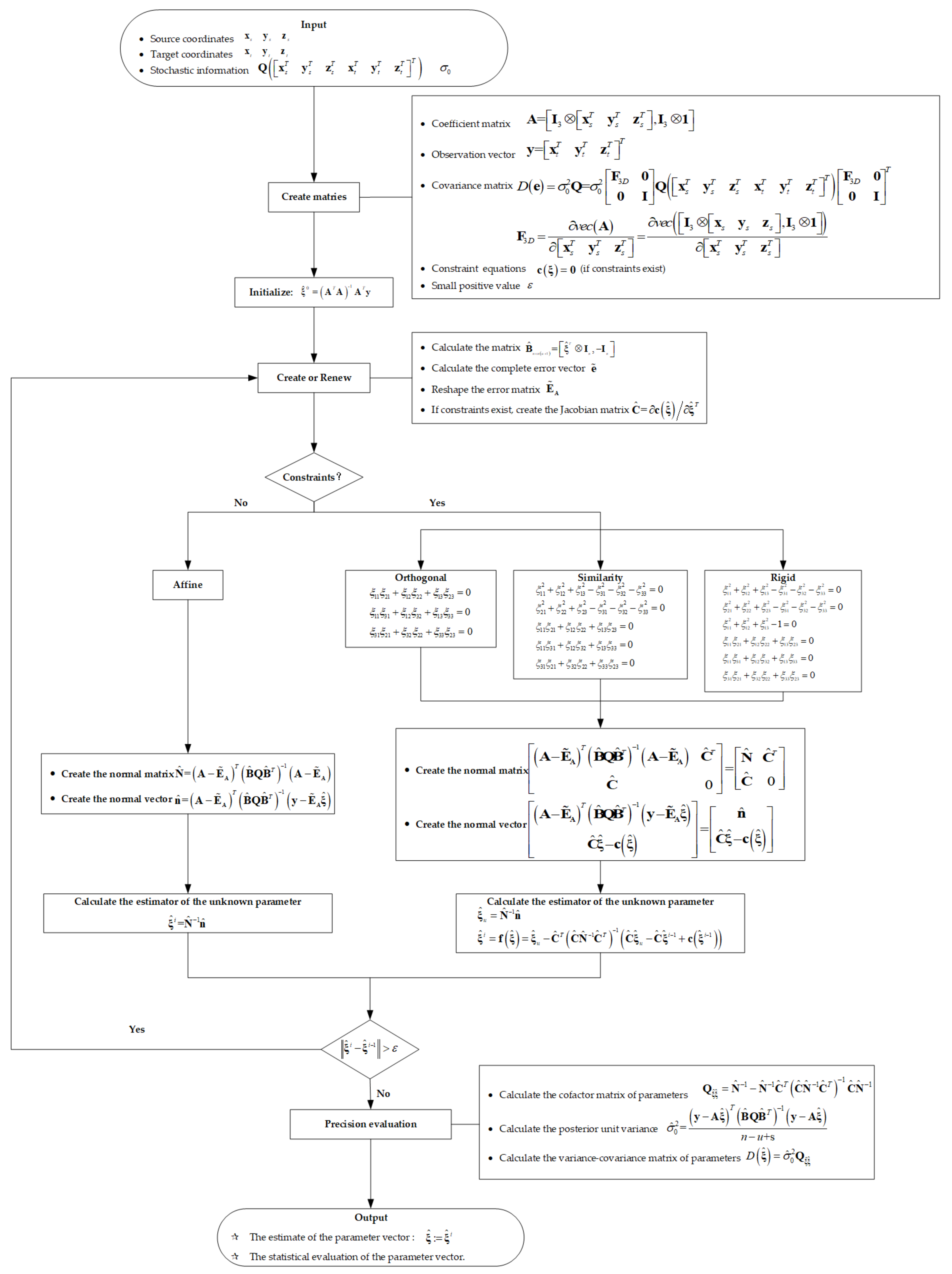

4. Algorithm Design

In this part, we summarize the developed formulas to present the general algorithm for all kinds of 3D coordinate transformations. The coordinates of the source system , the coordinates of the target system , and the corresponding stochastic information are given as input data. Note that the algorithm for 2D transformations is the simplified version of the 3D case and is therefore omitted here.

Through Equation (12), the coefficient matrix

and the observation vector

can be established as:

According to Equation (18), we propagate the cofactor matrix of the matrix by the dispersion matrix of the target and source coordinates. The a priori dispersion matrix of the target and source coordinates is usually given by the instrument precision or the precision determined by adjustment in the previous period.

The transformation kinds define the constraints. For affine transformation, no constraints are available. For orthogonal, similarity, and rigid transformations, the constraints are defined according to (13), (14), and (15), respectively.

After the abovementioned preprocessing, we can present the input for the computation:

Input (the available conditions):

- ✓

Coefficient matrix with dimensions .

- ✓

Observation vector with dimensions .

- ✓

Cofactor matrix with dimensions .

- ✓

If constraints exist, multiple quadratic constraints are given.

From the given input, we calculate the initial value of the parameter vector using .

As soon as the initial values for the parameter vector are obtained, the iterative procedure starts with the following steps:

- ⮚

Create the matrix .

- ⮚

Calculate the error vector according to Equation (28) and reshape the error matrix .

- ⮚

Create the Jacobian matrix , if constraints exist.

- ⮚

Create the normal matrix and the normal vector.

- ◆

If constraints exist, the normal matrix and the normal vector are given by the left-hand side and the right-hand side of Equation (32), respectively.

- ◆

If no constraints exist, the normal matrix and the normal vector are given by the left-hand side and the right-hand side of Equation (34), respectively.

- ⮚

Calculate the estimates of the unknown parameter.

- ◆

If constraints exist, we calculate according to Equation (33) or (35).

- ◆

If no constraints exist, we calculate according to Equation (34).

When (a suitably small positive value), the iteration terminates. Then, we provide the precision evaluation, that is, we 1) calculate the cofactor matrix of by Equation (36); 2) calculate the posterior unit variance by Equation (37); and 3) calculate the variance–covariance matrix of by Equation (38).

Finally, we present the estimate of the parameter vector

and the corresponding variance–covariance matrix as

the final output. In order to illustrate the entire computation process, we give the following flow chart of the algorithm for all kinds of transformations in

Figure 1.

5. Numerical Examples

In this part, two numerical examples are provided to verify the proposed constrained TLS algorithm. In the first example, data from [

21] presents a typical photogrammetry problem which consists of the 2-D coordinates that were measured and respectively calibrated of four side fiducial marks in a photograph, as seen in

Table 1. Here, the coordinates in the target system

,

(“Calibrated Coordinates”) and the coordinates in the source system

,

(“Measured Coordinates”) are presented in

Figure 2 to clarify their positions, and we regard them as equally weighted uncorrelated observations. The similarity transformation for a dataset was adjusted for the EIV model before by [

22] and by [

6] in two different parameterizations, i.e., either with or without shift/translation parameters, all in 2D. They both need complicated preprocessing, i.e., either the reduction of parameters or the selection of independent random elements within the coefficient matrix. Furthermore, these authors only implemented the similarity transformation.

By applying Algorithm 1 to the data set of

Table 1, the estimated 2D transformation parameters for all kinds of transformations and the TLS objective function were determined and are presented in

Table 2. The four parameters are completely different in the transformation matrix

in the affine and orthogonal transformations, whereas the four parameters are pairwise different in the similarity and rigid transformations. The results successfully correspond with the transformation models, as the affine and orthogonal transformations have different scale factors. The estimated values of the objective functions become larger and larger from the affine transformation to the rigid transformation (see the last row of

Table 2), since more and more constraints are considered. The standard deviation of the parameter estimates is given in

Table 3. The table reveals that the precision of the parameter estimates becomes higher with more constraints. However, in the last row of

Table 3, the transformation models expressed by larger numbers of constraints do not provide larger estimates of the unit standard deviation, which depends on both the redundant number and the objective function values.

In the second example, a dataset for 3D transformations, shown in

Table 4, was given by the Coordinate Systems Analysis Team (CSAT) at the National Geospatial-Intelligence Agency [

9]. CSAT preserves and releases a set of datum transformation parameter estimates for different countries via the CSAT website. Felus and Burtch provided the adjustment result for similarity transformation based on Procrustes analysis on the assumption that the covariance matrix must be expressed by the Kronecker product. Here, we implemented our proposed algorithm for all kinds of transformations by applying the dataset in

Table 4. It is important to note that our algorithm can adjust the dataset with an arbitrary covariance matrix, which is beyond the inherent assumption in Procrustes analysis.

With the dataset in

Table 4, four types of 3D transformation models were adjusted for the six control points. The estimation results for the affine, orthogonal, similarity, and rigid transformations are presented in

Table 5,

Table 6,

Table 7, and

Table 8, respectively. The results indicate that the translation parameters differ significantly among the distinct transformation models, whereas the estimated elements within the transformation matrix change slightly, which matches the precision analysis in

Table 9. The other analysis results are almost the same as the 2D results in that the values of the objective function become larger with more constraints, the precision is higher with more constraints, and the variation of the estimates

has no rule.

6. Conclusions and Outlook

In this contribution, we presented transformation models in the context of the TLS method, developed the corresponding algorithm based on constrained nonlinear normal equations, and provided a statistical assessment of the TLS adjustment results, including the cofactor matrix of the parameter estimator and the a posteriori variance factor.

In the adaptation of the transformation problems to the EIV model, we explicitly expressed the functional and stochastic models by the source and target coordinates and emphasized the differences between the transformations distinguished only by the quadratic constraints. The adaptations to 2D and 3D are quite similar. In particular, the structure of the matrix and the vector need to be enlarged by the z coordinates in the assigned place. For the adaptation, it is important to note that the number of constraints equals the number of the transformation matrix minus the number of independent parameter numbers. The number of constraints fixes the degree of freedom in the model.

After formulating the transformation models using an unconstrained or constrained EIV model, Lagrange multipliers were applied to provide the first-order necessary conditions of the TLS optimization. After some rearrangements, the constrained nonlinear normal equations were established, based on which the Newton-type iterative procedure could be implemented. The further advantage of the formulation of the constrained nonlinear normal equations is that one can explicitly compute the cofactor matrix and the variance factor, unlike with other existing methods, e.g., the sequential quadratic program.

We applied the proposed algorithm to selected examples so as to present and explain the adjustment results of all transformations with regard to the objective function value and statistical characteristics. We showed that with more available constraints, the objective function values are larger and the cofactors of the parameter estimates are smaller. The numerical results correspond to the theoretical inference.

This algorithm is not only valid for the case of many geodetic datum conversion problems, but also for other applications (photogrammetry, GIS, etc.) where the scale changes may be different or be fixed to one, which will justify the use of suitable constraints within the EIV model.

Furthermore, we hope that the discussed model and the developed algorithm contribute to convincing many—not only geodetic—researchers that the benefits arising from the use of orthogonal regression analysis outweigh the additional effort. From the methodological point of view, our TLS estimation can be generalized to any M- or L-type estimation, which will be promising in robustifying data processing for large data sets such as point clouds.