Deep-Learning-Based Models for Pain Recognition: A Systematic Review

Abstract

1. Introduction

- Review of the pain-recognition studies that are based on deep learning;

- Presentation and discussion of the main deep-learning methods employed in the reviewed papers;

- Review of the available data sets for pain recognition;

- Discussion of open challenges and directions for future work.

2. Methodology

2.1. Search Strategy

2.2. Inclusion and Exclusion Criteria

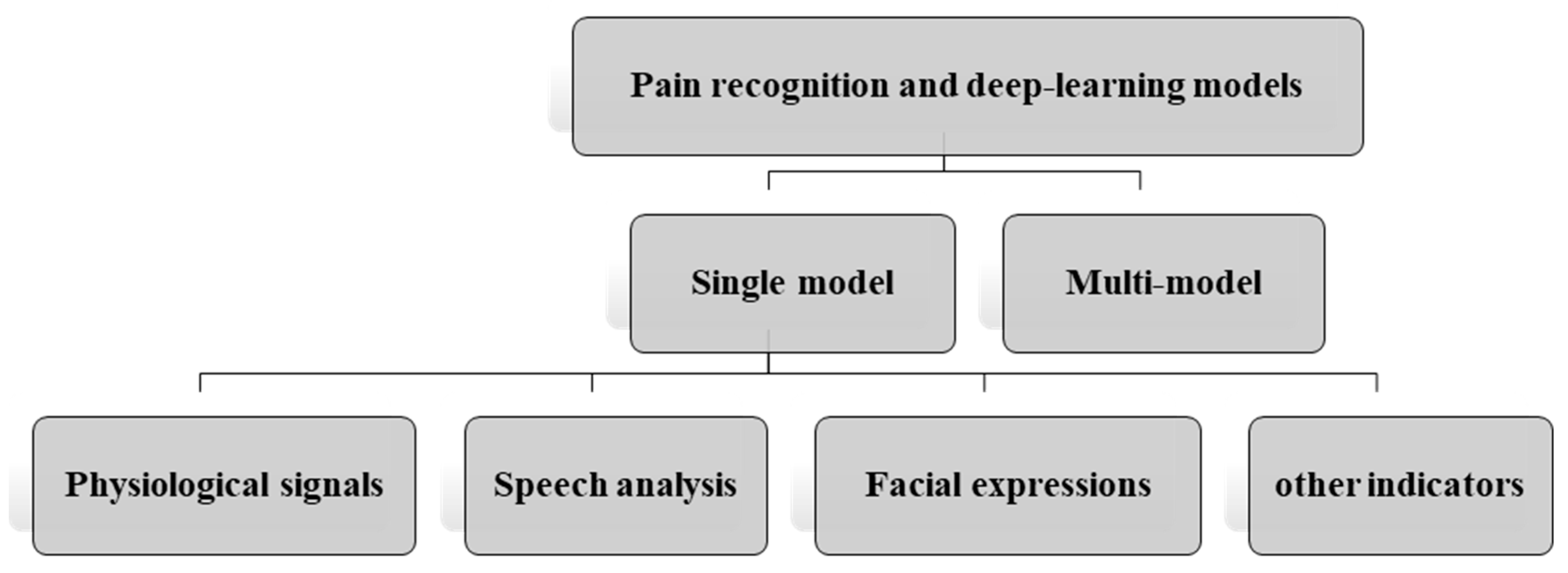

2.3. Categorization Method

- Pain recognition and deep-learning models

  - Single model

    - Physiological signals;

    - Speech analysis;

    - Facial expressions.

  - Multi-model

3. Review Papers

3.1. Single-Model-Based Pain Recognition

3.1.1. Physiological Signals

3.1.2. Speech Analysis

3.1.3. Facial Expressions

3.1.4. Other Indicators

3.2. Multi-Model-Based Pain Recognition

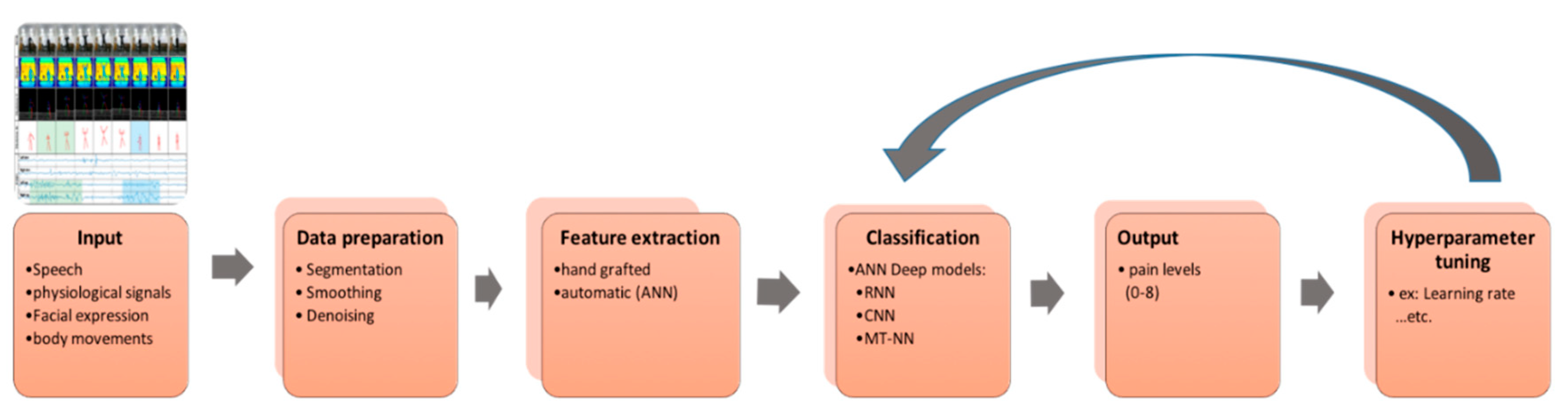

4. Primary Deep-Learning Methods Employed for Pain Recognition

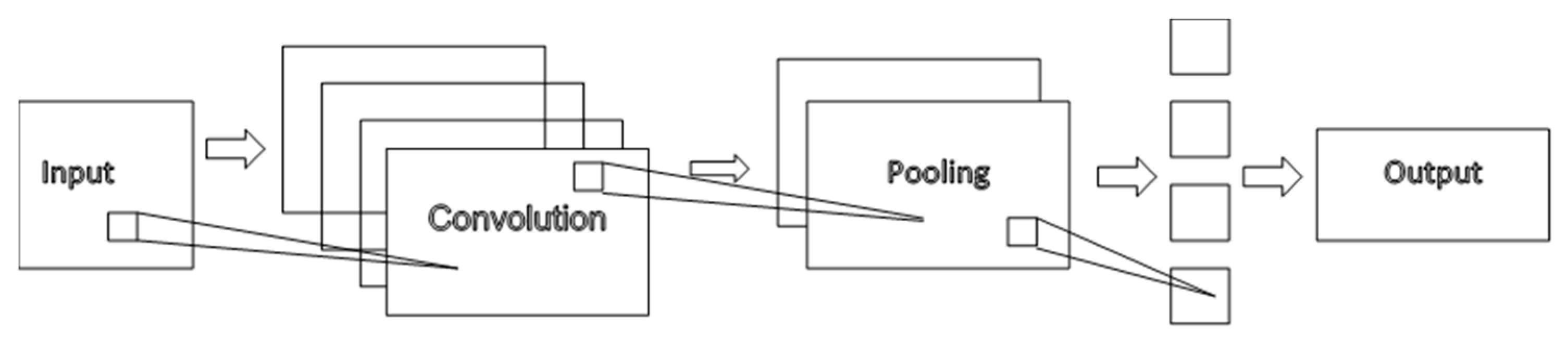

4.1. Convolutional Neural Networks (CNNs)

4.2. Recurrent Neural Networks (RNNs)

- The first is the standard setting, in which the network learns from labeled data and predicts the output;

- The second is the sequence setting, in which the network learns from data with multiple labels. The sequences combine different kinds of data and cannot be broken apart, so the network takes a full sequence as input to predict the next state and beyond;

- The third is the predict-next setting, which accepts unlabeled or implicitly labeled data, such as the words in a sentence. In this example, the RNN breaks the sentence into subsequences and treats the next subsequence as the target.
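The predict-next setting can be illustrated with a minimal sketch of how training pairs might be built from an unlabeled sentence. The function name `next_step_pairs`, the `context_size` parameter, and the example sentence are assumptions made for illustration, and the sketch simplifies the target to a single next word rather than a full subsequence.

```python
def next_step_pairs(tokens, context_size):
    """Build (context, target) pairs from an unlabeled token sequence.

    Each context is a window of `context_size` consecutive tokens, and the
    target is the token that immediately follows it. The labels are implicit:
    they come from the sequence itself, not from manual annotation.
    """
    pairs = []
    for end in range(context_size, len(tokens)):
        context = tokens[end - context_size:end]
        target = tokens[end]
        pairs.append((context, target))
    return pairs


# Hypothetical example sentence (not taken from any reviewed dataset).
sentence = "the patient reports mild pain".split()
pairs = next_step_pairs(sentence, context_size=2)

for context, target in pairs:
    print(context, "->", target)
```

An RNN trained in this setting would receive each context as its input sequence and be optimized to predict the corresponding target.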

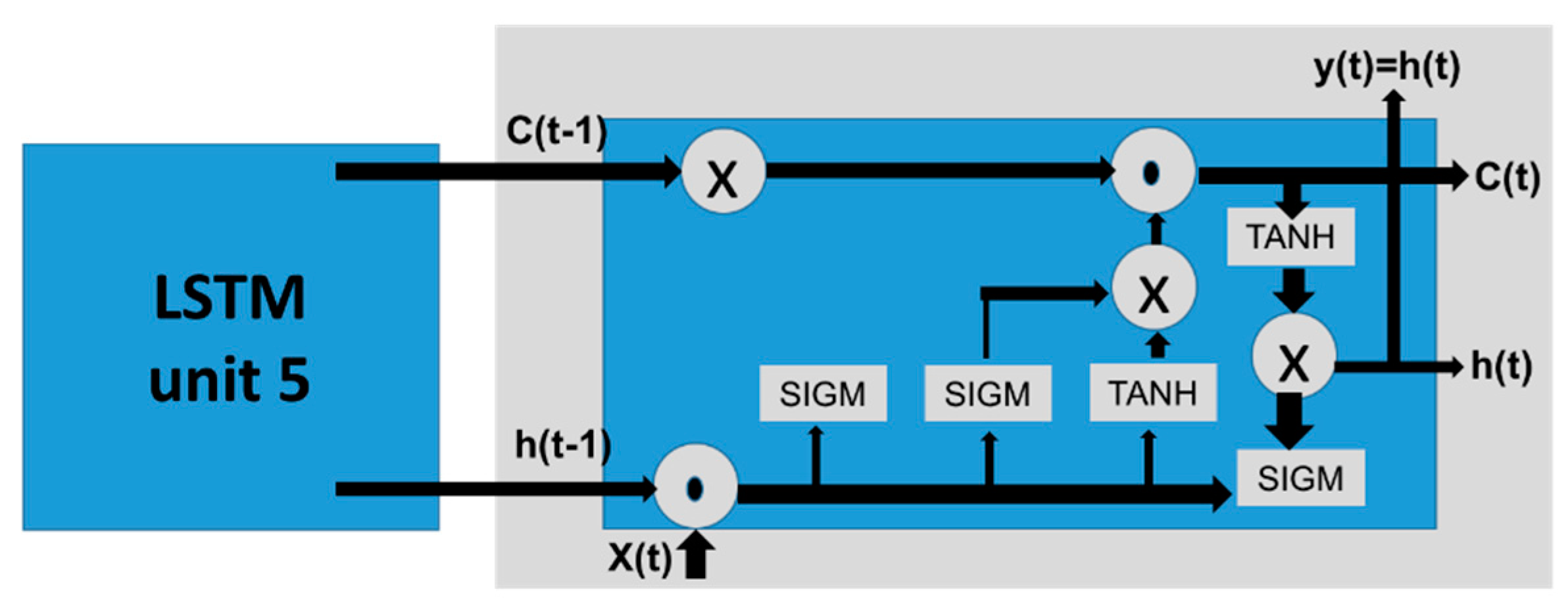

4.3. Long Short-Term Memory Neural Networks (LSTM-NNs)

4.4. Multitask Neural Network (MT-NN)

5. Datasets for Pain Recognition

6. Challenges and Future Directions

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Werner, P.; Lopez-Martinez, D.; Walter, S.; Al-Hamadi, A.; Gruss, S.; Picard, R. Automatic Recognition Methods Supporting Pain Assessment: A Survey. IEEE Trans. Affect. Comput. 2019, 1.

- Lopez-Martinez, D.; Picard, R. Multi-task neural networks for personalized pain recognition from physiological signals. In Proceedings of the 2017 Seventh International Conference on Affective Computing and Intelligent Interaction Workshops and Demos (ACIIW), San Antonio, TX, USA, 23–26 October 2017; pp. 181–184.

- Del Toro, S.F.; Wei, Y.; Olmeda, E.; Ren, L.; Guowu, W.; Díaz, V. Validation of a Low-Cost Electromyography (EMG) System via a Commercial and Accurate EMG Device: Pilot Study. Sensors 2019, 19, 5214.

- Tsai, F.-S.; Weng, Y.-M.; Ng, C.-J.; Lee, C.-C. Embedding stacked bottleneck vocal features in a LSTM architecture for automatic pain level classification during emergency triage. In Proceedings of the 2017 Seventh International Conference on Affective Computing and Intelligent Interaction (ACII), San Antonio, TX, USA, 23–26 October 2017; pp. 313–318.

- Martinez, D.L.; Rudovic, O.; Picard, R. Personalized Automatic Estimation of Self-Reported Pain Intensity from Facial Expressions. arXiv 2017, arXiv:1706.07154. Available online: http://arxiv.org/abs/1706.07154 (accessed on 26 July 2018).

- Rodriguez, P.; Cucurull, G.; Gonzalez, J.; Gonfaus, J.M.; Nasrollahi, K.; Moeslund, T.B.; Roca, F.X.; López, P.R. Deep Pain: Exploiting Long Short-Term Memory Networks for Facial Expression Classification. IEEE Trans. Cybern. 2017, 1–11.

- Egede, J.; Valstar, M.; Martinez, B. Fusing Deep Learned and Hand-Crafted Features of Appearance, Shape, and Dynamics for Automatic Pain Estimation. In Proceedings of the 2017 12th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2017), Washington, DC, USA, 30 May–3 June 2017; pp. 689–696.

- Wang, F.; Xiang, X.; Liu, C.; Tran, T.D.; Reiter, A.; Hager, G.D.; Quon, H.; Cheng, J.; Yuille, A.L. Regularizing face verification nets for pain intensity regression. In Proceedings of the 2017 IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; pp. 1087–1091.

- Jaiswal, S.; Egede, J.; Valstar, M. Deep Learned Cumulative Attribute Regression. In Proceedings of the 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), Xi’an, China, 15–19 May 2018; pp. 715–722.

- Haque, M.A.; Bautista, R.B.; Noroozi, F.; Kulkarni, K.; Laursen, C.B.; Irani, R.; Bellantonio, M.; Escalera, S.; Anbarjafari, G.; Nasrollahi, K.; et al. Deep Multimodal Pain Recognition: A Database and Comparison of Spatio-Temporal Visual Modalities. In Proceedings of the 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), Xi’an, China, 15–19 May 2018; pp. 250–257.

- Bellantonio, M.; Haque, M.A.; Rodriguez, P.; Nasrollahi, K.; Telve, T.; Escalera, S.; Gonzalez, J.; Moeslund, T.B.; Rasti, P.; Anbarjafari, G.; et al. Spatio-temporal Pain Recognition in CNN-Based Super-Resolved Facial Images. In Image Processing, Computer Vision, Pattern Recognition, and Graphics; Springer: Cham, Switzerland, 2016; Volume 10165, pp. 151–162.

- Kulkarni, K.R.; Gaonkar, A.; Vijayarajan, V.; Manikandan, K. Analysis of lower back pain disorder using deep learning. IOP Conf. Series Mater. Sci. Eng. 2017, 263, 42086.

- Hu, B.; Kim, C.; Ning, X.; Xu, X. Using a deep learning network to recognise low back pain in static standing. Ergonomics 2018, 61, 1374–1381.

- Wang, C.; Peng, M.; Olugbade, T.A.; Lane, N.D.; Williams, A.C.D.C.; Bianchi-Berthouze, N. Learning Bodily and Temporal Attention in Protective Movement Behavior Detection. arXiv 2019, arXiv:1904.10824. Available online: http://arxiv.org/abs/1904.10824 (accessed on 5 June 2020).

- Lopez-Martinez, D.; Rudovic, O.; Picard, R. Physiological and behavioral profiling for nociceptive pain estimation using personalized multitask learning. arXiv 2017, arXiv:1711.04036. Available online: http://arxiv.org/abs/1711.04036 (accessed on 26 July 2018).

- Kächele, M.; Amirian, M.; Thiam, P.; Werner, P.; Walter, S.; Palm, G.; Schwenker, F. Adaptive confidence learning for the personalization of pain intensity estimation systems. Evol. Syst. 2016, 8, 71–83.

- Thiam, P.; Bellmann, P.; Kestler, H.; Schwenker, F. Exploring Deep Physiological Models for Nociceptive Pain Recognition. Sensors 2019, 19, 4503.

- Wang, C.; Olugbade, T.A.; Mathur, A.; Williams, A.C.D.C.; Lane, N.D.; Bianchi-Berthouze, N. Recurrent network based automatic detection of chronic pain protective behavior using MoCap and sEMG data. In Proceedings of the 23rd International Symposium on Wearable Computers, London, UK, 9–13 September 2019; pp. 225–230.

- Skansi, S. Introduction to Deep Learning: From Logical Calculus to Artificial Intelligence; Springer: Berlin, Germany, 2018.

- Obinikpo, A.A.; Kantarci, B. Big Sensed Data Meets Deep Learning for Smarter Health Care in Smart Cities. J. Sens. Actuator Netw. 2017, 6, 26.

- Sathyanarayana, A.; Joty, S.; Fernandez-Luque, L.; Ofli, F.; Srivastava, J.; Elmagarmid, A.; Arora, T.; Taheri, S.; Ridgers, N.; Bin, Y.S. Sleep Quality Prediction From Wearable Data Using Deep Learning. JMIR mHealth uHealth 2016, 4, e125.

- Lucey, P.; Cohn, J.F.; Prkachin, K.M.; Solomon, P.E.; Matthews, I. Painful data: The UNBC-McMaster shoulder pain expression archive database. In Face and Gesture; IEEE: Santa Barbara, CA, USA, 2011; pp. 57–64.

- Walter, S.; Gruss, S.; Ehleiter, H.; Tan, J.; Traue, H.C.; Crawcour, S.; Werner, P.; Al-Hamadi, A.; Andrade, A.O. The biovid heat pain database data for the advancement and systematic validation of an automated pain recognition system. In Proceedings of the 2013 IEEE International Conference on Cybernetics (CYBCO), Lausanne, Switzerland, 13–15 June 2013; pp. 128–131.

- Zhang, X.; Yin, L.; Cohn, J.F.; Canavan, S.; Reale, M.; Horowitz, A.; Liu, P.; Girard, J.M. BP4D-Spontaneous: A high-resolution spontaneous 3D dynamic facial expression database. Image Vis. Comput. 2014, 32, 692–706.

- Zhang, Z.; Girard, J.M.; Wu, Y.; Zhang, X.; Liu, P.; Ciftci, U.; Canavan, S.; Reale, M.; Horowitz, A.; Yang, H.; et al. Multimodal Spontaneous Emotion Corpus for Human Behavior Analysis. pp. 3438–3446. Available online: https://www.cv-foundation.org/openaccess/content_cvpr_2016/html/Zhang_Multimodal_Spontaneous_Emotion_CVPR_2016_paper.html (accessed on 7 December 2019).

- Velana, M.; Gruss, S.; Layher, G.; Thiam, P.; Zhang, Y.; Schork, D.; Kessler, V.; Meudt, S.; Neumann, H.; Kim, J.; et al. The SenseEmotion Database: A Multimodal Database for the Development and Systematic Validation of an Automatic Pain- and Emotion-Recognition System. In Multimodal Pattern Recognition of Social Signals in Human-Computer-Interaction; Springer: Cham, Switzerland, 2016; pp. 127–139.

- Aung, M.S.H.; Kaltwang, S.; Romera-Paredes, B.; Martinez, B.; Singh, A.; Cella, M.; Valstar, M.; Meng, H.; Kemp, A.; Shafizadeh, M.; et al. The Automatic Detection of Chronic Pain-Related Expression: Requirements, Challenges and the Multimodal EmoPain Dataset. IEEE Trans. Affect. Comput. 2015, 7, 435–451.

- Gruss, S.; Geiger, M.; Werner, P.; Wilhelm, O.; Traue, H.C.; Al-Hamadi, A.; Walter, S. Multi-Modal Signals for Analyzing Pain Responses to Thermal and Electrical Stimuli. J. Vis. Exp. 2019, e59057.

- Brahnam, S.; Chuang, C.-F.; Shih, F.; Slack, M.R. SVM Classification of Neonatal Facial Images of Pain. Comput. Vis. 2005, 3849, 121–128.

- Harrison, D.; Sampson, M.; Reszel, J.; Abdulla, K.; Barrowman, N.J.; Cumber, J.; Fuller, A.; Li, C.; Nicholls, S.G.; Pound, C. Too many crying babies: A systematic review of pain management practices during immunizations on YouTube. BMC Pediatr. 2014, 14, 134.

- Mittal, V.K. Discriminating the Infant Cry Sounds Due to Pain vs. Discomfort Towards Assisted Clinical Diagnosis. 2016. Available online: https://www.isca-speech.org/archive/SLPAT_2016/abstracts/7.html (accessed on 2 December 2019).

- Al-Eidan, R.M.; Al-Khalifa, H.; Al-Salman, A.M.S. A Review of Wrist-Worn Wearable: Sensors, Models, and Challenges. J. Sens. 2018, 2018, 1–20. Available online: https://www.hindawi.com/journals/js/2018/5853917/abs/ (accessed on 7 February 2019).

- Spruit, M.; Lytras, M.D. Applied data science in patient-centric healthcare: Adaptive analytic systems for empowering physicians and patients. Telemat. Inform. 2018, 35, 643–653.

| Study | Deep-Learning Approach | Task | Features and Devices | Dataset | Metric and Score |
|---|---|---|---|---|---|
| 2017 [3] | Multitask neural network (MT-NN) | Classification | Skin conductance (SC) and heart-rate features (ECG) only | Available: BioVid Heat Pain database | Accuracy: 82.75% |
| 2017 [5] | Long short-term memory neural networks (LSTMs) | Feature extraction | Vocal features from audio; face from video. Device: Sony HDR Handycam | Collected: Triage Pain-Level Multimodal database. Available (speech data): Chinese corpus, the DaAi database. Three classes (severe, moderate, and mild) | WAR: 72.3% (binary); 54.2% (three-class) |
| 2017 [6] | LSTMs | Classification | Face | Available: UNBC-McMaster Shoulder Pain Expression Archive database | MAE: 2.47 (0.18); ICC: 0.36 (0.08); confusion matrices |
| 2018 [7] | Convolutional neural networks (CNNs); LSTMs | Feature extraction; classification | Face | Available: UNBC-McMaster Shoulder Pain Expression Archive database; Cohn-Kanade+ facial expression database | AUC: 93.3% |
| 2017 [8] | CNN | Feature extraction | Face | Available: UNBC-McMaster Shoulder Pain Expression Archive database | CORR: 0.67; RMSE: 0.99 |
| 2017 [9] | CNN with fine-tuning and regularization | Classification | Face | Available: UNBC-McMaster Shoulder Pain Expression Archive database. The face verification network [12] is trained on the CASIA-WebFace dataset [16], which contains 494,414 training images from 10,575 identities | Unweighted: MAE 0.389, MSE 0.804, PCC 0.651. Weighted: MAE 0.991, MSE 1.720 |
| 2018 [10] | Cumulative attributes (CA)-CNN | Classification | Face | Available: UNBC-McMaster Shoulder Pain Expression Archive database | Regression: PCC (0.47, 0.53), RMSE (1.20, 1.23). Multiclass: PCC (0.36, 0.41), RMSE (1.17, 1.19) |
| 2018 [11] | CNN and LSTM | Feature extraction and classification | Face. Devices: Microsoft Kinect Version 2; Axis Q1922 thermal camera | Collected: Multimodal Intensity Pain (MIntPAIN) database; healthy subjects; 5 classes | 5-fold cross-validation; accuracy; confusion matrix |
| 2017 [16] | MT-NN | Classification | ECG, SC; face | Available: BioVid Heat Pain database | MAE, RMSE, ICC |
| 2017 [17] | NN | Confidence estimation | ECG, SC, EMG; face | Available: BioVid Heat Pain database | Cross-validation: RMSE 0.347; CC 0.183 |
| 2017 [13] | LSTM (TensorFlow) | Classification | Tomography images of the lumbar spine in MetaImage (MHD) format | Classes: 6 | 65% |
| 2018 [14] | LSTM | Classification | Kinematic data; motion sensors | 22 healthy people and 22 LBP patients | Accuracy: 97.2% |
| 2019 [18] | CNNs | Feature extraction and classification | EDA, ECG, EMG | Available: BioVid Heat Pain database (part 1) | Accuracy: 84.40% |
| 2019 [19] | LSTM | Classification | Kinematic data; EMG | Available: EmoPain database | Mean F1: 0.815 |
| 2019 [15] | LSTM with attention | Classification | Kinematic data | Available: EmoPain database | Mean F1: 0.844 |
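Several of the studies above report regression-style scores such as MAE, RMSE, and the Pearson correlation coefficient (PCC). As a reading aid, the following minimal sketch shows how these three metrics are commonly computed. The label and prediction values are hypothetical and are not taken from any reviewed study, and the individual papers may use different averaging or weighting schemes.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error between true and predicted pain levels."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error between true and predicted pain levels."""
    squared = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return math.sqrt(squared / len(y_true))


def pcc(y_true, y_pred):
    """Pearson correlation coefficient between true and predicted values."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


# Hypothetical pain-intensity labels and model predictions (illustrative only).
y_true = [0, 1, 2, 3, 4]
y_pred = [0, 2, 2, 2, 4]

print(f"MAE:  {mae(y_true, y_pred):.3f}")
print(f"RMSE: {rmse(y_true, y_pred):.3f}")
print(f"PCC:  {pcc(y_true, y_pred):.3f}")
```

Lower MAE and RMSE indicate predictions closer to the reference labels, whereas a PCC closer to 1 indicates that predictions follow the same trend as the labels even when their absolute values differ.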

| Dataset Name | Title | Features | Devices | Stimuli | Participants | Classes |
|---|---|---|---|---|---|---|
| UNBC 2011 [23] | Painful Data: The UNBC-McMaster Shoulder Pain Expression Archive Database | Facial expression (RGB) | Two Sony digital cameras | Natural shoulder pain | 129 shoulder pain patients (63 male, 66 female) | 0–16 (PSPI) and 0–10 (VAS) |
| BioVid 2013 [24] | Data for the Advancement and Systematic Validation of an Automated Pain Recognition System | Video: facial expression (RGB); biopotential signals (SCL, ECG, sEMG, EEG) | Kinect camera; Nexus-32 amplifier | Heat pain applied to the right forearm with a thermode (PATHWAY, http://www.medoc-web.com) | 90 healthy | 4 levels of pain |
| BP4D-Spontaneous Database (BP4D) 2014 [25] | BP4D-Spontaneous: A High-Resolution Spontaneous 3D Dynamic Facial Expression Database | Facial expression | Two stereo cameras and one texture video camera | Cold pressor test with the left arm | 41 healthy | 8 classes, with physical pain as one of the emotions (happiness/amusement, sadness, startle, embarrassment, fear, physical pain, anger, disgust) |
| BP4D+ 2016 [26] | Multimodal Spontaneous Emotion Corpus for Human Behavior Analysis | Facial expression; EDA, heart rate, respiration rate, blood pressure | 3D camera (Di3D); infrared camera (FLIR); BioPac | Same as BP4D | 141 healthy | Same as BP4D |
| SenseEmotion 2016 [27] | The SenseEmotion Database: A Multimodal Database for the Development and Systematic Validation of an Automatic Pain- and Emotion-Recognition System | Facial expressions; biosignals (ECG, EMG, GSR, RSP); audio | 3 cameras (IDS UI-3060CP-C-HQ); g.MOBIlab; g.GSRsensor; piezoelectric crystal sensor for chest respiration waveforms (www.gtec.at/Products/Electrodes-and-Sensors/g.Sensors-Specs-Features); digital wireless headset microphone (Line6 XD-V75HS) and directional microphone (Rode M3) | Heat pain (Medoc Pathway thermal stimulator) | 40 healthy (20 male, 20 female) | 5 (no pain, 4 levels of pain) |
| EmoPain 2016 [28] | The Automatic Detection of Chronic Pain-Related Expression: Requirements, Challenges and the Multimodal EmoPain Dataset | Audio; facial expressions; body movements; sEMG | 8 cameras; Animazoo IGS-190; BTS FREEEMG 300 | Natural pain while doing physical exercises | 22 chronic low back pain (CLBP) patients (7 male, 15 female) | 2 for face; 6 for body behaviors; combined: binary |
| MIntPAIN 2018 [11] | Deep Multimodal Pain Recognition: A Database and Comparison of Spatio-Temporal Visual Modalities | Facial expression (RGB, depth, thermal) | Microsoft Kinect Version 2; Axis Q1922 thermal camera | Electrical pain | 20 healthy | 5 classes (0–4) |
| X-ITE pain 2019 [11] | Multi-Modal Signals for Analyzing Pain Responses to Thermal and Electrical Stimuli | Audio; facial expressions; ECG, SCL, sEMG (trapezius, corrugator, zygomaticus) | 4 cameras; BioPac | Heat and electrical | 134 healthy adults | 3 |
| COPE 2005 [29] | SVM Classification of Neonatal Facial Images of Pain | Facial expression | heel lancing | Heel lancing for blood collection | 26 neonates (age 18–36 h) | 5 (pain, rest, cry, air puff or friction) |
| YouTube 2014 [30] | Too Many Crying Babies: A Systematic Review of Pain Management Practices during Immunizations on YouTube | Video; audio | injection | Immunizations (injection) | 142 infants | FLACC observer pain assessment |
| IIIT-S ICSD 2016 [31] | Discriminating the Infant Cry Sounds Due to Pain vs. Discomfort Towards Assisted Clinical Diagnosis | Audio | injection | Immunizations (injection) | 33 infants | 6 (pain, discomfort, hunger/thirst and three others) |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Al-Eidan, R.M.; Al-Khalifa, H.; Al-Salman, A. Deep-Learning-Based Models for Pain Recognition: A Systematic Review. Appl. Sci. 2020, 10, 5984. https://doi.org/10.3390/app10175984
