1. Introduction

Heritage can be described as a mode of cultural production in the present that has recourse to the past [

1]. This past, mankind’s history, is fundamental to our identity as individuals and as members of society [

2]. Such importance arises from the belief that cultural heritage allows people to identify, protect and manage aspects of the past in favour of the present and posterity [

3], as well as contributing towards people’s sense of cultural identity. In order to show different forms of heritage to current and future generations, museums have become the cultural conscience of nations [

4] by providing visitors with learning experiences [

5] about their exhibits.

Extensive research has been carried out on the relevance of museums as educational institutions [

6] and how they should approach the display of cultural heritage [

7], motivating visitors to effectively engage in museum experiences [

8].

Consequently, these institutions are increasingly advocating immersive, first-hand experiences where people are given the chance to look, touch or even experiment with exhibited objects [

9,

10]. This method of increasing visitor engagement encourages the use of different technologies and approaches. For instance, virtual reality is gaining popularity in the cultural heritage domain, both in museums [

11,

12] and also to augment monuments [

13]. In addition, a more physical approach to engaging visitors relies on 3D-printed replicas that visitors can touch and interact with [

14,

15].

Also, the Internet of Things (IoT) [

16] is a promising emerging technology to exploit for the purpose of improving visitors’ experience. IoT can be defined in terms of

smart objects. These can be depicted as “

autonomous, physical digital objects augmented with sensing/actuating, processing, storing and networking capabilities” [

17].

Enriching museum visits with smart objects contributes to an enhanced promotion of cultural heritage because users benefit from more enjoyable cultural experiences. It thus appears preferable to employ multimedia facilities which enable visitors to interact with their surrounding cultural environment and better acquire knowledge [

18].

One approach for integrating smart objects into museums is by means of mobile phones acting as nexuses between exhibits and visitors. Combined they can provide added value to museums through advanced visualization of exhibits or

gamification [

19] techniques. Question-based gamification methods are representative of this category, and one must acknowledge the arduous task of creating broad, varied and appealing question sets. The maintenance of smart objects may prove equally laborious and time-consuming.

A number of works shed light upon large scale question generation methodologies [

20,

21,

22], often resorting to Natural Language Processing (NLP) [

23] techniques in order to automate this laborious process. Other lines of research [

24,

25,

26,

27,

28] have explored the feasibility of automatically producing questions for games by harnessing semantic web technologies [

29]. These frequently rely on the extraction of information from linked data [

30] knowledge graphs, such as Wikipedia’s (Wikipedia site:

https://www.wikipedia.org/) semantic version, DBpedia (DBpedia site:

https://www.dbpedia.org).

However, the potential of automatic question generation schemes using linked data applied to gamified museum environments has not yet been addressed by research. The challenge is, therefore, to effectively exploit Linked Datasets to automatically yield questions adapted to cultural heritage displayed in museums.

Furthermore, the combination of smart objects and automatic questions makes it easy to grant interactive capabilities to every museum exhibit. Normally, only some of the exhibitions in a museum are augmented with technology, typically a few rooms. With the proposed platform, visitors therefore benefit from a broader range of augmented exhibits and can interact with any exhibit of their choice, which in turn offers them a potentially richer museum experience. Additionally, because the questions are produced automatically, museum owners also benefit from the platform, since smart object maintenance requires a considerably reduced time investment.

This paper proposes a solution that consists of a gamification platform to enrich and enhance museum experiences. The aim is to provide visitors with more knowledge about the collection of exhibits through an enjoyable learning mechanism. In order to achieve this goal, the platform is based on multiple-choice questions that are automatically produced by extracting linked data from DBpedia and mining text descriptions about the exhibits through entity linking tools [

31]. The platform is validated in a real-world scenario, specifically in the Telecommunications Museum

Professor Joaquín Serna located at

Escuela Técnica Superior de Ingenieros de Telecomunicación, Universidad Politécnica de Madrid, Spain.

The rest of this paper is organised as follows. First, an overview of related work in Linked Data, smart objects and gamification in museums is given in

Section 2. Second,

Section 3 describes the methodology followed to automatically produce question sets. Afterwards,

Section 4 presents the architecture of the proposed gamification platform.

Section 5 portrays a case study implementing and adapting the gamification platform to

Joaquín Serna Telecommunications Museum. Then,

Section 6 elaborates on the evaluation of the platform at the aforementioned museum. Finally,

Section 7 addresses the conclusions drawn from this work.

2. Background

This section details the background and related work for the platform introduced in this paper. Firstly, an outline on Linked Data and its applications in automatic question generation schemes is given in

Section 2.1. Secondly,

Section 2.2 explores the use of Linked Data towards the promotion of cultural heritage in museums. Finally, gamification- and smart object-driven strategies employed in museums to promote visits are discussed in

Section 2.3.

2.1. Linked Data

Data on the Semantic Web [

29] are represented following the conventions of the Resource Description Framework (RDF) [

32] standard, using a triple format. Each triple consists of a subject, a predicate and an object. To express these, several standardised languages have been developed, such as RDF XML [

33], Turtle [

34], JSON-LD [

35], etc. Furthermore, RDF data can be queried using the semantics and syntax of standardised and well-known RDF query languages, such as SPARQL [

36].
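As a minimal illustration of the triple model, the following Python sketch stores a handful of triples as tuples and matches them against a pattern in which unspecified positions act as wildcards, loosely mirroring what a SPARQL basic graph pattern does. The triples and the `match` helper are hypothetical and serve only this example; they are not part of the platform described in this paper.

```python
from typing import Optional

Triple = tuple[str, str, str]  # (subject, predicate, object)

# Hypothetical mini-graph written with prefixed names for readability.
GRAPH: list[Triple] = [
    ("dbr:Las_Meninas", "dbo:author", "dbr:Diego_Velázquez"),
    ("dbr:Las_Meninas", "dbo:museum", "dbr:Museo_del_Prado"),
    ("dbr:Philip_IV_of_Spain", "rdf:type", "dbo:Royalty"),
]


def match(graph: list[Triple],
          s: Optional[str] = None,
          p: Optional[str] = None,
          o: Optional[str] = None) -> list[Triple]:
    """Return the triples that agree with every position that is not None."""
    return [
        t for t in graph
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    ]


# Who is recorded as the author of Las Meninas in this toy graph?
authors = [obj for _, _, obj in match(GRAPH, s="dbr:Las_Meninas", p="dbo:author")]
```

A real deployment would rely on an RDF store and a SPARQL engine rather than on list filtering; the sketch only makes the subject, predicate and object roles concrete.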

Additionally, a set of vocabularies known as ontologies makes it possible to describe, represent and relate terms pursuant to their domain. Typically, each ontology represents an area of concern by providing controlled vocabularies of concepts with machine-readable semantics. There is a large number of widely used ontologies to describe different fields of knowledge. For example, the International Committee for Documentation Conceptual Reference Model (CIDOC CRM) ontology has been internationally adopted to enable data interchange of cultural heritage and has been an ISO standard since 2006 [

37].

To further highlight the extent of the aforementioned ontology’s relevance, the renowned Prado Museum in Spain (in 2018, it was ranked amongst the top 15 most visited European museums:

https://www.statista.com/statistics/747942/attendance-at-leading-museums-in-europe/) underwent a digital transformation in 2016 [

38] resulting in the publication of their online collection as an RDF graph. The model was described using CIDOC CRM vocabularies. Other examples of prominent museums having their holdings published as Linked Data based on the CIDOC CRM ontology include The British Museum [

39] and The National Gallery [

40].

It must be taken into account that, in the cultural heritage domain, there are also other ontologies and schemas used, such as Descriptive Ontology for Linguistic and Cognitive Engineering (DOLCE) [

41] and Dublin Core (DC) [

42].

DOLCE is, like CIDOC CRM, a global ontology consisting of general terms that can be applied across different domains. This ontology was not specifically conceived to describe and represent cultural heritage; however, since it aims to provide a formal description of a particular conceptualization of the world [

43], DOLCE is also used in this area. In addition, the DC schema provides a small list of vocabularies that can be used to describe metadata about a given item.

Given that an ontology usually refers to a semantically complex set of vocabularies with rich relationships between them, DC is considered a metadata element set or schema because it is a rather simple ontology. This simplicity is sometimes, in fact, a desirable trait when describing cultural heritage because some properties of exhibits require expert knowledge [

44] in order to be correctly described using CIDOC CRM or DOLCE vocabularies. DC can offer a more straightforward, yet less accurate alternative.

Furthermore, there are also hierarchical collections of words known as thesauri. These aim to help index and extract terms classified into a particular topic or area of knowledge. For instance, UNESCO offers a thesaurus that intends to contribute towards helping to analyse and retrieve documents and publications in several fields, including education and culture [

45]. This thesaurus is constructed based on the SKOS taxonomy [

46], which, unlike ontologies, focuses on organising knowledge instead of representing it.

Given the machine-readable semantics brought about by Linked Data, one of the many approaches towards harnessing these data consists in the automation of knowledge extraction procedures. A large number of fields enjoy the benefits of such automation, as is the case of question generation schemes.

There is considerable research on methods to exploit Linked Datasets to automatically generate questions, often resulting in some type of quiz-based game. Some of these examples include

Clover Quiz [

28],

WhoKnows [

27] or

Linked Data Movie Quiz (LDMQ) [

26]. Clover Quiz is a multiplayer trivia game for Android that automates the generation of multiple-choice questions using SPARQL templates to extract knowledge from DBpedia. The main purpose is to evaluate the quality of the data and categorisation of DBpedia’s entities. Similarly, WhoKnows offers a quiz game which aims to provide an appealing form of ranking DBpedia properties according to their relevance in describing a given entity. Unlike the first two games, LDMQ does not rely on DBpedia to generate questions. Instead, this game produces quizzes based on a linked dataset about cinematography.

Additionally, other works focus on automatically generating questions to create educational evaluation items from Linked Data. Some examples include a quiz game based on the Greek DBpedia [

24] and a platform for low stake assessment [

25]. These seek to determine the viability of using such questions in educational contexts.

The aforementioned quiz games use relevant data in DBpedia to automatically produce questions about any topic contained in this Linked Dataset. By querying categories such as history, science or places, they can extract many entities from DBpedia to produce questions. Our proposed platform also intends to extract semantic data from DBpedia to produce questions. However, the proposed approach first requires analysing textual descriptions of exhibits in a process known as entity linking (refer to

Section 3), in order to automatically extract relevant complementary information about a particular item in the cultural heritage domain. This means that we aim to find data with a greater level of granularity and, therefore, the data extraction process is more intricate.

2.2. Linked Data towards the Promotion of Cultural Heritage

Although increasingly more museums are deciding to publish their holdings as Linked Data, there are few bodies of research that focus on exploiting Linked Data towards the promotion of cultural heritage visits and the creation of value-added museum experiences.

Kovalenko et al. [

47] have designed an application to serve personalised museum tours. This is achieved by displaying information about exhibits which is enriched through live queries to external Linked Data sets (e.g., DBpedia). Queries aim to extract complementary relevant data regarding the artefact. A recommendation system is also included based on visitors’ profiles.

Wang et al. [

48] propose a similar platform based on RDF models that recommends exhibits and provides an interactive mobile museum guide comparable to the previous item of research mentioned. Additionally, this platform offers a

Tour Wizard that generates online museum tours where visitors can semantically search for artworks or related concepts to add them to the tours.

Unlike both aforementioned bodies of research, which exploit Linked Data to conceive an application that provides custom museum tours, Kiourt et al. [

49] aim to blend cultural heritage and education through a virtual museum framework. It is based on semantic web and game engine technologies where users can tailor virtual 3D exhibitions. However, this framework is not mobile-phone based, therefore hindering the possibility to use it throughout a real museum visit. The platform proposed in this paper also combines gamification with Linked Data, but it has been designed to be used during visits as the game is conceived for smartphones.

2.3. Gamification and Smart Objects in Museums

Digital games, whether on computer-, console- or mobile-based platforms, have become an integral part of culture [

50]. Their appeal and popularity are primarily attributed to the interactive [

51] and competitive [

52] qualities of games. Therefore, it comes as no surprise that there is a growing interest in extrapolating these engaging gaming features to serve purposes other than pure leisure. In this sense, a new term known as

gamification has been coined. Deterding et al. [

19] define gamification as “

an informal umbrella term for the use of video game elements in non-gaming systems to improve user experience (UX) and user engagement”.

Amongst the techniques employed by museums to provide more appealing and engaging ways of displaying cultural heritage, we endeavour to delve into the integration of smart objects and gamification methods in museum environments.

There are many museums that employ gamification strategies to entertain visitors during visits. Such is the case, for instance, of the National Museum of Scotland [

53], RijksMuseum (Amsterdam) [

54] or The British Museum [

55]. The first is based on capture the flag games where two groups compete against each other by scanning and capturing locations inside the museum to win. The second is a family-oriented quest app challenging visitors to solve a number of puzzles about some of the exhibits displayed. The third app enables visitors to play a scavenger hunt game, searching for and scanning the required artefacts to learn fun facts about them through questions.

The last two aforementioned approaches are, like the platform proposed in this paper, question-based. However, their apps draw visitors’ attention to only a narrow selection of exhibits, thus limiting visitors’ museum experience. Given that the questions displayed have to be manually crafted and updated, it is easy to appreciate the vast amount of time and resources required to scale their game to include all of the museums’ holdings. In contrast to this limitation, the proposed app allows visitors to have a personalised museum experience by scanning and playing questions on any exhibit of their choice. As a result, any exhibit can be seamlessly granted smart capabilities, highlighting the versatile creation of smart objects from museum holdings.

Regarding smart objects, there are many projects proposing different ways in which they can be integrated into the museum environment. The work published by Biondi et al. [

56] relies on Bluetooth beacon devices to automatically display on visitors’ smartphones information about exhibits. Chianese et al. [

57,

58] carried out a project to enhance sculpture-based exhibitions. By means of Bluetooth sensors, nearby sculptures are detected and displayed in an app. Instead of presenting textual information, the sculpture shown on screen begins to talk about itself as well as recommend other sculptures. This approach provides an alternative to traditional audio guides.

Petrelli et al. [

59] introduced a platform referred to as

the meSch project. Within this project, different prototypes have been implemented in several museums. One of these prototypes is also Bluetooth sensor dependent. These sensors are integrated as brooches that distinguish visitors according to their age and language. Visitors are also provided with NFC-augmented historical postcards that can be scanned using NFC readers equipped with small screens. The combination of the brooch and the NFC reader determines which content will be displayed on the screen. Another application of their technology [

12] is a magnifying glass that reveals different layers of digital content when visitors point it over exhibits that have been augmented. The meSch project was also extended to create smart replicas [

15] in the “Atlantic Wall” exhibition of the Museon in The Hague, Netherlands. These replicas are 3D-printed models of the original objects. Visitors are offered a simple interaction procedure where they can place replicas on display cases and these play multimedia related to the given exhibit.

3. Automatic Question Generation Methodology

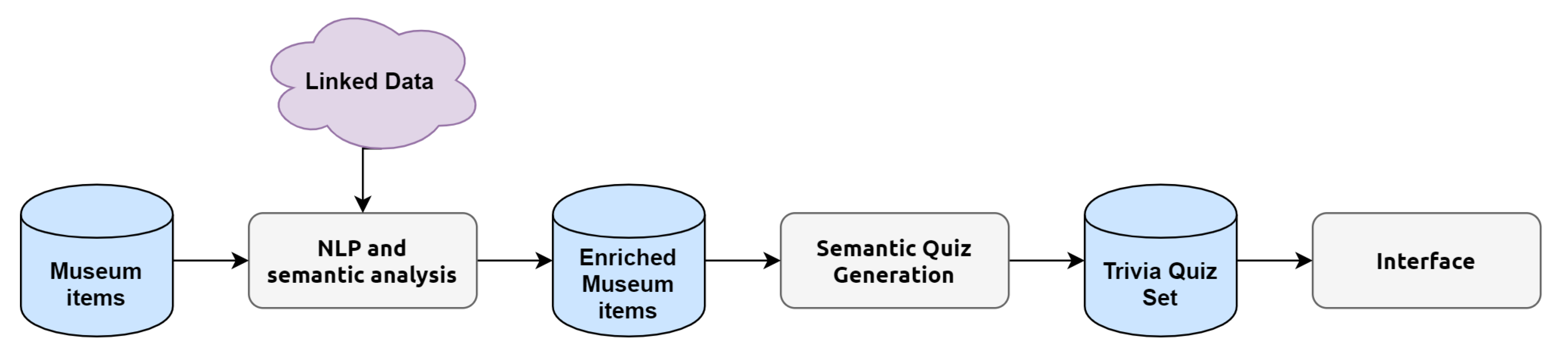

The proposed platform aims to provide educational and custom museum experiences by means of smart objects combined with gamification techniques. A crucial aspect of this platform is the automatic generation of questions. Therefore, in order to produce these questions, a methodology has been followed, which is portrayed in

Figure 1. Thus, this section will elucidate the concepts behind the creation of such quiz sets. With respect to smart objects, their implementation is more suitably described within the interface and user interaction schemes, which are addressed in

Section 4.

Regarding the procedure outlined, there are two principal noteworthy stages:

NLP and semantic analysis together with

semantic quiz generation. The former process is described in

Section 3.1; the latter in

Section 3.2. The ideas conveyed will be illustrated with examples from popular artworks belonging to the renowned Prado Museum (Prado Museum website:

https://www.museodelprado.es/) in Spain. Given its popularity and well-documented holdings, we considered it to be an appropriate starting point to assess the viability of the project and methodology in mind.

3.1. NLP and Semantic Analysis

Museum exhibits are often complemented with captions which briefly describe them. These short descriptions are typically excerpts from more extensive documentation that can be found in museums’ online collections. The intention is, therefore, to extract relevant information about these exhibits through the mining and analysis of these more elaborate descriptions using NLP techniques and by relying on Linked Datasets to obtain additional relationships about such works.

The

Entity Linking process (the semantic annotation process) employs tools that perform Named Entity Recognition (NER) and Named Entity Disambiguation (NED) tasks to locate, classify and distinguish between named entities in the text provided. The disambiguation stage yields matching entries in the Linked Datasets provided. As mentioned in the introduction, DBpedia is the Linked Data knowledge base of choice for our purposes due to its vast amount of entries. To carry out the entity recognition and disambiguation procedures, Babelfy [

60] API (Babelfy API official site:

http://babelfy.org/) has been selected due to its multilingual capabilities (the text to be processed is written in Spanish) and seamless integration with Wikipedia and DBpedia.

Once results are returned in the form of URLs to the matching DBpedia entries, these have to be classified according to the type of entity (category) they represent. Different ontology terms are used to describe diverse properties of entities according to their type. Thus, this categorisation procedure is essential, because only in this way can one appropriately query for details of given resources on DBpedia using the right ontology terms. Finally, the classified Linked Entities and the documentation about the corresponding museum item are stored.

For instance, complementary information on Velázquez’s

Las Meninas is to be retrieved from Prado Museum’s online collection. An extract from the result of processing this text (Prado Museum’s online documentation about

Las Meninas:

https://www.museodelprado.es/en/the-collection/art-works?searchObras=las%20meninas) with Babelfy API is provided:

“[...]Reflected in the mirror are the faces of Philip IV and Mariana of Austria[...]”.

Three concepts in this extract have been linked to their corresponding DBpedia entities: “mirror”, Philip IV and Mariana of Austria. The term “mirror” has been identified, hence it can be classified as an object using the schemes described above. A potential question to ask could be, for example, what other paintings portray mirrors in them. Additionally, the entities linking to King Philip IV of Spain and Queen Mariana of Austria have been recognised. These can be classified as persons, specifically royalty. DBpedia describes people with specific ontology vocabularies, which will be different from the terms used to describe objects (e.g., mirrors). Therefore, possible questions that spring to mind could potentially consist in asking about their place and date of birth/death, which can be known by querying specific properties that characterise the set representing people, i.e., .

However, the suggestions of proposed questions are merely illustrative thus far; they have been introduced to inspire the reader to think about possible questions that can be devised using this scheme.

Section 3.2 further elaborates on the quiz generation strategy.

In addition to the aforementioned semantic annotation of text descriptions, another form of obtaining supplementary relevant data related to a given museum item consists in directly resorting to DBpedia. Since the exhibit’s documentation presumably tends to cover aspects such as the authorship and type of work, it is important to shift our efforts towards searching for information that is not included in the item’s description. Of particular interest are the

topics under which DBpedia classifies entities. Referring back to the

Las Meninas example, the result of the

entity search (

Las Meninas’ structured data version published in DBpedia:

http://dbpedia.org/page/Las_Meninas) shows that one of the subjects under which Velázquez’s painting has been classified is

Portraits of Monarchs. Therefore, querying about such a topic will yield other works portraying royalty and hence one could prepare questions based on these results. However, the matter of relevance at this stage is the list of subjects presented. These are retrieved from DBpedia and complement the item’s documentation. Together with the already included Linked Entities, they form the enriched version of museum items.
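The subject lookup described above can be sketched as a small SPARQL query builder. The example below assumes that DBpedia exposes an entity's subjects through the `dct:subject` property and that the public endpoint at `https://dbpedia.org/sparql` accepts `query` and `format` parameters; the function names are hypothetical, and the sketch only composes the request without sending it. Note that the query uses the `/resource/` identifier of the entity rather than the human-readable `/page/` address.

```python
from urllib.parse import urlencode

# Assumed public endpoint; swap in a local mirror if one is available.
DBPEDIA_ENDPOINT = "https://dbpedia.org/sparql"


def subjects_query(resource_uri: str) -> str:
    """Build a SPARQL query listing the subjects (categories) of a resource."""
    return (
        "PREFIX dct: <http://purl.org/dc/terms/>\n"
        "SELECT ?subject WHERE {\n"
        f"  <{resource_uri}> dct:subject ?subject .\n"
        "}"
    )


def request_url(query: str) -> str:
    """Compose the GET URL that would run the query and ask for JSON results."""
    params = {"query": query, "format": "application/sparql-results+json"}
    return DBPEDIA_ENDPOINT + "?" + urlencode(params)


query = subjects_query("http://dbpedia.org/resource/Las_Meninas")
url = request_url(query)
```

Fetching `url` with any HTTP client would then return the subject list used to enrich the museum item, provided the endpoint behaves as assumed here.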

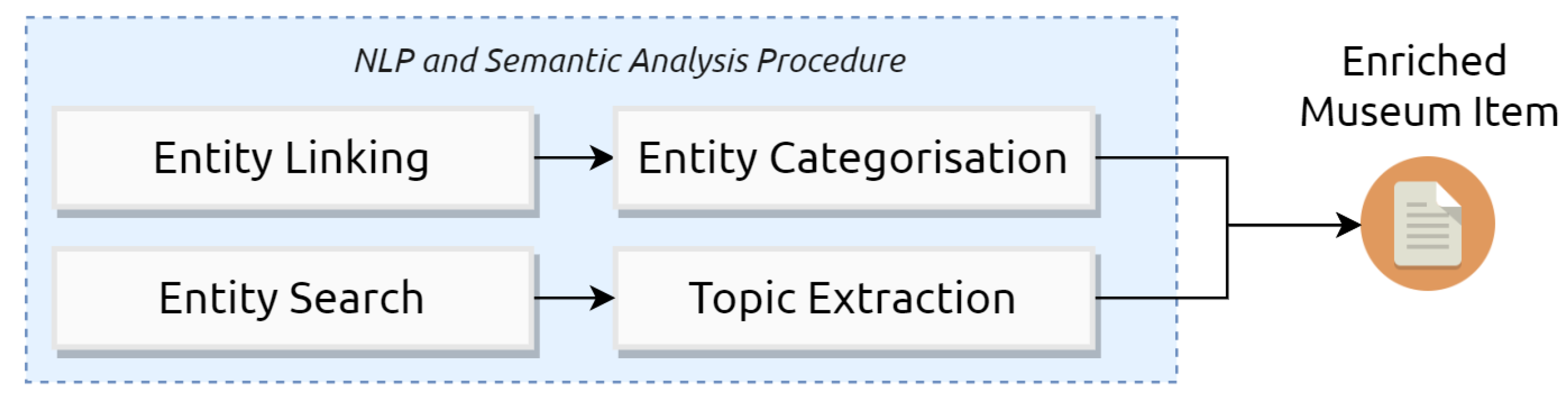

Figure 2 is presented as a means to encapsulate the two main approaches described to extract additional information about museum items.

3.2. Semantic Quiz Generation

Before devising questions, some considerations must be taken into account. It must be borne in mind that the quiz game will be displayed on users’ smartphones, which places the emphasis on producing a mobile-friendly interface. A multiple-choice trivia is therefore an appropriate form of presenting questions on smartphones. Multiple choice questions take less time to answer [

61], thus allowing users to easily and promptly tap the selected answer in their smartphones instead of having to type it in.

The structure of a Multiple Choice Question (MCQ) consists in the following three items [

62]: the

stem: the question to formulate; the

key: the correct answer; and the

distractors: a set of wrong, yet plausible alternatives. The objective of the

semantic quiz generation stage is to produce sets of MCQs according to the described format and in an automated manner.

In order to achieve such automation, MCQs will be generated through question templates. Question templates contain three main elements: the question to formulate; the category, if any, into which the question has been classified; and a parametrised SPARQL query. These, together with the enriched museum data, are fed into the automated question generator. This generator consists of a script that evaluates the annotated data obtained about a museum item against the templates that comply with the specified criteria, i.e., the question category or subject matches the topic of at least one of the pieces of annotated data. Then, the SPARQL query is executed in order to retrieve: (i) the correct answer to the question (the key) and (ii) a list of randomly selected distractors from a set of elements similar to the answer.
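The template matching step can be sketched as follows. This is a simplified illustration rather than the generator used in the platform: it assumes that templates and annotated entities are plain dictionaries with the fields shown, that a template applies when its `category` equals the entity `type`, and that the entity name can be derived from the last segment of its DBpedia URL. The SPARQL execution itself is left out.

```python
def entity_name(uri: str) -> str:
    """Derive a readable name from a DBpedia URL (assumption for this sketch)."""
    return uri.rsplit("/", 1)[-1].replace("_", " ")


def generate_stems(item: dict, templates: list[dict]) -> list[dict]:
    """Pair every annotated entity with the templates of its category."""
    questions = []
    for entity in item["annotated_entities"]:
        for template in templates:
            if template["category"] != entity["type"]:
                continue  # template does not apply to this kind of entity
            stem = template["stem"].replace("Entity.name", entity_name(entity["uri"]))
            questions.append({"question": stem, "query": template["query"]})
    return questions


# Illustrative inputs; the query text is a placeholder, not a real template.
templates = [{
    "stem": "Who is the successor of Entity.name?",
    "category": "Royalty",
    "query": "SELECT ?uri ?name WHERE { ?uri a dbo:Royalty . }",
}]
item = {
    "name": "Las Meninas",
    "annotated_entities": [
        {"uri": "http://dbpedia.org/page/Philip_IV_of_Spain", "type": "Royalty"},
        {"uri": "http://dbpedia.org/page/Mirror", "type": "Object"},
    ],
}
questions = generate_stems(item, templates)
```

Only the royalty entity produces a question here, because no template has been registered for the hypothetical `Object` category.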

With the intention of implementing gamification rules for the Trivia Quiz Game, distractors will be sorted into different sublists according to their difficulty, thus adapting the game to different expertise levels. The suggested approach benefits from the research produced by Zhu and Iglesias [

63], which exploits entity similarity scores computed by the Sematch framework (Sematch is an entity similarity score calculator; a working demo is found at:

http://sematch.cluster.gsi.dit.upm.es/) in order to classify distractors according to their difficulty. Such scores range from 0 (no apparent similarity between the two named entities) to 1 (strong similarity between them). A total of three sublists of distractors will be generated, corresponding to easy, medium and hard difficulties.
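A minimal sketch of this classification is given below. The cut-off values of 0.5 and 0.8 are assumptions made for illustration, since the thresholds are not stated in the text; the similarity scores are taken as given inputs, as if already computed by a tool such as Sematch.

```python
def bucket_distractors(scored: list[tuple[str, float]],
                       medium_from: float = 0.5,
                       hard_from: float = 0.8) -> dict[str, list[dict]]:
    """Sort (label, similarity) pairs into easy, medium and hard sublists.

    The higher the similarity to the correct answer, the harder the
    distractor. Both thresholds are hypothetical defaults.
    """
    buckets: dict[str, list[dict]] = {"easy": [], "medium": [], "hard": []}
    for label, score in scored:
        if score >= hard_from:
            level = "hard"
        elif score >= medium_from:
            level = "medium"
        else:
            level = "easy"
        buckets[level].append({"label": label, "score": score})
    return buckets


buckets = bucket_distractors([
    ("John of Bohemia", 0.293),
    ("George V", 0.614),
    ("Philip V", 1.0),
])
```

Under these assumed thresholds, the three sample candidates fall into the easy, medium and hard sublists respectively.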

The output of this stage is hence the enriched museum item together with an array of questions, their answers and the three aforementioned distractor lists. The assignment of the three distractors needed to complete the MCQ is performed at a later stage, where players’ expertise level can be checked. Further details are provided in

Section 4.

Referring back to

Las Meninas example presented in

Section 3.1, given the enriched data version produced on this exhibit, it can be, for instance, evaluated against a question template resembling the structure presented in

Listing 1. The question generator executes the query on DBpedia’s SPARQL endpoint in order to obtain a set of distractors. A possible output of this evaluation is illustrated through the extract provided in

Listing 2, portraying the enriched museum item data and the questions formed. The assorted lists of distractors contain their name and their associated similarity score with respect to the entity

Philip IV of Spain.

Listing 1. Example of a simple question template.

{ "stem": "Who is the successor of Entity.name?",

"category": "Royalty",

"query": "SELECT ?uri ?name WHERE{

?uri a dbo:Royalty.

?uri rdfs:label ?name.

FILTER (lang(?name) = 'en') }"

}

Listing 2. Example extract of an output produced by the automated question generator. For simplicity, some properties have been omitted.

{ "name": "Las Meninas",

"description": "This is one of Velázquez’s largest paintings ...",

"annotated_entities":[ {"uri":"http://dbpedia.org/page/Philip_IV_of_Spain",

"type":"Royalty"}],

"game":[{"question":"Who is the successor of Philip IV of Spain?",

"answer":"Charles II",

"easy_distractors":[ {"label":"John of Bohemia" , "score":0.293},

{"label":"Christina, Queen of Sweden" , "score":0}],

"medium_distractors": [ {"label":"George V" , "score":0.614},

{"label":"Alfonso XIII" , "score":0.653}],

"hard_distractors": [ {"label":"Philip V" , "score":1},

{"label":"Amadeus I" , "score":0.900}] }]

}
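Given an entry of the `game` array such as the one in Listing 2, the later assembly of a complete MCQ could look like the sketch below. It assumes that the player's level maps directly onto one of the three distractor lists and that the list holds at least three candidates; the function name, the returned fields and the third hard distractor in the sample data are hypothetical.

```python
import random
from typing import Optional


def build_mcq(question: dict, level: str,
              rng: Optional[random.Random] = None,
              n_distractors: int = 3) -> dict:
    """Pick distractors for the given level and shuffle them with the key."""
    rng = rng or random.Random()
    pool = question[f"{level}_distractors"]
    if len(pool) < n_distractors:
        raise ValueError(f"not enough {level} distractors for this question")
    chosen = rng.sample(pool, n_distractors)
    options = [question["answer"]] + [d["label"] for d in chosen]
    rng.shuffle(options)  # avoid always showing the key in the same position
    return {"stem": question["question"], "options": options, "key": question["answer"]}


# Sample data: the third hard distractor and its score are invented for illustration.
sample = {
    "question": "Who is the successor of Philip IV of Spain?",
    "answer": "Charles II",
    "hard_distractors": [
        {"label": "Philip V", "score": 1},
        {"label": "Amadeus I", "score": 0.900},
        {"label": "Ferdinand VII", "score": 0.850},
    ],
}
mcq = build_mcq(sample, "hard", rng=random.Random(0))
```

Passing a seeded `random.Random` makes the option order reproducible, which is convenient when testing; in production the default unseeded generator would be used.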

4. System Architecture

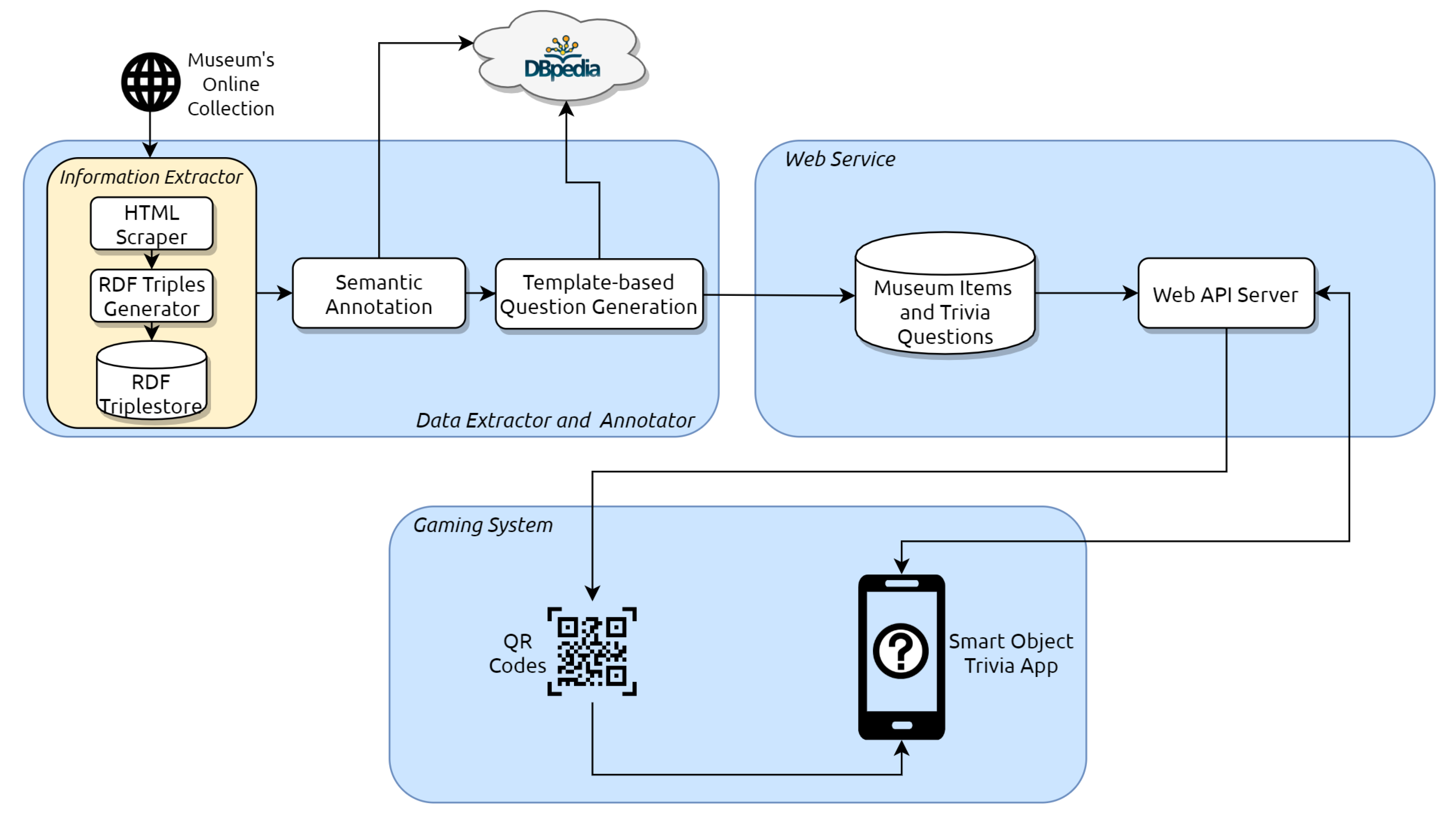

Taking into consideration the question generation methodology outlined in

Section 3, the resulting architecture consists of the following three main components as depicted in

Figure 3: a

data extractor and annotator; a

web service and the

gaming system.

The

data extractor and annotator performs two major tasks. First, this module aims to retrieve museum objects’ documentation from their website using web scraping technologies. Second, the extracted data undergoes a semantic annotation procedure and finally, these data are fed into a template-based question generator. This yields batches of questions associated with every museum item. The latter procedure has been carried out according to the question generation methodology portrayed in

Section 3.1.

The

web service component stores the information on museum items and their respective generated questions. These are stored as document collections in MongoDB (MongoDB official site:

https://www.mongodb.com/), the database of choice because it stores documents (NoSQL) in JSON format. Additionally, another document is stored, which consists of user data and game statistics. The implementation of the web server has been carried out considering REST API paradigms, and it grants the gaming system access to the required resources (object data, questions and user statistics). The server has been developed using Python Flask (Flask official site:

https://www.palletsprojects.com/p/flask/) web application framework, which is fast and straightforward to set up, whilst providing an excellent performance for our purposes (GET requests only return a JSON-formatted text response). In order to be able to perform the majority of API calls to the service, a valid user key must be used within each request. Such key is granted to a user when they register on our application. For the purpose of improving security, this key is updated each time the user logs in. A demo version of the web service has been uploaded to Heroku (Site for demo web service of the proposed system:

https://museowebapi.herokuapp.com/). This version does not require keys to perform API calls and is at the reader’s disposal for illustrative purposes (no user data is collected; only items’ documentation and questions).
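The key handling described above (a key granted on registration and renewed at every login) can be illustrated with a short in-memory sketch. It is not the platform's Flask code: the `KeyStore` class and its methods are hypothetical, password verification is omitted, and a real service would persist keys in the database rather than in a dictionary.

```python
import secrets


class KeyStore:
    """Hypothetical in-memory store mapping usernames to their current API key."""

    def __init__(self) -> None:
        self._keys: dict[str, str] = {}

    def login(self, username: str) -> str:
        """Issue a fresh key, invalidating any key issued at a previous login."""
        key = secrets.token_hex(16)
        self._keys[username] = key
        return key

    def is_authorised(self, username: str, key: str) -> bool:
        """Check a request's key against the user's current key."""
        expected = self._keys.get(username)
        # compare_digest performs a constant-time comparison of the two strings.
        return expected is not None and secrets.compare_digest(expected, key)


store = KeyStore()
first_key = store.login("visitor")
second_key = store.login("visitor")  # logging in again rotates the key
```

After the second login, requests carrying `first_key` are rejected, which limits the lifetime of a leaked key to a single session.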

Regarding the

gaming system, it comprises two main artefacts: QR codes and a smartphone application. These codes contain a URL (a working example is shown at:

https://museowebapi.herokuapp.com/objects/502504) identifying the museum item of interest. Accessing these URLs yields a JSON response containing information about the object. These data are formatted and presented in the application, so that it provides advanced visualization of the exhibits as well as access to the Trivia quiz game. Each object will allow users to play three questions. It must also be noted that images associated with the objects are not stored in our designed database. The main reason is to reduce the load and optimise the speed of the transactions. Instead, we have decided to host them on Cloudinary (Cloudinary Site:

https://cloudinary.com/) servers.

The application has been developed with JavaScript using the React Native framework (React Native Official Website:

http://www.reactnative.com/), granting cross-compatibility with Android and iOS. The gaming system, therefore, allows the interaction of visitors with the gamified museum environment. The objective of this system is to create an enjoyable museum ecosystem, where QR codes and the designed smartphone application play a critical role.

For the purpose of enhancing the game with motivational affordances, some gamification rules have been devised:

A score system awards between 0 and 10 points, both inclusive. Players obtain points by selecting the correct answer within the time limit; the faster a correct answer is given, the more points are awarded. A wrong response does not affect the score.

Earning points allows registered users to ascend in a ranking system and compare their scores with those of other players by means of a leaderboard.

As a player makes further progress in the game, the questions shown become harder, in order to match the player’s expertise level.

Tokens can be used throughout quizzes as a means of additional help. They cross out some of the incorrect answers. These tokens can only be obtained by levelling up and ascending ranks.

Once the three questions about a given object have been played, visitors no longer have access to quizzes for that object. This is done to encourage visitors to interact with as many museum items as possible, because only by scanning a new object are they able to play quizzes and consequently earn points to climb up the leaderboard.
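The scoring and three-questions-per-object rules above could be sketched as follows. The text only states that scores lie between 0 and 10 and that faster correct answers earn more, so the linear decay used here is an assumption, as are the names `score_answer` and `ObjectQuizTracker`.

```python
MAX_POINTS = 10
QUESTIONS_PER_OBJECT = 3


def score_answer(correct, elapsed_s, time_limit_s):
    """Points for one answer: 0 if wrong or late, more for faster answers.

    Assumption: points decay linearly with the time taken.
    """
    if not correct or elapsed_s > time_limit_s:
        return 0
    remaining_fraction = (time_limit_s - elapsed_s) / time_limit_s
    return round(MAX_POINTS * remaining_fraction)


class ObjectQuizTracker:
    """Tracks how many questions a visitor has played per object."""

    def __init__(self):
        self._played = {}  # object_id -> questions already played

    def can_play(self, object_id):
        return self._played.get(object_id, 0) < QUESTIONS_PER_OBJECT

    def record_question(self, object_id):
        if not self.can_play(object_id):
            raise RuntimeError(f"no questions left for object {object_id}")
        self._played[object_id] = self._played.get(object_id, 0) + 1
```

Because a wrong or late answer simply adds zero, the running total never decreases, which matches the rule that a wrong response does not affect the score.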

Additionally, players enjoy other engaging features when using the app:

Answer statistics: users can check the percentage of questions they have answered (in)correctly. This information is displayed as a pie chart.

Visited objects: after the three questions about an object have been played, the object is added to the collection of museum items visited by the user. Hence, visited objects remain available to the visitor without having to scan their QR codes again (although their questions are no longer available, as previously explained).

5. A Case Study

The proposed platform has been implemented in the Telecommunications Museum Professor Joaquín Serna, located at the Escuela Técnica Superior de Ingenieros de Telecomunicación, Universidad Politécnica de Madrid, Spain. The collection comprises 873 telecommunication devices. The platform has been adapted to present information and questions in Spanish. The smartphone app that enables visitors to interact with the designed smart object environment is available on the Google Play Store (Smart Objects Android App:

https://play.google.com/store/apps/details?id=com.gsi.objetosinteligentesmuseoetsit).

When performing the entity extraction and annotation procedures outlined in Section 3, we realised that, although DBpedia is a large structured dataset, many entities and domains related to telecommunications lack documentation, not only in Spanish but also in English. This hinders the ability to produce more specific questions about a given object. For example, the DBpedia entry about voltmeters (DBpedia entry about voltmeters: http://dbpedia.org/page/Voltmeter) does not describe the different types of voltmeters available or the physical quantity that is being measured. Our solution was therefore to classify items into categories in order to generate additional questions related to the theme the object belongs to. As a result, exhibits were classified into four main categories:

Sound and Image;

Telephony;

Radio and Telegraphy; and

Instrumentation.

Table 1 shows the result of this classification. It must be noted that some items belong to more than one theme. A total of 853 relevant pieces of data could be extracted from items’ descriptions and direct DBpedia queries to fill the question templates.

The number of adequate question templates (and thus the number of questions generated) depends on the availability of information regarding a particular subject in DBpedia. Therefore, from this procedure, it is possible to evaluate the amount and quality of data relating to these four telecommunication categories in DBpedia. For instance, more templates on instrumentation could have been prepared if the information about measuring instruments were better annotated and structured. Given this limitation, the resulting number of templates and questions generated per category is shown in Table 2. A total of 1013 questions have been produced.

As illustrated throughout the paper, the complementary data used to produce questions rely strongly on the quantity and quality of the data stored in DBpedia about the topics of the exhibits of interest. It is important to note that in the cultural heritage field, the information being dealt with can be peculiar and rather specific depending on the reference domain of the exhibit. This means that unless DBpedia (or similarly, Wikipedia) data related to the topics searched have been reviewed by experts, it is not possible to guarantee the correctness of the information retrieved for the questions produced. In contrast, the information about exhibits that is displayed in the app has been written and reviewed by telecommunications experts. If this platform were to be implemented in a museum of bigger impact, the gamification system could exploit the Linked Data endpoint of that museum’s holdings, which would have been validated by experts (as in the case of the Prado Museum mentioned in Section 2.1). Alternatively, cultural heritage experts might also take an interest in reviewing information stored in DBpedia. Another solution to this problem would be to resort to another source of Linked Data, instead of DBpedia, and extract semantic items from datasets that have already been reviewed by experts, such as those in Digital Libraries. For example, we could exploit the semantic information provided by the World Digital Library (World Digital Library programmatic API: http://api.wdl.org/) or Europeana (Europeana Digital Library SPARQL endpoint: http://sparql.europeana.eu/). However, the advantage of using DBpedia is that there are already several tools available that perform entity linking procedures against DBpedia’s dataset. If semantic data from Digital Libraries were used, we would have to program and train a model to perform entity linking on the dataset of choice, which can be more convenient but would add complexity to the platform.

Moreover, QR codes have been printed on hard cardboards. Each cardboard holds 8 QR codes, each of them complemented with an image of the exhibit and its name. The choice of format aims to encourage visitors interested in learning more about any museum object to take any of these cardboards and walk around the museum looking for the objects shown on it. They may scan objects whenever they desire and, once they do so, the digital version of the given exhibit is displayed in the app. Not only can visitors play questions about scanned items, but they are also provided with additional documentation about them. Hence, the visit shifts towards a kind of treasure-hunt experience. Figure 4 portrays the final aspect of these hard cardboards.

The smartphone application has been styled and adapted for the Joaquín Serna Telecommunications Museum. An example of the app interface is provided in Figure 5. Specifically, Figure 5a,b show the representation of an exhibit’s documentation in the app. Figure 5c depicts the user interface of a multiple-choice question, where gamification elements can be seen, such as time left and remaining tokens. Finally, Figure 5d illustrates the use of tokens that act as lifelines to aid visitors with difficult questions. These helpers work by deleting two distractors, thus leaving the key and only one distractor to choose the answer from.
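The token mechanism just described can be illustrated with a short sketch. It assumes that answer options are unique strings and that the two distractors to delete are chosen at random; the text does not specify the selection rule, and the function name `apply_token` is hypothetical.

```python
import random


def apply_token(options, key, rng=random):
    """Return the options left after a token deletes two distractors.

    The key (correct answer) is always kept and the original order of the
    surviving options is preserved.
    """
    distractors = [o for o in options if o != key]
    if key not in options or len(distractors) < 2:
        raise ValueError("need the key and at least two distractors")
    # Assumption: the two deleted distractors are picked uniformly at random.
    removed = set(rng.sample(distractors, 2))
    return [o for o in options if o not in removed]
```

With four options per question, applying a token leaves exactly the key and one distractor, as in the interface shown in Figure 5d.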

6. Evaluation

6.1. Research Model and Hypotheses

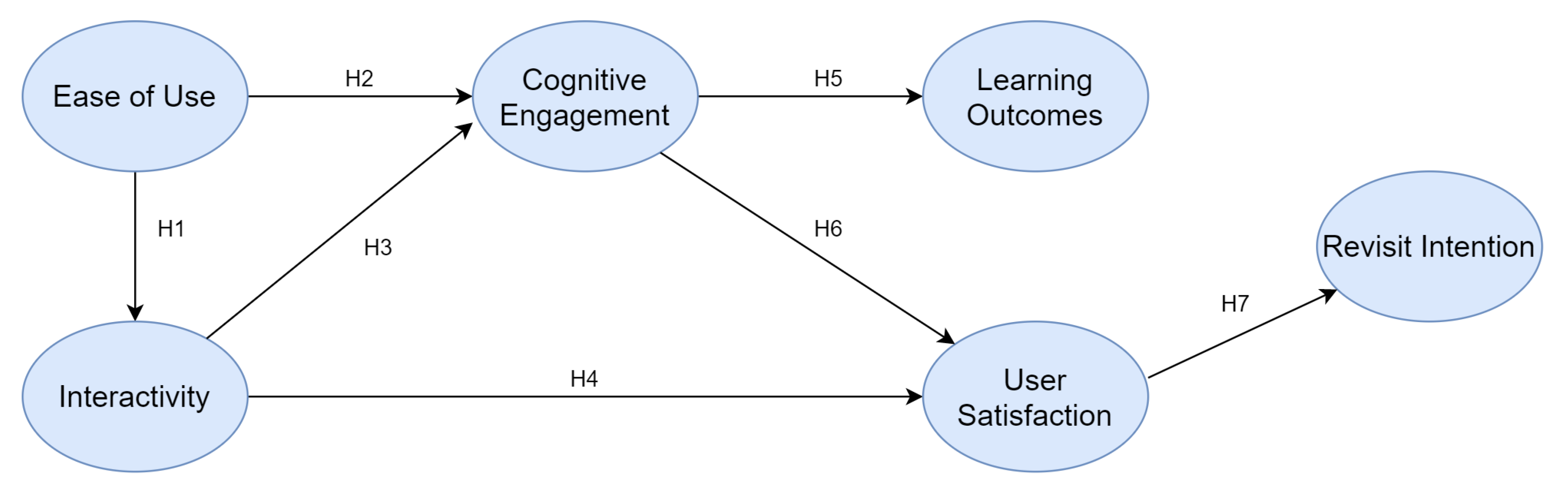

The proposed research model consists of six factors or variables that have been measured and validated by other works about cultural heritage institutions. These are: ease of use, interactivity, cognitive engagement, learning outcomes, user satisfaction and revisit intentions.

The research model proposed to assess the designed platform is an adaptation and extension of the model elaborated by Pallud [64]. Their model is based on a comprehensive literature review that addresses the effects of technological dimensions on the process of learning in museums. It also takes into consideration how the use of technology in museums affects psychological factors such as curiosity, enjoyment or focus and how these in turn influence learning outcomes. Thus, our first four (latent) variables have been adapted from their research.

However, the aforementioned work does not analyse the impact of technology on visitor satisfaction and behavioural intentions. It is important to consider these two variables when assessing the usefulness of the proposed platform because, according to Harrison and Shaw [65], one of the main goals of museums is to achieve visitor satisfaction, given that visitors are essential to their success. Therefore, the gamification platform should also be evaluated with respect to its contribution towards a satisfactory museum experience.

Regarding visitor satisfaction, the study conducted by Reino et al. [66] reveals that interactive qualities of multimedia representations of content contribute positively toward user satisfaction during a museum visit. Also, the work produced by Fino [67] demonstrates that the cognitive dimension is a significant predictor of visitor satisfaction in museums. This reveals that the way information is provided and what visitors can see affect the creation of satisfying museum experiences. In turn, if a consumer is satisfied with the product or service offered by a company, they tend to recommend it to others and often buy the product or service again [68]. Particularly in the museum environment, bodies of research such as Kang et al. [69] and McLean [70] show a strong correlation between satisfactory museum experiences and the intention of visitors to return.

Bearing in mind the aforementioned research, the resulting measurement model is depicted in

Figure 6.

Therefore, based on the review of the aforementioned academic literature, seven hypotheses are raised to assess the effectiveness of the proposed system.

H1, H2, H3 and H4 have been formulated on the basis of the results reported by Pallud [64]. H5, H6 and H7 have been hypothesised as an extension of the model that considers visitor satisfaction and revisit intentions.

H1: The proposed system’s ease of use positively influences interactivity.

H2: The ease of use of the proposed platform increases cognitive engagement.

H3: The interactive capabilities of the system have a positive effect on cognitive engagement.

H4: Cognitive engagement through the use of the gamification system has a positive effect on learning outcomes.

H5: The interactivity of the proposed platform positively influences museum visitors’ satisfaction.

H6: Visitors’ cognitive engagement through the use of the proposed platform positively influences visitors’ satisfaction.

H7: Intentions to revisit the museum are positively affected by visitors’ satisfaction with the platform.

6.2. Participants

A total of 40 participants, male and female, were involved in the experiment. All of them are students of the university where the telecommunications museum is located, so the age group of participants is 18–25 years. They all have the technical background required to understand the telecommunications concepts tested by the quiz game. This implementation of the proposed platform is fundamentally oriented to visitors with knowledge of telecommunications, hence the choice of participants.

6.3. Materials

The proposed smart object gamification platform requires few materials to function as expected. These consist of:

Hard cardboards containing QR codes (A4 format).

A smartphone per participant that runs a recent Android version.

The smart object platform’s .apk installed on participants’ smartphones.

A server that hosts the web service. It is equipped with sufficient memory and computing power to handle the expected load generated by participants. The application performs API calls to the designed web service in order to obtain necessary data for the game.

6.4. Procedure

To perform the experiment, participants are gathered in one of the museum’s main rooms where hard cardboards are available. Each participant is given several hard cardboards to choose from. They select one and start walking around the museum, looking at the exhibits whilst carrying these cardboards.

Participants can scan the QR codes on the hard cardboards whenever they desire, although the recommended approach is to scan a QR code after finding the corresponding physical item in the museum. They can view additional information about the exhibit and play quizzes about it. Given that the museum is rather small, participants have a time limit of 30 minutes to visit the room, which is sufficient to allow them to interact and experiment with the gamification platform.

After this time, the experiment finishes and participants are given a questionnaire with questions based on a 5-point Likert scale (“strongly disagree” to “strongly agree”) [71]. The objective of these questions is to record participants’ opinions about the platform. The indicators used are adaptations of measures that have been validated in other bodies of research and that have been readjusted for the purpose of this work. To measure the system’s interactive attributes, as well as its ease of use, learning outcomes, and visitors’ cognitive engagement (curiosity, interest, focus), we adapted scales used by Pallud [64]. To measure user satisfaction, we adapted a scale proposed by Mimoun and Poncin [72]. With respect to the revisit intention measure, scales were adapted from Pallud and Straub [73].

Table 3 provides a summary of the constructs, i.e., latent variables, and the items they consist of, which correspond to the questions posed to participants. It must be noted that only valid items (see Section 6.5) are shown.

6.5. Results and Discussion

In order to assess the data collected through the questionnaires, we made use of partial least squares structural equation modelling (PLS-SEM) [74]. This analysis technique is most appropriate in situations where sample sizes are small and the data to process are not normally distributed [75]. In the proposed experimentation, 40 participants performed the evaluation and Likert-scale responses are intrinsically non-normal. Therefore, these conditions justify the choice of technique employed to test for causality. It should be borne in mind, however, that the recommended minimum sample size ought to be at least 10 times the maximum number of indicators in a construct [76]. In the proposed model, there are at most 3 items pointing at a latent variable, which implies a minimum of 30 samples. Hence, our sample size complies with this criterion.

To evaluate the research model using PLS-SEM methods, there are two stages of assessment: (i) the measurement model and (ii) the structural model [77]. Data analysis was carried out using the SmartPLS 3.0 tool [78].

The measurement model focuses on analysing the relationship between the constructs and their indicators [79]. The aim of exploring this model is to assess its validity. This is carried out by testing that the criteria imposed by certain properties of our latent variables and items are satisfied. Specifically, we evaluate individual item reliability, internal consistency reliability, convergent validity, and discriminant validity [80].

Item reliability is verified through the analysis of indicators’ loadings [80]. Research recommends a minimum value of 0.708 [81]. Out of the 15 initial indicators, EOU3 (0.435) and CE1 (0.576) had to be disregarded from the measurement model analysis since they did not attain the required level of reliability. Furthermore, two other loadings that were slightly under the threshold have been maintained, IN1 (0.673) and US3 (0.688). The reason for this is that it can be acceptable to keep factor loadings of a minimum of 0.500 if the other indicators of the same construct present good reliability values [72]. The remaining loadings were between 0.750 and 0.879, thus providing adequate reliability.
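The screening of loadings described above can be expressed as a simple three-way rule. The two thresholds come from the text; the label names and the function `classify_loading` are assumptions for this sketch, and the "review" outcome stands for the analyst's judgement, which the thresholds alone do not determine.

```python
RECOMMENDED_LOADING = 0.708  # recommended minimum loading
MINIMUM_LOADING = 0.500      # lowest loading that may still be kept


def classify_loading(loading):
    """Classify an indicator loading as 'keep', 'review' or 'drop'."""
    if loading >= RECOMMENDED_LOADING:
        return "keep"
    if loading >= MINIMUM_LOADING:
        # Acceptable only if the construct's other indicators are reliable.
        return "review"
    return "drop"


# Loadings reported in the text for the four indicators under the threshold.
reported = {"EOU3": 0.435, "CE1": 0.576, "IN1": 0.673, "US3": 0.688}
decisions = {name: classify_loading(value) for name, value in reported.items()}
```

Applied to the reported values, EOU3 falls below 0.500, whereas CE1, IN1 and US3 all fall in the intermediate band; of these, the analysis above discarded CE1 and retained IN1 and US3.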

Internal consistency reliability is checked by means of the composite reliability indicator, which requires a threshold value of 0.70 to be considered acceptable [79]. Scores ranged from 0.741 to 0.854, all above the minimum, so construct reliability is deemed satisfactory.

The convergent validity measure is the “extent to which the construct converges to explain the validity of its items” [80]. The indicator used to assess convergent validity is the average variance extracted (AVE) of each latent variable, computed over all of its indicators. Literature suggests that the AVE values must be greater than 0.50 [82] to confirm convergent validity of the results. Our values were between 0.593 and 0.745, higher than the threshold. The aforementioned reliability results together with convergent validity scores are summarised in Table 4.
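For reference, both indicators can be computed from the standardised loadings of a construct with the usual textbook formulas, sketched below. SmartPLS reports these values directly, so the functions are only illustrative, and the loadings in the example are hypothetical rather than taken from Table 4.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardised loadings of a construct."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability from standardised loadings.

    CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances),
    where each error variance is 1 - loading^2.
    """
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total ** 2 / (total ** 2 + error)


# Hypothetical loadings for a three-item construct.
example = [0.80, 0.75, 0.70]
ave = average_variance_extracted(example)
cr = composite_reliability(example)
meets_thresholds = ave > 0.50 and cr >= 0.70
```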

With respect to discriminant validity, it is verified when each indicator shows a strong correlation with the latent variable it is linked to in the model, while having a weak correlation with the rest of the constructs. Fornell and Larcker [83] define a criterion that suggests that discriminant validity between two latent variables has been fulfilled if the AVE for each individual construct is greater than the shared variance (the square of the correlation coefficient) between pairs of constructs. Table 5 shows that discriminant validity is demonstrated, because the square root of each construct’s AVE (bold elements of the diagonal) is greater than any other value in the corresponding row and column.
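The Fornell and Larcker check can be sketched as a comparison between the square root of each AVE and the inter-construct correlations. The function name and the input layout (dictionaries keyed by construct name) are assumptions for this example, and the numbers in the usage lines are hypothetical, not those of Table 5.

```python
import math


def fornell_larcker_violations(ave, correlations):
    """Return the construct pairs that fail the Fornell-Larcker criterion.

    ave:          dict mapping construct name -> AVE
    correlations: dict mapping (construct_a, construct_b) -> correlation
    A pair fails when its absolute correlation is not smaller than the
    square root of the AVE of either construct.
    """
    failures = []
    for (a, b), r in correlations.items():
        if abs(r) >= min(math.sqrt(ave[a]), math.sqrt(ave[b])):
            failures.append((a, b))
    return failures


# Hypothetical values for two constructs.
ave_values = {"IN": 0.60, "CE": 0.65}
passing = fornell_larcker_violations(ave_values, {("IN", "CE"): 0.45})
failing = fornell_larcker_violations(ave_values, {("IN", "CE"): 0.90})
```

An empty result means every pair of constructs satisfies the criterion, which corresponds to every diagonal entry of the table dominating its row and column.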

Once the measurement model is adequate, the structural model can be assessed. It explores the significance of the paths between latent variables [84], which means that this model indicates whether or not the raised hypotheses are supported by the results. We configured the SmartPLS tool to calculate the structural model using the recommended number of 5000 bootstrap samples [74]. The results obtained are reflected in Table 6.

As shown in Table 6, two out of seven hypotheses have been rejected: H1 and H5. Thus, based on these results we can infer that, although the platform appears to be easy for visitors to use, this quality does not contribute towards the interactivity of the gamification system. Also, the interactive qualities of the platform do not necessarily affect visitors’ satisfaction with it and with the museum experience.

The platform’s ease of use positively influences visitors’ cognitive engagement during the museum visit (β = 0.361; p < 0.05), therefore H2 is supported. Similarly, cognitive engagement is positively affected by the interactive qualities of the smart objects system (β = 0.391; p < 0.05), thus supporting H3. In addition, cognitive engagement is positively related to the learning outcomes of visitors (β = 0.485; p < 0.01) and to their satisfaction with the platform and the museum visit (β = 0.570; p < 0.05), supporting H4 and H6. Finally, user satisfaction has a significant impact on visitors’ intentions to return to the museum (β = 0.495; p < 0.01), hence supporting H7.

Based on the results obtained, the smart object gamification platform appears to be an interesting complement to the museum experience, for both visitors and museum owners. Use of the platform is associated with increased levels of cognitive engagement, which in turn affect visitors’ overall satisfaction with the visit. The results also show that satisfied visitors are more likely to return to the museum, which is of great interest to museum owners.

7. Conclusions and Outlook

In this paper, we introduced a smart object gamification platform for museums. The system exploits a combination of semantic web and Internet of Things technologies in order to craft appealing and memorable museum experiences. We have detailed the process by which questions are automatically generated based on a set of templates. Then, we described the interaction of visitors with the museum environment by means of QR codes and a smartphone application that provides advanced visualization and information about the exhibits of interest. The app also offers visitors the opportunity to play the questions that have been generated, thus gamifying their experience. Finally, the prototype of the designed platform was implemented and evaluated in the Joaquín Serna Telecommunications Museum.

The experimentation performed shows that the platform is easy to use as well as interactive. Both factors contribute to higher levels of cognitive engagement. Visitors’ increased cognitive engagement when using the platform enhances learning outcomes and their satisfaction with the visit. This, in turn, contributes towards a higher likelihood of visitors returning to the telecommunications museum. These results encourage the use of the gamification platform in the telecommunications museum. It therefore appears to be a promising approach that could be extrapolated and adapted to other kinds of museums or cultural heritage institutions.

As future work, several lines could be explored. For instance, instead of only providing textual information on the scanned object, the platform could also offer an audio-based description of the exhibit’s documentation. Another line of work is to train a machine learning model to classify keywords contained in objects’ descriptions. This would allow more relevant concepts to be extracted from exhibits’ descriptions. The approach could help overcome problems that arise from data availability issues in Linked Data knowledge graphs, which affect the quantity and quality of the produced questions.