Age-Related Response Bias in the Decoding of Sad Facial Expressions

Abstract

1. Introduction

1.1. Age-of-Actor Effects on Facial Expression Decoding

1.2. Age-of-Rater Effects on Facial Expression Decoding

1.3. Own-Age Effects on Facial Expression Decoding

1.4. The Present Study

2. Method

2.1. Participants

2.2. Current Affect

2.3. Age-Related Stereotypes

2.4. Stimulus Materials

2.5. Procedure

2.6. Statistical Analyses

3. Results

3.1. Age Effects on Raw Hit Rates (Step 1)

| Source | df (raw hit rate) | F (raw hit rate) | p (raw hit rate) | ηp² (raw hit rate) | df (unbiased hit rate) | F (unbiased hit rate) | p (unbiased hit rate) | ηp² (unbiased hit rate) |
|---|---|---|---|---|---|---|---|---|
| Age-of-actor (AA) | 1, 57 | 0.09 | 0.767 | 0.001 | 1, 57 | 0.02 | 0.901 | 0.000 |
| Age-of-rater (AR) | 1, 57 | 14.17 | <0.001 | 0.199 | 1, 57 | 15.98 | <0.001 | 0.219 |
| Target emotion (TE) | 3, 199 | 85.76 | <0.001 | 0.601 | 3, 168 | 78.33 | <0.001 | 0.579 |
| AR × AA | 1, 57 | 5.56 | 0.022 | 0.089 | 1, 57 | 2.06 | 0.156 | 0.035 |
| AR × TE | 4, 217 | 3.05 | 0.020 | 0.051 | 3, 168 | 3.88 | 0.011 | 0.064 |
| AA × TE | 4, 228 | 19.69 | <0.001 | 0.257 | 3, 173 | 9.67 | <0.001 | 0.145 |
| AR × AA × TE | 4, 228 | 2.43 | 0.049 | 0.041 | 3, 173 | 2.05 | 0.107 | 0.035 |

(A) Age-of-Actor

| Emotion and outcome measure | Young actors M | Young actors SD | Older actors M | Older actors SD | F(1, 57) | p | ηp² |
|---|---|---|---|---|---|---|---|
| Raw hit rate | | | | | | | |
| Fear | 0.07 | 0.12 | 0.15 | 0.17 | 9.99 | 0.003 | 0.149 |
| Disgust | 0.30 | 0.22 | 0.12 | 0.16 | 42.23 | <0.001 | 0.426 |
| Happiness | 0.62 | 0.26 | 0.59 | 0.24 | 1.23 | 0.273 | 0.021 |
| Sadness | 0.21 | 0.21 | 0.36 | 0.17 | 23.25 | <0.001 | 0.290 |
| Anger | 0.26 | 0.18 | 0.28 | 0.18 | 0.40 | 0.530 | 0.007 |
| Arcsine unbiased hit rate | | | | | | | |
| Fear | 0.03 | 0.06 | 0.07 | 0.12 | 5.76 | 0.020 | 0.091 |
| Disgust | 0.26 | 0.23 | 0.11 | 0.16 | 22.55 | <0.001 | 0.283 |
| Happiness | 0.39 | 0.24 | 0.45 | 0.29 | 1.81 | 0.184 | 0.031 |
| Sadness | 0.15 | 0.18 | 0.19 | 0.11 | 2.90 | 0.094 | 0.048 |
| Anger | 0.13 | 0.13 | 0.13 | 0.11 | 0.01 | 0.945 | 0.000 |

(B) Age-of-Rater

| Emotion and outcome measure | Young raters M | Young raters SD | Older raters M | Older raters SD | F(1, 57) | p | ηp² |
|---|---|---|---|---|---|---|---|
| Raw hit rate | | | | | | | |
| Fear | 0.12 | 0.10 | 0.10 | 0.12 | 0.58 | 0.451 | 0.010 |
| Disgust | 0.28 | 0.17 | 0.15 | 0.14 | 9.39 | 0.003 | 0.141 |
| Happiness | 0.67 | 0.22 | 0.54 | 0.18 | 6.56 | 0.013 | 0.103 |
| Sadness | 0.33 | 0.16 | 0.23 | 0.12 | 5.63 | 0.021 | 0.090 |
| Anger | 0.26 | 0.15 | 0.29 | 0.15 | 0.70 | 0.408 | 0.012 |
| Arcsine unbiased hit rate | | | | | | | |
| Fear | 0.06 | 0.05 | 0.04 | 0.06 | 0.77 | 0.385 | 0.013 |
| Disgust | 0.23 | 0.16 | 0.13 | 0.13 | 5.96 | 0.018 | 0.095 |
| Happiness | 0.46 | 0.21 | 0.32 | 0.13 | 11.68 | 0.001 | 0.170 |
| Sadness | 0.18 | 0.11 | 0.14 | 0.10 | 1.75 | 0.191 | 0.030 |
| Anger | 0.13 | 0.08 | 0.10 | 0.07 | 1.76 | 0.190 | 0.030 |

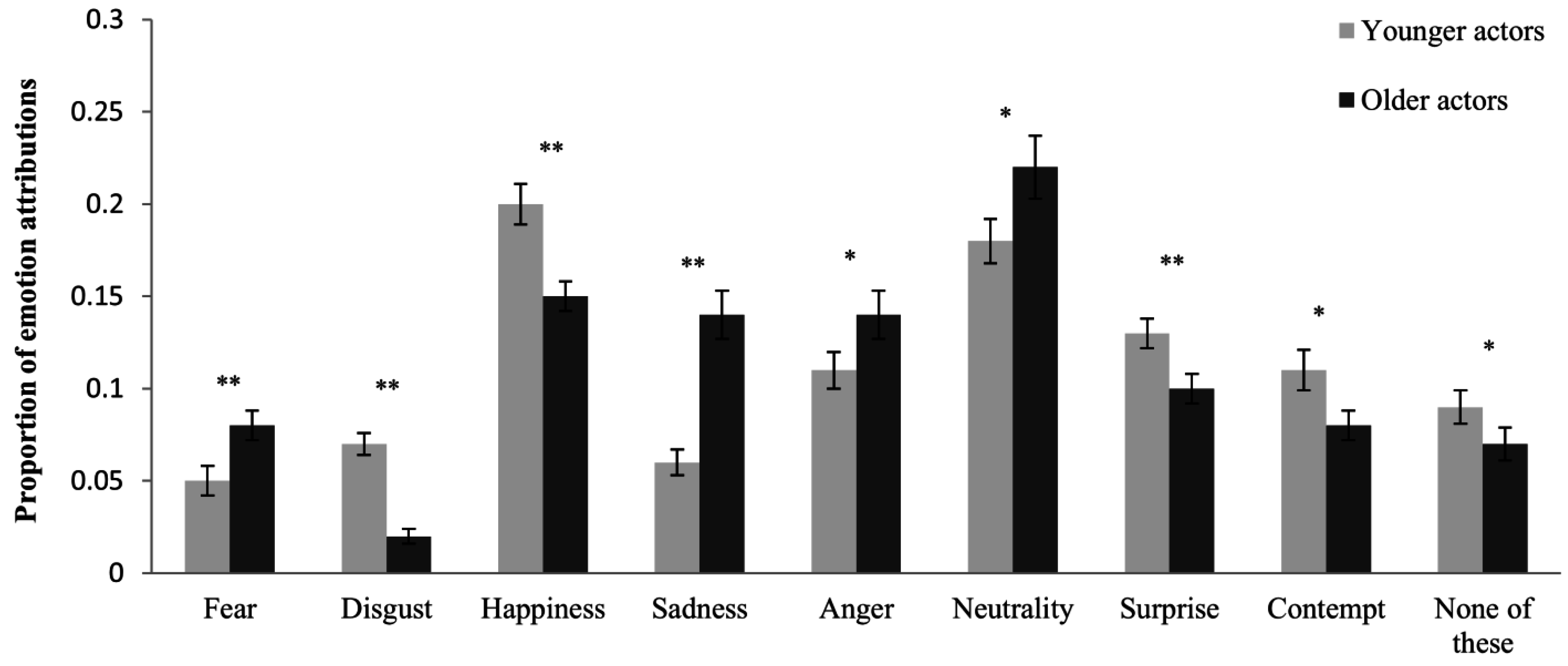

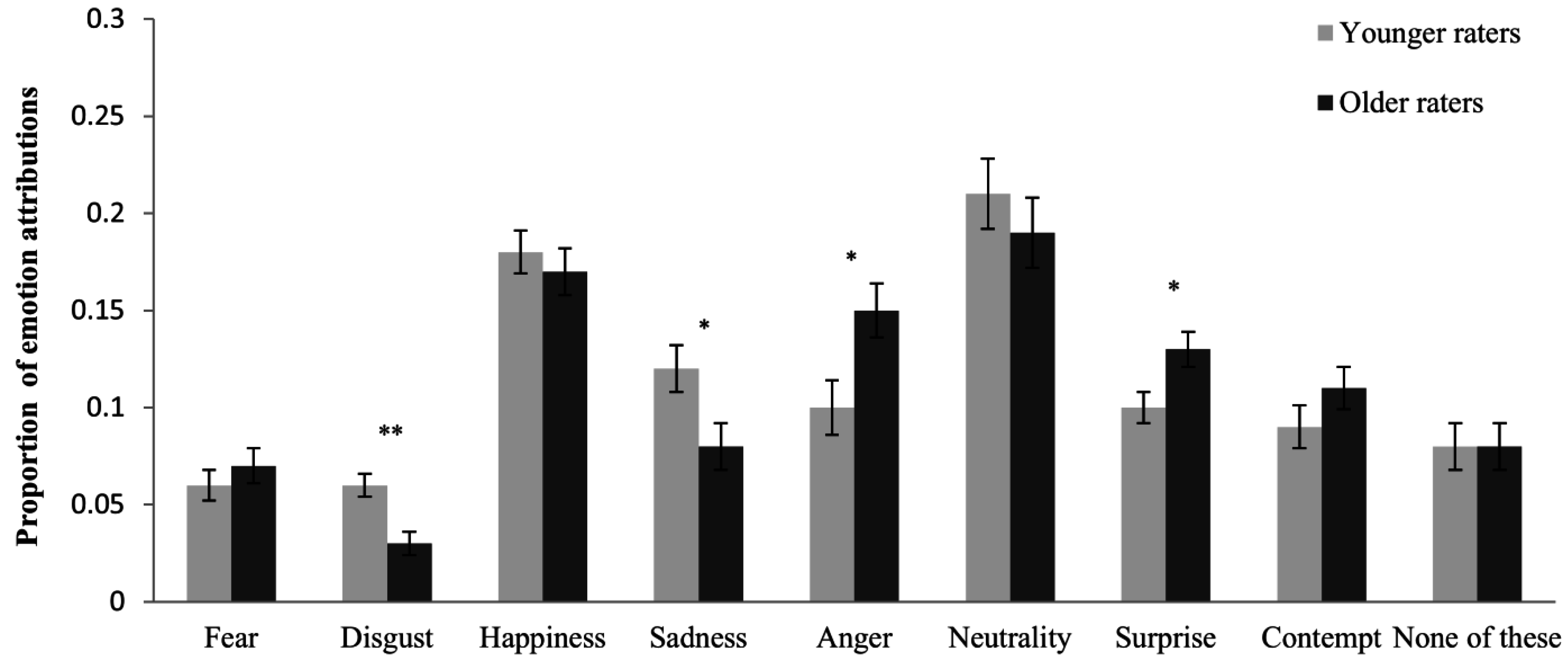

3.2. Age-Related Response Bias (Step 2)

| Emotion | Age-of-actor F(1, 57) | Age-of-actor p | Age-of-actor ηp² | Age-of-rater F(1, 57) | Age-of-rater p | Age-of-rater ηp² |
|---|---|---|---|---|---|---|
| Fear | 10.92 | 0.002 | 0.161 | 0.38 | 0.538 | 0.007 |
| Disgust | 64.84 | <0.001 | 0.532 | 10.19 | 0.002 | 0.152 |
| Happiness | 22.24 | <0.001 | 0.281 | 0.81 | 0.371 | 0.014 |
| Sadness | 28.99 | <0.001 | 0.337 | 5.24 | 0.026 | 0.084 |
| Neutrality | 5.02 | 0.029 | 0.081 | 0.75 | 0.390 | 0.013 |
| Anger | 6.18 | 0.016 | 0.098 | 7.00 | 0.011 | 0.109 |
| Surprise | 11.73 | 0.001 | 0.171 | 5.29 | 0.025 | 0.085 |
| Contempt | 5.69 | 0.020 | 0.091 | 1.80 | 0.185 | 0.031 |
| None of these | 5.75 | 0.020 | 0.092 | 0.25 | 0.622 | 0.004 |
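As a reading aid for the effect sizes in the table above, partial eta squared can be recovered from an F statistic and its degrees of freedom using the standard identity ηp² = (F · df_effect) / (F · df_effect + df_error). The sketch below applies that identity to two of the reported age-of-actor values; the function name `partial_eta_squared` is illustrative and not taken from the article.

```python
def partial_eta_squared(f_value: float, df_effect: float, df_error: float) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Age-of-actor effects on response bias for fear and disgust, both F(1, 57).
for label, f_value in [("Fear", 10.92), ("Disgust", 64.84)]:
    print(label, round(partial_eta_squared(f_value, 1, 57), 3))
# Fear 0.161
# Disgust 0.532
```

Both results match the tabulated ηp² values. Small discrepancies can occur for other rows because the published F values are themselves rounded to two decimals.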

3.3. Unbiased Hit Rates (Step 3)
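The unbiased hit rate is usually computed as described by Wagner (1993): for each target emotion, the squared number of correct responses is divided by the product of the number of stimuli showing that emotion and the number of times the corresponding response label was used overall. The following is a minimal sketch under that assumption, with the arcsine square-root transform commonly applied before analysis. The confusion matrix is made-up illustration data, not data from this study, and the function names are hypothetical.

```python
import math


def unbiased_hit_rates(confusion):
    """Unbiased hit rate per target emotion, following Wagner's definition.

    confusion[i][j] counts how often stimuli of target emotion i received
    response label j. Column i is assumed to be the correct label for row i;
    extra columns (e.g. "none of these") may follow the target columns.
    """
    n_columns = len(confusion[0])
    col_totals = [sum(row[j] for row in confusion) for j in range(n_columns)]
    rates = []
    for i, row in enumerate(confusion):
        denominator = sum(row) * col_totals[i]
        # A label that was never used yields a rate of zero by convention here.
        rates.append(row[i] ** 2 / denominator if denominator else 0.0)
    return rates


def arcsine_transform(proportion):
    """Arcsine square-root transform of a proportion in [0, 1]."""
    return math.asin(math.sqrt(proportion))


# Illustrative counts only: rows are sadness, anger, happiness stimuli.
confusion = [
    [6, 2, 2],
    [4, 5, 1],
    [0, 0, 10],
]
raw = [row[i] / sum(row) for i, row in enumerate(confusion)]
unbiased = unbiased_hit_rates(confusion)
transformed = [arcsine_transform(p) for p in unbiased]
print(raw)
print(unbiased)
print(transformed)
```

In this toy example the "sadness" label is chosen ten times but is correct only six times, so the raw hit rate of 0.6 drops to an unbiased hit rate of 0.36. This illustrates how a tendency to overuse a response label inflates raw hit rates.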

4. Discussion

4.1. Age-of-Actor Effects on Facial Expression Decoding

4.2. Age-of-Rater Effects on Facial Expression Decoding

4.3. Own-Age Effects on Facial Expression Decoding

4.4. Limitations and Outlook

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

- ¹ WST results were missing for one older rater.

- ² Degrees of freedom were corrected due to unequal variances.

- ³ Because some actors did not give consent to the distribution of their video clips, only a subset of the video clips can be made available to other researchers.

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).


Fölster, M.; Hess, U.; Hühnel, I.; Werheid, K. Age-Related Response Bias in the Decoding of Sad Facial Expressions. Behav. Sci. 2015, 5, 443-460. https://doi.org/10.3390/bs5040443
