Body Knowledge and Emotion Recognition in Preschool Children: A Comparative Study of Human Versus Robot Tutors

Abstract

1. Introduction

1.1. Social Learning and Selective Tutor Preference

1.2. Social Robots in Educational Contexts

1.3. Embodied Cognition and Body-Centered Learning

1.4. Bodily Expression Recognition in Learning Contexts

1.5. Study Rationale and Objectives

- (1) Do preschool children demonstrate comparable learning and recognition performance when interacting with social robots compared to human demonstrators in body-centered tasks?

- (2) How do developmental factors modulate children’s responsiveness to different agent types?

2. Methods

2.1. Participants

- Group 1 (G1): 3 years 1 month to 4 years 1 month (mean ± SE = 3.73 ± 0.07).

- Group 2 (G2): 4 years 2 months to 5 years 1 month (mean ± SE = 4.71 ± 0.06).

- Group 3 (G3): 5 years 2 months to 6 years 1 month (mean ± SE = 5.65 ± 0.059).

2.2. Experimental Procedure

2.2.1. General Setup

- (1)

- Human group: Exposure to an adult demonstrator

- (2)

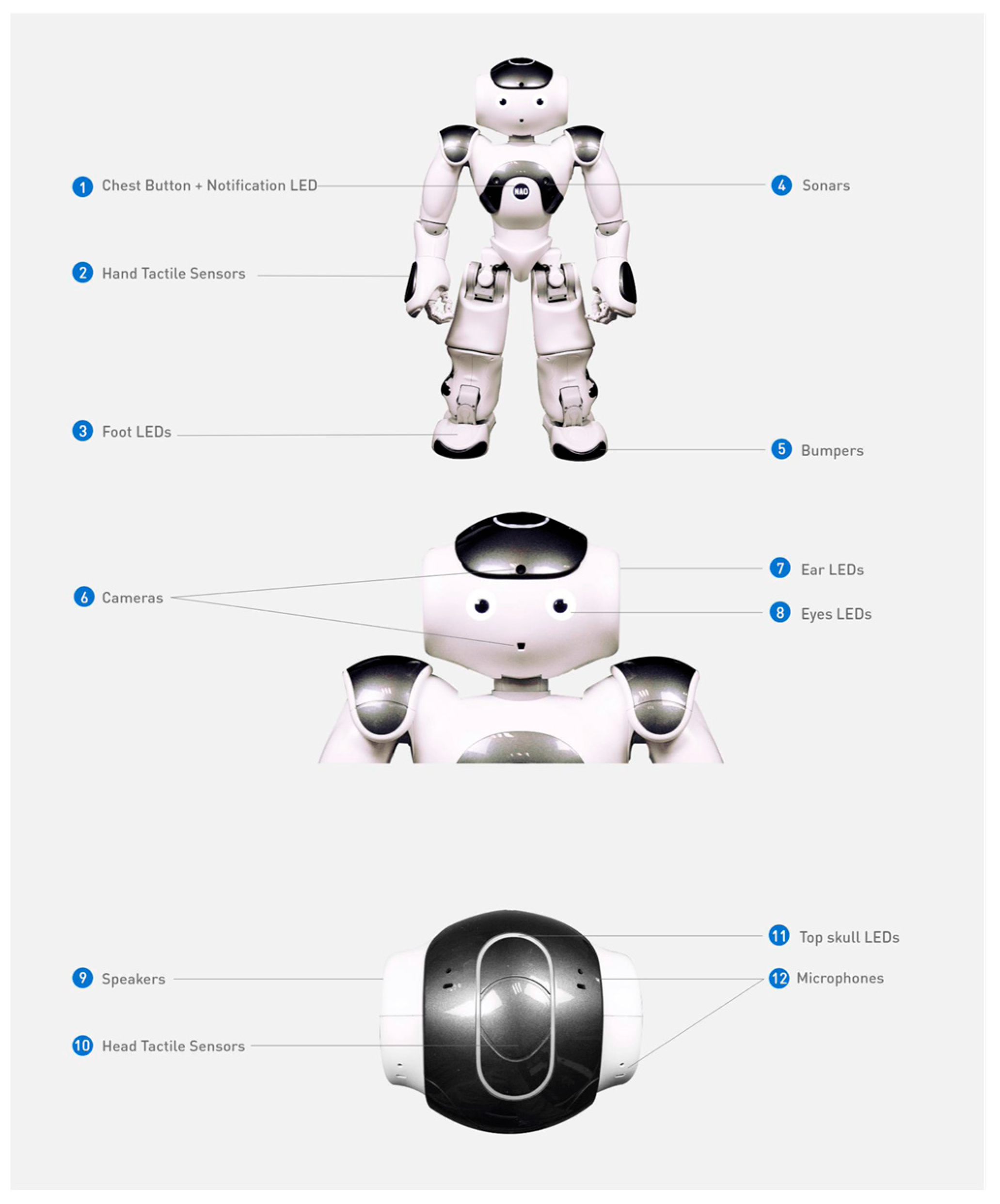

- Robot group: Exposure to the NAO robot demonstrator.

2.2.2. Experimental Tasks and Scores

- (a) Comprehension of Body Part Labels

- (b) Imitation of Body Part Sequences

- (c) Emotions Task

2.2.3. Coding Procedure and Reliability

3. Results

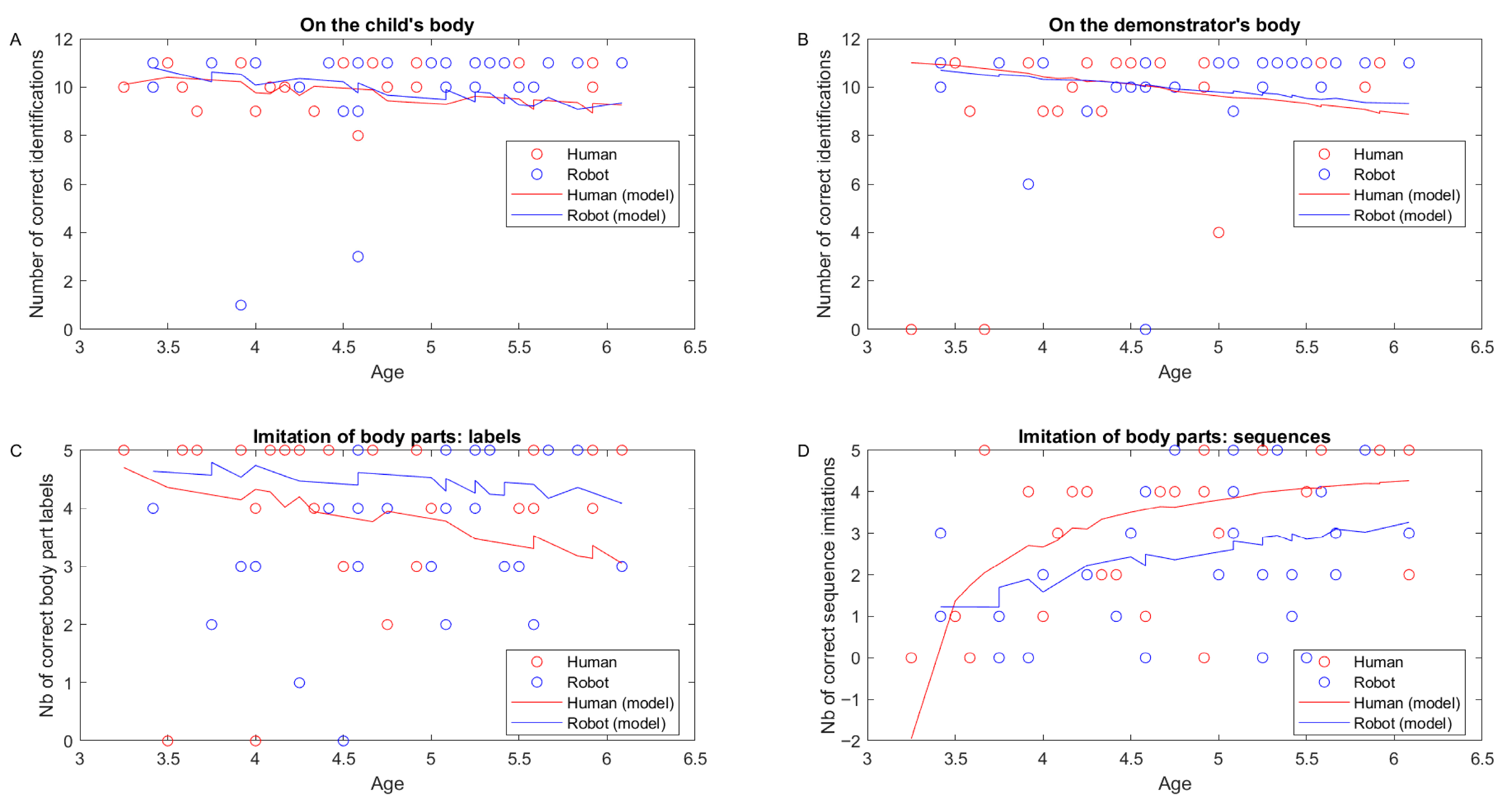

3.1. Comprehension of Body Part Labels

3.2. Imitation of Body Part Sequences

3.3. Emotions Task

4. Discussion

4.1. Core Empirical Findings

4.2. Theoretical Implications

4.3. Methodological Implications

4.4. Practical and Educational Implications

4.5. Limitations and Future Directions

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References



© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Araguas, A.; Blanchard, A.; Derégnaucourt, S.; Chopin, A.; Guellai, B. Body Knowledge and Emotion Recognition in Preschool Children: A Comparative Study of Human Versus Robot Tutors. Behav. Sci. 2026, 16, 29. https://doi.org/10.3390/bs16010029
