Machine Learning Design for High-Entropy Alloys: Models and Algorithms

Abstract

1. Introduction

2. Machine Learning (ML) in HEA Design

3. Common ML Models and Algorithms in HEA Design

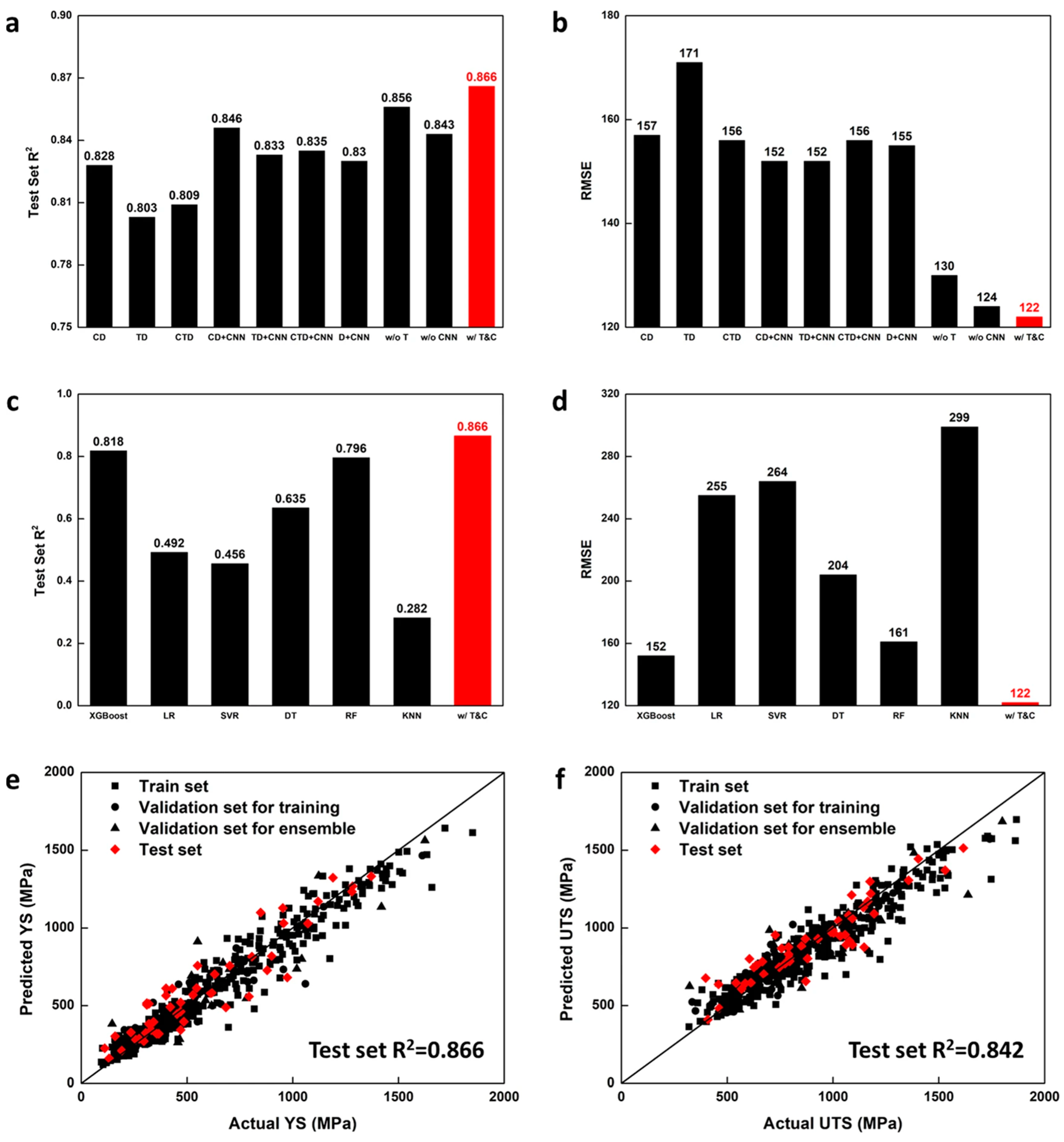

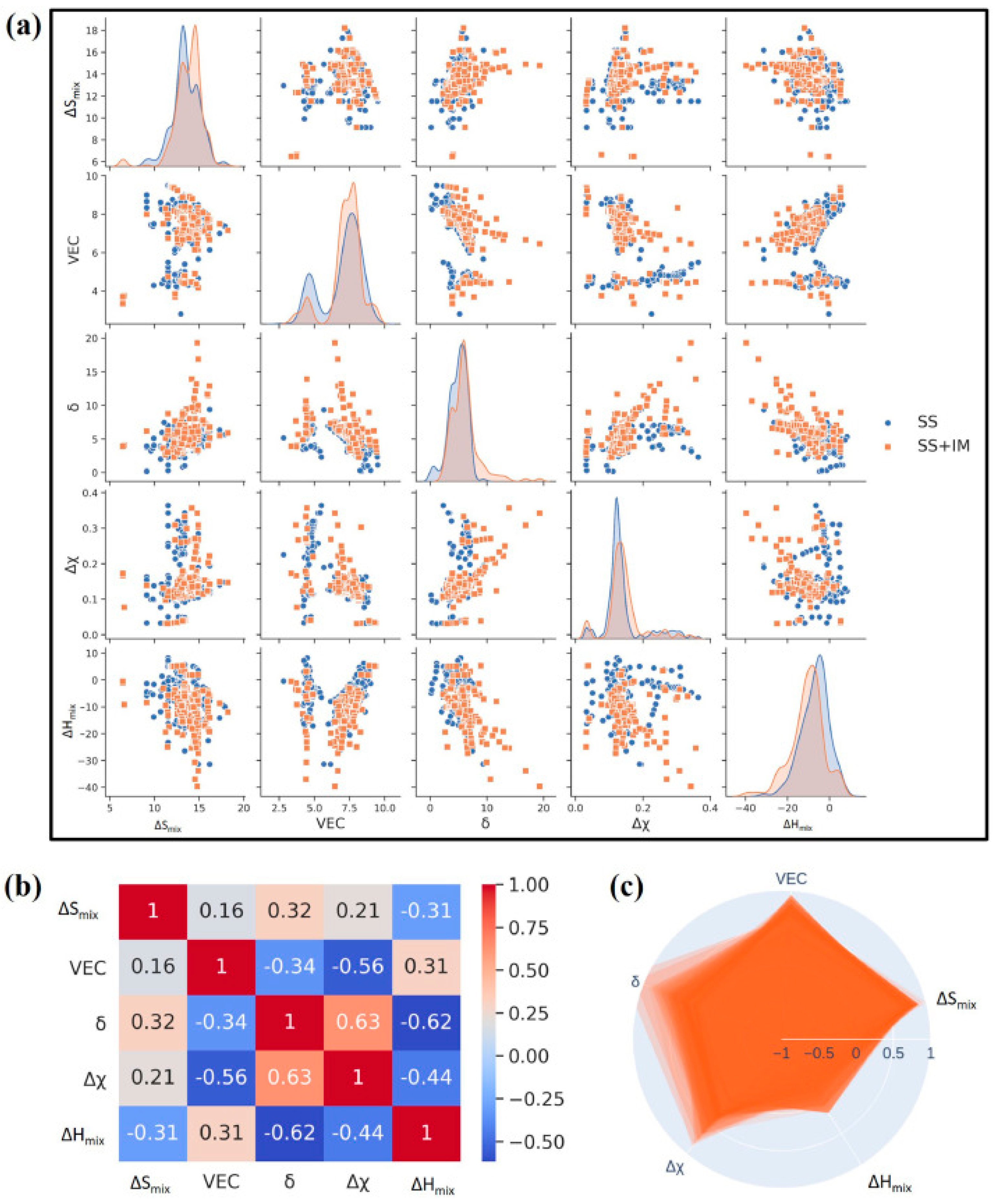

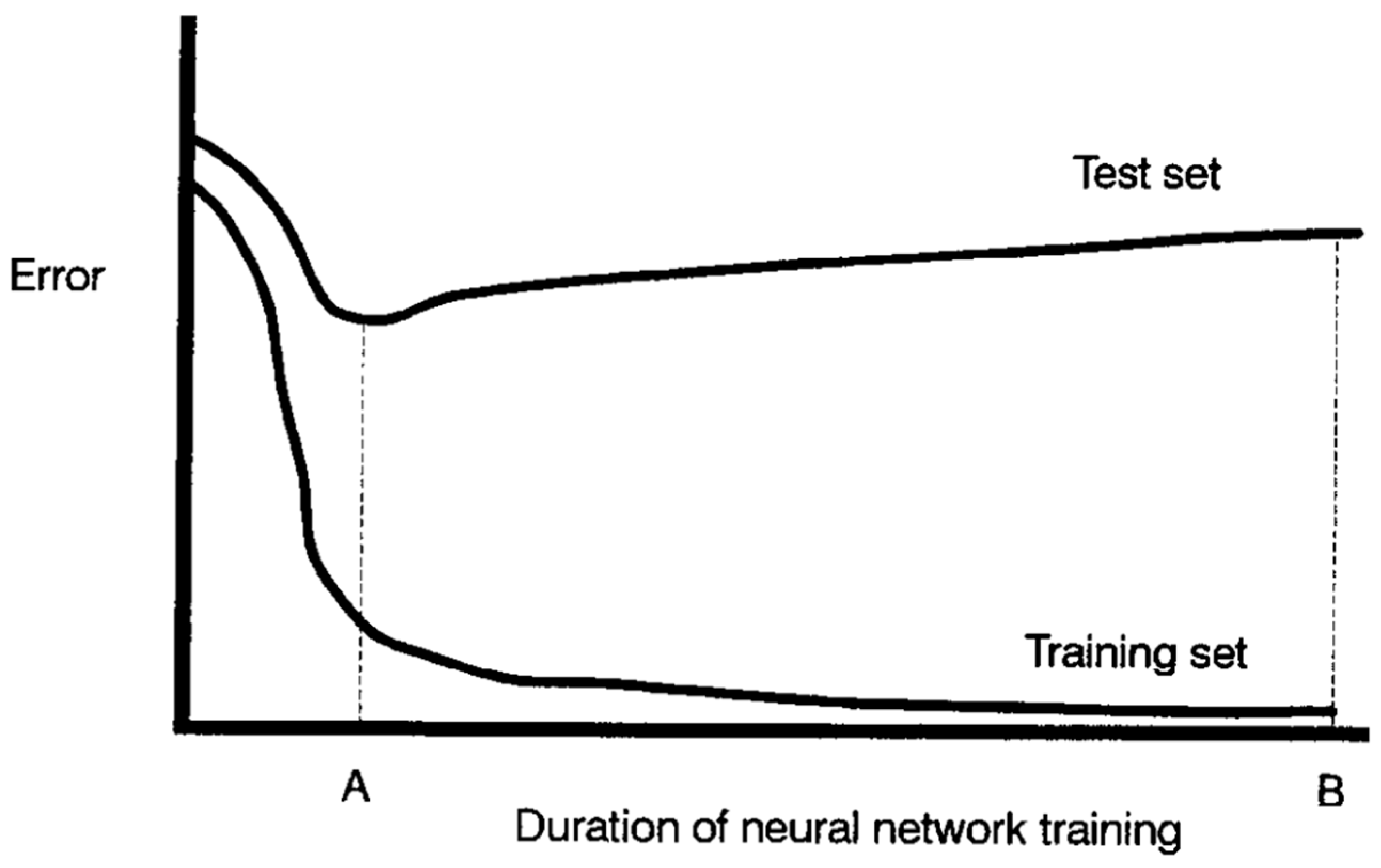

3.1. Neural Networks (NNs)

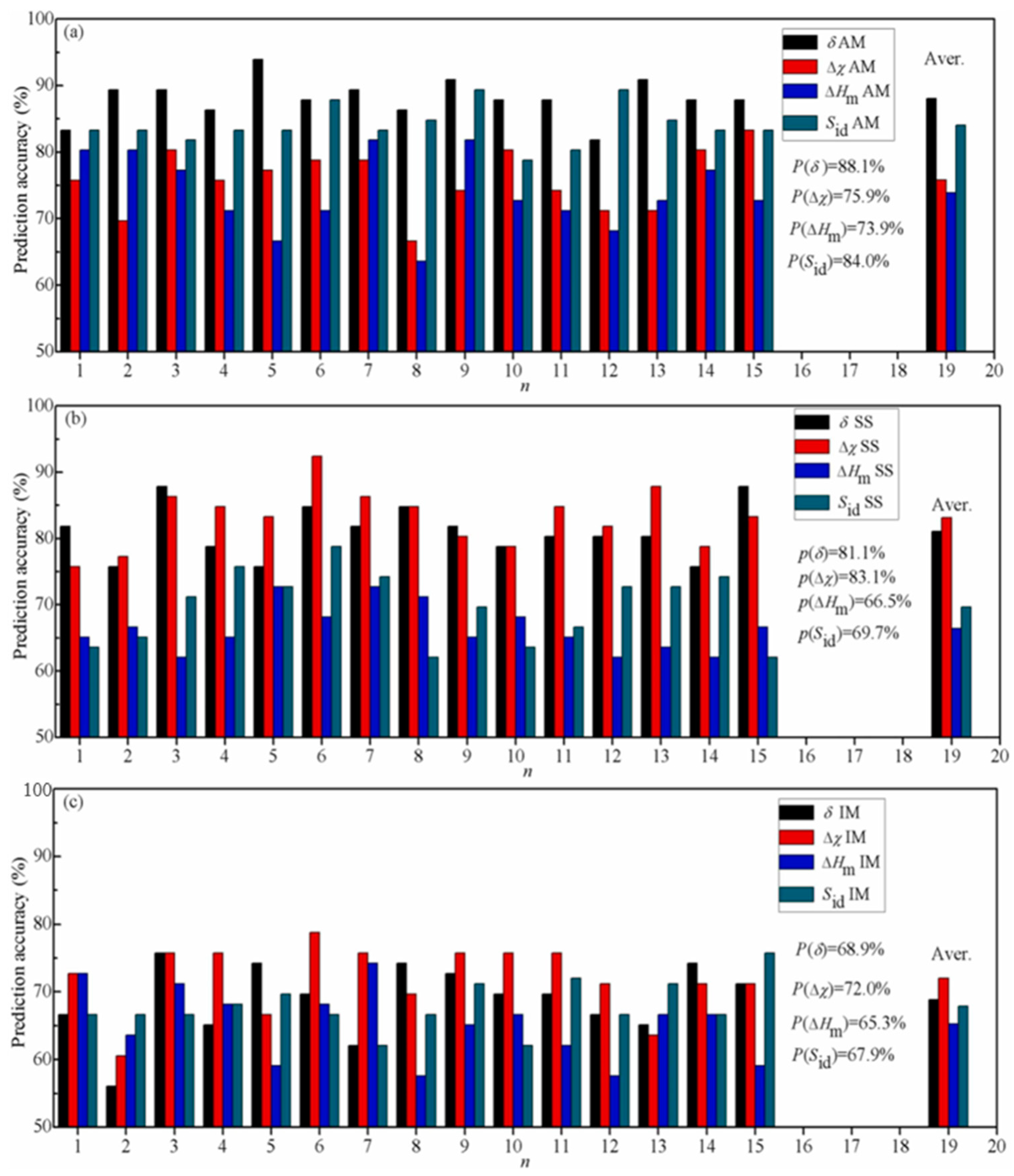

3.2. Support Vector Machine (SVM) Algorithm

3.3. Gaussian Process (GP) Model

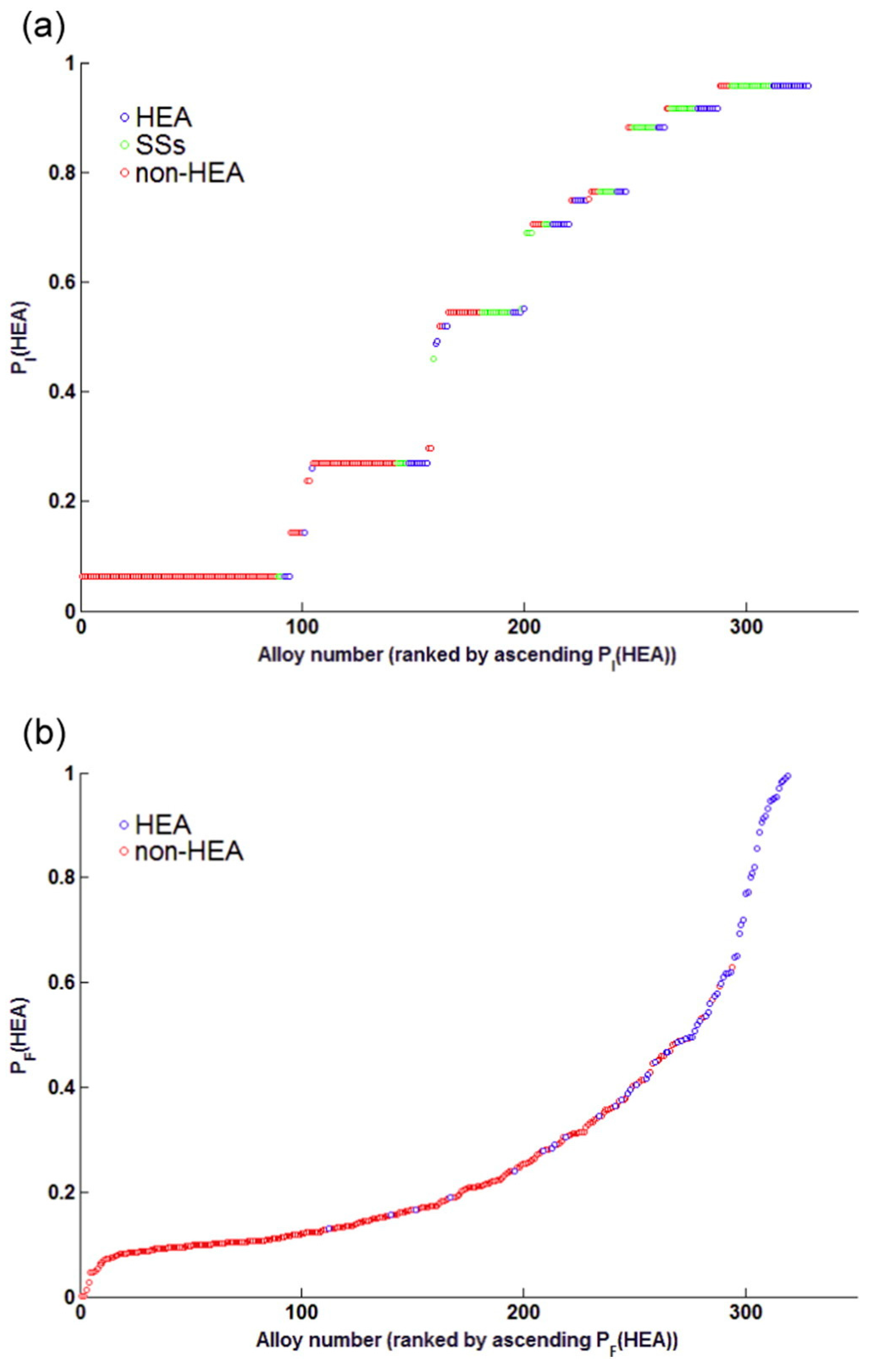

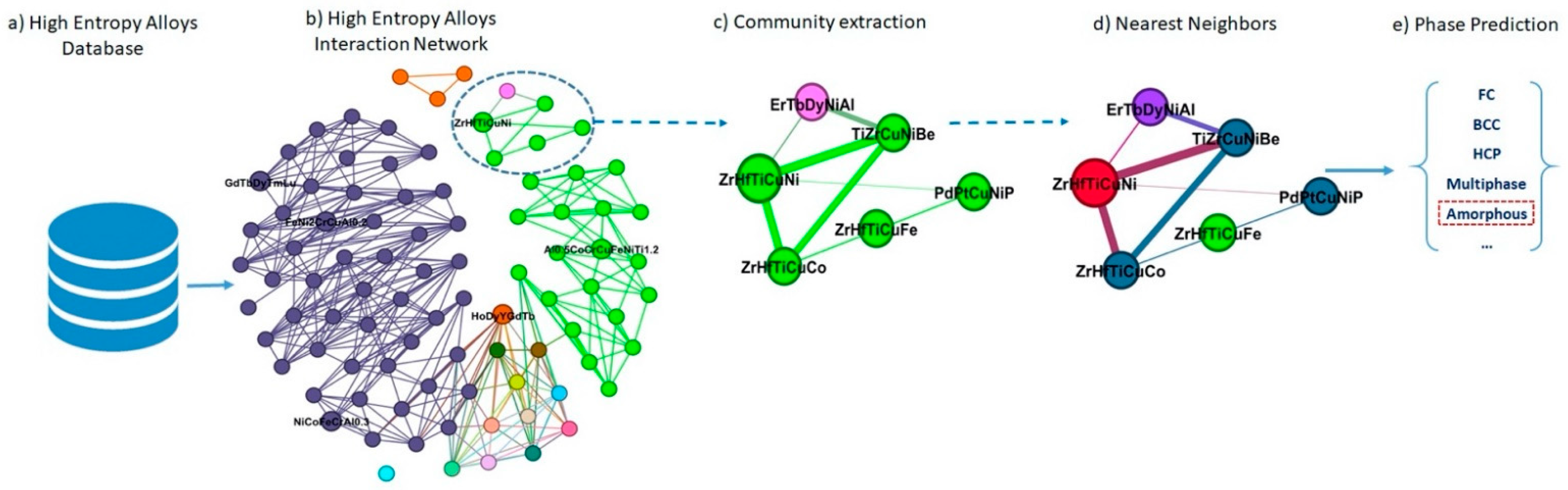

3.4. K-Nearest Neighbors (KNN) Model

3.5. Random Forests (RFs) Algorithm

4. Advanced ML Models and Algorithms in HEA Design

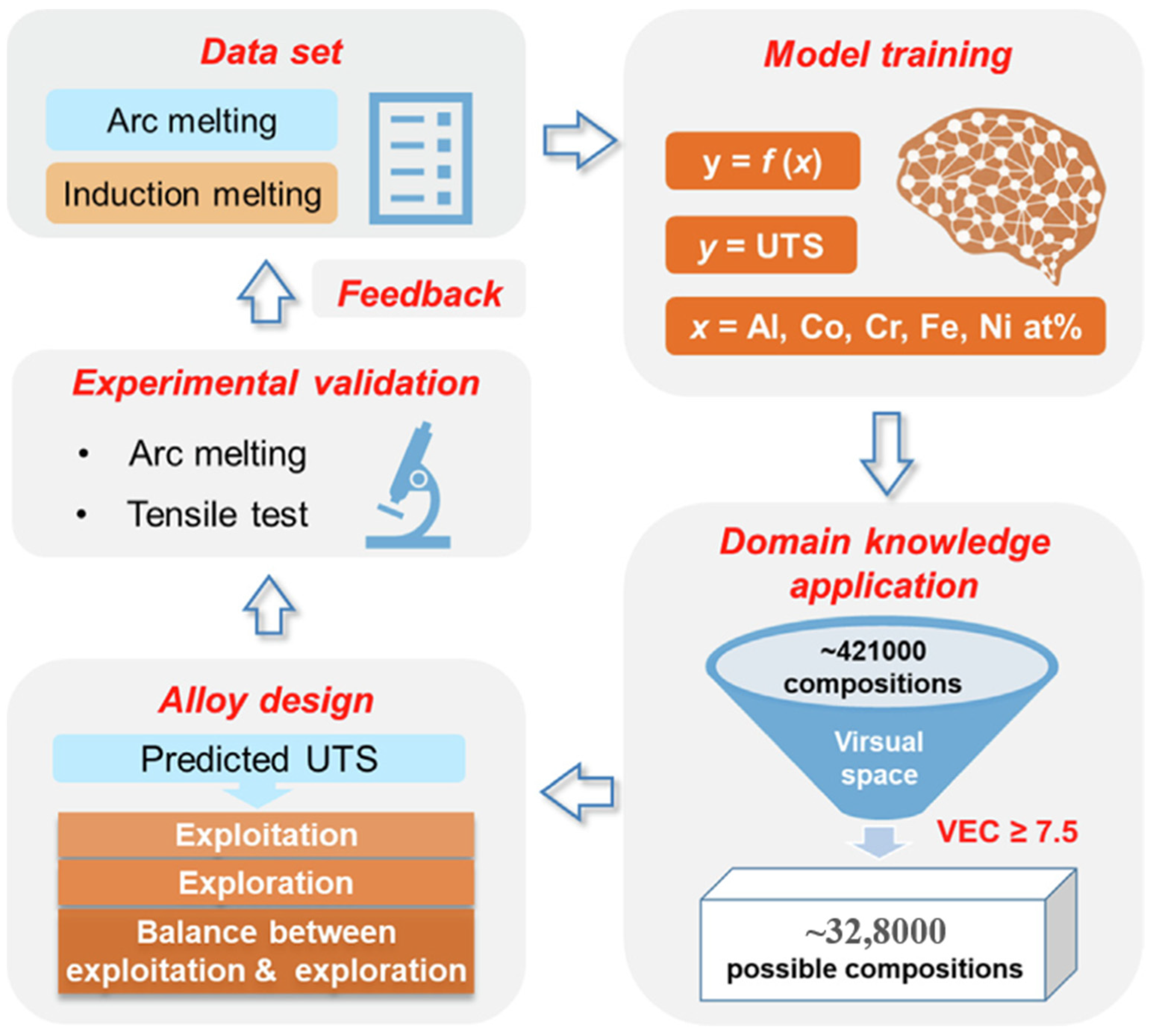

4.1. Active Learning (AL) Algorithm

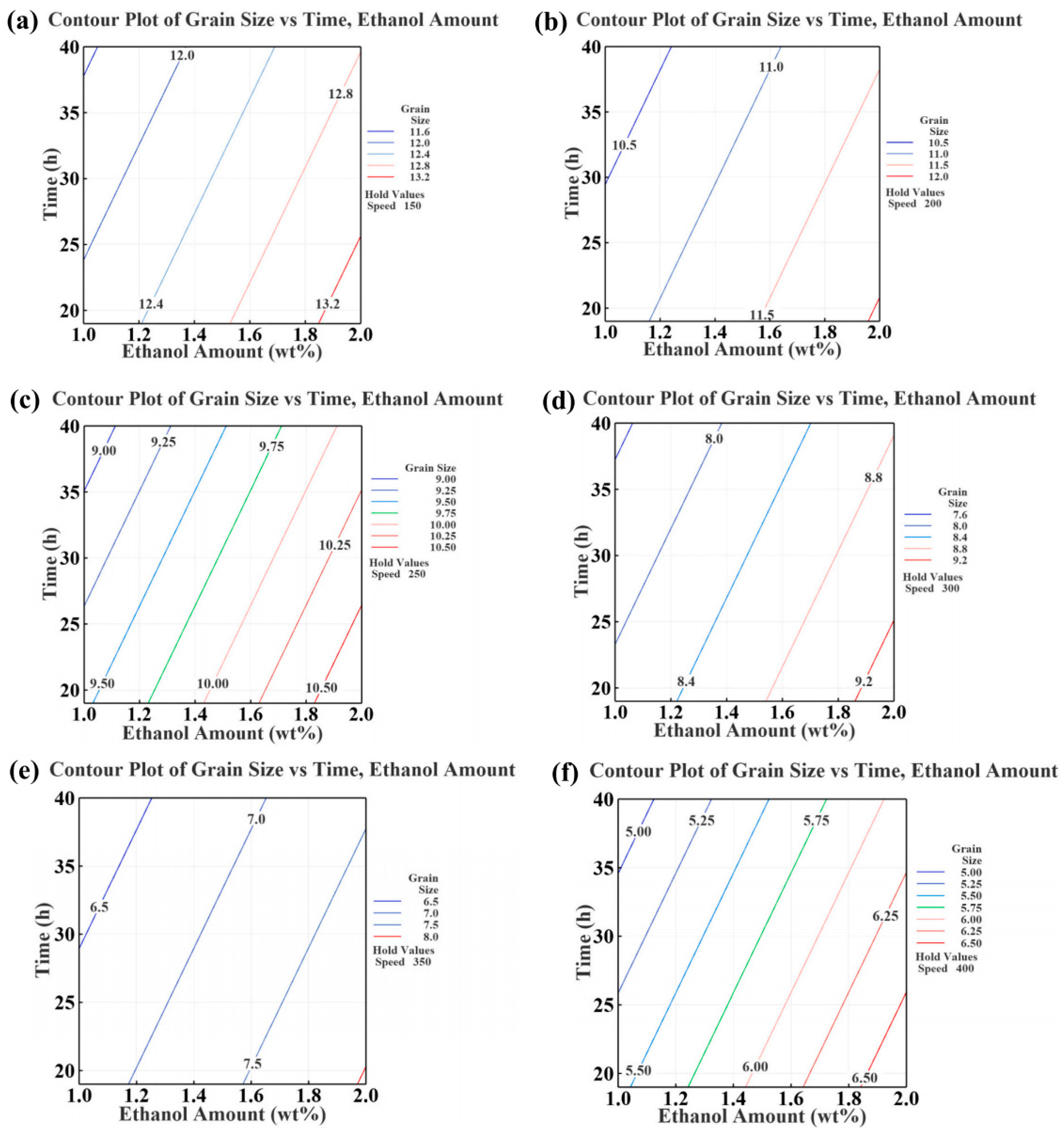

4.2. Genetic Algorithm (GA)

4.3. Deep Learning (DL) Algorithm

4.4. Transfer Learning (TL) Algorithm

5. Potential Weakness of ML Models and Optimization Strategies

5.1. Data Dependence and Generalization

5.2. Model Complexity

5.3. Interpretability

5.4. Integration of Computational Theory and Experiment

6. Prospect

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Cantor, B.; Chang, I.T.H.; Knight, P.; Vincent, A.J.B. Microstructural development in equiatomic multicomponent alloys. Mater. Sci. Eng. A 2004, 375–377, 213–218.

- Yeh, J.W.; Chen, S.K.; Lin, S.J.; Gan, J.Y.; Chin, T.S.; Shun, T.T.; Tsau, C.H.; Chang, S.Y. Nanostructured high-entropy alloys with multiple principal elements: Novel alloy design concepts and outcomes. Adv. Eng. Mater. 2004, 6, 299–303.

- Miracle, D.B.; Senkov, O.N. A critical review of high entropy alloys and related concepts. Acta Mater. 2017, 122, 448–511.

- Dippo, O.F.; Vecchio, K.S. A universal configurational entropy metric for high-entropy materials. Scr. Mater. 2021, 201, 113974.

- Marik, S.; Motla, K.; Varghese, M.; Sajilesh, K.; Singh, D.; Breard, Y.; Boullay, P.; Singh, R. Superconductivity in a new hexagonal high-entropy alloy. Phys. Rev. Mater. 2019, 3, 060602.

- Yeh, J.W. Recent progress in high-entropy alloys. Ann. Chim. Sci. Mat. 2006, 31, 633–648.

- Zhang, Y.; Zuo, T.T.; Tang, Z.; Gao, M.C.; Dahmen, K.A.; Liaw, P.K.; Lu, Z.P. Microstructures and properties of high-entropy alloys. Prog. Mater. Sci. 2014, 61, 1–93.

- Chang, X.; Zeng, M.; Liu, K.; Fu, L. Phase engineering of high-entropy alloys. Adv. Mater. 2020, 32, 1907226.

- Han, C.; Fang, Q.; Shi, Y.; Tor, S.B.; Chua, C.K.; Zhou, K. Recent advances on high-entropy alloys for 3D printing. Adv. Mater. 2020, 32, 1903855.

- Wang, B.; Yang, C.; Shu, D.; Sun, B. A Review of Irradiation-Tolerant Refractory High-Entropy Alloys. Metals 2023, 14, 45.

- Zhang, C.; Jiang, X.; Zhang, R.; Wang, X.; Yin, H.; Qu, X.; Liu, Z.K. High-throughput thermodynamic calculations of phase equilibria in solidified 6016 Al-alloys. Comput. Mater. Sci. 2019, 167, 19–24.

- Li, R.; Xie, L.; Wang, W.Y.; Liaw, P.K.; Zhang, Y. High-throughput calculations for high-entropy alloys: A brief review. Front. Mater. 2020, 7, 290.

- Yang, X.; Wang, Z.; Zhao, X.; Song, J.; Zhang, M.; Liu, H. MatCloud: A high-throughput computational infrastructure for integrated management of materials simulation, data and resources. Comput. Mater. Sci. 2018, 146, 319–333.

- Feng, R.; Liaw, P.K.; Gao, M.C.; Widom, M. First-principles prediction of high-entropy-alloy stability. npj Comput. Mater. 2017, 3, 50.

- Gao, J.; Zhong, J.; Liu, G.; Yang, S.; Song, B.; Zhang, L.; Liu, Z. A machine learning accelerated distributed task management system (Malac-Distmas) and its application in high-throughput CALPHAD computation aiming at efficient alloy design. Adv. Powder Technol. 2022, 1, 100005.

- Feng, R.; Zhang, C.; Gao, M.C.; Pei, Z.; Zhang, F.; Chen, Y.; Ma, D.; An, K.; Poplawsky, J.D.; Ouyang, L. High-throughput design of high-performance lightweight high-entropy alloys. Nat. Commun. 2021, 12, 4329.

- Gruetzemacher, R.; Whittlestone, J. The transformative potential of artificial intelligence. Futures 2022, 135, 102884.

- Minh, D.; Wang, H.X.; Li, Y.F.; Nguyen, T.N. Explainable artificial intelligence: A comprehensive review. Artif. Intell. Rev. 2022, 55, 3503–3568.

- Batra, R.; Song, L.; Ramprasad, R. Emerging materials intelligence ecosystems propelled by machine learning. Nat. Rev. Mater. 2021, 6, 655–678.

- Heidenreich, J.N.; Gorji, M.B.; Mohr, D. Modeling structure-property relationships with convolutional neural networks: Yield surface prediction based on microstructure images. Int. J. Plast. 2023, 163, 103506.

- Huang, E.W.; Lee, W.J.; Singh, S.S.; Kumar, P.; Lee, C.Y.; Lam, T.N.; Chin, H.H.; Lin, B.H.; Liaw, P.K. Machine-learning and high-throughput studies for high-entropy materials. Mater. Sci. Eng. R 2022, 147, 100645.

- Ren, W.; Zhang, Y.F.; Wang, W.L.; Ding, S.J.; Li, N. Prediction and design of high hardness high entropy alloy through machine learning. Mater. Des. 2023, 235, 112454.

- Liu, Y.; Zhao, T.; Ju, W.; Shi, S. Materials discovery and design using machine learning. J. Materiomics 2017, 3, 159–177.

- Oneto, L.; Navarin, N.; Biggio, B.; Errica, F.; Micheli, A.; Scarselli, F.; Bianchini, M.; Demetrio, L.; Bongini, P.; Tacchella, A. Towards learning trustworthily, automatically, and with guarantees on graphs: An overview. Neurocomputing 2022, 493, 217–243.

- Zhang, Y.; Ling, C. A strategy to apply machine learning to small datasets in materials science. npj Comput. Mater. 2018, 4, 25.

- Schleder, G.R.; Padilha, A.C.; Acosta, C.M.; Costa, M.; Fazzio, A. From DFT to machine learning: Recent approaches to materials science—A review. J. Phys. Mater. 2019, 2, 032001.

- Raccuglia, P.; Elbert, K.C.; Adler, P.D.; Falk, C.; Wenny, M.B.; Mollo, A.; Zeller, M.; Friedler, S.A.; Schrier, J.; Norquist, A.J. Machine-learning-assisted materials discovery using failed experiments. Nature 2016, 533, 73–76.

- He, H.; Wang, Y.; Qi, Y.; Xu, Z.; Li, Y.; Wang, Y. From Prediction to Design: Recent Advances in Machine Learning for the Study of 2D Materials. Nano Energy 2023, 118, 108965.

- Lee, S.Y.; Byeon, S.; Kim, H.S.; Jin, H.; Lee, S. Deep learning-based phase prediction of high-entropy alloys: Optimization, generation, and explanation. Mater. Des. 2021, 197, 109260.

- Zhang, Y.; Wen, C.; Wang, C.; Antonov, S.; Xue, D.; Bai, Y.; Su, Y. Phase prediction in high entropy alloys with a rational selection of materials descriptors and machine learning models. Acta Mater. 2020, 185, 528–539.

- Shapeev, A. Accurate representation of formation energies of crystalline alloys with many components. Comput. Mater. Sci. 2016, 139, 26–30.

- Song, K.; Xing, J.; Dong, Q. Optimization of the processing parameters during internal oxidation of Cu-Al alloy powders using an artificial neural network. Mater. Des. 2005, 26, 337–341.

- Sun, Y.; Zeng, W.D.; Zhao, Y.Q.; Qi, Y.L.; Ma, X.; Han, Y.F. Development of constitutive relationship model of Ti600 alloy using artificial neural network. Comput. Mater. Sci. 2010, 48, 686–691.

- Su, J.; Dong, Q.; Liu, P.; Li, H.; Kang, B. Prediction of Properties in Thermomechanically Treated Cu-Cr-Zr Alloy by an Artificial Neural Network. J. Mater. Sci. Technol. 2003, 19, 529–532.

- Lederer, Y.; Toher, C.; Vecchio, K.S.; Curtarolo, S. The search for high entropy alloys: A high-throughput ab-initio approach. Acta Mater. 2018, 159, 364–383.

- Tancret, F.; Toda-Caraballo, I.; Menou, E.; Rivera Díaz-Del-Castillo, P.E.J. Designing high entropy alloys employing thermodynamics and Gaussian process statistical analysis. Mater. Des. 2017, 115, 486–497.

- Grabowski, B.; Ikeda, Y.; Srinivasan, P.; Körmann, F.; Freysoldt, C.; Duff, A.I.; Shapeev, A.; Neugebauer, J. Ab initio vibrational free energies including anharmonicity for multicomponent alloys. npj Comput. Mater. 2019, 5, 80.

- Malinov, S.; Sha, W.; McKeown, J. Modelling the correlation between processing parameters and properties in titanium alloys using artificial neural network. Comput. Mater. Sci. 2001, 21, 375–394.

- Warde, J.; Knowles, D.M. Use of neural networks for alloy design. ISIJ Int. 1999, 39, 1015–1019.

- Sun, Y.; Zeng, W.; Zhao, Y.; Zhang, X.; Shu, Y.; Zhou, Y. Modeling constitutive relationship of Ti40 alloy using artificial neural network. Mater. Des. 2011, 32, 1537–1541.

- Lin, Y.; Zhang, J.; Zhong, J. Application of neural networks to predict the elevated temperature flow behavior of a low alloy steel. Comput. Mater. Sci. 2008, 43, 752–758.

- Haghdadi, N.; Zarei-Hanzaki, A.; Khalesian, A.; Abedi, H. Artificial neural network modeling to predict the hot deformation behavior of an A356 aluminum alloy. Mater. Des. 2013, 49, 386–391.

- Dewangan, S.K.; Samal, S.; Kumar, V. Microstructure exploration and an artificial neural network approach for hardness prediction in AlCrFeMnNiWx High-Entropy Alloys. J. Alloys Compd. 2020, 823, 153766.

- Zhou, Z.; Zhou, Y.; He, Q.; Ding, Z.; Li, F.; Yang, Y. Machine learning guided appraisal and exploration of phase design for high entropy alloys. npj Comput. Mater. 2019, 5, 128.

- Li, Y.; Guo, W. Machine-learning model for predicting phase formations of high-entropy alloys. Phys. Rev. Mater. 2019, 3, 095005.

- Wen, C.; Zhang, Y.; Wang, C.; Xue, D.; Bai, Y.; Antonov, S.; Dai, L.; Lookman, T.; Su, Y. Machine learning assisted design of high entropy alloys with desired property. Acta Mater. 2019, 170, 109–117.

- Singh, J.; Singh, S. Support vector machine learning on slurry erosion characteristics analysis of Ni-and Co-alloy coatings. Surf. Rev. Lett. 2023, 2340006.

- Alajmi, M.S.; Almeshal, A.M. Estimation and optimization of tool wear in conventional turning of 709M40 alloy steel using support vector machine (SVM) with Bayesian optimization. Materials 2021, 14, 3773.

- Lu, W.C.; Ji, X.B.; Li, M.J.; Liu, L.; Yue, B.H.; Zhang, L.M. Using support vector machine for materials design. Adv. Manuf. 2013, 1, 151–159.

- Nain, S.S.; Garg, D.; Kumar, S. Evaluation and analysis of cutting speed, wire wear ratio, and dimensional deviation of wire electric discharge machining of super alloy Udimet-L605 using support vector machine and grey relational analysis. Adv. Manuf. 2018, 6, 225–246.

- Lei, C.; Mao, J.; Zhang, X.; Wang, L.; Chen, D. Crack prediction in sheet forming of zirconium alloys used in nuclear fuel assembly by support vector machine method. Energy Rep. 2021, 7, 5922–5932.

- Xiang, G.; Zhang, Q. Multi-object optimization of titanium alloy milling process using support vector machine and NSGA-II algorithm. Int. J. Simul. Syst. Sci. Technol. 2016, 17, 35.

- Kong, D.; Chen, Y.; Li, N.; Duan, C.; Lu, L.; Chen, D. Tool wear estimation in end milling of titanium alloy using NPE and a novel WOA-SVM model. IEEE Trans. Instrum. Meas. 2019, 69, 5219–5232.

- Caixu, Y.; Zhenlong, X.; Xianli, L.; Mingwei, Z. Chatter prediction of milling process for titanium alloy thin-walled workpiece based on EMD-SVM. J. Adv. Manuf. Sci. Technol. 2022, 2, 2022010.

- Meshkov, E.A.; Novoselov, I.I.; Shapeev, A.; Yanilkin, V.A. Sublattice formation in CoCrFeNi high-entropy alloy. Intermetallics 2019, 112, 106542.

- Park, S.M.; Lee, T.; Lee, J.H.; Kang, J.S.; Kwon, M.S. Gaussian process regression-based Bayesian optimization of the insulation-coating process for Fe–Si alloy sheets. J. Mater. Res. Technol. 2023, 22, 3294–3301.

- Khatamsaz, D.; Vela, B.; Arróyave, R. Multi-objective Bayesian alloy design using multi-task Gaussian processes. Mater. Lett. 2023, 351, 135067.

- Tancret, F. Computational thermodynamics, Gaussian processes and genetic algorithms: Combined tools to design new alloys. Model. Simul. Mat. Sci. Eng. 2013, 21, 045013.

- Mahmood, M.A.; Rehman, A.U.; Karakaş, B.; Sever, A.; Rehman, R.U.; Salamci, M.U.; Khraisheh, M. Printability for additive manufacturing with machine learning: Hybrid intelligent Gaussian process surrogate-based neural network model for Co-Cr alloy. J. Mech. Behav. Biomed. Mater. 2022, 135, 105428.

- Sabin, T.; Bailer-Jones, C.; Withers, P. Accelerated learning using Gaussian process models to predict static recrystallization in an Al-Mg alloy. Model. Simul. Mat. Sci. Eng. 2000, 8, 687.

- Gong, X.; Yabansu, Y.C.; Collins, P.C.; Kalidindi, S.R. Evaluation of Ti–Mn Alloys for Additive Manufacturing Using High-Throughput Experimental Assays and Gaussian Process Regression. Materials 2020, 13, 4641.

- Hasan, M.S.; Kordijazi, A.; Rohatgi, P.K.; Nosonovsky, M. Triboinformatic modeling of dry friction and wear of aluminum base alloys using machine learning algorithms. Tribol. Int. 2021, 161, 107065.

- Bobbili, R.; Ramakrishna, B. Prediction of phases in high entropy alloys using machine learning. Mater. Today Commun. 2023, 36, 106674.

- Huang, W.; Martin, P.; Zhuang, H.L. Machine-learning phase prediction of high-entropy alloys. Acta Mater. 2019, 169, 225–236.

- Ghouchan Nezhad Noor Nia, R.; Jalali, M.; Houshmand, M. A Graph-Based k-Nearest Neighbor (KNN) Approach for Predicting Phases in High-Entropy Alloys. Appl. Sci. 2022, 12, 8021.

- Gupta, A.K.; Chakroborty, S.; Ghosh, S.K.; Ganguly, S. A machine learning model for multi-class classification of quenched and partitioned steel microstructure type by the k-nearest neighbor algorithm. Comput. Mater. Sci. 2023, 228, 112321.

- Zhang, J.; Wu, J.F.; Yin, A.; Xu, Z.; Zhang, Z.; Yu, H.; Lu, Y.; Liao, W.; Zheng, L. Grain size characterization of Ti-6Al-4V titanium alloy based on laser ultrasonic random forest regression. Appl. Optics 2022, 62, 735–744.

- Zhang, Z.; Yang, Z.; Ren, W.; Wen, G. Random forest-based real-time defect detection of Al alloy in robotic arc welding using optical spectrum. J. Manuf. Process. 2019, 42, 51–59.

- Prieto, A.; Prieto, B.; Ortigosa, E.M.; Ros, E.; Pelayo, F.; Ortega, J.; Rojas, I. Neural networks: An overview of early research, current frameworks and new challenges. Neurocomputing 2016, 214, 242–268.

- Yuste, R. From the neuron doctrine to neural networks. Nat. Rev. Neurosci. 2015, 16, 487–497.

- Islam, M.M.; Murase, K. A new algorithm to design compact two-hidden-layer artificial neural networks. Neural Netw. 2001, 14, 1265–1278.

- Apicella, A.; Donnarumma, F.; Isgrò, F.; Prevete, R. A survey on modern trainable activation functions. Neural Netw. 2021, 138, 14–32.

- Wang, J.; Kwon, H.; Kim, H.S.; Lee, B.J. A neural network model for high entropy alloy design. npj Comput. Mater. 2023, 9, 60.

- Aslani, M.; Seipel, S. Efficient and decision boundary aware instance selection for support vector machines. Inf. Sci. 2021, 577, 579–598.

- Chapelle, O.; Haffner, P.; Vapnik, V.N. Support vector machines for histogram-based image classification. IEEE Trans. Neural Netw. 1999, 10, 1055–1064.

- Xu, X.; Liang, T.; Zhu, J.; Zheng, D.; Sun, T. Review of classical dimensionality reduction and sample selection methods for large-scale data processing. Neurocomputing 2019, 328, 5–15.

- Hussain, S.F. A novel robust kernel for classifying high-dimensional data using Support Vector Machines. Expert Syst. Appl. 2019, 131, 116–131.

- Zhang, W.; Li, P.; Wang, L.; Wan, F.; Wu, J.; Yong, L. Explaining of prediction accuracy on phase selection of amorphous alloys and high entropy alloys using support vector machines in machine learning. Mater. Today Commun. 2023, 35, 105694.

- Chau, N.H.; Kubo, M.; Hai, L.V.; Yamamoto, T. Support Vector Machine-Based Phase Prediction of Multi-Principal Element Alloys. Vietnam J. Comput. Sci. 2022, 10, 101–116.

- Li, P.; Chen, S. Gaussian process approach for metric learning. Pattern Recognit. 2019, 87, 17–28.

- Liu, H.; Ong, Y.S.; Shen, X.; Cai, J. When Gaussian process meets big data: A review of scalable GPs. IEEE Trans. Neural Netw. Learn. Syst. 2020, 31, 4405–4423.

- Ertuğrul, Ö.F.; Tağluk, M.E. A novel version of k nearest neighbor: Dependent nearest neighbor. Appl. Soft Comput. 2017, 55, 480–490.

- Adithiyaa, T.; Chandramohan, D.; Sathish, T. Optimal prediction of process parameters by GWO-KNN in stirring-squeeze casting of AA2219 reinforced metal matrix composites. Mater. Today Proc. 2020, 21, 1000–1007.

- Jahromi, M.Z.; Parvinnia, E.; John, R. A method of learning weighted similarity function to improve the performance of nearest neighbor. Inf. Sci. 2009, 179, 2964–2973.

- Chen, Y.; Hao, Y. A feature weighted support vector machine and K-nearest neighbor algorithm for stock market indices prediction. Expert Syst. Appl. 2017, 80, 340–355.

- Utkin, L.V.; Kovalev, M.S.; Coolen, F.P. Imprecise weighted extensions of random forests for classification and regression. Appl. Soft Comput. 2020, 92, 106324.

- Özçift, A. Random forests ensemble classifier trained with data resampling strategy to improve cardiac arrhythmia diagnosis. Comput. Biol. Med. 2011, 41, 265–271.

- Rokach, L. Decision forest: Twenty years of research. Inf. Fusion 2016, 27, 111–125.

- Yang, L.; Shami, A. IoT data analytics in dynamic environments: From an automated machine learning perspective. Eng. Appl. Artif. Intell. 2022, 116, 105366.

- Krishna, Y.V.; Jaiswal, U.K.; Rahul, M. Machine learning approach to predict new multiphase high entropy alloys. Scr. Mater. 2021, 197, 113804.

- Livingstone, D.J.; Manallack, D.T.; Tetko, I.V. Data modelling with neural networks: Advantages and limitations. J. Comput.-Aided Mol. Des. 1997, 11, 135–142.

- Tu, V.J. Advantages and disadvantages of using artificial neural networks versus logistic regression for predicting medical outcomes. J. Clin. Epidemiol. 1996, 49, 1225–1231.

- Cervantes, J.; Garcia-Lamont, F.; Rodríguez-Mazahua, L.; Lopez, A. A comprehensive survey on support vector machine classification: Applications, challenges and trends. Neurocomputing 2020, 408, 189–215.

- Brieuc, M.S.; Waters, C.D.; Drinan, D.P.; Naish, K.A. A practical introduction to Random Forest for genetic association studies in ecology and evolution. Mol. Ecol. Resour. 2018, 18, 755–766.

- Kasdekar, D.K.; Parashar, V. Principal component analysis to optimize the ECM parameters of Aluminium alloy. Mater. Today Proc. 2018, 5, 5398–5406.

- Sonawane, S.A.; Kulkarni, M.L. Optimization of machining parameters of WEDM for Nimonic-75 alloy using principal component analysis integrated with Taguchi method. J. King Saud Univ. Eng. Sci. 2018, 30, 250–258.

- Bouchard, M.; Mergler, D.; Baldwin, M.; Panisset, M.; Roels, H.A. Neuropsychiatric symptoms and past manganese exposure in a ferro-alloy plant. Neurotoxicology 2007, 28, 290–297.

- Liu, Y.; Yao, C.; Niu, C.; Li, W.; Shen, T. Text mining of hypereutectic Al-Si alloys literature based on active learning. Mater. Today Commun. 2021, 26, 102032.

- Tang, B.; Li, X.; Wang, J.; Ge, W.; Yu, Z.; Lin, T. STIOCS: Active learning-based semi-supervised training framework for IOC extraction. Comput. Electr. Eng. 2023, 112, 108981.

- Li, H.; Yuan, R.; Liang, H.; Wang, W.Y.; Li, J.; Wang, J. Towards high entropy alloy with enhanced strength and ductility using domain knowledge constrained active learning. Mater. Des. 2022, 223, 111186.

- Ahn, K.K.; Kha, N.B. Modeling and control of shape memory alloy actuators using Preisach model, genetic algorithm and fuzzy logic. Mechatronics 2008, 18, 141–152.

- Anijdan, S.H.M.; Bahrami, A.; Hosseini, H.R.M. Using genetic algorithm and artificial neural network analyses to design an Al–Si casting alloy of minimum porosity. Mater. Des. 2006, 27, 605–609.

- Scrucca, L. GA: A package for genetic algorithms in R. J. Stat. Softw. 2013, 53, 1–37.

- Adaan-Nyiak, M.A.; Alam, I.; Tiamiyu, A.A. Ball milling process variables optimization for high-entropy alloy development using design of experiment and genetic algorithm. Powder Technol. 2023, 427, 118766.

- Menou, E.; Tancret, F.; Toda-Caraballo, I.; Ramstein, G.; Castany, P.; Bertrand, E.; Gautier, N.; Rivera Díaz-Del-Castillo, P.E.J. Computational design of light and strong high entropy alloys (HEA): Obtainment of an extremely high specific solid solution hardening. Scr. Mater. 2018, 156, 120–123.

- Kriegeskorte, N. Deep neural networks: A new framework for modeling biological vision and brain information processing. Annu. Rev. Vis. Sci. 2015, 1, 417–446.

- Shen, C. A transdisciplinary review of deep learning research and its relevance for water resources scientists. Water Resour. Res. 2018, 54, 8558–8593.

- Zhu, W.; Huo, W.; Wang, S.; Wang, X.; Ren, K.; Tan, S.; Fang, F.; Xie, Z.; Jiang, J. Phase formation prediction of high-entropy alloys: A deep learning study. J. Mater. Res. Technol. 2022, 18, 800–809.

- Pan, W. A survey of transfer learning for collaborative recommendation with auxiliary data. Neurocomputing 2016, 177, 447–453.

- Feng, S.; Zhou, H.; Dong, H. Application of deep transfer learning to predicting crystal structures of inorganic substances. Comput. Mater. Sci. 2021, 195, 110476.

- Wang, X.; Tran, N.D.; Zeng, S.; Hou, C.; Chen, Y.; Ni, J. Element-wise representations with ECNet for material property prediction and applications in high-entropy alloys. npj Comput. Mater. 2022, 8, 253.

- Beg, A.H.; Islam, M.Z. Advantages and limitations of genetic algorithms for clustering records. In Proceedings of the 2016 IEEE 11th Conference on Industrial Electronics and Applications (ICIEA), Hefei, China, 5–7 June 2016; pp. 2478–2483.

- Papernot, N.; McDaniel, P.; Jha, S.; Fredrikson, M.; Celik, Z.B.; Swami, A. The limitations of deep learning in adversarial settings. In Proceedings of the 2016 IEEE European Symposium on Security and Privacy (EuroS&P), Saarbruecken, Germany, 21–24 March 2016; pp. 372–387.

- Shen, C.; Nguyen, D.; Zhou, Z.; Jiang, S.B.; Dong, B.; Jia, X. An introduction to deep learning in medical physics: Advantages, potential, and challenges. Phys. Med. Biol. 2020, 65, 05TR01.

- Ahmed, S.F.; Alam, M.S.B.; Hassan, M.; Rozbu, M.R.; Ishtiak, T.; Rafa, N.; Mofijur, M.; Ali, A.S.; Gandomi, A.H. Deep learning modelling techniques: Current progress, applications, advantages, and challenges. Artif. Intell. Rev. 2023, 56, 13521–13617.

- Zhao, Z.; Alzubaidi, L.; Zhang, J.; Duan, Y.; Gu, Y. A comparison review of transfer learning and self-supervised learning: Definitions, applications, advantages and limitations. Expert Syst. Appl. 2023, 242, 122807.

- Niu, S.; Liu, Y.; Wang, J.; Song, H. A decade survey of transfer learning (2010–2020). IEEE Trans. Artif. Intell. 2020, 1, 151–166.

- Maharana, K.; Mondal, S.; Nemade, B. A review: Data pre-processing and data augmentation techniques. Glob. Transit. Proc. 2022, 3, 91–99.

- Le, N.T.; Wang, J.-W.; Le, D.H.; Wang, C.-C.; Nguyen, T.N. Fingerprint enhancement based on tensor of wavelet subbands for classification. IEEE Access 2020, 8, 6602–6615.

- Zhai, X.; Oliver, A.; Kolesnikov, A.; Beyer, L. S4l: Self-supervised semi-supervised learning. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1476–1485.

- Hospedales, T.; Antoniou, A.; Micaelli, P.; Storkey, A. Meta-learning in neural networks: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 44, 5149–5169.

- Ciliberto, C.; Herbster, M.; Ialongo, A.D.; Pontil, M.; Rocchetto, A.; Severini, S.; Wossnig, L. Quantum machine learning: A classical perspective. Proc. Math. Phys. Eng. Sci. 2018, 474, 20170551.

| Model | Advantages | Limitations |
|---|---|---|
| NNs | (1) Powerful for complex, non-linear relationships. (2) Robust to noisy data. (3) Ability to learn from large datasets. | (1) Prone to overfitting, especially with small datasets. (2) Requires careful tuning of parameters. (3) Black-box nature makes interpretation difficult. |
| SVM [93] | (1) Effective in high-dimensional spaces. (2) Works well with small to medium-sized datasets. (3) Versatile due to kernel trick for non-linear classification. | (1) Can be slow to train on large datasets. (2) Sensitivity to choice of kernel parameters. (3) Memory-intensive for large-scale problems. |
| GP | (1) Provides uncertainty estimates for predictions. (2) Flexible and interpretable modeling. (3) Can handle small datasets effectively. | (1) Exact training cost grows roughly cubically with the number of samples. (2) Results are sensitive to the choice of kernel and its hyperparameters. (3) Performance tends to degrade in high-dimensional input spaces. |
| KNN [94] | (1) Simple and easy to understand. (2) No explicit training phase, so new data can be added without refitting. (3) Robust to noisy data and outliers. | (1) Prediction is slow on large datasets because every query is compared against all stored samples. (2) Sensitive to feature scaling and irrelevant features. (3) Requires a suitable choice of k and distance metric. |
| RF | (1) High accuracy and robustness. (2) Works well with high-dimensional data. (3) Handles missing values and maintains accuracy. | (1) Can be slow to predict on large datasets. (2) Lack of interpretability due to ensemble nature. (3) May overfit noisy datasets if not tuned properly. |
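The KNN row in the table above can be made concrete with a small sketch. It is an illustration under stated assumptions, not a method taken from the reviewed studies: the two descriptors, the toy samples in `TRAIN`, and the helper `knn_predict` are all hypothetical. It shows that KNN has no fitting step (the model is just the stored samples) and that every prediction scans the whole training set.

```python
import math
from collections import Counter

# Hypothetical toy data, for illustration only (not measurements):
# (descriptor_1, descriptor_2) -> phase label.
TRAIN = [
    ((8.5, 1.0), "FCC"),
    ((8.2, 2.0), "FCC"),
    ((8.8, 1.5), "FCC"),
    ((6.0, 5.0), "BCC"),
    ((6.5, 6.0), "BCC"),
    ((5.8, 4.5), "BCC"),
]


def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training samples."""
    # No fitting step: each prediction sorts all stored samples by
    # Euclidean distance to the query, so cost grows with len(train).
    by_distance = sorted(train, key=lambda item: math.dist(item[0], query))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


print(knn_predict(TRAIN, (8.4, 1.2)))
print(knn_predict(TRAIN, (6.1, 5.2)))
```

The descriptors here are used unscaled; in practice the features would usually be normalized first, since Euclidean distance is dominated by whichever descriptor has the largest numeric range.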

| Model | Advantages | Limitations |
|---|---|---|
| AL | (1) Reduces labeling effort. (2) Improves model performance with limited data. (3) Allows for adaptive training. | (1) Requires expert query strategies. (2) Can be computationally expensive. (3) Depends on query strategy quality. |
| GA [112] | (1) Optimizes complex problems. (2) Searches across wide spaces. (3) Handles multi-objective tasks. | (1) No guaranteed global optimum. (2) Complexity increases with dimensions. (3) Sensitive to noisy objectives. |
| DL [113,114,115] | (1) Learns complex patterns. (2) Automatically extracts features. (3) Excels in various tasks. | (1) Needs large, labeled data. (2) Prone to overfitting. (3) Requires powerful hardware. |
| TL [116,117] | (1) Leverages related knowledge. (2) Reduces data need for new tasks. (3) Speeds up training, improves performance. | (1) Performance depends on domain similarity. (2) Domain shift may affect transferability. (3) Fine-tuning may still be necessary. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Liu, S.; Yang, C. Machine Learning Design for High-Entropy Alloys: Models and Algorithms. Metals 2024, 14, 235. https://doi.org/10.3390/met14020235
