Coverage of Artificial Intelligence and Machine Learning within Academic Literature, Canadian Newspapers, and Twitter Tweets: The Case of Disabled People

Abstract

1. Introduction

- (a) as potential non-therapeutic users (consumer angle)

- (b) as potential therapeutic users

- (c) as potential diagnostic targets (diagnostics to prevent ‘disability’, or to judge ‘disability’)

- (d) by changing societal parameters caused by humans using AI/ML (military, changes in how humans interact, employers using it in the workplace, etc.)

- (e) AI/ML outperforming humans (e.g., workplace)

- (f) increasing autonomy of AI/ML (AI/ML judging disabled people)

1.1. Portrayal and Role, Identity, and Stake Narrative of Disabled People and AI/ML

1.2. The Tone of the Discourse

1.3. The Issue of Social Good

2. Methods

2.1. Study Design

2.2. Identifying and Clarifying the Purpose and Research Questions

- (a) as potential non-therapeutic users (consumer angle)

- (b) as potential therapeutic users

- (c) as potential diagnostic targets (diagnostics to prevent disability or to judge disability)

- (d) by changing societal parameters caused by humans using AI/ML (military, changes in how humans interact, employers using it in the workplace, etc.)

- (e) AI/ML outperforming humans (e.g., workplace)

- (f) increasing autonomy of AI/ML (AI/ML judging disabled people)

2.3. Data Sources and Data Collection

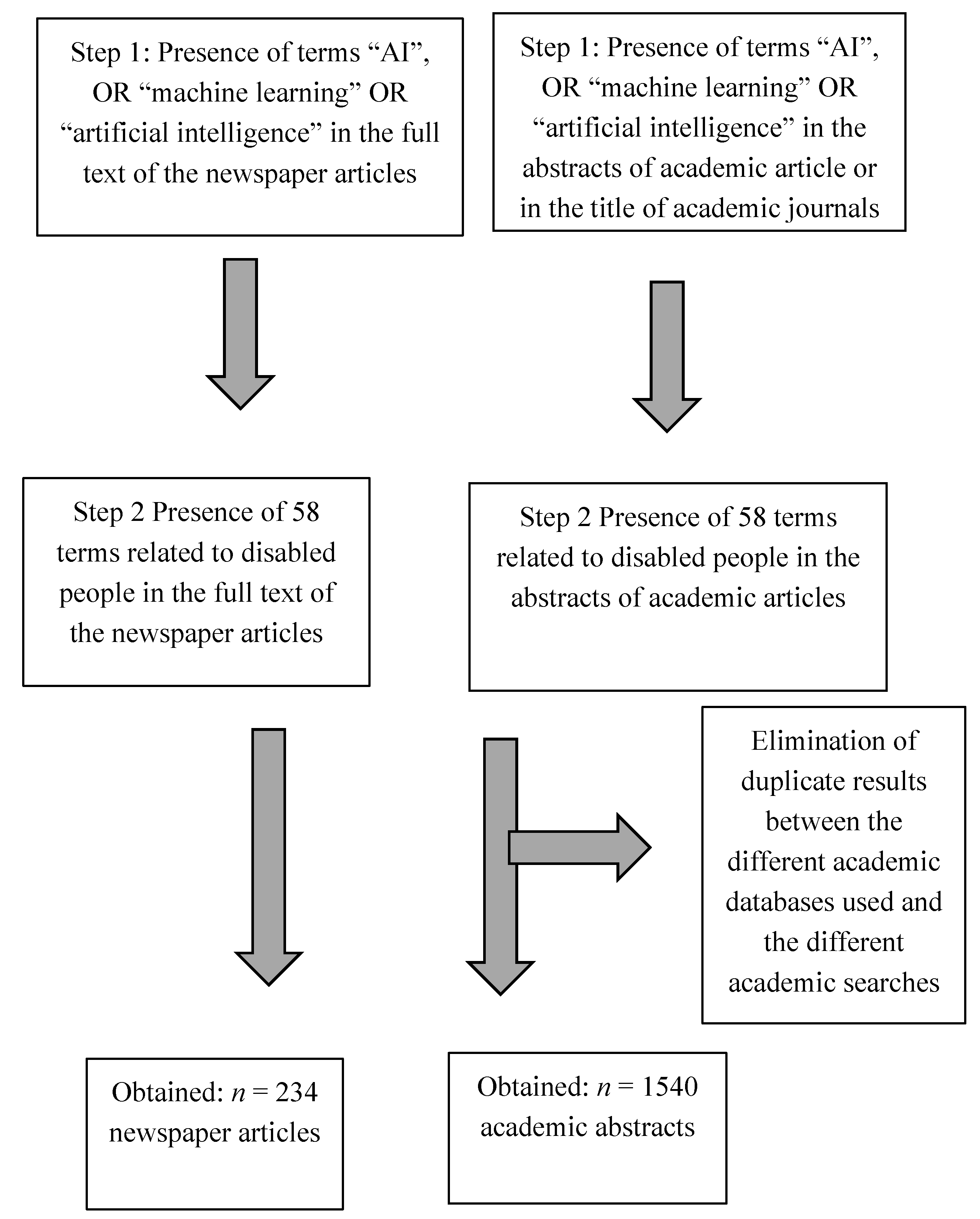

2.3.1. Search Strategy 1: Newspapers

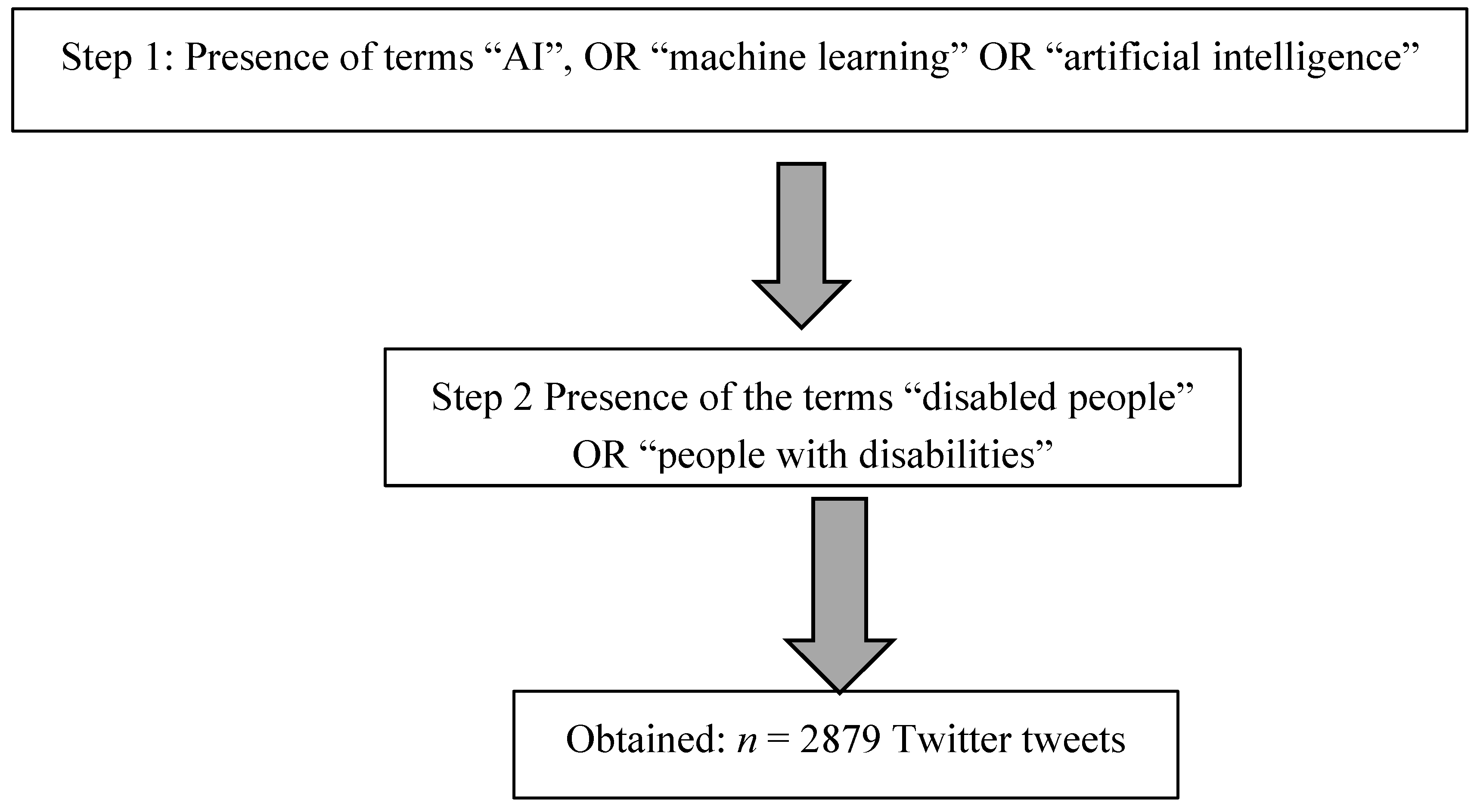

2.3.2. Search Strategy 2: Twitter

- Step 2a: We searched for the presence of “AI” OR “machine learning” OR “artificial intelligence”.

- Step 2b: We searched for the presence of “disabled people” OR “people with disabilities” within the tweets of step 2a.
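The two-step Twitter search can be illustrated with a minimal sketch. It assumes the tweets are already available as plain text strings and that matching is case-insensitive on whole words; the source states neither, and the function names `build_pattern` and `two_step_filter` are hypothetical, introduced only for illustration. The search terms are the ones listed in steps 2a and 2b.

```python
import re

# Search terms copied from steps 2a and 2b.
AI_TERMS = ["AI", "machine learning", "artificial intelligence"]
DISABILITY_TERMS = ["disabled people", "people with disabilities"]


def build_pattern(terms):
    """Compile one pattern that matches any of the terms.

    Assumption: case-insensitive, whole-word matching, so that "AI"
    does not match inside words such as "said" or "rain".
    """
    alternatives = "|".join(re.escape(term) for term in terms)
    return re.compile(rf"\b(?:{alternatives})\b", re.IGNORECASE)


def two_step_filter(tweets):
    """Return (step_2a_hits, step_2b_hits) for a list of tweet texts."""
    ai_pattern = build_pattern(AI_TERMS)
    disability_pattern = build_pattern(DISABILITY_TERMS)
    # Step 2a: keep tweets that mention any AI term.
    step_2a = [text for text in tweets if ai_pattern.search(text)]
    # Step 2b: within the step 2a hits, keep tweets that mention disabled people.
    step_2b = [text for text in step_2a if disability_pattern.search(text)]
    return step_2a, step_2b


if __name__ == "__main__":
    # Made-up example tweets, not data from the study.
    sample = [
        "AI can create barriers for people with disabilities.",
        "Machine learning beats humans at chess.",
        "Disabled people said the rain was heavy.",
    ]
    hits_2a, hits_2b = two_step_filter(sample)
    print(len(hits_2a), len(hits_2b))
```

Running step 2b only on the step 2a hits mirrors the nesting described above: a tweet that mentions disabled people but no AI term never reaches the second filter.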

2.3.3. Search Strategy 3: Academic Literature

- Strategy 3a: We searched the abstracts of EBSCO-ALL, Scopus, arXiv, IEEE Xplore, and ACM Guide to Computing Literature using the same search terms and the same download criteria as for the newspapers.

- Strategy 3b: We searched Scopus for the presence of the AI terms used for the newspapers in the academic journal title, together with the presence of the term “patient” and the 58 terms depicting disabled people used for the newspapers in the abstracts of the academic articles; we applied the same download criteria as for the newspapers.

2.4. Data Analysis

2.5. Trustworthiness Measures

2.6. Limitation

3. Results

3.1. Part 1: Classification of Disabled People and Focus of Coverage

3.2. Part 2: Tone of Coverage

3.2.1. Academic Literature

3.2.2. Newspapers

3.2.3. Twitter

3.3. Part 3: Role, Identity, and Stake Narrative

3.4. Part 4: Mentioning of “Social Good” or “for Good”

4. Discussion

4.1. Part 1: Classification of Disabled People and Focus of Coverage

4.2. Parts 2 and 3: Tone of Coverage and Role, Identity, and Stake Narrative

4.2.1. The Issue of Techno-Optimism

4.2.2. Linking Techno-Optimism to the Role, Identity, and Stake Narrative

4.2.3. Techno-Optimism, User Narrative, and the Issue of Governance

4.3. The Social Good Discourse

5. Conclusions and Future Research

Author Contributions

Funding

Conflicts of Interest

References

- Lillywhite, A.; Wolbring, G. Coverage of ethics within the artificial intelligence and machine learning academic literature: The case of disabled people. Assist. Technol. 2019, 1–7. [Google Scholar] [CrossRef] [PubMed]

- Feng, R.; Badgeley, M.; Mocco, J.; Oermann, E.K. Deep learning guided stroke management: A review of clinical applications. J. NeuroInterventional Surg. 2017, 10, 358–362. [Google Scholar] [CrossRef] [PubMed]

- Ilyasova, N.; Kupriyanov, A.; Paringer, R.; Kirsh, D. Particular Use of BIG DATA in Medical Diagnostic Tasks. Pattern Recognit. Image Anal. 2018, 28, 114–121. [Google Scholar] [CrossRef]

- André, Q.; Carmon, Z.; Wertenbroch, K.; Crum, A.; Frank, D.; Goldstein, W.; Huber, J.; Van Boven, L.; Weber, B.; Yang, H. Consumer Choice and Autonomy in the Age of Artificial Intelligence and Big Data. Cust. Needs Solutions 2017, 5, 28–37. [Google Scholar] [CrossRef]

- Deloria, R.; Lillywhite, A.; Villamil, V.; Wolbring, G. How research literature and media cover the role and image of disabled people in relation to artificial intelligence and neuro-research. Eubios J. Asian Int. Bioeth. 2019, 29, 169–182. [Google Scholar]

- Hassabis, D.; Kumaran, D.; Summerfield, C.; Botvinick, M. Neuroscience-Inspired Artificial Intelligence. Neuron 2017, 95, 245–258. [Google Scholar] [CrossRef]

- Bell, A.J. Levels and loops: The future of artificial intelligence and neuroscience. Philos. Trans. R. Soc. B Boil. Sci. 1999, 354, 2013–2020. [Google Scholar] [CrossRef][Green Version]

- Lee, J. Brain–computer interfaces and dualism: A problem of brain, mind, and body. AI Soc. 2014, 31, 29–40. [Google Scholar] [CrossRef]

- Cavazza, M.; Aranyi, G.; Charles, F. BCI Control of Heuristic Search Algorithms. Front. Aging Neurosci. 2017, 11, 225. [Google Scholar] [CrossRef]

- Buttazzo, G. Artificial consciousness: Utopia or real possibility? Computer 2001, 34, 24–30. [Google Scholar] [CrossRef]

- De Garis, H. Artificial Brains. Inf. Process. Med. Imaging 2007, 8, 159–174. [Google Scholar]

- Catherwood, P.; Finlay, D.; McLaughlin, J. Intelligent Subcutaneous Body Area Networks: Anticipating Implantable Devices. IEEE Technol. Soc. Mag. 2016, 35, 73–80. [Google Scholar] [CrossRef]

- Meeuws, M.; Pascoal, D.; Bermejo, I.; Artaso, M.; De Ceulaer, G.; Govaerts, P. Computer-assisted CI fitting: Is the learning capacity of the intelligent agent FOX beneficial for speech understanding? Cochlea- Implant. Int. 2017, 18, 198–206. [Google Scholar] [CrossRef] [PubMed]

- Wu, Y.-C.; Feng, J.-W. Development and Application of Artificial Neural Network. Wirel. Pers. Commun. 2017, 102, 1645–1656. [Google Scholar] [CrossRef]

- Garden, H.; Winickoff, D. Issues in Neurotechnology Governance. Available online: https://doi.org/10.1787/18151965 (accessed on 26 January 2020).

- Crowson, M.G.; Lin, V.; Chen, J.M.; Chan, T.C.Y. Machine Learning and Cochlear Implantation—A Structured Review of Opportunities and Challenges. Otol. Neurotol. 2020, 41, e36–e45. [Google Scholar] [CrossRef]

- Wangmo, T.; Lipps, M.; Kressig, R.W.; Ienca, M. Ethical concerns with the use of intelligent assistive technology: Findings from a qualitative study with professional stakeholders. BMC Med. Ethic. 2019, 20, 1–11. [Google Scholar] [CrossRef]

- Neto, J.S.D.O.; Silva, A.L.M.; Nakano, F.; Pérez-Álcazar, J.J.; Kofuji, S.T. When Wearable Computing Meets Smart Cities. In Smart Cities and Smart Spaces; IGI Global: Pennsylvania, PA, USA, 2019; pp. 1356–1376. [Google Scholar]

- Ding, J. Deciphering China’s AI Dream. Available online: https://www.fhi.ox.ac.uk/wp-content/uploads/Deciphering_Chinas_AI-Dream.pdf (accessed on 26 January 2020).

- Dutton, T. An Overview of National AI Strategies. Available online: https://medium.com/politics-ai/an-overview-of-national-ai-strategies-2a70ec6edfd (accessed on 26 January 2020).

- Floridi, L.; Cowls, J.; Beltrametti, M.; Chatila, R.; Chazerand, P.; Dignum, V.; Luetge, C.; Madelin, R.; Pagallo, U.; Rossi, F.; et al. AI4People—An Ethical Framework for a Good AI Society: Opportunities, Risks, Principles, and Recommendations. Minds Mach. 2018, 28, 689–707. [Google Scholar] [CrossRef]

- Asilomar and AI Conference Participants. Asilomar AI Principles Principles Developed in Conjunction with the 2017 Asilomar Conference. Available online: https://futureoflife.org/ai-principles/?cn-reloaded=1 (accessed on 26 January 2020).

- The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems, T.I.G.I. Ethically Aligned Design: A Vision for Prioritizing Human Well-Being with Autonomous and Intelligent Systems (A/IS). Available online: http://standards.ieee.org/develop/indconn/ec/ead_v2.pdf (accessed on 26 January 2020).

- Participants in the Forum on the Socially Responsible Development of AI. Montreal Declaration for a Responsible Development of Artificial Intelligence. Available online: https://www.montrealdeclaration-responsibleai.com/the-declaration (accessed on 26 January 2020).

- European Group on Ethics in Science and New Technologies. J. Med Ethic 1998, 24, 247. [CrossRef]

- University of Southern California USC Center for Artificial Intelligence in Society. USC Center for Artificial Intelligence in Society: Mission Statement. Available online: https://www.cais.usc.edu/wp-content/uploads/2017/05/USC-Center-for-Artificial-Intelligence-in-Society-Mission-Statement.pdf (accessed on 26 January 2020).

- Lehman-Wilzig, S.N. Frankenstein unbound: Towards a legal definition of artificial intelligence. Futures 1981, 13, 442–457. [Google Scholar] [CrossRef]

- Brundage, M.; Avin, S.; Clark, J.; Toner, H.; Eckersley, P.; Garfinkel, B.; Dafoe, A.; Scharre, P.; Zeitzoff, T.; Filar, B.; et al. The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation. Available online: https://img1.wsimg.com/blobby/go/3d82daa4-97fe-4096-9c6b-376b92c619de/downloads/1c6q2kc4v_50335.pdf (accessed on 26 January 2020).

- Smith, K.J. The AI community and the united nations: A missing global conversation and a closer look at social good. In Proceedings of the AAAI Spring Symposium—Technical Report, Palo Alto, CA, USA, 27–29 March 2017; pp. 95–100. [Google Scholar]

- Prasad, M. Back to the future: A framework for modelling altruistic intelligence explosions. In Proceedings of the AAAI Spring Symposium—Technical Report, Palo Alto, CA, USA, 27–29 March 2017; pp. 60–63. [Google Scholar]

- Cowls, J.; King, T.; Taddeo, M.; Floridi, L. Designing AI for Social Good: Seven Essential Factors. SSRN Electron. J. 2019, 1–21. [Google Scholar] [CrossRef]

- Varshney, K.R.; Mojsilovic, A. Open Platforms for Artificial Intelligence for Social Good: Common Patterns as a Pathway to True Impact. Available online: https://aiforsocialgood.github.io/icml2019/accepted/track1/pdfs/39_aisg_icml2019.pdf (accessed on 26 January 2020).

- Ortega, A.; Otero, M.; Steinberg, F.; Andrés, F. Technology Can Help to Right Technology’s Social Wrongs: Elements for a New Social Compact for Digitalisation. Available online: https://t20japan.org/policy-brief-technology-help-right-technology-social-wrongs/ (accessed on 26 January 2020).

- Whittlestone, J.; Nyrup, R.; Alexandrova, A.; Dihal, K.; Cave, S. Ethical and Societal Implications of Algorithms, Data, and Artificial Intelligence: A Roadmap for Research. Available online: https://www.nuffieldfoundation.org/sites/default/files/files/Ethical-and-Societal-Implications-of-Data-and-AI-report-Nuffield-Foundat.pdf (accessed on 26 January 2020).

- Clopath, C.; De Winne, R.; Emtiyaz Khan, M.; Schaul, T. Report from Dagstuhl Seminar 19082, AI for the Social Good. Available online: http://drops.dagstuhl.de/opus/volltexte/2019/10862/ (accessed on 26 January 2020).

- Hager, G.D.; Drobnis, A.; Fang, F.; Ghani, R.; Greenwald, A.; Lyons, T.; Parkes, D.C.; Schultz, J.; Saria, S.; Smith, S.F.; et al. Artificial Intelligence for Social Good. Available online: https://cra.org/ccc/wp-content/uploads/sites/2/2016/04/AI-for-Social-Good-Workshop-Report.pdf (accessed on 26 January 2020).

- Berendt, B. AI for the Common Good?! Pitfalls, challenges, and ethics pen-testing. Paladyn J. Behav. Robot. 2019, 10, 44–65. [Google Scholar] [CrossRef]

- Efremova, N.; West, D.; Zausaev, D. AI-Based Evaluation of the SDGs: The Case of Crop Detection With Earth Observation Data. SSRN Electron. J. 2019, 1–4. [Google Scholar] [CrossRef]

- Canadian Institute for Advanced Research (CIFAR). AI & Society. Available online: https://www.cifar.ca/ai/ai-society (accessed on 26 January 2020).

- Gasser, U.; Almeida, V. A Layered Model for AI Governance. IEEE Internet Comput. 2017, 21, 58–62. [Google Scholar] [CrossRef]

- Lauterbach, B.; Bonim, A. Artificial Intelligence: A Strategic Business and Governance Imperative. Available online: https://gecrisk.com/wp-content/uploads/2016/09/ALauterbach-ABonimeBlanc-Artificial-Intelligence-Governance-NACD-Sept-2016.pdf (accessed on 26 January 2020).

- Rahwan, I. Society-in-the-loop: Programming the algorithmic social contract. Ethic- Inf. Technol. 2017, 20, 5–14. [Google Scholar] [CrossRef]

- Boyd, M.; Wilson, N. Rapid developments in Artificial Intelligence: How might the New Zealand government respond? Policy Q. 2017, 13, 36–43. [Google Scholar] [CrossRef]

- Wang, W.; Siau, K. Artificial Intelligence: A Study on Governance, Policies, and Regulations. Available online: https://aisel.aisnet.org/cgi/viewcontent.cgi?article=1039&context=mwais2018 (accessed on 26 January 2020).

- Wilkinson, C.; Bultitude, K.; Dawson, E. “Oh Yes, Robots! People Like Robots; the Robot People Should do Something” Perspectives and Prospects in Public Engagement With Robotics. Sci. Commun. 2010, 33, 367–397. [Google Scholar] [CrossRef]

- Stahl, B.C.; Wright, D. Ethics and Privacy in AI and Big Data: Implementing Responsible Research and Innovation. IEEE Secur. Priv. Mag. 2018, 16, 26–33. [Google Scholar] [CrossRef]

- European Commission. Report from the High-Level Hearing ‘A European Union Strategy for Artificial Intelligence’. Available online: https://ec.europa.eu/epsc/sites/epsc/files/epsc_-_report_-_hearing_-_a_european_union_strategy_for_artificial_intelligence.pdf (accessed on 26 January 2020).

- McKelvey, F. Next Steps for Canadian AI Governance: Reflections on Student Symposium on AI and Human Rights. Available online: http://www.amo-oma.ca/en/2018/05/10/next-steps-for-canadian-ai-governance-reflections-on-student-symposium-on-ai-and-human-rights/ (accessed on 26 January 2020).

- Diep, L. Anticipatory Governance, Anticipatory Advocacy, Knowledge Brokering, and the State of Disabled People’s Rights Advocacy in Canada: Perspectives of Two Canadian Cross-Disability Rights Organizations. Master’s Thesis, University of Calgary, Calgary, AB, Cannada, September 2017. [Google Scholar]

- Fairclough, N. Analysing Discourse: Textual Analysis for Social Research. Available online: https://pdfs.semanticscholar.org/a3cd/84f4fd0d89eda5a15b9f9c7fa01394aca9d9.pdf (accessed on 26 January 2020).

- Gill, R. Discourse Analysis. In Qualitative Researching with Text, Image and Sound; Bauer, M.W., Gaskell, G., Eds.; A Practical Handbook; Sage Publications: London, UK, 2000; pp. 172–190. [Google Scholar]

- Schröter, M.; Taylor, C. Exploring silence and absence in discourse: Empirical approaches; Springer: New York, NY, USA, 2017; p. 395. [Google Scholar]

- Van Dijk, T.A. Discourse, knowledge, power and politics. In Critical Discourse Studies in Context and Cognition; John Benjamins Publishing Company: Amsterdam, The Netherlands, 2011; pp. 27–63. [Google Scholar]

- Longmore, P.K. A Note on Language and the Social Identity of Disabled People. Am. Behav. Sci. 1985, 28, 419–423. [Google Scholar] [CrossRef]

- Hutchinson, K.; Roberts, C.; Daly, M. Identity, impairment and disablement: Exploring the social processes impacting identity change in adults living with acquired neurological impairments. Disabil. Soc. 2017, 33, 175–196. [Google Scholar] [CrossRef]

- Fujimoto, Y.; Rentschler, R.; Le, H.; Edwards, D.; Härtel, C. Lessons Learned from Community Organizations: Inclusion of People with Disabilities and Others. Br. J. Manag. 2013, 25, 518–537. [Google Scholar] [CrossRef]

- Wolbring, G. Solutions follow perceptions: NBIC and the concept of health, medicine, disability and disease. Heal. Law Rev. 2004, 12, 41–47. [Google Scholar]

- Yumakulov, S.; Yergens, D.; Wolbring, G. Imagery of Disabled People within Social Robotics Research. Comput. Vis. 2012, 7621, 168–177. [Google Scholar]

- Zhang, L.; Haller, B. Consuming Image: How Mass Media Impact the Identity of People with Disabilities. Commun. Q. 2013, 61, 319–334. [Google Scholar] [CrossRef]

- Wolbring, G.; Diep, L. The Discussions around Precision Genetic Engineering: Role of and Impact on Disabled People. Laws 2016, 5, 37. [Google Scholar] [CrossRef]

- Barnes, C. Disability Studies: New or not so new directions? Disabil. Soc. 1999, 14, 577–580. [Google Scholar] [CrossRef]

- Titsworth, B.S. An Ideological Basis for Definition in Public Argument: A Case Study of the Individuals with Disabilities in Education Act. Argum. Advocacy 1999, 35, 171–184. [Google Scholar] [CrossRef]

- Maturo, A. The medicalization of education: ADHD, human enhancement and academic performance. Ital. J. Sociol. Educ. 2013, 5, 175–188. [Google Scholar]

- Varul, M.Z. Talcott Parsons, the Sick Role and Chronic Illness. Body Soc. 2010, 16, 72–94. [Google Scholar] [CrossRef]

- Wilson, R. The Discursive Construction of Elderly’s Needs—A Critical Discourse Analysis of Political Discussions in Sweden. Available online: http://www.diva-portal.org/smash/get/diva2:1339948/FULLTEXT01.pdf (accessed on 26 January 2020).

- Schulz, H.M. Reference group influence in consumer role rehearsal narratives. Qual. Mark. Res. Int. J. 2015, 18, 210–229. [Google Scholar] [CrossRef]

- Hogg, M.A.; Terry, D.J.; White, K.M. A Tale of Two Theories: A Critical Comparison of Identity Theory with Social Identity Theory. Soc. Psychol. Q. 1995, 58, 255. [Google Scholar] [CrossRef]

- Dirth, T.P.; Branscombe, N.R. Recognizing Ableism: A Social Identity Analysis of Disabled People Perceiving Discrimination as Illegitimate. J. Soc. Issues 2019, 75, 786–813. [Google Scholar] [CrossRef]

- Jiang, C.; Vitiello, C.; Axt, J.R.; Campbell, J.T.; Ratliff, K.A. An examination of ingroup preferences among people with multiple socially stigmatized identities. Self Identit. 2019, 1–18. [Google Scholar] [CrossRef]

- Burke, P.J.; Reitzes, N.C. An Identity Theory Approach to Commitment. Soc. Psychol. Q. 1991, 54, 239. [Google Scholar] [CrossRef]

- Crane, A.; Ruebottom, T. Stakeholder Theory and Social Identity: Rethinking Stakeholder Identification. J. Bus. Ethic 2011, 102, 77–87. [Google Scholar] [CrossRef]

- Mitchell, R.K.; Agle, B.R.; Wood, D.J. Toward a theory of stakeholder identification and salience: Defining the principle of who and what really counts. Acad. Manag. Rev. 1997, 22, 853–886. [Google Scholar] [CrossRef]

- Friedman, A.L.; Miles, S. Stakeholders: Theory and practice; Oxford University Press on Demand: Oxford, UK, 2006; p. 362. [Google Scholar]

- Clarkson, M. A risk based model of stakeholder theory. In Proceedings of the second Toronto conference on stakeholder theory; University of Toronto: Toronto, ON, Canada, 1994; pp. 18–19. [Google Scholar]

- Schiller, C.; Winters, M.; Hanson, H.M.; Ashe, M.C. A framework for stakeholder identification in concept mapping and health research: A novel process and its application to older adult mobility and the built environment. BMC Public Heal. 2013, 13, 428. [Google Scholar] [CrossRef]

- Inclezan, D.; Pradanos, L.I. Viewpoint: A Critical View on Smart Cities and AI. J. Artif. Intell. Res. 2017, 60, 681–686. [Google Scholar] [CrossRef]

- Einsiedel, E.F. Framing science and technology in the Canadian press. Public Underst. Sci. 1992, 1, 89–101. [Google Scholar] [CrossRef]

- Yudkowsky, E. Artificial Intelligence as a Positive and Negative Factor in Global Risk. Available online: https://www.researchgate.net/profile/James_Peters/post/Can_artificial_Intelligent_systems_replace_Human_brain/attachment/59d62a00c49f478072e9cbc4/AS:272471561834509@1441973690551/download/AIPosNegFactor.pdf (accessed on 26 January 2020).

- Nierling, L.; João-Maia, M.; Hennen, L.; Bratan, T.; Kuuk, P.; Cas, J.; Capari, L.; Krieger-Lamina, J.; Mordini, E.; Wolbring, G. Assistive technologies for people with disabilities Part III: Perspectives on assistive technologies. Available online: http://www.europarl.europa.eu/RegData/etudes/IDAN/2018/603218/EPRS_IDA(2018)603218(ANN3)_EN.pdf (accessed on 26 January 2020).

- Wolbring, G.; Diep, L.; Jotterand, F.; Dubljevic, V. Cognitive/Neuroenhancement Through an Ability Studies Lens. In Cognitive Enhancement; Oxford University Press (OUP): Oxford, UK, 2016; pp. 57–75. [Google Scholar]

- Diep, L.; Wolbring, G. Who Needs to Fit in? Who Gets to Stand out? Communication Technologies Including Brain-Machine Interfaces Revealed from the Perspectives of Special Education School Teachers Through an Ableism Lens. Educ. Sci. 2013, 3, 30–49. [Google Scholar] [CrossRef]

- Diep, L.; Wolbring, G. Perceptions of Brain-Machine Interface Technology among Mothers of Disabled Children. Disabil. Stud. Q. 2015, 35, 35. [Google Scholar] [CrossRef]

- Garlington, S.B.; Collins, M.E.; Bossaller, M.R.D. An Ethical Foundation for Social Good: Virtue Theory and Solidarity. Res. Soc. Work. Pr. 2019, 30, 196–204. [Google Scholar] [CrossRef]

- Singell, L.; Engell, J.; Dangerfield, A. Saving Higher Education in the Age of Money. Academe 2006, 92, 67. [Google Scholar] [CrossRef]

- Gerrard, H. Skills as Trope, Skills as Target: Universities and the Uncertain Future. N. Z. J. Educ. Stud. 2017, 52, 363–370. [Google Scholar] [CrossRef]

- Blanco, P.T. Volver a donde nunca se estuvo. Pacto social, felicidad pública y educación en Chile (c.1810-c.2010). Araucaria 2017, 19, 323–344. [Google Scholar] [CrossRef]

- Wahid, N.A.; Alias, N.H.; Takara, K.; Ariffin, S.K. Water as a business: Should water tariff remain? Descriptive analyses on Malaysian households’ socio-economic background. Int. J. Econ. Res. 2017, 14, 367–375. [Google Scholar]

- Walker, G. Health as an Intermediate End and Primary Social Good. Public Heal. Ethic 2017, 11, 6–19. [Google Scholar] [CrossRef]

- Riches, G. First World Hunger: Food Security and Welfare Politics; Springer: New York, NY, USA, 1997; p. 200. [Google Scholar]

- Morrison, A. Contributive justice: Social class and graduate employment in the UK. J. Educ. Work. 2019, 32, 335–346. [Google Scholar] [CrossRef]

- Daniels, N. Equity of Access to Health Care: Some Conceptual and Ethical Issues. Milbank Mem. Fund Quarterly. Heal. Soc. 1982, 60, 51. [Google Scholar] [CrossRef]

- Castiglioni, C.; Lozza, E.; Bonanomi, A. The Common Good Provision Scale (CGP): A Tool for Assessing People’s Orientation towards Economic and Social Sustainability. Sustainability 2019, 11, 370. [Google Scholar] [CrossRef]

- Rioux, M.; Zubrow, E. Social disability and the public good. In The Market or The Public Domain; Routledge: Abington on the Thames, UK, 2005; pp. 162–186. [Google Scholar]

- Bogomolov, A.; Lepri, B.; Staiano, J.; Letouzé, E.; Oliver, N.; Pianesi, F.; Pentland, A. Moves on the Street: Classifying Crime Hotspots Using Aggregated Anonymized Data on People Dynamics. Big Data 2015, 3, 148–158. [Google Scholar] [CrossRef]

- Bryant, C.; Pham, A.T.; Remash, H.; Remash, M.; Schoenle, N.; Zimmerman, J.; Albright, S.D.; Rebelsky, S.A.; Chen, Y.; Chen, Z.; et al. A Middle-School Camp Emphasizing Data Science and Computing for Social Good. In Proceedings of the 50th ACM Technical Symposium on Computer Science Education—SIGCSE ’19; Association for Computing Machinery (ACM), Minneapolis, MN, USA, February 2019; pp. 358–364. [Google Scholar]

- Iyer, L.S.; Dissanayake, I.; Bedeley, R.T. “RISE IT for Social Good”—An experimental investigation of context to improve programming skills. In Proceedings of the 2017 ACM SIGMIS Conference on Computers and People Research, Bangalore, India, 21–23 June 2017; pp. 49–52. [Google Scholar]

- Chen, Y.; Rebelsky, S.A.; Chen, Z.; Gumidyala, S.; Koures, A.; Lee, S.; Msekela, J.; Remash, H.; Schoenle, N.; Albright, S.D. A Middle-School Code Camp Emphasizing Digital Humanities. In Proceedings of the SIGCSE ’19: The 50th ACM Technical Symposium on Computer Science Education, Minneapolis, MN, USA, 27 February–2 March 2019. [Google Scholar]

- Fisher, D.H.; Cameron, J.; Clegg, T.; August, S. Integrating Social Good into CS Education. In Proceedings of the 49th ACM Technical Symposium on Computer Science Education, SIGCSE 2018, Baltimore, MD, USA, 21–24 February 2018. [Google Scholar]

- Goldweber, M. Strategies for Adopting CSG-Ed In CS 1. In Proceedings of the 2018 Research on Equity and Sustained Participation in Engineering, Computing, and Technology (RESPECT), Baltimore, MD, USA, 21–21 February 2018; pp. 1–2. [Google Scholar]

- Shi, Z.R.; Wang, C.; Fang, F. Artificial Intelligence for Social Good: A Survey. Available online: https://arxiv.org/pdf/2001.01818.pdf (accessed on 26 January 2020).

- Musikanski, L.; Rakova, B.; Bradbury, J.; Phillips, R.; Manson, M. Artificial Intelligence and Community Well-being: A Proposal for an Emerging Area of Research. Int. J. Community Well-Being 2020, 1–17. [Google Scholar] [CrossRef]

- United Nations. Convention on the Rights of Persons with Disabilities (CRPD). Available online: https://www.un.org/development/desa/disabilities/convention-on-the-rights-of-persons-with-disabilities.html (accessed on 26 January 2020).

- Thompson, W.S. Eugenics and the Social Good. Soc. Forces 1925, 3, 414–419. [Google Scholar] [CrossRef]

- Graby, S. Access to work or liberation from work? Disabled people, autonomy, and post-work politics. Can. J. Disabil. Stud. 2015, 4, 132. [Google Scholar] [CrossRef]

- Arksey, H.; O’Malley, L. Scoping studies: Towards a methodological framework. Int. J. Soc. Res. Methodol. 2005, 8, 19–32. [Google Scholar] [CrossRef]

- Anderson, S.; Allen, P.; Peckham, S.; Goodwin, N. Asking the right questions: Scoping studies in the commissioning of research on the organisation and delivery of health services. Heal. Res. Policy Syst. 2008, 6, 7. [Google Scholar] [CrossRef] [PubMed]

- News Media Canada. FAQ. Available online: https://nmc-mic.ca/about-newspapers/faq/ (accessed on 26 January 2020).

- News Media Canada. Snapshot 2016 Daily Newspapers. Available online: https://nmc-mic.ca/wp-content/uploads/2015/02/Snapshot-Fact-Sheet-2016-for-Daily-Newspapers-3.pdf (accessed on 26 January 2020).

- Ye, S.; Wu, S.F. Measuring Message Propagation and Social Influence on Twitter.com. In Computer Vision; Springer Science and Business Media LLC: Berlin/Heidelberg, Germany, 2010; Volume 6430, pp. 216–231. [Google Scholar]

- Kim, Y.; Chandler, J.D. How social community and social publishing influence new product lauch: The case of twitter during the playstation 4 and XBOX One launches. J. Mark. Theory Pract. 2018, 26, 144–157. [Google Scholar] [CrossRef]

- Zannettou, S.; Caulfield, T.; De Cristofaro, E.; Sirivianos, M.; Stringhini, G.; Blackburn, J. Disinformation Warfare: Understanding State-Sponsored Trolls on Twitter and Their Influence on the Web. Available online: https://arxiv.org/abs/1801.09288 (accessed on 26 February 2020).

- Young, K.; Ashby, D.; Boaz, A.; Grayson, L. Social Science and the Evidence-based Policy Movement. Soc. Policy Soc. 2002, 1, 215–224. [Google Scholar] [CrossRef]

- Bowen, S.; Zwi, A. Pathways to “Evidence-Informed” Policy and Practice: A Framework for Action. PLoS Med. 2005, 2, e166. [Google Scholar] [CrossRef]

- Head, B. Three Lenses of Evidence-Based Policy. Aust. J. Public Adm. 2008, 67, 1–11. [Google Scholar] [CrossRef]

- Davis, K.; Drey, N.; Gould, D.; Drey, N. What are scoping studies? A review of the nursing literature. Int. J. Nurs. Stud. 2009, 46, 1386–1400. [Google Scholar] [CrossRef]

- Burwell, S.; Sample, M.; Racine, E. Ethical aspects of brain computer interfaces: A scoping review. BMC Med. Ethic 2017, 18, 60. [Google Scholar] [CrossRef]

- Hsieh, H.-F.; Shannon, S.E. Three Approaches to Qualitative Content Analysis. Qual. Heal. Res. 2005, 15, 1277–1288. [Google Scholar] [CrossRef]

- Edling, S.; Simmie, G.M. Democracy and emancipation in teacher education: A summative content analysis of teacher educators’ democratic assignment expressed in policies for Teacher Education in Sweden and Ireland between 2000-2010. Citizenship Soc. Econ. Educ. 2017, 17, 20–34. [Google Scholar] [CrossRef]

- Ahuvia, A. Traditional, Interpretive, and Reception Based Content Analyses: Improving the Ability of Content Analysis to Address Issues of Pragmatic and Theoretical Concern. Soc. Indic. Res. 2001, 54, 139–172. [Google Scholar] [CrossRef]

- Cullinane, K.; Toy, N. Identifying influential attributes in freight route/mode choice decisions: A content analysis. Transp. Res. Part E: Logist. Transp. Rev. 2000, 36, 41–53. [Google Scholar] [CrossRef]

- Downe-Wamboldt, B. Content analysis: Method, applications, and issues. Heal. Care Women Int. 1992, 13, 313–321. [Google Scholar] [CrossRef] [PubMed]

- Woodrum, E. “Mainstreaming” Content Analysis in Social Science: Methodological Advantages, Obstacles, and Solutions. Soc. Sci. Res. 1984, 13, 1. [Google Scholar] [CrossRef]

- Clarke, V.; Braun, V. Thematic Analysis. In Encyclopedia of Critical Psychology; Springer Science and Business Media LLC: Berlin/Heidelberg, Germany, 2014; pp. 1947–1952. [Google Scholar]

- Baxter, P.; Jack, S. Qualitative case study methodology: Study design and implementation for novice researchers. Qual. Rep 2008, 13, 544–559. [Google Scholar]

- Lincoln, Y.S.; Guba, E.G.; Pilotta, J.J. Naturalistic inquiry. Int. J. Intercult. Relations 1985, 9, 438–439. [Google Scholar] [CrossRef]

- Shenton, A. Strategies for ensuring trustworthiness in qualitative research projects. Educ. Inf. 2004, 22, 63–75. [Google Scholar] [CrossRef]

- Miesenberger, K.; Ossmann, R.; Archambault, D.; Searle, G.; Holzinger, A. More Than Just a Game: Accessibility in Computer Games. Available online: https://www.researchgate.net/publication/221217630_More_Than_Just_a_Game_Accessibility_in_Computer_Games (accessed on 26 February 2020).

- Dengler, S.; Awad, A.; Dressler, F. Sensor/Actuator Networks in Smart Homes for Supporting Elderly and Handicapped People. In Proceedings of the 21st International Conference on Advanced Information Networking and Applications Workshops (AINAW’07), Niagara Falls, ON, Canada, 21–23 May 2007. [Google Scholar]

- Mintz, J.; Gyori, M.; Aagaard, M. Touching the Future Technology for Autism?: Lessons from the HANDS Project; IOS Press: Amsterdam, The Netherlands, 2012; p. 135. [Google Scholar]

- Agangiba, M.A.; Nketiah, E.B.; Agangiba, W.A. Web Accessibility for the Visually Impaired: A Case of Higher Education Institutions’ Websites in Ghana. Form. Asp. Compon. Softw. 2017, 10473, 147–153. [Google Scholar]

- Brewer, J. Exploring Paths to a More Accessible Digital Future. In Proceedings of the ASSETS ’18: 20th International ACM SIGACCESS Conference on Computers and Accessibility, Galway, Ireland, 22–24 October 2018. [Google Scholar]

- Venter, A.; Renzelberg, G.; Homann, J.; Bruhn, L. Which Technology Do We Want? Ethical Considerations about Technical Aids and Assisting Technology. Comput. Vis. 2008, 5105, 1325–1331. [Google Scholar]

- Lasecki, W.S. Crowdsourcing for deployable intelligent systems. In Proceedings of the Twenty-Seventh AAAI Conference on Artificial Intelligence, Bellvue, WA, USA, 14–18 July 2013. [Google Scholar]

- Bruhn, L.; Homann, J.; Renzelberg, G. Participation in Development of Computers Helping People. Comput. Vis. 2006, 4061, 532–535. [Google Scholar]

- Braffort, A. Research on Computer Science and Sign Language: Ethical Aspects. Comput. Vis. 2002, 2298, 1–8. [Google Scholar]

- Adams, K.; Encarnação, P.; Rios-Rincón, A.M.; Cook, A. Will artificial intelligence be a blessing or concern in assistive robots for play? J. Hum. Growth Dev. 2018, 28, 213–218. [Google Scholar] [CrossRef]

- Kirka, D. Talking Gloves, Tactile Windows: AI Helps the Disabled. Available online: https://apnews.com/f67a9be7cc77406ab84baf20f4d739f3/Talking-gloves,-tactile-windows:-new-tech-helps-the-disabled (accessed on 25 February 2020).

- Stonehouse, D. The cyborg evolution: Kevin Warwick’s experiments with implanting chips to talk to computers isn’t as far-fetched as one might think. The Ottawa Citizen, 28 March 2002; F2. [Google Scholar]

- Dingman, S. Even Stephen Hawking fears the rise of machines. The Globe and Mail, 3 December 2014. [Google Scholar]

- Rivers, H. Automation: ’very big threat and necessary evil’. Tillsonburg News, 4 July 2018; A6. [Google Scholar]

- Michigan Medicine. “This technology has great potential to help people with disabilities, but it also has potential for misuse and unintended consequences.” Learn more about why ethics are important as brain implants and artificial intelligence merge. Tweet, 2017. Available online: https://twitter.com/umichmedicine/status/932601930899165186 (accessed on 25 February 2020).

- grumpybrummie. Totally unethical #uber. People with disabilities next? Plus AI should be used for the greater good. Imagine the outcry if someone patented AI that spotted potential suicides and patented it! The #bbc picked this up and did not note the significance. Tweet, 2018. Available online: https://twitter.com/grumpybrummie/status/1006507671141388288 (accessed on 25 February 2020).

- Celestino Güemes @tguemes. This is great. I expect they include some money on ethical aspects of AI, to fight “artificial bias amplification” that impact all of us. “Microsoft commits $25M over 5 years for new ‘AI for Accessibility’ initiative to help people with disabilities”. Tweet, 2018. Available online: https://twitter.com/tguemes/status/993724895405203456 (accessed on 25 February 2020).

- MyOneWomanShow. @DavidLepofsky: AI can create barriers for people with disabilities. Says we need to take action in order to mitigate that risk. #AIsocialgood #ipOZaichallenge @lawyersdailyca. Tweet, 2018.

- atlaak. Microsoft will use AI to help people with disabilities deal with challenges in three key areas: Employment, human connection and modern life. Tweet, 2018. Available online: https://twitter.com/MyOneWomanShow/status/959523216573304833 (accessed on 25 February 2020).

- MrTopple. Why have you done this again to @NicolaCJeffery, @TwitterSupport? We’ve been here before with your AI/algorithms intentionally targeting disabled people. And you’ve done it again. You know this apparent discrimination is unacceptable yes? #DisabilityRights. Tweet, 2018. Available online: https://twitter.com/MrTopple/status/983473326797574145 (accessed on 25 February 2020).

- Jenny_L_Davis. “AI performs tasks according to existing formations in the social order, amplifying implicit biases, and ignoring disabled people or leaving them exposed”. Tweet, 2018. Available online: https://twitter.com/Jenny_L_Davis/status/964619430268354560 (accessed on 25 February 2020).

- PeaceGeeks. There is increasing evidence that women, ethnic minority, people with disabilities and LGBTQ experience discrimination by biased algorithms. How do we make sure that artificial intelligence doesn’t further marginalize these groups? Tweet, 2017. Available online: https://twitter.com/PeaceGeeks/status/1020023012563746816 (accessed on 25 February 2020).

- SFdirewolf. Content warning: Suicide, suicidal ideation MT Facebook’s AI suicide prevention tool raises concerns for people with mental. Tweet, 2017. Available online: https://twitter.com/SFdirewolf/status/940235227682701313 (accessed on 25 February 2020).

- jont. More bad news for disabled people, I want to know how @hirevue and personality test tools like @saberruk avoid AI driven discrimination. Tweet, 2017. Available online: https://twitter.com/jont/status/905392476952944641 (accessed on 25 February 2020).

- karineb. Real problematic: #AI ranks a job applicant with 25,000 criteria. Do you smile? do you make an eye contact? Good. What if not? what chance does this process give to people who are not behaving like the mainstream? of people with disabilities? Real problematic. Tweet, 2018. Available online: https://twitter.com/karineb/status/991644766071861248 (accessed on 25 February 2020).

- Bühler, C. Design for All—From Idea to Practise. Comput. Vis. 2008, 5105, 106–113.

- Hubert, M.; Bühler, C.; Schmitz, W. Implementing UNCRPD—Strategies of Accessibility Promotion and Assistive Technology Transfer in North Rhine-Westphalia. Comput. Vis. 2016, 9758, 89–92.

- Constantinou, V.; Kosmas, P.; Parmaxi, A.; Ioannou, A.; Klironomos, I.; Antona, M.; Stephanidis, C.; Zaphiris, P. Towards the Use of Social Computing for Social Inclusion: An Overview of the Literature. Form. Asp. Compon. Softw. 2018, 10924 LNCS, 376–387.

- Treviranus, J.; Clark, C.; Mitchell, J.; Vanderheiden, G.C. Prosperity4All—Designing a Multi-Stakeholder Network for Economic Inclusion. Univers. Access Hum.-Comput. Interact. Aging Assist. Environ. 2014, 8516, 453–461.

- Gjøsæter, T.; Radianti, J.; Chen, W. Universal Design of ICT for Emergency Management. Lect. Notes Comput. Sci. 2018, 10907 LNCS, 63–74.

- Dalmau, F.V.; Redondo, E.; Fonseca, D. E-Learning and Serious Games. Form. Asp. Compon. Softw. 2015, 9192, 632–643.

- juttatrevira. Replying to @juttatrevira @melaniejoly @bigideaproj @CQualtro training AI to serve “outliers”, including people with disabilities results in better prediction, planning, risk aversion & design. Tweet, 2017. Available online: https://twitter.com/juttatrevira/status/867387763808718852 (accessed on 25 February 2020).

- SmartCitiesL. “People with #disabilities must be involved in the design & development of #SmartCities and #AI because we will build-in #accessibility and human-centered innovation from the beginning in a way that businesses and non-disabled techies could never think of otherwise,” says @DLBLLC. Tweet, 2018. Available online: https://twitter.com/juttatrevira/status/867387763808718852 (accessed on 25 February 2020).

- SPMazrui. @HonTonyCoelho urges business to include people with disabilities in the development of AI. Applauds companies like Apple for including people with disabilities and making products better for everyone! @AppleNews @USBLN. Tweet, 2018. Available online: https://twitter.com/SPMazrui/status/1016716434674552832 (accessed on 25 February 2020).

- newinquiry. “There are innovations for disabled people being made in the field of accessible design and medical technologies, such as AI detecting autism (again). However, in these narratives, technologies come first – as ‘helping people with disabilities’”. Tweet, 2018. Available online: https://twitter.com/newinquiry/status/961974216643022848 (accessed on 25 February 2020).

- zagbah. AI may help improve the lives of Disabled people who can afford to access the technology. Tech ain’t free & Dis folk are typically poor. Tweet, 25 February 2017. Available online: https://twitter.com/zagbah/status/892180529587642369 (accessed on 25 February 2020).

- The Calgary Sun. Google to give $25 million to fund humane AI projects. The Calgary Sun, 30 October 2018; A47.

- Canada NewsWire. UAE Launches ‘AI and Robotics Award for Good’ Competition to Transform Use of Robotics and Artificial Intelligence. Available online: https://www.prnewswire.com/news-releases/uae-launches-ai-and-robotics-award-for-good-competition-to-transform-use-of-robotics-and-artificial-intelligence-291430031.html (accessed on 25 February 2020).

- Mulley, A.; Gelijns, A. The patient’s stake in the changing health care economy. In Technology and Health Care in an Era of Limits; National Academy Press: Washington, DC, USA, 1992; pp. 153–163.

- Eschler, J.; O’Leary, K.; Kendall, L.; Ralston, J.D.; Pratt, W. Systematic Inquiry for Design of Health Care Information Systems: An Example of Elicitation of the Patient Stakeholder Perspective. In Proceedings of the 2015 48th Hawaii International Conference on System Sciences, Kauai, HI, USA, 5–8 January 2015.

- Reuters. Amazon scraps secret AI recruiting tool that showed bias against women. Available online: https://www.reuters.com/article/us-amazon-com-jobs-automation-insight/amazon-scraps-secret-ai-recruiting-tool-that-showed-bias-against-women-idUSKCN1MK08G (accessed on 26 January 2020).

- Wolbring, G.; Mackay, R.; Rybchinski, T.; Noga, J. Disabled People and the Post-2015 Development Goal Agenda through a Disability Studies Lens. Sustainability 2013, 5, 4152–4182.

- Participants of the UN Department of Economic and Social Affairs (UNDESA) and UNICEF organized Online Consultation, 8 March–5 April. Disability inclusive development agenda towards 2015 & beyond. Available online: http://www.un.org/en/development/desa/news/social/disability-inclusive-development.html (accessed on 26 January 2020).

- Ackerman, E. My Fight With a Sidewalk Robot. Available online: https://www.citylab.com/perspective/2019/11/autonomous-technology-ai-robot-delivery-disability-rights/602209/ (accessed on 26 January 2020).

- Wolbring, G. Ecohealth Through an Ability Studies and Disability Studies Lens. In Understanding Emerging Epidemics: Social and Political Approaches; Emerald: Bingley, West Yorkshire, England, 2013; pp. 91–107.

- Fenney, D. Ableism and Disablism in the UK Environmental Movement. Environ. Values 2017, 26, 503–522.

- Yuste, R.; Goering, S.; Arcas, B.A.Y.; Bi, G.-Q.; Carmena, J.M.; Carter, A.; Fins, J.J.; Friesen, P.; Gallant, J.; Huggins, J.; et al. Four ethical priorities for neurotechnologies and AI. Nature News 2017, 551, 159–163.

- Government of Italy. White Paper: Artificial Intelligence at the service of the citizen. Available online: https://ai-white-paper.readthedocs.io/en/latest/doc/capitolo_3_sfida_7.html (accessed on 26 January 2020).

- Executive Office of the President National Science and Technology Council Committee on Technology. Preparing for the future of artificial intelligence. Available online: https://obamawhitehouse.archives.gov/sites/default/files/whitehouse_files/microsites/ostp/NSTC/preparing_for_the_future_of_ai.pdf (accessed on 26 January 2020).

- Government of France. Artificial Intelligence: “Making France a Leader”. Available online: https://www.gouvernement.fr/en/artificial-intelligence-making-france-a-leader (accessed on 26 January 2020).

- Villani, C. For a meaningful artificial intelligence towards a French and European strategy. Available online: https://www.aiforhumanity.fr/pdfs/MissionVillani_Report_ENG-VF.pdf (accessed on 26 January 2020).

- European Commission. Artificial Intelligence for Europe. Available online: http://ec.europa.eu/newsroom/dae/document.cfm?doc_id=51625 (accessed on 26 January 2020).

- House of Lords Select Committee on Artificial Intelligence. AI in the UK: Ready, willing and able? Available online: https://publications.parliament.uk/pa/ld201719/ldselect/ldai/100/100.pdf (accessed on 26 January 2020).

- AI Forum New Zealand. Shaping a Future New Zealand An Analysis of the Potential Impact and Opportunity of Artificial Intelligence on New Zealand’s Society and Economy. Available online: https://aiforum.org.nz/wp-content/uploads/2018/07/AI-Report-2018_web-version.pdf (accessed on 26 January 2020).

- Executive Office of the President (United States). Artificial intelligence, automation, and the economy. Available online: https://obamawhitehouse.archives.gov/sites/whitehouse.gov/files/documents/Artificial-Intelligence-Automation-Economy.PDF (accessed on 26 January 2020).

- Wolbring, G. Employment, Disabled People and Robots: What Is the Narrative in the Academic Literature and Canadian Newspapers? Societies 2016, 6, 15.

- Christopherson, S. The Fortress City: Privatized Spaces, Consumer Citizenship. Post-Fordism 2008, 409–427.

- Kelly, C. Wrestling with Group Identity: Disability Activism and Direct Funding. Disabil. Stud. Q. 2010, 30, 30.

- Canada Research Coordinating Committee. Canada Research Coordinating Committee Consultation - Key Priorities. Available online: http://www.sshrc-crsh.gc.ca/CRCC-CCRC/priorities-priorites-eng.aspx#edi (accessed on 26 January 2020).

- Canadian Institute for Advanced Research (CIFAR). Pan-Canadian Artificial Intelligence Strategy. Available online: https://www.cifar.ca/ai/pan-canadian-artificial-intelligence-strategy (accessed on 26 January 2020).

- United Nations. Transforming our World: The 2030 Agenda for Sustainable Development. Available online: https://stg-wedocs.unep.org/bitstream/handle/20.500.11822/11125/unep_swio_sm1_inf7_sdg.pdf?sequence=1 (accessed on 25 February 2020).

- Wolbring, G.; Djebrouni, M.; Johnson, M.; Diep, L.; Guzman, G. The Utility of the “Community Scholar” Identity from the Perspective of Students from one Community Rehabilitation and Disability Studies Program. Interdiscip. Perspect. Equal. Divers. 2018, 4, 1–22.

- Hutcheon, E.J.; Wolbring, G. Voices of “disabled” post secondary students: Examining higher education “disability” policy using an ableism lens. J. Divers. High. Educ. 2012, 5, 39–49.

| 1 | We acknowledge that there is an ongoing discussion about whether one should use people-first language (“people with disabilities”) rather than the phrase “disabled people”. We use both phrasings in our search strategies so as not to miss articles, but we use “disabled people” rather than people-first language in our own writing. |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Lillywhite, A.; Wolbring, G. Coverage of Artificial Intelligence and Machine Learning within Academic Literature, Canadian Newspapers, and Twitter Tweets: The Case of Disabled People. Societies 2020, 10, 23. https://doi.org/10.3390/soc10010023
