A Risk-Oriented and Explainable Hierarchical AI Framework for Chronic Kidney Disease Classification

Abstract

1. Introduction

- A hierarchical CKD classification framework is developed and validated to separate advanced disease detection from severity staging and individualized risk assessment, closely aligning the modeling strategy with real-world clinical workflows.

- A continuous risk scoring mechanism is designed for individuals without confirmed CKD, leveraging routinely collected laboratory profiles to enable early risk stratification and targeted clinical follow-up.

- A real-world clinical laboratory dataset from kidney clinics in Saudi Arabia is curated and analyzed, addressing the scarcity of region-specific evidence in CKD prediction research.

- Feature selection and SHAP-based explainability are integrated within the predictive pipeline to deliver transparent, interpretable, and clinically meaningful decision support.

2. Related Work

3. Materials and Methods

3.1. Proposed System Overview

3.2. Dataset

3.3. Preprocessing

3.3.1. Handling Features with High Missing Values

3.3.2. Handling Missing Values

3.3.3. Data Scaling

3.3.4. Data Splitting

3.4. Feature Selection

3.5. Model Development

3.5.1. Training Strategy

3.5.2. Implementation Details and Hyperparameters

3.5.3. Hierarchical Classification

3.5.4. Threshold Tuning for Binary Classification

3.5.5. Model Explainability

4. Hierarchical Classifiers Results

External Validation on a Public Dataset

5. Discussion of Hierarchical Classifiers Results

6. Proposed CKD Risk Assessment System

6.1. System Overview

6.2. Input Handling and System Workflow

6.3. Unified Inference Preprocessing (Deployment Readiness)

6.4. Hierarchical Classification Framework

6.4.1. Binary Head Model Selection

6.4.2. Binary Head Architecture and Decision Logic

6.4.3. Stage Head Architecture

6.5. Risk Score Computation for Non-CKD Predictions

6.6. CKD-like Pattern Detection

6.6.1. Data-Driven Reference Ranges

6.6.2. Cutpoint-Based Abnormality Detection

6.7. Explainability Framework

6.7.1. Global Explainability (Model-Level)

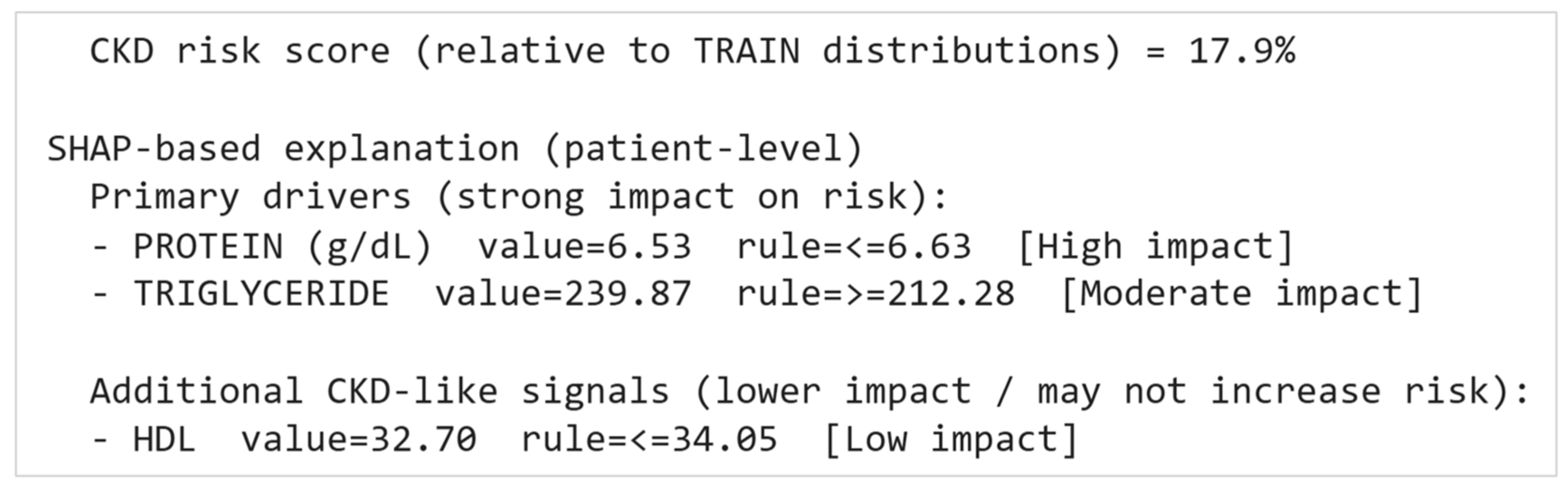

6.7.2. Local Patient-Level Explainability (Case-Level)

- PROTEIN (g/dL) with its value and cutpoint rule, flagged as High impact

- TRIGLYCERIDE with its value and cutpoint rule, flagged as Moderate impact

6.7.3. Controlled Explanation Policy

6.8. Deployment and Reproducibility

7. Conclusions

8. Limitations and Future Work

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Feature | Non-CKD Range (Q5–Q95) | CKD-Like Cutpoint |
|---|---|---|
| ALB (g/dL) | [3.1557, 4.7] | <=3.1557 |
| ALP (U/L) | [47, 110.332] | >=110.332 |
| ALT (U/L) | [9.2, 57.1] | <=9.2 |
| AST (U/L) | [11.1, 42.0702] | <=11.1 |
| Age | [24, 73.5] | >=73.5 |
| BUN (mg/dL) | [7, 23.4899] | >=23.7 |
| CA (mg/dL) | [6.94482, 12.3593] | <=6.94482 |
| CHOL (mg/dL) | [120, 254.45] | <=120 |
| CL | [97.0513, 107] | >=107 |
| CREA (mg/dL) | [0.545, 1.275] | >=1.63 |
| GLU GLUCOSE (mg/dL) | [74.3531, 232.235] | >=232.235 |
| HCT | [32, 48.75] | <=32 |
| HDL | [33.8108, 70.05] | <=33.8108 |
| HGB | [10.1, 16.2] | <=10.1 |
| K (MMOL/L) | [3.68, 4.885] | >=4.885 |
| LDL | [53.9651, 170.228] | <=53.9651 |
| MCH | [22.6, 31.45] | >=31.45 |
| MCHC | [30.6, 34.8] | >=34.8 |
| MCV | [71.45, 95.35] | <=71.45 |
| Mg (mg/dL) | [1.67, 2.13] | >=2.13 |
| NA (MMOL/L) | [132, 142] | >=142 |
| P (mg/dL) | [2.675, 4.3] | >=4.3 |
| PLATEL | [165, 443.5] | <=165 |
| PROTEIN (g/dL) | [6.65263, 8.00493] | <=6.65263 |
| RBC | [3.79, 5.815] | <=3.79 |
| RBCUrine | [0.1, 10.1429] | >=10.1429 |
| RDW-CV | [12.2, 17.05] | >=17.05 |
| TBIL (mg/dL) | [0.0606559, 0.902033] | <=0.0606559 |
| TRIGLYCERIDE | [54.65, 219.256] | >=219.256 |
| U.A (mg/dL) | [3.2, 7.65559] | >=7.65559 |
| WBC | [3.78, 10.53] | >=10.53 |
| WBCUrine | [0, 42.1791] | >=42.1791 |
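The cutpoint rules in the table above can be read as simple one-sided comparisons. The following is a minimal sketch of how such rules might be applied to a single laboratory profile; it copies five rows from the table, and the names `CUTPOINTS` and `flag_ckd_like` are hypothetical illustrations, not the authors' implementation.

```python
import operator

# A subset of the Appendix A cutpoints (feature -> (direction, cutpoint)).
# Only five rows are copied here to keep the sketch short.
CUTPOINTS = {
    "CREA (mg/dL)": (">=", 1.63),
    "BUN (mg/dL)": (">=", 23.7),
    "HGB": ("<=", 10.1),
    "HCT": ("<=", 32.0),
    "K (MMOL/L)": (">=", 4.885),
}

_OPS = {">=": operator.ge, "<=": operator.le}


def flag_ckd_like(profile):
    """Return the features in `profile` that cross their CKD-like cutpoint.

    `profile` maps feature names to numeric lab values; features that are
    missing from the profile (or set to None) are skipped, not flagged.
    """
    flags = []
    for feature, (direction, cutpoint) in CUTPOINTS.items():
        value = profile.get(feature)
        if value is None:
            continue
        if _OPS[direction](value, cutpoint):
            flags.append((feature, value, f"{direction}{cutpoint}"))
    return flags


# Hypothetical patient profile, for illustration only.
example_profile = {"CREA (mg/dL)": 1.8, "BUN (mg/dL)": 20.0, "HGB": 9.5, "HCT": 40.0}
example_flags = flag_ckd_like(example_profile)
```

Keeping the direction of each rule next to its cutpoint makes it explicit whether a high or a low value is treated as CKD-like for that feature.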

| Category | # of Features | Features |
|---|---|---|
| Basic Information | 3 | ID, Gender, Age |
| Clinical Conditions | 5 | Hypertension, Systolic Blood Pressure (Systolic_BP), Diastolic Blood Pressure (Diastolic_BP), Diabetes Mellitus, Coronary Artery Disease |
| Biochemistry Tests | 31 | Creatinine, Protein, Blood Urea Nitrogen (BUN), Albumin (ALB), Alkaline Phosphatase (ALP), Alanine Aminotransferase (ALT), Aspartate Aminotransferase (AST), Calcium (Ca), Cholesterol (CHOL), Glucose (Glu), Sodium (Na), Phosphorus (P), Total Bilirubin (TBIL), Direct Bilirubin (DBIL), Uric Acid (UA), Potassium (K), Magnesium (Mg), Lactate Dehydrogenase (LDH), Non-High-Density Lipoprotein Cholesterol (Non-HDL), High-Density Lipoprotein Cholesterol (HDL), Low-Density Lipoprotein Cholesterol (LDL), Glycated Hemoglobin, Chloride (Cl), Troponin I, Iron, Triglycerides, Gamma-Glutamyl Transferase (GGT), Lipase, Amylase, Creatine Kinase (CK), Creatine Kinase-MB (CK-MB) |
| Hematology Tests | 9 | Hematocrit (HCT), Hemoglobin (HGB), Mean Corpuscular Hemoglobin (MCH), Mean Corpuscular Hemoglobin Concentration (MCHC), Mean Corpuscular Volume (MCV), Platelet Count (PLT), Red Blood Cell Count (RBC), White Blood Cell Count (WBC), Red Cell Distribution Width-Coefficient of Variation (RDW-CV) |
| Hormone Tests | 7 | Vitamin B12, Ferritin, Vitamin D, Thyroid Stimulating Hormone (TSH), Free Thyroxine (FT4), Free Triiodothyronine (FT3), Parathyroid Hormone (PTH) |
| Urine Tests | 5 | Specific Gravity, Protein, Glucose, Red Blood Cell Count (RBC), White Blood Cell Count (WBC) |
| Target class (classification) | 1 | 0: Non-CKD, 1: CKD Stage 3a, 2: CKD Stage 3b, 3: CKD Stage 4, 4: CKD Stage 5 |
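The target encoding above lends itself to a two-head decomposition. The following is a minimal sketch, under the assumption that the binary head separates class 0 from classes 1 to 4 and the stage head is trained only on CKD cases; the helper names `split_targets` and `hierarchical_label` are hypothetical and not taken from the authors' code.

```python
# Stage names copied from the target-class row of the table above.
STAGE_NAMES = {
    1: "CKD Stage 3a",
    2: "CKD Stage 3b",
    3: "CKD Stage 4",
    4: "CKD Stage 5",
}


def split_targets(labels):
    """Derive binary-head and stage-head targets from 5-class labels.

    Returns (binary, stage_rows, stage):
      binary     -- 0 for Non-CKD, 1 for any CKD stage, one entry per sample
      stage_rows -- indices of the CKD samples (rows used by the stage head)
      stage      -- original stage labels (1..4) for those rows
    """
    binary = [0 if y == 0 else 1 for y in labels]
    stage_rows = [i for i, y in enumerate(labels) if y != 0]
    stage = [labels[i] for i in stage_rows]
    return binary, stage_rows, stage


def hierarchical_label(is_ckd, stage_label=None):
    """Combine the two head outputs into one readable label."""
    if not is_ckd:
        return "Non-CKD"
    return STAGE_NAMES[stage_label]


# Toy labels, for illustration only.
binary, stage_rows, stage = split_targets([0, 1, 4, 0, 2])
```

At inference time the stage head would only be consulted when the binary head predicts CKD, which is what `hierarchical_label` mirrors.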

| Component | Configuration |
|---|---|
| Binary Model | XGBoost with RFE (26 selected features) |
| Decision Strategy | Threshold optimized on validation set (range: 0.10–0.90, step = 0.01, optimized using balanced accuracy) |
| Stage Model | MLP with SelectKBest (22 selected features) |
| MLP Architecture | Hidden layers (128, 64); max_iter = 700 |
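The decision strategy in the table above (a sweep from 0.10 to 0.90 in steps of 0.01, scored by balanced accuracy on the validation set) can be sketched as follows. This is an illustrative pure-Python version; the function names `balanced_accuracy` and `tune_threshold`, the tie-breaking rule (keep the lowest threshold among equal scores), and the toy validation data are assumptions rather than details reported in the source.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (0/1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    n_pos = sum(1 for t in y_true if t == 1)
    n_neg = len(y_true) - n_pos
    sensitivity = tp / n_pos if n_pos else 0.0
    specificity = tn / n_neg if n_neg else 0.0
    return (sensitivity + specificity) / 2


def tune_threshold(y_true, proba, lo=0.10, hi=0.90, step=0.01):
    """Grid-search the decision threshold that maximizes balanced accuracy.

    `proba` holds the predicted CKD probabilities on the validation set.
    Ties are resolved in favour of the lowest threshold (an assumption).
    """
    n_steps = int(round((hi - lo) / step))
    best_t, best_score = lo, -1.0
    for k in range(n_steps + 1):
        # Build each threshold from the integer index to avoid float drift.
        t = round(lo + k * step, 2)
        y_pred = [1 if p >= t else 0 for p in proba]
        score = balanced_accuracy(y_true, y_pred)
        if score > best_score:
            best_t, best_score = t, score
    return best_t, best_score


# Toy validation labels and probabilities, for illustration only.
y_val = [0, 0, 1, 1]
p_val = [0.1, 0.4, 0.6, 0.9]
best_t, best_score = tune_threshold(y_val, p_val)
```

Tuning on the validation split rather than the test split keeps the reported test metrics free of threshold-selection bias.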

| Head | Accuracy | Precision (Macro) | Recall (Macro) | F1-Score (Macro) | AUC |
|---|---|---|---|---|---|
| Binary | 0.965 ± 0.018 | 0.965 ± 0.025 | 0.973 ± 0.033 | 0.969 ± 0.017 | 0.991 ± 0.010 |
| Stage | 0.798 ± 0.041 | 0.800 ± 0.045 | 0.799 ± 0.041 | 0.798 ± 0.043 | 0.948 ± 0.016 |

| Feature Selection Method | Head | Accuracy | Precision (Macro) | Recall (Macro) | F1-Score (Macro) | AUC | End-to-End Runtime |
|---|---|---|---|---|---|---|---|
| Random Forest | | | | | | | |
| All features | Binary | 0.96 | 0.96 | 0.96 | 0.96 | 0.99 | 24 s |
| | Stage | 0.77 | 0.80 | 0.77 | 0.78 | 0.93 | |
| RFE | Binary | 0.97 | 0.97 | 0.98 | 0.97 | 0.99 | 54 s |
| | Stage | 0.69 | 0.70 | 0.69 | 0.69 | 0.91 | |
| RFECV | Binary | 0.96 | 0.96 | 0.97 | 0.96 | 0.99 | 7 min |
| | Stage | 0.71 | 0.71 | 0.71 | 0.71 | 0.90 | |
| XGBoost | | | | | | | |
| All features | Binary | 0.96 | 0.96 | 0.97 | 0.96 | 0.98 | 5 s |
| | Stage | 0.81 | 0.81 | 0.81 | 0.81 | 0.94 | |
| RFE | Binary | 0.97 | 0.97 | 0.97 | 0.97 | 0.99 | 51 s |
| | Stage | 0.79 | 0.80 | 0.79 | 0.79 | 0.93 | |
| RFECV | Binary | 0.97 | 0.97 | 0.97 | 0.97 | 0.99 | 37 s |
| | Stage | 0.79 | 0.80 | 0.79 | 0.79 | 0.94 | |
| AdaBoost | | | | | | | |
| All features | Binary | 0.96 | 0.96 | 0.96 | 0.96 | 0.99 | 12 min |
| | Stage | 0.84 | 0.85 | 0.84 | 0.84 | 0.97 | |
| RFE | Binary | 0.96 | 0.95 | 0.96 | 0.96 | 0.98 | 54 s |
| | Stage | 0.81 | 0.81 | 0.81 | 0.81 | 0.96 | |
| RFECV | Binary | 0.96 | 0.96 | 0.96 | 0.96 | 0.99 | 6 min |
| | Stage | 0.85 | 0.87 | 0.86 | 0.85 | 0.96 | |
| MLP | | | | | | | |
| All features | Binary | 0.94 | 0.94 | 0.94 | 0.94 | 0.99 | 44 s |
| | Stage | 0.85 | 0.85 | 0.85 | 0.85 | 0.95 | |
| SelectKBest | Binary | 0.97 | 0.97 | 0.97 | 0.97 | 0.98 | 8 s |
| | Stage | 0.85 | 0.86 | 0.86 | 0.86 | 0.96 | |

| Feature Selection Method | Head | Selected Features |
|---|---|---|
| Random Forest | | |
| RFE | Binary | 22: CREA, Age, hypertension, BUN, ALB, ALP, CA, CHOL, P, TBIL, U.A, K, Mg, HDL, TRIGLYCERIDE, HCT, HGB, RBC, RDW-CV, PROTEINUrine, RBCUrine, WBCUrine |
| | Stage | 18: CREA, BUN, ALB, ALP, ALT, CHOL, P, HDL, LDL, CL, TRIGLYCERIDE, HCT, HGB, PLATEL, RBC, WBC, RDW-CV, PROTEINUrine |
| RFECV | Binary | 24: CREA, Age, hypertension, PROTEIN, BUN, ALB, ALP, ALT, CA, P, TBIL, U.A, K, Mg, HDL, LDL, TRIGLYCERIDE, HCT, HGB, RBC, RDW-CV, PROTEINUrine, RBCUrine, WBCUrine |
| | Stage | 7: CREA, BUN, P, HCT, HGB, RBC, PROTEINUrine |
| XGBoost | | |
| RFE | Binary | 28: CREA, Gender, Age, PROTEIN, BUN, ALP, ALT, AST, CA, CHOL, NA, P, TBIL, U.A, K, Mg, HDL, LDL, CL, TRIGLYCERIDE, HGB, MCHC, MCV, PLATEL, RDW-CV, PROTEINUrine, RBCUrine, WBCUrine |
| | Stage | 18: CREA, Gender, Age, BUN, ALB, ALP, CA, CHOL, NA, TBIL, U.A, K, LDL, HCT, PLATEL, RBC, RDW-CV, RBCUrine |
| RFECV | Binary | 10: CREA, Gender, Age, PROTEIN, BUN, ALP, ALT, NA, Mg, WBCUrine |
| | Stage | 10: CREA (mg/dL), Gender, Age, BUN, ALB, TBIL, U.A, HCT, RBC, RBCUrine |
| AdaBoost | | |
| RFE | Binary | 15: CREA, Gender, Age, PROTEIN, NA, P, Mg, HDL, LDL, TRIGLYCERIDE, MCHC, PLATEL, WBC, PROTEINUrine, WBCUrine |
| | Stage | 24: CREA, Gender, Age, PROTEIN, BUN, ALB, ALP, ALT, AST, GLU GLUCOSE, P, U.A, K, Mg, CL, TRIGLYCERIDE, HCT, HGB, MCH, MCV, RBC, RDW-CV, RBCUrine, WBCUrine |
| RFECV | Binary | 26: CREA, Gender, Age, PROTEIN, BUN, ALB, ALP, ALT, AST, NA, P, TBIL, U.A, K, Mg, HDL, LDL, CL, TRIGLYCERIDE, HGB, MCHC, MCV, PLATEL, WBC, PROTEINUrine, WBCUrine |
| | Stage | 10: CREA, Gender, Age, BUN, ALB, ALT, GLU GLUCOSE, HCT, MCV, RDW-CV |
| MLP | | |
| SelectKBest | Binary | 18: CREA, Age, hypertension, diabetes mellitus, BUN, ALB, ALP, CHOL, P, U.A, K, HDL, HCT, HGB, RBC, RDW-CV, PROTEINUrine, WBCUrine |
| | Stage | 22: CREA, Gender, Age, PROTEIN, BUN, ALB, ALP, CA, NA, P, U.A, HDL, LDL, CL, HCT, HGB, RBC, WBC, RDW-CV, PROTEINUrine, RBCUrine, WBCUrine |

| Evaluation | K | Accuracy | Precision (Macro) | Recall (Macro) | F1-Score (Macro) | AUC |
|---|---|---|---|---|---|---|
| Hold-out (70/15/15) | 8 | 0.983 | 1.000 | 0.973 | 0.986 | 1.000 |
| 5-Fold CV (mean ± std) | 8 | 0.987 ± 0.009 | 0.984 ± 0.016 | 0.996 ± 0.009 | 0.990 ± 0.007 | 0.999 ± 0.000 |

| Model | End-to-End Runtime | # Flagged Non-CKD Cases |
|---|---|---|
| Random Forest + RFE | 33 s | 42 |
| XGBoost + RFE | 8 s | 15 |
| XGBoost + RFECV | 19 s | 17 |
| MLP + SelectKBest | ~2 min | 12 |

| Feature | Non-CKD Range (Q5–Q95) | CKD-Like Cutpoint |
|---|---|---|
| CREA (mg/dL) | [0.545, 1.275] | >=1.63 |
| BUN (mg/dL) | [7, 23.4899] | >=23.7 |
| HGB | [10.1, 16.2] | <=10.1 |
| HCT | [32, 48.75] | <=32 |
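A Q5–Q95 reference range of the kind tabulated above can be derived from the Non-CKD values of a single feature. The sketch below uses Python's `statistics.quantiles` with linear interpolation over the observed data (`method="inclusive"`); the interpolation rule, the helper name `reference_range`, and the toy values are assumptions, since the source does not state how the percentiles were computed. It covers only the range, not the CKD-like cutpoint.

```python
import statistics


def reference_range(values):
    """Return the (Q5, Q95) range of a list of numeric lab values.

    Missing entries (None) are dropped first. With n=20 the function
    `statistics.quantiles` returns 19 cut points at 5%, 10%, ..., 95%,
    so the first and last cut points are the 5th and 95th percentiles.
    """
    observed = [v for v in values if v is not None]
    cuts = statistics.quantiles(observed, n=20, method="inclusive")
    return cuts[0], cuts[-1]


# Toy feature values (1..21) with one missing entry, for illustration only.
toy_values = list(range(1, 22)) + [None]
q5, q95 = reference_range(toy_values)
```

A value falling outside the returned interval would then be treated as outside the Non-CKD reference range for that feature.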

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Alhaifi, S.; Naemi, F.M.A.; Alowidi, N. A Risk-Oriented and Explainable Hierarchical AI Framework for Chronic Kidney Disease Classification. Diagnostics 2026, 16, 1157. https://doi.org/10.3390/diagnostics16081157