1. Introduction

Obstructive sleep apnea (OSA) is a chronic disorder characterized by recurrent upper airway collapse during sleep, manifesting as snoring, intermittent apnea, and sleep fragmentation. These abnormalities are strongly associated with an increased risk of hypertension, stroke, coronary artery disease, and other severe comorbidities [1,2,3]. Currently, the clinical gold standard for diagnosing OSA is polysomnography (PSG). However, PSG involves overnight monitoring of physiological signals in specialized laboratories and requires time-consuming manual scoring by trained technicians [4,5].

In China, an estimated 176 million adults aged 30 to 69 are at high risk of OSA, representing the largest affected population globally [6]. Despite this prevalence, a 2023 survey indicates that there are only approximately 3000 sleep medicine centers nationwide, each with a limited daily capacity of fewer than five PSG tests. Consequently, the current diagnostic capability covers less than 20% of the population in need, creating a severe disparity between supply and demand [7]. To address this shortage, unattended portable monitoring (PM) has been adopted as an alternative to PSG for patients with a high pre-test probability of OSA and no significant comorbidities [8]. However, its scalability remains limited by the requirement for professional manual scoring and the slow turnover rate of devices. In recent years, alternative screening approaches have been explored to overcome these barriers, including artificial intelligence models based on clinical-demographic data [9] and wearable devices equipped with single- or multi-channel smart sensors [10]. Furthermore, emerging non-contact sensing solutions, such as smart mattresses [11] and millimeter-wave radar [12], have also shown potential for OSA screening. Despite these advances, such methods often show unstable performance or rely heavily on specific hardware. Consequently, they still face logistical challenges that hinder large-scale deployment. Given these constraints, there is an urgent need for a convenient, accurate, and scalable screening method.

Sleep audio analysis offers a promising solution to this challenge. Specifically, snoring, as the most prominent symptom of OSA and the primary complaint prompting clinical consultation, contains acoustic characteristics that reflect upper airway obstruction and correlate with OSA-related complications [13,14]. Notably, unlike PSG and other screening approaches, the acquisition of sleep audio is non-invasive and does not rely on specialized medical instrumentation. High-quality audio can be captured using consumer-grade devices such as smartphones, making this approach highly suitable for widespread screening in community settings.

In recent years, the integration of Artificial Intelligence has advanced audio-based OSA screening, marking a transition from manual feature engineering to end-to-end deep learning. Early approaches primarily relied on handcrafted acoustic features, including Mel-frequency Cepstral Coefficients, Linear Prediction Coefficients, spectral flux, pitch, zero-crossing rate, and spectral entropy. These features were combined with traditional machine learning algorithms such as Support Vector Machines to classify sleep sounds [15,16,17,18]. However, these methods often exhibited limited robustness against environmental noise. Furthermore, they typically required complex manual feature selection processes dependent on domain expertise, which limited their generalizability. The adoption of Deep Learning shifted the paradigm toward automated feature extraction. Convolutional Neural Networks became a standard method for learning time-frequency representations directly from spectrograms, often integrated with Recurrent Neural Networks to capture the temporal evolution of respiratory patterns [19,20,21,22,23]. Although Transformer-based architectures have recently gained prominence in general audio tasks and are emerging in OSA screening [24,25], their quadratic computational complexity and substantial parameter count present challenges for resource-constrained edge deployment. Moreover, the global attention mechanism may be functionally redundant for the semi-periodic, physiological nature of breathing sounds. Consequently, Convolutional Neural Networks and Convolutional Recurrent Neural Networks remain practical choices for audio-based OSA screening. This observation aligns with the conclusions of a 2025 meta-analysis by Tan et al. [26].

Parallel to these algorithmic advancements, audio-based OSA screening methods can be broadly stratified into three levels of granularity. First, subject-level analysis extracts global features from the entire night’s recording to directly classify the patient’s risk status [18]. While this approach is efficient for rapid screening, it operates as a “black box,” failing to provide crucial clinical details such as the Apnea-Hypopnea Index (AHI) or the temporal distribution of respiratory events. Second, segment-level analysis divides audio into fixed-duration epochs (e.g., 30 s) to determine the presence of respiratory events within each segment [21,22,23]. Although this enables coarse-grained annotation of respiratory events and rough AHI estimation, it is prone to calculation errors driven by boundary effects, particularly where a single event is truncated across windows or a segment captures incomplete parts of multiple events. Third, snore-level analysis involves detecting isolated snore episodes and defining respiratory events through the post-processing of temporal patterns or intensity envelopes of snore clusters [24,27,28]. While this approach achieves finer granularity, its performance is fundamentally limited by the sensitivity of snore detection. Furthermore, relying exclusively on snoring sounds may lead to misclassification, as periods of quiet normal breathing between snores can be easily misinterpreted as apnea events.

In summary, while the aforementioned methods exhibit distinct limitations, they share a common critical deficiency: the inability to precisely delineate the onset and offset boundaries of respiratory events. To bridge this gap, this study proposes a fine-grained, lightweight detection framework utilizing a Convolutional Recurrent Neural Network (CRNN). By leveraging frame-level audio classification, we explore whether this approach can achieve real-time and high-precision identification of respiratory events relevant to OSA screening, thereby providing a solid foundation for accessible home-based OSA screening.

The remainder of this paper is organized as follows:

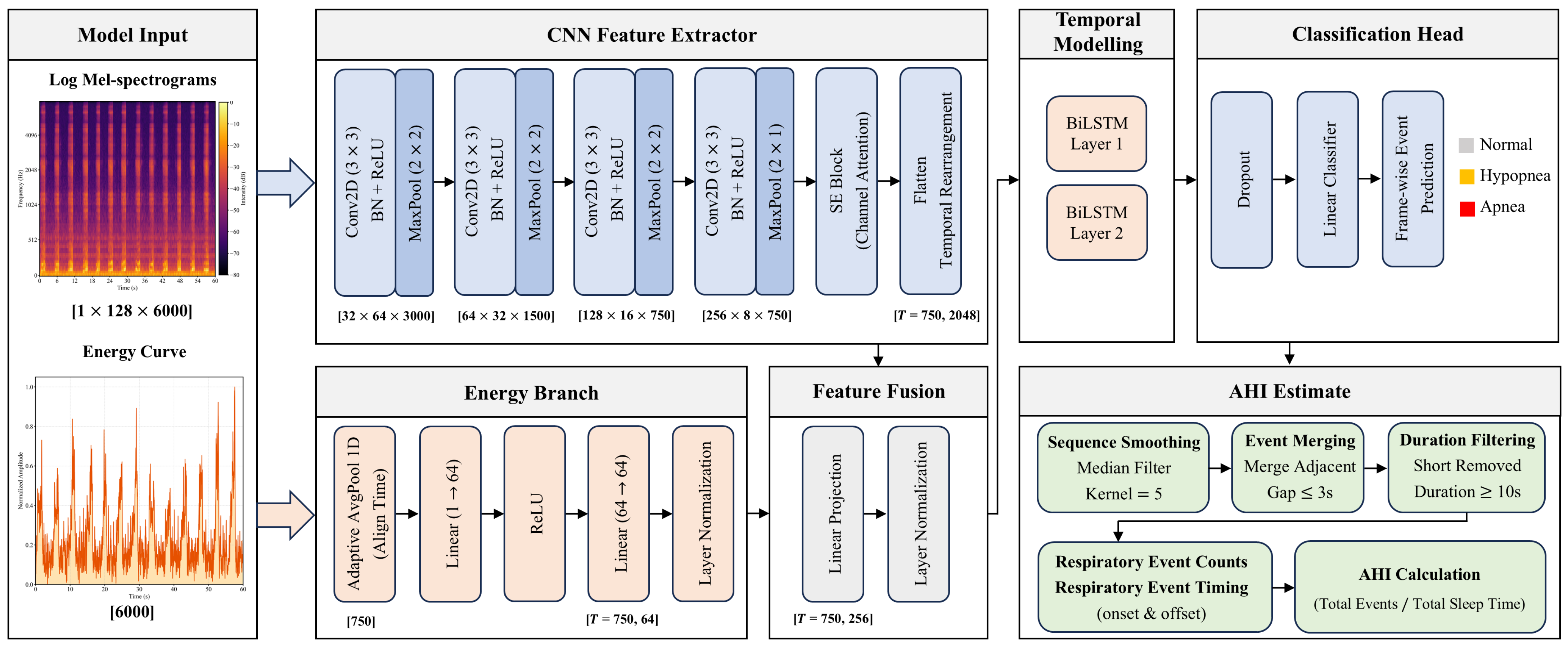

Section 2 provides a comprehensive description of the study population and data acquisition protocols, followed by the data preprocessing pipeline. It details the methodological framework, including the rationale and design of the proposed dual-stream CRNN architecture, the hybrid loss function utilized for optimization, the post-processing strategy, and the specific evaluation metrics defined for frame-level, event-level, and patient-level assessment.

Section 3 presents the comprehensive experimental results, covering frame-level classification, event-level temporal localization, and patient-level AHI estimation across both internal and external cohorts, followed by ablation studies, sensitivity analyses, and error analysis.

Section 4 discusses the principal findings, compares performance against state-of-the-art methods, critically analyzes the challenges associated with hypopnea detection, and outlines limitations and future research directions. Finally, Section 5 summarizes the conclusions of the study.

3. Results

3.1. Frame-Level Classification Performance (Internal Test Set)

The frame-level classification performance of the proposed Dual-Stream CRNN on the internal test set (Sismanoglio Cohort) is summarized in Table 1. The model achieved a macro-averaged F1-score of 0.64 and an overall accuracy of 0.81.

For Apnea events, the model exhibited robust performance, achieving a precision of 0.74 and a recall of 0.71. This indicates the model’s effectiveness in characterizing periods of complete airflow cessation, which are acoustically distinct. The Normal breathing segments were identified with high reliability, yielding an F1-score of 0.89, ensuring a low false-positive rate for healthy breathing intervals.

As anticipated, Hypopnea detection proved more challenging due to the high acoustic similarity between hypopnea-associated snoring and normal snoring. Consequently, the model achieved an F1-score of only 0.31 for this class. Its recall, however, reached 0.41. For granular frame-level detection, this sensitivity is noteworthy: it ensures that a sufficient number of subtle respiratory reduction events are captured, providing valuable cues for the subsequent post-processing and patient-level AHI estimation.

The confusion matrix, illustrated in Figure 2, further visualizes the distribution of predictions. The primary source of error is confusion between the Hypopnea and Normal classes.

3.2. Event-Level Temporal Localization and Qualitative Analysis (Internal Test Set)

Beyond frame-level statistics, we quantitatively evaluated the model’s capability to localize discrete events. After delineating individual event boundaries from the frame-level outputs via post-processing, we calculated the Intersection over Union (IoU) and boundary deviations against manual annotations. On the internal test set, the detected events achieved an Average IoU of 0.82, indicating a high degree of overlap. Furthermore, the temporal alignment was precise, with a Mean Absolute Error (MAE) of 1.49 s for onset and 1.12 s for offset. Given the typical 10–40 s duration of respiratory events, these deviations confirm the model’s accuracy in capturing the initiation and termination of airflow reduction.
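The two event-level metrics described above can be sketched in a few lines. This is a minimal illustration, assuming each event is an (onset, offset) pair in seconds and that predicted and annotated events have already been matched one-to-one; the function names and the example intervals are hypothetical and are not data from the study.

```python
def interval_iou(pred, ref):
    """Temporal IoU of two (onset, offset) intervals given in seconds."""
    inter = max(0.0, min(pred[1], ref[1]) - max(pred[0], ref[0]))
    union = (pred[1] - pred[0]) + (ref[1] - ref[0]) - inter
    return inter / union if union > 0 else 0.0


def boundary_errors(pairs):
    """Mean absolute onset and offset error over matched (pred, ref) pairs."""
    n = len(pairs)
    onset_mae = sum(abs(p[0] - r[0]) for p, r in pairs) / n
    offset_mae = sum(abs(p[1] - r[1]) for p, r in pairs) / n
    return onset_mae, offset_mae


# Hypothetical matched events (seconds), for illustration only.
pairs = [((101.0, 124.0), (100.0, 125.0)), ((200.0, 218.0), (202.0, 219.0))]
mean_iou = sum(interval_iou(p, r) for p, r in pairs) / len(pairs)
onset_mae, offset_mae = boundary_errors(pairs)
print(f"mean IoU = {mean_iou:.2f}, onset MAE = {onset_mae} s, offset MAE = {offset_mae} s")
```

Because the IoU is normalized by the union of the two intervals, a fixed boundary error of 1 to 2 s costs proportionally more on a 10 s event than on a 40 s event.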

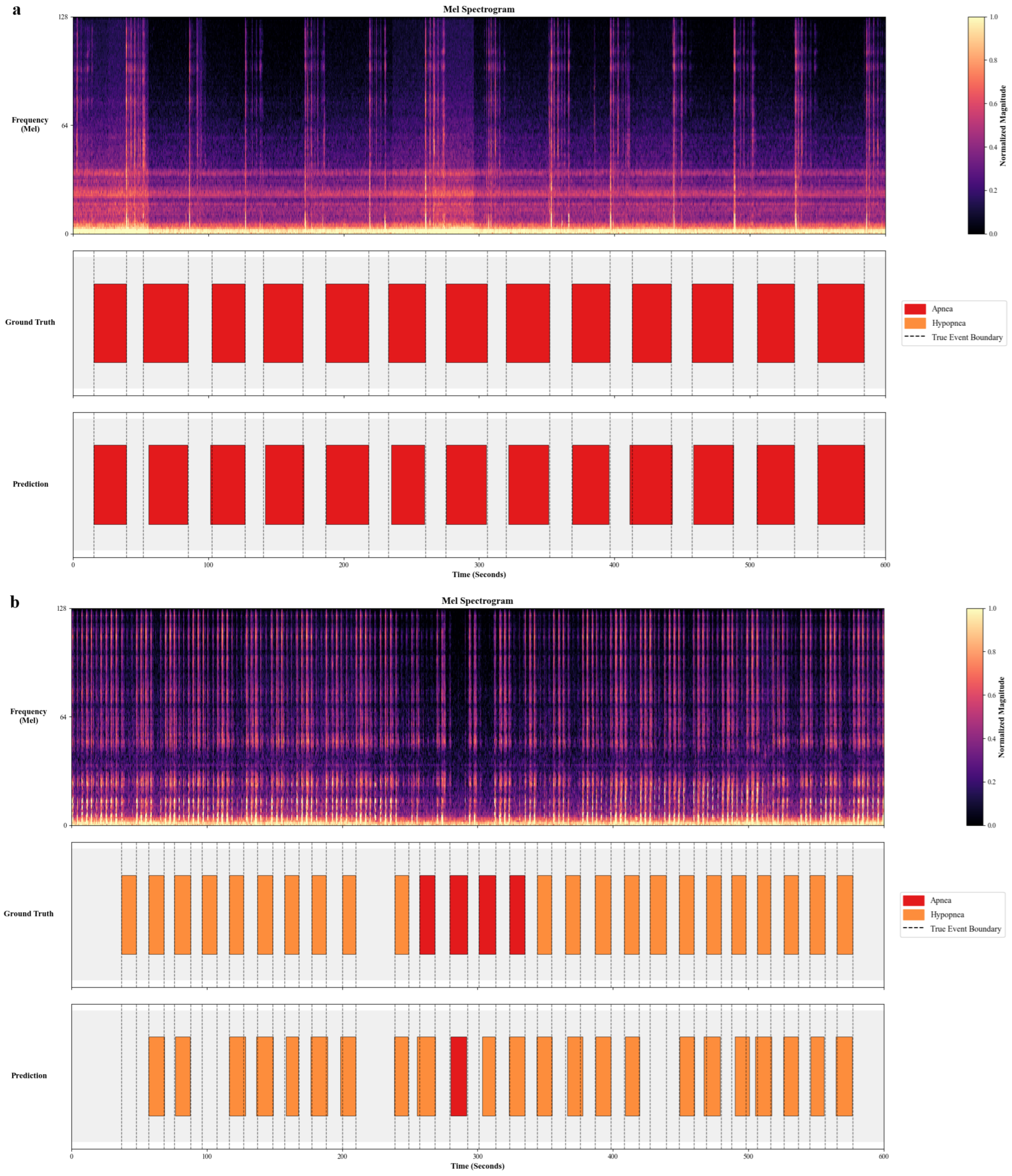

To visually corroborate these metrics, Figure 3 compares model predictions with manual annotations for two internal test subjects.

Figure 3a displays a segment dominated by Apnea events. The model achieved precise temporal alignment, accurately delineating the contiguous apnea episodes (red segments) which correspond to silence intervals.

Figure 3b illustrates a Hypopnea-dominant scenario. The model demonstrated strong capability in localizing the majority of respiratory events. While the overall event structure was correctly captured, minor discrepancies were observed, including the omission of some subtle, low-intensity hypopneas and occasional misclassification between event types. This reflects the inherent acoustic challenge in distinguishing the fine-grained severity between partial and complete airway obstruction.

3.3. Patient-Level AHI Estimation and OSA Screening (Internal Test Set)

Building upon the discrete respiratory events identified in the previous step, we further calculated the patient-level clinical metrics. Specifically, we derived the Apnea-Hypopnea Index (AHI), Apnea Index (AI), and Hypopnea Index (HI) for each subject in the test set by normalizing the event counts over the total sleep duration. Figure 4 illustrates the agreement between the audio-derived indices and the ground truth PSG indices.

The scatter plots (Figure 4a–c) reveal strong positive linear correlations across all three metrics. The model achieved a Pearson correlation coefficient (r) of 0.96 (95% CI: 0.93–0.98) for AHI, with a Mean Absolute Error (MAE) of 4.79 events/h (95% CI: 3.66–5.95). Notably, the AI demonstrated strong agreement (r = 0.97 [95% CI: 0.94–0.99], MAE = 4.69 events/h [95% CI: 3.53–5.86]), reflecting the distinct acoustic characteristics of apnea events. The HI, while more challenging due to the acoustic subtlety of the events, still maintained a substantial correlation (r = 0.77 [95% CI: 0.60–0.88], MAE = 6.05 events/h [95% CI: 4.72–7.31]).
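The normalization of event counts into hourly indices can be sketched as follows. This is an illustrative example only: the label strings, function names, and the example counts are assumptions, and the total sleep duration is taken as a given input.

```python
def events_per_hour(n_events, total_sleep_seconds):
    """Index = event count normalized by sleep duration in hours."""
    if total_sleep_seconds <= 0:
        raise ValueError("total sleep duration must be positive")
    return n_events / (total_sleep_seconds / 3600.0)


def respiratory_indices(event_labels, total_sleep_seconds):
    """Return (AHI, AI, HI) from a list of per-event labels."""
    n_apnea = sum(1 for e in event_labels if e == "apnea")
    n_hypopnea = sum(1 for e in event_labels if e == "hypopnea")
    ai = events_per_hour(n_apnea, total_sleep_seconds)
    hi = events_per_hour(n_hypopnea, total_sleep_seconds)
    return ai + hi, ai, hi  # AHI is the sum of both subtype indices


# Hypothetical night: 120 apneas and 30 hypopneas over 6 hours of sleep.
labels = ["apnea"] * 120 + ["hypopnea"] * 30
ahi, ai, hi = respiratory_indices(labels, 6 * 3600)
print(ahi, ai, hi)
```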

To further assess the agreement, Bland–Altman analysis was conducted (Figure 4d–f). For AHI, the mean bias was −0.02 events/h, indicating a negligible bias between the model predictions and ground truth. Furthermore, the majority of the data points fell within the 95% Limits of Agreement (LoA: −14.15 to 14.10 events/h), suggesting that the automated estimations are clinically consistent with standard PSG scoring. Similarly, the bias for specific event types remained within a reasonable range, with AI showing a bias of 2.15 events/h and HI showing a bias of −3.55 events/h, confirming that the model does not exhibit significant systematic errors in classifying specific event types.
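For readers unfamiliar with the Bland–Altman quantities, the sketch below computes the mean bias and the 95% limits of agreement. It assumes the conventional definition, bias ± 1.96 times the sample standard deviation of the paired differences, which this section does not state explicitly; the input values are hypothetical.

```python
import statistics


def bland_altman(pred, ref):
    """Return (bias, lower LoA, upper LoA) for paired measurements."""
    diffs = [p - r for p, r in zip(pred, ref)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical predicted vs. reference AHI values (events/h).
pred_ahi = [10.0, 20.0, 30.0, 40.0]
ref_ahi = [12.0, 18.0, 33.0, 37.0]
bias, loa_low, loa_high = bland_altman(pred_ahi, ref_ahi)
print(f"bias = {bias:.2f}, LoA = [{loa_low:.2f}, {loa_high:.2f}]")
```

A bias near zero with wide limits, as in this toy example, means that individual errors can be sizeable even though over- and under-estimates balance out on average.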

Regarding screening on this internal test set, the model demonstrated excellent capability in identifying Severe OSA (AHI ≥ 30). For this critical threshold, the model achieved an accuracy of 96.55% (95% CI: 89.66–100.00%), a sensitivity of 94.44% (95% CI: 83.33–100.00%), a specificity of 100.00% (95% CI: 71.5–100.0%), and an AUC of 0.99 (95% CI: 0.96–1.00). This indicates that despite the dataset’s bias, the model can reliably distinguish severe patients requiring immediate intervention from non-severe cases. However, it is important to note that the internal dataset exhibits a scarcity of healthy controls and mild OSA patients, which is characteristic of clinical referral cohorts. Consequently, calculating screening specificity at lower thresholds (AHI ≥ 5 and 15) on this test set would yield statistically unreliable results due to the insufficient sample size of true negative cases. Therefore, to provide a rigorous and clinically meaningful evaluation of the model’s full screening capability across all severity levels, we detail the comprehensive screening performance in the External Cohort analysis (Section 3.6).
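The threshold-based screening metrics can be illustrated with a short sketch. It assumes a subject is screen-positive when the estimated AHI meets or exceeds the threshold and reference-positive when the PSG AHI does; the function name and all values are hypothetical. The second call shows why specificity cannot be estimated when a cohort contains no reference-negative subjects.

```python
def screening_metrics(pred_ahi, ref_ahi, threshold):
    """Sensitivity, specificity, and accuracy for the rule AHI >= threshold."""
    tp = fp = tn = fn = 0
    for p, r in zip(pred_ahi, ref_ahi):
        pred_pos, ref_pos = p >= threshold, r >= threshold
        if pred_pos and ref_pos:
            tp += 1
        elif pred_pos and not ref_pos:
            fp += 1
        elif not pred_pos and ref_pos:
            fn += 1
        else:
            tn += 1
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else nan,
        "specificity": tn / (tn + fp) if tn + fp else nan,  # undefined without negatives
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }


# Hypothetical cohort with one non-severe subject.
print(screening_metrics([35.0, 28.0, 40.0, 12.0], [32.0, 31.0, 45.0, 10.0], 30.0))
# Hypothetical cohort with severe subjects only: specificity is NaN.
print(screening_metrics([35.0, 40.0], [32.0, 45.0], 30.0))
```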

3.4. Ablation Study: Impact of Dual-Stream and Temporal Modeling

To verify the contribution of the key components in our proposed framework, we conducted an ablation study on the internal test set. We compared the performance of the complete model against two variants: (1) w/o BiLSTM: A baseline CNN model utilizing only spectrogram features without temporal modeling; and (2) w/o Energy Stream: The CRNN model excluding the auxiliary energy input.

Table 2 summarizes the quantitative results. The baseline CNN-only model yielded a significantly lower performance, with a Macro Recall of only 0.37 and a Macro F1 of 0.36. The integration of the BiLSTM module (w/o Energy variant) resulted in a significant performance improvement, increasing the Recall to 0.64. This confirms that capturing long-term temporal dependencies is essential for characterizing respiratory events, which are inherently defined by their duration and evolution over time.

Furthermore, the incorporation of the auxiliary Energy stream provided a consistent improvement across all metrics. As shown in Table 2, the proposed Dual-Stream framework achieved the highest Accuracy (0.81) and Macro F1 (0.64). Compared to the single-stream counterpart, the addition of energy descriptors improved the Recall by 0.02. This increment suggests that explicitly modeling signal intensity aids the network in distinguishing subtle airflow reductions (typical of hypopnea) that might be ambiguous in the spectral domain alone, thereby enhancing the model’s overall sensitivity.

3.5. Hyperparameter Analysis and Robustness Verification

3.5.1. Impact of Input Window Duration on Performance and Efficiency

To empirically validate the optimal input duration derived from our statistical analysis (Section 2.2.1), we evaluated the model’s performance across a range of window sizes: 5 s, 30 s, 60 s, 90 s, and 120 s. Supplementary Table S2 details the trade-off between diagnostic accuracy and edge deployment metrics.

Results indicate that the 5 s window yielded the lowest performance, primarily because it fails to capture the minimum duration (≥10 s) required to clinically define a respiratory event. Increasing the window to 30 s resulted in a marked improvement, as this duration covers the majority of event occurrences; however, it still lagged behind the 60 s configuration, suggesting that 30 s provides insufficient surrounding context for precise characterization. Performance saturated at 60 s, with further extensions to 90 s and 120 s yielding no significant gains while substantially prolonging inference time. Regarding deployment feasibility, the 60 s window retains a constant parameter count (1.73 M) and a computational density of 0.40 GFLOPs/s. With a CPU latency of 84.81 ms, the resulting processor duty cycle is approximately 0.14%. These metrics indicate that the model remains suitable for continuous edge monitoring despite the increased input size.
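The reported duty cycle can be reproduced with simple arithmetic. The sketch below assumes that the duty cycle is the CPU latency divided by the duration of audio covered by one inference (here the 60 s window, i.e., one inference per window); that reading is an assumption consistent with the reported figure, not a definition given in the text.

```python
def duty_cycle_percent(latency_ms, period_s):
    """Share of wall-clock time spent on inference, in percent."""
    return (latency_ms / 1000.0) / period_s * 100.0


# Reported values: 84.81 ms CPU latency per 60 s window.
print(round(duty_cycle_percent(84.81, 60.0), 2))  # about 0.14 %
```

Under the same formula, running one inference every 30 s (for example, with overlapping windows) would roughly double the duty cycle to about 0.28%, which would still leave the processor idle for most of the time.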

3.5.2. Sensitivity Analysis of Post-Processing Parameters

To evaluate the robustness of post-processing parameters, we performed a sensitivity analysis on median filter size, gap-merging threshold, and minimum duration (Supplementary Table S3). Variations in median filter size (3–10) produced negligible deviations in metrics. In contrast, increasing the gap-merging threshold to 8 s reduced the IoU to 0.802 and increased the AHI MAE to 5.29; this suggests that excessive gap tolerance merges distinct respiratory events, resulting in boundary errors and AHI underestimation. Regarding minimum duration, an 8 s threshold yielded the lowest statistical error (MAE = 4.65). This improvement occurs because the model may predict the duration of short respiratory events slightly below their ground truth; an 8 s cutoff effectively recovers these valid detections, thereby enhancing sensitivity. However, strictly adhering to the AASM Manual for the Scoring of Sleep and Associated Events (Version 3), which mandates a duration of ≥10 s for respiratory events, we selected the 10 s threshold. This decision prioritizes clinical compliance over marginal statistical gains, ensuring the system generates diagnostically valid indices despite a slight increase in MAE (4.79).
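The three post-processing parameters analyzed above can be illustrated with a simplified sketch. It is not the study's implementation: it treats the frame-level output as a binary event/normal sequence rather than three classes, supports only odd median filter sizes, and uses hypothetical values for the frame duration, filter size, and gap threshold. Only the 10 s minimum duration is taken from the text.

```python
import statistics


def median_filter(labels, size):
    """Sliding median over a 0/1 sequence; edges are padded by repetition."""
    if size % 2 == 0:
        raise ValueError("this sketch supports odd filter sizes only")
    half = size // 2
    padded = [labels[0]] * half + list(labels) + [labels[-1]] * half
    return [int(statistics.median(padded[i:i + size])) for i in range(len(labels))]


def frames_to_events(labels, frame_s):
    """Convert runs of 1s into (onset, offset) intervals in seconds."""
    events, start = [], None
    for i, v in enumerate(list(labels) + [0]):  # sentinel closes a trailing run
        if v and start is None:
            start = i
        elif not v and start is not None:
            events.append((start * frame_s, i * frame_s))
            start = None
    return events


def merge_gaps(events, max_gap_s):
    """Merge consecutive events separated by a gap of at most max_gap_s."""
    merged = []
    for on, off in events:
        if merged and on - merged[-1][1] <= max_gap_s:
            merged[-1] = (merged[-1][0], off)
        else:
            merged.append((on, off))
    return merged


def drop_short(events, min_dur_s):
    """Discard events shorter than the minimum duration."""
    return [(on, off) for on, off in events if off - on >= min_dur_s]


def post_process(labels, frame_s=1.0, filter_size=5, max_gap_s=3.0, min_dur_s=10.0):
    smoothed = median_filter(labels, filter_size)
    events = merge_gaps(frames_to_events(smoothed, frame_s), max_gap_s)
    return drop_short(events, min_dur_s)


# Hypothetical frame sequence (1 s frames): two bursts split by a 2 s gap, then a 4 s burst.
frames = [0] * 5 + [1] * 6 + [0] * 2 + [1] * 6 + [0] * 5 + [1] * 4 + [0] * 5
print(post_process(frames, filter_size=3))
```

In this toy sequence, the two 6 s bursts are merged across the 2 s gap into a single 14 s event, while the isolated 4 s burst is discarded by the 10 s rule. A larger gap threshold would merge more aggressively, and a lower minimum duration would retain shorter detections.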

3.6. External Validation: Generalization and Screening Robustness

To validate the robustness of the proposed model against domain shifts caused by distinct recording environments and microphone equipment, we conducted a comprehensive evaluation on the independent Beijing Tongren Hospital cohort.

Event-level Temporal Localization: Before assessing clinical indices, we verified the model’s fundamental capability to detect and localize respiratory events in unseen acoustic domains. Despite differences in background noise profiles and device frequency responses, the model maintained precise temporal alignment with manual annotations. Quantitatively, it achieved an Average IoU of 0.78 on this external set. Furthermore, the boundary detection remained accurate, yielding a Mean Absolute Error (MAE) of 1.89 s for onset and 1.63 s for offset. These results confirm that the learned features effectively capture the intrinsic acoustic signatures of airflow reduction rather than overfitting to source-specific artifacts.

Patient-level AHI Estimation and OSA Screening: Building on the robust event detection, we assessed the consistency of continuous estimation metrics. Figure 5 illustrates the agreement between the model-derived indices and the reference PSG metrics.

The scatter plots (Figure 5a–c) reveal strong positive linear correlations across all three metrics. For AHI, the model achieved r = 0.96 (95% CI: 0.93–0.97) with a MAE of 6.03 events/h (95% CI: 4.61–7.78). Furthermore, the model exhibited robust generalization in quantifying specific event types. The AI achieved a correlation of r = 0.95 (95% CI: 0.90–0.97) with a MAE of 5.14 events/h (95% CI: 3.83–6.55), and the HI yielded a correlation of r = 0.69 (95% CI: 0.44–0.85) with a MAE of 4.63 events/h (95% CI: 3.31–6.10). The Bland–Altman analysis (Figure 5d–f) further confirms that the vast majority of predictions across all three metrics fall within the 95% limits of agreement, validating the model’s reliability for quantitative assessment in diverse clinical settings.

Screening Capability: Finally, we evaluated the binary screening performance across standard clinical thresholds (Table 3). Crucially, the relatively balanced distribution of this external cohort allowed for an unbiased assessment of specificity, particularly for mild disease.

Mild OSA (AHI ≥ 5): The model achieved an accuracy of 96.67% (95% CI: 91.67–100.00%) and a specificity of 88.89% (95% CI: 62.50–100.00%). Additionally, the model demonstrated a sensitivity of 98.04% (95% CI: 93.88–100.00%) and an AUC of 0.97 (95% CI: 0.90–1.00). This result validates the model’s capability to effectively differentiate symptomatic patients from healthy individuals, successfully addressing the limitation of the internal dataset regarding negative sample evaluation.

Moderate (AHI ≥ 15) and Severe (AHI ≥ 30) OSA: The model exhibited sustained high performance, with AUCs of 0.97 (95% CI: 0.93–1.00) and 1.00 (95% CI: 0.99–1.00), respectively. Notably, at the AHI ≥ 30 threshold, the high sensitivity (89.29% [95% CI: 76.92–100.00%]) and specificity (100.00% [95% CI: 88.43–100.00%]) confirm the model’s reliability in prioritizing severe cases for immediate intervention, consistent with the findings in the internal validation.

3.7. Error Analysis of Hypopnea Detection

While the proposed framework achieved robust performance in assessing the overall respiratory burden, the evaluation of hypopnea remains less satisfactory. To further investigate the challenges in hypopnea detection, we conducted a quantitative error analysis on the external test set. Preliminary statistics revealed a notable recall deficit: the model predicted only 2226 hypopnea events against a ground truth of 3300. To pinpoint the sources of this discrepancy, events were matched and categorized following the IoU-based greedy strategy defined in the Materials and Methods Section.

Hypopnea misclassified as Normal accounted for the largest proportion of errors (39%), representing the primary source of under-detection. Hypopnea misclassified as Apnea constituted 27% of the discrepancies. Conversely, false positive errors, specifically Normal misclassified as Hypopnea and Apnea misclassified as Hypopnea, accounted for 26% and 8%, respectively. The underlying mechanisms of these misclassifications, including acoustic ambiguity and labeling uncertainty, are further discussed in the Discussion Section.

4. Discussion

4.1. Principal Findings

The primary objective of this study was to develop a fine-grained and lightweight audio-based framework for obstructive sleep apnea diagnosis that is capable of providing clinically interpretable outputs. Specifically, the proposed framework enables relatively accurate patient-level AHI estimation, as well as fine-grained characterization of respiratory events, including the onset and offset times, durations, and temporal distribution. Evaluated across multiple levels of granularity and validated on two independent cohorts, the proposed Dual-Stream CRNN demonstrated robust and consistent performance for audio-based OSA screening and severity assessment.

On the internal PSG-Audio cohort, the model exhibited strong fine-grained detection capability. At the frame level, it achieved a macro-averaged F1-score of 0.64, with a recall of 0.41 for hypopnea events, which are widely recognized as the most acoustically ambiguous respiratory disturbances. This frame-wise sensitivity enabled accurate reconstruction of individual respiratory events, resulting in accurate temporal localization with a high average Intersection over Union (IoU = 0.82) and minimal onset and offset deviations. Importantly, this event-level precision translated into reliable patient-level quantification, as reflected by a strong correlation between the audio-derived AHI and PSG-based AHI (r = 0.96).

On the independent Beijing Tongren Hospital cohort, the model further demonstrated robust generalization in the presence of domain shifts arising from different recording devices and acoustic environments. Despite these variations, the framework maintained stable screening performance across OSA severity levels. In particular, for mild OSA, the model achieved a specificity of 88.89%, supporting its role as a safe screening tool with a low false-positive rate. At the severe OSA threshold, it achieved perfect specificity and high sensitivity, indicating reliable identification and prioritization of patients at highest clinical risk.

Collectively, these results indicate that the proposed framework not only supports accurate OSA screening but also enables fine-grained temporal analysis and clinically meaningful severity estimation using non-contact sleep audio.

4.2. Challenges in Hypopnea Detection and Clinical Implications

While the proposed framework achieved robust performance in assessing the overall respiratory burden, hypopnea detection exhibited relatively lower performance. We hypothesize that this limitation may arise from a combination of data imbalance, acoustic ambiguity, and constraints imposed by current clinical labeling protocols.

First, the model faces a hierarchical class imbalance. In most patients with OSA, the frequency of respiratory events is substantially lower than that of non-respiratory segments. Moreover, the dataset exhibits an Apnea-to-Hypopnea ratio of approximately 4:1. Although we employed a Weighted Focal Loss to penalize hard examples and up-weight the minority hypopnea class, this scarcity necessitates a delicate trade-off: aggressive re-weighting to improve recall risks amplifying false positives, which is counterproductive given the limited sample size.
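To make the re-weighting trade-off concrete, the sketch below evaluates a class-weighted focal loss for a single frame. It uses the standard focal loss form, −w_c (1 − p_c)^γ log p_c; the class weights, the value of γ, and the class ordering are hypothetical, since the exact configuration used in this study is not given in this section.

```python
import math


def weighted_focal_loss(probs, target, class_weights, gamma=2.0):
    """Focal loss for one frame: -w_c * (1 - p_c)^gamma * log(p_c)."""
    p_t = probs[target]
    return -class_weights[target] * (1.0 - p_t) ** gamma * math.log(p_t)


# Hypothetical class order: 0 = Normal, 1 = Apnea, 2 = Hypopnea.
weights = [1.0, 2.0, 4.0]  # assumed values that up-weight the minority class

easy = weighted_focal_loss([0.90, 0.05, 0.05], 0, weights)  # confident, correct Normal frame
hard = weighted_focal_loss([0.60, 0.10, 0.30], 2, weights)  # uncertain Hypopnea frame
print(f"easy = {easy:.4f}, hard = {hard:.4f}")
```

The modulating factor (1 − p_c)^γ shrinks the contribution of confidently classified frames, while the class weight scales the penalty for the minority class. Raising that weight increases pressure to predict hypopnea, which is the mechanism by which aggressive re-weighting can trade recall for additional false positives.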

Second, beyond data distribution, a major challenge lies in the acoustic definition of hypopnea itself, which directly shapes the error patterns observed in our results. Distinguishing hypopnea (≥30% flow reduction) from normal segments is far subtler than detecting the near-silence of apnea. A correctly classified hypopnea, as shown in Figure 6a, typically manifests as continuous snoring with a perceptible intensity dip. However, the ≥30% flow reduction is an arbitrary human-defined threshold rather than a binary acoustic turning point. Consequently, the absence of significant frequency/energy shifts accounts for the highest proportion of errors (Hypopnea misclassified as Normal, 39%). As illustrated in Figure 6b, these missed events (mild hypopneas) are acoustically indistinguishable from regular heavy breathing, lacking the clear boundaries required for detection. Conversely, as the degree of obstruction intensifies, snore loudness drops significantly, mimicking the silence of apnea [35]. This resemblance leads the model to misclassify severe hypopneas as apneas (accounting for 27% of errors), as shown in Figure 6c.

Third, a critical limitation of audio-only screening is the inability to access the multimodal data required for strict AASM compliance. While audio models can detect acoustic variations stemming from airflow limitation, they cannot verify the physiological consequences (≥3% oxygen desaturation or arousal) required for clinical confirmation of hypopnea events. This inherent lack of physiological context leads to false positives, specifically Normal misclassified as Hypopnea (26%), as illustrated in Figure 6d, where a segment of low-intensity snoring is erroneously identified as a hypopnea event. Similarly, when Apnea is misclassified as Hypopnea (8%), shown in Figure 6e, residual rhythmic low-intensity signals are visible during the apnea event, likely reflecting respiratory effort. These ambiguous features mislead the model and result in incorrect classification.

Finally, the inherent subjectivity of polysomnography (PSG) scoring contributes to inter-technician variability, which acts as a confounding factor in model evaluation. As a labor-intensive process, PSG scoring relies on the manual interpretation of physiological signals; consequently, subtle discrepancies in visually estimating whether airflow reduction meets the ≥30% threshold for hypopnea or the ≥90% threshold for apnea, or in identifying micro-arousals, can lead to inconsistencies in hypopnea annotation. Although formal inter-scorer agreement statistics were not quantified for the cohorts in this study, data reliability was strictly safeguarded through a two-tier validation process: all recordings underwent initial scoring by a certified technician, followed by a final, comprehensive review by a senior sleep physician with extensive clinical expertise. Furthermore, data collection was conducted at a specialized sleep medicine center, where all recordings were scored in strict adherence to the AASM Manual for the Scoring of Sleep and Associated Events, ensuring that the ground-truth labels meet high clinical standards. In future studies, to further mitigate labeling uncertainty, stricter multi-rater consensus strategies should be implemented, and inter-technician consistency should be formally evaluated to refine the granularity of event classification.

Despite these challenges, the overall assessment of AHI remains robust (r = 0.96), which may be attributed to the lower prevalence of hypopnea in our dataset and the error cancellation mechanism. Specifically, our error analysis indicated that among the identified misclassifications, a substantial proportion (27%) involved hypopneas being labeled as apneas due to acoustic similarities in severe obstruction. Since the AHI is an aggregate index that sums all respiratory events regardless of subtype, these specific errors do not alter the final event count, effectively preserving the correlation with PSG. This phenomenon aligns with findings from previous audio-based OSA studies [21,22,23], which similarly observed strong AHI alignment despite reduced subtype precision.

However, a critical distinction must be drawn between “misclassification” and “missed detection.” While the error cancellation mechanism protects the AHI against severity label permutations, it offers no defense against the complete under-detection of mild hypopneas (which accounted for 39% of the total errors). The high overall correlation observed in this study likely reflects the specific demographic of our cohort, characterized by moderate-to-severe male patients with an “Apnea-dominant” phenotype, where the sheer volume of correctly identified apneas masks the impact of missed mild hypopneas. Consequently, in cohorts where hypopnea is the dominant event type (e.g., female patients or milder OSA cases), this masking effect would diminish, exposing the risk of AHI underestimation and potential severity downgrading. Future research should aim to curate datasets balanced not only in disease severity but also in respiratory event phenotypes to ensure generalized clinical utility across diverse patient populations.
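The difference between subtype misclassification and missed detection can be checked with a small worked example. All counts below are hypothetical and chosen only for illustration; the apnea-dominant case uses a 4:1 apnea-to-hypopnea ratio, and the AHI ≥ 5 cutoff is the mild OSA threshold used in this study.

```python
def ahi(n_apnea, n_hypopnea, sleep_hours):
    """AHI sums all respiratory events regardless of subtype."""
    return (n_apnea + n_hypopnea) / sleep_hours


HOURS = 8.0

# Apnea-dominant subject: 160 apneas, 40 hypopneas.
reference = ahi(160, 40, HOURS)  # 25.0 events/h
swapped = ahi(170, 30, HOURS)    # 10 hypopneas scored as apneas: still 25.0
missed = ahi(160, 25, HOURS)     # 15 hypopneas missed: 23.125

# Hypopnea-dominant subject: 10 apneas, 40 hypopneas.
mild_reference = ahi(10, 40, HOURS)  # 6.25, above the AHI >= 5 threshold
mild_missed = ahi(10, 25, HOURS)     # 4.375, below the threshold

print(reference, swapped, missed, mild_reference, mild_missed)
```

The same 15 missed hypopneas change the apnea-dominant estimate by less than 8% but move the hypopnea-dominant subject across the mild OSA threshold, which is the severity-downgrading risk described above.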

4.3. Comparison with Previous Studies Based on the PSG-Audio Dataset

To rigorously benchmark the proposed framework, we compared our results with existing studies that employed ambient microphone recordings from the same PSG-Audio dataset for OSA detection. It should be noted that these studies mainly reported internal evaluations, while external validation was not explored, leaving the generalization performance in real-world clinical settings less examined.

Salvatore et al. [36] utilized a pretrained Google VGGish model for feature extraction, combined with a Bi-LSTM classifier to determine whether 5 s audio segments contained respiratory events. By aggregating predictions via a 1 s sliding window, they provided a coarse estimation of the full-night respiratory events. However, their best model achieved an AHI correlation of only 0.76, which is significantly lower than the 0.96 reported in this study, and it lacked the capability to differentiate between event subtypes. A primary limitation lies in the temporal context: their 5 s input duration, constrained by the standard Google VGGish architecture, is insufficient to capture the complete evolution of respiratory events. In contrast, our framework employs a 60 s window with a 30 s stride. This design strikes an optimal balance, capturing full event patterns without the excessive computational overhead associated with processing extremely long sequences.
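The 60 s window with a 30 s stride can be sketched as a simple segmentation routine. The function name is hypothetical, and the handling of a trailing partial window (dropped here) is an assumption; how predictions from overlapping windows are combined is not covered by this sketch.

```python
def window_starts(total_s, window_s=60.0, stride_s=30.0):
    """Start times (s) of all full windows that fit into the recording."""
    starts, t = [], 0.0
    while t + window_s <= total_s:
        starts.append(t)
        t += stride_s
    return starts


# A hypothetical 150 s excerpt yields four overlapping 60 s windows.
print(window_starts(150.0))
```

With 50% overlap, any event of at most 30 s that falls within the windowed portion of the recording is fully contained in at least one window, which is one way to read the claim that this design captures full event patterns.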

Korompili et al. [17] adopted a multi-stage, rule-based signal processing pipeline. Their approach involved dividing audio into 0.1 s frames, estimating breathing probabilities via Voice Activity Detection (VAD) and a Hidden Markov Model (HMM), and identifying events using heuristic rules based on fixed energy thresholds, duration constraints, and minimum intervals. Due to dataset imbalance, they reported binary classification results only for the moderate (AHI ≥ 15) and severe (AHI ≥ 30) thresholds. The performance was suboptimal, yielding sensitivities of only 55.4% and 65.6%, with notably low Positive Predictive Values (PPV) of 21.3% and 50%, respectively. While this method also operates at the frame level, its performance is severely constrained by strong prior assumptions. Specifically, fixed thresholds (e.g., ≥50% RMS energy drop) fail to capture the acoustic heterogeneity of OSA, particularly for hypopneas. Furthermore, energy-driven rules are inherently susceptible to background noise, leading to high false-positive rates and limited generalization. Conversely, our Dual-Stream CRNN employs a data-driven approach, jointly modeling spectral features and energy dynamics. This allows the model to learn flexible, non-linear decision boundaries, resulting in superior screening performance across all thresholds.
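To make the fixed-threshold limitation concrete, the sketch below implements a generic energy-drop rule of the kind described above. It is not the pipeline of Korompili et al.: the function name, the externally supplied baseline, and the 10 s minimum duration are assumptions chosen for illustration, and only the 0.1 s frame length and the 50% RMS drop come from the text.

```python
def detect_energy_drops(frame_rms, baseline, drop_ratio=0.5,
                        frame_s=0.1, min_duration_s=10.0):
    """Flag runs of frames whose RMS energy is at most drop_ratio * baseline.

    Returns (start_frame, end_frame) pairs, end exclusive, for runs lasting
    at least min_duration_s. All thresholds are fixed in advance, which is
    exactly the rigidity discussed in the text.
    """
    threshold = drop_ratio * baseline
    min_frames = round(min_duration_s / frame_s)
    events, run_start = [], None
    # A sentinel value above any threshold closes a run that reaches the end.
    for i, value in enumerate(list(frame_rms) + [float("inf")]):
        if value <= threshold:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            if i - run_start >= min_frames:
                events.append((run_start, i))
            run_start = None
    return events


# Synthetic RMS track: 5 s normal, 12 s at 30% energy, 3 s normal,
# 5 s at 30% energy (too short to count), 2 s normal.
track = [1.0] * 50 + [0.3] * 120 + [1.0] * 30 + [0.3] * 50 + [1.0] * 20
print(detect_energy_drops(track, baseline=1.0))

# The same 12 s event at 60% of baseline energy is missed entirely.
subtle = [1.0] * 50 + [0.6] * 120 + [1.0] * 30
print(detect_energy_drops(subtle, baseline=1.0))
```

The second call shows how a partial reduction that never crosses the fixed 50% line, as is typical of hypopneas, produces no detection at all.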

Most recently, Kim et al. [25] proposed ApneaWhisper, innovatively reframing OSA detection as a token-based semantic segmentation task. Leveraging a fully fine-tuned pre-trained Whisper speech recognition backbone, this model encodes 20 s spectrogram clips and utilizes a Transformer-based autoregressive decoder to generate discrete tokens (e.g., “START_OSA”, “END_HYPOPNEA”). However, the reported frame-level performance suggests certain limitations regarding clinical applicability.

First, the model demonstrated limited specificity in distinguishing normal breathing. The Recall for Normal segments was 58.0%, with a notable proportion of normal segments misclassified as respiratory events (25.4% as Hypopnea and 14.8% as OSA). From a clinical perspective, this tendency toward false positives in healthy segments could theoretically contribute to AHI overestimation, particularly in individuals with low disease burden. In contrast, our proposed framework achieved a Normal Recall of 87%, reducing the rate of false alarms.

Second, the sensitivity for subtle events in the token-based baseline model remained constrained, as evidenced by a Hypopnea Recall of 29.5%. This indicates that a substantial portion of hypopnea frames were not detected, suggesting a risk of AHI underestimation in patients with hypopnea-predominant phenotypes. In comparison, our model achieved a Hypopnea Recall of 41%. Although identifying mild flow reductions remains challenging due to acoustic ambiguity, our approach retrieves a larger proportion of valid events compared to the token-based baseline.

Third, the 20 s non-overlapping window is insufficient for capturing the full temporal evolution of respiratory events, which frequently exceed this duration. However, this design choice was an inherent trade-off to accommodate the substantial parameter size of the Whisper model. Since the cost of the Transformer’s self-attention mechanism scales quadratically with sequence length ($O(N^2)$), processing longer sequences at the model’s native 10 ms resolution would result in prohibitive computational costs. Furthermore, a domain mismatch exists: Whisper is optimized for speech recognition, introducing functional redundancy when applied to the semi-periodic, physiological patterns of breathing. In contrast, our Dual-Stream CRNN architecture employs a customized CNN to extract high-resolution spectral features, effectively capturing the fine-grained acoustic signatures of breathing and snoring. Combined with an auxiliary energy stream that explicitly encodes signal intensity dynamics, this lightweight feature representation allows the BiLSTM to efficiently leverage extended 60 s segments with overlapping strides. This approach not only captures the complete temporal context of respiratory cycles but also utilizes the LSTM gating mechanisms (i.e., the forget and input gates) to selectively focus on relevant state transitions while filtering out inter-breath noise, ultimately achieving superior performance with significantly lower computational overhead.
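The quadratic cost argument can be checked with simple arithmetic. The sketch below counts pairwise attention interactions for a single head in a single layer, assuming one token per 10 ms frame as stated above; any downsampling inside a real encoder is deliberately ignored, so the absolute numbers are illustrative and only the ratio matters.

```python
def attention_pairs(duration_s, frame_ms=10):
    """Return (sequence length, number of pairwise attention scores).

    Assumes one token per frame and full (non-sparse) self-attention.
    """
    n_frames = duration_s * 1000 // frame_ms
    return n_frames, n_frames * n_frames


n20, pairs20 = attention_pairs(20)
n60, pairs60 = attention_pairs(60)
print(f"20 s: {n20} frames, {pairs20:,} pairs")
print(f"60 s: {n60} frames, {pairs60:,} pairs")
print(f"growth factor: {pairs60 // pairs20}x")
```

Tripling the clip length from 20 s to 60 s therefore multiplies the attention cost by nine, whereas a recurrent layer's cost grows only linearly with the number of frames.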

Furthermore, to objectively justify the “lightweight” designation, we benchmarked the inference latency of the aforementioned CRNN model by Serrano et al. and the Transformer-based ApneaWhisper on the same GPU used in our study. It should be noted that the baseline metrics presented here are estimates derived from architectures reproduced according to the specifications detailed in their respective studies.

For the CRNN-based model by Serrano et al. (5.98 M parameters), the measured inference latency was 172.92 ms/min. This metric is primarily driven by the sliding-window mechanism with a 1 s stride, which necessitates 60 separate forward passes per minute, resulting in cumulative computational overhead. Regarding the Transformer-based ApneaWhisper (40.17 M parameters), the latency was recorded at 146.57 ms/min. While this architecture benefits from hardware parallelism, its efficiency remains inherently constrained by the iterative nature of autoregressive decoding.

In comparison, our Dual-Stream CRNN (1.73 M parameters) achieves a total latency of only 11.60 ms/min. By processing the full 60 s context in a single forward pass, our architecture avoids both the repetitive overhead of sliding windows and the sequential bottleneck of autoregressive generation, making it highly suitable for resource-constrained edge deployment.
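For perspective, the reported per-minute latencies can be converted into relative speed-ups and whole-night processing times. The figures below are those quoted in the text; the 8 h recording length is an assumption made for illustration.

```python
# Inference latency in ms per minute of audio, as reported above.
LATENCY_MS_PER_MIN = {
    "Serrano et al. CRNN": 172.92,
    "ApneaWhisper": 146.57,
    "Dual-Stream CRNN": 11.60,
}


def night_latency_s(ms_per_min, hours=8.0):
    """Total inference time in seconds for a recording of `hours` (assumed 8 h)."""
    return ms_per_min * hours * 60 / 1000


def speedup(baseline_ms, ours_ms):
    """How many times faster `ours_ms` is than `baseline_ms`."""
    return baseline_ms / ours_ms


ours = LATENCY_MS_PER_MIN["Dual-Stream CRNN"]
for name, latency in LATENCY_MS_PER_MIN.items():
    print(f"{name}: {night_latency_s(latency):.1f} s per night, "
          f"{speedup(latency, ours):.1f}x our latency")
```

These values describe GPU inference only; latency on a smartphone-class processor would need to be measured separately.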

4.4. Comparison with State-of-the-Art Non-Contact OSA Screening Methods

To benchmark our model against current state-of-the-art methods, we compiled data from representative non-contact audio-based studies published within the past five years. We focused exclusively on literature that explicitly documented sensitivity and specificity across varying AHI thresholds, allowing for a direct performance assessment against our proposed framework.

Table 4 summarizes the sensitivity and specificity across three clinical thresholds (AHI ≥ 5, 15, and 30 events/h). It should be noted that these comparisons are intended for reference only, as the included studies differ substantially in dataset characteristics, including sample size, distribution of AHI severity, ethnic composition, demographic profiles, and related factors. Such variations may influence reported performance and limit the direct comparability of results across studies. Importantly, our study included external validation across independent cohorts, which may enhance the robustness and generalizability of the reported results compared with studies evaluated on single-center or homogeneous datasets.

For mild OSA screening, high sensitivity is particularly important to reduce the risk of missed diagnoses. Our model achieved a sensitivity of 98.0%, which is higher than that reported in the large-scale deep learning study by Han et al. (92.6%) and the global meta-analysis by Tan et al. (94.3%). While previous studies have often observed a trade-off between sensitivity and specificity, such as the work by Cho et al., who reported a specificity of 64.7%, our model achieved a specificity of 88.9%. This suggests that the proposed approach may provide improved discrimination between mild OSA patients and healthy individuals while limiting excessive false alarms.

At the moderate threshold, the model demonstrated a relatively balanced performance, with a sensitivity of 89.5% and a specificity of 90.9%. These results are comparable to those reported by Han et al. (sensitivity 90.9%, specificity 94.4%) and show improved sensitivity compared with the results reported by Wang et al. (83.5%) and Xie et al. (84.7%).

For the identification of severe OSA, the proposed framework showed stable performance. While some existing methods reported reduced sensitivity in severe cases (for example, Xie et al. reported a sensitivity of 58.1%), our model achieved a sensitivity of 89.3%. In addition, a specificity of 100% was obtained for severe cases. Although this result should be interpreted with caution, it indicates that the model did not misclassify non-severe subjects as severe OSA in this dataset, which may be beneficial for clinical decision support.
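The threshold-wise metrics compared in this section follow from binarizing the reference and predicted AHI at each cut-off. The sketch below shows that computation on a small set of invented AHI values; it is not the study's evaluation code, and the data are purely hypothetical.

```python
def sens_spec(true_ahi, pred_ahi, threshold):
    """Sensitivity and specificity for the binary label `AHI >= threshold`."""
    tp = fn = tn = fp = 0
    for truth, pred in zip(true_ahi, pred_ahi):
        actual, predicted = truth >= threshold, pred >= threshold
        if actual and predicted:
            tp += 1
        elif actual:
            fn += 1
        elif predicted:
            fp += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity


# Hypothetical reference (PSG) and predicted AHI values for eight subjects.
true_ahi = [2.0, 4.0, 8.0, 16.0, 22.0, 35.0, 50.0, 12.0]
pred_ahi = [3.0, 6.0, 7.0, 14.0, 25.0, 28.0, 55.0, 13.0]

for threshold in (5, 15, 30):
    sens, spec = sens_spec(true_ahi, pred_ahi, threshold)
    print(f"AHI >= {threshold}: sensitivity {sens:.2f}, specificity {spec:.2f}")
```

Because each threshold redefines which subjects count as positive, a single set of AHI predictions can yield quite different sensitivity and specificity at 5, 15, and 30 events/h, and small cohorts make each figure sensitive to one or two borderline subjects.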

4.5. Limitations

While our framework demonstrates robust screening performance, several limitations warrant acknowledgement:

Sample Size and Demographics: Although the study validated the model across two distinct centers, the total sample size remains relatively modest. Furthermore, the cohort consists exclusively of adults. Since the pathophysiology and acoustic characteristics of pediatric OSA may differ significantly from those of adults, our current findings cannot be directly extrapolated to pediatric populations without separate validation.

Impact of Data Imbalance on Severity Classification: A notable limitation arises from the high prevalence of severe OSA within the training dataset derived from the Sismanoglio cohort. As observed in the external validation, the model achieves high sensitivity for severe cases, yet its performance variability at lower severity thresholds suggests a bias toward the dominant class. This phenomenon, known as spectrum bias, represents a common challenge in clinical AI. Models trained on tertiary care cohorts with high disease prevalence often demonstrate superior performance on severe cases while exhibiting reduced accuracy for borderline mild or moderate presentations. To mitigate this skew, future work must prioritize training on larger, community-based cohorts that feature a balanced distribution of AHI severity.

Recording Equipment and Real-world Deployment: Both datasets utilized high-fidelity professional microphones (e.g., Behringer ECM8000, Sony PCM-D10) in controlled hospital environments. We have not yet quantified the performance degradation that might occur when using consumer-grade hardware. Deploying this algorithm on portable devices (e.g., smartphones) introduces specific challenges, including variable microphone frequency responses, automatic gain control (AGC) artifacts, and aggressive audio compression algorithms, all of which could distort the spectral features of breathing sounds. Additionally, uncontrolled home environments introduce high-interference acoustic scenarios, such as loud snoring from a bed partner, television noise, or air conditioning sounds, that were not fully represented in our clinical recordings.

Inherent Acoustic Limits and Emerging Clinical Metrics: As detailed in the section on Challenges in Hypopnea Detection and Clinical Implications, relying solely on audio signals presents inherent limitations due to the acoustic ambiguity of hypopnea events and the absence of multimodal physiological confirmation. Furthermore, this lack of multimodal data restricts the assessment of emerging clinical metrics that are increasingly critical for patient prognosis. Indicators such as hypoxic burden [38] and respiratory event-related pulse rate response [39] have gained significant attention for their ability to predict cardiovascular risks associated with OSA. However, a single-modality audio framework is fundamentally incapable of measuring these physiological consequences, limiting its utility in screening for patients at high risk of comorbidities. Consequently, future research should investigate multi-modal fusion approaches, such as integrating audio analysis with data from wearable pulse oximeters, to capture these physiological dimensions and provide a more reliable, multi-dimensional screening outcome.

4.6. Future Directions

Edge Deployment on Consumer Devices: We aim to leverage the lightweight nature of our CRNN architecture to deploy the model directly on smartphones for edge computing. This on-device processing approach not only enables real-time monitoring without internet dependency but also ensures user data privacy by eliminating the need to upload sensitive audio recordings to the cloud.

Wearable-Integrated Multi-modal Fusion: To overcome the acoustic limitations in detecting hypopnea, we plan to investigate multi-modal fusion by integrating audio analysis with data from consumer wearables (e.g., smartwatches). Specifically, combining audio signals with SpO2 and ECG/PPG data could significantly enhance the recognition accuracy of subtle respiratory events. Furthermore, wearable devices can provide a precise estimation of Total Sleep Time rather than Total Recording Time, allowing for a much more accurate calculation of the AHI in home settings.

High-Standard Database Construction: To address the limitations of current public datasets, future efforts must focus on establishing a large-scale, high-quality database derived from community-based populations. This initiative should prioritize a balanced distribution of AHI severity and respiratory event phenotypes, ensuring that hypopnea events are sufficiently represented to prevent the masking effect where high apnea counts obscure poor hypopnea detection. To guarantee the reliability of the gold standard, polysomnography annotations should be performed independently by two certified technicians, with formal evaluation of inter-scorer agreement. Additionally, collecting audio data directly from uncontrolled home environments is essential to validate model robustness against real-world acoustic interference.
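Regarding the distinction between Total Sleep Time and Total Recording Time raised under wearable integration, the effect of the denominator on the AHI can be shown with a short calculation. The event count and durations below are hypothetical, and the function is an illustrative sketch rather than the study's scoring code.

```python
def ahi(n_events, hours):
    """Apnea-hypopnea index: respiratory events per hour of the chosen denominator."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return n_events / hours


# Hypothetical night: 120 detected events, 8 h in bed, of which 6 h asleep.
n_events = 120
ahi_trt = ahi(n_events, hours=8.0)  # denominator: Total Recording Time
ahi_tst = ahi(n_events, hours=6.0)  # denominator: Total Sleep Time

print(f"AHI using recording time: {ahi_trt:.1f} events/h")
print(f"AHI using sleep time:     {ahi_tst:.1f} events/h")
```

Because recording time is never shorter than sleep time, dividing by it can only lower the index, which is why an audio-only system without sleep staging tends to understate the AHI for subjects who spend long periods awake.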