1. Introduction

Refractive errors are among the most prevalent causes of correctable visual impairment worldwide. They affect over two billion individuals, particularly those in younger populations [1]. Although subjective refraction remains the clinical gold standard for prescription determination, it is a time-consuming process that is examiner-dependent and less feasible in pediatric and communication-limited groups, as well as in high-volume care settings [2]. Objective techniques, such as autorefraction, provide faster and more standardized measurements; however, the agreement with subjective refraction varies depending on age, accommodative status, and optical media quality. Consequently, clinical refinement is often required [3,4].

The use of artificial intelligence (AI) and machine learning (ML) methods in the assessment of refractive errors has increased substantially in recent years. These approaches can be broadly categorized into three groups: models that leverage routine tabular clinical data (e.g., biometric or optical measurements), deep learning systems trained on ocular images, and multimodal fusion frameworks that integrate complementary sources of information. Multimodal data fusion has been explored extensively in the broader deep learning literature. This general strategy combines heterogeneous inputs to improve predictive robustness and generalizability across complex biomedical applications [5]. ML models that incorporate biometric, optical, and demographic features have shown superior accuracy compared with conventional regression or autorefractor-only estimates in both adult and pediatric populations [6,7,8]. Concurrently, deep learning models trained on retinal fundus or ultra-widefield images have enabled direct prediction of spherical equivalent and detection of high myopia, underscoring the potential of image-based inference [9,10,11].

More recently, multimodal fusion strategies have been investigated to extend predictions to the full set of refractive components, including sphere, cylinder, and axis, by integrating heterogeneous data sources such as ocular images, biometric parameters, and clinical measurements [9,12]. Furthermore, ML-based forecasting models have proved effective in predicting myopia progression and identifying individuals at risk of high myopia, thereby supporting risk-based follow-up strategies [13,14,15]. Systematic reviews and bibliometric analyses underscore the rapid expansion of AI applications in refractive error management, while also emphasizing persistent challenges related to external generalizability, device dependence, and heterogeneous performance across populations and settings [10,16,17].

Despite this progress, several limitations remain. A substantial proportion of published models depend on wavefront aberrometry, advanced ocular imaging, or large-scale image datasets, which may not be readily available in routine ophthalmology clinics or primary care settings [7,9,10,11,12,16]. Furthermore, few studies have systematically examined clinical determinants of prediction failure, such as near-emmetropic refractive status, age-related optical changes, or non-cycloplegic measurement conditions, that may critically influence accuracy in real-world settings [8,17]. Consequently, the role of AI-based refractive estimation in safely supporting clinical decision-making or patient triage without full subjective refinement remains incompletely defined.

The present study aims to address these gaps by developing a machine-learning model that predicts subjective refraction using routinely available, non-cycloplegic autorefraction and keratometric data, without reliance on specialized imaging or biometric equipment. In addition to evaluating the overall predictive performance, we specifically investigate clinical factors associated with reduced prediction accuracy. This enables us to identify the conditions under which artificial intelligence-assisted refraction may be considered reliable as a decision-support tool. This approach is intended to facilitate the safe and pragmatic integration of AI-based refraction into standard ophthalmic practice, particularly in high-volume clinical settings.

2. Materials and Methods

This retrospective cross-sectional study was conducted at a tertiary referral ophthalmology center between 1 January 2023 and 1 January 2025. The study was conducted in accordance with the tenets of the Declaration of Helsinki and received approval from the institutional ethics committee (Approval No. E-25-431, 16 April 2025). Written informed consent was obtained from all adult participants and from the legal guardians of minors. Prior to analysis, all data were de-identified to ensure patient confidentiality.

2.1. Study Population and Data Collection

The electronic medical records of 1006 consecutive patients were systematically reviewed. Eligible participants were required to be ≥7 years old, literate, and sufficiently cooperative for subjective refraction. Best-corrected visual acuity (BCVA) of 6/6 in each eye monocularly was mandatory to ensure reliable endpoints.

Exclusion criteria included any prior ocular surgery; keratoconus or corneal ectasia; corneal opacity; lens opacity of grade ≥1; posterior-segment pathology affecting refraction; narrow anterior chamber angles; congenital or acquired anterior-segment anomalies; chronic ocular inflammation; prior treatment for amblyopia or strabismus; and incomplete clinical records.

Following patient-level eligibility screening, 1006 patients (2012 eyes) met the initial inclusion criteria. Subsequently, an eye-level filtering step was applied based on predefined refractive-error thresholds (see Section 2.3). A total of 156 eyes were excluded at this stage, yielding a final dataset of 1856 eyes for machine-learning model development (see Figure 1). All participants were recruited from a single tertiary referral center in Türkiye, and the study population was predominantly Middle Eastern/Caucasian.

2.2. Objective and Subjective Refraction Procedures

Objective measurements were obtained using an automated kerato-refractometer (KR-1; Topcon, Tokyo, Japan) under standardized low-illumination conditions (~80 lux), with internal fogging to reduce accommodation and monocular fixation on a 6 m high-contrast target. After the initial acquisition, three consecutive readings were obtained and averaged. Measurements deviating by more than 1.00 D triggered a repeat acquisition. Automatic centration and pupil alignment routines ensured consistency. The autorefractor was operated in accordance with the manufacturer’s standard maintenance and calibration protocols. All measurements were obtained under standardized conditions by two experienced ophthalmologists (O.C. and G.O.) to ensure procedural consistency and minimize operator-related variability.

Cycloplegia was not routinely performed, in order to preserve a realistic routine workflow. Eyes requiring cycloplegia for suspected latent hyperopia were excluded from the study.

Subjective refraction was performed by two experienced ophthalmologists (O.C. and G.O.) using a phoropter and Snellen chart positioned at 6 m (~300 lux). The autorefraction result served as the starting point, followed by fogging and maximum-plus refinement to optimize visual acuity. Duochrome balancing was applied to confirm focal neutrality. Astigmatism was refined using Jackson cross-cylinder testing (±0.25 D) with axis adjustments in 5-degree increments, followed by 2.5-degree refinement when cylinder power was ≥1.50 D. Additional reversal checks were employed to ensure reliability. The sequence began with binocular balancing, proceeded to monocular optimization, and concluded with the recording of final values in minus-cylinder notation. Any discrepancy exceeding 0.25 D required confirmation by a senior reviewer.

2.3. Refractive Parameters and Classification

Refractive error was expressed using power-vector notation [18], whereby sphere, cylinder, and axis values were converted to spherical equivalent (SE), J0, and J45. The magnitude of the cylinder (CP) was derived from the J components. Detailed equations for forward and inverse transformations are provided in Appendix A to ensure reproducibility. Refractive categories were defined as follows: myopia (SE ≤ −0.50 D), hyperopia (SE ≥ +0.50 D), and emmetropia (|SE| < 0.50 D). The presence of astigmatism was defined as |CP| ≥ 0.50 D. Eyes failing to meet the established refractive-error criteria were excluded from model training.
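The conversion described above can be sketched in Python. This is a minimal illustration that assumes the standard power-vector formulation (SE = S + C/2, J0 = −(C/2)·cos 2α, J45 = −(C/2)·sin 2α) and minus-cylinder output; the exact equations used in the study are those in Appendix A, which is not reproduced here. The function names are hypothetical.

```python
import math


def to_power_vector(sphere, cylinder, axis_deg):
    """Forward transform: (S, C, axis) -> (SE, J0, J45), all powers in diopters."""
    theta = math.radians(axis_deg)
    se = sphere + cylinder / 2.0
    j0 = -(cylinder / 2.0) * math.cos(2.0 * theta)
    j45 = -(cylinder / 2.0) * math.sin(2.0 * theta)
    return se, j0, j45


def from_power_vector(se, j0, j45):
    """Inverse transform: (SE, J0, J45) -> (S, C, axis) in minus-cylinder notation."""
    cp = 2.0 * math.hypot(j0, j45)      # cylinder magnitude (CP), always >= 0
    cylinder = -cp                      # minus-cylinder convention
    sphere = se - cylinder / 2.0
    # Axis is returned in [0, 180); an axis of 0 is equivalent to the clinical 180.
    axis_deg = math.degrees(0.5 * math.atan2(j45, j0)) % 180.0
    return sphere, cylinder, axis_deg


def refractive_category(se):
    """Category by spherical equivalent, using the thresholds stated in the text."""
    if se <= -0.50:
        return "myopia"
    if se >= 0.50:
        return "hyperopia"
    return "emmetropia"
```

For example, a prescription of −2.00 / −1.00 × 90 maps to SE = −2.50 D, J0 = −0.50 D, and J45 ≈ 0 D, and the inverse transform recovers the original sphere, cylinder, and axis.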

2.4. Machine-Learning Model Development

Subjective SE, J0, and J45 served as target outputs for model prediction. A multi-output histogram gradient boosting regressor was implemented in Python (version 3.13.7) using Scikit-learn (version 1.7) and the KNIME Analytics Platform. The input features encompassed objective refraction (S, C, axis), derived vector components (SE, J0, J45), keratometry (K1, K2), and demographic variables (age, sex). Predicted vector outputs were subsequently transformed back into cylinder power and axis using inverse power-vector equations to enable clinical interpretation.

The development of machine learning (ML) models was based on data from both eyes for each subject. Hyperparameter optimization was conducted using a combination of grid search and patient-level GroupKFold cross-validation to prevent information leakage between fellow eyes. The search space was deliberately constrained to plausible ranges to control model complexity. Specifically, the depth of the trees was constrained to shallow configurations, with a maximum depth of 3. The histogram bin resolution was restricted to 64 or 128 bins, and the learning rates were maintained at low to moderate levels (0.02, 0.03, 0.05). Additional regularization was imposed through minimum samples per leaf (20 or 50) and L2 regularization penalties (5.0, 10.0). The maximum number of boosting iterations was set at 100 or 200, with early stopping determining the effective number of iterations. Detailed workflows and parameter configurations are provided in Appendix A and Appendix B.

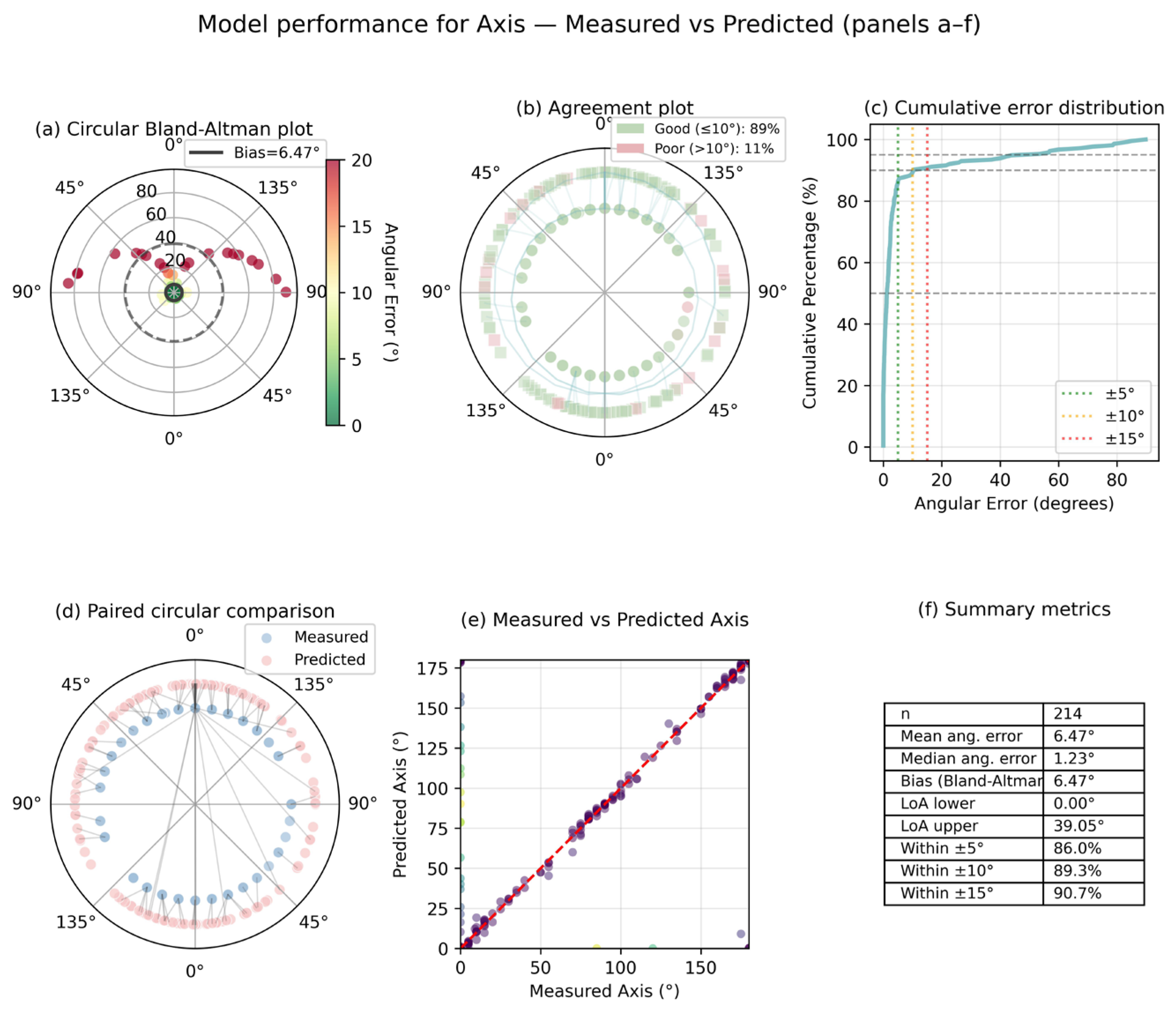

Model performance was evaluated using the coefficient of determination (R²), the mean absolute error (MAE), and the root-mean-square error (RMSE). Given the circular nature of the astigmatic axis, angular residuals were wrapped within the 180° periodicity by computing the minimum angular difference (|Δθ| or 180° − |Δθ|). This was used to derive circular R² and to eliminate boundary-related directional bias at the 0°/180° transition. To assess whether nonlinear modeling provided performance gains over linear regression, additional gradient boosting models were evaluated within the training dataset for comparative purposes. This comparison was used solely to inform model selection; only the selected histogram-based gradient boosting model was subsequently evaluated on the independent held-out test dataset. Model reporting followed the TRIPOD-AI checklist.

2.5. Model Performance Metrics

Model performance was evaluated on an independent, temporally separated held-out test dataset comprising patients recruited during a later period, with no patient overlap with the training data. The test dataset was not accessed during hyperparameter tuning or model development and was used exclusively for final performance evaluation. The prediction performance for spherical and cylindrical components was evaluated using the coefficient of determination (R²), mean absolute error (MAE), mean squared error (MSE), and root mean squared error (RMSE), with MAE and RMSE reported in diopters (D). Given the circular nature of astigmatic axis data, angular residuals were wrapped within the 180° periodicity by computing the minimum angular difference (|Δθ| or 180° − |Δθ|). Axis prediction performance was accordingly evaluated using circular correlation (ρ), mean and median absolute angular error, and clinically relevant success rates (≤5°, ≤10°, and ≤15°), reported in degrees (°).
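The axis wrapping and the angular summary metrics can be illustrated with a short Python sketch. The function names are hypothetical, and circular correlation is deliberately left out because the text does not specify which estimator was used.

```python
import statistics


def axis_error(pred_deg, true_deg):
    """Minimum angular difference between two axes under 180-degree periodicity."""
    diff = abs(pred_deg - true_deg) % 180.0
    return min(diff, 180.0 - diff)


def axis_summary(pred, true, tolerances=(5.0, 10.0, 15.0)):
    """Mean and median absolute angular error plus success rate per tolerance."""
    errors = [axis_error(p, t) for p, t in zip(pred, true)]
    n = len(errors)
    return {
        "mean": sum(errors) / n,
        "median": statistics.median(errors),
        "success": {tol: sum(e <= tol for e in errors) / n for tol in tolerances},
    }
```

A predicted axis of 178° against a reference of 2° yields an error of 4° rather than 176°, which is the boundary effect the wrapping is meant to remove.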

2.6. Statistical Analysis

Statistical analyses were performed using Python (version 3.13.7) with the SciPy, Statsmodels (version 0.14.4), and Scikit-learn libraries. Continuous variables were summarized as mean ± standard deviation (SD) or median (interquartile range), depending on their distribution. Normality was assessed using the Shapiro–Wilk test and inspection of Q–Q plots. Although both eyes were used for machine-learning development, only right eyes were included in inferential statistical analyses to avoid correlated-eye bias. Prediction outcomes were dichotomized into well-predicted and poorly predicted eyes using two predefined definitions:

Primary definition: |ΔS| ≤ 0.50 D, |ΔC| ≤ 0.50 D, |ΔAxis| ≤ 10°;

Strict definition (sensitivity analysis): |ΔS| ≤ 0.25 D, |ΔC| ≤ 0.25 D, |ΔAxis| ≤ 5°.
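The two definitions can be expressed as a simple rule. The sketch below assumes that an eye counts as well-predicted only when all three criteria are met simultaneously and that the axis difference has already been wrapped to the 0° to 90° range; `is_well_predicted` is a hypothetical name.

```python
def is_well_predicted(d_sphere, d_cylinder, d_axis_deg, strict=False):
    """Return True when sphere, cylinder, and axis errors are all within tolerance.

    d_sphere and d_cylinder are signed differences in diopters;
    d_axis_deg is the wrapped angular difference in degrees.
    """
    # Primary definition: 0.50 D / 10 degrees; strict definition: 0.25 D / 5 degrees.
    tol_diopters, tol_axis = (0.25, 5.0) if strict else (0.50, 10.0)
    return (
        abs(d_sphere) <= tol_diopters
        and abs(d_cylinder) <= tol_diopters
        and abs(d_axis_deg) <= tol_axis
    )
```

An eye with errors of +0.25 D sphere, −0.50 D cylinder, and 8° axis would be well-predicted under the primary definition but poorly predicted under the strict one.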

Group comparisons were then conducted using Mann–Whitney U tests or independent-samples t-tests for continuous variables and chi-square or Fisher’s exact tests for categorical variables, as appropriate. Effect sizes for non-parametric comparisons were quantified using epsilon-squared (ε²). To identify independent predictors of poor prediction, multivariable logistic regression was performed using only objective pre-refraction variables (age, sex, objective SE, cylindrical magnitude, axis, and keratometry) to avoid circularity with the reference standard. Odds ratios (ORs) with 95% confidence intervals (CIs) were reported. A two-sided p-value less than 0.05 was considered statistically significant.
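For readers less familiar with how ORs and their intervals follow from a fitted logistic model, the relationship can be sketched as below. It assumes Wald-type intervals (coefficient ± 1.96 standard errors on the log-odds scale), which the text does not explicitly confirm; the function name and the example inputs are hypothetical and are not results from this study.

```python
import math


def odds_ratio_ci(beta, std_err, z=1.959964):
    """Convert a logistic coefficient and its standard error to an OR with a Wald 95% CI."""
    odds_ratio = math.exp(beta)
    lower = math.exp(beta - z * std_err)
    upper = math.exp(beta + z * std_err)
    return odds_ratio, lower, upper


# Hypothetical coefficient for illustration only.
or_value, ci_low, ci_high = odds_ratio_ci(beta=0.40, std_err=0.15)
```

An interval that excludes 1 corresponds to a coefficient that differs from zero at the two-sided 0.05 level under the same Wald assumption.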

4. Discussion

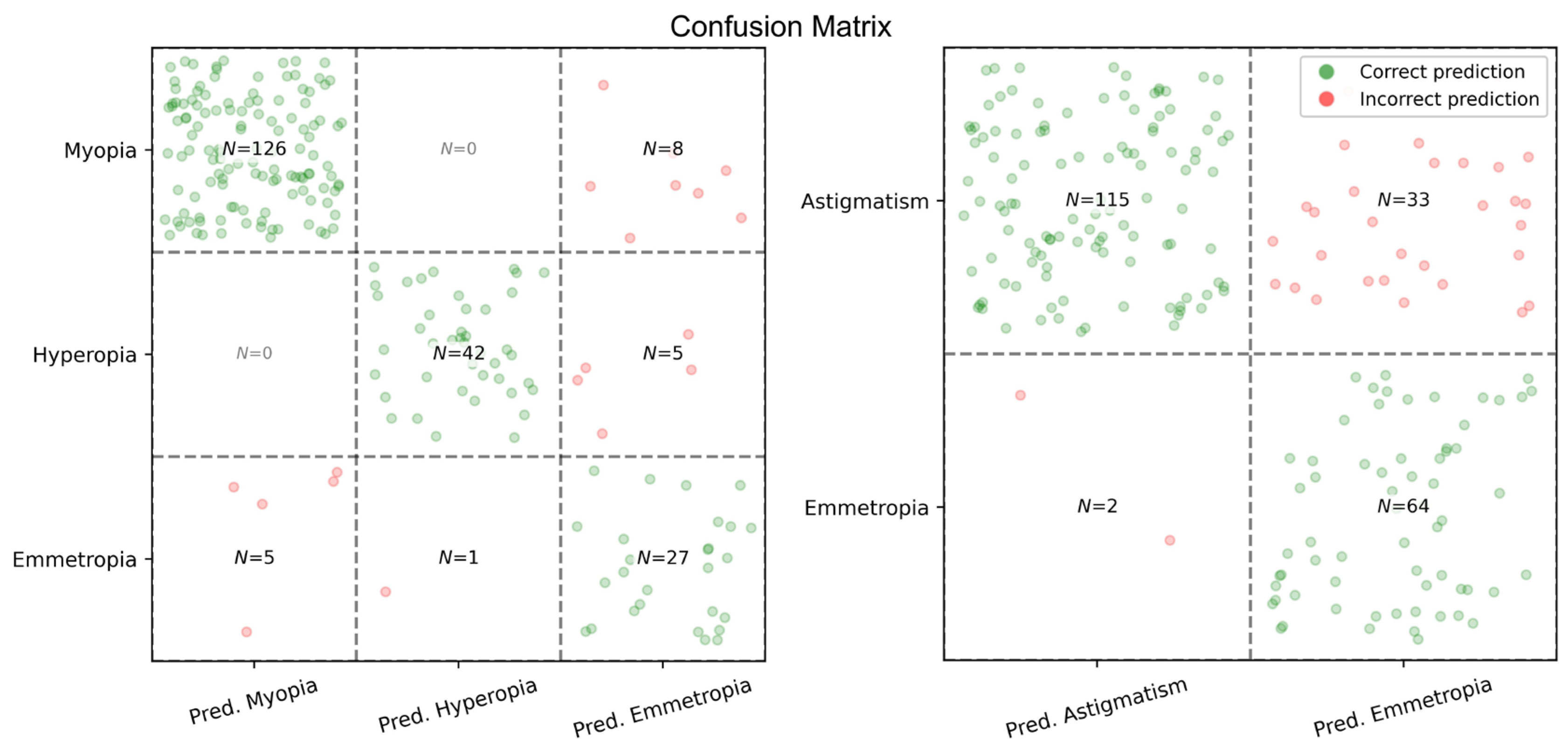

The present study demonstrates that a machine-learning model trained exclusively on routinely available, non-cycloplegic autorefraction and keratometric data can achieve clinically meaningful prediction of subjective refraction. Notably, the model predicted not only spherical equivalent but also cylindrical power and astigmatic axis, using inputs that are part of standard ophthalmic examinations. By relying on readily available measurements rather than specialized imaging or biometric devices, the present study addresses a significant barrier to the practical implementation of AI-assisted refraction in real-world settings.

A comparison of model performance across refractive components revealed that prediction accuracy was highest for spherical and cylindrical components. In the independent test dataset, both parameters exhibited low absolute errors and negligible mean signed differences, indicating the absence of systematic overcorrection or undercorrection. From a clinical perspective, this lack of directional bias is particularly important, as even minor systematic shifts in spherical or cylindrical power can result in patient discomfort or diminished visual satisfaction despite relatively low absolute error values.

These results are in line with, and in some cases comparable in accuracy to, previous machine-learning-based refractive prediction studies that incorporated biometric measurements such as axial length, anterior corneal surface metrics, or wavefront aberrometry [6,7,8,12]. However, many of these approaches depend on advanced instrumentation that is not routinely available in primary care or high-volume ophthalmology clinics. In contrast, the present study demonstrates that comparable predictive performance for spherical and cylindrical components can be achieved using only standard autorefractor and keratometry data. This distinction is clinically significant because it prioritizes scalability and feasibility over maximal theoretical accuracy. Together, these findings indicate that clinically acceptable refractive prediction can be achieved without reliance on specialized imaging or biometric devices.

Compared with multivariable linear regression, gradient boosting approaches showed a consistent improvement in predictive performance across spherical, cylindrical, and axis components within the training dataset. This finding suggests that nonlinear modeling may capture the relationships underlying refractive outcomes more effectively than simple linear correction of autorefractor bias. The consistency of these gains across several nonlinear models supports the presence of a clinically meaningful nonlinear structure, rather than mere bias adjustment. Although the high explained variance for spherical and cylindrical components warrants cautious interpretation, model complexity was explicitly constrained through shallow tree depth, regularization, and patient-level grouped cross-validation, thereby reducing the likelihood of overfitting. Given the comparable performance across gradient boosting approaches, histogram-based gradient boosting was selected due to its greater stability in the presence of measurement noise inherent in routine autorefraction and keratometry data. This choice prioritized stability and reliability in real-world clinical use over marginal gains in predictive accuracy [6,7,12].

While the model demonstrated strong performance for spherical and cylindrical components, the prediction of astigmatic axis proved to be inherently more challenging. This discrepancy is not unexpected and reflects fundamental properties of axis measurements rather than a limitation of the modeling approach. Unlike spherical and cylindrical power, the astigmatic axis is a circular variable with 180° periodicity, for which conventional linear error metrics are inappropriate and may substantially misrepresent true prediction error, particularly near the 0°/180° boundary. Accordingly, axis performance in the present study was evaluated using circular correlation and angular error–based metrics with residuals wrapped within the 180° periodicity, ensuring mathematically and clinically meaningful interpretation [18]. Despite appropriate circular handling, axis prediction accuracy remained lower than that of spherical and cylindrical components. This finding is consistent with prior refractive modeling studies, which have reported greater intrinsic variability in axis estimation compared with spherical and cylindrical power, particularly in eyes with low cylindrical magnitude [6,7,12]. In such cases, small absolute changes in cylinder magnitude may result in disproportionately large angular deviations despite minimal optical or clinical impact. Accordingly, absolute angular error alone may overstate the clinical relevance of axis disagreement when cylinder power is low. This consideration underscores the importance of stratified analyses that contextualize axis error by cylinder magnitude and astigmatism type.

Stratified analyses further demonstrated that axis prediction accuracy was substantially higher in eyes with moderate-to-high astigmatism and with-the-rule or against-the-rule configurations, whereas larger angular deviations were predominantly confined to low-cylinder or oblique astigmatism. Similar patterns have been reported in previous machine-learning–based refractive studies, supporting the interpretation that reduced axis performance is largely confined to refractive scenarios in which axis precision is both more variable and less clinically consequential [6,12].

Beyond aggregate accuracy metrics, we examined the clinical conditions under which prediction failure is most likely to occur, that is, which eyes were more likely to fall outside clinically acceptable error thresholds. A pragmatic primary definition that reflects routine clinical tolerance (|ΔS| ≤ 0.50 D, |ΔC| ≤ 0.50 D, |ΔAxis| ≤ 10°) was used in a multivariable logistic regression analysis. This analysis identified keratometric parameters, particularly steeper K2 values, and objective cylindrical power as independent predictors of poorer prediction performance. Age, sex, and spherical power were not independently associated with prediction failure after adjustment. Taken together, these findings indicate that corneal steepness and increasing cylindrical complexity may introduce additional sources of variability that are not fully captured by routine autorefractor measurements. Importantly, although low cylinder magnitude was associated with larger angular axis errors in univariate analyses, higher cylindrical power emerged as a determinant of overall prediction failure in multivariable modeling, reflecting the combined contribution of sphere, cylinder, and axis errors rather than axis instability alone. By identifying predictors of reduced accuracy, the present analysis provides clinically actionable insight into the conditions under which AI-assisted refraction is most likely to perform reliably.

From a practical standpoint, the findings support a hybrid implementation strategy in which machine-learning–assisted refraction functions as a decision-support tool rather than a replacement for subjective refraction. In cases of definite refractive errors and moderate-to-high astigmatism, the model provides precise initial values that have the potential to enhance the efficiency of clinical procedures. Conversely, eyes exhibiting low cylinder magnitude, oblique astigmatism, or steeper corneal profiles can be readily identified as requiring full subjective refinement. This approach aligns with current recommendations for the responsible and trustworthy clinical deployment of artificial intelligence in eye care [19,20].

The current model provides point estimates only and was intentionally evaluated under this constraint to reflect routine autorefractor output. In this context, it is important to note that model performance was evaluated at the population level, without explicit modeling of individual-level prediction uncertainty. Uncertainty-aware approaches, including prediction intervals, quantile regression, and confidence scoring, are recognized as important next steps for safe clinical implementation and are prioritized for future prospective studies.

Several limitations of the present study must be acknowledged. First, all measurements were obtained using a single autorefractor model (Topcon KR-1). Consequently, the model’s predictive performance may partly reflect learned instrument-specific characteristics rather than purely device-independent refractive patterns. Autorefractors differ across manufacturers in optical design, internal algorithms, keratometric assumptions, and calibration protocols, which may limit the direct transferability of the present findings across platforms. Accordingly, deployment on alternative autorefractors (e.g., Nidek or Zeiss) would likely require device-specific recalibration or modest local fine-tuning to correct for systematic offsets. Second, the study population was derived from a single tertiary referral center and was predominantly Middle Eastern/Caucasian; therefore, external validation in ethnically diverse cohorts is warranted to confirm population-level generalizability. However, model performance was evaluated using a temporally separated independent test cohort recruited during a later period, which partially mitigates concerns regarding overfitting and temporal instability of model performance.

Moreover, the routine non-use of cycloplegia may have limited the detection of latent hyperopia, particularly in younger individuals, although this reflects common real-world clinical practice. Furthermore, the requirement of best-corrected visual acuity of 6/6 was applied to ensure a reliable reference standard for subjective refraction. However, this criterion may limit the applicability of the findings to patients with media opacities or reduced visual acuity. Additionally, the model did not incorporate biometric predictors such as axial length or higher-order aberrations, which have been shown to improve refractive estimation in borderline or complex cases. Finally, predictors of reduced prediction accuracy were examined retrospectively, and the number of poorly predicted eyes was limited; accordingly, the multivariable logistic regression analysis should be interpreted as exploratory rather than confirmatory.

From a clinical perspective, the integration of AI-assisted refraction as a decision-support tool within routine ophthalmic practice is likely to be more effective than its use as a direct replacement for subjective refraction. In high-volume clinical settings, the proposed model could provide an initial refractive estimate prior to or during subjective refraction, potentially reducing examination time, facilitating patient flow, and preserving clinician oversight. Because the model relies exclusively on routinely acquired autorefraction and keratometry measurements, its implementation would not require additional devices or procedural adjustments. Importantly, such integration may allow clinicians to focus subjective refinement on cases with higher predicted uncertainty, thereby improving efficiency without compromising visual outcomes or patient satisfaction. However, the clinical utility of this approach has yet to be formally quantified in prospective settings, and broader clinical adoption should therefore proceed through cautious, stepwise validation rather than assumed universal deployment.