Prediction of Joint Space Narrowing Progression in Knee Osteoarthritis Patients

Abstract

1. Introduction

2. Materials and Methods

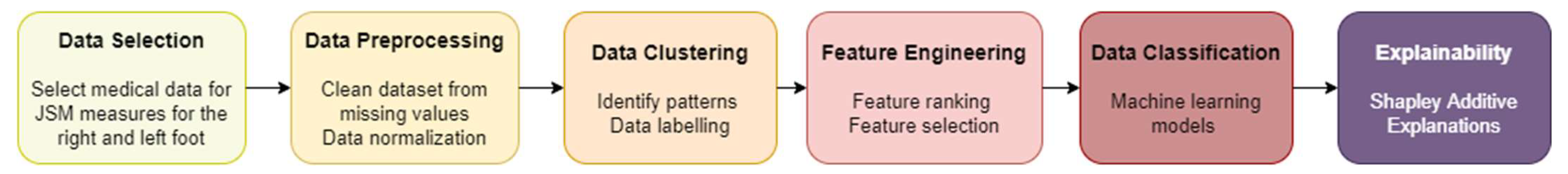

2.1. Methodology

2.1.1. Data Pre-Processing

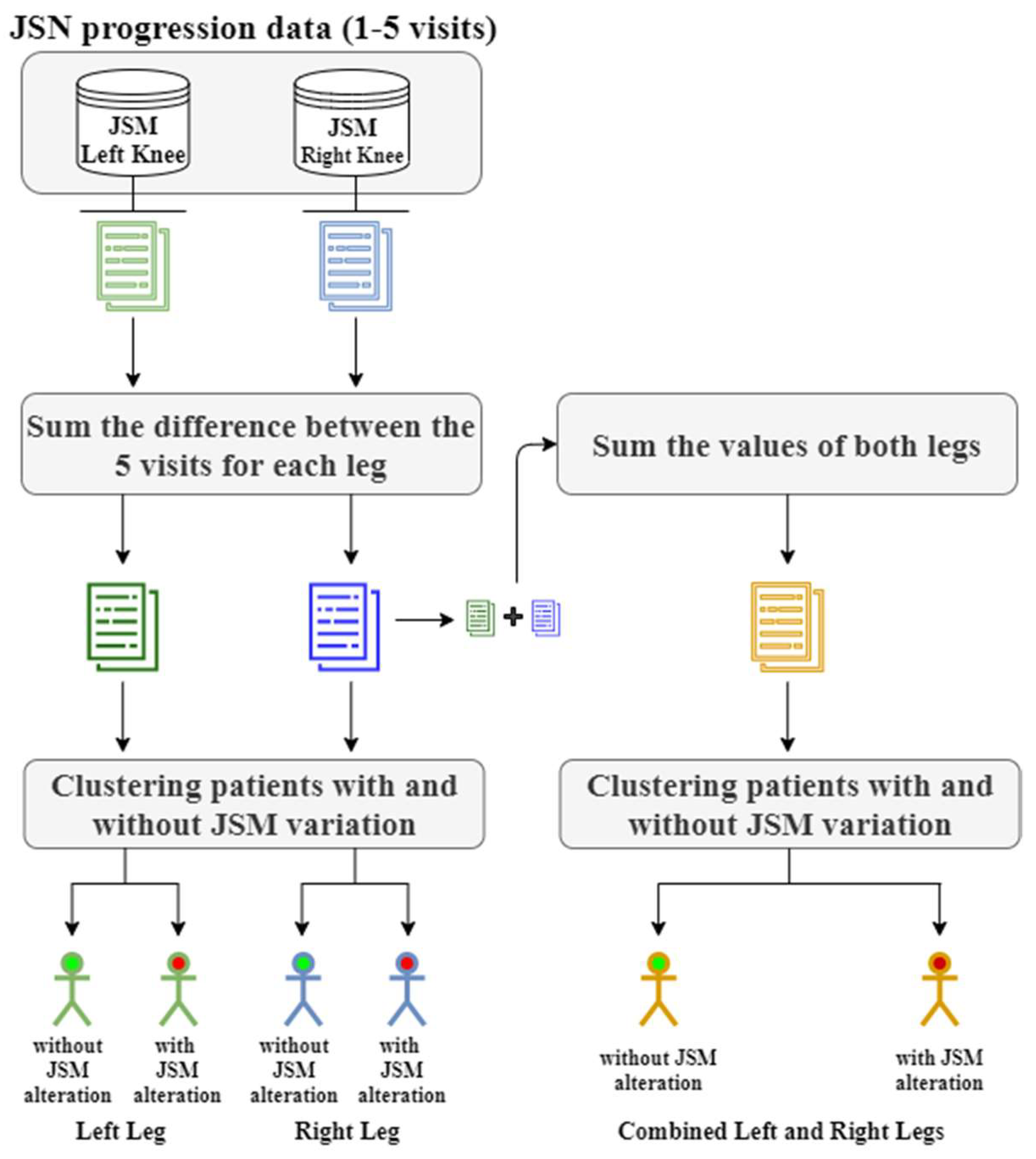

2.1.2. Data Clustering

Algorithm 1. Pseudoalgorithm of the clustering process

Input: JSM measurements of the first five visits

Output: Labeled data

1. For each patient p:
   - Calculate the differences between the consecutive JSM measurements.
   - Calculate the sum of the absolute differences.
2. For each clustering method examined:
   - Evaluate the clustering with the Davies–Bouldin index and calculate the optimal number of clusters.
   - Perform clustering with the optimal number of clusters.
3. Return the labels and evaluate the clustered data.
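The steps of Algorithm 1 can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the patient values are invented, a simple one-dimensional k-means stands in for the clustering methods examined, and the helper names (`progression_score`, `kmeans_1d`, `davies_bouldin`, `choose_k`) are hypothetical.

```python
from statistics import mean


def progression_score(jsm_visits):
    """Sum of absolute differences between consecutive JSM measurements."""
    return sum(abs(b - a) for a, b in zip(jsm_visits, jsm_visits[1:]))


def kmeans_1d(values, k, iterations=50):
    """Plain Lloyd's k-means on scalars (assumed stand-in clustering method).

    Initial centers are spread evenly over the value range, so the result
    is deterministic.
    """
    lo, hi = min(values), max(values)
    centers = [lo + (hi - lo) * (i + 0.5) / k for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda c: abs(v - centers[c])) for v in values]
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            if members:  # an empty cluster keeps its previous center
                centers[c] = mean(members)
    return labels


def davies_bouldin(values, labels):
    """Davies-Bouldin index for scalar data (lower means better separation)."""
    clusters = sorted(set(labels))
    centroid = {c: mean(v for v, lab in zip(values, labels) if lab == c)
                for c in clusters}
    scatter = {c: mean(abs(v - centroid[c]) for v, lab in zip(values, labels) if lab == c)
               for c in clusters}
    worst_ratios = [
        max((scatter[i] + scatter[j]) / abs(centroid[i] - centroid[j])
            for j in clusters if j != i)
        for i in clusters
    ]
    return mean(worst_ratios)


def choose_k(values, candidates=(2, 3, 4)):
    """Pick the candidate k with the lowest Davies-Bouldin index."""
    best = None
    for k in candidates:
        labels = kmeans_1d(values, k)
        if len(set(labels)) < k:  # a cluster ended up empty; skip this k
            continue
        score = davies_bouldin(values, labels)
        if best is None or score < best[0]:
            best = (score, k, labels)
    if best is None:
        raise ValueError("no candidate k produced a valid clustering")
    return best[1], best[2]


# Invented JSM measurements (five visits per patient), for illustration only.
patients = {
    "p1": [5.0, 5.0, 4.9, 4.9, 4.8],
    "p2": [4.5, 4.4, 4.4, 4.3, 4.2],
    "p3": [5.2, 5.2, 5.1, 5.1, 5.1],
    "p4": [4.8, 4.2, 3.7, 3.1, 2.6],
    "p5": [5.1, 4.6, 4.1, 3.6, 3.1],
    "p6": [4.9, 4.3, 3.7, 3.1, 2.5],
}
scores = [progression_score(visits) for visits in patients.values()]
best_k, labels = choose_k(scores)
```

With these invented values the stable patients (p1 to p3) and the progressing patients (p4 to p6) fall into separate clusters, and the resulting labels are what a downstream classifier would be trained to predict.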

2.1.3. Feature Engineering

Algorithm 2. Pseudoalgorithm of the feature selection

Input: Clinical data

1. Normalize all features as described in the pre-processing section (Section 2.1.1).
2. For each feature j, set its selection score to its initial value.
3. For each FS technique and for each feature j: if feature j is in the subset selected by that technique, update the selection score of feature j.
4. Rank the features in descending order with respect to their selection scores. In case of equality, the ranking is determined by the feature importance of the best-performing FS technique.
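One plausible reading of Algorithm 2 is a voting scheme: each feature selection (FS) technique votes for the features it selects, and ties are broken by the importance reported by the best-performing technique. The sketch below follows that reading. The vote counter starting at zero, the function name `rank_features`, and all feature names, technique names, and importance values are assumptions made for illustration.

```python
def rank_features(selected_by_technique, tie_break_importance):
    """Rank features by how many FS techniques selected them.

    selected_by_technique: dict mapping a technique name to the set of
        features it selected (hypothetical inputs).
    tie_break_importance: dict mapping a feature to the importance assigned
        by the best-performing technique; used only to break ties.
    """
    # Step 2 (assumed): every known feature starts with zero votes.
    votes = {feature: 0 for feature in tie_break_importance}

    # Step 3: one vote per technique that selected the feature.
    for selected in selected_by_technique.values():
        for feature in selected:
            votes[feature] = votes.get(feature, 0) + 1

    # Step 4: descending votes, then descending tie-break importance.
    return sorted(
        votes,
        key=lambda f: (-votes[f], -tie_break_importance.get(f, 0.0)),
    )


# Invented example: three techniques and four candidate features.
selected = {
    "filter": {"bmi", "jsn_baseline"},
    "wrapper": {"jsn_baseline", "pain_score"},
    "embedded": {"jsn_baseline", "bmi", "age"},
}
importance = {"jsn_baseline": 0.40, "bmi": 0.20, "age": 0.30, "pain_score": 0.10}

ranking = rank_features(selected, importance)
```

Here `jsn_baseline` receives three votes and `bmi` two, while `age` and `pain_score` tie at one vote each and are ordered by the tie-break importance.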

2.1.4. Data Classification

- Gradient boosting model (GBM) is an ensemble ML algorithm that can be used for classification or regression tasks. GBM combines weak learners into a strong learner through a gradual, additive, and sequential process: each new, improved tree is fitted to a modified version of the initial training data set [30,31];

- Logistic regression (LR) describes the relationship between the data and a dichotomous dependent variable. LR is based on the logistic function (1), which maps its input to a probability in the range between 0 and 1 [32]:

  $$f(z) = \frac{1}{1 + e^{-z}} \quad (1)$$

- Neural networks (NNs): both shallow and deep NNs were employed. NNs rely on a supervised training procedure to generate a nonlinear prediction model. They consist of layers (an input layer, hidden layers, and an output layer). Following a layered feedforward structure, information is transferred unidirectionally from the input layer to the output layer through the hidden layers [33,34,35];

- Support vector machines (SVMs) are another supervised learning model [40,41]. SVMs aim to construct a hyperplane, a decision boundary between two classes that enables the prediction of labels from one or more feature vectors. The hyperplane is chosen to maximize the class margin, that is, the distance between the closest points (support vectors) of each class [42].
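To make the logistic regression entry concrete, the following sketch evaluates the standard logistic function and uses it to turn a linear score into a class probability. It is an illustration only: the weights, bias, and threshold are invented, and the function names are hypothetical rather than taken from the study.

```python
import math


def logistic(z):
    """Standard logistic function 1 / (1 + exp(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)  # safe for very negative z: underflows to 0.0
    return e / (1.0 + e)


def predict_probability(features, weights, bias):
    """Probability of the positive class for one feature vector."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return logistic(z)


def predict_label(features, weights, bias, threshold=0.5):
    """Dichotomous decision: 1 if the probability reaches the threshold, else 0."""
    return int(predict_probability(features, weights, bias) >= threshold)


# Invented weights for two normalized features, for illustration only.
weights = [0.5, -0.25]
bias = 0.0
probability = predict_probability([1.0, 2.0], weights, bias)
label = predict_label([1.0, 2.0], weights, bias)
```

The output of `predict_probability` always lies between 0 and 1, which is what allows the model to be read as a probability of belonging to one of the two classes.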

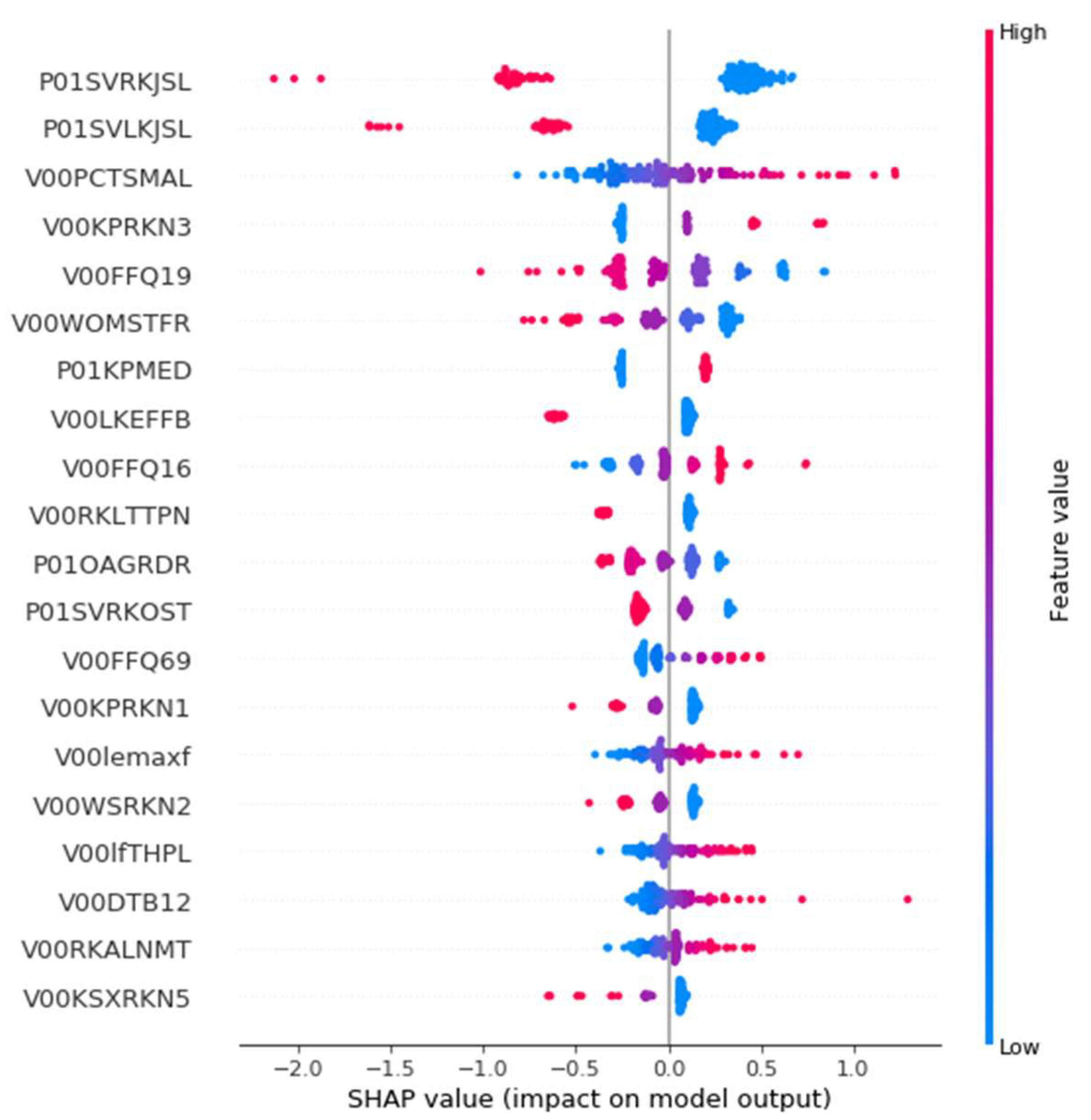

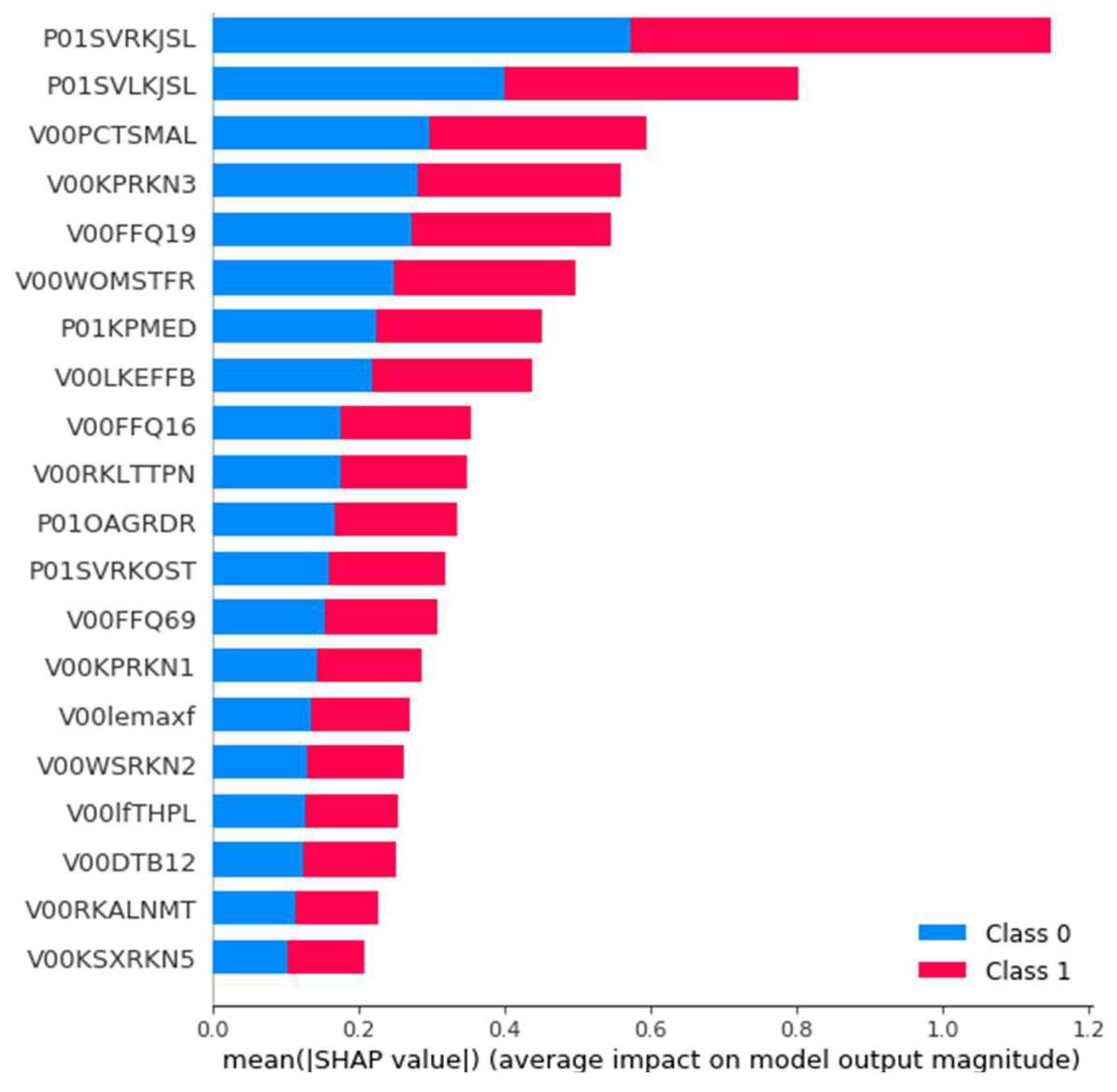

2.1.5. Post-Hoc Interpretation/Explainability

3. Evaluation

3.1. Medical Data

3.2. Evaluation Methodology

3.3. Results and Discussion

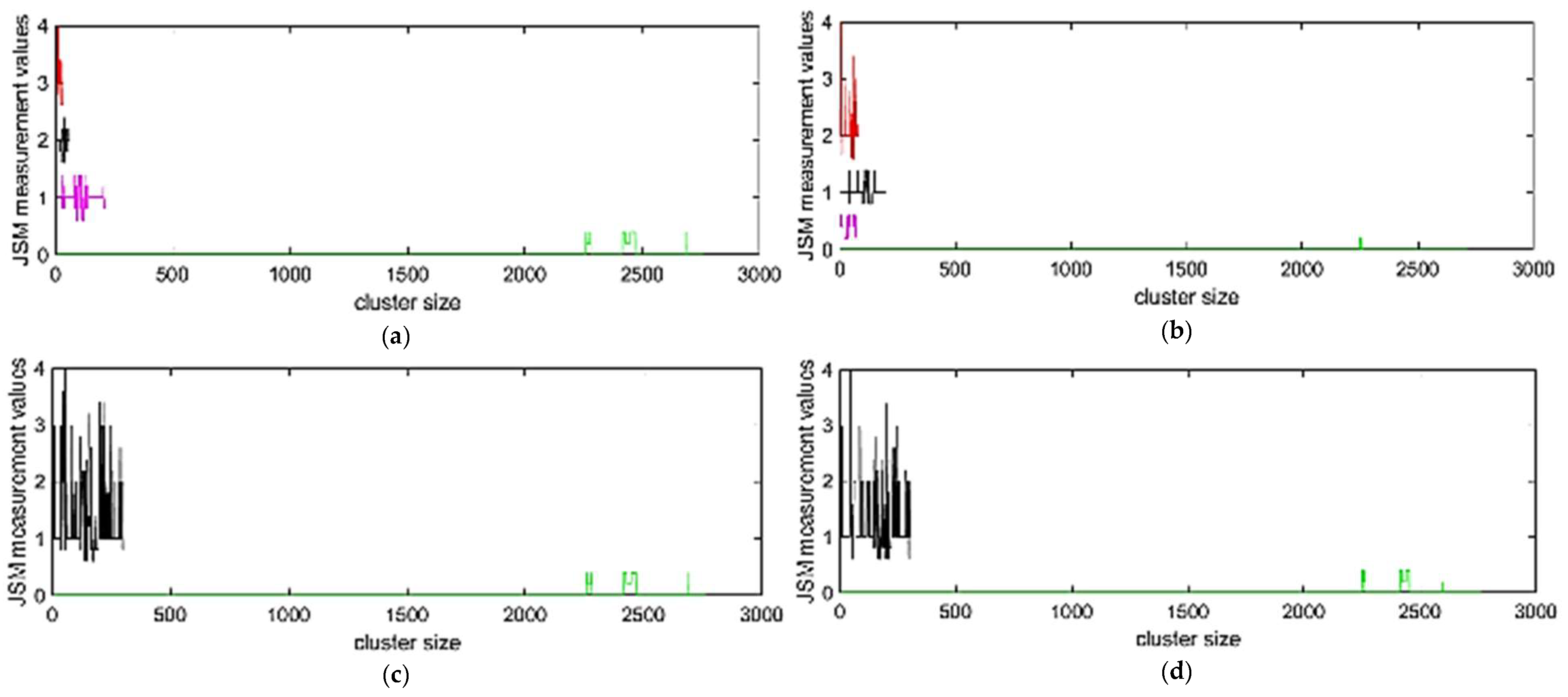

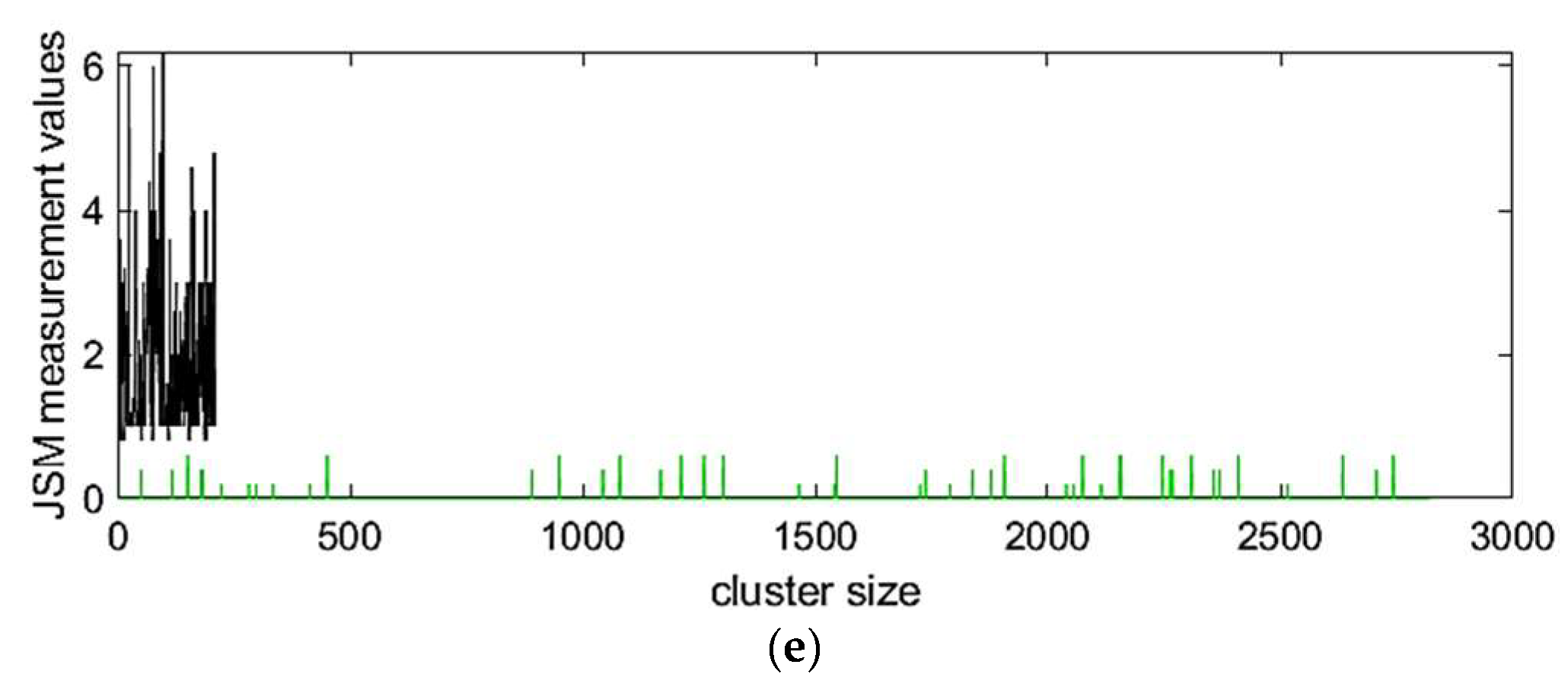

3.3.1. Clustering Results

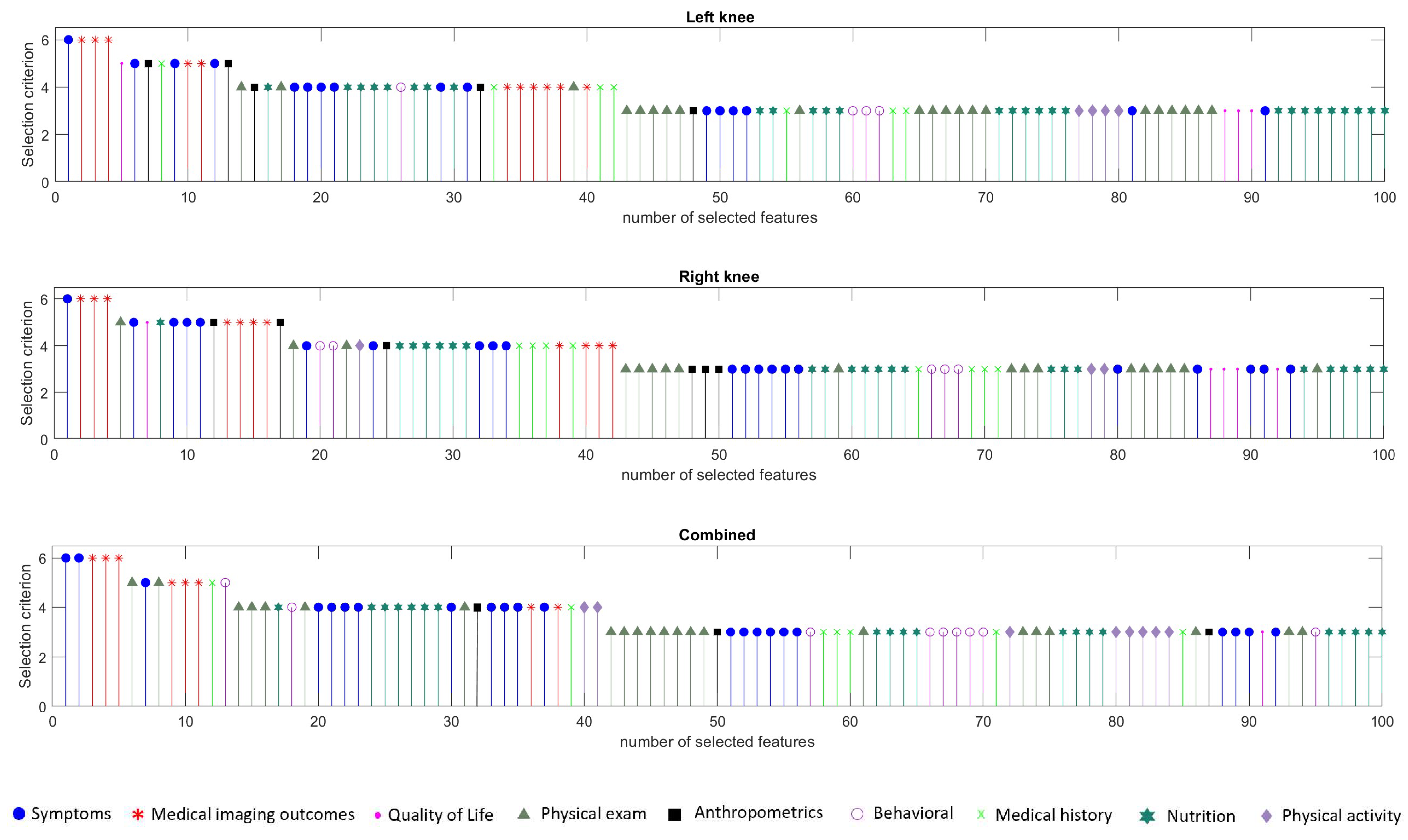

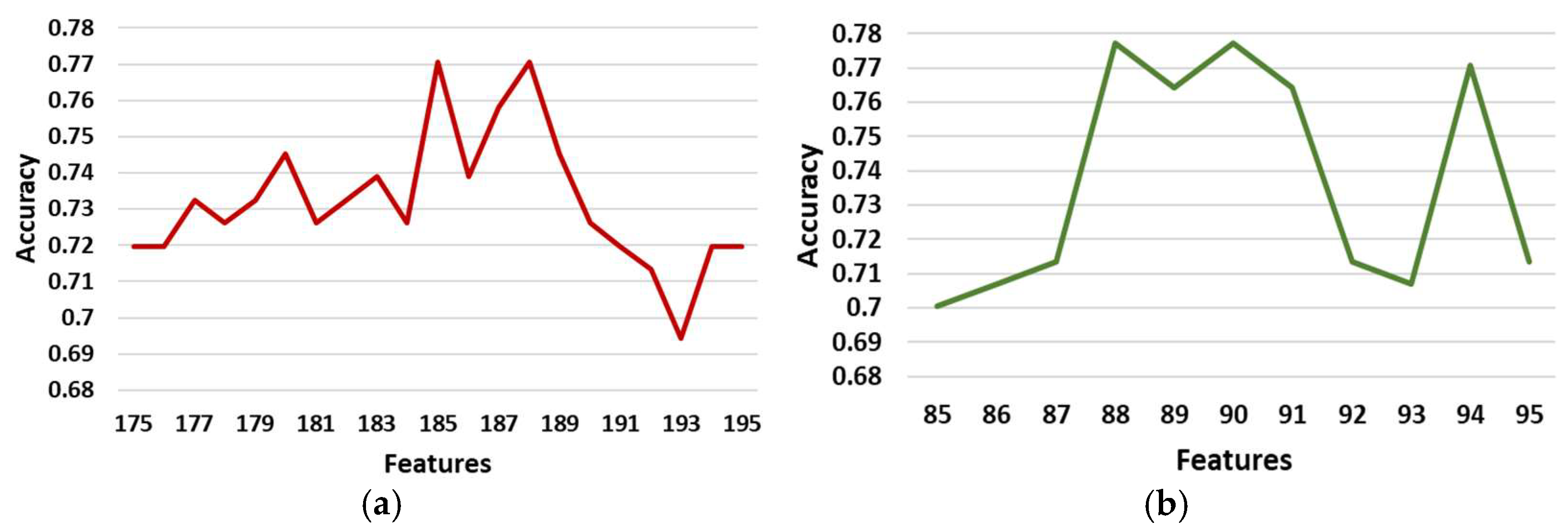

3.3.2. Feature Selection Results

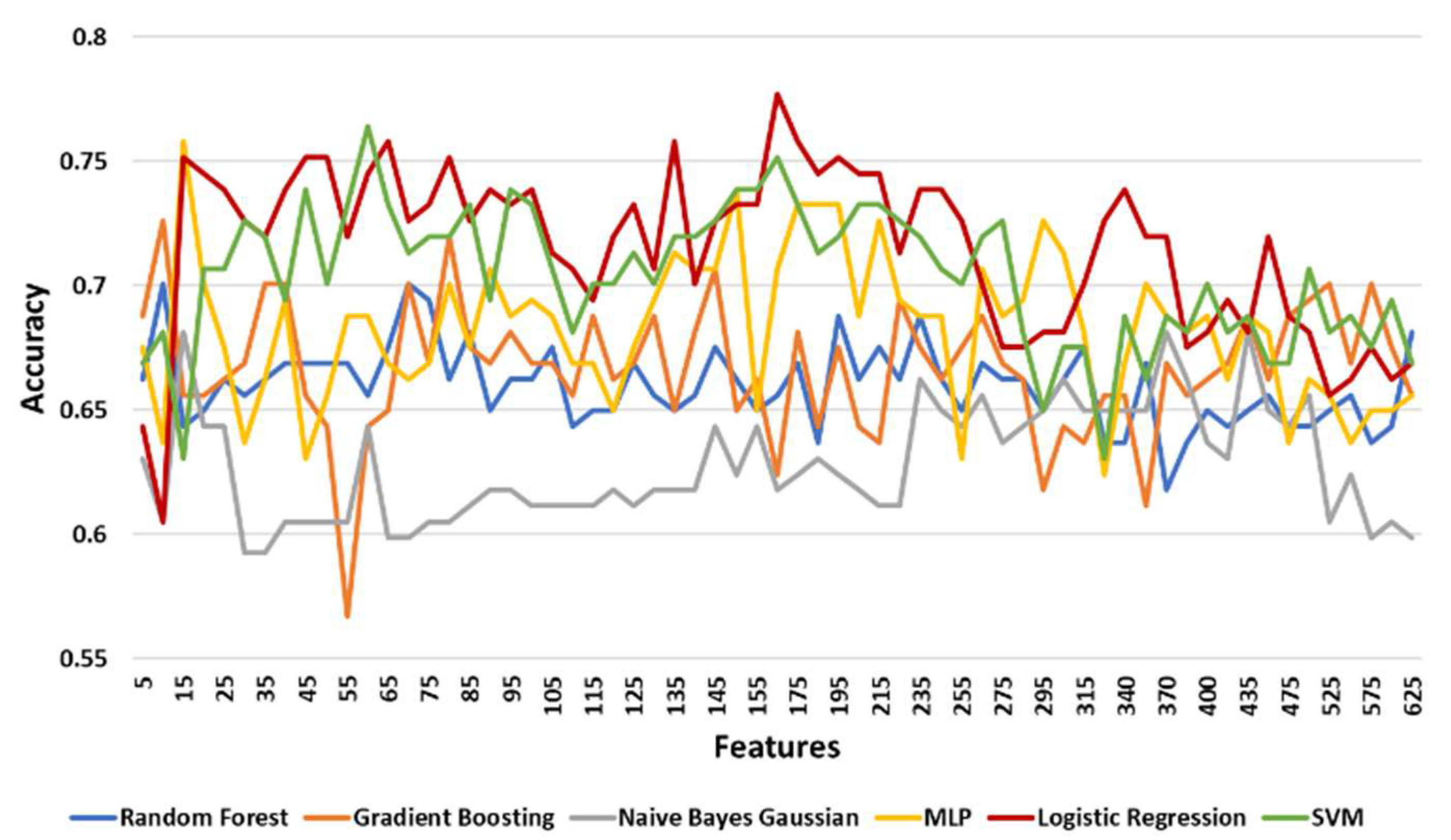

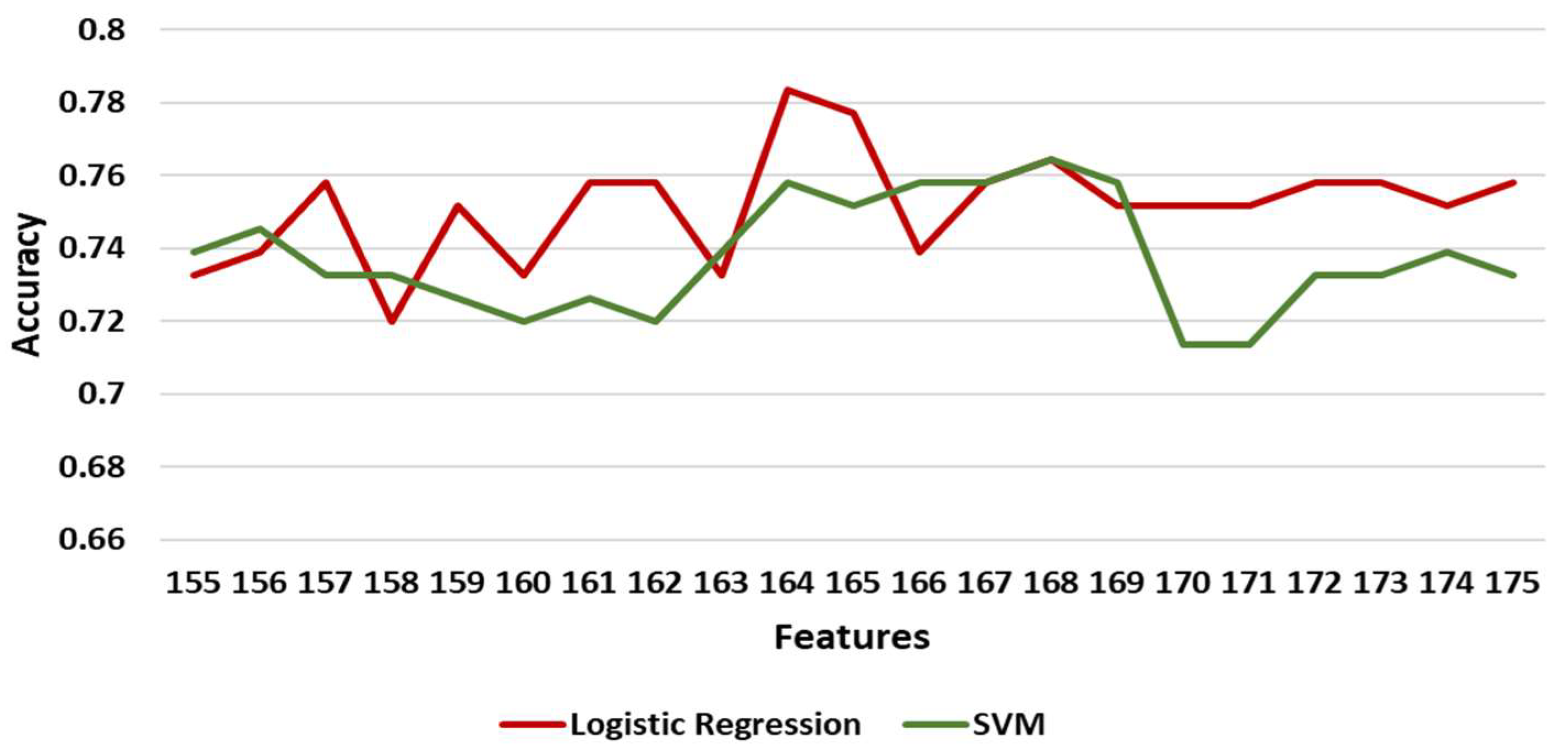

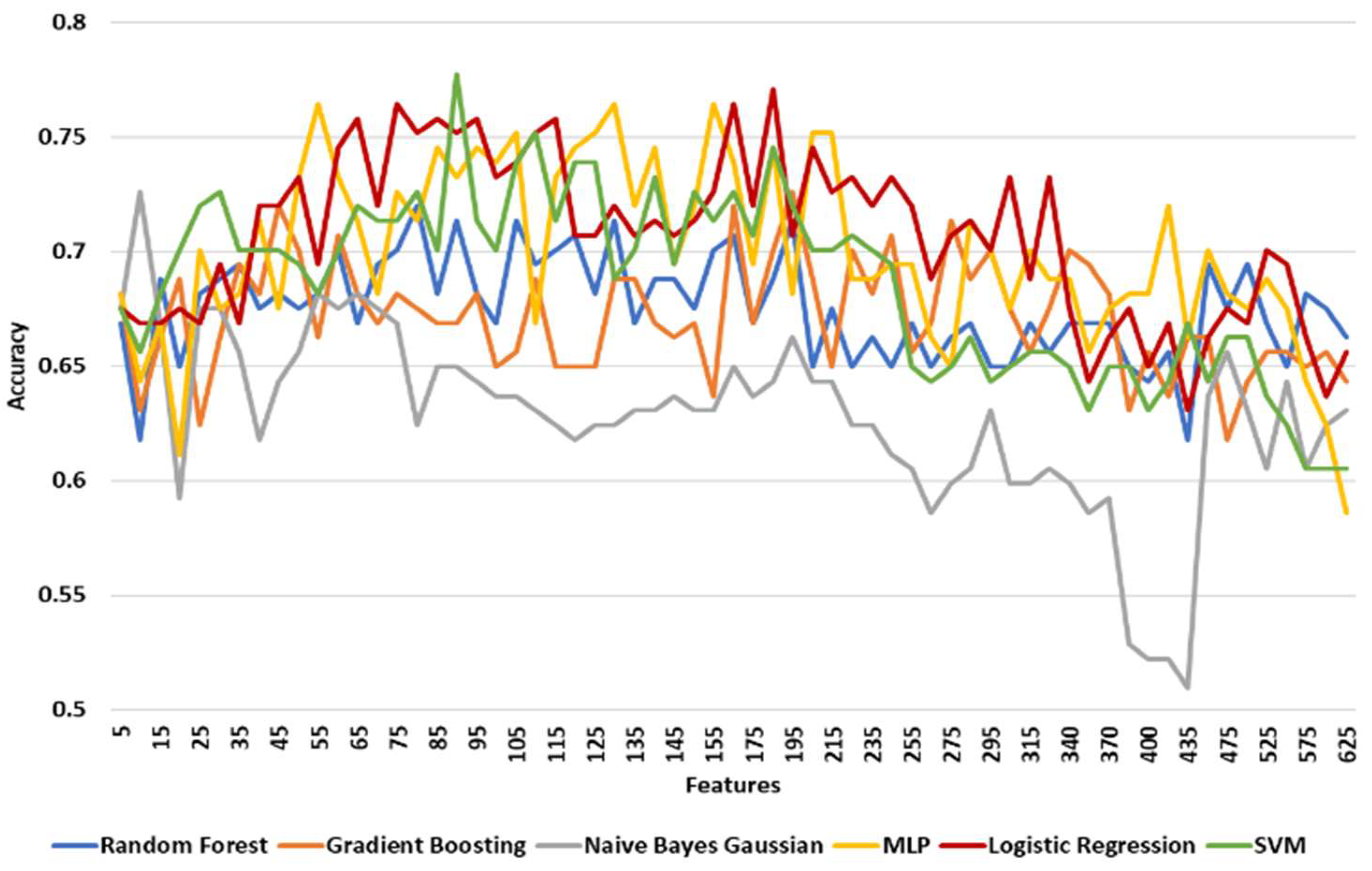

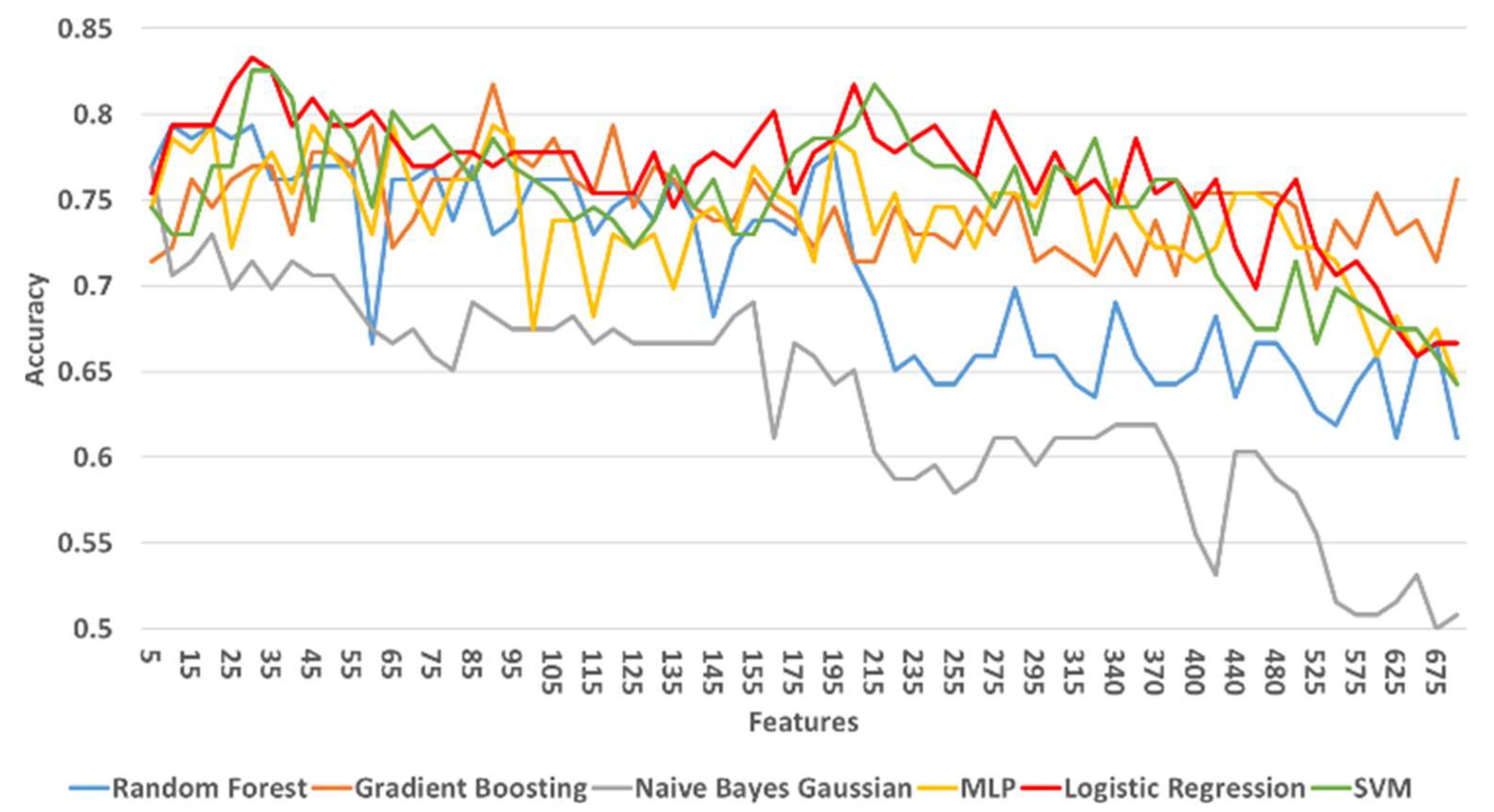

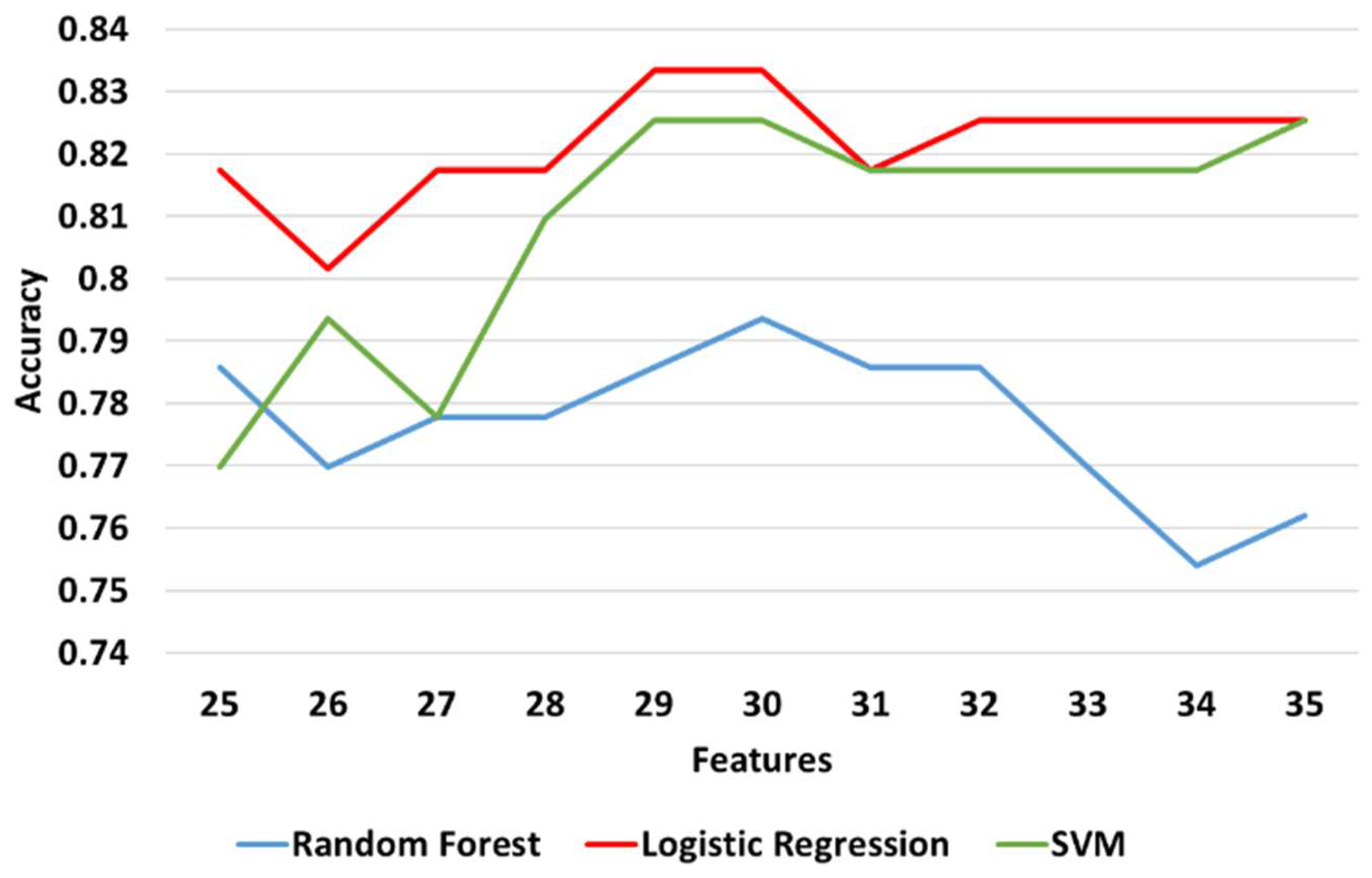

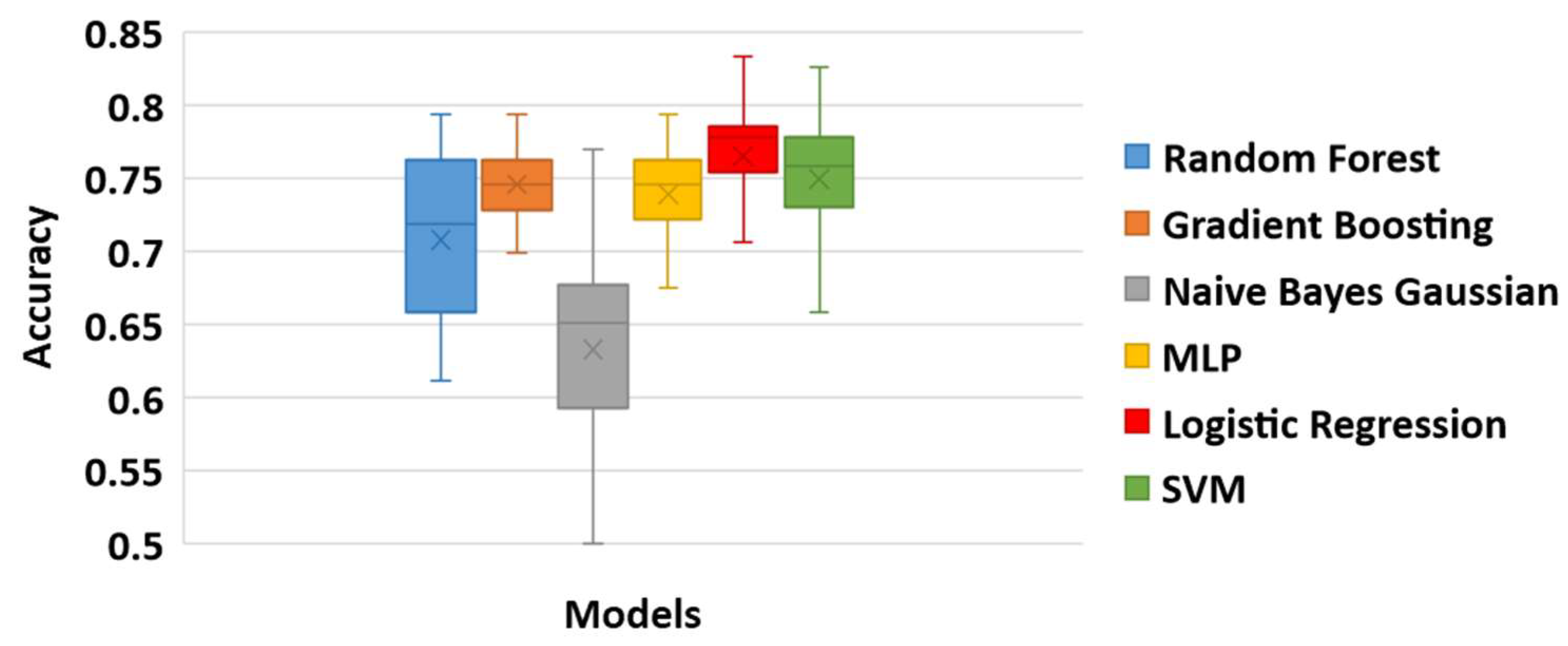

3.3.3. Classification Results

3.3.4. Post-Hoc Explainability Results

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Dell’Isola, A.; Steultjens, M. Classification of patients with knee osteoarthritis in clinical phenotypes: Data from the osteoarthritis initiative. PLoS ONE 2018, 13, e0191045.

2. Vitaloni, M.; Bemden, A.B.; Contreras, R.M.S.; Scotton, D.; Bibas, M.; Quintero, M.; Monfort, J.; Carné, X.; de Abajo, F.; Oswald, E.; et al. Global management of patients with knee osteoarthritis begins with quality of life assessment: A systematic review. BMC Musculoskelet. Disord. 2019, 20, 493.

3. Kokkotis, C.; Moustakidis, S.; Papageorgiou, E.; Giakas, G.; Tsaopoulos, D. Machine learning in knee osteoarthritis: A review. Osteoarthr. Cartil. Open 2020, 2, 100069.

4. Jamshidi, A.; Pelletier, J.-P.; Martel-Pelletier, J. Machine-learning-based patient-specific prediction models for knee osteoarthritis. Nat. Rev. Rheumatol. 2018, 15, 49–60.

5. Du, Y.; Almajalid, R.; Shan, J.; Zhang, M. A Novel Method to Predict Knee Osteoarthritis Progression on MRI Using Machine Learning Methods. IEEE Trans. NanoBiosci. 2018, 17, 228–236.

6. Lazzarini, N.; Runhaar, J.; Bay-Jensen, A.; Thudium, C.; Bierma-Zeinstra, S.; Henrotin, Y.; Bacardit, J. A machine learning approach for the identification of new biomarkers for knee osteoarthritis development in overweight and obese women. Osteoarthr. Cartil. 2017, 25, 2014–2021.

7. Halilaj, E.; Le, Y.; Hicks, J.L.; Hastie, T.J.; Delp, S.L. Modeling and predicting osteoarthritis progression: Data from the osteoarthritis initiative. Osteoarthr. Cartil. 2018, 26, 1643–1650.

8. Tiulpin, A.; Klein, S.; Bierma-Zeinstra, S.M.A.; Thevenot, J.; Rahtu, E.; Van Meurs, J.; Oei, E.H.G.; Saarakkala, S. Multimodal Machine Learning-based Knee Osteoarthritis Progression Prediction from Plain Radiographs and Clinical Data. Sci. Rep. 2019, 9, 1–11.

9. Nelson, A.E.; Fang, F.; Arbeeva, L.; Cleveland, R.J.; Schwartz, T.A.; Callahan, L.F.; Marron, J.S.; Loeser, R.F. A machine learning approach to knee osteoarthritis phenotyping: Data from the FNIH Biomarkers Consortium. Osteoarthr. Cartil. 2019, 27, 994–1001.

10. Pedoia, V.; Haefeli, J.; Morioka, K.; Teng, H.-L.; Nardo, L.; Souza, R.B.; Ferguson, A.R.; Majumdar, S. MRI and biomechanics multidimensional data analysis reveals R2-R1ρ as an early predictor of cartilage lesion progression in knee osteoarthritis. J. Magn. Reson. Imaging 2018, 47, 78–90.

11. Abedin, J.; Antony, J.; McGuinness, K.; Moran, K.; O’Connor, N.E.; Rebholz-Schuhmann, D.; Newell, J. Predicting knee osteoarthritis severity: Comparative modeling based on patient’s data and plain X-ray images. Sci. Rep. 2019, 9, 1–11.

12. Widera, P.; Welsing, P.M.J.; Ladel, C.; Loughlin, J.; Lafeber, F.P.F.J.; Dop, F.P.; Larkin, J.; Weinans, H.; Mobasheri, A.; Bacardit, J. Multi-classifier prediction of knee osteoarthritis progression from incomplete imbalanced longitudinal data. Sci. Rep. 2020, 10, 1–15.

13. Wang, Y.; You, L.; Chyr, J.; Lan, L.; Zhao, W.; Zhou, Y.; Xu, H.; Noble, P.; Zhou, X. Causal Discovery in Radiographic Markers of Knee Osteoarthritis and Prediction for Knee Osteoarthritis Severity With Attention–Long Short-Term Memory. Front. Public Health 2020, 8, 845.

14. Lim, J.; Kim, J.; Cheon, S. A Deep Neural Network-Based Method for Early Detection of Osteoarthritis Using Statistical Data. Int. J. Environ. Res. Public Health 2019, 16, 1281.

15. Brahim, A.; Jennane, R.; Riad, R.; Janvier, T.; Khedher, L.; Toumi, H.; Lespessailles, E. A decision support tool for early detection of knee OsteoArthritis using X-ray imaging and machine learning: Data from the OsteoArthritis Initiative. Comput. Med. Imaging Graph. 2019, 73, 11–18.

16. Alexos, A.; Kokkotis, C.; Moustakidis, S.; Papageorgiou, E.; Tsaopoulos, D. Prediction of pain in knee osteoarthritis patients using machine learning: Data from Osteoarthritis Initiative. In Proceedings of the 2020 11th International Conference on Information, Intelligence, Systems and Applications IISA, Piraeus, Greece, 15–17 July 2020; pp. 1–7.

17. Kokkotis, C.; Moustakidis, S.; Giakas, G.; Tsaopoulos, D. Identification of Risk Factors and Machine Learning-Based Prediction Models for Knee Osteoarthritis Patients. Appl. Sci. 2020, 10, 6797.

18. Ntakolia, C.; Kokkotis, C.; Moustakidis, S.; Tsaopoulos, D. A machine learning pipeline for predicting joint space narrowing in knee osteoarthritis patients. In Proceedings of the 2020 IEEE 20th International Conference on Bioinformatics and Bioengineering (BIBE), Cincinnati, OH, USA, 26–28 October 2020; pp. 934–941.

19. Jamshidi, A.; Leclercq, M.; Labbe, A.; Pelletier, J.-P.; Abram, F.; Droit, A.; Martel-Pelletier, J. Identification of the most important features of knee osteoarthritis structural progressors using machine learning methods. Ther. Adv. Musculoskelet. Dis. 2020, 12.

20. Alsabti, K.; Ranka, S.; Singh, V. An Efficient K-Means Clustering Algorithm; Electrical Engineering and Computer Science; Syracuse University: Syracuse, NY, USA, 1997.

21. Kaufman, L.; Rousseeuw, P.J. Clustering by Means of Medoids. In Proceedings of the Statistical Data Analysis Based on the L1 Norm Conference, Neuchatel, Switzerland, 31 August–4 September 1987; pp. 405–416.

22. Johnson, S.C. Hierarchical clustering schemes. Psychometrika 1967, 32, 241–254.

23. Bezdek, J.; Pal, N. Some new indexes of cluster validity. IEEE Trans. Syst. Man, Cybern. Part B 1998, 28, 301–315.

24. Biesiada, J.; Duch, W. Feature Selection for High-Dimensional Data—A Pearson Redundancy Based Filter. In Advances in Intelligent and Soft Computing; Springer: New York, NY, USA, 2007; pp. 242–249.

25. Thaseen, I.S.; Kumar, C.A. Intrusion detection model using fusion of chisquare feature selection and multi class SVM. J. King Saud Univ.-Comput. Inf. Sci. 2017, 29, 462–472.

26. Xiong, M.; Fang, X.; Zhao, J. Biomarker Identification by Feature Wrappers. Genome Res. 2001, 11, 1878–1887.

27. Zhou, Q.; Zhou, H.; Li, T. Cost-sensitive feature selection using random forest: Selecting low-cost subsets of informative features. Knowl.-Based Syst. 2016, 95, 1–11.

28. Al Daoud, E. Comparison between XGBoost, LightGBM and CatBoost Using a Home Credit Dataset. Int. J. Comput. Inf. Eng. 2019, 13, 6–10.

29. Nie, F.; Huang, H.; Cai, X.; Ding, C.H. Efficient and robust feature selection via joint ℓ2, 1-norms minimization. Adv. Neural Inf. Process. Syst. 2010, 23, 1813–1821.

30. Friedman, J.H. Greedy function approximation: A gradient boosting machine. Ann. Stat. 2001, 29, 1189–1232.

31. Hastie, T.; Tibshirani, R.; Friedman, J. Boosting and Additive Trees. In The Elements of Statistical Learning; Springer: New York, NY, USA, 2009; pp. 337–387.

32. Kleinbaum, D.G.; Klein, M. Logistic regression, statistics for biology and health. Retrieved DOI 2010, 10, 978–981.

33. Moustakidis, S.; Christodoulou, E.; Papageorgiou, E.; Kokkotis, C.; Papandrianos, N.; Tsaopoulos, D. Application of machine intelligence for osteoarthritis classification: A classical implementation and a quantum perspective. Quantum Mach. Intell. 2019, 1, 73–86.

34. Taud, H.; Mas, J. Multilayer perceptron (MLP). In Geomatic Approaches for Modeling Land Change Scenarios; Springer: New York, NY, USA, 2018; pp. 451–455.

35. Ntakolia, C.; Diamantis, D.E.; Papandrianos, N.; Moustakidis, S.; Papageorgiou, E.I. A Lightweight Convolutional Neural Network Architecture Applied for Bone Metastasis Classification in Nuclear Medicine: A Case Study on Prostate Cancer Patients. Healthcare 2020, 8, 493.

36. Pérez, A.; Larrañaga, P.; Inza, I. Supervised classification with conditional Gaussian networks: Increasing the structure complexity from naive Bayes. Int. J. Approx. Reason. 2006, 43, 1–25.

37. Jahromi, A.H.; Taheri, M. A non-parametric mixture of Gaussian naive Bayes classifiers based on local independent features. In Proceedings of the 2017 Artificial Intelligence and Signal Processing Conference (AISP), Shiraz, Iran, 25–27 October 2017; pp. 209–212.

38. Biau, G.; Scornet, E. A random forest guided tour. TEST 2016, 25, 197–227.

39. Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

40. Vapnik, V. The Nature of Statistical Learning Theory; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2013.

41. Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

42. Huang, S.; Cai, N.; Pacheco, P.P.; Narrandes, S.; Wang, Y.; Xu, W. Applications of Support Vector Machine (SVM) Learning in Cancer Genomics. Cancer Genom.-Proteom. 2018, 15, 41–51.

43. Lundberg, S.M.; Lee, S.-I. A unified approach to interpreting model predictions. In Proceedings of the 31st International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2017; pp. 4765–4774.

| Category | Description | Number of Features |
|---|---|---|
| Anthropometrics | Includes measurements of participants such as height, weight, BMI (body mass index), etc. | 37 |
| Behavioral | Questionnaire results that describe the participants’ social behaviour | 61 |
| Symptoms | Includes variables of participants’ arthritis symptoms and general arthritis or health-related function and disability | 108 |
| Quality of life | Variables that describe the quality level of daily routine | 12 |
| Medical history | Questionnaire results regarding a participant’s arthritis-related and general health histories and medications | 123 |
| Medical imaging outcome | Variables that contain medical imaging outcomes (e.g., osteophytes and joint space narrowing (JSN)) | 21 |
| Nutrition | Variables resulting from the use of the modified Block Food Frequency questionnaire | 224 |
| Physical exam | Variables of participants’ measurements, performance measures, and knee and hand exams | 115 |
| Physical activity | Questionnaire data results regarding household activities, leisure activities, etc. | 24 |
| Total number of features | | 725 |

| Clustering Method | Parameters |
|---|---|
| K-Means | City block distance, 5 replicates |
| K-Medoids | City block distance, 5 replicates |
| Hierarchical | Agglomerative cluster tree, Chebychev distance, farthest distance between clusters (complete linkage), maximum of 3 clusters |
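The distance measures named in the table can be written out explicitly. The sketch below is illustrative only: the function names and sample points are invented, and "farthest distance between clusters" is interpreted as complete linkage, meaning the largest pairwise distance between members of two clusters.

```python
def city_block(a, b):
    """City block (Manhattan, L1) distance: sum of absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))


def chebyshev(a, b):
    """Chebyshev distance: largest absolute coordinate difference."""
    return max(abs(x - y) for x, y in zip(a, b))


def complete_linkage(cluster_a, cluster_b, distance=chebyshev):
    """Farthest-neighbour distance between two clusters of points."""
    return max(distance(p, q) for p in cluster_a for q in cluster_b)


# Invented two-dimensional points, for illustration only.
p, q = (1, 2), (4, 6)
l1 = city_block(p, q)        # |1 - 4| + |2 - 6|
linf = chebyshev(p, q)       # max(|1 - 4|, |2 - 6|)
linkage = complete_linkage([(0, 0), (1, 1)], [(3, 0), (5, 5)])
```

City block distance accumulates the change along every coordinate, whereas Chebyshev distance reflects only the single largest coordinate change, so the two can group the same measurements differently.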

| Classification Model | Hyperparameter Tuning |
|---|---|
| GBM | Number of boosting stages: from 10 to 500 with a step size of 10; maximum depth of the individual regression estimators: from 1 to 10 with a step size of 1; minimum number of samples required to split an internal node: 2, 5 and 10; minimum number of samples required to be at a leaf node: 1, 2 and 4; number of features to consider when looking for the best split: or |
| LR | Inverse of regularization strength: 0.001, 0.01, 0.1, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10; optimization algorithm: 4 different solvers that handle L2 or no penalty (‘newton-cg’, ‘lbfgs’, ‘sag’ and ‘saga’); multi-class handling: a binary problem is fit for each label, or the loss minimized is the multinomial loss fit across the entire probability distribution, even when the data is binary; with and without reusing the solution of the previous call to fit as initialization |
| NN | Both shallow and deep structures were investigated; hidden layers: from 1 to 3, with different numbers of nodes per layer (50, 100, 200); activation function: ReLU and; solver for weight optimization: stochastic gradient descent, Adam (the stochastic gradient-based optimizer proposed by Kingma and Ba), and an optimizer in the family of quasi-Newton methods; L2 penalty (regularization term) parameter: 0.0001 and 0.05; learning rate schedule for weight updates: constant (fixed at the given value) and adaptive (kept at the given value as long as the training loss keeps decreasing) |
| NBG | - |
| RF | Number of trees in the forest: from 10 to 500 with a step size of 10; maximum depth of the tree: from 1 to 10 with a step size of 1; minimum number of samples required to split an internal node: 2, 5 and 10; minimum number of samples required to be at a leaf node: 1, 2 and 4; number of features to consider when looking for the best split: or; with and without bootstrap |
| SVM | Regularization parameter: 0.001, 0.01, 0.1, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10; kernel type: linear, polynomial, sigmoid and radial basis function |
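The search spaces in the table can be expressed as parameter grids and enumerated exhaustively. The sketch below does this for the SVM and LR rows only. The parameter names (`C`, `kernel`, `solver`, `multi_class`, `warm_start`) follow scikit-learn naming as an assumption, since the table does not name the library, and `expand_grid` is a hypothetical helper.

```python
from itertools import product


def expand_grid(grid):
    """Return every combination of the listed values as a list of dicts."""
    names = list(grid)
    return [dict(zip(names, combo))
            for combo in product(*(grid[name] for name in names))]


# Values for the regularization parameter, copied from the table.
C_VALUES = [0.001, 0.01, 0.1, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

# SVM row: regularization parameter and kernel type (names are assumptions).
svm_grid = {
    "C": C_VALUES,
    "kernel": ["linear", "poly", "sigmoid", "rbf"],
}

# LR row: inverse regularization strength, solver, multi-class strategy,
# and whether the previous solution is reused (names are assumptions).
lr_grid = {
    "C": C_VALUES,
    "solver": ["newton-cg", "lbfgs", "sag", "saga"],
    "multi_class": ["ovr", "multinomial"],
    "warm_start": [False, True],
}

svm_candidates = expand_grid(svm_grid)
lr_candidates = expand_grid(lr_grid)
```

Enumerating the grids this way gives 52 candidate SVM configurations and 208 candidate LR configurations, each of which would be fitted and scored during tuning.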

| Clustering Method | Number of Clusters (Left) | Number of Clusters (Right) | Number of Clusters (Both) | Cluster Elements (Left) | Cluster Elements (Right) | Cluster Elements (Both) |
|---|---|---|---|---|---|---|
| K-Means | | | | [2763,299] | [2764,302] | [2822,209] |
| K-Medoids | | | | | | |
| Hierarchical | | | | | | |

| Prediction Model | Maximum Accuracy | Minimum Accuracy | Mean Accuracy | Standard Deviation |
|---|---|---|---|---|
| Gradient Boosting | 0.72611 | 0.56688 | 0.66707 | 0.02622 |
| Logistic Regression | 0.77707 | 0.60510 | 0.71540 | 0.03353 |
| NNs (Neural Networks) | 0.75796 | 0.62420 | 0.68234 | 0.02933 |
| Naïve Bayes Gaussian | 0.68153 | 0.59236 | 0.62794 | 0.02301 |
| Random Forest | 0.70064 | 0.61783 | 0.65989 | 0.01616 |
| SVM | 0.76433 | 0.63057 | 0.70377 | 0.02783 |

| Prediction Model | Maximum Accuracy | Minimum Accuracy | Mean Accuracy | Standard Deviation |
|---|---|---|---|---|
| Gradient Boosting | 0.72611 | 0.61783 | 0.67172 | 0.02445 |
| Logistic Regression | 0.77070 | 0.63057 | 0.70691 | 0.03560 |
| NNs | 0.76433 | 0.58599 | 0.69983 | 0.03858 |
| Naïve Bayes Gaussian | 0.72611 | 0.50955 | 0.62774 | 0.03926 |
| Random Forest | 0.71975 | 0.61783 | 0.67577 | 0.02217 |
| SVM | 0.77707 | 0.60510 | 0.68598 | 0.03929 |

| Prediction Model | Maximum Accuracy | Minimum Accuracy | Mean Accuracy | Standard Deviation |
|---|---|---|---|---|
| Gradient Boosting | 0.81746 | 0.69841 | 0.74591 | 0.02449 |
| Logistic Regression | 0.83333 | 0.65873 | 0.76503 | 0.03725 |
| NNs | 0.79365 | 0.64286 | 0.73870 | 0.03470 |
| Naïve Bayes Gaussian | 0.76984 | 0.50000 | 0.63300 | 0.06331 |
| Random Forest | 0.79365 | 0.61111 | 0.70755 | 0.05645 |
| SVM | 0.82540 | 0.64286 | 0.74928 | 0.04223 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Ntakolia, C.; Kokkotis, C.; Moustakidis, S.; Tsaopoulos, D. Prediction of Joint Space Narrowing Progression in Knee Osteoarthritis Patients. Diagnostics 2021, 11, 285. https://doi.org/10.3390/diagnostics11020285