A 3D Keypoints Voting Network for 6DoF Pose Estimation in Indoor Scene

Abstract

1. Introduction

2. Related Work

2.1. Pose from RGB Images

2.2. Pose from Point Cloud

2.3. Pose from RGBD Data

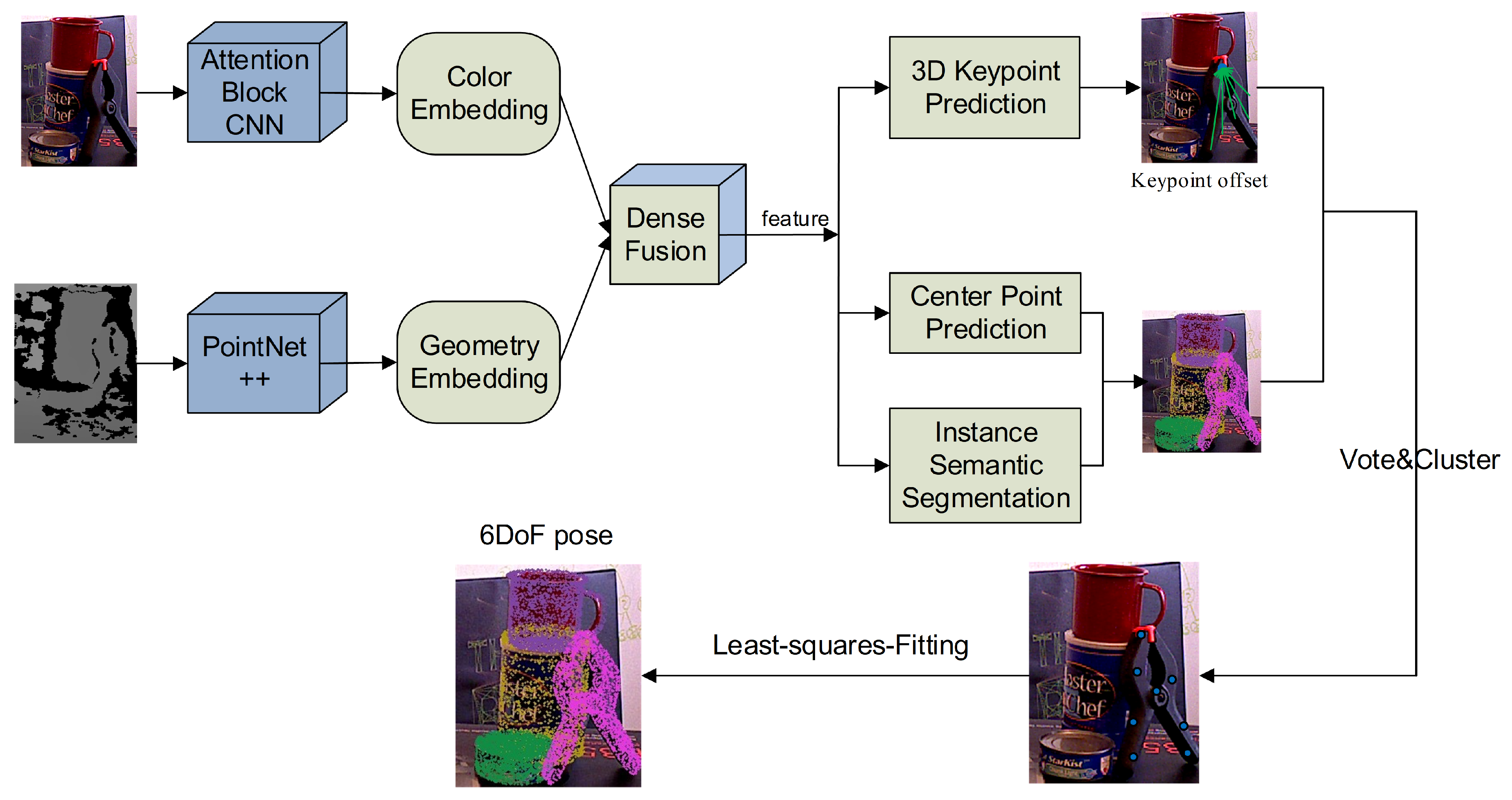

3. Methodology

3.1. Split-Attention for Image Feature Extraction

3.2. Pointcloud Feature Extraction

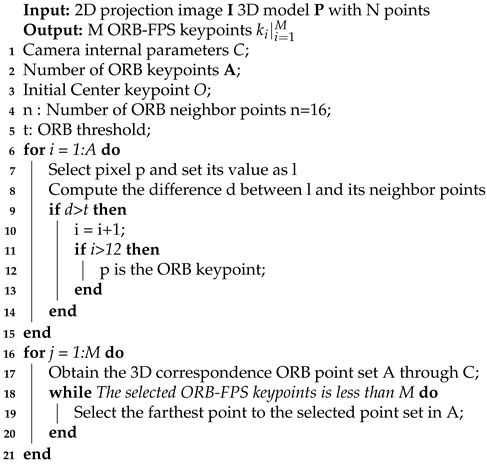

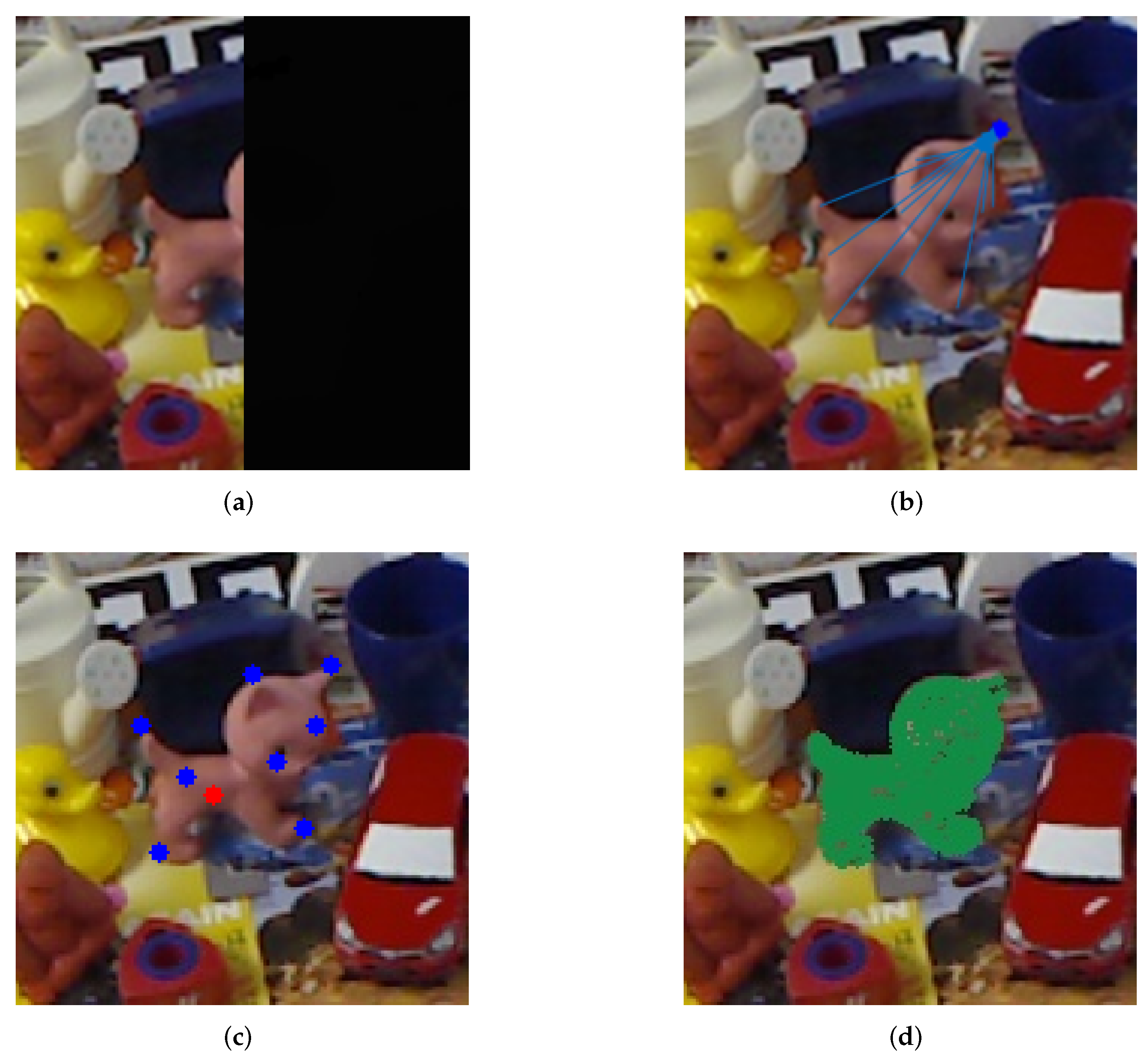

3.3. ORB-FPS Keypoint Selection

Algorithm 1: ORB-FPS keypoint algorithm.

3.4. 3D Keypoint Voting

3.5. Instance Semantic Segmentation

3.6. Center Point Voting

3.7. Pose Calculation

4. Experiments

4.1. Datasets

4.2. Evaluation Metrics

4.3. Implementation Details

4.4. Results on the Benchmark Datasets

4.4.1. Result on the Linemod Dataset

4.4.2. Result on the YCB-Dataset

4.5. Ablation Study

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Object | PoseCNN | PVNet | PointFusion | DenseFusion | PVN3D | Ours |
|---|---|---|---|---|---|---|
| ape | 77.0 | 43.6 | 70.4 | 79.5 | 96.0 | 96.4 |
| benchvise | 97.5 | 99.9 | 80.7 | 84.2 | 94.2 | 94.5 |
| cam | 93.5 | 86.9 | 60.8 | 76.5 | 92.3 | 95.0 |
| can | 96.5 | 95.5 | 61.1 | 86.6 | 93.8 | 94.9 |
| cat | 82.1 | 79.3 | 79.1 | 88.8 | 95.4 | 95.8 |
| driller | 95 | 96.4 | 47.3 | 77.7 | 93.0 | 94.0 |
| duck | 77.7 | 52.6 | 63.0 | 76.3 | 93.7 | 92.9 |
| eggbox | 97.1 | 99.2 | 99.9 | 99.9 | 96.2 | 96.7 |
| glue | 99.4 | 95.7 | 99.3 | 99.4 | 96.4 | 97.4 |
| holepuncher | 52.8 | 81.9 | 71.8 | 79.0 | 94.5 | 96.3 |
| iron | 98.3 | 98.9 | 83.2 | 92.1 | 92.2 | 93.1 |
| lamp | 97.5 | 99.3 | 62.3 | 92.3 | 93.5 | 93.7 |
| phone | 87.7 | 92.4 | 78.8 | 88.0 | 93.5 | 93.6 |
| average | 88.6 | 86.3 | 73.7 | 86.2 | 94.2 | 94.5 |

| Object | PoseCNN ADD-S | PoseCNN ADD(S) | DenseFusion ADD-S | DenseFusion ADD(S) | PVN3D ADD-S | PVN3D ADD(S) | Ours ADD-S | Ours ADD(S) |
|---|---|---|---|---|---|---|---|---|
| 002 master chef can | 83.9 | 50.2 | 95.3 | 70.7 | 95.8 | 79.6 | 96.4 | 81 |
| 003 cracker box | 76.9 | 53.1 | 92.5 | 86.9 | 95.4 | 93.0 | 94.5 | 90.0 |
| 004 sugar box | 84.2 | 68.4 | 95.1 | 90.8 | 97.2 | 95.9 | 97.1 | 95.6 |
| 005 tomato soup can | 81 | 66.2 | 93.8 | 84.7 | 95.7 | 89.8 | 95.6 | 89.2 |
| 006 mustard bottle | 90.4 | 81 | 95.8 | 90.9 | 97.6 | 96.5 | 97.6 | 95.4 |
| 007 tuna fish can | 88 | 70.7 | 95.7 | 79.6 | 96.7 | 91.7 | 96.7 | 87.0 |
| 008 pudding box | 79.1 | 62.7 | 94.3 | 89.3 | 96.5 | 93.6 | 95.1 | 90.6 |
| 009 gelatin box | 87.2 | 75.2 | 97.2 | 95.8 | 97.4 | 95.1 | 97.2 | 95.6 |
| 010 potted meat can | 78.5 | 59.5 | 89.3 | 79.6 | 92.1 | 84.4 | 91.2 | 87.1 |
| 011 banana | 86 | 72.3 | 90 | 76.7 | 96.3 | 92.4 | 96.9 | 94.1 |
| 019 pitcher base | 77 | 53.3 | 93.6 | 87.1 | 96.2 | 93.8 | 96.9 | 95.5 |
| 021 bleach cleanser | 71.6 | 50.3 | 94.4 | 87.5 | 95.5 | 90.9 | 96.1 | 93.4 |
| 024 bowl | 69.6 | 69.6 | 86 | 86 | 85.5 | 85.5 | 95.4 | 95.4 |
| 025 mug | 78.2 | 58.5 | 95.3 | 83.8 | 97.1 | 94.0 | 97.3 | 92.8 |
| 035 power drill | 72.7 | 55.3 | 92.1 | 83.7 | 96.6 | 95.0 | 96.7 | 94.9 |
| 036 wood block | 64.3 | 64.3 | 89.5 | 89.5 | 90.8 | 90.8 | 95.1 | 95.1 |
| 037 scissors | 56.9 | 35.8 | 90.1 | 77.4 | 91.8 | 91.8 | 96.5 | 92.6 |
| 040 large marker | 71.7 | 58.3 | 95.1 | 89.1 | 95.2 | 90.1 | 94.3 | 86.2 |
| 051 large clamp | 50.2 | 50.2 | 71.5 | 71.5 | 90.0 | 90.0 | 95.4 | 95.4 |
| 052 extra large clamp | 44.1 | 44.1 | 70.2 | 70.2 | 77.6 | 77.6 | 95.5 | 95.5 |
| 061 foam brick | 88 | 88 | 92.2 | 92.2 | 95.4 | 95.4 | 96.5 | 96.5 |
| Average | 75.2 | 61.3 | 90.9 | 82.9 | 94.2 | 90.7 | 95.8 | 91.4 |
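
The ADD-S and ADD(S) columns in the table above use metric names that are common in the 6DoF pose literature. As a minimal sketch, assuming the usual definitions (ADD averages the distance between corresponding model points under the ground-truth and predicted poses; ADD-S averages the distance from each ground-truth point to its closest predicted point and is used for symmetric objects; ADD(S) picks between the two according to symmetry), the per-pose distances could be computed as below. The function names, the nested-list pose representation, and the toy square model are hypothetical. The table values are presumably aggregate scores built on such distances, and this sketch does not reproduce that aggregation.

```python
import math


def transform(points, rotation, translation):
    """Apply x -> R x + t to each 3D point (R is a 3x3 nested list)."""
    return [
        tuple(
            sum(rotation[i][j] * p[j] for j in range(3)) + translation[i]
            for i in range(3)
        )
        for p in points
    ]


def add_distance(points, pose_gt, pose_pred):
    """ADD: mean distance between corresponding transformed model points."""
    gt = transform(points, *pose_gt)
    pred = transform(points, *pose_pred)
    return sum(math.dist(a, b) for a, b in zip(gt, pred)) / len(points)


def add_s_distance(points, pose_gt, pose_pred):
    """ADD-S: mean distance from each ground-truth point to the closest predicted point."""
    gt = transform(points, *pose_gt)
    pred = transform(points, *pose_pred)
    return sum(min(math.dist(a, b) for b in pred) for a in gt) / len(points)


def add_or_add_s(points, pose_gt, pose_pred, symmetric):
    """ADD(S): ADD-S for symmetric objects, ADD otherwise."""
    metric = add_s_distance if symmetric else add_distance
    return metric(points, pose_gt, pose_pred)


# Toy model (hypothetical): four corners of a square in the xy-plane.
square = [(1, 0, 0), (0, 1, 0), (-1, 0, 0), (0, -1, 0)]
identity = ([[1, 0, 0], [0, 1, 0], [0, 0, 1]], (0, 0, 0))
# Predicted pose is off by a 90 degree rotation about the z-axis.
quarter_turn = ([[0, -1, 0], [1, 0, 0], [0, 0, 1]], (0, 0, 0))

print(add_distance(square, identity, quarter_turn))    # sqrt(2), about 1.414
print(add_s_distance(square, identity, quarter_turn))  # 0.0
```

The toy case shows why a closest-point variant is needed for symmetric shapes: the quarter turn maps the square onto itself, so ADD-S is zero even though every corresponding point has moved and ADD is not. This is consistent with the rows in the table whose ADD-S and ADD(S) values coincide.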

| Metric | BB8 | FPS8 | ORB-FPS4 | ORB-FPS8 | ORB-FPS12 |
|---|---|---|---|---|---|
| ADD-S | 93.2 | 94.2 | 94.1 | 95.8 | 94.7 |
| ADD(S) | 89.4 | 90.7 | 90.5 | 91.4 | 91.0 |
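
The FPS8 column above presumably refers to eight keypoints chosen by plain farthest point sampling (FPS), which is how the abbreviation is normally used in keypoint-voting work. The sketch below illustrates only that standard baseline, under this assumption; it is not the ORB-FPS procedure of Algorithm 1, whose steps are not given in this text. The function name, the `start_index` parameter, and the sample points are hypothetical.

```python
import math


def farthest_point_sampling(points, k, start_index=0):
    """Greedily pick k point indices so each new pick is farthest from those already chosen."""
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    selected = [start_index]
    # Distance from every point to its nearest selected point so far.
    nearest = [math.dist(p, points[start_index]) for p in points]
    while len(selected) < k:
        # The next keypoint is the point with the largest nearest-selected distance.
        next_index = max(range(len(points)), key=nearest.__getitem__)
        selected.append(next_index)
        for i, p in enumerate(points):
            nearest[i] = min(nearest[i], math.dist(p, points[next_index]))
    return selected


# Hypothetical points along a line, to make the greedy choices easy to follow.
cloud = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (10, 0, 0), (5, 0, 0)]
print(farthest_point_sampling(cloud, 3))  # [0, 3, 4]
```

Starting from index 0, the farthest point is the one at x = 10 (index 3); the point that is then farthest from both picks is the one at x = 5 (index 4).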

| Metric | Without | With |
|---|---|---|
| ADD-S | 95.6 | 95.8 |
| ADD(S) | 91.0 | 91.4 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Citation

Liu, H.; Liu, G.; Zhang, Y.; Lei, L.; Xie, H.; Li, Y.; Sun, S. A 3D Keypoints Voting Network for 6DoF Pose Estimation in Indoor Scene. Machines 2021, 9, 230. https://doi.org/10.3390/machines9100230
