1. Introduction

Riccati differential equations (RDEs) play a significant role in many fields of applied science [1]. For example, RDEs are closely related to the one-dimensional static Schrödinger equation [2,3,4]. Applications of this equation are found not only in random processes, optimal control, and diffusion problems [1] but also in stochastic realization theory, network synthesis, and financial mathematics. Consequently, RDEs have attracted much attention. Recently, various iterative methods have been employed for the numerical and analytical solution of functional equations, such as Adomian’s decomposition method (ADM) (see [5,6]), the homotopy perturbation method (HPM) [7], the variational iteration method (VIM) [8], and the differential transform method (DTM) [9].

Genocchi polynomials (GPs) are non-orthogonal polynomials that were first applied to fractional calculus problems involving differential equations [10]. GPs have since been applied successfully to different kinds of problems in numerical analysis, such as systems of Volterra integro-differential equations [11] and the fractional Klein-Gordon equation [12]. Genocchi numbers also appear in differential topology (differential structures on spheres) and in the theory of modular forms (Eisenstein series).

In this paper, a new operational matrix of fractional order derivative based on Genocchi polynomials is introduced to provide approximate solutions of the quadratic Riccati differential equation (QRDE). The method is simple and straightforward to apply, and the numerical results show that the obtained approximations are satisfactory.

The outline of this paper is as follows. Some basic preliminaries are stated in Section 2. The problem is explained in Section 3. Some numerical results are provided in Section 4, where a remark about multi-pantograph delay differential equations (MPDDEs) and optimal control systems (OCSs) is also given. Numerical applications for solving MPDDEs are presented in Section 5. Finally, Section 6 gives a brief conclusion.

2. Some Basic Preliminaries

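Genocchi numbers ($G_n$) and Genocchi polynomials ($G_n(x)$) have been extensively studied in various papers (see [13]). The classical Genocchi polynomials $G_n(x)$ are usually defined by the generating function

$$\frac{2t\,e^{xt}}{e^{t}+1} = \sum_{n=0}^{\infty} G_n(x)\,\frac{t^n}{n!}, \qquad |t| < \pi, \tag{1}$$

where

$$G_n(x) = \sum_{k=0}^{n} \binom{n}{k} G_k\, x^{n-k} \tag{2}$$

and $G_k$ is the $k$-th Genocchi number. From (2), we have

$$\frac{d}{dx}G_n(x) = n\,G_{n-1}(x), \qquad n \ge 1, \tag{3}$$

and also

$$G_n(1) + G_n(0) = 0, \qquad n > 1.$$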
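The Genocchi numbers can be generated directly from the generating function $2te^{xt}/(e^t+1)$: setting $x = 0$ and comparing coefficients of $t^n/n!$ in $2t = (e^t+1)\sum_k G_k t^k/k!$ gives $2G_n + \sum_{k<n}\binom{n}{k}G_k = 2\delta_{n1}$. The Python sketch below (helper names are illustrative, not taken from the paper) builds $G_n$ and the coefficients of $G_n(x) = \sum_k \binom{n}{k} G_k x^{n-k}$ in exact rational arithmetic, and checks the properties $G_n'(x) = nG_{n-1}(x)$ and $G_n(1) + G_n(0) = 0$ for $n > 1$.

```python
from fractions import Fraction
from math import comb


def genocchi_numbers(n_max):
    """Genocchi numbers G_0, ..., G_{n_max} from 2t / (e^t + 1) = sum G_n t^n / n!.

    Comparing coefficients of t^n / n! in 2t = (e^t + 1) * sum_k G_k t^k / k!
    gives 2 G_n + sum_{k<n} C(n, k) G_k = 2 if n == 1 else 0.
    """
    g = []
    for n in range(n_max + 1):
        rhs = Fraction(2 if n == 1 else 0) - sum(comb(n, k) * g[k] for k in range(n))
        g.append(rhs / 2)
    return g


def genocchi_poly(n, g):
    """Ascending-power coefficients of G_n(x) = sum_k C(n, k) G_k x^(n-k)."""
    return [comb(n, j) * g[n - j] for j in range(n + 1)]


def poly_eval(coeffs, x):
    """Evaluate sum_j coeffs[j] * x**j."""
    return sum(c * x**j for j, c in enumerate(coeffs))


def poly_deriv(coeffs):
    """Coefficients of the derivative polynomial."""
    return [j * coeffs[j] for j in range(1, len(coeffs))]


G = genocchi_numbers(8)
print([int(v) for v in G])                    # [0, 1, -1, 0, 1, 0, -3, 0, 17]
print([int(c) for c in genocchi_poly(4, G)])  # [1, 0, -6, 4, 0], i.e. G_4(x) = 4x^3 - 6x^2 + 1

# Exact checks of G_n'(x) = n G_{n-1}(x) and G_n(1) + G_n(0) = 0 for n > 1.
for n in range(2, 9):
    P = genocchi_poly(n, G)
    assert poly_deriv(P) == [n * c for c in genocchi_poly(n - 1, G)]
    assert poly_eval(P, 1) + poly_eval(P, 0) == 0
```

Because $G_0(x) \equiv 0$ and $G_n(x)$ has degree $n-1$ (its leading term is $nx^{n-1}$), the polynomials $G_1(x), \ldots, G_N(x)$ span all polynomials of degree at most $N-1$, which is why such bases usually start at $n = 1$.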

3. Explanation of the Problem

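Firstly, the quadratic Riccati differential equation (RDE)

$$y'(x) = P(x) + Q(x)\,y(x) + R(x)\,y^2(x), \qquad x_0 \le x \le x_f, \qquad y(x_0) = \alpha,$$

is considered, where $P(x)$, $Q(x)$ and $R(x)$ are continuous, $x_0$, $x_f$ and $\alpha$ are arbitrary constants, and $y(x)$ is the unknown function.

We now present a collocation method, based on the Genocchi operational matrix of derivatives, for solving RDEs numerically.

Our strategy is to approximate the solution $y(x)$ by Genocchi polynomials (GPs) as follows:

$$y(x) \approx y_N(x) = \sum_{n=1}^{N} c_n\, G_n(x) = C^T G(x),$$

where

$$C = [c_1, c_2, \ldots, c_N]^T, \qquad G(x) = [G_1(x), G_2(x), \ldots, G_N(x)]^T.$$

Then the $k$-th derivative of $y_N(x)$ can be stated as

$$y_N^{(k)}(x) = C^T M^k G(x),$$

where $M$ is the operational matrix of derivative, defined by $\frac{d}{dx}G(x) = M\,G(x)$. By Equations (3) and (6), we have

$$C^T M\, G(x) = P(x) + Q(x)\, C^T G(x) + R(x)\left(C^T G(x)\right)^2, \qquad C^T G(x_0) = \alpha.$$

To obtain $C$, one may collocate this equation at suitable points $x_i \in [x_0, x_f]$; together with the initial condition, this gives a system of nonlinear algebraic equations in $c_1, \ldots, c_N$.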

The resulting system of algebraic equations can be solved with Maple 15.
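The operational matrix of derivatives on which this collocation method relies can be sketched as follows. Assuming the basis vector $G(x) = [G_1(x), \ldots, G_N(x)]^T$ and the property $G_n'(x) = nG_{n-1}(x)$, we get $\frac{d}{dx}G(x) = MG(x)$, where the $N \times N$ matrix $M$ has entries $M_{n,n-1} = n$ and zeros elsewhere; hence $y(x) = C^TG(x)$ gives $y^{(k)}(x) = C^TM^kG(x)$. The helpers `genocchi_basis` and `derivative_matrix` are hypothetical names, not the authors' implementation, and the identity is checked in exact rational arithmetic at one sample point.

```python
from fractions import Fraction
from math import comb


def genocchi_basis(N):
    """Exact ascending-power coefficients of G_1(x), ..., G_N(x) (G_0 vanishes identically)."""
    g = []  # Genocchi numbers: 2 G_n + sum_{k<n} C(n, k) G_k = 2 if n == 1 else 0
    for n in range(N + 1):
        g.append((Fraction(2 if n == 1 else 0) - sum(comb(n, k) * g[k] for k in range(n))) / 2)
    return [[comb(n, j) * g[n - j] for j in range(n + 1)] for n in range(1, N + 1)]


def derivative_matrix(N):
    """N x N matrix M with d/dx G(x) = M G(x), built from G_n'(x) = n G_{n-1}(x)."""
    M = [[0] * N for _ in range(N)]
    for i in range(1, N):  # 0-based row i holds G_{i+1}, whose derivative is (i+1) G_i
        M[i][i - 1] = i + 1
    return M


def poly_eval(c, x):
    return sum(a * x**j for j, a in enumerate(c))


def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]


N = 6
basis = genocchi_basis(N)
M = derivative_matrix(N)
x = Fraction(1, 3)
Gx = [poly_eval(b, x) for b in basis]
# First derivatives of each basis polynomial, evaluated at x.
dGx = [poly_eval([j * b[j] for j in range(1, len(b))], x) for b in basis]
print(dGx == matvec(M, Gx))  # True
# Second derivatives agree with M^2 G(x), so y'' = C^T M^2 G(x) for y = C^T G(x).
d2Gx = [poly_eval([j * (j - 1) * b[j] for j in range(2, len(b))], x) for b in basis]
print(d2Gx == matvec(M, matvec(M, Gx)))  # True
```

Since $M$ is strictly lower triangular, $M^N = 0$, which matches the fact that the $N$-th derivative of any polynomial of degree at most $N-1$ vanishes.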

Lemma 1. If and , then is the best approximation of out of U when where and .

Proof. The proof is given in [14]; we restate it here for completeness. One may set

from Taylor’s expansion, we have

since

is the best approximation of

out of

U and

, then

therefore

□
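The complete collocation procedure of Section 3 can be illustrated with a floating-point sketch. It assumes the quadratic form $y' = P(x) + Q(x)y + R(x)y^2$, $y(x_0) = \alpha$, uses $N-1$ equispaced collocation points in $(x_0, x_f]$ plus the initial condition (an illustrative choice; the paper's collocation points are not reproduced here), and solves the nonlinear algebraic system with Newton's method in plain Python instead of Maple 15. The test problem $y' = 1 - y^2$, $y(0) = 0$, with exact solution $\tanh x$, is chosen only for illustration, and all function names are hypothetical.

```python
from fractions import Fraction
from math import comb, tanh


def genocchi_basis(N):
    """Float ascending-power coefficients of G_1(x), ..., G_N(x)."""
    g = []  # Genocchi numbers: 2 G_n + sum_{k<n} C(n, k) G_k = 2 if n == 1 else 0
    for n in range(N + 1):
        g.append((Fraction(2 if n == 1 else 0) - sum(comb(n, k) * g[k] for k in range(n))) / 2)
    return [[float(comb(n, j) * g[n - j]) for j in range(n + 1)] for n in range(1, N + 1)]


def peval(c, x):
    """Horner evaluation of sum_j c[j] * x**j."""
    s = 0.0
    for a in reversed(c):
        s = s * x + a
    return s


def solve_linear(A, b):
    """Gaussian elimination with partial pivoting for a square system A z = b."""
    n = len(b)
    W = [row[:] + [b[i]] for i, row in enumerate(A)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(W[i][k]))
        W[k], W[p] = W[p], W[k]
        for i in range(k + 1, n):
            f = W[i][k] / W[k][k]
            for j in range(k, n + 1):
                W[i][j] -= f * W[k][j]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (W[i][n] - sum(W[i][j] * z[j] for j in range(i + 1, n))) / W[i][i]
    return z


def solve_riccati(P, Q, R, alpha, x0, xf, N=12, iters=20):
    """Collocation sketch for y' = P + Q y + R y^2, y(x0) = alpha, with y = C^T G(x)."""
    basis = genocchi_basis(N)
    dbasis = [[j * c[j] for j in range(1, len(c))] for c in basis]
    pts = [x0 + (xf - x0) * i / (N - 1) for i in range(1, N)]  # assumed equispaced points
    C = [0.0] * N
    for _ in range(iters):  # Newton's method on the algebraic system F(C) = 0
        G0 = [peval(b, x0) for b in basis]
        F = [sum(c * g for c, g in zip(C, G0)) - alpha]  # initial-condition equation
        J = [G0]
        for x in pts:
            Gx = [peval(b, x) for b in basis]
            dGx = [peval(d, x) for d in dbasis]
            y = sum(c * g for c, g in zip(C, Gx))
            dy = sum(c * d for c, d in zip(C, dGx))
            F.append(dy - P(x) - Q(x) * y - R(x) * y * y)
            J.append([d - Q(x) * g - 2.0 * R(x) * y * g for g, d in zip(Gx, dGx)])
        step = solve_linear(J, [-v for v in F])
        C = [c + s for c, s in zip(C, step)]
        if max(abs(s) for s in step) < 1e-14:
            break
    return lambda x: sum(c * peval(b, x) for c, b in zip(C, basis))


# Illustrative test: y' = 1 - y^2, y(0) = 0, exact solution tanh(x) on [0, 1].
y = solve_riccati(lambda x: 1.0, lambda x: 0.0, lambda x: -1.0, 0.0, 0.0, 1.0)
print(max(abs(y(k / 20) - tanh(k / 20)) for k in range(21)))
```

Each Newton step solves a linear system whose rows are $G_n'(x_i) - Q(x_i)G_n(x_i) - 2R(x_i)y(x_i)G_n(x_i)$, i.e. the derivative of the collocated residual with respect to $c_n$; a hand-written solver is used only to keep the sketch free of third-party dependencies.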

4. Numerical Applications

In this section, some numerical results are given to demonstrate the quality of the stated technique in approximating the solution of RDEs.

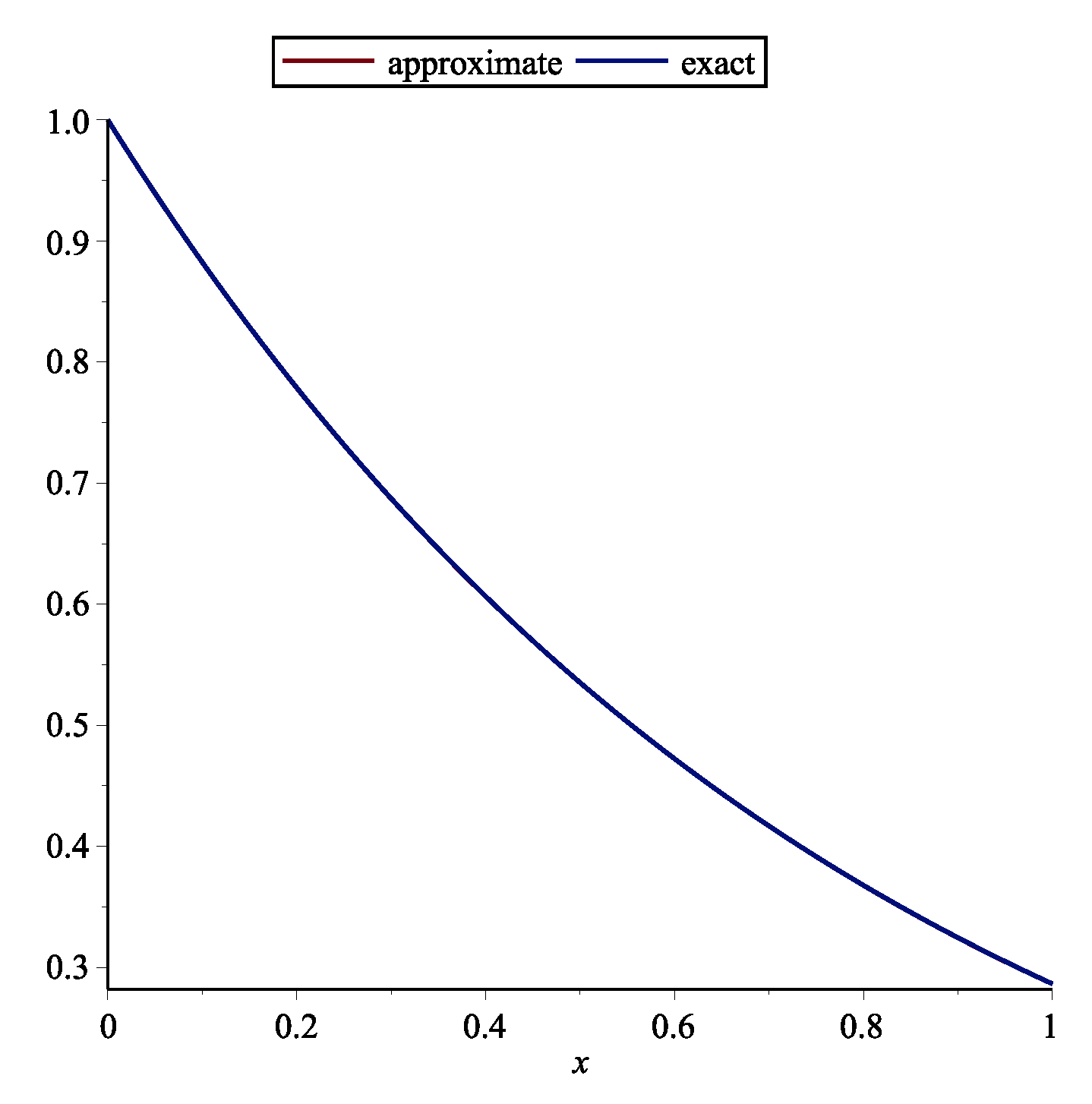

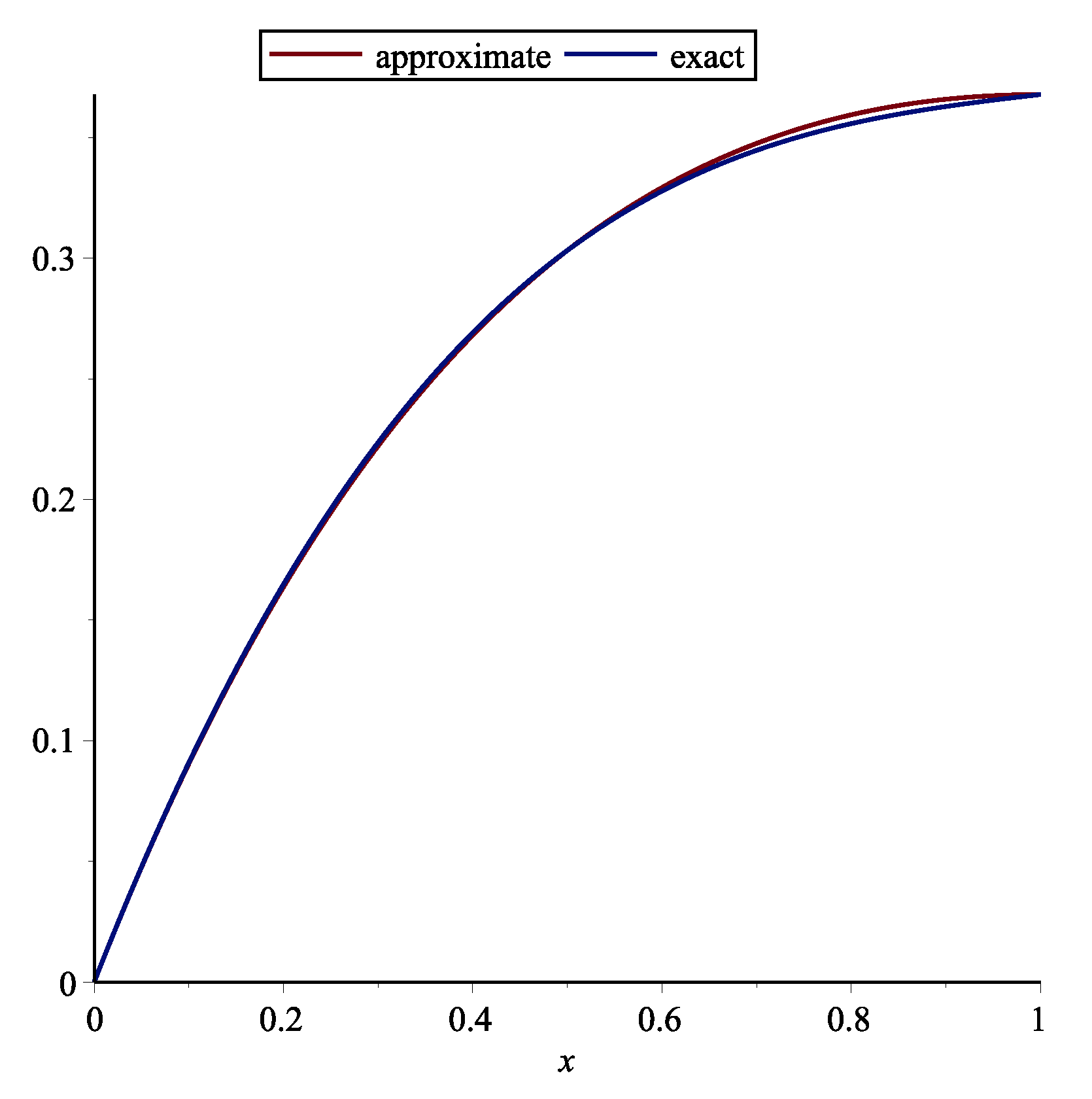

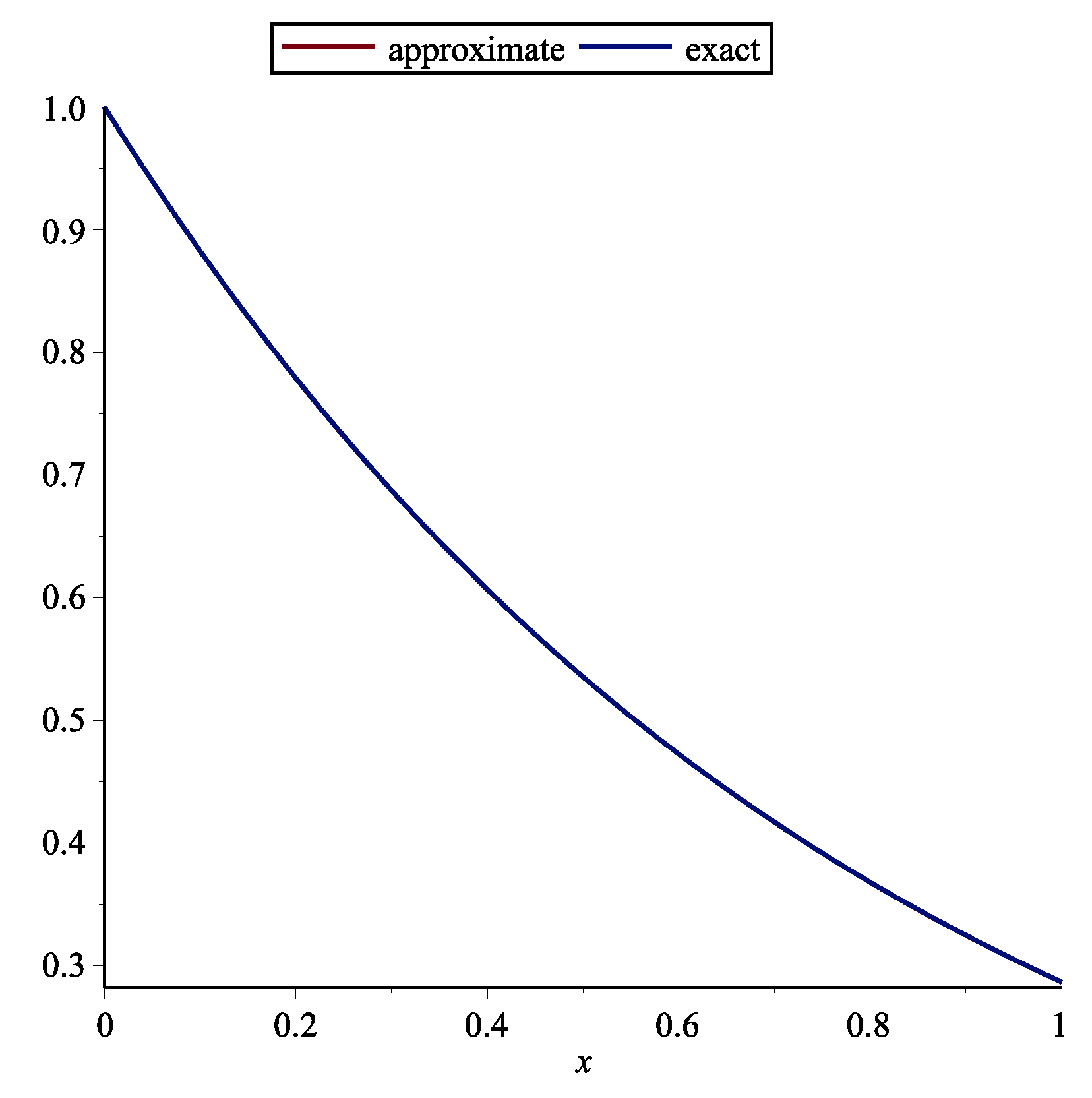

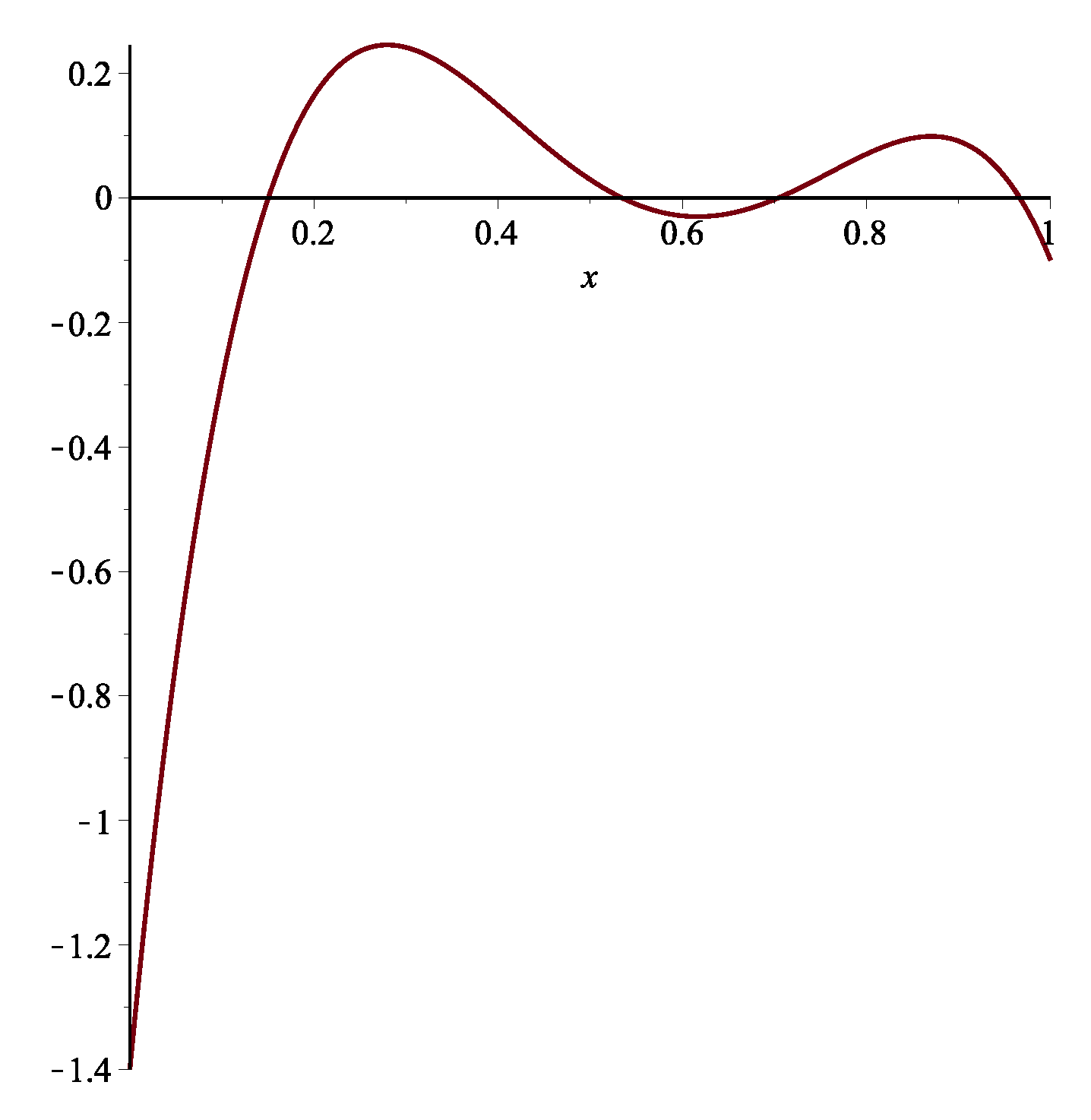

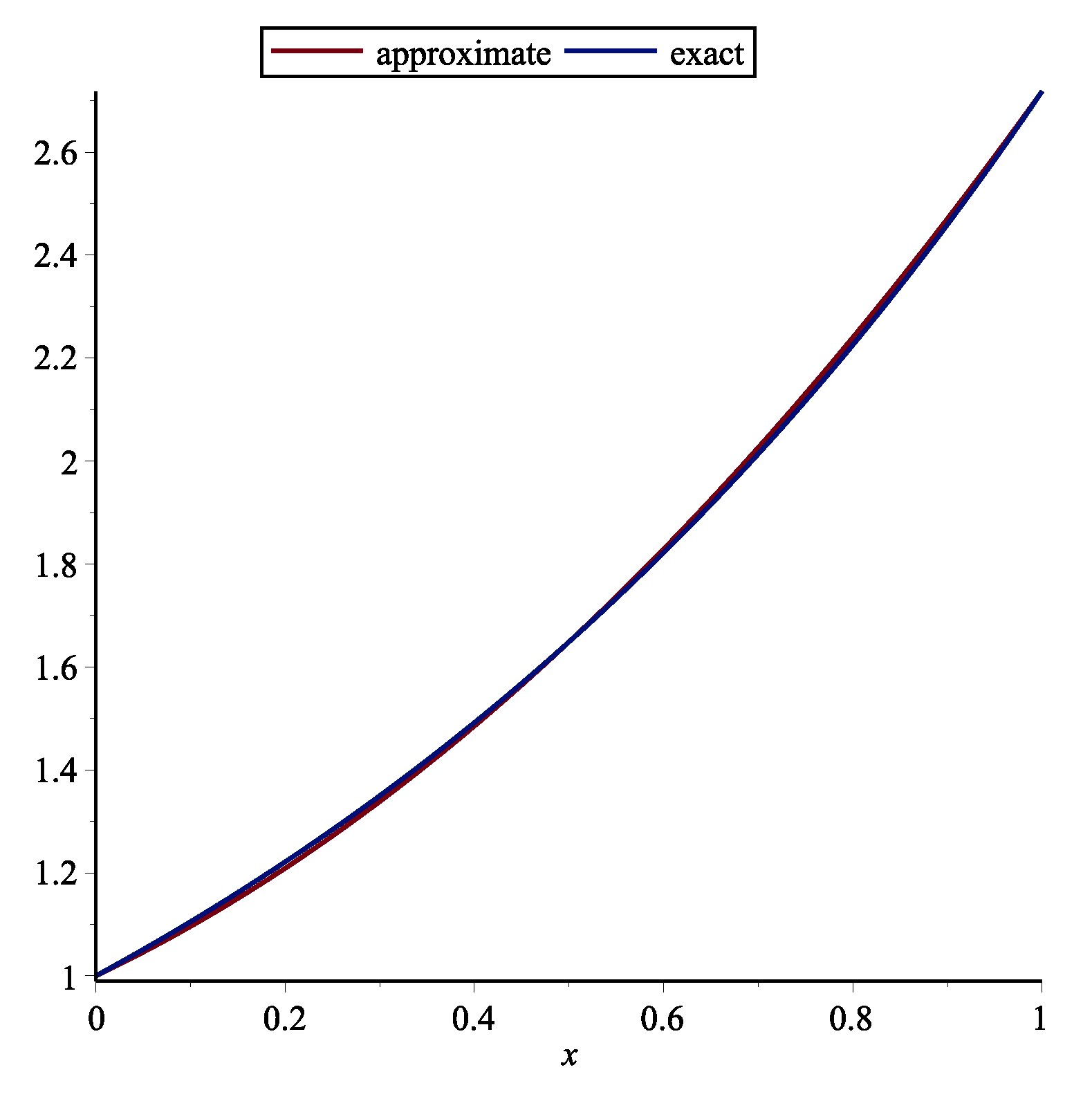

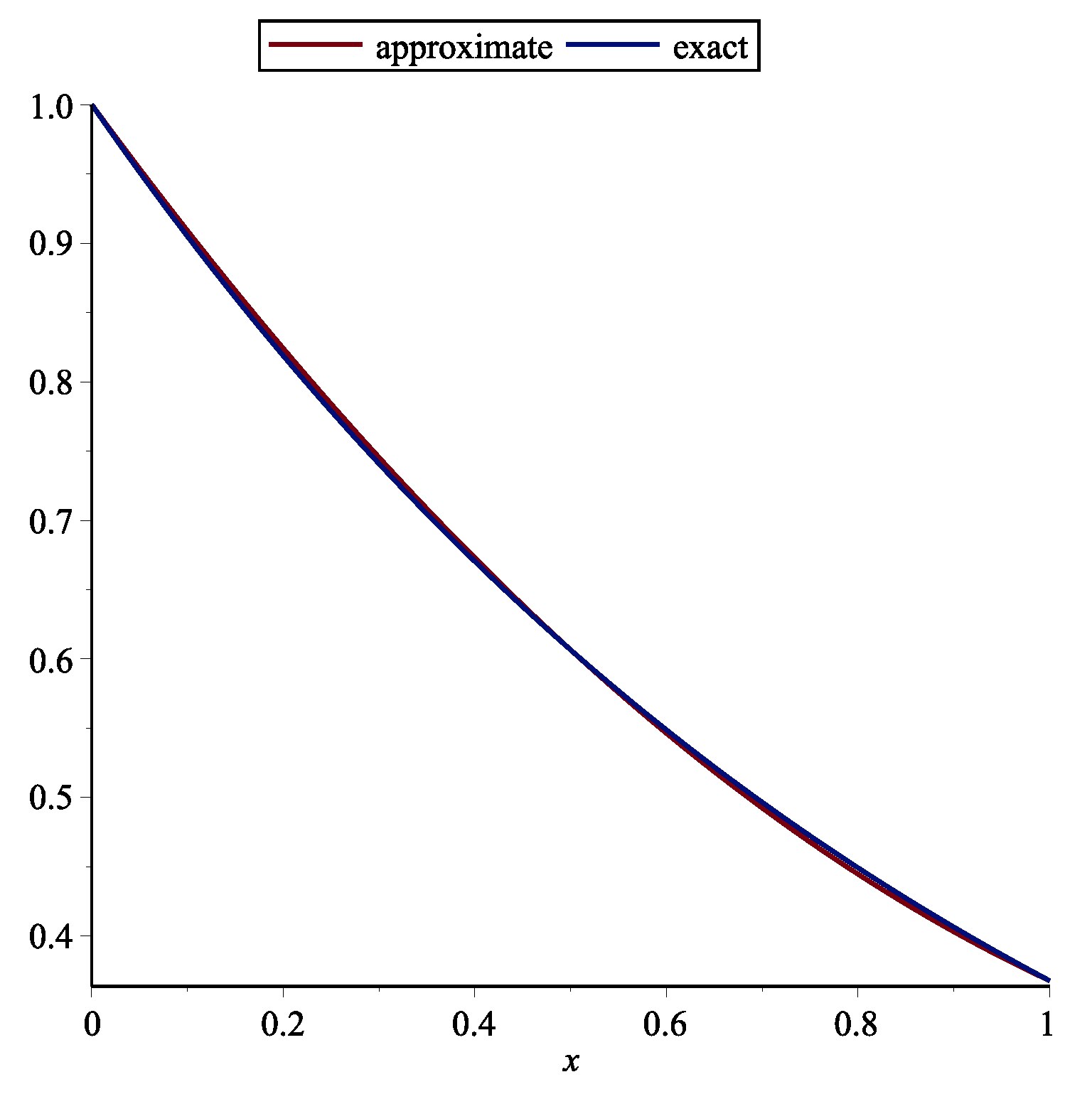

Example 1. First, the following RDE is considered (see [15]). One may obtain an approximate solution with this technique. The approximate and exact solutions are shown in Figure 1, and Table 1 reports the absolute error of this technique.

Example 2. Second, the following RDE is considered (see [15]). One may obtain an approximate solution with this method. The approximate and exact solutions are shown in Figure 2. Table 2 compares the absolute error of this technique with that of the technique in [15].

Example 3. Third, the following RDE is considered (see [15]). One may obtain an approximate solution with this method. The approximate and exact solutions are shown in Figure 3, and Table 3 reports the absolute error of this technique.

Remark 1. Distributed delay systems are described by delay differential equations (DDEs). DDEs are encountered in various practical engineering systems and in the modeling of feeding systems (see [16]). Many researchers have used various polynomials for solving DDEs. Orthogonal functions were utilized for solving optimal control systems (OCSs) with time delay (see [17]). Chebyshev polynomials (ChPs) were also used to solve time-varying systems with distributed time delay; the technique in [18] is based on expanding all time functions in terms of ChPs. The Bezier technique has been utilized for solving DDEs and switched systems (see [15]), and pantograph equations were solved with Bessel polynomials in [19]. Here, the following system of multi-pantograph delay differential equations (MPDDEs) is considered

where

is a given constant,

and

(

) are given continuous functions.

MPDDEs arise in various applications such as astrophysics, number theory, nonlinear dynamical systems (NDSs), quantum mechanics, cell growth, and probability theory on algebraic structures. Properties of the analytic solutions of MPDDEs, as well as numerical techniques for them, have been studied by several researchers; see, for example, [20].

In what follows, a new operational matrix of fractional order derivative based on GPs is introduced to provide approximate solutions of MPDDEs and optimal control systems with pantograph delays.

Our strategy is to use GPs to approximate the solution

by

as given below.

where

also

satisfies Equations (4) and (5). Then the

k-th derivative of

can be stated as

by Equations (8) and (6), we have

to obtain

, one may use the collocation points

,

.
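The same collocation idea carries over to pantograph-type equations. As a minimal sketch (not the paper's implementation), the code below treats a single linear pantograph equation $y'(x) = a(x)y(x) + b(x)y(qx) + f(x)$, $y(0) = y_0$, on $[0, T]$, which is the one-equation, one-delay special case of an MPDDE system; for a system, one would use one coefficient vector per unknown function and stack the collocation equations. Because the equation is linear, collocation gives a linear system directly. The test problem $y' = \frac{1}{2}y + \frac{1}{2}e^{x/2}y(x/2)$, $y(0) = 1$, with exact solution $e^x$, the equispaced collocation points, and all function names are assumptions for illustration.

```python
from fractions import Fraction
from math import comb, exp


def genocchi_basis(N):
    """Float ascending-power coefficients of G_1(x), ..., G_N(x)."""
    g = []  # Genocchi numbers from the generating function 2t / (e^t + 1)
    for n in range(N + 1):
        g.append((Fraction(2 if n == 1 else 0) - sum(comb(n, k) * g[k] for k in range(n))) / 2)
    return [[float(comb(n, j) * g[n - j]) for j in range(n + 1)] for n in range(1, N + 1)]


def peval(c, x):
    """Horner evaluation of sum_j c[j] * x**j."""
    s = 0.0
    for a in reversed(c):
        s = s * x + a
    return s


def solve_linear(A, b):
    """Gaussian elimination with partial pivoting for a square system A z = b."""
    n = len(b)
    W = [row[:] + [b[i]] for i, row in enumerate(A)]
    for k in range(n):
        p = max(range(k, n), key=lambda i: abs(W[i][k]))
        W[k], W[p] = W[p], W[k]
        for i in range(k + 1, n):
            f = W[i][k] / W[k][k]
            for j in range(k, n + 1):
                W[i][j] -= f * W[k][j]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (W[i][n] - sum(W[i][j] * z[j] for j in range(i + 1, n))) / W[i][i]
    return z


def solve_pantograph(a, b, f, q, y0, T, N=12):
    """Collocation sketch for y'(x) = a(x) y(x) + b(x) y(q x) + f(x), y(0) = y0, on [0, T]."""
    basis = genocchi_basis(N)
    dbasis = [[j * c[j] for j in range(1, len(c))] for c in basis]
    A = [[peval(c, 0.0) for c in basis]]  # initial-condition row
    rhs = [y0]
    for i in range(1, N):  # assumed equispaced collocation points in (0, T]
        x = T * i / (N - 1)
        # Row entries: G_n'(x) - a(x) G_n(x) - b(x) G_n(q x), the delayed term enters via G_n(q x).
        A.append([peval(d, x) - a(x) * peval(c, x) - b(x) * peval(c, q * x)
                  for c, d in zip(basis, dbasis)])
        rhs.append(f(x))
    C = solve_linear(A, rhs)
    return lambda x: sum(ci * peval(c, x) for ci, c in zip(C, basis))


# Illustrative test: y' = y/2 + (1/2) e^{x/2} y(x/2), y(0) = 1, exact solution e^x.
y = solve_pantograph(lambda x: 0.5, lambda x: 0.5 * exp(x / 2), lambda x: 0.0, 0.5, 1.0, 1.0)
print(max(abs(y(k / 20) - exp(k / 20)) for k in range(21)))
```

The only change relative to an ordinary collocation method is that each basis polynomial is also evaluated at the contracted argument $qx_i$, which is cheap because $G_n(qx)$ is again a polynomial of the same degree.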

5. Numerical Applications for Solving MPDDEs

In this section, some numerical results are given to demonstrate the quality of the stated technique in approximating the solution of MPDDEs and optimal control systems with pantograph delays.

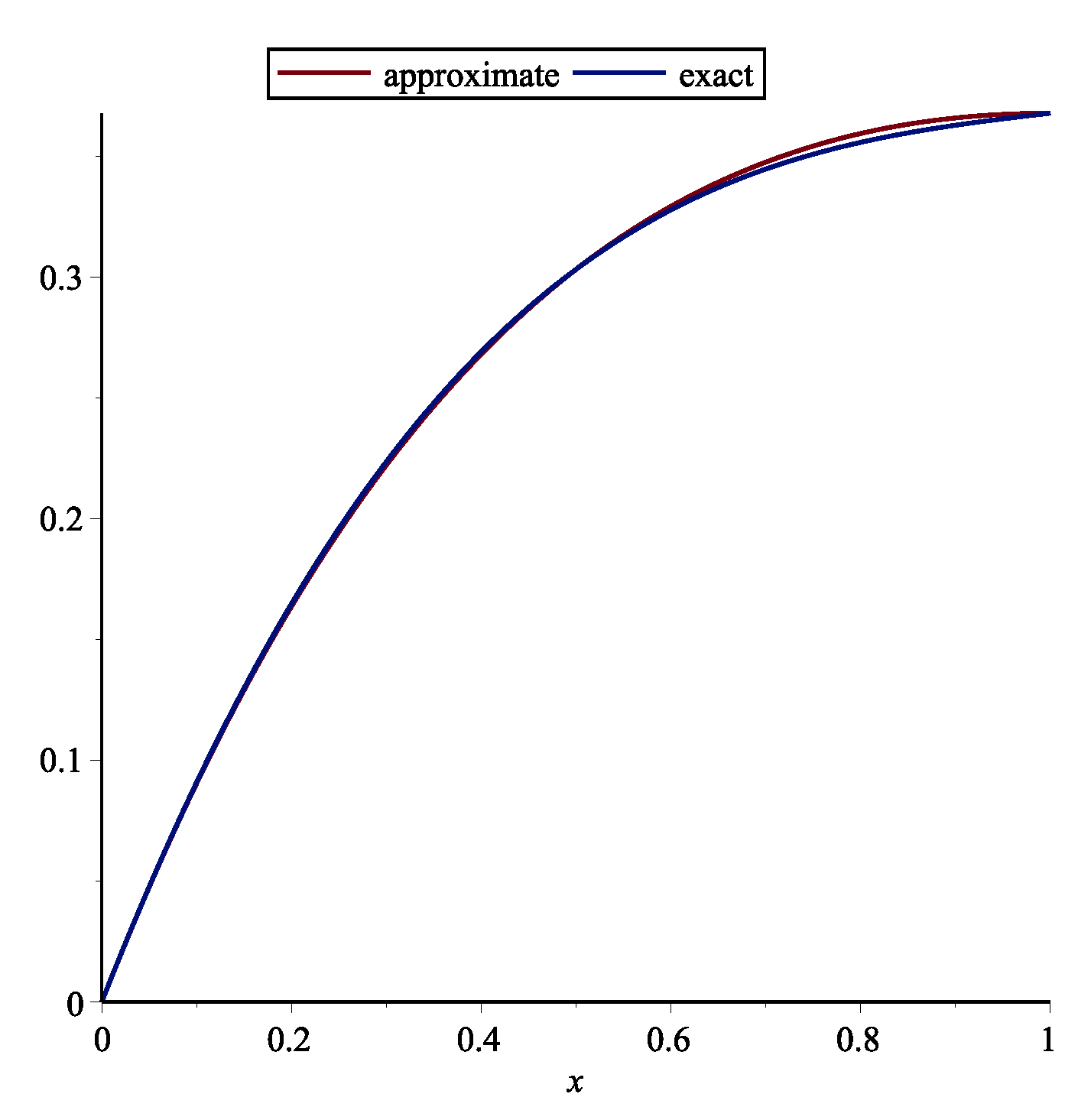

Example 4. Consider the following time-varying system (see [21]). One may obtain an approximate solution with this method. The approximate and exact solutions are shown in Figure 4, and Table 4 reports the absolute error of this technique.

Example 5. Consider the time-varying system described in [21]. One may obtain an approximate solution with this technique. The approximate and exact solutions are shown in Figure 5.

Example 6. The following optimal control system with pantograph delay is considered (see [21]). One may obtain an approximate solution with this technique. The approximate and exact solutions are shown in Figure 6.

Example 7. Consider the following system of two-dimensional pantograph equations (see [22]). One may obtain approximate solutions with this technique. The approximate and exact solution components are shown in Figure 7 and Figure 8, and Table 5 reports the absolute error of this technique.

6. Conclusions

In this paper, GPs were used to solve RDEs, as well as MPDDEs and optimal control systems with pantograph delays. The technique is computationally attractive, and numerical results are included to demonstrate its validity. As shown in the tables, the presented approximate solutions are more accurate than those in the cited references. The technique exhibits high-order convergence, yielding accurate solutions even for small values of n.

Author Contributions

Conceptualization, F.G.; methodology, S.S.; software, F.G.; validation, F.G. and S.S., formal analysis, F.G.; investigation, F.G.; resources, F.G. and S.S.; data curation, S.S.; writing—original draft preparation, F.G.; writing—review and editing, S.S.; visualization, F.G.; supervision, S.S.; project administration, F.G. and S.S.; funding acquisition, S.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Reid, W.T. Riccati Differential Equations; Academic Press: New York, NY, USA, 1972.

- Dehghan, M.; Taleei, A. A compact split-step finite difference method for solving the nonlinear Schrödinger equations with constant and variable coefficients. Comput. Phys. Commun. 2010, 181, 43–51.

- Medina-Dorantes, F.I.; Villafuerte-Segura, R.; Aguirre-Hernández, B. Controller with time-delay to stabilize first-order processes with dead-time. J. Control Eng. Appl. Inform. 2018, 20, 42–50.

- Villafuerte-Segura, R.; Medina-Dorantes, F.I.; Vite-Hernandez, L.; Aguirre-Hernández, B. Tuning of a time-delayed controller for a general class of second-order LTI systems with dead-time. IET Control Theory Appl. 2018, 13, 451–457.

- Bulut, H.; Evans, D.J. On the solution of the Riccati equation by the decomposition method. Int. J. Comput. Math. 2002, 79, 103–109.

- El-Tawil, M.A.; Bahnasawi, A.A.; Abdel-Naby, A. Solving Riccati differential equation using Adomian’s decomposition method. Appl. Math. Comput. 2004, 157, 503–514.

- Abbasbandy, S. Homotopy perturbation method for quadratic Riccati differential equation and comparison with Adomian’s decomposition method. Appl. Math. Comput. 2006, 172, 485–490.

- Geng, F.; Lin, Y.; Cui, M. A piecewise variational iteration method for Riccati differential equations. Comput. Math. Appl. 2009, 58, 2518–2522.

- Mukherjee, S.; Roy, B. Solution of Riccati equation with variable co-efficient by differential transform method. Int. J. Nonlinear Sci. 2012, 14, 251–256.

- Isah, A.; Phang, C. Operational Matrix Based on Genocchi Polynomials for Solution of Delay Differential Equations. Ain Shams Eng. J. 2018, 9, 2123–2128.

- Loh, J.R.; Phang, C. A New Numerical Scheme for Solving System of Volterra Integro-differential Equation. Alexandria Eng. J. 2018, 57, 1117–1124.

- Afshan, K.; Phang, C.; Iqbal, U. Numerical Solution of Fractional Diffusion Wave Equation and Fractional Klein-Gordon Equation via Two-Dimensional Genocchi Polynomials with a Ritz-Galerkin Method. Computation 2018, 6, 40.

- Isah, A.; Phang, C. Operational matrix based on Genocchi polynomials for solution of delay differential equations. Ain Shams Eng. J. 2017.

- Isah, A.; Phang, C. New operational matrix of derivative for solving non-linear fractional differential equations via Genocchi polynomials. J. King Saud Univ.-Sci. 2017.

- Ghomanjani, F.; Khorram, E. Approximate solution for quadratic Riccati differential equation. J. Taibah Univ. Sci. 2017, 11, 246–250.

- Chen, W.; Zheng, W.X. Delay-dependent robust stabilization for uncertain neutral systems with distributed delays. Automatica 2007, 43, 95–104.

- Nazarzadeh, J. Finite Time Nonlinear Optimal Systems Solution by Spectral Methods. Ph.D. Thesis, Amirkabir University of Technology, Tehran, Iran, 1998.

- Esfanjani, R.M.; Nikravesh, S.K.Y. Predictive control for a class of distributed delay systems using Chebyshev polynomials. Int. J. Comput. Math. 2010, 87, 1591–1601.

- Yüzbasi, S.; Sahin, N.; Sezer, M. A Bessel collocation method for numerical solution of generalized pantograph equations. Numer. Methods Partial Differ. Equ. 2012, 28, 1105–1123.

- Derfel, G.A.; Vogl, F. On the asymptotics of solutions of a class of linear functional-differential equations. Eur. J. Appl. Math. 1996, 7, 511–518.

- Ghomanjani, F.; Farahi, M.H.; Kamyad, A.V. Numerical solution of some linear optimal control systems with pantograph delays. IMA J. Math. Control Inf. 2015, 32, 225–243.

- Komashynska, I.; Al-Smadi, M.; Al-Habahbeh, A.; Ateiwi, A. Analytical approximate solutions of systems of multi-pantograph delay differential equations using residual power-series method. Aust. J. Basic Appl. Sci. 2014, 8, 664–675.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).