Inertial Iterative Algorithms for Split Variational Inclusion and Fixed Point Problems

Abstract

1. Introduction

2. Preliminaries

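- (i) Contraction, if there exists $\kappa \in (0,1)$ such that $\|Ax - Ay\| \le \kappa \|x - y\|$ for all $x, y$ in the domain of $A$;

- (ii) Nonexpansive, if $\|Ax - Ay\| \le \|x - y\|$ for all $x, y$ in the domain of $A$;

- (iii) Firmly nonexpansive, if $\|Ax - Ay\|^2 \le \langle Ax - Ay, x - y \rangle$ for all $x, y$ in the domain of $A$;

- (iv) τ-inverse strongly monotone, if there exists $\tau > 0$ such that $\langle Ax - Ay, x - y \rangle \ge \tau \|Ax - Ay\|^2$ for all $x, y$ in the domain of $A$.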

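- (i) The mapping $A$ is called monotone if $\langle u - v, x - y \rangle \ge 0$ for all $x, y$ in the domain of $A$ and all $u \in A(x)$, $v \in A(y)$;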

- (ii)

- ;

- (iii) The mapping $A$ is called maximal monotone if its graph is not properly contained in the graph of any other monotone operator.

- (i)

- (ii)

- or

- (i) A mapping $A$ is τ-inverse strongly monotone if and only if $I - \tau A$ is firmly nonexpansive for $\tau > 0$.

- (ii) If $A$ is monotone and $J_\lambda^A = (I + \lambda A)^{-1}$ is the resolvent of $A$, then $J_\lambda^A$ and $I - J_\lambda^A$ are firmly nonexpansive for $\lambda > 0$.

- (iii) If $A$ is nonexpansive, then $I - A$ is demiclosed at zero, and if $A$ is firmly nonexpansive, then $I - A$ is firmly nonexpansive.

- (i)

- exists, ,

- (ii)

- (i)

- ;

- (ii)

- ;

- (iii)

- , where .
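The firm nonexpansiveness of a resolvent and of its complement, as used in the preliminaries, can be checked numerically on a concrete operator. The sketch below is only an illustration under stated assumptions: the operator is taken to be the subdifferential of $\lambda\|\cdot\|_1$ on $\mathbb{R}^5$, whose resolvent is the soft-thresholding map. That choice is not from the article, and the helper names `soft_threshold` and `is_firmly_nonexpansive` are hypothetical.

```python
import numpy as np

def soft_threshold(x, lam):
    """Resolvent (I + lam * d||.||_1)^{-1}, i.e. componentwise soft-thresholding."""
    return np.sign(x) * np.maximum(np.abs(x) - lam, 0.0)

def is_firmly_nonexpansive(T, dim=5, trials=1000, seed=0, tol=1e-10):
    """Sample random pairs and test ||Tx - Ty||^2 <= <Tx - Ty, x - y>."""
    rng = np.random.default_rng(seed)
    for _ in range(trials):
        x = rng.normal(size=dim)
        y = rng.normal(size=dim)
        d = T(x) - T(y)
        if d @ d > d @ (x - y) + tol:
            return False
    return True

lam = 0.7  # assumed resolvent parameter, chosen only for the demonstration
J = lambda x: soft_threshold(x, lam)

print("J firmly nonexpansive on samples:    ", is_firmly_nonexpansive(J))
print("I - J firmly nonexpansive on samples:", is_firmly_nonexpansive(lambda x: x - J(x)))
```

A random test of this kind cannot prove the inequality, but it is a cheap sanity check before plugging a resolvent into an iterative scheme.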

3. Inertial Iterative Methods

- Iterative Step: Given arbitrary , and , for , choose , where

- Iterative Step: Given arbitrary , and , for , choose , where
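The formulas of the two iterative steps did not survive in this copy, so the following is not the authors' Algorithm 1 or Algorithm 2. It is a minimal sketch of a generic inertial scheme of the same family, under assumptions labeled here and in the comments: an inertial extrapolation with a summable cap, a self-adaptive step size that avoids the operator norm, a resolvent step, and a Mann-type averaging with a nonexpansive map `S`. The function name `inertial_svip_fpp`, the parameters `theta`, `rho`, `alpha`, and the toy data (boxes and a ball, whose projections serve as resolvents of normal cones) are all hypothetical.

```python
import numpy as np

def inertial_svip_fpp(A, res_B1, res_B2, S, x0, x1,
                      theta=0.5, rho=1.0, alpha=0.5, iters=500):
    """Generic inertial scheme (illustrative only, not the article's algorithm)."""
    x_prev, x = np.asarray(x0, float), np.asarray(x1, float)
    for n in range(1, iters + 1):
        diff = x - x_prev
        dn = np.linalg.norm(diff)
        # Inertial weight capped so that sum_n theta_n * ||x_n - x_{n-1}|| is finite.
        theta_n = theta if dn == 0.0 else min(theta, 1.0 / (n**2 * dn))
        w = x + theta_n * diff
        Aw = A @ w
        r = Aw - res_B2(Aw)            # (I - J^{B2}) A w
        g = A.T @ r                    # A^* (I - J^{B2}) A w
        gg = g @ g
        # Self-adaptive step size: built from r and g only, never from ||A||.
        gamma = rho * (r @ r) / gg if gg > 0.0 else 0.0
        y = res_B1(w - gamma * g)      # resolvent step for the first inclusion
        x_prev, x = x, (1.0 - alpha) * y + alpha * S(y)  # Mann-type averaging
    return x

# Toy instance (assumed data): normal cones of boxes, S = projection onto a ball.
A = np.array([[1.0, 2.0, 0.0], [0.0, -1.0, 1.0]])
proj_C = lambda v: np.clip(v, -1.0, 1.0)
proj_Q = lambda v: np.clip(v, -0.5, 0.5)

def proj_ball(v, radius=0.8):
    nv = np.linalg.norm(v)
    return v if nv <= radius else v * (radius / nv)

x = inertial_svip_fpp(A, proj_C, proj_Q, proj_ball,
                      x0=[5.0, -4.0, 3.0], x1=[4.0, -3.0, 2.0])
residual = max(np.linalg.norm(x - proj_C(x)),
               np.linalg.norm(A @ x - proj_Q(A @ x)),
               np.linalg.norm(x - proj_ball(x)))
print("approximate solution:", x)
print("largest residual:", residual)
```

The three residuals measure how far the returned point is from solving each sub-problem; with `rho` in $(0, 2)$ the forward step is Fejér monotone with respect to the solution set, which is why no norm estimate is needed in this sketch.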

4. Main Results

5. Numerical Experiments

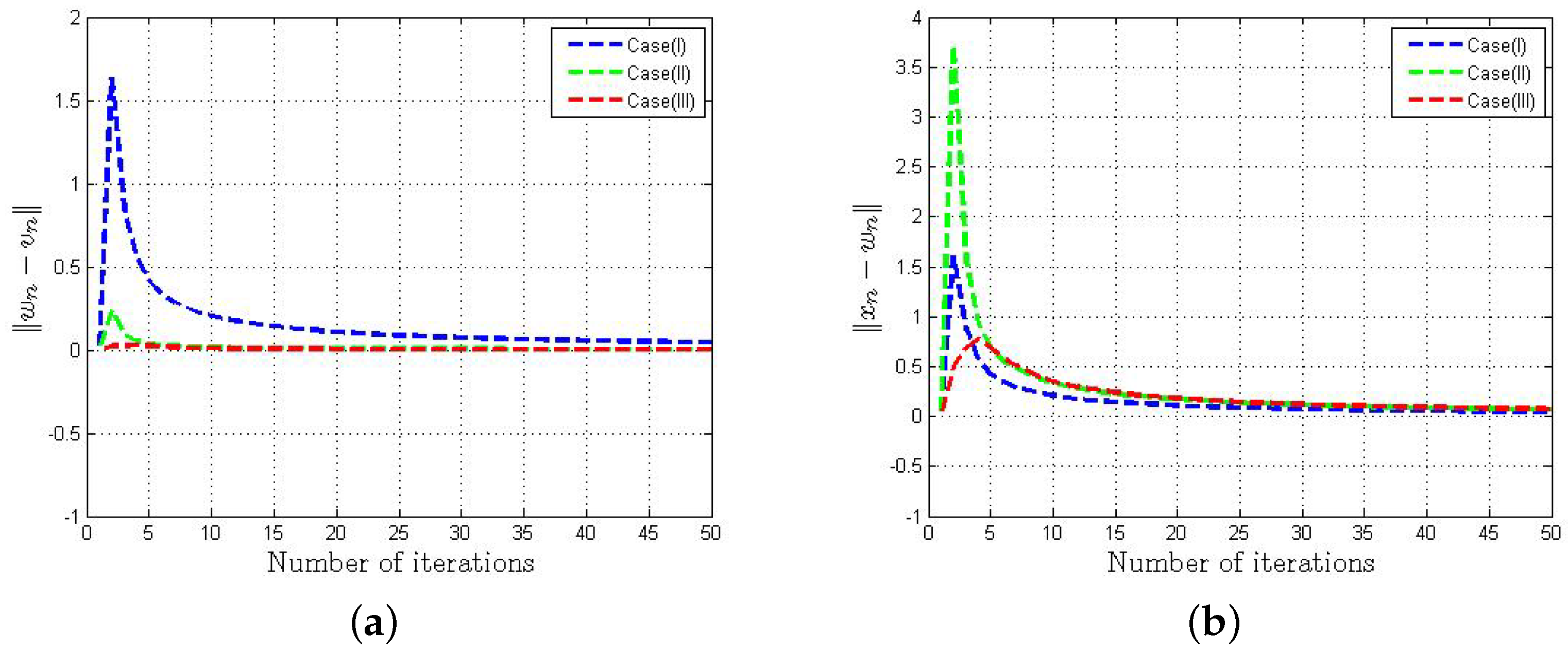

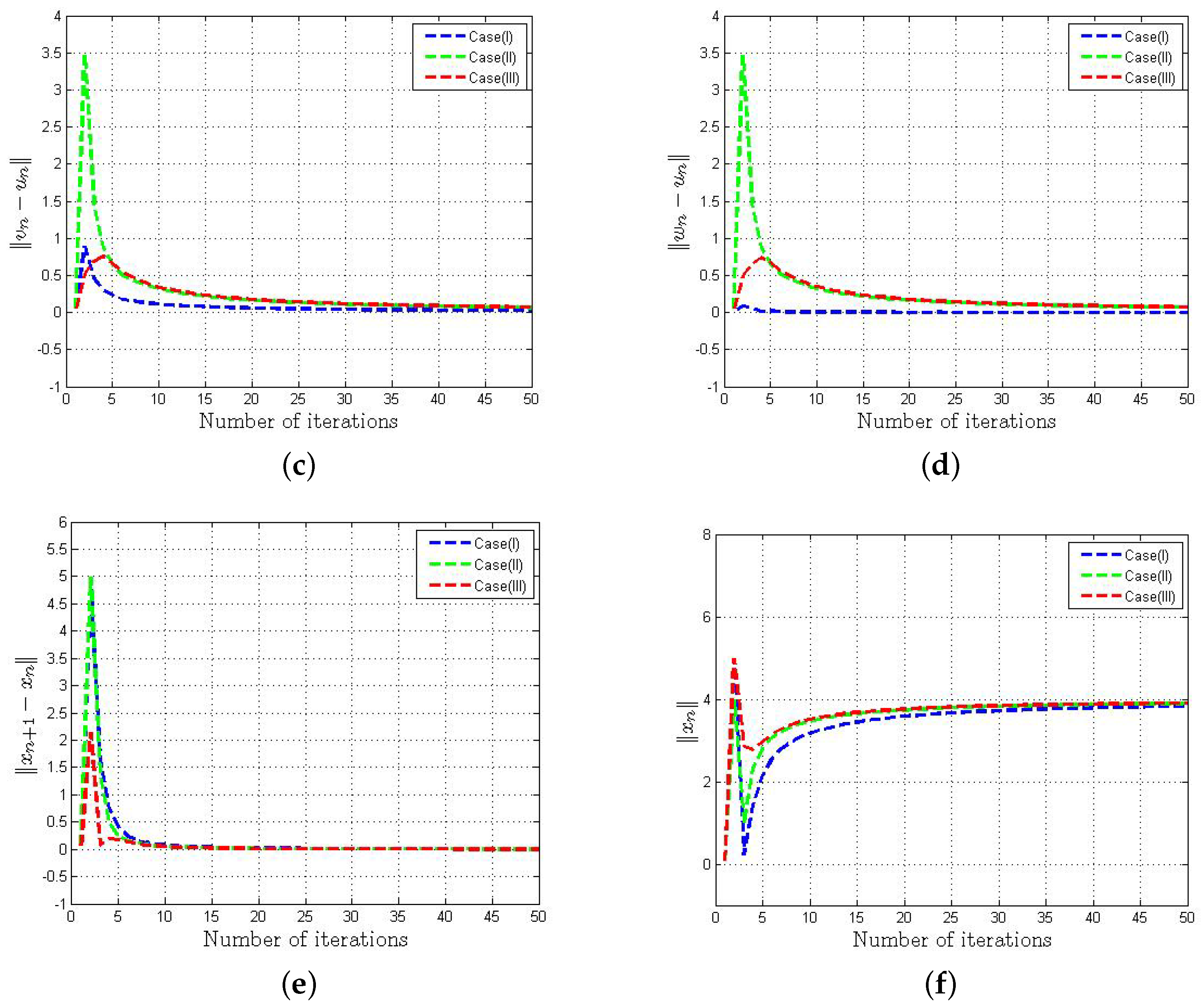

- Case (I): , , , , .

- Case (II): , , , , .

- Case (III): , , , , .

- In Figure 1a–d, we observed that the behavior of and is uniform irrespective of the selection of parameters.

- From Figure 1e–f, we notice that the sequence obtained from Algorithm 2 converges to the same limit with a suitable selection of parameters.

- It is worth noting that the algorithm can be implemented without estimating the operator norm, which is generally difficult to compute.

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Filali, D.; Dilshad, M.; Alyasi, L.S.M.; Akram, M. Inertial Iterative Algorithms for Split Variational Inclusion and Fixed Point Problems. Axioms 2023, 12, 848. https://doi.org/10.3390/axioms12090848
