1. Introduction

Over the years, the growing use of social media platforms has made it easier to spread false information. Fake news can be spread to achieve several goals and can target specific individuals, groups, politics, regions, religions, ethnicities, cultures, races, etc. It can also be connected with illegal and fraudulent activities [1,2]. The generation and propagation of fake news has a huge negative impact on the economy, peace, and health all over the world. It is considered a major threat to journalism. It was reported in 2013 that the American stock market lost over USD 130 billion because of fake news about the US president being injured in an explosion. In 2016, the US elections were filled with fake news glorifying and defaming both presidential candidates. One such fake news claim proclaimed “Pope Francis endorsed President Trump”. This resulted in huge turmoil among the rivals and started riots among the supporters. In recent years, fake news related to the spread and effects of COVID has disturbed the harmony in society, created social instability, and resulted in the economic collapse of several governments.

To overcome the adverse effects of fake news, its propagation needs to be stopped. A large body of research addresses the moderation of social media content with the help of human content moderators. Machine Learning (ML) algorithms, trained on previously moderated data, are effective for fake news detection [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22,23]. However, conventional machine learning models do not deliver consistently high efficacy across datasets, nor do they improve significantly over time. Keeping in view the success of Deep Learning (DL) in various domains [24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41], this research highlights the significance of deep learning techniques for Natural Language Processing (NLP) tasks. The proposed model is a hybrid of deep models, adept placements, and hyperparameter tuning that offers an effective solution for fake news detection. It combines the strengths of several deep learning models in a structured manner to provide an automated, efficient, and effective classification solution for the identification and detection of fake news. The main contributions of this research in the field of fake news detection include:

- i.

The introduction of a novel deep neural network model, , for fake news detection;

- ii.

Extraction and evaluation of content attributes at multiple orientations based on deep hypercontext;

- iii.

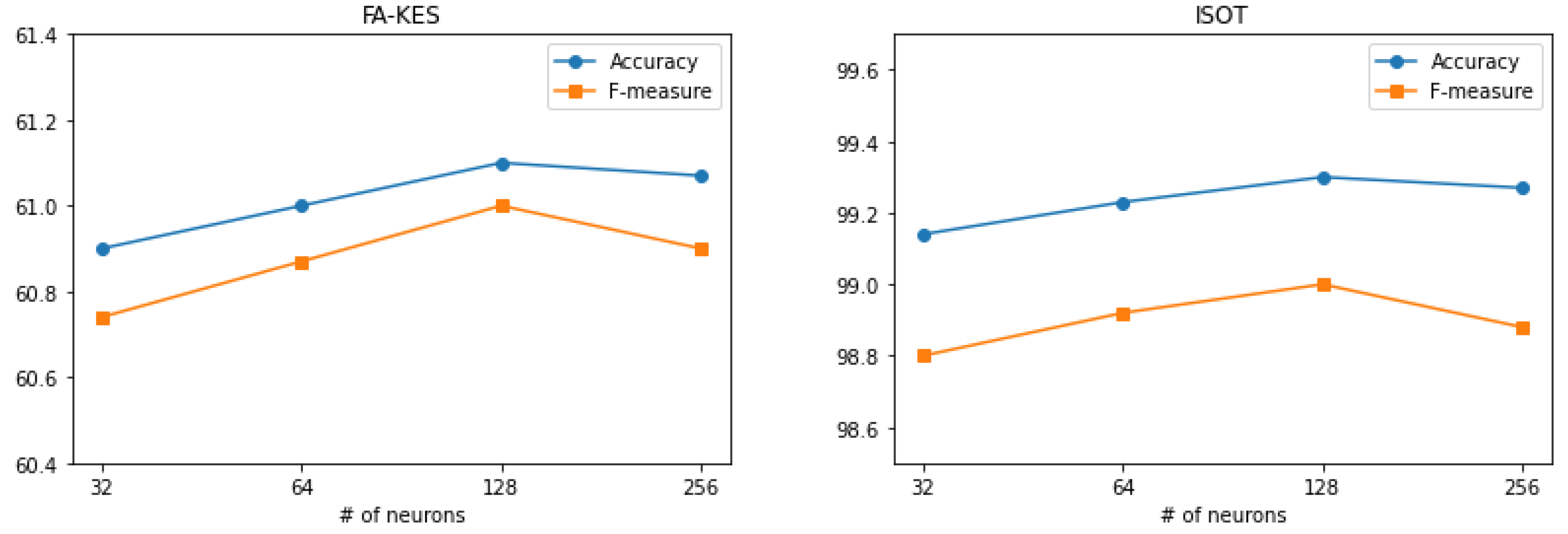

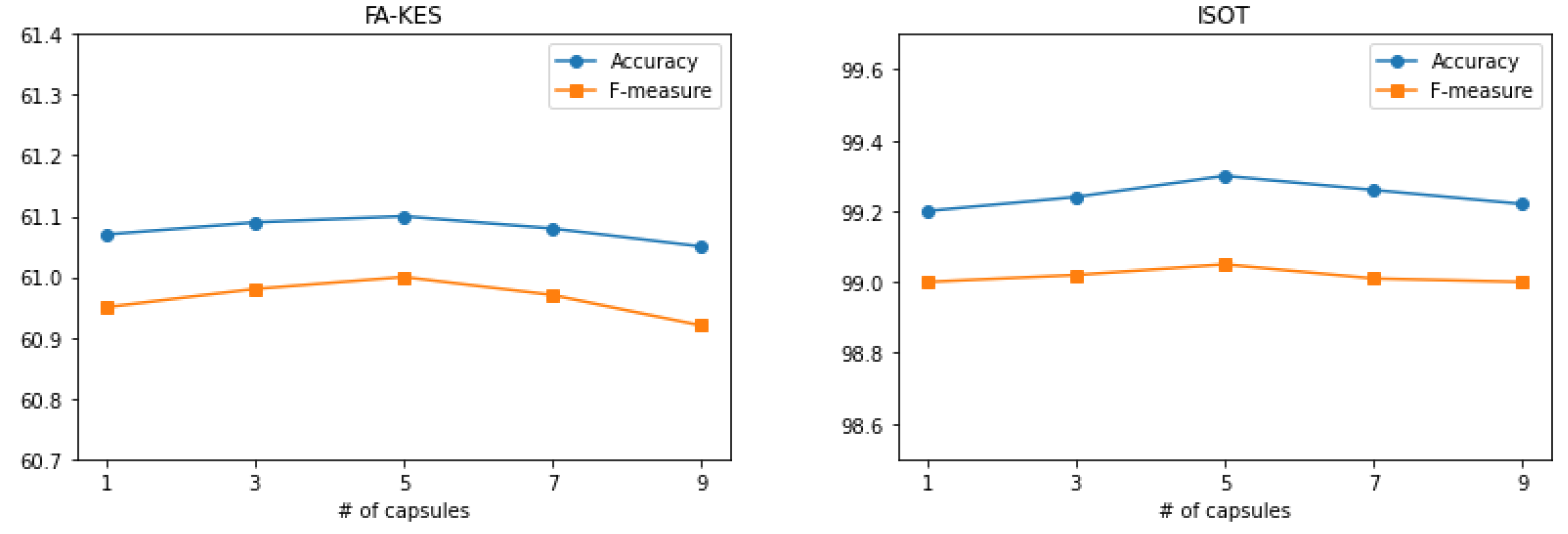

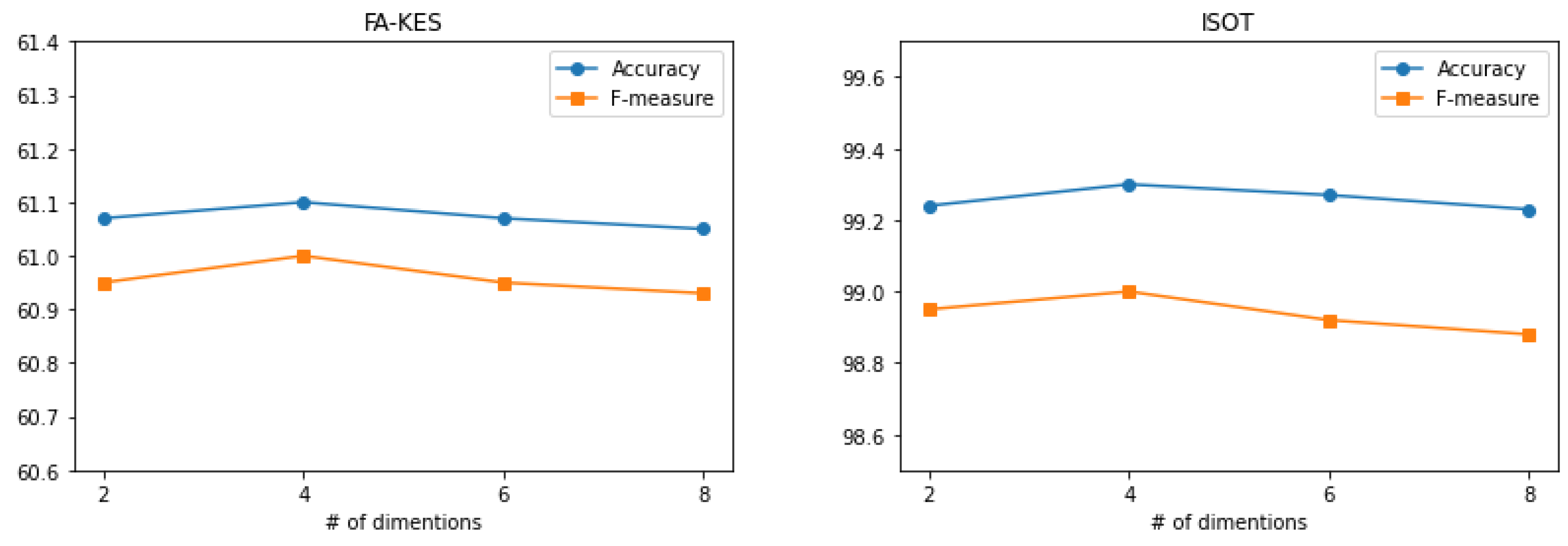

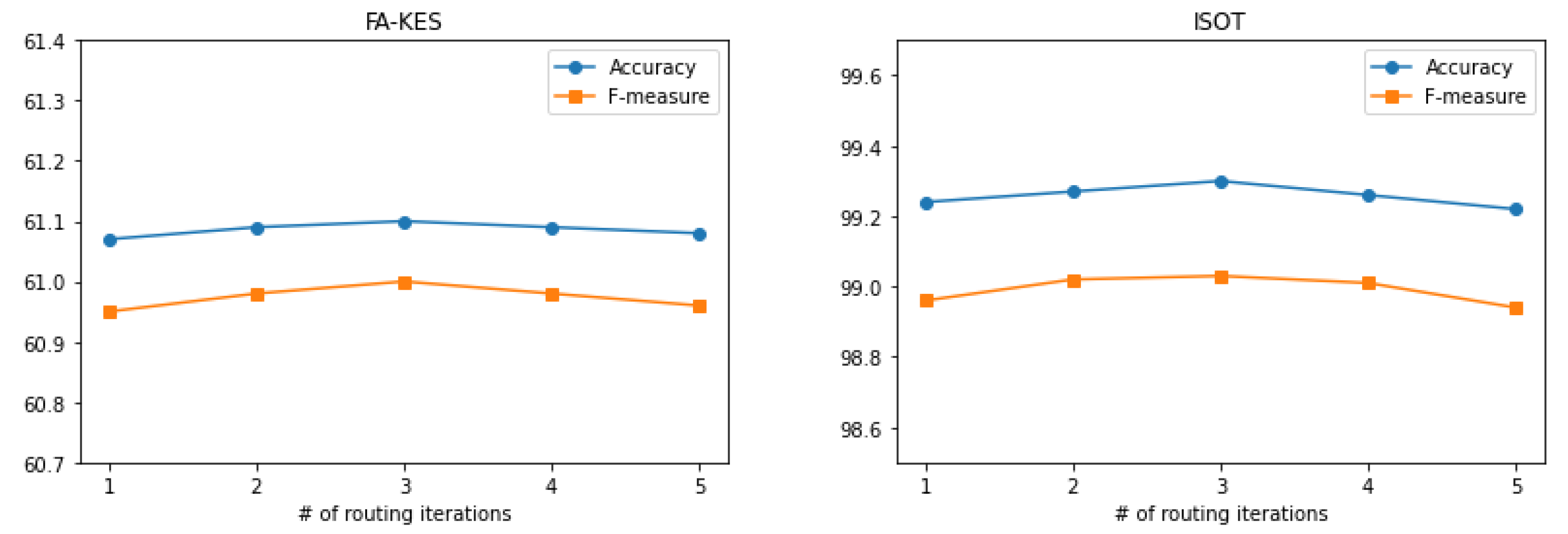

Analysis of the developments in hyperparameter optimization on .

Section 2 describes the literature review of fake news detection along with the limitations in this domain. Section 3 includes the methodology of the proposed HyproBert model. Section 4 includes the experiments and results. Section 5 discusses the evaluation results and comparative analysis. The research, along with a description of future work, is concluded in Section 6.

2. Literature Review

In this section, a review of various existing machine learning and deep learning techniques being used for detection of fake news is presented. This section also summarizes the status and limitations of fake news detection in the news media.

The research on fake news detection is divided into two types: supervised learning and unsupervised learning. Ozbay and Alatas [3] suggested a two-step method to identify false news using text information; the first stage entails preprocessing, and the second stage involves applying the textual feature vector to 23 intelligent supervised classifiers for further experimental assessment. By applying multiple word embedding approaches across five datasets in three languages sourced from different social media sites, Faustini and Covões [4] created a model for detecting false news that is independent of language and platform. Guo et al. [5] thoroughly reviewed the advancements in the field of false information detection, detailing the many problems, unique methodologies, and specifics of available datasets.

For the first time in the domain of fake news detection, Ozbay and Alatas [6] employed two metaheuristic algorithms for the identification of fake news. Grey Wolf Optimization (GWO) resulted in higher performance for social media data. Kumar et al. [9] used five different classifiers and thirteen different attributes to categorize tweets; they also used Particle Swarm Optimization (PSO) to extract an ideal feature set from the tweets’ text and improve classifier performance.

Perez-Rosas et al. [7] created two new datasets for automatic identification of false news, each including seven different types of news-related content. They laid out an extensive framework and patterns for distinguishing between authentic and fake news. In order to identify fake news, Ahmed et al. [8] developed a novel dataset called ISOT that was compiled from actual news stories around the globe. A Linear Support Vector Machine (LSVM) classifier, along with Term Frequency–Inverse Document Frequency (TF–IDF) for feature vector representation, was utilized for the classification of fake news. However, these methods require handcrafted features, which adds to their development effort and time requirements. Akyol et al. [10] determined the applicability of Random Forest (RF), Gradient Boost Tree (GBT), and Multilayer Perceptron (MLP) to fake news detection.

With the rise of deep learning, an increasing number of researchers employ it to detect fake news. Recent research has progressively focused on unsupervised and semi-supervised detection methods. The capacity of deep learning models to automatically extract high-level properties from news articles is a major draw for researchers; this makes these methods particularly useful for detecting fake news [11,12,13,14,15,16,17,18,19,20]. Goldani et al. [19] recommend the use of embedding models and Convolutional Neural Networks (CNN) to detect fake news. During model training, static and dynamic word embeddings were compared and gradually updated. Their approach was evaluated using two public datasets, one of which is ISOT, and improved accuracy by 7.9% and 2.1%, respectively.

Ma et al. [14] developed a Recurrent Neural Network (RNN) to represent the textual data sequence for rumor detection. Using text data, Asghar et al. [11] created a deep learning model that recognizes rumors by combining CNN with Bidirectional Long Short-Term Memory (Bi-LSTM). In order to effectively manage the textual contents in a bidirectional manner, Kaliyar et al. [13] developed a hybrid of the CNN and BERT (Bidirectional Encoder Representations from Transformers) model, named FakeBERT.

Yu et al. [18] emphasized the shortcomings of RNN-based algorithms for the early identification of disinformation. They suggested a CNN model for extracting significant features and spotting propaganda. To identify and categorize fake news, Shu et al. [15] took a pragmatic approach by establishing a co-attention method to find the top 1000 most important lines in the material and combine them with the top 1000 most significant user reactions. Autoencoder-based Unsupervised Fake News Detection (UFNDA) was proposed by Li et al. [20]. Their methodology combines the context and content data from news to produce a feature vector that improves the identification of false news. For early rumor detection, Chen et al. [12] developed an attention-based RNN to accumulate distinctive language properties over time. Wang [16] offered a new dataset named LIAR. He suggested a deep learning model that combines CNN and Bi-LSTM to extract textual features and metadata features, respectively. Yin et al. [17] employed Support Vector Machine (SVM) classifiers to determine correlation among data, with CNN and Principal Component Analysis (PCA) utilized as the feature extraction tools. In another study, CNN, Long Short-Term Memory, and Convolutional Long Short-Term Memory (C-LSTM) are all used in the ensemble learning model for fake news detection presented by Toumi and Bouramoul [42]. A hybrid technique of CNN and RNN was proposed by Nasir et al. [43]. CNN was first employed to extract local features, whereas RNN was then used to discover correlations among them. However, the difficulty of optimizing RNN is exacerbated by the vanishing and exploding gradient problem [44].

Current Status and Limitations

The literature reviewed above confirms the reality of false news and its uncontrolled spread. The extraction of deep semantic and contextual information is crucial for the identification and classification of propaganda. Extracting context from an instance’s several orientations is a key function of the Capsule Network (CapsNet) [45]. CapsNets are also utilized for NLP in various studies, including text-based categorization [46], misleading headline recognition [47], and opinion categorization [48]. They have also been utilized specifically for fake news detection [49,50], but these studies lack an effective exploration of CapsNet. While DL models have seen extensive use in analyzing news articles, they have yet to address the following: retaining long-term word dependencies, using a parallelization technique in training, accepting bidirectional input sentences, and maintaining an attention mechanism. In the current work, DistilBERT, a lighter and more efficient version of the BERT model, has been implemented to extract context-based high-level characteristics from news text. A BERT-based model outperforms alternatives in terms of training loss decay time [13]. Adopting bidirectional pretrained word embeddings accelerates model training and improves classification performance [21]. Additionally, the proposed HyproBert model integrates the convolution layer, Bidirectional Gated Recurrent Unit (BiGRU), and CapsNet with self-attention to extract the spatial features along with contextual information and hidden representation from multiple orientations. The BiGRU model is superior in its ability to capture the semantic combination of text and to store extended context data [50]. A CapsNet equipped with a self-attention mechanism is employed to simplify the routing of CapsNet at each layer [49]. The goal of the proposed HyproBert model is to improve classification performance by offering an effective and efficient model to detect fake news.

3. Methodology

In this section, a detailed description of the proposed model HyproBert for fake news detection in news articles is presented.

The proposed HyproBert model is presented in Figure 1. The title of the news article along with the complete text is utilized for the tokenization process. Afterward, embedding vectors are generated to represent features in the solution space for further classification. Initially, CNN is applied along with pooling operations to extract the spatial features from the embeddings. BiGRU is applied sequentially to the output of CNN to gather contextual information. Capsule networks are used along with the attention layer to enhance the spatial features [46]. Although CNN extracts the spatial features from the contents, the capsule network identifies the spatial relations between the extracted features to model the hierarchical relationships within the data [45]. The proposed HyproBert model is a combination of essential, efficient, and effective DL models. The sequential processing of input extracts, enhances, and correlates highly valuable features semantically and contextually [51]. The introduction of the attention layer between the BiGRU and CapsNet strengthens context awareness [46] and helps CapsNet to identify semantic representation and hidden contextual information [49]. The details of the steps involved, from preprocessing data to the final output of the proposed HyproBert model, are provided in the following sections.

3.1. Data Preprocessing

Before a dataset can be used, it must be cleaned according to the requirements of the model. Cleaning helps the model to understand the data better and increases its performance. Generally, it includes steps such as stop word removal, tokenization, removal of special characters and spaces, conversion of numbers to words, sentence segmentation, etc. The datasets under consideration are real-world news datasets, and they contain several URLs. In this research, we examine text semantics for deep textual context. As a basic preprocessing step, we removed URLs, stop words, and other noise from the given data before feeding it into the proposed model.
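The cleaning steps described above can be sketched as follows. This is a minimal illustration, not the pipeline used in the experiments: the stop-word list, the regular expressions, and the function name `preprocess` are assumptions chosen for the example.

```python
import re

# Illustrative stop-word list (an assumption); a real pipeline would use a fuller list.
STOP_WORDS = {"a", "an", "the", "is", "are", "was", "of", "in", "on", "to", "and", "at"}

# Matches http(s) links and bare "www." links up to the next whitespace.
URL_PATTERN = re.compile(r"https?://\S+|www\.\S+")
# Matches every character that is not a lowercase letter, a digit, or whitespace.
NON_ALNUM_PATTERN = re.compile(r"[^a-z0-9\s]")


def preprocess(text: str) -> str:
    """Lowercase the text, then remove URLs, special characters, extra spaces, and stop words."""
    text = text.lower()
    text = URL_PATTERN.sub(" ", text)
    text = NON_ALNUM_PATTERN.sub(" ", text)
    words = [w for w in text.split() if w not in STOP_WORDS]
    return " ".join(words)
```

For instance, `preprocess("The Pope is at https://example.com/news, reports say!")` returns `"pope reports say"`.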

3.2. The Proposed HyproBert Model

The components of the proposed HyproBert model, along with the function and significance of each component, are described as follows.

3.2.1. Input Layer

After preprocessing, the data are sent into the HyproBert input layer. The input layer is responsible for separating the title and text of a news article into smaller tokens. Furthermore, each token is indexed with a unique number using a dictionary. Additionally, padding is used to keep the length of the input text constant. Finally, numerical vectors are created: all the text from the news article N is converted into tokens t, and each token is indexed with a dictionary D. DistilBertTokenizer is used for end-to-end tokenization.
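The tokenize, index, and pad sequence can be illustrated with a toy example. The sketch below is a simplified stand-in that splits on whitespace; it is not the subword tokenization performed by DistilBertTokenizer. The reserved indices and the helper names `build_dictionary` and `encode` are hypothetical.

```python
from typing import Dict, List

PAD_ID = 0  # index reserved for padding (assumption for this sketch)
UNK_ID = 1  # index reserved for tokens missing from the dictionary (assumption)


def build_dictionary(texts: List[str]) -> Dict[str, int]:
    """Assign a unique index to every distinct whitespace-separated token."""
    dictionary = {"[PAD]": PAD_ID, "[UNK]": UNK_ID}
    for text in texts:
        for token in text.split():
            if token not in dictionary:
                dictionary[token] = len(dictionary)
    return dictionary


def encode(title: str, body: str, dictionary: Dict[str, int], max_len: int) -> List[int]:
    """Tokenize title and body, map tokens to indices, then truncate or pad to max_len."""
    tokens = (title + " " + body).split()
    ids = [dictionary.get(tok, UNK_ID) for tok in tokens][:max_len]
    return ids + [PAD_ID] * (max_len - len(ids))
```

Every encoded article has exactly `max_len` entries, which is what allows the later layers to operate on fixed-size inputs.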

3.2.2. Embedding Layer

The embedding layer is responsible for learning the word representation based on training over immense real-world data. As a result, words that are connected conceptually have comparable vector representations. In this research, we use DistilBERT embedding, which is a distilled version of BERT. It retains around 97% of the performance of the BERT model and is trained to approximate the output distribution generated by the BERT model [21]. It has about 66 million parameters, roughly 40% fewer than the 110 million parameters of BERT-base. The number of transformer layers is also reduced to six. Furthermore, the training and prediction times are significantly shorter. This makes DistilBERT a suitable choice for the proposed model.

3.2.3. Convolutional Layer

Convolutional layers are the fundamental constituents of convolutional neural networks [18]. The convolutional layer determines the output of its neurons. Each neuron is connected to a local region of the input, and its output is the scalar product of its weights and that region [19].

The convolution layer is utilized in HyproBert to extract the spatial features from the embedding layer. In experimentation, a one-dimensional convolution layer with 128 filters of size 3 is employed. The local spatial features are combined in a pooling operation to generate high-order features. ReLU is used as an activation function to improve the performance. The feature sequence can be represented as $c_i = \mathrm{ReLU}(W \cdot x_{i:i+h-1} + b)$, where i is the index of the feature sequence, $W$ is the filter weight, $h$ is the window size, and b is the bias weight.
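A single convolution filter followed by pooling can be sketched in plain Python. The sketch computes one feature per window position as the ReLU of the filter response plus the bias. Max pooling is an assumption here, since the text does not name the pooling function, and the names `conv1d_feature` and `max_pool` are hypothetical.

```python
from typing import List

Vector = List[float]


def relu(x: float) -> float:
    """Rectified linear unit."""
    return max(0.0, x)


def conv1d_feature(embeddings: List[Vector], weights: List[Vector], bias: float) -> List[float]:
    """Slide one filter with window size h = len(weights) over the token embeddings.

    For each position i, the feature is ReLU(sum_k weights[k] . embeddings[i + k] + bias).
    """
    h = len(weights)
    features = []
    for i in range(len(embeddings) - h + 1):
        total = float(bias)
        for k in range(h):
            total += sum(w * x for w, x in zip(weights[k], embeddings[i + k]))
        features.append(relu(total))
    return features


def max_pool(features: List[float], pool_size: int) -> List[float]:
    """Keep the largest feature in each non-overlapping window (assumed pooling function)."""
    return [
        max(features[i:i + pool_size])
        for i in range(0, len(features) - pool_size + 1, pool_size)
    ]
```

With five two-dimensional embeddings and a window size of 3, the filter yields three features; the model described above applies 128 such filters in parallel.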

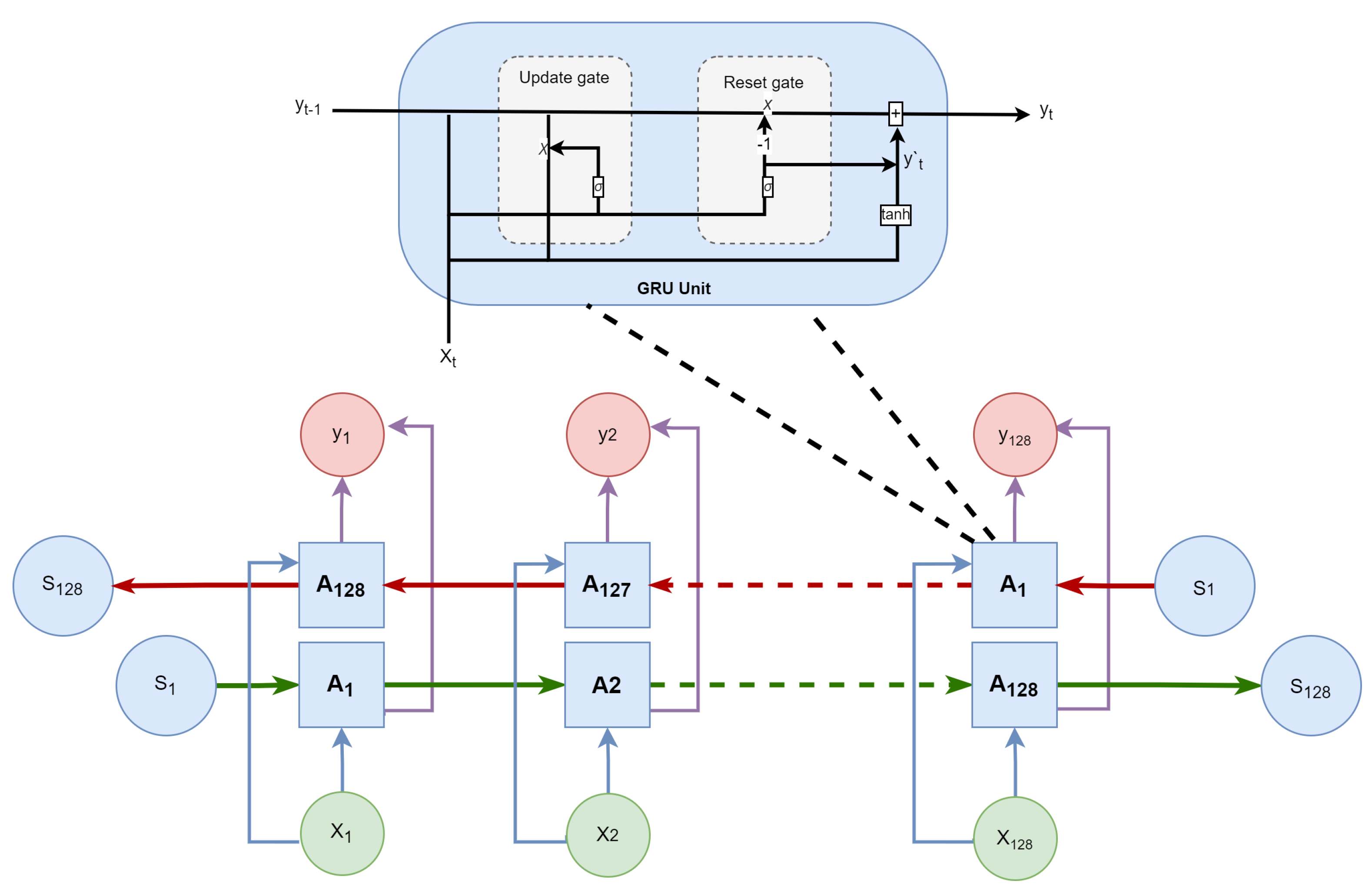

3.2.4. BiGRU Layer

The gated recurrent unit (GRU) is the fundamental building block of the Gated Recurrent Neural Network (GRNN). At each iteration, GRNN takes a textual vector as input and uses the previous iteration’s output vector in a weighted sum that updates the nodes in its hidden layer. The present context is built using a bias vector to selectively retain or discard related information. The operation of a hidden layer is managed by the following equations:

$$z_t = \sigma(W_z x_t + U_z h_{t-1} + b_z)$$

$$r_t = \sigma(W_r x_t + U_r h_{t-1} + b_r)$$

$$\tilde{h}_t = \tanh(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h)$$

$$h_t = (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t$$

where $z_t$ is the update gate, $r_t$ is the reset gate, $\sigma$ is a sigmoid function, $\tanh$ is a hyperbolic tangent function, and $W$, $U$, and $b$ are training parameters. Here, $x_t$ is the input at step $t$, $h_t$ is the hidden state, and $\odot$ denotes element-wise multiplication. A BiGRU network is capable of understanding and learning relationships between current, past, and future data, which is effective for extracting deep features from the input sequence [50]. The structure of BiGRU is presented in Figure 2.

The proposed HyproBert model utilizes BiGRU for its strengths in processing context and extracting sentence representations with forward and backward propagation [50]. The BiGRU layer is applied directly to the output of the convolution layer. A forward GRU processes the input sequence from $x_1$ to $x_n$, and the backward GRU processes the input sequence from $x_n$ to $x_1$. Later, both GRUs are integrated to combine the collected context information. As a result, the output of the BiGRU layer has an improved feature representation of propaganda incorporated in the input news text.
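The forward and backward passes can be sketched with a small GRU written in plain Python. The sketch assumes the common GRU formulation with an update gate, a reset gate, and a tanh candidate state, and it assumes that the two directions are combined by concatenation. The parameter layout and the helper `constant_params` are hypothetical and exist only to make the example runnable.

```python
import math
from typing import Dict, List

Vector = List[float]
Matrix = List[Vector]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def affine(W: Matrix, x: Vector, U: Matrix, h: Vector, b: Vector) -> Vector:
    """Compute W x + U h + b, one output row at a time."""
    return [
        sum(w * xi for w, xi in zip(W_row, x)) + sum(u * hi for u, hi in zip(U_row, h)) + bi
        for W_row, U_row, bi in zip(W, U, b)
    ]


def gru_step(x: Vector, h_prev: Vector, p: Dict[str, list]) -> Vector:
    """One GRU update: gates z and r, candidate state, then interpolation."""
    z = [sigmoid(a) for a in affine(p["Wz"], x, p["Uz"], h_prev, p["bz"])]
    r = [sigmoid(a) for a in affine(p["Wr"], x, p["Ur"], h_prev, p["br"])]
    gated = [ri * hi for ri, hi in zip(r, h_prev)]
    cand = [math.tanh(a) for a in affine(p["Wh"], x, p["Uh"], gated, p["bh"])]
    return [(1.0 - zi) * hi + zi * ci for zi, hi, ci in zip(z, h_prev, cand)]


def run_gru(xs: List[Vector], p: Dict[str, list], hidden_size: int) -> List[Vector]:
    """Run a GRU over the sequence and return the hidden state at every step."""
    h = [0.0] * hidden_size
    states = []
    for x in xs:
        h = gru_step(x, h, p)
        states.append(h)
    return states


def bigru(xs: List[Vector], fwd: Dict[str, list], bwd: Dict[str, list], hidden_size: int) -> List[Vector]:
    """Concatenate forward states with backward states realigned to the input order."""
    forward = run_gru(xs, fwd, hidden_size)
    backward = run_gru(xs[::-1], bwd, hidden_size)[::-1]
    return [f + b for f, b in zip(forward, backward)]


def constant_params(input_size: int, hidden_size: int, value: float) -> Dict[str, list]:
    """Hypothetical helper: fill every weight with one value and every bias with zero."""
    params: Dict[str, list] = {}
    for gate in ("z", "r", "h"):
        params["W" + gate] = [[value] * input_size for _ in range(hidden_size)]
        params["U" + gate] = [[value] * hidden_size for _ in range(hidden_size)]
        params["b" + gate] = [0.0] * hidden_size
    return params
```

Each output step has twice the hidden size, because the state that summarizes the text before a token is joined with the state that summarizes the text after it.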

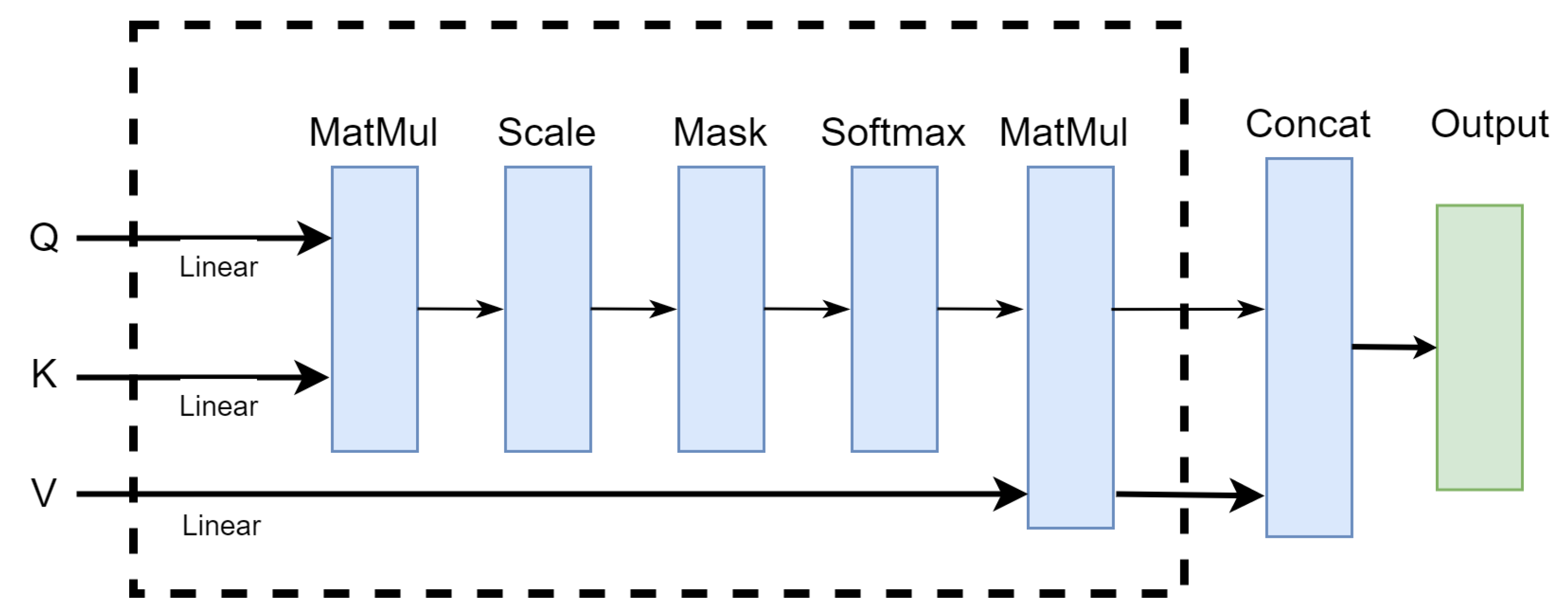

3.2.5. Attention Layer

The contextual information and representations from BiGRU are stored in a fixed-size vector. In the case of large input sequences, the related information cannot be fully stored in the vector, which hinders the understanding of the model and decreases the overall efficiency. Attention mechanisms are known to focus on the most important parts of the input while generating dynamic adaptive weights [22,23]. Furthermore, self-attention (SA) lets all inputs interact with one another to generate the output for a single input, as shown in Figure 3. SA is a powerful tool for identifying dependencies within input sequences [46]. SA considers the entire context of vector representations and re-weights the words according to their positions and associated weights.

In the proposed model, HyproBert, SA is used to highlight the context awareness and relevant features to detect the hidden relations in news data. This will result in a better understanding of the data and increase classification accuracy.

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V$$

where Q is the query, K is the key, V is the value, and $d_k$ is the linear dimension of K. The HyproBert model uses the self-attention process; therefore, it sets Q = K = V. This offers the benefit that the information at the present position and the information at all other positions can be computed together to capture the interdependence throughout the full sequence. If the input is a sentence, for instance, attention is computed between each word and all other words in the sentence.
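Self-attention with Q = K = V can be sketched in a few lines. The sketch assumes scaled dot-product attention and, for brevity, omits the learned projection matrices that a trained layer would normally apply to the input; the function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(X: Matrix) -> Matrix:
    """Scaled dot-product attention with Q = K = V = X (no learned projections)."""
    d_k = len(X[0])
    output = []
    for q in X:
        # Similarity of this position to every position, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in X]
        weights = softmax(scores)
        # Weighted sum of all value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, X)) for j in range(d_k)])
    return output
```

Each output row is a weighted average of all input rows, so every position carries information from the full sequence.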

3.2.6. Capsule Network Layer

Generally, CNN is known to lose important information during the classification process. A higher performance of CNN requires hyperparameter tuning, which is a cumbersome and manual task. Hinton et al. [52] presented the CapsNet, a novel neural network architecture. It collects the syntactically enhanced characteristics from the input data while considering the positions and order of other words in a sentence. It has the ability to distinguish between full and partial relations in textual data. It outperforms a CNN in terms of recognizing a representation of the data and underlying relevant information in the input text [46,47,48]. Over the years, it has shown outstanding results for text categorization and information retrieval.

CapsNet is a combination of several capsules connected as neurons to detect semantic and syntactic information from input data. Each capsule is a vector whose length represents the classification probability and whose direction represents the position of text in the input. Additionally, the weight of hidden features is adjusted by the dynamic routing algorithm [53]. This improves and controls the limits on attention and connections between capsules to optimize the capsule network.

The proposed HyproBert model employs the capsule network to develop and focus the semantic and syntactic awareness of the news text input [49]. The output from the attention layer is provided as input for CapsNet. Initially, a nonlinear function is used to convert the input to a feature capsule $u_i$, which is then used to produce a prediction vector $\hat{u}_{j|i}$ along with a weight matrix $W_{ij}$ to forecast the relationship between the layers of the input and the outcome. Furthermore, the coupling coefficients $c_{ij}$ are calculated using the dynamic routing algorithm, represented in Equation (5). The vector $b_{ij}$ is updated throughout the number of iterations $r$. This process gives attention to the highly weighted propaganda-related words and overlooks the irrelevant, less effective words, as shown in Figure 4. As a result, the output of the capsule has a higher-level contextual representation considering various orientations. The output of the capsule can be represented as Equation (3), where $s_j$ and $v_j$ represent the input vector and the output vector, respectively. The final output of capsule $v_j$ contains a local ordering of words considering various orientations; it is represented in Equation (5).
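The routing procedure can be sketched in plain Python. The sketch assumes the widely used routing-by-agreement formulation: coupling coefficients come from a softmax over routing logits, each output capsule is a squashed weighted sum of prediction vectors, and the logits grow with the agreement between prediction and output. It may differ in detail from the numbered equations of this paper, and the function names are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def squash(s: Vector) -> Vector:
    """Shrink short vectors toward zero and long vectors toward unit length."""
    norm_sq = sum(x * x for x in s)
    if norm_sq == 0.0:
        return [0.0] * len(s)
    scale = norm_sq / (1.0 + norm_sq) / math.sqrt(norm_sq)
    return [scale * x for x in s]


def softmax(values: List[float]) -> List[float]:
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def dynamic_routing(u_hat: List[List[Vector]], iterations: int = 3) -> List[Vector]:
    """Route predictions to output capsules; u_hat[i][j] is input i's prediction for output j.

    Assumes iterations >= 1.
    """
    n_in, n_out, dim = len(u_hat), len(u_hat[0]), len(u_hat[0][0])
    b = [[0.0] * n_out for _ in range(n_in)]  # routing logits
    v: List[Vector] = []
    for _ in range(iterations):
        c = [softmax(row) for row in b]  # coupling coefficients per input capsule
        v = []
        for j in range(n_out):
            s_j = [sum(c[i][j] * u_hat[i][j][d] for i in range(n_in)) for d in range(dim)]
            v.append(squash(s_j))
        # Increase the logit wherever a prediction agrees with the output capsule.
        for i in range(n_in):
            for j in range(n_out):
                b[i][j] += sum(a * w for a, w in zip(u_hat[i][j], v[j]))
    return v
```

When several input capsules agree on an output capsule, their coupling coefficients toward it grow with each iteration, and its length moves closer to 1.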

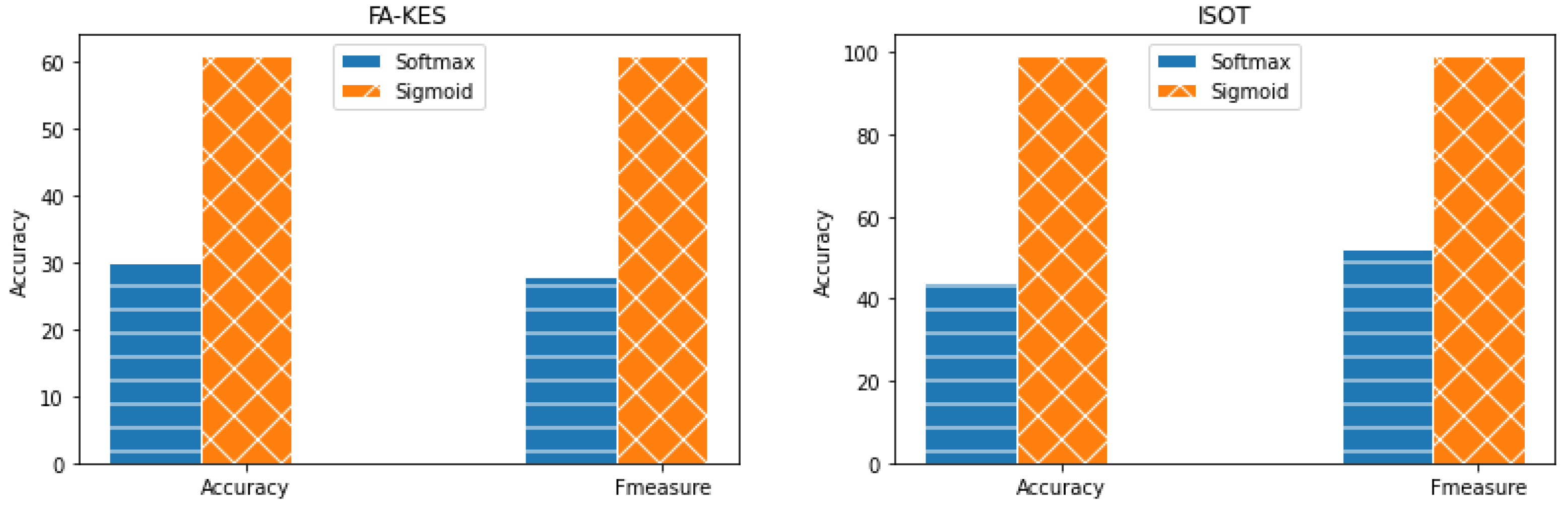

3.2.7. Dense and Output Layer

The proposed HyproBert model uses the output of the capsule network layer as input to the fully connected layer. This layer classifies the input by applying the sigmoid activation function. A higher probability score classifies the news article as fake news, and a lower score classifies it as real news.
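The final decision can be illustrated with a single sigmoid unit. The decision threshold of 0.5 and the names `dense_sigmoid` and `classify` are assumptions made for this sketch; the text does not state the threshold.

```python
import math
from typing import List


def dense_sigmoid(features: List[float], weights: List[float], bias: float) -> float:
    """Fully connected unit followed by a sigmoid, giving a score in (0, 1)."""
    logit = sum(w * f for w, f in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def classify(score: float, threshold: float = 0.5) -> str:
    """Label the article as fake when the score reaches the (assumed) threshold."""
    return "fake" if score >= threshold else "real"
```

A score near 1 indicates high confidence that the article is fake, and a score near 0 indicates high confidence that it is real.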

The processing order of the proposed HyproBert model is presented in Algorithm 1. First, HyproBert is defined as a sequential model. The news article’s title and the text body are separated for tokenization. The tokens are then used for word embeddings. DistilBERT is used for both tokenization and embedding [21]. A single one-dimensional CNN layer processes the word embedding vectors to extract the spatial features from the data. An optimized dropout of 0.4 is applied to the model. Next, two layers of BiGRU are deployed, followed by the self-attention layer and the capsule layer with optimized hyperparameters. A dense layer with sigmoid activation is deployed to integrate the features required for the classification process. Finally, HyproBert is compiled using the Adam optimizer.

Algorithm 1

- Require: News articles
- Ensure: Fake news
- 1: Model ← Sequential()
- 2: Model ← Model.add(DistilBertTokenizer())
- 3: Model ← Model.add(DistilBERT embeddings())
- 4: Model ← Model.add(Conv1D(128, 3, activation = ReLU))
- 5: Model ← Model.add(DropOut(0.4))
- 6: Model ← Model.add(BiGRU(128))
- 7: Model ← Model.add(BiGRU(128))
- 8: Model ← Model.add(Self-attention)
- 9: Model ← Model.add(Capsule(3, 5, 4))
- 10: Model ← Model.add(Dense(1, activation = Sigmoid))
- 11: Model ← Model.compile(binary_crossentropy, Adam)

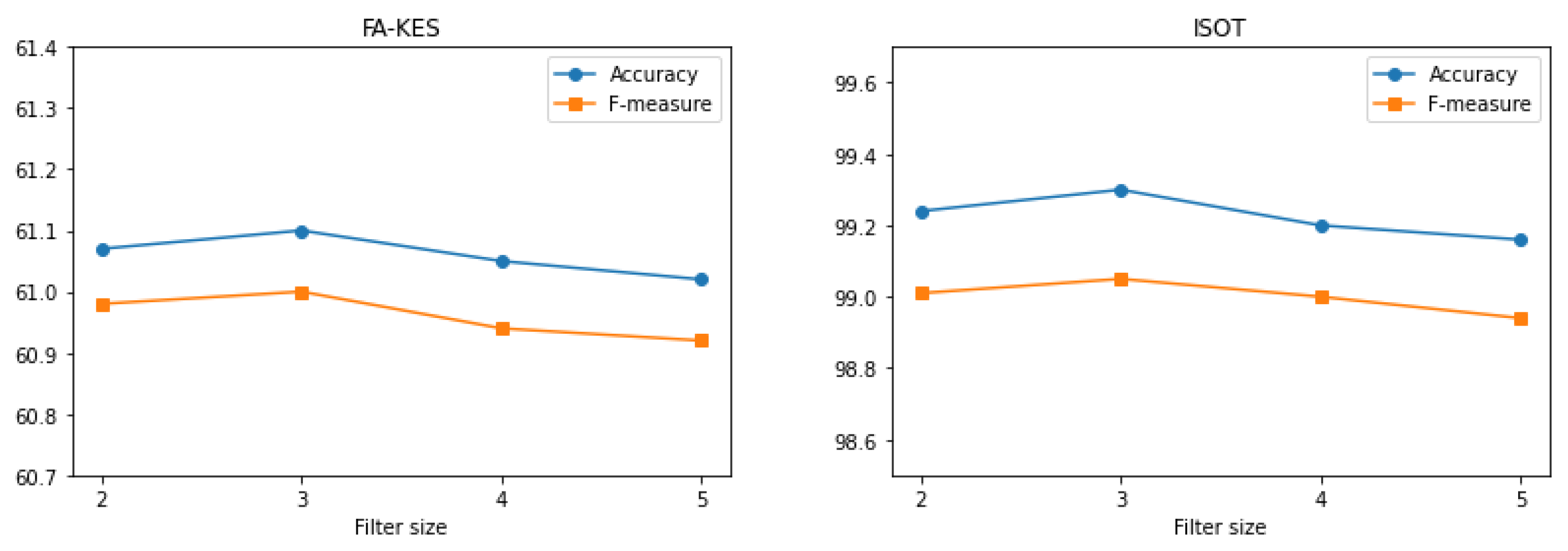

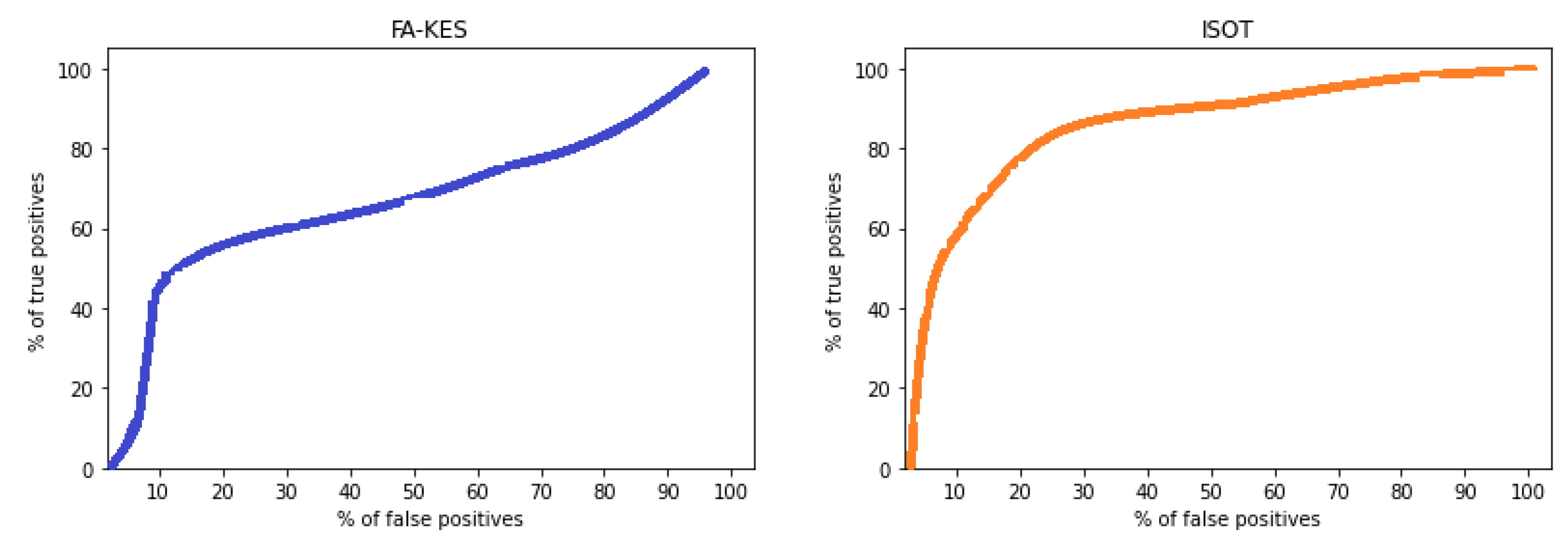

6. Conclusions

A hybrid model based on deep hypercontext for fake news detection, HyproBert, is presented in this paper. HyproBert uses DistilBERT for embeddings, the convolutional neural network for spatial feature extraction, the BiGRU for contextual feature extraction, and the CapsNet with self-attention for the hierarchical understanding of full and partial relations among data. The CNN, BiGRU, and CapsNet are equipped with optimized hyperparameters. The proposed HyproBert model is evaluated using the FA-KES and ISOT datasets, on which it surpassed the performance of the baseline models. The results indicate that HyproBert has an accuracy of 61.1% and 99.3% for the FA-KES and ISOT datasets, respectively. In the future, a multimodal approach using text and images simultaneously for better classification and fake news detection can be explored.