Two Bregman Projection Methods for Solving Variational Inequality Problems in Hilbert Spaces with Applications to Signal Processing

Abstract

1. Introduction

2. Preliminaries

3. Main Results

3.1. Bregman Projection Method with Fixed Stepsize

3.2. Bregman Projection Method with Self-Adaptive Stepsize

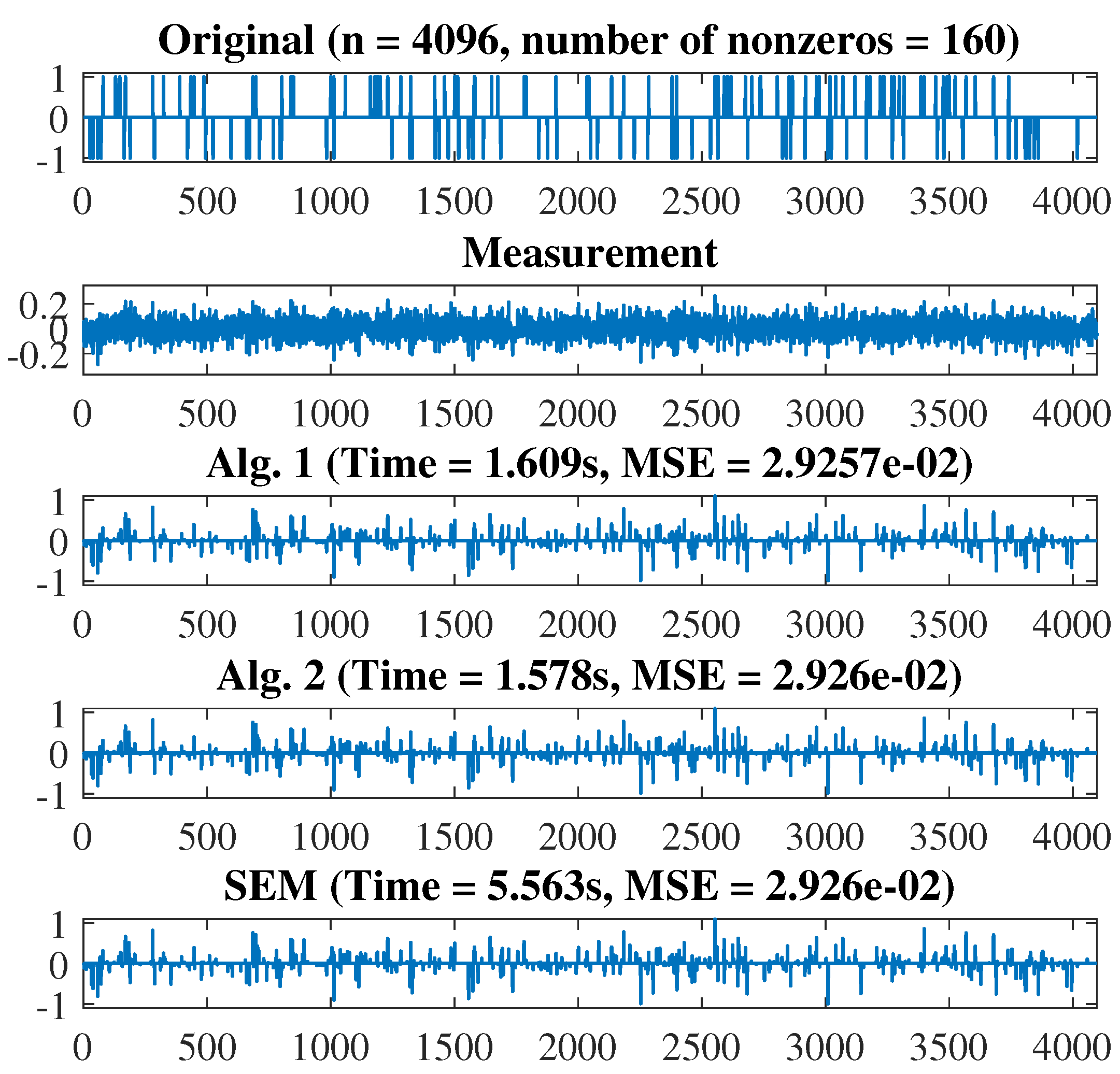

4. Numerical Examples

- (i)

- and for SE,

- (ii)

- and for KL,

- (iii)

- and for IS,

- (iv)

- and for MD.
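The formulas attached to items (i) to (iv) did not survive in this copy. As a minimal sketch only, assuming the labels SE, KL, IS, and MD stand for the squared Euclidean, Kullback-Leibler, Itakura-Saito, and Mahalanobis distances, each can be obtained from the generic Bregman distance D_f(x, y) = f(x) - f(y) - <∇f(y), x - y> by choosing a convex function f. The function names, the matrix `Q`, and the sample vectors below are illustrative assumptions, not taken from the article.

```python
import math

def bregman_distance(f, grad_f, x, y):
    """Generic Bregman distance D_f(x, y) = f(x) - f(y) - <grad f(y), x - y>."""
    g = grad_f(y)
    inner = sum(gi * (xi - yi) for gi, xi, yi in zip(g, x, y))
    return f(x) - f(y) - inner

# SE (assumed: squared Euclidean), f(x) = 0.5 * ||x||^2
def se_f(x):
    return 0.5 * sum(xi * xi for xi in x)

def se_grad(x):
    return list(x)

# KL (assumed: Kullback-Leibler), f(x) = sum x_i log x_i, requires x_i > 0
def kl_f(x):
    return sum(xi * math.log(xi) for xi in x)

def kl_grad(x):
    return [math.log(xi) + 1.0 for xi in x]

# IS (assumed: Itakura-Saito), f(x) = -sum log x_i, requires x_i > 0
def is_f(x):
    return -sum(math.log(xi) for xi in x)

def is_grad(x):
    return [-1.0 / xi for xi in x]

# MD (assumed: Mahalanobis), f(x) = 0.5 * x^T Q x with Q symmetric positive definite
def make_md(Q):
    def matvec(x):
        return [sum(qij * xj for qij, xj in zip(row, x)) for row in Q]

    def f(x):
        return 0.5 * sum(xi * qxi for xi, qxi in zip(x, matvec(x)))

    return f, matvec  # the gradient of f is x -> Q x

# Illustrative inputs (hypothetical, strictly positive so KL and IS are defined)
x = [1.0, 2.0, 3.0]
y = [2.0, 1.0, 4.0]
Q = [[2.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 3.0]]
md_f, md_grad = make_md(Q)

d_se = bregman_distance(se_f, se_grad, x, y)
d_kl = bregman_distance(kl_f, kl_grad, x, y)
d_is = bregman_distance(is_f, is_grad, x, y)
d_md = bregman_distance(md_f, md_grad, x, y)
```

Under these assumptions the four values reduce to the familiar closed forms, for example `d_se` equals half the squared Euclidean norm of `x - y`, and `d_md` equals `0.5 * (x - y)^T Q (x - y)`.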

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| | | Alg. 1 | Alg. 2 | SEM |
|---|---|---|---|---|
| | Iter. | 29 | 24 | 41 |
| | CPU time (sec) | 0.0050 | 0.0022 | 0.0083 |
| | Iter. | 32 | 24 | 44 |
| | CPU time (sec) | 0.0165 | 0.0101 | 0.0203 |
| | Iter. | 35 | 24 | 44 |
| | CPU time (sec) | 0.0161 | 0.0075 | 0.0211 |
| | Iter. | 37 | 24 | 45 |
| | CPU time (sec) | 0.0321 | 0.0146 | 0.0385 |

| | | Iter. | CPU | Iter. | CPU | Iter. | CPU | Iter. | CPU |
|---|---|---|---|---|---|---|---|---|---|
| SE | Alg. 1 | 23 | 0.0049 | 26 | 0.0099 | 18 | 0.0153 | 18 | 0.0163 |
| | Alg. 2 | 15 | 0.0021 | 16 | 0.0039 | 16 | 0.0109 | 16 | 0.0138 |
| KL | Alg. 1 | 15 | 0.0044 | 17 | 0.0081 | 18 | 0.0150 | 18 | 0.00160 |
| | Alg. 2 | 15 | 0.0040 | 15 | 0.0058 | 15 | 0.00109 | 15 | 0.0143 |
| IS | Alg. 1 | 14 | 0.0048 | 14 | 0.0104 | 14 | 0.0133 | 12 | 0.0183 |
| | Alg. 2 | 7 | 0.0028 | 5 | 0.0025 | 4 | 0.0041 | 3 | 0.0057 |
| MD | Alg. 1 | 24 | 0.0104 | 27 | 0.0419 | 28 | 0.0960 | 30 | 0.1910 |
| | Alg. 2 | 12 | 0.0094 | 12 | 0.0254 | 12 | 0.0423 | 15 | 0.0698 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Jolaoso, L.O.; Aphane, M.; Khan, S.H. Two Bregman Projection Methods for Solving Variational Inequality Problems in Hilbert Spaces with Applications to Signal Processing. Symmetry 2020, 12, 2007. https://doi.org/10.3390/sym12122007
