1. Introduction

Rough set theory [1], as a new mathematical tool, is mainly used to deal with imprecise, inconsistent, and incomplete information. Because rough set theory aims to analyze data and draw inferences from it directly, discover hidden knowledge, and reveal latent patterns, it is a method of data mining or knowledge discovery [2,3,4,5]. Compared with other methods for handling uncertainty, such as data mining based on evidence theory, its most significant difference is that it requires no prior knowledge beyond the data set of the problem itself. In recent years, great advances have been made in the popularization and application of rough set theory. Based on formal concept analysis, Xu et al. [6] proposed two operators between objects and attributes, established a new cognitive system model, and provided a method for arbitrary transformation of information granules in a cognitive system. Then, Xu and Li [7] established a model and mechanism of a two-way learning system in a fuzzy dataset based on information granules. Medina and Ojeda-Aciego [8] proposed that multi-adjoint frames can be applied to general T-concept lattices and proved that the common information of two concept lattices can be regarded as a sublattice of their Cartesian product. In the field of data mining, Kumar et al. [9] combined the fuzzy C-means algorithm, which is based on fuzzy membership, with an improved artificial bee colony algorithm. The resulting hybrid algorithm overcomes the tendency of the original clustering method to become trapped in local optima.

Rough sets and fuzzy sets are closely related, and both can deal with imprecision, vagueness, and uncertainty in incomplete data analysis. Fuzzy sets are also widely applied. Pozna et al. [10] proposed a new framework for the symbolic representation of data, gave the related primitive operators, and discussed applications to the modeling of fuzzy reasoning systems. Jankowski et al. [11] analyzed the impact of online advertising on advertising effectiveness and user experience. They proposed a balanced approach to developing advertising resources based on fuzzy multi-objective modeling. Beyond these applications, rough set theory also plays an important role in other fields, such as pattern recognition, machine learning, and intelligent control [12,13,14,15,16,17].

Attribute reduction [18,19,20,21,22] is one of the core topics of knowledge discovery. It concerns how to delete unnecessary knowledge from an information system, so as to reduce the amount of information processed in data mining and improve its efficiency. Skowron [23] proposed an attribute reduction algorithm based on the identifiable (discernibility) matrix, and the intuitive simplicity of this matrix has since attracted the attention of many scholars. Liang et al. [24] extended information entropy so that it can effectively measure the fuzziness of rough sets. Cao et al. [25] measured the importance of attributes in decision tables using information entropy and, on this basis, proposed an effective decision table reduction algorithm. Hu et al. [26] established a complete rough set reduction algorithm based on the concept lattice model and investigated a concept-lattice-based attribute reduction method for decision tables. Jiang and Wang [27] studied a rough set attribute reduction algorithm based on the discernibility matrix. They analyzed how different discernibility matrices affect the efficiency of attribute reduction, and then defined a new discernibility matrix with fewer elements to improve efficiency. Yang [28] put forward a new storage method for discernibility matrices (C-Tree) that stores the matrix in compressed form. Many other attribute reduction methods have been proposed recently [29,30,31,32,33,34,35,36,37]. Although this storage method eliminates duplicate elements and compresses the discernibility matrix, it does not handle redundant parent set (superset) elements.

Hence, Jiang [38] investigated an attribute reduction algorithm based on a discernibility information tree. This algorithm not only deletes duplicate elements in the discernibility matrix, but also, in most cases, eliminates the influence of parent set elements, and it stores the discernibility matrix in compressed form. The above research is carried out under the equivalence relation. Under the dominance relation, the discernibility matrix has twice as many elements as under the equivalence relation. The advantage of compressed storage with a discernibility information tree is therefore even more pronounced in ordered information systems. Based on this advantage, we generalize the discernibility information tree from the equivalence relation to the dominance relation. Each identifiable attribute set in the identifiable matrix is regarded as a path and mapped onto the discernibility information tree, and identical identifiable attribute sets are stored on the same path. Compared with the identifiable matrix, the discernibility information tree therefore spends no space on repeated attribute sets. Some attribute sets share a prefix. When the path of one set is a prefix of the path of another and both occur, the path of the set with fewer elements replaces the longer path, and only the shorter path is kept in the tree. This reduces the space occupied by redundant elements. Finally, based on this tree, an algorithm for lower approximation reduction is established. This is the motivation for the research presented in this article.

The paper is organized as follows. In Section 2, related concepts and definitions, including lower approximation reduction and discernibility information trees, are briefly reviewed. In Section 3, the discernibility information tree based on the lower approximation identifiable matrix is constructed by combining the lower approximation identifiable attribute sets with an ordered tree, and the corresponding algorithm is provided. Then, a complete lower approximation reduction algorithm based on the discernibility information tree is established. In Section 4, a detailed example illustrates the effectiveness of the proposed method. It verifies and explains lower approximation reduction based on the discernibility information tree in an inconsistent ordered decision information system. Finally, Section 5 presents some conclusions.

3. The Method of the Lower Approximation Reduction Based on Discernibility Information Tree in an IODIS

In this section, we combine the lower approximation identifiable matrix with a tree structure. Under a dominance relation, we give an algorithm that constructs a discernibility information tree from this matrix.

The discernibility information tree is a virtual tree structure. Instead of processing the identifiable attribute sets one by one, it operates only on the nodes of the tree. Each identifiable attribute set is inserted as a branch, forming a path from the root. Identical identifiable attribute sets are stored on the same path, which avoids wasting storage space. In addition, identifiable attribute sets with the same prefix are mapped to the path corresponding to the smallest such set. Because that path is not extended, the space occupied by redundant nodes is reduced.
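The path-based storage described above can be sketched as a prefix tree. The following is a minimal Python illustration, not the paper's Algorithm 1. It assumes that every attribute set is sorted by a fixed attribute order and that a leaf marks the end of a stored set. The names `insert_set`, `paths`, and `count_nodes`, and the toy input, are hypothetical and are not taken from Table 2. The sketch shows the two strategies discussed in this section. A set whose path is a prefix of a stored path deletes the subtree below it. A set that would extend an existing leaf is not inserted.

```python
from typing import Dict, List, Sequence, Set

# Hypothetical representation: each node maps a child attribute to its subtree;
# an empty dict is a leaf and marks the end of a stored identifiable attribute set.
Tree = Dict[str, "Tree"]


def insert_set(root: Tree, attrs: Set[str], order: Sequence[str]) -> None:
    """Insert one identifiable attribute set as a path sorted by `order`."""
    path = sorted(attrs, key=order.index)
    if not path:
        return  # an empty set discerns nothing and is not stored
    node = root
    for i, a in enumerate(path):
        if a not in node:
            # No stored prefix continues here: create the rest of the path.
            for b in path[i:]:
                node[b] = {}
                node = node[b]
            return
        node = node[a]
        if not node and i < len(path) - 1:
            # A shorter set already ends at this node: do not extend the path.
            return
    # The whole set matched a stored prefix: delete the subtree below it,
    # so the shorter set replaces the longer ones (no-op for a duplicate).
    node.clear()


def paths(node: Tree, prefix: Sequence[str] = ()) -> List[List[str]]:
    """Return every root-to-leaf path, i.e. every stored attribute set."""
    result: List[List[str]] = []
    for a, child in node.items():
        if child:
            result.extend(paths(child, (*prefix, a)))
        else:
            result.append([*prefix, a])
    return result


def count_nodes(node: Tree) -> int:
    """Number of nodes below `node` (the root itself is not counted)."""
    return sum(1 + count_nodes(child) for child in node.values())


order = ["a", "b", "c", "d"]
toy_sets = [{"a", "b", "c"}, {"a", "b", "c"}, {"a"}, {"a", "d"}, {"b", "c"}, {"b", "d"}]
root: Tree = {}
for s in toy_sets:
    insert_set(root, s, order)
print(paths(root))        # [['a'], ['b', 'c'], ['b', 'd']]
print(count_nodes(root))  # 4
```

In this toy run, the six sets end up in four nodes. The duplicate {a, b, c} shares a path, and {a} removes the subtree b, c below a. The set {a, d} is not inserted because {a} already ends at a. Finally, {b, c} and {b, d} share the prefix b.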

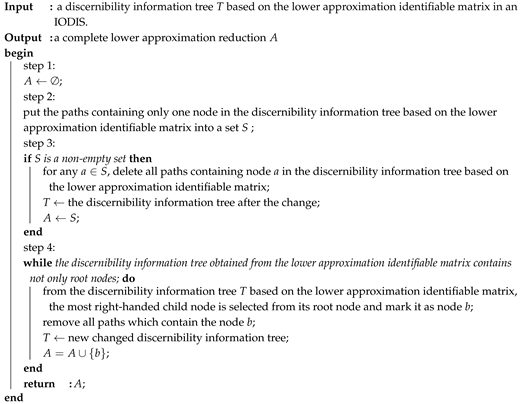

Algorithm 1 uses two strategies to construct the discernibility information tree from the lower approximation identifiable matrix of an ordered decision information system: existing paths are not extended, and subtrees are deleted. The construction has the following characteristics. First, identical lower approximation identifiable attribute sets are mapped to the same path. Second, identifiable attribute sets with the same prefix are mapped to the path corresponding to the smallest of them. For example, when identifiable attribute sets share the prefix a, the smallest one is chosen and mapped to its path. Third, different identifiable attribute sets in the lower approximation identifiable matrix can share prefixes, for example two sets that both begin with b, and a shared prefix is stored only once. As a result, the tree stores the lower approximation identifiable matrix in compressed form and reduces the time and space complexity of its construction.

| Algorithm 1: The algorithm of discernibility information tree in an inconsistent ordered decision information system. |

![Symmetry 10 00696 i001 Symmetry 10 00696 i001]() |

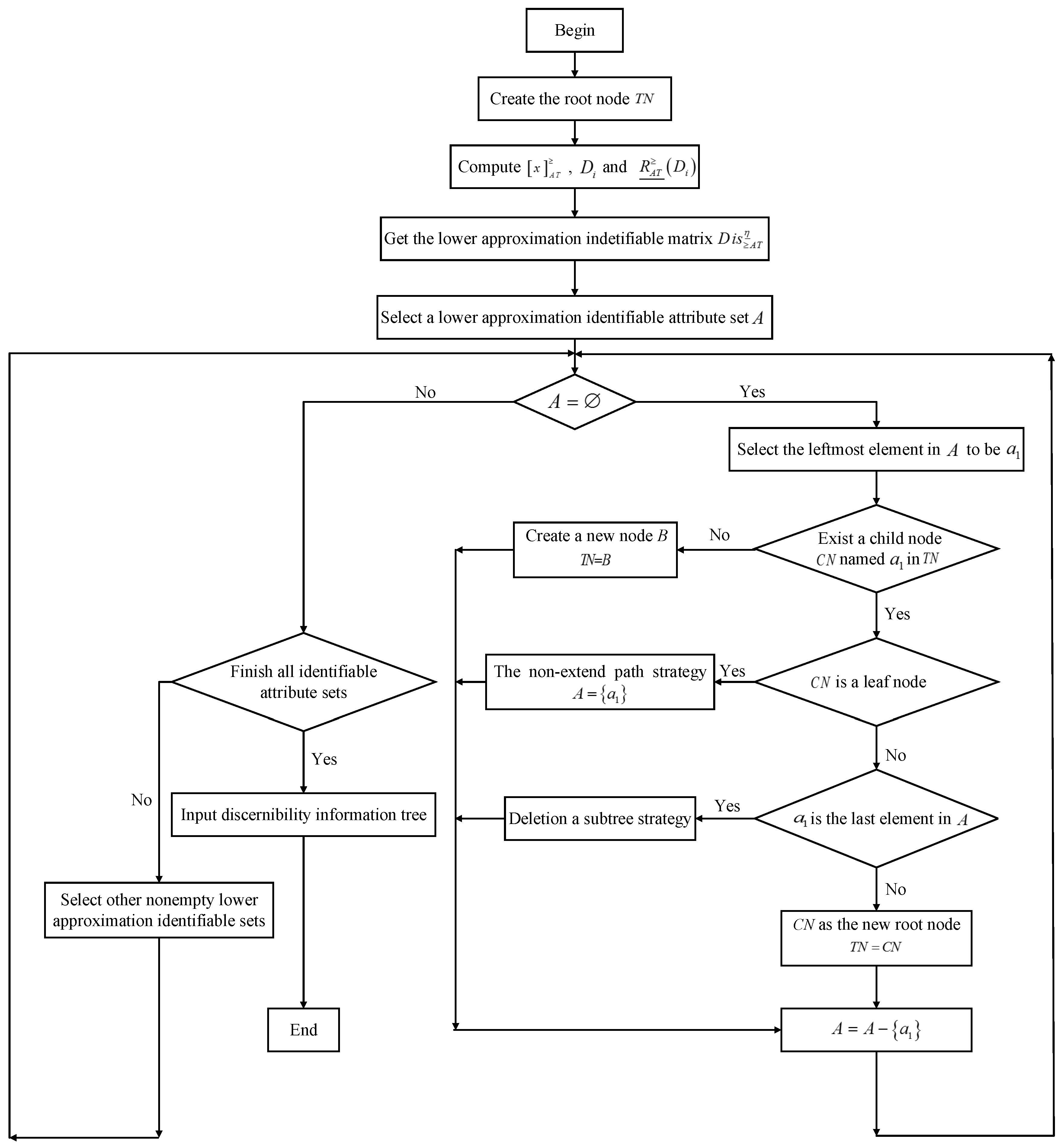

To present the steps of Algorithm 1 more clearly and intuitively, Figure 1 shows its flow chart.

Next, we give the properties of the discernibility information tree based on the lower approximation identifiable matrix, together with their proofs.

Theorem 4. The discernibility information tree based on the lower approximation identifiable matrix includes all the condition attributes that are needed to obtain the lower approximation reduction of the inconsistent ordered decision information system.

Proof. Suppose be the lower approximation identifiable attribute sets corresponding to all paths in the discernibility information tree. The lower approximation identifiable matrix is expressed by . It is easy to know . For any , it satisfies that . There are a and such that . It is known from the lower approximation identifiable matrix that . Therefore, a discernibility information tree based on the lower approximation identifiable matrix includes all the condition attributes that are needed to obtain the lower approximation reduction of the inconsistent ordered decision information system. □

Theorem 5. In the discernibility information tree based on the lower approximation identifiable matrix, consider the identifiable attribute sets that correspond to single-node paths. Their union constitutes the core of the condition attribute set of the decision information system.

Proof. Suppose a node of the discernibility information tree carries the attribute name a, and some path in the tree includes only the node a. By the construction of the tree from the lower approximation identifiable matrix, this path corresponds to the lower approximation identifiable attribute set {a}. In the lower approximation identifiable matrix, if and , then a is said to be necessary relative to the condition attribute set . The collection of all necessary attributes of constitutes the relative core of relative to the decision attribute set . Thus, the theorem is proved. □

Theorem 6. Let A be a condition attribute set represented by all the children of the root node in the discernibility information tree. Then in an IODIS.

Proof. Let be an identifiable attribute set representing all paths based on the lower approximation identifiable matrix in discernibility information tree. Theorem 4 shows that the discernibility information tree based on the lower approximation identifiable matrix contains all the attributes that are needed to obtain the lower approximation reduction of the inconsistent ordered decision information system. For any , there is . Therefore, according to Theorem 2 of the lower approximation coordination set, we can see that . □
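Theorems 5 and 6 can be checked on a small example. The sketch below assumes a prefix-tree reading of Algorithm 1, in which a leaf marks the end of a stored set. It builds the tree from a hypothetical list of identifiable attribute sets and reads the core off the single-node paths. It then checks that the attributes of the root's children meet every nonempty set, which is the property established in the proof of Theorem 6. The function names and the toy data are illustrative only and are not taken from the paper.

```python
from typing import Dict, List, Sequence, Set

Tree = Dict[str, "Tree"]  # empty dict = leaf = end of a stored set


def build_tree(sets: List[Set[str]], order: Sequence[str]) -> Tree:
    """Compact sketch of Algorithm 1 (assumed prefix-tree reading)."""
    root: Tree = {}
    for s in sets:
        if not s:
            continue  # empty sets are not stored
        path = sorted(s, key=order.index)
        node = root
        for i, a in enumerate(path):
            if a not in node:
                for b in path[i:]:  # create the remaining nodes
                    node[b] = {}
                    node = node[b]
                break
            node = node[a]
            if not node and i < len(path) - 1:
                break  # a shorter set already ends here: do not extend
        else:
            node.clear()  # whole set matched: delete the subtree below it
    return root


def core_from_tree(root: Tree) -> Set[str]:
    """Theorem 5: attributes whose path consists of a single node."""
    return {a for a, child in root.items() if not child}


def meets_every_set(candidate: Set[str], sets: List[Set[str]]) -> bool:
    """Property used for Theorem 6: candidate meets every nonempty set."""
    return all(candidate & s for s in sets if s)


order = ["a", "b", "c", "d"]
toy_sets = [{"a"}, {"a", "c"}, {"b", "c"}, {"c"}, {"b", "d"}]
root = build_tree(toy_sets, order)
print(sorted(core_from_tree(root)))          # ['a', 'c']
print(meets_every_set(set(root), toy_sets))  # True
```

In this toy case the root's children are a, b, and c, and together they meet every set. The core {a, c} alone does not meet {b, d}.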

Consider a given inconsistent ordered decision information system based on the dominance relation. Suppose that the number of objects is and that the number of condition attributes is . Then a lower approximation identifiable matrix contains at most nonempty subsets of the condition attribute set. Assume that the number of nonempty subsets of the condition attribute set in the identifiable matrix is P, with . The construction process shows that a discernibility information tree based on the lower approximation identifiable matrix has at most P different paths, each with at most nodes. Therefore, a discernibility information tree has at most nodes. Because many paths in the tree share prefixes, the actual number of nodes is usually much smaller than . Thus, in the worst case, the space complexity of the discernibility information tree based on the lower approximation identifiable matrix is .

In addition, the algorithm iterates at most times, and in each iteration it inserts at most nodes and deletes nodes . Thus, the time complexity of the algorithm is . Since the discernibility information tree contains at most nodes, is the maximum value of . Therefore, the time complexity of constructing the discernibility information tree based on the lower approximation identifiable matrix is .

Once the discernibility information tree based on the lower approximation identifiable matrix has been constructed, the many identifiable attribute sets no longer need to be classified and sorted. The ordered tree provides a concise and intuitive path map. It not only compresses the stored data but also simplifies the lower approximation reduction of the inconsistent ordered decision information system. Next, a complete lower approximation reduction algorithm based on the discernibility information tree is given.

From the complexity analysis of Algorithm 1, the discernibility information tree contains at most nodes. According to the construction process of Algorithm 2, the maximum number of iterations is . Assuming that nodes are deleted in each iteration, the maximum number of nodes deleted after iterations is . Thus, the complexity of Algorithm 2 is .

| Algorithm 2: The algorithm of lower approximation reduction based on the discernibility information tree in an IODIS. |

![Symmetry 10 00696 i002 Symmetry 10 00696 i002]() |

The flow chart in Figure 2 illustrates the implementation of Algorithm 2 more directly and helps in understanding how it operates.

At present, there are many methods for attribute reduction of decision tables, such as the reduction algorithm based on attribute importance measures. Starting from the discernibility matrix of the decision table, this algorithm repeatedly adds the most important attribute, according to its importance measure, to the core attribute set until a relative attribute reduction of the decision table is obtained. However, for large data sets, computing the importance measure of all attributes greatly increases the time complexity of the algorithm. Similarly, the relative reduction algorithm based on conditional entropy has shortcomings. Computing conditional entropy or information gain requires many floating-point calculations, which greatly increases the time complexity and reduces the efficiency of the algorithm. Currently, the most commonly used algorithm is reduction based on the discernibility matrix. Its basic idea is to obtain the discernibility function and simplify it into a disjunctive normal form, which guarantees that the algorithm is complete. However, if there are many repeated objects and too many comparisons between objects during the simplification, the time and space complexity increase. Moreover, heuristic algorithms based on the discernibility matrix first find the core from the discernibility matrix. They then add attributes according to a heuristic rule until a stopping condition is satisfied. Although this greatly improves time performance, a complete reduction cannot be guaranteed. In this paper, we establish a virtual tree structure in which duplicate identifiable attribute sets are mapped to the same path, reducing the space occupied by repeated attributes. At the same time, redundant parent set elements are deleted through shared prefixes, and the discernibility matrix is stored in compressed form. This method not only saves space and reduces the time complexity, but also keeps the algorithm complete.
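A brute-force sketch makes the cost of the discernibility-function approach concrete. It enumerates all reductions as the minimal attribute subsets that meet every nonempty identifiable attribute set. These subsets correspond to the conjunctions in the minimal disjunctive normal form. The function `all_reducts` and the toy sets are hypothetical. The enumeration is complete, but for m attributes it may examine up to 2^m subsets, which is the kind of cost the paragraph above refers to.

```python
from itertools import combinations
from typing import FrozenSet, List, Sequence, Set


def all_reducts(sets: List[Set[str]], attributes: Sequence[str]) -> List[FrozenSet[str]]:
    """All minimal attribute subsets that meet every nonempty identifiable set.

    Each result corresponds to one conjunction of the minimal disjunctive normal
    form of the discernibility function. Exponential in len(attributes).
    """
    clauses = [frozenset(s) for s in sets if s]
    reducts: List[FrozenSet[str]] = []
    # Enumerate candidates by increasing size so that minimal sets are found first.
    for size in range(len(attributes) + 1):
        for combo in combinations(attributes, size):
            candidate = frozenset(combo)
            if any(r <= candidate for r in reducts):
                continue  # contains a smaller reduction, so it is not minimal
            if all(candidate & c for c in clauses):
                reducts.append(candidate)
    return reducts


toy_sets = [{"a"}, {"a", "c"}, {"b", "c"}, {"c", "d"}, {"b", "d"}]
for r in all_reducts(toy_sets, ["a", "b", "c", "d"]):
    print(sorted(r))
# ['a', 'b', 'c']
# ['a', 'b', 'd']
# ['a', 'c', 'd']
```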

Moreover, we prove the completeness of Algorithm 2. In an inconsistent ordered decision information system , two conditions are required to prove the completeness of Algorithm 2 ( ). First, the set A returned by the algorithm must preserve the lower approximations. Second, no attribute can be removed from A without losing this property.

According to the equivalent condition of lower approximation reduction given in Theorem 2, these conditions can be rewritten in terms of the lower approximation identifiable matrix:

- (1) every nonempty lower approximation identifiable attribute set contains at least one attribute of A;
- (2) for every b ∈ A, there is a nonempty lower approximation identifiable attribute set that contains no attribute of A − {b}.

These two conditions characterize the completeness of the reduction through the lower approximation identifiable sets. The discernibility information tree based on the lower approximation identifiable matrix contains all the condition attributes needed for lower approximation reduction. The two conditions can therefore be restated over the identifiable attribute sets of all paths in the tree instead of over the whole matrix.

Therefore, proving the completeness of Algorithm 2 is equivalent to proving that the two conditions above hold. According to Algorithm 2, for any . By Theorem 5, the union of the identifiable attribute sets corresponding to single-node paths in the discernibility information tree constitutes the core of the condition attribute set of the decision information system. The core obtained in the third step of Algorithm 2 is taken as part of the lower approximation reduction, and all paths containing core elements are deleted from the discernibility information tree. Suppose . If b is the rightmost element of S, then in the current discernibility information tree the rightmost child of the root node must be b. Moreover, the subtree rooted at this node b contains no node corresponding to any attribute in . Therefore, for , such that . The other elements of S satisfy this condition in the same way. Thus, the lower approximation reduction obtained with the discernibility information tree is a complete reduction.
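The two conditions used in this proof can be checked mechanically. The sketch below tests them for a candidate attribute set against a list of identifiable attribute sets. Following the proof, the list may be either the nonempty entries of the lower approximation identifiable matrix or the attribute sets of the paths of the discernibility information tree. The name `is_reduct` and the toy sets are hypothetical.

```python
from typing import List, Set


def is_reduct(candidate: Set[str], sets: List[Set[str]]) -> bool:
    """Check conditions (1) and (2) of the completeness argument."""
    nonempty = [s for s in sets if s]
    # (1) The candidate meets every nonempty identifiable attribute set.
    if not all(candidate & s for s in nonempty):
        return False
    # (2) Each b is indispensable: some set has no attribute in candidate - {b}.
    return all(any(not ((candidate - {b}) & s) for s in nonempty) for b in candidate)


toy_sets = [{"a"}, {"a", "c"}, {"b", "c"}, {"c", "d"}, {"b", "d"}]
print(is_reduct({"a", "b", "c"}, toy_sets))       # True
print(is_reduct({"a", "b", "c", "d"}, toy_sets))  # False: not minimal
print(is_reduct({"a", "b"}, toy_sets))            # False: {c, d} is not met
```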

4. An Illustrative Example

In the following, we construct the discernibility information tree under the dominance relation according to the steps of Algorithm 1. We then use this tree to obtain the lower approximation reduction of an inconsistent ordered decision information system. An inconsistent ordered decision table is given in Table 1, in which the object set is , the condition attribute set is , and the decision attribute set is .

First, after a simple calculation, we can get the dominance classes based on the dominance relation .


Based on decision attribute set D, decision classes are

,

,

,

.
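The dominance classes and the inconsistency check used in this example can be computed with the standard dominance-based definitions. The sketch below assumes that larger values are better on every attribute. It takes the dominance class of x as the set of objects at least as good as x on all condition attributes. The system is treated as consistent when dominance on the condition attributes always implies dominance on the decision attribute. The table, attribute names, and function names are hypothetical and do not reproduce Table 1.

```python
from typing import Dict, Sequence, Set

Table = Dict[str, Dict[str, int]]  # object -> attribute -> ordinal value


def dominance_class(table: Table, x: str, attrs: Sequence[str]) -> Set[str]:
    """Objects that are at least as good as x on every attribute in attrs."""
    return {y for y in table if all(table[y][a] >= table[x][a] for a in attrs)}


def is_consistent(table: Table, conds: Sequence[str], decision: str) -> bool:
    """True if dominating x on all condition attributes implies dominating it on the decision."""
    return all(
        table[y][decision] >= table[x][decision]
        for x in table
        for y in dominance_class(table, x, conds)
    )


table: Table = {
    "x1": {"a": 1, "b": 2, "d": 2},
    "x2": {"a": 2, "b": 2, "d": 1},  # dominates x1 on a and b, but has a lower decision
    "x3": {"a": 2, "b": 3, "d": 2},
}
print(sorted(dominance_class(table, "x1", ["a", "b"])))  # ['x1', 'x2', 'x3']
print(is_consistent(table, ["a", "b"], "d"))             # False: the table is inconsistent
```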

According to the above dominance classes, we can obtain . Thus, it is an inconsistent ordered decision information system. Next, we get lower approximations with respect to

Then, based on the lower approximation identifiable attribute set , the lower approximation identifiable matrix is shown in Table 2.

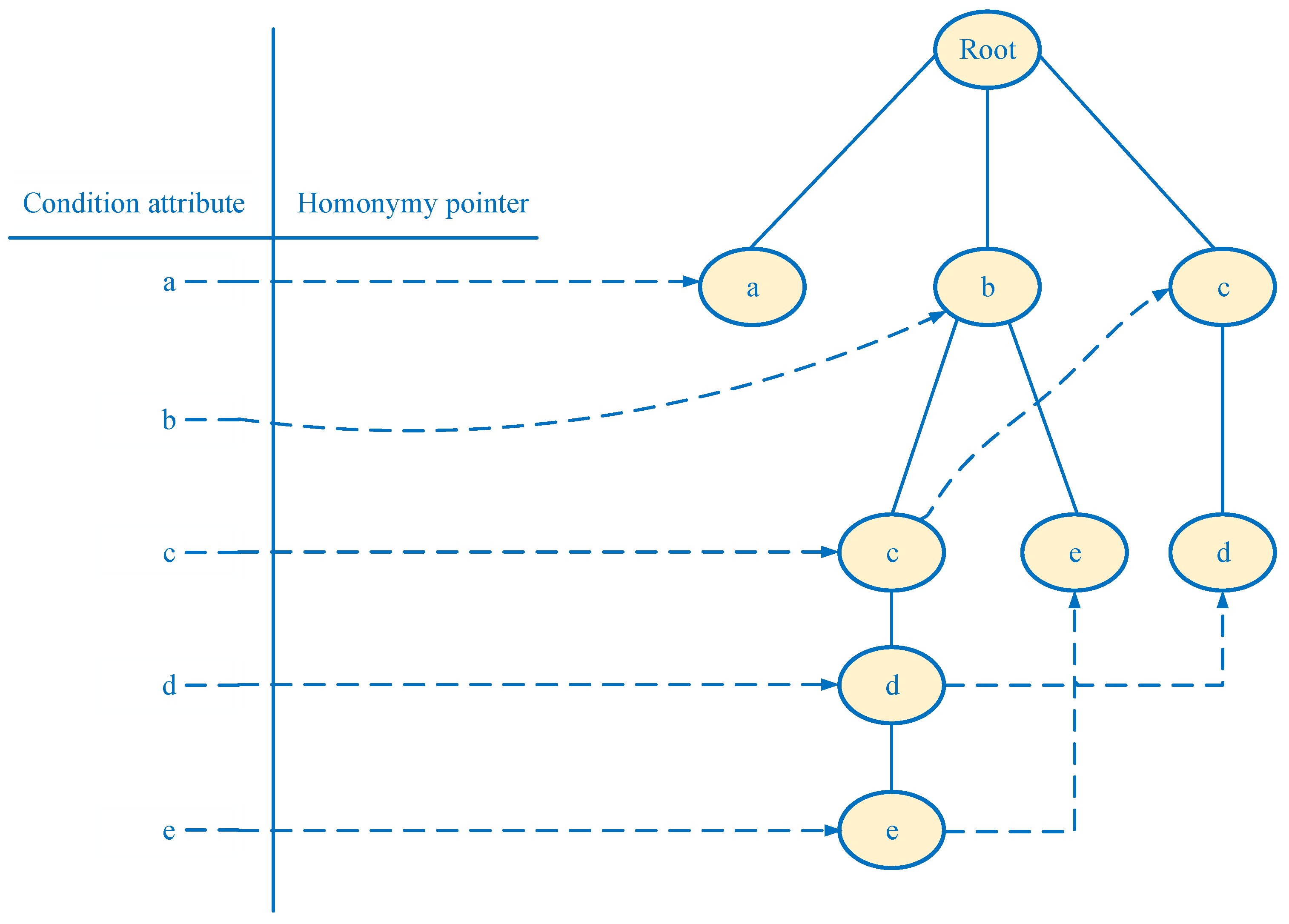

Next, the root node of the discernibility information tree based on the lower approximation identifiable matrix is created. Then, in each subset of the condition attribute set in the lower approximation identifiable matrix, the attributes are sorted from left to right according to their order in the ordered decision information system.

Next, a lower approximation identifiable attribute set is selected from the lower approximation identifiable matrix, and the corresponding path is created. Here we select the first subset as the first path and insert it into the discernibility information tree. Since the second identifiable attribute set is also , it is also mapped to the first path. In the same way, identical identifiable attribute sets are mapped to the same path. For another identifiable attribute set in the lower approximation identifiable matrix, its corresponding path is established. Since this path is contained in the first path, the strategy of deleting subtrees is adopted: all nodes after a are deleted, but node a is kept. That is, the original path is modified to the path . Next, another condition attribute set is mapped to the new path , and a new node b is created. For the lower approximation identifiable attribute set , the corresponding path is constructed, which shares the prefix b with the previous path . Afterwards, we insert the path corresponding to the condition attribute set into the discernibility information tree. We repeat this procedure until the last path has been inserted into the discernibility information tree. Finally, Figure 3 shows the lower approximation discernibility information tree obtained from Algorithm 1 and Table 2.

Algorithm 2 gives a lower approximation reduction based on the discernibility information tree. Before applying it, we first compute all reductions with the original method. According to Definition 5 and Theorem 2, we expand the conjunctions and disjunctions of the discernibility function and simplify the result. This gives the minimal disjunctive normal form, from which all the lower approximation reductions are obtained.

Therefore, the lower approximation reductions are . Next, Algorithm 2 is applied to obtain a lower approximation reduction based on the discernibility information tree. The specific steps are as follows.

- (1)

According to the first step of Algorithm 2, we first establish an empty set A.

- (2)

Based on the lower approximation identifiable matrix, a path with only one node is selected in the discernibility information tree. Then, all paths that contain this node are deleted; that is, the path is removed.

- (3)

.

- (4)

Choose the rightmost child node c of the root node in the discernibility information tree and .

- (5)

Delete paths and that contain the node c.

- (6)

At this point, only one path remains in the discernibility information tree. Then, select the node b and delete the path . Finally, .

- (7)

Now the root node of the discernibility information tree has no child nodes, so the algorithm terminates. The lower approximation reduction obtained from the discernibility information tree is .
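Steps (1) to (7) can be replayed on a small example. The sketch below is based on our reading of Algorithms 1 and 2, not on the paper's listings. It takes the core from the single-node paths and deletes every path that contains a selected attribute. It interprets "rightmost child of the root" as the child whose attribute comes last in the fixed attribute order. Because the remaining paths determine the root's children, the reduction loop works on the list of paths. All names and data are hypothetical and do not reproduce Table 2.

```python
from typing import Dict, List, Sequence, Set

Tree = Dict[str, "Tree"]  # empty dict = leaf = end of a stored set


def build_tree(sets: List[Set[str]], order: Sequence[str]) -> Tree:
    """Compact sketch of Algorithm 1: shared paths, shorter prefixes win."""
    root: Tree = {}
    for s in sets:
        if not s:
            continue
        path = sorted(s, key=order.index)
        node = root
        for i, a in enumerate(path):
            if a not in node:
                for b in path[i:]:
                    node[b] = {}
                    node = node[b]
                break
            node = node[a]
            if not node and i < len(path) - 1:
                break  # a shorter set already ends here
        else:
            node.clear()  # delete the subtree below a matched prefix
    return root


def tree_paths(node: Tree, prefix: tuple = ()) -> List[List[str]]:
    """All root-to-leaf paths of the tree."""
    out: List[List[str]] = []
    for a, child in node.items():
        if child:
            out.extend(tree_paths(child, prefix + (a,)))
        else:
            out.append(list(prefix + (a,)))
    return out


def lower_approx_reduction(sets: List[Set[str]], order: Sequence[str]) -> Set[str]:
    remaining = tree_paths(build_tree(sets, order))
    # Steps (1)-(3): A starts empty; single-node paths give the core,
    # and every path containing a core attribute is deleted.
    core = {p[0] for p in remaining if len(p) == 1}
    reduct = set(core)
    remaining = [p for p in remaining if not core & set(p)]
    # Steps (4)-(6): take the rightmost child of the root (last in `order`)
    # and delete every path containing it, until the root has no children.
    while remaining:
        c = max((p[0] for p in remaining), key=order.index)
        reduct.add(c)
        remaining = [p for p in remaining if c not in p]
    return reduct


order = ["a", "b", "c", "d"]
toy_sets = [{"a"}, {"a", "c"}, {"b", "c"}, {"c", "d"}, {"b", "d"}]
print(sorted(lower_approx_reduction(toy_sets, order)))  # ['a', 'b', 'c']
```

On these toy sets, the result {a, b, c} meets every set. Removing any one of its attributes leaves some set unmet, so the result satisfies both conditions of the completeness argument.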

In this way, a lower approximation reduction is obtained. Comparing it with the results of the original method verifies the correctness of the method on this example and illustrates its effectiveness. The discernibility information tree stores repeated attribute sets only once and discards redundant supersets. This reduces both the space occupied by redundant attributes and the computational load.